Update README.md

Browse files

README.md

CHANGED

|

@@ -1,350 +1,152 @@

|

|

| 1 |

-

|

| 2 |

-

library_name: transformers

|

| 3 |

-

license: apache-2.0

|

| 4 |

-

license_link: https://huggingface.co/Qwen/Qwen3-Next-80B-A3B-Instruct/blob/main/LICENSE

|

| 5 |

-

pipeline_tag: text-generation

|

| 6 |

-

---

|

| 7 |

-

|

| 8 |

-

# Qwen3-Next-80B-A3B-Instruct

|

| 9 |

-

<a href="https://chat.qwen.ai/" target="_blank" style="margin: 2px;">

|

| 10 |

-

<img alt="Chat" src="https://img.shields.io/badge/%F0%9F%92%9C%EF%B8%8F%20Qwen%20Chat%20-536af5" style="display: inline-block; vertical-align: middle;"/>

|

| 11 |

-

</a>

|

| 12 |

-

|

| 13 |

-

Over the past few months, we have observed increasingly clear trends toward scaling both total parameters and context lengths in the pursuit of more powerful and agentic artificial intelligence (AI).

|

| 14 |

-

We are excited to share our latest advancements in addressing these demands, centered on improving scaling efficiency through innovative model architecture.

|

| 15 |

-

We call this next-generation foundation models **Qwen3-Next**.

|

| 16 |

-

|

| 17 |

-

## Highlights

|

| 18 |

-

|

| 19 |

-

**Qwen3-Next-80B-A3B** is the first installment in the Qwen3-Next series and features the following key enchancements:

|

| 20 |

-

- **Hybrid Attention**: Replaces standard attention with the combination of **Gated DeltaNet** and **Gated Attention**, enabling efficient context modeling for ultra-long context length.

|

| 21 |

-

- **High-Sparsity Mixture-of-Experts (MoE)**: Achieves an extreme low activation ratio in MoE layers, drastically reducing FLOPs per token while preserving model capacity.

|

| 22 |

-

- **Stability Optimizations**: Includes techniques such as **zero-centered and weight-decayed layernorm**, and other stabilizing enhancements for robust pre-training and post-training.

|

| 23 |

-

- **Multi-Token Prediction (MTP)**: Boosts pretraining model performance and accelerates inference.

|

| 24 |

-

|

| 25 |

-

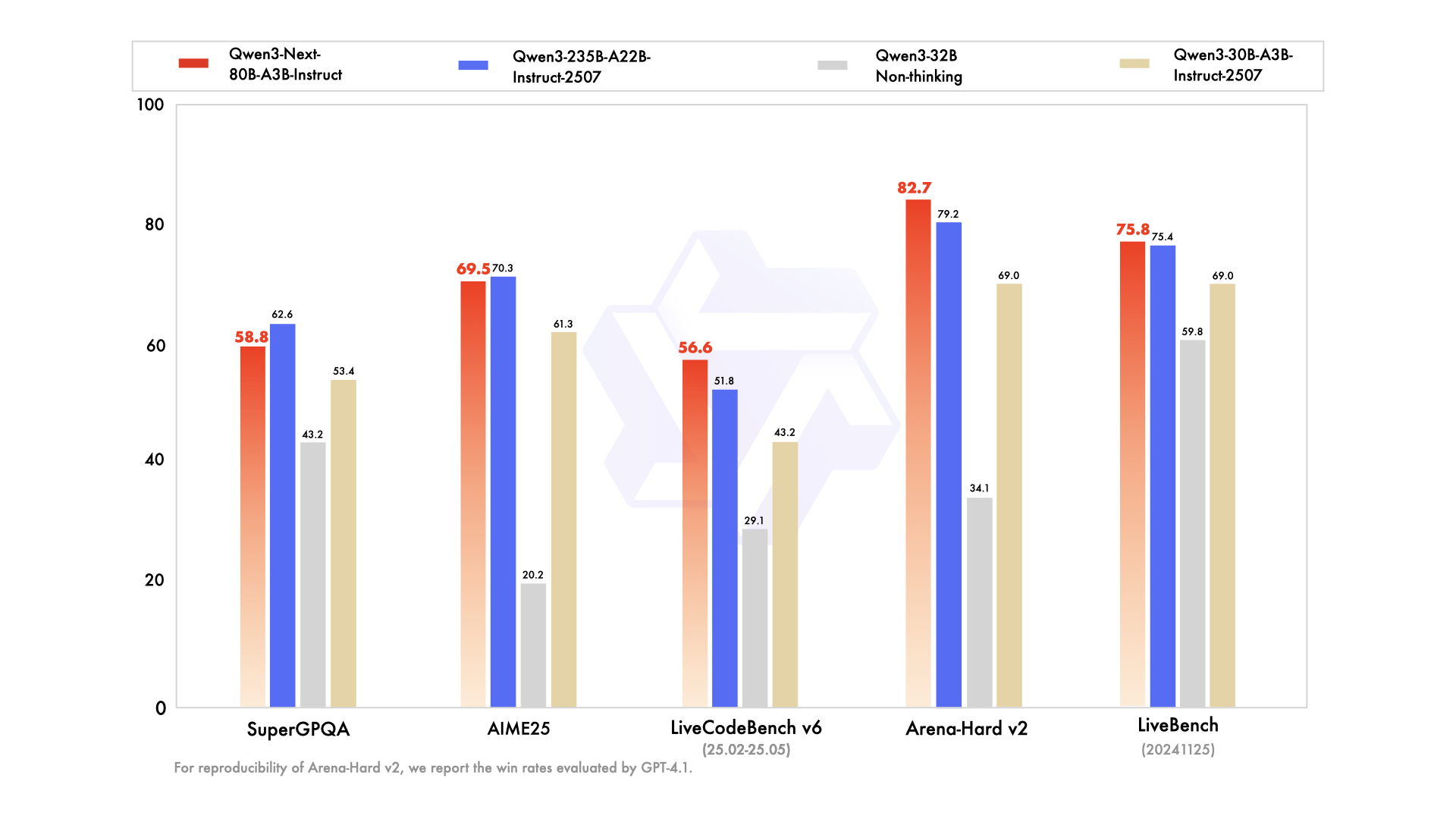

We are seeing strong performance in terms of both parameter efficiency and inference speed for Qwen3-Next-80B-A3B:

|

| 26 |

-

- Qwen3-Next-80B-A3B-Base outperforms Qwen3-32B-Base on downstream tasks with 10% of the total training cost and with 10 times inference throughput for context over 32K tokens.

|

| 27 |

-

- Qwen3-Next-80B-A3B-Instruct performs on par with Qwen3-235B-A22B-Instruct-2507 on certain benchmarks, while demonstrating significant advantages in handling ultra-long-context tasks up to 256K tokens.

|

| 28 |

-

|

| 29 |

-

|

| 30 |

-

|

| 31 |

-

For more details, please refer to our blog post [Qwen3-Next](https://qwenlm.github.io/blog/qwen3_next/).

|

| 32 |

-

|

| 33 |

-

## Model Overview

|

| 34 |

-

|

| 35 |

-

> [!Note]

|

| 36 |

-

> **Qwen3-Next-80B-A3B-Instruct** supports only instruct (non-thinking) mode and does not generate ``<think></think>`` blocks in its output.

|

| 37 |

-

|

| 38 |

-

**Qwen3-Next-80B-A3B-Instruct** has the following features:

|

| 39 |

-

- Type: Causal Language Models

|

| 40 |

-

- Training Stage: Pretraining (15T tokens) & Post-training

|

| 41 |

-

- Number of Parameters: 80B in total and 3B activated

|

| 42 |

-

- Number of Paramaters (Non-Embedding): 79B

|

| 43 |

-

- Number of Layers: 48

|

| 44 |

-

- Hidden Dimension: 2048

|

| 45 |

-

- Hybrid Layout: 12 \* (3 \* (Gated DeltaNet -> MoE) -> (Gated Attention -> MoE))

|

| 46 |

-

- Gated Attention:

|

| 47 |

-

- Number of Attention Heads: 16 for Q and 2 for KV

|

| 48 |

-

- Head Dimension: 256

|

| 49 |

-

- Rotary Position Embedding Dimension: 64

|

| 50 |

-

- Gated DeltaNet:

|

| 51 |

-

- Number of Linear Attention Heads: 32 for V and 16 for QK

|

| 52 |

-

- Head Dimension: 128

|

| 53 |

-

- Mixture of Experts:

|

| 54 |

-

- Number of Experts: 512

|

| 55 |

-

- Number of Activated Experts: 10

|

| 56 |

-

- Number of Shared Experts: 1

|

| 57 |

-

- Expert Intermediate Dimension: 512

|

| 58 |

-

- Context Length: 262,144 natively and extensible up to 1,010,000 tokens

|

| 59 |

-

|

| 60 |

-

<img src="https://qianwen-res.oss-accelerate.aliyuncs.com/Qwen3-Next/model_architecture.png" height="384px" title="Qwen3-Next Model Architecture" />

|

| 61 |

-

|

| 62 |

-

|

| 63 |

-

## Performance

|

| 64 |

-

|

| 65 |

-

| | Qwen3-30B-A3B-Instruct-2507 | Qwen3-32B Non-Thinking | Qwen3-235B-A22B-Instruct-2507 | Qwen3-Next-80B-A3B-Instruct |

|

| 66 |

-

|--- | --- | --- | --- | --- |

|

| 67 |

-

| **Knowledge** | | | | |

|

| 68 |

-

| MMLU-Pro | 78.4 | 71.9 | **83.0** | 80.6 |

|

| 69 |

-

| MMLU-Redux | 89.3 | 85.7 | **93.1** | 90.9 |

|

| 70 |

-

| GPQA | 70.4 | 54.6 | **77.5** | 72.9 |

|

| 71 |

-

| SuperGPQA | 53.4 | 43.2 | **62.6** | 58.8 |

|

| 72 |

-

| **Reasoning** | | | | |

|

| 73 |

-

| AIME25 | 61.3 | 20.2 | **70.3** | 69.5 |

|

| 74 |

-

| HMMT25 | 43.0 | 9.8 | **55.4** | 54.1 |

|

| 75 |

-

| LiveBench 20241125 | 69.0 | 59.8 | 75.4 | **75.8** |

|

| 76 |

-

| **Coding** | | | | |

|

| 77 |

-

| LiveCodeBench v6 (25.02-25.05) | 43.2 | 29.1 | 51.8 | **56.6** |

|

| 78 |

-

| MultiPL-E | 83.8 | 76.9 | **87.9** | 87.8 |

|

| 79 |

-

| Aider-Polyglot | 35.6 | 40.0 | **57.3** | 49.8 |

|

| 80 |

-

| **Alignment** | | | | |

|

| 81 |

-

| IFEval | 84.7 | 83.2 | **88.7** | 87.6 |

|

| 82 |

-

| Arena-Hard v2* | 69.0 | 34.1 | 79.2 | **82.7** |

|

| 83 |

-

| Creative Writing v3 | 86.0 | 78.3 | **87.5** | 85.3 |

|

| 84 |

-

| WritingBench | 85.5 | 75.4 | 85.2 | **87.3** |

|

| 85 |

-

| **Agent** | | | | |

|

| 86 |

-

| BFCL-v3 | 65.1 | 63.0 | **70.9** | 70.3 |

|

| 87 |

-

| TAU1-Retail | 59.1 | 40.1 | **71.3** | 60.9 |

|

| 88 |

-

| TAU1-Airline | 40.0 | 17.0 | **44.0** | 44.0 |

|

| 89 |

-

| TAU2-Retail | 57.0 | 48.8 | **74.6** | 57.3 |

|

| 90 |

-

| TAU2-Airline | 38.0 | 24.0 | **50.0** | 45.5 |

|

| 91 |

-

| TAU2-Telecom | 12.3 | 24.6 | **32.5** | 13.2 |

|

| 92 |

-

| **Multilingualism** | | | | |

|

| 93 |

-

| MultiIF | 67.9 | 70.7 | **77.5** | 75.8 |

|

| 94 |

-

| MMLU-ProX | 72.0 | 69.3 | **79.4** | 76.7 |

|

| 95 |

-

| INCLUDE | 71.9 | 70.9 | **79.5** | 78.9 |

|

| 96 |

-

| PolyMATH | 43.1 | 22.5 | **50.2** | 45.9 |

|

| 97 |

-

|

| 98 |

-

*: For reproducibility, we report the win rates evaluated by GPT-4.1.

|

| 99 |

-

|

| 100 |

-

## Quickstart

|

| 101 |

-

|

| 102 |

-

The code for Qwen3-Next has been merged into the main branch of Hugging Face `transformers`.

|

| 103 |

-

|

| 104 |

-

```shell

|

| 105 |

-

pip install git+https://github.com/huggingface/transformers.git@main

|

| 106 |

-

```

|

| 107 |

|

| 108 |

-

With

|

| 109 |

-

```

|

| 110 |

-

KeyError: 'qwen3_next'

|

| 111 |

-

```

|

| 112 |

|

| 113 |

-

|

| 114 |

-

```python

|

| 115 |

-

from transformers import AutoModelForCausalLM, AutoTokenizer

|

| 116 |

|

| 117 |

-

|

| 118 |

|

| 119 |

-

|

| 120 |

-

|

| 121 |

-

|

| 122 |

-

model_name,

|

| 123 |

-

dtype="auto",

|

| 124 |

-

device_map="auto",

|

| 125 |

-

)

|

| 126 |

|

| 127 |

-

|

| 128 |

-

prompt = "Give me a short introduction to large language model."

|

| 129 |

-

messages = [

|

| 130 |

-

{"role": "user", "content": prompt},

|

| 131 |

-

]

|

| 132 |

-

text = tokenizer.apply_chat_template(

|

| 133 |

-

messages,

|

| 134 |

-

tokenize=False,

|

| 135 |

-

add_generation_prompt=True,

|

| 136 |

-

)

|

| 137 |

-

model_inputs = tokenizer([text], return_tensors="pt").to(model.device)

|

| 138 |

|

| 139 |

-

|

| 140 |

-

generated_ids = model.generate(

|

| 141 |

-

**model_inputs,

|

| 142 |

-

max_new_tokens=16384,

|

| 143 |

-

)

|

| 144 |

-

output_ids = generated_ids[0][len(model_inputs.input_ids[0]):].tolist()

|

| 145 |

|

| 146 |

-

|

| 147 |

|

| 148 |

-

|

| 149 |

-

```

|

| 150 |

|

| 151 |

-

|

| 152 |

-

> Multi-Token Prediction (MTP) is not generally available in Hugging Face Transformers.

|

| 153 |

|

| 154 |

-

|

| 155 |

-

|

| 156 |

-

|

| 157 |

|

| 158 |

-

|

| 159 |

-

> Depending on the inference settings, you may observe better efficiency with [`flash-linear-attention`](https://github.com/fla-org/flash-linear-attention#installation) and [`causal-conv1d`](https://github.com/Dao-AILab/causal-conv1d).

|

| 160 |

-

> See the above links for detailed instructions and requirements.

|

| 161 |

|

|

|

|

| 162 |

|

| 163 |

-

|

|

|

|

|

|

|

| 164 |

|

| 165 |

-

|

| 166 |

|

| 167 |

-

|

|

|

|

|

|

|

| 168 |

|

| 169 |

-

|

| 170 |

-

SGLang could be used to launch a server with OpenAI-compatible API service.

|

| 171 |

|

| 172 |

-

|

| 173 |

-

```shell

|

| 174 |

-

pip install 'sglang[all] @ git+https://github.com/sgl-project/sglang.git@main#subdirectory=python'

|

| 175 |

-

```

|

| 176 |

|

| 177 |

-

|

| 178 |

-

|

| 179 |

-

|

| 180 |

-

|

|

|

|

|

|

|

|

|

|

| 181 |

|

| 182 |

-

|

| 183 |

-

```shell

|

| 184 |

-

SGLANG_ALLOW_OVERWRITE_LONGER_CONTEXT_LEN=1 python -m sglang.launch_server --model-path Qwen/Qwen3-Next-80B-A3B-Instruct --port 30000 --tp-size 4 --context-length 262144 --mem-fraction-static 0.8 --speculative-algo NEXTN --speculative-num-steps 3 --speculative-eagle-topk 1 --speculative-num-draft-tokens 4

|

| 185 |

-

```

|

| 186 |

|

| 187 |

-

|

| 188 |

-

> The environment variable `SGLANG_ALLOW_OVERWRITE_LONGER_CONTEXT_LEN=1` is required at the moment.

|

| 189 |

|

| 190 |

-

|

| 191 |

-

|

|

|

|

|

|

|

|

|

|

|

|

|

| 192 |

|

| 193 |

-

|

| 194 |

|

| 195 |

-

|

| 196 |

-

vLLM could be used to launch a server with OpenAI-compatible API service.

|

| 197 |

|

| 198 |

-

|

| 199 |

-

|

| 200 |

-

|

| 201 |

-

```

|

| 202 |

|

| 203 |

-

The following command can be used to create an API endpoint at `http://localhost:8000/v1` with maximum context length 256K tokens using tensor parallel on 4 GPUs.

|

| 204 |

-

```shell

|

| 205 |

-

VLLM_ALLOW_LONG_MAX_MODEL_LEN=1 vllm serve Qwen/Qwen3-Next-80B-A3B-Instruct --port 8000 --tensor-parallel-size 4 --max-model-len 262144

|

| 206 |

```

|

| 207 |

|

| 208 |

-

|

| 209 |

-

```shell

|

| 210 |

-

VLLM_ALLOW_LONG_MAX_MODEL_LEN=1 vllm serve Qwen/Qwen3-Next-80B-A3B-Instruct --port 8000 --tensor-parallel-size 4 --max-model-len 262144 --speculative-config '{"method":"qwen3_next_mtp","num_speculative_tokens":2}'

|

| 211 |

-

```

|

| 212 |

|

| 213 |

-

|

| 214 |

-

|

| 215 |

-

|

| 216 |

-

> [!Note]

|

| 217 |

-

> The default context length is 256K. Consider reducing the context length to a smaller value, e.g., `32768`, if the server fail to start.

|

| 218 |

-

|

| 219 |

-

## Agentic Use

|

| 220 |

-

|

| 221 |

-

Qwen3 excels in tool calling capabilities. We recommend using [Qwen-Agent](https://github.com/QwenLM/Qwen-Agent) to make the best use of agentic ability of Qwen3. Qwen-Agent encapsulates tool-calling templates and tool-calling parsers internally, greatly reducing coding complexity.

|

| 222 |

-

|

| 223 |

-

To define the available tools, you can use the MCP configuration file, use the integrated tool of Qwen-Agent, or integrate other tools by yourself.

|

| 224 |

-

```python

|

| 225 |

-

from qwen_agent.agents import Assistant

|

| 226 |

-

|

| 227 |

-

# Define LLM

|

| 228 |

-

llm_cfg = {

|

| 229 |

-

'model': 'Qwen3-Next-80B-A3B-Instruct',

|

| 230 |

-

|

| 231 |

-

# Use a custom endpoint compatible with OpenAI API:

|

| 232 |

-

'model_server': 'http://localhost:8000/v1', # api_base

|

| 233 |

-

'api_key': 'EMPTY',

|

| 234 |

-

}

|

| 235 |

-

|

| 236 |

-

# Define Tools

|

| 237 |

-

tools = [

|

| 238 |

-

{'mcpServers': { # You can specify the MCP configuration file

|

| 239 |

-

'time': {

|

| 240 |

-

'command': 'uvx',

|

| 241 |

-

'args': ['mcp-server-time', '--local-timezone=Asia/Shanghai']

|

| 242 |

-

},

|

| 243 |

-

"fetch": {

|

| 244 |

-

"command": "uvx",

|

| 245 |

-

"args": ["mcp-server-fetch"]

|

| 246 |

-

}

|

| 247 |

-

}

|

| 248 |

-

},

|

| 249 |

-

'code_interpreter', # Built-in tools

|

| 250 |

-

]

|

| 251 |

|

| 252 |

-

|

| 253 |

-

bot = Assistant(llm=llm_cfg, function_list=tools)

|

| 254 |

|

| 255 |

-

|

| 256 |

-

|

| 257 |

-

|

| 258 |

-

pass

|

| 259 |

-

print(responses)

|

| 260 |

```

|

| 261 |

|

|

|

|

| 262 |

|

| 263 |

-

|

|

|

|

| 264 |

|

| 265 |

-

|

| 266 |

-

For conversations where the total length (including both input and output) significantly exceeds this limit, we recommend using RoPE scaling techniques to handle long texts effectively.

|

| 267 |

-

We have validated the model's performance on context lengths of up to 1 million tokens using the [YaRN](https://arxiv.org/abs/2309.00071) method.

|

| 268 |

|

| 269 |

-

YaRN is currently supported by several inference frameworks, e.g., `transformers`, `vllm` and `sglang`.

|

| 270 |

-

In general, there are two approaches to enabling YaRN for supported frameworks:

|

| 271 |

|

| 272 |

-

|

| 273 |

-

In the `config.json` file, add the `rope_scaling` fields:

|

| 274 |

-

```json

|

| 275 |

-

{

|

| 276 |

-

...,

|

| 277 |

-

"rope_scaling": {

|

| 278 |

-

"rope_type": "yarn",

|

| 279 |

-

"factor": 4.0,

|

| 280 |

-

"original_max_position_embeddings": 262144

|

| 281 |

-

}

|

| 282 |

-

}

|

| 283 |

-

```

|

| 284 |

|

| 285 |

-

-

|

| 286 |

|

| 287 |

-

|

| 288 |

-

|

| 289 |

-

|

| 290 |

-

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 291 |

|

| 292 |

-

|

| 293 |

-

```shell

|

| 294 |

-

SGLANG_ALLOW_OVERWRITE_LONGER_CONTEXT_LEN=1 python -m sglang.launch_server ... --json-model-override-args '{"rope_scaling":{"rope_type":"yarn","factor":4.0,"original_max_position_embeddings":262144}}' --context-length 1010000

|

| 295 |

-

```

|

| 296 |

|

| 297 |

-

|

| 298 |

-

> All the notable open-source frameworks implement static YaRN, which means the scaling factor remains constant regardless of input length, **potentially impacting performance on shorter texts.**

|

| 299 |

-

> We advise adding the `rope_scaling` configuration only when processing long contexts is required.

|

| 300 |

-

> It is also recommended to modify the `factor` as needed. For example, if the typical context length for your application is 524,288 tokens, it would be better to set `factor` as 2.0.

|

| 301 |

|

| 302 |

-

####

|

| 303 |

|

| 304 |

-

|

|

|

|

|

|

|

| 305 |

|

| 306 |

-

|

| 307 |

-

|---------------------------------------------|---------|------|------|------|------|------|------|------|------|------|------|------|------|------|------|-------|

|

| 308 |

-

| Qwen3-30B-A3B-Instruct-2507 | 86.8 | 98.0 | 96.7 | 96.9 | 97.2 | 93.4 | 91.0 | 89.1 | 89.8 | 82.5 | 83.6 | 78.4 | 79.7 | 77.6 | 75.7 | 72.8 |

|

| 309 |

-

| Qwen3-235B-A22B-Instruct-2507 | 92.5 | 98.5 | 97.6 | 96.9 | 97.3 | 95.8 | 94.9 | 93.9 | 94.5 | 91.0 | 92.2 | 90.9 | 87.8 | 84.8 | 86.5 | 84.5 |

|

| 310 |

-

| Qwen3-Next-80B-A3B-Instruct | 91.8 | 98.5 | 99.0 | 98.0 | 98.7 | 97.6 | 95.0 | 96.0 | 94.0 | 93.5 | 91.7 | 86.9 | 85.5 | 81.7 | 80.3 | 80.3 |

|

| 311 |

|

| 312 |

-

|

| 313 |

-

|

|

|

|

|

|

|

| 314 |

|

| 315 |

-

|

| 316 |

|

| 317 |

-

|

|

|

|

|

|

|

|

|

|

| 318 |

|

| 319 |

-

|

| 320 |

-

- We suggest using `Temperature=0.7`, `TopP=0.8`, `TopK=20`, and `MinP=0`.

|

| 321 |

-

- For supported frameworks, you can adjust the `presence_penalty` parameter between 0 and 2 to reduce endless repetitions. However, using a higher value may occasionally result in language mixing and a slight decrease in model performance.

|

| 322 |

|

| 323 |

-

|

| 324 |

|

| 325 |

-

|

| 326 |

-

|

| 327 |

-

|

|

|

|

| 328 |

|

| 329 |

-

### Citation

|

| 330 |

|

| 331 |

-

|

| 332 |

|

| 333 |

-

|

| 334 |

-

@misc{qwen3technicalreport,

|

| 335 |

-

title={Qwen3 Technical Report},

|

| 336 |

-

author={Qwen Team},

|

| 337 |

-

year={2025},

|

| 338 |

-

eprint={2505.09388},

|

| 339 |

-

archivePrefix={arXiv},

|

| 340 |

-

primaryClass={cs.CL},

|

| 341 |

-

url={https://arxiv.org/abs/2505.09388},

|

| 342 |

-

}

|

| 343 |

-

|

| 344 |

-

@article{qwen2.5-1m,

|

| 345 |

-

title={Qwen2.5-1M Technical Report},

|

| 346 |

-

author={An Yang and Bowen Yu and Chengyuan Li and Dayiheng Liu and Fei Huang and Haoyan Huang and Jiandong Jiang and Jianhong Tu and Jianwei Zhang and Jingren Zhou and Junyang Lin and Kai Dang and Kexin Yang and Le Yu and Mei Li and Minmin Sun and Qin Zhu and Rui Men and Tao He and Weijia Xu and Wenbiao Yin and Wenyuan Yu and Xiafei Qiu and Xingzhang Ren and Xinlong Yang and Yong Li and Zhiying Xu and Zipeng Zhang},

|

| 347 |

-

journal={arXiv preprint arXiv:2501.15383},

|

| 348 |

-

year={2025}

|

| 349 |

-

}

|

| 350 |

-

```

|

|

|

|

| 1 |

+

# Introduction

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 2 |

|

| 3 |

+

**FlagOS** is a unified heterogeneous computing software stack for large models, co-developed with leading global chip manufacturers. With core technologies such as the **FlagScale** distributed training/inference framework, **FlagGems** universal operator library, **FlagCX** communication library, and **FlagTree** unified compiler, the **FlagRelease** platform leverages the FlagOS stack to automatically produce and release various combinations of <chip + open-source model>. This enables efficient and automated model migration across diverse chips, opening a new chapter for large model deployment and application.

|

|

|

|

|

|

|

|

|

|

| 4 |

|

| 5 |

+

Based on this, the **Qwen3-Next-80B-A3B-Instruct-FlagOS** model is adapted for the Nvidia chip using the FlagOS software stack, enabling:

|

|

|

|

|

|

|

| 6 |

|

| 7 |

+

### Integrated Deployment

|

| 8 |

|

| 9 |

+

- Deep integration with the open-source [FlagScale framework](https://github.com/FlagOpen/FlagScale)

|

| 10 |

+

- Out-of-the-box inference scripts with pre-configured hardware and software parameters

|

| 11 |

+

- Released **FlagOS** container image supporting deployment within minutes

|

|

|

|

|

|

|

|

|

|

|

|

|

| 12 |

|

| 13 |

+

### Consistency Validation

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 14 |

|

| 15 |

+

- Rigorously evaluated through benchmark testing: Performance and results from the FlagOS software stack are compared against native stacks on multiple public.

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 16 |

|

| 17 |

+

# Technical Overview

|

| 18 |

|

| 19 |

+

## **FlagScale Distributed Training and Inference Framework**

|

|

|

|

| 20 |

|

| 21 |

+

FlagScale is an end-to-end framework for large models across heterogeneous computing resources, maximizing computational efficiency and ensuring model validity through core technologies. Its key advantages include:

|

|

|

|

| 22 |

|

| 23 |

+

- **Unified Deployment Interface:** Standardized command-line tools support one-click service deployment across multiple hardware platforms, significantly reducing adaptation costs in heterogeneous environments.

|

| 24 |

+

- **Intelligent Parallel Optimization:** Automatically generates optimal distributed parallel strategies based on chip computing characteristics, achieving dynamic load balancing of computation/communication resources.

|

| 25 |

+

- **Seamless Operator Switching:** Deep integration with the FlagGems operator library allows high-performance operators to be invoked via environment variables without modifying model code.

|

| 26 |

|

| 27 |

+

## **FlagGems Universal Large-Model Operator Library**

|

|

|

|

|

|

|

| 28 |

|

| 29 |

+

FlagGems is a Triton-based, cross-architecture operator library collaboratively developed with industry partners. Its core strengths include:

|

| 30 |

|

| 31 |

+

- **Full-stack Coverage**: Over 100 operators, with a broader range of operator types than competing libraries.

|

| 32 |

+

- **Ecosystem Compatibility**: Supports 7 accelerator backends. Ongoing optimizations have significantly improved performance.

|

| 33 |

+

- **High Efficiency**: Employs unique code generation and runtime optimization techniques for faster secondary development and better runtime performance compared to alternatives.

|

| 34 |

|

| 35 |

+

## **FlagEval Evaluation Framework**

|

| 36 |

|

| 37 |

+

FlagEval (Libra)** is a comprehensive evaluation system and open platform for large models launched in 2023. It aims to establish scientific, fair, and open benchmarks, methodologies, and tools to help researchers assess model and training algorithm performance. It features:

|

| 38 |

+

- **Multi-dimensional Evaluation**: Supports 800+ model evaluations across NLP, CV, Audio, and Multimodal fields, covering 20+ downstream tasks including language understanding and image-text generation.

|

| 39 |

+

- **Industry-Grade Use Cases**: Has completed horizontal evaluations of mainstream large models, providing authoritative benchmarks for chip-model performance validation.

|

| 40 |

|

| 41 |

+

# Evaluation Results

|

|

|

|

| 42 |

|

| 43 |

+

## Benchmark Result

|

|

|

|

|

|

|

|

|

|

| 44 |

|

| 45 |

+

| Metrics | Qwen3-Next-80B-A3B-Instruct-H100-CUDA | Qwen3-Next-80B-A3B-Instruct-FlagOS |

|

| 46 |

+

|-------------------|--------------------------|-----------------------------|

|

| 47 |

+

| AIME_0fewshot_@avg1 | 0.800 | 0.800 |

|

| 48 |

+

| GPQA_0fewshot_@avg1 | 0.643 | 0.634 |

|

| 49 |

+

| LiveBench-0fewshot_@avg1 | 0.652 | 0.640 |

|

| 50 |

+

| MMLU_5fewshot_@avg1 | 0.715 | 0.710 |

|

| 51 |

+

| MUSR_0fewshot_@avg | 0.532 | 0.532 |

|

| 52 |

|

| 53 |

+

# User Guide

|

|

|

|

|

|

|

|

|

|

| 54 |

|

| 55 |

+

**Environment Setup**

|

|

|

|

| 56 |

|

| 57 |

+

| Item | Version |

|

| 58 |

+

| ------------- | ------------------------------------------------------------ |

|

| 59 |

+

| Docker Version | Docker version 28.1.0, build 4d8c241 |

|

| 60 |

+

| Operating System | Ubuntu 22.04.5 LTS |

|

| 61 |

+

| FlagScale | Version: 0.8.0 |

|

| 62 |

+

| FlagGems | Version: 3.0 |

|

| 63 |

|

| 64 |

+

## Operation Steps

|

| 65 |

|

| 66 |

+

### Download Open-source Model Weights

|

|

|

|

| 67 |

|

| 68 |

+

```bash

|

| 69 |

+

pip install modelscope

|

| 70 |

+

modelscope download --model Qwen/Qwen3-Next-80B-A3B-Instruct --local_dir /share/Qwen3-Next-80B-A3B-Instruct

|

|

|

|

| 71 |

|

|

|

|

|

|

|

|

|

|

| 72 |

```

|

| 73 |

|

| 74 |

+

### Download FlagOS Image

|

|

|

|

|

|

|

|

|

|

| 75 |

|

| 76 |

+

```bash

|

| 77 |

+

docker pull harbor.baai.ac.cn/flagrelease-public/flagrelease_nvidia_qwen3next

|

| 78 |

+

```

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 79 |

|

| 80 |

+

### Start the inference service

|

|

|

|

| 81 |

|

| 82 |

+

```bash

|

| 83 |

+

#Container Startup

|

| 84 |

+

docker run --rm --init --detach --net=host --uts=host --ipc=host --security-opt=seccomp=unconfined --privileged=true --ulimit stack=67108864 --ulimit memlock=-1 --ulimit nofile=1048576:1048576 --shm-size=32G -v /share:/share --gpus all --name flagos harbor.baai.ac.cn/flagrelease-public/flagrelease_nvidia_qwen3next sleep infinity

|

|

|

|

|

|

|

| 85 |

```

|

| 86 |

|

| 87 |

+

### Serve

|

| 88 |

|

| 89 |

+

```bash

|

| 90 |

+

flagscale serve qwen3_next

|

| 91 |

|

| 92 |

+

```

|

|

|

|

|

|

|

| 93 |

|

|

|

|

|

|

|

| 94 |

|

| 95 |

+

## Service Invocation

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 96 |

|

| 97 |

+

### API-based Invocation Script

|

| 98 |

|

| 99 |

+

```bash

|

| 100 |

+

import openai

|

| 101 |

+

openai.api_key = "EMPTY"

|

| 102 |

+

openai.base_url = "http://<server_ip>:9010/v1/"

|

| 103 |

+

model = "Qwen3-Next-80B-A3B-Instruct-nvidia-flagos"

|

| 104 |

+

messages = [

|

| 105 |

+

{"role": "system", "content": "You are a helpful assistant."},

|

| 106 |

+

{"role": "user", "content": "What's the weather like today?"}

|

| 107 |

+

]

|

| 108 |

+

response = openai.chat.completions.create(

|

| 109 |

+

model=model,

|

| 110 |

+

messages=messages,

|

| 111 |

+

stream=False,

|

| 112 |

+

)

|

| 113 |

+

for item in response:

|

| 114 |

+

print(item)

|

| 115 |

|

| 116 |

+

```

|

|

|

|

|

|

|

|

|

|

| 117 |

|

| 118 |

+

### AnythingLLM Integration Guide

|

|

|

|

|

|

|

|

|

|

| 119 |

|

| 120 |

+

#### 1. Download & Install

|

| 121 |

|

| 122 |

+

- Visit the official site: https://anythingllm.com/

|

| 123 |

+

- Choose the appropriate version for your OS (Windows/macOS/Linux)

|

| 124 |

+

- Follow the installation wizard to complete the setup

|

| 125 |

|

| 126 |

+

#### 2. Configuration

|

|

|

|

|

|

|

|

|

|

|

|

|

| 127 |

|

| 128 |

+

- Launch AnythingLLM

|

| 129 |

+

- Open settings (bottom left, fourth tab)

|

| 130 |

+

- Configure core LLM parameters

|

| 131 |

+

- Click "Save Settings" to apply changes

|

| 132 |

|

| 133 |

+

#### 3. Model Interaction

|

| 134 |

|

| 135 |

+

- After model loading is complete:

|

| 136 |

+

- Click **"New Conversation"**

|

| 137 |

+

- Enter your question (e.g., “Explain the basics of quantum computing”)

|

| 138 |

+

- Click the send button to get a response

|

| 139 |

|

| 140 |

+

# Contributing

|

|

|

|

|

|

|

| 141 |

|

| 142 |

+

We warmly welcome global developers to join us:

|

| 143 |

|

| 144 |

+

1. Submit Issues to report problems

|

| 145 |

+

2. Create Pull Requests to contribute code

|

| 146 |

+

3. Improve technical documentation

|

| 147 |

+

4. Expand hardware adaptation support

|

| 148 |

|

|

|

|

| 149 |

|

| 150 |

+

# License

|

| 151 |

|

| 152 |

+

本模型的权重来源于Qwen/Qwen3-Next-80B-A3B-Instruct,以apache2.0协议https://www.apache.org/licenses/LICENSE-2.0.txt开源。

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|