Upload folder using huggingface_hub

- README.md +227 -0

- chat_template.json +3 -0

- config.json +80 -0

- configuration.json +1 -0

- generation_config.json +14 -0

- merges.txt +0 -0

- model.safetensors.index.json +0 -0

- preprocessor_config.json +21 -0

- tokenizer.json +0 -0

- tokenizer_config.json +239 -0

- video_preprocessor_config.json +21 -0

- vocab.json +0 -0

README.md

ADDED

|

@@ -0,0 +1,227 @@

|

| 1 |

+

---

|

| 2 |

+

library_name: transformers

|

| 3 |

+

license: apache-2.0

|

| 4 |

+

pipeline_tag: text-generation

|

| 5 |

+

tags:

|

| 6 |

+

- FP8

|

| 7 |

+

- vLLM

|

| 8 |

+

base_model:

|

| 9 |

+

- Qwen/Qwen3-VL-235B-A22B-Thinking

|

| 10 |

+

base_model_relation: quantized

|

| 11 |

+

---

|

| 12 |

+

# Qwen3-VL-235B-A22B-Thinking-FP8

|

| 13 |

+

Base Model: [Qwen/Qwen3-VL-235B-A22B-Thinking](https://www.modelscope.cn/models/Qwen/Qwen3-VL-235B-A22B-Thinking)

|

| 14 |

+

|

| 15 |

+

### 【Dependencies / Installation】

|

| 16 |

+

As of **2025-09-26**, create a fresh Python environment and run:

|

| 17 |

+

```bash

|

| 18 |

+

pip install -U pip

|

| 19 |

+

pip install uv

|

| 20 |

+

pip install git+https://github.com/huggingface/transformers

|

| 21 |

+

pip install accelerate

|

| 22 |

+

pip install qwen-vl-utils==0.0.14

|

| 23 |

+

# pip install 'vllm>0.10.2' # If this does not work, use the command below.

|

| 24 |

+

uv pip install -U vllm \

|

| 25 |

+

--torch-backend=auto \

|

| 26 |

+

--extra-index-url https://wheels.vllm.ai/nightly

|

| 27 |

+

```

|

| 28 |

+

Alternatively, use the Docker image from the Qwen3-VL team:

|

| 29 |

+

```

|

| 30 |

+

docker run --gpus all --ipc=host --network=host --rm --name qwen3vl -it qwenllm/qwenvl:qwen3vl-cu128 bash

|

| 31 |

+

```

|

| 32 |

+

|

| 33 |

+

### 【vLLM Startup Command】

|

| 34 |

+

<i>Note: When launching with TP=8, include `--enable-expert-parallel`;

|

| 35 |

+

otherwise the expert tensors cannot be evenly sharded across the GPUs.</i>
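
One plausible reading of this note uses values that appear in this commit's `config.json`: `moe_intermediate_size` is 1536 and `weight_block_size` is 128x128. Under plain tensor parallelism each of 8 GPUs would receive a 1536 / 8 = 192-wide slice of every expert, which is not a whole number of 128-wide FP8 blocks. The check below is only a sketch of that arithmetic; `shard_fits_fp8_blocks` is a hypothetical helper and does not reproduce vLLM's actual sharding logic.

```python
# Values copied from config.json in this commit.
MOE_INTERMEDIATE_SIZE = 1536   # per-expert FFN width
WEIGHT_BLOCK_SIZE = 128        # edge of one FP8 block-quantization tile


def shard_fits_fp8_blocks(tp_size: int) -> bool:
    """Hypothetical helper: True if an even tensor-parallel split of one
    expert lands on whole quantization blocks."""
    if MOE_INTERMEDIATE_SIZE % tp_size != 0:
        return False
    shard_width = MOE_INTERMEDIATE_SIZE // tp_size
    return shard_width % WEIGHT_BLOCK_SIZE == 0


for tp in (2, 4, 8):
    # Prints the TP size, the per-GPU slice width, and whether it fits.
    print(tp, MOE_INTERMEDIATE_SIZE // tp, shard_fits_fp8_blocks(tp))
```

With `--enable-expert-parallel`, whole experts are placed on each GPU instead of being sliced (`num_experts` is 128, so 16 per GPU on 8 GPUs), which would sidestep this constraint.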

|

| 36 |

+

|

| 37 |

+

```

|

| 38 |

+

CONTEXT_LENGTH=32768

|

| 39 |

+

|

| 40 |

+

vllm serve \

|

| 41 |

+

tclf90/Qwen3-VL-235B-A22B-Thinking-FP8 \

|

| 42 |

+

--served-model-name My_Model \

|

| 43 |

+

--enable-expert-parallel \

|

| 44 |

+

--swap-space 16 \

|

| 45 |

+

--max-num-seqs 64 \

|

| 46 |

+

--max-model-len $CONTEXT_LENGTH \

|

| 47 |

+

--gpu-memory-utilization 0.9 \

|

| 48 |

+

--tensor-parallel-size 8 \

|

| 49 |

+

--trust-remote-code \

|

| 50 |

+

--disable-log-requests \

|

| 51 |

+

--host 0.0.0.0 \

|

| 52 |

+

--port 8000

|

| 53 |

+

```
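
Once the server is running, it should answer OpenAI-style chat requests on port 8000. The sketch below builds such a request using only the standard library. It assumes the server is reachable on `localhost`, uses the `My_Model` name from `--served-model-name`, and copies the sampling values from this commit's `generation_config.json`; the helper names are hypothetical, and `top_k` is a vLLM extension rather than part of the OpenAI schema.

```python
import json
import urllib.request

# Assumptions: server from the command above, reachable on localhost.
BASE_URL = "http://localhost:8000/v1"
MODEL_NAME = "My_Model"  # must match --served-model-name


def build_chat_payload(image_url: str, question: str, max_tokens: int = 1024) -> dict:
    """Build a chat-completions request body with one image and one question."""
    return {
        "model": MODEL_NAME,
        "messages": [
            {
                "role": "user",
                "content": [
                    {"type": "image_url", "image_url": {"url": image_url}},
                    {"type": "text", "text": question},
                ],
            }
        ],
        "max_tokens": max_tokens,
        # Sampling values taken from generation_config.json in this commit.
        "temperature": 0.8,
        "top_p": 0.95,
        "top_k": 20,
    }


def send_chat(payload: dict, base_url: str = BASE_URL) -> dict:
    """POST the payload to /chat/completions and return the parsed JSON reply."""
    request = urllib.request.Request(
        f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request) as response:
        return json.load(response)
```

Calling `send_chat(build_chat_payload(url, "Describe this image."))` returns the parsed response; the generated text should be under `choices[0]["message"]["content"]`.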

|

| 54 |

+

|

| 55 |

+

### 【Logs】

|

| 56 |

+

```

|

| 57 |

+

2025-09-27

|

| 58 |

+

1. Initial commit

|

| 59 |

+

```

|

| 60 |

+

|

| 61 |

+

### 【Model Files】

|

| 62 |

+

| File Size | Last Updated |

|

| 63 |

+

|-----------|--------------|

|

| 64 |

+

| `222GB` | `2025-09-27` |

|

| 65 |

+

|

| 66 |

+

### 【Model Download】

|

| 67 |

+

```python

|

| 68 |

+

from modelscope import snapshot_download

|

| 69 |

+

snapshot_download('tclf90/Qwen3-VL-235B-A22B-Thinking-FP8', cache_dir="your_local_path")

|

| 70 |

+

```

|

| 71 |

+

|

| 72 |

+

### 【Overview】

|

| 73 |

+

<a href="https://chat.qwenlm.ai/" target="_blank" style="margin: 2px;">

|

| 74 |

+

<img alt="Chat" src="https://img.shields.io/badge/%F0%9F%92%9C%EF%B8%8F%20Qwen%20Chat%20-536af5" style="display: inline-block; vertical-align: middle;"/>

|

| 75 |

+

</a>

|

| 76 |

+

|

| 77 |

+

# Qwen3-VL-235B-A22B-Thinking

|

| 78 |

+

|

| 79 |

+

|

| 80 |

+

Meet Qwen3-VL — the most powerful vision-language model in the Qwen series to date.

|

| 81 |

+

|

| 82 |

+

This generation delivers comprehensive upgrades across the board: superior text understanding & generation, deeper visual perception & reasoning, extended context length, enhanced spatial and video dynamics comprehension, and stronger agent interaction capabilities.

|

| 83 |

+

|

| 84 |

+

Available in Dense and MoE architectures that scale from edge to cloud, with Instruct and reasoning‑enhanced Thinking editions for flexible, on‑demand deployment.

|

| 85 |

+

|

| 86 |

+

|

| 87 |

+

#### Key Enhancements:

|

| 88 |

+

|

| 89 |

+

* **Visual Agent**: Operates PC/mobile GUIs—recognizes elements, understands functions, invokes tools, completes tasks.

|

| 90 |

+

|

| 91 |

+

* **Visual Coding Boost**: Generates Draw.io/HTML/CSS/JS from images/videos.

|

| 92 |

+

|

| 93 |

+

* **Advanced Spatial Perception**: Judges object positions, viewpoints, and occlusions; provides stronger 2D grounding and enables 3D grounding for spatial reasoning and embodied AI.

|

| 94 |

+

|

| 95 |

+

* **Long Context & Video Understanding**: Native 256K context, expandable to 1M; handles books and hours-long video with full recall and second-level indexing.

|

| 96 |

+

|

| 97 |

+

* **Enhanced Multimodal Reasoning**: Excels in STEM/Math—causal analysis and logical, evidence-based answers.

|

| 98 |

+

|

| 99 |

+

* **Upgraded Visual Recognition**: Broader, higher-quality pretraining enables the model to “recognize everything”—celebrities, anime, products, landmarks, flora/fauna, etc.

|

| 100 |

+

|

| 101 |

+

* **Expanded OCR**: Supports 32 languages (up from 19); robust in low light, blur, and tilt; better with rare/ancient characters and jargon; improved long-document structure parsing.

|

| 102 |

+

|

| 103 |

+

* **Text Understanding on par with pure LLMs**: Seamless text–vision fusion for lossless, unified comprehension.

|

| 104 |

+

|

| 105 |

+

|

| 106 |

+

#### Model Architecture Updates:

|

| 107 |

+

|

| 108 |

+

<p align="center">

|

| 109 |

+

<img src="https://qianwen-res.oss-accelerate.aliyuncs.com/Qwen3-VL/qwen3vl_arc.jpg" width="80%"/>

|

| 110 |

+

</p>

|

| 111 |

+

|

| 112 |

+

|

| 113 |

+

1. **Interleaved-MRoPE**: Full‑frequency allocation over time, width, and height via robust positional embeddings, enhancing long‑horizon video reasoning.

|

| 114 |

+

|

| 115 |

+

2. **DeepStack**: Fuses multi‑level ViT features to capture fine‑grained details and sharpen image–text alignment.

|

| 116 |

+

|

| 117 |

+

3. **Text–Timestamp Alignment:** Moves beyond T‑RoPE to precise, timestamp‑grounded event localization for stronger video temporal modeling.
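
To make the first item more concrete: `config.json` in this commit sets `head_dim` to 128 (64 rotary frequency slots), `mrope_section` to `[24, 20, 20]`, and `mrope_interleaved` to `true`. The sketch below assumes that "interleaved" means the time, height, and width axes take frequency slots in a round-robin pattern, with the leftover slots going to time. That layout is an assumption made for illustration, not a copy of the reference implementation.

```python
from collections import Counter

HEAD_DIM = 128                 # from config.json
MROPE_SECTION = (24, 20, 20)   # slots for (time, height, width), from config.json

# Each rotary slot rotates a pair of dimensions, so slots = head_dim / 2.
assert sum(MROPE_SECTION) == HEAD_DIM // 2


def interleaved_axis_layout(section=MROPE_SECTION):
    """Return the axis ("T", "H" or "W") assumed to own each frequency slot."""
    total = sum(section)
    layout = ["T"] * total                     # time owns every slot by default
    for axis, offset, count in (("H", 1, section[1]), ("W", 2, section[2])):
        # Height and width each take every third slot from their offset.
        for slot in range(offset, count * 3, 3):
            layout[slot] = axis
    return layout


layout = interleaved_axis_layout()
print("".join(layout[:12]))    # pattern of the first few slots
print(Counter(layout))         # how many slots each axis ends up with
```

Compared with a blocked layout (all time slots first, then height, then width), this spreads every axis across low and high frequencies, which is one way to read "full-frequency allocation".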

|

| 118 |

+

|

| 119 |

+

|

| 120 |

+

This is the weight repository for Qwen3-VL-235B-A22B-Thinking.

|

| 121 |

+

|

| 122 |

+

|

| 123 |

+

---

|

| 124 |

+

|

| 125 |

+

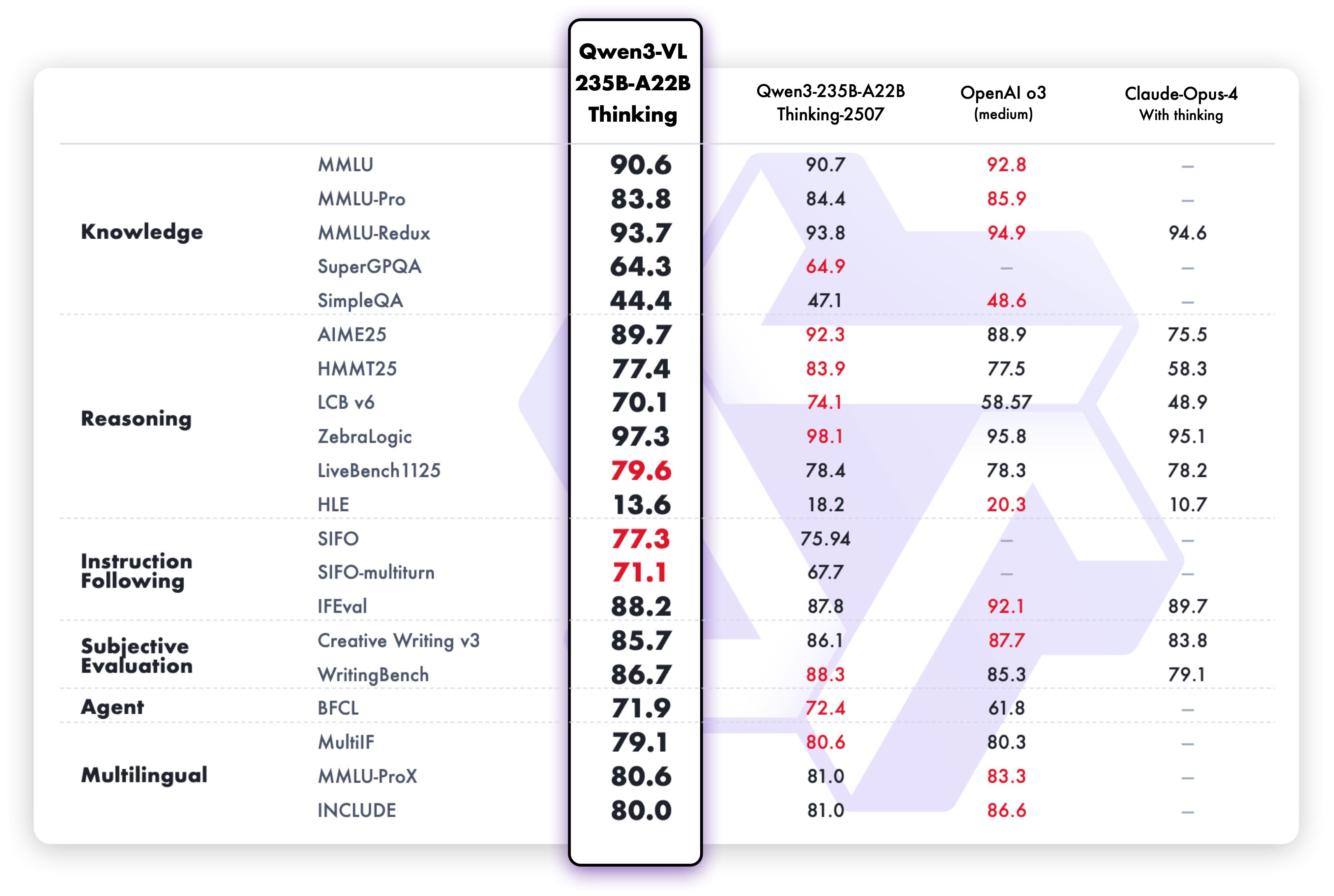

## Model Performance

|

| 126 |

+

|

| 127 |

+

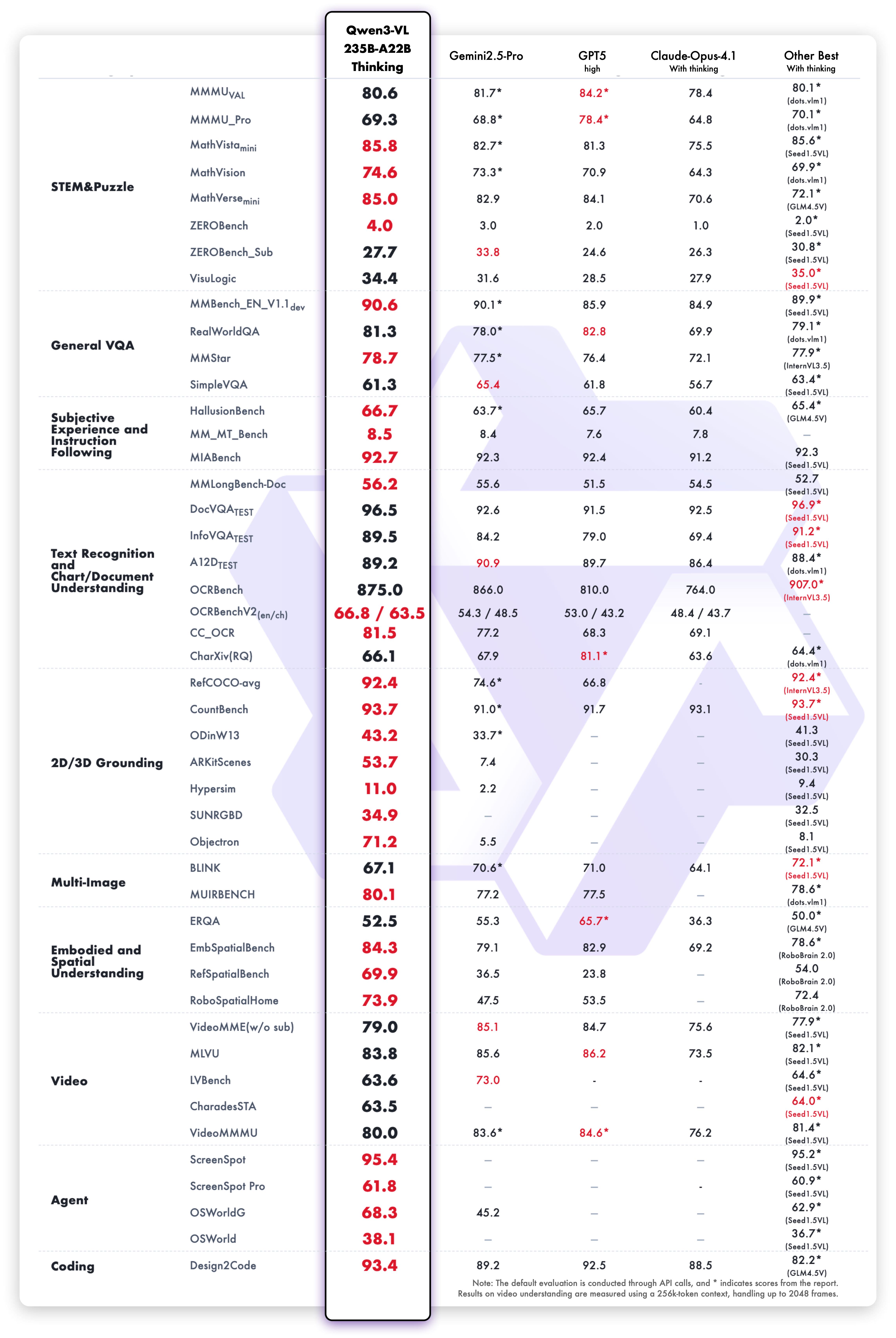

**Multimodal performance**

|

| 128 |

+

|

| 129 |

+

|

| 130 |

+

|

| 131 |

+

**Pure text performance**

|

| 132 |

+

|

| 133 |

+

|

| 134 |

+

## Quickstart

|

| 135 |

+

|

| 136 |

+

Below, we provide simple examples to show how to use Qwen3-VL with 🤖 ModelScope and 🤗 Transformers.

|

| 137 |

+

|

| 138 |

+

The code for Qwen3-VL has been merged into the latest Hugging Face transformers, and we advise you to install from source with the following command:

|

| 139 |

+

```

|

| 140 |

+

pip install git+https://github.com/huggingface/transformers

|

| 141 |

+

# pip install transformers==4.57.0 # currently, V4.57.0 is not released

|

| 142 |

+

```

|

| 143 |

+

|

| 144 |

+

### Using 🤗 Transformers to Chat

|

| 145 |

+

|

| 146 |

+

The following code snippet shows how to use the chat model with `transformers`:

|

| 147 |

+

|

| 148 |

+

```python

|

| 149 |

+

from transformers import Qwen3VLMoeForConditionalGeneration, AutoProcessor

|

| 150 |

+

|

| 151 |

+

# default: Load the model on the available device(s)

|

| 152 |

+

model = Qwen3VLMoeForConditionalGeneration.from_pretrained(

|

| 153 |

+

"Qwen/Qwen3-VL-235B-A22B-Thinking", dtype="auto", device_map="auto"

|

| 154 |

+

)

|

| 155 |

+

|

| 156 |

+

# We recommend enabling flash_attention_2 for better acceleration and memory savings, especially in multi-image and video scenarios (the variant below also needs `import torch`).

|

| 157 |

+

# model = Qwen3VLMoeForConditionalGeneration.from_pretrained(

|

| 158 |

+

# "Qwen/Qwen3-VL-235B-A22B-Thinking",

|

| 159 |

+

# dtype=torch.bfloat16,

|

| 160 |

+

# attn_implementation="flash_attention_2",

|

| 161 |

+

# device_map="auto",

|

| 162 |

+

# )

|

| 163 |

+

|

| 164 |

+

processor = AutoProcessor.from_pretrained("Qwen/Qwen3-VL-235B-A22B-Thinking")

|

| 165 |

+

|

| 166 |

+

messages = [

|

| 167 |

+

{

|

| 168 |

+

"role": "user",

|

| 169 |

+

"content": [

|

| 170 |

+

{

|

| 171 |

+

"type": "image",

|

| 172 |

+

"image": "https://qianwen-res.oss-cn-beijing.aliyuncs.com/Qwen-VL/assets/demo.jpeg",

|

| 173 |

+

},

|

| 174 |

+

{"type": "text", "text": "Describe this image."},

|

| 175 |

+

],

|

| 176 |

+

}

|

| 177 |

+

]

|

| 178 |

+

|

| 179 |

+

# Preparation for inference

|

| 180 |

+

inputs = processor.apply_chat_template(

|

| 181 |

+

messages,

|

| 182 |

+

tokenize=True,

|

| 183 |

+

add_generation_prompt=True,

|

| 184 |

+

return_dict=True,

|

| 185 |

+

return_tensors="pt"

|

| 186 |

+

)
inputs = inputs.to(model.device)  # move the input tensors to the model's device

|

| 187 |

+

|

| 188 |

+

# Inference: Generation of the output

|

| 189 |

+

generated_ids = model.generate(**inputs, max_new_tokens=128)

|

| 190 |

+

generated_ids_trimmed = [

|

| 191 |

+

out_ids[len(in_ids) :] for in_ids, out_ids in zip(inputs.input_ids, generated_ids)

|

| 192 |

+

]

|

| 193 |

+

output_text = processor.batch_decode(

|

| 194 |

+

generated_ids_trimmed, skip_special_tokens=True, clean_up_tokenization_spaces=False

|

| 195 |

+

)

|

| 196 |

+

print(output_text)

|

| 197 |

+

```
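
Because this is a Thinking model, the chat template in this commit ends the generation prompt with `<think>\n`, so the decoded text would normally hold the reasoning, a closing `</think>` tag, and then the final answer. A minimal sketch for separating the two follows; it mirrors the string splitting the template applies to assistant turns. `split_thinking` is a hypothetical helper name, the sample string is made up, and with `max_new_tokens=128` the model may not reach `</think>` at all.

```python
def split_thinking(text: str) -> tuple[str, str]:
    """Split decoded output into (reasoning, answer) around the </think> tag.

    If no closing tag is present (for example, generation was cut off),
    everything is treated as reasoning and the answer is empty.
    """
    if "</think>" not in text:
        return text.split("<think>")[-1].strip("\n"), ""
    # Same splitting order as the chat template uses for assistant messages.
    reasoning = text.split("</think>")[0].rstrip("\n").split("<think>")[-1].lstrip("\n")
    answer = text.split("</think>")[-1].lstrip("\n")
    return reasoning, answer


# Made-up sample text, only to show the shape of the result.
sample = "The picture seems to show a beach.\n</think>\n\nA beach scene."
reasoning, answer = split_thinking(sample)
print(repr(reasoning))
print(repr(answer))
```

In the snippet above this would be applied to each element of `output_text`.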

|

| 198 |

+

|

| 199 |

+

|

| 200 |

+

|

| 201 |

+

## Citation

|

| 202 |

+

|

| 203 |

+

If you find our work helpful, please consider citing it.

|

| 204 |

+

|

| 205 |

+

```

|

| 206 |

+

@misc{qwen2.5-VL,

|

| 207 |

+

title = {Qwen2.5-VL},

|

| 208 |

+

url = {https://qwenlm.github.io/blog/qwen2.5-vl/},

|

| 209 |

+

author = {Qwen Team},

|

| 210 |

+

month = {January},

|

| 211 |

+

year = {2025}

|

| 212 |

+

}

|

| 213 |

+

|

| 214 |

+

@article{Qwen2VL,

|

| 215 |

+

title={Qwen2-VL: Enhancing Vision-Language Model's Perception of the World at Any Resolution},

|

| 216 |

+

author={Wang, Peng and Bai, Shuai and Tan, Sinan and Wang, Shijie and Fan, Zhihao and Bai, Jinze and Chen, Keqin and Liu, Xuejing and Wang, Jialin and Ge, Wenbin and Fan, Yang and Dang, Kai and Du, Mengfei and Ren, Xuancheng and Men, Rui and Liu, Dayiheng and Zhou, Chang and Zhou, Jingren and Lin, Junyang},

|

| 217 |

+

journal={arXiv preprint arXiv:2409.12191},

|

| 218 |

+

year={2024}

|

| 219 |

+

}

|

| 220 |

+

|

| 221 |

+

@article{Qwen-VL,

|

| 222 |

+

title={Qwen-VL: A Versatile Vision-Language Model for Understanding, Localization, Text Reading, and Beyond},

|

| 223 |

+

author={Bai, Jinze and Bai, Shuai and Yang, Shusheng and Wang, Shijie and Tan, Sinan and Wang, Peng and Lin, Junyang and Zhou, Chang and Zhou, Jingren},

|

| 224 |

+

journal={arXiv preprint arXiv:2308.12966},

|

| 225 |

+

year={2023}

|

| 226 |

+

}

|

| 227 |

+

```

|

chat_template.json

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

{

|

| 2 |

+

"chat_template": "{%- set image_count = namespace(value=0) %}\n{%- set video_count = namespace(value=0) %}\n{%- macro render_content(content, do_vision_count) %}\n {%- if content is string %}\n {{- content }}\n {%- else %}\n {%- for item in content %}\n {%- if 'image' in item or 'image_url' in item or item.type == 'image' %}\n {%- if do_vision_count %}\n {%- set image_count.value = image_count.value + 1 %}\n {%- endif %}\n {%- if add_vision_id %}Picture {{ image_count.value }}: {% endif -%}\n <|vision_start|><|image_pad|><|vision_end|>\n {%- elif 'video' in item or item.type == 'video' %}\n {%- if do_vision_count %}\n {%- set video_count.value = video_count.value + 1 %}\n {%- endif %}\n {%- if add_vision_id %}Video {{ video_count.value }}: {% endif -%}\n <|vision_start|><|video_pad|><|vision_end|>\n {%- elif 'text' in item %}\n {{- item.text }}\n {%- endif %}\n {%- endfor %}\n {%- endif %}\n{%- endmacro %}\n{%- if tools %}\n {{- '<|im_start|>system\\n' }}\n {%- if messages[0].role == 'system' %}\n {{- render_content(messages[0].content, false) + '\\n\\n' }}\n {%- endif %}\n {{- \"# Tools\\n\\nYou may call one or more functions to assist with the user query.\\n\\nYou are provided with function signatures within <tools></tools> XML tags:\\n<tools>\" }}\n {%- for tool in tools %}\n {{- \"\\n\" }}\n {{- tool | tojson }}\n {%- endfor %}\n {{- \"\\n</tools>\\n\\nFor each function call, return a json object with function name and arguments within <tool_call></tool_call> XML tags:\\n<tool_call>\\n{\\\"name\\\": <function-name>, \\\"arguments\\\": <args-json-object>}\\n</tool_call><|im_end|>\\n\" }}\n{%- else %}\n {%- if messages[0].role == 'system' %}\n {{- '<|im_start|>system\\n' + render_content(messages[0].content, false) + '<|im_end|>\\n' }}\n {%- endif %}\n{%- endif %}\n{%- set ns = namespace(multi_step_tool=true, last_query_index=messages|length - 1) %}\n{%- for message in messages[::-1] %}\n {%- set index = (messages|length - 1) - loop.index0 %}\n {%- if 
ns.multi_step_tool and message.role == \"user\" %}\n {%- set content = render_content(message.content, false) %}\n {%- if not(content.startswith('<tool_response>') and content.endswith('</tool_response>')) %}\n {%- set ns.multi_step_tool = false %}\n {%- set ns.last_query_index = index %}\n {%- endif %}\n {%- endif %}\n{%- endfor %}\n{%- for message in messages %}\n {%- set content = render_content(message.content, True) %}\n {%- if (message.role == \"user\") or (message.role == \"system\" and not loop.first) %}\n {{- '<|im_start|>' + message.role + '\\n' + content + '<|im_end|>' + '\\n' }}\n {%- elif message.role == \"assistant\" %}\n {%- set reasoning_content = '' %}\n {%- if message.reasoning_content is string %}\n {%- set reasoning_content = message.reasoning_content %}\n {%- else %}\n {%- if '</think>' in content %}\n {%- set reasoning_content = content.split('</think>')[0].rstrip('\\n').split('<think>')[-1].lstrip('\\n') %}\n {%- set content = content.split('</think>')[-1].lstrip('\\n') %}\n {%- endif %}\n {%- endif %}\n {%- if loop.index0 > ns.last_query_index %}\n {%- if loop.last or (not loop.last and reasoning_content) %}\n {{- '<|im_start|>' + message.role + '\\n<think>\\n' + reasoning_content.strip('\\n') + '\\n</think>\\n\\n' + content.lstrip('\\n') }}\n {%- else %}\n {{- '<|im_start|>' + message.role + '\\n' + content }}\n {%- endif %}\n {%- else %}\n {{- '<|im_start|>' + message.role + '\\n' + content }}\n {%- endif %}\n {%- if message.tool_calls %}\n {%- for tool_call in message.tool_calls %}\n {%- if (loop.first and content) or (not loop.first) %}\n {{- '\\n' }}\n {%- endif %}\n {%- if tool_call.function %}\n {%- set tool_call = tool_call.function %}\n {%- endif %}\n {{- '<tool_call>\\n{\"name\": \"' }}\n {{- tool_call.name }}\n {{- '\", \"arguments\": ' }}\n {%- if tool_call.arguments is string %}\n {{- tool_call.arguments }}\n {%- else %}\n {{- tool_call.arguments | tojson }}\n {%- endif %}\n {{- '}\\n</tool_call>' }}\n {%- endfor %}\n {%- endif 
%}\n {{- '<|im_end|>\\n' }}\n {%- elif message.role == \"tool\" %}\n {%- if loop.first or (messages[loop.index0 - 1].role != \"tool\") %}\n {{- '<|im_start|>user' }}\n {%- endif %}\n {{- '\\n<tool_response>\\n' }}\n {{- content }}\n {{- '\\n</tool_response>' }}\n {%- if loop.last or (messages[loop.index0 + 1].role != \"tool\") %}\n {{- '<|im_end|>\\n' }}\n {%- endif %}\n {%- endif %}\n{%- endfor %}\n{%- if add_generation_prompt %}\n {{- '<|im_start|>assistant\\n<think>\\n' }}\n{%- endif %}\n"

|

| 3 |

+

}

|

config.json

ADDED

|

@@ -0,0 +1,80 @@

|

| 1 |

+

{

|

| 2 |

+

"name_or_path": "tclf90/Qwen3-VL-235B-A22B-Thinking-FP8",

|

| 3 |

+

"architectures": [

|

| 4 |

+

"Qwen3VLMoeForConditionalGeneration"

|

| 5 |

+

],

|

| 6 |

+

"image_token_id": 151655,

|

| 7 |

+

"model_type": "qwen3_vl_moe",

|

| 8 |

+

"text_config": {

|

| 9 |

+

"attention_bias": false,

|

| 10 |

+

"attention_dropout": 0.0,

|

| 11 |

+

"bos_token_id": 151643,

|

| 12 |

+

"decoder_sparse_step": 1,

|

| 13 |

+

"dtype": "bfloat16",

|

| 14 |

+

"eos_token_id": 151645,

|

| 15 |

+

"head_dim": 128,

|

| 16 |

+

"hidden_act": "silu",

|

| 17 |

+

"hidden_size": 4096,

|

| 18 |

+

"initializer_range": 0.02,

|

| 19 |

+

"intermediate_size": 12288,

|

| 20 |

+

"max_position_embeddings": 262144,

|

| 21 |

+

"mlp_only_layers": [],

|

| 22 |

+

"model_type": "qwen3_vl_moe_text",

|

| 23 |

+

"moe_intermediate_size": 1536,

|

| 24 |

+

"norm_topk_prob": true,

|

| 25 |

+

"num_attention_heads": 64,

|

| 26 |

+

"num_experts": 128,

|

| 27 |

+

"num_experts_per_tok": 8,

|

| 28 |

+

"num_hidden_layers": 94,

|

| 29 |

+

"num_key_value_heads": 4,

|

| 30 |

+

"rms_norm_eps": 1e-06,

|

| 31 |

+

"rope_scaling": {

|

| 32 |

+

"mrope_interleaved": true,

|

| 33 |

+

"mrope_section": [

|

| 34 |

+

24,

|

| 35 |

+

20,

|

| 36 |

+

20

|

| 37 |

+

],

|

| 38 |

+

"rope_type": "default"

|

| 39 |

+

},

|

| 40 |

+

"rope_theta": 5000000,

|

| 41 |

+

"use_cache": true,

|

| 42 |

+

"vocab_size": 151936

|

| 43 |

+

},

|

| 44 |

+

"tie_word_embeddings": false,

|

| 45 |

+

"transformers_version": "4.57.0.dev0",

|

| 46 |

+

"video_token_id": 151656,

|

| 47 |

+

"vision_config": {

|

| 48 |

+

"deepstack_visual_indexes": [

|

| 49 |

+

8,

|

| 50 |

+

16,

|

| 51 |

+

24

|

| 52 |

+

],

|

| 53 |

+

"depth": 27,

|

| 54 |

+

"hidden_act": "gelu_pytorch_tanh",

|

| 55 |

+

"hidden_size": 1152,

|

| 56 |

+

"in_channels": 3,

|

| 57 |

+

"initializer_range": 0.02,

|

| 58 |

+

"intermediate_size": 4304,

|

| 59 |

+

"model_type": "qwen3_vl_moe",

|

| 60 |

+

"num_heads": 16,

|

| 61 |

+

"num_position_embeddings": 2304,

|

| 62 |

+

"out_hidden_size": 4096,

|

| 63 |

+

"patch_size": 16,

|

| 64 |

+

"spatial_merge_size": 2,

|

| 65 |

+

"temporal_patch_size": 2

|

| 66 |

+

},

|

| 67 |

+

"vision_end_token_id": 151653,

|

| 68 |

+

"vision_start_token_id": 151652,

|

| 69 |

+

"torch_dtype": "float16",

|

| 70 |

+

"quantization_config": {

|

| 71 |

+

"quant_method": "fp8",

|

| 72 |

+

"fmt": "e4m3",

|

| 73 |

+

"activation_scheme": "dynamic",

|

| 74 |

+

"weight_block_size": [

|

| 75 |

+

128,

|

| 76 |

+

128

|

| 77 |

+

],

|

| 78 |

+

"ignored_layers": ["model.language_model.layers.0.mlp.gate", "model.language_model.layers.1.mlp.gate", "model.language_model.layers.10.mlp.gate", "model.language_model.layers.11.mlp.gate", "model.language_model.layers.12.mlp.gate", "model.language_model.layers.13.mlp.gate", "model.language_model.layers.14.mlp.gate", "model.language_model.layers.15.mlp.gate", "model.language_model.layers.16.mlp.gate", "model.language_model.layers.17.mlp.gate", "model.language_model.layers.18.mlp.gate", "model.language_model.layers.19.mlp.gate", "model.language_model.layers.2.mlp.gate", "model.language_model.layers.20.mlp.gate", "model.language_model.layers.21.mlp.gate", "model.language_model.layers.22.mlp.gate", "model.language_model.layers.23.mlp.gate", "model.language_model.layers.24.mlp.gate", "model.language_model.layers.25.mlp.gate", "model.language_model.layers.26.mlp.gate", "model.language_model.layers.27.mlp.gate", "model.language_model.layers.28.mlp.gate", "model.language_model.layers.29.mlp.gate", "model.language_model.layers.3.mlp.gate", "model.language_model.layers.30.mlp.gate", "model.language_model.layers.31.mlp.gate", "model.language_model.layers.32.mlp.gate", "model.language_model.layers.33.mlp.gate", "model.language_model.layers.34.mlp.gate", "model.language_model.layers.35.mlp.gate", "model.language_model.layers.36.mlp.gate", "model.language_model.layers.37.mlp.gate", "model.language_model.layers.38.mlp.gate", "model.language_model.layers.39.mlp.gate", "model.language_model.layers.4.mlp.gate", "model.language_model.layers.40.mlp.gate", "model.language_model.layers.41.mlp.gate", "model.language_model.layers.42.mlp.gate", "model.language_model.layers.43.mlp.gate", "model.language_model.layers.44.mlp.gate", "model.language_model.layers.45.mlp.gate", "model.language_model.layers.46.mlp.gate", "model.language_model.layers.47.mlp.gate", "model.language_model.layers.48.mlp.gate", "model.language_model.layers.49.mlp.gate", "model.language_model.layers.5.mlp.gate", 
"model.language_model.layers.50.mlp.gate", "model.language_model.layers.51.mlp.gate", "model.language_model.layers.52.mlp.gate", "model.language_model.layers.53.mlp.gate", "model.language_model.layers.54.mlp.gate", "model.language_model.layers.55.mlp.gate", "model.language_model.layers.56.mlp.gate", "model.language_model.layers.57.mlp.gate", "model.language_model.layers.58.mlp.gate", "model.language_model.layers.59.mlp.gate", "model.language_model.layers.6.mlp.gate", "model.language_model.layers.60.mlp.gate", "model.language_model.layers.61.mlp.gate", "model.language_model.layers.62.mlp.gate", "model.language_model.layers.63.mlp.gate", "model.language_model.layers.64.mlp.gate", "model.language_model.layers.65.mlp.gate", "model.language_model.layers.66.mlp.gate", "model.language_model.layers.67.mlp.gate", "model.language_model.layers.68.mlp.gate", "model.language_model.layers.69.mlp.gate", "model.language_model.layers.7.mlp.gate", "model.language_model.layers.70.mlp.gate", "model.language_model.layers.71.mlp.gate", "model.language_model.layers.72.mlp.gate", "model.language_model.layers.73.mlp.gate", "model.language_model.layers.74.mlp.gate", "model.language_model.layers.75.mlp.gate", "model.language_model.layers.76.mlp.gate", "model.language_model.layers.77.mlp.gate", "model.language_model.layers.78.mlp.gate", "model.language_model.layers.79.mlp.gate", "model.language_model.layers.8.mlp.gate", "model.language_model.layers.80.mlp.gate", "model.language_model.layers.81.mlp.gate", "model.language_model.layers.82.mlp.gate", "model.language_model.layers.83.mlp.gate", "model.language_model.layers.84.mlp.gate", "model.language_model.layers.85.mlp.gate", "model.language_model.layers.86.mlp.gate", "model.language_model.layers.87.mlp.gate", "model.language_model.layers.88.mlp.gate", "model.language_model.layers.89.mlp.gate", "model.language_model.layers.9.mlp.gate", "model.language_model.layers.90.mlp.gate", "model.language_model.layers.91.mlp.gate", 
"model.language_model.layers.92.mlp.gate", "model.language_model.layers.93.mlp.gate", "model.visual.blocks.0.attn.proj", "model.visual.blocks.0.attn.qkv", "model.visual.blocks.0.mlp.linear_fc1", "model.visual.blocks.0.mlp.linear_fc2", "model.visual.blocks.0.norm1", "model.visual.blocks.0.norm2", "model.visual.blocks.1.attn.proj", "model.visual.blocks.1.attn.qkv", "model.visual.blocks.1.mlp.linear_fc1", "model.visual.blocks.1.mlp.linear_fc2", "model.visual.blocks.1.norm1", "model.visual.blocks.1.norm2", "model.visual.blocks.10.attn.proj", "model.visual.blocks.10.attn.qkv", "model.visual.blocks.10.mlp.linear_fc1", "model.visual.blocks.10.mlp.linear_fc2", "model.visual.blocks.10.norm1", "model.visual.blocks.10.norm2", "model.visual.blocks.11.attn.proj", "model.visual.blocks.11.attn.qkv", "model.visual.blocks.11.mlp.linear_fc1", "model.visual.blocks.11.mlp.linear_fc2", "model.visual.blocks.11.norm1", "model.visual.blocks.11.norm2", "model.visual.blocks.12.attn.proj", "model.visual.blocks.12.attn.qkv", "model.visual.blocks.12.mlp.linear_fc1", "model.visual.blocks.12.mlp.linear_fc2", "model.visual.blocks.12.norm1", "model.visual.blocks.12.norm2", "model.visual.blocks.13.attn.proj", "model.visual.blocks.13.attn.qkv", "model.visual.blocks.13.mlp.linear_fc1", "model.visual.blocks.13.mlp.linear_fc2", "model.visual.blocks.13.norm1", "model.visual.blocks.13.norm2", "model.visual.blocks.14.attn.proj", "model.visual.blocks.14.attn.qkv", "model.visual.blocks.14.mlp.linear_fc1", "model.visual.blocks.14.mlp.linear_fc2", "model.visual.blocks.14.norm1", "model.visual.blocks.14.norm2", "model.visual.blocks.15.attn.proj", "model.visual.blocks.15.attn.qkv", "model.visual.blocks.15.mlp.linear_fc1", "model.visual.blocks.15.mlp.linear_fc2", "model.visual.blocks.15.norm1", "model.visual.blocks.15.norm2", "model.visual.blocks.16.attn.proj", "model.visual.blocks.16.attn.qkv", "model.visual.blocks.16.mlp.linear_fc1", "model.visual.blocks.16.mlp.linear_fc2", "model.visual.blocks.16.norm1", 
"model.visual.blocks.16.norm2", "model.visual.blocks.17.attn.proj", "model.visual.blocks.17.attn.qkv", "model.visual.blocks.17.mlp.linear_fc1", "model.visual.blocks.17.mlp.linear_fc2", "model.visual.blocks.17.norm1", "model.visual.blocks.17.norm2", "model.visual.blocks.18.attn.proj", "model.visual.blocks.18.attn.qkv", "model.visual.blocks.18.mlp.linear_fc1", "model.visual.blocks.18.mlp.linear_fc2", "model.visual.blocks.18.norm1", "model.visual.blocks.18.norm2", "model.visual.blocks.19.attn.proj", "model.visual.blocks.19.attn.qkv", "model.visual.blocks.19.mlp.linear_fc1", "model.visual.blocks.19.mlp.linear_fc2", "model.visual.blocks.19.norm1", "model.visual.blocks.19.norm2", "model.visual.blocks.2.attn.proj", "model.visual.blocks.2.attn.qkv", "model.visual.blocks.2.mlp.linear_fc1", "model.visual.blocks.2.mlp.linear_fc2", "model.visual.blocks.2.norm1", "model.visual.blocks.2.norm2", "model.visual.blocks.20.attn.proj", "model.visual.blocks.20.attn.qkv", "model.visual.blocks.20.mlp.linear_fc1", "model.visual.blocks.20.mlp.linear_fc2", "model.visual.blocks.20.norm1", "model.visual.blocks.20.norm2", "model.visual.blocks.21.attn.proj", "model.visual.blocks.21.attn.qkv", "model.visual.blocks.21.mlp.linear_fc1", "model.visual.blocks.21.mlp.linear_fc2", "model.visual.blocks.21.norm1", "model.visual.blocks.21.norm2", "model.visual.blocks.22.attn.proj", "model.visual.blocks.22.attn.qkv", "model.visual.blocks.22.mlp.linear_fc1", "model.visual.blocks.22.mlp.linear_fc2", "model.visual.blocks.22.norm1", "model.visual.blocks.22.norm2", "model.visual.blocks.23.attn.proj", "model.visual.blocks.23.attn.qkv", "model.visual.blocks.23.mlp.linear_fc1", "model.visual.blocks.23.mlp.linear_fc2", "model.visual.blocks.23.norm1", "model.visual.blocks.23.norm2", "model.visual.blocks.24.attn.proj", "model.visual.blocks.24.attn.qkv", "model.visual.blocks.24.mlp.linear_fc1", "model.visual.blocks.24.mlp.linear_fc2", "model.visual.blocks.24.norm1", "model.visual.blocks.24.norm2", 
"model.visual.blocks.25.attn.proj", "model.visual.blocks.25.attn.qkv", "model.visual.blocks.25.mlp.linear_fc1", "model.visual.blocks.25.mlp.linear_fc2", "model.visual.blocks.25.norm1", "model.visual.blocks.25.norm2", "model.visual.blocks.26.attn.proj", "model.visual.blocks.26.attn.qkv", "model.visual.blocks.26.mlp.linear_fc1", "model.visual.blocks.26.mlp.linear_fc2", "model.visual.blocks.26.norm1", "model.visual.blocks.26.norm2", "model.visual.blocks.3.attn.proj", "model.visual.blocks.3.attn.qkv", "model.visual.blocks.3.mlp.linear_fc1", "model.visual.blocks.3.mlp.linear_fc2", "model.visual.blocks.3.norm1", "model.visual.blocks.3.norm2", "model.visual.blocks.4.attn.proj", "model.visual.blocks.4.attn.qkv", "model.visual.blocks.4.mlp.linear_fc1", "model.visual.blocks.4.mlp.linear_fc2", "model.visual.blocks.4.norm1", "model.visual.blocks.4.norm2", "model.visual.blocks.5.attn.proj", "model.visual.blocks.5.attn.qkv", "model.visual.blocks.5.mlp.linear_fc1", "model.visual.blocks.5.mlp.linear_fc2", "model.visual.blocks.5.norm1", "model.visual.blocks.5.norm2", "model.visual.blocks.6.attn.proj", "model.visual.blocks.6.attn.qkv", "model.visual.blocks.6.mlp.linear_fc1", "model.visual.blocks.6.mlp.linear_fc2", "model.visual.blocks.6.norm1", "model.visual.blocks.6.norm2", "model.visual.blocks.7.attn.proj", "model.visual.blocks.7.attn.qkv", "model.visual.blocks.7.mlp.linear_fc1", "model.visual.blocks.7.mlp.linear_fc2", "model.visual.blocks.7.norm1", "model.visual.blocks.7.norm2", "model.visual.blocks.8.attn.proj", "model.visual.blocks.8.attn.qkv", "model.visual.blocks.8.mlp.linear_fc1", "model.visual.blocks.8.mlp.linear_fc2", "model.visual.blocks.8.norm1", "model.visual.blocks.8.norm2", "model.visual.blocks.9.attn.proj", "model.visual.blocks.9.attn.qkv", "model.visual.blocks.9.mlp.linear_fc1", "model.visual.blocks.9.mlp.linear_fc2", "model.visual.blocks.9.norm1", "model.visual.blocks.9.norm2", "model.visual.deepstack_merger_list.0.linear_fc1", 
"model.visual.deepstack_merger_list.0.linear_fc2", "model.visual.deepstack_merger_list.0.norm", "model.visual.deepstack_merger_list.1.linear_fc1", "model.visual.deepstack_merger_list.1.linear_fc2", "model.visual.deepstack_merger_list.1.norm", "model.visual.deepstack_merger_list.2.linear_fc1", "model.visual.deepstack_merger_list.2.linear_fc2", "model.visual.deepstack_merger_list.2.norm", "model.visual.merger.linear_fc1", "model.visual.merger.linear_fc2", "model.visual.merger.norm", "model.visual.patch_embed.proj", "model.visual.pos_embed"]

|

| 79 |

+

}

|

| 80 |

+

}

|

configuration.json

ADDED

|

@@ -0,0 +1 @@

|

| 1 |

+

{"framework":"Pytorch","task":"image-text-to-text"}

|

generation_config.json

ADDED

|

@@ -0,0 +1,14 @@

|

| 1 |

+

{

|

| 2 |

+

"bos_token_id": 151643,

|

| 3 |

+

"pad_token_id": 151643,

|

| 4 |

+

"do_sample": true,

|

| 5 |

+

"eos_token_id": [

|

| 6 |

+

151645,

|

| 7 |

+

151643

|

| 8 |

+

],

|

| 9 |

+

"top_k": 20,

|

| 10 |

+

"top_p": 0.95,

|

| 11 |

+

"repetition_penalty": 1.0,

|

| 12 |

+

"temperature": 0.8,

|

| 13 |

+

"transformers_version": "4.56.0"

|

| 14 |

+

}
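
Note on the sampling fields above: `temperature`, `top_k` and `top_p` together shape the distribution the next token is drawn from. The following is a minimal sketch of that interaction, assuming the common order (temperature, then top-k, then top-p). The function name `sampling_distribution` is hypothetical and this is not the library's own implementation; only the default values are taken from the file.

```python
import math


def sampling_distribution(logits, temperature=0.8, top_k=20, top_p=0.95):
    """Return {token_index: probability} after temperature, top-k and top-p filtering.

    Simplified illustration; defaults mirror generation_config.json.
    """
    # Temperature below 1.0 sharpens the distribution before filtering.
    scaled = [x / temperature for x in logits]
    # Top-k: keep the indices of the k highest scaled logits.
    ranked = sorted(range(len(scaled)), key=lambda i: scaled[i], reverse=True)[:top_k]
    # Numerically stable softmax restricted to the top-k survivors.
    peak = scaled[ranked[0]]
    weights = {i: math.exp(scaled[i] - peak) for i in ranked}
    total = sum(weights.values())
    # Top-p: keep the smallest prefix whose cumulative mass reaches top_p.
    kept, mass = {}, 0.0
    for i in ranked:
        p = weights[i] / total
        kept[i] = p
        mass += p
        if mass >= top_p:
            break
    # Renormalize so the surviving probabilities sum to 1.
    return {i: p / mass for i, p in kept.items()}
```

With `repetition_penalty` at 1.0 no penalty is applied, so it is left out of the sketch.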

|

merges.txt

ADDED

|

The diff for this file is too large to render.

See raw diff

|

model.safetensors.index.json

ADDED

|

The diff for this file is too large to render.

See raw diff

|

preprocessor_config.json

ADDED

|

@@ -0,0 +1,21 @@

|

| 1 |

+

{

|

| 2 |

+

"size": {

|

| 3 |

+

"longest_edge": 16777216,

|

| 4 |

+

"shortest_edge": 65536

|

| 5 |

+

},

|

| 6 |

+

"patch_size": 16,

|

| 7 |

+

"temporal_patch_size": 2,

|

| 8 |

+

"merge_size": 2,

|

| 9 |

+

"image_mean": [

|

| 10 |

+

0.5,

|

| 11 |

+

0.5,

|

| 12 |

+

0.5

|

| 13 |

+

],

|

| 14 |

+

"image_std": [

|

| 15 |

+

0.5,

|

| 16 |

+

0.5,

|

| 17 |

+

0.5

|

| 18 |

+

],

|

| 19 |

+

"processor_class": "Qwen3VLProcessor",

|

| 20 |

+

"image_processor_type": "Qwen2VLImageProcessorFast"

|

| 21 |

+

}
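
Note on the values above: with `image_mean` and `image_std` both 0.5, normalization maps a pixel value in [0, 1] to [-1, 1], and with `patch_size` 16 and `merge_size` 2 each merged visual token covers a 32x32 pixel area. The sketch below works through that arithmetic. It assumes pixels are rescaled to [0, 1] before normalization and that the `size` values are total pixel budgets (65536 = 256x256, 16777216 = 4096x4096); neither assumption is stated in the file, and the helper names are hypothetical.

```python
PATCH_SIZE = 16   # from preprocessor_config.json
MERGE_SIZE = 2    # from preprocessor_config.json
IMAGE_MEAN = 0.5  # same value for all three channels
IMAGE_STD = 0.5   # same value for all three channels


def normalize(value):
    """Map a pixel value already rescaled to [0, 1] onto [-1, 1]."""
    return (value - IMAGE_MEAN) / IMAGE_STD


def merged_tokens(height, width):
    """Count merged visual tokens for an image whose sides are multiples of 32."""
    side = PATCH_SIZE * MERGE_SIZE  # 32 pixels per merged token side
    if height % side or width % side:
        raise ValueError("height and width must be multiples of patch_size * merge_size")
    return (height // side) * (width // side)
```

Under those assumptions, a 256x256 image yields `merged_tokens(256, 256) == 64` tokens and a 4096x4096 image yields 16384.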

|

tokenizer.json

ADDED

|

The diff for this file is too large to render.

See raw diff

|

tokenizer_config.json

ADDED

|

@@ -0,0 +1,239 @@

|

| 1 |

+

{

|

| 2 |

+

"add_bos_token": false,

|

| 3 |

+

"add_prefix_space": false,

|

| 4 |

+

"added_tokens_decoder": {

|

| 5 |

+

"151643": {

|

| 6 |

+

"content": "<|endoftext|>",

|

| 7 |

+

"lstrip": false,

|

| 8 |

+

"normalized": false,

|

| 9 |

+

"rstrip": false,

|

| 10 |

+

"single_word": false,

|

| 11 |

+

"special": true

|

| 12 |

+

},

|

| 13 |

+

"151644": {

|

| 14 |

+

"content": "<|im_start|>",

|

| 15 |

+

"lstrip": false,

|

| 16 |

+

"normalized": false,

|

| 17 |

+

"rstrip": false,

|

| 18 |

+

"single_word": false,

|

| 19 |

+

"special": true

|

| 20 |

+

},

|

| 21 |

+

"151645": {

|

| 22 |

+

"content": "<|im_end|>",

|

| 23 |

+

"lstrip": false,

|

| 24 |

+

"normalized": false,

|

| 25 |

+

"rstrip": false,

|

| 26 |

+

"single_word": false,

|

| 27 |

+

"special": true

|

| 28 |

+

},

|

| 29 |

+

"151646": {

|

| 30 |

+

"content": "<|object_ref_start|>",

|

| 31 |

+

"lstrip": false,

|

| 32 |

+

"normalized": false,

|

| 33 |

+

"rstrip": false,

|

| 34 |

+

"single_word": false,

|

| 35 |

+

"special": true

|

| 36 |

+

},

|

| 37 |

+

"151647": {

|

| 38 |

+

"content": "<|object_ref_end|>",

|

| 39 |

+

"lstrip": false,

|

| 40 |

+

"normalized": false,

|

| 41 |

+

"rstrip": false,

|

| 42 |

+

"single_word": false,

|

| 43 |

+

"special": true

|

| 44 |

+

},

|

| 45 |

+

"151648": {

|

| 46 |

+

"content": "<|box_start|>",

|

| 47 |

+

"lstrip": false,

|

| 48 |

+

"normalized": false,

|

| 49 |

+

"rstrip": false,

|

| 50 |

+

"single_word": false,

|

| 51 |

+

"special": true

|

| 52 |

+

},

|

| 53 |

+

"151649": {

|

| 54 |

+

"content": "<|box_end|>",

|

| 55 |

+

"lstrip": false,

|

| 56 |

+

"normalized": false,

|

| 57 |

+

"rstrip": false,

|

| 58 |

+

"single_word": false,

|

| 59 |

+

"special": true

|

| 60 |

+

},

|

| 61 |

+

"151650": {

|

| 62 |

+

"content": "<|quad_start|>",

|

| 63 |

+

"lstrip": false,

|

| 64 |

+

"normalized": false,

|

| 65 |

+

"rstrip": false,

|

| 66 |

+

"single_word": false,

|

| 67 |

+

"special": true

|

| 68 |

+

},

|

| 69 |

+

"151651": {

|

| 70 |

+

"content": "<|quad_end|>",

|

| 71 |

+

"lstrip": false,

|

| 72 |

+

"normalized": false,

|

| 73 |

+

"rstrip": false,

|

| 74 |

+

"single_word": false,

|

| 75 |

+

"special": true

|

| 76 |

+

},

|

| 77 |

+

"151652": {

|

| 78 |

+

"content": "<|vision_start|>",

|

| 79 |

+

"lstrip": false,

|

| 80 |

+

"normalized": false,

|

| 81 |

+

"rstrip": false,

|

| 82 |

+

"single_word": false,

|

| 83 |

+

"special": true

|

| 84 |

+

},

|

| 85 |

+

"151653": {

|

| 86 |

+

"content": "<|vision_end|>",

|

| 87 |

+

"lstrip": false,

|

| 88 |

+

"normalized": false,

|

| 89 |

+

"rstrip": false,

|

| 90 |

+

"single_word": false,

|

| 91 |

+

"special": true

|

| 92 |

+

},

|

| 93 |

+

"151654": {

|

| 94 |

+

"content": "<|vision_pad|>",

|

| 95 |

+

"lstrip": false,

|

| 96 |

+

"normalized": false,

|

| 97 |

+

"rstrip": false,

|

| 98 |

+

"single_word": false,

|

| 99 |

+

"special": true

|

| 100 |

+

},

|

| 101 |

+

"151655": {

|

| 102 |

+

"content": "<|image_pad|>",

|

| 103 |

+

"lstrip": false,

|

| 104 |

+

"normalized": false,

|

| 105 |

+

"rstrip": false,

|

| 106 |

+

"single_word": false,

|

| 107 |

+

"special": true

|

| 108 |

+

},

|

| 109 |

+

"151656": {

|

| 110 |

+

"content": "<|video_pad|>",

|

| 111 |

+

"lstrip": false,

|

| 112 |

+

"normalized": false,

|

| 113 |

+

"rstrip": false,

|

| 114 |

+

"single_word": false,

|

| 115 |

+

"special": true

|

| 116 |

+

},

|

| 117 |

+

"151657": {

|

| 118 |

+

"content": "<tool_call>",

|

| 119 |

+

"lstrip": false,

|

| 120 |

+

"normalized": false,

|

| 121 |

+

"rstrip": false,

|

| 122 |

+

"single_word": false,

|

| 123 |

+

"special": false

|

| 124 |

+

},

|

| 125 |

+

"151658": {

|

| 126 |

+

"content": "</tool_call>",

|

| 127 |

+

"lstrip": false,

|

| 128 |

+

"normalized": false,

|

| 129 |

+

"rstrip": false,

|

| 130 |

+

"single_word": false,

|

| 131 |

+

"special": false

|

| 132 |

+

},

|

| 133 |

+

"151659": {

|

| 134 |

+

"content": "<|fim_prefix|>",

|

| 135 |

+

"lstrip": false,

|

| 136 |

+

"normalized": false,

|

| 137 |

+

"rstrip": false,

|

| 138 |

+

"single_word": false,

|

| 139 |

+

"special": false

|

| 140 |

+

},

|

| 141 |

+

"151660": {

|

| 142 |

+

"content": "<|fim_middle|>",

|

| 143 |

+

"lstrip": false,

|

| 144 |

+

"normalized": false,

|

| 145 |

+

"rstrip": false,

|

| 146 |

+

"single_word": false,

|

| 147 |

+

"special": false

|

| 148 |

+

},

|

| 149 |

+

"151661": {

|

| 150 |

+

"content": "<|fim_suffix|>",

|

| 151 |

+

"lstrip": false,

|

| 152 |

+

"normalized": false,

|

| 153 |

+

"rstrip": false,

|

| 154 |

+

"single_word": false,

|

| 155 |

+

"special": false

|

| 156 |

+

},

|

| 157 |

+

"151662": {

|

| 158 |

+

"content": "<|fim_pad|>",

|

| 159 |

+

"lstrip": false,

|

| 160 |

+

"normalized": false,

|

| 161 |

+

"rstrip": false,

|

| 162 |

+

"single_word": false,

|

| 163 |

+

"special": false

|

| 164 |

+

},

|

| 165 |

+

"151663": {

|

| 166 |

+

"content": "<|repo_name|>",

|

| 167 |

+

"lstrip": false,

|

| 168 |

+

"normalized": false,

|

| 169 |

+

"rstrip": false,

|

| 170 |

+

"single_word": false,

|

| 171 |

+

"special": false

|

| 172 |

+

},

|

| 173 |

+

"151664": {

|

| 174 |

+

"content": "<|file_sep|>",

|

| 175 |

+

"lstrip": false,

|

| 176 |

+

"normalized": false,

|

| 177 |

+

"rstrip": false,

|

| 178 |

+

"single_word": false,

|

| 179 |

+

"special": false

|

| 180 |

+

},

|

| 181 |

+

"151665": {

|

| 182 |

+

"content": "<tool_response>",

|

| 183 |

+

"lstrip": false,

|

| 184 |

+

"normalized": false,

|

| 185 |

+

"rstrip": false,

|

| 186 |

+

"single_word": false,

|

| 187 |

+

"special": false

|

| 188 |

+

},

|

| 189 |

+

"151666": {

|

| 190 |

+

"content": "</tool_response>",

|

| 191 |

+

"lstrip": false,

|

| 192 |

+

"normalized": false,

|

| 193 |

+

"rstrip": false,

|

| 194 |

+

"single_word": false,

|

| 195 |

+

"special": false

|

| 196 |

+

},

|

| 197 |

+

"151667": {

|

| 198 |

+

"content": "<think>",

|

| 199 |

+

"lstrip": false,

|

| 200 |

+

"normalized": false,

|

| 201 |

+

"rstrip": false,

|

| 202 |

+

"single_word": false,

|

| 203 |

+

"special": false

|

| 204 |

+

},

|

| 205 |

+

"151668": {

|

| 206 |

+

"content": "</think>",

|

| 207 |

+

"lstrip": false,

|

| 208 |

+

"normalized": false,

|

| 209 |

+

"rstrip": false,

|

| 210 |

+

"single_word": false,

|

| 211 |

+

"special": false

|

| 212 |

+

}

|

| 213 |

+

},

|

| 214 |

+

"additional_special_tokens": [

|

| 215 |

+

"<|im_start|>",

|

| 216 |

+

"<|im_end|>",

|

| 217 |

+

"<|object_ref_start|>",

|

| 218 |

+

"<|object_ref_end|>",

|

| 219 |

+

"<|box_start|>",

|

| 220 |

+

"<|box_end|>",

|

| 221 |

+

"<|quad_start|>",

|

| 222 |

+

"<|quad_end|>",

|

| 223 |

+

"<|vision_start|>",

|

| 224 |

+

"<|vision_end|>",

|

| 225 |

+

"<|vision_pad|>",

|

| 226 |

+

"<|image_pad|>",

|

| 227 |

+

"<|video_pad|>"

|

| 228 |

+

],

|

| 229 |

+

"bos_token": null,

|

| 230 |

+

"chat_template": "{%- set image_count = namespace(value=0) %}\n{%- set video_count = namespace(value=0) %}\n{%- macro render_content(content, do_vision_count) %}\n {%- if content is string %}\n {{- content }}\n {%- else %}\n {%- for item in content %}\n {%- if 'image' in item or 'image_url' in item or item.type == 'image' %}\n {%- if do_vision_count %}\n {%- set image_count.value = image_count.value + 1 %}\n {%- endif %}\n {%- if add_vision_id %}Picture {{ image_count.value }}: {% endif -%}\n <|vision_start|><|image_pad|><|vision_end|>\n {%- elif 'video' in item or item.type == 'video' %}\n {%- if do_vision_count %}\n {%- set video_count.value = video_count.value + 1 %}\n {%- endif %}\n {%- if add_vision_id %}Video {{ video_count.value }}: {% endif -%}\n <|vision_start|><|video_pad|><|vision_end|>\n {%- elif 'text' in item %}\n {{- item.text }}\n {%- endif %}\n {%- endfor %}\n {%- endif %}\n{%- endmacro %}\n{%- if tools %}\n {{- '<|im_start|>system\\n' }}\n {%- if messages[0].role == 'system' %}\n {{- render_content(messages[0].content, false) + '\\n\\n' }}\n {%- endif %}\n {{- \"# Tools\\n\\nYou may call one or more functions to assist with the user query.\\n\\nYou are provided with function signatures within <tools></tools> XML tags:\\n<tools>\" }}\n {%- for tool in tools %}\n {{- \"\\n\" }}\n {{- tool | tojson }}\n {%- endfor %}\n {{- \"\\n</tools>\\n\\nFor each function call, return a json object with function name and arguments within <tool_call></tool_call> XML tags:\\n<tool_call>\\n{\\\"name\\\": <function-name>, \\\"arguments\\\": <args-json-object>}\\n</tool_call><|im_end|>\\n\" }}\n{%- else %}\n {%- if messages[0].role == 'system' %}\n {{- '<|im_start|>system\\n' + render_content(messages[0].content, false) + '<|im_end|>\\n' }}\n {%- endif %}\n{%- endif %}\n{%- set ns = namespace(multi_step_tool=true, last_query_index=messages|length - 1) %}\n{%- for message in messages[::-1] %}\n {%- set index = (messages|length - 1) - loop.index0 %}\n {%- if 
ns.multi_step_tool and message.role == \"user\" %}\n {%- set content = render_content(message.content, false) %}\n {%- if not(content.startswith('<tool_response>') and content.endswith('</tool_response>')) %}\n {%- set ns.multi_step_tool = false %}\n {%- set ns.last_query_index = index %}\n {%- endif %}\n {%- endif %}\n{%- endfor %}\n{%- for message in messages %}\n {%- set content = render_content(message.content, True) %}\n {%- if (message.role == \"user\") or (message.role == \"system\" and not loop.first) %}\n {{- '<|im_start|>' + message.role + '\\n' + content + '<|im_end|>' + '\\n' }}\n {%- elif message.role == \"assistant\" %}\n {%- set reasoning_content = '' %}\n {%- if message.reasoning_content is string %}\n {%- set reasoning_content = message.reasoning_content %}\n {%- else %}\n {%- if '</think>' in content %}\n {%- set reasoning_content = content.split('</think>')[0].rstrip('\\n').split('<think>')[-1].lstrip('\\n') %}\n {%- set content = content.split('</think>')[-1].lstrip('\\n') %}\n {%- endif %}\n {%- endif %}\n {%- if loop.index0 > ns.last_query_index %}\n {%- if loop.last or (not loop.last and reasoning_content) %}\n {{- '<|im_start|>' + message.role + '\\n<think>\\n' + reasoning_content.strip('\\n') + '\\n</think>\\n\\n' + content.lstrip('\\n') }}\n {%- else %}\n {{- '<|im_start|>' + message.role + '\\n' + content }}\n {%- endif %}\n {%- else %}\n {{- '<|im_start|>' + message.role + '\\n' + content }}\n {%- endif %}\n {%- if message.tool_calls %}\n {%- for tool_call in message.tool_calls %}\n {%- if (loop.first and content) or (not loop.first) %}\n {{- '\\n' }}\n {%- endif %}\n {%- if tool_call.function %}\n {%- set tool_call = tool_call.function %}\n {%- endif %}\n {{- '<tool_call>\\n{\"name\": \"' }}\n {{- tool_call.name }}\n {{- '\", \"arguments\": ' }}\n {%- if tool_call.arguments is string %}\n {{- tool_call.arguments }}\n {%- else %}\n {{- tool_call.arguments | tojson }}\n {%- endif %}\n {{- '}\\n</tool_call>' }}\n {%- endfor %}\n {%- endif 
%}\n {{- '<|im_end|>\\n' }}\n {%- elif message.role == \"tool\" %}\n {%- if loop.first or (messages[loop.index0 - 1].role != \"tool\") %}\n {{- '<|im_start|>user' }}\n {%- endif %}\n {{- '\\n<tool_response>\\n' }}\n {{- content }}\n {{- '\\n</tool_response>' }}\n {%- if loop.last or (messages[loop.index0 + 1].role != \"tool\") %}\n {{- '<|im_end|>\\n' }}\n {%- endif %}\n {%- endif %}\n{%- endfor %}\n{%- if add_generation_prompt %}\n {{- '<|im_start|>assistant\\n<think>\\n' }}\n{%- endif %}\n",

|

| 231 |

+

"clean_up_tokenization_spaces": false,

|

| 232 |

+

"eos_token": "<|im_end|>",

|

| 233 |

+

"errors": "replace",

|

| 234 |

+

"model_max_length": 262144,

|

| 235 |

+

"pad_token": "<|endoftext|>",

|

| 236 |

+

"split_special_tokens": false,

|

| 237 |

+

"tokenizer_class": "Qwen2Tokenizer",

|

| 238 |

+

"unk_token": null

|

| 239 |

+

}
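
Note on `chat_template`: for the simplest case (no tools, only system and user turns) the template reduces to wrapping each turn in `<|im_start|>` and `<|im_end|>` and ending with an assistant prompt that already opens a `<think>` block. The following is a minimal Python sketch of that subset only. The function names are hypothetical, and tool calls, assistant history, and the `add_vision_id` prefixes are deliberately not covered.

```python
IMAGE_PLACEHOLDER = "<|vision_start|><|image_pad|><|vision_end|>"
VIDEO_PLACEHOLDER = "<|vision_start|><|video_pad|><|vision_end|>"


def render_content(content):
    """Flatten a message's content into text, inserting vision placeholders."""
    if isinstance(content, str):
        return content
    parts = []
    for item in content:
        if "image" in item or "image_url" in item or item.get("type") == "image":
            parts.append(IMAGE_PLACEHOLDER)
        elif "video" in item or item.get("type") == "video":
            parts.append(VIDEO_PLACEHOLDER)
        elif "text" in item:
            parts.append(item["text"])
    return "".join(parts)


def render_prompt(messages, add_generation_prompt=True):
    """Render system and user turns the way the template does when no tools are given."""
    out = []
    for message in messages:
        role = message["role"]
        if role not in ("system", "user"):
            raise ValueError("this sketch only covers system and user turns")
        out.append(f"<|im_start|>{role}\n{render_content(message['content'])}<|im_end|>\n")
    if add_generation_prompt:
        # The template opens the reasoning block as part of the prompt.
        out.append("<|im_start|>assistant\n<think>\n")
    return "".join(out)
```

Because the generation prompt already ends in `<think>\n`, the model's output starts inside the reasoning block rather than emitting the opening tag itself.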

|

video_preprocessor_config.json

ADDED

|

@@ -0,0 +1,21 @@

|

| 1 |

+

{

|

| 2 |

+

"size": {

|

| 3 |

+

"longest_edge": 25165824,

|

| 4 |

+

"shortest_edge": 4096

|

| 5 |

+

},

|

| 6 |

+

"patch_size": 16,

|

| 7 |

+

"temporal_patch_size": 2,

|

| 8 |

+

"merge_size": 2,

|

| 9 |

+

"image_mean": [

|

| 10 |

+

0.5,

|

| 11 |

+

0.5,

|

| 12 |

+

0.5

|

| 13 |

+

],

|

| 14 |

+

"image_std": [

|

| 15 |

+

0.5,

|

| 16 |

+

0.5,

|

| 17 |

+

0.5

|

| 18 |

+

],

|

| 19 |

+

"processor_class": "Qwen3VLProcessor",

|

| 20 |

+

"video_processor_type": "Qwen3VLVideoProcessor"

|

| 21 |

+

}

|

vocab.json

ADDED

|

The diff for this file is too large to render.

See raw diff

|
