File size: 8,232 Bytes

f55ce97 |

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 61 62 63 64 65 66 67 68 69 70 71 72 73 74 75 76 77 78 79 80 81 82 83 84 85 86 87 88 89 90 91 92 93 94 95 96 97 98 99 100 101 102 103 104 105 106 107 108 109 110 111 112 113 114 115 116 117 118 119 120 121 122 123 124 125 126 127 128 129 130 131 132 133 134 135 136 137 138 139 140 141 142 143 144 145 146 147 148 149 150 151 152 153 154 155 156 157 158 159 160 161 162 163 164 |

{

"bomFormat": "CycloneDX",

"specVersion": "1.6",

"serialNumber": "urn:uuid:19c1f27e-d53a-45dc-94ba-a0f9fb30f79a",

"version": 1,

"metadata": {

"timestamp": "2025-07-10T08:49:17.278628+00:00",

"component": {

"type": "machine-learning-model",

"bom-ref": "bigcode/starcoder2-15b-instruct-v0.1-e7aea605-679e-5203-beb5-6bb2732ebe54",

"name": "bigcode/starcoder2-15b-instruct-v0.1",

"externalReferences": [

{

"url": "https://huggingface.co/bigcode/starcoder2-15b-instruct-v0.1",

"type": "documentation"

}

],

"modelCard": {

"modelParameters": {

"task": "text-generation",

"architectureFamily": "starcoder2",

"modelArchitecture": "Starcoder2ForCausalLM",

"datasets": [

{

"ref": "bigcode/self-oss-instruct-sc2-exec-filter-50k-87afcdf4-328e-5009-ba04-8150239e25f2"

}

]

},

"properties": [

{

"name": "library_name",

"value": "transformers"

},

{

"name": "base_model",

"value": "bigcode/starcoder2-15b"

}

],

"quantitativeAnalysis": {

"performanceMetrics": [

{

"slice": "dataset: livecodebench-codegeneration",

"type": "pass@1",

"value": 20.4

},

{

"slice": "dataset: livecodebench-selfrepair",

"type": "pass@1",

"value": 20.9

},

{

"slice": "dataset: livecodebench-testoutputprediction",

"type": "pass@1",

"value": 29.8

},

{

"slice": "dataset: livecodebench-codeexecution",

"type": "pass@1",

"value": 28.1

},

{

"slice": "dataset: humaneval",

"type": "pass@1",

"value": 72.6

},

{

"slice": "dataset: humanevalplus",

"type": "pass@1",

"value": 63.4

},

{

"slice": "dataset: mbpp",

"type": "pass@1",

"value": 75.2

},

{

"slice": "dataset: mbppplus",

"type": "pass@1",

"value": 61.2

},

{

"slice": "dataset: ds-1000",

"type": "pass@1",

"value": 40.6

}

]

}

},

"authors": [

{

"name": "bigcode"

}

],

"licenses": [

{

"license": {

"name": "bigcode-openrail-m"

}

}

],

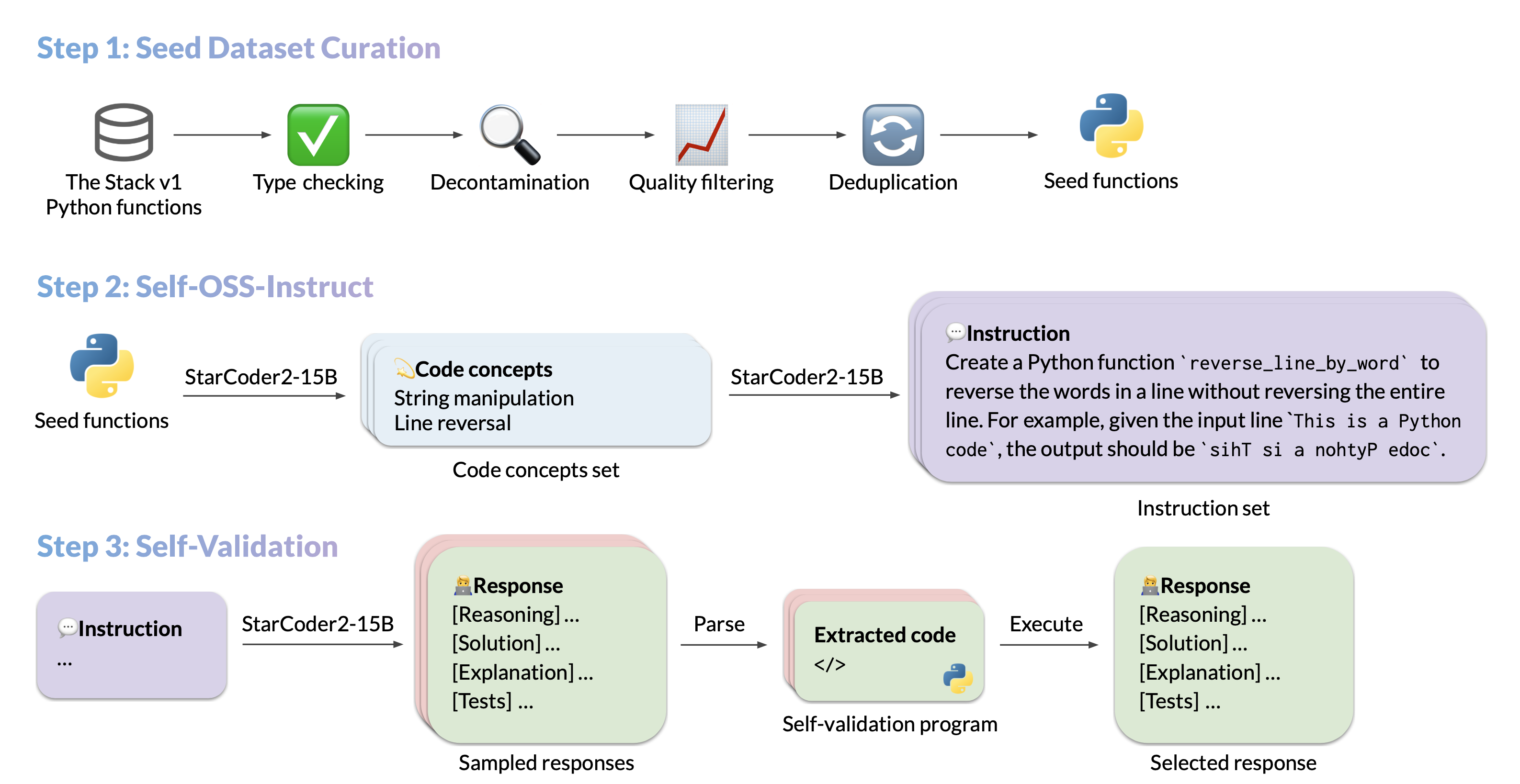

"description": "We introduce StarCoder2-15B-Instruct-v0.1, the very first entirely self-aligned code Large Language Model (LLM) trained with a fully permissive and transparent pipeline. Our open-source pipeline uses StarCoder2-15B to generate thousands of instruction-response pairs, which are then used to fine-tune StarCoder-15B itself without any human annotations or distilled data from huge and proprietary LLMs.- **Model:** [bigcode/starcoder2-15b-instruct-v0.1](https://huggingface.co/bigcode/starcoder2-instruct-15b-v0.1)- **Code:** [bigcode-project/starcoder2-self-align](https://github.com/bigcode-project/starcoder2-self-align)- **Dataset:** [bigcode/self-oss-instruct-sc2-exec-filter-50k](https://huggingface.co/datasets/bigcode/self-oss-instruct-sc2-exec-filter-50k/)- **Authors:**[Yuxiang Wei](https://yuxiang.cs.illinois.edu),[Federico Cassano](https://federico.codes/),[Jiawei Liu](https://jw-liu.xyz),[Yifeng Ding](https://yifeng-ding.com),[Naman Jain](https://naman-ntc.github.io),[Harm de Vries](https://www.harmdevries.com),[Leandro von Werra](https://twitter.com/lvwerra),[Arjun Guha](https://www.khoury.northeastern.edu/home/arjunguha/main/home/),[Lingming Zhang](https://lingming.cs.illinois.edu).",

"tags": [

"transformers",

"safetensors",

"starcoder2",

"text-generation",

"code",

"conversational",

"dataset:bigcode/self-oss-instruct-sc2-exec-filter-50k",

"arxiv:2410.24198",

"base_model:bigcode/starcoder2-15b",

"base_model:finetune:bigcode/starcoder2-15b",

"license:bigcode-openrail-m",

"model-index",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

]

}

},

"components": [

{

"type": "data",

"bom-ref": "bigcode/self-oss-instruct-sc2-exec-filter-50k-87afcdf4-328e-5009-ba04-8150239e25f2",

"name": "bigcode/self-oss-instruct-sc2-exec-filter-50k",

"data": [

{

"type": "dataset",

"bom-ref": "bigcode/self-oss-instruct-sc2-exec-filter-50k-87afcdf4-328e-5009-ba04-8150239e25f2",

"name": "bigcode/self-oss-instruct-sc2-exec-filter-50k",

"contents": {

"url": "https://huggingface.co/datasets/bigcode/self-oss-instruct-sc2-exec-filter-50k",

"properties": [

{

"name": "pretty_name",

"value": "StarCoder2-15b Self-Alignment Dataset (50K)"

},

{

"name": "configs",

"value": "Name of the dataset subset: default {\"split\": \"train\", \"path\": \"data/train-*\"}"

},

{

"name": "license",

"value": "odc-by"

}

]

},

"governance": {

"owners": [

{

"organization": {

"name": "bigcode",

"url": "https://huggingface.co/bigcode"

}

}

]

},

"description": "Final self-alignment training dataset for StarCoder2-Instruct. \n\nseed: Contains the seed Python function\nconcepts: Contains the concepts generated from the seed\ninstruction: Contains the instruction generated from the concepts\nresponse: Contains the execution-validated response to the instruction\n\nThis dataset utilizes seed Python functions derived from the MultiPL-T pipeline.\n"

}

]

}

]

} |