Commit 1465dcf (verified) · 0 parent(s)

Duplicate from google/byt5-small

Co-authored-by: Satya <Satya10@users.noreply.huggingface.co>

- .gitattributes +17 -0

- README.md +158 -0

- config.json +28 -0

- flax_model.msgpack +3 -0

- generation_config.json +7 -0

- pytorch_model.bin +3 -0

- special_tokens_map.json +1 -0

- tf_model.h5 +3 -0

- tokenizer_config.json +1 -0

.gitattributes

ADDED

@@ -0,0 +1,17 @@
+*.bin.* filter=lfs diff=lfs merge=lfs -text
+*.lfs.* filter=lfs diff=lfs merge=lfs -text
+*.bin filter=lfs diff=lfs merge=lfs -text
+*.h5 filter=lfs diff=lfs merge=lfs -text
+*.tflite filter=lfs diff=lfs merge=lfs -text
+*.tar.gz filter=lfs diff=lfs merge=lfs -text
+*.ot filter=lfs diff=lfs merge=lfs -text
+*.onnx filter=lfs diff=lfs merge=lfs -text
+*.arrow filter=lfs diff=lfs merge=lfs -text
+*.ftz filter=lfs diff=lfs merge=lfs -text
+*.joblib filter=lfs diff=lfs merge=lfs -text
+*.model filter=lfs diff=lfs merge=lfs -text
+*.msgpack filter=lfs diff=lfs merge=lfs -text
+*.pb filter=lfs diff=lfs merge=lfs -text
+*.pt filter=lfs diff=lfs merge=lfs -text
+*.pth filter=lfs diff=lfs merge=lfs -text
+*.msgpack filter=lfs diff=lfs merge=lfs -text
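To make the effect of these attribute rules concrete, the sketch below checks which file names from this commit would be routed through Git LFS. It approximates gitattributes pattern matching with Python's `fnmatch`, which is an assumption: Git's own matching rules differ in some edge cases (for example around `/`), and `lfs_tracked` is a hypothetical helper name.

```python
from fnmatch import fnmatch

# Patterns copied from the .gitattributes hunk (the duplicate *.msgpack entry is omitted).
LFS_PATTERNS = [
    "*.bin.*", "*.lfs.*", "*.bin", "*.h5", "*.tflite", "*.tar.gz", "*.ot",
    "*.onnx", "*.arrow", "*.ftz", "*.joblib", "*.model", "*.msgpack",
    "*.pb", "*.pt", "*.pth",
]

def lfs_tracked(filename: str) -> bool:
    """Return True if any LFS pattern matches the bare file name (approximation)."""
    return any(fnmatch(filename, pattern) for pattern in LFS_PATTERNS)

# A subset of the files added in this commit.
files = ["README.md", "config.json", "flax_model.msgpack", "pytorch_model.bin", "tf_model.h5"]
tracked = [name for name in files if lfs_tracked(name)]
print(tracked)
```

Under this approximation only the three weight files match, which agrees with the `+3 -0` pointer-sized diffs listed for them in the file summary.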

README.md

ADDED

@@ -0,0 +1,158 @@
+---
+language:
+- multilingual
+- af
+- am
+- ar
+- az
+- be
+- bg
+- bn
+- ca
+- ceb
+- co
+- cs
+- cy
+- da
+- de
+- el
+- en
+- eo
+- es
+- et
+- eu
+- fa
+- fi
+- fil
+- fr
+- fy
+- ga
+- gd
+- gl
+- gu
+- ha
+- haw
+- hi
+- hmn
+- ht
+- hu
+- hy
+- ig
+- is
+- it
+- iw
+- ja
+- jv
+- ka
+- kk
+- km
+- kn
+- ko
+- ku
+- ky
+- la
+- lb
+- lo
+- lt
+- lv
+- mg
+- mi
+- mk
+- ml
+- mn
+- mr
+- ms
+- mt
+- my
+- ne
+- nl
+- no
+- ny
+- pa
+- pl
+- ps
+- pt
+- ro
+- ru
+- sd
+- si
+- sk
+- sl
+- sm
+- sn
+- so
+- sq
+- sr
+- st
+- su
+- sv
+- sw
+- ta
+- te
+- tg
+- th
+- tr
+- uk
+- und
+- ur
+- uz
+- vi
+- xh
+- yi
+- yo
+- zh
+- zu
+datasets:
+- mc4
+
+license: apache-2.0
+---
| 110 |

+

|

| 111 |

+

# ByT5 - Small

|

| 112 |

+

|

| 113 |

+

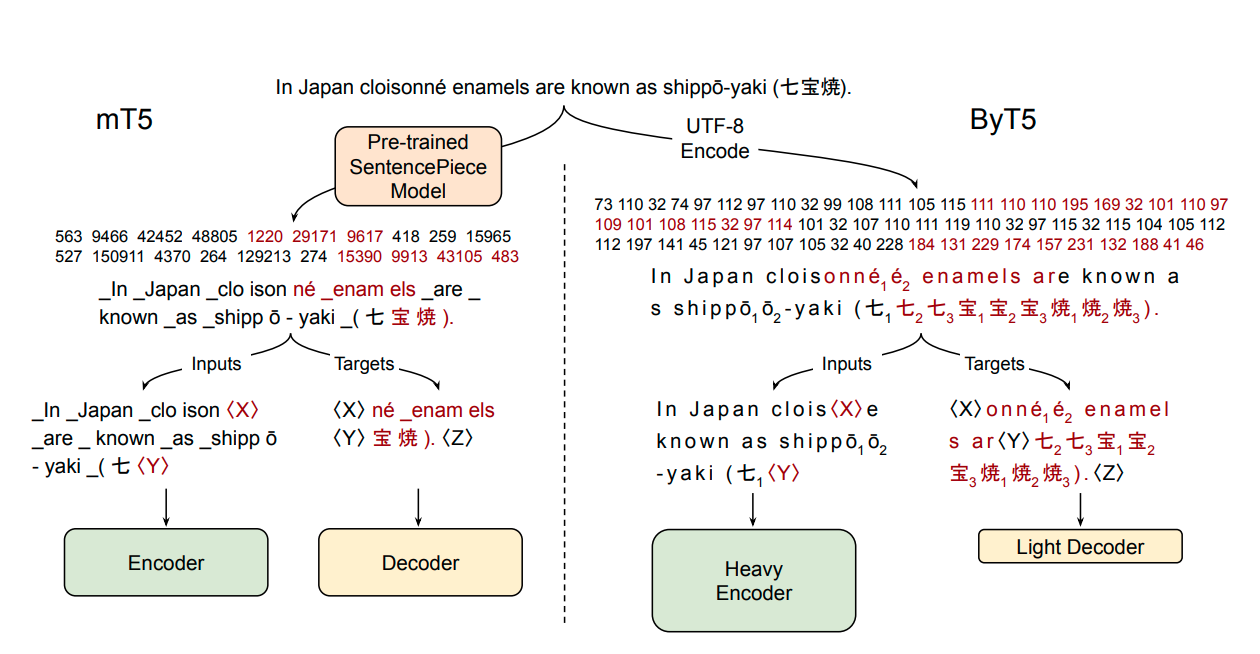

ByT5 is a tokenizer-free version of [Google's T5](https://ai.googleblog.com/2020/02/exploring-transfer-learning-with-t5.html) and generally follows the architecture of [MT5](https://huggingface.co/google/mt5-small).

|

| 114 |

+

|

| 115 |

+

ByT5 was only pre-trained on [mC4](https://www.tensorflow.org/datasets/catalog/c4#c4multilingual) excluding any supervised training with an average span-mask of 20 UTF-8 characters. Therefore, this model has to be fine-tuned before it is useable on a downstream task.

|

| 116 |

+

|

| 117 |

+

ByT5 works especially well on noisy text data,*e.g.*, `google/byt5-small` significantly outperforms [mt5-small](https://huggingface.co/google/mt5-small) on [TweetQA](https://arxiv.org/abs/1907.06292).

|

| 118 |

+

|

| 119 |

+

Paper: [ByT5: Towards a token-free future with pre-trained byte-to-byte models](https://arxiv.org/abs/2105.13626)

|

| 120 |

+

|

| 121 |

+

Authors: *Linting Xue, Aditya Barua, Noah Constant, Rami Al-Rfou, Sharan Narang, Mihir Kale, Adam Roberts, Colin Raffel*

|

| 122 |

+

|

| 123 |

+

## Example Inference

|

| 124 |

+

|

| 125 |

+

ByT5 works on raw UTF-8 bytes and can be used without a tokenizer:

|

| 126 |

+

|

| 127 |

+

```python

|

| 128 |

+

from transformers import T5ForConditionalGeneration

|

| 129 |

+

import torch

|

| 130 |

+

|

| 131 |

+

model = T5ForConditionalGeneration.from_pretrained('google/byt5-small')

|

| 132 |

+

|

| 133 |

+

input_ids = torch.tensor([list("Life is like a box of chocolates.".encode("utf-8"))]) + 3 # add 3 for special tokens

|

| 134 |

+

labels = torch.tensor([list("La vie est comme une boîte de chocolat.".encode("utf-8"))]) + 3 # add 3 for special tokens

|

| 135 |

+

|

| 136 |

+

loss = model(input_ids, labels=labels).loss # forward pass

|

| 137 |

+

```

|

| 138 |

+

|

| 139 |

+

For batched inference & training it is however recommended using a tokenizer class for padding:

|

| 140 |

+

|

| 141 |

+

```python

|

| 142 |

+

from transformers import T5ForConditionalGeneration, AutoTokenizer

|

| 143 |

+

|

| 144 |

+

model = T5ForConditionalGeneration.from_pretrained('google/byt5-small')

|

| 145 |

+

tokenizer = AutoTokenizer.from_pretrained('google/byt5-small')

|

| 146 |

+

|

| 147 |

+

model_inputs = tokenizer(["Life is like a box of chocolates.", "Today is Monday."], padding="longest", return_tensors="pt")

|

| 148 |

+

labels = tokenizer(["La vie est comme une boîte de chocolat.", "Aujourd'hui c'est lundi."], padding="longest", return_tensors="pt").input_ids

|

| 149 |

+

|

| 150 |

+

loss = model(**model_inputs, labels=labels).loss # forward pass

|

| 151 |

+

```

|

| 152 |

+

|

| 153 |

+

## Abstract

|

| 154 |

+

|

| 155 |

+

Most widely-used pre-trained language models operate on sequences of tokens corresponding to word or subword units. Encoding text as a sequence of tokens requires a tokenizer, which is typically created as an independent artifact from the model. Token-free models that instead operate directly on raw text (bytes or characters) have many benefits: they can process text in any language out of the box, they are more robust to noise, and they minimize technical debt by removing complex and error-prone text preprocessing pipelines. Since byte or character sequences are longer than token sequences, past work on token-free models has often introduced new model architectures designed to amortize the cost of operating directly on raw text. In this paper, we show that a standard Transformer architecture can be used with minimal modifications to process byte sequences. We carefully characterize the trade-offs in terms of parameter count, training FLOPs, and inference speed, and show that byte-level models are competitive with their token-level counterparts. We also demonstrate that byte-level models are significantly more robust to noise and perform better on tasks that are sensitive to spelling and pronunciation. As part of our contribution, we release a new set of pre-trained byte-level Transformer models based on the T5 architecture, as well as all code and data used in our experiments.

|

| 156 |

+

|

| 157 |

+

|

| 158 |

+

|
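The `+ 3` offset in the first README example can be illustrated without loading the model. The sketch below assumes, as that example's comment states, that the three lowest ids are reserved for special tokens (config.json gives `pad_token_id` 0 and `eos_token_id` 1); `encode` and `decode` are hypothetical helper names, not part of `transformers`.

```python
OFFSET = 3             # ids 0..2 are reserved for special tokens
NUM_BYTE_VALUES = 256  # a byte can take 256 distinct values

def encode(text: str) -> list[int]:
    """Map text to ByT5-style ids: each UTF-8 byte value shifted by OFFSET."""
    return [byte + OFFSET for byte in text.encode("utf-8")]

def decode(ids: list[int]) -> str:
    """Invert encode(), skipping any id that does not correspond to a byte."""
    raw = bytes(i - OFFSET for i in ids if OFFSET <= i < OFFSET + NUM_BYTE_VALUES)
    return raw.decode("utf-8")

ids = encode("boîte")
print(ids)          # one id per byte, so "î" contributes two ids
print(decode(ids))
```

Because ids map to bytes rather than characters, non-ASCII text produces more ids than it has characters, which is one reason byte-level sequences are longer than subword sequences.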

config.json

ADDED

@@ -0,0 +1,28 @@
+{
+  "_name_or_path": "/home/patrick/t5/byt5-small",
+  "architectures": [
+    "T5ForConditionalGeneration"
+  ],
+  "d_ff": 3584,
+  "d_kv": 64,
+  "d_model": 1472,
+  "decoder_start_token_id": 0,
+  "dropout_rate": 0.1,
+  "eos_token_id": 1,
+  "feed_forward_proj": "gated-gelu",
+  "gradient_checkpointing": false,
+  "initializer_factor": 1.0,
+  "is_encoder_decoder": true,
+  "layer_norm_epsilon": 1e-06,
+  "model_type": "t5",
+  "num_decoder_layers": 4,
+  "num_heads": 6,
+  "num_layers": 12,
+  "pad_token_id": 0,
+  "relative_attention_num_buckets": 32,
+  "tie_word_embeddings": false,
+  "tokenizer_class": "ByT5Tokenizer",
+  "transformers_version": "4.7.0.dev0",
+  "use_cache": true,
+  "vocab_size": 384
+}
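A few derived quantities make the shape of this configuration easier to read. The sketch below copies values from the config.json hunk into a plain dictionary (no `transformers` import) and computes the attention inner dimension and the encoder-to-decoder depth ratio; the variable names are illustrative.

```python
# Values copied from the config.json hunk.
config = {
    "d_model": 1472,
    "d_kv": 64,
    "d_ff": 3584,
    "num_heads": 6,
    "num_layers": 12,         # encoder blocks
    "num_decoder_layers": 4,  # decoder blocks
}

# In T5, attention projects d_model to num_heads * d_kv, which need not equal d_model.
inner_dim = config["num_heads"] * config["d_kv"]
depth_ratio = config["num_layers"] / config["num_decoder_layers"]

print(f"attention inner dim: {inner_dim} (d_model = {config['d_model']})")
print(f"encoder/decoder depth ratio: {depth_ratio:.0f}:1")
```

The encoder here is three times deeper than the decoder, and the attention inner dimension is much smaller than `d_model`.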

flax_model.msgpack

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:b3aafee96d60e98aa18b3c7f73a2c5a2360f1f2f6df79361190a4c9e05c5ab21
+size 1198558445
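The three added lines are a Git LFS pointer, not the weights themselves: a spec version, a SHA-256 object id, and the object size in bytes. A minimal parsing sketch follows; `parse_lfs_pointer` is a hypothetical helper, and it assumes each line is a key followed by a single space and the value.

```python
def parse_lfs_pointer(text: str) -> dict[str, str]:
    """Parse the 'key value' lines of a Git LFS pointer file into a dict."""
    fields = {}
    for line in text.strip().splitlines():
        key, _, value = line.partition(" ")
        fields[key] = value
    return fields

# Pointer contents copied from the flax_model.msgpack hunk.
pointer = """\
version https://git-lfs.github.com/spec/v1
oid sha256:b3aafee96d60e98aa18b3c7f73a2c5a2360f1f2f6df79361190a4c9e05c5ab21
size 1198558445
"""

fields = parse_lfs_pointer(pointer)
algorithm, _, digest = fields["oid"].partition(":")
size_gb = int(fields["size"]) / 1e9  # decimal gigabytes
print(algorithm, len(digest), f"{size_gb:.2f} GB")
```

The same helper applies unchanged to the `pytorch_model.bin` and `tf_model.h5` pointers in this commit.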

generation_config.json

ADDED

@@ -0,0 +1,7 @@
+{
+  "_from_model_config": true,
+  "decoder_start_token_id": 0,
+  "eos_token_id": 1,
+  "pad_token_id": 0,
+  "transformers_version": "4.27.0.dev0"
+}

pytorch_model.bin

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:5c5aaf56299d6f2d4eaadad550a40765198828ead4d74f0a15f91cbe0961931a
+size 1198627927

special_tokens_map.json

ADDED

@@ -0,0 +1 @@
+{"eos_token": {"content": "</s>", "single_word": false, "lstrip": false, "rstrip": false, "normalized": true}, "unk_token": {"content": "<unk>", "single_word": false, "lstrip": false, "rstrip": false, "normalized": true}, "pad_token": {"content": "<pad>", "single_word": false, "lstrip": false, "rstrip": false, "normalized": true}, "additional_special_tokens": ["<extra_id_0>", "<extra_id_1>", "<extra_id_2>", "<extra_id_3>", "<extra_id_4>", "<extra_id_5>", "<extra_id_6>", "<extra_id_7>", "<extra_id_8>", "<extra_id_9>", "<extra_id_10>", "<extra_id_11>", "<extra_id_12>", "<extra_id_13>", "<extra_id_14>", "<extra_id_15>", "<extra_id_16>", "<extra_id_17>", "<extra_id_18>", "<extra_id_19>", "<extra_id_20>", "<extra_id_21>", "<extra_id_22>", "<extra_id_23>", "<extra_id_24>", "<extra_id_25>", "<extra_id_26>", "<extra_id_27>", "<extra_id_28>", "<extra_id_29>", "<extra_id_30>", "<extra_id_31>", "<extra_id_32>", "<extra_id_33>", "<extra_id_34>", "<extra_id_35>", "<extra_id_36>", "<extra_id_37>", "<extra_id_38>", "<extra_id_39>", "<extra_id_40>", "<extra_id_41>", "<extra_id_42>", "<extra_id_43>", "<extra_id_44>", "<extra_id_45>", "<extra_id_46>", "<extra_id_47>", "<extra_id_48>", "<extra_id_49>", "<extra_id_50>", "<extra_id_51>", "<extra_id_52>", "<extra_id_53>", "<extra_id_54>", "<extra_id_55>", "<extra_id_56>", "<extra_id_57>", "<extra_id_58>", "<extra_id_59>", "<extra_id_60>", "<extra_id_61>", "<extra_id_62>", "<extra_id_63>", "<extra_id_64>", "<extra_id_65>", "<extra_id_66>", "<extra_id_67>", "<extra_id_68>", "<extra_id_69>", "<extra_id_70>", "<extra_id_71>", "<extra_id_72>", "<extra_id_73>", "<extra_id_74>", "<extra_id_75>", "<extra_id_76>", "<extra_id_77>", "<extra_id_78>", "<extra_id_79>", "<extra_id_80>", "<extra_id_81>", "<extra_id_82>", "<extra_id_83>", "<extra_id_84>", "<extra_id_85>", "<extra_id_86>", "<extra_id_87>", "<extra_id_88>", "<extra_id_89>", "<extra_id_90>", "<extra_id_91>", "<extra_id_92>", "<extra_id_93>", "<extra_id_94>", "<extra_id_95>", "<extra_id_96>", "<extra_id_97>", "<extra_id_98>", "<extra_id_99>", "<extra_id_100>", "<extra_id_101>", "<extra_id_102>", "<extra_id_103>", "<extra_id_104>", "<extra_id_105>", "<extra_id_106>", "<extra_id_107>", "<extra_id_108>", "<extra_id_109>", "<extra_id_110>", "<extra_id_111>", "<extra_id_112>", "<extra_id_113>", "<extra_id_114>", "<extra_id_115>", "<extra_id_116>", "<extra_id_117>", "<extra_id_118>", "<extra_id_119>", "<extra_id_120>", "<extra_id_121>", "<extra_id_122>", "<extra_id_123>", "<extra_id_124>"]}

tf_model.h5

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:f97320dd5eb49cb2323a21d584cef7c1cfc9a0976efa978fcef438676b952bc2
+size 1198900664

tokenizer_config.json

ADDED

@@ -0,0 +1 @@
+{"eos_token": {"content": "</s>", "single_word": false, "lstrip": false, "rstrip": false, "normalized": true, "__type": "AddedToken"}, "unk_token": {"content": "<unk>", "single_word": false, "lstrip": false, "rstrip": false, "normalized": true, "__type": "AddedToken"}, "pad_token": {"content": "<pad>", "single_word": false, "lstrip": false, "rstrip": false, "normalized": true, "__type": "AddedToken"}, "extra_ids": 125, "additional_special_tokens": ["<extra_id_0>", "<extra_id_1>", "<extra_id_2>", "<extra_id_3>", "<extra_id_4>", "<extra_id_5>", "<extra_id_6>", "<extra_id_7>", "<extra_id_8>", "<extra_id_9>", "<extra_id_10>", "<extra_id_11>", "<extra_id_12>", "<extra_id_13>", "<extra_id_14>", "<extra_id_15>", "<extra_id_16>", "<extra_id_17>", "<extra_id_18>", "<extra_id_19>", "<extra_id_20>", "<extra_id_21>", "<extra_id_22>", "<extra_id_23>", "<extra_id_24>", "<extra_id_25>", "<extra_id_26>", "<extra_id_27>", "<extra_id_28>", "<extra_id_29>", "<extra_id_30>", "<extra_id_31>", "<extra_id_32>", "<extra_id_33>", "<extra_id_34>", "<extra_id_35>", "<extra_id_36>", "<extra_id_37>", "<extra_id_38>", "<extra_id_39>", "<extra_id_40>", "<extra_id_41>", "<extra_id_42>", "<extra_id_43>", "<extra_id_44>", "<extra_id_45>", "<extra_id_46>", "<extra_id_47>", "<extra_id_48>", "<extra_id_49>", "<extra_id_50>", "<extra_id_51>", "<extra_id_52>", "<extra_id_53>", "<extra_id_54>", "<extra_id_55>", "<extra_id_56>", "<extra_id_57>", "<extra_id_58>", "<extra_id_59>", "<extra_id_60>", "<extra_id_61>", "<extra_id_62>", "<extra_id_63>", "<extra_id_64>", "<extra_id_65>", "<extra_id_66>", "<extra_id_67>", "<extra_id_68>", "<extra_id_69>", "<extra_id_70>", "<extra_id_71>", "<extra_id_72>", "<extra_id_73>", "<extra_id_74>", "<extra_id_75>", "<extra_id_76>", "<extra_id_77>", "<extra_id_78>", "<extra_id_79>", "<extra_id_80>", "<extra_id_81>", "<extra_id_82>", "<extra_id_83>", "<extra_id_84>", "<extra_id_85>", "<extra_id_86>", "<extra_id_87>", "<extra_id_88>", "<extra_id_89>", "<extra_id_90>", "<extra_id_91>", "<extra_id_92>", "<extra_id_93>", "<extra_id_94>", "<extra_id_95>", "<extra_id_96>", "<extra_id_97>", "<extra_id_98>", "<extra_id_99>", "<extra_id_100>", "<extra_id_101>", "<extra_id_102>", "<extra_id_103>", "<extra_id_104>", "<extra_id_105>", "<extra_id_106>", "<extra_id_107>", "<extra_id_108>", "<extra_id_109>", "<extra_id_110>", "<extra_id_111>", "<extra_id_112>", "<extra_id_113>", "<extra_id_114>", "<extra_id_115>", "<extra_id_116>", "<extra_id_117>", "<extra_id_118>", "<extra_id_119>", "<extra_id_120>", "<extra_id_121>", "<extra_id_122>", "<extra_id_123>", "<extra_id_124>"]}
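The tokenizer settings are consistent with `"vocab_size": 384` in config.json: 256 possible byte values, plus the three special tokens (`<pad>`, `</s>`, `<unk>`), plus 125 `<extra_id_*>` sentinels. The sketch below checks that sum with plain Python; the constant names are illustrative, and it makes no claim about how ids are laid out inside the tokenizer.

```python
NUM_BYTE_VALUES = 256                        # every possible byte value
SPECIAL_TOKENS = ["<pad>", "</s>", "<unk>"]  # from special_tokens_map.json
EXTRA_IDS = 125                              # "extra_ids" in tokenizer_config.json

# Sentinel names follow the pattern listed under "additional_special_tokens".
sentinels = [f"<extra_id_{i}>" for i in range(EXTRA_IDS)]
vocab_size = len(SPECIAL_TOKENS) + NUM_BYTE_VALUES + len(sentinels)

print(vocab_size)
print(sentinels[0], sentinels[-1])
```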