Sparse-Llama-3.1-8B-tldr-2of4

Model Overview

- Model Architecture: LlamaForCausalLM

- Input: Text

- Output: Text

- Model Optimizations:
  - Sparsity: 2:4

- Release Date: 05/29/2025

- Version: 1.0

- Intended Use Cases: This model is fine-tuned to write TL;DR-style summaries of Reddit posts.

- Out-of-scope: Use in any manner that violates applicable laws or regulations (including trade compliance laws). Use in any other way that is prohibited by the Acceptable Use Policy and Llama 3.1 Community License.

- Model Developers: Red Hat (Neural Magic)

This model is a fine-tuned version of the 2:4 sparse model RedHatAI/Sparse-Llama-3.1-8B-2of4 on the trl-lib/tldr dataset. This sparse model recovers 100% of the BERTScore (0.366) obtained by the dense model RedHatAI/Llama-3.1-8B-tldr.
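2:4 (semi-structured) sparsity means that in every contiguous group of four weights, at most two are nonzero, a pattern that NVIDIA GPUs with sparse tensor cores (Ampere and newer) can accelerate. The pure-Python sketch below illustrates the pattern on a single row of weights. Keeping the two largest-magnitude values per group is an assumption made for illustration only; it is not necessarily how RedHatAI/Sparse-Llama-3.1-8B-2of4 was pruned, and the helper names `prune_2_of_4` and `is_2_of_4` are hypothetical.

```python
def prune_2_of_4(row: list[float]) -> list[float]:
    """Illustrative only: keep the 2 largest-magnitude weights in each group of 4."""
    if len(row) % 4 != 0:
        raise ValueError("row length must be a multiple of 4")
    out: list[float] = []
    for i in range(0, len(row), 4):
        group = row[i:i + 4]
        # Positions (0..3) of the two largest |w| in this group.
        keep = sorted(range(4), key=lambda j: abs(group[j]), reverse=True)[:2]
        out.extend(w if j in keep else 0.0 for j, w in enumerate(group))
    return out


def is_2_of_4(row: list[float]) -> bool:
    """True if every contiguous group of 4 has at most 2 nonzero entries."""
    return len(row) % 4 == 0 and all(
        sum(1 for w in row[i:i + 4] if w != 0.0) <= 2
        for i in range(0, len(row), 4)
    )


if __name__ == "__main__":
    row = [0.1, -0.9, 0.3, 0.05, 1.0, 0.2, -0.4, 0.0]
    pruned = prune_2_of_4(row)
    print(pruned)             # [0.0, -0.9, 0.3, 0.0, 1.0, 0.0, -0.4, 0.0]
    print(is_2_of_4(pruned))  # True
```

Half of every group is zero, which is why 2:4 models store and compute on roughly half the weights in the targeted layers.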

Deployment

This model can be deployed efficiently using vLLM, as shown in the example below.

Run the following command to start the vLLM server:

```bash
vllm serve RedHatAI/Sparse-Llama-3.1-8B-tldr-2of4
```

Once the server is running, you can query the model with the OpenAI Python client:

```python
from openai import OpenAI

# Placeholder key: the vLLM server started above does not check it unless launched with --api-key.
openai_api_key = "EMPTY"
openai_api_base = "http://localhost:8000/v1"

client = OpenAI(
    api_key=openai_api_key,
    base_url=openai_api_base,
)

post = """
SUBREDDIT: r/AI

TITLE: Training sparse LLMs

POST: Now you can use the llm-compressor integration to axolotl to train sparse LLMs!

It's super easy to use. See the example in https://huggingface.co/RedHatAI/Sparse-Llama-3.1-8B-tldr-2of4.

And there's more. You can run 2:4 sparse models on vLLM and get significant speedups on Hopper GPUs!
"""

prompt = f"Give a TL;DR of the following Reddit post.\n<|user|>{post}\nTL;DR:\n<|assistant|>\n"

completion = client.completions.create(
    model="RedHatAI/Sparse-Llama-3.1-8B-tldr-2of4",
    prompt=prompt,
    max_tokens=256,
)

# Print only the generated summary text rather than the full response object.
print("Completion result:", completion.choices[0].text)
```

Training

The model was fine-tuned with axolotl version 0.10.0.dev0 using the following configuration:

```yaml
base_model: RedHatAI/Sparse-Llama-3.1-8B-2of4

load_in_8bit: false
load_in_4bit: false
strict: false

datasets:
  - path: trl-lib/tldr
    type:
      system_prompt: "Give a TL;DR of the following Reddit post."
      field_system: system
      field_instruction: prompt
      field_output: completion
      format: "<|user|>\n{instruction}\n<|assistant|>\n"
      no_input_format: "<|user|>\n{instruction}\n<|assistant|>\n"
    split: train

sequence_len: 4096
sample_packing: true
pad_to_sequence_len: true
eval_sample_packing: true

torch.compile: true

gradient_accumulation_steps: 1
micro_batch_size: 4
num_epochs: 2
optimizer: adamw_bnb_8bit
lr_scheduler: cosine
learning_rate: 2e-5
max_grad_norm: 3

gradient_checkpointing: true
gradient_checkpointing_kwargs:
  use_reentrant: false

train_on_inputs: false
bf16: auto
fp16:
tf32: false

early_stopping_patience:
resume_from_checkpoint:
logging_steps: 1
flash_attention: true

warmup_ratio: 0.05
evals_per_epoch: 4
val_set_size: 0.05
save_strategy: "best"
save_total_limit: 1
metric_for_best_model: "loss"

debug:
deepspeed:
weight_decay: 0.0

special_tokens:
  pad_token: "<|end_of_text|>"

seed: 0

plugins:
  - axolotl.integrations.liger.LigerPlugin
  - axolotl.integrations.llm_compressor.LLMCompressorPlugin

liger_rope: true
liger_rms_norm: true
liger_glu_activation: true
liger_layer_norm: true
liger_fused_linear_cross_entropy: true

llmcompressor:
  recipe:
    finetuning_stage:
      finetuning_modifiers:
        ConstantPruningModifier:
          targets: [
            're:.*q_proj.weight',
            're:.*k_proj.weight',
            're:.*v_proj.weight',
            're:.*o_proj.weight',
            're:.*gate_proj.weight',
            're:.*up_proj.weight',
            're:.*down_proj.weight',
          ]
          start: 0
  save_compressed: true
```
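The `ConstantPruningModifier` in the recipe targets the attention and MLP projection weights; as we understand llm-compressor, it holds the existing sparsity mask fixed so that weights pruned in the base model stay at zero throughout fine-tuning, preserving the 2:4 pattern. The sketch below illustrates only that idea, using plain SGD on a flat list of weights. It is not llm-compressor's implementation: `sparsity_mask` and `masked_sgd_step` are hypothetical names, and the actual run used `adamw_bnb_8bit` rather than SGD.

```python
def sparsity_mask(weights: list[float]) -> list[bool]:
    """True where a weight survived pruning, False where it is zero."""
    return [w != 0.0 for w in weights]


def masked_sgd_step(
    weights: list[float], grads: list[float], mask: list[bool], lr: float
) -> list[float]:
    """Plain SGD update, then re-apply the fixed mask so pruned weights stay zero."""
    updated = [w - lr * g for w, g in zip(weights, grads)]
    return [w if keep else 0.0 for w, keep in zip(updated, mask)]


if __name__ == "__main__":
    weights = [0.0, -0.9, 0.3, 0.0]   # one 2:4 group with two weights already pruned
    mask = sparsity_mask(weights)      # computed once, before fine-tuning starts
    grads = [0.5, 0.1, -0.2, 0.3]      # pruned positions still receive gradients
    new = masked_sgd_step(weights, grads, mask, lr=0.1)
    print([round(w, 4) for w in new])  # [0.0, -0.91, 0.32, 0.0]
```

Computing the mask once and reapplying it after every update is what keeps the sparsity "constant": the surviving weights adapt to the new task while the zero pattern that vLLM exploits is left unchanged.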

Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 4

- eval_batch_size: 4

- seed: 0

- distributed_type: multi-GPU

- num_devices: 8

- total_train_batch_size: 32

- total_eval_batch_size: 32

- optimizer: adamw_bnb_8bit with betas=(0.9, 0.999), epsilon=1e-08, and no additional optimizer arguments

- lr_scheduler_type: cosine

- lr_scheduler_warmup_steps: 32

- num_epochs: 2.0
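The total train batch size of 32 follows from micro_batch_size × gradient_accumulation_steps × num_devices = 4 × 1 × 8; with `sample_packing` enabled, each of those items is roughly one packed sequence of up to 4096 tokens. The sketch below spells out that arithmetic and checks that the 32 warmup steps are consistent with `warmup_ratio: 0.05`. The steps-per-epoch figure is our own estimate from the training-results table (step 82 logged at epoch 0.2508), not a reported value, and both function names are hypothetical.

```python
def effective_batch_size(micro_batch_size: int, grad_accum_steps: int, num_devices: int) -> int:
    """Items (packed sequences here) contributing to one optimizer step across all devices."""
    return micro_batch_size * grad_accum_steps * num_devices


def warmup_fraction(warmup_steps: int, steps_per_epoch: int, num_epochs: int) -> float:
    """Share of all optimizer steps spent in learning-rate warmup."""
    return warmup_steps / (steps_per_epoch * num_epochs)


# Estimated, not reported: step 82 was logged at epoch 0.2508 in the training-results table.
steps_per_epoch = round(82 / 0.2508)  # 327

print(effective_batch_size(4, 1, 8))                     # 32
print(f"{warmup_fraction(32, steps_per_epoch, 2):.3f}")  # 0.049
```

A warmup share of about 0.049 of roughly 654 total steps is in line with the configured ratio of 0.05.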

Training results

| Training Loss | Epoch | Step | Validation Loss |
|---|---|---|---|
| 2.2584 | 0.0031 | 1 | 2.2290 |
| 1.8562 | 0.2508 | 82 | 1.8338 |
| 1.7814 | 0.5015 | 164 | 1.8197 |
| 1.7918 | 0.7523 | 246 | 1.8117 |
| 1.8262 | 1.0031 | 328 | 1.8072 |
| 1.7782 | 1.2538 | 410 | 1.8069 |
| 1.6955 | 1.5046 | 492 | 1.8065 |
| 1.762 | 1.7554 | 574 | 1.8064 |

Framework versions

- Transformers 4.51.3

- Pytorch 2.7.0+cu126

- Datasets 3.5.1

- Tokenizers 0.21.1

Evaluation

The model was evaluated on the test split of trl-lib/tldr using the Neural Magic fork of lm-evaluation-harness (tldr branch). These results can be reproduced with the following command:

```bash
lm_eval --model vllm --model_args "pretrained=RedHatAI/Sparse-Llama-3.1-8B-tldr-2of4,dtype=auto,add_bos_token=True" --batch-size auto --tasks tldr
```

| Metric | Llama-3.1-8B-Instruct | Llama-3.1-8B-tldr | Sparse-Llama-3.1-8B-tldr-2of4 (this model) |
|---|---|---|---|
| BERTScore | -0.230 | 0.366 | 0.366 |
| ROUGE-1 | 0.059 | 0.362 | 0.357 |
| ROUGE-2 | 0.018 | 0.144 | 0.141 |
| ROUGE-Lsum | 0.051 | 0.306 | 0.304 |
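Recovery is expressed here as the sparse model's score divided by the dense model's score. This definition is an assumption on our part, though it matches the 100% BERTScore recovery stated earlier. The sketch below applies it to the scores in the table; `recovery` is a hypothetical helper name.

```python
# Scores copied from the evaluation table (dense Llama-3.1-8B-tldr vs. this sparse model).
dense = {"BERTScore": 0.366, "ROUGE-1": 0.362, "ROUGE-2": 0.144, "ROUGE-Lsum": 0.306}
sparse = {"BERTScore": 0.366, "ROUGE-1": 0.357, "ROUGE-2": 0.141, "ROUGE-Lsum": 0.304}


def recovery(sparse_score: float, dense_score: float) -> float:
    """Sparse score as a percentage of the dense score."""
    return 100.0 * sparse_score / dense_score


for metric in dense:
    print(f"{metric}: {recovery(sparse[metric], dense[metric]):.1f}%")
# BERTScore: 100.0%
# ROUGE-1: 98.6%
# ROUGE-2: 97.9%
# ROUGE-Lsum: 99.3%
```

By this measure the sparse model stays within about 2% of the dense model on every ROUGE variant.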

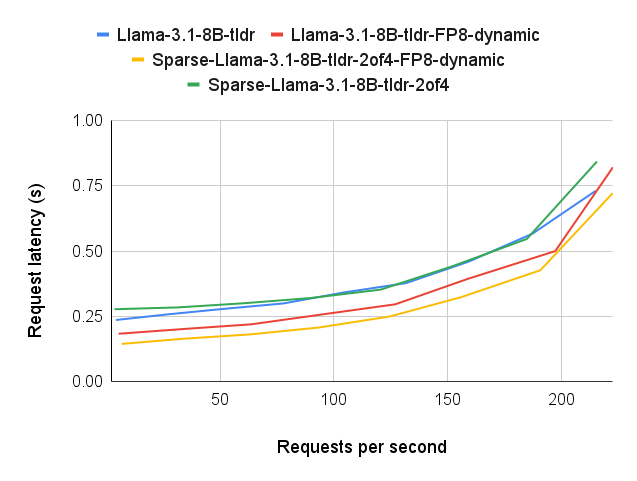

Inference Performance

We evaluated the inference performance of this model using the first 1,000 samples from the training set of the trl-lib/tldr dataset.

Benchmarking was conducted with vLLM version 0.9.0.1 and GuideLLM version 0.2.1.

The figure below shows the mean end-to-end latency per request across a range of request rates, for this model and for three variants:

- Dense: Llama-3.1-8B-tldr

- Dense-quantized: Llama-3.1-8B-tldr-FP8-dynamic

- Sparse-quantized: Sparse-Llama-3.1-8B-tldr-2of4-FP8-dynamic

Sparsity alone does not significantly reduce latency, but combined with FP8 quantization it yields a speedup of up to 1.6x.

Reproduction instructions

To replicate the benchmark:

- Generate a JSON file containing the first 1,000 training samples:

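```python
from datasets import load_dataset

# Take the first 1,000 rows of the training split.
ds = load_dataset("trl-lib/tldr", split="train").take(1000)

# to_json writes JSON Lines (one record per line) by default.
ds.to_json("tldr_1000.json")
```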

- Start a vLLM server using your target model:

```bash
vllm serve RedHatAI/Sparse-Llama-3.1-8B-tldr-2of4
```

- Run the benchmark with GuideLLM:

```bash
GUIDELLM__OPENAI__MAX_OUTPUT_TOKENS=128 guidellm benchmark --target "http://localhost:8000" --rate-type sweep --data tldr_1000.json
```

The average output length is approximately 30 tokens per sample. We capped generation at 128 tokens to limit skew from rare, unusually verbose completions.
