repo_id

stringlengths 4

110

| author

stringlengths 2

27

⌀ | model_type

stringlengths 2

29

⌀ | files_per_repo

int64 2

15.4k

| downloads_30d

int64 0

19.9M

| library

stringlengths 2

37

⌀ | likes

int64 0

4.34k

| pipeline

stringlengths 5

30

⌀ | pytorch

bool 2

classes | tensorflow

bool 2

classes | jax

bool 2

classes | license

stringlengths 2

30

| languages

stringlengths 4

1.63k

⌀ | datasets

stringlengths 2

2.58k

⌀ | co2

stringclasses 29

values | prs_count

int64 0

125

| prs_open

int64 0

120

| prs_merged

int64 0

15

| prs_closed

int64 0

28

| discussions_count

int64 0

218

| discussions_open

int64 0

148

| discussions_closed

int64 0

70

| tags

stringlengths 2

513

| has_model_index

bool 2

classes | has_metadata

bool 1

class | has_text

bool 1

class | text_length

int64 401

598k

| is_nc

bool 1

class | readme

stringlengths 0

598k

| hash

stringlengths 32

32

|

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

JacksonYan/Real-CUGAN

|

JacksonYan

| null | 16 | 0 | null | 1 | null | false | false | false |

mit

| null | null | null | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

[]

| false | true | true | 1,219 | false |

> From <https://github.com/bilibili/ailab/tree/main/Real-CUGAN>

# Configuration

`title`: _string_

Display title for the Space

`emoji`: _string_

Space emoji (emoji-only character allowed)

`colorFrom`: _string_

Color for Thumbnail gradient (red, yellow, green, blue, indigo, purple, pink, gray)

`colorTo`: _string_

Color for Thumbnail gradient (red, yellow, green, blue, indigo, purple, pink, gray)

`sdk`: _string_

Can be either `gradio`, `streamlit`, or `static`

`sdk_version` : _string_

Only applicable for `streamlit` SDK.

See [doc](https://hf.co/docs/hub/spaces) for more info on supported versions.

`app_file`: _string_

Path to your main application file (which contains either `gradio` or `streamlit` Python code, or `static` html code).

Path is relative to the root of the repository.

`models`: _List[string]_

HF model IDs (like "gpt2" or "deepset/roberta-base-squad2") used in the Space.

Will be parsed automatically from your code if not specified here.

`datasets`: _List[string]_

HF dataset IDs (like "common_voice" or "oscar-corpus/OSCAR-2109") used in the Space.

Will be parsed automatically from your code if not specified here.

`pinned`: _boolean_

Whether the Space stays on top of your list.

|

5b1b899e5e6b856c2ee8dc6e79213714

|

sd-concepts-library/naval-portrait

|

sd-concepts-library

| null | 12 | 0 | null | 3 | null | false | false | false |

mit

| null | null | null | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

[]

| false | true | true | 1,416 | false |

### naval-portrait on Stable Diffusion

This is the `<naval-portrait>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb).

Here is the new concept you will be able to use as a `style`:

|

51069597e1f5f452de37cf8bb92187b4

|

Kilgori/correct-yes-model

|

Kilgori

| null | 20 | 84 |

diffusers

| 0 |

text-to-image

| false | false | false |

creativeml-openrail-m

| null | null | null | 1 | 0 | 1 | 0 | 0 | 0 | 0 |

['text-to-image', 'stable-diffusion']

| false | true | true | 426 | false |

### Correct-Yes-model Dreambooth model trained by Kilgori with [TheLastBen's fast-DreamBooth](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb) notebook

Test the concept via A1111 Colab [fast-Colab-A1111](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast_stable_diffusion_AUTOMATIC1111.ipynb)

Sample pictures of this concept:

|

d7238b6dbdcc625b9bf3d330e9ce4f61

|

bofenghuang/whisper-large-v2-french

|

bofenghuang

|

whisper

| 44 | 331 |

transformers

| 0 |

automatic-speech-recognition

| true | false | false |

apache-2.0

|

['fr']

|

['mozilla-foundation/common_voice_11_0', 'facebook/multilingual_librispeech', 'facebook/voxpopuli', 'google/fleurs', 'gigant/african_accented_french']

| null | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

['automatic-speech-recognition', 'hf-asr-leaderboard', 'whisper-event']

| true | true | true | 6,342 | false |

<style>

img {

display: inline;

}

</style>

# Fine-tuned whisper-large-v2 model for ASR in French

This model is a fine-tuned version of [openai/whisper-large-v2](https://huggingface.co/openai/whisper-large-v2), trained on a composite dataset comprising of over 2200 hours of French speech audio, using the train and the validation splits of [Common Voice 11.0](https://huggingface.co/datasets/mozilla-foundation/common_voice_11_0), [Multilingual LibriSpeech](https://huggingface.co/datasets/facebook/multilingual_librispeech), [Voxpopuli](https://github.com/facebookresearch/voxpopuli), [Fleurs](https://huggingface.co/datasets/google/fleurs), [Multilingual TEDx](http://www.openslr.org/100), [MediaSpeech](https://www.openslr.org/108), and [African Accented French](https://huggingface.co/datasets/gigant/african_accented_french). When using the model make sure that your speech input is sampled at 16Khz. **This model doesn't predict casing or punctuation.**

## Performance

*Below are the WERs of the pre-trained models on the [Common Voice 9.0](https://huggingface.co/datasets/mozilla-foundation/common_voice_9_0), [Multilingual LibriSpeech](https://huggingface.co/datasets/facebook/multilingual_librispeech), [Voxpopuli](https://github.com/facebookresearch/voxpopuli) and [Fleurs](https://huggingface.co/datasets/google/fleurs). These results are reported in the original [paper](https://cdn.openai.com/papers/whisper.pdf).*

| Model | Common Voice 9.0 | MLS | VoxPopuli | Fleurs |

| --- | :---: | :---: | :---: | :---: |

| [openai/whisper-small](https://huggingface.co/openai/whisper-small) | 22.7 | 16.2 | 15.7 | 15.0 |

| [openai/whisper-medium](https://huggingface.co/openai/whisper-medium) | 16.0 | 8.9 | 12.2 | 8.7 |

| [openai/whisper-large](https://huggingface.co/openai/whisper-large) | 14.7 | 8.9 | **11.0** | **7.7** |

| [openai/whisper-large-v2](https://huggingface.co/openai/whisper-large-v2) | **13.9** | **7.3** | 11.4 | 8.3 |

*Below are the WERs of the fine-tuned models on the [Common Voice 11.0](https://huggingface.co/datasets/mozilla-foundation/common_voice_11_0), [Multilingual LibriSpeech](https://huggingface.co/datasets/facebook/multilingual_librispeech), [Voxpopuli](https://github.com/facebookresearch/voxpopuli), and [Fleurs](https://huggingface.co/datasets/google/fleurs). Note that these evaluation datasets have been filtered and preprocessed to only contain French alphabet characters and are removed of punctuation outside of apostrophe. The results in the table are reported as `WER (greedy search) / WER (beam search with beam width 5)`.*

| Model | Common Voice 11.0 | MLS | VoxPopuli | Fleurs |

| --- | :---: | :---: | :---: | :---: |

| [bofenghuang/whisper-small-cv11-french](https://huggingface.co/bofenghuang/whisper-small-cv11-french) | 11.76 / 10.99 | 9.65 / 8.91 | 14.45 / 13.66 | 10.76 / 9.83 |

| [bofenghuang/whisper-medium-cv11-french](https://huggingface.co/bofenghuang/whisper-medium-cv11-french) | 9.03 / 8.54 | 6.34 / 5.86 | 11.64 / 11.35 | 7.13 / 6.85 |

| [bofenghuang/whisper-medium-french](https://huggingface.co/bofenghuang/whisper-medium-french) | 9.03 / 8.73 | 4.60 / 4.44 | 9.53 / 9.46 | 6.33 / 5.94 |

| [bofenghuang/whisper-large-v2-cv11-french](https://huggingface.co/bofenghuang/whisper-large-v2-cv11-french) | **8.05** / **7.67** | 5.56 / 5.28 | 11.50 / 10.69 | 5.42 / 5.05 |

| [bofenghuang/whisper-large-v2-french](https://huggingface.co/bofenghuang/whisper-large-v2-french) | 8.15 / 7.83 | **4.20** / **4.03** | **9.10** / **8.66** | **5.22** / **4.98** |

## Usage

Inference with 🤗 Pipeline

```python

import torch

from datasets import load_dataset

from transformers import pipeline

device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")

# Load pipeline

pipe = pipeline("automatic-speech-recognition", model="bofenghuang/whisper-large-v2-french", device=device)

# NB: set forced_decoder_ids for generation utils

pipe.model.config.forced_decoder_ids = pipe.tokenizer.get_decoder_prompt_ids(language="fr", task="transcribe")

# Load data

ds_mcv_test = load_dataset("mozilla-foundation/common_voice_11_0", "fr", split="test", streaming=True)

test_segment = next(iter(ds_mcv_test))

waveform = test_segment["audio"]

# Run

generated_sentences = pipe(waveform, max_new_tokens=225)["text"] # greedy

# generated_sentences = pipe(waveform, max_new_tokens=225, generate_kwargs={"num_beams": 5})["text"] # beam search

# Normalise predicted sentences if necessary

```

Inference with 🤗 low-level APIs

```python

import torch

import torchaudio

from datasets import load_dataset

from transformers import AutoProcessor, AutoModelForSpeechSeq2Seq

device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")

# Load model

model = AutoModelForSpeechSeq2Seq.from_pretrained("bofenghuang/whisper-large-v2-french").to(device)

processor = AutoProcessor.from_pretrained("bofenghuang/whisper-large-v2-french", language="french", task="transcribe")

# NB: set forced_decoder_ids for generation utils

model.config.forced_decoder_ids = processor.get_decoder_prompt_ids(language="fr", task="transcribe")

# 16_000

model_sample_rate = processor.feature_extractor.sampling_rate

# Load data

ds_mcv_test = load_dataset("mozilla-foundation/common_voice_11_0", "fr", split="test", streaming=True)

test_segment = next(iter(ds_mcv_test))

waveform = torch.from_numpy(test_segment["audio"]["array"])

sample_rate = test_segment["audio"]["sampling_rate"]

# Resample

if sample_rate != model_sample_rate:

resampler = torchaudio.transforms.Resample(sample_rate, model_sample_rate)

waveform = resampler(waveform)

# Get feat

inputs = processor(waveform, sampling_rate=model_sample_rate, return_tensors="pt")

input_features = inputs.input_features

input_features = input_features.to(device)

# Generate

generated_ids = model.generate(inputs=input_features, max_new_tokens=225) # greedy

# generated_ids = model.generate(inputs=input_features, max_new_tokens=225, num_beams=5) # beam search

# Detokenize

generated_sentences = processor.batch_decode(generated_ids, skip_special_tokens=True)[0]

# Normalise predicted sentences if necessary

```

|

f376cdb21885a53eb0708fe994e5f498

|

jmparejaz/qa_bert_finetuned-squad

|

jmparejaz

|

distilbert

| 12 | 8 |

transformers

| 0 |

question-answering

| true | false | false |

apache-2.0

| null |

['squad']

| null | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

['generated_from_trainer']

| true | true | true | 1,275 | false |

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# qa_bert_finetuned-squad

This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the squad dataset.

It achieves the following results on the evaluation set:

- Loss: 1.157358

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3

### Training results

| Training Loss | Epoch | Step | Validation Loss |

|:-------------:|:-----:|:-----:|:---------------:|

| 1.2206 | 1.0 | 5533 | 1.160322 |

| 0.9452 | 2.0 | 11066 | 1.121690 |

| 0.773 | 3.0 | 16599 | 1.157358 |

### Framework versions

- Transformers 4.25.1

- Pytorch 1.13.0+cu116

- Datasets 2.8.0

- Tokenizers 0.13.2

|

223044ba277776a580487661e231e94c

|

Helsinki-NLP/opus-mt-sv-ny

|

Helsinki-NLP

|

marian

| 10 | 8 |

transformers

| 0 |

translation

| true | true | false |

apache-2.0

| null | null | null | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

['translation']

| false | true | true | 768 | false |

### opus-mt-sv-ny

* source languages: sv

* target languages: ny

* OPUS readme: [sv-ny](https://github.com/Helsinki-NLP/OPUS-MT-train/blob/master/models/sv-ny/README.md)

* dataset: opus

* model: transformer-align

* pre-processing: normalization + SentencePiece

* download original weights: [opus-2020-01-21.zip](https://object.pouta.csc.fi/OPUS-MT-models/sv-ny/opus-2020-01-21.zip)

* test set translations: [opus-2020-01-21.test.txt](https://object.pouta.csc.fi/OPUS-MT-models/sv-ny/opus-2020-01-21.test.txt)

* test set scores: [opus-2020-01-21.eval.txt](https://object.pouta.csc.fi/OPUS-MT-models/sv-ny/opus-2020-01-21.eval.txt)

## Benchmarks

| testset | BLEU | chr-F |

|-----------------------|-------|-------|

| JW300.sv.ny | 25.9 | 0.523 |

|

d98900166af193d0998db1d4c7d017c8

|

AymanMansour/Whisper-Sudanese-Dialect-medium

|

AymanMansour

|

whisper

| 41 | 0 |

transformers

| 0 |

automatic-speech-recognition

| true | false | false |

apache-2.0

| null | null | null | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

['generated_from_trainer']

| true | true | true | 1,532 | false |

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# openai/whisper-medium

This model is a fine-tuned version of [openai/whisper-medium](https://huggingface.co/openai/whisper-medium) on the None dataset.

It achieves the following results on the evaluation set:

- Loss: 1.2201

- Wer: 44.6966

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 1e-05

- train_batch_size: 32

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_steps: 500

- training_steps: 5000

- mixed_precision_training: Native AMP

### Training results

| Training Loss | Epoch | Step | Validation Loss | Wer |

|:-------------:|:-----:|:----:|:---------------:|:-------:|

| 0.0566 | 6.02 | 1000 | 0.9354 | 47.1998 |

| 0.0025 | 13.01 | 2000 | 1.0806 | 47.5605 |

| 0.0012 | 19.03 | 3000 | 1.1642 | 47.6665 |

| 0.0002 | 26.01 | 4000 | 1.1866 | 44.9724 |

| 0.0001 | 33.0 | 5000 | 1.2201 | 44.6966 |

### Framework versions

- Transformers 4.26.0.dev0

- Pytorch 1.13.1+cu117

- Datasets 2.7.1.dev0

- Tokenizers 0.13.2

|

587a6d3e186e2eae1a19ab1a16b14319

|

gokuls/bert-base-uncased-sst2

|

gokuls

|

bert

| 17 | 66 |

transformers

| 0 |

text-classification

| true | false | false |

apache-2.0

|

['en']

|

['glue']

| null | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

['generated_from_trainer']

| true | true | true | 1,737 | false |

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# bert-base-uncased-sst2

This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on the GLUE SST2 dataset.

It achieves the following results on the evaluation set:

- Loss: 0.2333

- Accuracy: 0.9128

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 128

- eval_batch_size: 128

- seed: 10

- distributed_type: multi-GPU

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 50

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:--------:|

| 0.2103 | 1.0 | 527 | 0.2507 | 0.9048 |

| 0.1082 | 2.0 | 1054 | 0.2333 | 0.9128 |

| 0.0724 | 3.0 | 1581 | 0.2371 | 0.9186 |

| 0.0521 | 4.0 | 2108 | 0.2582 | 0.9186 |

| 0.0393 | 5.0 | 2635 | 0.3094 | 0.9220 |

| 0.0302 | 6.0 | 3162 | 0.3506 | 0.9197 |

| 0.0258 | 7.0 | 3689 | 0.4149 | 0.9071 |

| 0.0209 | 8.0 | 4216 | 0.3121 | 0.9174 |

| 0.018 | 9.0 | 4743 | 0.4919 | 0.9060 |

### Framework versions

- Transformers 4.26.0

- Pytorch 1.14.0a0+410ce96

- Datasets 2.9.0

- Tokenizers 0.13.2

|

5ebd7924e3c39ebb821afc8aa93a0055

|

MichaelHarborg/NMT_da-en_translator

|

MichaelHarborg

|

marian

| 10 | 1 |

transformers

| 0 |

text2text-generation

| true | false | false |

mit

| null | null | null | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

[]

| false | true | true | 633 | false |

Transformer model based on Vaswani et al., 2017 for Danish-English Neural Machine Translation.

It has ~74M parameters and is a fine-tuned version of Helsinki-Opus-NLP da-en.

The model achieves a BLEU score of 49.16 on a hold-out test set for the TED2020 dataset (in-domain dataset).

The model achieves a BLEU score of 44.16 on a hold-out test set for the for CCAligned and Wikimatrix (out-of-domain dataset).

This outperforms the baseline Opus model, which achieved BLEU scores of 46.74 and 42.31 on the in-domain and out-of-domain data respectively.

Note: When running inference "_" characters can sometimes replace spaces.

|

3243754312ae30219fed80e5c0071787

|

sibyl/BART-large-commongen

|

sibyl

|

bart

| 13 | 6 |

transformers

| 0 |

text2text-generation

| true | false | false |

mit

| null |

['gem']

| null | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

['generated_from_trainer']

| false | true | true | 1,957 | false |

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# BART-large-commongen

This model is a fine-tuned version of [facebook/bart-large](https://huggingface.co/facebook/bart-large) on the gem dataset.

It achieves the following results on the evaluation set:

- Loss: 1.1409

- Spice: 0.4009

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0001

- train_batch_size: 32

- eval_batch_size: 32

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_steps: 1000

- training_steps: 6317

### Training results

| Training Loss | Epoch | Step | Validation Loss | Spice |

|:-------------:|:-----:|:----:|:---------------:|:------:|

| 10.1086 | 0.05 | 100 | 4.9804 | 0.3736 |

| 4.4168 | 0.09 | 200 | 2.4402 | 0.4079 |

| 1.8158 | 0.14 | 300 | 1.1096 | 0.4258 |

| 1.1723 | 0.19 | 400 | 1.0845 | 0.4086 |

| 1.0894 | 0.24 | 500 | 1.0727 | 0.423 |

| 1.0949 | 0.28 | 600 | 1.0889 | 0.4224 |

| 1.0773 | 0.33 | 700 | 1.0977 | 0.4201 |

| 1.0708 | 0.38 | 800 | 1.1157 | 0.4213 |

| 1.0663 | 0.43 | 900 | 1.1798 | 0.421 |

| 1.0985 | 0.47 | 1000 | 1.1611 | 0.4025 |

| 1.0561 | 0.52 | 1100 | 1.1048 | 0.421 |

| 1.0594 | 0.57 | 1200 | 1.2044 | 0.3626 |

| 1.0689 | 0.62 | 1300 | 1.1409 | 0.4009 |

### Framework versions

- Transformers 4.9.2

- Pytorch 1.9.0+cu102

- Datasets 1.11.1.dev0

- Tokenizers 0.10.3

|

7fb6c1391761bc3f2b8f1e11f6a7736d

|

tomekkorbak/compassionate_elion

|

tomekkorbak

| null | 2 | 0 | null | 0 | null | false | false | false |

mit

|

['en']

|

['tomekkorbak/pii-pile-chunk3-0-50000', 'tomekkorbak/pii-pile-chunk3-50000-100000', 'tomekkorbak/pii-pile-chunk3-100000-150000', 'tomekkorbak/pii-pile-chunk3-150000-200000', 'tomekkorbak/pii-pile-chunk3-200000-250000', 'tomekkorbak/pii-pile-chunk3-250000-300000', 'tomekkorbak/pii-pile-chunk3-300000-350000', 'tomekkorbak/pii-pile-chunk3-350000-400000', 'tomekkorbak/pii-pile-chunk3-400000-450000', 'tomekkorbak/pii-pile-chunk3-450000-500000', 'tomekkorbak/pii-pile-chunk3-500000-550000', 'tomekkorbak/pii-pile-chunk3-550000-600000', 'tomekkorbak/pii-pile-chunk3-600000-650000', 'tomekkorbak/pii-pile-chunk3-650000-700000', 'tomekkorbak/pii-pile-chunk3-700000-750000', 'tomekkorbak/pii-pile-chunk3-750000-800000', 'tomekkorbak/pii-pile-chunk3-800000-850000', 'tomekkorbak/pii-pile-chunk3-850000-900000', 'tomekkorbak/pii-pile-chunk3-900000-950000', 'tomekkorbak/pii-pile-chunk3-950000-1000000', 'tomekkorbak/pii-pile-chunk3-1000000-1050000', 'tomekkorbak/pii-pile-chunk3-1050000-1100000', 'tomekkorbak/pii-pile-chunk3-1100000-1150000', 'tomekkorbak/pii-pile-chunk3-1150000-1200000', 'tomekkorbak/pii-pile-chunk3-1200000-1250000', 'tomekkorbak/pii-pile-chunk3-1250000-1300000', 'tomekkorbak/pii-pile-chunk3-1300000-1350000', 'tomekkorbak/pii-pile-chunk3-1350000-1400000', 'tomekkorbak/pii-pile-chunk3-1400000-1450000', 'tomekkorbak/pii-pile-chunk3-1450000-1500000', 'tomekkorbak/pii-pile-chunk3-1500000-1550000', 'tomekkorbak/pii-pile-chunk3-1550000-1600000', 'tomekkorbak/pii-pile-chunk3-1600000-1650000', 'tomekkorbak/pii-pile-chunk3-1650000-1700000', 'tomekkorbak/pii-pile-chunk3-1700000-1750000', 'tomekkorbak/pii-pile-chunk3-1750000-1800000', 'tomekkorbak/pii-pile-chunk3-1800000-1850000', 'tomekkorbak/pii-pile-chunk3-1850000-1900000', 'tomekkorbak/pii-pile-chunk3-1900000-1950000']

| null | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

['generated_from_trainer']

| true | true | true | 8,594 | false |

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# compassionate_elion

This model was trained from scratch on the tomekkorbak/pii-pile-chunk3-0-50000, the tomekkorbak/pii-pile-chunk3-50000-100000, the tomekkorbak/pii-pile-chunk3-100000-150000, the tomekkorbak/pii-pile-chunk3-150000-200000, the tomekkorbak/pii-pile-chunk3-200000-250000, the tomekkorbak/pii-pile-chunk3-250000-300000, the tomekkorbak/pii-pile-chunk3-300000-350000, the tomekkorbak/pii-pile-chunk3-350000-400000, the tomekkorbak/pii-pile-chunk3-400000-450000, the tomekkorbak/pii-pile-chunk3-450000-500000, the tomekkorbak/pii-pile-chunk3-500000-550000, the tomekkorbak/pii-pile-chunk3-550000-600000, the tomekkorbak/pii-pile-chunk3-600000-650000, the tomekkorbak/pii-pile-chunk3-650000-700000, the tomekkorbak/pii-pile-chunk3-700000-750000, the tomekkorbak/pii-pile-chunk3-750000-800000, the tomekkorbak/pii-pile-chunk3-800000-850000, the tomekkorbak/pii-pile-chunk3-850000-900000, the tomekkorbak/pii-pile-chunk3-900000-950000, the tomekkorbak/pii-pile-chunk3-950000-1000000, the tomekkorbak/pii-pile-chunk3-1000000-1050000, the tomekkorbak/pii-pile-chunk3-1050000-1100000, the tomekkorbak/pii-pile-chunk3-1100000-1150000, the tomekkorbak/pii-pile-chunk3-1150000-1200000, the tomekkorbak/pii-pile-chunk3-1200000-1250000, the tomekkorbak/pii-pile-chunk3-1250000-1300000, the tomekkorbak/pii-pile-chunk3-1300000-1350000, the tomekkorbak/pii-pile-chunk3-1350000-1400000, the tomekkorbak/pii-pile-chunk3-1400000-1450000, the tomekkorbak/pii-pile-chunk3-1450000-1500000, the tomekkorbak/pii-pile-chunk3-1500000-1550000, the tomekkorbak/pii-pile-chunk3-1550000-1600000, the tomekkorbak/pii-pile-chunk3-1600000-1650000, the tomekkorbak/pii-pile-chunk3-1650000-1700000, the tomekkorbak/pii-pile-chunk3-1700000-1750000, the tomekkorbak/pii-pile-chunk3-1750000-1800000, the tomekkorbak/pii-pile-chunk3-1800000-1850000, the tomekkorbak/pii-pile-chunk3-1850000-1900000 and the tomekkorbak/pii-pile-chunk3-1900000-1950000 datasets.

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0005

- train_batch_size: 16

- eval_batch_size: 8

- seed: 42

- gradient_accumulation_steps: 8

- total_train_batch_size: 128

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_ratio: 0.01

- training_steps: 2362

- mixed_precision_training: Native AMP

### Framework versions

- Transformers 4.24.0

- Pytorch 1.11.0+cu113

- Datasets 2.5.1

- Tokenizers 0.11.6

# Full config

{'dataset': {'conditional_training_config': {'aligned_prefix': '<|aligned|>',

'drop_token_fraction': 0.01,

'misaligned_prefix': '<|misaligned|>',

'threshold': 0.0},

'datasets': ['tomekkorbak/pii-pile-chunk3-0-50000',

'tomekkorbak/pii-pile-chunk3-50000-100000',

'tomekkorbak/pii-pile-chunk3-100000-150000',

'tomekkorbak/pii-pile-chunk3-150000-200000',

'tomekkorbak/pii-pile-chunk3-200000-250000',

'tomekkorbak/pii-pile-chunk3-250000-300000',

'tomekkorbak/pii-pile-chunk3-300000-350000',

'tomekkorbak/pii-pile-chunk3-350000-400000',

'tomekkorbak/pii-pile-chunk3-400000-450000',

'tomekkorbak/pii-pile-chunk3-450000-500000',

'tomekkorbak/pii-pile-chunk3-500000-550000',

'tomekkorbak/pii-pile-chunk3-550000-600000',

'tomekkorbak/pii-pile-chunk3-600000-650000',

'tomekkorbak/pii-pile-chunk3-650000-700000',

'tomekkorbak/pii-pile-chunk3-700000-750000',

'tomekkorbak/pii-pile-chunk3-750000-800000',

'tomekkorbak/pii-pile-chunk3-800000-850000',

'tomekkorbak/pii-pile-chunk3-850000-900000',

'tomekkorbak/pii-pile-chunk3-900000-950000',

'tomekkorbak/pii-pile-chunk3-950000-1000000',

'tomekkorbak/pii-pile-chunk3-1000000-1050000',

'tomekkorbak/pii-pile-chunk3-1050000-1100000',

'tomekkorbak/pii-pile-chunk3-1100000-1150000',

'tomekkorbak/pii-pile-chunk3-1150000-1200000',

'tomekkorbak/pii-pile-chunk3-1200000-1250000',

'tomekkorbak/pii-pile-chunk3-1250000-1300000',

'tomekkorbak/pii-pile-chunk3-1300000-1350000',

'tomekkorbak/pii-pile-chunk3-1350000-1400000',

'tomekkorbak/pii-pile-chunk3-1400000-1450000',

'tomekkorbak/pii-pile-chunk3-1450000-1500000',

'tomekkorbak/pii-pile-chunk3-1500000-1550000',

'tomekkorbak/pii-pile-chunk3-1550000-1600000',

'tomekkorbak/pii-pile-chunk3-1600000-1650000',

'tomekkorbak/pii-pile-chunk3-1650000-1700000',

'tomekkorbak/pii-pile-chunk3-1700000-1750000',

'tomekkorbak/pii-pile-chunk3-1750000-1800000',

'tomekkorbak/pii-pile-chunk3-1800000-1850000',

'tomekkorbak/pii-pile-chunk3-1850000-1900000',

'tomekkorbak/pii-pile-chunk3-1900000-1950000'],

'is_split_by_sentences': True,

'skip_tokens': 2990407680},

'generation': {'force_call_on': [25177],

'metrics_configs': [{}, {'n': 1}, {'n': 2}, {'n': 5}],

'scenario_configs': [{'generate_kwargs': {'bad_words_ids': [[50257],

[50258]],

'do_sample': True,

'max_length': 128,

'min_length': 10,

'temperature': 0.7,

'top_k': 0,

'top_p': 0.9},

'name': 'unconditional',

'num_samples': 4096,

'prefix': '<|aligned|>'}],

'scorer_config': {}},

'kl_gpt3_callback': {'force_call_on': [25177],

'gpt3_kwargs': {'model_name': 'davinci'},

'max_tokens': 64,

'num_samples': 4096,

'prefix': '<|aligned|>'},

'model': {'from_scratch': False,

'gpt2_config_kwargs': {'reorder_and_upcast_attn': True,

'scale_attn_by': True},

'model_kwargs': {'revision': '5c64636da035c40bb8b1186648a39822071476cb'},

'num_additional_tokens': 2,

'path_or_name': 'tomekkorbak/cranky_lichterman'},

'objective': {'name': 'MLE'},

'tokenizer': {'path_or_name': 'gpt2',

'special_tokens': ['<|aligned|>', '<|misaligned|>']},

'training': {'dataloader_num_workers': 0,

'effective_batch_size': 128,

'evaluation_strategy': 'no',

'fp16': True,

'hub_model_id': 'compassionate_elion',

'hub_strategy': 'all_checkpoints',

'learning_rate': 0.0005,

'logging_first_step': True,

'logging_steps': 1,

'num_tokens': 3300000000,

'output_dir': 'training_output2',

'per_device_train_batch_size': 16,

'push_to_hub': True,

'remove_unused_columns': False,

'save_steps': 251,

'save_strategy': 'steps',

'seed': 42,

'tokens_already_seen': 2990407680,

'warmup_ratio': 0.01,

'weight_decay': 0.1}}

# Wandb URL:

https://wandb.ai/tomekkorbak/apo/runs/mt2ulgpd

|

49693d943965cd0f1be23abfcd2253c8

|

mrgreat1110/bert-finetuned-ner

|

mrgreat1110

|

bert

| 12 | 1 |

transformers

| 0 |

token-classification

| true | false | false |

mit

| null |

['conll2003']

| null | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

['generated_from_trainer']

| true | true | true | 1,526 | false |

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# bert-finetuned-ner

This model is a fine-tuned version of [dslim/bert-base-NER](https://huggingface.co/dslim/bert-base-NER) on the conll2003 dataset.

It achieves the following results on the evaluation set:

- Loss: 0.0883

- Precision: 0.9343

- Recall: 0.9495

- F1: 0.9418

- Accuracy: 0.9861

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3

### Training results

| Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:|

| 0.02 | 1.0 | 1756 | 0.0944 | 0.9189 | 0.9381 | 0.9284 | 0.9833 |

| 0.011 | 2.0 | 3512 | 0.0809 | 0.9358 | 0.9514 | 0.9435 | 0.9862 |

| 0.0032 | 3.0 | 5268 | 0.0883 | 0.9343 | 0.9495 | 0.9418 | 0.9861 |

### Framework versions

- Transformers 4.25.1

- Pytorch 1.13.0+cu116

- Datasets 2.7.1

- Tokenizers 0.13.2

|

dff385ea9713defb3a2e03049960b217

|

muhtasham/base-vanilla-target-tweet

|

muhtasham

|

bert

| 10 | 3 |

transformers

| 0 |

text-classification

| true | false | false |

apache-2.0

| null |

['tweet_eval']

| null | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

['generated_from_trainer']

| true | true | true | 1,708 | false |

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# base-vanilla-target-tweet

This model is a fine-tuned version of [google/bert_uncased_L-12_H-768_A-12](https://huggingface.co/google/bert_uncased_L-12_H-768_A-12) on the tweet_eval dataset.

It achieves the following results on the evaluation set:

- Loss: 1.8380

- Accuracy: 0.7781

- F1: 0.7773

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 3e-05

- train_batch_size: 32

- eval_batch_size: 32

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: constant

- num_epochs: 200

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 |

|:-------------:|:-----:|:----:|:---------------:|:--------:|:------:|

| 0.3831 | 4.9 | 500 | 0.9800 | 0.7807 | 0.7785 |

| 0.0414 | 9.8 | 1000 | 1.4175 | 0.7754 | 0.7765 |

| 0.015 | 14.71 | 1500 | 1.6411 | 0.7754 | 0.7708 |

| 0.0166 | 19.61 | 2000 | 1.5930 | 0.7941 | 0.7938 |

| 0.0175 | 24.51 | 2500 | 1.3934 | 0.7888 | 0.7852 |

| 0.0191 | 29.41 | 3000 | 1.9407 | 0.7647 | 0.7658 |

| 0.0137 | 34.31 | 3500 | 1.8380 | 0.7781 | 0.7773 |

### Framework versions

- Transformers 4.25.1

- Pytorch 1.12.1

- Datasets 2.7.1

- Tokenizers 0.13.2

|

b53e09cf9258e6bed065e0b984579bb9

|

jonatasgrosman/exp_w2v2r_de_xls-r_age_teens-10_sixties-0_s460

|

jonatasgrosman

|

wav2vec2

| 10 | 0 |

transformers

| 0 |

automatic-speech-recognition

| true | false | false |

apache-2.0

|

['de']

|

['mozilla-foundation/common_voice_7_0']

| null | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

['automatic-speech-recognition', 'de']

| false | true | true | 476 | false |

# exp_w2v2r_de_xls-r_age_teens-10_sixties-0_s460

Fine-tuned [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) for speech recognition using the train split of [Common Voice 7.0 (de)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0).

When using this model, make sure that your speech input is sampled at 16kHz.

This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool.

|

7714c2878922714bfd57dcd8340f404f

|

bitsanlp/roberta-finetuned-DA-task-B-100k-5-labels

|

bitsanlp

|

roberta

| 13 | 1 |

transformers

| 0 |

text-classification

| true | false | false |

mit

| null | null | null | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

['generated_from_trainer']

| true | true | true | 970 | false |

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# roberta-finetuned-DA-task-B-100k-5-labels

This model is a fine-tuned version of [bitsanlp/roberta-retrained-100k](https://huggingface.co/bitsanlp/roberta-retrained-100k) on an unknown dataset.

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 32

- eval_batch_size: 8

- seed: 28

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3

### Training results

### Framework versions

- Transformers 4.25.1

- Pytorch 1.13.0+cu116

- Datasets 2.8.0

- Tokenizers 0.13.2

|

1432c1a3bed2858bb207bbce23f3f8b7

|

jonatasgrosman/exp_w2v2t_en_vp-nl_s281

|

jonatasgrosman

|

wav2vec2

| 10 | 5 |

transformers

| 0 |

automatic-speech-recognition

| true | false | false |

apache-2.0

|

['en']

|

['mozilla-foundation/common_voice_7_0']

| null | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

['automatic-speech-recognition', 'en']

| false | true | true | 475 | false |

# exp_w2v2t_en_vp-nl_s281

Fine-tuned [facebook/wav2vec2-large-nl-voxpopuli](https://huggingface.co/facebook/wav2vec2-large-nl-voxpopuli) for speech recognition on English using the train split of [Common Voice 7.0](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0).

When using this model, make sure that your speech input is sampled at 16kHz.

This model has been fine-tuned by the [HuggingSound](https://github.com/jonatasgrosman/huggingsound) tool.

|

31db89cd67826277449f0558d813fc9e

|

google/realm-cc-news-pretrained-encoder

|

google

|

realm

| 7 | 309 |

transformers

| 0 | null | true | false | false |

apache-2.0

|

['en']

| null | null | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

[]

| false | true | true | 524 | false |

# realm-cc-news-pretrained-encoder

## Model description

The REALM checkpoint pretrained with CC-News as target corpus and Wikipedia as knowledge corpus, converted from the TF checkpoint provided by Google Language.

The original paper, code, and checkpoints can be found [here](https://github.com/google-research/language/tree/master/language/realm).

## Usage

```python

from transformers import RealmKnowledgeAugEncoder

encoder = RealmKnowledgeAugEncoder.from_pretrained("qqaatw/realm-cc-news-pretrained-encoder")

```

|

466d9688cce13307fb756abdb96c1037

|

coreml/coreml-stable-diffusion-2-1-base

|

coreml

| null | 6 | 0 | null | 10 |

text-to-image

| false | false | false |

creativeml-openrail-m

| null | null | null | 0 | 0 | 0 | 0 | 1 | 0 | 1 |

['coreml', 'stable-diffusion', 'text-to-image']

| false | true | true | 12,899 | false |

# Core ML Converted Model

This model was converted to Core ML for use on Apple Silicon devices by following Apple's instructions [here](https://github.com/apple/ml-stable-diffusion#-converting-models-to-core-ml).<br>

Provide the model to an app such as [Mochi Diffusion](https://github.com/godly-devotion/MochiDiffusion) to generate images.<br>

`split_einsum` version is compatible with all compute unit options including Neural Engine.<br>

`original` version is only compatible with CPU & GPU option.

# Stable Diffusion v2-1-base Model Card

This model card focuses on the model associated with the Stable Diffusion v2-1-base model.

This `stable-diffusion-2-1-base` model fine-tunes [stable-diffusion-2-base](https://huggingface.co/stabilityai/stable-diffusion-2-base) (`512-base-ema.ckpt`) with 220k extra steps taken, with `punsafe=0.98` on the same dataset.

- Use it with the [`stablediffusion`](https://github.com/Stability-AI/stablediffusion) repository: download the `v2-1_512-ema-pruned.ckpt` [here](https://huggingface.co/stabilityai/stable-diffusion-2-1-base/resolve/main/v2-1_512-ema-pruned.ckpt).

- Use it with 🧨 [`diffusers`](#examples)

## Model Details

- **Developed by:** Robin Rombach, Patrick Esser

- **Model type:** Diffusion-based text-to-image generation model

- **Language(s):** English

- **License:** [CreativeML Open RAIL++-M License](https://huggingface.co/stabilityai/stable-diffusion-2/blob/main/LICENSE-MODEL)

- **Model Description:** This is a model that can be used to generate and modify images based on text prompts. It is a [Latent Diffusion Model](https://arxiv.org/abs/2112.10752) that uses a fixed, pretrained text encoder ([OpenCLIP-ViT/H](https://github.com/mlfoundations/open_clip)).

- **Resources for more information:** [GitHub Repository](https://github.com/Stability-AI/).

- **Cite as:**

@InProceedings{Rombach_2022_CVPR,

author = {Rombach, Robin and Blattmann, Andreas and Lorenz, Dominik and Esser, Patrick and Ommer, Bj\"orn},

title = {High-Resolution Image Synthesis With Latent Diffusion Models},

booktitle = {Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)},

month = {June},

year = {2022},

pages = {10684-10695}

}

## Examples

Using the [🤗's Diffusers library](https://github.com/huggingface/diffusers) to run Stable Diffusion 2 in a simple and efficient manner.

```bash

pip install diffusers transformers accelerate scipy safetensors

```

Running the pipeline (if you don't swap the scheduler it will run with the default PNDM/PLMS scheduler, in this example we are swapping it to EulerDiscreteScheduler):

```python

from diffusers import StableDiffusionPipeline, EulerDiscreteScheduler

import torch

model_id = "stabilityai/stable-diffusion-2-1-base"

scheduler = EulerDiscreteScheduler.from_pretrained(model_id, subfolder="scheduler")

pipe = StableDiffusionPipeline.from_pretrained(model_id, scheduler=scheduler, torch_dtype=torch.float16)

pipe = pipe.to("cuda")

prompt = "a photo of an astronaut riding a horse on mars"

image = pipe(prompt).images[0]

image.save("astronaut_rides_horse.png")

```

**Notes**:

- Despite not being a dependency, we highly recommend you to install [xformers](https://github.com/facebookresearch/xformers) for memory efficient attention (better performance)

- If you have low GPU RAM available, make sure to add a `pipe.enable_attention_slicing()` after sending it to `cuda` for less VRAM usage (to the cost of speed)

# Uses

## Direct Use

The model is intended for research purposes only. Possible research areas and tasks include

- Safe deployment of models which have the potential to generate harmful content.

- Probing and understanding the limitations and biases of generative models.

- Generation of artworks and use in design and other artistic processes.

- Applications in educational or creative tools.

- Research on generative models.

Excluded uses are described below.

### Misuse, Malicious Use, and Out-of-Scope Use

_Note: This section is originally taken from the [DALLE-MINI model card](https://huggingface.co/dalle-mini/dalle-mini), was used for Stable Diffusion v1, but applies in the same way to Stable Diffusion v2_.

The model should not be used to intentionally create or disseminate images that create hostile or alienating environments for people. This includes generating images that people would foreseeably find disturbing, distressing, or offensive; or content that propagates historical or current stereotypes.

#### Out-of-Scope Use

The model was not trained to be factual or true representations of people or events, and therefore using the model to generate such content is out-of-scope for the abilities of this model.

#### Misuse and Malicious Use

Using the model to generate content that is cruel to individuals is a misuse of this model. This includes, but is not limited to:

- Generating demeaning, dehumanizing, or otherwise harmful representations of people or their environments, cultures, religions, etc.

- Intentionally promoting or propagating discriminatory content or harmful stereotypes.

- Impersonating individuals without their consent.

- Sexual content without consent of the people who might see it.

- Mis- and disinformation

- Representations of egregious violence and gore

- Sharing of copyrighted or licensed material in violation of its terms of use.

- Sharing content that is an alteration of copyrighted or licensed material in violation of its terms of use.

## Limitations and Bias

### Limitations

- The model does not achieve perfect photorealism

- The model cannot render legible text

- The model does not perform well on more difficult tasks which involve compositionality, such as rendering an image corresponding to “A red cube on top of a blue sphere”

- Faces and people in general may not be generated properly.

- The model was trained mainly with English captions and will not work as well in other languages.

- The autoencoding part of the model is lossy

- The model was trained on a subset of the large-scale dataset

[LAION-5B](https://laion.ai/blog/laion-5b/), which contains adult, violent and sexual content. To partially mitigate this, we have filtered the dataset using LAION's NFSW detector (see Training section).

### Bias

While the capabilities of image generation models are impressive, they can also reinforce or exacerbate social biases.

Stable Diffusion vw was primarily trained on subsets of [LAION-2B(en)](https://laion.ai/blog/laion-5b/),

which consists of images that are limited to English descriptions.

Texts and images from communities and cultures that use other languages are likely to be insufficiently accounted for.

This affects the overall output of the model, as white and western cultures are often set as the default. Further, the

ability of the model to generate content with non-English prompts is significantly worse than with English-language prompts.

Stable Diffusion v2 mirrors and exacerbates biases to such a degree that viewer discretion must be advised irrespective of the input or its intent.

## Training

**Training Data**

The model developers used the following dataset for training the model:

- LAION-5B and subsets (details below). The training data is further filtered using LAION's NSFW detector, with a "p_unsafe" score of 0.1 (conservative). For more details, please refer to LAION-5B's [NeurIPS 2022](https://openreview.net/forum?id=M3Y74vmsMcY) paper and reviewer discussions on the topic.

**Training Procedure**

Stable Diffusion v2 is a latent diffusion model which combines an autoencoder with a diffusion model that is trained in the latent space of the autoencoder. During training,

- Images are encoded through an encoder, which turns images into latent representations. The autoencoder uses a relative downsampling factor of 8 and maps images of shape H x W x 3 to latents of shape H/f x W/f x 4

- Text prompts are encoded through the OpenCLIP-ViT/H text-encoder.

- The output of the text encoder is fed into the UNet backbone of the latent diffusion model via cross-attention.

- The loss is a reconstruction objective between the noise that was added to the latent and the prediction made by the UNet. We also use the so-called _v-objective_, see https://arxiv.org/abs/2202.00512.

We currently provide the following checkpoints, for various versions:

### Version 2.1

- `512-base-ema.ckpt`: Fine-tuned on `512-base-ema.ckpt` 2.0 with 220k extra steps taken, with `punsafe=0.98` on the same dataset.

- `768-v-ema.ckpt`: Resumed from `768-v-ema.ckpt` 2.0 with an additional 55k steps on the same dataset (`punsafe=0.1`), and then fine-tuned for another 155k extra steps with `punsafe=0.98`.

### Version 2.0

- `512-base-ema.ckpt`: 550k steps at resolution `256x256` on a subset of [LAION-5B](https://laion.ai/blog/laion-5b/) filtered for explicit pornographic material, using the [LAION-NSFW classifier](https://github.com/LAION-AI/CLIP-based-NSFW-Detector) with `punsafe=0.1` and an [aesthetic score](https://github.com/christophschuhmann/improved-aesthetic-predictor) >= `4.5`.

850k steps at resolution `512x512` on the same dataset with resolution `>= 512x512`.

- `768-v-ema.ckpt`: Resumed from `512-base-ema.ckpt` and trained for 150k steps using a [v-objective](https://arxiv.org/abs/2202.00512) on the same dataset. Resumed for another 140k steps on a `768x768` subset of our dataset.

- `512-depth-ema.ckpt`: Resumed from `512-base-ema.ckpt` and finetuned for 200k steps. Added an extra input channel to process the (relative) depth prediction produced by [MiDaS](https://github.com/isl-org/MiDaS) (`dpt_hybrid`) which is used as an additional conditioning.

The additional input channels of the U-Net which process this extra information were zero-initialized.

- `512-inpainting-ema.ckpt`: Resumed from `512-base-ema.ckpt` and trained for another 200k steps. Follows the mask-generation strategy presented in [LAMA](https://github.com/saic-mdal/lama) which, in combination with the latent VAE representations of the masked image, are used as an additional conditioning.

The additional input channels of the U-Net which process this extra information were zero-initialized. The same strategy was used to train the [1.5-inpainting checkpoint](https://github.com/saic-mdal/lama).

- `x4-upscaling-ema.ckpt`: Trained for 1.25M steps on a 10M subset of LAION containing images `>2048x2048`. The model was trained on crops of size `512x512` and is a text-guided [latent upscaling diffusion model](https://arxiv.org/abs/2112.10752).

In addition to the textual input, it receives a `noise_level` as an input parameter, which can be used to add noise to the low-resolution input according to a [predefined diffusion schedule](configs/stable-diffusion/x4-upscaling.yaml).

- **Hardware:** 32 x 8 x A100 GPUs

- **Optimizer:** AdamW

- **Gradient Accumulations**: 1

- **Batch:** 32 x 8 x 2 x 4 = 2048

- **Learning rate:** warmup to 0.0001 for 10,000 steps and then kept constant

## Evaluation Results

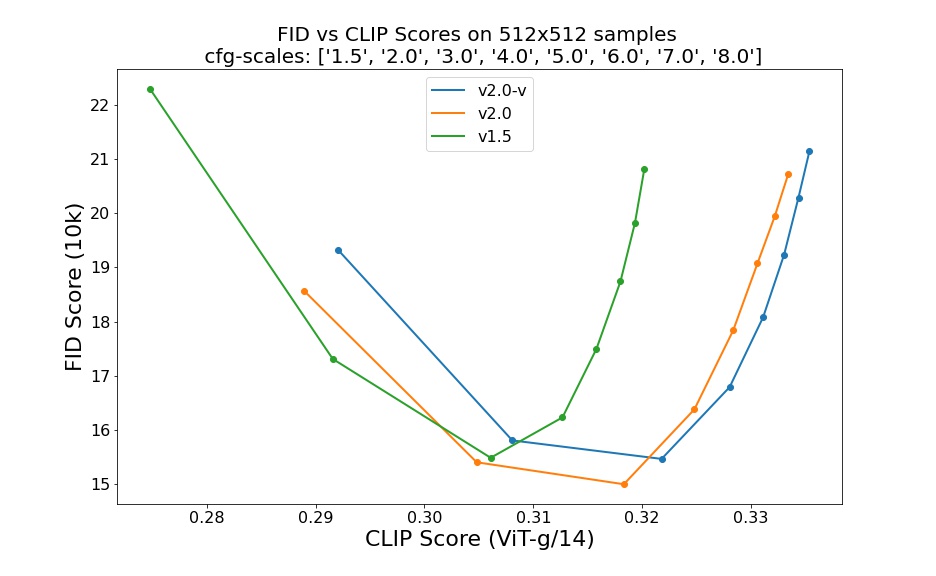

Evaluations with different classifier-free guidance scales (1.5, 2.0, 3.0, 4.0,

5.0, 6.0, 7.0, 8.0) and 50 steps DDIM sampling steps show the relative improvements of the checkpoints:

Evaluated using 50 DDIM steps and 10000 random prompts from the COCO2017 validation set, evaluated at 512x512 resolution. Not optimized for FID scores.

## Environmental Impact

**Stable Diffusion v1** **Estimated Emissions**

Based on that information, we estimate the following CO2 emissions using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700). The hardware, runtime, cloud provider, and compute region were utilized to estimate the carbon impact.

- **Hardware Type:** A100 PCIe 40GB

- **Hours used:** 200000

- **Cloud Provider:** AWS

- **Compute Region:** US-east

- **Carbon Emitted (Power consumption x Time x Carbon produced based on location of power grid):** 15000 kg CO2 eq.

## Citation

@InProceedings{Rombach_2022_CVPR,

author = {Rombach, Robin and Blattmann, Andreas and Lorenz, Dominik and Esser, Patrick and Ommer, Bj\"orn},

title = {High-Resolution Image Synthesis With Latent Diffusion Models},

booktitle = {Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)},

month = {June},

year = {2022},

pages = {10684-10695}

}

*This model card was written by: Robin Rombach, Patrick Esser and David Ha and is based on the [Stable Diffusion v1](https://github.com/CompVis/stable-diffusion/blob/main/Stable_Diffusion_v1_Model_Card.md) and [DALL-E Mini model card](https://huggingface.co/dalle-mini/dalle-mini).*

|

560f0ce05d6602e6fb692b55f9da6dbd

|

Qiliang/bart-large-cnn-samsum-ElectrifAi_v10

|

Qiliang

|

bart

| 13 | 11 |

transformers

| 0 |

text2text-generation

| true | false | false |

mit

| null | null | null | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

['generated_from_trainer']

| true | true | true | 1,685 | false |

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# bart-large-cnn-samsum-ElectrifAi_v10

This model is a fine-tuned version of [philschmid/bart-large-cnn-samsum](https://huggingface.co/philschmid/bart-large-cnn-samsum) on an unknown dataset.

It achieves the following results on the evaluation set:

- Loss: 1.1748

- Rouge1: 58.3392

- Rouge2: 35.1686

- Rougel: 45.4136

- Rougelsum: 56.9138

- Gen Len: 108.375

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 4

- eval_batch_size: 4

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3

- mixed_precision_training: Native AMP

### Training results

| Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | Gen Len |

|:-------------:|:-----:|:----:|:---------------:|:-------:|:-------:|:-------:|:---------:|:--------:|

| No log | 1.0 | 21 | 1.1573 | 56.0772 | 34.1572 | 44.3652 | 54.8621 | 106.0833 |

| No log | 2.0 | 42 | 1.1764 | 57.7245 | 34.6517 | 45.67 | 56.3426 | 106.4167 |

| No log | 3.0 | 63 | 1.1748 | 58.3392 | 35.1686 | 45.4136 | 56.9138 | 108.375 |

### Framework versions

- Transformers 4.25.1

- Pytorch 1.12.1

- Datasets 2.6.1

- Tokenizers 0.13.2

|

57b92ebb20bff8e624ff9c364f91f862

|

akahnn/aaureeliaav3

|

akahnn

| null | 13 | 0 | null | 0 |

text-to-image

| false | false | false |

creativeml-openrail-m

| null | null | null | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

['text-to-image', 'stable-diffusion']

| false | true | true | 420 | false |

### aaureeliaav3 Dreambooth model trained by akahnn with [TheLastBen's fast-DreamBooth](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb) notebook

Test the concept via A1111 Colab [fast-Colab-A1111](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast_stable_diffusion_AUTOMATIC1111.ipynb)

Sample pictures of this concept:

|

4f277c6edef71e43895de21689730ac2

|

paola-md/distilr2-lr1e05-wd0.08-bs16

|

paola-md

|

roberta

| 6 | 1 |

transformers

| 0 |

text-classification

| true | false | false |

apache-2.0

| null | null | null | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

['generated_from_trainer']

| true | true | true | 1,441 | false |

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# distilr2-lr1e05-wd0.08-bs16

This model is a fine-tuned version of [distilroberta-base](https://huggingface.co/distilroberta-base) on the None dataset.

It achieves the following results on the evaluation set:

- Loss: 0.2760

- Rmse: 0.5254

- Mse: 0.2760

- Mae: 0.4277

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 1e-05

- train_batch_size: 128

- eval_batch_size: 128

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 10

### Training results

| Training Loss | Epoch | Step | Validation Loss | Rmse | Mse | Mae |

|:-------------:|:-----:|:----:|:---------------:|:------:|:------:|:------:|

| 0.2765 | 1.0 | 1245 | 0.2733 | 0.5228 | 0.2733 | 0.4100 |

| 0.2733 | 2.0 | 2490 | 0.2739 | 0.5233 | 0.2739 | 0.4224 |

| 0.2713 | 3.0 | 3735 | 0.2760 | 0.5254 | 0.2760 | 0.4277 |

### Framework versions

- Transformers 4.19.0.dev0

- Pytorch 1.9.0+cu111

- Datasets 2.4.0

- Tokenizers 0.12.1

|

81a6a53930da15773a005f3eb61e310a

|

WillHeld/t5-base-adv-mtop

|

WillHeld

|

mt5

| 41 | 3 |

transformers

| 0 |

text2text-generation

| true | false | false |

apache-2.0

|

['en']

|

['mtop']

| null | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

['generated_from_trainer']

| true | true | true | 2,180 | false |

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# t5-base-adv-mtop

This model is a fine-tuned version of [google/mt5-base](https://huggingface.co/google/mt5-base) on the mtop dataset.

It achieves the following results on the evaluation set:

- Loss: 0.1009

- Exact Match: 0.7937

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.001

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- gradient_accumulation_steps: 64

- total_train_batch_size: 512

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- training_steps: 3000

### Training results

| Training Loss | Epoch | Step | Validation Loss | Exact Match |

|:-------------:|:-----:|:----:|:---------------:|:-----------:|

| 4.2521 | 1.09 | 200 | 0.1367 | 0.5418 |

| 6.2586 | 2.17 | 400 | 0.1020 | 0.6004 |

| 4.0003 | 3.26 | 600 | 0.1009 | 0.6179 |

| 2.7191 | 4.35 | 800 | 0.1066 | 0.6251 |

| 1.5031 | 5.43 | 1000 | 0.1215 | 0.6286 |

| 0.703 | 6.52 | 1200 | 0.1238 | 0.6215 |

| 0.6371 | 7.61 | 1400 | 0.1365 | 0.6286 |

| 0.3712 | 8.69 | 1600 | 0.1450 | 0.6300 |

| 0.5666 | 9.78 | 1800 | 0.1500 | 0.6295 |

| 0.5237 | 10.87 | 2000 | 0.1416 | 0.6251 |

| 0.4562 | 11.96 | 2200 | 0.1464 | 0.6313 |

| 0.3421 | 13.04 | 2400 | 0.1635 | 0.6277 |

| 0.3686 | 14.13 | 2600 | 0.1643 | 0.6322 |

| 0.218 | 15.22 | 2800 | 0.1800 | 0.6277 |

| 0.2371 | 16.3 | 3000 | 0.1742 | 0.6268 |

### Framework versions

- Transformers 4.24.0

- Pytorch 1.13.0+cu117

- Datasets 2.7.0

- Tokenizers 0.13.2

|

3b67c1666072f3d7a2528f3083edbc3c

|

blizrys/distilbert-base-uncased-finetuned-mnli

|

blizrys

|

distilbert

| 13 | 1 |

transformers

| 0 |

text-classification

| true | false | false |

apache-2.0

| null |

['glue']

| null | 1 | 1 | 0 | 0 | 0 | 0 | 0 |

['generated_from_trainer']

| true | true | true | 1,489 | false |

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# distilbert-base-uncased-finetuned-mnli

This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the glue dataset.

It achieves the following results on the evaluation set:

- Loss: 0.6753

- Accuracy: 0.8206

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 5

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |

|:-------------:|:-----:|:------:|:---------------:|:--------:|

| 0.5146 | 1.0 | 24544 | 0.4925 | 0.8049 |

| 0.4093 | 2.0 | 49088 | 0.5090 | 0.8164 |

| 0.3122 | 3.0 | 73632 | 0.5299 | 0.8185 |

| 0.2286 | 4.0 | 98176 | 0.6753 | 0.8206 |

| 0.182 | 5.0 | 122720 | 0.8372 | 0.8195 |

### Framework versions

- Transformers 4.10.2

- Pytorch 1.9.0+cu102

- Datasets 1.11.0

- Tokenizers 0.10.3

|

3a031583a5fb571636f020d384720510

|

Helsinki-NLP/opus-mt-fr-ms

|

Helsinki-NLP

|

marian

| 11 | 8 |

transformers

| 0 |

translation

| true | true | false |

apache-2.0

|

['fr', 'ms']

| null | null | 1 | 1 | 0 | 0 | 0 | 0 | 0 |

['translation']

| false | true | true | 2,167 | false |

### fra-msa

* source group: French

* target group: Malay (macrolanguage)

* OPUS readme: [fra-msa](https://github.com/Helsinki-NLP/Tatoeba-Challenge/tree/master/models/fra-msa/README.md)

* model: transformer-align

* source language(s): fra

* target language(s): ind zsm_Latn

* model: transformer-align

* pre-processing: normalization + SentencePiece (spm32k,spm32k)

* a sentence initial language token is required in the form of `>>id<<` (id = valid target language ID)

* download original weights: [opus-2020-06-17.zip](https://object.pouta.csc.fi/Tatoeba-MT-models/fra-msa/opus-2020-06-17.zip)

* test set translations: [opus-2020-06-17.test.txt](https://object.pouta.csc.fi/Tatoeba-MT-models/fra-msa/opus-2020-06-17.test.txt)

* test set scores: [opus-2020-06-17.eval.txt](https://object.pouta.csc.fi/Tatoeba-MT-models/fra-msa/opus-2020-06-17.eval.txt)

## Benchmarks

| testset | BLEU | chr-F |

|-----------------------|-------|-------|

| Tatoeba-test.fra.msa | 35.3 | 0.617 |

### System Info:

- hf_name: fra-msa

- source_languages: fra

- target_languages: msa

- opus_readme_url: https://github.com/Helsinki-NLP/Tatoeba-Challenge/tree/master/models/fra-msa/README.md

- original_repo: Tatoeba-Challenge

- tags: ['translation']

- languages: ['fr', 'ms']

- src_constituents: {'fra'}

- tgt_constituents: {'zsm_Latn', 'ind', 'max_Latn', 'zlm_Latn', 'min'}

- src_multilingual: False

- tgt_multilingual: False

- prepro: normalization + SentencePiece (spm32k,spm32k)

- url_model: https://object.pouta.csc.fi/Tatoeba-MT-models/fra-msa/opus-2020-06-17.zip

- url_test_set: https://object.pouta.csc.fi/Tatoeba-MT-models/fra-msa/opus-2020-06-17.test.txt

- src_alpha3: fra

- tgt_alpha3: msa

- short_pair: fr-ms

- chrF2_score: 0.617

- bleu: 35.3

- brevity_penalty: 0.978

- ref_len: 6696.0

- src_name: French

- tgt_name: Malay (macrolanguage)

- train_date: 2020-06-17

- src_alpha2: fr

- tgt_alpha2: ms

- prefer_old: False

- long_pair: fra-msa

- helsinki_git_sha: 480fcbe0ee1bf4774bcbe6226ad9f58e63f6c535

- transformers_git_sha: 2207e5d8cb224e954a7cba69fa4ac2309e9ff30b

- port_machine: brutasse

- port_time: 2020-08-21-14:41

|

b543e2a5b3ea088aef74dfb05cad1f30

|

WillHeld/t5-base-adv-cstop_artificial

|

WillHeld

|

mt5

| 23 | 2 |

transformers

| 0 |

text2text-generation

| true | false | false |

apache-2.0

|

['en']

|

['cstop_artificial']

| null | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

['generated_from_trainer']

| true | true | true | 2,204 | false |

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# t5-base-adv-cstop_artificial

This model is a fine-tuned version of [google/mt5-base](https://huggingface.co/google/mt5-base) on the cstop_artificial dataset.

It achieves the following results on the evaluation set:

- Loss: 0.0997

- Exact Match: 0.8479

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.001

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- gradient_accumulation_steps: 64

- total_train_batch_size: 512

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- training_steps: 3000

### Training results

| Training Loss | Epoch | Step | Validation Loss | Exact Match |

|:-------------:|:-----:|:----:|:---------------:|:-----------:|

| 1.8954 | 12.5 | 200 | 0.1003 | 0.4902 |

| 0.3392 | 25.0 | 400 | 0.0997 | 0.5671 |

| 0.3092 | 37.5 | 600 | 0.1067 | 0.5653 |

| 0.3062 | 50.0 | 800 | 0.1245 | 0.5689 |

| 0.5401 | 62.5 | 1000 | 0.1096 | 0.5581 |

| 0.3075 | 75.0 | 1200 | 0.1197 | 0.5581 |

| 0.3039 | 87.5 | 1400 | 0.1339 | 0.5689 |

| 0.3041 | 100.0 | 1600 | 0.1485 | 0.5635 |

| 0.3036 | 112.5 | 1800 | 0.1498 | 0.5581 |

| 0.304 | 125.0 | 2000 | 0.1454 | 0.5617 |

| 0.3022 | 137.5 | 2200 | 0.1516 | 0.5689 |

| 0.3032 | 150.0 | 2400 | 0.1361 | 0.5635 |

| 0.3035 | 162.5 | 2600 | 0.1427 | 0.5635 |

| 0.3001 | 175.0 | 2800 | 0.1466 | 0.5635 |

| 0.3048 | 187.5 | 3000 | 0.1471 | 0.5635 |

### Framework versions

- Transformers 4.24.0

- Pytorch 1.13.0+cu117

- Datasets 2.7.0

- Tokenizers 0.13.2

|

1c3b2513fb310959b01be420f7cbcc3e

|

sureshchinta/wav2vec2-base-finetuned-ks

|

sureshchinta

|

wav2vec2

| 9 | 3 |

transformers

| 0 |

audio-classification

| true | false | false |

apache-2.0

| null | null | null | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

['generated_from_trainer']

| true | true | true | 2,241 | false |

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# wav2vec2-base-finetuned-ks

This model is a fine-tuned version of [facebook/wav2vec2-base](https://huggingface.co/facebook/wav2vec2-base) on the None dataset.

It achieves the following results on the evaluation set:

- Loss: 0.2562

- Accuracy: 0.9869

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 3e-05

- train_batch_size: 32

- eval_batch_size: 32

- seed: 42

- gradient_accumulation_steps: 4

- total_train_batch_size: 128

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_ratio: 0.1

- num_epochs: 16

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:--------:|

| 2.4691 | 0.99 | 26 | 2.3935 | 0.2310 |

| 2.1621 | 1.99 | 52 | 2.0155 | 0.3202 |

| 1.8731 | 2.99 | 78 | 1.6397 | 0.7929 |

| 1.4521 | 3.99 | 104 | 1.2337 | 0.8940 |

| 1.101 | 4.99 | 130 | 0.9519 | 0.9393 |

| 0.9401 | 5.99 | 156 | 0.7686 | 0.975 |

| 0.7463 | 6.99 | 182 | 0.6338 | 0.9774 |

| 0.6555 | 7.99 | 208 | 0.5214 | 0.9810 |

| 0.5095 | 8.99 | 234 | 0.4228 | 0.9869 |

| 0.4152 | 9.99 | 260 | 0.3658 | 0.9857 |

| 0.3764 | 10.99 | 286 | 0.3311 | 0.9857 |

| 0.3325 | 11.99 | 312 | 0.2954 | 0.9881 |

| 0.3121 | 12.99 | 338 | 0.2797 | 0.9869 |

| 0.281 | 13.99 | 364 | 0.2650 | 0.9857 |

| 0.2627 | 14.99 | 390 | 0.2571 | 0.9869 |

| 0.2655 | 15.99 | 416 | 0.2562 | 0.9869 |

### Framework versions

- Transformers 4.21.1

- Pytorch 1.12.1+cu113

- Datasets 1.14.0

- Tokenizers 0.12.1

|

f2ab542db889ee38fb57858785758391

|

google/ddpm-cat-256

|

google

| null | 10 | 35 |

diffusers

| 0 |

unconditional-image-generation

| true | false | false |

apache-2.0

| null | null | null | 2 | 0 | 1 | 1 | 0 | 0 | 0 |

['pytorch', 'diffusers', 'unconditional-image-generation']

| false | true | true | 2,874 | false |

# Denoising Diffusion Probabilistic Models (DDPM)

**Paper**: [Denoising Diffusion Probabilistic Models](https://arxiv.org/abs/2006.11239)

**Authors**: Jonathan Ho, Ajay Jain, Pieter Abbeel

**Abstract**:

*We present high quality image synthesis results using diffusion probabilistic models, a class of latent variable models inspired by considerations from nonequilibrium thermodynamics. Our best results are obtained by training on a weighted variational bound designed according to a novel connection between diffusion probabilistic models and denoising score matching with Langevin dynamics, and our models naturally admit a progressive lossy decompression scheme that can be interpreted as a generalization of autoregressive decoding. On the unconditional CIFAR10 dataset, we obtain an Inception score of 9.46 and a state-of-the-art FID score of 3.17. On 256x256 LSUN, we obtain sample quality similar to ProgressiveGAN.*

## Inference

**DDPM** models can use *discrete noise schedulers* such as:

- [scheduling_ddpm](https://github.com/huggingface/diffusers/blob/main/src/diffusers/schedulers/scheduling_ddpm.py)

- [scheduling_ddim](https://github.com/huggingface/diffusers/blob/main/src/diffusers/schedulers/scheduling_ddim.py)

- [scheduling_pndm](https://github.com/huggingface/diffusers/blob/main/src/diffusers/schedulers/scheduling_pndm.py)

for inference. Note that while the *ddpm* scheduler yields the highest quality, it also takes the longest.

For a good trade-off between quality and inference speed you might want to consider the *ddim* or *pndm* schedulers instead.

See the following code:

```python

# !pip install diffusers

from diffusers import DDPMPipeline, DDIMPipeline, PNDMPipeline

model_id = "google/ddpm-cat-256"

# load model and scheduler

ddpm = DDPMPipeline.from_pretrained(model_id) # you can replace DDPMPipeline with DDIMPipeline or PNDMPipeline for faster inference

# run pipeline in inference (sample random noise and denoise)

image = ddpm().images[0]

# save image

image.save("ddpm_generated_image.png")

```

For more in-detail information, please have a look at the [official inference example](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/diffusers_intro.ipynb)

## Training

If you want to train your own model, please have a look at the [official training example](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/training_example.ipynb)

## Samples

1.

2.

3.

4.

|

9dd32a7799e1b7deb83af917316df292

|

gabella/bert-emotion

|

gabella

|

distilbert

| 18 | 4 |

transformers

| 0 |

text-classification

| true | false | false |

apache-2.0

| null |

['tweet_eval']

| null | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

['generated_from_trainer']

| true | true | true | 1,455 | false |

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# bert-emotion

This model is a fine-tuned version of [distilbert-base-cased](https://huggingface.co/distilbert-base-cased) on the tweet_eval dataset.

It achieves the following results on the evaluation set:

- Loss: 1.1951

- Precision: 0.7350

- Recall: 0.7334

- Fscore: 0.7341

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 4

- eval_batch_size: 4

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3

### Training results

| Training Loss | Epoch | Step | Validation Loss | Precision | Recall | Fscore |

|:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|

| 0.8468 | 1.0 | 815 | 0.7465 | 0.7116 | 0.6096 | 0.6325 |

| 0.5105 | 2.0 | 1630 | 0.9035 | 0.7532 | 0.7111 | 0.7276 |

| 0.2492 | 3.0 | 2445 | 1.1951 | 0.7350 | 0.7334 | 0.7341 |

### Framework versions

- Transformers 4.25.1

- Pytorch 1.13.0+cu116

- Datasets 2.8.0

- Tokenizers 0.13.2

|

5806e680324907514ec53e31a5819c85

|

chrommium/xlm-roberta-large-finetuned-sent_in_news

|

chrommium

|

xlm-roberta

| 12 | 1 |

transformers

| 0 |

text-classification

| true | false | false |

mit

| null | null | null | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

['generated_from_trainer']

| true | true | true | 2,665 | false |

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# xlm-roberta-large-finetuned-sent_in_news

This model is a fine-tuned version of [xlm-roberta-large](https://huggingface.co/xlm-roberta-large) on an unknown dataset.

It achieves the following results on the evaluation set:

- Loss: 1.8872

- Accuracy: 0.7273

- F1: 0.5125

## Model description

Модель ассиметрична, реагирует на метку X в тексте новости.

Попробуйте следующие примеры:

a) Агентство X понизило рейтинг банка Fitch.

b) Агентство Fitch понизило рейтинг банка X.

a) Компания Финам показала рекордную прибыль, говорят аналитики компании X.

b) Компания X показала рекордную прибыль, говорят аналитики компании Финам.

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 3e-05

- train_batch_size: 10

- eval_batch_size: 10

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 16

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 |

|:-------------:|:-----:|:----:|:---------------:|:--------:|:------:|

| No log | 1.0 | 106 | 1.2526 | 0.6108 | 0.1508 |

| No log | 2.0 | 212 | 1.1553 | 0.6648 | 0.1141 |

| No log | 3.0 | 318 | 1.1150 | 0.6591 | 0.1247 |

| No log | 4.0 | 424 | 1.0007 | 0.6705 | 0.1383 |

| 1.1323 | 5.0 | 530 | 0.9267 | 0.6733 | 0.2027 |

| 1.1323 | 6.0 | 636 | 1.0869 | 0.6335 | 0.4084 |