modelId

stringlengths 5

139

| author

stringlengths 2

42

| last_modified

timestamp[us, tz=UTC]date 2020-02-15 11:33:14

2025-08-29 12:28:39

| downloads

int64 0

223M

| likes

int64 0

11.7k

| library_name

stringclasses 526

values | tags

listlengths 1

4.05k

| pipeline_tag

stringclasses 55

values | createdAt

timestamp[us, tz=UTC]date 2022-03-02 23:29:04

2025-08-29 12:28:30

| card

stringlengths 11

1.01M

|

|---|---|---|---|---|---|---|---|---|---|

avialfont/dummy-finetuned-imdb

|

avialfont

| 2022-04-04T10:53:31Z | 3 | 0 |

transformers

|

[

"transformers",

"tf",

"distilbert",

"fill-mask",

"generated_from_keras_callback",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

fill-mask

| 2022-04-04T10:06:50Z |

---

license: apache-2.0

tags:

- generated_from_keras_callback

model-index:

- name: avialfont/dummy-finetuned-imdb

results: []

---

<!-- This model card has been generated automatically according to the information Keras had access to. You should

probably proofread and complete it, then remove this comment. -->

# avialfont/dummy-finetuned-imdb

This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on an unknown dataset.

It achieves the following results on the evaluation set:

- Train Loss: 2.8606

- Validation Loss: 2.5865

- Epoch: 0

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- optimizer: {'name': 'AdamWeightDecay', 'learning_rate': {'class_name': 'WarmUp', 'config': {'initial_learning_rate': 2e-05, 'decay_schedule_fn': {'class_name': 'PolynomialDecay', 'config': {'initial_learning_rate': 2e-05, 'decay_steps': -688, 'end_learning_rate': 0.0, 'power': 1.0, 'cycle': False, 'name': None}, '__passive_serialization__': True}, 'warmup_steps': 1000, 'power': 1.0, 'name': None}}, 'decay': 0.0, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False, 'weight_decay_rate': 0.01}

- training_precision: mixed_float16

### Training results

| Train Loss | Validation Loss | Epoch |

|:----------:|:---------------:|:-----:|

| 2.8606 | 2.5865 | 0 |

### Framework versions

- Transformers 4.16.2

- TensorFlow 2.8.0

- Datasets 1.18.3

- Tokenizers 0.11.6

|

blacktree/distilbert-base-uncased-finetuned-sst2

|

blacktree

| 2022-04-04T10:44:22Z | 15 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"distilbert",

"text-classification",

"generated_from_trainer",

"dataset:glue",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-04-01T12:29:26Z |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- glue

metrics:

- accuracy

model-index:

- name: distilbert-base-uncased-finetuned-sst2

results:

- task:

name: Text Classification

type: text-classification

dataset:

name: glue

type: glue

args: sst2

metrics:

- name: Accuracy

type: accuracy

value: 0.5091743119266054

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# distilbert-base-uncased-finetuned-sst2

This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the glue dataset.

It achieves the following results on the evaluation set:

- Loss: 0.7027

- Accuracy: 0.5092

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.01

- train_batch_size: 64

- eval_batch_size: 64

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 5

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:--------:|

| 0.6868 | 1.0 | 1053 | 0.7027 | 0.5092 |

| 0.6868 | 2.0 | 2106 | 0.7027 | 0.5092 |

| 0.6867 | 3.0 | 3159 | 0.6970 | 0.5092 |

| 0.687 | 4.0 | 4212 | 0.6992 | 0.5092 |

| 0.6866 | 5.0 | 5265 | 0.6983 | 0.5092 |

### Framework versions

- Transformers 4.17.0

- Pytorch 1.10.0+cu111

- Datasets 2.0.0

- Tokenizers 0.11.6

|

ikekobby/fake-real-news-classifier

|

ikekobby

| 2022-04-04T09:23:36Z | 5 | 0 |

transformers

|

[

"transformers",

"tf",

"distilbert",

"text-classification",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-04-03T17:57:15Z |

Model based trained on 30% of the kaggle public data on fake and reals news article. The model achieved an `auc` of 1.0, precision, recall and f1score all at score of 1.0.

* Task;- The predictor classifies news articles into either fake or real news.

* It is a transformer model trained using the `ktrain` library on 30% of dataset of size 194MB after preprocessing.

* Metrics used are recall,, precision, f1score and roc_auc_score.

|

tanlq/vit-base-patch16-224-in21k-finetuned-cifar10

|

tanlq

| 2022-04-04T08:20:16Z | 76 | 0 |

transformers

|

[

"transformers",

"pytorch",

"vit",

"image-classification",

"generated_from_trainer",

"dataset:cifar10",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

image-classification

| 2022-03-31T03:09:09Z |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- cifar10

metrics:

- accuracy

model-index:

- name: vit-base-patch16-224-in21k-finetuned-cifar10

results:

- task:

name: Image Classification

type: image-classification

dataset:

name: cifar10

type: cifar10

args: plain_text

metrics:

- name: Accuracy

type: accuracy

value: 0.9875

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# vit-base-patch16-224-in21k-finetuned-cifar10

This model is a fine-tuned version of [google/vit-base-patch16-224-in21k](https://huggingface.co/google/vit-base-patch16-224-in21k) on the cifar10 dataset.

It achieves the following results on the evaluation set:

- Loss: 0.0503

- Accuracy: 0.9875

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- gradient_accumulation_steps: 4

- total_train_batch_size: 32

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_ratio: 0.1

- num_epochs: 3

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:--------:|

| 0.3118 | 1.0 | 1562 | 0.1135 | 0.9778 |

| 0.2717 | 2.0 | 3124 | 0.0619 | 0.9867 |

| 0.1964 | 3.0 | 4686 | 0.0503 | 0.9875 |

### Framework versions

- Transformers 4.18.0.dev0

- Pytorch 1.11.0

- Datasets 2.0.0

- Tokenizers 0.11.6

|

DMetaSoul/sbert-chinese-general-v2

|

DMetaSoul

| 2022-04-04T07:22:23Z | 1,656 | 33 |

sentence-transformers

|

[

"sentence-transformers",

"pytorch",

"bert",

"feature-extraction",

"sentence-similarity",

"transformers",

"semantic-search",

"chinese",

"autotrain_compatible",

"text-embeddings-inference",

"endpoints_compatible",

"region:us"

] |

sentence-similarity

| 2022-03-25T08:59:33Z |

---

pipeline_tag: sentence-similarity

tags:

- sentence-transformers

- feature-extraction

- sentence-similarity

- transformers

- semantic-search

- chinese

---

# DMetaSoul/sbert-chinese-general-v2

此模型基于 [bert-base-chinese](https://huggingface.co/bert-base-chinese) 版本 BERT 模型,在百万级语义相似数据集 [SimCLUE](https://github.com/CLUEbenchmark/SimCLUE) 上进行训练,适用于**通用语义匹配**场景,从效果来看该模型在各种任务上**泛化能力更好**。

注:此模型的[轻量化版本](https://huggingface.co/DMetaSoul/sbert-chinese-general-v2-distill),也已经开源啦!

# Usage

## 1. Sentence-Transformers

通过 [sentence-transformers](https://www.SBERT.net) 框架来使用该模型,首先进行安装:

```

pip install -U sentence-transformers

```

然后使用下面的代码来载入该模型并进行文本表征向量的提取:

```python

from sentence_transformers import SentenceTransformer

sentences = ["我的儿子!他猛然间喊道,我的儿子在哪儿?", "我的儿子呢!他突然喊道,我的儿子在哪里?"]

model = SentenceTransformer('DMetaSoul/sbert-chinese-general-v2')

embeddings = model.encode(sentences)

print(embeddings)

```

## 2. HuggingFace Transformers

如果不想使用 [sentence-transformers](https://www.SBERT.net) 的话,也可以通过 HuggingFace Transformers 来载入该模型并进行文本向量抽取:

```python

from transformers import AutoTokenizer, AutoModel

import torch

#Mean Pooling - Take attention mask into account for correct averaging

def mean_pooling(model_output, attention_mask):

token_embeddings = model_output[0] #First element of model_output contains all token embeddings

input_mask_expanded = attention_mask.unsqueeze(-1).expand(token_embeddings.size()).float()

return torch.sum(token_embeddings * input_mask_expanded, 1) / torch.clamp(input_mask_expanded.sum(1), min=1e-9)

# Sentences we want sentence embeddings for

sentences = ["我的儿子!他猛然间喊道,我的儿子在哪儿?", "我的儿子呢!他突然喊道,我的儿子在哪里?"]

# Load model from HuggingFace Hub

tokenizer = AutoTokenizer.from_pretrained('DMetaSoul/sbert-chinese-general-v2')

model = AutoModel.from_pretrained('DMetaSoul/sbert-chinese-general-v2')

# Tokenize sentences

encoded_input = tokenizer(sentences, padding=True, truncation=True, return_tensors='pt')

# Compute token embeddings

with torch.no_grad():

model_output = model(**encoded_input)

# Perform pooling. In this case, mean pooling.

sentence_embeddings = mean_pooling(model_output, encoded_input['attention_mask'])

print("Sentence embeddings:")

print(sentence_embeddings)

```

## Evaluation

该模型在公开的几个语义匹配数据集上进行了评测,计算了向量相似度跟真实标签之间的相关性系数:

| | **csts_dev** | **csts_test** | **afqmc** | **lcqmc** | **bqcorpus** | **pawsx** | **xiaobu** |

| ---------------------------- | ------------ | ------------- | ---------- | ---------- | ------------ | ---------- | ---------- |

| **sbert-chinese-general-v1** | **84.54%** | **82.17%** | 23.80% | 65.94% | 45.52% | 11.52% | 48.51% |

| **sbert-chinese-general-v2** | 77.20% | 72.60% | **36.80%** | **76.92%** | **49.63%** | **16.24%** | **63.16%** |

这里对比了本模型跟之前我们发布 [sbert-chinese-general-v1](https://huggingface.co/DMetaSoul/sbert-chinese-general-v1) 之间的差异,可以看到本模型在多个任务上的泛化能力更好。

## Citing & Authors

E-mail: xiaowenbin@dmetasoul.com

|

DMetaSoul/sbert-chinese-qmc-finance-v1

|

DMetaSoul

| 2022-04-04T07:21:28Z | 5 | 2 |

sentence-transformers

|

[

"sentence-transformers",

"pytorch",

"bert",

"feature-extraction",

"sentence-similarity",

"transformers",

"semantic-search",

"chinese",

"autotrain_compatible",

"text-embeddings-inference",

"endpoints_compatible",

"region:us"

] |

sentence-similarity

| 2022-03-25T10:23:55Z |

---

pipeline_tag: sentence-similarity

tags:

- sentence-transformers

- feature-extraction

- sentence-similarity

- transformers

- semantic-search

- chinese

---

# DMetaSoul/sbert-chinese-qmc-finance-v1

此模型基于 [bert-base-chinese](https://huggingface.co/bert-base-chinese) 版本 BERT 模型,在大规模银行问题匹配数据集([BQCorpus](http://icrc.hitsz.edu.cn/info/1037/1162.htm))上进行训练调优,适用于**金融领域的问题匹配**场景,比如:

- 8千日利息400元? VS 10000元日利息多少钱

- 提前还款是按全额计息 VS 还款扣款不成功怎么还款?

- 为什么我借钱交易失败 VS 刚申请的借款为什么会失败

注:此模型的[轻量化版本](https://huggingface.co/DMetaSoul/sbert-chinese-qmc-finance-v1-distill),也已经开源啦!

# Usage

## 1. Sentence-Transformers

通过 [sentence-transformers](https://www.SBERT.net) 框架来使用该模型,首先进行安装:

```

pip install -U sentence-transformers

```

然后使用下面的代码来载入该模型并进行文本表征向量的提取:

```python

from sentence_transformers import SentenceTransformer

sentences = ["到期不能按时还款怎么办", "剩余欠款还有多少?"]

model = SentenceTransformer('DMetaSoul/sbert-chinese-qmc-finance-v1')

embeddings = model.encode(sentences)

print(embeddings)

```

## 2. HuggingFace Transformers

如果不想使用 [sentence-transformers](https://www.SBERT.net) 的话,也可以通过 HuggingFace Transformers 来载入该模型并进行文本向量抽取:

```python

from transformers import AutoTokenizer, AutoModel

import torch

#Mean Pooling - Take attention mask into account for correct averaging

def mean_pooling(model_output, attention_mask):

token_embeddings = model_output[0] #First element of model_output contains all token embeddings

input_mask_expanded = attention_mask.unsqueeze(-1).expand(token_embeddings.size()).float()

return torch.sum(token_embeddings * input_mask_expanded, 1) / torch.clamp(input_mask_expanded.sum(1), min=1e-9)

# Sentences we want sentence embeddings for

sentences = ["到期不能按时还款怎么办", "剩余欠款还有多少?"]

# Load model from HuggingFace Hub

tokenizer = AutoTokenizer.from_pretrained('DMetaSoul/sbert-chinese-qmc-finance-v1')

model = AutoModel.from_pretrained('DMetaSoul/sbert-chinese-qmc-finance-v1')

# Tokenize sentences

encoded_input = tokenizer(sentences, padding=True, truncation=True, return_tensors='pt')

# Compute token embeddings

with torch.no_grad():

model_output = model(**encoded_input)

# Perform pooling. In this case, mean pooling.

sentence_embeddings = mean_pooling(model_output, encoded_input['attention_mask'])

print("Sentence embeddings:")

print(sentence_embeddings)

```

## Evaluation

该模型在公开的几个语义匹配数据集上进行了评测,计算了向量相似度跟真实标签之间的相关性系数:

| | **csts_dev** | **csts_test** | **afqmc** | **lcqmc** | **bqcorpus** | **pawsx** | **xiaobu** |

| -------------------------------- | ------------ | ------------- | --------- | --------- | ------------ | --------- | ---------- |

| **sbert-chinese-qmc-finance-v1** | 77.40% | 74.55% | 36.01% | 75.75% | 73.25% | 11.58% | 54.76% |

## Citing & Authors

E-mail: xiaowenbin@dmetasoul.com

|

Yaxin/roberta-large-ernie2-skep-en

|

Yaxin

| 2022-04-04T07:18:20Z | 4 | 2 |

transformers

|

[

"transformers",

"pytorch",

"roberta",

"fill-mask",

"en",

"arxiv:2005.05635",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

fill-mask

| 2022-04-04T06:27:48Z |

---

language: en

---

# SKEP-Roberta

## Introduction

SKEP (SKEP: Sentiment Knowledge Enhanced Pre-training for Sentiment Analysis) is proposed by Baidu in 2020,

SKEP propose Sentiment Knowledge Enhanced Pre-training for sentiment analysis. Sentiment masking and three sentiment pre-training objectives are designed to incorporate various types of knowledge for pre-training model.

More detail: https://aclanthology.org/2020.acl-main.374.pdf

## Released Model Info

|Model Name|Language|Model Structure|

|:---:|:---:|:---:|

|skep-roberta-large| English |Layer:24, Hidden:1024, Heads:24|

This released pytorch model is converted from the officially released PaddlePaddle SKEP model and

a series of experiments have been conducted to check the accuracy of the conversion.

- Official PaddlePaddle SKEP repo:

1. https://github.com/PaddlePaddle/PaddleNLP/blob/develop/paddlenlp/transformers/skep

2. https://github.com/baidu/Senta

- Pytorch Conversion repo: Not released yet

## How to use

```Python

from transformers import AutoTokenizer, AutoModel

tokenizer = AutoTokenizer.from_pretrained("Yaxin/roberta-large-ernie2-skep-en")

model = AutoModel.from_pretrained("Yaxin/roberta-large-ernie2-skep-en")

```

```

#!/usr/bin/env python

#encoding: utf-8

import torch

from transformers import RobertaTokenizer, RobertaForMaskedLM

tokenizer = RobertaTokenizer.from_pretrained('Yaxin/roberta-large-ernie2-skep-en')

input_tx = "<s> He like play with student, so he became a <mask> after graduation </s>"

# input_tx = "<s> He is a <mask> and likes to get along with his students </s>"

tokenized_text = tokenizer.tokenize(input_tx)

indexed_tokens = tokenizer.convert_tokens_to_ids(tokenized_text)

tokens_tensor = torch.tensor([indexed_tokens])

segments_tensors = torch.tensor([[0] * len(tokenized_text)])

model = RobertaForMaskedLM.from_pretrained('Yaxin/roberta-large-ernie2-skep-en')

model.eval()

with torch.no_grad():

outputs = model(tokens_tensor, token_type_ids=segments_tensors)

predictions = outputs[0]

predicted_index = [torch.argmax(predictions[0, i]).item() for i in range(0, (len(tokenized_text) - 1))]

predicted_token = [tokenizer.convert_ids_to_tokens([predicted_index[x]])[0] for x in

range(1, (len(tokenized_text) - 1))]

print('Predicted token is:', predicted_token)

```

## Citation

```bibtex

@article{tian2020skep,

title={SKEP: Sentiment knowledge enhanced pre-training for sentiment analysis},

author={Tian, Hao and Gao, Can and Xiao, Xinyan and Liu, Hao and He, Bolei and Wu, Hua and Wang, Haifeng and Wu, Feng},

journal={arXiv preprint arXiv:2005.05635},

year={2020}

}

```

```

reference:

https://github.com/nghuyong/ERNIE-Pytorch

```

|

vkamthe/upside_down_detector

|

vkamthe

| 2022-04-04T07:01:28Z | 5 | 0 |

tf-keras

|

[

"tf-keras",

"tag1",

"tag2",

"dataset:dataset1",

"dataset:dataset2",

"license:cc",

"region:us"

] | null | 2022-04-04T06:16:41Z |

---

language:

- "List of ISO 639-1 code for your language"

- lang1

- lang2

thumbnail: "url to a thumbnail used in social sharing"

tags:

- tag1

- tag2

license: "cc"

datasets:

- dataset1

- dataset2

metrics:

- metric1

- metric2

---

This is Image Orientation Detector by Vikram Kamthe

Given an image, it will classify it into Original Image or Upside Down Image

|

BigSalmon/GPT2Neo1.3BPoints

|

BigSalmon

| 2022-04-04T05:14:11Z | 5 | 0 |

transformers

|

[

"transformers",

"pytorch",

"gpt_neo",

"text-generation",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-04-04T04:17:46Z |

```

from transformers import AutoTokenizer, AutoModelForCausalLM

tokenizer = AutoTokenizer.from_pretrained("BigSalmon/GPT2Neo1.3BPoints")

model = AutoModelForCausalLM.from_pretrained("BigSalmon/GPT2Neo1.3BPoints")

```

```

- moviepass to return

- this summer

- swooped up by

- original co-founder stacy spikes

text: the re-launch of moviepass is set to transpire this summer, ( rescued at the hands of / under the stewardship of / spearheaded by ) its founding father, stacy spikes.

***

- middle schools do not have recess

- should get back to doing it

- amazing for communication

- and getting kids to move around

text: a casualty of the education reform craze, recess has been excised from middle schools. this is tragic, for it is instrumental in honing children's communication skills and encouraging physical activity.

***

-

```

|

somosnlp-hackathon-2022/bertin-roberta-base-finetuning-esnli

|

somosnlp-hackathon-2022

| 2022-04-04T01:45:21Z | 74 | 7 |

sentence-transformers

|

[

"sentence-transformers",

"pytorch",

"roberta",

"feature-extraction",

"sentence-similarity",

"es",

"dataset:hackathon-pln-es/nli-es",

"arxiv:1908.10084",

"autotrain_compatible",

"text-embeddings-inference",

"endpoints_compatible",

"region:us"

] |

sentence-similarity

| 2022-03-28T19:08:33Z |

---

pipeline_tag: sentence-similarity

tags:

- sentence-transformers

- feature-extraction

- sentence-similarity

language:

- es

datasets:

- hackathon-pln-es/nli-es

widget:

- text: "A ver si nos tenemos que poner todos en huelga hasta cobrar lo que queramos."

- text: "La huelga es el método de lucha más eficaz para conseguir mejoras en el salario."

- text: "Tendremos que optar por hacer una huelga para cobrar lo que queremos."

- text: "Queda descartada la huelga aunque no cobremos lo que queramos."

---

# bertin-roberta-base-finetuning-esnli

This is a [sentence-transformers](https://www.SBERT.net) model trained on a

collection of NLI tasks for Spanish. It maps sentences & paragraphs to a 768 dimensional dense vector space and can be used for tasks like clustering or semantic search.

Based around the siamese networks approach from [this paper](https://arxiv.org/pdf/1908.10084.pdf).

<!--- Describe your model here -->

You can see a demo for this model [here](https://huggingface.co/spaces/hackathon-pln-es/Sentence-Embedding-Bertin).

You can find our other model, **paraphrase-spanish-distilroberta** [here](https://huggingface.co/hackathon-pln-es/paraphrase-spanish-distilroberta) and its demo [here](https://huggingface.co/spaces/hackathon-pln-es/Paraphrase-Bertin).

## Usage (Sentence-Transformers)

Using this model becomes easy when you have [sentence-transformers](https://www.SBERT.net) installed:

```

pip install -U sentence-transformers

```

Then you can use the model like this:

```python

from sentence_transformers import SentenceTransformer

sentences = ["Este es un ejemplo", "Cada oración es transformada"]

model = SentenceTransformer('hackathon-pln-es/bertin-roberta-base-finetuning-esnli')

embeddings = model.encode(sentences)

print(embeddings)

```

## Usage (HuggingFace Transformers)

Without [sentence-transformers](https://www.SBERT.net), you can use the model like this: First, you pass your input through the transformer model, then you have to apply the right pooling-operation on-top of the contextualized word embeddings.

```python

from transformers import AutoTokenizer, AutoModel

import torch

#Mean Pooling - Take attention mask into account for correct averaging

def mean_pooling(model_output, attention_mask):

token_embeddings = model_output[0] #First element of model_output contains all token embeddings

input_mask_expanded = attention_mask.unsqueeze(-1).expand(token_embeddings.size()).float()

return torch.sum(token_embeddings * input_mask_expanded, 1) / torch.clamp(input_mask_expanded.sum(1), min=1e-9)

# Sentences we want sentence embeddings for

sentences = ['This is an example sentence', 'Each sentence is converted']

# Load model from HuggingFace Hub

tokenizer = AutoTokenizer.from_pretrained('hackathon-pln-es/bertin-roberta-base-finetuning-esnli')

model = AutoModel.from_pretrained('hackathon-pln-es/bertin-roberta-base-finetuning-esnli')

# Tokenize sentences

encoded_input = tokenizer(sentences, padding=True, truncation=True, return_tensors='pt')

# Compute token embeddings

with torch.no_grad():

model_output = model(**encoded_input)

# Perform pooling. In this case, mean pooling.

sentence_embeddings = mean_pooling(model_output, encoded_input['attention_mask'])

print("Sentence embeddings:")

print(sentence_embeddings)

```

## Evaluation Results

<!--- Describe how your model was evaluated -->

Our model was evaluated on the task of Semantic Textual Similarity using the [SemEval-2015 Task](https://alt.qcri.org/semeval2015/task2/) for [Spanish](http://alt.qcri.org/semeval2015/task2/data/uploads/sts2015-es-test.zip). We measure

| | [BETO STS](https://huggingface.co/espejelomar/sentece-embeddings-BETO) | BERTIN STS (this model) | Relative improvement |

|-------------------:|---------:|-----------:|---------------------:|

| cosine_pearson | 0.609803 | 0.683188 | +12.03 |

| cosine_spearman | 0.528776 | 0.615916 | +16.48 |

| euclidean_pearson | 0.590613 | 0.672601 | +13.88 |

| euclidean_spearman | 0.526529 | 0.611539 | +16.15 |

| manhattan_pearson | 0.589108 | 0.672040 | +14.08 |

| manhattan_spearman | 0.525910 | 0.610517 | +16.09 |

| dot_pearson | 0.544078 | 0.600517 | +10.37 |

| dot_spearman | 0.460427 | 0.521260 | +13.21 |

## Training

The model was trained with the parameters:

**Dataset**

We used a collection of datasets of Natural Language Inference as training data:

- [ESXNLI](https://raw.githubusercontent.com/artetxem/esxnli/master/esxnli.tsv), only the part in spanish

- [SNLI](https://nlp.stanford.edu/projects/snli/), automatically translated

- [MultiNLI](https://cims.nyu.edu/~sbowman/multinli/), automatically translated

The whole dataset used is available [here](https://huggingface.co/datasets/hackathon-pln-es/nli-es).

Here we leave the trick we used to increase the amount of data for training here:

```

for row in reader:

if row['language'] == 'es':

sent1 = row['sentence1'].strip()

sent2 = row['sentence2'].strip()

add_to_samples(sent1, sent2, row['gold_label'])

add_to_samples(sent2, sent1, row['gold_label']) #Also add the opposite

```

**DataLoader**:

`sentence_transformers.datasets.NoDuplicatesDataLoader.NoDuplicatesDataLoader`

of length 1818 with parameters:

```

{'batch_size': 64}

```

**Loss**:

`sentence_transformers.losses.MultipleNegativesRankingLoss.MultipleNegativesRankingLoss` with parameters:

```

{'scale': 20.0, 'similarity_fct': 'cos_sim'}

```

Parameters of the fit()-Method:

```

{

"epochs": 10,

"evaluation_steps": 0,

"evaluator": "sentence_transformers.evaluation.EmbeddingSimilarityEvaluator.EmbeddingSimilarityEvaluator",

"max_grad_norm": 1,

"optimizer_class": "<class 'transformers.optimization.AdamW'>",

"optimizer_params": {

"lr": 2e-05

},

"scheduler": "WarmupLinear",

"steps_per_epoch": null,

"warmup_steps": 909,

"weight_decay": 0.01

}

```

## Full Model Architecture

```

SentenceTransformer(

(0): Transformer({'max_seq_length': 514, 'do_lower_case': False}) with Transformer model: RobertaModel

(1): Pooling({'word_embedding_dimension': 768, 'pooling_mode_cls_token': False, 'pooling_mode_mean_tokens': True, 'pooling_mode_max_tokens': False, 'pooling_mode_mean_sqrt_len_tokens': False})

)

```

## Authors

[Anibal Pérez](https://huggingface.co/Anarpego),

[Emilio Tomás Ariza](https://huggingface.co/medardodt),

[Lautaro Gesuelli](https://huggingface.co/Lgesuelli) y

[Mauricio Mazuecos](https://huggingface.co/mmazuecos).

|

hamedkhaledi/persain-flair-upos

|

hamedkhaledi

| 2022-04-03T22:15:00Z | 29 | 0 |

flair

|

[

"flair",

"pytorch",

"token-classification",

"sequence-tagger-model",

"fa",

"dataset:ontonotes",

"region:us"

] |

token-classification

| 2022-03-25T07:27:51Z |

---

tags:

- flair

- token-classification

- sequence-tagger-model

language:

- fa

datasets:

- ontonotes

widget:

- text: "مقامات مصری به خاطر حفظ ثبات کشور در منطقهای پرآشوب بر خود میبالند ، در حالی که این کشور در طول ۱۶ سال گذشته تنها هشت سال آنرا بدون اعلام وضعیت اضطراری سپری کرده است ."

---

## Persian Universal Part-of-Speech Tagging in Flair

This is the universal part-of-speech tagging model for Persian that ships with [Flair](https://github.com/flairNLP/flair/).

F1-Score: **97,73** (UD_PERSIAN)

Predicts Universal POS tags:

| **tag** | **meaning** |

|:---------------------------------:|:-----------:|

|ADJ | adjective |

| ADP | adposition |

| ADV | adverb |

| AUX | auxiliary |

| CCONJ | coordinating conjunction |

| DET | determiner |

| INTJ | interjection |

| NOUN | noun |

| NUM | numeral |

| PART | particle |

| PRON | pronoun |

| PUNCT | punctuation |

| SCONJ | subordinating conjunction |

| VERB | verb |

| X | other |

---

### Demo: How to use in Flair

Requires: **[Flair](https://github.com/flairNLP/flair/)** (`pip install flair`)

```python

from flair.data import Sentence

from flair.models import SequenceTagger

# load tagger

tagger = SequenceTagger.load("hamedkhaledi/persain-flair-upos")

# make example sentence

sentence = Sentence("مقامات مصری به خاطر حفظ ثبات کشور در منطقهای پرآشوب بر خود میبالند .")

tagger.predict(sentence)

#print result

print(sentence.to_tagged_string())

```

This yields the following output:

```

مقامات <NOUN> مصری <ADJ> به <ADP> خاطر <NOUN> حفظ <NOUN> ثبات <NOUN> کشور <NOUN> در <ADP> منطقهای <NOUN> پرآشوب <ADJ> بر <ADP> خود <PRON> میبالند <VERB> . <PUNCT>

```

---

### Results

- F-score (micro) 0.9773

- F-score (macro) 0.9461

- Accuracy 0.9773

```

By class:

precision recall f1-score support

NOUN 0.9770 0.9849 0.9809 6420

ADP 0.9947 0.9916 0.9932 1909

ADJ 0.9342 0.9128 0.9234 1525

PUNCT 1.0000 1.0000 1.0000 1365

VERB 0.9840 0.9711 0.9775 1141

CCONJ 0.9912 0.9937 0.9925 794

AUX 0.9622 0.9799 0.9710 546

PRON 0.9751 0.9865 0.9808 517

SCONJ 0.9797 0.9757 0.9777 494

NUM 0.9948 1.0000 0.9974 385

ADV 0.9343 0.9033 0.9185 362

DET 0.9773 0.9711 0.9742 311

PART 0.9916 1.0000 0.9958 237

INTJ 0.8889 0.8000 0.8421 10

X 0.7143 0.6250 0.6667 8

micro avg 0.9773 0.9773 0.9773 16024

macro avg 0.9533 0.9397 0.9461 16024

weighted avg 0.9772 0.9773 0.9772 16024

samples avg 0.9773 0.9773 0.9773 16024

Loss: 0.12471389770507812

```

|

tbosse/bert-base-german-cased-finetuned-subj_v2_v1

|

tbosse

| 2022-04-03T19:15:50Z | 5 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"bert",

"token-classification",

"generated_from_trainer",

"license:mit",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

token-classification

| 2022-04-03T17:49:37Z |

---

license: mit

tags:

- generated_from_trainer

metrics:

- precision

- recall

- f1

- accuracy

model-index:

- name: bert-base-german-cased-finetuned-subj_v2_v1

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# bert-base-german-cased-finetuned-subj_v2_v1

This model is a fine-tuned version of [bert-base-german-cased](https://huggingface.co/bert-base-german-cased) on an unknown dataset.

It achieves the following results on the evaluation set:

- Loss: 0.1587

- Precision: 0.2222

- Recall: 0.0107

- F1: 0.0204

- Accuracy: 0.9511

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3

### Training results

| Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:|

| No log | 1.0 | 136 | 0.1569 | 0.6667 | 0.0053 | 0.0106 | 0.9522 |

| No log | 2.0 | 272 | 0.1562 | 0.1667 | 0.0053 | 0.0103 | 0.9513 |

| No log | 3.0 | 408 | 0.1587 | 0.2222 | 0.0107 | 0.0204 | 0.9511 |

### Framework versions

- Transformers 4.17.0

- Pytorch 1.10.0+cu111

- Datasets 2.0.0

- Tokenizers 0.11.6

|

anton-l/xtreme_s_xlsr_300m_minds14

|

anton-l

| 2022-04-03T18:54:43Z | 557 | 2 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"wav2vec2",

"audio-classification",

"minds14",

"google/xtreme_s",

"generated_from_trainer",

"all",

"dataset:google/xtreme_s",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] |

audio-classification

| 2022-03-17T17:24:20Z |

---

language:

- all

license: apache-2.0

tags:

- minds14

- google/xtreme_s

- generated_from_trainer

datasets:

- google/xtreme_s

metrics:

- f1

- accuracy

model-index:

- name: xtreme_s_xlsr_300m_minds14

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# xtreme_s_xlsr_300m_minds14

This model is a fine-tuned version of [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) on the GOOGLE/XTREME_S - MINDS14.ALL dataset.

It achieves the following results on the evaluation set:

- Accuracy: 0.9033

- Accuracy Cs-cz: 0.9164

- Accuracy De-de: 0.9477

- Accuracy En-au: 0.9235

- Accuracy En-gb: 0.9324

- Accuracy En-us: 0.9326

- Accuracy Es-es: 0.9177

- Accuracy Fr-fr: 0.9444

- Accuracy It-it: 0.9167

- Accuracy Ko-kr: 0.8649

- Accuracy Nl-nl: 0.9450

- Accuracy Pl-pl: 0.9146

- Accuracy Pt-pt: 0.8940

- Accuracy Ru-ru: 0.8667

- Accuracy Zh-cn: 0.7291

- F1: 0.9015

- F1 Cs-cz: 0.9154

- F1 De-de: 0.9467

- F1 En-au: 0.9199

- F1 En-gb: 0.9334

- F1 En-us: 0.9308

- F1 Es-es: 0.9158

- F1 Fr-fr: 0.9436

- F1 It-it: 0.9135

- F1 Ko-kr: 0.8642

- F1 Nl-nl: 0.9440

- F1 Pl-pl: 0.9159

- F1 Pt-pt: 0.8883

- F1 Ru-ru: 0.8646

- F1 Zh-cn: 0.7249

- Loss: 0.4119

- Loss Cs-cz: 0.3790

- Loss De-de: 0.2649

- Loss En-au: 0.3459

- Loss En-gb: 0.2853

- Loss En-us: 0.2203

- Loss Es-es: 0.2731

- Loss Fr-fr: 0.1909

- Loss It-it: 0.3520

- Loss Ko-kr: 0.5431

- Loss Nl-nl: 0.2515

- Loss Pl-pl: 0.4113

- Loss Pt-pt: 0.4798

- Loss Ru-ru: 0.6470

- Loss Zh-cn: 1.1216

- Predict Samples: 4086

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0003

- train_batch_size: 32

- eval_batch_size: 8

- seed: 42

- distributed_type: multi-GPU

- num_devices: 2

- total_train_batch_size: 64

- total_eval_batch_size: 16

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_steps: 1500

- num_epochs: 50.0

- mixed_precision_training: Native AMP

### Training results

| Training Loss | Epoch | Step | Validation Loss | F1 | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:------:|:--------:|

| 2.6739 | 5.41 | 200 | 2.5687 | 0.0430 | 0.1190 |

| 1.4953 | 10.81 | 400 | 1.6052 | 0.5550 | 0.5692 |

| 0.6177 | 16.22 | 600 | 0.7927 | 0.8052 | 0.8011 |

| 0.3609 | 21.62 | 800 | 0.5679 | 0.8609 | 0.8609 |

| 0.4972 | 27.03 | 1000 | 0.5944 | 0.8509 | 0.8523 |

| 0.1799 | 32.43 | 1200 | 0.6194 | 0.8623 | 0.8621 |

| 0.1308 | 37.84 | 1400 | 0.5956 | 0.8569 | 0.8548 |

| 0.2298 | 43.24 | 1600 | 0.5201 | 0.8732 | 0.8743 |

| 0.0052 | 48.65 | 1800 | 0.3826 | 0.9106 | 0.9103 |

### Framework versions

- Transformers 4.18.0.dev0

- Pytorch 1.10.2+cu113

- Datasets 2.0.1.dev0

- Tokenizers 0.11.6

|

Giyaseddin/distilbert-base-cased-finetuned-fake-and-real-news-dataset

|

Giyaseddin

| 2022-04-03T16:39:39Z | 93 | 1 |

transformers

|

[

"transformers",

"pytorch",

"distilbert",

"text-classification",

"en",

"license:gpl-3.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-04-03T14:52:37Z |

---

license: gpl-3.0

language: en

library: transformers

other: distilbert

datasets:

- Fake and real news dataset

---

# DistilBERT base cased model for Fake News Classification

## Model description

DistilBERT is a transformers model, smaller and faster than BERT, which was pretrained on the same corpus in a

self-supervised fashion, using the BERT base model as a teacher. This means it was pretrained on the raw texts only,

with no humans labelling them in any way (which is why it can use lots of publicly available data) with an automatic

process to generate inputs and labels from those texts using the BERT base model.

This is a Fake News classification model finetuned [pretrained DistilBERT model](https://huggingface.co/distilbert-base-cased) on

[Fake and real news dataset](https://www.kaggle.com/datasets/clmentbisaillon/fake-and-real-news-dataset)

## Intended uses & limitations

This can only be used for the kind of news that are similar to the ones in the dataset,

please visit the [dataset's kaggle page](https://www.kaggle.com/datasets/clmentbisaillon/fake-and-real-news-dataset) to see the data.

### How to use

You can use this model directly with a :

```python

>>> from transformers import pipeline

>>> classifier = pipeline("text-classification", model="Giyaseddin/distilbert-base-cased-finetuned-fake-and-real-news-dataset", return_all_scores=True)

>>> examples = ["Yesterday, Speaker Paul Ryan tweeted a video of himself on the Mexican border flying in a helicopter and traveling on horseback with US border agents. RT if you agree It is time for The Wall. pic.twitter.com/s5MO8SG7SL Paul Ryan (@SpeakerRyan) August 1, 2017It makes for great theater to see Republican Speaker Ryan pleading the case for a border wall, but how sincere are the GOP about building the border wall? Even after posting a video that appears to show Ryan s support for the wall, he still seems unsure of himself. It s almost as though he s testing the political winds when he asks Twitter users to retweet if they agree that we need to start building the wall. How committed is the (formerly?) anti-Trump Paul Ryan to building the border wall that would fulfill one of President Trump s most popular campaign promises to the American people? Does he have the what it takes to defy the wishes of corporate donors and the US Chamber of Commerce, and do the right thing for the national security and well-being of our nation?The Last Refuge- Republicans are in control of the House of Representatives, Republicans are in control of the Senate, a Republican President is in the White House, and somehow there s negotiations on how to fund the #1 campaign promise of President Donald Trump, the border wall.Here s the rub.Here s what pundits never discuss.The Republican party doesn t need a single Democrat to fund the border wall.A single spending bill could come from the House of Representatives that fully funds 100% of the border wall. The spending bill then goes to the senate, where again, it doesn t need a single Democrat vote because spending legislation is specifically what reconciliation was designed to facilitate. That House bill can pass the Senate with 51 votes and proceed directly to the President s desk for signature.So, ask yourself: why is this even a point of discussion?The honest answer, for those who are no longer suffering from Battered Conservative Syndrome, is that Republicans don t want to fund or build an actual physical barrier known as the Southern Border Wall.It really is that simple.If one didn t know better, they d almost think Speaker Ryan was attempting to emulate the man he clearly despised during the 2016 presidential campaign."]

>>> classifier(examples)

[[{'label': 'LABEL_0', 'score': 1.0},

{'label': 'LABEL_1', 'score': 1.0119109106199176e-08}]]

```

### Limitations and bias

Even if the training data used for this model could be characterized as fairly neutral, this model can have biased

predictions. It also inherits some of

[the bias of its teacher model](https://huggingface.co/bert-base-uncased#limitations-and-bias).

This bias will also affect all fine-tuned versions of this model.

## Pre-training data

DistilBERT pretrained on the same data as BERT, which is [BookCorpus](https://yknzhu.wixsite.com/mbweb), a dataset

consisting of 11,038 unpublished books and [English Wikipedia](https://en.wikipedia.org/wiki/English_Wikipedia)

(excluding lists, tables and headers).

## Fine-tuning data

[Fake and real news dataset](https://www.kaggle.com/datasets/clmentbisaillon/fake-and-real-news-dataset)

## Training procedure

### Preprocessing

In the preprocessing phase, both the title and the text of the news are concatenated using a separator `[SEP]`.

This makes the full text as:

```

[CLS] Title Sentence [SEP] News text body [SEP]

```

The data are splitted according to the following ratio:

- Training set 60%.

- Validation set 20%.

- Test set 20%.

Lables are mapped as: `{fake: 0, true: 1}`

### Fine-tuning

The model was finetuned on GeForce GTX 960M for 5 hours. The parameters are:

| Parameter | Value |

|:-------------------:|:-----:|

| Learning rate | 5e-5 |

| Weight decay | 0.01 |

| Training batch size | 4 |

| Epochs | 3 |

Here is the scores during the training:

| Epoch | Training Loss | Validation Loss | Accuracy | F1 | Precision | Recall |

|:----------:|:-------------:|:-----------------:|:----------:|:---------:|:-----------:|:---------:|

| 1 | 0.008300 | 0.005783 | 0.998330 | 0.998252 | 0.996511 | 1.000000 |

| 2 | 0.000000 | 0.000161 | 0.999889 | 0.999883 | 0.999767 | 1.000000 |

| 3 | 0.000000 | 0.000122 | 0.999889 | 0.999883 | 0.999767 | 1.000000 |

## Evaluation results

When fine-tuned on downstream task of fake news binary classification, this model achieved the following results:

(scores are rounded to 2 floating points)

| | precision | recall | f1-score | support |

|:------------:|:---------:|:------:|:--------:|:-------:|

| Fake | 1.00 | 1.00 | 1.00 | 4697 |

| True | 1.00 | 1.00 | 1.00 | 4283 |

| accuracy | - | - | 1.00 | 8980 |

| macro avg | 1.00 | 1.00 | 1.00 | 8980 |

| weighted avg | 1.00 | 1.00 | 1.00 | 8980 |

Confision matrix:

| Actual\Predicted | Fake | True |

|:-----------------:|:----:|:----:|

| Fake | 4696 | 1 |

| True | 1 | 4282 |

The AUC score is 0.9997

|

AykeeSalazar/violation-classification-bantai-vit-v100ep

|

AykeeSalazar

| 2022-04-03T16:16:07Z | 64 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"vit",

"image-classification",

"generated_from_trainer",

"dataset:image_folder",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

image-classification

| 2022-04-03T14:05:38Z |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- image_folder

metrics:

- accuracy

model-index:

- name: violation-classification-bantai-vit-v100ep

results:

- task:

name: Image Classification

type: image-classification

dataset:

name: image_folder

type: image_folder

args: default

metrics:

- name: Accuracy

type: accuracy

value: 0.9157343919162757

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# violation-classification-bantai-vit-v100ep

This model is a fine-tuned version of [google/vit-base-patch16-224-in21k](https://huggingface.co/google/vit-base-patch16-224-in21k) on the image_folder dataset.

It achieves the following results on the evaluation set:

- Loss: 0.2557

- Accuracy: 0.9157

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 32

- eval_batch_size: 32

- seed: 42

- gradient_accumulation_steps: 4

- total_train_batch_size: 128

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_ratio: 0.1

- num_epochs: 100

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:--------:|

| 0.2811 | 1.0 | 101 | 0.2855 | 0.9027 |

| 0.2382 | 2.0 | 202 | 0.2763 | 0.9085 |

| 0.2361 | 3.0 | 303 | 0.2605 | 0.9109 |

| 0.196 | 4.0 | 404 | 0.2652 | 0.9110 |

| 0.1395 | 5.0 | 505 | 0.2648 | 0.9134 |

| 0.155 | 6.0 | 606 | 0.2656 | 0.9152 |

| 0.1422 | 7.0 | 707 | 0.2607 | 0.9141 |

| 0.1511 | 8.0 | 808 | 0.2557 | 0.9157 |

| 0.1938 | 9.0 | 909 | 0.2679 | 0.9049 |

| 0.2094 | 10.0 | 1010 | 0.2392 | 0.9137 |

| 0.1835 | 11.0 | 1111 | 0.2400 | 0.9156 |

### Framework versions

- Transformers 4.17.0

- Pytorch 1.10.0+cu111

- Datasets 2.0.0

- Tokenizers 0.11.6

|

jsunster/distilbert-base-uncased-finetuned-squad

|

jsunster

| 2022-04-03T14:46:14Z | 8 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"distilbert",

"question-answering",

"generated_from_trainer",

"dataset:squad",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] |

question-answering

| 2022-04-03T13:02:11Z |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- squad

model-index:

- name: distilbert-base-uncased-finetuned-squad

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# distilbert-base-uncased-finetuned-squad

This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the squad dataset.

It achieves the following results on the evaluation set:

- Loss: 1.1476

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 32

- eval_batch_size: 32

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3

### Training results

| Training Loss | Epoch | Step | Validation Loss |

|:-------------:|:-----:|:----:|:---------------:|

| 1.2823 | 1.0 | 2767 | 1.1980 |

| 1.0336 | 2.0 | 5534 | 1.1334 |

| 0.8513 | 3.0 | 8301 | 1.1476 |

### Framework versions

- Transformers 4.17.0

- Pytorch 1.11.0

- Datasets 2.0.0

- Tokenizers 0.11.6

|

AnnaBabaie/ms-marco-MiniLM-L-12-v2-news

|

AnnaBabaie

| 2022-04-03T13:46:51Z | 3 | 0 |

transformers

|

[

"transformers",

"pytorch",

"bert",

"text-classification",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-04-03T12:55:06Z |

This model is fined tuned for the Fake news classifier: Train a text classification model to detect fake news articles. Base on the Kaggle dataset(https://www.kaggle.com/clmentbisaillon/fake-and-real-news-dataset).

|

alefiury/wav2vec2-xls-r-300m-pt-br-spontaneous-speech-emotion-recognition

|

alefiury

| 2022-04-03T12:38:09Z | 66 | 6 |

transformers

|

[

"transformers",

"pytorch",

"wav2vec2",

"audio-classification",

"audio",

"speech",

"pt",

"portuguese-speech-corpus",

"italian-speech-corpus",

"english-speech-corpus",

"arabic-speech-corpus",

"spontaneous",

"PyTorch",

"dataset:coraa_ser",

"dataset:emovo",

"dataset:ravdess",

"dataset:baved",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] |

audio-classification

| 2022-03-23T15:29:36Z |

---

language: pt

datasets:

- coraa_ser

- emovo

- ravdess

- baved

metrics:

- f1

tags:

- audio

- speech

- wav2vec2

- pt

- portuguese-speech-corpus

- italian-speech-corpus

- english-speech-corpus

- arabic-speech-corpus

- spontaneous

- speech

- PyTorch

license: apache-2.0

model_index:

name: wav2vec2-xls-r-300m-pt-br-spontaneous-speech-emotion-recognition

results:

metrics:

- name: Test Macro F1-Score

type: f1

value: 81.87%

---

# Wav2vec 2.0 XLS-R For Spontaneous Speech Emotion Recognition

This is the model that got first place in the SER track of the Automatic Speech Recognition for spontaneous and prepared speech & Speech Emotion Recognition in Portuguese (SE&R 2022) Workshop.

The following datasets were used in the training:

- [CORAA SER v1.0](https://github.com/rmarcacini/ser-coraa-pt-br/): a dataset composed of spontaneous portuguese speech and approximately 40 minutes of audio segments labeled in three classes: neutral, non-neutral female, and non-neutral male.

- [EMOVO Corpus](https://aclanthology.org/L14-1478/): a database of emotional speech for the Italian language, built from the voices of up to 6 actors who played 14 sentences simulating 6 emotional states (disgust, fear, anger, joy, surprise, sadness) plus the neutral state.

- [RAVDESS](https://zenodo.org/record/1188976#.YO6yI-gzaUk): a dataset that provides 1440 samples of recordings from actors performing on 8 different emotions in English, which are: angry, calm, disgust, fearful, happy, neutral, sad and surprised.

- [BAVED](https://github.com/40uf411/Basic-Arabic-Vocal-Emotions-Dataset): a collection of audio recordings of Arabic words spoken with varying degrees of emotion. The dataset contains seven words: like, unlike, this, file, good, neutral, and bad, which are spoken at three emotional levels: low emotion (tired or feeling down), neutral emotion (the way the speaker speaks daily), and high emotion (positive or negative emotions such as happiness, joy, sadness, anger).

The test set used is a part of the CORAA SER v1.0 that has been set aside for this purpose.

It achieves the following results on the test set:

- Accuracy: 0.9090

- Macro Precision: 0.8171

- Macro Recall: 0.8397

- Macro F1-Score: 0.8187

## Datasets Details

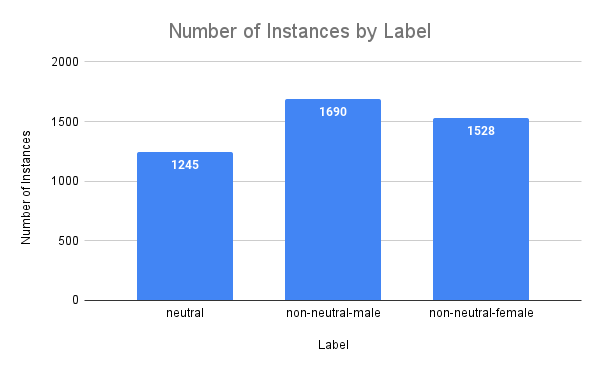

The following image shows the overall distribution of the datasets:

The following image shows the number of instances by label:

## Repository

The repository that implements the model to be trained and tested is avaible [here](https://github.com/alefiury/SE-R-2022-SER-Track).

|

AykeeSalazar/violation-classification-bantai_vit

|

AykeeSalazar

| 2022-04-03T12:26:48Z | 62 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"vit",

"image-classification",

"generated_from_trainer",

"dataset:image_folder",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

image-classification

| 2022-04-03T03:01:22Z |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- image_folder

model-index:

- name: violation-classification-bantai_vit

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# violation-classification-bantai_vit

This model is a fine-tuned version of [google/vit-base-patch16-224-in21k](https://huggingface.co/google/vit-base-patch16-224-in21k) on the image_folder dataset.

It achieves the following results on the evaluation set:

- eval_loss: 0.2362

- eval_accuracy: 0.9478

- eval_runtime: 43.2567

- eval_samples_per_second: 85.42

- eval_steps_per_second: 2.682

- epoch: 87.0

- step: 10005

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 32

- eval_batch_size: 32

- seed: 42

- gradient_accumulation_steps: 4

- total_train_batch_size: 128

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_ratio: 0.1

- num_epochs: 500

### Framework versions

- Transformers 4.17.0

- Pytorch 1.10.0+cu111

- Datasets 2.0.0

- Tokenizers 0.11.6

|

Awais/Audio_Source_Separation

|

Awais

| 2022-04-03T11:03:43Z | 11 | 21 |

asteroid

|

[

"asteroid",

"pytorch",

"audio",

"ConvTasNet",

"audio-to-audio",

"dataset:Libri2Mix",

"dataset:sep_clean",

"license:cc-by-sa-4.0",

"region:us"

] |

audio-to-audio

| 2022-04-02T13:01:03Z |

---

tags:

- asteroid

- audio

- ConvTasNet

- audio-to-audio

datasets:

- Libri2Mix

- sep_clean

license: cc-by-sa-4.0

---

## Asteroid model `Awais/Audio_Source_Separation`

Imported from [Zenodo](https://zenodo.org/record/3873572#.X9M69cLjJH4)

Description:

This model was trained by Joris Cosentino using the librimix recipe in [Asteroid](https://github.com/asteroid-team/asteroid).

It was trained on the `sep_clean` task of the Libri2Mix dataset.

Training config:

```yaml

data:

n_src: 2

sample_rate: 8000

segment: 3

task: sep_clean

train_dir: data/wav8k/min/train-360

valid_dir: data/wav8k/min/dev

filterbank:

kernel_size: 16

n_filters: 512

stride: 8

masknet:

bn_chan: 128

hid_chan: 512

mask_act: relu

n_blocks: 8

n_repeats: 3

skip_chan: 128

optim:

lr: 0.001

optimizer: adam

weight_decay: 0.0

training:

batch_size: 24

early_stop: True

epochs: 200

half_lr: True

num_workers: 2

```

Results :

On Libri2Mix min test set :

```yaml

si_sdr: 14.764543634468069

si_sdr_imp: 14.764029375607246

sdr: 15.29337970745095

sdr_imp: 15.114146605113111

sir: 24.092904661115366

sir_imp: 23.913669683141528

sar: 16.06055906916849

sar_imp: -51.980784441287454

stoi: 0.9311142440593033

stoi_imp: 0.21817376142710482

```

License notice:

This work "ConvTasNet_Libri2Mix_sepclean_8k"

is a derivative of [LibriSpeech ASR corpus](http://www.openslr.org/12) by Vassil Panayotov,

used under [CC BY 4.0](https://creativecommons.org/licenses/by/4.0/). "ConvTasNet_Libri2Mix_sepclean_8k"

is licensed under [Attribution-ShareAlike 3.0 Unported](https://creativecommons.org/licenses/by-sa/3.0/) by Cosentino Joris.

|

Zohar/distilgpt2-finetuned-restaurant-reviews-clean

|

Zohar

| 2022-04-03T10:29:27Z | 3 | 1 |

transformers

|

[

"transformers",

"pytorch",

"gpt2",

"text-generation",

"generated_from_trainer",

"license:apache-2.0",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-04-03T07:25:35Z |

---

license: apache-2.0

tags:

- generated_from_trainer

model-index:

- name: distilgpt2-finetuned-restaurant-reviews-clean

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# distilgpt2-finetuned-restaurant-reviews-clean

This model is a fine-tuned version of [distilgpt2](https://huggingface.co/distilgpt2) on the None dataset.

It achieves the following results on the evaluation set:

- Loss: 3.5371

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3.0

### Training results

| Training Loss | Epoch | Step | Validation Loss |

|:-------------:|:-----:|:----:|:---------------:|

| 3.7221 | 1.0 | 2447 | 3.5979 |

| 3.6413 | 2.0 | 4894 | 3.5505 |

| 3.6076 | 3.0 | 7341 | 3.5371 |

### Framework versions

- Transformers 4.16.2

- Pytorch 1.10.2+cu102

- Datasets 1.18.2

- Tokenizers 0.11.0

|

abd-1999/autotrain-bbc-news-summarization-694821095

|

abd-1999

| 2022-04-03T09:25:08Z | 4 | 1 |

transformers

|

[

"transformers",

"pytorch",

"t5",

"text2text-generation",

"autotrain",

"unk",

"dataset:abd-1999/autotrain-data-bbc-news-summarization",

"co2_eq_emissions",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text2text-generation

| 2022-04-01T21:16:19Z |

---

tags: autotrain

language: unk

widget:

- text: "I love AutoTrain 🤗"

datasets:

- abd-1999/autotrain-data-bbc-news-summarization

co2_eq_emissions: 2313.4037079026934

---

# Model Trained Using AutoTrain

- Problem type: Summarization

- Model ID: 694821095

- CO2 Emissions (in grams): 2313.4037079026934

## Validation Metrics

- Loss: 3.0294156074523926

- Rouge1: 2.1467

- Rouge2: 0.0853

- RougeL: 2.1524

- RougeLsum: 2.1534

- Gen Len: 18.5603

## Usage

You can use cURL to access this model:

```

$ curl -X POST -H "Authorization: Bearer YOUR_HUGGINGFACE_API_KEY" -H "Content-Type: application/json" -d '{"inputs": "I love AutoTrain"}' https://api-inference.huggingface.co/abd-1999/autotrain-bbc-news-summarization-694821095

```

|

Prinernian/distilbert-base-uncased-finetuned-emotion

|

Prinernian

| 2022-04-03T09:11:07Z | 4 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"distilbert",

"text-classification",

"generated_from_trainer",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-04-02T17:49:11Z |

---

license: apache-2.0

tags:

- generated_from_trainer

metrics:

- accuracy

- f1

model-index:

- name: distilbert-base-uncased-finetuned-emotion

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# distilbert-base-uncased-finetuned-emotion

This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on an unknown dataset.

It achieves the following results on the evaluation set:

- Loss: 0.2208

- Accuracy: 0.924

- F1: 0.9240

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 64

- eval_batch_size: 64

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 2

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 |

|:-------------:|:-----:|:----:|:---------------:|:--------:|:------:|

| 0.8538 | 1.0 | 250 | 0.3317 | 0.904 | 0.8999 |

| 0.2599 | 2.0 | 500 | 0.2208 | 0.924 | 0.9240 |

### Framework versions

- Transformers 4.17.0

- Pytorch 1.10.0+cu111

- Tokenizers 0.11.6

|

AykeeSalazar/vit-base-patch16-224-in21k-bantai_vitv1

|

AykeeSalazar

| 2022-04-03T02:43:41Z | 63 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"vit",

"image-classification",

"generated_from_trainer",

"dataset:image_folder",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

image-classification

| 2022-04-02T14:17:18Z |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- image_folder

metrics:

- accuracy

model-index:

- name: vit-base-patch16-224-in21k-bantai_vitv1

results:

- task:

name: Image Classification

type: image-classification

dataset:

name: image_folder

type: image_folder

args: default

metrics:

- name: Accuracy

type: accuracy

value: 0.8635994587280108

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# vit-base-patch16-224-in21k-bantai_vitv1

This model is a fine-tuned version of [google/vit-base-patch16-224-in21k](https://huggingface.co/google/vit-base-patch16-224-in21k) on the image_folder dataset.

It achieves the following results on the evaluation set:

- Loss: 0.3961

- Accuracy: 0.8636

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 32

- eval_batch_size: 32

- seed: 42

- gradient_accumulation_steps: 4

- total_train_batch_size: 128

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_ratio: 0.1

- num_epochs: 3

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:--------:|

| 0.5997 | 1.0 | 115 | 0.5401 | 0.7886 |

| 0.4696 | 2.0 | 230 | 0.4410 | 0.8482 |

| 0.4019 | 3.0 | 345 | 0.3961 | 0.8636 |

### Framework versions

- Transformers 4.17.0

- Pytorch 1.10.0+cu111

- Datasets 2.0.0

- Tokenizers 0.11.6

|

Asayaya/Upside_down_detector

|

Asayaya

| 2022-04-03T01:00:26Z | 0 | 0 | null |

[

"license:apache-2.0",

"region:us"

] | null | 2022-04-03T00:55:24Z |

---

license: apache-2.0

---

# -*- coding: utf-8 -*-

'''

Original file is located at

https://colab.research.google.com/drive/1HrNm5UMZr2Zjmze_HKW799p6LAHM8BTa

'''

from google.colab import files

files.upload()

!pip install kaggle

!cp kaggle.json ~/.kaggle/

!chmod 600 ~/.kaggle/kaggle.json

!kaggle datasets download 'shaunthesheep/microsoft-catsvsdogs-dataset'

!unzip microsoft-catsvsdogs-dataset

import tensorflow as tf

from tensorflow.keras.preprocessing.image import ImageDataGenerator

image_dir='/content/PetImages/Cat'

!mkdir train_folder

!mkdir test_folder

import os

path='/content/train_folder/'

dir='upside_down'

dir2='normal'

training_normal= os.path.join(path, dir2)

training_upside= os.path.join(path, dir)

os.mkdir(training_normal)

os.mkdir(training_upside)

#creating classes directories

path='/content/test_folder/'

dir='upside_down'

dir2='normal'

training_normal= os.path.join(path, dir2)

training_upside= os.path.join(path, dir)

os.mkdir(training_normal)

os.mkdir(training_upside)

#copying only the cat images to my train folder

fnames = ['{}.jpg'.format(i) for i in range(2000)]

for fname in fnames:

src = os.path.join('/content/PetImages/Cat', fname)

dst = os.path.join('/content/train_folder/normal', fname)

shutil.copyfile(src, dst)

import os

import shutil

fnames = ['{}.jpg'.format(i) for i in range(2000, 4000)]

for fname in fnames:

src = os.path.join('/content/PetImages/Cat', fname)

dst = os.path.join('/content/test_folder/normal', fname)

shutil.copyfile(src, dst)

from scipy import ndimage, misc

from PIL import Image

import numpy as np

import matplotlib.pyplot as plt

import imageio

import os

import cv2

#inverting Training Images

outPath = '/content/train_folder/upside_down'

path ='/content/train_folder/normal'

# iterate through the names of contents of the folder

for image_path in os.listdir(path):

# create the full input path and read the file

input_path = os.path.join(path, image_path)

image_to_rotate =plt.imread(input_path)

# rotate the image

rotated = np.flipud(image_to_rotate)

# create full output path, 'example.jpg'

# becomes 'rotate_example.jpg', save the file to disk

fullpath = os.path.join(outPath, 'rotated_'+image_path)

imageio.imwrite(fullpath, rotated)

#nverting images for Validation

outPath = '/content/test_folder/upside_down'

path ='/content/test_folder/normal'

# iterate through the names of contents of the folder

for image_path in os.listdir(path):

# create the full input path and read the file

input_path = os.path.join(path, image_path)

image_to_rotate =plt.imread(input_path)

# rotate the image

rotated = np.flipud(image_to_rotate)

# create full output path, 'example.jpg'

# becomes 'rotate_example.jpg', save the file to disk

fullpath = os.path.join(outPath, 'rotated_'+image_path)

imageio.imwrite(fullpath, rotated)

ima='/content/train_folder/inverted/rotated_1001.jpg'

image=plt.imread(ima)

plt.imshow(image)

# visualize the the figure

plt.show()

train_dir='/content/train_folder'

train_gen=ImageDataGenerator(rescale=1./255)

train_images= train_gen.flow_from_directory(

train_dir,

target_size=(250,250),

batch_size=50,

class_mode='binary'

)

validation_dir='/content/test_folder'

test_gen=ImageDataGenerator(rescale=1./255)

test_images= test_gen.flow_from_directory(

validation_dir,

target_size=(250,250),

batch_size=50,

class_mode='binary'

)

model=tf.keras.Sequential([

tf.keras.layers.Conv2D(16, (3,3), activation='relu', input_shape=(250,250,3)),

tf.keras.layers.MaxPooling2D(2,2),

tf.keras.layers.Conv2D(32, (3,3), activation='relu'),

tf.keras.layers.MaxPooling2D(2,2),

tf.keras.layers.Conv2D(64, (3,3), activation='relu'),

tf.keras.layers.MaxPooling2D(2,2),

tf.keras.layers.Conv2D(128, (3,3), activation='relu'),

tf.keras.layers.MaxPooling2D(2,2),

tf.keras.layers.Flatten(),

tf.keras.layers.Dense(512, activation='relu'),

tf.keras.layers.Dense(1, activation='sigmoid')

])

from tensorflow.keras.optimizers import RMSprop

model.compile(optimizer=RMSprop(learning_rate=0.001), loss=tf.keras.losses.BinaryCrossentropy(), metrics=['acc'])

history=model.fit(train_images, validation_data=test_images, epochs=5, steps_per_epoch=40 )

|

huggingtweets/clortown-elonmusk-stephencurry30

|

huggingtweets

| 2022-04-02T23:03:14Z | 3 | 0 |

transformers

|

[

"transformers",

"pytorch",

"gpt2",

"text-generation",

"huggingtweets",

"en",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-04-02T23:02:39Z |

---

language: en

thumbnail: http://www.huggingtweets.com/clortown-elonmusk-stephencurry30/1648940589601/predictions.png

tags:

- huggingtweets

widget:

- text: "My dream is"

---

<div class="inline-flex flex-col" style="line-height: 1.5;">

<div class="flex">

<div

style="display:inherit; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('https://pbs.twimg.com/profile_images/1503591435324563456/foUrqiEw_400x400.jpg')">

</div>

<div

style="display:inherit; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('https://pbs.twimg.com/profile_images/1488574779351187458/RlIQNUFG_400x400.jpg')">

</div>

<div

style="display:inherit; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('https://pbs.twimg.com/profile_images/1484233608793518081/tOID8aXq_400x400.jpg')">

</div>

</div>

<div style="text-align: center; margin-top: 3px; font-size: 16px; font-weight: 800">🤖 AI CYBORG 🤖</div>

<div style="text-align: center; font-size: 16px; font-weight: 800">Elon Musk & yeosang elf agenda & Stephen Curry</div>

<div style="text-align: center; font-size: 14px;">@clortown-elonmusk-stephencurry30</div>

</div>

I was made with [huggingtweets](https://github.com/borisdayma/huggingtweets).

Create your own bot based on your favorite user with [the demo](https://colab.research.google.com/github/borisdayma/huggingtweets/blob/master/huggingtweets-demo.ipynb)!

## How does it work?

The model uses the following pipeline.

To understand how the model was developed, check the [W&B report](https://wandb.ai/wandb/huggingtweets/reports/HuggingTweets-Train-a-Model-to-Generate-Tweets--VmlldzoxMTY5MjI).

## Training data

The model was trained on tweets from Elon Musk & yeosang elf agenda & Stephen Curry.

| Data | Elon Musk | yeosang elf agenda | Stephen Curry |

| --- | --- | --- | --- |

| Tweets downloaded | 221 | 3143 | 3190 |

| Retweets | 7 | 541 | 384 |

| Short tweets | 62 | 463 | 698 |

| Tweets kept | 152 | 2139 | 2108 |

[Explore the data](https://wandb.ai/wandb/huggingtweets/runs/2sqcbnn5/artifacts), which is tracked with [W&B artifacts](https://docs.wandb.com/artifacts) at every step of the pipeline.

## Training procedure

The model is based on a pre-trained [GPT-2](https://huggingface.co/gpt2) which is fine-tuned on @clortown-elonmusk-stephencurry30's tweets.

Hyperparameters and metrics are recorded in the [W&B training run](https://wandb.ai/wandb/huggingtweets/runs/1mq1ftjh) for full transparency and reproducibility.

At the end of training, [the final model](https://wandb.ai/wandb/huggingtweets/runs/1mq1ftjh/artifacts) is logged and versioned.

## How to use

You can use this model directly with a pipeline for text generation:

```python

from transformers import pipeline

generator = pipeline('text-generation',

model='huggingtweets/clortown-elonmusk-stephencurry30')

generator("My dream is", num_return_sequences=5)

```

## Limitations and bias

The model suffers from [the same limitations and bias as GPT-2](https://huggingface.co/gpt2#limitations-and-bias).

In addition, the data present in the user's tweets further affects the text generated by the model.

## About

*Built by Boris Dayma*

[](https://twitter.com/intent/follow?screen_name=borisdayma)

For more details, visit the project repository.

[](https://github.com/borisdayma/huggingtweets)

|

vicl/canine-s-finetuned-cola

|

vicl

| 2022-04-02T23:01:51Z | 3 | 1 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"canine",

"text-classification",

"generated_from_trainer",

"dataset:glue",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-04-02T22:29:20Z |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- glue

metrics:

- matthews_correlation

model-index:

- name: canine-s-finetuned-cola

results:

- task:

name: Text Classification

type: text-classification

dataset:

name: glue

type: glue

args: cola

metrics:

- name: Matthews Correlation

type: matthews_correlation

value: 0.059386434587477076

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# canine-s-finetuned-cola