| modelId | author | last_modified | downloads | likes | library_name | tags | pipeline_tag | createdAt | card |

|---|---|---|---|---|---|---|---|---|---|

KingKazma/xsum_gpt2_prefix_tuning_500_10_3000_8_e0_s6789_v3_l5_v20

|

KingKazma

| 2023-08-09T16:22:51Z | 0 | 0 |

peft

|

[

"peft",

"region:us"

] | null | 2023-08-09T16:22:50Z |

---

library_name: peft

---

## Training procedure

### Framework versions

- PEFT 0.5.0.dev0

|

KingKazma/xsum_gpt2_p_tuning_500_10_3000_8_e0_s6789_v3_l5_v100

|

KingKazma

| 2023-08-09T16:20:40Z | 0 | 0 |

peft

|

[

"peft",

"region:us"

] | null | 2023-08-09T16:20:39Z |

---

library_name: peft

---

## Training procedure

### Framework versions

- PEFT 0.5.0.dev0

|

kaoyer/pokemon-lora

|

kaoyer

| 2023-08-09T16:17:44Z | 1 | 0 |

diffusers

|

[

"diffusers",

"stable-diffusion",

"stable-diffusion-diffusers",

"text-to-image",

"lora",

"license:creativeml-openrail-m",

"region:us"

] |

text-to-image

| 2023-08-09T13:49:50Z |

---

license: creativeml-openrail-m

base_model: /root/autodl-fs/pre_trained_models/runwayml-stable-diffusion-v1-5/runwayml-stable-diffusion-v1-5

tags:

- stable-diffusion

- stable-diffusion-diffusers

- text-to-image

- diffusers

- lora

inference: true

---

# LoRA text2image fine-tuning - kaoyer/pokemon-lora

These are LoRA adaptation weights for /root/autodl-fs/pre_trained_models/runwayml-stable-diffusion-v1-5/runwayml-stable-diffusion-v1-5. The weights were fine-tuned on the lambdalabs/pokemon-blip-captions dataset. You can find some example images below.

|

MarioNapoli/DynamicWav2Vec_TEST_9

|

MarioNapoli

| 2023-08-09T16:09:04Z | 106 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"wav2vec2",

"automatic-speech-recognition",

"generated_from_trainer",

"dataset:common_voice_1_0",

"base_model:facebook/wav2vec2-xls-r-300m",

"base_model:finetune:facebook/wav2vec2-xls-r-300m",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] |

automatic-speech-recognition

| 2023-08-03T14:29:32Z |

---

license: apache-2.0

base_model: facebook/wav2vec2-xls-r-300m

tags:

- generated_from_trainer

datasets:

- common_voice_1_0

model-index:

- name: DynamicWav2Vec_TEST_9

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# DynamicWav2Vec_TEST_9

This model is a fine-tuned version of [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) on the common_voice_1_0 dataset.

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0003

- train_batch_size: 16

- eval_batch_size: 8

- seed: 42

- gradient_accumulation_steps: 2

- total_train_batch_size: 32

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_steps: 500

- num_epochs: 30
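The list above implies two derived quantities: the effective batch size (16 × 2 = 32) and a learning rate that warms up for 500 steps and then decays linearly. The sketch below illustrates both in plain Python. `TOTAL_STEPS` is a hypothetical value, since the card does not report the number of optimizer steps, and a single device is assumed.

```python
# Values copied from the hyperparameter list above.
LEARNING_RATE = 3e-4
TRAIN_BATCH_SIZE = 16
GRADIENT_ACCUMULATION_STEPS = 2
WARMUP_STEPS = 500

# Hypothetical: the card does not state the total number of optimizer steps.
TOTAL_STEPS = 10_000


def effective_batch_size(per_step: int, accumulation: int, num_devices: int = 1) -> int:
    """Number of samples that contribute to one optimizer update."""
    return per_step * accumulation * num_devices


def linear_lr(step: int) -> float:
    """Linear warmup to LEARNING_RATE, then linear decay to zero."""
    if step < WARMUP_STEPS:
        return LEARNING_RATE * step / WARMUP_STEPS
    remaining = max(0, TOTAL_STEPS - step)
    return LEARNING_RATE * remaining / (TOTAL_STEPS - WARMUP_STEPS)


print(effective_batch_size(TRAIN_BATCH_SIZE, GRADIENT_ACCUMULATION_STEPS))  # 32
print(linear_lr(250), linear_lr(500), linear_lr(TOTAL_STEPS))
```

The learning rate peaks at step 500 and reaches zero at `TOTAL_STEPS`, whatever that value was in the actual run.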

### Training results

### Framework versions

- Transformers 4.31.0

- Pytorch 2.0.1+cu118

- Datasets 2.14.4

- Tokenizers 0.13.3

|

Marco-Cheung/whisper-small-cantonese

|

Marco-Cheung

| 2023-08-09T16:07:58Z | 5 | 0 |

transformers

|

[

"transformers",

"pytorch",

"whisper",

"automatic-speech-recognition",

"generated_from_trainer",

"zh",

"dataset:mozilla-foundation/common_voice_13_0",

"base_model:openai/whisper-small",

"base_model:finetune:openai/whisper-small",

"license:apache-2.0",

"model-index",

"endpoints_compatible",

"region:us"

] |

automatic-speech-recognition

| 2023-08-08T06:53:17Z |

---

language:

- zh

license: apache-2.0

base_model: openai/whisper-small

tags:

- generated_from_trainer

datasets:

- mozilla-foundation/common_voice_13_0

metrics:

- wer

model-index:

- name: Whisper Small Cantonese - Marco Cheung

results:

- task:

name: Automatic Speech Recognition

type: automatic-speech-recognition

dataset:

name: Common Voice 13

type: mozilla-foundation/common_voice_13_0

config: zh-HK

split: test

args: zh-HK

metrics:

- name: Wer

type: wer

value: 57.700752823086574

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# Whisper Small Cantonese - Marco Cheung

This model is a fine-tuned version of [openai/whisper-small](https://huggingface.co/openai/whisper-small) on the Common Voice 13 dataset.

It achieves the following results on the evaluation set:

- Loss: 0.2487

- Wer Ortho: 57.8423

- Wer: 57.7008

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 1e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: constant_with_warmup

- lr_scheduler_warmup_steps: 10

- training_steps: 2000
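As a rough illustration of the `constant_with_warmup` schedule listed above, the following sketch computes the learning rate at each of the 2000 training steps. It is a plain-Python approximation of the schedule's shape, not the Trainer's own code.

```python
# Values copied from the hyperparameter list above.
LEARNING_RATE = 1e-5
WARMUP_STEPS = 10
TRAINING_STEPS = 2000


def constant_with_warmup_lr(step: int) -> float:
    """Ramp linearly from 0 to LEARNING_RATE during warmup, then hold it constant."""
    if step < WARMUP_STEPS:
        return LEARNING_RATE * step / max(1, WARMUP_STEPS)
    return LEARNING_RATE


schedule = [constant_with_warmup_lr(step) for step in range(TRAINING_STEPS)]
print(schedule[0], schedule[5], schedule[10], schedule[-1])
```

With only 10 warmup steps, almost all of the run uses the full learning rate of 1e-05.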

### Training results

| Training Loss | Epoch | Step | Validation Loss | Wer Ortho | Wer |

|:-------------:|:-----:|:----:|:---------------:|:---------:|:-------:|

| 0.1621 | 1.14 | 1000 | 0.2587 | 61.0824 | 65.0094 |

| 0.0767 | 2.28 | 2000 | 0.2487 | 57.8423 | 57.7008 |
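The two WER columns are word error rates expressed as percentages. The card does not define them; a common convention, assumed here, is that "Wer Ortho" is computed on the raw text and "Wer" on normalized text. The sketch below shows the basic metric using a word-level edit distance. The example sentences are hypothetical, and splitting on whitespace is a simplification that suits Chinese text poorly, since it is not whitespace-delimited.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER in percent: word-level edit distance divided by reference length."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # Levenshtein distance over words, keeping only the previous row.
    previous = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        current = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = previous[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = previous[j] + 1
            insertion = current[j - 1] + 1
            current.append(min(substitution, deletion, insertion))
        previous = current
    return 100.0 * previous[-1] / len(ref)


# Hypothetical sentences: one substitution and one deletion over six words.
print(word_error_rate("the cat sat on the mat", "the cat sit on mat"))
```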

### Framework versions

- Transformers 4.32.0.dev0

- Pytorch 2.0.1+cu117

- Datasets 2.14.3

- Tokenizers 0.13.3

|

KingKazma/xsum_gpt2_p_tuning_500_10_3000_8_e9_s6789_v3_l5_v20

|

KingKazma

| 2023-08-09T16:05:14Z | 0 | 0 |

peft

|

[

"peft",

"region:us"

] | null | 2023-08-09T16:05:14Z |

---

library_name: peft

---

## Training procedure

### Framework versions

- PEFT 0.5.0.dev0

|

foilfoilfoil/cheesegulag3.5

|

foilfoilfoil

| 2023-08-09T16:04:54Z | 0 | 0 |

peft

|

[

"peft",

"region:us"

] | null | 2023-08-09T16:04:02Z |

---

library_name: peft

---

## Training procedure

The following `bitsandbytes` quantization config was used during training:

- load_in_8bit: False

- load_in_4bit: True

- llm_int8_threshold: 6.0

- llm_int8_skip_modules: None

- llm_int8_enable_fp32_cpu_offload: False

- llm_int8_has_fp16_weight: False

- bnb_4bit_quant_type: nf4

- bnb_4bit_use_double_quant: True

- bnb_4bit_compute_dtype: bfloat16

The following `bitsandbytes` quantization config was used during training:

- load_in_8bit: False

- load_in_4bit: True

- llm_int8_threshold: 6.0

- llm_int8_skip_modules: None

- llm_int8_enable_fp32_cpu_offload: False

- llm_int8_has_fp16_weight: False

- bnb_4bit_quant_type: nf4

- bnb_4bit_use_double_quant: True

- bnb_4bit_compute_dtype: float16

### Framework versions

- PEFT 0.5.0.dev0

|

marklim100/test-model-v4

|

marklim100

| 2023-08-09T16:03:36Z | 3 | 0 |

sentence-transformers

|

[

"sentence-transformers",

"pytorch",

"mpnet",

"setfit",

"text-classification",

"arxiv:2209.11055",

"license:apache-2.0",

"region:us"

] |

text-classification

| 2023-08-09T16:02:51Z |

---

license: apache-2.0

tags:

- setfit

- sentence-transformers

- text-classification

pipeline_tag: text-classification

---

# marklim100/test-model-v4

This is a [SetFit model](https://github.com/huggingface/setfit) that can be used for text classification. The model has been trained using an efficient few-shot learning technique that involves:

1. Fine-tuning a [Sentence Transformer](https://www.sbert.net) with contrastive learning.

2. Training a classification head with features from the fine-tuned Sentence Transformer.
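The contrastive step in the first item relies on sentence pairs labelled as similar or dissimilar. The sketch below shows one simple way to build such pairs from a few labelled examples. It is an illustration of the idea only: the texts and labels are hypothetical, and SetFit's own pair sampling may differ.

```python
from itertools import combinations


def make_pairs(texts, labels):
    """Pair every two examples; the target is 1.0 for same-label pairs, else 0.0."""
    pairs = []
    for (text_a, label_a), (text_b, label_b) in combinations(zip(texts, labels), 2):
        pairs.append((text_a, text_b, 1.0 if label_a == label_b else 0.0))
    return pairs


# Hypothetical few-shot data, for illustration only.
texts = ["great film", "loved it", "terrible plot", "awful acting"]
labels = [1, 1, 0, 0]

pairs = make_pairs(texts, labels)
print(len(pairs))  # 6
```

Even four labelled examples yield six training pairs, which is part of why the approach works with very little data.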

## Usage

To use this model for inference, first install the SetFit library:

```bash

python -m pip install setfit

```

You can then run inference as follows:

```python

from setfit import SetFitModel

# Download from Hub and run inference

model = SetFitModel.from_pretrained("marklim100/test-model-v4")

# Run inference

preds = model(["i loved the spiderman movie!", "pineapple on pizza is the worst 🤮"])

```

## BibTeX entry and citation info

```bibtex

@article{https://doi.org/10.48550/arxiv.2209.11055,

doi = {10.48550/ARXIV.2209.11055},

url = {https://arxiv.org/abs/2209.11055},

author = {Tunstall, Lewis and Reimers, Nils and Jo, Unso Eun Seo and Bates, Luke and Korat, Daniel and Wasserblat, Moshe and Pereg, Oren},

keywords = {Computation and Language (cs.CL), FOS: Computer and information sciences, FOS: Computer and information sciences},

title = {Efficient Few-Shot Learning Without Prompts},

publisher = {arXiv},

year = {2022},

copyright = {Creative Commons Attribution 4.0 International}

}

```

|

tamiti1610001/bert-finetuned-ner

|

tamiti1610001

| 2023-08-09T16:02:50Z | 108 | 0 |

transformers

|

[

"transformers",

"pytorch",

"bert",

"token-classification",

"generated_from_trainer",

"dataset:conll2003",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

token-classification

| 2023-08-09T14:13:06Z |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- conll2003

metrics:

- precision

- recall

- f1

- accuracy

model-index:

- name: bert-finetuned-ner

results:

- task:

name: Token Classification

type: token-classification

dataset:

name: conll2003

type: conll2003

config: conll2003

split: validation

args: conll2003

metrics:

- name: Precision

type: precision

value: 0.9457247828991316

- name: Recall

type: recall

value: 0.9530461124200605

- name: F1

type: f1

value: 0.949371332774518

- name: Accuracy

type: accuracy

value: 0.9913554768116506

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# bert-finetuned-ner

This model is a fine-tuned version of [bert-base-cased](https://huggingface.co/bert-base-cased) on the conll2003 dataset.

It achieves the following results on the evaluation set:

- Loss: nan

- Precision: 0.9457

- Recall: 0.9530

- F1: 0.9494

- Accuracy: 0.9914
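As a quick consistency check, F1 is the harmonic mean of precision and recall. The sketch below recomputes it from the unrounded precision and recall in the card's metadata.

```python
# Unrounded values from the model-index metadata of this card.
PRECISION = 0.9457247828991316
RECALL = 0.9530461124200605


def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall (0.0 when both are zero)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


print(round(f1_score(PRECISION, RECALL), 4))  # 0.9494
```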

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3

### Training results

| Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:|

| 0.0136 | 1.0 | 878 | nan | 0.9401 | 0.9488 | 0.9445 | 0.9906 |

| 0.0063 | 2.0 | 1756 | nan | 0.9413 | 0.9507 | 0.9460 | 0.9907 |

| 0.0034 | 3.0 | 2634 | nan | 0.9457 | 0.9530 | 0.9494 | 0.9914 |

### Framework versions

- Transformers 4.30.2

- Pytorch 2.0.0

- Datasets 2.1.0

- Tokenizers 0.13.3

|

KingKazma/xsum_gpt2_p_tuning_500_10_3000_8_e8_s6789_v3_l5_v20

|

KingKazma

| 2023-08-09T15:58:13Z | 0 | 0 |

peft

|

[

"peft",

"region:us"

] | null | 2023-08-09T15:58:12Z |

---

library_name: peft

---

## Training procedure

### Framework versions

- PEFT 0.5.0.dev0

|

RogerB/marian-finetuned-Umuganda-Dataset-en-to-kin

|

RogerB

| 2023-08-09T15:53:16Z | 104 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"marian",

"text2text-generation",

"translation",

"generated_from_trainer",

"base_model:Helsinki-NLP/opus-mt-en-rw",

"base_model:finetune:Helsinki-NLP/opus-mt-en-rw",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

translation

| 2023-08-08T18:52:54Z |

---

license: apache-2.0

base_model: Helsinki-NLP/opus-mt-en-rw

tags:

- translation

- generated_from_trainer

metrics:

- bleu

model-index:

- name: marian-finetuned-kde4-en-to-kin-Umuganda-Dataset

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# marian-finetuned-Umuganda-Dataset-en-to-kin-Umuganda-Dataset

This model is a fine-tuned version of [Helsinki-NLP/opus-mt-en-rw](https://huggingface.co/Helsinki-NLP/opus-mt-en-rw) on the Digital Umuganda dataset described under "Training and Evaluation Data".

It achieves the following results on the evaluation set:

- Loss: 1.8769

- Bleu: 32.8345

## Model Description

The model has been fine-tuned to perform machine translation from English to Kinyarwanda.

## Intended Uses & Limitations

The primary intended use of this model is for research purposes.

## Training and Evaluation Data

The model has been fine-tuned using the [Digital Umuganda](https://huggingface.co/datasets/DigitalUmuganda/kinyarwanda-english-machine-translation-dataset/tree/main) dataset.

The dataset was split with 90% used for training and 10% for testing.

The training data were cased, and digits were removed.
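The preparation described above (digit removal and a 90/10 split) might look roughly like the following sketch. The card does not say how the split was made, so the shuffling and the seed are assumptions, as are the helper names and the placeholder sentence pairs.

```python
import random
import re


def remove_digits(text: str) -> str:
    """Drop digit characters and collapse the whitespace left behind."""
    without_digits = re.sub(r"\d+", "", text)
    return re.sub(r"\s+", " ", without_digits).strip()


def train_test_split(pairs, test_fraction=0.1, seed=42):
    """Shuffle with a fixed seed (an assumption) and hold out `test_fraction`."""
    shuffled = list(pairs)
    random.Random(seed).shuffle(shuffled)
    n_test = round(len(shuffled) * test_fraction)
    return shuffled[n_test:], shuffled[:n_test]


# Placeholder sentence pairs, for illustration only.
pairs = [(f"sentence {i} in English", f"placeholder target {i}") for i in range(20)]
cleaned = [(remove_digits(src), remove_digits(tgt)) for src, tgt in pairs]

train, test = train_test_split(cleaned)
print(len(train), len(test))  # 18 2
```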

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 32

- eval_batch_size: 64

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3

### Training results

### Framework versions

- Transformers 4.31.0

- Pytorch 2.0.1+cu118

- Datasets 2.14.4

- Tokenizers 0.13.3

|

Shafaet02/bert-fine-tuned-cola

|

Shafaet02

| 2023-08-09T15:48:02Z | 61 | 0 |

transformers

|

[

"transformers",

"tf",

"bert",

"text-classification",

"generated_from_keras_callback",

"base_model:google-bert/bert-base-cased",

"base_model:finetune:google-bert/bert-base-cased",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2023-08-09T08:59:17Z |

---

license: apache-2.0

base_model: bert-base-cased

tags:

- generated_from_keras_callback

model-index:

- name: Shafaet02/bert-fine-tuned-cola

results: []

---

<!-- This model card has been generated automatically according to the information Keras had access to. You should

probably proofread and complete it, then remove this comment. -->

# Shafaet02/bert-fine-tuned-cola

This model is a fine-tuned version of [bert-base-cased](https://huggingface.co/bert-base-cased) on an unknown dataset.

It achieves the following results on the evaluation set:

- Train Loss: 0.2831

- Validation Loss: 0.4311

- Epoch: 1

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- optimizer: {'name': 'AdamWeightDecay', 'learning_rate': 2e-05, 'decay': 0.0, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-07, 'amsgrad': False, 'weight_decay_rate': 0.01}

- training_precision: float32

### Training results

| Train Loss | Validation Loss | Epoch |

|:----------:|:---------------:|:-----:|

| 0.4914 | 0.4282 | 0 |

| 0.2831 | 0.4311 | 1 |

### Framework versions

- Transformers 4.31.0

- TensorFlow 2.11.0

- Datasets 2.14.3

- Tokenizers 0.13.3

|

mbueno/llama2-qlora-finetunined-french

|

mbueno

| 2023-08-09T15:40:36Z | 0 | 0 |

peft

|

[

"peft",

"region:us"

] | null | 2023-08-09T15:40:28Z |

---

library_name: peft

---

## Training procedure

The following `bitsandbytes` quantization config was used during training:

- load_in_8bit: False

- load_in_4bit: True

- llm_int8_threshold: 6.0

- llm_int8_skip_modules: None

- llm_int8_enable_fp32_cpu_offload: False

- llm_int8_has_fp16_weight: False

- bnb_4bit_quant_type: nf4

- bnb_4bit_use_double_quant: False

- bnb_4bit_compute_dtype: float16
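To reuse the settings listed above, it can help to turn the bullet list into a dictionary of keyword arguments. The sketch below does that with the standard library only. The keys appear to correspond to the parameters of `transformers.BitsAndBytesConfig`, but passing the dictionary to that class is left as an untested assumption; `parse_card_config` is a hypothetical helper.

```python
import ast

# The quantization settings exactly as listed in the card.
CARD_CONFIG = """\
- load_in_8bit: False
- load_in_4bit: True
- llm_int8_threshold: 6.0
- llm_int8_skip_modules: None
- llm_int8_enable_fp32_cpu_offload: False
- llm_int8_has_fp16_weight: False
- bnb_4bit_quant_type: nf4
- bnb_4bit_use_double_quant: False
- bnb_4bit_compute_dtype: float16
"""


def parse_card_config(text: str) -> dict:
    """Parse '- key: value' lines; literals become Python values, the rest stay strings."""
    config = {}
    for line in text.splitlines():
        line = line.strip()
        if not line.startswith("- "):
            continue
        key, _, raw = line[2:].partition(":")
        raw = raw.strip()
        try:
            value = ast.literal_eval(raw)  # True, False, None, 6.0
        except (ValueError, SyntaxError):
            value = raw  # bare words such as nf4 or float16
        config[key.strip()] = value
    return config


bnb_kwargs = parse_card_config(CARD_CONFIG)
print(bnb_kwargs["load_in_4bit"], bnb_kwargs["bnb_4bit_quant_type"])  # True nf4
```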

### Framework versions

- PEFT 0.5.0.dev0

|

KingKazma/xsum_gpt2_p_tuning_500_10_3000_8_e5_s6789_v3_l5_v20

|

KingKazma

| 2023-08-09T15:37:07Z | 0 | 0 |

peft

|

[

"peft",

"region:us"

] | null | 2023-08-09T15:37:06Z |

---

library_name: peft

---

## Training procedure

### Framework versions

- PEFT 0.5.0.dev0

|

Ripo-2007/dreambooth_alfonso

|

Ripo-2007

| 2023-08-09T15:32:17Z | 4 | 1 |

diffusers

|

[

"diffusers",

"text-to-image",

"autotrain",

"base_model:stabilityai/stable-diffusion-xl-base-1.0",

"base_model:finetune:stabilityai/stable-diffusion-xl-base-1.0",

"region:us"

] |

text-to-image

| 2023-08-09T13:35:48Z |

---

base_model: stabilityai/stable-diffusion-xl-base-1.0

instance_prompt: alfonsoaraco

tags:

- text-to-image

- diffusers

- autotrain

inference: true

---

# DreamBooth trained by AutoTrain

Text encoder was not trained.

|

dkqjrm/20230809151609

|

dkqjrm

| 2023-08-09T15:30:37Z | 114 | 0 |

transformers

|

[

"transformers",

"pytorch",

"bert",

"question-answering",

"generated_from_trainer",

"dataset:squad",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] |

question-answering

| 2023-08-09T06:16:47Z |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- squad

model-index:

- name: '20230809151609'

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# 20230809151609

This model is a fine-tuned version of [bert-large-cased](https://huggingface.co/bert-large-cased) on the squad dataset.

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 11

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3.0

### Training results

### Framework versions

- Transformers 4.30.2

- Pytorch 2.0.1+cu117

- Datasets 2.12.0

- Tokenizers 0.13.3

|

Meohong/Dialect-Polyglot-12.8b-QLoRA

|

Meohong

| 2023-08-09T15:26:17Z | 0 | 0 |

peft

|

[

"peft",

"region:us"

] | null | 2023-08-09T15:26:09Z |

---

library_name: peft

---

## Training procedure

The following `bitsandbytes` quantization config was used during training:

- load_in_8bit: False

- load_in_4bit: True

- llm_int8_threshold: 6.0

- llm_int8_skip_modules: None

- llm_int8_enable_fp32_cpu_offload: False

- llm_int8_has_fp16_weight: False

- bnb_4bit_quant_type: nf4

- bnb_4bit_use_double_quant: True

- bnb_4bit_compute_dtype: bfloat16
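The effect of `bnb_4bit_use_double_quant: True` on memory can be estimated with a little arithmetic. The sketch below assumes the block sizes described in the QLoRA paper (64 weights per block, 256 constants per second-level block) and takes the parameter count of 12.8 billion from the model name; neither figure is confirmed by the card.

```python
def bits_per_parameter(double_quant: bool, block_size: int = 64,
                       second_block_size: int = 256) -> float:
    """4-bit weights plus the amortised cost of the per-block scaling constants."""
    if double_quant:
        # 8-bit first-level constants, plus one 32-bit constant per group of them.
        overhead = 8 / block_size + 32 / (block_size * second_block_size)
    else:
        # One 32-bit constant per block of weights.
        overhead = 32 / block_size
    return 4 + overhead


def weight_memory_gib(num_params: float, double_quant: bool) -> float:
    """Approximate memory for the quantized weights alone, in GiB."""
    return num_params * bits_per_parameter(double_quant) / 8 / 2**30


NUM_PARAMS = 12.8e9  # assumption: read off the model name, not stated in the card

print(bits_per_parameter(double_quant=False))
print(bits_per_parameter(double_quant=True))
print(weight_memory_gib(NUM_PARAMS, True), weight_memory_gib(NUM_PARAMS, False))
```

The estimate covers the base weights only; activations, the LoRA adapter, and optimizer state add to it.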

### Framework versions

- PEFT 0.5.0.dev0

|

felixshier/osc-01-bert-finetuned

|

felixshier

| 2023-08-09T15:24:55Z | 61 | 0 |

transformers

|

[

"transformers",

"tf",

"bert",

"text-classification",

"generated_from_keras_callback",

"base_model:google-bert/bert-base-uncased",

"base_model:finetune:google-bert/bert-base-uncased",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2023-08-09T13:35:56Z |

---

license: apache-2.0

base_model: bert-base-uncased

tags:

- generated_from_keras_callback

model-index:

- name: osc-01-bert-finetuned

results: []

---

<!-- This model card has been generated automatically according to the information Keras had access to. You should

probably proofread and complete it, then remove this comment. -->

# osc-01-bert-finetuned

This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on an unknown dataset.

It achieves the following results on the evaluation set:

- Train Loss: 0.3193

- Validation Loss: 0.7572

- Train Precision: 0.6026

- Epoch: 6

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- optimizer: {'name': 'Adam', 'weight_decay': None, 'clipnorm': None, 'global_clipnorm': None, 'clipvalue': None, 'use_ema': False, 'ema_momentum': 0.99, 'ema_overwrite_frequency': None, 'jit_compile': False, 'is_legacy_optimizer': False, 'learning_rate': {'module': 'keras.optimizers.schedules', 'class_name': 'PolynomialDecay', 'config': {'initial_learning_rate': 2e-05, 'decay_steps': 110, 'end_learning_rate': 0.0, 'power': 1.0, 'cycle': False, 'name': None}, 'registered_name': None}, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False}

- training_precision: float32
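The optimizer entry above embeds a `PolynomialDecay` schedule. With `power` 1.0 and `cycle` False it reduces to a straight line from 2e-05 down to 0.0 over 110 steps, as this plain-Python sketch of the formula shows.

```python
# Values copied from the PolynomialDecay config in the optimizer entry above.
INITIAL_LEARNING_RATE = 2e-5
DECAY_STEPS = 110
END_LEARNING_RATE = 0.0
POWER = 1.0


def polynomial_decay(step: int) -> float:
    """Learning rate at `step`; with cycle=False the step is clipped to DECAY_STEPS."""
    clipped = min(step, DECAY_STEPS)
    fraction = 1 - clipped / DECAY_STEPS
    return (INITIAL_LEARNING_RATE - END_LEARNING_RATE) * fraction ** POWER + END_LEARNING_RATE


print(polynomial_decay(0), polynomial_decay(55), polynomial_decay(110))
```

Any step beyond 110 keeps the learning rate at the end value of 0.0.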

### Training results

| Train Loss | Validation Loss | Train Precision | Epoch |

|:----------:|:---------------:|:---------------:|:-----:|

| 0.6873 | 0.6937 | 0.5147 | 0 |

| 0.6544 | 0.6854 | 0.5 | 1 |

| 0.6127 | 0.7071 | 0.5242 | 2 |

| 0.5651 | 0.6813 | 0.5591 | 3 |

| 0.5015 | 0.7012 | 0.5747 | 4 |

| 0.4006 | 0.7292 | 0.5882 | 5 |

| 0.3193 | 0.7572 | 0.6026 | 6 |

### Framework versions

- Transformers 4.31.0

- TensorFlow 2.13.0

- Datasets 2.14.4

- Tokenizers 0.13.3

|

KingKazma/xsum_gpt2_p_tuning_500_10_3000_8_e3_s6789_v3_l5_v20

|

KingKazma

| 2023-08-09T15:23:02Z | 0 | 0 |

peft

|

[

"peft",

"region:us"

] | null | 2023-08-09T15:23:01Z |

---

library_name: peft

---

## Training procedure

### Framework versions

- PEFT 0.5.0.dev0

|

haris001/attestation2nd

|

haris001

| 2023-08-09T15:20:34Z | 0 | 0 |

peft

|

[

"peft",

"region:us"

] | null | 2023-08-09T15:19:38Z |

---

library_name: peft

---

## Training procedure

The following `bitsandbytes` quantization config was used during training:

- load_in_8bit: True

- load_in_4bit: False

- llm_int8_threshold: 6.0

- llm_int8_skip_modules: None

- llm_int8_enable_fp32_cpu_offload: False

- llm_int8_has_fp16_weight: False

- bnb_4bit_quant_type: fp4

- bnb_4bit_use_double_quant: False

- bnb_4bit_compute_dtype: float32
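With `load_in_8bit: True`, the `llm_int8_threshold` of 6.0 controls which activation values are treated as outliers and kept out of the int8 path. The toy sketch below illustrates the idea on a single vector: values above the threshold are set aside, and the rest are quantized with absmax scaling. It is a simplified illustration under these assumptions, not how `bitsandbytes` is implemented, and the activation values are hypothetical.

```python
THRESHOLD = 6.0  # llm_int8_threshold from the list above


def split_outliers(values, threshold=THRESHOLD):
    """Separate entries whose magnitude exceeds the threshold from the rest."""
    outliers = {i: v for i, v in enumerate(values) if abs(v) > threshold}
    regular = {i: v for i, v in enumerate(values) if abs(v) <= threshold}
    return outliers, regular


def absmax_int8(values):
    """Scale values so the largest magnitude maps to 127, then round to integers."""
    max_abs = max((abs(v) for v in values), default=0.0)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    return [round(v / scale) for v in values], scale


# Hypothetical activations for one token.
activations = [0.5, -1.27, 8.0, 0.0]

outliers, regular = split_outliers(activations)
codes, scale = absmax_int8(list(regular.values()))
restored = [code * scale for code in codes]
print(outliers, codes, restored)
```

Keeping the large value out of the int8 path means it does not inflate the scale used for the small values.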

### Framework versions

- PEFT 0.5.0.dev0

|

imvladikon/alephbertgimmel_parashoot

|

imvladikon

| 2023-08-09T15:10:27Z | 113 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"safetensors",

"bert",

"question-answering",

"generated_from_trainer",

"he",

"dataset:imvladikon/parashoot",

"base_model:imvladikon/alephbertgimmel-base-512",

"base_model:finetune:imvladikon/alephbertgimmel-base-512",

"endpoints_compatible",

"region:us"

] |

question-answering

| 2023-08-02T07:44:16Z |

---

base_model: imvladikon/alephbertgimmel-base-512

tags:

- generated_from_trainer

datasets:

- imvladikon/parashoot

model-index:

- name: alephbertgimmel_parashoot

results: []

language:

- he

metrics:

- f1

- exact_match

pipeline_tag: question-answering

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# alephbertgimmel_parashoot

This model is a fine-tuned version of [imvladikon/alephbertgimmel-base-512](https://huggingface.co/imvladikon/alephbertgimmel-base-512) on the [imvladikon/parashoot](https://huggingface.co/datasets/imvladikon/parashoot) dataset.

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 3e-05

- train_batch_size: 4

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 5.0

### Training results

```

***** predict metrics *****

predict_samples = 1102

test_exact_match = 27.7073

test_f1 = 51.787

test_runtime = 0:00:32.05

test_samples_per_second = 34.383

test_steps_per_second = 4.306

```
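The throughput figures in the block above can be cross-checked against each other. The sketch below assumes a single device and the `eval_batch_size` of 8 from the hyperparameter list; small differences from the reported values are expected because the runtime is rounded.

```python
import math

# Values copied from the predict metrics and hyperparameters above.
PREDICT_SAMPLES = 1102
RUNTIME_SECONDS = 32.05
EVAL_BATCH_SIZE = 8  # assumes one device

steps = math.ceil(PREDICT_SAMPLES / EVAL_BATCH_SIZE)
samples_per_second = PREDICT_SAMPLES / RUNTIME_SECONDS
steps_per_second = steps / RUNTIME_SECONDS

print(steps)  # 138
print(samples_per_second, steps_per_second)
```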

### Framework versions

- Transformers 4.31.0

- Pytorch 2.0.1+cu118

- Datasets 2.14.2

- Tokenizers 0.13.3

|

KingKazma/xsum_gpt2_p_tuning_500_10_3000_8_e1_s6789_v3_l5_v20

|

KingKazma

| 2023-08-09T15:08:58Z | 0 | 0 |

peft

|

[

"peft",

"region:us"

] | null | 2023-08-09T15:08:56Z |

---

library_name: peft

---

## Training procedure

### Framework versions

- PEFT 0.5.0.dev0

|

KingKazma/xsum_gpt2_p_tuning_500_10_3000_8_e0_s6789_v3_l5_v50

|

KingKazma

| 2023-08-09T15:03:33Z | 2 | 0 |

peft

|

[

"peft",

"region:us"

] | null | 2023-08-09T15:03:32Z |

---

library_name: peft

---

## Training procedure

### Framework versions

- PEFT 0.5.0.dev0

|

Cheetor1996/Efanatika_aku_no_onna_kanbu

|

Cheetor1996

| 2023-08-09T15:02:56Z | 0 | 0 | null |

[

"art",

"en",

"license:cc-by-2.0",

"region:us"

] | null | 2023-08-09T15:00:15Z |

---

license: cc-by-2.0

language:

- en

tags:

- art

---

**Efanatika** from **Aku no onna kanbu**

- Trained with Anime (final-full-pruned) model.

- Recommended LoRA weights: 0.7+

- Recommended LoRA weight blocks: ALL, MIDD, OUTD, and OUTALL

- **Activation tag**: *efanatika*, use with pink hair, long hair, very long hair, colored skin, blue skin, yellow eyes, colored sclera, and black sclera.

|

KingKazma/xsum_gpt2_p_tuning_500_10_3000_8_e0_s6789_v3_l5_v20

|

KingKazma

| 2023-08-09T15:01:55Z | 0 | 0 |

peft

|

[

"peft",

"region:us"

] | null | 2023-08-09T15:01:55Z |

---

library_name: peft

---

## Training procedure

### Framework versions

- PEFT 0.5.0.dev0

|

leonard-pak/q-FrozenLake-v1-4x4-noSlippery

|

leonard-pak

| 2023-08-09T14:59:17Z | 0 | 0 | null |

[

"FrozenLake-v1-4x4-no_slippery",

"q-learning",

"reinforcement-learning",

"custom-implementation",

"model-index",

"region:us"

] |

reinforcement-learning

| 2023-08-09T14:58:08Z |

---

tags:

- FrozenLake-v1-4x4-no_slippery

- q-learning

- reinforcement-learning

- custom-implementation

model-index:

- name: q-FrozenLake-v1-4x4-noSlippery

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: FrozenLake-v1-4x4-no_slippery

type: FrozenLake-v1-4x4-no_slippery

metrics:

- type: mean_reward

value: 1.00 +/- 0.00

name: mean_reward

verified: false

---

# **Q-Learning** Agent playing **FrozenLake-v1**

This is a trained model of a **Q-Learning** agent playing **FrozenLake-v1**.

## Usage

model = load_from_hub(repo_id="leonard-pak/q-FrozenLake-v1-4x4-noSlippery", filename="q-learning.pkl")

# Don't forget to check if you need to add additional attributes (is_slippery=False etc)

env = gym.make(model["env_id"])
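For readers who want to see what such an agent learns, here is a minimal, self-contained sketch of tabular Q-learning on a deterministic 4x4 grid laid out like the standard non-slippery FrozenLake map. It does not use `gym` or the uploaded `q-learning.pkl`; the environment is reimplemented in a few lines, and the hyperparameters are assumptions rather than the values used for this model.

```python
import random

# 4x4 layout: S start, F frozen, H hole, G goal (assumed standard map).
GRID = ["SFFF", "FHFH", "FFFH", "HFFG"]
N_STATES, N_ACTIONS = 16, 4  # actions: 0 left, 1 down, 2 right, 3 up
MOVES = {0: (0, -1), 1: (1, 0), 2: (0, 1), 3: (-1, 0)}


def step(state, action):
    """Deterministic transition: returns (next_state, reward, done)."""
    row, col = divmod(state, 4)
    d_row, d_col = MOVES[action]
    row = min(max(row + d_row, 0), 3)
    col = min(max(col + d_col, 0), 3)
    tile = GRID[row][col]
    return row * 4 + col, float(tile == "G"), tile in "HG"


def greedy(q_row, rng):
    """Best-valued action, breaking ties at random."""
    best = max(q_row)
    return rng.choice([a for a, v in enumerate(q_row) if v == best])


def train(episodes=5000, alpha=0.7, gamma=0.95, max_steps=99, seed=0):
    """Epsilon-greedy Q-learning; all hyperparameters here are illustrative."""
    rng = random.Random(seed)
    q = [[0.0] * N_ACTIONS for _ in range(N_STATES)]
    for episode in range(episodes):
        epsilon = max(0.05, 1.0 - episode / (episodes / 2))  # linear decay
        state = 0
        for _ in range(max_steps):
            if rng.random() < epsilon:
                action = rng.randrange(N_ACTIONS)
            else:
                action = greedy(q[state], rng)
            next_state, reward, done = step(state, action)
            # Move Q(s, a) toward r + gamma * max over a' of Q(s', a').
            target = reward + gamma * max(q[next_state])
            q[state][action] += alpha * (target - q[state][action])
            state = next_state
            if done:
                break
    return q


def evaluate(q, max_steps=99, seed=0):
    """Total reward of one greedy episode from the start state."""
    rng = random.Random(seed)
    state, total = 0, 0.0
    for _ in range(max_steps):
        state, reward, done = step(state, greedy(q[state], rng))
        total += reward
        if done:
            break
    return total


q_table = train()
print(evaluate(q_table))
```

Because the environment is deterministic, a greedy policy that reaches the goal once reaches it every time, which is consistent with a reported mean reward of 1.00 +/- 0.00.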

|

LarryAIDraw/ToukaLora-15

|

LarryAIDraw

| 2023-08-09T14:58:48Z | 0 | 0 | null |

[

"license:creativeml-openrail-m",

"region:us"

] | null | 2023-08-09T14:39:49Z |

---

license: creativeml-openrail-m

---

https://civitai.com/models/125271/touka-kirishima-tokyo-ghoul-lora

|

LarryAIDraw/MiaChristoph-10

|

LarryAIDraw

| 2023-08-09T14:58:35Z | 0 | 0 | null |

[

"license:creativeml-openrail-m",

"region:us"

] | null | 2023-08-09T14:39:26Z |

---

license: creativeml-openrail-m

---

https://civitai.com/models/124748/mia-christoph-tenpuru

|

KingKazma/xsum_gpt2_p_tuning_500_10_3000_8_e-1_s6789_v3_l5_v50

|

KingKazma

| 2023-08-09T14:56:04Z | 0 | 0 |

peft

|

[

"peft",

"region:us"

] | null | 2023-08-09T14:56:03Z |

---

library_name: peft

---

## Training procedure

### Framework versions

- PEFT 0.5.0.dev0

|

liadraz/q-FrozenLake-v1-4x4-noSlippery

|

liadraz

| 2023-08-09T14:54:50Z | 0 | 0 | null |

[

"FrozenLake-v1-4x4-no_slippery",

"q-learning",

"reinforcement-learning",

"custom-implementation",

"model-index",

"region:us"

] |

reinforcement-learning

| 2023-08-09T14:54:46Z |

---

tags:

- FrozenLake-v1-4x4-no_slippery

- q-learning

- reinforcement-learning

- custom-implementation

model-index:

- name: q-FrozenLake-v1-4x4-noSlippery

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: FrozenLake-v1-4x4-no_slippery

type: FrozenLake-v1-4x4-no_slippery

metrics:

- type: mean_reward

value: 1.00 +/- 0.00

name: mean_reward

verified: false

---

# **Q-Learning** Agent playing **FrozenLake-v1**

This is a trained model of a **Q-Learning** agent playing **FrozenLake-v1**.

## Usage

```python

model = load_from_hub(repo_id="liadraz/q-FrozenLake-v1-4x4-noSlippery", filename="q-learning.pkl")

# Don't forget to check if you need to add additional attributes (is_slippery=False etc)

env = gym.make(model["env_id"])

```
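The snippet above calls `load_from_hub` without defining it; presumably it downloads `q-learning.pkl` and unpickles it into a dictionary. The sketch below shows only the local half of that round trip with the standard library. Only the `env_id` key is confirmed by the snippet; the `qtable` key, the payload values, and the helper names are assumptions.

```python
import os
import pickle
import tempfile

# Hypothetical payload: "env_id" is confirmed by the usage snippet, "qtable" is assumed.
model = {
    "env_id": "FrozenLake-v1",
    "qtable": [[0.0] * 4 for _ in range(16)],
}


def save_model(payload: dict, path: str) -> None:
    """Serialize the model dictionary to a pickle file."""
    with open(path, "wb") as handle:
        pickle.dump(payload, handle)


def load_model(path: str) -> dict:
    """Load a model dictionary back from a pickle file."""
    with open(path, "rb") as handle:
        return pickle.load(handle)


with tempfile.TemporaryDirectory() as tmp_dir:
    path = os.path.join(tmp_dir, "q-learning.pkl")
    save_model(model, path)
    restored = load_model(path)

print(restored["env_id"])  # FrozenLake-v1
```

Unpickling can execute arbitrary code, so pickle files should only be loaded from sources you trust.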

|

broAleks13/stablecode-completion-alpha-3b-4k

|

broAleks13

| 2023-08-09T14:49:26Z | 0 | 0 | null |

[

"region:us"

] | null | 2023-08-09T14:42:38Z |

---

license: apache-2.0

---

stabilityai/stablecode-completion-alpha-3b-4k

|

KingKazma/xsum_gpt2_lora_500_10_3000_8_e8_s6789_v3_l5_r4

|

KingKazma

| 2023-08-09T14:43:42Z | 0 | 0 |

peft

|

[

"peft",

"region:us"

] | null | 2023-08-09T14:43:41Z |

---

library_name: peft

---

## Training procedure

### Framework versions

- PEFT 0.5.0.dev0

|

zjoe/RLCourseppo-Huggy

|

zjoe

| 2023-08-09T14:43:19Z | 0 | 0 |

ml-agents

|

[

"ml-agents",

"tensorboard",

"onnx",

"Huggy",

"deep-reinforcement-learning",

"reinforcement-learning",

"ML-Agents-Huggy",

"region:us"

] |

reinforcement-learning

| 2023-08-09T14:43:10Z |

---

library_name: ml-agents

tags:

- Huggy

- deep-reinforcement-learning

- reinforcement-learning

- ML-Agents-Huggy

---

# **ppo** Agent playing **Huggy**

This is a trained model of a **ppo** agent playing **Huggy**

using the [Unity ML-Agents Library](https://github.com/Unity-Technologies/ml-agents).

## Usage (with ML-Agents)

The Documentation: https://unity-technologies.github.io/ml-agents/ML-Agents-Toolkit-Documentation/

We wrote a complete tutorial to learn to train your first agent using ML-Agents and publish it to the Hub:

- A *short tutorial* where you teach Huggy the Dog 🐶 to fetch the stick and then play with him directly in your

browser: https://huggingface.co/learn/deep-rl-course/unitbonus1/introduction

- A *longer tutorial* to understand how ML-Agents works:

https://huggingface.co/learn/deep-rl-course/unit5/introduction

### Resume the training

```bash

mlagents-learn <your_configuration_file_path.yaml> --run-id=<run_id> --resume

```

### Watch your Agent play

You can watch your agent **playing directly in your browser**:

1. If the environment is part of the official ML-Agents environments, go to https://huggingface.co/unity

2. Find your model_id: zjoe/RLCourseppo-Huggy

3. Select your *.nn / *.onnx file

4. Click on Watch the agent play 👀

|

HG7/ReQLoRA_QK8

|

HG7

| 2023-08-09T14:42:05Z | 4 | 0 |

peft

|

[

"peft",

"region:us"

] | null | 2023-08-09T14:41:34Z |

---

library_name: peft

---

## Training procedure

The following `bitsandbytes` quantization config was used during training:

- load_in_8bit: False

- load_in_4bit: True

- llm_int8_threshold: 6.0

- llm_int8_skip_modules: None

- llm_int8_enable_fp32_cpu_offload: False

- llm_int8_has_fp16_weight: False

- bnb_4bit_quant_type: nf4

- bnb_4bit_use_double_quant: True

- bnb_4bit_compute_dtype: bfloat16
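To reuse these settings when loading the base model, the list above can be written out as keyword arguments. This is a minimal sketch: the dictionary only mirrors the values listed in this card, and passing it to `BitsAndBytesConfig` is an assumption about how the adapter was trained, not something the card states.

```python
# Settings copied one-to-one from the list above.
quant_kwargs = {
    "load_in_8bit": False,
    "load_in_4bit": True,
    "llm_int8_threshold": 6.0,
    "llm_int8_skip_modules": None,
    "llm_int8_enable_fp32_cpu_offload": False,
    "llm_int8_has_fp16_weight": False,
    "bnb_4bit_quant_type": "nf4",
    "bnb_4bit_use_double_quant": True,
    "bnb_4bit_compute_dtype": "bfloat16",
}

# Assumption: with `transformers` and `bitsandbytes` installed, the dict can be
# unpacked as BitsAndBytesConfig(**quant_kwargs) and handed to `from_pretrained`
# through its `quantization_config` argument when loading the base model.
print(sorted(quant_kwargs))
```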

### Framework versions

- PEFT 0.5.0.dev0

|

KingKazma/xsum_gpt2_lora_500_10_3000_8_e7_s6789_v3_l5_r4

|

KingKazma

| 2023-08-09T14:36:45Z | 0 | 0 |

peft

|

[

"peft",

"region:us"

] | null | 2023-08-09T14:36:44Z |

---

library_name: peft

---

## Training procedure

### Framework versions

- PEFT 0.5.0.dev0

|

KingKazma/xsum_gpt2_lora_500_10_3000_8_e7_s6789_v3_l5_r2

|

KingKazma

| 2023-08-09T14:36:15Z | 1 | 0 |

peft

|

[

"peft",

"region:us"

] | null | 2023-08-04T16:35:18Z |

---

library_name: peft

---

## Training procedure

### Framework versions

- PEFT 0.5.0.dev0

|

dimonyara/Llama2-7b-lora-int4

|

dimonyara

| 2023-08-09T14:32:04Z | 1 | 0 |

peft

|

[

"peft",

"region:us"

] | null | 2023-08-09T14:31:58Z |

---

library_name: peft

---

## Training procedure

The following `bitsandbytes` quantization config was used during training:

- load_in_8bit: False

- load_in_4bit: True

- llm_int8_threshold: 6.0

- llm_int8_skip_modules: None

- llm_int8_enable_fp32_cpu_offload: False

- llm_int8_has_fp16_weight: False

- bnb_4bit_quant_type: nf4

- bnb_4bit_use_double_quant: True

- bnb_4bit_compute_dtype: bfloat16

### Framework versions

- PEFT 0.5.0.dev0

|

KingKazma/xsum_gpt2_lora_500_10_3000_8_e6_s6789_v3_l5_r4

|

KingKazma

| 2023-08-09T14:29:49Z | 0 | 0 |

peft

|

[

"peft",

"region:us"

] | null | 2023-08-09T14:29:48Z |

---

library_name: peft

---

## Training procedure

### Framework versions

- PEFT 0.5.0.dev0

|

dinesh44/gptdatabot

|

dinesh44

| 2023-08-09T14:28:46Z | 0 | 0 |

peft

|

[

"peft",

"region:us"

] | null | 2023-08-08T10:52:48Z |

---

library_name: peft

---

## Training procedure

The following `bitsandbytes` quantization config was used during training:

- load_in_8bit: False

- load_in_4bit: True

- llm_int8_threshold: 6.0

- llm_int8_skip_modules: None

- llm_int8_enable_fp32_cpu_offload: False

- llm_int8_has_fp16_weight: False

- bnb_4bit_quant_type: nf4

- bnb_4bit_use_double_quant: False

- bnb_4bit_compute_dtype: float16

### Framework versions

- PEFT 0.5.0.dev0

|

tolga-ozturk/mt5-base-nsp

|

tolga-ozturk

| 2023-08-09T14:27:30Z | 31 | 0 |

transformers

|

[

"transformers",

"pytorch",

"mt5",

"nsp",

"next-sentence-prediction",

"t5",

"en",

"de",

"fr",

"es",

"tr",

"dataset:wikipedia",

"arxiv:2307.07331",

"endpoints_compatible",

"region:us"

] | null | 2023-08-03T18:56:52Z |

---

language:

- en

- de

- fr

- es

- tr

tags:

- nsp

- next-sentence-prediction

- t5

- mt5

datasets:

- wikipedia

metrics:

- accuracy

---

# mT5-base-nsp

mT5-base-nsp is fine-tuned for the Next Sentence Prediction task on the [wikipedia dataset](https://huggingface.co/datasets/wikipedia) using the [google/mt5-base](https://huggingface.co/google/mt5-base) model. It was introduced in this [paper](https://arxiv.org/abs/2307.07331) and first released on this page.

## Model description

mT5-base-nsp is a Transformer-based model which was fine-tuned for the Next Sentence Prediction task on 2500 English, 2500 German, 2500 Turkish, 2500 Spanish and 2500 French Wikipedia articles.

## Intended uses

- Perform Next Sentence Prediction (compare the results with BERT models, since BERT natively supports this task)

- See how to fine-tune an mT5 model using our [code](https://github.com/slds-lmu/stereotypes-multi/tree/main)

- Check our [paper](https://arxiv.org/abs/2307.07331) to see its results

## How to use

You can use this model directly with a pipeline for next sentence prediction. Here is how to use this model in PyTorch:

### Necessary Initialization

```python
import torch
from transformers import MT5ForConditionalGeneration, MT5Tokenizer
from huggingface_hub import hf_hub_download


class ModelNSP(torch.nn.Module):
    def __init__(self, pretrained_model, tokenizer, nsp_dim=300):
        super(ModelNSP, self).__init__()
        self.zero_token, self.one_token = (self.find_label_encoding(x, tokenizer).item() for x in ["0", "1"])
        self.core_model = MT5ForConditionalGeneration.from_pretrained(pretrained_model)
        self.nsp_head = torch.nn.Sequential(torch.nn.Linear(self.core_model.config.hidden_size, nsp_dim),
                                            torch.nn.Linear(nsp_dim, nsp_dim), torch.nn.Linear(nsp_dim, 2))

    def forward(self, input_ids, attention_mask=None):
        outputs = self.core_model.generate(input_ids=input_ids, attention_mask=attention_mask, max_length=3,
                                           output_scores=True, return_dict_in_generate=True)
        logits = [torch.Tensor([score[self.zero_token], score[self.one_token]]) for score in outputs.scores[1]]
        return torch.stack(logits).softmax(dim=-1)

    @staticmethod
    def find_label_encoding(input_str, tokenizer):
        encoded_str = tokenizer.encode(input_str, add_special_tokens=False, return_tensors="pt")
        return (torch.index_select(encoded_str, 1, torch.tensor([1])) if encoded_str.size(dim=1) == 2 else encoded_str)


tokenizer = MT5Tokenizer.from_pretrained("tolga-ozturk/mT5-base-nsp")
model = torch.nn.DataParallel(ModelNSP("google/mt5-base", tokenizer).eval())
model.load_state_dict(torch.load(hf_hub_download(repo_id="tolga-ozturk/mT5-base-nsp", filename="model_weights.bin")))
```

### Inference

```python

batch_texts = [("In Italy, pizza is presented unsliced.", "The sky is blue."),

("In Italy, pizza is presented unsliced.", "However, it is served sliced in Turkey.")]

encoded_dict = tokenizer.batch_encode_plus(batch_text_or_text_pairs=batch_texts, truncation="longest_first", padding=True, return_tensors="pt", return_attention_mask=True, max_length=256)

print(torch.argmax(model(encoded_dict.input_ids, attention_mask=encoded_dict.attention_mask), dim=-1))

```

### Training Metrics

<img src="https://huggingface.co/tolga-ozturk/mt5-base-nsp/resolve/main/metrics.png">

## BibTeX entry and citation info

```bibtex
@misc{ozturk2023stereotypical,
  title={How Different Is Stereotypical Bias Across Languages?},
  author={Ibrahim Tolga Öztürk and Rostislav Nedelchev and Christian Heumann and Esteban Garces Arias and Marius Roger and Bernd Bischl and Matthias Aßenmacher},
  year={2023},
  eprint={2307.07331},
  archivePrefix={arXiv},
  primaryClass={cs.CL}
}
```

This work was done with the Ludwig-Maximilians-Universität Statistics group. Don't forget to check out [their Hugging Face page](https://huggingface.co/misoda) for other interesting work!

|

KingKazma/xsum_gpt2_lora_500_10_3000_8_e5_s6789_v3_l5_r2

|

KingKazma

| 2023-08-09T14:22:16Z | 0 | 0 |

peft

|

[

"peft",

"region:us"

] | null | 2023-08-04T16:20:48Z |

---

library_name: peft

---

## Training procedure

### Framework versions

- PEFT 0.5.0.dev0

|

Isaacgv/speecht5_finetuned_voxpopuli_nl

|

Isaacgv

| 2023-08-09T14:16:54Z | 85 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"speecht5",

"text-to-audio",

"text-to-speech",

"generated_from_trainer",

"nl",

"dataset:facebook/voxpopuli",

"base_model:microsoft/speecht5_tts",

"base_model:finetune:microsoft/speecht5_tts",

"license:mit",

"endpoints_compatible",

"region:us"

] |

text-to-speech

| 2023-08-09T13:15:24Z |

---

language:

- nl

license: mit

base_model: microsoft/speecht5_tts

tags:

- text-to-speech

- generated_from_trainer

datasets:

- facebook/voxpopuli

model-index:

- name: SpeechT5 TTS Dutch

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# SpeechT5 TTS Dutch

This model is a fine-tuned version of [microsoft/speecht5_tts](https://huggingface.co/microsoft/speecht5_tts) on the VoxPopuli dataset.

It achieves the following results on the evaluation set:

- Loss: 0.4850

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 1e-05

- train_batch_size: 4

- eval_batch_size: 2

- seed: 42

- gradient_accumulation_steps: 8

- total_train_batch_size: 32

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_steps: 500

- training_steps: 1000
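Two of the values above follow from the others: the total batch size is the per-device batch size times the gradient accumulation steps, and a linear scheduler with warmup ramps the learning rate up and then back down. The sketch below works through both. The schedule formula (linear warmup from zero, then linear decay to zero) is an assumption about what `lr_scheduler_type: linear` means here, and `linear_schedule_lr` is a hypothetical helper, not part of the Trainer.

```python
def linear_schedule_lr(step, base_lr=1e-05, warmup_steps=500, total_steps=1000):
    """Learning rate at `step` for linear warmup followed by linear decay to zero."""
    if step < warmup_steps:
        return base_lr * step / warmup_steps
    remaining = (total_steps - step) / (total_steps - warmup_steps)
    return base_lr * max(0.0, remaining)


# train_batch_size * gradient_accumulation_steps
effective_batch = 4 * 8

lrs = {step: linear_schedule_lr(step) for step in (0, 250, 500, 750, 1000)}
print(effective_batch, lrs)
```

With 500 warmup steps out of 1000 training steps, the peak learning rate of 1e-05 is reached only at the halfway point under this assumed schedule.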

### Training results

| Training Loss | Epoch | Step | Validation Loss |
|:-------------:|:-----:|:----:|:---------------:|
| 0.5504        | 2.15  | 500  | 0.5040          |
| 0.5297        | 4.3   | 1000 | 0.4850          |

### Framework versions

- Transformers 4.31.0

- Pytorch 2.0.1+cu118

- Datasets 2.14.4

- Tokenizers 0.13.3

|

KingKazma/xsum_gpt2_lora_500_10_3000_8_e4_s6789_v3_l5_r4

|

KingKazma

| 2023-08-09T14:15:56Z | 0 | 0 |

peft

|

[

"peft",

"region:us"

] | null | 2023-08-09T14:15:55Z |

---

library_name: peft

---

## Training procedure

### Framework versions

- PEFT 0.5.0.dev0

|

KingKazma/xsum_gpt2_lora_500_10_3000_8_e3_s6789_v3_l5_r4

|

KingKazma

| 2023-08-09T14:09:00Z | 0 | 0 |

peft

|

[

"peft",

"region:us"

] | null | 2023-08-09T14:08:59Z |

---

library_name: peft

---

## Training procedure

### Framework versions

- PEFT 0.5.0.dev0

|

KingKazma/xsum_gpt2_lora_500_10_3000_8_e3_s6789_v3_l5_r2

|

KingKazma

| 2023-08-09T14:08:16Z | 1 | 0 |

peft

|

[

"peft",

"region:us"

] | null | 2023-08-04T16:06:19Z |

---

library_name: peft

---

## Training procedure

### Framework versions

- PEFT 0.5.0.dev0

|

Against61/llama2-qlora-finetunined-CHT

|

Against61

| 2023-08-09T14:06:35Z | 4 | 0 |

peft

|

[

"peft",

"region:us"

] | null | 2023-08-09T14:06:18Z |

---

library_name: peft

---

## Training procedure

The following `bitsandbytes` quantization config was used during training:

- load_in_8bit: False

- load_in_4bit: True

- llm_int8_threshold: 6.0

- llm_int8_skip_modules: None

- llm_int8_enable_fp32_cpu_offload: False

- llm_int8_has_fp16_weight: False

- bnb_4bit_quant_type: nf4

- bnb_4bit_use_double_quant: False

- bnb_4bit_compute_dtype: float16

### Framework versions

- PEFT 0.5.0.dev0

|

KingKazma/xsum_gpt2_lora_500_10_3000_8_e2_s6789_v3_l5_r4

|

KingKazma

| 2023-08-09T14:02:03Z | 0 | 0 |

peft

|

[

"peft",

"region:us"

] | null | 2023-08-09T14:02:02Z |

---

library_name: peft

---

## Training procedure

### Framework versions

- PEFT 0.5.0.dev0

|

KingKazma/xsum_gpt2_lora_500_10_3000_8_e2_s6789_v3_l5_r2

|

KingKazma

| 2023-08-09T14:01:16Z | 0 | 0 |

peft

|

[

"peft",

"region:us"

] | null | 2023-08-04T15:59:05Z |

---

library_name: peft

---

## Training procedure

### Framework versions

- PEFT 0.5.0.dev0

|

nevoit/Song-Lyrics-Generator

|

nevoit

| 2023-08-09T13:58:24Z | 0 | 2 |

keras

|

[

"keras",

"music",

"text-generation",

"region:us"

] |

text-generation

| 2023-08-09T13:53:28Z |

---

library_name: keras

pipeline_tag: text-generation

tags:

- music

---

# The purpose

A Recurrent Neural Network that learns song lyrics and their melodies and then, given a melody and a few words to start with, predicts the rest of the song. It does this by generating new words for the song while trying to stay as “close” as possible to the original lyrics. Closeness is subjective, so evaluating the generated words calls for some imaginative methods. For the training phase, however, we used cross-entropy loss.

## Table of Contents

* [Authors](#authors)

* [Introduction](#introduction)

* [Instructions](#instructions)

* [Dataset Analysis](#dataset-analysis)

* [Code Design](#code-design)

* [Melody Feature Integration](#melody-feature-integration)

* [Architecture](#architecture)

* [Results Evaluation](#results-evaluation)

* [Full Experimental Setup](#full-experimental-setup)

* [Analysis of how the Seed and Melody Effects the Generated Lyrics](#analysis-of-how-the-seed-and-melody-effects-the-generated-lyrics)

## Authors

* **Tomer Shahar** - [Tomer Shahar](https://github.com/Tomer-Shahar)

* **Nevo Itzhak** - [Nevo Itzhak](https://github.com/nevoit)

## Introduction

In this assignment, we were tasked with creating a Recurrent Neural Network that can learn song lyrics and their melodies and then, given a melody and a few words to start with, predict the rest of the song. This is essentially done by generating new words for the song and attempting to be as “close” as possible to the original lyrics. Closeness is quite subjective, so we had to use imaginative methods to evaluate the generated words. For the training phase, we used cross-entropy loss.

The melody files and lyrics for each song were given to us and the train / test sets were predefined. 20% of the training data was used as a validation set in order to track our progress between training iterations.

We implemented this using an LSTM network. LSTMs have proven in the past to be successful in similar tasks because of their ability to remember previous data, which in our case is relevant because each lyric depends on the words (and melody) that preceded it.

The network receives as input a sequence of lyrics and predicts the next word to appear. The length of this sequence greatly affects the network’s predicting abilities since 5 words in a row work much better than just a single word. We tried using different values to see how this changes the accuracy of the model. During the training phase, sequences from the actual lyrics are fed into the network to train. After fitting the model, we can generate the lyrics for a whole song by beginning with an initial “seed” which is a sequence of words, predicting a word and then using it to advance the sequence like a moving window.
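The moving-window generation described above can be sketched as follows. `predict_next` stands in for the trained network and `TOY_NEXT` is a hypothetical lookup table used only to make the example runnable; neither name comes from the project's code.

```python
def generate_lyrics(seed_words, predict_next, num_words):
    """Extend `seed_words` one word at a time using a fixed-length sliding window."""
    window = list(seed_words)
    lyrics = list(seed_words)
    for _ in range(num_words):
        next_word = predict_next(window)
        lyrics.append(next_word)
        # Drop the oldest word and append the new one, keeping the window length fixed.
        window = window[1:] + [next_word]
    return lyrics


# Hypothetical stand-in for the LSTM: looks only at the last word of the window.
TOY_NEXT = {"eyes": "and", "and": "dream", "dream": "of", "of": "eyes"}


def toy_predict_next(window):
    return TOY_NEXT[window[-1]]


song = generate_lyrics(["close", "your", "eyes"], toy_predict_next, num_words=5)
print(" ".join(song))
```

In the real model, `predict_next` would return a word sampled from the network's output distribution rather than a fixed lookup.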

## Instructions

1. Please download the following:

* A .zip file containing all the MIDI files of the participating songs

* The .csv file with all the lyrics of the participating songs (600 train and 5 test)

* [Pretty_Midi](https://nbviewer.jupyter.org/github/craffel/pretty-midi/blob/master/Tutorial.ipynb) , a python library for the analysis of MIDI files

2. Implement a recurrent neural net (LSTM or GRU) to carry out the task described in the introduction.

* During each step of the training phase, your architecture will receive as input one word of the lyrics. Words are to be represented using the Word2Vec representation that can be found online (300 entries per term, as learned in class).

* The task of the network is to predict the next word of the song’s lyrics. Please see Figure 1 for an illustration. You may use any loss function.

* In addition to this textual information, you need to include information extracted from the MIDI file. The method for implementing this requirement is entirely up to your consideration. Figure 1 shows one of the more simplistic options – inserting the entire melody representation at each step.

* Note that your mechanism for selecting the next word should not be deterministic (i.e., always select the word with the highest probability) but rather be sampling-based. The likelihood of a term to be selected by the sampling should be proportional to its probability.

* You may add whatever additions you want to the architecture (e.g., regularization, attention, teacher forcing)

* You may create a validation set. The manner of splitting (and all related decisions) are up to you.

3. The Pretty_Midi package offers multiple options for analyzing .mid files.

Figures 2-4 demonstrate the types of information that can be gathered.

4. You can add whatever other information you consider relevant to further improve the performance of your model.

5. You are to evaluate two approaches for integrating the melody information into your model. The two approaches don’t have to be completely different (one can build upon the other, for example), but please refrain from making only minor changes.

6. Please include the following information in your report regarding the training phase:

* The chosen architecture of your model

* A clear description of your approach(s) for integrating the melody information together with the lyrics

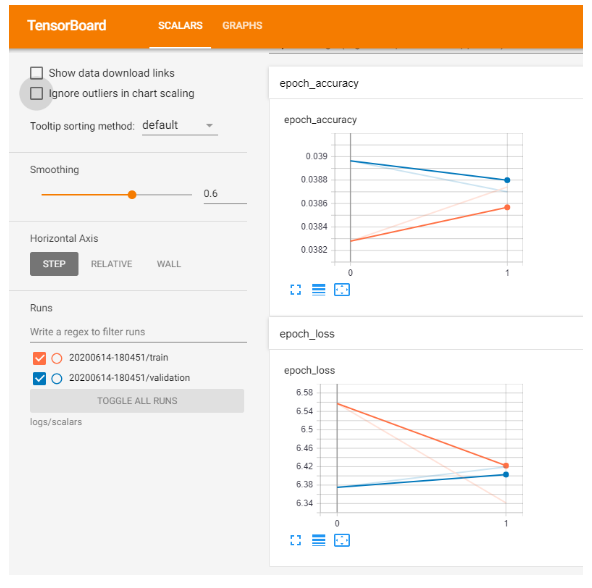

* TensorBoard graphs showing the training and validation loss of your model.

7. Please include the following information in your report regarding the test phase:

* For each of the melodies in the test set, produce the outputs (lyrics) for each of the two architectural variants you developed. The input should be the melody and the initial word of the output lyrics. Include all generated lyrics in your submission.

* For each melody, repeat the process described above three times, with different words (the same words should be used for all melodies).

* Attempt to analyze the effect of the selection of the first word and/or melody on the generated lyrics.

## Dataset Analysis

- 600 song lyrics for the training

- 5 songs for the test set.

- Midi files for each song containing just the song's melody.

- Song lyrics features:

- The length of a song is the number of words in the lyrics that are also present in the word2vec data.

- For the training set:

- Minimal song length: 3 words (Perhaps a hip hop song with lots of slang)

- Maximal song length: 1338

- Average song length: 257.37

- For the test set:

- Minimal song length: 94 words

- Maximal song length: 389

- Average song length: 231.6
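The song-length definition above (only words that have an embedding are counted) can be sketched as a simple filter. Lowercasing and whitespace tokenization are assumptions of this sketch, and `toy_vocab` is a made-up vocabulary rather than the real embedding file.

```python
def song_length(lyrics, vocabulary):
    """Count the whitespace-separated tokens of `lyrics` that have an embedding."""
    return sum(1 for token in lyrics.lower().split() if token in vocabulary)


# Hypothetical vocabulary; in the project this would be the set of words
# found in the embeddings file.
toy_vocab = {"close", "your", "eyes", "give", "me", "hand"}

length = song_length("Close your eyes gimme your hand", toy_vocab)
print(length)
```

Here "gimme" is not in the vocabulary, so it does not count toward the length, which is how a slang-heavy song can end up with a very small word count.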

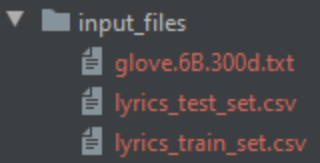

**Input Files:**

A screenshot of the input folder

You need to put files in two folders: input_files and midi_files. The other folders are generated automatically.

Inside input_files put the glove 6B 300d file and the training and testing set:

An example of the glove file:

An example for lyrics_train_set.csv (columns: artist, song name and lyrics):

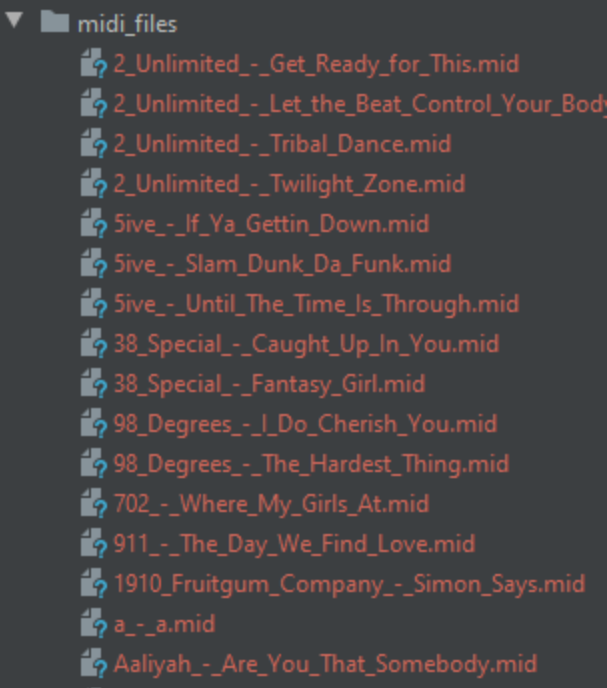

Inside the folder midi_files put the midi files:

## Code Design

Our code consists of seven scripts:

1. Experiment.py - the script that runs the experiments to find the optimal parameters for our LSTM network.

2. Data_loader.py - Loads the midi files, the lyrics, fixes irregularities and cleans the song file names, loads the word embeddings file, saves and loads the various .pkl files.

3. Prepare_data.py - Performs various helper functions on the data such as splitting it properly, creating a validation set and creating the word embeddings matrix.

4. Compute_score.py - Because of the nature of this task, it is difficult to judge the success of our model based on classic loss functions such as MSE. This script therefore contains several different methods to automatically score the output of our model, such as measuring the cosine similarity or the subjectivity of the lyrics. These are explained in more detail later.

5. Extract_melodies_features - Extracts the features we want from the midi files and splits them into train / test / validation. Explained more later.

6. Lstm_lyrics.py - The first LSTM model. This one only takes into account the lyrics of the song. This is used for comparison to see the improvement of using melodies.

7. Lstm_melodies_lyrics.py - The second LSTM model. This one incorporates the features of the midi files of each song. More on this later.

## Melody Feature Integration

We devised two different methods to extract features from the melodies. One is a more naive technique, and the other is a more sophisticated approach that extends the first.

**Method #1**: Each midi file contains a list of all instruments used in the file. For each instrument, an Instrument object contains a list of all time periods this instrument was used, the pitch used (the note) and velocity (how strong the note was played) as you can see in figure 1.

Figure 1: The data available for each instrument of the midi file

The midi file contains the length of the melody, and we know the number of words in the lyrics, so we can easily approximate how many seconds each word lasts on average. Based on this, we assign each word a time span and can deduce which instruments were played during that word and how strongly. If a word appears during times 15.2 - 15.8, we can search through the instrument objects for the ones that appeared during that time frame.

Using this data, we can compute how many instruments were used, their average pitch and average velocity per word. This provides the network some information about the nature of the song during this lyric, i.e. a low or high pitch and how high the velocity is.

In addition, we can easily use the function get_beats() of pretty midi to find all the beat changes in the song and their times. We simply count the number of beat changes during the word’s time frame and thus add another feature for our network.
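The per-word features of Method #1 can be sketched in plain Python as below. Notes are represented as `(start, end, pitch, velocity)` tuples and beats as a list of times; in practice these would come from the pretty_midi instrument objects and `get_beats()`. The function name, the tuple layout and the toy data are assumptions of this sketch, not the project's actual code.

```python
def word_melody_features(instruments, beats, song_seconds, num_words):
    """Per word: (active instruments, mean pitch, mean velocity, beat count)."""
    span = song_seconds / num_words  # average number of seconds per word
    features = []
    for i in range(num_words):
        t0, t1 = i * span, (i + 1) * span
        active, pitches, velocities = 0, [], []
        for notes in instruments:
            # A note counts if it overlaps the word's time span.
            hits = [n for n in notes if n[0] < t1 and n[1] > t0]
            if hits:
                active += 1
                pitches += [n[2] for n in hits]
                velocities += [n[3] for n in hits]
        mean_pitch = sum(pitches) / len(pitches) if pitches else 0.0
        mean_velocity = sum(velocities) / len(velocities) if velocities else 0.0
        beat_count = sum(1 for b in beats if t0 <= b < t1)
        features.append((active, mean_pitch, mean_velocity, beat_count))
    return features


# Toy data: two instruments, a 2-second melody and 2 words.
piano = [(0.0, 1.0, 60, 80), (1.0, 2.0, 64, 100)]
bass = [(0.5, 1.5, 40, 60)]
feats = word_melody_features([piano, bass], beats=[0.0, 0.5, 1.0, 1.5],
                             song_seconds=2.0, num_words=2)
print(feats)
```

Because every word gets the same time span, this is only an approximation of when each word is actually sung, as the text above notes.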

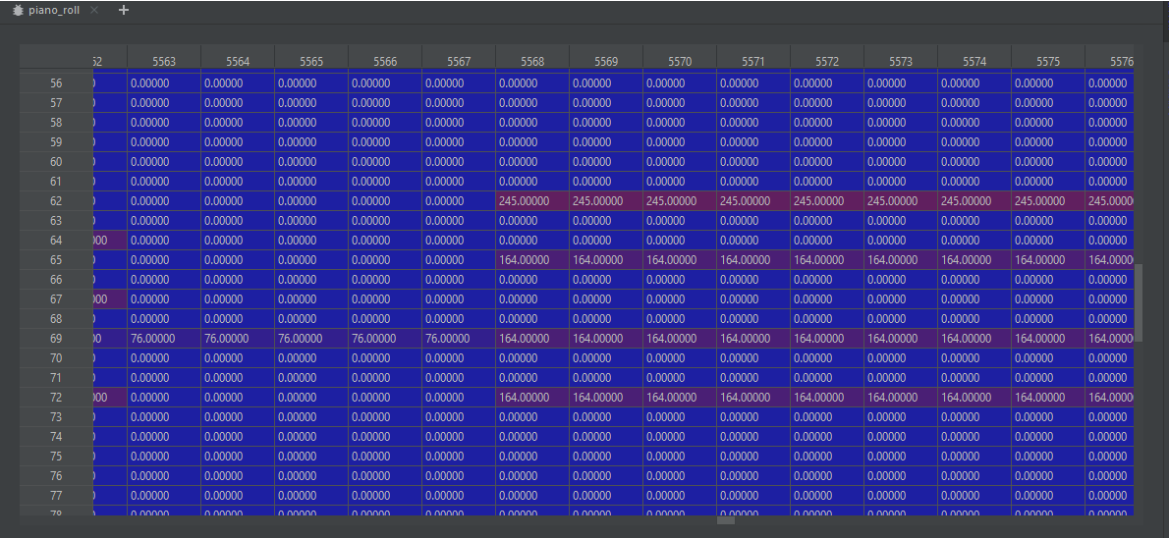

**Method #2**: With the first method we have the average pitch used for each word. Now, we want a more precise measurement. Each pretty_midi object has a function `get_piano_roll(fs)` which returns a matrix that represents the notes used in the midi file on a near-continuous time scale (see Figure 2). Specifically, it returns an array of size 128 × S, where S equals the length of the song (i.e., the time of the last note played) multiplied by the number of samples taken per second, denoted by the parameter fs. For example, with fs=10 a sample is taken every 1/10 of a second, meaning 10 samples per second, so a song of 120 seconds yields 1200 samples. Thus `get_piano_roll(fs=10)` returns a matrix of size 128x1200. This lets us control the granularity of the data with ease.

Figure 2: Piano roll matrix. The value in each cell is the velocity summed across instruments.

The reason for the 128 is that musical pitch has a possible range of 0 to 127. So each column in this matrix represents the notes played during this sample (in our example, the notes played every 100 milliseconds).

After creating this matrix, we can calculate how many notes are played, on average, per word. For example, if there are 2000 columns and a song has 50 words, it means that each word in the lyrics can be connected to about 40 notes. This is not precise of course, but a useful approximation.

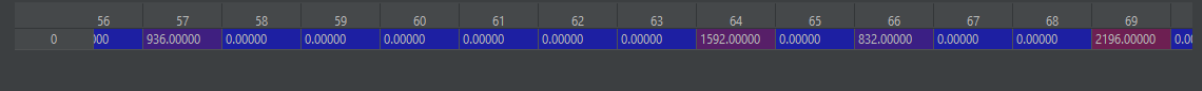

Figure 3: Notes played during a specific word in a song. Here each lyric received 40 notes representing it (columns 10-39 not shown). There are still 128 rows for each possible note.

We then iterate over every word in the song’s lyrics and find the notes that were played during that particular lyric. For example, in Figure 3 we can see that for a certain word, notes number 57, 64 and 69 were played.

Figure 4: The sum of the notes played during a specific word.

Finally, for each lyric-specific matrix, we sum each row to easily see what notes were played and how much. In figure 4, we can see the result of summing the matrix presented in figure 3. This is fed together with the array of word embeddings of each word in the sequence, thus attaching melody features to word features.
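The column-splitting and row-summing steps of Method #2 can be sketched as follows. The piano roll is modelled as a plain list of 128 rows so the example runs without any dependencies; `notes_per_word` is a hypothetical helper name and the toy roll is made up.

```python
def notes_per_word(piano_roll, num_words):
    """Split the roll's columns evenly across words and sum each pitch row."""
    num_samples = len(piano_roll[0])
    cols_per_word = num_samples // num_words  # e.g. 2000 // 50 == 40
    per_word = []
    for w in range(num_words):
        lo, hi = w * cols_per_word, (w + 1) * cols_per_word
        per_word.append([sum(row[lo:hi]) for row in piano_roll])
    return per_word


# Toy roll: 128 pitches x 8 samples. Pitch 57 sounds during the first half,
# pitch 64 during the second half.
roll = [[0] * 8 for _ in range(128)]
for t in range(4):
    roll[57][t] = 90
for t in range(4, 8):
    roll[64][t] = 70

vectors = notes_per_word(roll, num_words=2)
print(vectors[0][57], vectors[1][64])
```

Each word ends up with one 128-entry vector, which is what gets concatenated with the word embedding. Integer division drops any leftover columns at the end of the song, a simplification of this sketch.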

## Architecture

We used a fairly standard approach to a bidirectional LSTM network, with the addition of allowing it to receive as input both an embedding vector and the melody features. We also created an LSTM network that doesn’t receive melodies just to study the impact of melody on the results.

Number of layers: Both versions receive as input a sequence of lyrics. Then there is an embedding layer after the input that uses the word2vec dictionary to convert each word to the appropriate vector representing it. The difference between the networks is that the one using the melodies has a concatenating layer that appends the vectors of lyrics to the vector of melodies.

Additionally, we tried feeding the network various sequence lengths: 1, 5 and 10. We wanted to see how much the sequence length affects the results.

In addition to the piano roll matrix we keep the features extracted in method 1.

- Layers 3 & 4 are only for the model that uses the melody features.

- Since RNNs have to receive input of fixed length, we use masking to ensure that the input is the same size each time.

- We simply concatenate all of the features and feed it into the LSTM to utilize the melody features. However, the features entered vary greatly between our two approaches.

- We used a relatively high dropout rate of 60% since we don’t want the network to converge too quickly and overfit on the training data. We tried lower values initially and found more success with 60%.

- The input of the final layer depends on the number of units in the Bidirectional LSTM.

- The final output is a probability for each word, and we sample one from there according to the distribution.
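Sampling the next word in proportion to its probability, as the last point describes, can be sketched with an inverse-CDF draw. The three-word vocabulary and its probabilities are made up for illustration, and `sample_word` is a hypothetical helper rather than the project's function.

```python
import random


def sample_word(words, probabilities, rng):
    """Draw one word with likelihood proportional to its probability."""
    threshold = rng.random() * sum(probabilities)
    cumulative = 0.0
    for word, p in zip(words, probabilities):
        cumulative += p
        if threshold < cumulative:
            return word
    return words[-1]  # guard against floating-point round-off


rng = random.Random(0)  # fixed seed so the run is repeatable
words = ["love", "night", "you"]
probs = [0.6, 0.3, 0.1]

draws = [sample_word(words, probs, rng) for _ in range(10000)]
share_love = draws.count("love") / len(draws)
print(round(share_love, 2))
```

Unlike always taking the most probable word, this keeps low-probability words reachable, so the generated lyrics differ from run to run.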

Tensorboard Graph:

**Stopping criteria:**

Here we also experimented with several parameters. We used the EarlyStopping callback monitoring the validation loss with a minimum delta of 0.1 (the minimum change in the monitored quantity that qualifies as an improvement; an absolute change of less than min_delta counts as no improvement) and a patience of 0 (the number of epochs with no improvement after which training is stopped). We experimented with several values and found the most success with these.
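To make the effect of these two settings concrete, the sketch below simulates the stopping rule on a list of validation losses. It is a simplified model of the behaviour described above, not the callback's actual implementation, and the loss values are invented.

```python
def stopping_epoch(val_losses, min_delta=0.1, patience=0):
    """Return the 1-based epoch at which training would stop."""
    best = float("inf")
    wait = 0
    for epoch, loss in enumerate(val_losses, start=1):
        if loss < best - min_delta:  # must improve by more than min_delta
            best, wait = loss, 0
        else:
            wait += 1
            if wait >= patience:
                return epoch
    return len(val_losses)


# Hypothetical validation losses: the third epoch improves by only 0.05.
stop = stopping_epoch([6.2, 5.9, 5.85, 5.6])
print(stop)
```

With a patience of 0, the first epoch whose improvement falls short of 0.1 ends training, even if a later epoch would have improved again.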

**Network Hyper-Parameters Tuning:**

NOTE: Here we explain the reasons behind the choices of the parameters.

After implementing our RNN, we optimized the different parameters used. For some parameters, like the number of units in an LSTM, it is very hard to predict what will work best, so trying values empirically is the best way to find good ones.

Each combination takes a long time to train (5-15 minutes):

- Learning Rate: We tried different values, ranging from 0.1 to 0.00001. After running numerous experiments, we found 0.00001 to work the best.

- Epochs: We tried epochs of 5, 10 and 150. We found 10 to work the best.

- Batch size: We tried 32 and 2048. 32 worked better.

- Units in LSTM: 64 and 256

- We tried all possible combinations of the parameters detailed above. This led to a huge number of experiments, but it let us find the optimal settings, which were used in the section below.

## Results Evaluation

In this assignment, we were asked to generate lyrics for the 5 songs in the test set. One way to evaluate the results is simply to count how often our model predicted the word that was actually used in the song. However, this is not a good way to evaluate the model: if it generated a word that was very similar to the original, simple accuracy wouldn’t detect that. Note that we let our model predict exactly the same number of words as in the original song. We devised a few methods to judge our model’s lyrical capabilities:

1. **Cosine Similarity**: this is a general method to compare the similarity of two vectors. So if our model predicted “happy”, and the original lyrics had the word “smile”, we take the vector of each word from the embedding matrix and calculate the cosine similarity, 1 being the best and 0 the worst. There are a few variations of this, however:

   * Comparing each word predicted to the word in the song - the most straightforward method. If a song has 200 words we will perform 200 comparisons according to the index of each word.

   * Creating n-grams of the lyrics, calculating the average of each n-gram and then comparing the n-grams according to their order. This method is a bit better in our opinion, since if the model predicted words (“A”, “B”) and they appeared as (“B”, “A”) in the song, an n-gram style similarity will determine that this was a good prediction while a unigram style won’t. So we tried with 1, 2, 3 and 5-grams.

2. **Polarity**: Using the TextBlob package, we computed the polarity of the generated lyrics and the original ones. Polarity is a score ranging from -1 to 1, -1 representing a negative sentence and 1 representing a positive one. We checked whether the lyrics carry more or less the same feelings and themes. We present in the results the absolute difference between them, meaning that a polarity difference of 0 means the lyrics have similar sentiments.

3. **Subjectivity**: Again drawing from TextBlob, subjectivity is a measure of how subjective a sentence is, 0 being very objective and 1 being very subjective. We calculate the absolute difference between the generated lyrics and the original lyrics.
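The n-gram cosine similarity can be sketched as below, using two-dimensional toy vectors in place of the 300-dimensional embeddings. Using overlapping (sliding) n-grams is an assumption of this sketch, since the text does not say whether the n-grams overlap, and the function names are hypothetical.

```python
import math


def cosine(u, v):
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def ngram_similarity(predicted, original, n):
    """Mean cosine similarity between the averaged n-grams of two vector sequences."""
    def means(vectors):
        grams = [vectors[i:i + n] for i in range(len(vectors) - n + 1)]
        return [[sum(col) / n for col in zip(*gram)] for gram in grams]

    pairs = list(zip(means(predicted), means(original)))
    if not pairs:
        return 0.0
    return sum(cosine(p, o) for p, o in pairs) / len(pairs)


# Toy embeddings for words "A" and "B"; the model predicts (A, B), the song has (B, A).
A, B = [1.0, 0.0], [0.0, 1.0]
unigram = ngram_similarity([A, B], [B, A], n=1)
bigram = ngram_similarity([A, B], [B, A], n=2)
print(unigram, bigram)
```

The swapped pair scores 0 word by word but close to 1 as a 2-gram, which is the order-insensitivity the text describes. It also hints at why larger n tends to produce higher scores: averaging more vectors pulls every n-gram toward a common mean.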

Note: In the final section where we predict song lyrics, we tried with different seeds as requested. With a sequence length of S, we take the first S words (i.e, words #1, #2, ..#S) and predict the rest of the song. We then skip the first S words and take words S+1 until 2S. Then we skip the first 2S words and use words 2S+1 until 3S.

Example with Sequence Length of 3:

Seed 1, seed 2 and seed 3 -
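The seed selection described in the note above can be sketched as slicing consecutive, non-overlapping windows from the start of the original lyrics. `seed_windows` is a hypothetical helper, and the placeholder words `w1` to `w9` stand for words #1 to #9 of a song.

```python
def seed_windows(words, seq_len, num_seeds=3):
    """Return the first `num_seeds` consecutive windows of `seq_len` words."""
    return [words[i * seq_len:(i + 1) * seq_len] for i in range(num_seeds)]


lyrics = ["w1", "w2", "w3", "w4", "w5", "w6", "w7", "w8", "w9"]

seeds_len3 = seed_windows(lyrics, seq_len=3)
seeds_len1 = seed_windows(lyrics, seq_len=1)
print(seeds_len3)
print(seeds_len1)
```

With a sequence length of 1 the three seeds are simply the first three words of the song.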

## Full Experimental Setup

Validation Set: Empirically, we learned that using a validation set is better than not if there isn’t enough data. We used the fairly standard 80/20 ratio between training and validation which worked well.

- Batch sizes - 32

- Epochs - 5

- Learning rate: 0.01

- Min delta for improvement: 0.1

- 256 units in the LSTM layer

Additionally, we tried feeding the network various sequence lengths of 1 and 5 to study the effect on the quality of the results.

**Experimental Results:**

The best results are in bold -

**Analysis**: Contrary to our expectations, the model with simpler features worked better in almost all cases, perhaps due to Occam’s Razor. We theorize that the features about the instruments provided a good abstraction of the features of the entire piano roll.

However, it is clear that adding some melody features to the model improved it on all parameters (except subjectivity). Additionally, having a sequence length of 5 has mixed results and doesn’t seem to have much of an impact on the evaluation methods we chose. We will look into this manually in the next section.

An interesting point is that for all cosine similarity evaluations, an increased n gave a higher similarity. We are not sure why this happens, but we think that with greater values of n the “average” word is more similar. We tested the cosine similarity where n={length of song}, and indeed the similarity was over 0.9. We then tested with a random choice of words and all of the words in a song (i.e., the average vector of the whole song), and the cosine similarity was a staggering 0.75.

**Generated Lyrics:**

For brevity’s sake, we’ll only show both models with a sequence length of 1 and the advanced model with a sequence length of 5.

**Model with simple melody features - sequence length 1**

A screenshot from the TensorBoard framework:

1. **Lyrics for the bangles - eternal flame**

**Seed text: close**

**close** feelin dreams baby that cause like friends im have day cool let be their would your wit ignorance such forgiven oh may doll nothing down i now around suddenly ball have empty that beautiful how you lonely no goes gone you are called of for wanted me life of stress apart say i all way, required 55 words

**Seed text: your**

**your** gentle i were remember how swear she neither too girl out through with more love me me eyes said have i used heartache hmm anymore desire fighting she when stay be part lights spend by bite again say try ruining slide lover i eyes get always honey of maybe to it hope its white i, required 55 words

**Seed text: eyes**

**eyes** and walk the night woah not live you his world more when just wakes you you fans me to it son sleeping you up i that it da we me let the i longing my do maybe warm fought a believe guys the hear blind dont your through this a down what tell gonna oh, required 55 words

2. **Lyrics for billy joel - honesty**

**Seed text: if**

**if** do hell your as you hard so the be of mable we love do fat give about em with if show you me its of some can top tell if like over baby an the out that a right get as their leaves are oh come happy joy fight me thief give i goodbye sharing like hey all it last you open right i to tonight wake be shift i sister no i on got years wear to make show dont learn be you the live from outer jump drag the myself face shes raps, required 95 words

**Seed text: you**

**you** sherry really but take my girl you and its kick knew so or the a tuya love no how love have of the me there the like its if i winter see reason baa i have would want im high him dancin ever but worked wanna the i mean the you when ill say get well leave up just actor that shit now do the chaka over dead got better to no my the imitating me and my can here and itself footsteps to like leave looked are phone for will will keep my mind, required 95 words

**Seed text: search**

**search** it so class und any you and that friends cried day whoa fine the i three the in the you lovin its a and said hall way others let night hey beautiful dreams dishes save beer store evil back summer yeah forget when well both strong said you me way your the repeat jolly im what told the really to love huh the you baby go river get id and uranus what around with the down and you would always i heart dont with once go land mind come still so to them one else, required 95 words

3. **Lyrics for cardigans - lovefool**

**Seed text: dear**

**dear** to pick tears slide low live such ill yourself me deep out crazy never kick i the belongs get others shelter before her it i wasnt survive ring off baby im to want life ho hanging if i each high you out mine you won rang woman i the do you we you certain guy the jesus my my much flame to you just you world pretty me to dont fault to ear know see love guide, required 77 words

**Seed text: i**

**i** dumb look me kit i ive and clothes type meet all of didnt love baby the to you i the baby heart these and up look i out just family the what baby theyre all my love down sittin money be from something stars out no while now your got guide and time some was my you off would you is na man he and remember down hes best in hand be shotgun to leaves the that, required 77 words

**Seed text: fear**

**fear** at ive no i your be friend kill thats you years im so right your hurts a if love ill night ever feel what his like ride behind love but man a going can good and gone do see if have name all turn the is start the about you down breaking you at the lady did hard call you the about threatening ass thing together in fall love i they its a up drop youre out, required 77 words

4. **Lyrics for aqua - barbie girl**

**Seed text: hiya**

**hiya** put there to copa out kick when sad when it my cars girl the with in i me the some a around eyes stay cause be clock we never still cant missed anytime motion quiet ive go hot it on the a you had and sign live tennessee no fools got so i father hope for never for you the just it there me my believe other oh red your dont dream the drives the they chorus the happy crosses they to i i because if won this i want didnt ask the, required 93 words

**Seed text: barbie**

**barbie** me go to country smiling all now from love she my is this world not that in to though i beat your be bad new hard cant pretty to wont to round do things without try it walking of ill things in man love a hands were for well you to no chuckie gonna i wish done arms tell lets it beat waiting found we good man write i nigga at do never you it ooh try are attention yeah oh hurt that too without roll yourself with the you feeling switch dont, required 93 words

**Seed text: hi**

**hi** this feeling gotta that alone im do she sweet and you ever you the in had the the raise up skies it youre do me its inspired song with what that feel other mine time the easily what when you and three cause beat and its gets christmas your you sad a behind nothing a i number back or never and who your move beat you driving you i love and of do other like on go when oh yea heart plane after her that mine never soul like one you made you, required 93 words

5. **Lyrics for blink 182 - all the small things**

**Seed text: all**

**all** live ive fire love did my right so truck reading it life its sin heal well two home we confused mony its song you tried could disguise know find for amadeus where sailor you and the to wo insane yeah skin wind ride song me heart up bite a a new a i let money world didnt on, required 58 words

**Seed text: the**

**the** love want risk whoa breakin take need cebu me amadeus control weve lose and try cryin away know hopes away what theres makes in you right drunk live always ever one bop your lovely on steal bet i say somebody say gonna sad stay frosty a grease scene his hangin your dry touch mind i you you your, required 58 words

**Seed text: small**

**small** lost with sun find when casbah you time huh to please for you see make the life dont you me to she the waitin honey weed all fill fired wish on alone thats like im the to and yeah long sure the broadway the need somebody always achy dont well i as seen my that boy your that, required 58 words

**Model with advanced melody features - sequence length 5**

A screenshot from the TensorBoard framework:

1. **Lyrics for the bangles - eternal flame**

**Seed text: close your eyes give me**