modelId (string, length 5 to 139) | author (string, length 2 to 42) | last_modified (timestamp[us, tz=UTC], 2020-02-15 11:33:14 to 2025-08-30 06:27:36) | downloads (int64, 0 to 223M) | likes (int64, 0 to 11.7k) | library_name (string, 527 classes) | tags (list, length 1 to 4.05k) | pipeline_tag (string, 55 classes) | createdAt (timestamp[us, tz=UTC], 2022-03-02 23:29:04 to 2025-08-30 06:27:12) | card (string, length 11 to 1.01M) |

|---|---|---|---|---|---|---|---|---|---|

vendi11/blockassist-bc-placid_placid_llama_1756422479

|

vendi11

| 2025-08-28T23:08:42Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"placid placid llama",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-28T23:08:38Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- placid placid llama

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

Dejiat/blockassist-bc-savage_unseen_bobcat_1756422407

|

Dejiat

| 2025-08-28T23:07:15Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"savage unseen bobcat",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-28T23:07:11Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- savage unseen bobcat

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

trakonmerty66/blockassist-bc-durable_tropical_wombat_1756422341

|

trakonmerty66

| 2025-08-28T23:06:28Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"durable tropical wombat",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-28T23:06:07Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- durable tropical wombat

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

AnerYubo/blockassist-bc-elusive_mammalian_termite_1756422021

|

AnerYubo

| 2025-08-28T23:00:25Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"elusive mammalian termite",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-28T23:00:22Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- elusive mammalian termite

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

nvidia/OpenReasoning-Nemotron-14B

|

nvidia

| 2025-08-28T22:50:48Z | 1,732 | 37 |

transformers

|

[

"transformers",

"safetensors",

"qwen2",

"text-generation",

"nvidia",

"code",

"conversational",

"en",

"arxiv:2504.16891",

"arxiv:2504.01943",

"arxiv:2507.09075",

"base_model:Qwen/Qwen2.5-14B-Instruct",

"base_model:finetune:Qwen/Qwen2.5-14B-Instruct",

"license:cc-by-4.0",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2025-07-15T21:28:27Z |

---

license: cc-by-4.0

language:

- en

base_model:

- Qwen/Qwen2.5-14B-Instruct

pipeline_tag: text-generation

library_name: transformers

tags:

- nvidia

- code

---

# OpenReasoning-Nemotron-14B Overview

## Description: <br>

OpenReasoning-Nemotron-14B is a large language model (LLM) derived from Qwen2.5-14B-Instruct (the reference model). It is a reasoning model post-trained to generate solutions to math, code, and science problems. We evaluated this model with up to 64K output tokens. The OpenReasoning models are available in four sizes: 1.5B, 7B, 14B, and 32B. <br>

This model is ready for commercial/non-commercial research use. <br>

### License/Terms of Use: <br>

GOVERNING TERMS: Use of the models listed above is governed by the [Creative Commons Attribution 4.0 International License (CC-BY-4.0)](https://creativecommons.org/licenses/by/4.0/legalcode.en). ADDITIONAL INFORMATION: [Apache 2.0 License](https://huggingface.co/Qwen/Qwen2.5-32B-Instruct/blob/main/LICENSE)

## Scores on Reasoning Benchmarks

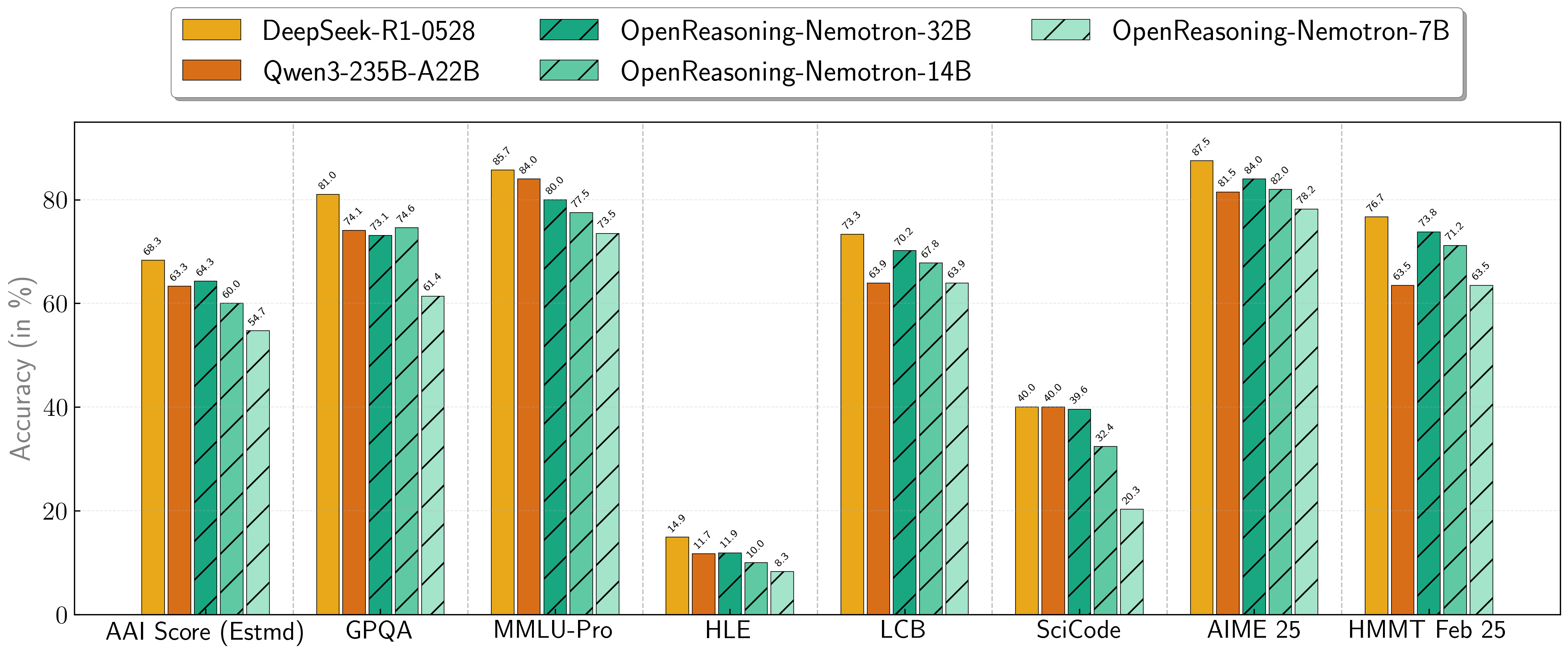

Our models demonstrate exceptional performance across a suite of challenging reasoning benchmarks. The 7B, 14B, and 32B models consistently set new state-of-the-art records for their size classes.

| **Model** | **ArtificialAnalysisIndex*** | **GPQA** | **MMLU-PRO** | **HLE** | **LiveCodeBench*** | **SciCode** | **AIME24** | **AIME25** | **HMMT FEB 25** |

| :--- | :--- | :--- | :--- | :--- | :--- | :--- | :--- | :--- | :--- |

| **1.5B**| 31.0 | 31.6 | 47.5 | 5.5 | 28.6 | 1.0 | 55.5 | 45.6 | 31.5 |

| **7B** | 54.7 | 61.1 | 71.9 | 8.3 | 63.3 | 20.3 | 84.7 | 78.2 | 63.5 |

| **14B** | 60.9 | 71.6 | 77.5 | 10.1 | 67.8 | 32.4 | 87.8 | 82.0 | 71.2 |

| **32B** | 64.3 | 73.1 | 80.0 | 11.9 | 70.2 | 39.6 | 89.2 | 84.0 | 73.8 |

\* This is our estimation of the Artificial Analysis Intelligence Index, not an official score.

\* LiveCodeBench version 6, date range 2408-2505.

## Combining the work of multiple agents

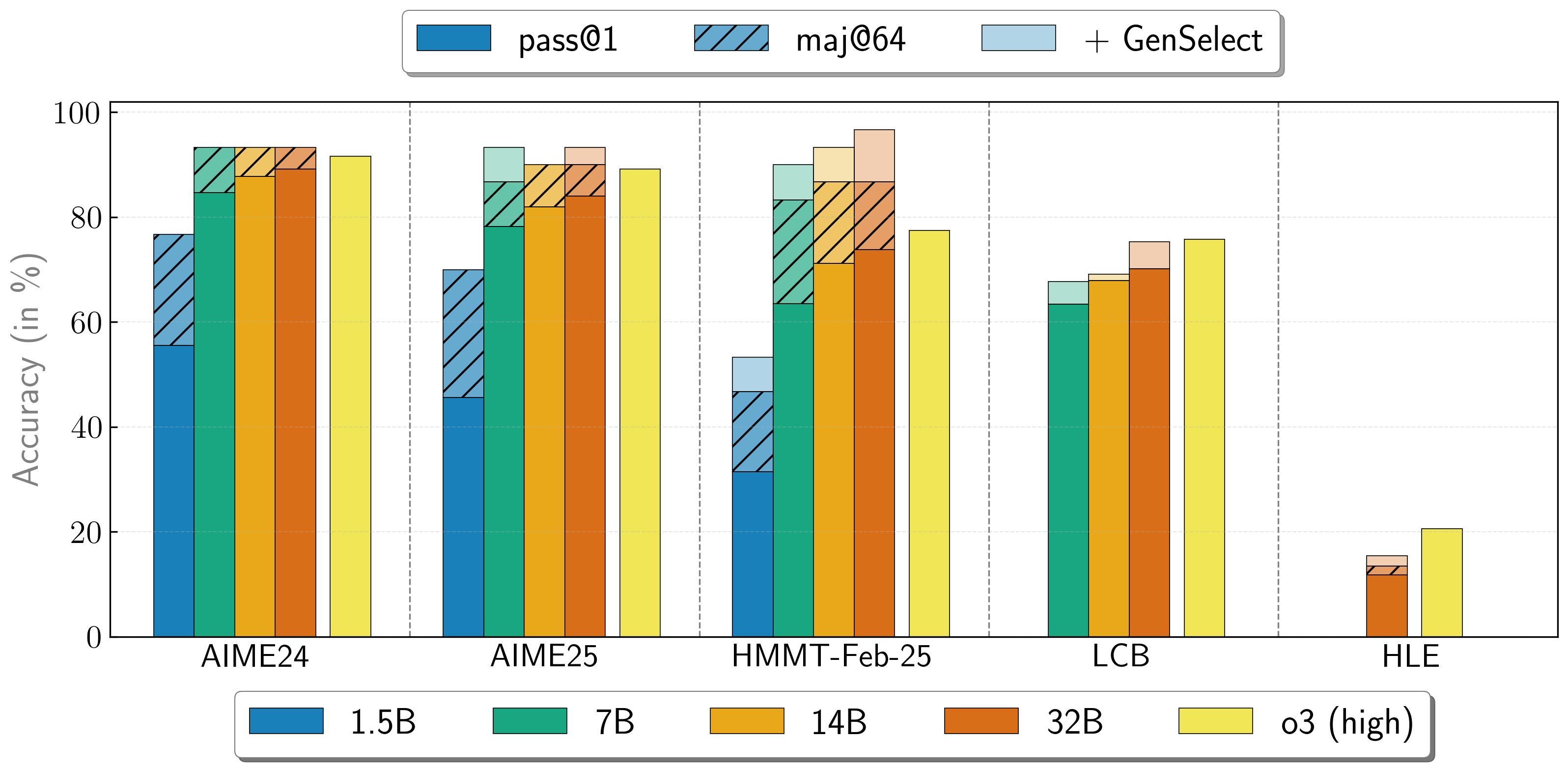

OpenReasoning-Nemotron models can be used in a "heavy" mode by starting multiple parallel generations and combining them together via [generative solution selection (GenSelect)](https://arxiv.org/abs/2504.16891). To add this "skill" we follow the original GenSelect training pipeline except we do not train on the selection summary but use the full reasoning trace of DeepSeek R1 0528 671B instead. We only train models to select the best solution for math problems but surprisingly find that this capability directly generalizes to code and science questions! With this "heavy" GenSelect inference mode, OpenReasoning-Nemotron-32B model surpasses O3 (High) on math and coding benchmarks.

| **Model** | **Pass@1 (Avg@64)** | **Majority@64** | **GenSelect** |

| :--- | :--- | :--- | :--- |

| **1.5B** | | | |

| **AIME24** | 55.5 | 76.7 | 76.7 |

| **AIME25** | 45.6 | 70.0 | 70.0 |

| **HMMT Feb 25** | 31.5 | 46.7 | 53.3 |

| **7B** | | | |

| **AIME24** | 84.7 | 93.3 | 93.3 |

| **AIME25** | 78.2 | 86.7 | 93.3 |

| **HMMT Feb 25** | 63.5 | 83.3 | 90.0 |

| **LCB v6 2408-2505** | 63.4 | n/a | 67.7 |

| **14B** | | | |

| **AIME24** | 87.8 | 93.3 | 93.3 |

| **AIME25** | 82.0 | 90.0 | 90.0 |

| **HMMT Feb 25** | 71.2 | 86.7 | 93.3 |

| **LCB v6 2408-2505** | 67.9 | n/a | 69.1 |

| **32B** | | | |

| **AIME24** | 89.2 | 93.3 | 93.3 |

| **AIME25** | 84.0 | 90.0 | 93.3 |

| **HMMT Feb 25** | 73.8 | 86.7 | 96.7 |

| **LCB v6 2408-2505** | 70.2 | n/a | 75.3 |

| **HLE** | 11.8 | 13.4 | 15.5 |
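The first two columns of the table can be computed from sampled final answers roughly as sketched below. This is an illustrative sketch only, not the NeMo-Skills evaluation code: the function names and the toy answers are hypothetical, and answers are compared by exact string match, which a real grader would likely replace with a more tolerant check. GenSelect is not shown because it requires a model call.

```python
from collections import Counter


def pass_at_1(samples, reference):
    """Average correctness over all samples (Pass@1 estimated as Avg@k)."""
    return sum(s == reference for s in samples) / len(samples)


def majority_at_k(samples, reference):
    """Return 1.0 if the most frequent sampled answer matches the reference, else 0.0."""
    most_common_answer, _ = Counter(samples).most_common(1)[0]
    return float(most_common_answer == reference)


# Toy data (hypothetical): 8 sampled final answers for one problem
# whose reference answer is "42".
samples = ["42", "42", "17", "42", "42", "9", "42", "17"]

print(pass_at_1(samples, "42"))      # fraction of individual samples that are correct
print(majority_at_k(samples, "42"))  # whether the plurality answer is correct
```

Benchmark-level numbers would then be the mean of these per-problem values over all problems.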

## How to use the models?

To run inference on coding problems:

````python

import transformers
import torch

model_id = "nvidia/OpenReasoning-Nemotron-14B"

pipeline = transformers.pipeline(
    "text-generation",
    model=model_id,
    model_kwargs={"torch_dtype": torch.bfloat16},
    device_map="auto",
)

# Code generation prompt

prompt = """You are a helpful and harmless assistant. You should think step-by-step before responding to the instruction below.

Please use python programming language only.

You must use ```python for just the final solution code block with the following format:

```python

# Your code here

```

{user}

"""

# Math generation prompt

# prompt = """Solve the following math problem. Make sure to put the answer (and only answer) inside \\boxed{{}}.

#

# {user}

# """

# Science generation prompt

# You can refer to prompts here -

# https://github.com/NVIDIA/NeMo-Skills/blob/main/nemo_skills/prompt/config/generic/hle.yaml (HLE)

# https://github.com/NVIDIA/NeMo-Skills/blob/main/nemo_skills/prompt/config/eval/aai/mcq-4choices-boxed.yaml (for GPQA)

# https://github.com/NVIDIA/NeMo-Skills/blob/main/nemo_skills/prompt/config/eval/aai/mcq-10choices-boxed.yaml (MMLU-Pro)

messages = [
    {
        "role": "user",
        "content": prompt.format(user="Write a program to calculate the sum of the first $N$ fibonacci numbers"),
    },
]

outputs = pipeline(
    messages,
    max_new_tokens=64000,
)

print(outputs[0]["generated_text"][-1]["content"])

````
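Because the model reasons step by step before answering, the generated text usually contains more than the final answer. A minimal sketch of post-processing is shown here, assuming the reply follows the prompt formats above (a final python-tagged fenced block for code, `\boxed{...}` for math). Both helper names are hypothetical and are not part of this repository or of NeMo-Skills.

```python
import re

FENCE = "`" * 3  # three backticks, built indirectly so this snippet nests cleanly in Markdown


def extract_final_python_block(text):
    """Return the body of the last python-tagged fenced block in text, or None."""
    pattern = re.compile(FENCE + r"python\s*\n(.*?)" + FENCE, re.DOTALL)
    blocks = pattern.findall(text)
    return blocks[-1].strip() if blocks else None


def extract_boxed(text):
    r"""Return the contents of the last \boxed{...} in text, handling nested braces."""
    start = text.rfind(r"\boxed{")
    if start == -1:
        return None
    i = start + len(r"\boxed{")
    depth, out = 1, []
    while i < len(text):
        ch = text[i]
        if ch == "{":
            depth += 1
        elif ch == "}":
            depth -= 1
            if depth == 0:
                return "".join(out)
        out.append(ch)
        i += 1
    return None  # unbalanced braces


# Hypothetical reply containing a draft block followed by the final solution.
reply = (
    "Let me think.\n" + FENCE + "python\nprint(1)\n" + FENCE
    + "\nThat is wrong.\n" + FENCE + "python\nprint(2)\n" + FENCE
)
print(extract_final_python_block(reply))
print(extract_boxed(r"So the answer is \boxed{\frac{1}{2}}."))
```

Taking the last block rather than the first is a design choice: the reasoning trace may contain draft code before the final solution.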

We have added [a simple Transformers-based script](https://huggingface.co/nvidia/OpenReasoning-Nemotron-14B/blob/main/genselect_hf.py) to this repo to illustrate GenSelect.

To learn how to use the models in GenSelect mode with NeMo-Skills, see our [documentation](https://nvidia.github.io/NeMo-Skills/releases/openreasoning/evaluation/).

To use the model with GenSelect inference, we recommend following our [reference implementation in NeMo-Skills](https://github.com/NVIDIA/NeMo-Skills/blob/main/nemo_skills/pipeline/genselect.py). Alternatively, you can manually extract the summary from all solutions and use this [prompt](https://github.com/NVIDIA/NeMo-Skills/blob/main/nemo_skills/prompt/config/openmath/genselect.yaml) for the math problems. We will add the prompt we used for the coding problems and a reference implementation soon!

You can learn more about GenSelect in these papers:

* [AIMO-2 Winning Solution: Building State-of-the-Art Mathematical Reasoning Models with OpenMathReasoning dataset](https://arxiv.org/abs/2504.16891)

* [GenSelect: A Generative Approach to Best-of-N](https://openreview.net/forum?id=8LhnmNmUDb)

## Accessing training data

Training data has been released! Math and code are available as part of [Nemotron-Post-Training-Dataset-v1](https://huggingface.co/datasets/nvidia/Nemotron-Post-Training-Dataset-v1) and science is available in [OpenScienceReasoning-2](https://huggingface.co/datasets/nvidia/OpenScienceReasoning-2).

See our [documentation](https://nvidia.github.io/NeMo-Skills/releases/openreasoning/training) for more details.

## Citation

If you find the data useful, please cite:

```

@article{ahmad2025opencodereasoning,

title={{OpenCodeReasoning: Advancing Data Distillation for Competitive Coding}},

author={Wasi Uddin Ahmad and Sean Narenthiran and Somshubra Majumdar and Aleksander Ficek and Siddhartha Jain and Jocelyn Huang and Vahid Noroozi and Boris Ginsburg},

year={2025},

eprint={2504.01943},

archivePrefix={arXiv},

primaryClass={cs.CL},

url={https://arxiv.org/abs/2504.01943},

}

```

```

@misc{ahmad2025opencodereasoningiisimpletesttime,

title={{OpenCodeReasoning-II: A Simple Test Time Scaling Approach via Self-Critique}},

author={Wasi Uddin Ahmad and Somshubra Majumdar and Aleksander Ficek and Sean Narenthiran and Mehrzad Samadi and Jocelyn Huang and Siddhartha Jain and Vahid Noroozi and Boris Ginsburg},

year={2025},

eprint={2507.09075},

archivePrefix={arXiv},

primaryClass={cs.CL},

url={https://arxiv.org/abs/2507.09075},

}

```

```

@misc{moshkov2025aimo2winningsolutionbuilding,

title={{AIMO-2 Winning Solution: Building State-of-the-Art Mathematical Reasoning Models with OpenMathReasoning dataset}},

author={Ivan Moshkov and Darragh Hanley and Ivan Sorokin and Shubham Toshniwal and Christof Henkel and Benedikt Schifferer and Wei Du and Igor Gitman},

year={2025},

eprint={2504.16891},

archivePrefix={arXiv},

primaryClass={cs.AI},

url={https://arxiv.org/abs/2504.16891},

}

```

```

@inproceedings{toshniwal2025genselect,

title={{GenSelect: A Generative Approach to Best-of-N}},

author={Shubham Toshniwal and Ivan Sorokin and Aleksander Ficek and Ivan Moshkov and Igor Gitman},

booktitle={2nd AI for Math Workshop @ ICML 2025},

year={2025},

url={https://openreview.net/forum?id=8LhnmNmUDb}

}

```

## Additional Information:

### Deployment Geography:

Global<br>

### Use Case: <br>

This model is intended for developers and researchers who work on competitive math, code and science problems. It has been trained via only supervised fine-tuning to achieve strong scores on benchmarks. <br>

### Release Date: <br>

Hugging Face [07/16/2025] via https://huggingface.co/nvidia/OpenReasoning-Nemotron-14B/ <br>

## Reference(s):

* [2504.01943] OpenCodeReasoning: Advancing Data Distillation for Competitive Coding

* [2504.16891] AIMO-2 Winning Solution: Building State-of-the-Art Mathematical Reasoning Models with OpenMathReasoning dataset

<br>

## Model Architecture: <br>

Architecture Type: Dense decoder-only Transformer model

Network Architecture: Qwen2.5-14B-Instruct

<br>

**This model was developed based on Qwen2.5-14B-Instruct and has 14B model parameters. <br>**

**OpenReasoning-Nemotron-1.5B was developed based on Qwen2.5-1.5B-Instruct and has 1.5B model parameters. <br>**

**OpenReasoning-Nemotron-7B was developed based on Qwen2.5-7B-Instruct and has 7B model parameters. <br>**

**OpenReasoning-Nemotron-14B was developed based on Qwen2.5-14B-Instruct and has 14B model parameters. <br>**

**OpenReasoning-Nemotron-32B was developed based on Qwen2.5-32B-Instruct and has 32B model parameters. <br>**

## Input: <br>

**Input Type(s):** Text <br>

**Input Format(s):** String <br>

**Input Parameters:** One-Dimensional (1D) <br>

**Other Properties Related to Input:** Trained for up to 64,000 output tokens <br>

## Output: <br>

**Output Type(s):** Text <br>

**Output Format:** String <br>

**Output Parameters:** One-Dimensional (1D) <br>

**Other Properties Related to Output:** Trained for up to 64,000 output tokens <br>

Our AI models are designed and/or optimized to run on NVIDIA GPU-accelerated systems. By leveraging NVIDIA’s hardware (e.g. GPU cores) and software frameworks (e.g., CUDA libraries), the model achieves faster training and inference times compared to CPU-only solutions. <br>

## Software Integration : <br>

* Runtime Engine: NeMo 2.3.0 <br>

* Recommended Hardware Microarchitecture Compatibility: <br>

NVIDIA Ampere <br>

NVIDIA Hopper <br>

* Preferred/Supported Operating System(s): Linux <br>

## Model Version(s):

1.0 (7/16/2025) <br>

OpenReasoning-Nemotron-32B<br>

OpenReasoning-Nemotron-14B<br>

OpenReasoning-Nemotron-7B<br>

OpenReasoning-Nemotron-1.5B<br>

# Training and Evaluation Datasets: <br>

## Training Dataset:

The training corpus for OpenReasoning-Nemotron-14B consists of questions from the [OpenCodeReasoning](https://huggingface.co/datasets/nvidia/OpenCodeReasoning) dataset, [OpenCodeReasoning-II](https://arxiv.org/abs/2507.09075), [OpenMathReasoning](https://huggingface.co/datasets/nvidia/OpenMathReasoning), and the Synthetic Science questions from the [Llama-Nemotron-Post-Training-Dataset](https://huggingface.co/datasets/nvidia/Llama-Nemotron-Post-Training-Dataset). All responses are generated using DeepSeek-R1-0528. We also include the instruction-following and tool-calling data from Llama-Nemotron-Post-Training-Dataset without modification.

Data Collection Method: Hybrid: Automated, Human, Synthetic <br>

Labeling Method: Hybrid: Automated, Human, Synthetic <br>

Properties: 5M DeepSeek-R1-0528 generated responses from [OpenCodeReasoning](https://huggingface.co/datasets/nvidia/OpenCodeReasoning) questions, [OpenMathReasoning](https://huggingface.co/datasets/nvidia/OpenMathReasoning), and the Synthetic Science questions from the [Llama-Nemotron-Post-Training-Dataset](https://huggingface.co/datasets/nvidia/Llama-Nemotron-Post-Training-Dataset). We also include the instruction-following and tool-calling data from Llama-Nemotron-Post-Training-Dataset without modification.

## Evaluation Dataset:

We used the following benchmarks to evaluate the model holistically.

### Math

- AIME 2024/2025 <br>

- HMMT <br>

- BRUMO 2025 <br>

### Code

- LiveCodeBench <br>

- SciCode <br>

### Science

- GPQA <br>

- MMLU-PRO <br>

- HLE <br>

Data Collection Method: Hybrid: Automated, Human, Synthetic <br>

Labeling Method: Hybrid: Automated, Human, Synthetic <br>

## Inference:

**Acceleration Engine:** vLLM, TensorRT-LLM <br>

**Test Hardware:** NVIDIA H100-80GB <br>

## Ethical Considerations:

NVIDIA believes Trustworthy AI is a shared responsibility and we have established policies and practices to enable development for a wide array of AI applications. When downloaded or used in accordance with our terms of service, developers should work with their internal model team to ensure this model meets requirements for the relevant industry and use case and addresses unforeseen product misuse.

For more detailed information on ethical considerations for this model, please see the Model Card++ Explainability, Bias, Safety & Security, and Privacy Subcards.

Please report model quality, risk, security vulnerabilities or NVIDIA AI Concerns [here](https://www.nvidia.com/en-us/support/submit-security-vulnerability/).

|

Dejiat/blockassist-bc-savage_unseen_bobcat_1756421138

|

Dejiat

| 2025-08-28T22:46:05Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"savage unseen bobcat",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-28T22:46:02Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- savage unseen bobcat

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

lowelldiaz/blockassist-bc-prowling_feathered_stork_1756420754

|

lowelldiaz

| 2025-08-28T22:41:06Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"prowling feathered stork",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-28T22:40:36Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- prowling feathered stork

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

Dejiat/blockassist-bc-savage_unseen_bobcat_1756420074

|

Dejiat

| 2025-08-28T22:28:18Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"savage unseen bobcat",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-28T22:28:15Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- savage unseen bobcat

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

bah63843/blockassist-bc-plump_fast_antelope_1756419473

|

bah63843

| 2025-08-28T22:18:48Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"plump fast antelope",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-28T22:18:39Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- plump fast antelope

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

vendi11/blockassist-bc-placid_placid_llama_1756419431

|

vendi11

| 2025-08-28T22:17:53Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"placid placid llama",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-28T22:17:50Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- placid placid llama

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

alok0777/blockassist-bc-masked_pensive_lemur_1756419369

|

alok0777

| 2025-08-28T22:17:21Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"masked pensive lemur",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-28T22:16:57Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- masked pensive lemur

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

kipospol/blockassist-bc-lively_agile_peacock_1756419245

|

kipospol

| 2025-08-28T22:14:54Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"lively agile peacock",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-28T22:14:30Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- lively agile peacock

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

eusuf01/blockassist-bc-smooth_humming_butterfly_1756419047

|

eusuf01

| 2025-08-28T22:12:10Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"smooth humming butterfly",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-28T22:11:41Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- smooth humming butterfly

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

wolfer45/vgaxl2025

|

wolfer45

| 2025-08-28T22:11:40Z | 0 | 0 |

diffusers

|

[

"diffusers",

"text-to-image",

"lora",

"template:diffusion-lora",

"base_model:stabilityai/sdxl-turbo",

"base_model:adapter:stabilityai/sdxl-turbo",

"region:us"

] |

text-to-image

| 2025-08-28T22:11:09Z |

---

tags:

- text-to-image

- lora

- diffusers

- template:diffusion-lora

widget:

- output:

url: images/11_crop.jpg

text: '-'

base_model: stabilityai/sdxl-turbo

instance_prompt: vgaxl2025

---

# vgaxl2025

<Gallery />

## Model description

vgaxl2025

## Trigger words

You should use `vgaxl2025` to trigger the image generation.

## Download model

[Download](/wolfer45/vgaxl2025/tree/main) them in the Files & versions tab.
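A minimal sketch of how the trigger word and the LoRA might be used with `diffusers` is given below. It assumes the repository holds a single LoRA weight file that `load_lora_weights` can auto-detect, which this card does not confirm; the helper names, the sampler settings, and the example description are illustrative only. Calling `generate` downloads the base model and needs a GPU.

```python
TRIGGER = "vgaxl2025"  # trigger word stated in this card


def build_prompt(description, trigger=TRIGGER):
    """Prefix the trigger word unless the description already contains it."""
    return description if trigger in description else f"{trigger}, {description}"


def generate(description, device="cuda"):
    """Load SDXL-Turbo, apply the LoRA, and return one PIL image (downloads weights)."""
    # Imported lazily so the prompt helper can be used without these packages installed.
    import torch
    from diffusers import AutoPipelineForText2Image

    pipe = AutoPipelineForText2Image.from_pretrained(
        "stabilityai/sdxl-turbo", torch_dtype=torch.float16
    ).to(device)
    # Assumption: the repo contains one LoRA weight file that diffusers can locate itself.
    pipe.load_lora_weights("wolfer45/vgaxl2025")
    # SDXL-Turbo is typically sampled with very few steps and guidance disabled.
    result = pipe(
        prompt=build_prompt(description),
        num_inference_steps=1,
        guidance_scale=0.0,
    )
    return result.images[0]


print(build_prompt("a portrait in soft light"))
```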

|

kipospol/blockassist-bc-lively_agile_peacock_1756418878

|

kipospol

| 2025-08-28T22:08:40Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"lively agile peacock",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-28T22:08:21Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- lively agile peacock

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

eusuf01/blockassist-bc-smooth_humming_butterfly_1756418559

|

eusuf01

| 2025-08-28T22:03:57Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"smooth humming butterfly",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-28T22:03:27Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- smooth humming butterfly

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

AnerYubo/blockassist-bc-gilded_patterned_mouse_1756418508

|

AnerYubo

| 2025-08-28T22:01:52Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"gilded patterned mouse",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-28T22:01:49Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- gilded patterned mouse

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

mrblithe/phi3-razzimiyum

|

mrblithe

| 2025-08-28T21:53:42Z | 0 | 0 |

transformers

|

[

"transformers",

"safetensors",

"phi3",

"text-generation",

"conversational",

"custom_code",

"arxiv:1910.09700",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2025-08-28T21:31:39Z |

---

library_name: transformers

tags: []

---

# Model Card for Model ID

<!-- Provide a quick summary of what the model is/does. -->

## Model Details

### Model Description

<!-- Provide a longer summary of what this model is. -->

This is the model card of a 🤗 transformers model that has been pushed on the Hub. This model card has been automatically generated.

- **Developed by:** [More Information Needed]

- **Funded by [optional]:** [More Information Needed]

- **Shared by [optional]:** [More Information Needed]

- **Model type:** [More Information Needed]

- **Language(s) (NLP):** [More Information Needed]

- **License:** [More Information Needed]

- **Finetuned from model [optional]:** [More Information Needed]

### Model Sources [optional]

<!-- Provide the basic links for the model. -->

- **Repository:** [More Information Needed]

- **Paper [optional]:** [More Information Needed]

- **Demo [optional]:** [More Information Needed]

## Uses

<!-- Address questions around how the model is intended to be used, including the foreseeable users of the model and those affected by the model. -->

### Direct Use

<!-- This section is for the model use without fine-tuning or plugging into a larger ecosystem/app. -->

[More Information Needed]

### Downstream Use [optional]

<!-- This section is for the model use when fine-tuned for a task, or when plugged into a larger ecosystem/app -->

[More Information Needed]

### Out-of-Scope Use

<!-- This section addresses misuse, malicious use, and uses that the model will not work well for. -->

[More Information Needed]

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

[More Information Needed]

### Recommendations

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

Users (both direct and downstream) should be made aware of the risks, biases and limitations of the model. More information needed for further recommendations.

## How to Get Started with the Model

Use the code below to get started with the model.

[More Information Needed]

## Training Details

### Training Data

<!-- This should link to a Dataset Card, perhaps with a short stub of information on what the training data is all about as well as documentation related to data pre-processing or additional filtering. -->

[More Information Needed]

### Training Procedure

<!-- This relates heavily to the Technical Specifications. Content here should link to that section when it is relevant to the training procedure. -->

#### Preprocessing [optional]

[More Information Needed]

#### Training Hyperparameters

- **Training regime:** [More Information Needed] <!--fp32, fp16 mixed precision, bf16 mixed precision, bf16 non-mixed precision, fp16 non-mixed precision, fp8 mixed precision -->

#### Speeds, Sizes, Times [optional]

<!-- This section provides information about throughput, start/end time, checkpoint size if relevant, etc. -->

[More Information Needed]

## Evaluation

<!-- This section describes the evaluation protocols and provides the results. -->

### Testing Data, Factors & Metrics

#### Testing Data

<!-- This should link to a Dataset Card if possible. -->

[More Information Needed]

#### Factors

<!-- These are the things the evaluation is disaggregating by, e.g., subpopulations or domains. -->

[More Information Needed]

#### Metrics

<!-- These are the evaluation metrics being used, ideally with a description of why. -->

[More Information Needed]

### Results

[More Information Needed]

#### Summary

## Model Examination [optional]

<!-- Relevant interpretability work for the model goes here -->

[More Information Needed]

## Environmental Impact

<!-- Total emissions (in grams of CO2eq) and additional considerations, such as electricity usage, go here. Edit the suggested text below accordingly -->

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).

- **Hardware Type:** [More Information Needed]

- **Hours used:** [More Information Needed]

- **Cloud Provider:** [More Information Needed]

- **Compute Region:** [More Information Needed]

- **Carbon Emitted:** [More Information Needed]

## Technical Specifications [optional]

### Model Architecture and Objective

[More Information Needed]

### Compute Infrastructure

[More Information Needed]

#### Hardware

[More Information Needed]

#### Software

[More Information Needed]

## Citation [optional]

<!-- If there is a paper or blog post introducing the model, the APA and Bibtex information for that should go in this section. -->

**BibTeX:**

[More Information Needed]

**APA:**

[More Information Needed]

## Glossary [optional]

<!-- If relevant, include terms and calculations in this section that can help readers understand the model or model card. -->

[More Information Needed]

## More Information [optional]

[More Information Needed]

## Model Card Authors [optional]

[More Information Needed]

## Model Card Contact

[More Information Needed]

|

capungmerah627/blockassist-bc-stinging_soaring_porcupine_1756416357

|

capungmerah627

| 2025-08-28T21:50:59Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"stinging soaring porcupine",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-28T21:50:55Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- stinging soaring porcupine

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

Loder-S/blockassist-bc-sprightly_knobby_tiger_1756416125

|

Loder-S

| 2025-08-28T21:48:08Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"sprightly knobby tiger",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-28T21:48:05Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- sprightly knobby tiger

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

chainway9/blockassist-bc-untamed_quick_eel_1756415570

|

chainway9

| 2025-08-28T21:41:28Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"untamed quick eel",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-28T21:41:24Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- untamed quick eel

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

mradermacher/Veltrix-GGUF

|

mradermacher

| 2025-08-28T21:41:28Z | 0 | 0 |

transformers

|

[

"transformers",

"gguf",

"en",

"base_model:MGZON/Veltrix",

"base_model:quantized:MGZON/Veltrix",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] | null | 2025-08-28T21:40:16Z |

---

base_model: MGZON/Veltrix

language:

- en

library_name: transformers

license: apache-2.0

mradermacher:

readme_rev: 1

quantized_by: mradermacher

---

## About

<!-- ### quantize_version: 2 -->

<!-- ### output_tensor_quantised: 1 -->

<!-- ### convert_type: hf -->

<!-- ### vocab_type: -->

<!-- ### tags: -->

<!-- ### quants: x-f16 Q4_K_S Q2_K Q8_0 Q6_K Q3_K_M Q3_K_S Q3_K_L Q4_K_M Q5_K_S Q5_K_M IQ4_XS -->

<!-- ### quants_skip: -->

<!-- ### skip_mmproj: -->

static quants of https://huggingface.co/MGZON/Veltrix

<!-- provided-files -->

***For a convenient overview and download list, visit our [model page for this model](https://hf.tst.eu/model#Veltrix-GGUF).***

Weighted/imatrix quants do not seem to be available (from me) at this time. If they do not show up a week or so after the static ones, I have probably not planned for them. Feel free to request them by opening a Community Discussion.

## Usage

If you are unsure how to use GGUF files, refer to one of [TheBloke's READMEs](https://huggingface.co/TheBloke/KafkaLM-70B-German-V0.1-GGUF) for more details, including on how to concatenate multi-part files.

## Provided Quants

(sorted by size, not necessarily quality. IQ-quants are often preferable over similar sized non-IQ quants)

| Link | Type | Size/GB | Notes |

|:-----|:-----|--------:|:------|

| [GGUF](https://huggingface.co/mradermacher/Veltrix-GGUF/resolve/main/Veltrix.Q2_K.gguf) | Q2_K | 0.1 | |

| [GGUF](https://huggingface.co/mradermacher/Veltrix-GGUF/resolve/main/Veltrix.Q3_K_S.gguf) | Q3_K_S | 0.1 | |

| [GGUF](https://huggingface.co/mradermacher/Veltrix-GGUF/resolve/main/Veltrix.Q3_K_M.gguf) | Q3_K_M | 0.1 | lower quality |

| [GGUF](https://huggingface.co/mradermacher/Veltrix-GGUF/resolve/main/Veltrix.Q3_K_L.gguf) | Q3_K_L | 0.1 | |

| [GGUF](https://huggingface.co/mradermacher/Veltrix-GGUF/resolve/main/Veltrix.IQ4_XS.gguf) | IQ4_XS | 0.1 | |

| [GGUF](https://huggingface.co/mradermacher/Veltrix-GGUF/resolve/main/Veltrix.Q4_K_S.gguf) | Q4_K_S | 0.1 | fast, recommended |

| [GGUF](https://huggingface.co/mradermacher/Veltrix-GGUF/resolve/main/Veltrix.Q4_K_M.gguf) | Q4_K_M | 0.2 | fast, recommended |

| [GGUF](https://huggingface.co/mradermacher/Veltrix-GGUF/resolve/main/Veltrix.Q5_K_S.gguf) | Q5_K_S | 0.2 | |

| [GGUF](https://huggingface.co/mradermacher/Veltrix-GGUF/resolve/main/Veltrix.Q5_K_M.gguf) | Q5_K_M | 0.2 | |

| [GGUF](https://huggingface.co/mradermacher/Veltrix-GGUF/resolve/main/Veltrix.Q6_K.gguf) | Q6_K | 0.2 | very good quality |

| [GGUF](https://huggingface.co/mradermacher/Veltrix-GGUF/resolve/main/Veltrix.Q8_0.gguf) | Q8_0 | 0.2 | fast, best quality |

| [GGUF](https://huggingface.co/mradermacher/Veltrix-GGUF/resolve/main/Veltrix.f16.gguf) | f16 | 0.3 | 16 bpw, overkill |

Here is a handy graph by ikawrakow comparing some lower-quality quant

types (lower is better):

And here are Artefact2's thoughts on the matter:

https://gist.github.com/Artefact2/b5f810600771265fc1e39442288e8ec9

## FAQ / Model Request

See https://huggingface.co/mradermacher/model_requests for some answers to

questions you might have and/or if you want some other model quantized.

## Thanks

I thank my company, [nethype GmbH](https://www.nethype.de/), for letting

me use its servers and providing upgrades to my workstation to enable

this work in my free time.

<!-- end -->

|

Rootu/blockassist-bc-snorting_fleecy_goose_1756417173

|

Rootu

| 2025-08-28T21:40:29Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"snorting fleecy goose",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-28T21:40:17Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- snorting fleecy goose

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

davidilag/wav2vec2-xls-r-300m-pt-1000h_faroese-checkpoint10-faroese-100h-30-epochs_run3_2025-08-28

|

davidilag

| 2025-08-28T21:34:42Z | 0 | 0 |

transformers

|

[

"transformers",

"tensorboard",

"safetensors",

"wav2vec2",

"automatic-speech-recognition",

"generated_from_trainer",

"endpoints_compatible",

"region:us"

] |

automatic-speech-recognition

| 2025-08-28T11:49:47Z |

---

library_name: transformers

tags:

- generated_from_trainer

metrics:

- wer

model-index:

- name: wav2vec2-xls-r-300m-pt-1000h_faroese-checkpoint10-faroese-100h-30-epochs_run3_2025-08-28

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# wav2vec2-xls-r-300m-pt-1000h_faroese-checkpoint10-faroese-100h-30-epochs_run3_2025-08-28

This model was trained from scratch on an unknown dataset.

It achieves the following results on the evaluation set:

- Loss: 0.0955

- Wer: 18.9893

- Cer: 4.0477
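The card does not say how Wer and Cer were computed. Under the usual definitions (word and character error rate as edit distance divided by reference length, here expressed as a percentage), a minimal sketch looks like this. The function names are illustrative and not taken from the training script.

```python
def edit_distance(ref, hyp):
    """Levenshtein distance between two sequences."""
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i]
        for j, h in enumerate(hyp, start=1):
            cost = 0 if r == h else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1]


def error_rate(references, hypotheses, unit="word"):
    """Corpus-level error rate in percent; unit is "word" (WER) or "char" (CER)."""
    split = str.split if unit == "word" else list
    errors = sum(edit_distance(split(r), split(h))
                 for r, h in zip(references, hypotheses))
    total = sum(len(split(r)) for r in references)
    return 100.0 * errors / total
```

Note that the character-level variant in this sketch counts spaces as characters; real evaluation code may normalize text differently.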

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0001

- train_batch_size: 16

- eval_batch_size: 8

- seed: 42

- gradient_accumulation_steps: 2

- total_train_batch_size: 32

- optimizer: Use OptimizerNames.ADAMW_TORCH_FUSED with betas=(0.9,0.999) and epsilon=1e-08 and optimizer_args=No additional optimizer arguments

- lr_scheduler_type: cosine

- lr_scheduler_warmup_steps: 5000

- num_epochs: 30

- mixed_precision_training: Native AMP
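To make the schedule-related values concrete, here is a sketch of a linear warmup followed by a cosine decay, which is the common reading of `lr_scheduler_type: cosine` with warmup steps. The total step count is an assumption (roughly 2,050 optimizer steps per epoch, estimated from the step and epoch columns of the results table, times 30 epochs), and a single device is assumed because 16 x 2 already equals the reported total batch size of 32.

```python
import math

BASE_LR = 1e-4        # learning_rate from the list above
WARMUP_STEPS = 5000   # lr_scheduler_warmup_steps from the list above
TOTAL_STEPS = 61_500  # assumption: about 2,050 steps per epoch x 30 epochs


def lr_at(step, base_lr=BASE_LR, warmup=WARMUP_STEPS, total=TOTAL_STEPS):
    """Linear warmup to base_lr, then a half-cosine decay towards zero."""
    if step < warmup:
        return base_lr * step / warmup
    progress = (step - warmup) / max(1, total - warmup)
    return base_lr * 0.5 * (1.0 + math.cos(math.pi * min(progress, 1.0)))


# Effective batch size: per-device batch x accumulation steps (single device assumed).
effective_batch = 16 * 2
```

Under these assumptions the learning rate peaks at step 5000 and is already past its midpoint by the time validation loss flattens out in the later epochs.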

### Training results

| Training Loss | Epoch | Step | Validation Loss | Wer | Cer |

|:-------------:|:-------:|:-----:|:---------------:|:-------:|:-------:|

| 3.3326 | 0.4877 | 1000 | 3.4082 | 100.0 | 99.3017 |

| 0.9195 | 0.9754 | 2000 | 0.5797 | 49.6233 | 13.9009 |

| 0.4245 | 1.4628 | 3000 | 0.2394 | 30.6693 | 7.7442 |

| 0.3878 | 1.9505 | 4000 | 0.2012 | 28.7175 | 7.1659 |

| 0.3023 | 2.4379 | 5000 | 0.1720 | 27.0476 | 6.6183 |

| 0.2929 | 2.9256 | 6000 | 0.1605 | 26.4440 | 6.3760 |

| 0.2094 | 3.4131 | 7000 | 0.1554 | 24.8447 | 6.0289 |

| 0.2194 | 3.9008 | 8000 | 0.1373 | 24.0781 | 5.7930 |

| 0.1766 | 4.3882 | 9000 | 0.1422 | 24.0076 | 5.7085 |

| 0.1962 | 4.8759 | 10000 | 0.1330 | 23.4701 | 5.5531 |

| 0.1632 | 5.3633 | 11000 | 0.1300 | 23.1881 | 5.4450 |

| 0.1704 | 5.8510 | 12000 | 0.1237 | 23.2145 | 5.4450 |

| 0.1426 | 6.3385 | 13000 | 0.1217 | 22.6550 | 5.3006 |

| 0.1489 | 6.8261 | 14000 | 0.1252 | 23.0207 | 5.3779 |

| 0.1282 | 7.3136 | 15000 | 0.1167 | 22.1924 | 5.1483 |

| 0.1389 | 7.8013 | 16000 | 0.1082 | 21.7121 | 4.9629 |

| 0.1303 | 8.2887 | 17000 | 0.1109 | 21.8311 | 4.9590 |

| 0.1266 | 8.7764 | 18000 | 0.1136 | 21.6593 | 4.9590 |

| 0.1053 | 9.2638 | 19000 | 0.1121 | 21.6637 | 4.9369 |

| 0.1073 | 9.7515 | 20000 | 0.1189 | 21.5006 | 4.9030 |

| 0.097 | 10.2390 | 21000 | 0.1075 | 21.3288 | 4.8367 |

| 0.1005 | 10.7267 | 22000 | 0.1057 | 21.2715 | 4.8225 |

| 0.0849 | 11.2141 | 23000 | 0.1059 | 20.8750 | 4.7018 |

| 0.0846 | 11.7018 | 24000 | 0.1086 | 21.0556 | 4.7459 |

| 0.0873 | 12.1892 | 25000 | 0.1064 | 20.8001 | 4.6986 |

| 0.0804 | 12.6769 | 26000 | 0.1035 | 20.5093 | 4.5992 |

| 0.0779 | 13.1644 | 27000 | 0.1065 | 20.5049 | 4.5495 |

| 0.0779 | 13.6520 | 28000 | 0.1036 | 20.5358 | 4.5653 |

| 0.0711 | 14.1395 | 29000 | 0.1051 | 20.5137 | 4.6023 |

| 0.0797 | 14.6272 | 30000 | 0.1068 | 20.4829 | 4.5676 |

| 0.0716 | 15.1146 | 31000 | 0.1035 | 20.2890 | 4.5187 |

| 0.0616 | 15.6023 | 32000 | 0.1016 | 20.1568 | 4.4445 |

| 0.0747 | 16.0897 | 33000 | 0.1014 | 20.1480 | 4.4524 |

| 0.0632 | 16.5774 | 34000 | 0.1003 | 19.8264 | 4.3577 |

| 0.0564 | 17.0649 | 35000 | 0.0963 | 19.8484 | 4.3499 |

| 0.0547 | 17.5525 | 36000 | 0.0966 | 19.6986 | 4.3601 |

| 0.057 | 18.0400 | 37000 | 0.1005 | 19.7691 | 4.2994 |

| 0.0504 | 18.5277 | 38000 | 0.1002 | 19.5621 | 4.2591 |

| 0.055 | 19.0151 | 39000 | 0.0985 | 19.6722 | 4.3057 |

| 0.0507 | 19.5028 | 40000 | 0.1036 | 19.6370 | 4.3159 |

| 0.0413 | 19.9905 | 41000 | 0.1003 | 19.3858 | 4.2260 |

| 0.0446 | 20.4779 | 42000 | 0.0979 | 19.5268 | 4.2244 |

| 0.0387 | 20.9656 | 43000 | 0.0951 | 19.2713 | 4.1534 |

| 0.0407 | 21.4531 | 44000 | 0.0954 | 19.3814 | 4.1763 |

| 0.0579 | 21.9407 | 45000 | 0.0991 | 19.2977 | 4.1668 |

| 0.0471 | 22.4282 | 46000 | 0.0962 | 19.3021 | 4.1487 |

| 0.0483 | 22.9159 | 47000 | 0.0969 | 19.2096 | 4.1234 |

| 0.0532 | 23.4033 | 48000 | 0.0935 | 19.0950 | 4.1100 |

| 0.0369 | 23.8910 | 49000 | 0.0979 | 19.2757 | 4.1487 |

| 0.0389 | 24.3784 | 50000 | 0.0974 | 19.1127 | 4.1210 |

| 0.0375 | 24.8661 | 51000 | 0.0972 | 19.1171 | 4.1076 |

| 0.037 | 25.3536 | 52000 | 0.0963 | 19.1083 | 4.0911 |

| 0.0391 | 25.8413 | 53000 | 0.0982 | 19.0466 | 4.0753 |

| 0.0413 | 26.3287 | 54000 | 0.0980 | 18.9981 | 4.0484 |

| 0.033 | 26.8164 | 55000 | 0.0974 | 18.9893 | 4.0548 |

| 0.0412 | 27.3038 | 56000 | 0.0959 | 18.9981 | 4.0492 |

| 0.0396 | 27.7915 | 57000 | 0.0959 | 18.9452 | 4.0406 |

| 0.0426 | 28.2790 | 58000 | 0.0958 | 18.9496 | 4.0437 |

| 0.0377 | 28.7666 | 59000 | 0.0957 | 18.9981 | 4.0532 |

| 0.0406 | 29.2541 | 60000 | 0.0955 | 18.9981 | 4.0484 |

| 0.0408 | 29.7418 | 61000 | 0.0955 | 18.9893 | 4.0477 |

### Framework versions

- Transformers 4.55.4

- Pytorch 2.8.0+cu126

- Datasets 4.0.0

- Tokenizers 0.21.4

|

alok0777/blockassist-bc-masked_pensive_lemur_1756416801

|

alok0777

| 2025-08-28T21:34:32Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"masked pensive lemur",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-28T21:34:10Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- masked pensive lemur

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

bah63843/blockassist-bc-plump_fast_antelope_1756416461

|

bah63843

| 2025-08-28T21:29:02Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"plump fast antelope",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-28T21:28:53Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- plump fast antelope

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

Wavescarmers/blockassist-bc-bellowing_jumping_jay_1756416451

|

Wavescarmers

| 2025-08-28T21:29:02Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"bellowing jumping jay",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-28T21:28:50Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- bellowing jumping jay

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

sampingkaca72/blockassist-bc-armored_stealthy_elephant_1756414151

|

sampingkaca72

| 2025-08-28T21:16:27Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"armored stealthy elephant",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-28T21:16:23Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- armored stealthy elephant

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

rvipitkirubbe/blockassist-bc-mottled_foraging_ape_1756414084

|

rvipitkirubbe

| 2025-08-28T21:15:56Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"mottled foraging ape",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-28T21:15:53Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- mottled foraging ape

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

eusuf01/blockassist-bc-smooth_humming_butterfly_1756415329

|

eusuf01

| 2025-08-28T21:10:06Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"smooth humming butterfly",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-28T21:09:37Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- smooth humming butterfly

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

Dejiat/blockassist-bc-savage_unseen_bobcat_1756415185

|

Dejiat

| 2025-08-28T21:06:52Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"savage unseen bobcat",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-28T21:06:48Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- savage unseen bobcat

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

GroomerG/blockassist-bc-vicious_pawing_badger_1756413510

|

GroomerG

| 2025-08-28T21:06:34Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"vicious pawing badger",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-28T21:06:30Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- vicious pawing badger

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

eusuf01/blockassist-bc-smooth_humming_butterfly_1756415127

|

eusuf01

| 2025-08-28T21:06:15Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"smooth humming butterfly",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-28T21:06:01Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- smooth humming butterfly

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

Drahca91/fabien_pic

|

Drahca91

| 2025-08-28T21:04:31Z | 0 | 0 |

diffusers

|

[

"diffusers",

"flux",

"lora",

"replicate",

"text-to-image",

"en",

"base_model:black-forest-labs/FLUX.1-dev",

"base_model:adapter:black-forest-labs/FLUX.1-dev",

"license:other",

"region:us"

] |

text-to-image

| 2025-08-28T20:46:09Z |

---

license: other

license_name: flux-1-dev-non-commercial-license

license_link: https://huggingface.co/black-forest-labs/FLUX.1-dev/blob/main/LICENSE.md

language:

- en

tags:

- flux

- diffusers

- lora

- replicate

base_model: "black-forest-labs/FLUX.1-dev"

pipeline_tag: text-to-image

# widget:

# - text: >-

# prompt

# output:

# url: https://...

instance_prompt: Fabien

---

# Fabien_Pic

<Gallery />

## About this LoRA

This is a [LoRA](https://replicate.com/docs/guides/working-with-loras) for the FLUX.1-dev text-to-image model. It can be used with diffusers or ComfyUI.

It was trained on [Replicate](https://replicate.com/) using AI toolkit: https://replicate.com/ostris/flux-dev-lora-trainer/train

## Trigger words

You should use `Fabien` to trigger the image generation.

## Run this LoRA with an API using Replicate

```py
import replicate

# Prompt containing the trigger word, plus the LoRA weights to apply.
input = {
    "prompt": "Fabien",
    "lora_weights": "https://huggingface.co/Drahca91/fabien_pic/resolve/main/lora.safetensors"
}

output = replicate.run(
    "black-forest-labs/flux-dev-lora",
    input=input
)

# Write each returned image to disk.
for index, item in enumerate(output):
    with open(f"output_{index}.webp", "wb") as file:
        file.write(item.read())
```

## Use it with the [🧨 diffusers library](https://github.com/huggingface/diffusers)

```py

from diffusers import AutoPipelineForText2Image

import torch

pipeline = AutoPipelineForText2Image.from_pretrained('black-forest-labs/FLUX.1-dev', torch_dtype=torch.float16).to('cuda')

pipeline.load_lora_weights('Drahca91/fabien_pic', weight_name='lora.safetensors')

image = pipeline('Fabien').images[0]

```

For more details, including weighting, merging and fusing LoRAs, check the [documentation on loading LoRAs in diffusers](https://huggingface.co/docs/diffusers/main/en/using-diffusers/loading_adapters)

## Training details

- Steps: 1000

- Learning rate: 0.0004

- LoRA rank: 16

## Contribute your own examples

You can use the [community tab](https://huggingface.co/Drahca91/fabien_pic/discussions) to add images that show off what you’ve made with this LoRA.

|

eusuf01/blockassist-bc-smooth_humming_butterfly_1756414899

|

eusuf01

| 2025-08-28T21:02:24Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"smooth humming butterfly",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-28T21:02:12Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- smooth humming butterfly

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

Rootu/blockassist-bc-snorting_fleecy_goose_1756414699

|

Rootu

| 2025-08-28T20:59:17Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"snorting fleecy goose",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-28T20:58:56Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- snorting fleecy goose

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

Rudra-madlads/blockassist-bc-jumping_swift_gazelle_1756414609

|

Rudra-madlads

| 2025-08-28T20:57:45Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"jumping swift gazelle",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-28T20:57:22Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- jumping swift gazelle

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

NexaAI/paddleocr-npu

|

NexaAI

| 2025-08-28T20:55:16Z | 16 | 15 | null |

[

"region:us"

] | null | 2025-08-19T23:30:58Z |

# PaddleOCR v4 (PP-OCRv4)

## Model Description

**PP-OCRv4** is the fourth-generation end-to-end optical character recognition system from the PaddlePaddle team.

It combines a lightweight **text detection → angle classification → text recognition** pipeline with improved training techniques and data augmentation, delivering higher accuracy and robustness while staying efficient for real-time use.

PP-OCRv4 supports multilingual OCR (Latin and non-Latin scripts), irregular layouts (rotated/curved text), and challenging inputs such as noisy or low-resolution images often found in mobile and document-scan scenarios.

## Features

- **End-to-end OCR**: text detection, optional angle classification, and text recognition in one pipeline.

- **Multilingual support**: pretrained models for English, Chinese, and dozens of other languages; easy finetuning for domain text.

- **Robust in real-world conditions**: handles rotation, perspective distortion, blur, low light, and complex backgrounds.

- **Lightweight & fast**: practical for both mobile apps and large-scale server deployments.

- **Flexible I/O**: works with photos, scans, screenshots, receipts, invoices, ID cards, dashboards, and UI text.

- **Extensible**: swap components (detector/recognizer), add language packs, or finetune on domain datasets.

## Use Cases

- Document digitization (invoices, receipts, forms, contracts)

- RPA and back-office automation (screen/OCR flows)

- Mobile scanning apps and camera-based translation/read-aloud

- Industrial and retail analytics (labels, price tags, shelf tags)

- Accessibility (screen-readers and read-aloud applications)

## Inputs and Outputs

**Input**: Image (photo, scan, or screenshot).

**Output**: A list of detected text regions, each with:

- bounding box (rectangular or polygonal)

- recognized text string

- optional confidence score and orientation
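The output described above can be pictured as a small record type. The sketch below is a hypothetical schema for illustration only; the class name, field names, and helper are assumptions and do not come from the Nexa SDK or PaddleOCR.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple

Point = Tuple[float, float]


@dataclass
class TextRegion:
    """One detected text region (hypothetical schema, not the SDK's actual type)."""
    box: List[Point]                    # polygon vertices; 4 points for a rectangle
    text: str                           # recognized string
    confidence: Optional[float] = None  # optional score in [0, 1]
    orientation: Optional[int] = None   # optional angle in degrees


def confident_text(regions: List[TextRegion], threshold: float = 0.5) -> List[str]:
    """Keep the text of regions whose score is missing or at least `threshold`."""
    return [r.text for r in regions
            if r.confidence is None or r.confidence >= threshold]
```

Treating the score and orientation as optional mirrors the list above, where only the bounding box and the text are always present.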

---

## How to use

> ⚠️ **Hardware requirement:** the model currently runs **only on Qualcomm NPUs** (e.g., Snapdragon-powered AIPC).

> Apple NPU support is planned next.

### 1) Install Nexa-SDK

- Download the SDK and follow the steps in the "Deploy" section of Nexa's model page: [Download Windows arm64 SDK](https://sdk.nexa.ai/model/PaddleOCR%20v4)

- (Other platforms coming soon)

### 2) Get an access token

Create a token in the Model Hub, then log in:

```bash

nexa config set license '<access_token>'

```

### 3) Run the model

Run inference with:

```bash

nexa infer NexaAI/paddleocr-npu

```

---

## License

- Licensed under [Apache-2.0](https://github.com/PaddlePaddle/PaddleOCR/blob/release/2.7/LICENSE)

## References

- GitHub repo: [https://github.com/PaddlePaddle/PaddleOCR](https://github.com/PaddlePaddle/PaddleOCR)

- Model zoo & documentation: [Models list](https://github.com/PaddlePaddle/PaddleOCR/blob/release/2.7/doc/doc_en/models_list_en.md)

|

gsjang/fa-dorna-llama3-8b-instruct-x-meta-llama-3-8b-instruct-breadcrumbs-50_50

|

gsjang

| 2025-08-28T20:53:58Z | 0 | 0 |

transformers

|

[

"transformers",

"safetensors",

"llama",

"text-generation",

"mergekit",

"merge",

"conversational",

"arxiv:2312.06795",

"base_model:PartAI/Dorna-Llama3-8B-Instruct",

"base_model:merge:PartAI/Dorna-Llama3-8B-Instruct",

"base_model:meta-llama/Meta-Llama-3-8B-Instruct",

"base_model:merge:meta-llama/Meta-Llama-3-8B-Instruct",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2025-08-28T20:51:00Z |

---

base_model:

- PartAI/Dorna-Llama3-8B-Instruct

- meta-llama/Meta-Llama-3-8B-Instruct

library_name: transformers

tags:

- mergekit

- merge

---

# fa-dorna-llama3-8b-instruct-x-meta-llama-3-8b-instruct-breadcrumbs-50_50

This is a merge of pre-trained language models created using [mergekit](https://github.com/cg123/mergekit).

## Merge Details

### Merge Method

This model was merged using the [Model Breadcrumbs](https://arxiv.org/abs/2312.06795) merge method using [meta-llama/Meta-Llama-3-8B-Instruct](https://huggingface.co/meta-llama/Meta-Llama-3-8B-Instruct) as a base.
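As a rough sketch of the idea behind Model Breadcrumbs (not mergekit's implementation), each fine-tuned model is turned into a difference from the base, the smallest and the largest differences by magnitude are masked out, and the remaining differences are added back to the base with a weight. The parameter names `beta` and `gamma` and the flat-list representation of weights are illustrative assumptions.

```python
def breadcrumbs_mask(delta, beta=0.1, gamma=0.1):
    """0/1 mask dropping the smallest `beta` and largest `gamma` fraction by magnitude."""
    n = len(delta)
    order = sorted(range(n), key=lambda i: abs(delta[i]))
    low = int(n * beta)           # ranks below this are treated as noise
    high = n - int(n * gamma)     # ranks from here up are treated as outliers
    mask = [0] * n
    for rank, idx in enumerate(order):
        if low <= rank < high:
            mask[idx] = 1
    return mask


def breadcrumbs_merge(base, finetuned_models, weights, beta=0.1, gamma=0.1):
    """Add the masked, weighted differences of each fine-tuned model to the base."""
    merged = list(base)
    for model, weight in zip(finetuned_models, weights):
        delta = [m - b for m, b in zip(model, base)]
        mask = breadcrumbs_mask(delta, beta, gamma)
        for i, (d, keep) in enumerate(zip(delta, mask)):
            merged[i] += weight * keep * d
    return merged
```

In this simplified picture, a model that is identical to the base has an all-zero difference and therefore contributes nothing, whatever its weight.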

### Models Merged

The following models were included in the merge:

* [PartAI/Dorna-Llama3-8B-Instruct](https://huggingface.co/PartAI/Dorna-Llama3-8B-Instruct)

### Configuration

The following YAML configuration was used to produce this model:

```yaml

merge_method: breadcrumbs
models:
- model: PartAI/Dorna-Llama3-8B-Instruct
  parameters:
    weight: 0.5
- model: meta-llama/Meta-Llama-3-8B-Instruct
  parameters:
    weight: 0.5
parameters: {}
dtype: bfloat16
tokenizer:
  source: union
base_model: meta-llama/Meta-Llama-3-8B-Instruct
write_readme: README.md

```

|

koloni/blockassist-bc-deadly_graceful_stingray_1756412463

|

koloni

| 2025-08-28T20:47:17Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"deadly graceful stingray",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-28T20:47:13Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- deadly graceful stingray

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

eusuf01/blockassist-bc-smooth_humming_butterfly_1756413981

|

eusuf01

| 2025-08-28T20:47:03Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"smooth humming butterfly",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-28T20:46:50Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- smooth humming butterfly

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

Rudra-madlads/blockassist-bc-jumping_swift_gazelle_1756413723

|

Rudra-madlads

| 2025-08-28T20:42:57Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"jumping swift gazelle",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-28T20:42:37Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- jumping swift gazelle

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

rvipitkirubbe/blockassist-bc-mottled_foraging_ape_1756412100

|

rvipitkirubbe

| 2025-08-28T20:41:17Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"mottled foraging ape",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-28T20:41:13Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- mottled foraging ape

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

klmdr22/blockassist-bc-wild_loud_newt_1756413229

|

klmdr22

| 2025-08-28T20:34:32Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"wild loud newt",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-28T20:34:28Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- wild loud newt

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

eusuf01/blockassist-bc-smooth_humming_butterfly_1756412529

|

eusuf01

| 2025-08-28T20:23:25Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"smooth humming butterfly",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-28T20:23:02Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- smooth humming butterfly

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

Muapi/assassin-s-creed-style-xl-f1d

|

Muapi

| 2025-08-28T20:21:11Z | 0 | 0 | null |

[

"lora",

"stable-diffusion",

"flux.1-d",

"license:openrail++",

"region:us"

] | null | 2025-08-28T20:21:01Z |

---

license: openrail++

tags:

- lora

- stable-diffusion

- flux.1-d

model_type: LoRA

---

# Assassin's Creed Style XL + F1D

**Base model**: Flux.1 D

**Trained words**: assassins creed style

## 🧠 Usage (Python)

🔑 **Get your MUAPI key** from [muapi.ai/access-keys](https://muapi.ai/access-keys)

```python

import requests, os

url = "https://api.muapi.ai/api/v1/flux_dev_lora_image"
headers = {"Content-Type": "application/json", "x-api-key": os.getenv("MUAPIAPP_API_KEY")}
payload = {
    "prompt": "masterpiece, best quality, 1girl, looking at viewer",
    "model_id": [{"model": "civitai:304745@1062091", "weight": 1.0}],
    "width": 1024,
    "height": 1024,
    "num_images": 1
}
print(requests.post(url, headers=headers, json=payload).json())

```

|

Cedric077/blockassist-bc-aquatic_deft_crane_1756410981

|

Cedric077

| 2025-08-28T20:20:14Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"aquatic deft crane",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-28T20:20:08Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- aquatic deft crane

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

Vasya777/blockassist-bc-lumbering_enormous_sloth_1756412372

|

Vasya777

| 2025-08-28T20:20:12Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"lumbering enormous sloth",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-28T20:20:05Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- lumbering enormous sloth

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

canoplos112/blockassist-bc-yapping_sleek_squirrel_1756412236

|

canoplos112

| 2025-08-28T20:20:07Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"yapping sleek squirrel",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-28T20:17:53Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- yapping sleek squirrel

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

gsjang/fa-dorna-llama3-8b-instruct-x-meta-llama-3-8b-instruct-slerp-50_50

|

gsjang

| 2025-08-28T20:18:21Z | 0 | 0 |

transformers

|

[

"transformers",

"safetensors",

"llama",

"text-generation",

"mergekit",

"merge",

"conversational",

"base_model:PartAI/Dorna-Llama3-8B-Instruct",

"base_model:merge:PartAI/Dorna-Llama3-8B-Instruct",

"base_model:meta-llama/Meta-Llama-3-8B-Instruct",

"base_model:merge:meta-llama/Meta-Llama-3-8B-Instruct",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2025-08-28T20:15:21Z |

---

base_model:

- PartAI/Dorna-Llama3-8B-Instruct

- meta-llama/Meta-Llama-3-8B-Instruct

library_name: transformers

tags:

- mergekit

- merge

---

# fa-dorna-llama3-8b-instruct-x-meta-llama-3-8b-instruct-slerp-50_50

This is a merge of pre-trained language models created using [mergekit](https://github.com/cg123/mergekit).

## Merge Details

### Merge Method

This model was merged using the [SLERP](https://en.wikipedia.org/wiki/Slerp) merge method.
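For reference, spherical linear interpolation between two vectors can be sketched as follows. This is the textbook formula applied to plain Python lists, assuming non-zero input vectors; it is not mergekit's code, and how a merge tool applies it per tensor is not shown here.

```python
import math


def slerp(t, v0, v1, eps=1e-8):
    """Spherical linear interpolation between two non-zero vectors of equal length."""
    norm0 = math.sqrt(sum(x * x for x in v0))
    norm1 = math.sqrt(sum(x * x for x in v1))
    # Cosine of the angle between the vectors, clamped for numerical safety.
    cos_omega = sum(a * b for a, b in zip(v0, v1)) / (norm0 * norm1)
    cos_omega = max(-1.0, min(1.0, cos_omega))
    omega = math.acos(cos_omega)
    sin_omega = math.sin(omega)
    if abs(sin_omega) < eps:
        # Nearly parallel vectors: fall back to ordinary linear interpolation.
        return [(1 - t) * a + t * b for a, b in zip(v0, v1)]
    w0 = math.sin((1 - t) * omega) / sin_omega
    w1 = math.sin(t * omega) / sin_omega
    return [w0 * a + w1 * b for a, b in zip(v0, v1)]
```

With `t = 0.5`, the result lies halfway along the arc between the two inputs rather than halfway along the straight line between them.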

### Models Merged

The following models were included in the merge:

* [PartAI/Dorna-Llama3-8B-Instruct](https://huggingface.co/PartAI/Dorna-Llama3-8B-Instruct)

* [meta-llama/Meta-Llama-3-8B-Instruct](https://huggingface.co/meta-llama/Meta-Llama-3-8B-Instruct)

### Configuration

The following YAML configuration was used to produce this model:

```yaml

merge_method: slerp
models:
- model: PartAI/Dorna-Llama3-8B-Instruct
  parameters:
    weight: 0.5
- model: meta-llama/Meta-Llama-3-8B-Instruct
  parameters:
    weight: 0.5
parameters:
  t: 0.5
dtype: bfloat16
tokenizer:
  source: union
base_model: meta-llama/Meta-Llama-3-8B-Instruct
write_readme: README.md

```

|

eusuf01/blockassist-bc-smooth_humming_butterfly_1756411987

|

eusuf01

| 2025-08-28T20:14:19Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"smooth humming butterfly",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-28T20:13:53Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- smooth humming butterfly

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

Vasya777/blockassist-bc-lumbering_enormous_sloth_1756412006

|

Vasya777

| 2025-08-28T20:14:08Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"lumbering enormous sloth",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-28T20:14:01Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- lumbering enormous sloth

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

Loder-S/blockassist-bc-sprightly_knobby_tiger_1756410139

|

Loder-S

| 2025-08-28T20:09:17Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"sprightly knobby tiger",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-28T20:08:37Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- sprightly knobby tiger

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

vnhioer/blockassist-bc-dense_unseen_komodo_1756411469

|

vnhioer

| 2025-08-28T20:05:20Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"dense unseen komodo",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-28T20:04:30Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- dense unseen komodo

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

eusuf01/blockassist-bc-smooth_humming_butterfly_1756411385

|

eusuf01

| 2025-08-28T20:04:22Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"smooth humming butterfly",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-28T20:03:52Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- smooth humming butterfly

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

mradermacher/Anonymizer-4B-GGUF

|

mradermacher

| 2025-08-28T20:02:18Z | 0 | 0 |

transformers

|

[

"transformers",

"gguf",

"en",

"base_model:eternisai/Anonymizer-4B",

"base_model:quantized:eternisai/Anonymizer-4B",

"license:cc-by-nc-4.0",

"endpoints_compatible",

"region:us",

"conversational"

] | null | 2025-08-28T19:03:12Z |

---

base_model: eternisai/Anonymizer-4B

language:

- en

library_name: transformers

license: cc-by-nc-4.0

mradermacher:
  readme_rev: 1

quantized_by: mradermacher

---

## About

<!-- ### quantize_version: 2 -->

<!-- ### output_tensor_quantised: 1 -->

<!-- ### convert_type: hf -->

<!-- ### vocab_type: -->

<!-- ### tags: -->

<!-- ### quants: x-f16 Q4_K_S Q2_K Q8_0 Q6_K Q3_K_M Q3_K_S Q3_K_L Q4_K_M Q5_K_S Q5_K_M IQ4_XS -->

<!-- ### quants_skip: -->

<!-- ### skip_mmproj: -->

static quants of https://huggingface.co/eternisai/Anonymizer-4B

<!-- provided-files -->

***For a convenient overview and download list, visit our [model page for this model](https://hf.tst.eu/model#Anonymizer-4B-GGUF).***

Weighted/imatrix quants do not seem to be available (from me) at this time. If they do not show up a week or so after the static ones, I have probably not planned for them. Feel free to request them by opening a Community Discussion.

## Usage

If you are unsure how to use GGUF files, refer to one of [TheBloke's READMEs](https://huggingface.co/TheBloke/KafkaLM-70B-German-V0.1-GGUF) for more details, including on how to concatenate multi-part files.
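
As a rough illustration of what concatenating multi-part files involves, the sketch below joins split files byte for byte in the order given. It is a minimal sketch and not taken from the linked READMEs: the function name `concat_parts` and the part file names in the usage note are hypothetical, and it only applies when a quant is actually distributed in several parts.

```python
import shutil
from pathlib import Path


def concat_parts(parts, output):
    """Join split files byte for byte, in the order given, into `output`."""
    output = Path(output)
    with output.open("wb") as dst:
        for part in parts:
            with Path(part).open("rb") as src:
                # Copy in chunks so a large model file is never held in memory at once.
                shutil.copyfileobj(src, dst)
    return output
```

For example, `concat_parts(["model.gguf.part1of2", "model.gguf.part2of2"], "model.gguf")` would write the two (hypothetically named) parts into a single file. The order of `parts` matters, since the parts are written exactly as listed.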

## Provided Quants

(sorted by size, not necessarily by quality. IQ-quants are often preferable to similar-sized non-IQ quants)

| Link | Type | Size/GB | Notes |
|:-----|:-----|--------:|:------|
| [GGUF](https://huggingface.co/mradermacher/Anonymizer-4B-GGUF/resolve/main/Anonymizer-4B.Q2_K.gguf) | Q2_K | 1.8 | |
| [GGUF](https://huggingface.co/mradermacher/Anonymizer-4B-GGUF/resolve/main/Anonymizer-4B.Q3_K_S.gguf) | Q3_K_S | 2.0 | |
| [GGUF](https://huggingface.co/mradermacher/Anonymizer-4B-GGUF/resolve/main/Anonymizer-4B.Q3_K_M.gguf) | Q3_K_M | 2.2 | lower quality |
| [GGUF](https://huggingface.co/mradermacher/Anonymizer-4B-GGUF/resolve/main/Anonymizer-4B.Q3_K_L.gguf) | Q3_K_L | 2.3 | |
| [GGUF](https://huggingface.co/mradermacher/Anonymizer-4B-GGUF/resolve/main/Anonymizer-4B.IQ4_XS.gguf) | IQ4_XS | 2.4 | |
| [GGUF](https://huggingface.co/mradermacher/Anonymizer-4B-GGUF/resolve/main/Anonymizer-4B.Q4_K_S.gguf) | Q4_K_S | 2.5 | fast, recommended |
| [GGUF](https://huggingface.co/mradermacher/Anonymizer-4B-GGUF/resolve/main/Anonymizer-4B.Q4_K_M.gguf) | Q4_K_M | 2.6 | fast, recommended |
| [GGUF](https://huggingface.co/mradermacher/Anonymizer-4B-GGUF/resolve/main/Anonymizer-4B.Q5_K_S.gguf) | Q5_K_S | 2.9 | |
| [GGUF](https://huggingface.co/mradermacher/Anonymizer-4B-GGUF/resolve/main/Anonymizer-4B.Q5_K_M.gguf) | Q5_K_M | 3.0 | |
| [GGUF](https://huggingface.co/mradermacher/Anonymizer-4B-GGUF/resolve/main/Anonymizer-4B.Q6_K.gguf) | Q6_K | 3.4 | very good quality |
| [GGUF](https://huggingface.co/mradermacher/Anonymizer-4B-GGUF/resolve/main/Anonymizer-4B.Q8_0.gguf) | Q8_0 | 4.4 | fast, best quality |
| [GGUF](https://huggingface.co/mradermacher/Anonymizer-4B-GGUF/resolve/main/Anonymizer-4B.f16.gguf) | f16 | 8.2 | 16 bpw, overkill |
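
One way to read the table above is to pick the largest file that fits a given size budget and build its download link from the URL pattern the rows share. The following is a minimal sketch under that assumption: the helper names `quant_url` and `largest_quant_within` are hypothetical, the sizes are copied from the table, and file size is only a rough proxy for quality (the list is sorted by size, not necessarily by quality).

```python
# Sizes in GB, copied from the table above.
QUANT_SIZES_GB = {
    "Q2_K": 1.8, "Q3_K_S": 2.0, "Q3_K_M": 2.2, "Q3_K_L": 2.3,
    "IQ4_XS": 2.4, "Q4_K_S": 2.5, "Q4_K_M": 2.6, "Q5_K_S": 2.9,
    "Q5_K_M": 3.0, "Q6_K": 3.4, "Q8_0": 4.4, "f16": 8.2,
}

REPO = "mradermacher/Anonymizer-4B-GGUF"
MODEL = "Anonymizer-4B"


def quant_url(quant):
    """Build the download link for a quant type, following the table's URL pattern."""
    return f"https://huggingface.co/{REPO}/resolve/main/{MODEL}.{quant}.gguf"


def largest_quant_within(budget_gb):
    """Return the largest listed quant whose size fits the budget, or None if none fits."""
    fitting = {q: size for q, size in QUANT_SIZES_GB.items() if size <= budget_gb}
    if not fitting:
        return None
    return max(fitting, key=fitting.get)
```

For instance, `quant_url(largest_quant_within(2.6))` yields the link for the Q4_K_M file. A size budget is a deliberately crude selection rule; the notes column (for example "fast, recommended") may be a better guide in practice.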

Here is a handy graph by ikawrakow comparing some lower-quality quant types (lower is better):

And here are Artefact2's thoughts on the matter:

https://gist.github.com/Artefact2/b5f810600771265fc1e39442288e8ec9

## FAQ / Model Request

See https://huggingface.co/mradermacher/model_requests for some answers to questions you might have and/or if you want some other model quantized.

## Thanks

I thank my company, [nethype GmbH](https://www.nethype.de/), for letting me use its servers and providing upgrades to my workstation to enable this work in my free time.

<!-- end -->

|

mradermacher/EviOmni-nq_train-1.5B-GGUF

|

mradermacher

| 2025-08-28T19:59:03Z | 0 | 0 |

transformers

|

[