LuxDiT

This directory contains released model weights for LuxDiT: Lighting Estimation with Video Diffusion Transformer. It is finetuned on image data and includes a LoRA adapter for real scenes.

| Model checkpoints | Description |

|---|---|

luxdit_image |

Image-finetuned checkpoint with LoRA adapter for real scenes |

luxdit_video |

Video-finetuned checkpoint with LoRA adapter for real scenes |

hdr_merge_mlp |

HDR merger used to produce .exr outputs from dual tonemapped predictions |

Model description

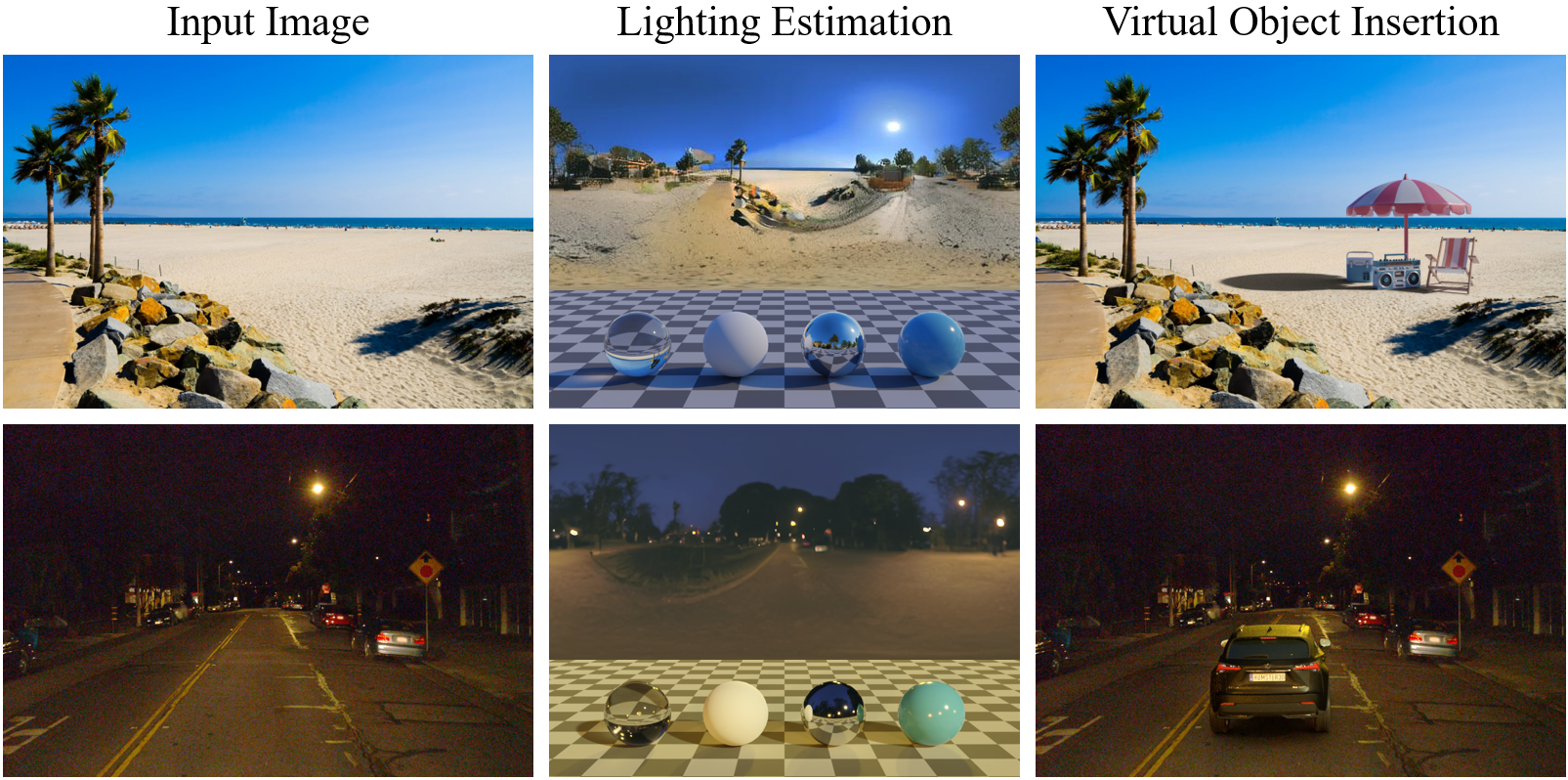

LuxDiT is a generative lighting estimation model that predicts high-quality HDR environment maps from visual input. It preserves scene semantics while estimating realistic illumination for virtual object insertion.

- Architecture: Transformer (based on CogVideoX-5B)

- Parameters: 5B

- Runtime: Python + PyTorch

- Paper: LuxDiT: Lighting Estimation with Video Diffusion Transformer

- Project page: https://research.nvidia.com/labs/toronto-ai/LuxDiT/

- Github repository: https://github.com/nv-tlabs/LuxDiT

Detailed model card is available at model_card.md.

How to use

Please refer to our Github repository (https://github.com/nv-tlabs/LuxDiT) for usage instructions.

Ethical considerations

NVIDIA believes Trustworthy AI is a shared responsibility. Make sure your use case has proper rights and permissions for all input data, and implement appropriate safety and misuse mitigation before deployment. For concerns, see NVIDIA AI Concerns.

License

NVIDIA OneWay Noncommercial License. See LICENSE.md in the repository root.

Citation

@article{liang2025luxdit,

title={Luxdit: Lighting estimation with video diffusion transformer},

author={Liang, Ruofan and He, Kai and Gojcic, Zan and Gilitschenski, Igor and Fidler, Sanja and Vijaykumar, Nandita and Wang, Zian},

journal={arXiv preprint arXiv:2509.03680},

year={2025}

}

- Downloads last month

- -