| modelId | author | last_modified | downloads | likes | library_name | tags | pipeline_tag | createdAt | card |

|---|---|---|---|---|---|---|---|---|---|

plasmo/voxel-ish

|

plasmo

| 2023-05-05T11:27:02Z | 67 | 34 |

diffusers

|

[

"diffusers",

"text-to-image",

"stable-diffusion",

"license:creativeml-openrail-m",

"autotrain_compatible",

"endpoints_compatible",

"diffusers:StableDiffusionPipeline",

"region:us"

] |

text-to-image

| 2022-11-24T14:01:22Z |

---

license: creativeml-openrail-m

tags:

- text-to-image

- stable-diffusion

---

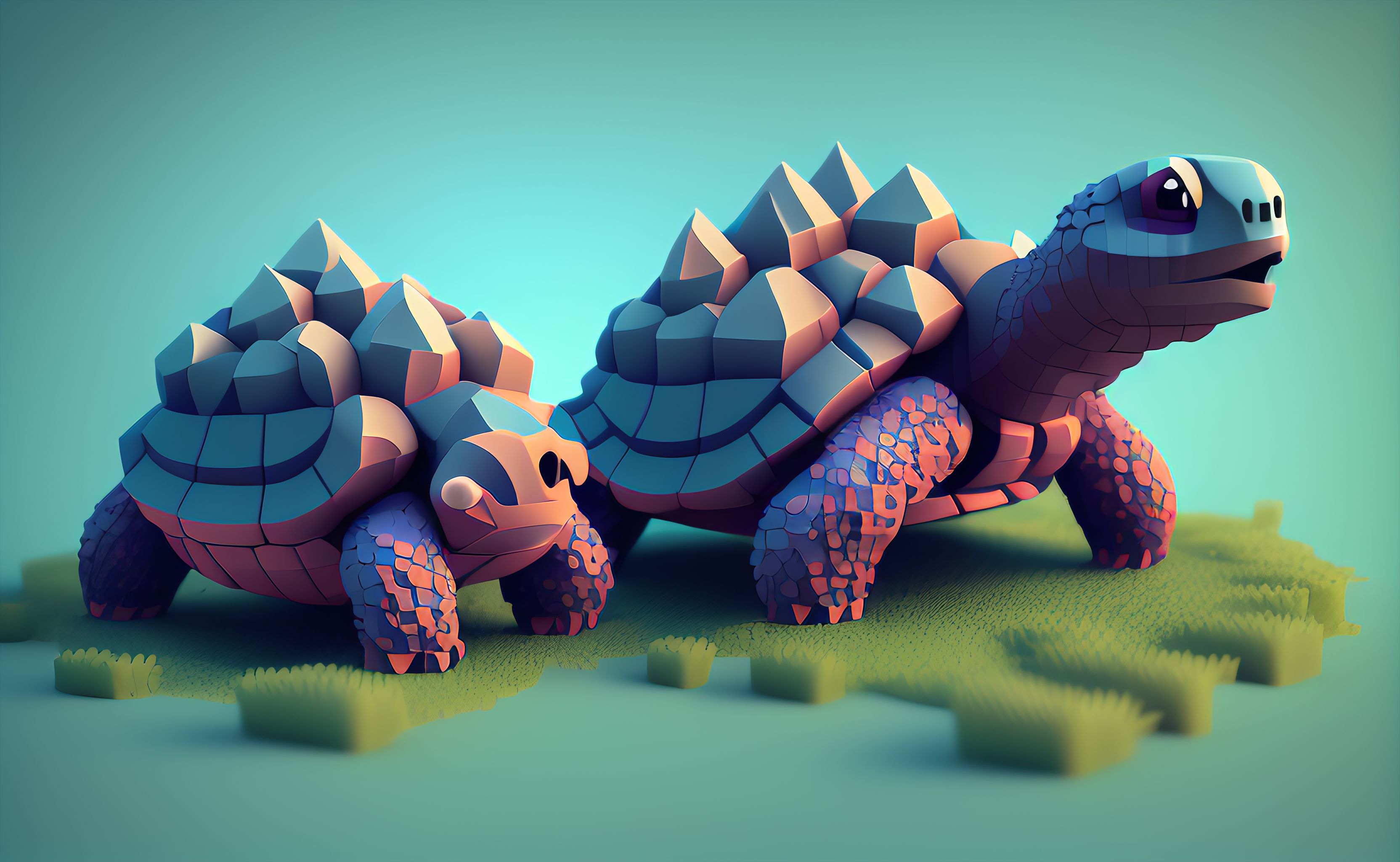

### Jak's Voxel-ish Image Pack for Stable Diffusion

Another fantastic image pack, built from 143 training images over 8000 training steps with 20% training text. Crafted by Jak_TheAI_Artist.

Include the prompt trigger "voxel-ish" to activate it.

Tip: add "intricate detail" to the prompt to make a semi-realistic image.
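A minimal sketch of how the trigger word might be used with the `diffusers` library, assuming the repository loads as a standard `StableDiffusionPipeline` (as its tags suggest) and that a CUDA GPU is available. The helper names `build_prompt` and `generate`, the example subject, and the output path are hypothetical and only illustrate the idea.

```python
def build_prompt(subject: str, trigger: str = "voxel-ish", detailed: bool = False) -> str:
    """Compose a prompt that contains the trigger word from this card."""
    parts = [subject.strip(), trigger]
    if detailed:
        # Tip from the card: "intricate detail" pushes results toward semi-realism.
        parts.append("intricate detail")
    return ", ".join(parts)


def generate(subject: str, out_path: str = "voxel_example.png", detailed: bool = False) -> None:
    """Generate one image. Requires diffusers, torch, and a CUDA GPU (assumed)."""
    # Imported lazily so build_prompt works without the heavy dependencies.
    import torch
    from diffusers import StableDiffusionPipeline

    pipe = StableDiffusionPipeline.from_pretrained("plasmo/voxel-ish", torch_dtype=torch.float16)
    pipe = pipe.to("cuda")
    image = pipe(build_prompt(subject, detailed=detailed)).images[0]
    image.save(out_path)


print(build_prompt("a castle on a hill", detailed=True))
```

Calling `generate("a castle on a hill")` would download the weights and write one image. It is not run here because it needs a GPU.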

### UPDATE: Version 1.2 available [here](https://huggingface.co/plasmo/vox2)

Sample pictures of this concept:

voxel-ish

|

cansurav/bert-base-uncased-finetuned-cola-dropout-0.3

|

cansurav

| 2023-05-05T11:25:39Z | 106 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"bert",

"text-classification",

"generated_from_trainer",

"dataset:glue",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2023-05-05T11:11:13Z |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- glue

metrics:

- matthews_correlation

model-index:

- name: bert-base-uncased-finetuned-cola-dropout-0.3

results:

- task:

name: Text Classification

type: text-classification

dataset:

name: glue

type: glue

config: cola

split: validation

args: cola

metrics:

- name: Matthews Correlation

type: matthews_correlation

value: 0.6036344190543846

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# bert-base-uncased-finetuned-cola-dropout-0.3

This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on the CoLA configuration of the GLUE dataset.

It achieves the following results on the evaluation set:

- Loss: 1.2847

- Matthews Correlation: 0.6036
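For readers unfamiliar with the metric, the Matthews correlation coefficient for a binary task can be computed from the four confusion-matrix counts. The sketch below is a plain-Python illustration. The counts are hypothetical and are not taken from this model's evaluation.

```python
import math


def matthews_corrcoef(tp: int, tn: int, fp: int, fn: int) -> float:
    """Matthews correlation coefficient for binary classification."""
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    if denom == 0:
        # Common convention: return 0 when a row or column of the
        # confusion matrix is empty (e.g. only one class is ever predicted).
        return 0.0
    return (tp * tn - fp * fn) / denom


# Hypothetical confusion counts, for illustration only.
print(round(matthews_corrcoef(tp=90, tn=70, fp=30, fn=10), 4))

# A model that always predicts the positive class hits the zero-denominator case.
print(matthews_corrcoef(tp=100, tn=0, fp=100, fn=0))
```

The score ranges from -1 to 1, and a value of 0 means the predictions are no better than chance with respect to this measure.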

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 10
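The Step column of the training results in this card (535 after epoch 1, 5350 after epoch 10) is consistent with these settings. The sketch below reproduces the arithmetic, assuming a single device, no gradient accumulation, and a CoLA training split of 8,551 examples. That split size is an assumption and is not stated on the card.

```python
import math

# Assumption: size of the GLUE CoLA training split (not stated on this card).
COLA_TRAIN_EXAMPLES = 8551


def steps_per_epoch(num_examples: int, batch_size: int) -> int:
    """Optimizer steps per epoch when the last partial batch is kept."""
    return math.ceil(num_examples / batch_size)


per_epoch = steps_per_epoch(COLA_TRAIN_EXAMPLES, batch_size=16)  # train_batch_size: 16
total_steps = per_epoch * 10                                     # num_epochs: 10
print(per_epoch, total_steps)
```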

### Training results

| Training Loss | Epoch | Step | Validation Loss | Matthews Correlation |

|:-------------:|:-----:|:----:|:---------------:|:--------------------:|

| 0.4995 | 1.0 | 535 | 0.5102 | 0.4897 |

| 0.3023 | 2.0 | 1070 | 0.4585 | 0.5848 |

| 0.1951 | 3.0 | 1605 | 0.6793 | 0.5496 |

| 0.145 | 4.0 | 2140 | 0.7694 | 0.5925 |

| 0.1024 | 5.0 | 2675 | 1.0057 | 0.5730 |

| 0.0691 | 6.0 | 3210 | 1.0275 | 0.5892 |

| 0.0483 | 7.0 | 3745 | 1.0272 | 0.5788 |

| 0.0404 | 8.0 | 4280 | 1.2537 | 0.5810 |

| 0.0219 | 9.0 | 4815 | 1.3020 | 0.5780 |

| 0.0224 | 10.0 | 5350 | 1.2847 | 0.6036 |

### Framework versions

- Transformers 4.28.1

- Pytorch 2.0.0+cu118

- Datasets 2.12.0

- Tokenizers 0.13.3

|

chribeiro/ppo-SnowballTarget

|

chribeiro

| 2023-05-05T11:23:09Z | 6 | 0 |

ml-agents

|

[

"ml-agents",

"tensorboard",

"onnx",

"SnowballTarget",

"deep-reinforcement-learning",

"reinforcement-learning",

"ML-Agents-SnowballTarget",

"region:us"

] |

reinforcement-learning

| 2023-05-05T11:23:04Z |

---

library_name: ml-agents

tags:

- SnowballTarget

- deep-reinforcement-learning

- reinforcement-learning

- ML-Agents-SnowballTarget

---

# **ppo** Agent playing **SnowballTarget**

This is a trained model of a **ppo** agent playing **SnowballTarget** using the [Unity ML-Agents Library](https://github.com/Unity-Technologies/ml-agents).

## Usage (with ML-Agents)

The Documentation: https://github.com/huggingface/ml-agents#get-started

We wrote a complete tutorial to learn to train your first agent using ML-Agents and publish it to the Hub:

### Resume the training

```

mlagents-learn <your_configuration_file_path.yaml> --run-id=<run_id> --resume

```

### Watch your Agent play

You can watch your agent **playing directly in your browser**:

1. Go to https://huggingface.co/spaces/unity/ML-Agents-SnowballTarget

2. Step 1: Find your model_id: chribeiro/ppo-SnowballTarget

3. Step 2: Select your *.nn /*.onnx file

4. Click on Watch the agent play 👀

|

s3nh/zelda-botw-stable-diffusion

|

s3nh

| 2023-05-05T11:22:27Z | 37 | 17 |

diffusers

|

[

"diffusers",

"stable-diffusion",

"text-to-image",

"license:creativeml-openrail-m",

"autotrain_compatible",

"endpoints_compatible",

"diffusers:StableDiffusionPipeline",

"region:us"

] |

text-to-image

| 2022-11-09T11:05:43Z |

---

license: creativeml-openrail-m

tags:

- stable-diffusion

- text-to-image

---

Buy me a coffee if you like this project ;)

<a href="https://www.buymeacoffee.com/s3nh"><img src="https://www.buymeacoffee.com/assets/img/guidelines/download-assets-sm-1.svg" alt=""></a>

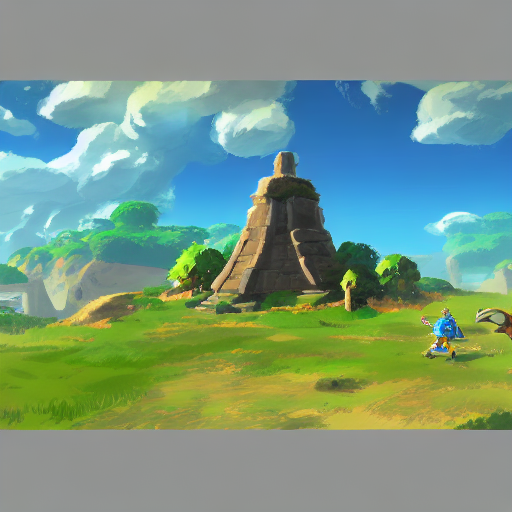

### Zelda: Breath of the Wild Artwork Diffusion Model

I present a fine-tuned version of stable-diffusion-v1-5, heavily based on

artwork from The Legend of Zelda: Breath of the Wild.

Use the tokens **_botw style_** in your prompts for the effect.

The model was trained with the diffusers library, using its Dreambooth implementation.

Training steps included:

- prior preservation loss

- train-text-encoder fine tuning

### 🧨 Diffusers

This model can be used just like any other Stable Diffusion model. For more information,

please have a look at the [Stable Diffusion](https://huggingface.co/docs/diffusers/api/pipelines/stable_diffusion).

You can also export the model to [ONNX](https://huggingface.co/docs/diffusers/optimization/onnx), [MPS](https://huggingface.co/docs/diffusers/optimization/mps) and/or FLAX/JAX.

```python

#!pip install diffusers transformers scipy torch

from diffusers import StableDiffusionPipeline

import torch

model_id = "s3nh/zelda-botw-stable-diffusion"

pipe = StableDiffusionPipeline.from_pretrained(model_id, torch_dtype=torch.float16)

pipe = pipe.to("cuda")

prompt = "Rain forest, botw style"

image = pipe(prompt).images[0]

image.save("./example_output.png")

```

# Gallery

## Grumpy cat, botw style

<img src = "https://huggingface.co/s3nh/zelda-botw-stable-diffusion/resolve/main/grumpy cat0.png">

<img src = "https://huggingface.co/s3nh/zelda-botw-stable-diffusion/resolve/main/grumpy cat1.png">

<img src = "https://huggingface.co/s3nh/zelda-botw-stable-diffusion/resolve/main/grumpy cat2.png">

<img src = "https://huggingface.co/s3nh/zelda-botw-stable-diffusion/resolve/main/grumpy cat3.png">

## Landscape, botw style

## License

This model is open access and available to all, with a CreativeML OpenRAIL-M license further specifying rights and usage.

The CreativeML OpenRAIL License specifies:

1. You can't use the model to deliberately produce nor share illegal or harmful outputs or content

2. The authors claim no rights on the outputs you generate; you are free to use them and are accountable for their use, which must not go against the provisions set in the license

3. You may re-distribute the weights and use the model commercially and/or as a service. If you do, please be aware you have to include the same use restrictions as the ones in the license and share a copy of the CreativeML OpenRAIL-M to all your users (please read the license entirely and carefully)

[Please read the full license here](https://huggingface.co/spaces/CompVis/stable-diffusion-license)

|

s3nh/beksinski-style-stable-diffusion

|

s3nh

| 2023-05-05T11:22:06Z | 39 | 26 |

diffusers

|

[

"diffusers",

"stable-diffusion",

"text-to-image",

"license:creativeml-openrail-m",

"autotrain_compatible",

"endpoints_compatible",

"diffusers:StableDiffusionPipeline",

"region:us"

] |

text-to-image

| 2022-11-05T13:54:26Z |

---

license: creativeml-openrail-m

tags:

- stable-diffusion

- text-to-image

---

Buy me a coffee if you like this project ;)

<a href="https://www.buymeacoffee.com/s3nh"><img src="https://www.buymeacoffee.com/assets/img/guidelines/download-assets-sm-1.svg" alt=""></a>

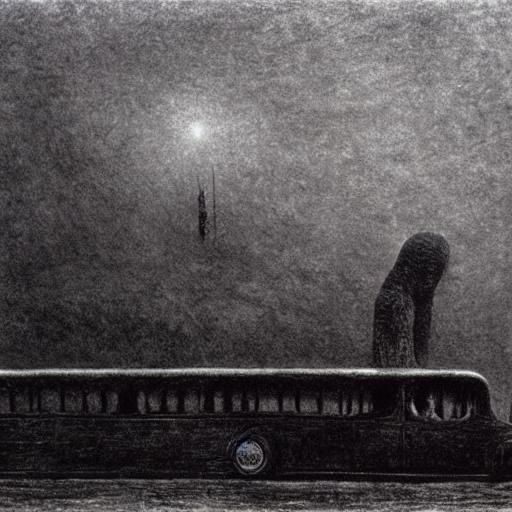

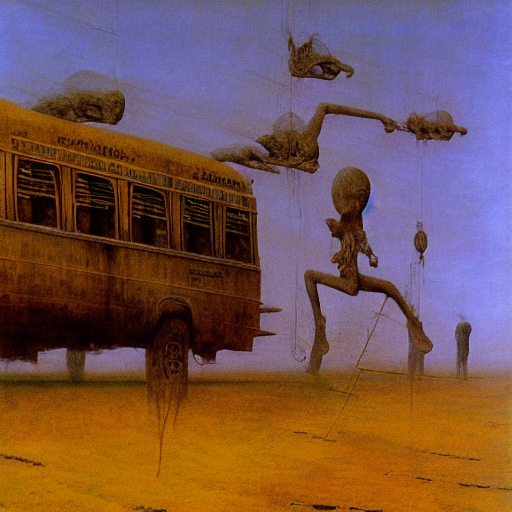

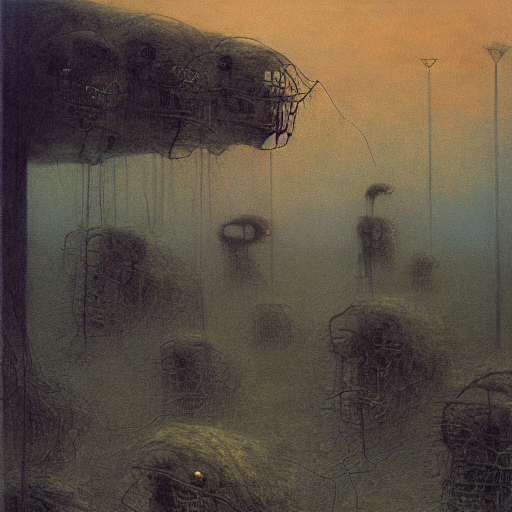

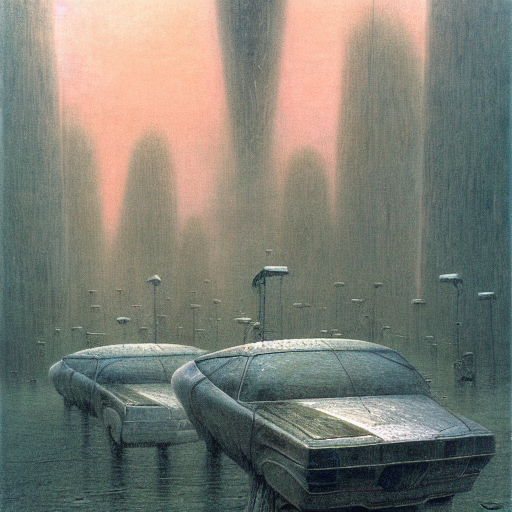

### Zdzislaw Beksinski Art Diffusion Model

I present a fine-tuned version of stable-diffusion-v1-5, heavily based on

the work of the great artist Zdzislaw Beksinski.

Use the tokens **_beksinski style_** in your prompts for the effect.

The model was trained with the diffusers library, using its Dreambooth implementation.

Training steps included:

- prior preservation loss

- train-text-encoder fine tuning

### 🧨 Diffusers

This model can be used just like any other Stable Diffusion model. For more information,

please have a look at the [Stable Diffusion](https://huggingface.co/docs/diffusers/api/pipelines/stable_diffusion).

You can also export the model to [ONNX](https://huggingface.co/docs/diffusers/optimization/onnx), [MPS](https://huggingface.co/docs/diffusers/optimization/mps) and/or FLAX/JAX.

```python

#!pip install diffusers transformers scipy torch

from diffusers import StableDiffusionPipeline

import torch

model_id = "s3nh/beksinski-style-stable-diffusion"

pipe = StableDiffusionPipeline.from_pretrained(model_id, torch_dtype=torch.float16)

pipe = pipe.to("cuda")

prompt = "Bus riding to school, beksinski style"

image = pipe(prompt).images[0]

image.save("./example_output.png")

```

# Gallery

## Bus riding to school, beksinski style.

## Car traffic, beksinski style

## Eating breakfast on sunny day, beksinski style

## Dog drinking coffee, beksinski style

## License

This model is open access and available to all, with a CreativeML OpenRAIL-M license further specifying rights and usage.

The CreativeML OpenRAIL License specifies:

1. You can't use the model to deliberately produce nor share illegal or harmful outputs or content

2. The authors claim no rights on the outputs you generate; you are free to use them and are accountable for their use, which must not go against the provisions set in the license

3. You may re-distribute the weights and use the model commercially and/or as a service. If you do, please be aware you have to include the same use restrictions as the ones in the license and share a copy of the CreativeML OpenRAIL-M to all your users (please read the license entirely and carefully)

[Please read the full license here](https://huggingface.co/spaces/CompVis/stable-diffusion-license)

|

plasmo/zombie-vector

|

plasmo

| 2023-05-05T11:20:13Z | 47 | 20 |

diffusers

|

[

"diffusers",

"text-to-image",

"stable-diffusion",

"license:creativeml-openrail-m",

"autotrain_compatible",

"endpoints_compatible",

"diffusers:StableDiffusionPipeline",

"region:us"

] |

text-to-image

| 2022-11-23T02:04:01Z |

---

license: creativeml-openrail-m

tags:

- text-to-image

- stable-diffusion

widget:

- text: "zombie_vector "

---

### Jak's Zombie Vector Pack for Stable Diffusion

Another fantastic image pack, built from 124 training images over 5000 training steps with 20% training text. Crafted by Jak_TheAI_Artist.

Include the prompt trigger "zombie_vector" to activate it.

Perfect for designing T-shirts and zombie vector art.

Sample pictures of this concept:

|

Bainbridge/gpt2-ear_01-hs_cn

|

Bainbridge

| 2023-05-05T11:18:38Z | 8 | 0 |

transformers

|

[

"transformers",

"pytorch",

"gpt2",

"text-generation",

"generated_from_trainer",

"license:mit",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2023-05-03T14:39:06Z |

---

license: mit

tags:

- generated_from_trainer

model-index:

- name: gpt2-ear_01-hs_cn

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# gpt2-ear_01-hs_cn

This model is a fine-tuned version of [gpt2-medium](https://huggingface.co/gpt2-medium) on an unknown dataset.

It achieves the following results on the evaluation set:

- Loss: 0.5615

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 8

- eval_batch_size: 4

- seed: 21

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_ratio: 0.1

- num_epochs: 3.0
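To make the scheduler settings concrete, here is a small sketch of a linear schedule with warmup, written in plain Python rather than with any library call. It assumes 500 optimizer steps per epoch (the training log in this card reaches step 250 at epoch 0.5), so a full 3-epoch run would have 1500 steps with 150 warmup steps. These totals are inferred, not stated on the card.

```python
import math


def linear_warmup_decay(step: int, total_steps: int, warmup_steps: int, base_lr: float) -> float:
    """Learning rate that rises linearly during warmup, then decays linearly to 0."""
    if step < warmup_steps:
        return base_lr * step / max(1, warmup_steps)
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


BASE_LR = 5e-05                                    # learning_rate from the card
TOTAL_STEPS = 500 * 3                              # assumed 500 steps/epoch, num_epochs: 3.0
WARMUP_STEPS = math.ceil(TOTAL_STEPS * 0.1)        # lr_scheduler_warmup_ratio: 0.1

for step in (0, 75, 150, 825, 1500):
    print(step, linear_warmup_decay(step, TOTAL_STEPS, WARMUP_STEPS, BASE_LR))
```

Under these assumptions the learning rate peaks at step 150 and falls back to zero at step 1500.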

### Training results

| Training Loss | Epoch | Step | Validation Loss |

|:-------------:|:-----:|:----:|:---------------:|

| 73.2086 | 0.02 | 10 | 69.5757 |

| 45.7678 | 0.04 | 20 | 32.9873 |

| 13.2515 | 0.06 | 30 | 10.6430 |

| 6.5161 | 0.08 | 40 | 4.2683 |

| 2.5505 | 0.1 | 50 | 2.0421 |

| 1.1408 | 0.12 | 60 | 1.0782 |

| 0.7897 | 0.14 | 70 | 0.9155 |

| 0.7106 | 0.16 | 80 | 0.7515 |

| 0.4254 | 0.18 | 90 | 0.6416 |

| 0.398 | 0.2 | 100 | 0.6129 |

| 0.3089 | 0.22 | 110 | 0.6074 |

| 0.3197 | 0.24 | 120 | 0.5942 |

| 0.3142 | 0.26 | 130 | 0.6017 |

| 0.307 | 0.28 | 140 | 0.5854 |

| 0.2895 | 0.3 | 150 | 0.5731 |

| 0.276 | 0.32 | 160 | 0.5735 |

| 0.2107 | 0.34 | 170 | 0.5753 |

| 0.3173 | 0.36 | 180 | 0.5642 |

| 0.3139 | 0.38 | 190 | 0.5654 |

| 0.2725 | 0.4 | 200 | 0.5622 |

| 0.368 | 0.42 | 210 | 0.5616 |

| 0.3203 | 0.44 | 220 | 0.5600 |

| 0.2286 | 0.46 | 230 | 0.5616 |

| 0.2365 | 0.48 | 240 | 0.5612 |

| 0.248 | 0.5 | 250 | 0.5615 |

### Framework versions

- Transformers 4.29.0.dev0

- Pytorch 1.12.0a0+bd13bc6

- Datasets 2.12.0

- Tokenizers 0.13.3

|

BakkerHenk/glitch

|

BakkerHenk

| 2023-05-05T11:15:45Z | 33 | 1 |

diffusers

|

[

"diffusers",

"license:mit",

"autotrain_compatible",

"endpoints_compatible",

"diffusers:StableDiffusionPipeline",

"region:us"

] |

text-to-image

| 2022-11-09T19:35:28Z |

---

license: mit

---

### Glitch on Stable Diffusion via Dreambooth

#### model by BakkerHenk

This is the Stable Diffusion model fine-tuned on the Glitch concept, taught to Stable Diffusion with Dreambooth.

It can be used by modifying the `instance_prompt`: **a photo in sks glitched style**

You can also train your own concepts and upload them to the library by using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_training.ipynb).

And you can run your new concept via `diffusers`: [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb), [Spaces with the Public Concepts loaded](https://huggingface.co/spaces/sd-dreambooth-library/stable-diffusion-dreambooth-concepts)

Here are the images used for training this concept:

|

jordiclive/alpaca_gpt4-dolly_15k-vicuna-lora-7b

|

jordiclive

| 2023-05-05T11:14:08Z | 0 | 2 | null |

[

"sft",

"text-generation",

"en",

"dataset:sahil2801/CodeAlpaca-20k",

"dataset:yahma/alpaca-cleaned",

"dataset:databricks/databricks-dolly-15k",

"dataset:OpenAssistant/oasst1",

"dataset:jeffwan/sharegpt_vicuna",

"dataset:qwedsacf/grade-school-math-instructions",

"dataset:vicgalle/alpaca-gpt4",

"license:mit",

"region:us"

] |

text-generation

| 2023-04-29T09:12:37Z |

---

license: mit

datasets:

- sahil2801/CodeAlpaca-20k

- yahma/alpaca-cleaned

- databricks/databricks-dolly-15k

- OpenAssistant/oasst1

- jeffwan/sharegpt_vicuna

- qwedsacf/grade-school-math-instructions

- vicgalle/alpaca-gpt4

language:

- en

tags:

- sft

pipeline_tag: text-generation

widget:

- text: >-

<|prompter|>What is a meme, and what's the history behind this

word?</s><|assistant|>

- text: <|prompter|>What's the Earth total population</s><|assistant|>

- text: <|prompter|>Write a story about future of AI development</s><|assistant|>

---

# LoRA Adapter for LLaMA 7B trained on more datasets than tloen/alpaca-lora-7b

This repo contains a low-rank adapter for **LLaMA-7b**, fitted on datasets that are part of the OpenAssistant project.

You can see sampling results [here](https://open-assistant.github.io/oasst-model-eval/?f=https%3A%2F%2Fraw.githubusercontent.com%2FOpen-Assistant%2Foasst-model-eval%2Fmain%2Fsampling_reports%2Foasst-sft%2F2023-03-18_llama_30b_oasst_latcyr_400_sampling_noprefix_lottery.json%0Ahttps%3A%2F%2Fraw.githubusercontent.com%2FOpen-Assistant%2Foasst-model-eval%2F8e90ce6504c159d4046991bf37757c108aed913f%2Fsampling_reports%2Foasst-sft%2Freport_file_jordiclive_alpaca_gpt4-dolly_15k-vicuna-lora-7b_full_lottery_no_prefix.json). Note the sampling params are not necessarily the optimum—they are OpenAssistant defaults for comparing models.

This version of the weights was trained with the following hyperparameters:

- Epochs: 8

- Batch size: 128

- Max Length: 2048

- Learning rate: 8e-6

- Lora _r_: 16

- Lora Alpha: 32

- Lora target modules: q_proj, k_proj, v_proj, o_proj
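As a rough illustration of what these LoRA settings imply, the sketch below counts the adapter's trainable parameters. It assumes the usual LLaMA-7B shape (hidden size 4096, 32 decoder layers, square attention projections), which is not stated on this card, and it ignores the extra special-token embeddings that the repo also ships.

```python
def lora_params(d_in: int, d_out: int, r: int) -> int:
    """Parameters in one LoRA pair: A is (r x d_in), B is (d_out x r)."""
    return r * (d_in + d_out)


# Assumed LLaMA-7B dimensions (not stated on this card).
HIDDEN_SIZE = 4096
NUM_LAYERS = 32

# Values from the hyperparameter list above.
LORA_R = 16
LORA_ALPHA = 32
TARGET_MODULES = ["q_proj", "k_proj", "v_proj", "o_proj"]

per_module = lora_params(HIDDEN_SIZE, HIDDEN_SIZE, LORA_R)
total = per_module * len(TARGET_MODULES) * NUM_LAYERS
scaling = LORA_ALPHA / LORA_R  # factor applied to the low-rank update

print(per_module, total, scaling)
```

Under these assumptions the adapter adds roughly 16.8 million trainable parameters, a small fraction of the frozen base model.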

The model was trained with flash attention and gradient checkpointing.

## Dataset Details

- dolly15k:

val_split: 0.05

max_val_set: 300

- oasst_export:

lang: "bg,ca,cs,da,de,en,es,fr,hr,hu,it,nl,pl,pt,ro,ru,sl,sr,sv,uk"

input_file_path: 2023-04-12_oasst_release_ready_synth.jsonl.gz

val_split: 0.05

- vicuna:

val_split: 0.05

max_val_set: 800

fraction: 0.8

- dolly15k:

val_split: 0.05

max_val_set: 300

- grade_school_math_instructions:

val_split: 0.05

- code_alpaca:

val_split: 0.05

max_val_set: 250

- alpaca_gpt4:

val_split: 0.02

max_val_set: 250

## Model Details

- **Developed** as part of the OpenAssistant Project

- **Model type:** PEFT Adapter for frozen LLaMA

- **Language:** English

## Prompting

Two special tokens are used to mark the beginning of user and assistant turns:

`<|prompter|>` and `<|assistant|>`. Each turn ends with an end-of-sequence token, which is `</s>` in the examples on this card.

Input prompt example:

```

<|prompter|>What is a meme, and what's the history behind this word?</s><|assistant|>

```

The input ends with the `<|assistant|>` token to signal that the model should

start generating the assistant reply.
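The single-turn format above can be extended to a conversation. The sketch below is one plausible way to do so, assuming earlier turns are simply concatenated in the same pattern. The card only shows the single-turn case, so the multi-turn layout and the helper name `build_prompt` are assumptions.

```python
from typing import List, Tuple

PROMPTER = "<|prompter|>"
ASSISTANT = "<|assistant|>"
EOS = "</s>"  # end-of-sequence token used in this card's prompt example


def build_prompt(history: List[Tuple[str, str]], user_message: str) -> str:
    """Format past (user, assistant) turns plus a new user message."""
    parts = []
    for user, assistant in history:
        parts.append(f"{PROMPTER}{user}{EOS}{ASSISTANT}{assistant}{EOS}")
    # The prompt ends with the assistant token so the model writes the reply.
    parts.append(f"{PROMPTER}{user_message}{EOS}{ASSISTANT}")
    return "".join(parts)


print(build_prompt([], "What is a meme, and what's the history behind this word?"))
```

With an empty history this reproduces the input prompt example shown above.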

# Example Inference Code (Note several embeddings need to be loaded along with the LoRA weights), assumes on GPU and torch.float16:

```python
from typing import List, NamedTuple

import torch
import transformers
from huggingface_hub import hf_hub_download
from peft import PeftModel
from transformers import GenerationConfig

device = "cuda" if torch.cuda.is_available() else "cpu"

tokenizer = transformers.AutoTokenizer.from_pretrained("jordiclive/alpaca_gpt4-dolly_15k-vicuna-lora-7b")

model = transformers.AutoModelForCausalLM.from_pretrained(
    "decapoda-research/llama-7b-hf", torch_dtype=torch.float16
)  # Load Base Model

model.resize_token_embeddings(
    len(tokenizer)
)  # This model repo also contains several embeddings for special tokens that need to be loaded.

model.config.eos_token_id = tokenizer.eos_token_id
model.config.bos_token_id = tokenizer.bos_token_id
model.config.pad_token_id = tokenizer.pad_token_id

lora_weights = "jordiclive/alpaca_gpt4-dolly_15k-vicuna-lora-7b"
model = PeftModel.from_pretrained(
    model,
    lora_weights,
    torch_dtype=torch.float16,
)  # Load Lora model

model.eos_token_id = tokenizer.eos_token_id

filename = hf_hub_download("jordiclive/alpaca_gpt4-dolly_15k-vicuna-lora-7b", "extra_embeddings.pt")
embed_weights = torch.load(
    filename, map_location=torch.device("cuda" if torch.cuda.is_available() else "cpu")
)  # Load embeddings for special tokens

model.base_model.model.model.embed_tokens.weight[32000:, :] = embed_weights.to(
    model.base_model.model.model.embed_tokens.weight.dtype
).to(
    device
)  # Add special token embeddings

model = model.half().to(device)

generation_config = GenerationConfig(
    temperature=0.1,
    top_p=0.75,
    top_k=40,
    num_beams=4,
)


def format_system_prompt(prompt, eos_token="</s>"):
    return "{}{}{}{}".format(
        "<|prompter|>",
        prompt,
        eos_token,
        "<|assistant|>"
    )


def generate(prompt, generation_config=generation_config, max_new_tokens=2048, device=device):
    prompt = format_system_prompt(prompt)  # OpenAssistant Prompt Format expected
    input_ids = tokenizer(prompt, return_tensors="pt").input_ids.to(device)
    with torch.no_grad():
        generation_output = model.generate(
            input_ids=input_ids,
            generation_config=generation_config,
            return_dict_in_generate=True,
            output_scores=True,
            max_new_tokens=max_new_tokens,
            eos_token_id=2,
        )
    s = generation_output.sequences[0]
    output = tokenizer.decode(s)
    print("Text generated:")
    print(output)
    return output


generate("What is a meme, and what's the history behind this word?")
generate("What's the Earth total population")
generate("Write a story about future of AI development")
```

|

usix79/poca-SoccerTwos

|

usix79

| 2023-05-05T11:08:40Z | 0 | 0 |

ml-agents

|

[

"ml-agents",

"unity-ml-agents",

"deep-reinforcement-learning",

"reinforcement-learning",

"ML-Agents-SoccerTwos",

"region:us"

] |

reinforcement-learning

| 2023-05-05T11:08:35Z |

---

tags:

- unity-ml-agents

- ml-agents

- deep-reinforcement-learning

- reinforcement-learning

- ML-Agents-SoccerTwos

library_name: ml-agents

---

# **poca** Agent playing **SoccerTwos**

This is a trained model of a **poca** agent playing **SoccerTwos** using the [Unity ML-Agents Library](https://github.com/Unity-Technologies/ml-agents).

## Usage (with ML-Agents)

The Documentation: https://github.com/huggingface/ml-agents#get-started

We wrote a complete tutorial to learn to train your first agent using ML-Agents and publish it to the Hub:

### Resume the training

```

mlagents-learn <your_configuration_file_path.yaml> --run-id=<run_id> --resume

```

### Watch your Agent play

You can watch your agent **playing directly in your browser**:

1. Go to https://huggingface.co/spaces/unity/ML-Agents-SoccerTwos

2. Step 1: Write your model_id: usix79/poca-SoccerTwos

3. Step 2: Select your *.nn /*.onnx file

4. Click on Watch the agent play 👀

|

IsakG/declension_error_detection

|

IsakG

| 2023-05-05T10:59:36Z | 106 | 1 |

transformers

|

[

"transformers",

"pytorch",

"roberta",

"text-classification",

"Icelandic",

"Fallbeyging",

"Declension",

"Inflection",

"GED",

"IceBERT",

"is",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2023-05-04T22:25:50Z |

---

language:

- is

tags:

- Icelandic

- Fallbeyging

- Declension

- Inflection

- GED

- IceBERT

---

Add an Icelandic sentence into the text box, and the model will return a classification of either correct or incorrect declension.

Bættu íslenskri setningu inn í textareitinn og líkanið mun skila flokkun með annað hvort rétta eða ranga beygingu

|

yagmurery/bert-base-uncased-finetuned-batchSize-cola-64

|

yagmurery

| 2023-05-05T10:50:35Z | 109 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"bert",

"text-classification",

"generated_from_trainer",

"dataset:glue",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2023-05-05T10:44:28Z |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- glue

metrics:

- matthews_correlation

model-index:

- name: bert-base-uncased-finetuned-batchSize-cola-64

results:

- task:

name: Text Classification

type: text-classification

dataset:

name: glue

type: glue

config: cola

split: validation

args: cola

metrics:

- name: Matthews Correlation

type: matthews_correlation

value: 0.5961744294806522

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# bert-base-uncased-finetuned-batchSize-cola-64

This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on the CoLA configuration of the GLUE dataset.

It achieves the following results on the evaluation set:

- Loss: 1.0984

- Matthews Correlation: 0.5962

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 64

- eval_batch_size: 64

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 5

### Training results

| Training Loss | Epoch | Step | Validation Loss | Matthews Correlation |

|:-------------:|:-----:|:----:|:---------------:|:--------------------:|

| No log | 1.0 | 134 | 1.2908 | 0.5651 |

| No log | 2.0 | 268 | 1.1057 | 0.5729 |

| No log | 3.0 | 402 | 1.0984 | 0.5962 |

| 0.0195 | 4.0 | 536 | 1.1799 | 0.5753 |

| 0.0195 | 5.0 | 670 | 1.2076 | 0.5804 |
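The headline results on this card (loss 1.0984, Matthews correlation 0.5962) match the epoch-3 row rather than the final epoch. The sketch below picks the best row from the table programmatically. It only illustrates the selection and does not claim how the checkpoint was actually chosen. One possibility, not confirmed by the card, is the Trainer option `load_best_model_at_end`.

```python
# (epoch, validation_loss, matthews_correlation), copied from the table above.
rows = [
    (1, 1.2908, 0.5651),
    (2, 1.1057, 0.5729),
    (3, 1.0984, 0.5962),
    (4, 1.1799, 0.5753),
    (5, 1.2076, 0.5804),
]

# Select the epoch with the highest Matthews correlation.
best_epoch, best_loss, best_mcc = max(rows, key=lambda row: row[2])
print(best_epoch, best_loss, best_mcc)
```

In this run the same epoch also has the lowest validation loss, so either criterion selects it.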

### Framework versions

- Transformers 4.28.1

- Pytorch 2.0.0+cu118

- Datasets 2.12.0

- Tokenizers 0.13.3

|

psin/summarizing_news

|

psin

| 2023-05-05T10:37:37Z | 105 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"t5",

"text2text-generation",

"generated_from_trainer",

"license:apache-2.0",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text2text-generation

| 2023-05-05T09:57:55Z |

---

license: apache-2.0

tags:

- generated_from_trainer

metrics:

- rouge

model-index:

- name: summarizing_news

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# summarizing_news

This model is a fine-tuned version of [t5-small](https://huggingface.co/t5-small) on an unspecified dataset.

It achieves the following results on the evaluation set:

- Loss: 2.5292

- Rouge1: 0.384

- Rouge2: 0.1554

- Rougel: 0.3376

- Rougelsum: 0.3377

- Gen Len: 18.8513
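As a reminder of what the Rouge1 number measures, the sketch below computes a simplified ROUGE-1 F1 score from unigram overlap. It uses lowercased whitespace tokenization and no stemming, so it is only an approximation of the idea and will not reproduce the exact scores reported here. The example sentences are made up.

```python
from collections import Counter


def rouge1_f1(reference: str, candidate: str) -> float:
    """Simplified ROUGE-1 F1: unigram overlap between reference and candidate."""
    ref_counts = Counter(reference.lower().split())
    cand_counts = Counter(candidate.lower().split())
    # Clipped overlap: each word counts at most as often as it appears in both.
    overlap = sum((ref_counts & cand_counts).values())
    if overlap == 0:
        return 0.0
    precision = overlap / sum(cand_counts.values())
    recall = overlap / sum(ref_counts.values())
    return 2 * precision * recall / (precision + recall)


# Hypothetical reference summary and model output.
print(round(rouge1_f1("the cat sat on the mat", "the cat lay on the mat"), 4))
```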

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 72

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 4

- mixed_precision_training: Native AMP

### Training results

| Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | Gen Len |

|:-------------:|:-----:|:----:|:---------------:|:------:|:------:|:------:|:---------:|:-------:|

| No log | 1.0 | 63 | 3.0459 | 0.3393 | 0.1259 | 0.2985 | 0.2986 | 18.9927 |

| No log | 2.0 | 126 | 2.7214 | 0.3699 | 0.1458 | 0.3255 | 0.3257 | 18.9666 |

| No log | 3.0 | 189 | 2.5743 | 0.3805 | 0.153 | 0.3345 | 0.3347 | 18.8972 |

| No log | 4.0 | 252 | 2.5292 | 0.384 | 0.1554 | 0.3376 | 0.3377 | 18.8513 |

### Framework versions

- Transformers 4.28.1

- Pytorch 2.0.0+cu118

- Datasets 2.12.0

- Tokenizers 0.13.3

|

consolida/ateliersophie

|

consolida

| 2023-05-05T10:36:03Z | 0 | 0 | null |

[

"region:us"

] | null | 2023-05-05T09:57:07Z |

Sophie trained model

Example invocation prompt:

shs, 1girl, solo,jewelry, corset, blush, necklace, coat, ahoge, brown hair, head scarf, short hair, brown eyes, collared coat, closed mouth, blue coat, open coat, long sleeves, red eyes

|

pnparam/swlosof02_2

|

pnparam

| 2023-05-05T10:35:42Z | 107 | 0 |

transformers

|

[

"transformers",

"pytorch",

"wav2vec2",

"automatic-speech-recognition",

"generated_from_trainer",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] |

automatic-speech-recognition

| 2023-05-05T09:51:10Z |

---

license: apache-2.0

tags:

- generated_from_trainer

model-index:

- name: swlosof02_2

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# swlosof02_2

This model is a fine-tuned version of [facebook/wav2vec2-large-960h-lv60-self](https://huggingface.co/facebook/wav2vec2-large-960h-lv60-self) on an unspecified dataset.

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0001

- train_batch_size: 4

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_steps: 1000

- num_epochs: 25

- mixed_precision_training: Native AMP

### Framework versions

- Transformers 4.28.1

- Pytorch 2.0.0+cu118

- Datasets 2.12.0

- Tokenizers 0.13.3

|

kindlytree/demo

|

kindlytree

| 2023-05-05T10:33:23Z | 1 | 0 |

diffusers

|

[

"diffusers",

"tensorboard",

"stable-diffusion",

"stable-diffusion-diffusers",

"text-to-image",

"lora",

"base_model:Linaqruf/anything-v3.0",

"base_model:adapter:Linaqruf/anything-v3.0",

"license:creativeml-openrail-m",

"region:us"

] |

text-to-image

| 2023-05-04T13:21:11Z |

---

license: creativeml-openrail-m

base_model: Linaqruf/anything-v3.0

instance_prompt: shanshui

tags:

- stable-diffusion

- stable-diffusion-diffusers

- text-to-image

- diffusers

- lora

inference: true

---

# LoRA DreamBooth - kindlytree/lora-outputs

These are LoRA adaptation weights for Linaqruf/anything-v3.0. The weights were trained on the instance prompt `shanshui` using [DreamBooth](https://dreambooth.github.io/). You can find some example images below.

LoRA for the text encoder was enabled: False.

|

cansurav/bert-base-uncased-finetuned-cola-learning_rate-0.0001

|

cansurav

| 2023-05-05T10:24:06Z | 105 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"bert",

"text-classification",

"generated_from_trainer",

"dataset:glue",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2023-05-05T10:02:31Z |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- glue

metrics:

- matthews_correlation

model-index:

- name: bert-base-uncased-finetuned-cola-learning_rate-0.0001

results:

- task:

name: Text Classification

type: text-classification

dataset:

name: glue

type: glue

config: cola

split: validation

args: cola

metrics:

- name: Matthews Correlation

type: matthews_correlation

value: 0.0

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# bert-base-uncased-finetuned-cola-learning_rate-0.0001

This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on the CoLA configuration of the GLUE dataset.

It achieves the following results on the evaluation set:

- Loss: 0.7459

- Matthews Correlation: 0.0

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0001

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 10

### Training results

| Training Loss | Epoch | Step | Validation Loss | Matthews Correlation |

|:-------------:|:-----:|:----:|:---------------:|:--------------------:|

| 0.6205 | 1.0 | 535 | 0.7459 | 0.0 |

| 0.6218 | 2.0 | 1070 | 0.6288 | 0.0 |

| 0.6166 | 3.0 | 1605 | 0.6181 | 0.0 |

| 0.6196 | 4.0 | 2140 | 0.6279 | 0.0 |

| 0.6137 | 5.0 | 2675 | 0.6202 | 0.0 |

| 0.6138 | 6.0 | 3210 | 0.6203 | 0.0 |

| 0.6074 | 7.0 | 3745 | 0.6184 | 0.0 |

| 0.6128 | 8.0 | 4280 | 0.6220 | 0.0 |

| 0.6073 | 9.0 | 4815 | 0.6183 | 0.0 |

| 0.6113 | 10.0 | 5350 | 0.6196 | 0.0 |
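
A Matthews correlation of exactly 0.0 at every epoch, together with a validation loss that settles near 0.62, is what one would expect if the classifier predicts the same class for every sentence. The card does not confirm this, so the sketch below is only an illustration of why constant predictions give 0.0; the labels are hypothetical, and returning 0.0 when the denominator vanishes is a common convention rather than something stated in the card.

```python
import math


def matthews_corrcoef(y_true, y_pred):
    """Binary Matthews correlation computed from the confusion matrix."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    if denom == 0:
        # A whole row or column of the confusion matrix is empty,
        # e.g. the model never predicts one of the classes.
        return 0.0
    return (tp * tn - fp * fn) / denom


# Hypothetical labels; the classifier predicts class 1 for every input.
labels = [1, 1, 0, 1, 0, 1]
constant_predictions = [1] * len(labels)

mcc_constant = matthews_corrcoef(labels, constant_predictions)
mcc_perfect = matthews_corrcoef(labels, labels)
```

With these inputs the constant predictor scores 0.0 even though it is right on four of six examples, which is why accuracy alone would hide the problem.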

### Framework versions

- Transformers 4.28.1

- Pytorch 2.0.0+cu118

- Datasets 2.12.0

- Tokenizers 0.13.3

|

muhammadravi251001/fine-tuned-DatasetQAS-TYDI-QA-ID-with-indobert-base-uncased-with-ITTL-with-freeze-LR-1e-05

|

muhammadravi251001

| 2023-05-05T10:17:01Z | 5 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"bert",

"question-answering",

"generated_from_trainer",

"license:mit",

"endpoints_compatible",

"region:us"

] |

question-answering

| 2023-05-05T08:42:49Z |

---

license: mit

tags:

- generated_from_trainer

metrics:

- f1

model-index:

- name: fine-tuned-DatasetQAS-TYDI-QA-ID-with-indobert-base-uncased-with-ITTL-with-freeze-LR-1e-05

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# fine-tuned-DatasetQAS-TYDI-QA-ID-with-indobert-base-uncased-with-ITTL-with-freeze-LR-1e-05

This model is a fine-tuned version of [indolem/indobert-base-uncased](https://huggingface.co/indolem/indobert-base-uncased) on an unspecified dataset.

It achieves the following results on the evaluation set:

- Loss: 1.3132

- Exact Match: 53.2628

- F1: 68.3641

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 1e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- gradient_accumulation_steps: 16

- total_train_batch_size: 128

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 10
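
The `total_train_batch_size` above follows from the per-device batch size and the gradient accumulation steps. A minimal arithmetic sketch, assuming training ran on a single device (the card does not state the device count):

```python
per_device_batch_size = 8          # train_batch_size from the card
gradient_accumulation_steps = 16   # from the card
num_devices = 1                    # assumption: not stated in the card

# Number of examples that contribute to each optimizer update.
total_train_batch_size = (
    per_device_batch_size * gradient_accumulation_steps * num_devices
)
```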

### Training results

| Training Loss | Epoch | Step | Validation Loss | Exact Match | F1 |

|:-------------:|:-----:|:----:|:---------------:|:-----------:|:-------:|

| 6.3129 | 0.5 | 19 | 3.9006 | 5.6437 | 16.4748 |

| 6.3129 | 1.0 | 38 | 2.8272 | 17.1076 | 30.0839 |

| 3.8917 | 1.5 | 57 | 2.4681 | 18.8713 | 32.8962 |

| 3.8917 | 2.0 | 76 | 2.2891 | 25.3968 | 38.0874 |

| 3.8917 | 2.5 | 95 | 2.1835 | 26.9841 | 39.5053 |

| 2.3963 | 3.0 | 114 | 2.0885 | 28.5714 | 42.0243 |

| 2.3963 | 3.5 | 133 | 1.9971 | 32.4515 | 45.4085 |

| 2.112 | 4.0 | 152 | 1.9124 | 34.3915 | 48.2893 |

| 2.112 | 4.5 | 171 | 1.8358 | 37.0370 | 50.6492 |

| 2.112 | 5.0 | 190 | 1.7545 | 40.7407 | 54.7031 |

| 1.8205 | 5.5 | 209 | 1.6432 | 44.4444 | 58.2669 |

| 1.8205 | 6.0 | 228 | 1.5589 | 46.9136 | 60.8052 |

| 1.8205 | 6.5 | 247 | 1.4861 | 48.1481 | 62.5185 |

| 1.573 | 7.0 | 266 | 1.4381 | 49.7354 | 64.1985 |

| 1.573 | 7.5 | 285 | 1.3944 | 51.6755 | 66.0223 |

| 1.387 | 8.0 | 304 | 1.3534 | 53.2628 | 67.6841 |

| 1.387 | 8.5 | 323 | 1.3384 | 53.0864 | 67.8619 |

| 1.387 | 9.0 | 342 | 1.3344 | 52.9101 | 68.0618 |

| 1.2998 | 9.5 | 361 | 1.3182 | 53.2628 | 68.4149 |

| 1.2998 | 10.0 | 380 | 1.3132 | 53.2628 | 68.3641 |
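
The card does not say how Exact Match and F1 were computed. The sketch below assumes the usual SQuAD-style definitions for extractive question answering, in simplified form (lowercasing and whitespace tokenization only, without the punctuation and article stripping that the full SQuAD normalization applies). The function names and example strings are hypothetical.

```python
from collections import Counter


def exact_match(prediction, reference):
    """1.0 if the normalized strings are identical, else 0.0."""
    return float(prediction.strip().lower() == reference.strip().lower())


def token_f1(prediction, reference):
    """Harmonic mean of token-level precision and recall."""
    pred_tokens = prediction.lower().split()
    ref_tokens = reference.lower().split()
    # Multiset intersection counts each shared token at most as often
    # as it appears in both answers.
    overlap = sum((Counter(pred_tokens) & Counter(ref_tokens)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred_tokens)
    recall = overlap / len(ref_tokens)
    return 2 * precision * recall / (precision + recall)


# Hypothetical answer pair: the prediction contains one extra token.
em = exact_match("di Jakarta", "Jakarta")
f1 = token_f1("di Jakarta", "Jakarta")
```

Under these definitions F1 gives partial credit for overlapping spans, which would explain why the F1 column stays well above the Exact Match column throughout training.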

### Framework versions

- Transformers 4.26.1

- Pytorch 1.13.1+cu117

- Datasets 2.2.0

- Tokenizers 0.13.2

|

jangmin/whisper-small-ko-1159h

|

jangmin

| 2023-05-05T10:13:37Z | 75 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"whisper",

"automatic-speech-recognition",

"generated_from_trainer",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] |

automatic-speech-recognition

| 2023-05-04T22:44:43Z |

---

license: apache-2.0

tags:

- generated_from_trainer

metrics:

- wer

model-index:

- name: whisper-small-ko-1159h

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# whisper-small-ko-1159h

This model is a fine-tuned version of [openai/whisper-small](https://huggingface.co/openai/whisper-small) on an unspecified dataset.

It achieves the following results on the evaluation set:

- Loss: 0.1752

- Wer: 10.4449

## Model description

The model was trained to transcribe audio into Korean text.

## Intended uses & limitations

More information needed

## Training and evaluation data

I downloaded all data from AI-HUB (https://aihub.or.kr/) and used two of its datasets: "Instruction Audio Set" and "Noisy Conversation Audio Set".

I gathered 796 hours of audio from the first dataset and 363 hours from the second, 1,159 hours in total. These figures cover the training data only and exclude the validation data.

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 1e-05

- train_batch_size: 32

- eval_batch_size: 32

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_steps: 100

- training_steps: 18483

- mixed_precision_training: Native AMP
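
The combination of `lr_scheduler_type: linear`, 100 warmup steps, and 18483 training steps describes a learning rate that ramps up linearly and then decays linearly to zero. A minimal sketch of that shape, assuming it follows the standard linear schedule with warmup; the function name is hypothetical and this is not the training code itself:

```python
def linear_schedule_with_warmup(step, base_lr=1e-05, warmup_steps=100,
                                training_steps=18483):
    """Learning rate at a given optimizer step."""
    if step < warmup_steps:
        # Linear ramp from 0 up to base_lr during warmup.
        return base_lr * step / max(1, warmup_steps)
    # Linear decay from base_lr down to 0 at the final step.
    remaining = max(0, training_steps - step)
    return base_lr * remaining / max(1, training_steps - warmup_steps)


lr_start = linear_schedule_with_warmup(0)
lr_mid_warmup = linear_schedule_with_warmup(50)
lr_peak = linear_schedule_with_warmup(100)
lr_end = linear_schedule_with_warmup(18483)
```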

### Training results

| Training Loss | Epoch | Step | Validation Loss | Wer |

|:-------------:|:-----:|:-----:|:---------------:|:-------:|

| 0.0953 | 0.33 | 2053 | 0.2155 | 13.0432 |

| 0.0803 | 0.67 | 4106 | 0.1951 | 12.0399 |

| 0.0746 | 1.0 | 6159 | 0.1836 | 11.3995 |

| 0.0509 | 1.33 | 8212 | 0.1819 | 11.0396 |

| 0.0525 | 1.67 | 10265 | 0.1782 | 10.9039 |

| 0.0493 | 2.0 | 12318 | 0.1743 | 10.7255 |

| 0.034 | 2.33 | 14371 | 0.1784 | 10.7377 |

| 0.0326 | 2.67 | 16424 | 0.1765 | 10.5471 |

| 0.0293 | 3.0 | 18477 | 0.1752 | 10.4449 |
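
The Wer column is presumably the word error rate expressed as a percentage, although the card does not say so explicitly. As a sketch of the metric itself, the function below computes the word-level edit distance between a reference and a hypothesis and divides it by the reference length; the function name and the example strings are hypothetical.

```python
def word_error_rate(reference, hypothesis):
    """(substitutions + deletions + insertions) / reference word count."""
    ref = reference.split()
    hyp = hypothesis.split()
    # previous[j] holds the edit distance between the reference words
    # processed so far and the first j hypothesis words.
    previous = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        current = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = previous[j - 1] + (ref_word != hyp_word)
            deletion = previous[j] + 1
            insertion = current[j - 1] + 1
            current.append(min(substitution, deletion, insertion))
        previous = current
    return previous[-1] / len(ref)


# One substitution and one deletion against a four-word reference.
wer_half = word_error_rate("a b c d", "a x c")
# Two insertions against a one-word reference: WER can exceed 1.
wer_above_one = word_error_rate("a", "a b c")
```

Multiplying the returned ratio by 100 gives a percentage on the same scale as the table above.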

### Framework versions

- Transformers 4.28.0.dev0

- Pytorch 1.13.1+cu117

- Datasets 2.11.0

- Tokenizers 0.13.2

|

liuliu96/git-base-pokemon

|

liuliu96

| 2023-05-05T10:05:33Z | 63 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"git",

"image-text-to-text",

"generated_from_trainer",

"dataset:imagefolder",

"license:mit",

"endpoints_compatible",

"region:us"

] |

image-text-to-text

| 2023-05-05T09:15:02Z |

---

license: mit

tags:

- generated_from_trainer

datasets:

- imagefolder

model-index:

- name: git-base-pokemon

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# git-base-pokemon

This model is a fine-tuned version of [microsoft/git-base](https://huggingface.co/microsoft/git-base) on the imagefolder dataset.

It achieves the following results on the evaluation set:

- Loss: 0.0392

- Wer Score: 2.4636

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 32

- eval_batch_size: 32

- seed: 42

- gradient_accumulation_steps: 2

- total_train_batch_size: 64

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 50

- mixed_precision_training: Native AMP

### Training results

| Training Loss | Epoch | Step | Validation Loss | Wer Score |

|:-------------:|:-----:|:----:|:---------------:|:---------:|

| 7.334 | 4.17 | 50 | 4.5690 | 13.9068 |

| 2.4021 | 8.33 | 100 | 0.4880 | 9.8480 |

| 0.1468 | 12.5 | 150 | 0.0350 | 0.4074 |

| 0.0179 | 16.67 | 200 | 0.0330 | 2.5888 |

| 0.006 | 20.83 | 250 | 0.0355 | 3.7037 |

| 0.0024 | 25.0 | 300 | 0.0373 | 4.7152 |

| 0.0017 | 29.17 | 350 | 0.0377 | 3.8314 |

| 0.0014 | 33.33 | 400 | 0.0385 | 3.2516 |

| 0.0012 | 37.5 | 450 | 0.0387 | 3.1609 |

| 0.0011 | 41.67 | 500 | 0.0390 | 2.6105 |

| 0.0011 | 45.83 | 550 | 0.0391 | 2.7650 |

| 0.0011 | 50.0 | 600 | 0.0392 | 2.4636 |

### Framework versions

- Transformers 4.28.1

- Pytorch 2.0.0+cu118

- Datasets 2.12.0

- Tokenizers 0.13.3

|

yagmurery/bert-base-uncased-finetuned-dropout-cola-0.2

|

yagmurery

| 2023-05-05T10:03:38Z | 109 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"bert",

"text-classification",

"generated_from_trainer",

"dataset:glue",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2023-05-05T09:20:43Z |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- glue

metrics:

- matthews_correlation

model-index:

- name: bert-base-uncased-finetuned-dropout-cola-0.2

results:

- task:

name: Text Classification

type: text-classification

dataset:

name: glue

type: glue

config: cola

split: validation

args: cola

metrics:

- name: Matthews Correlation

type: matthews_correlation

value: 0.5957317644481708

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# bert-base-uncased-finetuned-dropout-cola-0.2

This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on the glue dataset.

It achieves the following results on the evaluation set:

- Loss: 0.8150

- Matthews Correlation: 0.5957

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 5

### Training results

| Training Loss | Epoch | Step | Validation Loss | Matthews Correlation |

|:-------------:|:-----:|:----:|:---------------:|:--------------------:|

| 0.4985 | 1.0 | 535 | 0.5022 | 0.4978 |

| 0.3168 | 2.0 | 1070 | 0.4357 | 0.5836 |

| 0.2116 | 3.0 | 1605 | 0.6536 | 0.5365 |

| 0.149 | 4.0 | 2140 | 0.8150 | 0.5957 |

| 0.0911 | 5.0 | 2675 | 0.8846 | 0.5838 |
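
The evaluation figures reported at the top of this card (loss 0.8150, Matthews correlation 0.5957) match the epoch 4 row rather than the final epoch. That would be consistent with keeping the checkpoint that scores best on Matthews correlation, though the card does not state how the checkpoint was chosen. The sketch below only shows how the two selection criteria disagree on this table.

```python
# (epoch, validation_loss, matthews_correlation) copied from the table above.
results = [
    (1.0, 0.5022, 0.4978),
    (2.0, 0.4357, 0.5836),
    (3.0, 0.6536, 0.5365),
    (4.0, 0.8150, 0.5957),
    (5.0, 0.8846, 0.5838),
]

# Selecting on the task metric picks a later, higher-loss epoch ...
best_by_mcc = max(results, key=lambda row: row[2])
# ... while selecting on validation loss picks an earlier one.
best_by_loss = min(results, key=lambda row: row[1])
```

Validation loss nearly doubles between epochs 2 and 4 while Matthews correlation improves only slightly, so which checkpoint is preferable depends on whether calibrated probabilities or hard labels matter more for the intended use.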

### Framework versions

- Transformers 4.28.1

- Pytorch 2.0.0+cu118

- Datasets 2.12.0

- Tokenizers 0.13.3

|

cansurav/bert-base-uncased-finetuned-cola-learning_rate-8e-06

|

cansurav

| 2023-05-05T10:02:23Z | 107 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"bert",

"text-classification",

"generated_from_trainer",

"dataset:glue",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2023-05-05T09:48:00Z |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- glue

metrics:

- matthews_correlation

model-index:

- name: bert-base-uncased-finetuned-cola-learning_rate-8e-06

results:

- task:

name: Text Classification

type: text-classification

dataset:

name: glue

type: glue

config: cola

split: validation

args: cola

metrics:

- name: Matthews Correlation

type: matthews_correlation

value: 0.5752615459764325

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# bert-base-uncased-finetuned-cola-learning_rate-8e-06

This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on the glue dataset.

It achieves the following results on the evaluation set:

- Loss: 0.8389

- Matthews Correlation: 0.5753

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 8e-06

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 10

### Training results

| Training Loss | Epoch | Step | Validation Loss | Matthews Correlation |

|:-------------:|:-----:|:----:|:---------------:|:--------------------:|

| 0.5241 | 1.0 | 535 | 0.4659 | 0.5046 |

| 0.3755 | 2.0 | 1070 | 0.4412 | 0.5650 |

| 0.2782 | 3.0 | 1605 | 0.5524 | 0.5395 |

| 0.2154 | 4.0 | 2140 | 0.6437 | 0.5651 |

| 0.1669 | 5.0 | 2675 | 0.7709 | 0.5650 |

| 0.1503 | 6.0 | 3210 | 0.8389 | 0.5753 |

| 0.1151 | 7.0 | 3745 | 0.8964 | 0.5681 |

| 0.1082 | 8.0 | 4280 | 0.9767 | 0.5548 |

| 0.0816 | 9.0 | 4815 | 0.9978 | 0.5498 |

| 0.0809 | 10.0 | 5350 | 1.0170 | 0.5576 |
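
The Step column advances by 535 per epoch. A short arithmetic check, assuming the GLUE CoLA training split contains 8,551 sentences (a figure that is not stated in the card) and that training used a single device:

```python
import math

cola_train_examples = 8551   # assumption: size of the CoLA training split
train_batch_size = 16        # from the card
num_epochs = 10              # from the card

# The last batch of each epoch is only partially filled, hence the ceiling.
steps_per_epoch = math.ceil(cola_train_examples / train_batch_size)
total_steps = steps_per_epoch * num_epochs
```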

### Framework versions

- Transformers 4.28.1

- Pytorch 2.0.0+cu118

- Datasets 2.12.0

- Tokenizers 0.13.3

|

kws/a2c-AntBulletEnv-v0

|

kws

| 2023-05-05T09:57:17Z | 0 | 0 |

stable-baselines3

|

[

"stable-baselines3",

"AntBulletEnv-v0",

"deep-reinforcement-learning",

"reinforcement-learning",

"model-index",

"region:us"

] |

reinforcement-learning

| 2022-09-12T10:04:34Z |

---

library_name: stable-baselines3

tags:

- AntBulletEnv-v0

- deep-reinforcement-learning

- reinforcement-learning

- stable-baselines3

model-index:

- name: A2C

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: AntBulletEnv-v0

type: AntBulletEnv-v0

metrics:

- type: mean_reward

value: 1587.19 +/- 175.00

name: mean_reward

verified: false

---

# **A2C** Agent playing **AntBulletEnv-v0**

This is a trained model of an **A2C** agent playing **AntBulletEnv-v0**

using the [stable-baselines3 library](https://github.com/DLR-RM/stable-baselines3).

## Usage (with Stable-baselines3)

TODO: Add your code

```python

from stable_baselines3 import ...

from huggingface_sb3 import load_from_hub

...

```

|

Pika62/kogpt2-base-v2-finetuned-klue-ner

|

Pika62

| 2023-05-05T09:54:48Z | 108 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"gpt2",

"token-classification",

"generated_from_trainer",

"dataset:klue",

"license:cc-by-nc-sa-4.0",

"model-index",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

token-classification

| 2023-05-03T03:57:42Z |

---

license: cc-by-nc-sa-4.0

tags:

- generated_from_trainer

datasets:

- klue

metrics:

- f1

model-index:

- name: kogpt2-base-v2-finetuned-klue-ner

results:

- task:

name: Token Classification

type: token-classification

dataset:

name: klue

type: klue

config: ner

split: validation

args: ner

metrics:

- name: F1

type: f1

value: 0.2122585806255

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# kogpt2-base-v2-finetuned-klue-ner

This model is a fine-tuned version of [skt/kogpt2-base-v2](https://huggingface.co/skt/kogpt2-base-v2) on the klue dataset.

It achieves the following results on the evaluation set:

- Loss: 0.4057

- F1: 0.2123

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 24

- eval_batch_size: 24

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3

### Training results

| Training Loss | Epoch | Step | Validation Loss | F1 |

|:-------------:|:-----:|:----:|:---------------:|:------:|

| 0.4952 | 1.0 | 876 | 0.4714 | 0.1416 |

| 0.354 | 2.0 | 1752 | 0.4263 | 0.1849 |

| 0.2812 | 3.0 | 2628 | 0.4057 | 0.2123 |

### Framework versions

- Transformers 4.28.1

- Pytorch 2.0.0+cu118

- Datasets 2.12.0

- Tokenizers 0.13.3

|

meltemtatli/bert-base-uncased-finetuned-cola-trying

|

meltemtatli

| 2023-05-05T09:48:15Z | 105 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"bert",

"text-classification",

"generated_from_trainer",

"dataset:glue",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2023-05-04T22:09:27Z |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- glue

metrics:

- matthews_correlation

model-index:

- name: bert-base-uncased-finetuned-cola-trying

results:

- task:

name: Text Classification

type: text-classification

dataset:

name: glue

type: glue

config: cola

split: validation

args: cola

metrics:

- name: Matthews Correlation

type: matthews_correlation

value: 0.5318380398617779

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# bert-base-uncased-finetuned-cola-trying

This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on the glue dataset.

It achieves the following results on the evaluation set:

- Loss: 0.4377

- Matthews Correlation: 0.5318

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 1

### Training results

| Training Loss | Epoch | Step | Validation Loss | Matthews Correlation |

|:-------------:|:-----:|:----:|:---------------:|:--------------------:|

| 0.4603 | 1.0 | 535 | 0.4377 | 0.5318 |

### Framework versions

- Transformers 4.28.1

- Pytorch 2.0.0+cu118

- Datasets 2.12.0

- Tokenizers 0.13.3

|

cansurav/bert-base-uncased-finetuned-cola-learning_rate-9e-06

|

cansurav

| 2023-05-05T09:47:52Z | 108 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"bert",

"text-classification",

"generated_from_trainer",

"dataset:glue",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2023-05-05T09:33:26Z |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- glue

metrics:

- matthews_correlation

model-index:

- name: bert-base-uncased-finetuned-cola-learning_rate-9e-06

results:

- task:

name: Text Classification

type: text-classification

dataset:

name: glue

type: glue

config: cola

split: validation

args: cola

metrics:

- name: Matthews Correlation

type: matthews_correlation

value: 0.5753593483598531

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# bert-base-uncased-finetuned-cola-learning_rate-9e-06

This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on the glue dataset.

It achieves the following results on the evaluation set:

- Loss: 0.9848

- Matthews Correlation: 0.5754

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 9e-06

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 10

### Training results

| Training Loss | Epoch | Step | Validation Loss | Matthews Correlation |

|:-------------:|:-----:|:----:|:---------------:|:--------------------:|

| 0.5227 | 1.0 | 535 | 0.5061 | 0.4717 |

| 0.3617 | 2.0 | 1070 | 0.4769 | 0.5701 |

| 0.2584 | 3.0 | 1605 | 0.5299 | 0.5625 |

| 0.1998 | 4.0 | 2140 | 0.6801 | 0.5629 |

| 0.1492 | 5.0 | 2675 | 0.8519 | 0.5446 |

| 0.1323 | 6.0 | 3210 | 0.9372 | 0.5624 |

| 0.103 | 7.0 | 3745 | 0.9424 | 0.5753 |

| 0.0949 | 8.0 | 4280 | 0.9848 | 0.5754 |

| 0.0718 | 9.0 | 4815 | 1.0474 | 0.5652 |

| 0.0629 | 10.0 | 5350 | 1.0657 | 0.5731 |

### Framework versions

- Transformers 4.28.1

- Pytorch 2.0.0+cu118

- Datasets 2.12.0

- Tokenizers 0.13.3

|

cansurav/bert-base-uncased-finetuned-cola-learning_rate-4e-05

|

cansurav

| 2023-05-05T09:33:19Z | 105 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"bert",

"text-classification",

"generated_from_trainer",

"dataset:glue",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2023-05-05T09:18:58Z |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- glue

metrics:

- matthews_correlation

model-index:

- name: bert-base-uncased-finetuned-cola-learning_rate-4e-05

results:

- task:

name: Text Classification

type: text-classification

dataset:

name: glue

type: glue

config: cola

split: validation

args: cola

metrics:

- name: Matthews Correlation

type: matthews_correlation

value: 0.5732046470010711

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# bert-base-uncased-finetuned-cola-learning_rate-4e-05

This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on the glue dataset.

It achieves the following results on the evaluation set:

- Loss: 1.3213

- Matthews Correlation: 0.5732

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 4e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 10

### Training results

| Training Loss | Epoch | Step | Validation Loss | Matthews Correlation |

|:-------------:|:-----:|:----:|:---------------:|:--------------------:|

| 0.5002 | 1.0 | 535 | 0.5568 | 0.4891 |

| 0.2954 | 2.0 | 1070 | 0.5052 | 0.5210 |

| 0.1976 | 3.0 | 1605 | 0.7016 | 0.5033 |

| 0.1367 | 4.0 | 2140 | 0.9378 | 0.5628 |

| 0.0889 | 5.0 | 2675 | 1.0129 | 0.5470 |

| 0.0555 | 6.0 | 3210 | 1.1484 | 0.5575 |

| 0.0431 | 7.0 | 3745 | 1.1081 | 0.5527 |

| 0.028 | 8.0 | 4280 | 1.1268 | 0.5697 |

| 0.0192 | 9.0 | 4815 | 1.3071 | 0.5627 |

| 0.013 | 10.0 | 5350 | 1.3213 | 0.5732 |

### Framework versions

- Transformers 4.28.1

- Pytorch 2.0.0+cu118

- Datasets 2.12.0

- Tokenizers 0.13.3

|

BlueAvenir/sti_security_class_model

|

BlueAvenir

| 2023-05-05T09:26:22Z | 5 | 0 |

sentence-transformers

|

[

"sentence-transformers",

"pytorch",

"xlm-roberta",

"feature-extraction",

"sentence-similarity",

"transformers",

"autotrain_compatible",

"text-embeddings-inference",

"endpoints_compatible",

"region:us"

] |

sentence-similarity

| 2023-05-05T09:26:12Z |

---

pipeline_tag: sentence-similarity

tags:

- sentence-transformers

- feature-extraction

- sentence-similarity

- transformers

---

# BlueAvenir/sti_security_class_model

This is a [sentence-transformers](https://www.SBERT.net) model. It maps sentences and paragraphs to a 768-dimensional dense vector space and can be used for tasks such as clustering or semantic search.

<!--- Describe your model here -->

## Usage (Sentence-Transformers)

Using this model becomes easy when you have [sentence-transformers](https://www.SBERT.net) installed:

```

pip install -U sentence-transformers

```

Then you can use the model like this:

```python

from sentence_transformers import SentenceTransformer

sentences = ["This is an example sentence", "Each sentence is converted"]

model = SentenceTransformer('BlueAvenir/sti_security_class_model')

embeddings = model.encode(sentences)

print(embeddings)

```

## Usage (HuggingFace Transformers)

Without [sentence-transformers](https://www.SBERT.net), you can use the model as follows: first pass your input through the transformer model, then apply the appropriate pooling operation on top of the contextualized word embeddings.

```python

from transformers import AutoTokenizer, AutoModel

import torch

# Mean pooling: take the attention mask into account for correct averaging

def mean_pooling(model_output, attention_mask):
    # First element of model_output contains all token embeddings
    token_embeddings = model_output[0]
    input_mask_expanded = attention_mask.unsqueeze(-1).expand(token_embeddings.size()).float()
    return torch.sum(token_embeddings * input_mask_expanded, 1) / torch.clamp(input_mask_expanded.sum(1), min=1e-9)

# Sentences we want sentence embeddings for

sentences = ['This is an example sentence', 'Each sentence is converted']

# Load model from HuggingFace Hub

tokenizer = AutoTokenizer.from_pretrained('BlueAvenir/sti_security_class_model')

model = AutoModel.from_pretrained('BlueAvenir/sti_security_class_model')

# Tokenize sentences

encoded_input = tokenizer(sentences, padding=True, truncation=True, return_tensors='pt')

# Compute token embeddings

with torch.no_grad():
    model_output = model(**encoded_input)

# Perform pooling. In this case, mean pooling.

sentence_embeddings = mean_pooling(model_output, encoded_input['attention_mask'])

print("Sentence embeddings:")

print(sentence_embeddings)

```

## Evaluation Results

<!--- Describe how your model was evaluated -->

For an automated evaluation of this model, see the *Sentence Embeddings Benchmark*: [https://seb.sbert.net](https://seb.sbert.net?model_name={MODEL_NAME})

## Training

The model was trained with the parameters:

**DataLoader**:

`torch.utils.data.dataloader.DataLoader` of length 228 with parameters:

```

{'batch_size': 16, 'sampler': 'torch.utils.data.sampler.RandomSampler', 'batch_sampler': 'torch.utils.data.sampler.BatchSampler'}

```

**Loss**:

`sentence_transformers.losses.CosineSimilarityLoss.CosineSimilarityLoss`
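
As a rough illustration of what this loss optimizes: with its default settings, `CosineSimilarityLoss` compares the cosine similarity of the two sentence embeddings in a pair against a gold similarity score using mean squared error. The dependency-free sketch below assumes that default behavior; the helper names, vectors, and labels are hypothetical, and it is not the library's implementation.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def cosine_similarity_loss(pairs, labels):
    """Mean squared error between predicted and gold similarities."""
    errors = [
        (cosine_similarity(u, v) - label) ** 2
        for (u, v), label in zip(pairs, labels)
    ]
    return sum(errors) / len(errors)


# Hypothetical 2-d "embeddings": one identical pair, one orthogonal pair.
pairs = [([1.0, 0.0], [1.0, 0.0]), ([1.0, 0.0], [0.0, 1.0])]

loss_matching_labels = cosine_similarity_loss(pairs, [1.0, 0.0])
loss_wrong_label = cosine_similarity_loss(pairs, [0.0, 0.0])
```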

Parameters of the fit()-Method:

```

{

"epochs": 1,

"evaluation_steps": 0,

"evaluator": "NoneType",

"max_grad_norm": 1,

"optimizer_class": "<class 'torch.optim.adamw.AdamW'>",

"optimizer_params": {

"lr": 2e-05

},

"scheduler": "WarmupLinear",

"steps_per_epoch": 228,

"warmup_steps": 23,

"weight_decay": 0.01

}

```
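
The numbers above are mutually consistent under two assumptions that the card does not state: that warmup was set to 10% of the total training steps, rounded up, and that the DataLoader keeps its final partial batch. A short arithmetic sketch:

```python
import math

steps_per_epoch = 228   # DataLoader length from the card
epochs = 1              # from the fit() parameters
batch_size = 16         # from the DataLoader parameters

# Assumption: warmup covers 10% of all training steps, rounded up.
warmup_steps = math.ceil(steps_per_epoch * epochs * 0.1)

# Assumption: the last batch may be partial, so the number of training
# pairs lies somewhere in this range.
max_examples = steps_per_epoch * batch_size
min_examples = (steps_per_epoch - 1) * batch_size + 1
```

Under these assumptions the model saw between 3,633 and 3,648 sentence pairs in its single training epoch.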

## Full Model Architecture

```

SentenceTransformer(

(0): Transformer({'max_seq_length': 512, 'do_lower_case': False}) with Transformer model: XLMRobertaModel

(1): Pooling({'word_embedding_dimension': 768, 'pooling_mode_cls_token': False, 'pooling_mode_mean_tokens': True, 'pooling_mode_max_tokens': False, 'pooling_mode_mean_sqrt_len_tokens': False})

)

```

## Citing & Authors

<!--- Describe where people can find more information -->

|

cansurav/bert-base-uncased-finetuned-cola-learning_rate-3e-05

|

cansurav

| 2023-05-05T09:18:51Z | 107 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"bert",

"text-classification",

"generated_from_trainer",

"dataset:glue",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2023-05-04T18:07:04Z |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- glue

metrics:

- matthews_correlation

model-index:

- name: bert-base-uncased-finetuned-cola-learning_rate-3e-05

results:

- task:

name: Text Classification

type: text-classification

dataset:

name: glue

type: glue

config: cola

split: validation

args: cola

metrics:

- name: Matthews Correlation

type: matthews_correlation

value: 0.5881177177003271

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# bert-base-uncased-finetuned-cola-learning_rate-3e-05

This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on the glue dataset.

It achieves the following results on the evaluation set:

- Loss: 1.0201

- Matthews Correlation: 0.5881

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 3e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 10

### Training results

| Training Loss | Epoch | Step | Validation Loss | Matthews Correlation |

|:-------------:|:-----:|:----:|:---------------:|:--------------------:|

| 0.4873 | 1.0 | 535 | 0.6048 | 0.4571 |

| 0.2844 | 2.0 | 1070 | 0.5333 | 0.5521 |

| 0.1893 | 3.0 | 1605 | 0.7435 | 0.5574 |

| 0.1362 | 4.0 | 2140 | 0.7142 | 0.5825 |

| 0.0924 | 5.0 | 2675 | 0.8334 | 0.5625 |

| 0.0596 | 6.0 | 3210 | 1.0201 | 0.5881 |

| 0.0496 | 7.0 | 3745 | 1.0777 | 0.5686 |

| 0.03 | 8.0 | 4280 | 1.2245 | 0.5630 |

| 0.0122 | 9.0 | 4815 | 1.3665 | 0.5701 |

| 0.0111 | 10.0 | 5350 | 1.4043 | 0.5778 |

### Framework versions

- Transformers 4.28.1

- Pytorch 2.0.0+cu118

- Datasets 2.12.0

- Tokenizers 0.13.3

|

yagmurery/bert-base-uncased-finetuned-learningRate-2-cola-4e-05

|

yagmurery

| 2023-05-05T09:16:26Z | 110 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"bert",

"text-classification",

"generated_from_trainer",

"dataset:glue",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2023-05-05T09:08:43Z |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- glue

metrics:

- matthews_correlation

model-index:

- name: bert-base-uncased-finetuned-learningRate-2-cola-4e-05

results:

- task:

name: Text Classification

type: text-classification

dataset:

name: glue

type: glue

config: cola

split: validation

args: cola

metrics:

- name: Matthews Correlation

type: matthews_correlation

value: 0.539019545585709

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# bert-base-uncased-finetuned-learningRate-2-cola-4e-05

This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on the glue dataset.

It achieves the following results on the evaluation set:

- Loss: 1.2969

- Matthews Correlation: 0.5390

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 4e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 5
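As a rough consistency check on these settings, the sketch below derives the number of optimizer steps and the learning rate under a linear schedule. It assumes the GLUE CoLA training split of 8,551 sentences, a single device, no gradient accumulation, and no warmup; none of these is stated in this card.

```python
import math

TRAIN_EXAMPLES = 8551  # assumed size of the GLUE CoLA training split
BATCH_SIZE = 16        # train_batch_size from the list above
NUM_EPOCHS = 5         # num_epochs from the list above
BASE_LR = 4e-05        # learning_rate from the list above

# The last partial batch still counts as one optimizer step.
steps_per_epoch = math.ceil(TRAIN_EXAMPLES / BATCH_SIZE)
total_steps = steps_per_epoch * NUM_EPOCHS


def linear_lr(step, base_lr=BASE_LR, total=total_steps):
    """Decay linearly from base_lr at step 0 to 0 at the final step (no warmup assumed)."""
    return base_lr * max(0.0, (total - step) / total)
```

Under these assumptions each epoch takes 535 steps and training ends at step 2675.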

### Training results

| Training Loss | Epoch | Step | Validation Loss | Matthews Correlation |
|:-------------:|:-----:|:----:|:---------------:|:--------------------:|
| 0.1286 | 1.0 | 535 | 0.9932 | 0.5235 |
| 0.0942 | 2.0 | 1070 | 1.1242 | 0.5229 |
| 0.1325 | 3.0 | 1605 | 0.9707 | 0.5203 |
| 0.0916 | 4.0 | 2140 | 1.0752 | 0.5313 |
| 0.0403 | 5.0 | 2675 | 1.2969 | 0.5390 |

### Framework versions

- Transformers 4.28.1

- PyTorch 2.0.0+cu118

- Datasets 2.12.0

- Tokenizers 0.13.3

|

yagmurery/bert-base-uncased-finetuned-learningRate-2-cola-3e-05

|

yagmurery

| 2023-05-05T09:08:39Z | 109 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"bert",

"text-classification",

"generated_from_trainer",

"dataset:glue",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2023-05-05T09:00:37Z |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- glue

metrics:

- matthews_correlation

model-index:

- name: bert-base-uncased-finetuned-learningRate-2-cola-3e-05

results:

- task:

name: Text Classification

type: text-classification

dataset:

name: glue

type: glue

config: cola

split: validation

args: cola

metrics:

- name: Matthews Correlation

type: matthews_correlation

value: 0.5907527969578087

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# bert-base-uncased-finetuned-learningRate-2-cola-3e-05

This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on the CoLA configuration of the GLUE dataset.

It achieves the following results on the evaluation set:

- Loss: 0.8555

- Matthews Correlation: 0.5908

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 3e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 5
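To make the optimizer line concrete, the sketch below applies one Adam update to a single scalar parameter using the betas and epsilon listed above. It is a pure-Python illustration only: `adam_step` and the toy gradient are hypothetical, and weight decay and the learning-rate schedule are left out.

```python
import math


def adam_step(param, grad, m, v, t, lr=3e-05, beta1=0.9, beta2=0.999, eps=1e-08):
    """Return (param, m, v) after one Adam update at 1-based step t."""
    # Exponential moving averages of the gradient and its square.
    m = beta1 * m + (1 - beta1) * grad
    v = beta2 * v + (1 - beta2) * grad * grad
    # Bias correction compensates for m and v starting at zero.
    m_hat = m / (1 - beta1 ** t)
    v_hat = v / (1 - beta2 ** t)
    param = param - lr * m_hat / (math.sqrt(v_hat) + eps)
    return param, m, v


# Toy values (hypothetical): one parameter, one gradient, first step.
param, m, v = adam_step(param=1.0, grad=0.5, m=0.0, v=0.0, t=1)
```

On the first step the bias-corrected update has a magnitude close to the learning rate regardless of the gradient's scale, which is why the parameter moves by roughly 3e-05 here.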

### Training results

| Training Loss | Epoch | Step | Validation Loss | Matthews Correlation |
|:-------------:|:-----:|:----:|:---------------:|:--------------------:|
| 0.2022 | 1.0 | 535 | 0.9205 | 0.5285 |
| 0.1155 | 2.0 | 1070 | 0.8555 | 0.5908 |
| 0.1312 | 3.0 | 1605 | 0.9399 | 0.5496 |
| 0.0956 | 4.0 | 2140 | 1.0178 | 0.5577 |
| 0.048 | 5.0 | 2675 | 1.1525 | 0.5528 |
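The headline numbers for this card (loss 0.8555, Matthews correlation 0.5908) match the epoch 2 row rather than the final epoch. That suggests the checkpoint with the best Matthews correlation was the one evaluated, although the card does not say so; treat this as an inference. A small sketch that picks that row from the table values:

```python
# (epoch, training_loss, validation_loss, matthews_correlation), copied from the table.
rows = [
    (1, 0.2022, 0.9205, 0.5285),
    (2, 0.1155, 0.8555, 0.5908),
    (3, 0.1312, 0.9399, 0.5496),
    (4, 0.0956, 1.0178, 0.5577),
    (5, 0.048, 1.1525, 0.5528),
]

# Select the epoch with the highest Matthews correlation.
best_epoch, _, best_val_loss, best_mcc = max(rows, key=lambda r: r[3])
```

After epoch 2 the validation loss rises while the training loss keeps falling, the usual sign of overfitting, so selecting by validation metric rather than taking the last epoch matters here.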

### Framework versions

- Transformers 4.28.1

- PyTorch 2.0.0+cu118

- Datasets 2.12.0

- Tokenizers 0.13.3

|

superqing/pangu-evolution

|

superqing

| 2023-05-05T09:08:09Z | 14 | 0 |

transformers

|

[

"transformers",

"gpt_pangu",

"text-generation",

"custom_code",

"license:apache-2.0",

"autotrain_compatible",

"region:us"

] |

text-generation

| 2023-03-31T06:39:43Z |

---

license: apache-2.0

---

## Introduction

PanGu-Alpha-Evolution is an enhanced version of PanGu-Alpha that understands and processes tasks better and follows task descriptions more closely. More technical details will be added over time.

[[Technical report](https://git.openi.org.cn/PCL-Platform.Intelligence/PanGu-Alpha/src/branch/master/PANGU-%ce%b1.pdf)]

### Use

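```python

from transformers import AutoTokenizer, AutoModelForCausalLM

# Load the tokenizer published with the model repository.
tokenizer = AutoTokenizer.from_pretrained("superqing/pangu-evolution")

# The repository ships custom model code (gpt_pangu), so trust_remote_code=True is needed here.
model = AutoModelForCausalLM.from_pretrained("superqing/pangu-evolution", trust_remote_code=True)

```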

|

asenella/reproduce_jmvae_seed_2

|