| modelId (string, lengths 5 to 139) | author (string, lengths 2 to 42) | last_modified (timestamp[us, tz=UTC], 2020-02-15 11:33:14 to 2025-08-27 00:39:58) | downloads (int64, 0 to 223M) | likes (int64, 0 to 11.7k) | library_name (string, 521 classes) | tags (list, lengths 1 to 4.05k) | pipeline_tag (string, 55 classes) | createdAt (timestamp[us, tz=UTC], 2022-03-02 23:29:04 to 2025-08-27 00:39:49) | card (string, lengths 11 to 1.01M) |
|---|---|---|---|---|---|---|---|---|---|

digiplay/majicMIX_realistic_v4

|

digiplay

| 2023-09-26T06:35:55Z | 665 | 6 |

diffusers

|

[

"diffusers",

"safetensors",

"stable-diffusion",

"stable-diffusion-diffusers",

"text-to-image",

"license:other",

"autotrain_compatible",

"endpoints_compatible",

"diffusers:StableDiffusionPipeline",

"region:us"

] |

text-to-image

| 2023-05-29T20:14:50Z |

---

license: other

tags:

- stable-diffusion

- stable-diffusion-diffusers

- text-to-image

- diffusers

inference: true

---

https://civitai.com/models/43331/majicmix-realistic

Sample image generated with Hugging Face's API:

|

AescF/hubert-base-ls960-finetuned-common_language

|

AescF

| 2023-09-26T06:31:48Z | 161 | 1 |

transformers

|

[

"transformers",

"pytorch",

"hubert",

"audio-classification",

"generated_from_trainer",

"dataset:common_language",

"base_model:facebook/hubert-base-ls960",

"base_model:finetune:facebook/hubert-base-ls960",

"license:apache-2.0",

"model-index",

"endpoints_compatible",

"region:us"

] |

audio-classification

| 2023-09-25T22:50:25Z |

---

license: apache-2.0

base_model: facebook/hubert-base-ls960

tags:

- generated_from_trainer

datasets:

- common_language

metrics:

- accuracy

model-index:

- name: hubert-base-ls960-finetuned-common_language-finetuned-common_language

results:

- task:

name: Audio Classification

type: audio-classification

dataset:

name: Common Language

type: common_language

config: full

split: test

args: full

metrics:

- name: Accuracy

type: accuracy

value: 0.8011068254234446

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# hubert-base-ls960-finetuned-common_language-finetuned-common_language

This model is a fine-tuned version of [facebook/hubert-base-ls960](https://huggingface.co/facebook/hubert-base-ls960) on the Common Language dataset.

It achieves the following results on the evaluation set:

- Loss: 1.4164

- Accuracy: 0.8011

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 4

- eval_batch_size: 4

- seed: 42

- gradient_accumulation_steps: 2

- total_train_batch_size: 8

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_ratio: 0.1

- num_epochs: 10
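
The relationship between the batch-size entries and the linear scheduler listed above can be sketched in a few lines. This is a minimal illustration, not the Trainer's actual code: the function names are hypothetical, a single device is assumed, the total of 27740 steps is taken from the last row of the training results table, and the Trainer's exact rounding of the warmup step count may differ.

```python
def effective_batch_size(per_device: int, grad_accum_steps: int, num_devices: int = 1) -> int:
    """Samples contributing to one optimizer update."""
    return per_device * grad_accum_steps * num_devices


def linear_lr(step: int, base_lr: float = 5e-5, total_steps: int = 27740,
              warmup_ratio: float = 0.1) -> float:
    """Linear warmup from 0 to base_lr, then linear decay back to 0."""
    warmup_steps = round(total_steps * warmup_ratio)  # assumption: simple rounding
    if step < warmup_steps:
        return base_lr * step / max(1, warmup_steps)
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


if __name__ == "__main__":
    # train_batch_size 4 with gradient_accumulation_steps 2
    print(effective_batch_size(4, 2))
    for s in (0, 1387, 2774, 27740):
        print(s, linear_lr(s))
```

With these values the warmup covers roughly the first epoch (2774 steps), after which the learning rate decays linearly to zero at the final step.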

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |

|:-------------:|:-----:|:-----:|:---------------:|:--------:|

| 2.9713 | 1.0 | 2774 | 3.0764 | 0.1615 |

| 1.7443 | 2.0 | 5549 | 1.8279 | 0.4734 |

| 1.1304 | 3.0 | 8323 | 1.3202 | 0.6371 |

| 1.2718 | 4.0 | 11098 | 1.1571 | 0.6968 |

| 0.769 | 5.0 | 13872 | 1.2917 | 0.7127 |

| 0.2656 | 6.0 | 16647 | 1.1549 | 0.7479 |

| 0.2939 | 7.0 | 19421 | 1.2372 | 0.7736 |

| 0.1278 | 8.0 | 22196 | 1.2985 | 0.7875 |

| 0.5175 | 9.0 | 24970 | 1.3664 | 0.7986 |

| 0.0547 | 10.0 | 27740 | 1.4164 | 0.8011 |

### Framework versions

- Transformers 4.33.2

- Pytorch 2.0.1+cu118

- Datasets 2.14.5

- Tokenizers 0.13.3

|

mikeee/llama-2-7b-nyt31k

|

mikeee

| 2023-09-26T06:29:32Z | 0 | 0 |

peft

|

[

"peft",

"region:us"

] | null | 2023-09-26T05:47:44Z |

---

library_name: peft

---

## Training procedure

The following `bitsandbytes` quantization config was used during training:

- quant_method: bitsandbytes

- load_in_8bit: False

- load_in_4bit: True

- llm_int8_threshold: 6.0

- llm_int8_skip_modules: None

- llm_int8_enable_fp32_cpu_offload: False

- llm_int8_has_fp16_weight: False

- bnb_4bit_quant_type: nf4

- bnb_4bit_use_double_quant: False

- bnb_4bit_compute_dtype: float16
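
For readers who want to reproduce this setup, the listed values map onto keyword arguments of `BitsAndBytesConfig` in `transformers`. The sketch below is an assumption about how the config could be rebuilt, not the author's training script; `BNB_KWARGS` and `build_quant_config` are hypothetical names, and the helper returns `None` when `transformers` or `torch` is not installed.

```python
import importlib.util

# Values copied from the list above; the dict name is hypothetical.
BNB_KWARGS = {
    "load_in_8bit": False,
    "load_in_4bit": True,
    "llm_int8_threshold": 6.0,
    "llm_int8_skip_modules": None,
    "llm_int8_enable_fp32_cpu_offload": False,
    "llm_int8_has_fp16_weight": False,
    "bnb_4bit_quant_type": "nf4",
    "bnb_4bit_use_double_quant": False,
    "bnb_4bit_compute_dtype": "float16",  # resolved to torch.float16 by the config class
}


def build_quant_config(kwargs=BNB_KWARGS):
    """Return a BitsAndBytesConfig, or None if the libraries are unavailable."""
    for pkg in ("transformers", "torch"):
        if importlib.util.find_spec(pkg) is None:
            return None
    from transformers import BitsAndBytesConfig
    return BitsAndBytesConfig(**kwargs)
```

The resulting object would typically be passed as `quantization_config=` to `from_pretrained` when loading the base model before attaching the PEFT adapter.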

### Framework versions

- PEFT 0.5.0

### Trained with

- https://huggingface.co/datasets/mikeee/en-zh-nyt31k

- 500 epochs

- Instruction Template

```

### Instruction:

Translate the following text to Chinese.

### Input:

{english}

### Response:

```
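
A prompt following the template above could be assembled as shown below. This is a small sketch: `TEMPLATE` and `build_prompt` are hypothetical names, and the exact blank-line placement between sections is an assumption, since the original whitespace is not recoverable from this card.

```python
# Hypothetical reconstruction of the instruction template; spacing is assumed.
TEMPLATE = (
    "### Instruction:\n"
    "Translate the following text to Chinese.\n\n"
    "### Input:\n"
    "{english}\n\n"
    "### Response:\n"
)


def build_prompt(english: str) -> str:
    """Fill the template with one English passage."""
    return TEMPLATE.format(english=english.strip())


if __name__ == "__main__":
    print(build_prompt("The weather is nice today."))
```

The model's generated text would then be read from whatever follows `### Response:`.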

|

jiang9527li/ppo-LunarLander-v2

|

jiang9527li

| 2023-09-26T06:26:59Z | 0 | 0 |

stable-baselines3

|

[

"stable-baselines3",

"LunarLander-v2",

"deep-reinforcement-learning",

"reinforcement-learning",

"model-index",

"region:us"

] |

reinforcement-learning

| 2023-09-26T06:26:18Z |

---

library_name: stable-baselines3

tags:

- LunarLander-v2

- deep-reinforcement-learning

- reinforcement-learning

- stable-baselines3

model-index:

- name: PPO

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: LunarLander-v2

type: LunarLander-v2

metrics:

- type: mean_reward

value: -55.30 +/- 36.06

name: mean_reward

verified: false

---

# **PPO** Agent playing **LunarLander-v2**

This is a trained model of a **PPO** agent playing **LunarLander-v2**

using the [stable-baselines3 library](https://github.com/DLR-RM/stable-baselines3).

## Usage (with Stable-baselines3)

TODO: Add your code

```python

from stable_baselines3 import ...

from huggingface_sb3 import load_from_hub

...

```

|

shrimantasatpati/lora-trained-xl-colab

|

shrimantasatpati

| 2023-09-26T06:25:27Z | 13 | 1 |

diffusers

|

[

"diffusers",

"tensorboard",

"stable-diffusion-xl",

"stable-diffusion-xl-diffusers",

"text-to-image",

"lora",

"base_model:stabilityai/stable-diffusion-xl-base-1.0",

"base_model:adapter:stabilityai/stable-diffusion-xl-base-1.0",

"license:openrail++",

"region:us"

] |

text-to-image

| 2023-08-24T08:16:42Z |

---

license: openrail++

base_model: stabilityai/stable-diffusion-xl-base-1.0

instance_prompt: a photo of WARLI painting

tags:

- stable-diffusion-xl

- stable-diffusion-xl-diffusers

- text-to-image

- diffusers

- lora

inference: true

---

# LoRA DreamBooth - shrimantasatpati/lora-trained-xl-colab

These are LoRA adaptation weights for stabilityai/stable-diffusion-xl-base-1.0. The weights were trained on a photo of WARLI painting using [DreamBooth](https://dreambooth.github.io/). You can find some example images in the following.

LoRA for the text encoder was enabled: False.

Special VAE used for training: madebyollin/sdxl-vae-fp16-fix.

|

NEU-HAI/mental-flan-t5-xxl

|

NEU-HAI

| 2023-09-26T06:18:24Z | 107 | 3 |

transformers

|

[

"transformers",

"pytorch",

"t5",

"text2text-generation",

"mental",

"mental health",

"large language model",

"flan-t5",

"en",

"arxiv:2307.14385",

"license:apache-2.0",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text2text-generation

| 2023-08-21T18:11:01Z |

---

license: apache-2.0

language:

- en

tags:

- mental

- mental health

- large language model

- flan-t5

---

# Model Card for mental-flan-t5-xxl

<!-- Provide a quick summary of what the model is/does. -->

This is a fine-tuned large language model for mental health prediction via online text data.

## Model Details

### Model Description

We fine-tuned a FLAN-T5-XXL model on 4 high-quality text datasets (6 tasks in total) for mental health prediction: Dreaddit, DepSeverity, SDCNL, and CCRS-Suicide.

We also provide a separate model fine-tuned on Alpaca, Mental-Alpaca, shared [here](https://huggingface.co/NEU-HAI/mental-alpaca).

- **Developed by:** Northeastern University Human-Centered AI Lab

- **Model type:** Sequence-to-sequence Text-generation

- **Language(s) (NLP):** English

- **License:** Apache 2.0 License

- **Finetuned from model:** [FLAN-T5-XXL](https://huggingface.co/google/flan-t5-xxl)

### Model Sources

<!-- Provide the basic links for the model. -->

- **Repository:** https://github.com/neuhai/Mental-LLM

- **Paper:** https://arxiv.org/abs/2307.14385

## Uses

<!-- Address questions around how the model is intended to be used, including the foreseeable users of the model and those affected by the model. -->

### Direct Use

The model is intended for research purposes only and for English text only.

The model has been fine-tuned for mental health prediction via online text data. Detailed information about the fine-tuning process and prompts can be found in our [paper](https://arxiv.org/abs/2307.14385).

Use of this model should also comply with the restrictions of [FLAN-T5-XXL](https://huggingface.co/google/flan-t5-xxl).

### Out-of-Scope Use

Out-of-scope uses of this model are the same as those listed for [FLAN-T5-XXL](https://huggingface.co/google/flan-t5-xxl).

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

The bias, risks, and limitations described for [FLAN-T5-XXL](https://huggingface.co/google/flan-t5-xxl) also apply to this model.

## How to Get Started with the Model

Use the code below to get started with the model.

```

from transformers import T5ForConditionalGeneration, T5Tokenizer

# Load the tokenizer and the fine-tuned model from the Hub.

tokenizer = T5Tokenizer.from_pretrained("NEU-HAI/mental-flan-t5-xxl")

model = T5ForConditionalGeneration.from_pretrained("NEU-HAI/mental-flan-t5-xxl")

```

## Training Details and Evaluation

Detailed information about our work can be found in our [paper](https://arxiv.org/abs/2307.14385).

## Citation

```

@article{xu2023leveraging,

title={Mental-LLM: Leveraging large language models for mental health prediction via online text data},

author={Xu, Xuhai and Yao, Bingshen and Dong, Yuanzhe and Gabriel, Saadia and Yu, Hong and Ghassemi, Marzyeh and Hendler, James and Dey, Anind K and Wang, Dakuo},

journal={arXiv preprint arXiv:2307.14385},

year={2023}

}

```

|

sarahnfdez/sarahsRLRepo

|

sarahnfdez

| 2023-09-26T06:13:41Z | 0 | 0 |

stable-baselines3

|

[

"stable-baselines3",

"LunarLander-v2",

"deep-reinforcement-learning",

"reinforcement-learning",

"model-index",

"region:us"

] |

reinforcement-learning

| 2023-09-26T02:54:25Z |

---

library_name: stable-baselines3

tags:

- LunarLander-v2

- deep-reinforcement-learning

- reinforcement-learning

- stable-baselines3

model-index:

- name: MlpPolicy

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: LunarLander-v2

type: LunarLander-v2

metrics:

- type: mean_reward

value: 264.56 +/- 13.29

name: mean_reward

verified: false

---

# **MlpPolicy** Agent playing **LunarLander-v2**

This is a trained model of a **MlpPolicy** agent playing **LunarLander-v2**

using the [stable-baselines3 library](https://github.com/DLR-RM/stable-baselines3).

## Usage (with Stable-baselines3)

TODO: Add your code

```python

from stable_baselines3 import ...

from huggingface_sb3 import load_from_hub

...

```

|

glens98/phi-1_5-finetuned-gsm8k

|

glens98

| 2023-09-26T06:07:07Z | 58 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"mixformer-sequential",

"text-generation",

"generated_from_trainer",

"custom_code",

"license:other",

"autotrain_compatible",

"region:us"

] |

text-generation

| 2023-09-26T05:10:26Z |

---

license: other

tags:

- generated_from_trainer

model-index:

- name: phi-1_5-finetuned-gsm8k

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# phi-1_5-finetuned-gsm8k

This model is a fine-tuned version of [microsoft/phi-1_5](https://huggingface.co/microsoft/phi-1_5) on an unspecified dataset.

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0002

- train_batch_size: 4

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: cosine

- training_steps: 1000
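
To make the `cosine` scheduler entry concrete, the sketch below computes the learning rate over the 1000 training steps using the common half-cosine decay formula. It is an illustration under assumptions: the function name is hypothetical, and `warmup_steps=0` is assumed because the card lists no warmup setting.

```python
import math


def cosine_lr(step: int, base_lr: float = 2e-4, total_steps: int = 1000,
              warmup_steps: int = 0) -> float:
    """Half-cosine decay from base_lr to 0, with optional linear warmup."""
    if step < warmup_steps:
        return base_lr * step / max(1, warmup_steps)
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    return base_lr * max(0.0, 0.5 * (1.0 + math.cos(math.pi * progress)))


if __name__ == "__main__":
    for s in (0, 250, 500, 750, 1000):
        print(s, cosine_lr(s))
```

Under these assumptions the rate starts at 0.0002, passes through half that value at step 500, and reaches zero at step 1000.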

### Training results

### Framework versions

- Transformers 4.30.0

- Pytorch 2.0.1+cu118

- Datasets 2.14.5

- Tokenizers 0.13.3

|

rooftopcoder/t5-small-coqa

|

rooftopcoder

| 2023-09-26T05:52:24Z | 24 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"t5",

"text2text-generation",

"generated_from_trainer",

"base_model:google-t5/t5-small",

"base_model:finetune:google-t5/t5-small",

"license:apache-2.0",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text2text-generation

| 2023-05-12T08:19:34Z |

---

license: apache-2.0

tags:

- generated_from_trainer

metrics:

- accuracy

- f1

base_model: t5-small

model-index:

- name: t5-small-coqa

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# t5-small-coqa

This model is a fine-tuned version of [t5-small](https://huggingface.co/t5-small) on an unknown dataset.

It achieves the following results on the evaluation set:

- Loss: 1.0055

- Accuracy: 0.0777

- F1: 0.0501

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 64

- eval_batch_size: 32

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: cosine

- num_epochs: 3.0

### Training results

### Framework versions

- Transformers 4.29.1

- Pytorch 2.0.0

- Datasets 2.1.0

- Tokenizers 0.13.3

|

rooftopcoder/long-t5-tglobal-base-16384-book-summary-finetuned-dialogsum

|

rooftopcoder

| 2023-09-26T05:52:16Z | 108 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"longt5",

"text2text-generation",

"generated_from_trainer",

"base_model:pszemraj/long-t5-tglobal-base-16384-book-summary",

"base_model:finetune:pszemraj/long-t5-tglobal-base-16384-book-summary",

"license:bsd-3-clause",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text2text-generation

| 2023-02-15T16:11:04Z |

---

license: bsd-3-clause

tags:

- generated_from_trainer

metrics:

- rouge

base_model: pszemraj/long-t5-tglobal-base-16384-book-summary

model-index:

- name: long-t5-tglobal-base-16384-book-summary-finetuned-dialogsum

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# long-t5-tglobal-base-16384-book-summary-finetuned-dialogsum

This model is a fine-tuned version of [pszemraj/long-t5-tglobal-base-16384-book-summary](https://huggingface.co/pszemraj/long-t5-tglobal-base-16384-book-summary) on an unknown dataset.

It achieves the following results on the evaluation set:

- Loss: nan

- Rouge1: 0.0

- Rouge2: 0.0

- Rougel: 0.0

- Rougelsum: 0.0

- Gen Len: 2.0

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0003

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 2

- mixed_precision_training: Native AMP

### Training results

| Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | Gen Len |

|:-------------:|:-----:|:----:|:---------------:|:-------:|:------:|:------:|:---------:|:-------:|

| 0.0 | 1.0 | 3115 | nan | 25.3388 | 5.7186 | 18.439 | 21.6766 | 53.338 |

| 0.0 | 2.0 | 6230 | nan | 0.0 | 0.0 | 0.0 | 0.0 | 2.0 |

### Framework versions

- Transformers 4.20.1

- Pytorch 1.11.0

- Datasets 2.1.0

- Tokenizers 0.12.1

|

prateeky2806/bert-base-uncased-mnli-lora-epochs-2-lr-0.001

|

prateeky2806

| 2023-09-26T05:50:06Z | 0 | 0 | null |

[

"safetensors",

"generated_from_trainer",

"dataset:glue",

"base_model:google-bert/bert-base-uncased",

"base_model:finetune:google-bert/bert-base-uncased",

"license:apache-2.0",

"region:us"

] | null | 2023-09-26T01:34:12Z |

---

license: apache-2.0

base_model: bert-base-uncased

tags:

- generated_from_trainer

datasets:

- glue

metrics:

- accuracy

model-index:

- name: bert-base-uncased-mnli-lora-epochs-2-lr-0.001

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# bert-base-uncased-mnli-lora-epochs-2-lr-0.001

This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on the glue dataset.

It achieves the following results on the evaluation set:

- Loss: 0.3961

- Accuracy: 0.85

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.001

- train_batch_size: 32

- eval_batch_size: 32

- seed: 28

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_ratio: 0.06

- num_epochs: 2

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |

|:-------------:|:-----:|:-----:|:---------------:|:--------:|

| 0.6399 | 1.0 | 12269 | 0.4920 | 0.76 |

| 0.4959 | 2.0 | 24538 | 0.3961 | 0.85 |

### Framework versions

- Transformers 4.32.0.dev0

- Pytorch 2.0.1

- Datasets 2.14.4

- Tokenizers 0.13.3

|

CyberHarem/wakana_shiki_lovelivesuperstar

|

CyberHarem

| 2023-09-26T05:43:31Z | 0 | 0 | null |

[

"art",

"text-to-image",

"dataset:CyberHarem/wakana_shiki_lovelivesuperstar",

"license:mit",

"region:us"

] |

text-to-image

| 2023-09-26T05:32:36Z |

---

license: mit

datasets:

- CyberHarem/wakana_shiki_lovelivesuperstar

pipeline_tag: text-to-image

tags:

- art

---

# Lora of wakana_shiki_lovelivesuperstar

This model was trained with [HCP-Diffusion](https://github.com/7eu7d7/HCP-Diffusion). The auto-training framework is maintained by the [DeepGHS Team](https://huggingface.co/deepghs).

The base model used during training is [NAI](https://huggingface.co/deepghs/animefull-latest), and the base model used for generating preview images is [Meina/MeinaMix_V11](https://huggingface.co/Meina/MeinaMix_V11).

After downloading the pt and safetensors files for the specified step, you need to use them simultaneously. The pt file will be used as an embedding, while the safetensors file will be loaded for Lora.

For example, if you want to use the model from step 6600, you need to download `6600/wakana_shiki_lovelivesuperstar.pt` as the embedding and `6600/wakana_shiki_lovelivesuperstar.safetensors` for loading Lora. By using both files together, you can generate images for the desired characters.

**The best step we recommend is 6600**, with a score of 0.988. The trigger words are:

1. `wakana_shiki_lovelivesuperstar`

2. `blue_hair, short_hair, bangs, hair_between_eyes, blush, jewelry, earrings, ribbon, red_ribbon, neck_ribbon, breasts`

We do not recommend this model for the following groups, and we regret any inconvenience:

1. Individuals who cannot tolerate any deviation from the original character design, even in the slightest detail.

2. Individuals whose application scenarios demand high accuracy in recreating character outfits.

3. Individuals who cannot accept the potential randomness of AI-generated images based on the Stable Diffusion algorithm.

4. Individuals who are not comfortable with the fully automated process of training character models using LoRA, or who believe that character models must be trained purely through manual operations to avoid disrespecting the characters.

5. Individuals who find the generated image content offensive to their values.

These are available steps:

| Steps | Score | Download | pattern_1 | pattern_2 | pattern_3 | pattern_4 | pattern_5 | pattern_6 | bikini | bondage | free | maid | miko | nude | nude2 | suit | yukata |

|:---------|:----------|:--------------------------------------------------------|:----------------------------------------------------|:-----------------------------------------------|:-----------------------------------------------|:-----------------------------------------------|:-----------------------------------------------|:-----------------------------------------------|:-------------------------------------------------|:--------------------------------------------------|:-------------------------------------|:-------------------------------------|:-------------------------------------|:-----------------------------------------------|:------------------------------------------------|:-------------------------------------|:-----------------------------------------|

| **6600** | **0.988** | [**Download**](6600/wakana_shiki_lovelivesuperstar.zip) | [<NSFW, click to see>](6600/previews/pattern_1.png) |  |  |  |  |  | [<NSFW, click to see>](6600/previews/bikini.png) | [<NSFW, click to see>](6600/previews/bondage.png) |  |  |  | [<NSFW, click to see>](6600/previews/nude.png) | [<NSFW, click to see>](6600/previews/nude2.png) |  |  |

| 6160 | 0.911 | [Download](6160/wakana_shiki_lovelivesuperstar.zip) | [<NSFW, click to see>](6160/previews/pattern_1.png) |  |  |  |  |  | [<NSFW, click to see>](6160/previews/bikini.png) | [<NSFW, click to see>](6160/previews/bondage.png) |  |  |  | [<NSFW, click to see>](6160/previews/nude.png) | [<NSFW, click to see>](6160/previews/nude2.png) |  |  |

| 5720 | 0.902 | [Download](5720/wakana_shiki_lovelivesuperstar.zip) | [<NSFW, click to see>](5720/previews/pattern_1.png) |  |  |  |  |  | [<NSFW, click to see>](5720/previews/bikini.png) | [<NSFW, click to see>](5720/previews/bondage.png) |  |  |  | [<NSFW, click to see>](5720/previews/nude.png) | [<NSFW, click to see>](5720/previews/nude2.png) |  |  |

| 5280 | 0.916 | [Download](5280/wakana_shiki_lovelivesuperstar.zip) | [<NSFW, click to see>](5280/previews/pattern_1.png) |  |  |  |  |  | [<NSFW, click to see>](5280/previews/bikini.png) | [<NSFW, click to see>](5280/previews/bondage.png) |  |  |  | [<NSFW, click to see>](5280/previews/nude.png) | [<NSFW, click to see>](5280/previews/nude2.png) |  |  |

| 4840 | 0.986 | [Download](4840/wakana_shiki_lovelivesuperstar.zip) | [<NSFW, click to see>](4840/previews/pattern_1.png) |  |  |  |  |  | [<NSFW, click to see>](4840/previews/bikini.png) | [<NSFW, click to see>](4840/previews/bondage.png) |  |  |  | [<NSFW, click to see>](4840/previews/nude.png) | [<NSFW, click to see>](4840/previews/nude2.png) |  |  |

| 4400 | 0.975 | [Download](4400/wakana_shiki_lovelivesuperstar.zip) | [<NSFW, click to see>](4400/previews/pattern_1.png) |  |  |  |  |  | [<NSFW, click to see>](4400/previews/bikini.png) | [<NSFW, click to see>](4400/previews/bondage.png) |  |  |  | [<NSFW, click to see>](4400/previews/nude.png) | [<NSFW, click to see>](4400/previews/nude2.png) |  |  |

| 3960 | 0.840 | [Download](3960/wakana_shiki_lovelivesuperstar.zip) | [<NSFW, click to see>](3960/previews/pattern_1.png) |  |  |  |  |  | [<NSFW, click to see>](3960/previews/bikini.png) | [<NSFW, click to see>](3960/previews/bondage.png) |  |  |  | [<NSFW, click to see>](3960/previews/nude.png) | [<NSFW, click to see>](3960/previews/nude2.png) |  |  |

| 3520 | 0.852 | [Download](3520/wakana_shiki_lovelivesuperstar.zip) | [<NSFW, click to see>](3520/previews/pattern_1.png) |  |  |  |  |  | [<NSFW, click to see>](3520/previews/bikini.png) | [<NSFW, click to see>](3520/previews/bondage.png) |  |  |  | [<NSFW, click to see>](3520/previews/nude.png) | [<NSFW, click to see>](3520/previews/nude2.png) |  |  |

| 3080 | 0.920 | [Download](3080/wakana_shiki_lovelivesuperstar.zip) | [<NSFW, click to see>](3080/previews/pattern_1.png) |  |  |  |  |  | [<NSFW, click to see>](3080/previews/bikini.png) | [<NSFW, click to see>](3080/previews/bondage.png) |  |  |  | [<NSFW, click to see>](3080/previews/nude.png) | [<NSFW, click to see>](3080/previews/nude2.png) |  |  |

| 2640 | 0.920 | [Download](2640/wakana_shiki_lovelivesuperstar.zip) | [<NSFW, click to see>](2640/previews/pattern_1.png) |  |  |  |  |  | [<NSFW, click to see>](2640/previews/bikini.png) | [<NSFW, click to see>](2640/previews/bondage.png) |  |  |  | [<NSFW, click to see>](2640/previews/nude.png) | [<NSFW, click to see>](2640/previews/nude2.png) |  |  |

| 2200 | 0.920 | [Download](2200/wakana_shiki_lovelivesuperstar.zip) | [<NSFW, click to see>](2200/previews/pattern_1.png) |  |  |  |  |  | [<NSFW, click to see>](2200/previews/bikini.png) | [<NSFW, click to see>](2200/previews/bondage.png) |  |  |  | [<NSFW, click to see>](2200/previews/nude.png) | [<NSFW, click to see>](2200/previews/nude2.png) |  |  |

| 1760 | 0.910 | [Download](1760/wakana_shiki_lovelivesuperstar.zip) | [<NSFW, click to see>](1760/previews/pattern_1.png) |  |  |  |  |  | [<NSFW, click to see>](1760/previews/bikini.png) | [<NSFW, click to see>](1760/previews/bondage.png) |  |  |  | [<NSFW, click to see>](1760/previews/nude.png) | [<NSFW, click to see>](1760/previews/nude2.png) |  |  |

| 1320 | 0.914 | [Download](1320/wakana_shiki_lovelivesuperstar.zip) | [<NSFW, click to see>](1320/previews/pattern_1.png) |  |  |  |  |  | [<NSFW, click to see>](1320/previews/bikini.png) | [<NSFW, click to see>](1320/previews/bondage.png) |  |  |  | [<NSFW, click to see>](1320/previews/nude.png) | [<NSFW, click to see>](1320/previews/nude2.png) |  |  |

| 880 | 0.910 | [Download](880/wakana_shiki_lovelivesuperstar.zip) | [<NSFW, click to see>](880/previews/pattern_1.png) |  |  |  |  |  | [<NSFW, click to see>](880/previews/bikini.png) | [<NSFW, click to see>](880/previews/bondage.png) |  |  |  | [<NSFW, click to see>](880/previews/nude.png) | [<NSFW, click to see>](880/previews/nude2.png) |  |  |

| 440 | 0.894 | [Download](440/wakana_shiki_lovelivesuperstar.zip) | [<NSFW, click to see>](440/previews/pattern_1.png) |  |  |  |  |  | [<NSFW, click to see>](440/previews/bikini.png) | [<NSFW, click to see>](440/previews/bondage.png) |  |  |  | [<NSFW, click to see>](440/previews/nude.png) | [<NSFW, click to see>](440/previews/nude2.png) |  |  |
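
The step and score columns of the table above can be ranked programmatically to confirm the recommended checkpoint and derive the two files it needs. This is a sketch only: the helper names are hypothetical, and breaking ties in favor of the later step is an assumption, not something the card states.

```python
# Step -> score pairs copied from the table above.
STEP_SCORES = {
    6600: 0.988, 6160: 0.911, 5720: 0.902, 5280: 0.916, 4840: 0.986,
    4400: 0.975, 3960: 0.840, 3520: 0.852, 3080: 0.920, 2640: 0.920,
    2200: 0.920, 1760: 0.910, 1320: 0.914, 880: 0.910, 440: 0.894,
}
NAME = "wakana_shiki_lovelivesuperstar"


def best_step(scores: dict) -> int:
    """Highest-scoring step; ties go to the later step (assumed rule)."""
    return max(scores, key=lambda step: (scores[step], step))


def files_for_step(step: int, name: str = NAME) -> tuple:
    """Relative paths of the embedding (.pt) and LoRA (.safetensors) files."""
    return (f"{step}/{name}.pt", f"{step}/{name}.safetensors")


if __name__ == "__main__":
    step = best_step(STEP_SCORES)
    print(step, files_for_step(step))
```

Both returned files must be used together: the `.pt` file as the embedding and the `.safetensors` file as the LoRA weights.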

|

Ori/lama-2-13b-peft-mh-ret-mix-v2-seed-2

|

Ori

| 2023-09-26T05:25:29Z | 1 | 0 |

peft

|

[

"peft",

"safetensors",

"region:us"

] | null | 2023-09-26T05:24:12Z |

---

library_name: peft

---

## Training procedure

### Framework versions

- PEFT 0.5.0.dev0

|

hellomyoh/llama2-2b-s117755-v1

|

hellomyoh

| 2023-09-26T05:08:38Z | 1 | 0 |

peft

|

[

"peft",

"tensorboard",

"region:us"

] | null | 2023-09-22T15:22:03Z |

---

library_name: peft

---

## Training procedure

The following `bitsandbytes` quantization config was used during training:

- quant_method: bitsandbytes

- load_in_8bit: False

- load_in_4bit: True

- llm_int8_threshold: 6.0

- llm_int8_skip_modules: None

- llm_int8_enable_fp32_cpu_offload: False

- llm_int8_has_fp16_weight: False

- bnb_4bit_quant_type: nf4

- bnb_4bit_use_double_quant: False

- bnb_4bit_compute_dtype: float16

### Framework versions

- PEFT 0.6.0.dev0

|

BrianDsouzaAI/autotrain-even_better-91480144518

|

BrianDsouzaAI

| 2023-09-26T05:06:08Z | 107 | 0 |

transformers

|

[

"transformers",

"pytorch",

"safetensors",

"deberta",

"text-classification",

"autotrain",

"en",

"dataset:BrianDsouzaAI/autotrain-data-even_better",

"co2_eq_emissions",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2023-09-26T05:04:57Z |

---

tags:

- autotrain

- text-classification

language:

- en

widget:

- text: "I love AutoTrain"

datasets:

- BrianDsouzaAI/autotrain-data-even_better

co2_eq_emissions:

emissions: 0.387970627555954

---

# Model Trained Using AutoTrain

- Problem type: Multi-class Classification

- Model ID: 91480144518

- CO2 Emissions (in grams): 0.3880

## Validation Metrics

- Loss: 0.738

- Accuracy: 0.667

- Macro F1: 0.456

- Micro F1: 0.667

- Weighted F1: 0.648

- Macro Precision: 0.442

- Micro Precision: 0.667

- Weighted Precision: 0.632

- Macro Recall: 0.471

- Micro Recall: 0.667

- Weighted Recall: 0.667
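
In the metrics above, the micro-averaged scores all equal the accuracy (0.667), while the macro-averaged scores are lower. That pattern is expected for single-label multi-class classification, and the sketch below shows why on a toy confusion matrix. The matrix is invented purely for illustration and is not this model's data; the function name is hypothetical.

```python
def f1_scores(confusion):
    """Return (macro_f1, micro_f1, accuracy).

    confusion[i][j] is the count of samples with true class i predicted as j.
    """
    n = len(confusion)
    tp = [confusion[i][i] for i in range(n)]
    fp = [sum(confusion[r][i] for r in range(n)) - tp[i] for i in range(n)]
    fn = [sum(confusion[i]) - tp[i] for i in range(n)]

    def f1(t, p, q):
        denom = 2 * t + p + q
        return 2 * t / denom if denom else 0.0

    macro = sum(f1(tp[i], fp[i], fn[i]) for i in range(n)) / n
    micro = f1(sum(tp), sum(fp), sum(fn))
    accuracy = sum(tp) / sum(sum(row) for row in confusion)
    return macro, micro, accuracy


if __name__ == "__main__":
    # Invented example: the third class is never predicted correctly.
    toy = [[4, 1, 0],
           [1, 3, 0],
           [1, 1, 0]]
    print(f1_scores(toy))
```

Because every misclassification counts once as a false positive and once as a false negative, micro F1 collapses to accuracy, whereas macro F1 weights each class equally and is pulled down by a poorly handled class.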

## Usage

You can use cURL to access this model:

```

$ curl -X POST -H "Authorization: Bearer YOUR_API_KEY" -H "Content-Type: application/json" -d '{"inputs": "I love AutoTrain"}' https://api-inference.huggingface.co/models/BrianDsouzaAI/autotrain-even_better-91480144518

```

Or Python API:

```

from transformers import AutoModelForSequenceClassification, AutoTokenizer

model = AutoModelForSequenceClassification.from_pretrained("BrianDsouzaAI/autotrain-even_better-91480144518", use_auth_token=True)

tokenizer = AutoTokenizer.from_pretrained("BrianDsouzaAI/autotrain-even_better-91480144518", use_auth_token=True)

inputs = tokenizer("I love AutoTrain", return_tensors="pt")

outputs = model(**inputs)

```

|

dipplestix/Reinforce-cart_pole

|

dipplestix

| 2023-09-26T05:01:46Z | 0 | 0 | null |

[

"CartPole-v1",

"reinforce",

"reinforcement-learning",

"custom-implementation",

"deep-rl-class",

"model-index",

"region:us"

] |

reinforcement-learning

| 2023-09-26T05:01:37Z |

---

tags:

- CartPole-v1

- reinforce

- reinforcement-learning

- custom-implementation

- deep-rl-class

model-index:

- name: Reinforce-cart_pole

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: CartPole-v1

type: CartPole-v1

metrics:

- type: mean_reward

value: 500.00 +/- 0.00

name: mean_reward

verified: false

---

# **Reinforce** Agent playing **CartPole-v1**

This is a trained model of a **Reinforce** agent playing **CartPole-v1**.

To learn how to use this model and train your own, see Unit 4 of the Deep Reinforcement Learning Course: https://huggingface.co/deep-rl-course/unit4/introduction

|

nc33/3label_model

|

nc33

| 2023-09-26T05:00:49Z | 107 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"safetensors",

"deberta-v2",

"text-classification",

"generated_from_trainer",

"base_model:microsoft/deberta-v3-base",

"base_model:finetune:microsoft/deberta-v3-base",

"license:mit",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2023-01-11T04:26:28Z |

---

license: mit

tags:

- generated_from_trainer

metrics:

- accuracy

base_model: microsoft/deberta-v3-base

model-index:

- name: 3label_model

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# 3label_model

This model is a fine-tuned version of [microsoft/deberta-v3-base](https://huggingface.co/microsoft/deberta-v3-base) on an unknown dataset.

It achieves the following results on the evaluation set:

- Loss: 0.3920

- Accuracy: 0.8520

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 2

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:--------:|

| 0.6073 | 1.0 | 707 | 0.3921 | 0.8343 |

| 0.3319 | 2.0 | 1414 | 0.3920 | 0.8520 |

### Framework versions

- Transformers 4.25.1

- Pytorch 1.13.0+cu116

- Datasets 2.8.0

- Tokenizers 0.13.2

|

nc33/yes_no_qna_deberta_model

|

nc33

| 2023-09-26T04:59:40Z | 302 | 2 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"deberta-v2",

"text-classification",

"generated_from_trainer",

"dataset:super_glue",

"base_model:microsoft/deberta-v3-base",

"base_model:finetune:microsoft/deberta-v3-base",

"license:mit",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-12-30T14:50:14Z |

---

license: mit

tags:

- generated_from_trainer

datasets:

- super_glue

metrics:

- accuracy

base_model: microsoft/deberta-v3-base

model-index:

- name: yes_no_qna_deberta_model

results:

- task:

type: text-classification

name: Text Classification

dataset:

name: super_glue

type: super_glue

config: boolq

split: train

args: boolq

metrics:

- type: accuracy

value: 0.8507645259938837

name: Accuracy

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# yes_no_qna_deberta_model

This model is a fine-tuned version of [microsoft/deberta-v3-base](https://huggingface.co/microsoft/deberta-v3-base) on the super_glue dataset.

It achieves the following results on the evaluation set:

- Loss: 0.5570

- Accuracy: 0.8508

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:--------:|

| 0.583 | 1.0 | 590 | 0.4086 | 0.8251 |

| 0.348 | 2.0 | 1180 | 0.4170 | 0.8465 |

| 0.2183 | 3.0 | 1770 | 0.5570 | 0.8508 |

### Framework versions

- Transformers 4.25.1

- Pytorch 1.13.0+cu116

- Datasets 2.8.0

- Tokenizers 0.13.2

|

vstudent/bert-finetuned-ner

|

vstudent

| 2023-09-26T04:58:20Z | 61 | 0 |

transformers

|

[

"transformers",

"tf",

"bert",

"token-classification",

"generated_from_keras_callback",

"base_model:google-bert/bert-base-cased",

"base_model:finetune:google-bert/bert-base-cased",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

token-classification

| 2023-09-26T03:00:26Z |

---

license: apache-2.0

base_model: bert-base-cased

tags:

- generated_from_keras_callback

model-index:

- name: vstudent/bert-finetuned-ner

results: []

---

<!-- This model card has been generated automatically according to the information Keras had access to. You should

probably proofread and complete it, then remove this comment. -->

# vstudent/bert-finetuned-ner

This model is a fine-tuned version of [bert-base-cased](https://huggingface.co/bert-base-cased) on an unknown dataset.

It achieves the following results on the evaluation set:

- Train Loss: 0.0280

- Validation Loss: 0.0538

- Epoch: 2

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- optimizer: {'name': 'AdamWeightDecay', 'learning_rate': {'module': 'keras.optimizers.schedules', 'class_name': 'PolynomialDecay', 'config': {'initial_learning_rate': 2e-05, 'decay_steps': 2634, 'end_learning_rate': 0.0, 'power': 1.0, 'cycle': False, 'name': None}, 'registered_name': None}, 'decay': 0.0, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False, 'weight_decay_rate': 0.01}

- training_precision: mixed_float16
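
The optimizer entry above describes a `PolynomialDecay` schedule with `power` 1.0, which amounts to a straight line from the initial to the end learning rate. As a rough sketch of what that schedule computes (a plain-Python restatement, not the Keras implementation itself), using the values listed in the card as defaults:

```python
def polynomial_decay(
    step: int,
    initial_lr: float = 2e-05,
    end_lr: float = 0.0,
    decay_steps: int = 2634,
    power: float = 1.0,
) -> float:
    """Learning rate at a given step for a non-cycling polynomial decay."""
    # With cycle=False the schedule stays at end_lr once decay_steps is reached.
    step = min(step, decay_steps)
    fraction = 1.0 - step / decay_steps
    return (initial_lr - end_lr) * fraction ** power + end_lr
```

Calling `polynomial_decay(0)` gives the initial rate and `polynomial_decay(2634)` gives the end rate; values in between fall on the line connecting them.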

### Training results

| Train Loss | Validation Loss | Epoch |

|:----------:|:---------------:|:-----:|

| 0.1764 | 0.0653 | 0 |

| 0.0482 | 0.0571 | 1 |

| 0.0280 | 0.0538 | 2 |

### Framework versions

- Transformers 4.33.2

- TensorFlow 2.13.0

- Datasets 2.14.5

- Tokenizers 0.13.3

|

prateeky2806/bert-base-uncased-qqp-lora-epochs-2-lr-0.0005

|

prateeky2806

| 2023-09-26T04:50:50Z | 0 | 0 | null |

[

"safetensors",

"generated_from_trainer",

"dataset:glue",

"base_model:google-bert/bert-base-uncased",

"base_model:finetune:google-bert/bert-base-uncased",

"license:apache-2.0",

"region:us"

] | null | 2023-09-26T03:20:04Z |

---

license: apache-2.0

base_model: bert-base-uncased

tags:

- generated_from_trainer

datasets:

- glue

metrics:

- accuracy

- f1

model-index:

- name: bert-base-uncased-qqp-lora-epochs-2-lr-0.0005

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# bert-base-uncased-qqp-lora-epochs-2-lr-0.0005

This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on the glue dataset.

It achieves the following results on the evaluation set:

- Loss: 0.1329

- Accuracy: 0.95

- F1: 0.9333

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0005

- train_batch_size: 32

- eval_batch_size: 32

- seed: 28

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_ratio: 0.06

- num_epochs: 2
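
To make the `linear` scheduler and the warmup ratio concrete, here is a small sketch of a linear schedule with warmup in plain Python. It is an illustration under assumptions: the function name is hypothetical, and the total of 22736 steps used in the note below is read from the final row of the results table rather than from any training script.

```python
import math


def linear_lr_with_warmup(
    step: int, total_steps: int, base_lr: float, warmup_ratio: float
) -> float:
    """Ramp linearly from 0 to base_lr during warmup, then decay linearly to 0."""
    warmup_steps = math.ceil(total_steps * warmup_ratio)
    if step < warmup_steps:
        return base_lr * step / max(1, warmup_steps)
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)
```

With `total_steps=22736`, `base_lr=0.0005`, and `warmup_ratio=0.06`, the warmup under these assumptions lasts 1365 steps, after which the rate falls linearly to zero at the last step.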

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 |

|:-------------:|:-----:|:-----:|:---------------:|:--------:|:------:|

| 0.2986 | 1.0 | 11368 | 0.1556 | 0.94 | 0.9189 |

| 0.238 | 2.0 | 22736 | 0.1329 | 0.95 | 0.9333 |

### Framework versions

- Transformers 4.32.0.dev0

- Pytorch 2.0.1

- Datasets 2.14.4

- Tokenizers 0.13.3

|

luonghuuthanhnam5/Llama-2-7b-chat-hf-fine-tuned-adapters

|

luonghuuthanhnam5

| 2023-09-26T04:44:57Z | 0 | 0 |

peft

|

[

"peft",

"llama",

"region:us"

] | null | 2023-09-19T05:08:55Z |

---

library_name: peft

---

## Training procedure

The following `bitsandbytes` quantization config was used during training:

- load_in_8bit: False

- load_in_4bit: True

- llm_int8_threshold: 6.0

- llm_int8_skip_modules: None

- llm_int8_enable_fp32_cpu_offload: False

- llm_int8_has_fp16_weight: False

- bnb_4bit_quant_type: nf4

- bnb_4bit_use_double_quant: True

- bnb_4bit_compute_dtype: bfloat16
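
For readers who want to reproduce a comparable setup, the listed 4-bit settings could be expressed roughly as follows. This is a sketch, not the author's code: the helper names are hypothetical, only the non-default options from the list are passed, and the heavy imports are deferred into the builder so the settings can be inspected without `torch` or `transformers` installed.

```python
def quantization_kwargs() -> dict:
    """The 4-bit options from the card, with the dtype kept as a string."""
    return {
        "load_in_4bit": True,
        "bnb_4bit_quant_type": "nf4",
        "bnb_4bit_use_double_quant": True,
        "bnb_4bit_compute_dtype": "bfloat16",
    }


def build_bnb_config():
    """Build a transformers BitsAndBytesConfig from the settings above."""
    # Imported lazily: both packages are assumed to be installed when this runs.
    import torch
    from transformers import BitsAndBytesConfig

    kwargs = quantization_kwargs()
    # Resolve the dtype name to the torch dtype object (torch.bfloat16).
    kwargs["bnb_4bit_compute_dtype"] = getattr(torch, kwargs["bnb_4bit_compute_dtype"])
    return BitsAndBytesConfig(**kwargs)
```

The resulting object would typically be passed as `quantization_config` when loading the base model before attaching the adapter.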

### Framework versions

- PEFT 0.6.0.dev0

|

prateeky2806/bert-base-uncased-wnli-epochs-10-lr-0.0001

|

prateeky2806

| 2023-09-26T04:39:45Z | 108 | 0 |

transformers

|

[

"transformers",

"safetensors",

"bert",

"text-classification",

"generated_from_trainer",

"dataset:glue",

"base_model:google-bert/bert-base-uncased",

"base_model:finetune:google-bert/bert-base-uncased",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2023-09-26T01:23:40Z |

---

license: apache-2.0

base_model: bert-base-uncased

tags:

- generated_from_trainer

datasets:

- glue

metrics:

- accuracy

model-index:

- name: bert-base-uncased-wnli-epochs-10-lr-0.0001

results:

- task:

name: Text Classification

type: text-classification

dataset:

name: glue

type: glue

config: wnli

split: train

args: wnli

metrics:

- name: Accuracy

type: accuracy

value: 0.36

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# bert-base-uncased-wnli-epochs-10-lr-0.0001

This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on the glue dataset.

It achieves the following results on the evaluation set:

- Loss: 0.6970

- Accuracy: 0.36

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0001

- train_batch_size: 32

- eval_batch_size: 32

- seed: 28

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_ratio: 0.06

- num_epochs: 10

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:--------:|

| No log | 1.0 | 17 | 0.7353 | 0.35 |

| No log | 2.0 | 34 | 0.7247 | 0.43 |

| No log | 3.0 | 51 | 0.7062 | 0.43 |

| No log | 4.0 | 68 | 0.6870 | 0.57 |

| No log | 5.0 | 85 | 0.6935 | 0.47 |

| No log | 6.0 | 102 | 0.6891 | 0.57 |

| No log | 7.0 | 119 | 0.6949 | 0.44 |

| No log | 8.0 | 136 | 0.6996 | 0.43 |

| No log | 9.0 | 153 | 0.6981 | 0.42 |

| No log | 10.0 | 170 | 0.6970 | 0.36 |

### Framework versions

- Transformers 4.32.0.dev0

- Pytorch 2.0.1

- Datasets 2.14.4

- Tokenizers 0.13.3

|

prateeky2806/bert-base-uncased-wnli-ia3-epochs-10-lr-5e-05

|

prateeky2806

| 2023-09-26T04:36:21Z | 0 | 0 | null |

[

"safetensors",

"generated_from_trainer",

"dataset:glue",

"base_model:google-bert/bert-base-uncased",

"base_model:finetune:google-bert/bert-base-uncased",

"license:apache-2.0",

"region:us"

] | null | 2023-09-26T01:22:17Z |

---

license: apache-2.0

base_model: bert-base-uncased

tags:

- generated_from_trainer

datasets:

- glue

metrics:

- accuracy

model-index:

- name: bert-base-uncased-wnli-ia3-epochs-10-lr-5e-05

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# bert-base-uncased-wnli-ia3-epochs-10-lr-5e-05

This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on the glue dataset.

It achieves the following results on the evaluation set:

- Loss: 0.6952

- Accuracy: 0.48

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 32

- eval_batch_size: 32

- seed: 28

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_ratio: 0.06

- num_epochs: 10

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:--------:|

| No log | 1.0 | 17 | 0.6792 | 0.57 |

| No log | 2.0 | 34 | 0.6907 | 0.57 |

| No log | 3.0 | 51 | 0.7020 | 0.43 |

| No log | 4.0 | 68 | 0.7008 | 0.44 |

| No log | 5.0 | 85 | 0.6960 | 0.48 |

| No log | 6.0 | 102 | 0.6953 | 0.47 |

| No log | 7.0 | 119 | 0.6933 | 0.5 |

| No log | 8.0 | 136 | 0.6944 | 0.5 |

| No log | 9.0 | 153 | 0.6952 | 0.48 |

| No log | 10.0 | 170 | 0.6952 | 0.48 |

### Framework versions

- Transformers 4.32.0.dev0

- Pytorch 2.0.1

- Datasets 2.14.4

- Tokenizers 0.13.3

|

prateeky2806/bert-base-uncased-wnli-lora-epochs-10-lr-1e-06

|

prateeky2806

| 2023-09-26T04:32:12Z | 0 | 0 | null |

[

"safetensors",

"generated_from_trainer",

"dataset:glue",

"base_model:google-bert/bert-base-uncased",

"base_model:finetune:google-bert/bert-base-uncased",

"license:apache-2.0",

"region:us"

] | null | 2023-09-26T01:21:25Z |

---

license: apache-2.0

base_model: bert-base-uncased

tags:

- generated_from_trainer

datasets:

- glue

metrics:

- accuracy

model-index:

- name: bert-base-uncased-wnli-lora-epochs-10-lr-1e-06

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# bert-base-uncased-wnli-lora-epochs-10-lr-1e-06

This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on the glue dataset.

It achieves the following results on the evaluation set:

- Loss: 0.6821

- Accuracy: 0.58

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 1e-06

- train_batch_size: 32

- eval_batch_size: 32

- seed: 28

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_ratio: 0.06

- num_epochs: 10

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:--------:|

| No log | 1.0 | 17 | 0.6812 | 0.57 |

| No log | 2.0 | 34 | 0.6814 | 0.57 |

| No log | 3.0 | 51 | 0.6815 | 0.57 |

| No log | 4.0 | 68 | 0.6817 | 0.57 |

| No log | 5.0 | 85 | 0.6818 | 0.57 |

| No log | 6.0 | 102 | 0.6819 | 0.57 |

| No log | 7.0 | 119 | 0.6820 | 0.57 |

| No log | 8.0 | 136 | 0.6820 | 0.58 |

| No log | 9.0 | 153 | 0.6821 | 0.58 |

| No log | 10.0 | 170 | 0.6821 | 0.58 |

### Framework versions

- Transformers 4.32.0.dev0

- Pytorch 2.0.1

- Datasets 2.14.4

- Tokenizers 0.13.3

|

prateeky2806/bert-base-uncased-rte-epochs-10-lr-1e-05

|

prateeky2806

| 2023-09-26T04:12:06Z | 108 | 0 |

transformers

|

[

"transformers",

"safetensors",

"bert",

"text-classification",

"generated_from_trainer",

"dataset:glue",

"base_model:google-bert/bert-base-uncased",

"base_model:finetune:google-bert/bert-base-uncased",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2023-09-26T04:04:46Z |

---

license: apache-2.0

base_model: bert-base-uncased

tags:

- generated_from_trainer

datasets:

- glue

metrics:

- accuracy

model-index:

- name: bert-base-uncased-rte-epochs-10-lr-1e-05

results:

- task:

name: Text Classification

type: text-classification

dataset:

name: glue

type: glue

config: rte

split: train

args: rte

metrics:

- name: Accuracy

type: accuracy

value: 0.74

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# bert-base-uncased-rte-epochs-10-lr-1e-05

This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on the glue dataset.

It achieves the following results on the evaluation set:

- Loss: 0.6968

- Accuracy: 0.74

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 1e-05

- train_batch_size: 32

- eval_batch_size: 32

- seed: 28

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_ratio: 0.06

- num_epochs: 10

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:--------:|

| No log | 1.0 | 75 | 0.6999 | 0.44 |

| No log | 2.0 | 150 | 0.6216 | 0.69 |

| No log | 3.0 | 225 | 0.5941 | 0.69 |

| No log | 4.0 | 300 | 0.5779 | 0.74 |

| No log | 5.0 | 375 | 0.5871 | 0.73 |

| No log | 6.0 | 450 | 0.6203 | 0.76 |

| 0.5133 | 7.0 | 525 | 0.6944 | 0.76 |

| 0.5133 | 8.0 | 600 | 0.6647 | 0.75 |

| 0.5133 | 9.0 | 675 | 0.6803 | 0.78 |

| 0.5133 | 10.0 | 750 | 0.6968 | 0.74 |

### Framework versions

- Transformers 4.32.0.dev0

- Pytorch 2.0.1

- Datasets 2.14.4

- Tokenizers 0.13.3

|

Adun/openthaigpt-1.0.0-beta-7b-ckpt-hf

|

Adun

| 2023-09-26T04:04:02Z | 5 | 1 |

transformers

|

[

"transformers",

"pytorch",

"llama",

"text-generation",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2023-08-29T15:39:53Z |

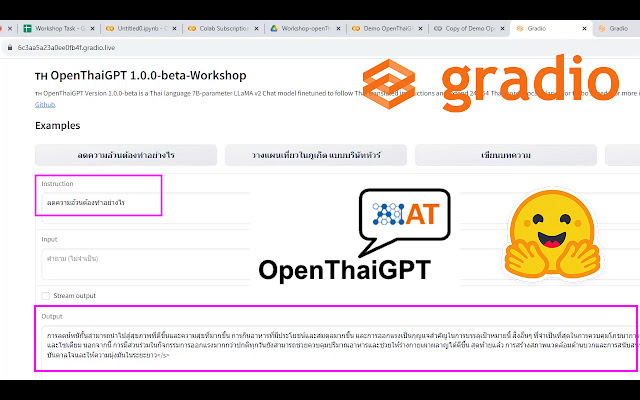

This model was exported from

### base_model = 'ChanonUtupon/openthaigpt-merge-lora-llama-2-7B-3470k'

### lora_weights = 'openthaigpt/openthaigpt-1.0.0-beta-7b-chat'

for educational purposes.

## More details

https://aiotplatform.blogspot.com/2023/09/demo-openthaigpt-1.0.0-beta-colab.html

|

guetLzy/VITS-fast-fine-tuning

|

guetLzy

| 2023-09-26T04:03:57Z | 0 | 6 | null |

[

"license:cc-by-2.0",

"region:us"

] | null | 2023-09-26T03:51:32Z |

---

license: cc-by-2.0

---

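# VITS-fast-fine-tuning model sharing

1. This model contains three speakers: Keqing, Kamisato Ayaka, and Zhongli.

2. The model was trained for 500 epochs, starting from the C base model.

3. The training data consists of at least 500 voice clips per speaker.

4. For local inference, the official [inference program](https://github.com/Plachtaa/VITS-fast-fine-tuning/releases/download/webui-v1.1/inference.rar) is recommended.

5. After extracting the archive, place the model and JSON files as shown below, then run inference.exe.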

```

inference

├───inference.exe

├───...

├───finetune_speaker.json

└───G_latest.pth

```

|

alperenunlu/Reinforce-CartPole-v1

|

alperenunlu

| 2023-09-26T04:02:39Z | 0 | 2 | null |

[

"CartPole-v1",

"reinforce",

"reinforcement-learning",

"custom-implementation",

"deep-rl-class",

"model-index",

"region:us"

] |

reinforcement-learning

| 2023-09-26T01:28:45Z |

---

tags:

- CartPole-v1

- reinforce

- reinforcement-learning

- custom-implementation

- deep-rl-class

model-index:

- name: Reinforce-CartPole-v1

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: CartPole-v1

type: CartPole-v1

metrics:

- type: mean_reward

value: 500.00 +/- 0.00

name: mean_reward

verified: false

---

# **Reinforce** Agent playing **CartPole-v1**

This is a trained model of a **Reinforce** agent playing **CartPole-v1**.

|

ajash/Amazon-lm-10k

|

ajash

| 2023-09-26T04:00:00Z | 5 | 0 |

peft

|

[

"peft",

"base_model:togethercomputer/LLaMA-2-7B-32K",

"base_model:adapter:togethercomputer/LLaMA-2-7B-32K",

"region:us"

] | null | 2023-09-08T20:57:11Z |

---

library_name: peft

base_model: togethercomputer/LLaMA-2-7B-32K

---

## Training procedure

### Framework versions

- PEFT 0.4.0

|

prateeky2806/bert-base-uncased-rte-lora-epochs-10-lr-0.0005

|

prateeky2806

| 2023-09-26T03:42:24Z | 0 | 0 | null |

[

"safetensors",

"generated_from_trainer",

"dataset:glue",

"base_model:google-bert/bert-base-uncased",

"base_model:finetune:google-bert/bert-base-uncased",

"license:apache-2.0",

"region:us"

] | null | 2023-09-26T01:17:47Z |

---

license: apache-2.0

base_model: bert-base-uncased

tags:

- generated_from_trainer

datasets:

- glue

metrics:

- accuracy

model-index:

- name: bert-base-uncased-rte-lora-epochs-10-lr-0.0005

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# bert-base-uncased-rte-lora-epochs-10-lr-0.0005

This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on the glue dataset.

It achieves the following results on the evaluation set:

- Loss: 2.0542

- Accuracy: 0.63

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0005

- train_batch_size: 32

- eval_batch_size: 32

- seed: 28

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_ratio: 0.06

- num_epochs: 10

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:--------:|

| No log | 1.0 | 75 | 0.6901 | 0.56 |

| No log | 2.0 | 150 | 0.6403 | 0.66 |

| No log | 3.0 | 225 | 0.6422 | 0.71 |

| No log | 4.0 | 300 | 0.7092 | 0.65 |

| No log | 5.0 | 375 | 0.8987 | 0.67 |

| No log | 6.0 | 450 | 1.2375 | 0.65 |

| 0.4488 | 7.0 | 525 | 1.7709 | 0.61 |

| 0.4488 | 8.0 | 600 | 1.7151 | 0.63 |

| 0.4488 | 9.0 | 675 | 1.9559 | 0.62 |

| 0.4488 | 10.0 | 750 | 2.0542 | 0.63 |
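
The table shows validation loss rising steadily after the second epoch while accuracy peaks at the third, so the final checkpoint is not necessarily the best one. As a small sketch of how one might pick a checkpoint from these numbers (the values are copied from the table; the variable names are illustrative only):

```python
# (epoch, validation_loss, accuracy) rows copied from the training results table.
history = [
    (1, 0.6901, 0.56),
    (2, 0.6403, 0.66),
    (3, 0.6422, 0.71),
    (4, 0.7092, 0.65),
    (5, 0.8987, 0.67),
    (6, 1.2375, 0.65),
    (7, 1.7709, 0.61),
    (8, 1.7151, 0.63),
    (9, 1.9559, 0.62),
    (10, 2.0542, 0.63),
]

# Epoch with the lowest validation loss, and epoch with the highest accuracy.
best_by_loss = min(history, key=lambda row: row[1])
best_by_accuracy = max(history, key=lambda row: row[2])

print("lowest validation loss:", best_by_loss)
print("highest accuracy:", best_by_accuracy)
```

Which criterion to prefer depends on the use case; the card itself reports only the final-epoch numbers.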

### Framework versions

- Transformers 4.32.0.dev0

- Pytorch 2.0.1

- Datasets 2.14.4

- Tokenizers 0.13.3

|

CyberHarem/heanna_sumire_lovelivesuperstar

|

CyberHarem

| 2023-09-26T03:10:08Z | 0 | 0 | null |

[

"art",

"text-to-image",

"dataset:CyberHarem/heanna_sumire_lovelivesuperstar",

"license:mit",

"region:us"

] |

text-to-image

| 2023-09-26T02:51:59Z |

---

license: mit

datasets:

- CyberHarem/heanna_sumire_lovelivesuperstar

pipeline_tag: text-to-image

tags:

- art

---

# Lora of heanna_sumire_lovelivesuperstar

This model was trained with [HCP-Diffusion](https://github.com/7eu7d7/HCP-Diffusion). The auto-training framework is maintained by the [DeepGHS Team](https://huggingface.co/deepghs).

The base model used during training is [NAI](https://huggingface.co/deepghs/animefull-latest), and the base model used for generating preview images is [Meina/MeinaMix_V11](https://huggingface.co/Meina/MeinaMix_V11).

After downloading the pt and safetensors files for the specified step, you need to use them simultaneously. The pt file will be used as an embedding, while the safetensors file will be loaded for Lora.

For example, if you want to use the model from step 3640, you need to download `3640/heanna_sumire_lovelivesuperstar.pt` as the embedding and `3640/heanna_sumire_lovelivesuperstar.safetensors` for loading Lora. By using both files together, you can generate images for the desired characters.
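
The file naming described above can be captured in a small helper. This is only a sketch: the function name is hypothetical, and it just builds the two relative paths for a given step; loading them is left to whichever Stable Diffusion tool is in use.

```python
from pathlib import PurePosixPath


def step_files(step: int, name: str = "heanna_sumire_lovelivesuperstar") -> dict:
    """Return the relative paths of the embedding and LoRA files for one step."""
    base = PurePosixPath(str(step))
    return {
        "embedding": str(base / f"{name}.pt"),      # loaded as an embedding
        "lora": str(base / f"{name}.safetensors"),  # loaded as the LoRA weights
    }
```

For step 3640 this yields `3640/heanna_sumire_lovelivesuperstar.pt` and `3640/heanna_sumire_lovelivesuperstar.safetensors`, matching the example in the text.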

**The best step we recommend is 3640**, with the score of 0.986. The trigger words are:

1. `heanna_sumire_lovelivesuperstar`

2. `blonde_hair, bangs, green_eyes, long_hair, blunt_bangs, smile, hairband, blush, breasts, neck_ribbon`

We do not recommend this model for the following groups, and we apologize for the inconvenience:

1. Individuals who cannot tolerate any deviations from the original character design, even in the slightest detail.

2. Individuals whose application scenarios demand high accuracy in recreating character outfits.

3. Individuals who cannot accept the potential randomness in AI-generated images based on the Stable Diffusion algorithm.

4. Individuals who are not comfortable with the fully automated process of training character models using LoRA, or those who believe that training character models must be done purely through manual operations to avoid disrespecting the characters.

5. Individuals who find the generated image content offensive to their values.

These are available steps:

| Steps | Score | Download | pattern_1 | pattern_2 | pattern_3 | pattern_4 | pattern_5 | pattern_6 | pattern_7 | pattern_8 | pattern_9 | pattern_10 | pattern_11 | pattern_12 | pattern_13 | pattern_14 | bikini | bondage | free | maid | miko | nude | nude2 | suit | yukata |

|:---------|:----------|:---------------------------------------------------------|:-----------------------------------------------|:-----------------------------------------------|:-----------------------------------------------|:-----------------------------------------------|:-----------------------------------------------|:-----------------------------------------------|:-----------------------------------------------|:-----------------------------------------------|:-----------------------------------------------|:-------------------------------------------------|:-------------------------------------------------|:-------------------------------------------------|:-------------------------------------------------|:-------------------------------------------------|:-----------------------------------------|:--------------------------------------------------|:-------------------------------------|:-------------------------------------|:-------------------------------------|:-----------------------------------------------|:------------------------------------------------|:-------------------------------------|:-----------------------------------------|

| 7800 | 0.982 | [Download](7800/heanna_sumire_lovelivesuperstar.zip) |  |  |  |  |  |  |  |  |  |  |  |  |  |  |  | [<NSFW, click to see>](7800/previews/bondage.png) |  |  |  | [<NSFW, click to see>](7800/previews/nude.png) | [<NSFW, click to see>](7800/previews/nude2.png) |  |  |

| 7280 | 0.967 | [Download](7280/heanna_sumire_lovelivesuperstar.zip) |  |  |  |  |  |  |  |  |  |  |  |  |  |  |  | [<NSFW, click to see>](7280/previews/bondage.png) |  |  |  | [<NSFW, click to see>](7280/previews/nude.png) | [<NSFW, click to see>](7280/previews/nude2.png) |  |  |

| 6760 | 0.972 | [Download](6760/heanna_sumire_lovelivesuperstar.zip) |  |  |  |  |  |  |  |  |  |  |  |  |  |  |  | [<NSFW, click to see>](6760/previews/bondage.png) |  |  |  | [<NSFW, click to see>](6760/previews/nude.png) | [<NSFW, click to see>](6760/previews/nude2.png) |  |  |

| 6240 | 0.965 | [Download](6240/heanna_sumire_lovelivesuperstar.zip) |  |  |  |  |  |  |  |  |  |  |  |  |  |  |  | [<NSFW, click to see>](6240/previews/bondage.png) |  |  |  | [<NSFW, click to see>](6240/previews/nude.png) | [<NSFW, click to see>](6240/previews/nude2.png) |  |  |

| 5720 | 0.946 | [Download](5720/heanna_sumire_lovelivesuperstar.zip) |  |  |  |  |  |  |  |  |  |  |  |  |  |  |  | [<NSFW, click to see>](5720/previews/bondage.png) |  |  |  | [<NSFW, click to see>](5720/previews/nude.png) | [<NSFW, click to see>](5720/previews/nude2.png) |  |  |

| 5200 | 0.910 | [Download](5200/heanna_sumire_lovelivesuperstar.zip) |  |  |  |  |  |  |  |  |  |  |  |  |  |  |  | [<NSFW, click to see>](5200/previews/bondage.png) |  |  |  | [<NSFW, click to see>](5200/previews/nude.png) | [<NSFW, click to see>](5200/previews/nude2.png) |  |  |

| 4680 | 0.975 | [Download](4680/heanna_sumire_lovelivesuperstar.zip) |  |  |  |  |  |  |  |  |  |  |  |  |  |  |  | [<NSFW, click to see>](4680/previews/bondage.png) |  |  |  | [<NSFW, click to see>](4680/previews/nude.png) | [<NSFW, click to see>](4680/previews/nude2.png) |  |  |

| 4160 | 0.948 | [Download](4160/heanna_sumire_lovelivesuperstar.zip) |  |  |  |  |  |  |  |  |  |  |  |  |  |  |  | [<NSFW, click to see>](4160/previews/bondage.png) |  |  |  | [<NSFW, click to see>](4160/previews/nude.png) | [<NSFW, click to see>](4160/previews/nude2.png) |  |  |

| **3640** | **0.986** | [**Download**](3640/heanna_sumire_lovelivesuperstar.zip) |  |  |  |  |  |  |  |  |  |  |  |  |  |  |  | [<NSFW, click to see>](3640/previews/bondage.png) |  |  |  | [<NSFW, click to see>](3640/previews/nude.png) | [<NSFW, click to see>](3640/previews/nude2.png) |  |  |

| 3120 | 0.944 | [Download](3120/heanna_sumire_lovelivesuperstar.zip) |  |  |  |  |  |  |  |  |  |  |  |  |  |  |  | [<NSFW, click to see>](3120/previews/bondage.png) |  |  |  | [<NSFW, click to see>](3120/previews/nude.png) | [<NSFW, click to see>](3120/previews/nude2.png) |  |  |

| 2600 | 0.916 | [Download](2600/heanna_sumire_lovelivesuperstar.zip) |  |  |  |  |  |  |  |  |  |  |  |  |  |  |  | [<NSFW, click to see>](2600/previews/bondage.png) |  |  |  | [<NSFW, click to see>](2600/previews/nude.png) | [<NSFW, click to see>](2600/previews/nude2.png) |  |  |

| 2080 | 0.980 | [Download](2080/heanna_sumire_lovelivesuperstar.zip) |  |  |  |  |  |  |  |  |  |  |  |  |  |  |  | [<NSFW, click to see>](2080/previews/bondage.png) |  |  |  | [<NSFW, click to see>](2080/previews/nude.png) | [<NSFW, click to see>](2080/previews/nude2.png) |  |  |

| 1560 | 0.960 | [Download](1560/heanna_sumire_lovelivesuperstar.zip) |  |  |  |  |  |  |  |  |  |  |  |  |  |  |  | [<NSFW, click to see>](1560/previews/bondage.png) |  |  |  | [<NSFW, click to see>](1560/previews/nude.png) | [<NSFW, click to see>](1560/previews/nude2.png) |  |  |

| 1040 | 0.947 | [Download](1040/heanna_sumire_lovelivesuperstar.zip) |  |  |  |  |  |  |  |  |  |  |  |  |  |  |  | [<NSFW, click to see>](1040/previews/bondage.png) |  |  |  | [<NSFW, click to see>](1040/previews/nude.png) | [<NSFW, click to see>](1040/previews/nude2.png) |  |  |

| 520 | 0.978 | [Download](520/heanna_sumire_lovelivesuperstar.zip) |  |  |  |  |  |  |  |  |  |  |  |  |  |  |  | [<NSFW, click to see>](520/previews/bondage.png) |  |  |  | [<NSFW, click to see>](520/previews/nude.png) | [<NSFW, click to see>](520/previews/nude2.png) |  |  |
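
The recommended step can be cross-checked against the Score column above. The snippet below is a quick sketch with the scores copied from the table; the variable names are illustrative, and how the score itself is computed is not described in the card.

```python
# Step -> score, copied from the table of available steps.
scores = {
    7800: 0.982, 7280: 0.967, 6760: 0.972, 6240: 0.965, 5720: 0.946,
    5200: 0.910, 4680: 0.975, 4160: 0.948, 3640: 0.986, 3120: 0.944,
    2600: 0.916, 2080: 0.980, 1560: 0.960, 1040: 0.947, 520: 0.978,
}

# Pick the step whose score is highest.
best_step = max(scores, key=scores.get)
print(best_step, scores[best_step])
```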

|

prateeky2806/bert-base-uncased-cola-epochs-10-lr-5e-05

|

prateeky2806

| 2023-09-26T03:10:04Z | 108 | 0 |

transformers

|

[

"transformers",

"safetensors",

"bert",

"text-classification",

"generated_from_trainer",

"dataset:glue",

"base_model:google-bert/bert-base-uncased",

"base_model:finetune:google-bert/bert-base-uncased",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2023-09-26T02:59:59Z |

---

license: apache-2.0

base_model: bert-base-uncased

tags:

- generated_from_trainer

datasets:

- glue

metrics:

- matthews_correlation

model-index:

- name: bert-base-uncased-cola-epochs-10-lr-5e-05

results:

- task:

name: Text Classification

type: text-classification

dataset:

name: glue

type: glue

config: cola

split: train

args: cola

metrics:

- name: Matthews Correlation

type: matthews_correlation

value: 0.5435768262757358

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# bert-base-uncased-cola-epochs-10-lr-5e-05

This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on the CoLA configuration of the GLUE dataset.

It achieves the following results on the evaluation set after the final (10th) epoch:

- Loss: 1.3812

- Matthews Correlation: 0.5436
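For readers unfamiliar with the metric, here is a minimal sketch of how a Matthews correlation coefficient can be computed from binary confusion-matrix counts. The function name and the example counts are hypothetical and are not taken from this model's evaluation; the card does not say which implementation produced the reported value.

```python
import math


def matthews_corrcoef(tp: int, tn: int, fp: int, fn: int) -> float:
    """Matthews correlation coefficient for a binary confusion matrix."""
    numerator = tp * tn - fp * fn
    denominator = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    # By convention, return 0.0 when any marginal total is zero.
    return numerator / denominator if denominator else 0.0


# Hypothetical counts, for illustration only (not this model's predictions).
print(matthews_corrcoef(tp=90, tn=80, fp=20, fn=10))
```

The score ranges from -1 (total disagreement) through 0 (no better than chance) to 1 (perfect agreement), which makes it a common choice for imbalanced binary tasks.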

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 32

- eval_batch_size: 32

- seed: 28

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_ratio: 0.06

- num_epochs: 10
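To make the scheduler settings concrete, the sketch below evaluates a linear schedule with warmup at a few steps. It assumes the usual shape (linear ramp from 0 to the peak rate over the warmup steps, then linear decay to 0) and assumes the warmup length is the ceiling of `warmup_ratio * total_steps`; the function name is hypothetical, and the total of 2650 steps is the last step listed in the training results.

```python
import math


def linear_warmup_lr(step: int, total_steps: int, peak_lr: float, warmup_ratio: float) -> float:
    """Learning rate at `step` for a linear-warmup, linear-decay schedule."""
    warmup_steps = math.ceil(total_steps * warmup_ratio)  # assumed rounding rule
    if step < warmup_steps:
        # Ramp linearly from 0 up to the peak learning rate.
        return peak_lr * step / max(1, warmup_steps)
    # Decay linearly from the peak down to 0 at the final step.
    return peak_lr * max(0.0, (total_steps - step) / max(1, total_steps - warmup_steps))


TOTAL_STEPS = 2650  # 10 epochs x 265 optimizer steps per epoch
for step in (0, 80, 159, 1325, 2650):
    print(step, linear_warmup_lr(step, TOTAL_STEPS, peak_lr=5e-05, warmup_ratio=0.06))
```

Under these assumptions the peak rate of 5e-05 is reached after roughly 159 steps, well inside the first epoch.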

### Training results

| Training Loss | Epoch | Step | Validation Loss | Matthews Correlation |
|:-------------:|:-----:|:----:|:---------------:|:--------------------:|
| No log | 1.0 | 265 | 0.5922 | 0.4074 |
| 0.4065 | 2.0 | 530 | 0.4494 | 0.6144 |
| 0.4065 | 3.0 | 795 | 0.4548 | 0.5738 |
| 0.1623 | 4.0 | 1060 | 0.6883 | 0.5687 |
| 0.1623 | 5.0 | 1325 | 0.7222 | 0.5183 |
| 0.081 | 6.0 | 1590 | 1.0246 | 0.5371 |
| 0.081 | 7.0 | 1855 | 1.1457 | 0.5145 |
| 0.0344 | 8.0 | 2120 | 1.1771 | 0.5436 |
| 0.0344 | 9.0 | 2385 | 1.3187 | 0.5485 |
| 0.0123 | 10.0 | 2650 | 1.3812 | 0.5436 |
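The headline numbers come from the last epoch, but the per-epoch rows make it easy to check which checkpoint scored best. The short sketch below copies the epoch, validation loss, and Matthews correlation columns from the table and picks the best row by each criterion; the variable names are illustrative only.

```python
# (epoch, validation_loss, matthews_correlation), copied from the training results.
RESULTS = [
    (1, 0.5922, 0.4074),
    (2, 0.4494, 0.6144),
    (3, 0.4548, 0.5738),
    (4, 0.6883, 0.5687),
    (5, 0.7222, 0.5183),
    (6, 1.0246, 0.5371),
    (7, 1.1457, 0.5145),
    (8, 1.1771, 0.5436),
    (9, 1.3187, 0.5485),
    (10, 1.3812, 0.5436),
]

best_by_mcc = max(RESULTS, key=lambda row: row[2])
best_by_loss = min(RESULTS, key=lambda row: row[1])

print("best Matthews correlation:", best_by_mcc)
print("lowest validation loss:", best_by_loss)
```

Both criteria select epoch 2. Validation loss rises steadily afterwards while training loss keeps falling, a pattern that usually indicates overfitting, so an earlier checkpoint may generalize better than the final one.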

### Framework versions

- Transformers 4.32.0.dev0

- PyTorch 2.0.1

- Datasets 2.14.4

- Tokenizers 0.13.3

|

prateeky2806/bert-base-uncased-mrpc-epochs-10-lr-5e-05

|

prateeky2806

| 2023-09-26T03:09:18Z | 108 | 0 |

transformers

|

[

"transformers",

"safetensors",

"bert",

"text-classification",

"generated_from_trainer",

"dataset:glue",

"base_model:google-bert/bert-base-uncased",

"base_model:finetune:google-bert/bert-base-uncased",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2023-09-26T03:00:33Z |

---
license: apache-2.0
base_model: bert-base-uncased
tags:
- generated_from_trainer
datasets:
- glue
metrics:
- accuracy
- f1
model-index:
- name: bert-base-uncased-mrpc-epochs-10-lr-5e-05
  results:
  - task:
      name: Text Classification
      type: text-classification
    dataset:
      name: glue
      type: glue
      config: mrpc
      split: train
      args: mrpc
    metrics:
    - name: Accuracy
      type: accuracy
      value: 0.83
    - name: F1
      type: f1
      value: 0.8794326241134751
---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# bert-base-uncased-mrpc-epochs-10-lr-5e-05

This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on the MRPC configuration of the GLUE dataset.

It achieves the following results on the evaluation set after the final (10th) epoch:

- Loss: 1.1650

- Accuracy: 0.83

- F1: 0.8794
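As a sanity check on how the two metrics relate, the sketch below computes accuracy and F1 from binary confusion-matrix counts. The counts are hypothetical: they were chosen because 62 true positives and 17 total errors out of 100 examples reproduce the reported pair (F1 = 124/141 ≈ 0.8794), but the card states neither the evaluation-set size nor the split between false positives and false negatives.

```python
def accuracy_and_f1(tp: int, tn: int, fp: int, fn: int) -> tuple[float, float]:
    """Accuracy and F1 (positive class) from binary confusion-matrix counts."""
    total = tp + tn + fp + fn
    accuracy = (tp + tn) / total if total else 0.0
    f1_denominator = 2 * tp + fp + fn
    f1 = 2 * tp / f1_denominator if f1_denominator else 0.0
    return accuracy, f1


# Hypothetical counts: only tp=62 and fp+fn=17 matter for matching the card.
acc, f1 = accuracy_and_f1(tp=62, tn=21, fp=9, fn=8)
print(f"accuracy={acc:.2f}, f1={f1:.4f}")
```

F1 sits above accuracy here because true negatives do not enter the F1 formula, which is typical when the positive class is the majority.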

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 32

- eval_batch_size: 32

- seed: 28

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_ratio: 0.06

- num_epochs: 10
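For anyone trying to reproduce a similar run, the listed values can be written as keyword arguments using the parameter names of `transformers.TrainingArguments`. This is a sketch under assumptions rather than the author's actual script: it assumes a single device (so the reported batch sizes map directly to the per-device arguments), and the card does not show the original training code.

```python
# Hyperparameters from the list above, keyed by TrainingArguments parameter names.
training_kwargs = {
    "learning_rate": 5e-05,
    "per_device_train_batch_size": 32,  # assumes a single device
    "per_device_eval_batch_size": 32,   # assumes a single device
    "seed": 28,
    "adam_beta1": 0.9,
    "adam_beta2": 0.999,
    "adam_epsilon": 1e-08,
    "lr_scheduler_type": "linear",
    "warmup_ratio": 0.06,
    "num_train_epochs": 10,
}

for name, value in training_kwargs.items():
    print(f"{name} = {value}")
```

With the library installed, these could be passed as `TrainingArguments(output_dir=..., **training_kwargs)`, where the output directory is left to the reader.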

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 |
|:-------------:|:-----:|:----:|:---------------:|:--------:|:------:|
| No log | 1.0 | 112 | 0.4455 | 0.77 | 0.8414 |
| No log | 2.0 | 224 | 0.4557 | 0.8 | 0.8611 |
| No log | 3.0 | 336 | 0.6409 | 0.8 | 0.8551 |
| No log | 4.0 | 448 | 0.6648 | 0.82 | 0.8767 |
| 0.2723 | 5.0 | 560 | 0.8845 | 0.84 | 0.8873 |
| 0.2723 | 6.0 | 672 | 0.9873 | 0.84 | 0.8841 |
| 0.2723 | 7.0 | 784 | 1.0540 | 0.83 | 0.8777 |
| 0.2723 | 8.0 | 896 | 1.0712 | 0.85 | 0.8921 |
| 0.0161 | 9.0 | 1008 | 1.1467 | 0.84 | 0.8857 |
| 0.0161 | 10.0 | 1120 | 1.1650 | 0.83 | 0.8794 |

### Framework versions

- Transformers 4.32.0.dev0

- PyTorch 2.0.1

- Datasets 2.14.4

- Tokenizers 0.13.3

|

Kodamn47/distilhubert-finetuned-gtzan

|

Kodamn47

| 2023-09-26T03:04:32Z | 20 | 0 |

transformers

|

[

"transformers",

"pytorch",

"hubert",

"audio-classification",

"generated_from_trainer",

"dataset:marsyas/gtzan",

"base_model:ntu-spml/distilhubert",

"base_model:finetune:ntu-spml/distilhubert",

"license:apache-2.0",

"model-index",

"endpoints_compatible",

"region:us"

] |

audio-classification

| 2023-09-12T18:00:38Z |

---
license: apache-2.0
base_model: ntu-spml/distilhubert
tags:
- generated_from_trainer
datasets:
- marsyas/gtzan
metrics:
- accuracy
model-index:
- name: distilhubert-finetuned-gtzan
  results:
  - task:
      name: Audio Classification
      type: audio-classification
    dataset:
      name: GTZAN
      type: marsyas/gtzan
      config: all
      split: train
      args: all
    metrics:
    - name: Accuracy
      type: accuracy
      value: 0.88
---