modelId (string, lengths 5 to 139) | author (string, lengths 2 to 42) | last_modified (timestamp[us, tz=UTC], 2020-02-15 11:33:14 to 2025-08-29 00:38:39) | downloads (int64, 0 to 223M) | likes (int64, 0 to 11.7k) | library_name (string, 525 classes) | tags (list, lengths 1 to 4.05k) | pipeline_tag (string, 55 classes) | createdAt (timestamp[us, tz=UTC], 2022-03-02 23:29:04 to 2025-08-29 00:38:28) | card (string, lengths 11 to 1.01M) |

|---|---|---|---|---|---|---|---|---|---|

jbilcke-hf/sdxl-akira

|

jbilcke-hf

| 2023-10-27T15:04:29Z | 12 | 3 |

diffusers

|

[

"diffusers",

"stable-diffusion-xl",

"stable-diffusion-xl-diffusers",

"text-to-image",

"lora",

"dataset:jbilcke-hf/akira",

"base_model:stabilityai/stable-diffusion-xl-base-1.0",

"base_model:adapter:stabilityai/stable-diffusion-xl-base-1.0",

"region:us"

] |

text-to-image

| 2023-10-27T09:56:17Z |

---

base_model: stabilityai/stable-diffusion-xl-base-1.0

instance_prompt: akira-style

tags:

- stable-diffusion-xl

- stable-diffusion-xl-diffusers

- text-to-image

- diffusers

- lora

inference: true

datasets:

- jbilcke-hf/akira

---

# LoRA DreamBooth - jbilcke-hf/sdxl-akira

These are LoRA adaptation weights for stabilityai/stable-diffusion-xl-base-1.0 trained on @fffiloni's SD-XL trainer.

The weights were trained on the concept prompt:

```

akira-style

```

Use this keyword to trigger your custom model in your prompts.

LoRA for the text encoder was enabled: False.

Special VAE used for training: madebyollin/sdxl-vae-fp16-fix.

## Usage

Make sure to upgrade diffusers to >= 0.19.0:

```

pip install diffusers --upgrade

```

In addition make sure to install transformers, safetensors, accelerate as well as the invisible watermark:

```

pip install invisible_watermark transformers accelerate safetensors

```

To run the base model with these LoRA weights loaded:

```python

import torch

from diffusers import DiffusionPipeline, AutoencoderKL

device = "cuda" if torch.cuda.is_available() else "cpu"

vae = AutoencoderKL.from_pretrained('madebyollin/sdxl-vae-fp16-fix', torch_dtype=torch.float16)

pipe = DiffusionPipeline.from_pretrained(

"stabilityai/stable-diffusion-xl-base-1.0",

vae=vae, torch_dtype=torch.float16, variant="fp16",

use_safetensors=True

)

pipe.to(device)

# This is where you load your trained weights

specific_safetensors = "pytorch_lora_weights.safetensors"

lora_scale = 0.9

pipe.load_lora_weights(

'jbilcke-hf/sdxl-akira',

weight_name = specific_safetensors,

# use_auth_token = True

)

prompt = "A majestic akira-style jumping from a big stone at night"

image = pipe(

prompt=prompt,

num_inference_steps=50,

cross_attention_kwargs={"scale": lora_scale}

).images[0]

```
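The `lora_scale` value passed through `cross_attention_kwargs` controls how strongly the adapter changes the base weights. The toy sketch below illustrates the general LoRA idea, `W' = W + scale * (B @ A)`, in plain Python. It is not the diffusers implementation: the matrices and helper names (`matmul`, `apply_lora`) are hypothetical, and the usual alpha/rank factor is omitted for brevity.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def apply_lora(w, b, a, scale):
    """Return w + scale * (b @ a), the weight a LoRA-adapted layer effectively uses."""
    delta = matmul(b, a)
    return [
        [w_ij + scale * d_ij for w_ij, d_ij in zip(w_row, d_row)]
        for w_row, d_row in zip(w, delta)
    ]


# Toy 2x2 base weight with a rank-1 update (B is 2x1, A is 1x2).
base = [[1.0, 0.0], [0.0, 1.0]]
lora_b = [[1.0], [2.0]]
lora_a = [[0.5, 0.5]]

unchanged = apply_lora(base, lora_b, lora_a, scale=0.0)  # scale 0 recovers the base weights
adapted = apply_lora(base, lora_b, lora_a, scale=0.9)    # same scale as in the usage example
```

Under this reading, lowering the scale toward 0 moves the output back toward the unmodified base model, while values near 1 apply the trained style more fully.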

|

jbilcke-hf/sdxl-starfield

|

jbilcke-hf

| 2023-10-27T15:04:16Z | 13 | 3 |

diffusers

|

[

"diffusers",

"stable-diffusion-xl",

"stable-diffusion-xl-diffusers",

"text-to-image",

"lora",

"dataset:jbilcke-hf/starfield",

"base_model:stabilityai/stable-diffusion-xl-base-1.0",

"base_model:adapter:stabilityai/stable-diffusion-xl-base-1.0",

"region:us"

] |

text-to-image

| 2023-10-27T09:53:40Z |

---

base_model: stabilityai/stable-diffusion-xl-base-1.0

instance_prompt: starfield-style

tags:

- stable-diffusion-xl

- stable-diffusion-xl-diffusers

- text-to-image

- diffusers

- lora

inference: true

datasets:

- jbilcke-hf/starfield

---

# LoRA DreamBooth - jbilcke-hf/sdxl-starfield

These are LoRA adaptation weights for stabilityai/stable-diffusion-xl-base-1.0 trained on @fffiloni's SD-XL trainer.

The weights were trained on the concept prompt:

```

starfield-style

```

Use this keyword to trigger your custom model in your prompts.

LoRA for the text encoder was enabled: False.

Special VAE used for training: madebyollin/sdxl-vae-fp16-fix.

## Usage

Make sure to upgrade diffusers to >= 0.19.0:

```

pip install diffusers --upgrade

```

In addition make sure to install transformers, safetensors, accelerate as well as the invisible watermark:

```

pip install invisible_watermark transformers accelerate safetensors

```

To run the base model with these LoRA weights loaded:

```python

import torch

from diffusers import DiffusionPipeline, AutoencoderKL

device = "cuda" if torch.cuda.is_available() else "cpu"

vae = AutoencoderKL.from_pretrained('madebyollin/sdxl-vae-fp16-fix', torch_dtype=torch.float16)

pipe = DiffusionPipeline.from_pretrained(

"stabilityai/stable-diffusion-xl-base-1.0",

vae=vae, torch_dtype=torch.float16, variant="fp16",

use_safetensors=True

)

pipe.to(device)

# This is where you load your trained weights

specific_safetensors = "pytorch_lora_weights.safetensors"

lora_scale = 0.9

pipe.load_lora_weights(

'jbilcke-hf/sdxl-starfield',

weight_name = specific_safetensors,

# use_auth_token = True

)

prompt = "A majestic starfield-style jumping from a big stone at night"

image = pipe(

prompt=prompt,

num_inference_steps=50,

cross_attention_kwargs={"scale": lora_scale}

).images[0]

```

|

profoz/odsc-sawyer-sft-rlhf

|

profoz

| 2023-10-27T15:02:22Z | 0 | 0 |

peft

|

[

"peft",

"region:us"

] | null | 2023-10-27T15:01:35Z |

---

library_name: peft

---

## Training procedure

### Framework versions

- PEFT 0.5.0

|

sungkwangjoong/pegasus-samsum

|

sungkwangjoong

| 2023-10-27T14:55:22Z | 97 | 0 |

transformers

|

[

"transformers",

"pytorch",

"pegasus",

"text2text-generation",

"generated_from_trainer",

"dataset:samsum",

"base_model:google/pegasus-cnn_dailymail",

"base_model:finetune:google/pegasus-cnn_dailymail",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text2text-generation

| 2023-10-27T14:15:27Z |

---

base_model: google/pegasus-cnn_dailymail

tags:

- generated_from_trainer

datasets:

- samsum

model-index:

- name: pegasus-samsum

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# pegasus-samsum

This model is a fine-tuned version of [google/pegasus-cnn_dailymail](https://huggingface.co/google/pegasus-cnn_dailymail) on the samsum dataset.

It achieves the following results on the evaluation set:

- Loss: 1.4842

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 1

- eval_batch_size: 1

- seed: 42

- gradient_accumulation_steps: 16

- total_train_batch_size: 16

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_steps: 500

- num_epochs: 1
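The reported `total_train_batch_size` can be reproduced from the per-device batch size and the gradient accumulation steps. The short sketch below assumes a single training device, since the card does not list a device count; the variable names are illustrative only.

```python
train_batch_size = 1              # per-device batch size from the list above
gradient_accumulation_steps = 16  # micro-batches accumulated before each optimizer step
num_devices = 1                   # assumption: the card does not report a device count

# Number of examples that contribute to a single optimizer step.
effective_batch_size = train_batch_size * gradient_accumulation_steps * num_devices

# Examples consumed by the time the only logged evaluation (step 500) was run.
examples_seen_at_step_500 = 500 * effective_batch_size

print(effective_batch_size, examples_seen_at_step_500)
```

With these settings each optimizer step averages gradients over 16 examples, even though only one example is held in memory at a time.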

### Training results

| Training Loss | Epoch | Step | Validation Loss |

|:-------------:|:-----:|:----:|:---------------:|

| 1.6609 | 0.54 | 500 | 1.4842 |

### Framework versions

- Transformers 4.34.1

- Pytorch 2.1.0+cu118

- Datasets 2.14.6

- Tokenizers 0.14.1

|

mpalaval/assignment2_attempt11

|

mpalaval

| 2023-10-27T14:52:51Z | 3 | 0 |

transformers

|

[

"transformers",

"pytorch",

"bert",

"token-classification",

"generated_from_trainer",

"base_model:google-bert/bert-base-cased",

"base_model:finetune:google-bert/bert-base-cased",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

token-classification

| 2023-10-27T05:07:12Z |

---

license: apache-2.0

base_model: bert-base-cased

tags:

- generated_from_trainer

metrics:

- precision

- recall

- f1

- accuracy

model-index:

- name: assignment2_attempt11

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# assignment2_attempt11

This model is a fine-tuned version of [bert-base-cased](https://huggingface.co/bert-base-cased) on an unspecified dataset.

It achieves the following results on the evaluation set:

- Loss: 0.6058

- Precision: 0.2642

- Recall: 0.1186

- F1: 0.1637

- Accuracy: 0.9370
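The F1 value above is the harmonic mean of the reported precision and recall. As a quick check, the following sketch recomputes it from the two numbers in the list (the function name `f1_score` is just a local helper, not a library call):

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall; returns 0.0 when both are zero."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


precision = 0.2642
recall = 0.1186
f1 = f1_score(precision, recall)

print(f"F1 = {f1:.4f}")
```

The high accuracy next to a low F1 is typical of token classification where most tokens belong to the majority (non-entity) class, so accuracy alone says little about entity-level quality here.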

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 15

### Training results

| Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:|

| No log | 1.0 | 128 | 0.3124 | 0.2308 | 0.0254 | 0.0458 | 0.9401 |

| No log | 2.0 | 256 | 0.2862 | 0.1636 | 0.0763 | 0.1040 | 0.9353 |

| No log | 3.0 | 384 | 0.3899 | 0.2093 | 0.0763 | 0.1118 | 0.9359 |

| 0.1996 | 4.0 | 512 | 0.4161 | 0.3095 | 0.1102 | 0.1625 | 0.9382 |

| 0.1996 | 5.0 | 640 | 0.4845 | 0.3077 | 0.1017 | 0.1529 | 0.9392 |

| 0.1996 | 6.0 | 768 | 0.4841 | 0.2692 | 0.1186 | 0.1647 | 0.9365 |

| 0.1996 | 7.0 | 896 | 0.4987 | 0.2258 | 0.1186 | 0.1556 | 0.9349 |

| 0.0254 | 8.0 | 1024 | 0.5512 | 0.2766 | 0.1102 | 0.1576 | 0.9370 |

| 0.0254 | 9.0 | 1152 | 0.5772 | 0.3171 | 0.1102 | 0.1635 | 0.9379 |

| 0.0254 | 10.0 | 1280 | 0.5764 | 0.2586 | 0.1271 | 0.1705 | 0.9342 |

| 0.0254 | 11.0 | 1408 | 0.5964 | 0.2917 | 0.1186 | 0.1687 | 0.9380 |

| 0.005 | 12.0 | 1536 | 0.5952 | 0.2642 | 0.1186 | 0.1637 | 0.9368 |

| 0.005 | 13.0 | 1664 | 0.5980 | 0.2593 | 0.1186 | 0.1628 | 0.9367 |

| 0.005 | 14.0 | 1792 | 0.6033 | 0.2642 | 0.1186 | 0.1637 | 0.9370 |

| 0.005 | 15.0 | 1920 | 0.6058 | 0.2642 | 0.1186 | 0.1637 | 0.9370 |

### Framework versions

- Transformers 4.34.1

- Pytorch 2.1.0+cu118

- Datasets 2.14.6

- Tokenizers 0.14.1

|

princeton-nlp/AutoCompressor-1.3b-30k

|

princeton-nlp

| 2023-10-27T14:50:20Z | 76 | 1 |

transformers

|

[

"transformers",

"pytorch",

"opt",

"arxiv:2305.14788",

"license:apache-2.0",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] | null | 2023-07-23T21:51:19Z |

---

license: apache-2.0

---


**Paper**: [Adapting Language Models to Compress Contexts](https://arxiv.org/abs/2305.14788)

**Code**: https://github.com/princeton-nlp/AutoCompressors

**Models**:

- Llama-2-7b fine-tuned models: [AutoCompressor-Llama-2-7b-6k](https://huggingface.co/princeton-nlp/AutoCompressor-Llama-2-7b-6k/), [FullAttention-Llama-2-7b-6k](https://huggingface.co/princeton-nlp/FullAttention-Llama-2-7b-6k)

- OPT-2.7b fine-tuned models: [AutoCompressor-2.7b-6k](https://huggingface.co/princeton-nlp/AutoCompressor-2.7b-6k), [AutoCompressor-2.7b-30k](https://huggingface.co/princeton-nlp/AutoCompressor-2.7b-30k), [RMT-2.7b-8k](https://huggingface.co/princeton-nlp/RMT-2.7b-8k), [FullAttention-2.7b-4k](https://huggingface.co/princeton-nlp/FullAttention-2.7b-4k)

- OPT-1.3b fine-tuned models: [AutoCompressor-1.3b-30k](https://huggingface.co/princeton-nlp/AutoCompressor-1.3b-30k), [RMT-1.3b-30k](https://huggingface.co/princeton-nlp/RMT-1.3b-30k)

---

AutoCompressor-1.3b-30k is a model fine-tuned from [facebook/opt-1.3b](https://huggingface.co/facebook/opt-1.3b) following the AutoCompressor method in [Adapting Language Models to Compress Contexts](https://arxiv.org/abs/2305.14788).

This model is fine-tuned on 2B tokens from Books3 in [The Pile](https://pile.eleuther.ai). The pre-trained OPT-1.3b model is fine-tuned on sequences of 30,720 tokens with 50 summary vectors, summary accumulation, randomized segmenting, and stop-gradients.

To get started, download the [`AutoCompressor`](https://github.com/princeton-nlp/AutoCompressors) repository and load the model as follows:

```python

from auto_compressor import AutoCompressorModel

model = AutoCompressorModel.from_pretrained("princeton-nlp/AutoCompressor-1.3b-30k")

```

**Evaluation**

We record the perplexity achieved by our 30k-fine-tuned OPT models on segments of 2,048 tokens sampled from Books3 and ArXiv in The Pile, conditioned on different amounts of context.

| Context Tokens | 0 |14,336 | 28,672 |

| -----------------------------|------|--------|--------|

| RMT-1.3b-30k | 13.18|12.50 |12.50 |

| AutoCompressor-1.3b-30k | 13.21|12.49 |12.47 |

| AutoCompressor-2.7b-30k | 11.86|11.21 |11.18 |
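To make the table easier to read, the sketch below computes the relative perplexity reduction each model gets from 28,672 context tokens compared with no context. The numbers are copied from the table above; the helper `relative_reduction` is an illustrative name, not part of the AutoCompressors repository.

```python
# Perplexities from the table above, keyed by number of context tokens.
perplexity = {
    "RMT-1.3b-30k": {0: 13.18, 14336: 12.50, 28672: 12.50},
    "AutoCompressor-1.3b-30k": {0: 13.21, 14336: 12.49, 28672: 12.47},
    "AutoCompressor-2.7b-30k": {0: 11.86, 14336: 11.21, 28672: 11.18},
}


def relative_reduction(baseline, value):
    """Fraction by which `value` is lower than `baseline`."""
    return (baseline - value) / baseline


for model, scores in perplexity.items():
    gain = relative_reduction(scores[0], scores[28672])
    print(f"{model}: {gain:.1%} lower perplexity with 28,672 context tokens")
```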

## Bibtex

```

@misc{chevalier2023adapting,

title={Adapting Language Models to Compress Contexts},

author={Alexis Chevalier and Alexander Wettig and Anirudh Ajith and Danqi Chen},

year={2023},

eprint={2305.14788},

archivePrefix={arXiv},

primaryClass={cs.CL}

}

```

|

LoneStriker/zephyr-7b-beta-5.0bpw-h6-exl2

|

LoneStriker

| 2023-10-27T14:48:48Z | 9 | 2 |

transformers

|

[

"transformers",

"safetensors",

"mistral",

"text-generation",

"generated_from_trainer",

"conversational",

"en",

"dataset:HuggingFaceH4/ultrachat_200k",

"dataset:HuggingFaceH4/ultrafeedback_binarized",

"arxiv:2305.18290",

"arxiv:2310.16944",

"base_model:mistralai/Mistral-7B-v0.1",

"base_model:finetune:mistralai/Mistral-7B-v0.1",

"license:mit",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2023-10-27T14:48:34Z |

---

tags:

- generated_from_trainer

model-index:

- name: zephyr-7b-beta

results: []

license: mit

datasets:

- HuggingFaceH4/ultrachat_200k

- HuggingFaceH4/ultrafeedback_binarized

language:

- en

base_model: mistralai/Mistral-7B-v0.1

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

<img src="https://huggingface.co/HuggingFaceH4/zephyr-7b-alpha/resolve/main/thumbnail.png" alt="Zephyr Logo" width="800" style="margin-left:'auto' margin-right:'auto' display:'block'"/>

# Model Card for Zephyr 7B β

Zephyr is a series of language models that are trained to act as helpful assistants. Zephyr-7B-β is the second model in the series, and is a fine-tuned version of [mistralai/Mistral-7B-v0.1](https://huggingface.co/mistralai/Mistral-7B-v0.1) that was trained on a mix of publicly available, synthetic datasets using [Direct Preference Optimization (DPO)](https://arxiv.org/abs/2305.18290). We found that removing the in-built alignment of these datasets boosted performance on [MT Bench](https://huggingface.co/spaces/lmsys/mt-bench) and made the model more helpful. However, this means that the model is likely to generate problematic text when prompted to do so and should only be used for educational and research purposes. You can find more details in the [technical report](https://arxiv.org/abs/2310.16944).

## Model description

- **Model type:** A 7B parameter GPT-like model fine-tuned on a mix of publicly available, synthetic datasets.

- **Language(s) (NLP):** Primarily English

- **License:** MIT

- **Finetuned from model:** [mistralai/Mistral-7B-v0.1](https://huggingface.co/mistralai/Mistral-7B-v0.1)

### Model Sources

<!-- Provide the basic links for the model. -->

- **Repository:** https://github.com/huggingface/alignment-handbook

- **Demo:** https://huggingface.co/spaces/HuggingFaceH4/zephyr-chat

- **Chatbot Arena:** Evaluate Zephyr 7B against 10+ LLMs in the LMSYS arena: http://arena.lmsys.org

## Performance

At the time of release, Zephyr-7B-β is the highest ranked 7B chat model on the [MT-Bench](https://huggingface.co/spaces/lmsys/mt-bench) and [AlpacaEval](https://tatsu-lab.github.io/alpaca_eval/) benchmarks:

| Model | Size | Alignment | MT-Bench (score) | AlpacaEval (win rate %) |

|-------------|-----|----|---------------|--------------|

| StableLM-Tuned-α | 7B| dSFT |2.75| -|

| MPT-Chat | 7B |dSFT |5.42| -|

| Xwin-LMv0.1 | 7B| dPPO| 6.19| 87.83|

| Mistral-Instructv0.1 | 7B| - | 6.84 |-|

| Zephyr-7b-α |7B| dDPO| 6.88| -|

| **Zephyr-7b-β** 🪁 | **7B** | **dDPO** | **7.34** | **90.60** |

| Falcon-Instruct | 40B |dSFT |5.17 |45.71|

| Guanaco | 65B | SFT |6.41| 71.80|

| Llama2-Chat | 70B |RLHF |6.86| 92.66|

| Vicuna v1.3 | 33B |dSFT |7.12 |88.99|

| WizardLM v1.0 | 70B |dSFT |7.71 |-|

| Xwin-LM v0.1 | 70B |dPPO |- |95.57|

| GPT-3.5-turbo | - |RLHF |7.94 |89.37|

| Claude 2 | - |RLHF |8.06| 91.36|

| GPT-4 | -| RLHF |8.99| 95.28|

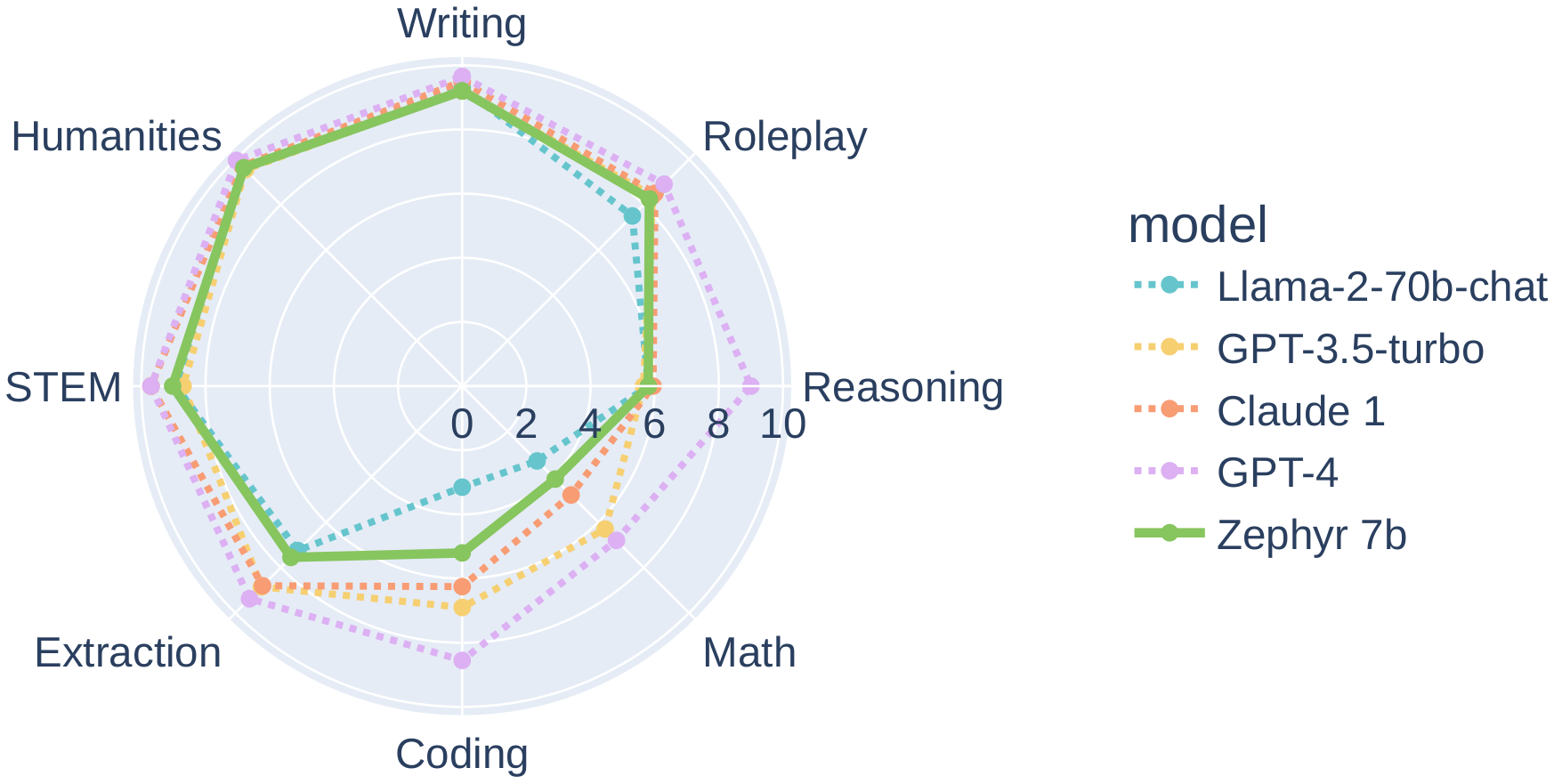

In particular, on several categories of MT-Bench, Zephyr-7B-β has strong performance compared to larger open models like Llama2-Chat-70B:

However, on more complex tasks like coding and mathematics, Zephyr-7B-β lags behind proprietary models and more research is needed to close the gap.

## Intended uses & limitations

The model was initially fine-tuned on a filtered and preprocessed version of the [`UltraChat`](https://huggingface.co/datasets/stingning/ultrachat) dataset, which contains a diverse range of synthetic dialogues generated by ChatGPT.

We then further aligned the model with [🤗 TRL's](https://github.com/huggingface/trl) `DPOTrainer` on the [openbmb/UltraFeedback](https://huggingface.co/datasets/openbmb/UltraFeedback) dataset, which contains 64k prompts and model completions that are ranked by GPT-4. As a result, the model can be used for chat and you can check out our [demo](https://huggingface.co/spaces/HuggingFaceH4/zephyr-chat) to test its capabilities.

You can find the datasets used for training Zephyr-7B-β [here](https://huggingface.co/collections/HuggingFaceH4/zephyr-7b-6538c6d6d5ddd1cbb1744a66).

Here's how you can run the model using the `pipeline()` function from 🤗 Transformers:

```python

# Install transformers from source - only needed for versions <= v4.34

# pip install git+https://github.com/huggingface/transformers.git

# pip install accelerate

import torch

from transformers import pipeline

pipe = pipeline("text-generation", model="HuggingFaceH4/zephyr-7b-beta", torch_dtype=torch.bfloat16, device_map="auto")

# We use the tokenizer's chat template to format each message - see https://huggingface.co/docs/transformers/main/en/chat_templating

messages = [

{

"role": "system",

"content": "You are a friendly chatbot who always responds in the style of a pirate",

},

{"role": "user", "content": "How many helicopters can a human eat in one sitting?"},

]

prompt = pipe.tokenizer.apply_chat_template(messages, tokenize=False, add_generation_prompt=True)

outputs = pipe(prompt, max_new_tokens=256, do_sample=True, temperature=0.7, top_k=50, top_p=0.95)

print(outputs[0]["generated_text"])

# <|system|>

# You are a friendly chatbot who always responds in the style of a pirate.</s>

# <|user|>

# How many helicopters can a human eat in one sitting?</s>

# <|assistant|>

# Ah, me hearty matey! But yer question be a puzzler! A human cannot eat a helicopter in one sitting, as helicopters are not edible. They be made of metal, plastic, and other materials, not food!

```
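The commented output above shows the prompt layout that the chat template produces. The sketch below is a hand-written approximation of that layout, inferred only from the printed sample; `format_zephyr_prompt` is a hypothetical helper, and the tokenizer's real template may differ in details. In practice, `apply_chat_template` should be preferred.

```python
def format_zephyr_prompt(messages, add_generation_prompt=True):
    """Render chat messages in the layout visible in the sample output.

    Each turn becomes "<|role|>", a newline, the content, "</s>", and a newline.
    """
    parts = [f"<|{m['role']}|>\n{m['content']}</s>\n" for m in messages]
    if add_generation_prompt:
        # Leave an open assistant turn for the model to complete.
        parts.append("<|assistant|>\n")
    return "".join(parts)


messages = [
    {"role": "system", "content": "You are a friendly chatbot."},
    {"role": "user", "content": "Hello!"},
]
prompt = format_zephyr_prompt(messages)
print(prompt)
```

Writing the format out by hand is mainly useful for inspecting or debugging prompts; it does not replace the template shipped with the tokenizer.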

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

Zephyr-7B-β has not been aligned to human preferences with techniques like RLHF or deployed with in-the-loop filtering of responses like ChatGPT, so the model can produce problematic outputs (especially when prompted to do so).

The size and composition of the corpus used to train the base model (`mistralai/Mistral-7B-v0.1`) are also unknown; however, it is likely to have included a mix of Web data and technical sources like books and code. See the [Falcon 180B model card](https://huggingface.co/tiiuae/falcon-180B#training-data) for an example of this.

## Training and evaluation data

During DPO training, this model achieves the following results on the evaluation set:

- Loss: 0.7496

- Rewards/chosen: -4.5221

- Rewards/rejected: -8.3184

- Rewards/accuracies: 0.7812

- Rewards/margins: 3.7963

- Logps/rejected: -340.1541

- Logps/chosen: -299.4561

- Logits/rejected: -2.3081

- Logits/chosen: -2.3531
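The reward figures above are internally consistent: the reported margin equals the chosen reward minus the rejected reward. The sketch below checks this with the numbers from the list. Reading `Rewards/accuracies` as the fraction of evaluation pairs where the chosen response gets the higher reward is an assumption about how the trainer logs it.

```python
rewards_chosen = -4.5221
rewards_rejected = -8.3184

# Margin: how much higher the implicit reward is for chosen than for rejected responses.
rewards_margin = rewards_chosen - rewards_rejected

print(f"Rewards/margins = {rewards_margin:.4f}")
```

Both rewards are negative here, so the margin grows because the rejected reward falls faster than the chosen one, not because the chosen reward rises.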

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-07

- train_batch_size: 2

- eval_batch_size: 4

- seed: 42

- distributed_type: multi-GPU

- num_devices: 16

- total_train_batch_size: 32

- total_eval_batch_size: 64

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_ratio: 0.1

- num_epochs: 3.0
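A linear scheduler with a warmup ratio of 0.1 ramps the learning rate from 0 to its peak over the first 10% of training and then decays it linearly to 0. The sketch below illustrates that shape. The total step count is an assumption (the last logged step in the results table, 5800, is used as a stand-in), and `linear_lr` is an illustrative helper rather than the scheduler used in training.

```python
def linear_lr(step, peak_lr, warmup_steps, total_steps):
    """Learning rate at `step` for linear warmup followed by linear decay."""
    if step < warmup_steps:
        return peak_lr * (step / max(1, warmup_steps))
    remaining = max(0, total_steps - step)
    return peak_lr * (remaining / max(1, total_steps - warmup_steps))


peak_lr = 5e-07              # learning_rate from the list above
assumed_total_steps = 5800   # assumption: last logged step, not an official figure
warmup_steps = round(0.1 * assumed_total_steps)  # lr_scheduler_warmup_ratio: 0.1

for step in (0, warmup_steps // 2, warmup_steps, assumed_total_steps):
    print(step, linear_lr(step, peak_lr, warmup_steps, assumed_total_steps))
```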

### Training results

The table below shows the full set of DPO training metrics:

| Training Loss | Epoch | Step | Validation Loss | Rewards/chosen | Rewards/rejected | Rewards/accuracies | Rewards/margins | Logps/rejected | Logps/chosen | Logits/rejected | Logits/chosen |

|:-------------:|:-----:|:----:|:---------------:|:--------------:|:----------------:|:------------------:|:---------------:|:--------------:|:------------:|:---------------:|:-------------:|

| 0.6284 | 0.05 | 100 | 0.6098 | 0.0425 | -0.1872 | 0.7344 | 0.2297 | -258.8416 | -253.8099 | -2.7976 | -2.8234 |

| 0.4908 | 0.1 | 200 | 0.5426 | -0.0279 | -0.6842 | 0.75 | 0.6563 | -263.8124 | -254.5145 | -2.7719 | -2.7960 |

| 0.5264 | 0.15 | 300 | 0.5324 | 0.0414 | -0.9793 | 0.7656 | 1.0207 | -266.7627 | -253.8209 | -2.7892 | -2.8122 |

| 0.5536 | 0.21 | 400 | 0.4957 | -0.0185 | -1.5276 | 0.7969 | 1.5091 | -272.2460 | -254.4203 | -2.8542 | -2.8764 |

| 0.5362 | 0.26 | 500 | 0.5031 | -0.2630 | -1.5917 | 0.7812 | 1.3287 | -272.8869 | -256.8653 | -2.8702 | -2.8958 |

| 0.5966 | 0.31 | 600 | 0.5963 | -0.2993 | -1.6491 | 0.7812 | 1.3499 | -273.4614 | -257.2279 | -2.8778 | -2.8986 |

| 0.5014 | 0.36 | 700 | 0.5382 | -0.2859 | -1.4750 | 0.75 | 1.1891 | -271.7204 | -257.0942 | -2.7659 | -2.7869 |

| 0.5334 | 0.41 | 800 | 0.5677 | -0.4289 | -1.8968 | 0.7969 | 1.4679 | -275.9378 | -258.5242 | -2.7053 | -2.7265 |

| 0.5251 | 0.46 | 900 | 0.5772 | -0.2116 | -1.3107 | 0.7344 | 1.0991 | -270.0768 | -256.3507 | -2.8463 | -2.8662 |

| 0.5205 | 0.52 | 1000 | 0.5262 | -0.3792 | -1.8585 | 0.7188 | 1.4793 | -275.5552 | -258.0276 | -2.7893 | -2.7979 |

| 0.5094 | 0.57 | 1100 | 0.5433 | -0.6279 | -1.9368 | 0.7969 | 1.3089 | -276.3377 | -260.5136 | -2.7453 | -2.7536 |

| 0.5837 | 0.62 | 1200 | 0.5349 | -0.3780 | -1.9584 | 0.7656 | 1.5804 | -276.5542 | -258.0154 | -2.7643 | -2.7756 |

| 0.5214 | 0.67 | 1300 | 0.5732 | -1.0055 | -2.2306 | 0.7656 | 1.2251 | -279.2761 | -264.2903 | -2.6986 | -2.7113 |

| 0.6914 | 0.72 | 1400 | 0.5137 | -0.6912 | -2.1775 | 0.7969 | 1.4863 | -278.7448 | -261.1467 | -2.7166 | -2.7275 |

| 0.4655 | 0.77 | 1500 | 0.5090 | -0.7987 | -2.2930 | 0.7031 | 1.4943 | -279.8999 | -262.2220 | -2.6651 | -2.6838 |

| 0.5731 | 0.83 | 1600 | 0.5312 | -0.8253 | -2.3520 | 0.7812 | 1.5268 | -280.4902 | -262.4876 | -2.6543 | -2.6728 |

| 0.5233 | 0.88 | 1700 | 0.5206 | -0.4573 | -2.0951 | 0.7812 | 1.6377 | -277.9205 | -258.8084 | -2.6870 | -2.7097 |

| 0.5593 | 0.93 | 1800 | 0.5231 | -0.5508 | -2.2000 | 0.7969 | 1.6492 | -278.9703 | -259.7433 | -2.6221 | -2.6519 |

| 0.4967 | 0.98 | 1900 | 0.5290 | -0.5340 | -1.9570 | 0.8281 | 1.4230 | -276.5395 | -259.5749 | -2.6564 | -2.6878 |

| 0.0921 | 1.03 | 2000 | 0.5368 | -1.1376 | -3.1615 | 0.7812 | 2.0239 | -288.5854 | -265.6111 | -2.6040 | -2.6345 |

| 0.0733 | 1.08 | 2100 | 0.5453 | -1.1045 | -3.4451 | 0.7656 | 2.3406 | -291.4208 | -265.2799 | -2.6289 | -2.6595 |

| 0.0972 | 1.14 | 2200 | 0.5571 | -1.6915 | -3.9823 | 0.8125 | 2.2908 | -296.7934 | -271.1505 | -2.6471 | -2.6709 |

| 0.1058 | 1.19 | 2300 | 0.5789 | -1.0621 | -3.8941 | 0.7969 | 2.8319 | -295.9106 | -264.8563 | -2.5527 | -2.5798 |

| 0.2423 | 1.24 | 2400 | 0.5455 | -1.1963 | -3.5590 | 0.7812 | 2.3627 | -292.5599 | -266.1981 | -2.5414 | -2.5784 |

| 0.1177 | 1.29 | 2500 | 0.5889 | -1.8141 | -4.3942 | 0.7969 | 2.5801 | -300.9120 | -272.3761 | -2.4802 | -2.5189 |

| 0.1213 | 1.34 | 2600 | 0.5683 | -1.4608 | -3.8420 | 0.8125 | 2.3812 | -295.3901 | -268.8436 | -2.4774 | -2.5207 |

| 0.0889 | 1.39 | 2700 | 0.5890 | -1.6007 | -3.7337 | 0.7812 | 2.1330 | -294.3068 | -270.2423 | -2.4123 | -2.4522 |

| 0.0995 | 1.45 | 2800 | 0.6073 | -1.5519 | -3.8362 | 0.8281 | 2.2843 | -295.3315 | -269.7538 | -2.4685 | -2.5050 |

| 0.1145 | 1.5 | 2900 | 0.5790 | -1.7939 | -4.2876 | 0.8438 | 2.4937 | -299.8461 | -272.1744 | -2.4272 | -2.4674 |

| 0.0644 | 1.55 | 3000 | 0.5735 | -1.7285 | -4.2051 | 0.8125 | 2.4766 | -299.0209 | -271.5201 | -2.4193 | -2.4574 |

| 0.0798 | 1.6 | 3100 | 0.5537 | -1.7226 | -4.2850 | 0.8438 | 2.5624 | -299.8200 | -271.4610 | -2.5367 | -2.5696 |

| 0.1013 | 1.65 | 3200 | 0.5575 | -1.5715 | -3.9813 | 0.875 | 2.4098 | -296.7825 | -269.9498 | -2.4926 | -2.5267 |

| 0.1254 | 1.7 | 3300 | 0.5905 | -1.6412 | -4.4703 | 0.8594 | 2.8291 | -301.6730 | -270.6473 | -2.5017 | -2.5340 |

| 0.085 | 1.76 | 3400 | 0.6133 | -1.9159 | -4.6760 | 0.8438 | 2.7601 | -303.7296 | -273.3941 | -2.4614 | -2.4960 |

| 0.065 | 1.81 | 3500 | 0.6074 | -1.8237 | -4.3525 | 0.8594 | 2.5288 | -300.4951 | -272.4724 | -2.4597 | -2.5004 |

| 0.0755 | 1.86 | 3600 | 0.5836 | -1.9252 | -4.4005 | 0.8125 | 2.4753 | -300.9748 | -273.4872 | -2.4327 | -2.4716 |

| 0.0746 | 1.91 | 3700 | 0.5789 | -1.9280 | -4.4906 | 0.8125 | 2.5626 | -301.8762 | -273.5149 | -2.4686 | -2.5115 |

| 0.1348 | 1.96 | 3800 | 0.6015 | -1.8658 | -4.2428 | 0.8281 | 2.3769 | -299.3976 | -272.8936 | -2.4943 | -2.5393 |

| 0.0217 | 2.01 | 3900 | 0.6122 | -2.3335 | -4.9229 | 0.8281 | 2.5894 | -306.1988 | -277.5699 | -2.4841 | -2.5272 |

| 0.0219 | 2.07 | 4000 | 0.6522 | -2.9890 | -6.0164 | 0.8281 | 3.0274 | -317.1334 | -284.1248 | -2.4105 | -2.4545 |

| 0.0119 | 2.12 | 4100 | 0.6922 | -3.4777 | -6.6749 | 0.7969 | 3.1972 | -323.7187 | -289.0121 | -2.4272 | -2.4699 |

| 0.0153 | 2.17 | 4200 | 0.6993 | -3.2406 | -6.6775 | 0.7969 | 3.4369 | -323.7453 | -286.6413 | -2.4047 | -2.4465 |

| 0.011 | 2.22 | 4300 | 0.7178 | -3.7991 | -7.4397 | 0.7656 | 3.6406 | -331.3667 | -292.2260 | -2.3843 | -2.4290 |

| 0.0072 | 2.27 | 4400 | 0.6840 | -3.3269 | -6.8021 | 0.8125 | 3.4752 | -324.9908 | -287.5042 | -2.4095 | -2.4536 |

| 0.0197 | 2.32 | 4500 | 0.7013 | -3.6890 | -7.3014 | 0.8125 | 3.6124 | -329.9841 | -291.1250 | -2.4118 | -2.4543 |

| 0.0182 | 2.37 | 4600 | 0.7476 | -3.8994 | -7.5366 | 0.8281 | 3.6372 | -332.3356 | -293.2291 | -2.4163 | -2.4565 |

| 0.0125 | 2.43 | 4700 | 0.7199 | -4.0560 | -7.5765 | 0.8438 | 3.5204 | -332.7345 | -294.7952 | -2.3699 | -2.4100 |

| 0.0082 | 2.48 | 4800 | 0.7048 | -3.6613 | -7.1356 | 0.875 | 3.4743 | -328.3255 | -290.8477 | -2.3925 | -2.4303 |

| 0.0118 | 2.53 | 4900 | 0.6976 | -3.7908 | -7.3152 | 0.8125 | 3.5244 | -330.1224 | -292.1431 | -2.3633 | -2.4047 |

| 0.0118 | 2.58 | 5000 | 0.7198 | -3.9049 | -7.5557 | 0.8281 | 3.6508 | -332.5271 | -293.2844 | -2.3764 | -2.4194 |

| 0.006 | 2.63 | 5100 | 0.7506 | -4.2118 | -7.9149 | 0.8125 | 3.7032 | -336.1194 | -296.3530 | -2.3407 | -2.3860 |

| 0.0143 | 2.68 | 5200 | 0.7408 | -4.2433 | -7.9802 | 0.8125 | 3.7369 | -336.7721 | -296.6682 | -2.3509 | -2.3946 |

| 0.0057 | 2.74 | 5300 | 0.7552 | -4.3392 | -8.0831 | 0.7969 | 3.7439 | -337.8013 | -297.6275 | -2.3388 | -2.3842 |

| 0.0138 | 2.79 | 5400 | 0.7404 | -4.2395 | -7.9762 | 0.8125 | 3.7367 | -336.7322 | -296.6304 | -2.3286 | -2.3737 |

| 0.0079 | 2.84 | 5500 | 0.7525 | -4.4466 | -8.2196 | 0.7812 | 3.7731 | -339.1662 | -298.7007 | -2.3200 | -2.3641 |

| 0.0077 | 2.89 | 5600 | 0.7520 | -4.5586 | -8.3485 | 0.7969 | 3.7899 | -340.4545 | -299.8206 | -2.3078 | -2.3517 |

| 0.0094 | 2.94 | 5700 | 0.7527 | -4.5542 | -8.3509 | 0.7812 | 3.7967 | -340.4790 | -299.7773 | -2.3062 | -2.3510 |

| 0.0054 | 2.99 | 5800 | 0.7520 | -4.5169 | -8.3079 | 0.7812 | 3.7911 | -340.0493 | -299.4038 | -2.3081 | -2.3530 |

### Framework versions

- Transformers 4.35.0.dev0

- Pytorch 2.0.1+cu118

- Datasets 2.12.0

- Tokenizers 0.14.0

## Citation

If you find Zephyr-7B-β useful in your work, please cite it with:

```

@misc{tunstall2023zephyr,

title={Zephyr: Direct Distillation of LM Alignment},

author={Lewis Tunstall and Edward Beeching and Nathan Lambert and Nazneen Rajani and Kashif Rasul and Younes Belkada and Shengyi Huang and Leandro von Werra and Clémentine Fourrier and Nathan Habib and Nathan Sarrazin and Omar Sanseviero and Alexander M. Rush and Thomas Wolf},

year={2023},

eprint={2310.16944},

archivePrefix={arXiv},

primaryClass={cs.LG}

}

```

|

princeton-nlp/FullAttention-2.7b-4k

|

princeton-nlp

| 2023-10-27T14:43:43Z | 4 | 0 |

transformers

|

[

"transformers",

"pytorch",

"opt",

"arxiv:2305.14788",

"license:apache-2.0",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] | null | 2023-07-23T21:58:42Z |

---

license: apache-2.0

---


**Paper**: [Adapting Language Models to Compress Contexts](https://arxiv.org/abs/2305.14788)

**Code**: https://github.com/princeton-nlp/AutoCompressors

**Models**:

- Llama-2-7b fine-tuned models: [AutoCompressor-Llama-2-7b-6k](https://huggingface.co/princeton-nlp/AutoCompressor-Llama-2-7b-6k/), [FullAttention-Llama-2-7b-6k](https://huggingface.co/princeton-nlp/FullAttention-Llama-2-7b-6k)

- OPT-2.7b fine-tuned models: [AutoCompressor-2.7b-6k](https://huggingface.co/princeton-nlp/AutoCompressor-2.7b-6k), [AutoCompressor-2.7b-30k](https://huggingface.co/princeton-nlp/AutoCompressor-2.7b-30k), [RMT-2.7b-8k](https://huggingface.co/princeton-nlp/RMT-2.7b-8k), [FullAttention-2.7b-4k](https://huggingface.co/princeton-nlp/FullAttention-2.7b-4k)

- OPT-1.3b fine-tuned models: [AutoCompressor-1.3b-30k](https://huggingface.co/princeton-nlp/AutoCompressor-1.3b-30k), [RMT-1.3b-30k](https://huggingface.co/princeton-nlp/RMT-1.3b-30k)

---

FullAttention-2.7b-4k is a model fine-tuned from [facebook/opt-2.7b](https://huggingface.co/facebook/opt-2.7b) following the context window extension method described in [Adapting Language Models to Compress Contexts](https://arxiv.org/abs/2305.14788).

The 2,048 positional embeddings of the pre-trained OPT-2.7b are duplicated, and the model is fine-tuned on sequences of 4,096 tokens drawn from 2B tokens of [The Pile](https://pile.eleuther.ai).
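Duplicating the positional embeddings can be pictured as appending a copy of the embedding table to itself, so that position `p + N` starts out with the embedding of position `p`. The toy sketch below shows this with plain lists. It is only an illustration: `extend_positions` is a hypothetical helper, and the actual repository code (including how it handles OPT-specific position offsets) may differ.

```python
def extend_positions(table):
    """Double a positional-embedding table by appending a copy of its rows."""
    return [row[:] for row in table] + [row[:] for row in table]


# Toy table with 3 positions and embedding dimension 2 (stand-in for 2,048 positions).
toy_table = [[0.1, 0.2], [0.3, 0.4], [0.5, 0.6]]
extended = extend_positions(toy_table)

print(len(toy_table), "->", len(extended))
```

Because the rows are copied rather than shared, the new positions are free to diverge from the originals during fine-tuning.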

To get started, download the [`AutoCompressor`](https://github.com/princeton-nlp/AutoCompressors) repository and load the model as follows:

```python

from auto_compressor import AutoCompressorModel

model = AutoCompressorModel.from_pretrained("princeton-nlp/FullAttention-2.7b-4k")

```

**Evaluation**

We record the perplexity achieved by our OPT-2.7b models on segments of 2,048 tokens, conditioned on different amounts of context.

FullAttention-2.7b-4k uses full uncompressed contexts whereas AutoCompressor-2.7b-6k and RMT-2.7b-8k compress segments of 2,048 tokens into 50 summary vectors.
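To get a feel for the compression involved, the sketch below counts how many summary vectors would stand in for a given amount of context. It assumes that a partial segment still yields a full set of 50 vectors and that the vectors from every segment are retained, as with AutoCompressor's summary accumulation; `summary_vectors_for` is an illustrative helper, not an API of the repository.

```python
import math

SEGMENT_TOKENS = 2048             # segment length stated above
SUMMARY_VECTORS_PER_SEGMENT = 50  # summary vectors produced per segment


def summary_vectors_for(context_tokens):
    """Number of summary vectors representing `context_tokens` of context."""
    segments = math.ceil(context_tokens / SEGMENT_TOKENS)
    return segments * SUMMARY_VECTORS_PER_SEGMENT


for context_tokens in (2048, 4096, 6144):
    vectors = summary_vectors_for(context_tokens)
    print(f"{context_tokens} tokens -> {vectors} summary vectors")
```

Under these assumptions, 6,144 context tokens are represented by 150 vectors, roughly a 41-fold reduction in the number of positions the model attends to.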

*In-domain Evaluation*

| Context Tokens | 0 |512 | 2048 | 4096 | 6144 |

| -----------------------------|-----|-----|------|------|------|

| FullAttention-2.7b-4k | 6.57|6.15 |5.94 |- |- |

| RMT-2.7b-8k | 6.34|6.19 |6.02 | 6.02 | 6.01 |

| AutoCompressor-2.7b-6k | 6.31|6.04 | 5.98 | 5.94 | 5.93 |

*Out-of-domain Evaluation*

| Context Tokens | 0 |512 | 2048 | 4096 | 6144 |

| -----------------------------|-----|-----|------|------|------|

| FullAttention-2.7b-4k | 8.94|8.28 |7.93 |- |- |

| RMT-2.7b-8k | 8.62|8.44 |8.21 | 8.20 | 8.20 |

| AutoCompressor-2.7b-6k | 8.60|8.26 | 8.17 | 8.12 | 8.10 |

## Bibtex

```

@misc{chevalier2023adapting,

title={Adapting Language Models to Compress Contexts},

author={Alexis Chevalier and Alexander Wettig and Anirudh Ajith and Danqi Chen},

year={2023},

eprint={2305.14788},

archivePrefix={arXiv},

primaryClass={cs.CL}

}

```

|

LoneStriker/zephyr-7b-beta-4.0bpw-h6-exl2

|

LoneStriker

| 2023-10-27T14:41:47Z | 9 | 0 |

transformers

|

[

"transformers",

"safetensors",

"mistral",

"text-generation",

"generated_from_trainer",

"conversational",

"en",

"dataset:HuggingFaceH4/ultrachat_200k",

"dataset:HuggingFaceH4/ultrafeedback_binarized",

"arxiv:2305.18290",

"arxiv:2310.16944",

"base_model:mistralai/Mistral-7B-v0.1",

"base_model:finetune:mistralai/Mistral-7B-v0.1",

"license:mit",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2023-10-27T14:41:35Z |

---

tags:

- generated_from_trainer

model-index:

- name: zephyr-7b-beta

results: []

license: mit

datasets:

- HuggingFaceH4/ultrachat_200k

- HuggingFaceH4/ultrafeedback_binarized

language:

- en

base_model: mistralai/Mistral-7B-v0.1

---


<img src="https://huggingface.co/HuggingFaceH4/zephyr-7b-alpha/resolve/main/thumbnail.png" alt="Zephyr Logo" width="800" style="margin-left:'auto' margin-right:'auto' display:'block'"/>

# Model Card for Zephyr 7B β

Zephyr is a series of language models that are trained to act as helpful assistants. Zephyr-7B-β is the second model in the series, and is a fine-tuned version of [mistralai/Mistral-7B-v0.1](https://huggingface.co/mistralai/Mistral-7B-v0.1) that was trained on a mix of publicly available, synthetic datasets using [Direct Preference Optimization (DPO)](https://arxiv.org/abs/2305.18290). We found that removing the in-built alignment of these datasets boosted performance on [MT Bench](https://huggingface.co/spaces/lmsys/mt-bench) and made the model more helpful. However, this means that the model is likely to generate problematic text when prompted to do so and should only be used for educational and research purposes. You can find more details in the [technical report](https://arxiv.org/abs/2310.16944).

## Model description

- **Model type:** A 7B parameter GPT-like model fine-tuned on a mix of publicly available, synthetic datasets.

- **Language(s) (NLP):** Primarily English

- **License:** MIT

- **Finetuned from model:** [mistralai/Mistral-7B-v0.1](https://huggingface.co/mistralai/Mistral-7B-v0.1)

### Model Sources

<!-- Provide the basic links for the model. -->

- **Repository:** https://github.com/huggingface/alignment-handbook

- **Demo:** https://huggingface.co/spaces/HuggingFaceH4/zephyr-chat

- **Chatbot Arena:** Evaluate Zephyr 7B against 10+ LLMs in the LMSYS arena: http://arena.lmsys.org

## Performance

At the time of release, Zephyr-7B-β is the highest ranked 7B chat model on the [MT-Bench](https://huggingface.co/spaces/lmsys/mt-bench) and [AlpacaEval](https://tatsu-lab.github.io/alpaca_eval/) benchmarks:

| Model | Size | Alignment | MT-Bench (score) | AlpacaEval (win rate %) |
|-------|------|-----------|------------------|-------------------------|
| StableLM-Tuned-α | 7B | dSFT | 2.75 | - |
| MPT-Chat | 7B | dSFT | 5.42 | - |
| Xwin-LM v0.1 | 7B | dPPO | 6.19 | 87.83 |
| Mistral-Instruct v0.1 | 7B | - | 6.84 | - |
| Zephyr-7b-α | 7B | dDPO | 6.88 | - |
| **Zephyr-7b-β** 🪁 | **7B** | **dDPO** | **7.34** | **90.60** |
| Falcon-Instruct | 40B | dSFT | 5.17 | 45.71 |
| Guanaco | 65B | SFT | 6.41 | 71.80 |
| Llama2-Chat | 70B | RLHF | 6.86 | 92.66 |
| Vicuna v1.3 | 33B | dSFT | 7.12 | 88.99 |
| WizardLM v1.0 | 70B | dSFT | 7.71 | - |
| Xwin-LM v0.1 | 70B | dPPO | - | 95.57 |
| GPT-3.5-turbo | - | RLHF | 7.94 | 89.37 |
| Claude 2 | - | RLHF | 8.06 | 91.36 |
| GPT-4 | - | RLHF | 8.99 | 95.28 |

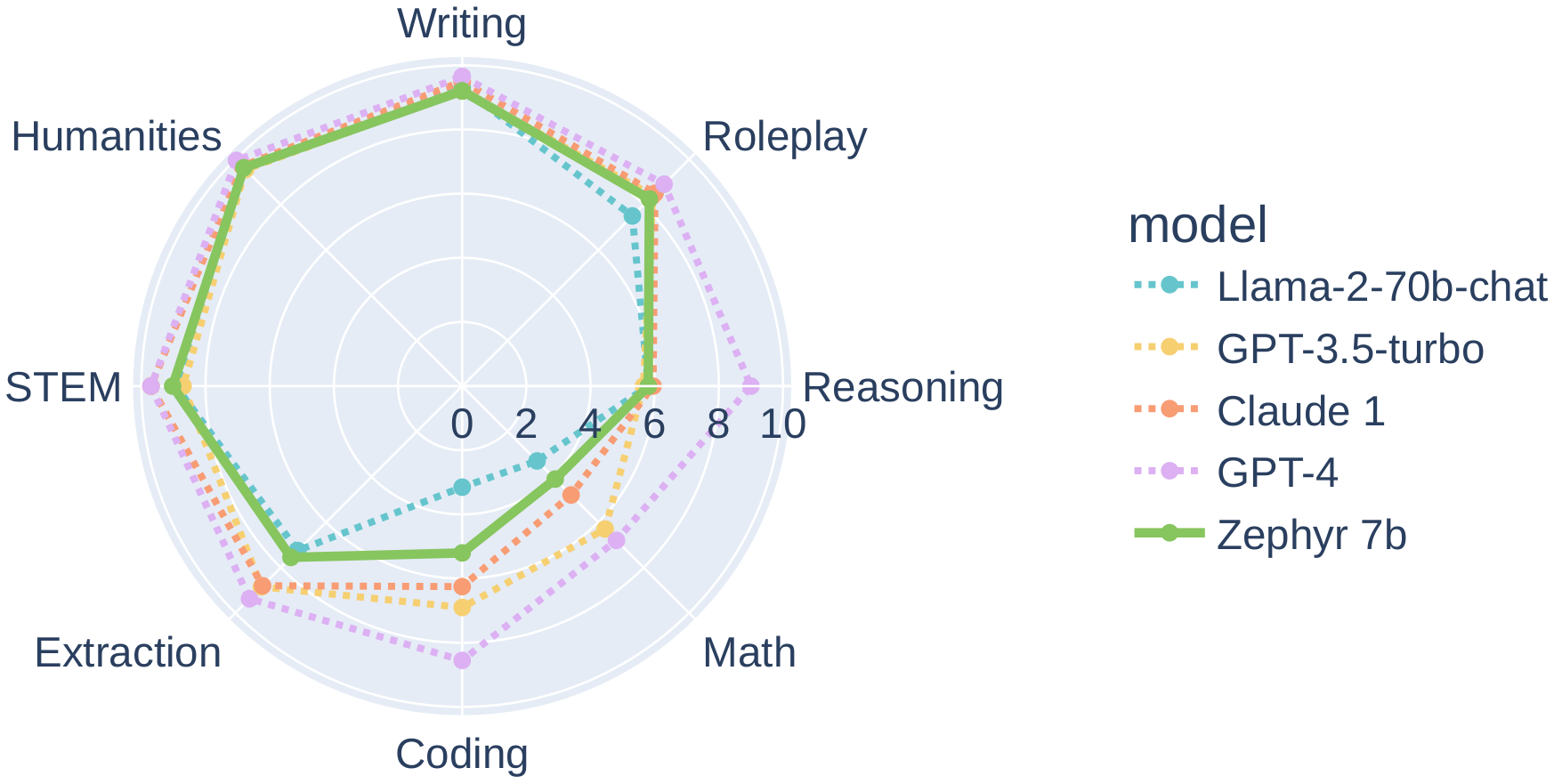

In particular, on several categories of MT-Bench, Zephyr-7B-β has strong performance compared to larger open models like Llama2-Chat-70B:

However, on more complex tasks like coding and mathematics, Zephyr-7B-β lags behind proprietary models and more research is needed to close the gap.

## Intended uses & limitations

The model was initially fine-tuned on a filtered and preprocessed version of the [`UltraChat`](https://huggingface.co/datasets/stingning/ultrachat) dataset, which contains a diverse range of synthetic dialogues generated by ChatGPT.

We then further aligned the model with [🤗 TRL's](https://github.com/huggingface/trl) `DPOTrainer` on the [openbmb/UltraFeedback](https://huggingface.co/datasets/openbmb/UltraFeedback) dataset, which contains 64k prompts and model completions that are ranked by GPT-4. As a result, the model can be used for chat and you can check out our [demo](https://huggingface.co/spaces/HuggingFaceH4/zephyr-chat) to test its capabilities.

You can find the datasets used for training Zephyr-7B-β [here](https://huggingface.co/collections/HuggingFaceH4/zephyr-7b-6538c6d6d5ddd1cbb1744a66)

Here's how you can run the model using the `pipeline()` function from 🤗 Transformers:

```python
# Install transformers from source - only needed for versions <= v4.34
# pip install git+https://github.com/huggingface/transformers.git
# pip install accelerate

import torch
from transformers import pipeline

pipe = pipeline("text-generation", model="HuggingFaceH4/zephyr-7b-beta", torch_dtype=torch.bfloat16, device_map="auto")

# We use the tokenizer's chat template to format each message - see https://huggingface.co/docs/transformers/main/en/chat_templating
messages = [
    {
        "role": "system",
        "content": "You are a friendly chatbot who always responds in the style of a pirate",
    },
    {"role": "user", "content": "How many helicopters can a human eat in one sitting?"},
]
prompt = pipe.tokenizer.apply_chat_template(messages, tokenize=False, add_generation_prompt=True)
outputs = pipe(prompt, max_new_tokens=256, do_sample=True, temperature=0.7, top_k=50, top_p=0.95)
print(outputs[0]["generated_text"])
# <|system|>
# You are a friendly chatbot who always responds in the style of a pirate.</s>
# <|user|>
# How many helicopters can a human eat in one sitting?</s>
# <|assistant|>
# Ah, me hearty matey! But yer question be a puzzler! A human cannot eat a helicopter in one sitting, as helicopters are not edible. They be made of metal, plastic, and other materials, not food!
```
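To make the prompt layout more concrete, the sketch below rebuilds by hand the format visible in the sample output of the example above: a role tag on its own line, the message content, and a closing `</s>`. The helper name `format_zephyr_prompt` is hypothetical and the layout is inferred from that printed output only; the tokenizer's own chat template remains the authoritative source, and exact whitespace may differ.

```python
def format_zephyr_prompt(messages, add_generation_prompt=True):
    """Approximate the prompt layout shown in the sample output (an assumption)."""
    parts = []
    for message in messages:
        # Each turn: role tag on its own line, then the content closed by </s>.
        parts.append(f"<|{message['role']}|>\n{message['content']}</s>\n")
    if add_generation_prompt:
        # Leave an open assistant turn for the model to complete.
        parts.append("<|assistant|>\n")
    return "".join(parts)


example_messages = [
    {"role": "system", "content": "You are a friendly chatbot."},
    {"role": "user", "content": "Hello!"},
]
print(format_zephyr_prompt(example_messages))
```

In practice `apply_chat_template` is preferable, since it stays in sync with whatever template ships with the tokenizer.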

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

Zephyr-7B-β has not been aligned to human preferences with techniques like RLHF or deployed with in-the-loop filtering of responses like ChatGPT, so the model can produce problematic outputs (especially when prompted to do so).

The size and composition of the corpus used to train the base model (`mistralai/Mistral-7B-v0.1`) are also unknown; however, it is likely to have included a mix of Web data and technical sources like books and code. See the [Falcon 180B model card](https://huggingface.co/tiiuae/falcon-180B#training-data) for an example of this.

## Training and evaluation data

During DPO training, this model achieves the following results on the evaluation set:

- Loss: 0.7496

- Rewards/chosen: -4.5221

- Rewards/rejected: -8.3184

- Rewards/accuracies: 0.7812

- Rewards/margins: 3.7963

- Logps/rejected: -340.1541

- Logps/chosen: -299.4561

- Logits/rejected: -2.3081

- Logits/chosen: -2.3531
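The metrics above are related: the reward margin is the chosen reward minus the rejected reward, which can be checked against the reported averages. The sketch below also shows the per-pair loss from the DPO paper, where each implicit reward is a scaled log-probability ratio between the policy and a reference model. The function name, the example log-probabilities, and `beta=0.1` are assumptions for illustration; this card does not state the β that was used. Note that the reported loss is an average over pairs, so it cannot be recovered by plugging the average margin into the formula.

```python
import math


def dpo_pair_loss(policy_chosen_logp, policy_rejected_logp,
                  ref_chosen_logp, ref_rejected_logp, beta=0.1):
    """Per-pair DPO loss and implicit rewards (beta is a hypothetical value)."""
    reward_chosen = beta * (policy_chosen_logp - ref_chosen_logp)
    reward_rejected = beta * (policy_rejected_logp - ref_rejected_logp)
    margin = reward_chosen - reward_rejected
    # -log(sigmoid(margin)) rewritten as log(1 + exp(-margin)).
    loss = math.log1p(math.exp(-margin))
    return loss, reward_chosen, reward_rejected, margin


# Consistency check on the reported averages: margin = chosen - rejected.
print(round(-4.5221 - (-8.3184), 4))  # 3.7963

# Made-up log-probabilities for a single preference pair.
print(dpo_pair_loss(-250.0, -300.0, -255.0, -280.0))
```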

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-07

- train_batch_size: 2

- eval_batch_size: 4

- seed: 42

- distributed_type: multi-GPU

- num_devices: 16

- total_train_batch_size: 32

- total_eval_batch_size: 64

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_ratio: 0.1

- num_epochs: 3.0
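Two of these settings can be unpacked with a little arithmetic. The total batch sizes are the per-device sizes multiplied by the number of devices, and a linear scheduler with a warmup ratio of 0.1 ramps the learning rate up over the first 10% of steps before decaying it linearly to zero. The sketch below is a plain-Python approximation of that schedule, not the Trainer's implementation; the function name and the total step count of 5,800 are assumptions.

```python
import math


def linear_schedule_lr(step, total_steps, base_lr=5e-07, warmup_ratio=0.1):
    """Linear warmup to base_lr, then linear decay to zero (a sketch)."""
    warmup_steps = math.ceil(total_steps * warmup_ratio)
    if step < warmup_steps:
        return base_lr * step / warmup_steps
    decay_steps = max(1, total_steps - warmup_steps)
    return base_lr * max(0, total_steps - step) / decay_steps


# Effective batch sizes: per-device size times number of devices.
per_device_train, per_device_eval, num_devices = 2, 4, 16
print(per_device_train * num_devices, per_device_eval * num_devices)  # 32 64

total_steps = 5800  # hypothetical total, for illustration only
for step in (0, 290, 580, 3190, 5800):
    print(step, linear_schedule_lr(step, total_steps))
```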

### Training results

The table below shows the full set of DPO training metrics:

| Training Loss | Epoch | Step | Validation Loss | Rewards/chosen | Rewards/rejected | Rewards/accuracies | Rewards/margins | Logps/rejected | Logps/chosen | Logits/rejected | Logits/chosen |

|:-------------:|:-----:|:----:|:---------------:|:--------------:|:----------------:|:------------------:|:---------------:|:--------------:|:------------:|:---------------:|:-------------:|

| 0.6284 | 0.05 | 100 | 0.6098 | 0.0425 | -0.1872 | 0.7344 | 0.2297 | -258.8416 | -253.8099 | -2.7976 | -2.8234 |

| 0.4908 | 0.1 | 200 | 0.5426 | -0.0279 | -0.6842 | 0.75 | 0.6563 | -263.8124 | -254.5145 | -2.7719 | -2.7960 |

| 0.5264 | 0.15 | 300 | 0.5324 | 0.0414 | -0.9793 | 0.7656 | 1.0207 | -266.7627 | -253.8209 | -2.7892 | -2.8122 |

| 0.5536 | 0.21 | 400 | 0.4957 | -0.0185 | -1.5276 | 0.7969 | 1.5091 | -272.2460 | -254.4203 | -2.8542 | -2.8764 |

| 0.5362 | 0.26 | 500 | 0.5031 | -0.2630 | -1.5917 | 0.7812 | 1.3287 | -272.8869 | -256.8653 | -2.8702 | -2.8958 |

| 0.5966 | 0.31 | 600 | 0.5963 | -0.2993 | -1.6491 | 0.7812 | 1.3499 | -273.4614 | -257.2279 | -2.8778 | -2.8986 |

| 0.5014 | 0.36 | 700 | 0.5382 | -0.2859 | -1.4750 | 0.75 | 1.1891 | -271.7204 | -257.0942 | -2.7659 | -2.7869 |

| 0.5334 | 0.41 | 800 | 0.5677 | -0.4289 | -1.8968 | 0.7969 | 1.4679 | -275.9378 | -258.5242 | -2.7053 | -2.7265 |

| 0.5251 | 0.46 | 900 | 0.5772 | -0.2116 | -1.3107 | 0.7344 | 1.0991 | -270.0768 | -256.3507 | -2.8463 | -2.8662 |

| 0.5205 | 0.52 | 1000 | 0.5262 | -0.3792 | -1.8585 | 0.7188 | 1.4793 | -275.5552 | -258.0276 | -2.7893 | -2.7979 |

| 0.5094 | 0.57 | 1100 | 0.5433 | -0.6279 | -1.9368 | 0.7969 | 1.3089 | -276.3377 | -260.5136 | -2.7453 | -2.7536 |

| 0.5837 | 0.62 | 1200 | 0.5349 | -0.3780 | -1.9584 | 0.7656 | 1.5804 | -276.5542 | -258.0154 | -2.7643 | -2.7756 |

| 0.5214 | 0.67 | 1300 | 0.5732 | -1.0055 | -2.2306 | 0.7656 | 1.2251 | -279.2761 | -264.2903 | -2.6986 | -2.7113 |

| 0.6914 | 0.72 | 1400 | 0.5137 | -0.6912 | -2.1775 | 0.7969 | 1.4863 | -278.7448 | -261.1467 | -2.7166 | -2.7275 |

| 0.4655 | 0.77 | 1500 | 0.5090 | -0.7987 | -2.2930 | 0.7031 | 1.4943 | -279.8999 | -262.2220 | -2.6651 | -2.6838 |

| 0.5731 | 0.83 | 1600 | 0.5312 | -0.8253 | -2.3520 | 0.7812 | 1.5268 | -280.4902 | -262.4876 | -2.6543 | -2.6728 |

| 0.5233 | 0.88 | 1700 | 0.5206 | -0.4573 | -2.0951 | 0.7812 | 1.6377 | -277.9205 | -258.8084 | -2.6870 | -2.7097 |

| 0.5593 | 0.93 | 1800 | 0.5231 | -0.5508 | -2.2000 | 0.7969 | 1.6492 | -278.9703 | -259.7433 | -2.6221 | -2.6519 |

| 0.4967 | 0.98 | 1900 | 0.5290 | -0.5340 | -1.9570 | 0.8281 | 1.4230 | -276.5395 | -259.5749 | -2.6564 | -2.6878 |

| 0.0921 | 1.03 | 2000 | 0.5368 | -1.1376 | -3.1615 | 0.7812 | 2.0239 | -288.5854 | -265.6111 | -2.6040 | -2.6345 |

| 0.0733 | 1.08 | 2100 | 0.5453 | -1.1045 | -3.4451 | 0.7656 | 2.3406 | -291.4208 | -265.2799 | -2.6289 | -2.6595 |

| 0.0972 | 1.14 | 2200 | 0.5571 | -1.6915 | -3.9823 | 0.8125 | 2.2908 | -296.7934 | -271.1505 | -2.6471 | -2.6709 |

| 0.1058 | 1.19 | 2300 | 0.5789 | -1.0621 | -3.8941 | 0.7969 | 2.8319 | -295.9106 | -264.8563 | -2.5527 | -2.5798 |

| 0.2423 | 1.24 | 2400 | 0.5455 | -1.1963 | -3.5590 | 0.7812 | 2.3627 | -292.5599 | -266.1981 | -2.5414 | -2.5784 |

| 0.1177 | 1.29 | 2500 | 0.5889 | -1.8141 | -4.3942 | 0.7969 | 2.5801 | -300.9120 | -272.3761 | -2.4802 | -2.5189 |

| 0.1213 | 1.34 | 2600 | 0.5683 | -1.4608 | -3.8420 | 0.8125 | 2.3812 | -295.3901 | -268.8436 | -2.4774 | -2.5207 |

| 0.0889 | 1.39 | 2700 | 0.5890 | -1.6007 | -3.7337 | 0.7812 | 2.1330 | -294.3068 | -270.2423 | -2.4123 | -2.4522 |

| 0.0995 | 1.45 | 2800 | 0.6073 | -1.5519 | -3.8362 | 0.8281 | 2.2843 | -295.3315 | -269.7538 | -2.4685 | -2.5050 |

| 0.1145 | 1.5 | 2900 | 0.5790 | -1.7939 | -4.2876 | 0.8438 | 2.4937 | -299.8461 | -272.1744 | -2.4272 | -2.4674 |

| 0.0644 | 1.55 | 3000 | 0.5735 | -1.7285 | -4.2051 | 0.8125 | 2.4766 | -299.0209 | -271.5201 | -2.4193 | -2.4574 |

| 0.0798 | 1.6 | 3100 | 0.5537 | -1.7226 | -4.2850 | 0.8438 | 2.5624 | -299.8200 | -271.4610 | -2.5367 | -2.5696 |

| 0.1013 | 1.65 | 3200 | 0.5575 | -1.5715 | -3.9813 | 0.875 | 2.4098 | -296.7825 | -269.9498 | -2.4926 | -2.5267 |

| 0.1254 | 1.7 | 3300 | 0.5905 | -1.6412 | -4.4703 | 0.8594 | 2.8291 | -301.6730 | -270.6473 | -2.5017 | -2.5340 |

| 0.085 | 1.76 | 3400 | 0.6133 | -1.9159 | -4.6760 | 0.8438 | 2.7601 | -303.7296 | -273.3941 | -2.4614 | -2.4960 |

| 0.065 | 1.81 | 3500 | 0.6074 | -1.8237 | -4.3525 | 0.8594 | 2.5288 | -300.4951 | -272.4724 | -2.4597 | -2.5004 |

| 0.0755 | 1.86 | 3600 | 0.5836 | -1.9252 | -4.4005 | 0.8125 | 2.4753 | -300.9748 | -273.4872 | -2.4327 | -2.4716 |

| 0.0746 | 1.91 | 3700 | 0.5789 | -1.9280 | -4.4906 | 0.8125 | 2.5626 | -301.8762 | -273.5149 | -2.4686 | -2.5115 |

| 0.1348 | 1.96 | 3800 | 0.6015 | -1.8658 | -4.2428 | 0.8281 | 2.3769 | -299.3976 | -272.8936 | -2.4943 | -2.5393 |

| 0.0217 | 2.01 | 3900 | 0.6122 | -2.3335 | -4.9229 | 0.8281 | 2.5894 | -306.1988 | -277.5699 | -2.4841 | -2.5272 |

| 0.0219 | 2.07 | 4000 | 0.6522 | -2.9890 | -6.0164 | 0.8281 | 3.0274 | -317.1334 | -284.1248 | -2.4105 | -2.4545 |

| 0.0119 | 2.12 | 4100 | 0.6922 | -3.4777 | -6.6749 | 0.7969 | 3.1972 | -323.7187 | -289.0121 | -2.4272 | -2.4699 |

| 0.0153 | 2.17 | 4200 | 0.6993 | -3.2406 | -6.6775 | 0.7969 | 3.4369 | -323.7453 | -286.6413 | -2.4047 | -2.4465 |

| 0.011 | 2.22 | 4300 | 0.7178 | -3.7991 | -7.4397 | 0.7656 | 3.6406 | -331.3667 | -292.2260 | -2.3843 | -2.4290 |

| 0.0072 | 2.27 | 4400 | 0.6840 | -3.3269 | -6.8021 | 0.8125 | 3.4752 | -324.9908 | -287.5042 | -2.4095 | -2.4536 |

| 0.0197 | 2.32 | 4500 | 0.7013 | -3.6890 | -7.3014 | 0.8125 | 3.6124 | -329.9841 | -291.1250 | -2.4118 | -2.4543 |

| 0.0182 | 2.37 | 4600 | 0.7476 | -3.8994 | -7.5366 | 0.8281 | 3.6372 | -332.3356 | -293.2291 | -2.4163 | -2.4565 |

| 0.0125 | 2.43 | 4700 | 0.7199 | -4.0560 | -7.5765 | 0.8438 | 3.5204 | -332.7345 | -294.7952 | -2.3699 | -2.4100 |

| 0.0082 | 2.48 | 4800 | 0.7048 | -3.6613 | -7.1356 | 0.875 | 3.4743 | -328.3255 | -290.8477 | -2.3925 | -2.4303 |

| 0.0118 | 2.53 | 4900 | 0.6976 | -3.7908 | -7.3152 | 0.8125 | 3.5244 | -330.1224 | -292.1431 | -2.3633 | -2.4047 |

| 0.0118 | 2.58 | 5000 | 0.7198 | -3.9049 | -7.5557 | 0.8281 | 3.6508 | -332.5271 | -293.2844 | -2.3764 | -2.4194 |

| 0.006 | 2.63 | 5100 | 0.7506 | -4.2118 | -7.9149 | 0.8125 | 3.7032 | -336.1194 | -296.3530 | -2.3407 | -2.3860 |

| 0.0143 | 2.68 | 5200 | 0.7408 | -4.2433 | -7.9802 | 0.8125 | 3.7369 | -336.7721 | -296.6682 | -2.3509 | -2.3946 |

| 0.0057 | 2.74 | 5300 | 0.7552 | -4.3392 | -8.0831 | 0.7969 | 3.7439 | -337.8013 | -297.6275 | -2.3388 | -2.3842 |

| 0.0138 | 2.79 | 5400 | 0.7404 | -4.2395 | -7.9762 | 0.8125 | 3.7367 | -336.7322 | -296.6304 | -2.3286 | -2.3737 |

| 0.0079 | 2.84 | 5500 | 0.7525 | -4.4466 | -8.2196 | 0.7812 | 3.7731 | -339.1662 | -298.7007 | -2.3200 | -2.3641 |

| 0.0077 | 2.89 | 5600 | 0.7520 | -4.5586 | -8.3485 | 0.7969 | 3.7899 | -340.4545 | -299.8206 | -2.3078 | -2.3517 |

| 0.0094 | 2.94 | 5700 | 0.7527 | -4.5542 | -8.3509 | 0.7812 | 3.7967 | -340.4790 | -299.7773 | -2.3062 | -2.3510 |

| 0.0054 | 2.99 | 5800 | 0.7520 | -4.5169 | -8.3079 | 0.7812 | 3.7911 | -340.0493 | -299.4038 | -2.3081 | -2.3530 |

### Framework versions

- Transformers 4.35.0.dev0

- Pytorch 2.0.1+cu118

- Datasets 2.12.0

- Tokenizers 0.14.0

## Citation

If you find Zephyr-7B-β useful in your work, please cite it with:

```
@misc{tunstall2023zephyr,
      title={Zephyr: Direct Distillation of LM Alignment},
      author={Lewis Tunstall and Edward Beeching and Nathan Lambert and Nazneen Rajani and Kashif Rasul and Younes Belkada and Shengyi Huang and Leandro von Werra and Clémentine Fourrier and Nathan Habib and Nathan Sarrazin and Omar Sanseviero and Alexander M. Rush and Thomas Wolf},
      year={2023},
      eprint={2310.16944},
      archivePrefix={arXiv},
      primaryClass={cs.LG}
}
```

|

princeton-nlp/RMT-2.7b-8k

|

princeton-nlp

| 2023-10-27T14:39:26Z | 3 | 5 |

transformers

|

[

"transformers",

"pytorch",

"opt",

"arxiv:2305.14788",

"arxiv:2207.06881",

"license:apache-2.0",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] | null | 2023-07-23T22:08:33Z |

---

license: apache-2.0

---

**Paper**: [Adapting Language Models to Compress Contexts](https://arxiv.org/abs/2305.14788)

**Code**: https://github.com/princeton-nlp/AutoCompressors

**Models**:

- Llama-2-7b fine-tuned models: [AutoCompressor-Llama-2-7b-6k](https://huggingface.co/princeton-nlp/AutoCompressor-Llama-2-7b-6k/), [FullAttention-Llama-2-7b-6k](https://huggingface.co/princeton-nlp/FullAttention-Llama-2-7b-6k)

- OPT-2.7b fine-tuned models: [AutoCompressor-2.7b-6k](https://huggingface.co/princeton-nlp/AutoCompressor-2.7b-6k), [AutoCompressor-2.7b-30k](https://huggingface.co/princeton-nlp/AutoCompressor-2.7b-30k), [RMT-2.7b-8k](https://huggingface.co/princeton-nlp/RMT-2.7b-8k), [FullAttention-2.7b-4k](https://huggingface.co/princeton-nlp/FullAttention-2.7b-4k)

- OPT-1.3b fine-tuned models: [AutoCompressor-1.3b-30k](https://huggingface.co/princeton-nlp/AutoCompressor-1.3b-30k), [RMT-1.3b-30k](https://huggingface.co/princeton-nlp/RMT-1.3b-30k)

---

RMT-2.7b-8k is a model fine-tuned from [facebook/opt-2.7b](https://huggingface.co/facebook/opt-2.7b) following the RMT method as described in [Recurrent Memory Transformer](https://arxiv.org/abs/2207.06881) and [Adapting Language Models to Compress Contexts](https://arxiv.org/abs/2305.14788).

This model is fine-tuned on 2B tokens from [The Pile](https://pile.eleuther.ai). The pre-trained OPT-2.7b model is fine-tuned on sequences of 8,192 tokens with 50 summary vectors, summary accumulation, randomized segmenting, and stop-gradients.

To get started, download the [`AutoCompressor`](https://github.com/princeton-nlp/AutoCompressors) repository and load the model as follows:

```python
from auto_compressor import AutoCompressorModel

model = AutoCompressorModel.from_pretrained("princeton-nlp/RMT-2.7b-8k")
```

**Evaluation**

We record the perplexity achieved by our OPT-2.7b models on segments of 2048 tokens, conditioned on different amounts of context.

FullAttention-2.7b-4k uses full uncompressed contexts whereas AutoCompressor-2.7b-6k and RMT-2.7b-8k compress segments of 2048 tokens into 50 summary vectors.

*In-domain Evaluation*

| Context Tokens         | 0    | 512  | 2048 | 4096 | 6144 |
|------------------------|------|------|------|------|------|
| FullAttention-2.7b-4k  | 6.57 | 6.15 | 5.94 | -    | -    |
| RMT-2.7b-8k            | 6.34 | 6.19 | 6.02 | 6.02 | 6.01 |
| AutoCompressor-2.7b-6k | 6.31 | 6.04 | 5.98 | 5.94 | 5.93 |

*Out-of-domain Evaluation*

| Context Tokens         | 0    | 512  | 2048 | 4096 | 6144 |
|------------------------|------|------|------|------|------|
| FullAttention-2.7b-4k  | 8.94 | 8.28 | 7.93 | -    | -    |
| RMT-2.7b-8k            | 8.62 | 8.44 | 8.21 | 8.20 | 8.20 |
| AutoCompressor-2.7b-6k | 8.60 | 8.26 | 8.17 | 8.12 | 8.10 |

See [Adapting Language Models to Compress Contexts](https://arxiv.org/abs/2305.14788) for more evaluations, including evaluation on 11 in-context learning tasks.

## Bibtex

```
@misc{chevalier2023adapting,
      title={Adapting Language Models to Compress Contexts},
      author={Alexis Chevalier and Alexander Wettig and Anirudh Ajith and Danqi Chen},
      year={2023},
      eprint={2305.14788},
      archivePrefix={arXiv},
      primaryClass={cs.CL}
}
```

|

princeton-nlp/AutoCompressor-2.7b-6k

|

princeton-nlp

| 2023-10-27T14:37:09Z | 5 | 2 |

transformers

|

[

"transformers",

"pytorch",

"opt",

"arxiv:2305.14788",

"license:apache-2.0",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] | null | 2023-07-23T22:03:32Z |

---

license: apache-2.0

---

**Paper**: [Adapting Language Models to Compress Contexts](https://arxiv.org/abs/2305.14788)

**Code**: https://github.com/princeton-nlp/AutoCompressors

**Models**:

- Llama-2-7b fine-tuned models: [AutoCompressor-Llama-2-7b-6k](https://huggingface.co/princeton-nlp/AutoCompressor-Llama-2-7b-6k/), [FullAttention-Llama-2-7b-6k](https://huggingface.co/princeton-nlp/FullAttention-Llama-2-7b-6k)

- OPT-2.7b fine-tuned models: [AutoCompressor-2.7b-6k](https://huggingface.co/princeton-nlp/AutoCompressor-2.7b-6k), [AutoCompressor-2.7b-30k](https://huggingface.co/princeton-nlp/AutoCompressor-2.7b-30k), [RMT-2.7b-8k](https://huggingface.co/princeton-nlp/RMT-2.7b-8k), [FullAttention-2.7b-4k](https://huggingface.co/princeton-nlp/FullAttention-2.7b-4k)

- OPT-1.3b fine-tuned models: [AutoCompressor-1.3b-30k](https://huggingface.co/princeton-nlp/AutoCompressor-1.3b-30k), [RMT-1.3b-30k](https://huggingface.co/princeton-nlp/RMT-1.3b-30k)

---

AutoCompressor-2.7b-6k is a model fine-tuned from [facebook/opt-2.7b](https://huggingface.co/facebook/opt-2.7b) following the AutoCompressor method in [Adapting Language Models to Compress Contexts](https://arxiv.org/abs/2305.14788).

This model is fine-tuned on 2B tokens from [The Pile](https://pile.eleuther.ai). The pre-trained OPT-2.7b model is fine-tuned on sequences of 6,144 tokens with 50 summary vectors, summary accumulation, randomized segmenting, and stop-gradients.

To get started, download the [`AutoCompressor`](https://github.com/princeton-nlp/AutoCompressors) repository and load the model as follows:

```python
from auto_compressor import AutoCompressorModel

model = AutoCompressorModel.from_pretrained("princeton-nlp/AutoCompressor-2.7b-6k")
```

**Evaluation**

We record the perplexity achieved by our OPT-2.7b models on segments of 2048 tokens, conditioned on different amounts of context.

FullAttention-2.7b-4k uses full uncompressed contexts whereas AutoCompressor-2.7b-6k and RMT-2.7b-8k compress segments of 2048 tokens into 50 summary vectors.
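To give a sense of the compression involved, the sketch below counts how many summary vectors stand in for a given amount of context, assuming fixed 2,048-token segments, 50 vectors per segment, and summary accumulation (vectors from all earlier segments are kept). The function name is hypothetical, and partial segments such as the 512-token column are not modeled.

```python
def summary_vectors_available(context_tokens, segment_len=2048, vectors_per_segment=50):
    """Accumulated summary vectors after compressing every full segment."""
    full_segments = context_tokens // segment_len
    return full_segments * vectors_per_segment


for context in (2048, 4096, 6144):
    print(context, summary_vectors_available(context))
# 2048 50
# 4096 100
# 6144 150
```

Under these assumptions, 6,144 context tokens are represented by 150 vectors, roughly a 41-fold reduction in the number of positions the model attends to.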

*In-domain Evaluation*

| Context Tokens         | 0    | 512  | 2048 | 4096 | 6144 |
|------------------------|------|------|------|------|------|
| FullAttention-2.7b-4k  | 6.57 | 6.15 | 5.94 | -    | -    |
| RMT-2.7b-8k            | 6.34 | 6.19 | 6.02 | 6.02 | 6.01 |
| AutoCompressor-2.7b-6k | 6.31 | 6.04 | 5.98 | 5.94 | 5.93 |

*Out-of-domain Evaluation*

| Context Tokens         | 0    | 512  | 2048 | 4096 | 6144 |
|------------------------|------|------|------|------|------|
| FullAttention-2.7b-4k  | 8.94 | 8.28 | 7.93 | -    | -    |
| RMT-2.7b-8k            | 8.62 | 8.44 | 8.21 | 8.20 | 8.20 |
| AutoCompressor-2.7b-6k | 8.60 | 8.26 | 8.17 | 8.12 | 8.10 |

See [Adapting Language Models to Compress Contexts](https://arxiv.org/abs/2305.14788) for more evaluations, including evaluation on 11 in-context learning tasks.

## Bibtex

```
@misc{chevalier2023adapting,
      title={Adapting Language Models to Compress Contexts},
      author={Alexis Chevalier and Alexander Wettig and Anirudh Ajith and Danqi Chen},
      year={2023},
      eprint={2305.14788},
      archivePrefix={arXiv},
      primaryClass={cs.CL}
}
```

|

bayerasif/whisper-tiny-minds14-en

|

bayerasif

| 2023-10-27T14:37:03Z | 76 | 0 |

transformers

|

[

"transformers",

"pytorch",

"whisper",

"automatic-speech-recognition",

"generated_from_trainer",

"dataset:PolyAI/minds14",

"base_model:openai/whisper-tiny",

"base_model:finetune:openai/whisper-tiny",

"license:apache-2.0",

"model-index",

"endpoints_compatible",

"region:us"

] |

automatic-speech-recognition

| 2023-10-27T14:23:47Z |

---

license: apache-2.0

base_model: openai/whisper-tiny

tags:

- generated_from_trainer

datasets:

- PolyAI/minds14

metrics:

- wer

model-index:

- name: whisper-tiny-minds14-en

results:

- task:

name: Automatic Speech Recognition

type: automatic-speech-recognition

dataset:

name: PolyAI/minds14

type: PolyAI/minds14

config: en-US

split: train

args: en-US

metrics:

- name: Wer

type: wer

value: 0.351961950059453

---


# whisper-tiny-minds14-en

This model is a fine-tuned version of [openai/whisper-tiny](https://huggingface.co/openai/whisper-tiny) on the PolyAI/minds14 dataset.

It achieves the following results on the evaluation set:

- Loss: 0.4543

- Wer Ortho: 0.3713

- Wer: 0.3520
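Word error rate (WER) is the word-level edit distance between a reference transcript and the model's hypothesis, divided by the number of reference words. The sketch below is a minimal dynamic-programming version with made-up sentences; it applies no text normalization, assumes a non-empty reference, and is not the metric implementation used to produce the numbers above.

```python
def word_error_rate(reference, hypothesis):
    """Word-level Levenshtein distance divided by the reference length."""
    ref, hyp = reference.split(), hypothesis.split()
    # prev[j]: edits needed to turn the first i-1 reference words
    # into the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1] / len(ref)


# One deleted word and one substituted word out of six reference words.
wer = word_error_rate("i want to check my balance", "i want check my balances")
print(round(wer, 4))  # 0.3333
```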

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-06

- train_batch_size: 32

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: cosine

- lr_scheduler_warmup_steps: 50

- training_steps: 200
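With a cosine scheduler, 50 warmup steps, and 200 training steps, the learning rate rises linearly to its peak and then follows half a cosine wave down to zero. The sketch below approximates that shape in plain Python using the values listed above; the function name is hypothetical and this is not the Trainer's own scheduler code.

```python
import math


def cosine_schedule_lr(step, base_lr=5e-06, warmup_steps=50, training_steps=200):
    """Linear warmup followed by half-cosine decay to zero (a sketch)."""
    if step < warmup_steps:
        return base_lr * step / max(1, warmup_steps)
    progress = (step - warmup_steps) / max(1, training_steps - warmup_steps)
    return base_lr * 0.5 * (1.0 + math.cos(math.pi * progress))


for step in (0, 25, 50, 125, 200):
    print(step, cosine_schedule_lr(step))
```

The peak rate of 5e-06 is reached at step 50, about half of it remains at step 125, and it reaches zero at step 200.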

### Training results

| Training Loss | Epoch | Step | Validation Loss | Wer Ortho | Wer    |
|:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|
| 0.4142        | 7.14  | 100  | 0.4802          | 0.3756    | 0.3549 |
| 0.1909        | 14.29 | 200  | 0.4543          | 0.3713    | 0.3520 |

### Framework versions

- Transformers 4.34.1

- Pytorch 2.1.0+cu121

- Datasets 2.14.6

- Tokenizers 0.14.1

|

LoneStriker/zephyr-7b-beta-3.0bpw-h6-exl2

|

LoneStriker

| 2023-10-27T14:34:48Z | 7 | 0 |

transformers

|

[

"transformers",

"safetensors",

"mistral",

"text-generation",

"generated_from_trainer",

"conversational",

"en",

"dataset:HuggingFaceH4/ultrachat_200k",

"dataset:HuggingFaceH4/ultrafeedback_binarized",

"arxiv:2305.18290",

"arxiv:2310.16944",

"base_model:mistralai/Mistral-7B-v0.1",

"base_model:finetune:mistralai/Mistral-7B-v0.1",

"license:mit",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2023-10-27T14:34:37Z |

---

tags:

- generated_from_trainer

model-index:

- name: zephyr-7b-beta

results: []

license: mit

datasets:

- HuggingFaceH4/ultrachat_200k

- HuggingFaceH4/ultrafeedback_binarized

language:

- en

base_model: mistralai/Mistral-7B-v0.1

---


<img src="https://huggingface.co/HuggingFaceH4/zephyr-7b-alpha/resolve/main/thumbnail.png" alt="Zephyr Logo" width="800" style="margin-left:'auto' margin-right:'auto' display:'block'"/>

# Model Card for Zephyr 7B β

Zephyr is a series of language models that are trained to act as helpful assistants. Zephyr-7B-β is the second model in the series, and is a fine-tuned version of [mistralai/Mistral-7B-v0.1](https://huggingface.co/mistralai/Mistral-7B-v0.1) that was trained on a mix of publicly available, synthetic datasets using [Direct Preference Optimization (DPO)](https://arxiv.org/abs/2305.18290). We found that removing the in-built alignment of these datasets boosted performance on [MT Bench](https://huggingface.co/spaces/lmsys/mt-bench) and made the model more helpful. However, this means that the model is likely to generate problematic text when prompted to do so and should only be used for educational and research purposes. You can find more details in the [technical report](https://arxiv.org/abs/2310.16944).

## Model description

- **Model type:** A 7B parameter GPT-like model fine-tuned on a mix of publicly available, synthetic datasets.

- **Language(s) (NLP):** Primarily English

- **License:** MIT

- **Finetuned from model:** [mistralai/Mistral-7B-v0.1](https://huggingface.co/mistralai/Mistral-7B-v0.1)

### Model Sources

<!-- Provide the basic links for the model. -->

- **Repository:** https://github.com/huggingface/alignment-handbook

- **Demo:** https://huggingface.co/spaces/HuggingFaceH4/zephyr-chat

- **Chatbot Arena:** Evaluate Zephyr 7B against 10+ LLMs in the LMSYS arena: http://arena.lmsys.org

## Performance

At the time of release, Zephyr-7B-β is the highest ranked 7B chat model on the [MT-Bench](https://huggingface.co/spaces/lmsys/mt-bench) and [AlpacaEval](https://tatsu-lab.github.io/alpaca_eval/) benchmarks:

| Model | Size | Alignment | MT-Bench (score) | AlpacaEval (win rate %) |
|-------|------|-----------|------------------|-------------------------|
| StableLM-Tuned-α | 7B | dSFT | 2.75 | - |
| MPT-Chat | 7B | dSFT | 5.42 | - |
| Xwin-LM v0.1 | 7B | dPPO | 6.19 | 87.83 |
| Mistral-Instruct v0.1 | 7B | - | 6.84 | - |
| Zephyr-7b-α | 7B | dDPO | 6.88 | - |
| **Zephyr-7b-β** 🪁 | **7B** | **dDPO** | **7.34** | **90.60** |
| Falcon-Instruct | 40B | dSFT | 5.17 | 45.71 |
| Guanaco | 65B | SFT | 6.41 | 71.80 |
| Llama2-Chat | 70B | RLHF | 6.86 | 92.66 |
| Vicuna v1.3 | 33B | dSFT | 7.12 | 88.99 |
| WizardLM v1.0 | 70B | dSFT | 7.71 | - |
| Xwin-LM v0.1 | 70B | dPPO | - | 95.57 |
| GPT-3.5-turbo | - | RLHF | 7.94 | 89.37 |
| Claude 2 | - | RLHF | 8.06 | 91.36 |
| GPT-4 | - | RLHF | 8.99 | 95.28 |

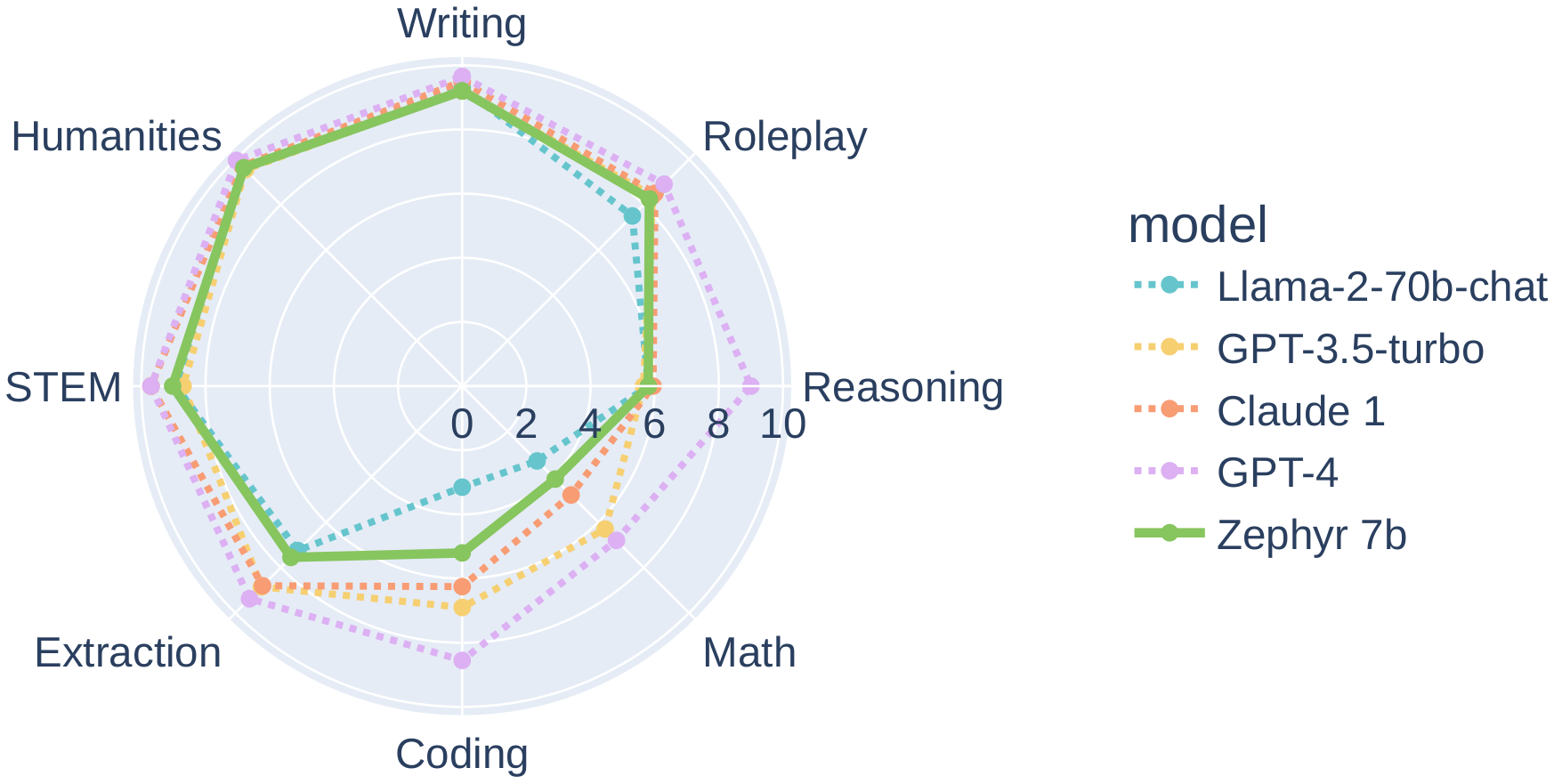

In particular, on several categories of MT-Bench, Zephyr-7B-β has strong performance compared to larger open models like Llama2-Chat-70B:

However, on more complex tasks like coding and mathematics, Zephyr-7B-β lags behind proprietary models and more research is needed to close the gap.

## Intended uses & limitations

The model was initially fine-tuned on a filtered and preprocessed version of the [`UltraChat`](https://huggingface.co/datasets/stingning/ultrachat) dataset, which contains a diverse range of synthetic dialogues generated by ChatGPT.

We then further aligned the model with [🤗 TRL's](https://github.com/huggingface/trl) `DPOTrainer` on the [openbmb/UltraFeedback](https://huggingface.co/datasets/openbmb/UltraFeedback) dataset, which contains 64k prompts and model completions that are ranked by GPT-4. As a result, the model can be used for chat and you can check out our [demo](https://huggingface.co/spaces/HuggingFaceH4/zephyr-chat) to test its capabilities.

You can find the datasets used for training Zephyr-7B-β [here](https://huggingface.co/collections/HuggingFaceH4/zephyr-7b-6538c6d6d5ddd1cbb1744a66)

Here's how you can run the model using the `pipeline()` function from 🤗 Transformers:

```python
# Install transformers from source - only needed for versions <= v4.34
# pip install git+https://github.com/huggingface/transformers.git
# pip install accelerate

import torch
from transformers import pipeline

pipe = pipeline("text-generation", model="HuggingFaceH4/zephyr-7b-beta", torch_dtype=torch.bfloat16, device_map="auto")

# We use the tokenizer's chat template to format each message - see https://huggingface.co/docs/transformers/main/en/chat_templating
messages = [
    {
        "role": "system",
        "content": "You are a friendly chatbot who always responds in the style of a pirate",
    },
    {"role": "user", "content": "How many helicopters can a human eat in one sitting?"},
]
prompt = pipe.tokenizer.apply_chat_template(messages, tokenize=False, add_generation_prompt=True)
outputs = pipe(prompt, max_new_tokens=256, do_sample=True, temperature=0.7, top_k=50, top_p=0.95)
print(outputs[0]["generated_text"])
# <|system|>
# You are a friendly chatbot who always responds in the style of a pirate.</s>
# <|user|>
# How many helicopters can a human eat in one sitting?</s>
# <|assistant|>
# Ah, me hearty matey! But yer question be a puzzler! A human cannot eat a helicopter in one sitting, as helicopters are not edible. They be made of metal, plastic, and other materials, not food!
```

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

Zephyr-7B-β has not been aligned to human preferences with techniques like RLHF or deployed with in-the-loop filtering of responses like ChatGPT, so the model can produce problematic outputs (especially when prompted to do so).

The size and composition of the corpus used to train the base model (`mistralai/Mistral-7B-v0.1`) are also unknown; however, it is likely to have included a mix of Web data and technical sources like books and code. See the [Falcon 180B model card](https://huggingface.co/tiiuae/falcon-180B#training-data) for an example of this.

## Training and evaluation data

During DPO training, this model achieves the following results on the evaluation set:

- Loss: 0.7496

- Rewards/chosen: -4.5221

- Rewards/rejected: -8.3184

- Rewards/accuracies: 0.7812

- Rewards/margins: 3.7963

- Logps/rejected: -340.1541

- Logps/chosen: -299.4561

- Logits/rejected: -2.3081

- Logits/chosen: -2.3531

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-07

- train_batch_size: 2

- eval_batch_size: 4

- seed: 42

- distributed_type: multi-GPU

- num_devices: 16

- total_train_batch_size: 32

- total_eval_batch_size: 64

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_ratio: 0.1

- num_epochs: 3.0

### Training results

The table below shows the full set of DPO training metrics:

| Training Loss | Epoch | Step | Validation Loss | Rewards/chosen | Rewards/rejected | Rewards/accuracies | Rewards/margins | Logps/rejected | Logps/chosen | Logits/rejected | Logits/chosen |

|:-------------:|:-----:|:----:|:---------------:|:--------------:|:----------------:|:------------------:|:---------------:|:--------------:|:------------:|:---------------:|:-------------:|

| 0.6284 | 0.05 | 100 | 0.6098 | 0.0425 | -0.1872 | 0.7344 | 0.2297 | -258.8416 | -253.8099 | -2.7976 | -2.8234 |

| 0.4908 | 0.1 | 200 | 0.5426 | -0.0279 | -0.6842 | 0.75 | 0.6563 | -263.8124 | -254.5145 | -2.7719 | -2.7960 |

| 0.5264 | 0.15 | 300 | 0.5324 | 0.0414 | -0.9793 | 0.7656 | 1.0207 | -266.7627 | -253.8209 | -2.7892 | -2.8122 |

| 0.5536 | 0.21 | 400 | 0.4957 | -0.0185 | -1.5276 | 0.7969 | 1.5091 | -272.2460 | -254.4203 | -2.8542 | -2.8764 |

| 0.5362 | 0.26 | 500 | 0.5031 | -0.2630 | -1.5917 | 0.7812 | 1.3287 | -272.8869 | -256.8653 | -2.8702 | -2.8958 |

| 0.5966 | 0.31 | 600 | 0.5963 | -0.2993 | -1.6491 | 0.7812 | 1.3499 | -273.4614 | -257.2279 | -2.8778 | -2.8986 |

| 0.5014 | 0.36 | 700 | 0.5382 | -0.2859 | -1.4750 | 0.75 | 1.1891 | -271.7204 | -257.0942 | -2.7659 | -2.7869 |

| 0.5334 | 0.41 | 800 | 0.5677 | -0.4289 | -1.8968 | 0.7969 | 1.4679 | -275.9378 | -258.5242 | -2.7053 | -2.7265 |

| 0.5251 | 0.46 | 900 | 0.5772 | -0.2116 | -1.3107 | 0.7344 | 1.0991 | -270.0768 | -256.3507 | -2.8463 | -2.8662 |

| 0.5205 | 0.52 | 1000 | 0.5262 | -0.3792 | -1.8585 | 0.7188 | 1.4793 | -275.5552 | -258.0276 | -2.7893 | -2.7979 |

| 0.5094 | 0.57 | 1100 | 0.5433 | -0.6279 | -1.9368 | 0.7969 | 1.3089 | -276.3377 | -260.5136 | -2.7453 | -2.7536 |

| 0.5837 | 0.62 | 1200 | 0.5349 | -0.3780 | -1.9584 | 0.7656 | 1.5804 | -276.5542 | -258.0154 | -2.7643 | -2.7756 |

| 0.5214 | 0.67 | 1300 | 0.5732 | -1.0055 | -2.2306 | 0.7656 | 1.2251 | -279.2761 | -264.2903 | -2.6986 | -2.7113 |

| 0.6914 | 0.72 | 1400 | 0.5137 | -0.6912 | -2.1775 | 0.7969 | 1.4863 | -278.7448 | -261.1467 | -2.7166 | -2.7275 |

| 0.4655 | 0.77 | 1500 | 0.5090 | -0.7987 | -2.2930 | 0.7031 | 1.4943 | -279.8999 | -262.2220 | -2.6651 | -2.6838 |

| 0.5731 | 0.83 | 1600 | 0.5312 | -0.8253 | -2.3520 | 0.7812 | 1.5268 | -280.4902 | -262.4876 | -2.6543 | -2.6728 |

| 0.5233 | 0.88 | 1700 | 0.5206 | -0.4573 | -2.0951 | 0.7812 | 1.6377 | -277.9205 | -258.8084 | -2.6870 | -2.7097 |

| 0.5593 | 0.93 | 1800 | 0.5231 | -0.5508 | -2.2000 | 0.7969 | 1.6492 | -278.9703 | -259.7433 | -2.6221 | -2.6519 |

| 0.4967 | 0.98 | 1900 | 0.5290 | -0.5340 | -1.9570 | 0.8281 | 1.4230 | -276.5395 | -259.5749 | -2.6564 | -2.6878 |

| 0.0921 | 1.03 | 2000 | 0.5368 | -1.1376 | -3.1615 | 0.7812 | 2.0239 | -288.5854 | -265.6111 | -2.6040 | -2.6345 |

| 0.0733 | 1.08 | 2100 | 0.5453 | -1.1045 | -3.4451 | 0.7656 | 2.3406 | -291.4208 | -265.2799 | -2.6289 | -2.6595 |

| 0.0972 | 1.14 | 2200 | 0.5571 | -1.6915 | -3.9823 | 0.8125 | 2.2908 | -296.7934 | -271.1505 | -2.6471 | -2.6709 |

| 0.1058 | 1.19 | 2300 | 0.5789 | -1.0621 | -3.8941 | 0.7969 | 2.8319 | -295.9106 | -264.8563 | -2.5527 | -2.5798 |

| 0.2423 | 1.24 | 2400 | 0.5455 | -1.1963 | -3.5590 | 0.7812 | 2.3627 | -292.5599 | -266.1981 | -2.5414 | -2.5784 |

| 0.1177 | 1.29 | 2500 | 0.5889 | -1.8141 | -4.3942 | 0.7969 | 2.5801 | -300.9120 | -272.3761 | -2.4802 | -2.5189 |

| 0.1213 | 1.34 | 2600 | 0.5683 | -1.4608 | -3.8420 | 0.8125 | 2.3812 | -295.3901 | -268.8436 | -2.4774 | -2.5207 |

| 0.0889 | 1.39 | 2700 | 0.5890 | -1.6007 | -3.7337 | 0.7812 | 2.1330 | -294.3068 | -270.2423 | -2.4123 | -2.4522 |

| 0.0995 | 1.45 | 2800 | 0.6073 | -1.5519 | -3.8362 | 0.8281 | 2.2843 | -295.3315 | -269.7538 | -2.4685 | -2.5050 |

| 0.1145 | 1.5 | 2900 | 0.5790 | -1.7939 | -4.2876 | 0.8438 | 2.4937 | -299.8461 | -272.1744 | -2.4272 | -2.4674 |

| 0.0644 | 1.55 | 3000 | 0.5735 | -1.7285 | -4.2051 | 0.8125 | 2.4766 | -299.0209 | -271.5201 | -2.4193 | -2.4574 |

| 0.0798 | 1.6 | 3100 | 0.5537 | -1.7226 | -4.2850 | 0.8438 | 2.5624 | -299.8200 | -271.4610 | -2.5367 | -2.5696 |

| 0.1013 | 1.65 | 3200 | 0.5575 | -1.5715 | -3.9813 | 0.875 | 2.4098 | -296.7825 | -269.9498 | -2.4926 | -2.5267 |

| 0.1254 | 1.7 | 3300 | 0.5905 | -1.6412 | -4.4703 | 0.8594 | 2.8291 | -301.6730 | -270.6473 | -2.5017 | -2.5340 |

| 0.085 | 1.76 | 3400 | 0.6133 | -1.9159 | -4.6760 | 0.8438 | 2.7601 | -303.7296 | -273.3941 | -2.4614 | -2.4960 |

| 0.065 | 1.81 | 3500 | 0.6074 | -1.8237 | -4.3525 | 0.8594 | 2.5288 | -300.4951 | -272.4724 | -2.4597 | -2.5004 |

| 0.0755 | 1.86 | 3600 | 0.5836 | -1.9252 | -4.4005 | 0.8125 | 2.4753 | -300.9748 | -273.4872 | -2.4327 | -2.4716 |

| 0.0746 | 1.91 | 3700 | 0.5789 | -1.9280 | -4.4906 | 0.8125 | 2.5626 | -301.8762 | -273.5149 | -2.4686 | -2.5115 |

| 0.1348 | 1.96 | 3800 | 0.6015 | -1.8658 | -4.2428 | 0.8281 | 2.3769 | -299.3976 | -272.8936 | -2.4943 | -2.5393 |

| 0.0217 | 2.01 | 3900 | 0.6122 | -2.3335 | -4.9229 | 0.8281 | 2.5894 | -306.1988 | -277.5699 | -2.4841 | -2.5272 |

| 0.0219 | 2.07 | 4000 | 0.6522 | -2.9890 | -6.0164 | 0.8281 | 3.0274 | -317.1334 | -284.1248 | -2.4105 | -2.4545 |

| 0.0119 | 2.12 | 4100 | 0.6922 | -3.4777 | -6.6749 | 0.7969 | 3.1972 | -323.7187 | -289.0121 | -2.4272 | -2.4699 |

| 0.0153 | 2.17 | 4200 | 0.6993 | -3.2406 | -6.6775 | 0.7969 | 3.4369 | -323.7453 | -286.6413 | -2.4047 | -2.4465 |

| 0.011 | 2.22 | 4300 | 0.7178 | -3.7991 | -7.4397 | 0.7656 | 3.6406 | -331.3667 | -292.2260 | -2.3843 | -2.4290 |

| 0.0072 | 2.27 | 4400 | 0.6840 | -3.3269 | -6.8021 | 0.8125 | 3.4752 | -324.9908 | -287.5042 | -2.4095 | -2.4536 |

| 0.0197 | 2.32 | 4500 | 0.7013 | -3.6890 | -7.3014 | 0.8125 | 3.6124 | -329.9841 | -291.1250 | -2.4118 | -2.4543 |

| 0.0182 | 2.37 | 4600 | 0.7476 | -3.8994 | -7.5366 | 0.8281 | 3.6372 | -332.3356 | -293.2291 | -2.4163 | -2.4565 |

| 0.0125 | 2.43 | 4700 | 0.7199 | -4.0560 | -7.5765 | 0.8438 | 3.5204 | -332.7345 | -294.7952 | -2.3699 | -2.4100 |

| 0.0082 | 2.48 | 4800 | 0.7048 | -3.6613 | -7.1356 | 0.875 | 3.4743 | -328.3255 | -290.8477 | -2.3925 | -2.4303 |

| 0.0118 | 2.53 | 4900 | 0.6976 | -3.7908 | -7.3152 | 0.8125 | 3.5244 | -330.1224 | -292.1431 | -2.3633 | -2.4047 |

| 0.0118 | 2.58 | 5000 | 0.7198 | -3.9049 | -7.5557 | 0.8281 | 3.6508 | -332.5271 | -293.2844 | -2.3764 | -2.4194 |

| 0.006 | 2.63 | 5100 | 0.7506 | -4.2118 | -7.9149 | 0.8125 | 3.7032 | -336.1194 | -296.3530 | -2.3407 | -2.3860 |

| 0.0143 | 2.68 | 5200 | 0.7408 | -4.2433 | -7.9802 | 0.8125 | 3.7369 | -336.7721 | -296.6682 | -2.3509 | -2.3946 |

| 0.0057 | 2.74 | 5300 | 0.7552 | -4.3392 | -8.0831 | 0.7969 | 3.7439 | -337.8013 | -297.6275 | -2.3388 | -2.3842 |

| 0.0138 | 2.79 | 5400 | 0.7404 | -4.2395 | -7.9762 | 0.8125 | 3.7367 | -336.7322 | -296.6304 | -2.3286 | -2.3737 |

| 0.0079 | 2.84 | 5500 | 0.7525 | -4.4466 | -8.2196 | 0.7812 | 3.7731 | -339.1662 | -298.7007 | -2.3200 | -2.3641 |

| 0.0077 | 2.89 | 5600 | 0.7520 | -4.5586 | -8.3485 | 0.7969 | 3.7899 | -340.4545 | -299.8206 | -2.3078 | -2.3517 |

| 0.0094 | 2.94 | 5700 | 0.7527 | -4.5542 | -8.3509 | 0.7812 | 3.7967 | -340.4790 | -299.7773 | -2.3062 | -2.3510 |

| 0.0054 | 2.99 | 5800 | 0.7520 | -4.5169 | -8.3079 | 0.7812 | 3.7911 | -340.0493 | -299.4038 | -2.3081 | -2.3530 |

### Framework versions

- Transformers 4.35.0.dev0

- Pytorch 2.0.1+cu118

- Datasets 2.12.0

- Tokenizers 0.14.0

## Citation

If you find Zephyr-7B-β useful in your work, please cite it with:

```

@misc{tunstall2023zephyr,

title={Zephyr: Direct Distillation of LM Alignment},

author={Lewis Tunstall and Edward Beeching and Nathan Lambert and Nazneen Rajani and Kashif Rasul and Younes Belkada and Shengyi Huang and Leandro von Werra and Clémentine Fourrier and Nathan Habib and Nathan Sarrazin and Omar Sanseviero and Alexander M. Rush and Thomas Wolf},

year={2023},

eprint={2310.16944},

archivePrefix={arXiv},

primaryClass={cs.LG}

}

```

|

SzegedAI/babylm-strict-mlsm

|

SzegedAI

| 2023-10-27T14:26:13Z | 105 | 1 |

transformers

|

[

"transformers",

"pytorch",

"deberta",

"fill-mask",

"en",

"dataset:BabyLM_strict",

"license:mit",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

fill-mask

| 2023-07-23T14:21:22Z |

---

license: mit

datasets:

- BabyLM_strict

language:

- en

metrics:

- glue

---

# Model Card for SzegedAI/babylm-strict-mlsm

<!-- Provide a quick summary of what the model is/does. -->

This base-sized DeBERTa model was created using the [Masked Latent Semantic Modeling](https://aclanthology.org/2023.findings-acl.876/) (MLSM) pre-training objective, which is a sample-efficient alternative to classic Masked Language Modeling (MLM).

During MLSM, the objective is to recover the latent semantic profile of the masked tokens, as opposed to recovering their exact identity.

The contextualized latent semantic profile during pre-training is determined by performing sparse coding of the hidden representation of a partially pre-trained model (a base-sized DeBERTa model pre-trained over only 20 million input sequences in this particular case).

## Model Details