modelId

stringlengths 5

139

| author

stringlengths 2

42

| last_modified

timestamp[us, tz=UTC]date 2020-02-15 11:33:14

2025-08-29 06:27:22

| downloads

int64 0

223M

| likes

int64 0

11.7k

| library_name

stringclasses 525

values | tags

listlengths 1

4.05k

| pipeline_tag

stringclasses 55

values | createdAt

timestamp[us, tz=UTC]date 2022-03-02 23:29:04

2025-08-29 06:27:10

| card

stringlengths 11

1.01M

|

|---|---|---|---|---|---|---|---|---|---|

VinEuro/LunarLanderv2

|

VinEuro

| 2023-07-27T21:48:06Z | 0 | 0 |

stable-baselines3

|

[

"stable-baselines3",

"LunarLander-v2",

"deep-reinforcement-learning",

"reinforcement-learning",

"model-index",

"region:us"

] |

reinforcement-learning

| 2023-07-27T21:47:47Z |

---

library_name: stable-baselines3

tags:

- LunarLander-v2

- deep-reinforcement-learning

- reinforcement-learning

- stable-baselines3

model-index:
- name: PPO
  results:
  - task:
      type: reinforcement-learning
      name: reinforcement-learning
    dataset:
      name: LunarLander-v2
      type: LunarLander-v2
    metrics:
    - type: mean_reward
      value: 270.95 +/- 23.64
      name: mean_reward
      verified: false

---

# **PPO** Agent playing **LunarLander-v2**

This is a trained model of a **PPO** agent playing **LunarLander-v2**

using the [stable-baselines3 library](https://github.com/DLR-RM/stable-baselines3).

## Usage (with Stable-baselines3)

TODO: Add your code

```python

from stable_baselines3 import ...

from huggingface_sb3 import load_from_hub

...

```
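The `mean_reward` entry in this card's metadata (270.95 +/- 23.64) has the form "mean +/- standard deviation" of per-episode returns. The sketch below shows that summary in plain Python. The episode returns are hypothetical, since the card does not list them, and the use of the population standard deviation is an assumption about how the figure was computed.

```python
from statistics import mean, pstdev

# Hypothetical per-episode returns; the real evaluation episodes are not in this card.
episode_returns = [250.0, 290.0, 270.0, 280.0, 260.0]


def summarize(returns):
    """Return (mean, population standard deviation) of a list of episode returns."""
    return mean(returns), pstdev(returns)


mean_reward, std_reward = summarize(episode_returns)
print(f"mean_reward: {mean_reward:.2f} +/- {std_reward:.2f}")
```

With more evaluation episodes the standard deviation becomes a more reliable indicator of how consistent the agent is.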

|

NasimB/aochildes-cbt-log-rarity

|

NasimB

| 2023-07-27T21:46:57Z | 5 | 0 |

transformers

|

[

"transformers",

"pytorch",

"gpt2",

"text-generation",

"generated_from_trainer",

"dataset:generator",

"license:mit",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2023-07-27T19:38:45Z |

---

license: mit

tags:

- generated_from_trainer

datasets:

- generator

model-index:
- name: aochildes-cbt-log-rarity
  results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# aochildes-cbt-log-rarity

This model is a fine-tuned version of [gpt2](https://huggingface.co/gpt2) on the generator dataset.

It achieves the following results on the evaluation set:

- Loss: 4.1483
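For a language model, an evaluation loss is often easier to interpret as a perplexity. The sketch below converts the loss reported above, assuming it is a mean per-token cross-entropy measured in nats (the card does not say so explicitly). The function name is illustrative.

```python
import math


def perplexity(cross_entropy_loss: float) -> float:
    """Convert a mean per-token cross-entropy loss (in nats) to perplexity."""
    return math.exp(cross_entropy_loss)


eval_loss = 4.1483  # evaluation loss reported in this card
print(f"perplexity: {perplexity(eval_loss):.1f}")
```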

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0005

- train_batch_size: 64

- eval_batch_size: 64

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: cosine

- lr_scheduler_warmup_steps: 1000

- num_epochs: 6

- mixed_precision_training: Native AMP
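The `cosine` scheduler with 1000 warmup steps listed above can be sketched as a linear ramp followed by a half-cosine decay. This is a minimal illustration of that common formula, not necessarily the exact code the Trainer ran. `total_steps=10_000` is an assumption (the card does not state the total number of optimizer steps), and the function name is hypothetical.

```python
import math


def cosine_lr_with_warmup(step, base_lr=5e-4, warmup_steps=1000, total_steps=10_000):
    """Learning rate at `step`: linear warmup, then half-cosine decay to zero."""
    if step < warmup_steps:
        # Linear ramp from 0 up to base_lr over the warmup phase.
        return base_lr * step / max(1, warmup_steps)
    # Fraction of the post-warmup phase that has elapsed, in [0, 1].
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    return base_lr * max(0.0, 0.5 * (1.0 + math.cos(math.pi * progress)))


for step in (0, 500, 1000, 5500, 10_000):
    print(step, cosine_lr_with_warmup(step))
```

The learning rate peaks at the end of warmup and reaches half of its peak midway through the decay phase.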

### Training results

| Training Loss | Epoch | Step | Validation Loss |

|:-------------:|:-----:|:-----:|:---------------:|

| 6.3649 | 0.29 | 500 | 5.3433 |

| 5.0506 | 0.59 | 1000 | 4.9337 |

| 4.7079 | 0.88 | 1500 | 4.6957 |

| 4.4512 | 1.17 | 2000 | 4.5593 |

| 4.3031 | 1.47 | 2500 | 4.4458 |

| 4.2085 | 1.76 | 3000 | 4.3418 |

| 4.0809 | 2.05 | 3500 | 4.2739 |

| 3.9047 | 2.35 | 4000 | 4.2277 |

| 3.8846 | 2.64 | 4500 | 4.1774 |

| 3.8392 | 2.93 | 5000 | 4.1313 |

| 3.6392 | 3.23 | 5500 | 4.1305 |

| 3.6016 | 3.52 | 6000 | 4.1020 |

| 3.5828 | 3.81 | 6500 | 4.0709 |

| 3.4733 | 4.11 | 7000 | 4.0797 |

| 3.3271 | 4.4 | 7500 | 4.0758 |

| 3.3228 | 4.69 | 8000 | 4.0635 |

| 3.3147 | 4.99 | 8500 | 4.0528 |

| 3.154 | 5.28 | 9000 | 4.0692 |

| 3.1461 | 5.58 | 9500 | 4.0692 |

| 3.1416 | 5.87 | 10000 | 4.0684 |

### Framework versions

- Transformers 4.26.1

- Pytorch 1.11.0+cu113

- Datasets 2.13.0

- Tokenizers 0.13.3

|

jariasn/q-Taxi-v3

|

jariasn

| 2023-07-27T21:32:51Z | 0 | 0 | null |

[

"Taxi-v3",

"q-learning",

"reinforcement-learning",

"custom-implementation",

"model-index",

"region:us"

] |

reinforcement-learning

| 2023-07-27T21:32:49Z |

---

tags:

- Taxi-v3

- q-learning

- reinforcement-learning

- custom-implementation

model-index:
- name: q-Taxi-v3
  results:
  - task:
      type: reinforcement-learning
      name: reinforcement-learning
    dataset:
      name: Taxi-v3
      type: Taxi-v3
    metrics:
    - type: mean_reward
      value: 7.56 +/- 2.71
      name: mean_reward
      verified: false

---

# **Q-Learning** Agent playing **Taxi-v3**

This is a trained model of a **Q-Learning** agent playing **Taxi-v3**.

## Usage

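```python
import gym  # or `import gymnasium as gym`, depending on your setup

# `load_from_hub` is assumed to be a helper defined elsewhere (it is not shown in this card)
# that downloads the pickled file and returns the model dictionary.
model = load_from_hub(repo_id="jariasn/q-Taxi-v3", filename="q-learning.pkl")

# Check whether the environment needs additional attributes (is_slippery=False etc.)
env = gym.make(model["env_id"])
```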
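A tabular Q-learning agent is typically used by acting greedily with respect to its Q-table. The sketch below shows that step on a hypothetical two-state, three-action table. How the Q-table is stored inside the downloaded model dictionary is not specified in this card, so the table here is a plain list of lists.

```python
def greedy_action(qtable, state):
    """Return the index of the highest-valued action for `state`."""
    action_values = qtable[state]
    return max(range(len(action_values)), key=lambda a: action_values[a])


# Hypothetical Q-table: one row per state, one column per action.
toy_qtable = [
    [0.1, 0.5, 0.2],
    [0.7, 0.0, 0.3],
]

actions = [greedy_action(toy_qtable, s) for s in range(len(toy_qtable))]
print(actions)
```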

|

shivarama23/llama_v2_finetuned_redaction

|

shivarama23

| 2023-07-27T21:18:42Z | 2 | 0 |

peft

|

[

"peft",

"pytorch",

"region:us"

] | null | 2023-07-27T21:17:43Z |

---

library_name: peft

---

## Training procedure

The following `bitsandbytes` quantization config was used during training:

- load_in_8bit: False

- load_in_4bit: True

- llm_int8_threshold: 6.0

- llm_int8_skip_modules: None

- llm_int8_enable_fp32_cpu_offload: False

- llm_int8_has_fp16_weight: False

- bnb_4bit_quant_type: nf4

- bnb_4bit_use_double_quant: False

- bnb_4bit_compute_dtype: float16

### Framework versions

- PEFT 0.4.0

|

Kertn/ppo-Huggy

|

Kertn

| 2023-07-27T21:14:55Z | 0 | 0 |

ml-agents

|

[

"ml-agents",

"tensorboard",

"onnx",

"Huggy",

"deep-reinforcement-learning",

"reinforcement-learning",

"ML-Agents-Huggy",

"region:us"

] |

reinforcement-learning

| 2023-07-27T21:14:45Z |

---

library_name: ml-agents

tags:

- Huggy

- deep-reinforcement-learning

- reinforcement-learning

- ML-Agents-Huggy

---

# **ppo** Agent playing **Huggy**

This is a trained model of a **ppo** agent playing **Huggy**

using the [Unity ML-Agents Library](https://github.com/Unity-Technologies/ml-agents).

## Usage (with ML-Agents)

The Documentation: https://unity-technologies.github.io/ml-agents/ML-Agents-Toolkit-Documentation/

We wrote a complete tutorial to learn to train your first agent using ML-Agents and publish it to the Hub:

- A *short tutorial* where you teach Huggy the Dog 🐶 to fetch the stick and then play with him directly in your

browser: https://huggingface.co/learn/deep-rl-course/unitbonus1/introduction

- A *longer tutorial* to understand how ML-Agents works:

https://huggingface.co/learn/deep-rl-course/unit5/introduction

### Resume the training

```bash

mlagents-learn <your_configuration_file_path.yaml> --run-id=<run_id> --resume

```

### Watch your Agent play

You can watch your agent **playing directly in your browser**:

1. If the environment is one of the official ML-Agents environments, go to https://huggingface.co/unity
2. Find your model_id: Kertn/ppo-Huggy
3. Select your *.nn / *.onnx file
4. Click on Watch the agent play 👀

|

jariasn/q-FrozenLake-v1-4x4-noSlippery

|

jariasn

| 2023-07-27T20:58:10Z | 0 | 0 | null |

[

"FrozenLake-v1-4x4-no_slippery",

"q-learning",

"reinforcement-learning",

"custom-implementation",

"model-index",

"region:us"

] |

reinforcement-learning

| 2023-07-27T20:58:08Z |

---

tags:

- FrozenLake-v1-4x4-no_slippery

- q-learning

- reinforcement-learning

- custom-implementation

model-index:
- name: q-FrozenLake-v1-4x4-noSlippery
  results:
  - task:
      type: reinforcement-learning
      name: reinforcement-learning
    dataset:
      name: FrozenLake-v1-4x4-no_slippery
      type: FrozenLake-v1-4x4-no_slippery
    metrics:
    - type: mean_reward
      value: 1.00 +/- 0.00
      name: mean_reward
      verified: false

---

# **Q-Learning** Agent playing **FrozenLake-v1**

This is a trained model of a **Q-Learning** agent playing **FrozenLake-v1**.

## Usage

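```python
import gym  # or `import gymnasium as gym`, depending on your setup

# `load_from_hub` is assumed to be a helper defined elsewhere (it is not shown in this card)
# that downloads the pickled file and returns the model dictionary.
model = load_from_hub(repo_id="jariasn/q-FrozenLake-v1-4x4-noSlippery", filename="q-learning.pkl")

# Check whether the environment needs additional attributes (is_slippery=False etc.)
env = gym.make(model["env_id"])
```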
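For context, a Q-table like the one stored in this model is built with the standard tabular Q-learning update, Q(s, a) ← Q(s, a) + α [r + γ max Q(s', ·) − Q(s, a)]. The sketch below applies one such update to a hypothetical table. The learning rate and discount factor are illustrative values, not the ones used to train this model.

```python
def q_learning_update(qtable, state, action, reward, next_state,
                      learning_rate=0.7, gamma=0.95):
    """Apply one tabular Q-learning update in place and return the new Q-value."""
    # Bootstrapped target: immediate reward plus discounted best next-state value.
    td_target = reward + gamma * max(qtable[next_state])
    td_error = td_target - qtable[state][action]
    qtable[state][action] += learning_rate * td_error
    return qtable[state][action]


# Hypothetical 2-state, 2-action table.
toy_qtable = [[0.0, 0.0], [0.0, 1.0]]
new_value = q_learning_update(toy_qtable, state=0, action=1, reward=0.0, next_state=1)
print(new_value)
```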

|

YoonSeul/LawBot-5.8B

|

YoonSeul

| 2023-07-27T20:40:46Z | 4 | 0 |

peft

|

[

"peft",

"region:us"

] | null | 2023-07-26T07:47:12Z |

---

library_name: peft

---

<img src=https://github.com/taemin6697/Paper_Review/assets/96530685/54ecd6cf-8695-4caa-bdc8-fb85c9b7d70d style="max-width: 700px; width: 100%" />

## Training procedure

The following `bitsandbytes` quantization config was used during training:

- load_in_8bit: False

- load_in_4bit: True

- llm_int8_threshold: 6.0

- llm_int8_skip_modules: None

- llm_int8_enable_fp32_cpu_offload: False

- llm_int8_has_fp16_weight: False

- bnb_4bit_quant_type: nf4

- bnb_4bit_use_double_quant: True

- bnb_4bit_compute_dtype: bfloat16

### Framework versions

- PEFT 0.4.0.dev0

|

chh6/ppo-SnowballTarget

|

chh6

| 2023-07-27T20:21:44Z | 4 | 0 |

ml-agents

|

[

"ml-agents",

"tensorboard",

"onnx",

"SnowballTarget",

"deep-reinforcement-learning",

"reinforcement-learning",

"ML-Agents-SnowballTarget",

"region:us"

] |

reinforcement-learning

| 2023-07-27T18:55:02Z |

---

library_name: ml-agents

tags:

- SnowballTarget

- deep-reinforcement-learning

- reinforcement-learning

- ML-Agents-SnowballTarget

---

# **ppo** Agent playing **SnowballTarget**

This is a trained model of a **ppo** agent playing **SnowballTarget**

using the [Unity ML-Agents Library](https://github.com/Unity-Technologies/ml-agents).

## Usage (with ML-Agents)

The Documentation: https://unity-technologies.github.io/ml-agents/ML-Agents-Toolkit-Documentation/

We wrote a complete tutorial to learn to train your first agent using ML-Agents and publish it to the Hub:

- A *short tutorial* where you teach Huggy the Dog 🐶 to fetch the stick and then play with him directly in your

browser: https://huggingface.co/learn/deep-rl-course/unitbonus1/introduction

- A *longer tutorial* to understand how ML-Agents works:

https://huggingface.co/learn/deep-rl-course/unit5/introduction

### Resume the training

```bash

mlagents-learn <your_configuration_file_path.yaml> --run-id=<run_id> --resume

```

### Watch your Agent play

You can watch your agent **playing directly in your browser**:

1. If the environment is one of the official ML-Agents environments, go to https://huggingface.co/unity
2. Find your model_id: chh6/ppo-SnowballTarget
3. Select your *.nn / *.onnx file
4. Click on Watch the agent play 👀

|

truehealth/LLama-2-MedText-Delta

|

truehealth

| 2023-07-27T20:21:44Z | 0 | 0 | null |

[

"region:us"

] | null | 2023-07-27T01:19:04Z |

Trained on 13B LLama-2

---

library_name: peft

---

## Training procedure

The following `bitsandbytes` quantization config was used during training:

- load_in_8bit: False

- load_in_4bit: True

- llm_int8_threshold: 6.0

- llm_int8_skip_modules: None

- llm_int8_enable_fp32_cpu_offload: False

- llm_int8_has_fp16_weight: False

- bnb_4bit_quant_type: nf4

- bnb_4bit_use_double_quant: True

- bnb_4bit_compute_dtype: float16
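To give a feel for what `load_in_4bit` with `bnb_4bit_use_double_quant: True` means for a 13B model, the sketch below estimates the size of the quantized weights. It is a back-of-the-envelope calculation under stated assumptions: the per-parameter overhead figures follow the QLoRA paper (block size 64, 32-bit constants, 8-bit constants with a second-level block of 256 under double quantization), every parameter is assumed to be quantized, and "13B" is taken as exactly 13e9 parameters. Activations, adapters, and any layers kept in higher precision are ignored.

```python
def quantized_weight_gib(num_params, bits_per_param=4.0, block_size=64,
                         double_quant=True):
    """Rough size in GiB of block-quantized weights, including quantization constants."""
    if double_quant:
        # 8-bit first-level constants, plus one 32-bit second-level constant
        # per 256 first-level constants.
        overhead_bits = 8 / block_size + 32 / (block_size * 256)
    else:
        # One 32-bit constant per block of weights.
        overhead_bits = 32 / block_size
    total_bits = num_params * (bits_per_param + overhead_bits)
    return total_bits / 8 / 2**30


with_dq = quantized_weight_gib(13e9, double_quant=True)
without_dq = quantized_weight_gib(13e9, double_quant=False)
print(f"double quant: {with_dq:.2f} GiB, single quant: {without_dq:.2f} GiB")
```

Under these assumptions, double quantization saves a few hundred MiB on a model of this size.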

### Framework versions

- PEFT 0.5.0.dev0

|

Jonathaniu/llama2-breast-cancer-13b-knowledge-epoch-8

|

Jonathaniu

| 2023-07-27T20:09:53Z | 0 | 0 |

peft

|

[

"peft",

"region:us"

] | null | 2023-07-27T20:09:32Z |

---

library_name: peft

---

## Training procedure

The following `bitsandbytes` quantization config was used during training:

- load_in_8bit: True

- llm_int8_threshold: 6.0

- llm_int8_skip_modules: None

- llm_int8_enable_fp32_cpu_offload: False

### Framework versions

- PEFT 0.4.0.dev0

|

SH-W/60emotions

|

SH-W

| 2023-07-27T20:01:52Z | 107 | 0 |

transformers

|

[

"transformers",

"pytorch",

"safetensors",

"roberta",

"text-classification",

"autotrain",

"unk",

"dataset:SH-W/autotrain-data-5000_koi",

"co2_eq_emissions",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2023-07-27T19:55:16Z |

---

tags:

- autotrain

- text-classification

language:

- unk

widget:

- text: "I love AutoTrain"

datasets:

- SH-W/autotrain-data-5000_koi

co2_eq_emissions:
  emissions: 3.920765439350259

---

# Model Trained Using AutoTrain

- Problem type: Multi-class Classification

- Model ID: 77927140735

- CO2 Emissions (in grams): 3.9208

## Validation Metrics

- Loss: 2.432

- Accuracy: 0.415

- Macro F1: 0.410

- Micro F1: 0.415

- Weighted F1: 0.410

- Macro Precision: 0.459

- Micro Precision: 0.415

- Weighted Precision: 0.456

- Macro Recall: 0.413

- Micro Recall: 0.415

- Weighted Recall: 0.415
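The metrics above report the same value (0.415) for accuracy, micro precision, micro recall, and micro F1. For single-label multi-class classification this is expected: each wrong prediction counts as one false positive and one false negative, so all four quantities coincide. The sketch below demonstrates this on hypothetical labels. The label names are illustrative and are not taken from the model.

```python
def micro_scores(y_true, y_pred):
    """Micro-averaged precision, recall and F1 for single-label multi-class predictions."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == p)
    # Each wrong prediction is a false positive for the predicted class
    # and a false negative for the true class.
    fp = sum(1 for t, p in zip(y_true, y_pred) if t != p)
    fn = fp
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return precision, recall, f1


# Hypothetical labels and predictions.
y_true = ["joy", "anger", "joy", "fear", "anger"]
y_pred = ["joy", "joy", "joy", "fear", "fear"]

accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
precision, recall, f1 = micro_scores(y_true, y_pred)
print(accuracy, precision, recall, f1)
```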

## Usage

You can use cURL to access this model:

```bash

$ curl -X POST -H "Authorization: Bearer YOUR_API_KEY" -H "Content-Type: application/json" -d '{"inputs": "I love AutoTrain"}' https://api-inference.huggingface.co/models/SH-W/autotrain-5000_koi-77927140735

```

Or Python API:

```python

from transformers import AutoModelForSequenceClassification, AutoTokenizer

model = AutoModelForSequenceClassification.from_pretrained("SH-W/autotrain-5000_koi-77927140735", use_auth_token=True)

tokenizer = AutoTokenizer.from_pretrained("SH-W/autotrain-5000_koi-77927140735", use_auth_token=True)

inputs = tokenizer("I love AutoTrain", return_tensors="pt")

outputs = model(**inputs)

```

|

ianvaz/llama2-qlora-finetunined-french

|

ianvaz

| 2023-07-27T20:00:58Z | 1 | 0 |

peft

|

[

"peft",

"region:us"

] | null | 2023-07-27T20:00:54Z |

---

library_name: peft

---

## Training procedure

The following `bitsandbytes` quantization config was used during training:

- load_in_8bit: False

- load_in_4bit: True

- llm_int8_threshold: 6.0

- llm_int8_skip_modules: None

- llm_int8_enable_fp32_cpu_offload: False

- llm_int8_has_fp16_weight: False

- bnb_4bit_quant_type: nf4

- bnb_4bit_use_double_quant: False

- bnb_4bit_compute_dtype: float16

### Framework versions

- PEFT 0.5.0.dev0

|

teilomillet/poca-SoccerTwos

|

teilomillet

| 2023-07-27T19:52:37Z | 3 | 0 |

ml-agents

|

[

"ml-agents",

"tensorboard",

"onnx",

"SoccerTwos",

"deep-reinforcement-learning",

"reinforcement-learning",

"ML-Agents-SoccerTwos",

"region:us"

] |

reinforcement-learning

| 2023-07-27T19:52:21Z |

---

library_name: ml-agents

tags:

- SoccerTwos

- deep-reinforcement-learning

- reinforcement-learning

- ML-Agents-SoccerTwos

---

# **poca** Agent playing **SoccerTwos**

This is a trained model of a **poca** agent playing **SoccerTwos**

using the [Unity ML-Agents Library](https://github.com/Unity-Technologies/ml-agents).

## Usage (with ML-Agents)

The Documentation: https://unity-technologies.github.io/ml-agents/ML-Agents-Toolkit-Documentation/

We wrote a complete tutorial to learn to train your first agent using ML-Agents and publish it to the Hub:

- A *short tutorial* where you teach Huggy the Dog 🐶 to fetch the stick and then play with him directly in your

browser: https://huggingface.co/learn/deep-rl-course/unitbonus1/introduction

- A *longer tutorial* to understand how ML-Agents works:

https://huggingface.co/learn/deep-rl-course/unit5/introduction

### Resume the training

```bash

mlagents-learn <your_configuration_file_path.yaml> --run-id=<run_id> --resume

```

### Watch your Agent play

You can watch your agent **playing directly in your browser**:

1. If the environment is one of the official ML-Agents environments, go to https://huggingface.co/unity
2. Find your model_id: teilomillet/poca-SoccerTwos
3. Select your *.nn / *.onnx file
4. Click on Watch the agent play 👀

|

grace-pro/three_class_5e-5_hausa

|

grace-pro

| 2023-07-27T19:48:09Z | 117 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"xlm-roberta",

"token-classification",

"generated_from_trainer",

"base_model:Davlan/afro-xlmr-base",

"base_model:finetune:Davlan/afro-xlmr-base",

"license:mit",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

token-classification

| 2023-07-27T18:28:05Z |

---

license: mit

base_model: Davlan/afro-xlmr-base

tags:

- generated_from_trainer

metrics:

- precision

- recall

- f1

- accuracy

model-index:
- name: three_class_5e-5_hausa
  results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# three_class_5e-5_hausa

This model is a fine-tuned version of [Davlan/afro-xlmr-base](https://huggingface.co/Davlan/afro-xlmr-base) on an unspecified dataset.

It achieves the following results on the evaluation set:

- Loss: 0.2379

- Precision: 0.2316

- Recall: 0.1636

- F1: 0.1917

- Accuracy: 0.9392
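The F1 score above is the harmonic mean of the reported precision and recall, which can be checked directly. The short sketch below recomputes it from the two rounded values in this card, so small rounding differences are possible. The high accuracy alongside a low F1 suggests, though the card does not confirm it, that most tokens belong to a majority class.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall (0.0 when both are zero)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


f1 = f1_score(0.2316, 0.1636)  # precision and recall reported in this card
print(f"F1: {f1:.4f}")
```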

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 16

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 5
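The `linear` scheduler listed above decays the learning rate from its initial value to zero over the course of training. The sketch below illustrates that formula. `total_steps=6415` is taken from the last row of this card's training results table, and `warmup_steps=0` is an assumption, since no warmup is listed. The function name is hypothetical.

```python
def linear_lr(step, base_lr=5e-5, total_steps=6415, warmup_steps=0):
    """Learning rate at `step` for linear warmup followed by linear decay to zero."""
    if step < warmup_steps:
        return base_lr * step / max(1, warmup_steps)
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


# Learning rate at the end of each epoch (1283 steps per epoch in this run).
for epoch in range(6):
    print(epoch, linear_lr(epoch * 1283))
```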

### Training results

| Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:|

| 0.2129 | 1.0 | 1283 | 0.2033 | 0.2278 | 0.0810 | 0.1195 | 0.9416 |

| 0.1901 | 2.0 | 2566 | 0.1988 | 0.2444 | 0.0890 | 0.1305 | 0.9429 |

| 0.1657 | 3.0 | 3849 | 0.2056 | 0.2561 | 0.1278 | 0.1705 | 0.9430 |

| 0.139 | 4.0 | 5132 | 0.2205 | 0.2269 | 0.1655 | 0.1914 | 0.9388 |

| 0.1179 | 5.0 | 6415 | 0.2379 | 0.2316 | 0.1636 | 0.1917 | 0.9392 |

### Framework versions

- Transformers 4.31.0

- Pytorch 2.0.1+cu118

- Datasets 2.14.1

- Tokenizers 0.13.3

|

asenella/MMVAEPlus_beta_10_scale_False_seed_3

|

asenella

| 2023-07-27T19:43:04Z | 0 | 0 | null |

[

"multivae",

"en",

"license:apache-2.0",

"region:us"

] | null | 2023-07-27T19:42:50Z |

---

language: en

tags:

- multivae

license: apache-2.0

---

### Downloading this model from the Hub

This model was trained with multivae. It can be downloaded or reloaded using the `load_from_hf_hub` method:

```python

>>> from multivae.models import AutoModel

>>> model = AutoModel.load_from_hf_hub(hf_hub_path="your_hf_username/repo_name")

```

|

asenella/MMVAEPlus_beta_5_scale_False_seed_3

|

asenella

| 2023-07-27T19:39:47Z | 0 | 0 | null |

[

"multivae",

"en",

"license:apache-2.0",

"region:us"

] | null | 2023-07-27T19:39:34Z |

---

language: en

tags:

- multivae

license: apache-2.0

---

### Downloading this model from the Hub

This model was trained with multivae. It can be downloaded or reloaded using the `load_from_hf_hub` method:

```python

>>> from multivae.models import AutoModel

>>> model = AutoModel.load_from_hf_hub(hf_hub_path="your_hf_username/repo_name")

```

|

Varshitha/flan-t5-large-finetune-medicine-v5

|

Varshitha

| 2023-07-27T19:38:30Z | 110 | 1 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"t5",

"text2text-generation",

"text2textgeneration",

"generated_from_trainer",

"base_model:google/flan-t5-large",

"base_model:finetune:google/flan-t5-large",

"license:apache-2.0",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text2text-generation

| 2023-07-27T19:28:01Z |

---

license: apache-2.0

base_model: google/flan-t5-large

tags:

- text2textgeneration

- generated_from_trainer

metrics:

- rouge

model-index:
- name: flan-t5-large-finetune-medicine-v5
  results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# flan-t5-large-finetune-medicine-v5

This model is a fine-tuned version of [google/flan-t5-large](https://huggingface.co/google/flan-t5-large) on an unspecified dataset.

It achieves the following results on the evaluation set:

- Loss: 2.3517

- Rouge1: 27.7218

- Rouge2: 10.9162

- Rougel: 23.6057

- Rougelsum: 23.2999

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5.6e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 20

### Training results

| Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum |

|:-------------:|:-----:|:----:|:---------------:|:-------:|:-------:|:-------:|:---------:|

| No log | 1.0 | 5 | 2.2465 | 14.6773 | 3.5979 | 13.871 | 14.2474 |

| No log | 2.0 | 10 | 2.1106 | 10.2078 | 2.1164 | 10.2919 | 10.276 |

| No log | 3.0 | 15 | 2.0535 | 16.7761 | 1.5873 | 16.7952 | 17.1838 |

| No log | 4.0 | 20 | 2.0323 | 16.6844 | 1.5873 | 16.8444 | 16.9094 |

| No log | 5.0 | 25 | 2.0063 | 17.2911 | 2.3045 | 14.8127 | 15.3235 |

| No log | 6.0 | 30 | 2.0079 | 15.3197 | 4.6561 | 14.278 | 14.8369 |

| No log | 7.0 | 35 | 2.0319 | 15.9877 | 5.8947 | 13.9837 | 14.1814 |

| No log | 8.0 | 40 | 2.0748 | 23.1763 | 10.5 | 20.2887 | 20.2578 |

| No log | 9.0 | 45 | 2.1303 | 21.1874 | 6.9444 | 19.0088 | 18.991 |

| No log | 10.0 | 50 | 2.1746 | 20.2807 | 6.2865 | 18.2145 | 18.1012 |

| No log | 11.0 | 55 | 2.1729 | 21.8364 | 9.8421 | 18.7897 | 18.8242 |

| No log | 12.0 | 60 | 2.2083 | 22.777 | 10.9162 | 21.3444 | 21.1464 |

| No log | 13.0 | 65 | 2.2658 | 21.7641 | 10.9162 | 20.3906 | 19.8167 |

| No log | 14.0 | 70 | 2.2889 | 21.7641 | 10.9162 | 20.3906 | 19.8167 |

| No log | 15.0 | 75 | 2.2998 | 25.3171 | 10.9162 | 21.3683 | 20.9228 |

| No log | 16.0 | 80 | 2.3082 | 26.0279 | 10.9162 | 21.9565 | 21.7519 |

| No log | 17.0 | 85 | 2.3166 | 26.0279 | 10.9162 | 21.9565 | 21.7519 |

| No log | 18.0 | 90 | 2.3325 | 27.7218 | 10.9162 | 23.6057 | 23.2999 |

| No log | 19.0 | 95 | 2.3462 | 27.7218 | 10.9162 | 23.6057 | 23.2999 |

| No log | 20.0 | 100 | 2.3517 | 27.7218 | 10.9162 | 23.6057 | 23.2999 |
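In the table above, validation loss is lowest at epoch 5 and then rises, while ROUGE-1 keeps improving through the final epochs. Which checkpoint counts as "best" therefore depends on the selection metric. The sketch below makes that concrete using a subset of rows copied from the table. The tuple layout and variable names are illustrative.

```python
# (epoch, validation_loss, rouge1) for a subset of rows from the table above.
rows = [
    (1, 2.2465, 14.6773),
    (5, 2.0063, 17.2911),
    (6, 2.0079, 15.3197),
    (10, 2.1746, 20.2807),
    (15, 2.2998, 25.3171),
    (20, 2.3517, 27.7218),
]

best_by_loss = min(rows, key=lambda r: r[1])    # lower validation loss is better
best_by_rouge = max(rows, key=lambda r: r[2])   # higher ROUGE-1 is better

print("best epoch by validation loss:", best_by_loss[0])
print("best epoch by ROUGE-1:", best_by_rouge[0])
```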

### Framework versions

- Transformers 4.31.0

- Pytorch 2.0.1+cu118

- Datasets 2.14.1

- Tokenizers 0.13.3

|

tommilyjones/swin-tiny-patch4-window7-224-cats_dogs

|

tommilyjones

| 2023-07-27T19:38:02Z | 204 | 0 |

transformers

|

[

"transformers",

"pytorch",

"swin",

"image-classification",

"generated_from_trainer",

"dataset:imagefolder",

"base_model:microsoft/swin-tiny-patch4-window7-224",

"base_model:finetune:microsoft/swin-tiny-patch4-window7-224",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

image-classification

| 2023-07-27T19:31:44Z |

---

license: apache-2.0

base_model: microsoft/swin-tiny-patch4-window7-224

tags:

- generated_from_trainer

datasets:

- imagefolder

metrics:

- accuracy

model-index:
- name: swin-tiny-patch4-window7-224-cats_dogs
  results:
  - task:
      name: Image Classification
      type: image-classification
    dataset:
      name: imagefolder
      type: imagefolder
      config: default
      split: validation
      args: default
    metrics:
    - name: Accuracy
      type: accuracy
      value: 0.9973147153598282

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# swin-tiny-patch4-window7-224-cats_dogs

This model is a fine-tuned version of [microsoft/swin-tiny-patch4-window7-224](https://huggingface.co/microsoft/swin-tiny-patch4-window7-224) on the imagefolder dataset.

It achieves the following results on the evaluation set:

- Loss: 0.0126

- Accuracy: 0.9973

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 32

- eval_batch_size: 32

- seed: 42

- gradient_accumulation_steps: 4

- total_train_batch_size: 128

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_ratio: 0.1

- num_epochs: 3
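Two of the hyperparameters above are derived quantities. `total_train_batch_size` is the per-device batch size multiplied by the gradient accumulation steps (and the number of devices), and a warmup ratio translates into a number of warmup steps once the total step count is known. The sketch below shows both calculations. A single device is assumed, the total of 141 optimizer steps is read from the last row of the training results table, and rounding the warmup up is an assumption about the scheduler's behavior.

```python
import math


def effective_batch_size(per_device_batch, grad_accum_steps, num_devices=1):
    """Number of examples contributing to each optimizer step."""
    return per_device_batch * grad_accum_steps * num_devices


def warmup_steps(total_optimizer_steps, warmup_ratio):
    """Warmup length in optimizer steps, rounded up (an assumption)."""
    return math.ceil(total_optimizer_steps * warmup_ratio)


batch = effective_batch_size(per_device_batch=32, grad_accum_steps=4)
warmup = warmup_steps(total_optimizer_steps=141, warmup_ratio=0.1)
print(batch, warmup)
```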

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:--------:|

| 0.0832 | 0.98 | 47 | 0.0235 | 0.9909 |

| 0.0788 | 1.99 | 95 | 0.0126 | 0.9973 |

| 0.0534 | 2.95 | 141 | 0.0127 | 0.9957 |

### Framework versions

- Transformers 4.31.0

- Pytorch 2.0.1+cu117

- Datasets 2.13.1

- Tokenizers 0.13.3

|

FlexedRope14028/Progetto-13-b-chat

|

FlexedRope14028

| 2023-07-27T19:31:29Z | 0 | 0 | null |

[

"it",

"en",

"license:llama2",

"region:us"

] | null | 2023-07-27T19:11:08Z |

---

license: llama2

language:

- it

- en

---

|

DunnBC22/wav2vec2-base-Speech_Emotion_Recognition

|

DunnBC22

| 2023-07-27T19:27:56Z | 209 | 13 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"wav2vec2",

"audio-classification",

"generated_from_trainer",

"en",

"dataset:audiofolder",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] |

audio-classification

| 2023-04-17T21:00:15Z |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- audiofolder

metrics:

- accuracy

- f1

- recall

- precision

model-index:
- name: wav2vec2-base-Speech_Emotion_Recognition
  results: []

language:

- en

pipeline_tag: audio-classification

---

# wav2vec2-base-Speech_Emotion_Recognition

This model is a fine-tuned version of [facebook/wav2vec2-base](https://huggingface.co/facebook/wav2vec2-base).

It achieves the following results on the evaluation set:

- Loss: 0.7264

- Accuracy: 0.7539

- F1
  - Weighted: 0.7514
  - Micro: 0.7539
  - Macro: 0.7529
- Recall
  - Weighted: 0.7539
  - Micro: 0.7539
  - Macro: 0.7577
- Precision
  - Weighted: 0.7565
  - Micro: 0.7539
  - Macro: 0.7558

## Model description

This model predicts the emotion of the person speaking in the audio sample.

For more information on how it was created, check out the following link: https://github.com/DunnBC22/Vision_Audio_and_Multimodal_Projects/tree/main/Audio-Projects/Emotion%20Detection/Speech%20Emotion%20Detection

## Intended uses & limitations

This model is intended to demonstrate my ability to solve a complex problem using technology.

## Training and evaluation data

Dataset Source: https://www.kaggle.com/datasets/dmitrybabko/speech-emotion-recognition-en

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 3e-05

- train_batch_size: 32

- eval_batch_size: 32

- seed: 42

- gradient_accumulation_steps: 4

- total_train_batch_size: 128

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_ratio: 0.1

- num_epochs: 10

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy | Weighted F1 | Micro F1 | Macro F1 | Weighted Recall | Micro Recall | Macro Recall | Weighted Precision | Micro Precision | Macro Precision |

|:-------------:|:-----:|:----:|:---------------:|:--------:|:-----------:|:--------:|:--------:|:---------------:|:------------:|:------------:|:------------------:|:---------------:|:---------------:|

| 1.5581 | 0.98 | 43 | 1.4046 | 0.4653 | 0.4080 | 0.4653 | 0.4174 | 0.4653 | 0.4653 | 0.4793 | 0.5008 | 0.4653 | 0.4974 |

| 1.5581 | 1.98 | 86 | 1.1566 | 0.5997 | 0.5836 | 0.5997 | 0.5871 | 0.5997 | 0.5997 | 0.6093 | 0.6248 | 0.5997 | 0.6209 |

| 1.5581 | 2.98 | 129 | 0.9733 | 0.6883 | 0.6845 | 0.6883 | 0.6860 | 0.6883 | 0.6883 | 0.6923 | 0.7012 | 0.6883 | 0.7009 |

| 1.5581 | 3.98 | 172 | 0.8313 | 0.7399 | 0.7392 | 0.7399 | 0.7409 | 0.7399 | 0.7399 | 0.7417 | 0.7415 | 0.7399 | 0.7432 |

| 1.5581 | 4.98 | 215 | 0.8708 | 0.7028 | 0.6963 | 0.7028 | 0.6970 | 0.7028 | 0.7028 | 0.7081 | 0.7148 | 0.7028 | 0.7114 |

| 1.5581 | 5.98 | 258 | 0.7969 | 0.7297 | 0.7267 | 0.7297 | 0.7277 | 0.7297 | 0.7297 | 0.7333 | 0.7393 | 0.7297 | 0.7382 |

| 1.5581 | 6.98 | 301 | 0.7349 | 0.7603 | 0.7613 | 0.7603 | 0.7631 | 0.7603 | 0.7603 | 0.7635 | 0.7699 | 0.7603 | 0.7702 |

| 1.5581 | 7.98 | 344 | 0.7714 | 0.7469 | 0.7444 | 0.7469 | 0.7456 | 0.7469 | 0.7469 | 0.7485 | 0.7554 | 0.7469 | 0.7563 |

| 1.5581 | 8.98 | 387 | 0.7183 | 0.7630 | 0.7615 | 0.7630 | 0.7631 | 0.7630 | 0.7630 | 0.7652 | 0.7626 | 0.7630 | 0.7637 |

| 1.5581 | 9.98 | 430 | 0.7264 | 0.7539 | 0.7514 | 0.7539 | 0.7529 | 0.7539 | 0.7539 | 0.7577 | 0.7565 | 0.7539 | 0.7558 |

### Framework versions

- Transformers 4.26.1

- Pytorch 2.0.0+cu118

- Datasets 2.11.0

- Tokenizers 0.13.3

|

DunnBC22/mit-b0-Image_segmentation_Dominoes_v2

|

DunnBC22

| 2023-07-27T19:26:06Z | 0 | 1 | null |

[

"pytorch",

"tensorboard",

"generated_from_trainer",

"image-segmentation",

"en",

"dataset:adelavega/dominoes_raw",

"license:other",

"region:us"

] |

image-segmentation

| 2023-07-26T21:13:35Z |

---

license: other

tags:

- generated_from_trainer

model-index:
- name: mit-b0-Image_segmentation_Dominoes_v2
  results: []

datasets:

- adelavega/dominoes_raw

language:

- en

metrics:

- mean_iou

pipeline_tag: image-segmentation

---

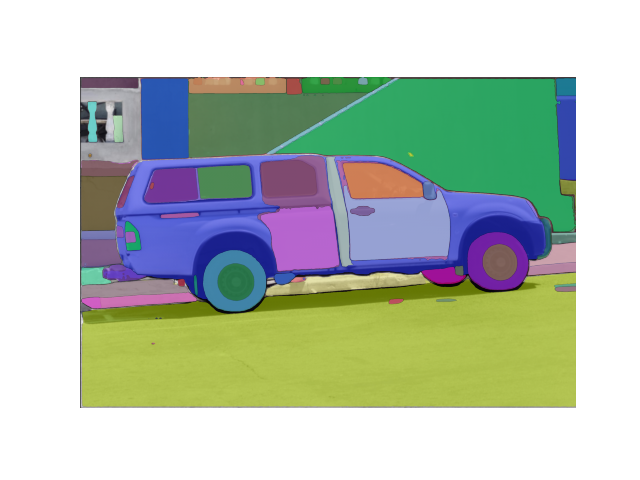

# mit-b0-Image_segmentation_Dominoes_v2

This model is a fine-tuned version of [nvidia/mit-b0](https://huggingface.co/nvidia/mit-b0).

It achieves the following results on the evaluation set:

- Loss: 0.1149

- Mean Iou: 0.9198

- Mean Accuracy: 0.9515

- Overall Accuracy: 0.9778

- Per Category Iou:
  - Segment 0: 0.974110559111975
  - Segment 1: 0.8655745252092782
- Per Category Accuracy:
  - Segment 0: 0.9897833441005461
  - Segment 1: 0.913253525550903
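The mean IoU and mean accuracy reported above are the unweighted averages of the per-category values, which can be verified from the numbers in this card. The variable names in this short check are illustrative.

```python
from statistics import mean

# Per-category values reported in this card (segment 0, segment 1).
per_category_iou = [0.974110559111975, 0.8655745252092782]
per_category_accuracy = [0.9897833441005461, 0.913253525550903]

mean_iou = mean(per_category_iou)
mean_acc = mean(per_category_accuracy)
print(f"Mean IoU: {mean_iou:.4f}")
print(f"Mean accuracy: {mean_acc:.4f}")
```

Because the average is unweighted, the smaller class (segment 1) pulls the mean IoU down more than it affects the overall pixel accuracy.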

## Model description

For more information on how it was created, check out the following link: https://github.com/DunnBC22/Vision_Audio_and_Multimodal_Projects/blob/main/Computer%20Vision/Image%20Segmentation/Dominoes/Fine-Tuning%20-%20Dominoes%20-%20Image%20Segmentation%20with%20LoRA.ipynb

## Intended uses & limitations

This model is intended to demonstrate my ability to solve a complex problem using technology.

## Training and evaluation data

Dataset Source: https://huggingface.co/datasets/adelavega/dominoes_raw

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0005

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 25

### Training results

| Training Loss | Epoch | Step | Validation Loss | Mean Iou | Mean Accuracy | Overall Accuracy | Per Category Iou Segment 0 | Per Category Iou Segment 1 | Per Category Accuracy Segment 0 | Per Category Accuracy Segment 1 |

|:-------------:|:-----:|:----:|:---------------:|:--------:|:-------------:|:----------------:|:------------------:|:-------------------:|:---------------------:|:-----------------:|

| 0.0461 | 1.0 | 86 | 0.1233 | 0.9150 | 0.9527 | 0.9762 | 0.9721967854031923 | 0.8578619172251059 | 0.9869082633464498 | 0.9184139264010376 |

| 0.0708 | 2.0 | 172 | 0.1366 | 0.9172 | 0.9490 | 0.9771 | 0.9732821853093164 | 0.8611008788165083 | 0.9898473600751747 | 0.9082362492748777 |

| 0.048 | 3.0 | 258 | 0.1260 | 0.9199 | 0.9534 | 0.9777 | 0.9740118174014271 | 0.8658241844233872 | 0.9888392553004053 | 0.9179240730467295 |

| 0.0535 | 4.0 | 344 | 0.1184 | 0.9200 | 0.9520 | 0.9778 | 0.974142444792198 | 0.8658711064023369 | 0.9896291184589182 | 0.9142864290038782 |

| 0.0185 | 5.0 | 430 | 0.1296 | 0.9182 | 0.9477 | 0.9775 | 0.9737715695013129 | 0.8627108292167807 | 0.9910418746696423 | 0.904378218719681 |

| 0.036 | 6.0 | 516 | 0.1410 | 0.9213 | 0.9538 | 0.9782 | 0.9745002408443008 | 0.8680673581922554 | 0.9892677512186527 | 0.9182967669045321 |

| 0.0376 | 7.0 | 602 | 0.1451 | 0.9206 | 0.9550 | 0.9779 | 0.9741455743906073 | 0.8669703237367214 | 0.9883004639689904 | 0.9216576612178001 |

| 0.0186 | 8.0 | 688 | 0.1380 | 0.9175 | 0.9496 | 0.9772 | 0.9733616852468584 | 0.8616466350192237 | 0.9897043519116697 | 0.9094762400541087 |

| 0.0162 | 9.0 | 774 | 0.1459 | 0.9218 | 0.9539 | 0.9783 | 0.9746840649852051 | 0.8688930149000804 | 0.989455276913138 | 0.9182917005479264 |

| 0.0169 | 10.0 | 860 | 0.1467 | 0.9191 | 0.9502 | 0.9776 | 0.9739086600912814 | 0.8642187978193332 | 0.9901195747929759 | 0.9102564589713776 |

| 0.0102 | 11.0 | 946 | 0.1549 | 0.9191 | 0.9524 | 0.9775 | 0.9737696499931041 | 0.8644247331609153 | 0.9889789745698009 | 0.915789237032027 |

| 0.0204 | 12.0 | 1032 | 0.1502 | 0.9215 | 0.9527 | 0.9783 | 0.974639596078376 | 0.8682964916021273 | 0.989902977623774 | 0.9155653673995151 |

| 0.0268 | 13.0 | 1118 | 0.1413 | 0.9194 | 0.9505 | 0.9777 | 0.9740020531855834 | 0.8647199376136 | 0.99011699066189 | 0.9107963425971664 |

| 0.0166 | 14.0 | 1204 | 0.1584 | 0.9173 | 0.9518 | 0.9770 | 0.9731154475737929 | 0.8614276032542578 | 0.9884142831972749 | 0.9152366875147241 |

| 0.0159 | 15.0 | 1290 | 0.1563 | 0.9170 | 0.9492 | 0.9770 | 0.9731832402253996 | 0.8607442858381036 | 0.9896456803899689 | 0.9087960816798012 |

| 0.0211 | 16.0 | 1376 | 0.1435 | 0.9150 | 0.9481 | 0.9764 | 0.9725201360275898 | 0.8574847000491036 | 0.989323310037 | 0.9068449010920532 |

| 0.0128 | 17.0 | 1462 | 0.1421 | 0.9212 | 0.9519 | 0.9782 | 0.9745789801464504 | 0.8677394402794754 | 0.9901920479238856 | 0.9136255861141298 |

| 0.0167 | 18.0 | 1548 | 0.1558 | 0.9217 | 0.9532 | 0.9783 | 0.9746811993626879 | 0.8686470009484697 | 0.9897428202266988 | 0.9166850322093621 |

| 0.0201 | 19.0 | 1634 | 0.1623 | 0.9156 | 0.9484 | 0.9766 | 0.9727184720007118 | 0.8584339325695252 | 0.9894484642039114 | 0.9072695251050635 |

| 0.0133 | 20.0 | 1720 | 0.1573 | 0.9189 | 0.9505 | 0.9776 | 0.9738320500157303 | 0.8640203613069115 | 0.9898665061373113 | 0.9112263496140702 |

| 0.012 | 21.0 | 1806 | 0.1631 | 0.9165 | 0.9472 | 0.9769 | 0.9731344243001482 | 0.8597866189796295 | 0.9904592118400188 | 0.9040137576913626 |

| 0.0148 | 22.0 | 1892 | 0.1629 | 0.9181 | 0.9507 | 0.9773 | 0.9735162429121835 | 0.8627239955489192 | 0.9894034768309156 | 0.9120129014770962 |

| 0.0137 | 23.0 | 1978 | 0.1701 | 0.9136 | 0.9484 | 0.9760 | 0.9719681843338751 | 0.8552607882028388 | 0.9885083690609032 | 0.908250815050119 |

| 0.0142 | 24.0 | 2064 | 0.1646 | 0.9146 | 0.9488 | 0.9763 | 0.9723134197764093 | 0.8568918401744342 | 0.9887405884771245 | 0.9089100747034281 |

| 0.0156 | 25.0 | 2150 | 0.1615 | 0.9144 | 0.9465 | 0.9763 | 0.9723929259786395 | 0.856345354289624 | 0.9898487696012216 | 0.9032139066422469 |
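The Mean Iou column appears to be the unweighted average of the two per-category IoU columns. A minimal sketch, assuming that definition (the helper name `mean_iou` is illustrative and not part of the training notebook):

```python
def mean_iou(per_category_iou):
    """Unweighted mean of per-category IoU values."""
    return sum(per_category_iou) / len(per_category_iou)


# Per-category IoU for Segment 0 and Segment 1 at epoch 25, copied from the table.
final_epoch = [0.9723929259786395, 0.856345354289624]
print(round(mean_iou(final_epoch), 4))  # 0.9144, matching the Mean Iou column
```

Because the average is unweighted, the smaller Segment 1 class pulls the mean IoU well below the overall pixel accuracy.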

### Framework versions

- Transformers 4.26.1

- Pytorch 2.0.1

- Datasets 2.13.1

- Tokenizers 0.13.3

|

vlabs/falcon-7b-sentiment_V3

|

vlabs

| 2023-07-27T19:21:16Z | 0 | 0 |

peft

|

[

"peft",

"region:us"

] | null | 2023-07-27T19:21:14Z |

---

library_name: peft

---

## Training procedure

### Framework versions

- PEFT 0.5.0.dev0

|

Isaacgv/distilhubert-finetuned-gtzan

|

Isaacgv

| 2023-07-27T19:20:19Z | 19 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"hubert",

"audio-classification",

"generated_from_trainer",

"dataset:marsyas/gtzan",

"base_model:ntu-spml/distilhubert",

"base_model:finetune:ntu-spml/distilhubert",

"license:apache-2.0",

"model-index",

"endpoints_compatible",

"region:us"

] |

audio-classification

| 2023-07-26T12:47:08Z |

---

license: apache-2.0

base_model: ntu-spml/distilhubert

tags:

- generated_from_trainer

datasets:

- marsyas/gtzan

metrics:

- accuracy

model-index:
- name: distilhubert-finetuned-gtzan
  results:
  - task:
      name: Audio Classification
      type: audio-classification
    dataset:
      name: GTZAN
      type: marsyas/gtzan
      config: all
      split: train
      args: all
    metrics:
    - name: Accuracy
      type: accuracy
      value: 0.88

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# distilhubert-finetuned-gtzan

This model is a fine-tuned version of [ntu-spml/distilhubert](https://huggingface.co/ntu-spml/distilhubert) on the GTZAN dataset.

It achieves the following results on the evaluation set:

- Loss: 0.5655

- Accuracy: 0.88

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- gradient_accumulation_steps: 4

- total_train_batch_size: 64

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_ratio: 0.1

- num_epochs: 2
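The hyperparameters above relate to each other arithmetically. The sketch below shows how the total batch size follows from gradient accumulation, and how a linear schedule with a warmup ratio might evolve. It assumes a single device and 28 optimizer steps in total (the last step in the results table). The function names are illustrative, and the schedule is a plain re-implementation rather than the Trainer's own code.

```python
import math


def effective_batch_size(per_device, accumulation_steps, num_devices=1):
    """Examples consumed per optimizer step."""
    return per_device * accumulation_steps * num_devices


def linear_warmup_lr(step, total_steps, base_lr, warmup_ratio):
    """Linear warmup to base_lr, then linear decay to zero."""
    warmup_steps = math.ceil(total_steps * warmup_ratio)
    if step < warmup_steps:
        return base_lr * step / max(1, warmup_steps)
    remaining = total_steps - step
    return base_lr * max(0.0, remaining / max(1, total_steps - warmup_steps))


print(effective_batch_size(16, 4))  # 64
for step in (0, 3, 14, 28):
    print(step, linear_warmup_lr(step, total_steps=28, base_lr=5e-5, warmup_ratio=0.1))
```

With these assumptions the warmup lasts 3 steps, after which the learning rate decays linearly to zero at step 28.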

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:--------:|

| 0.3836 | 0.98 | 14 | 0.5798 | 0.82 |

| 0.3357 | 1.96 | 28 | 0.5655 | 0.88 |

### Framework versions

- Transformers 4.31.0

- Pytorch 2.0.1+cu118

- Datasets 2.14.0

- Tokenizers 0.13.3

|

Varshitha/flan-t5-small-finetune-medicine-v3

|

Varshitha

| 2023-07-27T19:17:58Z | 102 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"t5",

"text2text-generation",

"text2textgeneration",

"generated_from_trainer",

"base_model:google/flan-t5-small",

"base_model:finetune:google/flan-t5-small",

"license:apache-2.0",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text2text-generation

| 2023-07-27T19:16:23Z |

---

license: apache-2.0

base_model: google/flan-t5-small

tags:

- text2textgeneration

- generated_from_trainer

metrics:

- rouge

model-index:

- name: flan-t5-small-finetune-medicine-v3

  results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# flan-t5-small-finetune-medicine-v3

This model is a fine-tuned version of [google/flan-t5-small](https://huggingface.co/google/flan-t5-small) on an unspecified dataset.

It achieves the following results on the evaluation set:

- Loss: 2.8757

- Rouge1: 15.991

- Rouge2: 5.2469

- Rougel: 14.6278

- Rougelsum: 14.7076
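For readers unfamiliar with the metric, ROUGE-1 measures unigram overlap between a generated text and a reference. The sketch below is a simplified illustration using whitespace tokenization. The scorer used to produce the numbers above likely applies its own tokenization and reports scores scaled by 100, and the example strings are made up.

```python
from collections import Counter


def rouge1_f1(reference, candidate):
    """F1 over overlapping unigrams (simplified: lowercase, whitespace tokens)."""
    ref_counts = Counter(reference.lower().split())
    cand_counts = Counter(candidate.lower().split())
    # Counter intersection keeps the minimum count of each shared token.
    overlap = sum((ref_counts & cand_counts).values())
    if overlap == 0:
        return 0.0
    precision = overlap / sum(cand_counts.values())
    recall = overlap / sum(ref_counts.values())
    return 2 * precision * recall / (precision + recall)


# Hypothetical strings, not taken from the training data.
print(rouge1_f1("take one tablet daily", "take one capsule weekly"))  # 0.5
```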

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5.6e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 8

### Training results

| Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum |

|:-------------:|:-----:|:----:|:---------------:|:-------:|:------:|:-------:|:---------:|

| No log | 1.0 | 5 | 2.9996 | 12.4808 | 4.9536 | 12.3712 | 12.2123 |

| No log | 2.0 | 10 | 2.9550 | 13.6471 | 4.9536 | 13.5051 | 13.5488 |

| No log | 3.0 | 15 | 2.9224 | 13.8077 | 5.117 | 13.7274 | 13.753 |

| No log | 4.0 | 20 | 2.9050 | 13.7861 | 5.117 | 13.6982 | 13.7001 |

| No log | 5.0 | 25 | 2.8920 | 14.668 | 5.117 | 14.4497 | 14.4115 |

| No log | 6.0 | 30 | 2.8820 | 14.9451 | 5.2469 | 14.5797 | 14.6308 |

| No log | 7.0 | 35 | 2.8770 | 15.991 | 5.2469 | 14.6278 | 14.7076 |

| No log | 8.0 | 40 | 2.8757 | 15.991 | 5.2469 | 14.6278 | 14.7076 |

### Framework versions

- Transformers 4.31.0

- Pytorch 2.0.1+cu118

- Datasets 2.14.1

- Tokenizers 0.13.3

|

NasimB/bnc-cbt-rarity

|

NasimB

| 2023-07-27T19:05:16Z | 5 | 0 |

transformers

|

[

"transformers",

"pytorch",

"gpt2",

"text-generation",

"generated_from_trainer",

"dataset:generator",

"license:mit",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2023-07-27T16:42:47Z |

---

license: mit

tags:

- generated_from_trainer

datasets:

- generator

model-index:

- name: bnc-cbt-rarity

  results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# bnc-cbt-rarity

This model is a fine-tuned version of [gpt2](https://huggingface.co/gpt2) on the generator dataset.

It achieves the following results on the evaluation set:

- Loss: 4.1216
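A language-modeling loss is often easier to interpret as perplexity. The sketch below assumes the reported loss is the mean per-token cross-entropy in nats, which the card does not state explicitly.

```python
import math


def perplexity(mean_cross_entropy):
    """Perplexity is the exponential of the mean per-token cross-entropy (nats)."""
    return math.exp(mean_cross_entropy)


print(perplexity(4.1216))
```

Under that assumption, the evaluation loss corresponds to a perplexity of a little under 62.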

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0005

- train_batch_size: 64

- eval_batch_size: 64

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: cosine

- lr_scheduler_warmup_steps: 1000

- num_epochs: 6

- mixed_precision_training: Native AMP
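The cosine schedule with 1000 warmup steps listed above can be sketched as follows. The total number of training steps is not given in the card, so `total_steps=10000` is only a placeholder. The function is a plain re-implementation for illustration, not the Trainer's own code.

```python
import math


def cosine_warmup_lr(step, total_steps, base_lr=5e-4, warmup_steps=1000):
    """Linear warmup to base_lr, then half-cosine decay to zero."""
    if step < warmup_steps:
        return base_lr * step / max(1, warmup_steps)
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    return base_lr * max(0.0, 0.5 * (1.0 + math.cos(math.pi * progress)))


# total_steps is a hypothetical value chosen for the illustration.
for step in (0, 500, 1000, 5500, 10000):
    print(step, cosine_warmup_lr(step, total_steps=10000))
```

The learning rate peaks at 0.0005 when warmup ends and reaches half that value midway through the decay phase.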

### Training results

| Training Loss | Epoch | Step | Validation Loss |

|:-------------:|:-----:|:-----:|:---------------:|

| 6.3708 | 0.29 | 500 | 5.3361 |

| 5.0503 | 0.59 | 1000 | 4.9318 |

| 4.713 | 0.88 | 1500 | 4.6937 |

| 4.4634 | 1.17 | 2000 | 4.5611 |

| 4.3089 | 1.46 | 2500 | 4.4409 |

| 4.2145 | 1.76 | 3000 | 4.3397 |

| 4.0857 | 2.05 | 3500 | 4.2672 |

| 3.9095 | 2.34 | 4000 | 4.2143 |

| 3.8772 | 2.63 | 4500 | 4.1591 |

| 3.8444 | 2.93 | 5000 | 4.1098 |

| 3.6491 | 3.22 | 5500 | 4.1097 |

| 3.5993 | 3.51 | 6000 | 4.0797 |

| 3.5848 | 3.81 | 6500 | 4.0497 |

| 3.4861 | 4.1 | 7000 | 4.0479 |

| 3.3328 | 4.39 | 7500 | 4.0443 |

| 3.3282 | 4.68 | 8000 | 4.0292 |

| 3.3151 | 4.98 | 8500 | 4.0183 |

| 3.1607 | 5.27 | 9000 | 4.0323 |

| 3.151 | 5.56 | 9500 | 4.0309 |

| 3.1458 | 5.85 | 10000 | 4.0304 |

### Framework versions

- Transformers 4.26.1

- Pytorch 1.11.0+cu113

- Datasets 2.13.0

- Tokenizers 0.13.3

|

Vaibhav9401/llama2-qlora-finetunined-spam

|

Vaibhav9401

| 2023-07-27T18:54:34Z | 2 | 0 |

peft

|

[

"peft",

"region:us"

] | null | 2023-07-26T08:40:29Z |

---

library_name: peft

---

## Training procedure

The following `bitsandbytes` quantization config was used during training:

- load_in_8bit: False

- load_in_4bit: True

- llm_int8_threshold: 6.0

- llm_int8_skip_modules: None

- llm_int8_enable_fp32_cpu_offload: False

- llm_int8_has_fp16_weight: False

- bnb_4bit_quant_type: nf4

- bnb_4bit_use_double_quant: False

- bnb_4bit_compute_dtype: float16

### Framework versions

- PEFT 0.5.0.dev0

|

Aevermann/rwkv-world-latest

|

Aevermann

| 2023-07-27T18:36:32Z | 0 | 0 | null |

[

"license:apache-2.0",

"region:us"

] | null | 2023-07-27T18:16:58Z |

---

license: apache-2.0

---

This is a clone of the BlinkDL RWKV World Model

It's a test. Please load from the original repo.

|

dariowsz/ppo-Pyramids

|

dariowsz

| 2023-07-27T18:29:47Z | 5 | 0 |

ml-agents

|

[

"ml-agents",

"tensorboard",

"onnx",

"Pyramids",

"deep-reinforcement-learning",

"reinforcement-learning",

"ML-Agents-Pyramids",

"region:us"

] |

reinforcement-learning

| 2023-07-27T18:28:33Z |

---

library_name: ml-agents

tags:

- Pyramids

- deep-reinforcement-learning

- reinforcement-learning

- ML-Agents-Pyramids

---

# **ppo** Agent playing **Pyramids**

This is a trained model of a **ppo** agent playing **Pyramids**

using the [Unity ML-Agents Library](https://github.com/Unity-Technologies/ml-agents).

## Usage (with ML-Agents)

The Documentation: https://unity-technologies.github.io/ml-agents/ML-Agents-Toolkit-Documentation/

We wrote a complete tutorial to learn to train your first agent using ML-Agents and publish it to the Hub:

- A *short tutorial* where you teach Huggy the Dog 🐶 to fetch the stick and then play with him directly in your

browser: https://huggingface.co/learn/deep-rl-course/unitbonus1/introduction

- A *longer tutorial* to understand how ML-Agents works:

https://huggingface.co/learn/deep-rl-course/unit5/introduction

### Resume the training

```bash

mlagents-learn <your_configuration_file_path.yaml> --run-id=<run_id> --resume

```

### Watch your Agent play

You can watch your agent **playing directly in your browser**

1. If the environment is part of ML-Agents official environments, go to https://huggingface.co/unity
2. Find your model_id: dariowsz/ppo-Pyramids
3. Select your *.nn / *.onnx file
4. Click on Watch the agent play 👀

|

idealflaw/dqn-SpaceInvadersNoFrameskip-v4

|

idealflaw

| 2023-07-27T18:24:12Z | 2 | 0 |

stable-baselines3

|

[

"stable-baselines3",

"SpaceInvadersNoFrameskip-v4",

"deep-reinforcement-learning",

"reinforcement-learning",

"model-index",

"region:us"

] |

reinforcement-learning

| 2023-07-27T18:08:41Z |

---

library_name: stable-baselines3

tags:

- SpaceInvadersNoFrameskip-v4

- deep-reinforcement-learning

- reinforcement-learning

- stable-baselines3

model-index:
- name: DQN
  results:
  - task:
      type: reinforcement-learning
      name: reinforcement-learning
    dataset:
      name: SpaceInvadersNoFrameskip-v4
      type: SpaceInvadersNoFrameskip-v4
    metrics:
    - type: mean_reward
      value: 639.00 +/- 185.56
      name: mean_reward
      verified: false

---

# **DQN** Agent playing **SpaceInvadersNoFrameskip-v4**

This is a trained model of a **DQN** agent playing **SpaceInvadersNoFrameskip-v4**

using the [stable-baselines3 library](https://github.com/DLR-RM/stable-baselines3)

and the [RL Zoo](https://github.com/DLR-RM/rl-baselines3-zoo).

The RL Zoo is a training framework for Stable Baselines3

reinforcement learning agents,

with hyperparameter optimization and pre-trained agents included.

## Usage (with SB3 RL Zoo)

RL Zoo: https://github.com/DLR-RM/rl-baselines3-zoo<br/>

SB3: https://github.com/DLR-RM/stable-baselines3<br/>

SB3 Contrib: https://github.com/Stable-Baselines-Team/stable-baselines3-contrib

Install the RL Zoo (with SB3 and SB3-Contrib):

```bash

pip install rl_zoo3

```

```

# Download model and save it into the logs/ folder

python -m rl_zoo3.load_from_hub --algo dqn --env SpaceInvadersNoFrameskip-v4 -orga idealflaw -f logs/

python -m rl_zoo3.enjoy --algo dqn --env SpaceInvadersNoFrameskip-v4 -f logs/

```

If you installed the RL Zoo3 via pip (`pip install rl_zoo3`), from anywhere you can do:

```

python -m rl_zoo3.load_from_hub --algo dqn --env SpaceInvadersNoFrameskip-v4 -orga idealflaw -f logs/

python -m rl_zoo3.enjoy --algo dqn --env SpaceInvadersNoFrameskip-v4 -f logs/

```

## Training (with the RL Zoo)

```

python -m rl_zoo3.train --algo dqn --env SpaceInvadersNoFrameskip-v4 -f logs/

# Upload the model and generate video (when possible)

python -m rl_zoo3.push_to_hub --algo dqn --env SpaceInvadersNoFrameskip-v4 -f logs/ -orga idealflaw

```

## Hyperparameters

```python

OrderedDict([('batch_size', 32),

('buffer_size', 100000),

('env_wrapper',

['stable_baselines3.common.atari_wrappers.AtariWrapper']),

('exploration_final_eps', 0.01),

('exploration_fraction', 0.1),

('frame_stack', 4),

('gradient_steps', 1),

('learning_rate', 0.0001),

('learning_starts', 100000),

('n_timesteps', 10000000.0),

('optimize_memory_usage', False),

('policy', 'CnnPolicy'),

('target_update_interval', 1000),

('train_freq', 4),

('normalize', False)])

```
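The exploration settings above imply a linearly decaying epsilon. The sketch below assumes an initial epsilon of 1.0, which is not listed in the hyperparameters and is taken here as the library default. It is a stand-alone re-implementation for illustration, not Stable Baselines3 code.

```python
def epsilon_at(timestep, total_timesteps=10_000_000, initial_eps=1.0,
               final_eps=0.01, exploration_fraction=0.1):
    """Linear decay from initial_eps to final_eps over the first
    exploration_fraction of training, then constant at final_eps."""
    progress = timestep / total_timesteps
    if progress >= exploration_fraction:
        return final_eps
    return initial_eps + progress * (final_eps - initial_eps) / exploration_fraction


for t in (0, 500_000, 1_000_000, 5_000_000):
    print(t, epsilon_at(t))
```

With these values epsilon reaches 0.01 after the first 1,000,000 timesteps and stays there for the remaining 9,000,000.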

# Environment Arguments

```python

{'render_mode': 'rgb_array'}

```

|

Corbanp/PPO-LunarLander-v3

|

Corbanp

| 2023-07-27T18:17:35Z | 0 | 0 |

stable-baselines3

|

[

"stable-baselines3",

"LunarLander-v2",

"deep-reinforcement-learning",

"reinforcement-learning",

"model-index",

"region:us"

] |

reinforcement-learning

| 2023-07-27T18:17:15Z |

---

library_name: stable-baselines3

tags:

- LunarLander-v2

- deep-reinforcement-learning

- reinforcement-learning

- stable-baselines3

model-index:
- name: PPO
  results:
  - task:
      type: reinforcement-learning
      name: reinforcement-learning
    dataset:
      name: LunarLander-v2
      type: LunarLander-v2
    metrics:
    - type: mean_reward
      value: 230.12 +/- 101.08
      name: mean_reward
      verified: false

---

# **PPO** Agent playing **LunarLander-v2**

This is a trained model of a **PPO** agent playing **LunarLander-v2**

using the [stable-baselines3 library](https://github.com/DLR-RM/stable-baselines3).

## Usage (with Stable-baselines3)

TODO: Add your code

```python

from stable_baselines3 import ...

from huggingface_sb3 import load_from_hub

...

```

|

Leogrin/eleuther-pythia1.4b-hh-dpo

|

Leogrin

| 2023-07-27T18:16:00Z | 180 | 1 |

transformers

|

[

"transformers",

"pytorch",

"gpt_neox",

"text-generation",

"causal-lm",

"pythia",

"en",

"dataset:Anthropic/hh-rlhf",

"arxiv:2305.18290",

"arxiv:2101.00027",

"license:apache-2.0",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2023-07-27T15:07:41Z |

---

language:

- en

tags:

- pytorch

- causal-lm

- pythia

license: apache-2.0

datasets:

- Anthropic/hh-rlhf

---

# Infos

Pythia-1.4b, first fine-tuned with supervised fine-tuning (SFT) on the Anthropic hh-rlhf dataset for 1 epoch (the sft-model), then trained with DPO [(paper)](https://arxiv.org/abs/2305.18290) on the same dataset for 1 epoch.

[wandb log](https://wandb.ai/pythia_dpo/Pythia_DPO_new/runs/6yrtkj3s)

See [Pythia-1.4b](https://huggingface.co/EleutherAI/pythia-1.4b) for model details [(paper)](https://arxiv.org/abs/2101.00027).
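For context, the DPO objective for a single preference pair can be sketched as below. The inputs are summed log-probabilities of the chosen and rejected responses under the policy and under the frozen reference (here, the SFT model). The value `beta=0.1` is a placeholder, since the card does not state the value used, and the function is an illustration rather than the training code behind this model.

```python
import math


def dpo_loss(policy_chosen_logp, policy_rejected_logp,
             ref_chosen_logp, ref_rejected_logp, beta=0.1):
    """-log(sigmoid(beta * (chosen log-ratio - rejected log-ratio)))."""
    chosen_ratio = policy_chosen_logp - ref_chosen_logp
    rejected_ratio = policy_rejected_logp - ref_rejected_logp
    x = beta * (chosen_ratio - rejected_ratio)
    # Numerically stable softplus(-x) == -log(sigmoid(x)).
    if x >= 0:
        return math.log1p(math.exp(-x))
    return -x + math.log1p(math.exp(x))


# Hypothetical log-probabilities for one preference pair.
print(dpo_loss(-40.0, -55.0, -42.0, -50.0))
```

The loss equals log 2 when the policy matches the reference and falls as the policy raises the chosen response's likelihood relative to the rejected one.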

# Benchmark raw results:

Results for the base model are taken from the [Pythia paper](https://arxiv.org/abs/2101.00027).

## Zero shot

| Task | 1.4B_base | 1.4B_sft | 1.4B_dpo |

|------------------|--------------:|--------------:|---------------:|

| Lambada (OpenAI) | 0.616 ± 0.007 | 0.5977 ± 0.0068 | 0.5948 ± 0.0068 |

| PIQA | 0.711 ± 0.011 | 0.7133 ± 0.0106 | 0.7165 ± 0.0105 |

| WinoGrande | 0.573 ± 0.014 | 0.5793 ± 0.0139 | 0.5746 ± 0.0139 |

| WSC | 0.365 ± 0.047 | 0.3654 ± 0.0474 | 0.3654 ± 0.0474 |

| ARC - Easy | 0.606 ± 0.010 | 0.6098 ± 0.0100 | 0.6199 ± 0.0100 |

| ARC - Challenge | 0.260 ± 0.013 | 0.2696 ± 0.0130 | 0.2884 ± 0.0132 |

| SciQ | 0.865 ± 0.011 | 0.8540 ± 0.0112 | 0.8550 ± 0.0111 |

| LogiQA | 0.210 ± 0.016 | N/A | N/A |

## Five shot

| Task | 1.4B_base | 1.4B_sft | 1.4B_dpo |

|------------------|----------------:|----------------:|----------------:|

| Lambada (OpenAI) | 0.578 ± 0.007 | 0.5201 ± 0.007 | 0.5247 ± 0.007 |

| PIQA | 0.705 ± 0.011 | 0.7176 ± 0.0105| 0.7209 ± 0.0105|

| WinoGrande | 0.580 ± 0.014 | 0.5793 ± 0.0139| 0.5746 ± 0.0139|

| WSC | 0.365 ± 0.047 | 0.5288 ± 0.0492| 0.5769 ± 0.0487|

| ARC - Easy | 0.643 ± 0.010 | 0.6376 ± 0.0099| 0.6561 ± 0.0097|

| ARC - Challenge | 0.290 ± 0.013 | 0.2935 ± 0.0133| 0.3166 ± 0.0136|

| SciQ | 0.92 ± 0.009 | 0.9180 ± 0.0087| 0.9150 ± 0.0088|

| LogiQA | 0.240 ± 0.017 | N/A | N/A |

|

Khushnur/t5-base-end2end-questions-generation_squad_all_pcmq

|

Khushnur

| 2023-07-27T18:11:03Z | 159 | 0 |

transformers

|

[

"transformers",

"pytorch",

"t5",

"text2text-generation",

"generated_from_trainer",

"base_model:google-t5/t5-base",

"base_model:finetune:google-t5/t5-base",

"license:apache-2.0",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text2text-generation

| 2023-07-27T15:33:55Z |

---

license: apache-2.0

base_model: t5-base

tags:

- generated_from_trainer

model-index:

- name: t5-base-end2end-questions-generation_squad_all_pcmq

  results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# t5-base-end2end-questions-generation_squad_all_pcmq

This model is a fine-tuned version of [t5-base](https://huggingface.co/t5-base) on an unspecified dataset.

It achieves the following results on the evaluation set:

- Loss: 1.5861

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0001

- train_batch_size: 4

- eval_batch_size: 4

- seed: 42

- gradient_accumulation_steps: 32

- total_train_batch_size: 128

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3

### Training results

| Training Loss | Epoch | Step | Validation Loss |

|:-------------:|:-----:|:----:|:---------------:|

| 1.8599 | 0.67 | 100 | 1.6726 |

| 1.8315 | 1.35 | 200 | 1.6141 |

| 1.7564 | 2.02 | 300 | 1.5942 |

| 1.7153 | 2.69 | 400 | 1.5861 |

### Framework versions

- Transformers 4.31.0

- Pytorch 2.0.1+cu118

- Datasets 2.14.0

- Tokenizers 0.13.3

|

efainman/Reinforce-CartPole-v1

|

efainman

| 2023-07-27T18:10:32Z | 0 | 0 | null |

[

"CartPole-v1",

"reinforce",

"reinforcement-learning",

"custom-implementation",

"deep-rl-class",

"model-index",

"region:us"

] |

reinforcement-learning

| 2023-07-27T18:10:23Z |

---

tags:

- CartPole-v1

- reinforce

- reinforcement-learning

- custom-implementation

- deep-rl-class

model-index:
- name: Reinforce-CartPole-v1
  results:
  - task:
      type: reinforcement-learning
      name: reinforcement-learning
    dataset:
      name: CartPole-v1
      type: CartPole-v1
    metrics:
    - type: mean_reward
      value: 500.00 +/- 0.00
      name: mean_reward
      verified: false

---

# **Reinforce** Agent playing **CartPole-v1**

This is a trained model of a **Reinforce** agent playing **CartPole-v1**.

To learn how to use this model and train your own, check Unit 4 of the Deep Reinforcement Learning Course: https://huggingface.co/deep-rl-course/unit4/introduction

|

sukiee/qlora-koalpaca-polyglot-5.8b-callcenter_v3

|

sukiee

| 2023-07-27T18:08:11Z | 0 | 0 |

peft

|

[

"peft",

"region:us"

] | null | 2023-07-27T18:08:09Z |

---

library_name: peft

---

## Training procedure

The following `bitsandbytes` quantization config was used during training:

- load_in_8bit: False

- load_in_4bit: True

- llm_int8_threshold: 6.0

- llm_int8_skip_modules: None

- llm_int8_enable_fp32_cpu_offload: False

- llm_int8_has_fp16_weight: False

- bnb_4bit_quant_type: nf4

- bnb_4bit_use_double_quant: True

- bnb_4bit_compute_dtype: bfloat16
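As a rough guide to what this configuration buys, the sketch below estimates the memory taken by 4-bit quantized weights. It assumes about 5.8 billion parameters (inferred from the model name only) and the per-parameter overhead for quantization constants reported in the QLoRA paper: about 0.5 bits without double quantization and about 0.127 bits with it. It ignores adapters, activations, and any layers kept in higher precision.

```python
def quantized_weight_gib(num_params, bits_per_weight=4.0, double_quant=True):
    """Approximate size of quantized weights in GiB."""
    # Overhead of the quantization constants, in bits per parameter.
    overhead_bits = 0.127 if double_quant else 0.5
    total_bits = num_params * (bits_per_weight + overhead_bits)
    return total_bits / 8 / 2**30


# 5.8e9 is an assumption based on the "5.8b" in the repository name.
print(quantized_weight_gib(5.8e9, double_quant=True))
print(quantized_weight_gib(5.8e9, double_quant=False))
```

Under these assumptions, double quantization saves roughly a quarter of a GiB on a model of this size.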

### Framework versions

- PEFT 0.5.0.dev0

|

snob/TagMyBookmark-KoAlpaca-QLoRA-v1.0_ALLDATA-Finetune300

|

snob

| 2023-07-27T18:00:43Z | 1 | 0 |

peft

|

[

"peft",

"region:us"

] | null | 2023-07-27T18:00:38Z |

---

library_name: peft

---

## Training procedure

### Framework versions

- PEFT 0.4.0.dev0

|

kusknish/ppo-LunarLander-v2

|

kusknish

| 2023-07-27T17:59:22Z | 0 | 0 |

stable-baselines3

|

[

"stable-baselines3",

"LunarLander-v2",

"deep-reinforcement-learning",

"reinforcement-learning",

"model-index",

"region:us"

] |

reinforcement-learning

| 2023-07-27T17:59:05Z |

---

library_name: stable-baselines3

tags:

- LunarLander-v2

- deep-reinforcement-learning

- reinforcement-learning

- stable-baselines3

model-index:
- name: PPO
  results:
  - task:
      type: reinforcement-learning
      name: reinforcement-learning
    dataset:
      name: LunarLander-v2
      type: LunarLander-v2
    metrics:
    - type: mean_reward
      value: -202.29 +/- 149.85
      name: mean_reward
      verified: false

---

# **PPO** Agent playing **LunarLander-v2**

This is a trained model of a **PPO** agent playing **LunarLander-v2**

using the [stable-baselines3 library](https://github.com/DLR-RM/stable-baselines3).

## Usage (with Stable-baselines3)

TODO: Add your code

```python

from stable_baselines3 import ...

from huggingface_sb3 import load_from_hub

...

```

|

giladvdn/test-sam-handler

|

giladvdn

| 2023-07-27T17:54:32Z | 134 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tf",

"sam",

"mask-generation",

"arxiv:2304.02643",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] |

mask-generation

| 2023-07-27T14:10:04Z |

---

license: apache-2.0

---

# Model Card for Segment Anything Model (SAM) - ViT Huge (ViT-H) version

<p>

<img src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/transformers/model_doc/sam-architecture.png" alt="Model architecture">

<em> Detailed architecture of Segment Anything Model (SAM).</em>

</p>

# Table of Contents

0. [TL;DR](#TL;DR)

1. [Model Details](#model-details)

2. [Usage](#usage)

3. [Citation](#citation)

# TL;DR

[Link to original repository](https://github.com/facebookresearch/segment-anything)

| <img src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/transformers/model_doc/sam-beancans.png" alt="Snow" width="600" height="600"> | <img src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/transformers/model_doc/sam-dog-masks.png" alt="Forest" width="600" height="600"> | <img src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/transformers/model_doc/sam-car-seg.png" alt="Mountains" width="600" height="600"> |

|---------------------------------------------------------------------------------------------------------------------------------------|--------------------------------------------------------------------------------------------------------------------------------|--------------------------------------------------------------------------------------------------------------------------------------------------|

The **Segment Anything Model (SAM)** produces high quality object masks from input prompts such as points or boxes, and it can be used to generate masks for all objects in an image. It has been trained on a [dataset](https://segment-anything.com/dataset/index.html) of 11 million images and 1.1 billion masks, and has strong zero-shot performance on a variety of segmentation tasks.

The abstract of the paper states:

> We introduce the Segment Anything (SA) project: a new task, model, and dataset for image segmentation. Using our efficient model in a data collection loop, we built the largest segmentation dataset to date (by far), with over 1 billion masks on 11M licensed and privacy respecting images. The model is designed and trained to be promptable, so it can transfer zero-shot to new image distributions and tasks. We evaluate its capabilities on numerous tasks and find that its zero-shot performance is impressive -- often competitive with or even superior to prior fully supervised results. We are releasing the Segment Anything Model (SAM) and corresponding dataset (SA-1B) of 1B masks and 11M images at [https://segment-anything.com](https://segment-anything.com) to foster research into foundation models for computer vision.

**Disclaimer**: Content from **this** model card has been written by the Hugging Face team, and parts of it were copy pasted from the original [SAM model card](https://github.com/facebookresearch/segment-anything).

# Model Details

The SAM model is made up of the following modules:

- The `VisionEncoder`: a ViT-based image encoder. It computes the image embeddings using attention on patches of the image. Relative positional embeddings are used.
- The `PromptEncoder`: generates embeddings for points and bounding boxes.
- The `MaskDecoder`: a two-way transformer that performs cross-attention between the image embeddings and the point embeddings (->) and between the point embeddings and the image embeddings. The outputs are fed to the `Neck`.
- The `Neck`: predicts the output masks based on the contextualized masks produced by the `MaskDecoder`.

# Usage

## Prompted-Mask-Generation

```python

from PIL import Image

import requests

from transformers import SamModel, SamProcessor

model = SamModel.from_pretrained("facebook/sam-vit-huge")

processor = SamProcessor.from_pretrained("facebook/sam-vit-huge")

img_url = "https://huggingface.co/ybelkada/segment-anything/resolve/main/assets/car.png"

raw_image = Image.open(requests.get(img_url, stream=True).raw).convert("RGB")

input_points = [[[450, 600]]] # 2D localization of a window

```

```python

inputs = processor(raw_image, input_points=input_points, return_tensors="pt").to("cuda")

outputs = model(**inputs)

masks = processor.image_processor.post_process_masks(outputs.pred_masks.cpu(), inputs["original_sizes"].cpu(), inputs["reshaped_input_sizes"].cpu())

scores = outputs.iou_scores

```

To generate masks you can pass, among other arguments, 2D locations giving the approximate position of your object of interest, a bounding box wrapping the object of interest (given as the x, y coordinates of the top-left and bottom-right corners of the box), or a segmentation mask. At the time of writing, passing text as input is not supported by the official model according to [the official repository](https://github.com/facebookresearch/segment-anything/issues/4#issuecomment-1497626844).

For more details, refer to this notebook, which shows a walkthrough of how to use the model, with a visual example!

## Automatic-Mask-Generation

The model can be used for generating segmentation masks in a "zero-shot" fashion, given an input image. The model is automatically prompted with a grid of `1024` points, which are all fed to the model.

The pipeline is made for automatic mask generation. The following snippet demonstrates how easily you can run it (on any device! Simply feed the appropriate `points_per_batch` argument)

```python

from transformers import pipeline

generator = pipeline("mask-generation", device = 0, points_per_batch = 256)

image_url = "https://huggingface.co/ybelkada/segment-anything/resolve/main/assets/car.png"

outputs = generator(image_url, points_per_batch = 256)

```
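To make the grid prompting concrete, the sketch below builds a regular grid of normalized point prompts and splits it into batches. It assumes the 1024 points form a 32 x 32 grid of cell centers, which the card does not state. The helper names are illustrative and are not the pipeline's API.

```python
def build_point_grid(points_per_side=32):
    """Evenly spaced (x, y) points in [0, 1] x [0, 1], one per grid-cell center."""
    coords = [(i + 0.5) / points_per_side for i in range(points_per_side)]
    return [(x, y) for y in coords for x in coords]


def split_into_batches(points, points_per_batch=256):
    """Chunk the prompts so that each forward pass sees at most points_per_batch."""
    return [points[i:i + points_per_batch]
            for i in range(0, len(points), points_per_batch)]


grid = build_point_grid(32)
batches = split_into_batches(grid, 256)
print(len(grid), len(batches))  # 1024 4
```

A smaller `points_per_batch` trades speed for lower peak memory, which is why the value can be adapted to the device.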

Now to display the image:

```python

import matplotlib.pyplot as plt

from PIL import Image

import numpy as np

def show_mask(mask, ax, random_color=False):
    if random_color:
        color = np.concatenate([np.random.random(3), np.array([0.6])], axis=0)
    else:
        color = np.array([30 / 255, 144 / 255, 255 / 255, 0.6])
    h, w = mask.shape[-2:]
    mask_image = mask.reshape(h, w, 1) * color.reshape(1, 1, -1)
    ax.imshow(mask_image)


plt.imshow(np.array(raw_image))
ax = plt.gca()
for mask in outputs["masks"]:
    show_mask(mask, ax=ax, random_color=True)
plt.axis("off")
plt.show()

```

This should give you the following

# Citation

If you use this model, please use the following BibTeX entry.

```

@article{kirillov2023segany,

title={Segment Anything},

author={Kirillov, Alexander and Mintun, Eric and Ravi, Nikhila and Mao, Hanzi and Rolland, Chloe and Gustafson, Laura and Xiao, Tete and Whitehead, Spencer and Berg, Alexander C. and Lo, Wan-Yen and Doll{\'a}r, Piotr and Girshick, Ross},

journal={arXiv:2304.02643},

year={2023}

}

```

|

vxbrandon/my_awesome_qa_model

|

vxbrandon

| 2023-07-27T17:48:35Z | 117 | 1 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"distilbert",

"question-answering",

"generated_from_trainer",

"dataset:squad",

"base_model:distilbert/distilbert-base-uncased",

"base_model:finetune:distilbert/distilbert-base-uncased",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] |

question-answering

| 2023-07-27T16:13:28Z |

---

license: apache-2.0

base_model: distilbert-base-uncased

tags:

- generated_from_trainer

datasets:

- squad

model-index:

- name: my_awesome_qa_model

  results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# my_awesome_qa_model

This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the squad dataset.

It achieves the following results on the evaluation set:

- Loss: 1.1920

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 128

- eval_batch_size: 256

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3
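
To make the `lr_scheduler_type: linear` setting concrete, here is a minimal sketch of a linear decay schedule in plain Python. The function name `linear_lr` is hypothetical. The total of 2055 steps is taken from the training results table, and the absence of warmup is an assumption, since the card lists no warmup setting.

```python
def linear_lr(step: int,
              total_steps: int = 2055,   # final step reported in the training results
              base_lr: float = 2e-5,     # learning_rate from the hyperparameter list
              warmup_steps: int = 0) -> float:  # assumption: the card lists no warmup
    """Learning rate at `step`: linear warmup (if any), then linear decay to zero."""
    if step < warmup_steps:
        return base_lr * step / max(1, warmup_steps)
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


# Learning rate at the start and at the end of each of the three epochs.
for step in (0, 685, 1370, 2055):
    print(step, linear_lr(step))
```

Under these assumptions the rate falls by one third of its initial value per epoch and reaches zero at the last step.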

### Training results

| Training Loss | Epoch | Step | Validation Loss |
|:-------------:|:-----:|:----:|:---------------:|
| 2.3143        | 1.0   | 685  | 1.3187          |
| 1.3356        | 2.0   | 1370 | 1.2095          |
| 1.0967        | 3.0   | 2055 | 1.1920          |
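
The Step column can be sanity-checked against the batch size with a little arithmetic. The sketch below assumes training on the full SQuAD v1.1 training split (87,599 examples, one feature per example), on a single device with no gradient accumulation. None of this is stated in the card, and the helper name `steps_per_epoch` is hypothetical.

```python
import math

# Assumption: size of the SQuAD v1.1 training split; the card does not state it.
ASSUMED_TRAIN_EXAMPLES = 87_599
TRAIN_BATCH_SIZE = 128  # train_batch_size from the hyperparameter list


def steps_per_epoch(num_examples: int, batch_size: int) -> int:
    """Number of optimizer steps per epoch when the last partial batch is kept."""
    return math.ceil(num_examples / batch_size)


per_epoch = steps_per_epoch(ASSUMED_TRAIN_EXAMPLES, TRAIN_BATCH_SIZE)
cumulative_steps = [per_epoch * epoch for epoch in (1, 2, 3)]
print(per_epoch, cumulative_steps)
```

Under these assumptions the computed values agree with the 685, 1370, and 2055 steps reported in the table.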

### Framework versions

- Transformers 4.31.0

- Pytorch 2.0.1+cu118

- Datasets 2.14.0

- Tokenizers 0.13.3

|

badmatr11x/roberta-base-emotions-detection-from-text

|

badmatr11x

| 2023-07-27T17:15:42Z | 136 | 7 |

transformers

|

[

"transformers",

"pytorch",

"tf",

"roberta",

"text-classification",

"en",

"license:mit",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2023-07-15T16:56:14Z |

---

license: mit

widget:
- text: With tears of joy streaming down her cheeks, she embraced her long-lost brother after years of separation.
  example_title: Joy
- text: As the orchestra played the final note, the audience erupted into thunderous applause, filling the concert hall with joy.
  example_title: Joy
- text: The old man sat alone on the park bench, reminiscing about the love he had lost, his eyes filled with sadness.
  example_title: Sadness
- text: The news of her best friend moving to a distant country left her feeling a profound sadness and emptiness.
  example_title: Sadness
- text: The scientific research paper discussed complex concepts that were beyond the scope of a layman's understanding.
  example_title: Neutral
- text: The documentary provided an objective view of the historical events, presenting facts without any bias.
  example_title: Neutral
- text: He clenched his fists tightly, trying to control the surge of anger when he heard the offensive remarks.
  example_title: Anger
- text: The unfair treatment at work ignited a simmering anger within him, leading him to consider confronting the management.
  example_title: Anger
- text: As the magician pulled a rabbit out of an empty hat, the children gasped in amazement and surprise.
  example_title: Surprise
- text: He opened the box to find a rare and valuable antique inside, leaving him speechless with surprise.
  example_title: Surprise
- text: The moldy and rotting food in the refrigerator evoked a sense of disgust, leading her to clean it immediately.
  example_title: Disgust
- text: The movie's graphic scenes of violence and gore left many viewers feeling a sense of disgust and unease.
  example_title: Disgust
- text: As the storm raged outside, the little child clung to their parents, seeking comfort from the fear of thunder.
  example_title: Fear
- text: The horror movie was so terrifying that some viewers had to cover their eyes in fear, unable to bear the suspense.
  example_title: Fear

language:

- en

metrics:

- accuracy

pipeline_tag: text-classification

---

|

asenella/MMVAEPlus_beta_25_scale_False_seed_1

|

asenella

| 2023-07-27T17:11:03Z | 0 | 0 | null |

[

"multivae",

"en",

"license:apache-2.0",

"region:us"

] | null | 2023-07-27T17:10:50Z |

---

language: en

tags:

- multivae

license: apache-2.0

---

### Downloading this model from the Hub

This model was trained with multivae. It can be downloaded or reloaded with the `load_from_hf_hub` method:

```python

>>> from multivae.models import AutoModel

>>> model = AutoModel.load_from_hf_hub(hf_hub_path="your_hf_username/repo_name")

```

|

bochen0909/PyramidsRND

|

bochen0909

| 2023-07-27T17:06:23Z | 1 | 0 |

ml-agents

|

[

"ml-agents",

"tensorboard",

"onnx",

"Pyramids",

"deep-reinforcement-learning",

"reinforcement-learning",

"ML-Agents-Pyramids",

"region:us"

] |

reinforcement-learning

| 2023-07-27T17:06:20Z |

---

library_name: ml-agents

tags:

- Pyramids