modelId

stringlengths 5

139

| author

stringlengths 2

42

| last_modified

timestamp[us, tz=UTC]date 2020-02-15 11:33:14

2025-09-01 00:47:04

| downloads

int64 0

223M

| likes

int64 0

11.7k

| library_name

stringclasses 530

values | tags

listlengths 1

4.05k

| pipeline_tag

stringclasses 55

values | createdAt

timestamp[us, tz=UTC]date 2022-03-02 23:29:04

2025-09-01 00:46:57

| card

stringlengths 11

1.01M

|

|---|---|---|---|---|---|---|---|---|---|

huggingtweets/_elli420_

|

huggingtweets

| 2021-05-21T17:03:54Z | 5 | 0 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"gpt2",

"text-generation",

"huggingtweets",

"en",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

---

language: en

thumbnail: https://www.huggingtweets.com/_elli420_/1618735789420/predictions.png

tags:

- huggingtweets

widget:

- text: "My dream is"

---

<div>

<div style="width: 132px; height:132px; border-radius: 50%; background-size: cover; background-image: url('https://pbs.twimg.com/profile_images/1334040646047424512/ygdDFqUB_400x400.jpg')">

</div>

<div style="margin-top: 8px; font-size: 19px; font-weight: 800">Elizabeth 🤖 AI Bot </div>

<div style="font-size: 15px">@_elli420_ bot</div>

</div>

I was made with [huggingtweets](https://github.com/borisdayma/huggingtweets).

Create your own bot based on your favorite user with [the demo](https://colab.research.google.com/github/borisdayma/huggingtweets/blob/master/huggingtweets-demo.ipynb)!

## How does it work?

The model uses the following pipeline.

To understand how the model was developed, check the [W&B report](https://wandb.ai/wandb/huggingtweets/reports/HuggingTweets-Train-a-Model-to-Generate-Tweets--VmlldzoxMTY5MjI).

## Training data

The model was trained on [@_elli420_'s tweets](https://twitter.com/_elli420_).

| Data | Quantity |

| --- | --- |

| Tweets downloaded | 1415 |

| Retweets | 1242 |

| Short tweets | 9 |

| Tweets kept | 164 |

[Explore the data](https://wandb.ai/wandb/huggingtweets/runs/11z3u9cs/artifacts), which is tracked with [W&B artifacts](https://docs.wandb.com/artifacts) at every step of the pipeline.

## Training procedure

The model is based on a pre-trained [GPT-2](https://huggingface.co/gpt2) which is fine-tuned on @_elli420_'s tweets.

Hyperparameters and metrics are recorded in the [W&B training run](https://wandb.ai/wandb/huggingtweets/runs/1jccfg71) for full transparency and reproducibility.

At the end of training, [the final model](https://wandb.ai/wandb/huggingtweets/runs/1jccfg71/artifacts) is logged and versioned.

## How to use

You can use this model directly with a pipeline for text generation:

```python

from transformers import pipeline

generator = pipeline('text-generation',

model='huggingtweets/_elli420_')

generator("My dream is", num_return_sequences=5)

```

## Limitations and bias

The model suffers from [the same limitations and bias as GPT-2](https://huggingface.co/gpt2#limitations-and-bias).

In addition, the data present in the user's tweets further affects the text generated by the model.

## About

*Built by Boris Dayma*

[](https://twitter.com/intent/follow?screen_name=borisdayma)

For more details, visit the project repository.

[](https://github.com/borisdayma/huggingtweets)

|

huggingtweets/_buddha_quotes

|

huggingtweets

| 2021-05-21T16:55:55Z | 5 | 2 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"gpt2",

"text-generation",

"huggingtweets",

"en",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

---

language: en

thumbnail: https://www.huggingtweets.com/_buddha_quotes/1609541828144/predictions.png

tags:

- huggingtweets

widget:

- text: "My dream is"

---

<link rel="stylesheet" href="https://unpkg.com/@tailwindcss/typography@0.2.x/dist/typography.min.css">

<style>

@media (prefers-color-scheme: dark) {

.prose { color: #E2E8F0 !important; }

.prose h2, .prose h3, .prose a, .prose thead { color: #F7FAFC !important; }

}

</style>

<section class='prose'>

<div>

<div style="width: 132px; height:132px; border-radius: 50%; background-size: cover; background-image: url('https://pbs.twimg.com/profile_images/2409590248/73g1ywcwdlyd8ls4wa4g_400x400.jpeg')">

</div>

<div style="margin-top: 8px; font-size: 19px; font-weight: 800">The Buddha 🤖 AI Bot </div>

<div style="font-size: 15px; color: #657786">@_buddha_quotes bot</div>

</div>

I was made with [huggingtweets](https://github.com/borisdayma/huggingtweets).

Create your own bot based on your favorite user with [the demo](https://colab.research.google.com/github/borisdayma/huggingtweets/blob/master/huggingtweets-demo.ipynb)!

## How does it work?

The model uses the following pipeline.

To understand how the model was developed, check the [W&B report](https://app.wandb.ai/wandb/huggingtweets/reports/HuggingTweets-Train-a-model-to-generate-tweets--VmlldzoxMTY5MjI).

## Training data

The model was trained on [@_buddha_quotes's tweets](https://twitter.com/_buddha_quotes).

<table style='border-width:0'>

<thead style='border-width:0'>

<tr style='border-width:0 0 1px 0; border-color: #CBD5E0'>

<th style='border-width:0'>Data</th>

<th style='border-width:0'>Quantity</th>

</tr>

</thead>

<tbody style='border-width:0'>

<tr style='border-width:0 0 1px 0; border-color: #E2E8F0'>

<td style='border-width:0'>Tweets downloaded</td>

<td style='border-width:0'>3200</td>

</tr>

<tr style='border-width:0 0 1px 0; border-color: #E2E8F0'>

<td style='border-width:0'>Retweets</td>

<td style='border-width:0'>0</td>

</tr>

<tr style='border-width:0 0 1px 0; border-color: #E2E8F0'>

<td style='border-width:0'>Short tweets</td>

<td style='border-width:0'>0</td>

</tr>

<tr style='border-width:0'>

<td style='border-width:0'>Tweets kept</td>

<td style='border-width:0'>3200</td>

</tr>

</tbody>

</table>

[Explore the data](https://wandb.ai/wandb/huggingtweets/runs/3m2s8fe6/artifacts), which is tracked with [W&B artifacts](https://docs.wandb.com/artifacts) at every step of the pipeline.

## Training procedure

The model is based on a pre-trained [GPT-2](https://huggingface.co/gpt2) which is fine-tuned on @_buddha_quotes's tweets.

Hyperparameters and metrics are recorded in the [W&B training run](https://wandb.ai/wandb/huggingtweets/runs/j1ixyq8z) for full transparency and reproducibility.

At the end of training, [the final model](https://wandb.ai/wandb/huggingtweets/runs/j1ixyq8z/artifacts) is logged and versioned.

## Intended uses & limitations

### How to use

You can use this model directly with a pipeline for text generation:

<pre><code><span style="color:#03A9F4">from</span> transformers <span style="color:#03A9F4">import</span> pipeline

generator = pipeline(<span style="color:#FF9800">'text-generation'</span>,

model=<span style="color:#FF9800">'huggingtweets/_buddha_quotes'</span>)

generator(<span style="color:#FF9800">"My dream is"</span>, num_return_sequences=<span style="color:#8BC34A">5</span>)</code></pre>

### Limitations and bias

The model suffers from [the same limitations and bias as GPT-2](https://huggingface.co/gpt2#limitations-and-bias).

In addition, the data present in the user's tweets further affects the text generated by the model.

## About

*Built by Boris Dayma*

</section>

[](https://twitter.com/intent/follow?screen_name=borisdayma)

<section class='prose'>

For more details, visit the project repository.

</section>

[](https://github.com/borisdayma/huggingtweets)

|

huggingtweets/_alexhirsch

|

huggingtweets

| 2021-05-21T16:53:35Z | 5 | 0 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"gpt2",

"text-generation",

"huggingtweets",

"en",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

---

language: en

thumbnail: https://www.huggingtweets.com/_alexhirsch/1616542840091/predictions.png

tags:

- huggingtweets

widget:

- text: "My dream is"

---

<div>

<div style="width: 132px; height:132px; border-radius: 50%; background-size: cover; background-image: url('https://pbs.twimg.com/profile_images/661330385465245696/3rnsJokZ_400x400.jpg')">

</div>

<div style="margin-top: 8px; font-size: 19px; font-weight: 800">Alex Hirsch 🤖 AI Bot </div>

<div style="font-size: 15px">@_alexhirsch bot</div>

</div>

I was made with [huggingtweets](https://github.com/borisdayma/huggingtweets).

Create your own bot based on your favorite user with [the demo](https://colab.research.google.com/github/borisdayma/huggingtweets/blob/master/huggingtweets-demo.ipynb)!

## How does it work?

The model uses the following pipeline.

To understand how the model was developed, check the [W&B report](https://app.wandb.ai/wandb/huggingtweets/reports/HuggingTweets-Train-a-model-to-generate-tweets--VmlldzoxMTY5MjI).

## Training data

The model was trained on [@_alexhirsch's tweets](https://twitter.com/_alexhirsch).

| Data | Quantity |

| --- | --- |

| Tweets downloaded | 3187 |

| Retweets | 240 |

| Short tweets | 450 |

| Tweets kept | 2497 |

[Explore the data](https://wandb.ai/wandb/huggingtweets/runs/1go2kut1/artifacts), which is tracked with [W&B artifacts](https://docs.wandb.com/artifacts) at every step of the pipeline.

## Training procedure

The model is based on a pre-trained [GPT-2](https://huggingface.co/gpt2) which is fine-tuned on @_alexhirsch's tweets.

Hyperparameters and metrics are recorded in the [W&B training run](https://wandb.ai/wandb/huggingtweets/runs/1pe6iqi8) for full transparency and reproducibility.

At the end of training, [the final model](https://wandb.ai/wandb/huggingtweets/runs/1pe6iqi8/artifacts) is logged and versioned.

## How to use

You can use this model directly with a pipeline for text generation:

```python

from transformers import pipeline

generator = pipeline('text-generation',

model='huggingtweets/_alexhirsch')

generator("My dream is", num_return_sequences=5)

```

## Limitations and bias

The model suffers from [the same limitations and bias as GPT-2](https://huggingface.co/gpt2#limitations-and-bias).

In addition, the data present in the user's tweets further affects the text generated by the model.

## About

*Built by Boris Dayma*

[](https://twitter.com/intent/follow?screen_name=borisdayma)

For more details, visit the project repository.

[](https://github.com/borisdayma/huggingtweets)

|

huggingtweets/__solnychko

|

huggingtweets

| 2021-05-21T16:49:49Z | 7 | 0 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"gpt2",

"text-generation",

"huggingtweets",

"en",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

---

language: en

thumbnail: https://www.huggingtweets.com/__solnychko/1616680322908/predictions.png

tags:

- huggingtweets

widget:

- text: "My dream is"

---

<div>

<div style="width: 132px; height:132px; border-radius: 50%; background-size: cover; background-image: url('https://pbs.twimg.com/profile_images/1360367235408224263/AwK6rgAZ_400x400.jpg')">

</div>

<div style="margin-top: 8px; font-size: 19px; font-weight: 800">Sophia 🤖 AI Bot </div>

<div style="font-size: 15px">@__solnychko bot</div>

</div>

I was made with [huggingtweets](https://github.com/borisdayma/huggingtweets).

Create your own bot based on your favorite user with [the demo](https://colab.research.google.com/github/borisdayma/huggingtweets/blob/master/huggingtweets-demo.ipynb)!

## How does it work?

The model uses the following pipeline.

To understand how the model was developed, check the [W&B report](https://wandb.ai/wandb/huggingtweets/reports/HuggingTweets-Train-a-Model-to-Generate-Tweets--VmlldzoxMTY5MjI).

## Training data

The model was trained on [@__solnychko's tweets](https://twitter.com/__solnychko).

| Data | Quantity |

| --- | --- |

| Tweets downloaded | 3206 |

| Retweets | 1278 |

| Short tweets | 208 |

| Tweets kept | 1720 |

[Explore the data](https://wandb.ai/wandb/huggingtweets/runs/3aglnv5r/artifacts), which is tracked with [W&B artifacts](https://docs.wandb.com/artifacts) at every step of the pipeline.

## Training procedure

The model is based on a pre-trained [GPT-2](https://huggingface.co/gpt2) which is fine-tuned on @__solnychko's tweets.

Hyperparameters and metrics are recorded in the [W&B training run](https://wandb.ai/wandb/huggingtweets/runs/z5yw4btx) for full transparency and reproducibility.

At the end of training, [the final model](https://wandb.ai/wandb/huggingtweets/runs/z5yw4btx/artifacts) is logged and versioned.

## How to use

You can use this model directly with a pipeline for text generation:

```python

from transformers import pipeline

generator = pipeline('text-generation',

model='huggingtweets/__solnychko')

generator("My dream is", num_return_sequences=5)

```

## Limitations and bias

The model suffers from [the same limitations and bias as GPT-2](https://huggingface.co/gpt2#limitations-and-bias).

In addition, the data present in the user's tweets further affects the text generated by the model.

## About

*Built by Boris Dayma*

[](https://twitter.com/intent/follow?screen_name=borisdayma)

For more details, visit the project repository.

[](https://github.com/borisdayma/huggingtweets)

|

huggingtweets/__justplaying

|

huggingtweets

| 2021-05-21T16:48:43Z | 6 | 0 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"gpt2",

"text-generation",

"huggingtweets",

"en",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

---

language: en

thumbnail: https://www.huggingtweets.com/__justplaying/1616931832539/predictions.png

tags:

- huggingtweets

widget:

- text: "My dream is"

---

<div>

<div style="width: 132px; height:132px; border-radius: 50%; background-size: cover; background-image: url('https://pbs.twimg.com/profile_images/1347480058508828673/AkXmT_bj_400x400.jpg')">

</div>

<div style="margin-top: 8px; font-size: 19px; font-weight: 800">alice, dash of wonderland 🎀 🤖 AI Bot </div>

<div style="font-size: 15px">@__justplaying bot</div>

</div>

I was made with [huggingtweets](https://github.com/borisdayma/huggingtweets).

Create your own bot based on your favorite user with [the demo](https://colab.research.google.com/github/borisdayma/huggingtweets/blob/master/huggingtweets-demo.ipynb)!

## How does it work?

The model uses the following pipeline.

To understand how the model was developed, check the [W&B report](https://wandb.ai/wandb/huggingtweets/reports/HuggingTweets-Train-a-Model-to-Generate-Tweets--VmlldzoxMTY5MjI).

## Training data

The model was trained on [@__justplaying's tweets](https://twitter.com/__justplaying).

| Data | Quantity |

| --- | --- |

| Tweets downloaded | 3210 |

| Retweets | 706 |

| Short tweets | 518 |

| Tweets kept | 1986 |

[Explore the data](https://wandb.ai/wandb/huggingtweets/runs/1og52vt9/artifacts), which is tracked with [W&B artifacts](https://docs.wandb.com/artifacts) at every step of the pipeline.

## Training procedure

The model is based on a pre-trained [GPT-2](https://huggingface.co/gpt2) which is fine-tuned on @__justplaying's tweets.

Hyperparameters and metrics are recorded in the [W&B training run](https://wandb.ai/wandb/huggingtweets/runs/3ir21lg6) for full transparency and reproducibility.

At the end of training, [the final model](https://wandb.ai/wandb/huggingtweets/runs/3ir21lg6/artifacts) is logged and versioned.

## How to use

You can use this model directly with a pipeline for text generation:

```python

from transformers import pipeline

generator = pipeline('text-generation',

model='huggingtweets/__justplaying')

generator("My dream is", num_return_sequences=5)

```

## Limitations and bias

The model suffers from [the same limitations and bias as GPT-2](https://huggingface.co/gpt2#limitations-and-bias).

In addition, the data present in the user's tweets further affects the text generated by the model.

## About

*Built by Boris Dayma*

[](https://twitter.com/intent/follow?screen_name=borisdayma)

For more details, visit the project repository.

[](https://github.com/borisdayma/huggingtweets)

|

huggingtweets/__frye

|

huggingtweets

| 2021-05-21T16:47:14Z | 5 | 0 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"gpt2",

"text-generation",

"huggingtweets",

"en",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

---

language: en

thumbnail: https://www.huggingtweets.com/__frye/1616623887035/predictions.png

tags:

- huggingtweets

widget:

- text: "My dream is"

---

<div>

<div style="width: 132px; height:132px; border-radius: 50%; background-size: cover; background-image: url('https://pbs.twimg.com/profile_images/1374740260513579009/5ygC5Ztd_400x400.jpg')">

</div>

<div style="margin-top: 8px; font-size: 19px; font-weight: 800">Frye of Providence 🤖 AI Bot </div>

<div style="font-size: 15px">@__frye bot</div>

</div>

I was made with [huggingtweets](https://github.com/borisdayma/huggingtweets).

Create your own bot based on your favorite user with [the demo](https://colab.research.google.com/github/borisdayma/huggingtweets/blob/master/huggingtweets-demo.ipynb)!

## How does it work?

The model uses the following pipeline.

To understand how the model was developed, check the [W&B report](https://app.wandb.ai/wandb/huggingtweets/reports/HuggingTweets-Train-a-model-to-generate-tweets--VmlldzoxMTY5MjI).

## Training data

The model was trained on [@__frye's tweets](https://twitter.com/__frye).

| Data | Quantity |

| --- | --- |

| Tweets downloaded | 910 |

| Retweets | 73 |

| Short tweets | 117 |

| Tweets kept | 720 |

[Explore the data](https://wandb.ai/wandb/huggingtweets/runs/2by67tfe/artifacts), which is tracked with [W&B artifacts](https://docs.wandb.com/artifacts) at every step of the pipeline.

## Training procedure

The model is based on a pre-trained [GPT-2](https://huggingface.co/gpt2) which is fine-tuned on @__frye's tweets.

Hyperparameters and metrics are recorded in the [W&B training run](https://wandb.ai/wandb/huggingtweets/runs/1sm9nscd) for full transparency and reproducibility.

At the end of training, [the final model](https://wandb.ai/wandb/huggingtweets/runs/1sm9nscd/artifacts) is logged and versioned.

## How to use

You can use this model directly with a pipeline for text generation:

```python

from transformers import pipeline

generator = pipeline('text-generation',

model='huggingtweets/__frye')

generator("My dream is", num_return_sequences=5)

```

## Limitations and bias

The model suffers from [the same limitations and bias as GPT-2](https://huggingface.co/gpt2#limitations-and-bias).

In addition, the data present in the user's tweets further affects the text generated by the model.

## About

*Built by Boris Dayma*

[](https://twitter.com/intent/follow?screen_name=borisdayma)

For more details, visit the project repository.

[](https://github.com/borisdayma/huggingtweets)

|

huggingtweets/666ouz666

|

huggingtweets

| 2021-05-21T16:43:13Z | 4 | 0 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"gpt2",

"text-generation",

"huggingtweets",

"en",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

---

language: en

thumbnail: https://www.huggingtweets.com/666ouz666/1606428014311/predictions.png

tags:

- huggingtweets

widget:

- text: "My dream is"

---

<link rel="stylesheet" href="https://unpkg.com/@tailwindcss/typography@0.2.x/dist/typography.min.css">

<style>

@media (prefers-color-scheme: dark) {

.prose { color: #E2E8F0 !important; }

.prose h2, .prose h3, .prose a, .prose thead { color: #F7FAFC !important; }

}

</style>

<section class='prose'>

<div>

<div style="width: 132px; height:132px; border-radius: 50%; background-size: cover; background-image: url('https://pbs.twimg.com/profile_images/1328826930049789953/EWpTLaQR_400x400.jpg')">

</div>

<div style="margin-top: 8px; font-size: 19px; font-weight: 800">Oğuzhan 🤖 AI Bot </div>

<div style="font-size: 15px; color: #657786">@666ouz666 bot</div>

</div>

I was made with [huggingtweets](https://github.com/borisdayma/huggingtweets).

Create your own bot based on your favorite user with [the demo](https://colab.research.google.com/github/borisdayma/huggingtweets/blob/master/huggingtweets-demo.ipynb)!

## How does it work?

The model uses the following pipeline.

To understand how the model was developed, check the [W&B report](https://app.wandb.ai/wandb/huggingtweets/reports/HuggingTweets-Train-a-model-to-generate-tweets--VmlldzoxMTY5MjI).

## Training data

The model was trained on [@666ouz666's tweets](https://twitter.com/666ouz666).

<table style='border-width:0'>

<thead style='border-width:0'>

<tr style='border-width:0 0 1px 0; border-color: #CBD5E0'>

<th style='border-width:0'>Data</th>

<th style='border-width:0'>Quantity</th>

</tr>

</thead>

<tbody style='border-width:0'>

<tr style='border-width:0 0 1px 0; border-color: #E2E8F0'>

<td style='border-width:0'>Tweets downloaded</td>

<td style='border-width:0'>2816</td>

</tr>

<tr style='border-width:0 0 1px 0; border-color: #E2E8F0'>

<td style='border-width:0'>Retweets</td>

<td style='border-width:0'>63</td>

</tr>

<tr style='border-width:0 0 1px 0; border-color: #E2E8F0'>

<td style='border-width:0'>Short tweets</td>

<td style='border-width:0'>389</td>

</tr>

<tr style='border-width:0'>

<td style='border-width:0'>Tweets kept</td>

<td style='border-width:0'>2364</td>

</tr>

</tbody>

</table>

[Explore the data](https://wandb.ai/wandb/huggingtweets/runs/3e6nphcq/artifacts), which is tracked with [W&B artifacts](https://docs.wandb.com/artifacts) at every step of the pipeline.

## Training procedure

The model is based on a pre-trained [GPT-2](https://huggingface.co/gpt2) which is fine-tuned on @666ouz666's tweets.

Hyperparameters and metrics are recorded in the [W&B training run](https://wandb.ai/wandb/huggingtweets/runs/5hsj1s8v) for full transparency and reproducibility.

At the end of training, [the final model](https://wandb.ai/wandb/huggingtweets/runs/5hsj1s8v/artifacts) is logged and versioned.

## Intended uses & limitations

### How to use

You can use this model directly with a pipeline for text generation:

<pre><code><span style="color:#03A9F4">from</span> transformers <span style="color:#03A9F4">import</span> pipeline

generator = pipeline(<span style="color:#FF9800">'text-generation'</span>,

model=<span style="color:#FF9800">'huggingtweets/666ouz666'</span>)

generator(<span style="color:#FF9800">"My dream is"</span>, num_return_sequences=<span style="color:#8BC34A">5</span>)</code></pre>

### Limitations and bias

The model suffers from [the same limitations and bias as GPT-2](https://huggingface.co/gpt2#limitations-and-bias).

In addition, the data present in the user's tweets further affects the text generated by the model.

## About

*Built by Boris Dayma*

</section>

[](https://twitter.com/intent/follow?screen_name=borisdayma)

<section class='prose'>

For more details, visit the project repository.

</section>

[](https://github.com/borisdayma/huggingtweets)

<!--- random size file -->

|

huggingtweets/423zb

|

huggingtweets

| 2021-05-21T16:38:25Z | 4 | 0 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"gpt2",

"text-generation",

"huggingtweets",

"en",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

---

language: en

thumbnail: https://www.huggingtweets.com/423zb/1612221398403/predictions.png

tags:

- huggingtweets

widget:

- text: "My dream is"

---

<link rel="stylesheet" href="https://unpkg.com/@tailwindcss/typography@0.2.x/dist/typography.min.css">

<style>

@media (prefers-color-scheme: dark) {

.prose { color: #E2E8F0 !important; }

.prose h2, .prose h3, .prose a, .prose thead { color: #F7FAFC !important; }

}

</style>

<section class='prose'>

<div>

<div style="width: 132px; height:132px; border-radius: 50%; background-size: cover; background-image: url('https://pbs.twimg.com/profile_images/1277051021064392706/wuQS0nyO_400x400.jpg')">

</div>

<div style="margin-top: 8px; font-size: 19px; font-weight: 800">423ZB 🤖 AI Bot </div>

<div style="font-size: 15px; color: #657786">@423zb bot</div>

</div>

I was made with [huggingtweets](https://github.com/borisdayma/huggingtweets).

Create your own bot based on your favorite user with [the demo](https://colab.research.google.com/github/borisdayma/huggingtweets/blob/master/huggingtweets-demo.ipynb)!

## How does it work?

The model uses the following pipeline.

To understand how the model was developed, check the [W&B report](https://app.wandb.ai/wandb/huggingtweets/reports/HuggingTweets-Train-a-model-to-generate-tweets--VmlldzoxMTY5MjI).

## Training data

The model was trained on [@423zb's tweets](https://twitter.com/423zb).

<table style='border-width:0'>

<thead style='border-width:0'>

<tr style='border-width:0 0 1px 0; border-color: #CBD5E0'>

<th style='border-width:0'>Data</th>

<th style='border-width:0'>Quantity</th>

</tr>

</thead>

<tbody style='border-width:0'>

<tr style='border-width:0 0 1px 0; border-color: #E2E8F0'>

<td style='border-width:0'>Tweets downloaded</td>

<td style='border-width:0'>3166</td>

</tr>

<tr style='border-width:0 0 1px 0; border-color: #E2E8F0'>

<td style='border-width:0'>Retweets</td>

<td style='border-width:0'>2425</td>

</tr>

<tr style='border-width:0 0 1px 0; border-color: #E2E8F0'>

<td style='border-width:0'>Short tweets</td>

<td style='border-width:0'>144</td>

</tr>

<tr style='border-width:0'>

<td style='border-width:0'>Tweets kept</td>

<td style='border-width:0'>597</td>

</tr>

</tbody>

</table>

[Explore the data](https://wandb.ai/wandb/huggingtweets/runs/jnwkepoo/artifacts), which is tracked with [W&B artifacts](https://docs.wandb.com/artifacts) at every step of the pipeline.

## Training procedure

The model is based on a pre-trained [GPT-2](https://huggingface.co/gpt2) which is fine-tuned on @423zb's tweets.

Hyperparameters and metrics are recorded in the [W&B training run](https://wandb.ai/wandb/huggingtweets/runs/29x1ggo7) for full transparency and reproducibility.

At the end of training, [the final model](https://wandb.ai/wandb/huggingtweets/runs/29x1ggo7/artifacts) is logged and versioned.

## Intended uses & limitations

### How to use

You can use this model directly with a pipeline for text generation:

<pre><code><span style="color:#03A9F4">from</span> transformers <span style="color:#03A9F4">import</span> pipeline

generator = pipeline(<span style="color:#FF9800">'text-generation'</span>,

model=<span style="color:#FF9800">'huggingtweets/423zb'</span>)

generator(<span style="color:#FF9800">"My dream is"</span>, num_return_sequences=<span style="color:#8BC34A">5</span>)</code></pre>

### Limitations and bias

The model suffers from [the same limitations and bias as GPT-2](https://huggingface.co/gpt2#limitations-and-bias).

In addition, the data present in the user's tweets further affects the text generated by the model.

## About

*Built by Boris Dayma*

</section>

[](https://twitter.com/intent/follow?screen_name=borisdayma)

<section class='prose'>

For more details, visit the project repository.

</section>

[](https://github.com/borisdayma/huggingtweets)

|

huggingtweets/3thanguy7

|

huggingtweets

| 2021-05-21T16:35:20Z | 4 | 0 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"gpt2",

"text-generation",

"huggingtweets",

"en",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

---

language: en

thumbnail: https://www.huggingtweets.com/3thanguy7/1614103760144/predictions.png

tags:

- huggingtweets

widget:

- text: "My dream is"

---

<div>

<div style="width: 132px; height:132px; border-radius: 50%; background-size: cover; background-image: url('https://pbs.twimg.com/profile_images/1296604630537961476/BGjTffM9_400x400.jpg')">

</div>

<div style="margin-top: 8px; font-size: 19px; font-weight: 800">🔥3thanguy7 is from chicago 🤖 AI Bot </div>

<div style="font-size: 15px">@3thanguy7 bot</div>

</div>

I was made with [huggingtweets](https://github.com/borisdayma/huggingtweets).

Create your own bot based on your favorite user with [the demo](https://colab.research.google.com/github/borisdayma/huggingtweets/blob/master/huggingtweets-demo.ipynb)!

## How does it work?

The model uses the following pipeline.

To understand how the model was developed, check the [W&B report](https://app.wandb.ai/wandb/huggingtweets/reports/HuggingTweets-Train-a-model-to-generate-tweets--VmlldzoxMTY5MjI).

## Training data

The model was trained on [@3thanguy7's tweets](https://twitter.com/3thanguy7).

| Data | Quantity |

| --- | --- |

| Tweets downloaded | 3147 |

| Retweets | 1790 |

| Short tweets | 296 |

| Tweets kept | 1061 |

[Explore the data](https://wandb.ai/wandb/huggingtweets/runs/3n62f684/artifacts), which is tracked with [W&B artifacts](https://docs.wandb.com/artifacts) at every step of the pipeline.

## Training procedure

The model is based on a pre-trained [GPT-2](https://huggingface.co/gpt2) which is fine-tuned on @3thanguy7's tweets.

Hyperparameters and metrics are recorded in the [W&B training run](https://wandb.ai/wandb/huggingtweets/runs/328uo5bx) for full transparency and reproducibility.

At the end of training, [the final model](https://wandb.ai/wandb/huggingtweets/runs/328uo5bx/artifacts) is logged and versioned.

## How to use

You can use this model directly with a pipeline for text generation:

```python

from transformers import pipeline

generator = pipeline('text-generation',

model='huggingtweets/3thanguy7')

generator("My dream is", num_return_sequences=5)

```

## Limitations and bias

The model suffers from [the same limitations and bias as GPT-2](https://huggingface.co/gpt2#limitations-and-bias).

In addition, the data present in the user's tweets further affects the text generated by the model.

## About

*Built by Boris Dayma*

[](https://twitter.com/intent/follow?screen_name=borisdayma)

For more details, visit the project repository.

[](https://github.com/borisdayma/huggingtweets)

|

huggingtweets/178kakapo

|

huggingtweets

| 2021-05-21T16:29:51Z | 6 | 1 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"gpt2",

"text-generation",

"huggingtweets",

"en",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

---

language: en

thumbnail: https://www.huggingtweets.com/178kakapo/1603720462678/predictions.png

tags:

- huggingtweets

widget:

- text: "My dream is"

---

<link rel="stylesheet" href="https://unpkg.com/@tailwindcss/typography@0.2.x/dist/typography.min.css">

<style>

@media (prefers-color-scheme: dark) {

.prose { color: #E2E8F0 !important; }

.prose h2, .prose h3, .prose a, .prose thead { color: #F7FAFC !important; }

}

</style>

<section class='prose'>

<div>

<div style="width: 132px; height:132px; border-radius: 50%; background-size: cover; background-image: url('https://pbs.twimg.com/profile_images/2476808798/p6cqc9mvgsdlhya7nb6p_400x400.jpeg')">

</div>

<div style="margin-top: 8px; font-size: 19px; font-weight: 800">KAKAPO➤Endangered 🤖 AI Bot </div>

<div style="font-size: 15px; color: #657786">@178kakapo bot</div>

</div>

I was made with [huggingtweets](https://github.com/borisdayma/huggingtweets).

Create your own bot based on your favorite user with [the demo](https://colab.research.google.com/github/borisdayma/huggingtweets/blob/master/huggingtweets-demo.ipynb)!

## How does it work?

The model uses the following pipeline.

To understand how the model was developed, check the [W&B report](https://app.wandb.ai/wandb/huggingtweets/reports/HuggingTweets-Train-a-model-to-generate-tweets--VmlldzoxMTY5MjI).

## Training data

The model was trained on [@178kakapo's tweets](https://twitter.com/178kakapo).

<table style='border-width:0'>

<thead style='border-width:0'>

<tr style='border-width:0 0 1px 0; border-color: #CBD5E0'>

<th style='border-width:0'>Data</th>

<th style='border-width:0'>Quantity</th>

</tr>

</thead>

<tbody style='border-width:0'>

<tr style='border-width:0 0 1px 0; border-color: #E2E8F0'>

<td style='border-width:0'>Tweets downloaded</td>

<td style='border-width:0'>3140</td>

</tr>

<tr style='border-width:0 0 1px 0; border-color: #E2E8F0'>

<td style='border-width:0'>Retweets</td>

<td style='border-width:0'>2196</td>

</tr>

<tr style='border-width:0 0 1px 0; border-color: #E2E8F0'>

<td style='border-width:0'>Short tweets</td>

<td style='border-width:0'>56</td>

</tr>

<tr style='border-width:0'>

<td style='border-width:0'>Tweets kept</td>

<td style='border-width:0'>888</td>

</tr>

</tbody>

</table>

[Explore the data](https://app.wandb.ai/wandb/huggingtweets/runs/1r7z36ek/artifacts), which is tracked with [W&B artifacts](https://docs.wandb.com/artifacts) at every step of the pipeline.

## Training procedure

The model is based on a pre-trained [GPT-2](https://huggingface.co/gpt2) which is fine-tuned on @178kakapo's tweets.

Hyperparameters and metrics are recorded in the [W&B training run](https://app.wandb.ai/wandb/huggingtweets/runs/2tp7xvh0) for full transparency and reproducibility.

At the end of training, [the final model](https://app.wandb.ai/wandb/huggingtweets/runs/2tp7xvh0/artifacts) is logged and versioned.

## Intended uses & limitations

### How to use

You can use this model directly with a pipeline for text generation:

<pre><code><span style="color:#03A9F4">from</span> transformers <span style="color:#03A9F4">import</span> pipeline

generator = pipeline(<span style="color:#FF9800">'text-generation'</span>,

model=<span style="color:#FF9800">'huggingtweets/178kakapo'</span>)

generator(<span style="color:#FF9800">"My dream is"</span>, num_return_sequences=<span style="color:#8BC34A">5</span>)</code></pre>

### Limitations and bias

The model suffers from [the same limitations and bias as GPT-2](https://huggingface.co/gpt2#limitations-and-bias).

In addition, the data present in the user's tweets further affects the text generated by the model.

## About

*Built by Boris Dayma*

</section>

[](https://twitter.com/intent/follow?screen_name=borisdayma)

<section class='prose'>

For more details, visit the project repository.

</section>

[](https://github.com/borisdayma/huggingtweets)

<!--- random size file -->

|

huggingtweets/14werewolfvevo

|

huggingtweets

| 2021-05-21T16:28:48Z | 4 | 0 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"gpt2",

"text-generation",

"huggingtweets",

"en",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

---

language: en

thumbnail: https://www.huggingtweets.com/14werewolfvevo/1617769919321/predictions.png

tags:

- huggingtweets

widget:

- text: "My dream is"

---

<div>

<div style="width: 132px; height:132px; border-radius: 50%; background-size: cover; background-image: url('https://pbs.twimg.com/profile_images/1343113335882063873/mITxI5OI_400x400.jpg')">

</div>

<div style="margin-top: 8px; font-size: 19px; font-weight: 800">SIKA MODE | BLM 🤖 AI Bot </div>

<div style="font-size: 15px">@14werewolfvevo bot</div>

</div>

I was made with [huggingtweets](https://github.com/borisdayma/huggingtweets).

Create your own bot based on your favorite user with [the demo](https://colab.research.google.com/github/borisdayma/huggingtweets/blob/master/huggingtweets-demo.ipynb)!

## How does it work?

The model uses the following pipeline.

To understand how the model was developed, check the [W&B report](https://wandb.ai/wandb/huggingtweets/reports/HuggingTweets-Train-a-Model-to-Generate-Tweets--VmlldzoxMTY5MjI).

## Training data

The model was trained on [@14werewolfvevo's tweets](https://twitter.com/14werewolfvevo).

| Data | Quantity |

| --- | --- |

| Tweets downloaded | 3229 |

| Retweets | 170 |

| Short tweets | 798 |

| Tweets kept | 2261 |

[Explore the data](https://wandb.ai/wandb/huggingtweets/runs/1ymsdw3a/artifacts), which is tracked with [W&B artifacts](https://docs.wandb.com/artifacts) at every step of the pipeline.

## Training procedure

The model is based on a pre-trained [GPT-2](https://huggingface.co/gpt2) which is fine-tuned on @14werewolfvevo's tweets.

Hyperparameters and metrics are recorded in the [W&B training run](https://wandb.ai/wandb/huggingtweets/runs/1iypm80s) for full transparency and reproducibility.

At the end of training, [the final model](https://wandb.ai/wandb/huggingtweets/runs/1iypm80s/artifacts) is logged and versioned.

## How to use

You can use this model directly with a pipeline for text generation:

```python

from transformers import pipeline

generator = pipeline('text-generation',

model='huggingtweets/14werewolfvevo')

generator("My dream is", num_return_sequences=5)

```

## Limitations and bias

The model suffers from [the same limitations and bias as GPT-2](https://huggingface.co/gpt2#limitations-and-bias).

In addition, the data present in the user's tweets further affects the text generated by the model.

## About

*Built by Boris Dayma*

[](https://twitter.com/intent/follow?screen_name=borisdayma)

For more details, visit the project repository.

[](https://github.com/borisdayma/huggingtweets)

|

huggingtweets/14jun1995

|

huggingtweets

| 2021-05-21T16:23:35Z | 4 | 0 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"gpt2",

"text-generation",

"huggingtweets",

"en",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

---

language: en

thumbnail: https://www.huggingtweets.com/14jun1995/1616669363048/predictions.png

tags:

- huggingtweets

widget:

- text: "My dream is"

---

<div>

<div style="width: 132px; height:132px; border-radius: 50%; background-size: cover; background-image: url('https://pbs.twimg.com/profile_images/1236431647576330246/GGaeVBZJ_400x400.jpg')">

</div>

<div style="margin-top: 8px; font-size: 19px; font-weight: 800">mon nom non-mo 🤖 AI Bot </div>

<div style="font-size: 15px">@14jun1995 bot</div>

</div>

I was made with [huggingtweets](https://github.com/borisdayma/huggingtweets).

Create your own bot based on your favorite user with [the demo](https://colab.research.google.com/github/borisdayma/huggingtweets/blob/master/huggingtweets-demo.ipynb)!

## How does it work?

The model uses the following pipeline.

To understand how the model was developed, check the [W&B report](https://wandb.ai/wandb/huggingtweets/reports/HuggingTweets-Train-a-Model-to-Generate-Tweets--VmlldzoxMTY5MjI).

## Training data

The model was trained on [@14jun1995's tweets](https://twitter.com/14jun1995).

| Data | Quantity |

| --- | --- |

| Tweets downloaded | 3249 |

| Retweets | 20 |

| Short tweets | 213 |

| Tweets kept | 3016 |

[Explore the data](https://wandb.ai/wandb/huggingtweets/runs/1ppb6sp7/artifacts), which is tracked with [W&B artifacts](https://docs.wandb.com/artifacts) at every step of the pipeline.

## Training procedure

The model is based on a pre-trained [GPT-2](https://huggingface.co/gpt2) which is fine-tuned on @14jun1995's tweets.

Hyperparameters and metrics are recorded in the [W&B training run](https://wandb.ai/wandb/huggingtweets/runs/25pt100s) for full transparency and reproducibility.

At the end of training, [the final model](https://wandb.ai/wandb/huggingtweets/runs/25pt100s/artifacts) is logged and versioned.

## How to use

You can use this model directly with a pipeline for text generation:

```python

from transformers import pipeline

generator = pipeline('text-generation',

model='huggingtweets/14jun1995')

generator("My dream is", num_return_sequences=5)

```

## Limitations and bias

The model suffers from [the same limitations and bias as GPT-2](https://huggingface.co/gpt2#limitations-and-bias).

In addition, the data present in the user's tweets further affects the text generated by the model.

## About

*Built by Boris Dayma*

[](https://twitter.com/intent/follow?screen_name=borisdayma)

For more details, visit the project repository.

[](https://github.com/borisdayma/huggingtweets)

|

huggingtweets/12123i123i12345

|

huggingtweets

| 2021-05-21T16:22:22Z | 4 | 0 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"gpt2",

"text-generation",

"huggingtweets",

"en",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

---

language: en

thumbnail: https://www.huggingtweets.com/12123i123i12345/1617760753400/predictions.png

tags:

- huggingtweets

widget:

- text: "My dream is"

---

<div>

<div style="width: 132px; height:132px; border-radius: 50%; background-size: cover; background-image: url('https://pbs.twimg.com/profile_images/1377780722883174400/4gq8ntlP_400x400.jpg')">

</div>

<div style="margin-top: 8px; font-size: 19px; font-weight: 800">parallellax 🤖 AI Bot </div>

<div style="font-size: 15px">@12123i123i12345 bot</div>

</div>

I was made with [huggingtweets](https://github.com/borisdayma/huggingtweets).

Create your own bot based on your favorite user with [the demo](https://colab.research.google.com/github/borisdayma/huggingtweets/blob/master/huggingtweets-demo.ipynb)!

## How does it work?

The model uses the following pipeline.

To understand how the model was developed, check the [W&B report](https://wandb.ai/wandb/huggingtweets/reports/HuggingTweets-Train-a-Model-to-Generate-Tweets--VmlldzoxMTY5MjI).

## Training data

The model was trained on [@12123i123i12345's tweets](https://twitter.com/12123i123i12345).

| Data | Quantity |

| --- | --- |

| Tweets downloaded | 2362 |

| Retweets | 310 |

| Short tweets | 283 |

| Tweets kept | 1769 |

[Explore the data](https://wandb.ai/wandb/huggingtweets/runs/e91cv8fo/artifacts), which is tracked with [W&B artifacts](https://docs.wandb.com/artifacts) at every step of the pipeline.

## Training procedure

The model is based on a pre-trained [GPT-2](https://huggingface.co/gpt2) which is fine-tuned on @12123i123i12345's tweets.

Hyperparameters and metrics are recorded in the [W&B training run](https://wandb.ai/wandb/huggingtweets/runs/ncn8t24f) for full transparency and reproducibility.

At the end of training, [the final model](https://wandb.ai/wandb/huggingtweets/runs/ncn8t24f/artifacts) is logged and versioned.

## How to use

You can use this model directly with a pipeline for text generation:

```python

from transformers import pipeline

generator = pipeline('text-generation',

model='huggingtweets/12123i123i12345')

generator("My dream is", num_return_sequences=5)

```

## Limitations and bias

The model suffers from [the same limitations and bias as GPT-2](https://huggingface.co/gpt2#limitations-and-bias).

In addition, the data present in the user's tweets further affects the text generated by the model.

## About

*Built by Boris Dayma*

[](https://twitter.com/intent/follow?screen_name=borisdayma)

For more details, visit the project repository.

[](https://github.com/borisdayma/huggingtweets)

|

gaochangkuan/model_dir

|

gaochangkuan

| 2021-05-21T16:10:50Z | 10 | 0 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"gpt2",

"text-generation",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

## Generating Chinese poetry by topic.

```python

from transformers import *

tokenizer = BertTokenizer.from_pretrained("gaochangkuan/model_dir")

model = AutoModelWithLMHead.from_pretrained("gaochangkuan/model_dir")

prompt= '''<s>田园躬耕'''

length= 84

stop_token='</s>'

temperature = 1.2

repetition_penalty=1.3

k= 30

p= 0.95

device ='cuda'

seed=2020

no_cuda=False

prompt_text = prompt if prompt else input("Model prompt >>> ")

encoded_prompt = tokenizer.encode(

'<s>'+prompt_text+'<sep>',

add_special_tokens=False,

return_tensors="pt"

)

encoded_prompt = encoded_prompt.to(device)

output_sequences = model.generate(

input_ids=encoded_prompt,

max_length=length,

min_length=10,

do_sample=True,

early_stopping=True,

num_beams=10,

temperature=temperature,

top_k=k,

top_p=p,

repetition_penalty=repetition_penalty,

bad_words_ids=None,

bos_token_id=tokenizer.bos_token_id,

pad_token_id=tokenizer.pad_token_id,

eos_token_id=tokenizer.eos_token_id,

length_penalty=1.2,

no_repeat_ngram_size=2,

num_return_sequences=1,

attention_mask=None,

decoder_start_token_id=tokenizer.bos_token_id,)

generated_sequence = output_sequences[0].tolist()

text = tokenizer.decode(generated_sequence)

text = text[: text.find(stop_token) if stop_token else None]

print(''.join(text).replace(' ','').replace('<pad>','').replace('<s>',''))

```

|

gagan3012/rap-writer

|

gagan3012

| 2021-05-21T16:09:53Z | 8 | 2 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"gpt2",

"text-generation",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

# Generating Rap song Lyrics like Eminem Using GPT2

### I have built a custom model for it using data from Kaggle

Creating a new finetuned model using data lyrics from leading hip-hop stars

### My model can be accessed at: gagan3012/rap-writer

```

from transformers import AutoTokenizer, AutoModelWithLMHead

tokenizer = AutoTokenizer.from_pretrained("gagan3012/rap-writer")

model = AutoModelWithLMHead.from_pretrained("gagan3012/rap-writer")

```

|

gagan3012/project-code-py-small

|

gagan3012

| 2021-05-21T16:06:24Z | 11 | 1 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"gpt2",

"text-generation",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

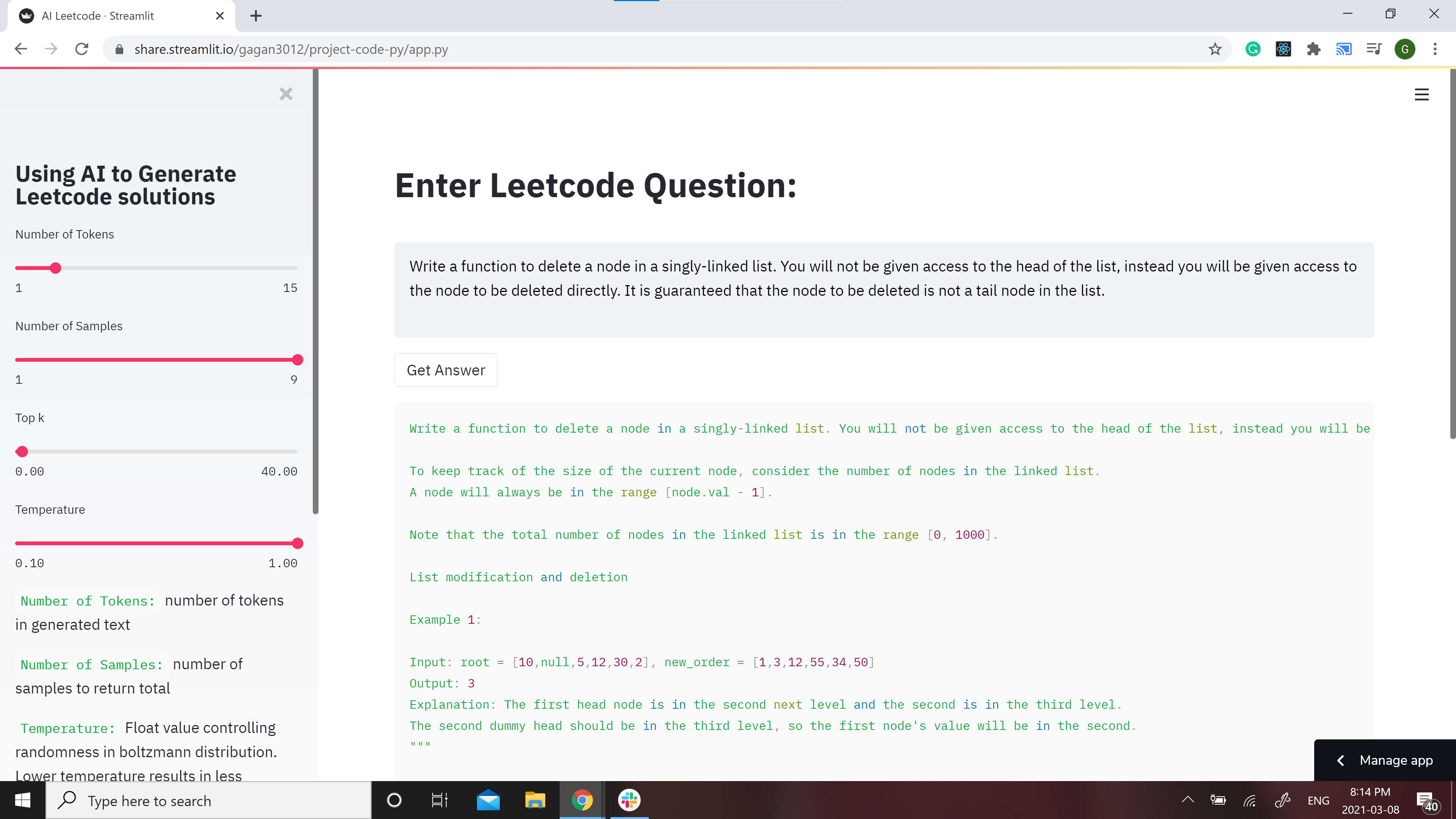

# Leetcode using AI :robot:

GPT-2 Model for Leetcode Questions in python

**Note**: the Answers might not make sense in some cases because of the bias in GPT-2

**Contribtuions:** If you would like to make the model better contributions are welcome Check out [CONTRIBUTIONS.md](https://github.com/gagan3012/project-code-py/blob/master/CONTRIBUTIONS.md)

### 📢 Favour:

It would be highly motivating, if you can STAR⭐ this repo if you find it helpful.

## Model

Two models have been developed for different use cases and they can be found at https://huggingface.co/gagan3012

The model weights can be found here: [GPT-2](https://huggingface.co/gagan3012/project-code-py) and [DistilGPT-2](https://huggingface.co/gagan3012/project-code-py-small)

### Example usage:

```python

from transformers import AutoTokenizer, AutoModelWithLMHead

tokenizer = AutoTokenizer.from_pretrained("gagan3012/project-code-py")

model = AutoModelWithLMHead.from_pretrained("gagan3012/project-code-py")

```

## Demo

[](https://share.streamlit.io/gagan3012/project-code-py/app.py)

A streamlit webapp has been setup to use the model: https://share.streamlit.io/gagan3012/project-code-py/app.py

## Example results:

### Question:

```

Write a function to delete a node in a singly-linked list. You will not be given access to the head of the list, instead you will be given access to the node to be deleted directly. It is guaranteed that the node to be deleted is not a tail node in the list.

```

### Answer:

```python

""" Write a function to delete a node in a singly-linked list. You will not be given access to the head of the list, instead you will be given access to the node to be deleted directly. It is guaranteed that the node to be deleted is not a tail node in the list.

For example,

a = 1->2->3

b = 3->1->2

t = ListNode(-1, 1)

Note: The lexicographic ordering of the nodes in a tree matters. Do not assign values to nodes in a tree.

Example 1:

Input: [1,2,3]

Output: 1->2->5

Explanation: 1->2->3->3->4, then 1->2->5[2] and then 5->1->3->4.

Note:

The length of a linked list will be in the range [1, 1000].

Node.val must be a valid LinkedListNode type.

Both the length and the value of the nodes in a linked list will be in the range [-1000, 1000].

All nodes are distinct.

"""

# Definition for singly-linked list.

# class ListNode:

# def __init__(self, x):

# self.val = x

# self.next = None

class Solution:

def deleteNode(self, head: ListNode, val: int) -> None:

"""

BFS

Linked List

:param head: ListNode

:param val: int

:return: ListNode

"""

if head is not None:

return head

dummy = ListNode(-1, 1)

dummy.next = head

dummy.next.val = val

dummy.next.next = head

dummy.val = ""

s1 = Solution()

print(s1.deleteNode(head))

print(s1.deleteNode(-1))

print(s1.deleteNode(-1))

```

|

gagan3012/Fox-News-Generator

|

gagan3012

| 2021-05-21T16:03:28Z | 7 | 3 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"gpt2",

"text-generation",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

# Generating Right Wing News Using GPT2

### I have built a custom model for it using data from Kaggle

Creating a new finetuned model using data from FOX news

### My model can be accessed at gagan3012/Fox-News-Generator

Check the [BenchmarkTest](https://github.com/gagan3012/Fox-News-Generator/blob/master/BenchmarkTest.ipynb) notebook for results

Find the model at [gagan3012/Fox-News-Generator](https://huggingface.co/gagan3012/Fox-News-Generator)

```

from transformers import AutoTokenizer, AutoModelWithLMHead

tokenizer = AutoTokenizer.from_pretrained("gagan3012/Fox-News-Generator")

model = AutoModelWithLMHead.from_pretrained("gagan3012/Fox-News-Generator")

```

|

erikinfo/gpt2TEDlectures

|

erikinfo

| 2021-05-21T16:00:10Z | 6 | 0 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"gpt2",

"text-generation",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

# GPT2 Keyword Based Lecture Generator

## Model description

GPT2 fine-tuned on the TED Talks Dataset (published under the Creative Commons BY-NC-ND license).

## Intended uses

Used to generate spoken-word lectures.

### How to use

Input text:

<BOS> title <|SEP|> Some keywords <|SEP|>

Keyword Format: "Main Topic"."Subtopic1","Subtopic2","Subtopic3"

Code Example:

```

prompt = <BOS> + title + \\

<|SEP|> + keywords + <|SEP|>

generated = torch.tensor(tokenizer.encode(prompt)).unsqueeze(0)

model.eval();

```

|

DebateLabKIT/cript

|

DebateLabKIT

| 2021-05-21T15:40:52Z | 8 | 0 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"gpt2",

"text-generation",

"en",

"arxiv:2009.07185",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

---

language: en

tags:

- gpt2

---

# CRiPT Model (Critical Thinking Intermediarily Pretrained Transformer)

Small version of the trained model (`SYL01-2020-10-24-72K/gpt2-small-train03-72K`) presented in the paper "Critical Thinking for Language Models" (Betz, Voigt and Richardson 2020). See also:

* [blog entry](https://debatelab.github.io/journal/critical-thinking-language-models.html)

* [GitHub repo](https://github.com/debatelab/aacorpus)

* [paper](https://arxiv.org/pdf/2009.07185)

|

ceostroff/harry-potter-gpt2-fanfiction

|

ceostroff

| 2021-05-21T14:51:47Z | 10 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tf",

"jax",

"gpt2",

"text-generation",

"harry-potter",

"en",

"license:mit",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

---

language:

- en

tags:

- harry-potter

license: mit

---

# Harry Potter Fanfiction Generator

This is a pre-trained GPT-2 generative text model that allows you to generate your own Harry Potter fanfiction, trained off of the top 100 rated fanficition stories. We intend for this to be used for individual fun and experimentation and not as a commercial product.

|

bolbolzaban/gpt2-persian

|

bolbolzaban

| 2021-05-21T14:23:14Z | 883 | 27 |

transformers

|

[

"transformers",

"pytorch",

"tf",

"jax",

"gpt2",

"text-generation",

"farsi",

"persian",

"fa",

"doi:10.57967/hf/1207",

"license:apache-2.0",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

---

language: fa

license: apache-2.0

tags:

- farsi

- persian

---

# GPT2-Persian

bolbolzaban/gpt2-persian is gpt2 language model that is trained with hyper parameters similar to standard gpt2-medium with following differences:

1. The context size is reduced from 1024 to 256 sub words in order to make the training affordable

2. Instead of BPE, google sentence piece tokenizor is used for tokenization.

3. The training dataset only include Persian text. All non-persian characters are replaced with especial tokens (e.g [LAT], [URL], [NUM])

Please refer to this [blog post](https://medium.com/@khashei/a-not-so-dangerous-ai-in-the-persian-language-39172a641c84) for further detail.

Also try the model [here](https://huggingface.co/bolbolzaban/gpt2-persian?text=%D8%AF%D8%B1+%DB%8C%DA%A9+%D8%A7%D8%AA%D9%81%D8%A7%D9%82+%D8%B4%DA%AF%D9%81%D8%AA+%D8%A7%D9%86%DA%AF%DB%8C%D8%B2%D8%8C+%D9%BE%DA%98%D9%88%D9%87%D8%B4%DA%AF%D8%B1%D8%A7%D9%86) or on [Bolbolzaban.com](http://www.bolbolzaban.com/text).

## How to use

You can use this model directly with a pipeline for text generation:

```python

from transformers import pipeline, AutoTokenizer, GPT2LMHeadModel

tokenizer = AutoTokenizer.from_pretrained('bolbolzaban/gpt2-persian')

model = GPT2LMHeadModel.from_pretrained('bolbolzaban/gpt2-persian')

generator = pipeline('text-generation', model, tokenizer=tokenizer, config={'max_length':256})

sample = generator('در یک اتفاق شگفت انگیز، پژوهشگران')

```

If you are using Tensorflow import TFGPT2LMHeadModel instead of GPT2LMHeadModel.

## Fine-tuning

Find a basic fine-tuning example on this [Github Repo](https://github.com/khashei/bolbolzaban-gpt2-persian).

## Special Tokens

gpt-persian is trained for the purpose of research on Persian poetry. Because of that all english words and numbers are replaced with special tokens and only standard Persian alphabet is used as part of input text. Here is one example:

Original text: اگر آیفون یا آیپد شما دارای سیستم عامل iOS 14.3 یا iPadOS 14.3 یا نسخههای جدیدتر باشد

Text used in training: اگر آیفون یا آیپد شما دارای سیستم عامل [LAT] [NUM] یا [LAT] [NUM] یا نسخههای جدیدتر باشد

Please consider normalizing your input text using [Hazm](https://github.com/sobhe/hazm) or similar libraries and ensure only Persian characters are provided as input.

If you want to use classical Persian poetry as input use [BOM] (begining of mesra) at the beginning of each verse (مصرع) followed by [EOS] (end of statement) at the end of each couplet (بیت).

See following links for example:

[[BOM] توانا بود](https://huggingface.co/bolbolzaban/gpt2-persian?text=%5BBOM%5D+%D8%AA%D9%88%D8%A7%D9%86%D8%A7+%D8%A8%D9%88%D8%AF)

[[BOM] توانا بود هر که دانا بود [BOM]](https://huggingface.co/bolbolzaban/gpt2-persian?text=%5BBOM%5D+%D8%AA%D9%88%D8%A7%D9%86%D8%A7+%D8%A8%D9%88%D8%AF+%D9%87%D8%B1+%DA%A9%D9%87+%D8%AF%D8%A7%D9%86%D8%A7+%D8%A8%D9%88%D8%AF+%5BBOM%5D)

[[BOM] توانا بود هر که دانا بود [BOM] ز دانش دل پیر](https://huggingface.co/bolbolzaban/gpt2-persian?text=%5BBOM%5D+%D8%AA%D9%88%D8%A7%D9%86%D8%A7+%D8%A8%D9%88%D8%AF+%D9%87%D8%B1+%DA%A9%D9%87+%D8%AF%D8%A7%D9%86%D8%A7+%D8%A8%D9%88%D8%AF+%5BBOM%5D+%D8%B2+%D8%AF%D8%A7%D9%86%D8%B4+%D8%AF%D9%84+%D9%BE%DB%8C%D8%B1)

[[BOM] توانا بود هر که دانا بود [BOM] ز دانش دل پیربرنا بود [EOS]](https://huggingface.co/bolbolzaban/gpt2-persian?text=%5BBOM%5D+%D8%AA%D9%88%D8%A7%D9%86%D8%A7+%D8%A8%D9%88%D8%AF+%D9%87%D8%B1+%DA%A9%D9%87+%D8%AF%D8%A7%D9%86%D8%A7+%D8%A8%D9%88%D8%AF+%5BBOM%5D+%D8%B2+%D8%AF%D8%A7%D9%86%D8%B4+%D8%AF%D9%84+%D9%BE%DB%8C%D8%B1%D8%A8%D8%B1%D9%86%D8%A7+%D8%A8%D9%88%D8%AF++%5BEOS%5D)

If you like to know about structure of classical Persian poetry refer to these [blog posts](https://medium.com/@khashei).

## Acknowledgment

This project is supported by Cloud TPUs from Google’s TensorFlow Research Cloud (TFRC).

## Citation and Reference

Please reference "bolbolzaban.com" website if you are using gpt2-persian in your research or commertial application.

## Contacts

Please reachout on [Linkedin](https://www.linkedin.com/in/khashei/) or [Telegram](https://t.me/khasheia) if you have any question or need any help to use the model.

Follow [Bolbolzaban](http://bolbolzaban.com/about) on [Twitter](https://twitter.com/bolbol_zaban), [Telegram](https://t.me/bolbol_zaban) or [Instagram](https://www.instagram.com/bolbolzaban/)

|

bigjoedata/rockbot355M

|

bigjoedata

| 2021-05-21T14:17:25Z | 6 | 1 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"gpt2",

"text-generation",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

# 🎸 🥁 Rockbot 🎤 🎧

A [GPT-2](https://openai.com/blog/better-language-models/) based lyrics generator fine-tuned on the writing styles of 16000 songs by 270 artists across MANY genres (not just rock).

**Instructions:** Type in a fake song title, pick an artist, click "Generate".

Most language models are imprecise and Rockbot is no exception. You may see NSFW lyrics unexpectedly. I have made no attempts to censor. Generated lyrics may be repetitive and/or incoherent at times, but hopefully you'll encounter something interesting or memorable.

Oh, and generation is resource intense and can be slow. I set governors on song length to keep generation time somewhat reasonable. You may adjust song length and other parameters on the left or check out [Github](https://github.com/bigjoedata/rockbot) to spin up your own Rockbot.

Just have fun.

[Demo](https://share.streamlit.io/bigjoedata/rockbot/main/src/main.py) Adjust settings to increase speed

[Github](https://github.com/bigjoedata/rockbot)

[GPT-2 124M version Model page on Hugging Face](https://huggingface.co/bigjoedata/rockbot)

[DistilGPT2 version Model page on Hugging Face](https://huggingface.co/bigjoedata/rockbot-distilgpt2/) This is leaner with the tradeoff being that the lyrics are more simplistic.

🎹 🪘 🎷 🎺 🪗 🪕 🎻

## Background

With the shutdown of [Google Play Music](https://en.wikipedia.org/wiki/Google_Play_Music) I used Google's takeout function to gather the metadata from artists I've listened to over the past several years. I wanted to take advantage of this bounty to build something fun. I scraped the top 50 lyrics for artists I'd listened to at least once from [Genius](https://genius.com/), then fine tuned [GPT-2's](https://openai.com/blog/better-language-models/) 124M token model using the [AITextGen](https://github.com/minimaxir/aitextgen) framework after considerable post-processing. For more on generation, see [here.](https://huggingface.co/blog/how-to-generate)

### Full Tech Stack

[Google Play Music](https://en.wikipedia.org/wiki/Google_Play_Music) (R.I.P.).

[Python](https://www.python.org/).

[Streamlit](https://www.streamlit.io/).

[GPT-2](https://openai.com/blog/better-language-models/).

[AITextGen](https://github.com/minimaxir/aitextgen).

[Pandas](https://pandas.pydata.org/).

[LyricsGenius](https://lyricsgenius.readthedocs.io/en/master/).

[Google Colab](https://colab.research.google.com/) (GPU based Training).

[Knime](https://www.knime.com/) (data cleaning).

## How to Use The Model

Please refer to [AITextGen](https://github.com/minimaxir/aitextgen) for much better documentation.

### Training Parameters Used

ai.train("lyrics.txt",

line_by_line=False,

from_cache=False,

num_steps=10000,

generate_every=2000,

save_every=2000,

save_gdrive=False,

learning_rate=1e-3,

batch_size=3,

eos_token="<|endoftext|>",

#fp16=True

)

### To Use

Generate With Prompt (Use Title Case):

Song Name

BY

Artist Name

|

bigjoedata/rockbot

|

bigjoedata

| 2021-05-21T14:15:36Z | 14 | 0 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"gpt2",

"text-generation",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

# 🎸 🥁 Rockbot 🎤 🎧

A [GPT-2](https://openai.com/blog/better-language-models/) based lyrics generator fine-tuned on the writing styles of 16000 songs by 270 artists across MANY genres (not just rock).

**Instructions:** Type in a fake song title, pick an artist, click "Generate".

Most language models are imprecise and Rockbot is no exception. You may see NSFW lyrics unexpectedly. I have made no attempts to censor. Generated lyrics may be repetitive and/or incoherent at times, but hopefully you'll encounter something interesting or memorable.

Oh, and generation is resource intense and can be slow. I set governors on song length to keep generation time somewhat reasonable. You may adjust song length and other parameters on the left or check out [Github](https://github.com/bigjoedata/rockbot) to spin up your own Rockbot.

Just have fun.

[Demo](https://share.streamlit.io/bigjoedata/rockbot/main/src/main.py) Adjust settings to increase speed

[Github](https://github.com/bigjoedata/rockbot)

[GPT-2 124M version Model page on Hugging Face](https://huggingface.co/bigjoedata/rockbot)

[DistilGPT2 version Model page on Hugging Face](https://huggingface.co/bigjoedata/rockbot-distilgpt2/) This is leaner with the tradeoff being that the lyrics are more simplistic.

🎹 🪘 🎷 🎺 🪗 🪕 🎻

## Background

With the shutdown of [Google Play Music](https://en.wikipedia.org/wiki/Google_Play_Music) I used Google's takeout function to gather the metadata from artists I've listened to over the past several years. I wanted to take advantage of this bounty to build something fun. I scraped the top 50 lyrics for artists I'd listened to at least once from [Genius](https://genius.com/), then fine tuned [GPT-2's](https://openai.com/blog/better-language-models/) 124M token model using the [AITextGen](https://github.com/minimaxir/aitextgen) framework after considerable post-processing. For more on generation, see [here.](https://huggingface.co/blog/how-to-generate)

### Full Tech Stack

[Google Play Music](https://en.wikipedia.org/wiki/Google_Play_Music) (R.I.P.).

[Python](https://www.python.org/).

[Streamlit](https://www.streamlit.io/).

[GPT-2](https://openai.com/blog/better-language-models/).

[AITextGen](https://github.com/minimaxir/aitextgen).

[Pandas](https://pandas.pydata.org/).

[LyricsGenius](https://lyricsgenius.readthedocs.io/en/master/).

[Google Colab](https://colab.research.google.com/) (GPU based Training).

[Knime](https://www.knime.com/) (data cleaning).

## How to Use The Model

Please refer to [AITextGen](https://github.com/minimaxir/aitextgen) for much better documentation.

### Training Parameters Used

ai.train("lyrics.txt",

line_by_line=False,

from_cache=False,

num_steps=10000,

generate_every=2000,

save_every=2000,

save_gdrive=False,

learning_rate=1e-3,

batch_size=3,

eos_token="<|endoftext|>",

#fp16=True

)

### To Use

Generate With Prompt (Use Title Case):

Song Name

BY

Artist Name

|

classla/bcms-bertic-generator

|

classla

| 2021-05-21T13:29:30Z | 5 | 2 |

transformers

|

[

"transformers",

"pytorch",

"electra",

"pretraining",

"masked-lm",

"hr",

"bs",

"sr",

"cnr",

"hbs",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] | null | 2022-03-02T23:29:05Z |

---

language:

- hr

- bs

- sr

- cnr

- hbs

tags:

- masked-lm

widget:

- text: "Zovem se Marko i radim u [MASK]."

license: apache-2.0

---

# BERTić* [bert-ich] /bɜrtitʃ/ - A transformer language model for Bosnian, Croatian, Montenegrin and Serbian

* The name should resemble the facts (1) that the model was trained in Zagreb, Croatia, where diminutives ending in -ić (as in fotić, smajlić, hengić etc.) are very popular, and (2) that most surnames in the countries where these languages are spoken end in -ić (with diminutive etymology as well).

This is the smaller generator of the main [discriminator model](https://huggingface.co/classla/bcms-bertic), useful if you want to continue pre-training the discriminator model.

If you use the model, please cite the following paper:

```

@inproceedings{ljubesic-lauc-2021-bertic,

title = "{BERT}i{\'c} - The Transformer Language Model for {B}osnian, {C}roatian, {M}ontenegrin and {S}erbian",

author = "Ljube{\v{s}}i{\'c}, Nikola and Lauc, Davor",

booktitle = "Proceedings of the 8th Workshop on Balto-Slavic Natural Language Processing",

month = apr,

year = "2021",

address = "Kiyv, Ukraine",

publisher = "Association for Computational Linguistics",

url = "https://www.aclweb.org/anthology/2021.bsnlp-1.5",

pages = "37--42",

}

```

|

Dongjae/mrc2reader

|

Dongjae

| 2021-05-21T13:25:57Z | 14 | 0 |

transformers

|

[

"transformers",

"pytorch",

"xlm-roberta",

"question-answering",

"endpoints_compatible",

"region:us"

] |

question-answering

| 2022-03-02T23:29:04Z |

The Reader model is for Korean Question Answering

The backbone model is deepset/xlm-roberta-large-squad2.

It is a finetuned model with KorQuAD-v1 dataset.

As a result of verification using KorQuAD evaluation dataset, it showed approximately 87% and 92% respectively for the EM score and F1 score.

Thank you

|

anonymous-german-nlp/german-gpt2

|

anonymous-german-nlp

| 2021-05-21T13:20:42Z | 338 | 1 |

transformers

|

[

"transformers",

"pytorch",

"tf",

"jax",

"gpt2",

"text-generation",

"de",

"license:mit",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

---

language: de

widget:

- text: "Heute ist sehr schönes Wetter in"

license: mit

---

# German GPT-2 model

**Note**: This model was de-anonymized and now lives at:

https://huggingface.co/dbmdz/german-gpt2

Please use the new model name instead!

|

aliosm/ComVE-gpt2

|

aliosm

| 2021-05-21T13:19:25Z | 7 | 0 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"gpt2",

"text-generation",

"exbert",

"commonsense",

"semeval2020",

"comve",

"en",

"dataset:ComVE",

"license:mit",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

---

language: "en"

tags:

- exbert

- commonsense

- semeval2020

- comve

license: "mit"

datasets:

- ComVE

metrics:

- bleu

widget:

- text: "Chicken can swim in water. <|continue|>"

---

# ComVE-gpt2

## Model description

Finetuned model on Commonsense Validation and Explanation (ComVE) dataset introduced in [SemEval2020 Task4](https://competitions.codalab.org/competitions/21080) using a causal language modeling (CLM) objective.

The model is able to generate a reason why a given natural language statement is against commonsense.

## Intended uses & limitations

You can use the raw model for text generation to generate reasons why natural language statements are against commonsense.

#### How to use

You can use this model directly to generate reasons why the given statement is against commonsense using [`generate.sh`](https://github.com/AliOsm/SemEval2020-Task4-ComVE/tree/master/TaskC-Generation) script.

*Note:* make sure that you are using version `2.4.1` of `transformers` package. Newer versions has some issue in text generation and the model repeats the last token generated again and again.

#### Limitations and bias

The model biased to negate the entered sentence usually instead of producing a factual reason.

## Training data

The model is initialized from the [gpt2](https://github.com/huggingface/transformers/blob/master/model_cards/gpt2-README.md) model and finetuned using [ComVE](https://github.com/wangcunxiang/SemEval2020-Task4-Commonsense-Validation-and-Explanation) dataset which contains 10K against commonsense sentences, each of them is paired with three reference reasons.

## Training procedure

Each natural language statement that against commonsense is concatenated with its reference reason with `<|continue|>` as a separator, then the model finetuned using CLM objective.

The model trained on Nvidia Tesla P100 GPU from Google Colab platform with 5e-5 learning rate, 5 epochs, 128 maximum sequence length and 64 batch size.

<center>

<img src="https://i.imgur.com/xKbrwBC.png">

</center>

## Eval results

The model achieved 14.0547/13.6534 BLEU scores on SemEval2020 Task4: Commonsense Validation and Explanation development and testing dataset.

### BibTeX entry and citation info

```bibtex

@article{fadel2020justers,

title={JUSTers at SemEval-2020 Task 4: Evaluating Transformer Models Against Commonsense Validation and Explanation},

author={Fadel, Ali and Al-Ayyoub, Mahmoud and Cambria, Erik},

year={2020}

}

```

<a href="https://huggingface.co/exbert/?model=aliosm/ComVE-gpt2">

<img width="300px" src="https://cdn-media.huggingface.co/exbert/button.png">

</a>

|

aliosm/ComVE-gpt2-medium

|

aliosm

| 2021-05-21T13:17:55Z | 8 | 0 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"gpt2",

"feature-extraction",

"exbert",

"commonsense",

"semeval2020",

"comve",

"en",

"dataset:ComVE",

"license:mit",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

feature-extraction

| 2022-03-02T23:29:05Z |

---

language: "en"

tags:

- gpt2

- exbert

- commonsense

- semeval2020

- comve

license: "mit"

datasets:

- ComVE

metrics:

- bleu

widget:

- text: "Chicken can swim in water. <|continue|>"

---

# ComVE-gpt2-medium

## Model description