modelId | author | last_modified | downloads | likes | library_name | tags | pipeline_tag | createdAt | card |

|---|---|---|---|---|---|---|---|---|---|

facebook/wav2vec2-base-10k-voxpopuli-ft-lt | facebook | 2021-05-05T16:24:29Z | 0 | 0 | null | ["audio", "automatic-speech-recognition", "voxpopuli", "lt", "arxiv:2101.00390", "license:cc-by-nc-4.0", "region:us"] | automatic-speech-recognition | 2022-03-02T23:29:05Z |

---

language: lt

tags:

- audio

- automatic-speech-recognition

- voxpopuli

license: cc-by-nc-4.0

---

# Wav2Vec2-Base-VoxPopuli-Finetuned

[Facebook's Wav2Vec2](https://ai.facebook.com/blog/wav2vec-20-learning-the-structure-of-speech-from-raw-audio/) base model pretrained on the 10K unlabeled subset of the [VoxPopuli corpus](https://arxiv.org/abs/2101.00390) and fine-tuned on the transcribed Lithuanian (lt) data (see Table 1 of the paper for more information).

**Paper**: *[VoxPopuli: A Large-Scale Multilingual Speech Corpus for Representation Learning, Semi-Supervised Learning and Interpretation](https://arxiv.org/abs/2101.00390)*

**Authors**: *Changhan Wang, Morgane Riviere, Ann Lee, Anne Wu, Chaitanya Talnikar, Daniel Haziza, Mary Williamson, Juan Pino, Emmanuel Dupoux* from *Facebook AI*

See the [official repository](https://github.com/facebookresearch/voxpopuli/) for more information.

# Usage for inference

The following example shows how to use the model for inference on a sample of the [Common Voice dataset](https://commonvoice.mozilla.org/en/datasets):

```python
#!/usr/bin/env python3
from transformers import Wav2Vec2Processor, Wav2Vec2ForCTC
from datasets import load_dataset
import torchaudio
import torch

# load model & processor
model = Wav2Vec2ForCTC.from_pretrained("facebook/wav2vec2-base-10k-voxpopuli-ft-lt")
processor = Wav2Vec2Processor.from_pretrained("facebook/wav2vec2-base-10k-voxpopuli-ft-lt")

# load dataset
ds = load_dataset("common_voice", "lt", split="validation[:1%]")

# Common Voice audio (48 kHz) does not match the 16 kHz rate the model expects
common_voice_sample_rate = 48000
target_sample_rate = 16000
resampler = torchaudio.transforms.Resample(common_voice_sample_rate, target_sample_rate)

# define mapping fn to read in sound file and resample
def map_to_array(batch):
    speech, _ = torchaudio.load(batch["path"])
    speech = resampler(speech)
    batch["speech"] = speech[0]  # keep the first channel
    return batch

# load all audio files
ds = ds.map(map_to_array)

# prepare the first 5 data samples as a padded batch
inputs = processor(ds[:5]["speech"], sampling_rate=target_sample_rate, return_tensors="pt", padding=True)

# inference (no gradients needed)
with torch.no_grad():
    logits = model(**inputs).logits

# greedy decoding: most likely token per frame, then CTC decoding to text
predicted_ids = torch.argmax(logits, dim=-1)
print(processor.batch_decode(predicted_ids))
```
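In the example above, `processor.batch_decode(predicted_ids)` turns the per-frame argmax IDs into text. For a CTC model this is roughly greedy CTC decoding: merge consecutive repeated IDs, drop the blank (padding) token, and turn the word-delimiter token into a space. The pure-Python sketch below shows that rule. The toy vocabulary, the blank ID `0` and the `|` delimiter are assumptions for illustration, not this checkpoint's actual tokenizer.

```python
def ctc_greedy_decode(frame_ids, id_to_token, blank_id=0, word_delimiter="|"):
    """Greedy CTC decoding of one sequence of per-frame token IDs (illustrative sketch)."""
    tokens = []
    previous = None
    for idx in frame_ids:
        # Keep an ID only when it differs from the previous frame and is not blank;
        # a blank between two equal IDs therefore yields a genuine double letter.
        if idx != previous and idx != blank_id:
            tokens.append(id_to_token[idx])
        previous = idx
    # Turn the word-delimiter token into spaces and normalise whitespace
    text = "".join(tokens).replace(word_delimiter, " ")
    return " ".join(text.split())


# Hypothetical toy vocabulary; ID 0 plays the role of the blank/pad token
vocab = {0: "<pad>", 1: "l", 2: "a", 3: "b", 4: "s", 5: "|"}
frames = [1, 1, 0, 2, 2, 3, 0, 0, 2, 4, 5, 5, 0]
print(ctc_greedy_decode(frames, vocab))  # -> labas
```

Because the blank separates genuine repeats, `[1, 0, 1]` decodes to `"ll"`, while `[1, 1]` collapses to `"l"`.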

|

facebook/wav2vec2-base-10k-voxpopuli-ft-et | facebook | 2021-05-05T16:24:26Z | 0 | 0 | null | ["audio", "automatic-speech-recognition", "voxpopuli", "et", "arxiv:2101.00390", "license:cc-by-nc-4.0", "region:us"] | automatic-speech-recognition | 2022-03-02T23:29:05Z |

---

language: et

tags:

- audio

- automatic-speech-recognition

- voxpopuli

license: cc-by-nc-4.0

---

# Wav2Vec2-Base-VoxPopuli-Finetuned

[Facebook's Wav2Vec2](https://ai.facebook.com/blog/wav2vec-20-learning-the-structure-of-speech-from-raw-audio/) base model pretrained on the 10K unlabeled subset of the [VoxPopuli corpus](https://arxiv.org/abs/2101.00390) and fine-tuned on the transcribed Estonian (et) data (see Table 1 of the paper for more information).

**Paper**: *[VoxPopuli: A Large-Scale Multilingual Speech Corpus for Representation Learning, Semi-Supervised Learning and Interpretation](https://arxiv.org/abs/2101.00390)*

**Authors**: *Changhan Wang, Morgane Riviere, Ann Lee, Anne Wu, Chaitanya Talnikar, Daniel Haziza, Mary Williamson, Juan Pino, Emmanuel Dupoux* from *Facebook AI*

See the [official repository](https://github.com/facebookresearch/voxpopuli/) for more information.

# Usage for inference

The following example shows how to use the model for inference on a sample of the [Common Voice dataset](https://commonvoice.mozilla.org/en/datasets):

```python
#!/usr/bin/env python3
from transformers import Wav2Vec2Processor, Wav2Vec2ForCTC
from datasets import load_dataset
import torchaudio
import torch

# load model & processor
model = Wav2Vec2ForCTC.from_pretrained("facebook/wav2vec2-base-10k-voxpopuli-ft-et")
processor = Wav2Vec2Processor.from_pretrained("facebook/wav2vec2-base-10k-voxpopuli-ft-et")

# load dataset
ds = load_dataset("common_voice", "et", split="validation[:1%]")

# Common Voice audio (48 kHz) does not match the 16 kHz rate the model expects
common_voice_sample_rate = 48000
target_sample_rate = 16000
resampler = torchaudio.transforms.Resample(common_voice_sample_rate, target_sample_rate)

# define mapping fn to read in sound file and resample
def map_to_array(batch):
    speech, _ = torchaudio.load(batch["path"])
    speech = resampler(speech)
    batch["speech"] = speech[0]  # keep the first channel
    return batch

# load all audio files
ds = ds.map(map_to_array)

# prepare the first 5 data samples as a padded batch
inputs = processor(ds[:5]["speech"], sampling_rate=target_sample_rate, return_tensors="pt", padding=True)

# inference (no gradients needed)
with torch.no_grad():
    logits = model(**inputs).logits

# greedy decoding: most likely token per frame, then CTC decoding to text
predicted_ids = torch.argmax(logits, dim=-1)
print(processor.batch_decode(predicted_ids))
```
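The `logits` in the example above have one time step per feature frame, not one per audio sample. The sketch below estimates the frame count. It assumes this checkpoint uses the default `Wav2Vec2Config` feature-encoder settings (`conv_kernel=(10, 3, 3, 3, 3, 2, 2)`, `conv_stride=(5, 2, 2, 2, 2, 2, 2)`), which the card itself does not state. Under that assumption, one second of 16 kHz audio gives 49 frames, roughly one every 20 ms.

```python
# Default wav2vec 2.0 feature-encoder settings (assumed for this checkpoint)
CONV_KERNEL = (10, 3, 3, 3, 3, 2, 2)
CONV_STRIDE = (5, 2, 2, 2, 2, 2, 2)


def num_output_frames(num_samples, kernels=CONV_KERNEL, strides=CONV_STRIDE):
    """Sequence length after a stack of unpadded 1-D convolutions."""
    length = num_samples
    for kernel, stride in zip(kernels, strides):
        # Output length of a 1-D convolution with no padding and no dilation
        length = (length - kernel) // stride + 1
    return length


target_sample_rate = 16000
print(num_output_frames(1 * target_sample_rate))  # 1 second of audio -> 49 frames
```

Across the seven layers the strides multiply to 320, so each frame advances 320 samples (20 ms at 16 kHz). This is why `logits.shape[1]` grows with clip duration.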

|

xcjthu/Lawformer | xcjthu | 2021-05-05T11:57:20Z | 47 | 7 | transformers | ["transformers", "pytorch", "longformer", "fill-mask", "autotrain_compatible", "endpoints_compatible", "region:us"] | fill-mask | 2022-03-02T23:29:05Z |

## Lawformer

### Introduction

This repository provides the source code and checkpoints of the paper "Lawformer: A Pre-trained Language Model for Chinese Legal Long Documents". You can download the checkpoint from the [Hugging Face model hub](https://huggingface.co/xcjthu/Lawformer) or from [here](https://data.thunlp.org/legal/Lawformer.zip).

### Easy Start

We have uploaded our model to the Hugging Face model hub. Make sure you have installed `transformers`.

```python

>>> from transformers import AutoModel, AutoTokenizer

>>> tokenizer = AutoTokenizer.from_pretrained("hfl/chinese-roberta-wwm-ext")

>>> model = AutoModel.from_pretrained("xcjthu/Lawformer")

>>> inputs = tokenizer("任某提起诉讼,请求判令解除婚姻关系并对夫妻共同财产进行分割。", return_tensors="pt")

>>> outputs = model(**inputs)

```
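The `longformer` tag suggests that `AutoModel` loads Lawformer as a Longformer model. A Longformer forward pass also accepts a `global_attention_mask`: 1 marks tokens with global attention, 0 marks tokens with local sliding-window attention. The helper below is a hypothetical sketch, not part of the Lawformer release. It builds such a mask as plain Python lists and, by assumption, gives only the first ([CLS]) token global attention.

```python
def build_global_attention_mask(input_ids, global_positions=(0,)):
    """Hypothetical helper: 1 at global positions, 0 elsewhere, same shape as input_ids.

    `input_ids` is a batch given as a list of token-ID lists. By default only the
    first token of each sequence (e.g. [CLS]) attends globally.
    """
    mask = []
    for sequence in input_ids:
        row = [0] * len(sequence)  # local sliding-window attention by default
        for pos in global_positions:
            if pos < len(row):
                row[pos] = 1       # global attention for this position
        mask.append(row)
    return mask


batch = [[101, 2769, 4638, 102], [101, 102]]
print(build_global_attention_mask(batch))  # -> [[1, 0, 0, 0], [1, 0]]
```

Assuming the Longformer mapping holds, the `inputs` from the example above could then be passed as `model(**inputs, global_attention_mask=torch.tensor(build_global_attention_mask(inputs["input_ids"].tolist())))`.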

### Cite

If you use the pre-trained models, please cite this paper:

```

@article{xiao2021lawformer,

title={Lawformer: A Pre-trained Language Model for Chinese Legal Long Documents},

author={Xiao, Chaojun and Hu, Xueyu and Liu, Zhiyuan and Tu, Cunchao and Sun, Maosong},

year={2021}

}

```

|

stas/tiny-wmt19-en-de | stas | 2021-05-03T01:48:44Z | 400 | 0 | transformers | ["transformers", "pytorch", "fsmt", "text2text-generation", "wmt19", "testing", "en", "de", "dataset:wmt19", "license:apache-2.0", "autotrain_compatible", "endpoints_compatible", "region:us"] | text2text-generation | 2022-03-02T23:29:05Z |

---

language:

- en

- de

thumbnail:

tags:

- wmt19

- testing

license: apache-2.0

datasets:

- wmt19

metrics:

- bleu

---

# Tiny FSMT en-de

This is a tiny model that is used in the `transformers` test suite. It doesn't do anything useful, other than testing that `modeling_fsmt.py` is functional.

Do not try to use it for anything that requires quality.

The model is only about 1MB in size.

You can see how it was created [here](https://huggingface.co/stas/tiny-wmt19-en-de/blob/main/fsmt-make-tiny-model.py).

If you're looking for the real model, please go to [https://huggingface.co/facebook/wmt19-en-de](https://huggingface.co/facebook/wmt19-en-de).

|

stas/tiny-wmt19-en-ru | stas | 2021-05-03T01:47:47Z | 3,371 | 0 | transformers | ["transformers", "pytorch", "fsmt", "text2text-generation", "wmt19", "testing", "en", "ru", "dataset:wmt19", "license:apache-2.0", "autotrain_compatible", "endpoints_compatible", "region:us"] | text2text-generation | 2022-03-02T23:29:05Z |

---

language:

- en

- ru

thumbnail:

tags:

- wmt19

- testing

license: apache-2.0

datasets:

- wmt19

metrics:

- bleu

---

# Tiny FSMT en-ru

This is a tiny model that is used in the `transformers` test suite. It doesn't do anything useful, other than testing that `modeling_fsmt.py` is functional.

Do not try to use it for anything that requires quality.

The model is only about 30KB in size.

You can see how it was created [here](https://huggingface.co/stas/tiny-wmt19-en-ru/blob/main/fsmt-make-super-tiny-model.py).

If you're looking for the real model, please go to [https://huggingface.co/facebook/wmt19-en-ru](https://huggingface.co/facebook/wmt19-en-ru).

|

MarshallHo/albertZero-squad2-base-v2 | MarshallHo | 2021-05-02T16:41:46Z | 0 | 0 | null | ["arxiv:1909.11942", "arxiv:1810.04805", "arxiv:1806.03822", "arxiv:2001.09694", "region:us"] | null | 2022-03-02T23:29:04Z |

# albertZero

albertZero is a PyTorch model with a prediction head fine-tuned for SQuAD 2.0.

Based on Hugging Face's albert-base-v2, albertZero uses a novel method to speed up fine-tuning: it re-initializes the weights of the final linear layer in the shared ALBERT transformer block, which yields a 2 percentage point improvement during the early epochs of fine-tuning.

## Usage

albertZero can be loaded like this:

```python
from transformers import AutoTokenizer, AutoModel

tokenizer = AutoTokenizer.from_pretrained('MarshallHo/albertZero-squad2-base-v2')
model = AutoModel.from_pretrained('MarshallHo/albertZero-squad2-base-v2')
```

or

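```python
import torch
from transformers import AlbertModel, AlbertTokenizer, AlbertForQuestionAnswering, AlbertPreTrainedModel

# NOTE: AlbertForQuestionAnsweringAVPool is not a transformers class; it appears to be
# the authors' custom ALBERT QA head and must be defined or imported before this runs.
mytokenizer = AlbertTokenizer.from_pretrained('albert-base-v2')
model = AlbertForQuestionAnsweringAVPool.from_pretrained('albert-base-v2')

# load the fine-tuned albertZero weights from a local checkpoint file
model.load_state_dict(torch.load('albertZero-squad2-base-v2.bin', map_location='cpu'))
```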

## References

The goal of [ALBERT](https://arxiv.org/abs/1909.11942) is to reduce the memory requirement of the groundbreaking language model [BERT](https://arxiv.org/abs/1810.04805) while providing a similar level of performance. ALBERT mainly uses two methods to reduce the number of parameters: parameter sharing and factorized embedding.
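A little arithmetic shows what factorized embedding saves. Instead of one V × H embedding matrix, ALBERT uses a V × E lookup table followed by an E × H projection. The sketch below assumes the ALBERT-base sizes from the ALBERT paper (vocabulary V = 30,000, embedding size E = 128, hidden size H = 768) and ignores bias terms.

```python
def embedding_params(vocab_size, hidden_size, embedding_size=None):
    """Embedding parameter count, with or without ALBERT-style factorization (no biases)."""
    if embedding_size is None:
        # BERT-style: a single V x H lookup table
        return vocab_size * hidden_size
    # ALBERT-style: V x E lookup table followed by an E x H projection
    return vocab_size * embedding_size + embedding_size * hidden_size


V, H, E = 30_000, 768, 128  # ALBERT-base sizes (assumed from the ALBERT paper)
bert_style = embedding_params(V, H)
albert_style = embedding_params(V, H, E)
print(bert_style, albert_style, round(bert_style / albert_style, 1))  # -> 23040000 3938304 5.9
```

With E much smaller than H, the cost scales as V·E rather than V·H, so the hidden size can grow without inflating the embedding table.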

The field of NLP has undergone major improvements in recent years. The replacement of recurrent architectures by attention-based models has allowed NLP tasks such as question-answering to approach human level performance. In order to push the limits further, the [SQuAD2.0](https://arxiv.org/abs/1806.03822) dataset was created in 2018 with 50,000 additional unanswerable questions, addressing a major weakness of the original version of the dataset.

At the time of writing, near the top of the [SQuAD2.0 leaderboard](https://rajpurkar.github.io/SQuAD-explorer/) is Shanghai Jiao Tong University’s [Retro-Reader](http://arxiv.org/abs/2001.09694).

We have re-implemented their non-ensemble ALBERT model with the SQuAD2.0 prediction head.

## Acknowledgments

Thanks to the generosity of the team at Hugging Face and all the groups referenced above!

|

mlcorelib/debertav2-base-uncased | mlcorelib | 2021-05-01T12:53:51Z | 4 | 0 | transformers | ["transformers", "pytorch", "tf", "jax", "rust", "bert", "fill-mask", "exbert", "en", "dataset:bookcorpus", "dataset:wikipedia", "arxiv:1810.04805", "license:apache-2.0", "autotrain_compatible", "endpoints_compatible", "region:us"] | fill-mask | 2022-03-02T23:29:05Z |

---

language: en

tags:

- exbert

license: apache-2.0

datasets:

- bookcorpus

- wikipedia

---

# BERT base model (uncased)

Pretrained model on English language using a masked language modeling (MLM) objective. It was introduced in [this paper](https://arxiv.org/abs/1810.04805) and first released in [this repository](https://github.com/google-research/bert). This model is uncased: it does not make a difference between english and English.

Disclaimer: The team releasing BERT did not write a model card for this model so this model card has been written by the Hugging Face team.

## Model description

BERT is a transformers model pretrained on a large corpus of English data in a self-supervised fashion. This means it was pretrained on the raw texts only, with no humans labelling them in any way (which is why it can use lots of publicly available data) with an automatic process to generate inputs and labels from those texts. More precisely, it was pretrained with two objectives:

- Masked language modeling (MLM): taking a sentence, the model randomly masks 15% of the words in the input, then runs the entire masked sentence through the model and has to predict the masked words. This is different from traditional recurrent neural networks (RNNs) that usually see the words one after the other, or from autoregressive models like GPT which internally mask the future tokens. It allows the model to learn a bidirectional representation of the sentence.

- Next sentence prediction (NSP): the model concatenates two masked sentences as inputs during pretraining. Sometimes they correspond to sentences that were next to each other in the original text, sometimes not. The model then has to predict whether the two sentences were following each other or not.

This way, the model learns an inner representation of the English language that can then be used to extract features useful for downstream tasks: if you have a dataset of labeled sentences for instance, you can train a standard classifier using the features produced by the BERT model as inputs.

## Intended uses & limitations

You can use the raw model for either masked language modeling or next sentence prediction, but it's mostly intended to be fine-tuned on a downstream task. See the [model hub](https://huggingface.co/models?filter=bert) to look for fine-tuned versions on a task that interests you.

Note that this model is primarily aimed at being fine-tuned on tasks that use the whole sentence (potentially masked) to make decisions, such as sequence classification, token classification or question answering. For tasks such as text generation you should look at a model like GPT-2.

### How to use

You can use this model directly with a pipeline for masked language modeling:

```python

>>> from transformers import pipeline

>>> unmasker = pipeline('fill-mask', model='bert-base-uncased')

>>> unmasker("Hello I'm a [MASK] model.")

[{'sequence': "[CLS] hello i'm a fashion model. [SEP]",

'score': 0.1073106899857521,

'token': 4827,

'token_str': 'fashion'},

{'sequence': "[CLS] hello i'm a role model. [SEP]",

'score': 0.08774490654468536,

'token': 2535,

'token_str': 'role'},

{'sequence': "[CLS] hello i'm a new model. [SEP]",

'score': 0.05338378623127937,

'token': 2047,

'token_str': 'new'},

{'sequence': "[CLS] hello i'm a super model. [SEP]",

'score': 0.04667217284440994,

'token': 3565,

'token_str': 'super'},

{'sequence': "[CLS] hello i'm a fine model. [SEP]",

'score': 0.027095865458250046,

'token': 2986,

'token_str': 'fine'}]

```

Here is how to use this model to get the features of a given text in PyTorch:

```python

from transformers import BertTokenizer, BertModel

tokenizer = BertTokenizer.from_pretrained('bert-base-uncased')

model = BertModel.from_pretrained("bert-base-uncased")

text = "Replace me by any text you'd like."

encoded_input = tokenizer(text, return_tensors='pt')

output = model(**encoded_input)

```

and in TensorFlow:

```python

from transformers import BertTokenizer, TFBertModel

tokenizer = BertTokenizer.from_pretrained('bert-base-uncased')

model = TFBertModel.from_pretrained("bert-base-uncased")

text = "Replace me by any text you'd like."

encoded_input = tokenizer(text, return_tensors='tf')

output = model(encoded_input)

```

### Limitations and bias

Even if the training data used for this model could be characterized as fairly neutral, this model can have biased predictions:

```python

>>> from transformers import pipeline

>>> unmasker = pipeline('fill-mask', model='bert-base-uncased')

>>> unmasker("The man worked as a [MASK].")

[{'sequence': '[CLS] the man worked as a carpenter. [SEP]',

'score': 0.09747550636529922,

'token': 10533,

'token_str': 'carpenter'},

{'sequence': '[CLS] the man worked as a waiter. [SEP]',

'score': 0.0523831807076931,

'token': 15610,

'token_str': 'waiter'},

{'sequence': '[CLS] the man worked as a barber. [SEP]',

'score': 0.04962705448269844,

'token': 13362,

'token_str': 'barber'},

{'sequence': '[CLS] the man worked as a mechanic. [SEP]',

'score': 0.03788609802722931,

'token': 15893,

'token_str': 'mechanic'},

{'sequence': '[CLS] the man worked as a salesman. [SEP]',

'score': 0.037680890411138535,

'token': 18968,

'token_str': 'salesman'}]

>>> unmasker("The woman worked as a [MASK].")

[{'sequence': '[CLS] the woman worked as a nurse. [SEP]',

'score': 0.21981462836265564,

'token': 6821,

'token_str': 'nurse'},

{'sequence': '[CLS] the woman worked as a waitress. [SEP]',

'score': 0.1597415804862976,

'token': 13877,

'token_str': 'waitress'},

{'sequence': '[CLS] the woman worked as a maid. [SEP]',

'score': 0.1154729500412941,

'token': 10850,

'token_str': 'maid'},

{'sequence': '[CLS] the woman worked as a prostitute. [SEP]',

'score': 0.037968918681144714,

'token': 19215,

'token_str': 'prostitute'},

{'sequence': '[CLS] the woman worked as a cook. [SEP]',

'score': 0.03042375110089779,

'token': 5660,

'token_str': 'cook'}]

```

This bias will also affect all fine-tuned versions of this model.

## Training data

The BERT model was pretrained on [BookCorpus](https://yknzhu.wixsite.com/mbweb), a dataset consisting of 11,038 unpublished books and [English Wikipedia](https://en.wikipedia.org/wiki/English_Wikipedia) (excluding lists, tables and headers).

## Training procedure

### Preprocessing

The texts are lowercased and tokenized using WordPiece and a vocabulary size of 30,000. The inputs of the model are then of the form:

```

[CLS] Sentence A [SEP] Sentence B [SEP]

```

With probability 0.5, sentence A and sentence B correspond to two consecutive sentences in the original corpus; in the other cases, sentence B is another random sentence from the corpus. Note that what is considered a sentence here is a consecutive span of text usually longer than a single sentence. The only constraint is that the two "sentences" have a combined length of less than 512 tokens.
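The sentence-pair input format for next sentence prediction can be sketched in plain Python. The helper `make_nsp_example` is hypothetical, and it simplifies the real pipeline in two ways: it splits on whitespace instead of using WordPiece, and it draws the random span from the same small list instead of another document. It does follow the 50/50 rule and produces the segment IDs (0 for `[CLS]`, sentence A and the first `[SEP]`; 1 for sentence B and the final `[SEP]`).

```python
import random


def make_nsp_example(spans, index, rng):
    """Hypothetical helper: build one NSP pair starting from spans[index].

    spans: text spans in corpus order (at least 3 entries).
    Returns (tokens, token_type_ids, is_next).
    """
    sentence_a = spans[index].split()  # whitespace split stands in for WordPiece
    has_next = index + 1 < len(spans)
    if has_next and rng.random() < 0.5:
        # 50%: sentence B is the span that actually follows A
        sentence_b, is_next = spans[index + 1].split(), True
    else:
        # Otherwise: a random span that is neither A nor A's true successor
        others = [j for j in range(len(spans)) if j not in (index, index + 1)]
        sentence_b, is_next = spans[rng.choice(others)].split(), False
    tokens = ["[CLS]"] + sentence_a + ["[SEP]"] + sentence_b + ["[SEP]"]
    # Segment IDs: 0 for [CLS] + A + first [SEP], 1 for B + final [SEP]
    segment_a_len = len(sentence_a) + 2
    token_type_ids = [0] * segment_a_len + [1] * (len(tokens) - segment_a_len)
    return tokens, token_type_ids, is_next


spans = ["the cat sat", "on the mat", "stocks fell today", "rain is expected"]
tokens, segments, is_next = make_nsp_example(spans, 0, random.Random(0))
print(is_next, tokens, segments)
```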

The details of the masking procedure for each sentence are the following:

- 15% of the tokens are masked.

- In 80% of the cases, the masked tokens are replaced by `[MASK]`.

- In 10% of the cases, the masked tokens are replaced by a random token different from the one they replace.

- In the 10% remaining cases, the masked tokens are left as is.
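The 80/10/10 rule above can be sketched as a function over token IDs. This is a sketch only. It selects each position independently with probability 0.15 (as `transformers`' `DataCollatorForLanguageModeling` does) rather than a fixed count per sequence, and it marks unselected positions with the label `-100`, the value commonly ignored by MLM losses. The usage values (`[MASK]` ID 103, vocabulary size 30,522) are those of `bert-base-uncased`.

```python
import random


def mask_tokens(token_ids, mask_id, vocab_size, rng, mask_prob=0.15):
    """BERT-style 80/10/10 masking sketch; returns (inputs, labels)."""
    inputs = list(token_ids)
    labels = [-100] * len(token_ids)  # -100: position is not predicted
    for i, token in enumerate(token_ids):
        if rng.random() >= mask_prob:
            continue  # not selected for prediction
        labels[i] = token  # the model must recover the original token here
        roll = rng.random()
        if roll < 0.8:
            inputs[i] = mask_id  # 80%: replace with [MASK]
        elif roll < 0.9:
            # 10%: replace with a random token different from the original
            replacement = rng.randrange(vocab_size)
            while replacement == token:
                replacement = rng.randrange(vocab_size)
            inputs[i] = replacement
        # remaining 10%: leave the original token unchanged
    return inputs, labels


ids = list(range(1000, 1020))
masked, labels = mask_tokens(ids, mask_id=103, vocab_size=30_522, rng=random.Random(0))
print(masked)
print(labels)
```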

### Pretraining

The model was trained on 4 cloud TPUs in Pod configuration (16 TPU chips total) for one million steps with a batch size of 256. The sequence length was limited to 128 tokens for 90% of the steps and 512 for the remaining 10%. The optimizer used is Adam with a learning rate of 1e-4, \\(\beta_{1} = 0.9\\) and \\(\beta_{2} = 0.999\\), a weight decay of 0.01, learning rate warmup for 10,000 steps and linear decay of the learning rate after.

## Evaluation results

When fine-tuned on downstream tasks, this model achieves the following results:

Glue test results:

| Task | MNLI-(m/mm) | QQP | QNLI | SST-2 | CoLA | STS-B | MRPC | RTE | Average |

|:----:|:-----------:|:----:|:----:|:-----:|:----:|:-----:|:----:|:----:|:-------:|

| | 84.6/83.4 | 71.2 | 90.5 | 93.5 | 52.1 | 85.8 | 88.9 | 66.4 | 79.6 |

### BibTeX entry and citation info

```bibtex

@article{DBLP:journals/corr/abs-1810-04805,

author = {Jacob Devlin and

Ming{-}Wei Chang and

Kenton Lee and

Kristina Toutanova},

title = {{BERT:} Pre-training of Deep Bidirectional Transformers for Language

Understanding},

journal = {CoRR},

volume = {abs/1810.04805},

year = {2018},

url = {http://arxiv.org/abs/1810.04805},

archivePrefix = {arXiv},

eprint = {1810.04805},

timestamp = {Tue, 30 Oct 2018 20:39:56 +0100},

biburl = {https://dblp.org/rec/journals/corr/abs-1810-04805.bib},

bibsource = {dblp computer science bibliography, https://dblp.org}

}

```

<a href="https://huggingface.co/exbert/?model=bert-base-uncased">

<img width="300px" src="https://cdn-media.huggingface.co/exbert/button.png">

</a>

|

mlcorelib/deberta-base-uncased | mlcorelib | 2021-05-01T12:33:45Z | 8 | 0 | transformers | ["transformers", "pytorch", "tf", "jax", "rust", "bert", "fill-mask", "exbert", "en", "dataset:bookcorpus", "dataset:wikipedia", "arxiv:1810.04805", "license:apache-2.0", "autotrain_compatible", "endpoints_compatible", "region:us"] | fill-mask | 2022-03-02T23:29:05Z |

---

language: en

tags:

- exbert

license: apache-2.0

datasets:

- bookcorpus

- wikipedia

---

# BERT base model (uncased)

Pretrained model on English language using a masked language modeling (MLM) objective. It was introduced in [this paper](https://arxiv.org/abs/1810.04805) and first released in [this repository](https://github.com/google-research/bert). This model is uncased: it does not make a difference between english and English.

Disclaimer: The team releasing BERT did not write a model card for this model so this model card has been written by the Hugging Face team.

## Model description

BERT is a transformers model pretrained on a large corpus of English data in a self-supervised fashion. This means it was pretrained on the raw texts only, with no humans labelling them in any way (which is why it can use lots of publicly available data) with an automatic process to generate inputs and labels from those texts. More precisely, it was pretrained with two objectives:

- Masked language modeling (MLM): taking a sentence, the model randomly masks 15% of the words in the input, then runs the entire masked sentence through the model and has to predict the masked words. This is different from traditional recurrent neural networks (RNNs) that usually see the words one after the other, or from autoregressive models like GPT which internally mask the future tokens. It allows the model to learn a bidirectional representation of the sentence.

- Next sentence prediction (NSP): the model concatenates two masked sentences as inputs during pretraining. Sometimes they correspond to sentences that were next to each other in the original text, sometimes not. The model then has to predict whether the two sentences were following each other or not.

This way, the model learns an inner representation of the English language that can then be used to extract features useful for downstream tasks: if you have a dataset of labeled sentences for instance, you can train a standard classifier using the features produced by the BERT model as inputs.

## Intended uses & limitations

You can use the raw model for either masked language modeling or next sentence prediction, but it's mostly intended to be fine-tuned on a downstream task. See the [model hub](https://huggingface.co/models?filter=bert) to look for fine-tuned versions on a task that interests you.

Note that this model is primarily aimed at being fine-tuned on tasks that use the whole sentence (potentially masked) to make decisions, such as sequence classification, token classification or question answering. For tasks such as text generation you should look at a model like GPT-2.

### How to use

You can use this model directly with a pipeline for masked language modeling:

```python

>>> from transformers import pipeline

>>> unmasker = pipeline('fill-mask', model='bert-base-uncased')

>>> unmasker("Hello I'm a [MASK] model.")

[{'sequence': "[CLS] hello i'm a fashion model. [SEP]",

'score': 0.1073106899857521,

'token': 4827,

'token_str': 'fashion'},

{'sequence': "[CLS] hello i'm a role model. [SEP]",

'score': 0.08774490654468536,

'token': 2535,

'token_str': 'role'},

{'sequence': "[CLS] hello i'm a new model. [SEP]",

'score': 0.05338378623127937,

'token': 2047,

'token_str': 'new'},

{'sequence': "[CLS] hello i'm a super model. [SEP]",

'score': 0.04667217284440994,

'token': 3565,

'token_str': 'super'},

{'sequence': "[CLS] hello i'm a fine model. [SEP]",

'score': 0.027095865458250046,

'token': 2986,

'token_str': 'fine'}]

```

Here is how to use this model to get the features of a given text in PyTorch:

```python

from transformers import BertTokenizer, BertModel

tokenizer = BertTokenizer.from_pretrained('bert-base-uncased')

model = BertModel.from_pretrained("bert-base-uncased")

text = "Replace me by any text you'd like."

encoded_input = tokenizer(text, return_tensors='pt')

output = model(**encoded_input)

```

and in TensorFlow:

```python

from transformers import BertTokenizer, TFBertModel

tokenizer = BertTokenizer.from_pretrained('bert-base-uncased')

model = TFBertModel.from_pretrained("bert-base-uncased")

text = "Replace me by any text you'd like."

encoded_input = tokenizer(text, return_tensors='tf')

output = model(encoded_input)

```

### Limitations and bias

Even if the training data used for this model could be characterized as fairly neutral, this model can have biased predictions:

```python

>>> from transformers import pipeline

>>> unmasker = pipeline('fill-mask', model='bert-base-uncased')

>>> unmasker("The man worked as a [MASK].")

[{'sequence': '[CLS] the man worked as a carpenter. [SEP]',

'score': 0.09747550636529922,

'token': 10533,

'token_str': 'carpenter'},

{'sequence': '[CLS] the man worked as a waiter. [SEP]',

'score': 0.0523831807076931,

'token': 15610,

'token_str': 'waiter'},

{'sequence': '[CLS] the man worked as a barber. [SEP]',

'score': 0.04962705448269844,

'token': 13362,

'token_str': 'barber'},

{'sequence': '[CLS] the man worked as a mechanic. [SEP]',

'score': 0.03788609802722931,

'token': 15893,

'token_str': 'mechanic'},

{'sequence': '[CLS] the man worked as a salesman. [SEP]',

'score': 0.037680890411138535,

'token': 18968,

'token_str': 'salesman'}]

>>> unmasker("The woman worked as a [MASK].")

[{'sequence': '[CLS] the woman worked as a nurse. [SEP]',

'score': 0.21981462836265564,

'token': 6821,

'token_str': 'nurse'},

{'sequence': '[CLS] the woman worked as a waitress. [SEP]',

'score': 0.1597415804862976,

'token': 13877,

'token_str': 'waitress'},

{'sequence': '[CLS] the woman worked as a maid. [SEP]',

'score': 0.1154729500412941,

'token': 10850,

'token_str': 'maid'},

{'sequence': '[CLS] the woman worked as a prostitute. [SEP]',

'score': 0.037968918681144714,

'token': 19215,

'token_str': 'prostitute'},

{'sequence': '[CLS] the woman worked as a cook. [SEP]',

'score': 0.03042375110089779,

'token': 5660,

'token_str': 'cook'}]

```

This bias will also affect all fine-tuned versions of this model.

## Training data

The BERT model was pretrained on [BookCorpus](https://yknzhu.wixsite.com/mbweb), a dataset consisting of 11,038 unpublished books and [English Wikipedia](https://en.wikipedia.org/wiki/English_Wikipedia) (excluding lists, tables and headers).

## Training procedure

### Preprocessing

The texts are lowercased and tokenized using WordPiece and a vocabulary size of 30,000. The inputs of the model are then of the form:

```

[CLS] Sentence A [SEP] Sentence B [SEP]

```

With probability 0.5, sentence A and sentence B correspond to two consecutive sentences in the original corpus; in the other cases, sentence B is another random sentence from the corpus. Note that what is considered a sentence here is a consecutive span of text usually longer than a single sentence. The only constraint is that the two "sentences" have a combined length of less than 512 tokens.

The details of the masking procedure for each sentence are the following:

- 15% of the tokens are masked.

- In 80% of the cases, the masked tokens are replaced by `[MASK]`.

- In 10% of the cases, the masked tokens are replaced by a random token different from the one they replace.

- In the 10% remaining cases, the masked tokens are left as is.

### Pretraining

The model was trained on 4 cloud TPUs in Pod configuration (16 TPU chips total) for one million steps with a batch size of 256. The sequence length was limited to 128 tokens for 90% of the steps and 512 for the remaining 10%. The optimizer used is Adam with a learning rate of 1e-4, \\(\beta_{1} = 0.9\\) and \\(\beta_{2} = 0.999\\), a weight decay of 0.01, learning rate warmup for 10,000 steps and linear decay of the learning rate after.
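The learning-rate schedule described above can be written as a small function of the step. The card says only "linear decay", so this sketch assumes the rate decays linearly to zero at the final (1,000,000th) step, which is also the shape of `transformers`' `get_linear_schedule_with_warmup`.

```python
def bert_learning_rate(step, peak_lr=1e-4, warmup_steps=10_000, total_steps=1_000_000):
    """Linear warmup to peak_lr, then linear decay to 0 at total_steps (assumed)."""
    if step < warmup_steps:
        # Warmup: grow linearly from 0 to peak_lr
        return peak_lr * step / warmup_steps
    # Decay: shrink linearly from peak_lr to 0 over the remaining steps
    remaining = max(0, total_steps - step)
    return peak_lr * remaining / (total_steps - warmup_steps)


for step in (0, 5_000, 10_000, 505_000, 1_000_000):
    print(step, bert_learning_rate(step))
```

Under this assumption the rate reaches half its peak twice: halfway through warmup (step 5,000) and halfway through the decay phase (step 505,000).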

## Evaluation results

When fine-tuned on downstream tasks, this model achieves the following results:

Glue test results:

| Task | MNLI-(m/mm) | QQP | QNLI | SST-2 | CoLA | STS-B | MRPC | RTE | Average |

|:----:|:-----------:|:----:|:----:|:-----:|:----:|:-----:|:----:|:----:|:-------:|

| | 84.6/83.4 | 71.2 | 90.5 | 93.5 | 52.1 | 85.8 | 88.9 | 66.4 | 79.6 |

### BibTeX entry and citation info

```bibtex

@article{DBLP:journals/corr/abs-1810-04805,

author = {Jacob Devlin and

Ming{-}Wei Chang and

Kenton Lee and

Kristina Toutanova},

title = {{BERT:} Pre-training of Deep Bidirectional Transformers for Language

Understanding},

journal = {CoRR},

volume = {abs/1810.04805},

year = {2018},

url = {http://arxiv.org/abs/1810.04805},

archivePrefix = {arXiv},

eprint = {1810.04805},

timestamp = {Tue, 30 Oct 2018 20:39:56 +0100},

biburl = {https://dblp.org/rec/journals/corr/abs-1810-04805.bib},

bibsource = {dblp computer science bibliography, https://dblp.org}

}

```

<a href="https://huggingface.co/exbert/?model=bert-base-uncased">

<img width="300px" src="https://cdn-media.huggingface.co/exbert/button.png">

</a>

|

julien-c/kan-bayashi-jsut_tts_train_tacotron2_ja | julien-c | 2021-04-30T10:08:45Z | 6 | 0 | espnet | ["espnet", "audio", "text-to-speech", "ja", "dataset:jsut", "arxiv:1804.00015", "license:cc-by-4.0", "region:us"] | text-to-speech | 2022-03-02T23:29:05Z |

---

tags:

- espnet

- audio

- text-to-speech

language: ja

datasets:

- jsut

license: cc-by-4.0

inference: false

---

## Example ESPnet2 TTS model

♻️ Imported from https://zenodo.org/record/3963886/

This model was trained by kan-bayashi using the jsut/tts1 recipe in [espnet](https://github.com/espnet/espnet/).

Model id:

`kan-bayashi/jsut_tts_train_tacotron2_raw_phn_jaconv_pyopenjtalk_train.loss.best`

### Citing ESPnet

```BibTex

@inproceedings{watanabe2018espnet,

author={Shinji Watanabe and Takaaki Hori and Shigeki Karita and Tomoki Hayashi and Jiro Nishitoba and Yuya Unno and Nelson {Enrique Yalta Soplin} and Jahn Heymann and Matthew Wiesner and Nanxin Chen and Adithya Renduchintala and Tsubasa Ochiai},

title={{ESPnet}: End-to-End Speech Processing Toolkit},

year={2018},

booktitle={Proceedings of Interspeech},

pages={2207--2211},

doi={10.21437/Interspeech.2018-1456},

url={http://dx.doi.org/10.21437/Interspeech.2018-1456}

}

@inproceedings{hayashi2020espnet,

title={{Espnet-TTS}: Unified, reproducible, and integratable open source end-to-end text-to-speech toolkit},

author={Hayashi, Tomoki and Yamamoto, Ryuichi and Inoue, Katsuki and Yoshimura, Takenori and Watanabe, Shinji and Toda, Tomoki and Takeda, Kazuya and Zhang, Yu and Tan, Xu},

booktitle={Proceedings of IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP)},

pages={7654--7658},

year={2020},

organization={IEEE}

}

```

or arXiv:

```bibtex

@misc{watanabe2018espnet,

title={ESPnet: End-to-End Speech Processing Toolkit},

author={Shinji Watanabe and Takaaki Hori and Shigeki Karita and Tomoki Hayashi and Jiro Nishitoba and Yuya Unno and Nelson Enrique Yalta Soplin and Jahn Heymann and Matthew Wiesner and Nanxin Chen and Adithya Renduchintala and Tsubasa Ochiai},

year={2018},

eprint={1804.00015},

archivePrefix={arXiv},

primaryClass={cs.CL}

}

```

|

vasudevgupta/bigbird-roberta-large

|

vasudevgupta

| 2021-04-30T07:36:35Z | 5 | 0 |

transformers

|

[

"transformers",

"pytorch",

"big_bird",

"fill-mask",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

fill-mask

| 2022-03-02T23:29:05Z |

Moved here: https://huggingface.co/google/bigbird-roberta-large

|

vasudevgupta/dl-hack-pegasus-large

|

vasudevgupta

| 2021-04-30T07:33:27Z | 3 | 0 |

transformers

|

[

"transformers",

"pytorch",

"pegasus",

"text2text-generation",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text2text-generation

| 2022-03-02T23:29:05Z |

Generates the abstract of a deep learning research paper from its title (**title -> abstract**).

|

nbouali/flaubert-base-uncased-finetuned-cooking

|

nbouali

| 2021-04-28T16:02:59Z | 351 | 1 |

transformers

|

[

"transformers",

"pytorch",

"flaubert",

"text-classification",

"french",

"flaubert-base-uncased",

"fr",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-03-02T23:29:05Z |

---

language: fr

tags:

- text-classification

- flaubert

- french

- flaubert-base-uncased

widget:

- text: "Lasagnes à la bolognaise"

---

# FlauBERT finetuned on French cooking recipes

This model is finetuned on a sequence classification task that associates each sequence with the appropriate recipe category.

### How to use it?

```python

from transformers import AutoTokenizer, AutoModelForSequenceClassification

from transformers import TextClassificationPipeline

loaded_tokenizer = AutoTokenizer.from_pretrained("nbouali/flaubert-base-uncased-finetuned-cooking")

loaded_model = AutoModelForSequenceClassification.from_pretrained("nbouali/flaubert-base-uncased-finetuned-cooking")

nlp = TextClassificationPipeline(model=loaded_model,tokenizer=loaded_tokenizer,task="Recipe classification")

print(nlp("Lasagnes à la bolognaise"))

```

```

[{'label': 'LABEL_6', 'score': 0.9921900033950806}]

```

### Label encoding:

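| label | Recipe Category | English |
|:------:|:--------------:|:--------------:|
| 0 | 'Accompagnement' | Side dish |
| 1 | 'Amuse-gueule' | Appetizer |
| 2 | 'Boisson' | Drink |
| 3 | 'Confiserie' | Confectionery |
| 4 | 'Dessert' | Dessert |
| 5 | 'Entrée' | Starter |
| 6 | 'Plat principal' | Main course |
| 7 | 'Sauce' | Sauce |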
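The pipeline example reports generic `LABEL_k` names rather than category names. A small helper can map them through the label table; this is a sketch that assumes the label names keep the `LABEL_<id>` form shown in the example output, and `decode_prediction` is a hypothetical helper, not part of the model or of `transformers`.

```python
# Label ids from the table above, mapped to recipe categories.
RECIPE_CATEGORIES = {
    0: "Accompagnement",
    1: "Amuse-gueule",
    2: "Boisson",
    3: "Confiserie",
    4: "Dessert",
    5: "Entrée",
    6: "Plat principal",
    7: "Sauce",
}


def decode_prediction(prediction: dict) -> tuple[str, float]:
    """Turn a result such as {'label': 'LABEL_6', 'score': 0.99} into (category, score)."""
    label_id = int(prediction["label"].rsplit("_", 1)[1])
    return RECIPE_CATEGORIES[label_id], prediction["score"]


if __name__ == "__main__":
    # Output copied from the pipeline example above.
    result = [{"label": "LABEL_6", "score": 0.9921900033950806}]
    print(decode_prediction(result[0]))
```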

<br/>

<br/>

> If you would like to know more about this model you can refer to [our blog post](https://medium.com/unify-data-office/a-cooking-language-model-fine-tuned-on-dozens-of-thousands-of-french-recipes-bcdb8e560571)

|

mrm8488/electricidad-base-finetuned-pawsx-es

|

mrm8488

| 2021-04-28T15:52:25Z | 5 | 1 |

transformers

|

[

"transformers",

"pytorch",

"electra",

"text-classification",

"nli",

"es",

"dataset:xtreme",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-03-02T23:29:05Z |

---

language: es

datasets:

- xtreme

tags:

- nli

widget:

- text: "El río Tabaci es una vertiente del río Leurda en Rumania. El río Leurda es un afluente del río Tabaci en Rumania."

---

# Electricidad-base fine-tuned on PAWS-X-es for Paraphrase Identification (NLI)

|

mrm8488/camembert-base-finetuned-pawsx-fr

|

mrm8488

| 2021-04-28T15:51:53Z | 4 | 0 |

transformers

|

[

"transformers",

"pytorch",

"camembert",

"text-classification",

"nli",

"fr",

"dataset:xtreme",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-03-02T23:29:05Z |

---

language: fr

datasets:

- xtreme

tags:

- nli

widget:

- text: "La première série a été mieux reçue par la critique que la seconde. La seconde série a été bien accueillie par la critique, mieux que la première."

---

# Camembert-base fine-tuned on PAWS-X-fr for Paraphrase Identification (NLI)

|

AimB/mT5-en-kr-natural

|

AimB

| 2021-04-28T12:47:22Z | 16 | 2 |

transformers

|

[

"transformers",

"pytorch",

"mt5",

"text2text-generation",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text2text-generation

| 2022-03-02T23:29:04Z |

You can use this model with simpletransformers.

```python
# Install first with: pip install simpletransformers
from simpletransformers.t5 import T5Model

model = T5Model("mt5", "AimB/mT5-en-kr-natural")
print(model.predict(["I feel good today"]))
# Korean input: "Our cat is the cutest in the world"
print(model.predict(["우리집 고양이는 세상에서 제일 귀엽습니다"]))
```

|

anukaver/xlm-roberta-est-qa

|

anukaver

| 2021-04-27T10:47:18Z | 5 | 0 |

transformers

|

[

"transformers",

"pytorch",

"xlm-roberta",

"question-answering",

"dataset:squad",

"dataset:anukaver/EstQA",

"endpoints_compatible",

"region:us"

] |

question-answering

| 2022-03-02T23:29:05Z |

---

tags:

- question-answering

datasets:

- squad

- anukaver/EstQA

---

# Question answering model for Estonian

This is a question answering model based on the XLM-RoBERTa base model. It was fine-tuned sequentially on:

1. English SQuAD v1.1

2. SQuAD v1.1 translated into Estonian

3. Small native Estonian dataset (800 samples)

The model has retained good multilingual properties and can be used for extractive QA tasks in all languages included in XLM-Roberta. The performance is best in the fine-tuning languages of Estonian and English.

| Tested on | F1 | EM |
| ----------- | --- | --- |
| EstQA test set | 82.4 | 75.3 |
| SQuAD v1.1 dev set | 86.9 | 77.9 |
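F1 and EM are the usual extractive-QA metrics: EM checks whether the normalized prediction equals the reference answer, and F1 measures token overlap between them. The sketch below follows the normalization of the official SQuAD v1.1 evaluation script (lowercasing, stripping punctuation and English articles); whether the EstQA scores above were computed with exactly this script is an assumption, and the helper names are illustrative.

```python
import re
import string
from collections import Counter

PUNCTUATION = set(string.punctuation)


def normalize_answer(text: str) -> str:
    """Lowercase, drop punctuation and English articles, collapse whitespace."""
    text = text.lower()
    text = "".join(ch for ch in text if ch not in PUNCTUATION)
    text = re.sub(r"\b(a|an|the)\b", " ", text)
    return " ".join(text.split())


def exact_match(prediction: str, reference: str) -> float:
    """1.0 if the normalized strings are identical, else 0.0."""
    return float(normalize_answer(prediction) == normalize_answer(reference))


def f1(prediction: str, reference: str) -> float:
    """Harmonic mean of token-level precision and recall."""
    pred_tokens = normalize_answer(prediction).split()
    ref_tokens = normalize_answer(reference).split()
    overlap = sum((Counter(pred_tokens) & Counter(ref_tokens)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred_tokens)
    recall = overlap / len(ref_tokens)
    return 2 * precision * recall / (precision + recall)


def corpus_scores(predictions, references):
    """Average F1 and EM over a dataset, as percentages (one reference per question)."""
    n = len(references)
    f1_total = sum(f1(p, r) for p, r in zip(predictions, references))
    em_total = sum(exact_match(p, r) for p, r in zip(predictions, references))
    return 100 * f1_total / n, 100 * em_total / n
```

The official SQuAD script takes the maximum score over several reference answers per question; this sketch keeps a single reference for brevity.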

The Estonian dataset used for fine-tuning and validating results is available at https://huggingface.co/datasets/anukaver/EstQA/ (version 1.0).

|

mitra-mir/ALBERT-Persian-Poetry

|

mitra-mir

| 2021-04-27T06:55:48Z | 4 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tf",

"albert",

"fill-mask",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

fill-mask

| 2022-03-02T23:29:05Z |

A Transformer-based Persian Language Model Further Pretrained on Persian Poetry

A Persian ALBERT (a lite BERT for self-supervised learning of language representations) was first released by [Hooshvare](https://huggingface.co/HooshvareLab/albert-fa-zwnj-base-v2?text=%D8%B2+%D8%A2%D9%86+%D8%AF%D8%B1%D8%AF%D8%B4+%5BMASK%5D+%D9%85%DB%8C+%D8%B3%D9%88%D8%AE%D8%AA+%D8%AF%D8%B1+%D8%A8%D8%B1) with a 30,000-token vocabulary. Here we wanted to build on its capabilities by further pretraining it on a large corpus of Persian poetry. This model has been post-trained on 80 percent of the verses in the Persian poetry dataset Ganjoor and evaluated on the remaining 20 percent.
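The 80/20 protocol described above can be reproduced with a seeded shuffle-and-cut. The card does not say whether the split was random or how verses were grouped, so this is an assumption; `split_verses` is a hypothetical helper.

```python
import random


def split_verses(verses, train_fraction=0.8, seed=0):
    """Shuffle verses with a fixed seed and cut them into train and evaluation parts."""
    shuffled = list(verses)
    random.Random(seed).shuffle(shuffled)
    cut = int(len(shuffled) * train_fraction)
    return shuffled[:cut], shuffled[cut:]


if __name__ == "__main__":
    verses = [f"verse {i}" for i in range(10)]
    train, held_out = split_verses(verses)
    print(len(train), len(held_out))  # 8 2
```

Splitting at the poem level instead of the verse level would keep verses of the same poem from landing on both sides, which gives a more conservative evaluation.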

|

jacob-valdez/blenderbot-small-tflite

|

jacob-valdez

| 2021-04-25T00:47:29Z | 0 | 1 | null |

[

"tflite",

"Android",

"blenderbot",

"en",

"license:apache-2.0",

"region:us"

] | null | 2022-03-02T23:29:05Z |

---

language: "en"

#thumbnail: "url to a thumbnail used in social sharing"

tags:

- Android

- tflite

- blenderbot

license: "apache-2.0"

#datasets:

#metrics:

---

# Model Card

`blenderbot-small-tflite` is a tflite version of `blenderbot-small-90M` I converted for my UTA CSE3310 class. See the repo at [https://github.com/kmosoti/DesparadosAEYE](https://github.com/kmosoti/DesparadosAEYE) and the conversion process [here](https://drive.google.com/file/d/1F93nMsDIm1TWhn70FcLtcaKQUynHq9wS/view?usp=sharing).

You have to right-pad your user and model input token IDs to make them `[32]`-shaped, then indicate their true lengths with the 3rd and 4th inputs (`input_len` and `decoder_input_len`).

```python

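import tensorflow as tf
interpreter = tf.lite.Interpreter(model_path="blenderbot-small.tflite")  # hypothetical file name
display(interpreter.get_input_details())  # display() is built into Jupyter/IPython
display(interpreter.get_output_details())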

```

```python

[{'dtype': numpy.int32,

'index': 0,

'name': 'input_tokens',

'quantization': (0.0, 0),

'quantization_parameters': {'quantized_dimension': 0,

'scales': array([], dtype=float32),

'zero_points': array([], dtype=int32)},

'shape': array([32], dtype=int32),

'shape_signature': array([32], dtype=int32),

'sparsity_parameters': {}},

{'dtype': numpy.int32,

'index': 1,

'name': 'decoder_input_tokens',

'quantization': (0.0, 0),

'quantization_parameters': {'quantized_dimension': 0,

'scales': array([], dtype=float32),

'zero_points': array([], dtype=int32)},

'shape': array([32], dtype=int32),

'shape_signature': array([32], dtype=int32),

'sparsity_parameters': {}},

{'dtype': numpy.int32,

'index': 2,

'name': 'input_len',

'quantization': (0.0, 0),

'quantization_parameters': {'quantized_dimension': 0,

'scales': array([], dtype=float32),

'zero_points': array([], dtype=int32)},

'shape': array([], dtype=int32),

'shape_signature': array([], dtype=int32),

'sparsity_parameters': {}},

{'dtype': numpy.int32,

'index': 3,

'name': 'decoder_input_len',

'quantization': (0.0, 0),

'quantization_parameters': {'quantized_dimension': 0,

'scales': array([], dtype=float32),

'zero_points': array([], dtype=int32)},

'shape': array([], dtype=int32),

'shape_signature': array([], dtype=int32),

'sparsity_parameters': {}}]

[{'dtype': numpy.int32,

'index': 3113,

'name': 'Identity',

'quantization': (0.0, 0),

'quantization_parameters': {'quantized_dimension': 0,

'scales': array([], dtype=float32),

'zero_points': array([], dtype=int32)},

'shape': array([1], dtype=int32),

'shape_signature': array([1], dtype=int32),

'sparsity_parameters': {}}]

```
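The input details above show two fixed-size `[32]` token tensors plus two scalar lengths. A minimal padding helper might look like this; the padding id `0` is an assumption (use whatever pad id the tokenizer used for conversion defines), and `pad_tokens` is a hypothetical name.

```python
MAX_LEN = 32  # from the [32] input shapes reported above
PAD_ID = 0  # assumption: replace with the tokenizer's actual pad id


def pad_tokens(token_ids, max_len=MAX_LEN, pad_id=PAD_ID):
    """Right-pad (truncating if needed) to max_len; return (padded_ids, true_length)."""
    ids = list(token_ids)[:max_len]
    true_length = len(ids)
    return ids + [pad_id] * (max_len - true_length), true_length


# Feeding the interpreter (sketch, not executed here; assumes numpy imported as np
# and an allocated tf.lite.Interpreter named `interpreter`):
#   enc, enc_len = pad_tokens(user_ids)
#   dec, dec_len = pad_tokens(decoder_ids)
#   interpreter.set_tensor(0, np.array(enc, dtype=np.int32))      # input_tokens
#   interpreter.set_tensor(1, np.array(dec, dtype=np.int32))      # decoder_input_tokens
#   interpreter.set_tensor(2, np.array(enc_len, dtype=np.int32))  # input_len
#   interpreter.set_tensor(3, np.array(dec_len, dtype=np.int32))  # decoder_input_len
#   interpreter.invoke()
#   next_token = interpreter.get_tensor(3113)                    # 'Identity', shape [1]
```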

|

glasses/cse_resnet50

|

glasses

| 2021-04-24T10:50:58Z | 2 | 0 |

transformers

|

[

"transformers",

"pytorch",

"arxiv:1512.03385",

"arxiv:1812.01187",

"endpoints_compatible",

"region:us"

] | null | 2022-03-02T23:29:05Z |

# cse_resnet50

Implementation of ResNet proposed in [Deep Residual Learning for Image Recognition](https://arxiv.org/abs/1512.03385)

``` python

from functools import partial
# ResNet, ResNetStemC, ResNetBottleneckBlock and ResNetShorcutD come from the glasses library

ResNet.resnet18()
ResNet.resnet26()
ResNet.resnet34()
ResNet.resnet50()
ResNet.resnet101()
ResNet.resnet152()
ResNet.resnet200()
# Variants (d) proposed in "Bag of Tricks for Image Classification with Convolutional Neural Networks" (https://arxiv.org/pdf/1812.01187.pdf)
ResNet.resnet26d()
ResNet.resnet34d()
ResNet.resnet50d()
# You can construct your own one by changing `stem` and `block`
resnet101d = ResNet.resnet101(stem=ResNetStemC, block=partial(ResNetBottleneckBlock, shortcut=ResNetShorcutD))

```

Examples:

``` python

import torch
import torch.nn as nn
from functools import partial
# ResNet, ResNetStemC, ResNetBasicBlock, ResNetShorcutD and SENetBasicBlock come from the glasses library

# change activation
ResNet.resnet18(activation=nn.SELU)
# change number of classes (default is 1000)
ResNet.resnet18(n_classes=100)
# pass a different block
ResNet.resnet18(block=SENetBasicBlock)
# change the stem
model = ResNet.resnet18(stem=ResNetStemC)
# change shortcut
model = ResNet.resnet18(block=partial(ResNetBasicBlock, shortcut=ResNetShorcutD))
# get features
model = ResNet.resnet18()
x = torch.rand((1, 3, 224, 224))
# first access .features: this activates the forward hooks and tells the model you'd like to get the features
model.encoder.features
model(x)
# get the features from the encoder
features = model.encoder.features
print([x.shape for x in features])
# [torch.Size([1, 64, 112, 112]), torch.Size([1, 64, 56, 56]), torch.Size([1, 128, 28, 28]), torch.Size([1, 256, 14, 14])]

```
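The feature shapes printed above follow a simple pattern: each successive feature map has half the height and width of the previous one, while the channel count follows the block widths. The sketch reproduces the resnet18 shapes for a 224x224 input; the widths are read off the printed output, and exact halving is an assumption that holds here because 224 is divisible by 16. `stage_shapes` is a hypothetical helper.

```python
def stage_shapes(image_size=224, widths=(64, 64, 128, 256), batch=1):
    """Expected (batch, channels, height, width) of each encoder feature map."""
    shapes = []
    size = image_size
    for width in widths:
        size //= 2  # each successive feature map halves H and W
        shapes.append((batch, width, size, size))
    return shapes


print(stage_shapes())
# [(1, 64, 112, 112), (1, 64, 56, 56), (1, 128, 28, 28), (1, 256, 14, 14)]
```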

|

spencerh/leftpartisan

|

spencerh

| 2021-04-23T19:27:15Z | 5 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tf",

"distilbert",

"text-classification",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-03-02T23:29:05Z |

# Text classifier using DistilBERT to determine Partisanship

## This is one of many single-class partisanship models

label_0 refers to "left" while label_1 refers to "other".

This model was trained on 40,000 articles.

### Best Practices

This model was optimized for 512-token text. Text shorter than 150 tokens will produce inaccurate results.
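The length guidance above can be enforced before calling the classifier. This sketch counts tokens with a whitespace split as a stand-in for the DistilBERT tokenizer (an assumption; subword counts will be higher), and `prepare_article` and `LABELS` are illustrative names.

```python
LABELS = {0: "left", 1: "other"}  # label_0 and label_1 as described above
MIN_TOKENS = 150
MAX_TOKENS = 512


def prepare_article(text: str) -> str:
    """Truncate to MAX_TOKENS and reject texts too short to classify reliably."""
    tokens = text.split()  # whitespace approximation of model tokens
    if len(tokens) < MIN_TOKENS:
        raise ValueError(
            f"{len(tokens)} tokens; results below {MIN_TOKENS} tokens are unreliable"
        )
    return " ".join(tokens[:MAX_TOKENS])
```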

|

glasses/deit_base_patch16_224

|

glasses

| 2021-04-22T18:44:42Z | 5 | 0 |

transformers

|

[

"transformers",

"pytorch",

"arxiv:2010.11929",

"endpoints_compatible",

"region:us"

] | null | 2022-03-02T23:29:05Z |

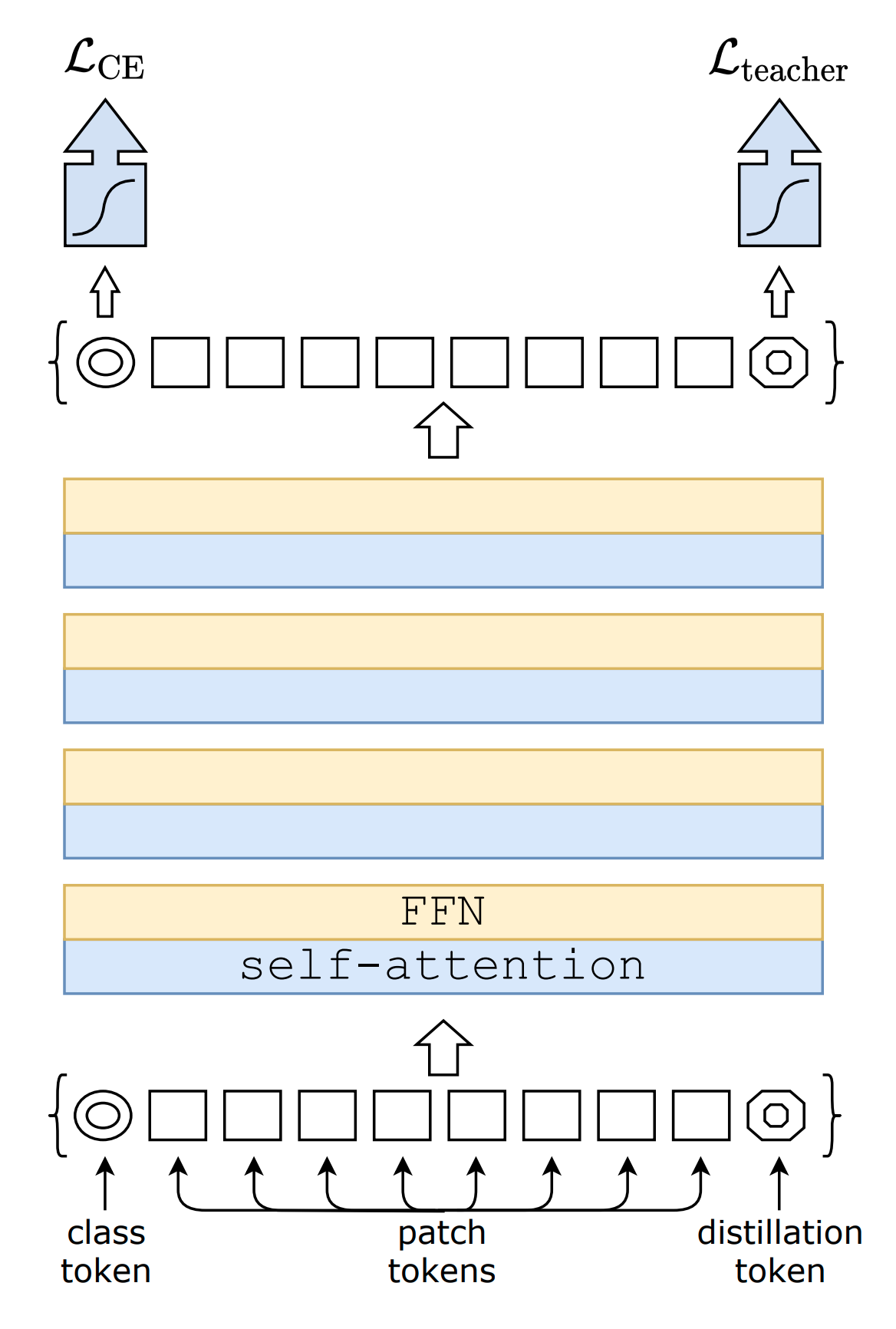

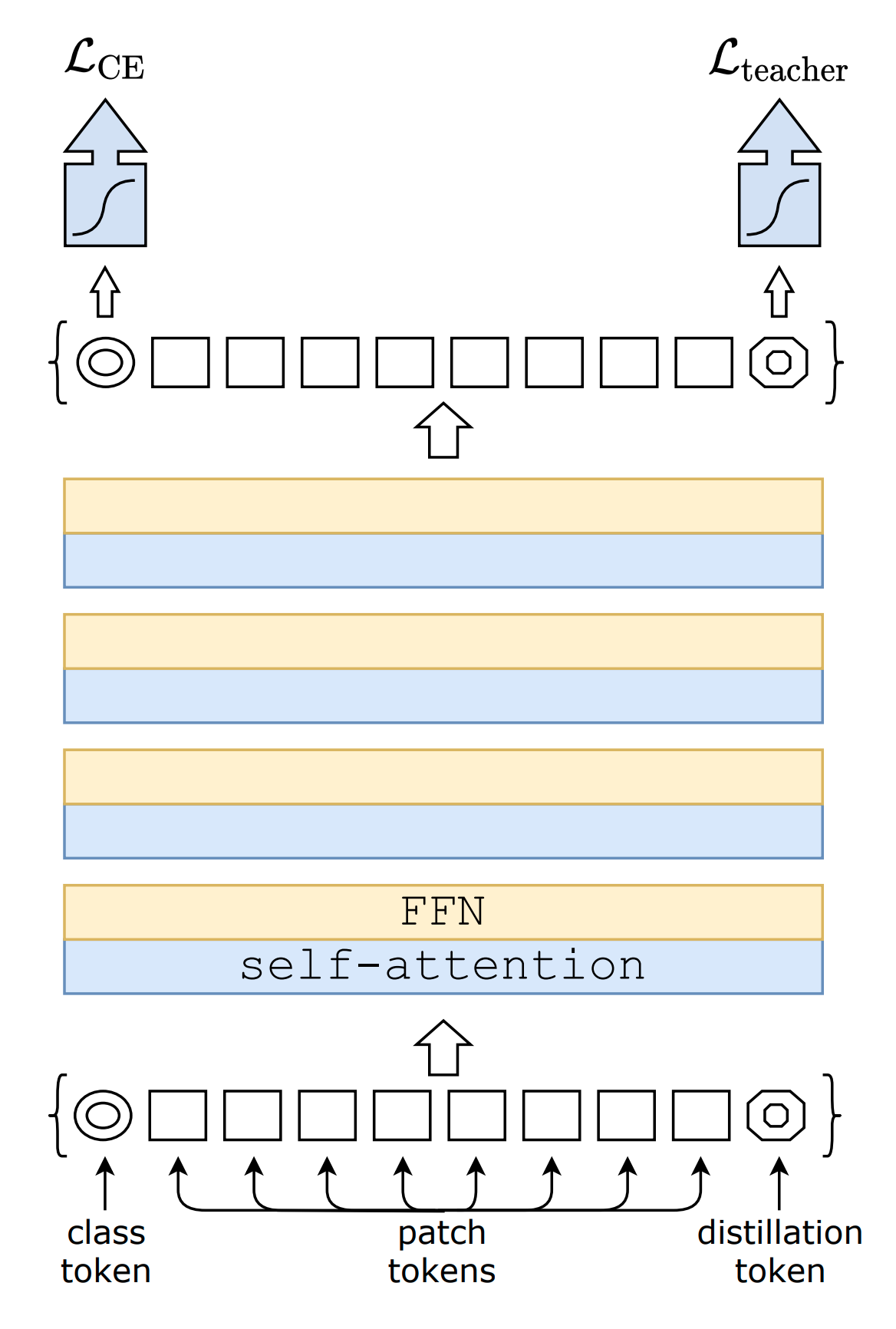

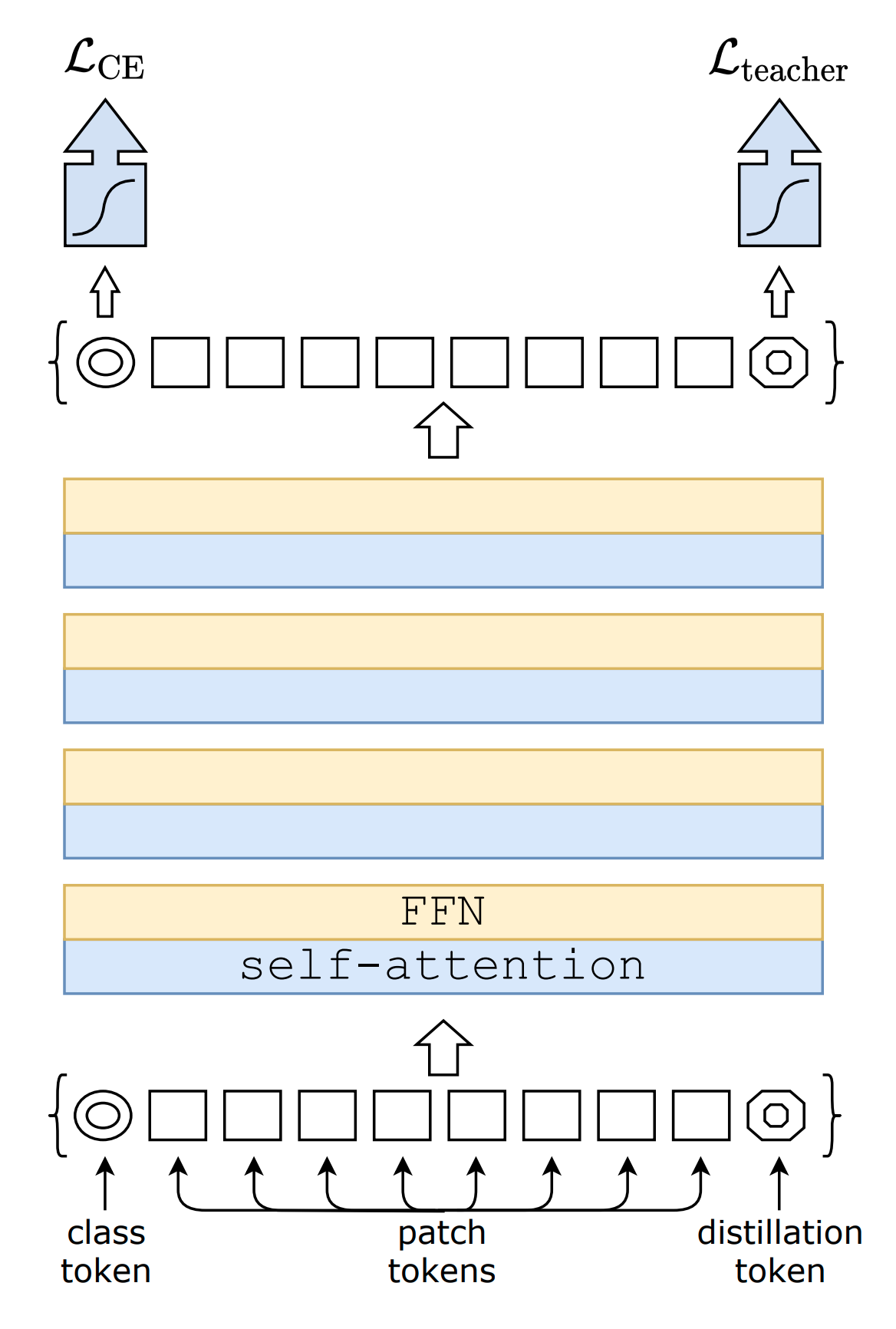

# deit_base_patch16_224

Implementation of DeiT proposed in [Training data-efficient image transformers & distillation through attention](https://arxiv.org/abs/2012.12877)

An attention-based distillation is proposed in which a new token, the `dist` token, is added to the model.

```python

DeiT.deit_tiny_patch16_224()

DeiT.deit_small_patch16_224()

DeiT.deit_base_patch16_224()

DeiT.deit_base_patch16_384()

```
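To make the role of the `dist` token concrete: the transformer sees one token per image patch plus the class token, and DeiT appends the distillation token on top. The arithmetic below is a sketch of that count; `sequence_length` is a hypothetical helper, and it assumes square images whose side is divisible by the patch size.

```python
def sequence_length(image_size: int, patch_size: int, distilled: bool = True) -> int:
    """Tokens fed to the transformer: patches + class token (+ distillation token)."""
    num_patches = (image_size // patch_size) ** 2
    extra_tokens = 2 if distilled else 1  # [class] and, for DeiT, [dist]
    return num_patches + extra_tokens


for name, size in [("deit_base_patch16_224", 224), ("deit_base_patch16_384", 384)]:
    print(name, sequence_length(size, 16))
# deit_base_patch16_224 198
# deit_base_patch16_384 578
```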

|

glasses/deit_small_patch16_224

|

glasses

| 2021-04-22T18:44:25Z | 2 | 0 |

transformers

|

[

"transformers",

"pytorch",

"arxiv:2010.11929",

"endpoints_compatible",

"region:us"

] | null | 2022-03-02T23:29:05Z |

# deit_small_patch16_224

Implementation of DeiT proposed in [Training data-efficient image transformers & distillation through attention](https://arxiv.org/abs/2012.12877)

An attention-based distillation is proposed in which a new token, the `dist` token, is added to the model.

```python

DeiT.deit_tiny_patch16_224()

DeiT.deit_small_patch16_224()

DeiT.deit_base_patch16_224()

DeiT.deit_base_patch16_384()

```

|

glasses/deit_tiny_patch16_224

|

glasses

| 2021-04-22T18:44:18Z | 3 | 0 |

transformers

|

[

"transformers",

"pytorch",

"arxiv:2010.11929",

"endpoints_compatible",

"region:us"

] | null | 2022-03-02T23:29:05Z |

# deit_tiny_patch16_224

Implementation of DeiT proposed in [Training data-efficient image transformers & distillation through attention](https://arxiv.org/abs/2012.12877)

An attention-based distillation is proposed in which a new token, the `dist` token, is added to the model.

```python

DeiT.deit_tiny_patch16_224()

DeiT.deit_small_patch16_224()

DeiT.deit_base_patch16_224()

DeiT.deit_base_patch16_384()

```

|

glasses/vit_large_patch16_384

|

glasses

| 2021-04-22T18:43:25Z | 2 | 0 |

transformers

|

[

"transformers",

"pytorch",

"arxiv:2010.11929",

"endpoints_compatible",

"region:us"

] | null | 2022-03-02T23:29:05Z |

# vit_large_patch16_384

Implementation of Vision Transformer (ViT) proposed in [An Image Is Worth 16x16 Words: Transformers For Image Recognition At Scale](https://arxiv.org/pdf/2010.11929.pdf)

The following image from the authors shows the architecture.

``` python

ViT.vit_small_patch16_224()

ViT.vit_base_patch16_224()

ViT.vit_base_patch16_384()

ViT.vit_base_patch32_384()

ViT.vit_huge_patch16_224()

ViT.vit_huge_patch32_384()

ViT.vit_large_patch16_224()

ViT.vit_large_patch16_384()

ViT.vit_large_patch32_384()

```

Examples:

``` python

import torch
import torch.nn as nn
# ViT and ViTTokens come from the glasses library; MyCoolTransformerBlock is a placeholder for your own block

# change activation
ViT.vit_base_patch16_224(activation=nn.SELU)
# change number of classes (default is 1000)
ViT.vit_base_patch16_224(n_classes=100)
# pass a different block, default is TransformerEncoderBlock
ViT.vit_base_patch16_224(block=MyCoolTransformerBlock)
# get features
model = ViT.vit_base_patch16_224()
# first access .features: this activates the forward hooks and tells the model you'd like to get the features
model.encoder.features
model(torch.randn((1, 3, 224, 224)))
# get the features from the encoder
features = model.encoder.features
print([x.shape for x in features])
# [torch.Size([1, 197, 768]), torch.Size([1, 197, 768]), ...]

# change the tokens, you have to subclass ViTTokens
class MyTokens(ViTTokens):
    def __init__(self, emb_size: int):
        super().__init__(emb_size)
        self.my_new_token = nn.Parameter(torch.randn(1, 1, emb_size))

ViT(tokens=MyTokens)

```
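The `197` in the printed feature shapes comes from the patch grid: a 224x224 image cut into 16x16 patches gives 14 x 14 = 196 patch tokens, plus one class token. The sketch derives the sequence length from a constructor name; it assumes the `vit_<size>_patch<P>_<resolution>` naming used above and a single class token, and `num_tokens` is a hypothetical helper.

```python
def num_tokens(model_name: str) -> int:
    """Sequence length (patches + class token) implied by a ViT constructor name."""
    _, patch_part, resolution = model_name.rsplit("_", 2)
    patch_size = int(patch_part[len("patch"):])
    grid = int(resolution) // patch_size  # patches per side
    return grid * grid + 1  # +1 for the class token


for name in ["vit_base_patch16_224", "vit_large_patch16_384", "vit_huge_patch32_384"]:
    print(name, num_tokens(name))
# vit_base_patch16_224 197
# vit_large_patch16_384 577
# vit_huge_patch32_384 145
```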

|

glasses/vit_huge_patch32_384

|

glasses

| 2021-04-22T18:41:37Z | 6 | 0 |

transformers

|

[

"transformers",

"pytorch",

"arxiv:2010.11929",

"endpoints_compatible",

"region:us"

] | null | 2022-03-02T23:29:05Z |

# vit_huge_patch32_384

Implementation of Vision Transformer (ViT) proposed in [An Image Is Worth 16x16 Words: Transformers For Image Recognition At Scale](https://arxiv.org/pdf/2010.11929.pdf)

The following image from the authors shows the architecture.

``` python

ViT.vit_small_patch16_224()

ViT.vit_base_patch16_224()

ViT.vit_base_patch16_384()

ViT.vit_base_patch32_384()

ViT.vit_huge_patch16_224()

ViT.vit_huge_patch32_384()

ViT.vit_large_patch16_224()

ViT.vit_large_patch16_384()

ViT.vit_large_patch32_384()

```

Examples:

``` python

import torch
import torch.nn as nn
# ViT and ViTTokens come from the glasses library; MyCoolTransformerBlock is a placeholder for your own block

# change activation
ViT.vit_base_patch16_224(activation=nn.SELU)
# change number of classes (default is 1000)
ViT.vit_base_patch16_224(n_classes=100)
# pass a different block, default is TransformerEncoderBlock
ViT.vit_base_patch16_224(block=MyCoolTransformerBlock)
# get features
model = ViT.vit_base_patch16_224()
# first access .features: this activates the forward hooks and tells the model you'd like to get the features
model.encoder.features
model(torch.randn((1, 3, 224, 224)))
# get the features from the encoder
features = model.encoder.features
print([x.shape for x in features])
# [torch.Size([1, 197, 768]), torch.Size([1, 197, 768]), ...]

# change the tokens, you have to subclass ViTTokens
class MyTokens(ViTTokens):
    def __init__(self, emb_size: int):
        super().__init__(emb_size)
        self.my_new_token = nn.Parameter(torch.randn(1, 1, emb_size))

ViT(tokens=MyTokens)

```

|

glasses/vit_huge_patch16_224

|

glasses

| 2021-04-22T18:39:36Z | 3 | 0 |

transformers

|

[

"transformers",

"pytorch",

"arxiv:2010.11929",

"endpoints_compatible",

"region:us"

] | null | 2022-03-02T23:29:05Z |

# vit_huge_patch16_224

Implementation of Vision Transformer (ViT) proposed in [An Image Is Worth 16x16 Words: Transformers For Image Recognition At Scale](https://arxiv.org/pdf/2010.11929.pdf)

The following image from the authors shows the architecture.

``` python

ViT.vit_small_patch16_224()

ViT.vit_base_patch16_224()

ViT.vit_base_patch16_384()

ViT.vit_base_patch32_384()

ViT.vit_huge_patch16_224()

ViT.vit_huge_patch32_384()

ViT.vit_large_patch16_224()

ViT.vit_large_patch16_384()

ViT.vit_large_patch32_384()

```

Examples:

``` python

import torch
import torch.nn as nn
# ViT and ViTTokens come from the glasses library; MyCoolTransformerBlock is a placeholder for your own block

# change activation
ViT.vit_base_patch16_224(activation=nn.SELU)
# change number of classes (default is 1000)
ViT.vit_base_patch16_224(n_classes=100)
# pass a different block, default is TransformerEncoderBlock
ViT.vit_base_patch16_224(block=MyCoolTransformerBlock)
# get features
model = ViT.vit_base_patch16_224()
# first access .features: this activates the forward hooks and tells the model you'd like to get the features
model.encoder.features
model(torch.randn((1, 3, 224, 224)))
# get the features from the encoder
features = model.encoder.features
print([x.shape for x in features])
# [torch.Size([1, 197, 768]), torch.Size([1, 197, 768]), ...]

# change the tokens, you have to subclass ViTTokens
class MyTokens(ViTTokens):
    def __init__(self, emb_size: int):
        super().__init__(emb_size)
        self.my_new_token = nn.Parameter(torch.randn(1, 1, emb_size))

ViT(tokens=MyTokens)

```

|

k948181/ybdH-1

|

k948181

| 2021-04-22T13:34:20Z | 0 | 0 | null |

[

"region:us"

] | null | 2022-03-02T23:29:05Z |

>tr|Q8ZR27|Q8ZR27_SALTY Putative glycerol dehydrogenase OS=Salmonella typhimurium (strain LT2 / SGSC1412 / ATCC 700720) OX=99287 GN=ybdH PE=3 SV=1

MNHTEIRVVTGPANYFSHAGSLERLTDFFTPEQLSHAVWVYGERAIAAARPYLPEAFERA

GAKHLPFTGHCSERHVAQLAHACNDDRQVVIGVGGGALLDTAKALARRLALPFVAIPTIA

ATCAAWTPLSVWYNDAGQALQFEIFDDANFLVLVEPRIILQAPDDYLLAGIGDTLAKWYE

AVVLAPQPETLPLTVRLGINSACAIRDLLLDSSEQALADKQQRRLTQAFCDVVDAIIAGG

GMVGGLGERYTRVAAAHAVHNGLTVLPQTEKFLHGTKVAYGILVQSALLGQDDVLAQLIT

AYRRFHLPARLSELDVDIHNTAEIDRVIAHTLRPVESIHYLPVTLTPDTLRAAFEKVEFF

RI

|

glasses/dummy

|

glasses

| 2021-04-21T18:24:15Z | 3 | 0 |

transformers

|

[

"transformers",

"pytorch",

"arxiv:1512.03385",

"arxiv:1812.01187",

"endpoints_compatible",

"region:us"

] | null | 2022-03-02T23:29:05Z |

# ResNet

Implementation of ResNet proposed in [Deep Residual Learning for Image Recognition](https://arxiv.org/abs/1512.03385)

``` python

from functools import partial
# ResNet, ResNetStemC, ResNetBottleneckBlock and ResNetShorcutD come from the glasses library

ResNet.resnet18()
ResNet.resnet26()
ResNet.resnet34()
ResNet.resnet50()
ResNet.resnet101()
ResNet.resnet152()
ResNet.resnet200()
# Variants (d) proposed in "Bag of Tricks for Image Classification with Convolutional Neural Networks" (https://arxiv.org/pdf/1812.01187.pdf)
ResNet.resnet26d()
ResNet.resnet34d()
ResNet.resnet50d()
# You can construct your own one by changing `stem` and `block`
resnet101d = ResNet.resnet101(stem=ResNetStemC, block=partial(ResNetBottleneckBlock, shortcut=ResNetShorcutD))

```

Examples:

``` python

import torch
import torch.nn as nn
from functools import partial
# ResNet, ResNetStemC, ResNetBasicBlock, ResNetShorcutD and SENetBasicBlock come from the glasses library

# change activation
ResNet.resnet18(activation=nn.SELU)
# change number of classes (default is 1000)
ResNet.resnet18(n_classes=100)
# pass a different block
ResNet.resnet18(block=SENetBasicBlock)
# change the stem
model = ResNet.resnet18(stem=ResNetStemC)
# change shortcut
model = ResNet.resnet18(block=partial(ResNetBasicBlock, shortcut=ResNetShorcutD))
# get features
model = ResNet.resnet18()
x = torch.rand((1, 3, 224, 224))
# first access .features: this activates the forward hooks and tells the model you'd like to get the features
model.encoder.features
model(x)
# get the features from the encoder
features = model.encoder.features
print([x.shape for x in features])
# [torch.Size([1, 64, 112, 112]), torch.Size([1, 64, 56, 56]), torch.Size([1, 128, 28, 28]), torch.Size([1, 256, 14, 14])]

```
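The spatial sizes in the printed features can be derived from the standard convolution output-size formula, floor((n + 2p - k) / s) + 1. The sketch assumes the classic ResNet stem (a 7x7 convolution with stride 2 and padding 3, then 3x3 max-pooling with stride 2 and padding 1) and a stride-2 3x3 convolution with padding 1 at the start of each later stage; glasses' alternative stems such as `ResNetStemC` may use different layers. `conv_output_size` is a hypothetical helper.

```python
def conv_output_size(size: int, kernel: int, stride: int = 1, padding: int = 0) -> int:
    """floor((size + 2 * padding - kernel) / stride) + 1"""
    return (size + 2 * padding - kernel) // stride + 1


size = 224
stem = conv_output_size(size, kernel=7, stride=2, padding=3)      # 112
pooled = conv_output_size(stem, kernel=3, stride=2, padding=1)    # 56
stage2 = conv_output_size(pooled, kernel=3, stride=2, padding=1)  # 28
stage3 = conv_output_size(stage2, kernel=3, stride=2, padding=1)  # 14
print(stem, pooled, stage2, stage3)
```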

|

ahmedabdelali/bert-base-qarib_far_6500k

|

ahmedabdelali

| 2021-04-21T13:41:11Z | 9 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tf",

"QARiB",

"qarib",

"ar",

"dataset:arabic_billion_words",

"dataset:open_subtitles",

"dataset:twitter",

"dataset:Farasa",

"arxiv:2102.10684",

"endpoints_compatible",

"region:us"

] | null | 2022-03-02T23:29:05Z |

---

language: ar

tags:

- pytorch

- tf

- QARiB

- qarib

datasets:

- arabic_billion_words

- open_subtitles

- twitter

- Farasa

metrics:

- f1

widget:

- text: "و+قام ال+مدير [MASK]"

---

# QARiB: QCRI Arabic and Dialectal BERT

## About QARiB Farasa

QCRI Arabic and Dialectal BERT (QARiB) was trained on a collection of ~420 million tweets and ~180 million sentences of text.

The tweets were collected with the Twitter API using the language filter `lang:ar`. The text data was a combination of Arabic GigaWord, the Abulkhair Arabic Corpus and [OPUS](http://opus.nlpl.eu/).

QARiB is the Arabic word for "boat".

## Model and Parameters:

- Data size: 14B tokens

- Vocabulary: 64k

- Iterations: 10M

- Number of Layers: 12

## Training QARiB

See details in [Training QARiB](https://github.com/qcri/QARIB/Training_QARiB.md)

## Using QARiB

You can use the raw model for either masked language modeling or next sentence prediction, but it's mostly intended to be fine-tuned on a downstream task. See the model hub to look for fine-tuned versions on a task that interests you. For more details, see [Using QARiB](https://github.com/qcri/QARIB/Using_QARiB.md)

This model expects the data to be segmented. You may use the [Farasa Segmenter](https://farasa-api.qcri.org/segmentation/) API.
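The widget and pipeline examples show the expected input format: segment boundaries inside a word are marked with `+` (for example `و+قام`). The helpers below only inspect or undo that marking; they do not segment raw text, which still requires Farasa, and their names are illustrative.

```python
def segments(text: str) -> list[list[str]]:
    """Split '+'-segmented text into words, and each word into its segments."""
    return [word.split("+") for word in text.split()]


def desegment(text: str) -> str:
    """Remove the '+' markers to recover the surface words."""
    return " ".join("".join(parts) for parts in segments(text))


example = "و+قام ال+مدير"
print(segments(example))
print(desegment(example))
```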

### How to use

You can use this model directly with a pipeline for masked language modeling:

```python

from transformers import pipeline

# Hub ID from the download section; a local copy such as "./models/bert-base-qarib_far" also works
fill_mask = pipeline("fill-mask", model="qarib/bert-base-qarib_far")

print(fill_mask("و+قام ال+مدير [MASK]"))
print(fill_mask("و+قام+ت ال+مدير+ة [MASK]"))
print(fill_mask("قللي وشفيييك يرحم [MASK]"))

```

## Evaluations:

|**Experiment** |**mBERT**|**AraBERT0.1**|**AraBERT1.0**|**ArabicBERT**|**QARiB**|
|---------------|---------|--------------|--------------|--------------|---------|
|Dialect Identification | 6.06% | 59.92% | 59.85% | 61.70% | **65.21%** |
|Emotion Detection | 27.90% | 43.89% | 42.37% | 41.65% | **44.35%** |
|Named-Entity Recognition (NER) | 49.38% | 64.97% | **66.63%** | 64.04% | 61.62% |
|Offensive Language Detection | 83.14% | 88.07% | 88.97% | 88.19% | **91.94%** |
|Sentiment Analysis | 86.61% | 90.80% | **93.58%** | 83.27% | 93.31% |

## Model Weights and Vocab Download

From the Hugging Face Hub: https://huggingface.co/qarib/bert-base-qarib_far

## Contacts

Ahmed Abdelali, Sabit Hassan, Hamdy Mubarak, Kareem Darwish and Younes Samih

## Reference

```

@article{abdelali2021pretraining,

title={Pre-Training BERT on Arabic Tweets: Practical Considerations},

author={Ahmed Abdelali and Sabit Hassan and Hamdy Mubarak and Kareem Darwish and Younes Samih},

year={2021},

eprint={2102.10684},

archivePrefix={arXiv},

primaryClass={cs.CL}

}

```

|

ahmedabdelali/bert-base-qarib_far_8280k

|

ahmedabdelali

| 2021-04-21T13:40:36Z | 20 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tf",

"QARiB",

"qarib",

"ar",

"dataset:arabic_billion_words",

"dataset:open_subtitles",

"dataset:twitter",

"dataset:Farasa",

"arxiv:2102.10684",

"endpoints_compatible",

"region:us"

] | null | 2022-03-02T23:29:05Z |

---

language: ar

tags:

- pytorch

- tf

- QARiB

- qarib

datasets:

- arabic_billion_words

- open_subtitles

- twitter

- Farasa

metrics:

- f1

widget:

- text: "و+قام ال+مدير [MASK]"

---

# QARiB: QCRI Arabic and Dialectal BERT

## About QARiB Farasa

QCRI Arabic and Dialectal BERT (QARiB) was trained on a collection of ~420 million tweets and ~180 million sentences of text.

The tweets were collected with the Twitter API using the language filter `lang:ar`. The text data was a combination of Arabic GigaWord, the Abulkhair Arabic Corpus and [OPUS](http://opus.nlpl.eu/).

QARiB is the Arabic word for "boat".

## Model and Parameters:

- Data size: 14B tokens

- Vocabulary: 64k

- Iterations: 10M

- Number of Layers: 12

## Training QARiB

See details in [Training QARiB](https://github.com/qcri/QARIB/Training_QARiB.md)

## Using QARiB

You can use the raw model for either masked language modeling or next sentence prediction, but it's mostly intended to be fine-tuned on a downstream task. See the model hub to look for fine-tuned versions on a task that interests you. For more details, see [Using QARiB](https://github.com/qcri/QARIB/Using_QARiB.md)

This model expects the data to be segmented. You may use the [Farasa Segmenter](https://farasa-api.qcri.org/segmentation/) API.

### How to use

You can use this model directly with a pipeline for masked language modeling:

```python

from transformers import pipeline

# Hub ID from the download section; a local copy such as "./models/bert-base-qarib_far" also works
fill_mask = pipeline("fill-mask", model="qarib/bert-base-qarib_far")

print(fill_mask("و+قام ال+مدير [MASK]"))
print(fill_mask("و+قام+ت ال+مدير+ة [MASK]"))
print(fill_mask("قللي وشفيييك يرحم [MASK]"))

```

## Evaluations:

|**Experiment** |**mBERT**|**AraBERT0.1**|**AraBERT1.0**|**ArabicBERT**|**QARiB**|
|---------------|---------|--------------|--------------|--------------|---------|
|Dialect Identification | 6.06% | 59.92% | 59.85% | 61.70% | **65.21%** |
|Emotion Detection | 27.90% | 43.89% | 42.37% | 41.65% | **44.35%** |
|Named-Entity Recognition (NER) | 49.38% | 64.97% | **66.63%** | 64.04% | 61.62% |
|Offensive Language Detection | 83.14% | 88.07% | 88.97% | 88.19% | **91.94%** |
|Sentiment Analysis | 86.61% | 90.80% | **93.58%** | 83.27% | 93.31% |

## Model Weights and Vocab Download

From the Hugging Face Hub: https://huggingface.co/qarib/bert-base-qarib_far

## Contacts

Ahmed Abdelali, Sabit Hassan, Hamdy Mubarak, Kareem Darwish and Younes Samih

## Reference

```

@article{abdelali2021pretraining,

title={Pre-Training BERT on Arabic Tweets: Practical Considerations},

author={Ahmed Abdelali and Sabit Hassan and Hamdy Mubarak and Kareem Darwish and Younes Samih},

year={2021},

eprint={2102.10684},

archivePrefix={arXiv},

primaryClass={cs.CL}

}

```

|

ahmedabdelali/bert-base-qarib_far_9920k

|

ahmedabdelali

| 2021-04-21T13:38:28Z | 5 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tf",

"QARiB",

"qarib",

"ar",

"dataset:arabic_billion_words",

"dataset:open_subtitles",

"dataset:twitter",

"dataset:Farasa",

"arxiv:2102.10684",

"endpoints_compatible",

"region:us"

] | null | 2022-03-02T23:29:05Z |

---

language: ar

tags:

- pytorch

- tf

- QARiB

- qarib

datasets:

- arabic_billion_words

- open_subtitles

- twitter

- Farasa

metrics:

- f1

widget:

- text: "و+قام ال+مدير [MASK]"

---

# QARiB: QCRI Arabic and Dialectal BERT

## About QARiB Farasa

QCRI Arabic and Dialectal BERT (QARiB) was trained on a collection of ~420 million tweets and ~180 million sentences of text.

The tweets were collected with the Twitter API using the language filter `lang:ar`. The text data was a combination of Arabic GigaWord, the Abulkhair Arabic Corpus and [OPUS](http://opus.nlpl.eu/).

QARiB is the Arabic word for "boat".

## Model and Parameters:

- Data size: 14B tokens

- Vocabulary: 64k

- Iterations: 10M

- Number of Layers: 12

## Training QARiB

See details in [Training QARiB](https://github.com/qcri/QARIB/Training_QARiB.md)

## Using QARiB

You can use the raw model for either masked language modeling or next sentence prediction, but it's mostly intended to be fine-tuned on a downstream task. See the model hub to look for fine-tuned versions on a task that interests you. For more details, see [Using QARiB](https://github.com/qcri/QARIB/Using_QARiB.md)

This model expects the data to be segmented. You may use the [Farasa Segmenter](https://farasa-api.qcri.org/segmentation/) API.

### How to use

You can use this model directly with a pipeline for masked language modeling:

```python

from transformers import pipeline

# Hub ID from the download section; a local copy such as "./models/bert-base-qarib_far" also works
fill_mask = pipeline("fill-mask", model="qarib/bert-base-qarib_far")

print(fill_mask("و+قام ال+مدير [MASK]"))
print(fill_mask("و+قام+ت ال+مدير+ة [MASK]"))
print(fill_mask("قللي وشفيييك يرحم [MASK]"))

```

## Evaluations:

|**Experiment** |**mBERT**|**AraBERT0.1**|**AraBERT1.0**|**ArabicBERT**|**QARiB**|
|---------------|---------|--------------|--------------|--------------|---------|
|Dialect Identification | 6.06% | 59.92% | 59.85% | 61.70% | **65.21%** |
|Emotion Detection | 27.90% | 43.89% | 42.37% | 41.65% | **44.35%** |
|Named-Entity Recognition (NER) | 49.38% | 64.97% | **66.63%** | 64.04% | 61.62% |
|Offensive Language Detection | 83.14% | 88.07% | 88.97% | 88.19% | **91.94%** |
|Sentiment Analysis | 86.61% | 90.80% | **93.58%** | 83.27% | 93.31% |

## Model Weights and Vocab Download

From the Hugging Face Hub: https://huggingface.co/qarib/bert-base-qarib_far

## Contacts

Ahmed Abdelali, Sabit Hassan, Hamdy Mubarak, Kareem Darwish and Younes Samih

## Reference

```

@article{abdelali2021pretraining,

title={Pre-Training BERT on Arabic Tweets: Practical Considerations},

author={Ahmed Abdelali and Sabit Hassan and Hamdy Mubarak and Kareem Darwish and Younes Samih},

year={2021},

eprint={2102.10684},

archivePrefix={arXiv},

primaryClass={cs.CL}

}

```

|

stas/t5-very-small-random

|

stas

| 2021-04-21T02:34:01Z | 5 | 0 |

transformers

|

[

"transformers",

"pytorch",

"t5",

"text2text-generation",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text2text-generation

| 2022-03-02T23:29:05Z |

This is a tiny, randomly initialized T5 model used for testing.

See `t5-make-very-small-model.py` for how it was created.

|

castorini/ance-dpr-question-multi

|

castorini

| 2021-04-21T01:36:24Z | 143 | 1 |

transformers

|

[

"transformers",

"pytorch",

"dpr",

"feature-extraction",

"arxiv:2007.00808",

"endpoints_compatible",

"region:us"

] |

feature-extraction

| 2022-03-02T23:29:05Z |

This model is converted from the original ANCE [repo](https://github.com/microsoft/ANCE) and fitted into Pyserini:

> Lee Xiong, Chenyan Xiong, Ye Li, Kwok-Fung Tang, Jialin Liu, Paul Bennett, Junaid Ahmed, Arnold Overwijk. [Approximate Nearest Neighbor Negative Contrastive Learning for Dense Text Retrieval](https://arxiv.org/pdf/2007.00808.pdf)

For more details on how to use it, check our experiments in [Pyserini](https://github.com/castorini/pyserini/blob/master/docs/experiments-ance.md)
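ANCE is a dense retriever: queries and passages are encoded into vectors, and passages are ranked by their inner product with the query vector. The toy sketch below shows only that scoring step, with hand-written vectors standing in for encoder outputs (an assumption for illustration); real systems search a prebuilt, often approximate, nearest-neighbour index instead of this exhaustive loop. The helper names are hypothetical.

```python
def dot(u, v):
    """Inner product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))


def rank_passages(query_vec, passage_vecs, k=10):
    """Indices of the k passages with the highest inner-product score, best first."""
    scores = [dot(query_vec, p) for p in passage_vecs]
    order = sorted(range(len(passage_vecs)), key=lambda i: scores[i], reverse=True)
    return order[:k]


query = [1.0, 0.0]
passages = [[0.0, 1.0], [2.0, 0.0], [1.0, 1.0]]
print(rank_passages(query, passages, k=2))  # [1, 2]
```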

|

Davlan/mT5_base_yoruba_adr

|

Davlan

| 2021-04-20T21:16:26Z | 24 | 0 |

transformers

|

[

"transformers",

"pytorch",

"mt5",

"text2text-generation",

"arxiv:2003.10564",

"arxiv:2103.08647",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text2text-generation

| 2022-03-02T23:29:04Z |


---

language: yo

datasets:

- JW300 + [Menyo-20k](https://huggingface.co/datasets/menyo20k_mt)

---

# mT5_base_yoruba_adr

## Model description

**mT5_base_yoruba_adr** is an **automatic diacritics restoration** (ADR) model for the Yorùbá language, based on a fine-tuned mT5-base model. It achieves **state-of-the-art performance** at adding the correct diacritics or tonal marks to Yorùbá texts.

Specifically, this model is an *mT5_base* model that was fine-tuned on the JW300 Yorùbá corpus and [Menyo-20k](https://huggingface.co/datasets/menyo20k_mt).

## Intended uses & limitations

#### How to use

You can use this model with the Transformers `text2text-generation` *pipeline* for ADR.

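```python
from transformers import AutoTokenizer, AutoModelForSeq2SeqLM, pipeline

# mT5 is an encoder-decoder model, so it is loaded as a seq2seq LM, not a token classifier
model_name = "Davlan/mT5_base_yoruba_adr"
tokenizer = AutoTokenizer.from_pretrained(model_name)
model = AutoModelForSeq2SeqLM.from_pretrained(model_name)

# Text-to-text pipeline: undiacritized Yorùbá in, diacritized Yorùbá out
adr = pipeline("text2text-generation", model=model, tokenizer=tokenizer)

# Illustrative input without diacritics ("Ẹ kú àárọ̀", i.e. "good morning")
example = "E ku aaro"
results = adr(example, max_length=64)
print(results[0]["generated_text"])
```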
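A convenient way to test the model is to strip the diacritics from correctly marked Yorùbá text and compare the model's output with the original. The sketch below does this stripping with Python's standard `unicodedata` module. `strip_diacritics` and `make_adr_pair` are hypothetical helpers. The sketch also assumes the model expects fully undiacritized input (tone marks and under-dots both removed), which the card does not state.

```python
import unicodedata
from typing import Tuple


def strip_diacritics(text: str) -> str:
    """Remove tone marks and under-dots from Yorùbá text.

    NFD decomposition splits a character such as 'ọ̀' into the base letter 'o'
    plus combining marks (U+0323 dot below, U+0300 grave accent); dropping every
    combining mark leaves only the bare letters.
    """
    decomposed = unicodedata.normalize("NFD", text)
    bare = "".join(ch for ch in decomposed if not unicodedata.combining(ch))
    return unicodedata.normalize("NFC", bare)


def make_adr_pair(reference: str) -> Tuple[str, str]:
    """Hypothetical helper: (undiacritized model input, diacritized target)."""
    return strip_diacritics(reference), unicodedata.normalize("NFC", reference)


source, target = make_adr_pair("Ẹ kú àárọ̀")
print(source)  # E ku aaro
```

The `source` string would then be passed to the pipeline, and its output compared against `target`.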

#### Limitations and bias

This model is limited by its training data, the JW300 Yorùbá corpus (drawn largely from religious publications) and Menyo-20k. It may not generalize well to all use cases in other domains.

## Training data

This model was fine-tuned on the JW300 Yorùbá corpus and the [Menyo-20k](https://huggingface.co/datasets/menyo20k_mt) dataset.

## Training procedure

This model was trained on a single NVIDIA V100 GPU.

## Evaluation results on test sets (BLEU score)

64.63 BLEU on [Global Voices test set](https://arxiv.org/abs/2003.10564)

70.27 BLEU on [Menyo-20k test set](https://arxiv.org/abs/2103.08647)
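Both scores above are BLEU scores. For reference, the standard corpus-level definition (Papineni et al., 2002) is given below. The symbols are:

- $p_n$: the modified n-gram precision.
- $w_n$: the n-gram weights, usually $w_n = 1/N$ with $N = 4$.
- $c$: the total candidate length.
- $r$: the effective reference length.

Scores are conventionally multiplied by 100. The card does not say which BLEU implementation or tokenization produced the numbers above.

$$
\mathrm{BLEU} = \mathrm{BP}\cdot\exp\left(\sum_{n=1}^{N} w_n \log p_n\right),
\qquad
\mathrm{BP} =
\begin{cases}
1 & \text{if } c > r,\\
e^{\,1 - r/c} & \text{if } c \le r.
\end{cases}
$$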

### BibTeX entry and citation info

By Jesujoba Alabi and David Adelani


|

moha/arabert_arabic_covid19

|

moha

| 2021-04-20T06:15:12Z | 0 | 0 | null |

[

"ar",

"arxiv:2004.04315",

"region:us"

] | null | 2022-03-02T23:29:05Z |

---

language: ar

widget:

- text: "للوقايه من عدم انتشار [MASK]"

---

# arabert_c19: An AraBERT model pretrained on 1.5 million COVID-19 multi-dialect Arabic tweets

**ARABERT COVID-19** is a version of the AraBERT v2 model (https://huggingface.co/aubmindlab/bert-base-arabertv02) that was further pretrained on 1.5 million multi-dialect Arabic tweets about the COVID-19 pandemic. The tweets come from the “Large Arabic Twitter Dataset on COVID-19” (https://arxiv.org/abs/2004.04315).

On tasks involving multi-dialect Arabic tweets about the COVID-19 pandemic, the model can achieve better results than general-domain models such as AraBERT and mBERT.

# Classification results for multiple tasks, including fake-news and hate-speech detection, using arabert_c19 and mbert_ar_c19

For more details, refer to the paper (link).

| Task | arabert | mbert | distilbert multi | arabert Covid-19 | mbert Covid-19 |
|------------------------------------|----------|----------|------------------|------------------|----------------|
| Contains hate (Binary) | 0.8346 | 0.6675 | 0.7145 | **0.8649** | 0.8492 |
| Talk about a cure (Binary) | 0.8193 | 0.7406 | 0.7127 | 0.9055 | **0.9176** |
| News or opinion (Binary) | 0.8987 | 0.8332 | 0.8099 | **0.9163** | 0.9116 |
| Contains fake information (Binary) | 0.6415 | 0.5428 | 0.4743 | **0.7739** | 0.7228 |

# Preprocessing

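```python
# Requires the `arabert` package: pip install arabert
from arabert.preprocess import ArabertPreprocessor

model_name = "moha/arabert_arabic_covid19"
arabert_prep = ArabertPreprocessor(model_name=model_name)

# Example tweet (English: "To prevent the spread of corona, you must first wash your hands
# with water and soap; the washing should be thorough and cover the palm and the fingers,
# focusing on the thumb")
text = "للوقايه من عدم انتشار كورونا عليك اولا غسل اليدين بالماء والصابون وتكون عملية الغسل دقيقه تشمل راحة اليد الأصابع التركيز على الإبهام"

# Returns the normalized text to feed to the model's tokenizer
print(arabert_prep.preprocess(text))
```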

# Contacts

**Hadj Ameur**: [Github](https://github.com/MohamedHadjAmeur) | <mohamedhadjameur@gmail.com> | <mhadjameur@cerist.dz>

|

Pollawat/mt5-small-thai-qa-qg

|

Pollawat

| 2021-04-19T14:52:22Z | 38 | 4 |

transformers

|

[

"transformers",

"pytorch",

"mt5",

"text2text-generation",

"question-generation",

"question-answering",

"dataset:NSC2018",

"dataset:iapp-wiki-qa-dataset",

"dataset:XQuAD",

"license:mit",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

question-answering

| 2022-03-02T23:29:04Z |

---

tags:

- question-generation

- question-answering

language:

- thai

- th

datasets:

- NSC2018

- iapp-wiki-qa-dataset

- XQuAD

license: mit

---

[Google's mT5](https://github.com/google-research/multilingual-t5)

This is a model for generating questions from Thai texts. It was fine-tuned on the NSC2018 corpus.

```python

from transformers import MT5Tokenizer, MT5ForConditionalGeneration

tokenizer = MT5Tokenizer.from_pretrained("Pollawat/mt5-small-thai-qa-qg")

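model = MT5ForConditionalGeneration.from_pretrained("Pollawat/mt5-small-thai-qa-qg")

# Example passage (English gist: Bangkok is the capital and most populous city of Thailand and the
# country's center of government, education, transport, finance, commerce and communication. It has
# the world's longest city name, lies on the Chao Phraya River delta and is split by the river into
# the Phra Nakhon and Thonburi sides. It covers 1,568.737 sq km and has over 5 million registered residents.)
text = "กรุงเทพมหานคร เป็นเมืองหลวงและนครที่มีประชากรมากที่สุดของประเทศไทย เป็นศูนย์กลางการปกครอง การศึกษา การคมนาคมขนส่ง การเงินการธนาคาร การพาณิชย์ การสื่อสาร และความเจริญของประเทศ เป็นเมืองที่มีชื่อยาวที่สุดในโลก ตั้งอยู่บนสามเหลี่ยมปากแม่น้ำเจ้าพระยา มีแม่น้ำเจ้าพระยาไหลผ่านและแบ่งเมืองออกเป็น 2 ฝั่ง คือ ฝั่งพระนครและฝั่งธนบุรี กรุงเทพมหานครมีพื้นที่ทั้งหมด 1,568.737 ตร.กม. มีประชากรตามทะเบียนราษฎรกว่า 5 ล้านคน"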