| modelId | author | last_modified | downloads | likes | library_name | tags | pipeline_tag | createdAt | card |
|---|---|---|---|---|---|---|---|---|---|

rram12/reinforce-CartPole-v1

|

rram12

| 2022-09-25T10:33:17Z | 0 | 0 | null |

[

"CartPole-v1",

"reinforce",

"reinforcement-learning",

"custom-implementation",

"deep-rl-class",

"model-index",

"region:us"

] |

reinforcement-learning

| 2022-09-25T10:33:04Z |

---

tags:
- CartPole-v1
- reinforce
- reinforcement-learning
- custom-implementation
- deep-rl-class
model-index:
- name: reinforce-CartPole-v1
  results:
  - task:
      type: reinforcement-learning
      name: reinforcement-learning
    dataset:
      name: CartPole-v1
      type: CartPole-v1
    metrics:
    - type: mean_reward
      value: 500.00 +/- 0.00
      name: mean_reward
      verified: false

---

# **Reinforce** Agent playing **CartPole-v1**

This is a trained model of a **Reinforce** agent playing **CartPole-v1**.

To learn how to use this model and train your own, see Unit 5 of the Deep Reinforcement Learning Class: https://github.com/huggingface/deep-rl-class/tree/main/unit5
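The card does not include the training code, so the following is only a minimal sketch of the two quantities a Reinforce (Monte Carlo policy-gradient) agent typically computes per episode: the discounted return at each step and the surrogate loss. The function names and the `gamma` values are assumptions for illustration, not taken from this repository. CartPole-v1 gives a reward of +1 per step and caps episodes at 500 steps, which is why a mean reward of 500 is the best possible score.

```python
def discounted_returns(rewards, gamma=0.99):
    """Return G_t = r_t + gamma * G_{t+1} for every step of one episode."""
    returns = []
    running = 0.0
    # Walk the episode backwards so each return reuses the one after it.
    for reward in reversed(rewards):
        running = reward + gamma * running
        returns.append(running)
    returns.reverse()
    return returns


def reinforce_loss(log_probs, returns):
    """Surrogate loss: minimising it follows the policy gradient."""
    return -sum(lp * g for lp, g in zip(log_probs, returns))


# Toy three-step episode with CartPole-style +1 rewards (gamma is hypothetical).
returns = discounted_returns([1.0, 1.0, 1.0], gamma=0.5)
loss = reinforce_loss([-0.5, -0.5, -0.5], returns)
```

In practice the log-probabilities come from the policy network and the loss is backpropagated with an autograd framework; plain floats are used here only to keep the arithmetic visible.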

|

ShadowTwin41/distilbert-base-uncased-finetuned-imdb

|

ShadowTwin41

| 2022-09-25T10:07:17Z | 161 | 0 |

transformers

|

[

"transformers",

"pytorch",

"distilbert",

"fill-mask",

"generated_from_trainer",

"dataset:imdb",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

fill-mask

| 2022-09-25T09:54:34Z |

---

license: apache-2.0
tags:
- generated_from_trainer
datasets:
- imdb
model-index:
- name: distilbert-base-uncased-finetuned-imdb
  results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# distilbert-base-uncased-finetuned-imdb

This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the imdb dataset.

It achieves the following results on the evaluation set:

- Loss: 0.7181

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 384

- eval_batch_size: 384

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3.0

- mixed_precision_training: Native AMP

### Training results

| Training Loss | Epoch | Step | Validation Loss |

|:-------------:|:-----:|:----:|:---------------:|

| No log | 1.0 | 27 | 0.7668 |

| No log | 2.0 | 54 | 0.7282 |

| No log | 3.0 | 81 | 0.7165 |

### Framework versions

- Transformers 4.22.0

- Pytorch 1.12.1+cu116

- Datasets 2.4.0

- Tokenizers 0.12.1

|

shmuhammad/distilbert-base-uncased-finetuned-clinc

|

shmuhammad

| 2022-09-25T10:06:15Z | 104 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"distilbert",

"text-classification",

"generated_from_trainer",

"dataset:clinc_oos",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-09-18T12:12:52Z |

---

license: apache-2.0
tags:
- generated_from_trainer
datasets:
- clinc_oos
metrics:
- accuracy
model-index:
- name: distilbert-base-uncased-finetuned-clinc
  results:
  - task:
      name: Text Classification
      type: text-classification
    dataset:
      name: clinc_oos
      type: clinc_oos
      args: plus
    metrics:
    - name: Accuracy
      type: accuracy
      value: 0.92

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# distilbert-base-uncased-finetuned-clinc

This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the clinc_oos dataset.

It achieves the following results on the evaluation set:

- Loss: 0.7758

- Accuracy: 0.92

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 48

- eval_batch_size: 48

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 5

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:--------:|

| 4.295 | 1.0 | 318 | 3.2908 | 0.7448 |

| 2.6313 | 2.0 | 636 | 1.8779 | 0.8384 |

| 1.5519 | 3.0 | 954 | 1.1600 | 0.8981 |

| 1.0148 | 4.0 | 1272 | 0.8585 | 0.9123 |

| 0.7974 | 5.0 | 1590 | 0.7758 | 0.92 |
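The Step column in the table above follows from the batch size and the size of the training split. A rough sketch of that arithmetic, assuming the `plus` configuration of clinc_oos has 15,250 training examples (a figure not stated in this card) and that training ran on a single device without gradient accumulation:

```python
import math


def steps_per_epoch(num_examples, batch_size):
    """Optimizer steps per epoch when the last partial batch is kept."""
    return math.ceil(num_examples / batch_size)


ASSUMED_TRAIN_EXAMPLES = 15_250  # assumption, not taken from the card
steps = steps_per_epoch(ASSUMED_TRAIN_EXAMPLES, batch_size=48)
total_steps = steps * 5  # num_epochs: 5
```

Under those assumptions the result lines up with the 318 steps per epoch and 1590 total steps reported in the table.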

### Framework versions

- Transformers 4.11.3

- Pytorch 1.12.1.post200

- Datasets 1.16.1

- Tokenizers 0.10.3

|

nikhilsk/t5-base-finetuned-eli5

|

nikhilsk

| 2022-09-25T07:53:22Z | 111 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"t5",

"text2text-generation",

"generated_from_trainer",

"dataset:eli5",

"license:apache-2.0",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text2text-generation

| 2022-09-24T23:04:28Z |

---

license: apache-2.0
tags:
- generated_from_trainer
datasets:
- eli5
model-index:
- name: t5-base-finetuned-eli5
  results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# t5-base-finetuned-eli5

This model is a fine-tuned version of [t5-base](https://huggingface.co/t5-base) on the eli5 dataset.

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 1

### Framework versions

- Transformers 4.22.1

- Pytorch 1.12.1+cu113

- Datasets 2.5.1

- Tokenizers 0.12.1

|

jamescalam/mpnet-snli-negatives

|

jamescalam

| 2022-09-25T07:33:28Z | 13 | 1 |

sentence-transformers

|

[

"sentence-transformers",

"pytorch",

"mpnet",

"feature-extraction",

"sentence-similarity",

"transformers",

"en",

"dataset:snli",

"license:mit",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

sentence-similarity

| 2022-09-22T08:27:42Z |

---

pipeline_tag: sentence-similarity

tags:

- sentence-transformers

- feature-extraction

- sentence-similarity

- transformers

language:

- en

license: mit

datasets:

- snli

---

# MPNet NLI

This is a [sentence-transformers](https://www.SBERT.net) model: it maps sentences and paragraphs to a 768-dimensional dense vector space and can be used for tasks like clustering or semantic search. It has been fine-tuned on the **S**tanford **N**atural **L**anguage **I**nference (SNLI) dataset (including negatives) and returns MRR@10 and MAP scores of ~0.95 on the SNLI test set.

Find more info from [James Briggs on YouTube](https://youtube.com/c/jamesbriggs) or in the [**free** NLP for Semantic Search ebook](https://pinecone.io/learn/nlp).

<!--- Describe your model here -->

## Usage (Sentence-Transformers)

Using this model becomes easy when you have [sentence-transformers](https://www.SBERT.net) installed:

```

pip install -U sentence-transformers

```

Then you can use the model like this:

```python

from sentence_transformers import SentenceTransformer

sentences = ["This is an example sentence", "Each sentence is converted"]

model = SentenceTransformer('jamescalam/mpnet-snli-negatives')

embeddings = model.encode(sentences)

print(embeddings)

```
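Once you have embeddings, semantic search usually comes down to ranking candidates by cosine similarity to a query vector. The sketch below uses tiny hand-written vectors in place of real 768-dimensional embeddings, and the helper names are hypothetical:

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def rank_by_similarity(query, corpus):
    """Return (score, index) pairs, most similar first."""
    scored = [(cosine_similarity(query, vec), idx) for idx, vec in enumerate(corpus)]
    return sorted(scored, reverse=True)


# Toy 3-dimensional stand-ins for sentence embeddings.
toy_corpus = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.7, 0.7, 0.0]]
toy_query = [1.0, 0.1, 0.0]
ranking = rank_by_similarity(toy_query, toy_corpus)
```

With real embeddings you would pass the rows returned by `model.encode(...)` instead of the toy lists.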

## Usage (HuggingFace Transformers)

Without [sentence-transformers](https://www.SBERT.net), you can use the model like this: First, you pass your input through the transformer model, then you have to apply the right pooling-operation on-top of the contextualized word embeddings.

```python

from transformers import AutoTokenizer, AutoModel
import torch


# Mean pooling: take the attention mask into account for correct averaging
def mean_pooling(model_output, attention_mask):
    token_embeddings = model_output[0]  # First element of model_output contains all token embeddings
    input_mask_expanded = attention_mask.unsqueeze(-1).expand(token_embeddings.size()).float()
    return torch.sum(token_embeddings * input_mask_expanded, 1) / torch.clamp(input_mask_expanded.sum(1), min=1e-9)


# Sentences we want sentence embeddings for
sentences = ['This is an example sentence', 'Each sentence is converted']

# Load model from HuggingFace Hub
tokenizer = AutoTokenizer.from_pretrained('jamescalam/mpnet-snli-negatives')
model = AutoModel.from_pretrained('jamescalam/mpnet-snli-negatives')

# Tokenize sentences
encoded_input = tokenizer(sentences, padding=True, truncation=True, return_tensors='pt')

# Compute token embeddings
with torch.no_grad():
    model_output = model(**encoded_input)

# Perform pooling. In this case, mean pooling.
sentence_embeddings = mean_pooling(model_output, encoded_input['attention_mask'])

print("Sentence embeddings:")
print(sentence_embeddings)

```
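To see what the attention mask does in mean pooling, here is a small worked example in plain Python for a single sentence. It is a simplified, hypothetical re-implementation rather than the library code: the padded third token (mask 0) is ignored, so its large values do not affect the average.

```python
def masked_mean(token_embeddings, attention_mask):
    """Average token vectors, counting only positions whose mask is 1."""
    dim = len(token_embeddings[0])
    totals = [0.0] * dim
    for vector, keep in zip(token_embeddings, attention_mask):
        for i in range(dim):
            totals[i] += vector[i] * keep
    # Same guard as torch.clamp(..., min=1e-9): avoid dividing by zero.
    count = max(sum(attention_mask), 1e-9)
    return [t / count for t in totals]


# Two real tokens and one padding token with made-up values.
pooled = masked_mean([[1.0, 2.0], [3.0, 4.0], [100.0, 100.0]], [1, 1, 0])
```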

## Training

The model was trained with the parameters:

**DataLoader**:

`sentence_transformers.datasets.NoDuplicatesDataLoader.NoDuplicatesDataLoader` of length 4660 with parameters:

```

{'batch_size': 32}

```

**Loss**:

`sentence_transformers.losses.MultipleNegativesRankingLoss.MultipleNegativesRankingLoss` with parameters:

```

{'scale': 20.0, 'similarity_fct': 'cos_sim'}

```
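As a rough illustration of what these parameters mean: the loss treats every other positive in the batch as a negative for a given anchor, scales the cosine similarities by `scale`, and applies cross-entropy with the matching pair as the correct class. The sketch below is a simplified plain-Python version with hypothetical names. It omits the explicit hard negatives that the "including negatives" training data would add as extra candidates.

```python
import math


def cos_sim(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def multiple_negatives_ranking_loss(anchors, positives, scale=20.0):
    """Mean cross-entropy where positives[i] is the correct match for anchors[i]."""
    losses = []
    for i, anchor in enumerate(anchors):
        scores = [scale * cos_sim(anchor, pos) for pos in positives]
        # Numerically stable log-sum-exp over all candidates in the batch.
        peak = max(scores)
        log_sum_exp = peak + math.log(sum(math.exp(s - peak) for s in scores))
        losses.append(log_sum_exp - scores[i])
    return sum(losses) / len(losses)


toy_anchors = [[1.0, 0.0], [0.0, 1.0]]
aligned = multiple_negatives_ranking_loss(toy_anchors, [[1.0, 0.0], [0.0, 1.0]])
swapped = multiple_negatives_ranking_loss(toy_anchors, [[0.0, 1.0], [1.0, 0.0]])
```

The loss is close to zero when each anchor is most similar to its own positive and grows toward `scale` when the pairs are mismatched.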

Parameters of the fit()-Method:

```

{

"epochs": 1,

"evaluation_steps": 0,

"evaluator": "NoneType",

"max_grad_norm": 1,

"optimizer_class": "<class 'torch.optim.adamw.AdamW'>",

"optimizer_params": {

"lr": 2e-05

},

"scheduler": "WarmupLinear",

"steps_per_epoch": null,

"warmup_steps": 466,

"weight_decay": 0.01

}

```
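The `WarmupLinear` scheduler with these values raises the learning rate linearly from zero over the first 466 steps (10% of the 4660 batches in the single epoch), then decays it linearly back to zero. A minimal sketch of that schedule, assuming it follows the usual linear-warmup/linear-decay formula; the function name is hypothetical:

```python
def warmup_linear_lr(step, base_lr=2e-5, warmup_steps=466, total_steps=4660):
    """Learning rate at a given optimizer step."""
    if step < warmup_steps:
        # Linear ramp from 0 up to base_lr.
        factor = step / max(1, warmup_steps)
    else:
        # Linear decay from base_lr down to 0 at total_steps.
        factor = max(0.0, (total_steps - step) / max(1, total_steps - warmup_steps))
    return base_lr * factor


lr_start = warmup_linear_lr(0)
lr_mid_warmup = warmup_linear_lr(233)
lr_peak = warmup_linear_lr(466)
lr_end = warmup_linear_lr(4660)
```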

## Full Model Architecture

```

SentenceTransformer(

(0): Transformer({'max_seq_length': 512, 'do_lower_case': False}) with Transformer model: MPNetModel

(1): Pooling({'word_embedding_dimension': 768, 'pooling_mode_cls_token': False, 'pooling_mode_mean_tokens': True, 'pooling_mode_max_tokens': False, 'pooling_mode_mean_sqrt_len_tokens': False})

)

```

|

ShadowTwin41/bert-finetuned-ner

|

ShadowTwin41

| 2022-09-25T07:26:43Z | 105 | 0 |

transformers

|

[

"transformers",

"pytorch",

"bert",

"token-classification",

"generated_from_trainer",

"dataset:conll2003",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

token-classification

| 2022-09-25T07:18:07Z |

---

license: apache-2.0
tags:
- generated_from_trainer
datasets:
- conll2003
metrics:
- precision
- recall
- f1
- accuracy
model-index:
- name: bert-finetuned-ner
  results:
  - task:
      name: Token Classification
      type: token-classification
    dataset:
      name: conll2003
      type: conll2003
      config: conll2003
      split: train
      args: conll2003
    metrics:
    - name: Precision
      type: precision
      value: 0.9127878490935816
    - name: Recall
      type: recall
      value: 0.9405923931336251
    - name: F1
      type: f1
      value: 0.9264815582262743
    - name: Accuracy
      type: accuracy
      value: 0.9841937952551951

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# bert-finetuned-ner

This model is a fine-tuned version of [bert-base-cased](https://huggingface.co/bert-base-cased) on the conll2003 dataset.

It achieves the following results on the evaluation set:

- Loss: 0.0586

- Precision: 0.9128

- Recall: 0.9406

- F1: 0.9265

- Accuracy: 0.9842

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 48

- eval_batch_size: 48

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3

### Training results

| Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:|

| No log | 1.0 | 293 | 0.0844 | 0.8714 | 0.9123 | 0.8914 | 0.9760 |

| 0.1765 | 2.0 | 586 | 0.0601 | 0.9109 | 0.9357 | 0.9231 | 0.9834 |

| 0.1765 | 3.0 | 879 | 0.0586 | 0.9128 | 0.9406 | 0.9265 | 0.9842 |
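The F1 column is the harmonic mean of precision and recall. As a quick check, the sketch below recomputes it from the full-precision values in the card metadata; the function name is illustrative only:

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


f1 = f1_score(0.9127878490935816, 0.9405923931336251)
```

The result agrees with the reported F1 of 0.9265 after rounding.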

### Framework versions

- Transformers 4.22.0

- Pytorch 1.12.1+cu116

- Datasets 2.4.0

- Tokenizers 0.12.1

|

BearlyWorkingYT/OPT-125M-Warriors-TPB

|

BearlyWorkingYT

| 2022-09-25T05:36:55Z | 136 | 1 |

transformers

|

[

"transformers",

"pytorch",

"opt",

"text-generation",

"license:other",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-09-25T02:38:40Z |

---

license: other
widget:
- text: "Chapter 1."
  example_title: "First Prompt used in video"
- text: "Chapter 1. Shadowclan"
  example_title: "Second prompt used in video"
- text: "Fireheart"
  example_title: "Fireheart"
inference:
  parameters:
    temperature: 0.4
    repetition_penalty: 1.1
    min_length: 64
    max_length: 128

---

This represents an OPT-125M model trained on the "Warriors: The Prophecies Begin" book series.

To train this model, I ripped text directly from PDFs using PyMuPDF.

This is the model trained in this [video](https://youtu.be/BAloWD4FXIM).

Please check out my [YouTube channel.](https://www.youtube.com/channel/UCLXxfueCPZRZnyGFWJ07uqA)
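The widget's inference parameters (`temperature: 0.4`, `repetition_penalty: 1.1`) reshape the next-token distribution before sampling. Here is a rough plain-Python sketch of the two adjustments, assuming the common formulation in which the penalty divides positive logits and multiplies negative ones for tokens already generated; the helper names are hypothetical:

```python
import math


def apply_repetition_penalty(logits, generated_ids, penalty=1.1):
    """Make tokens that were already generated less likely."""
    adjusted = list(logits)
    for token_id in set(generated_ids):
        score = adjusted[token_id]
        adjusted[token_id] = score * penalty if score < 0 else score / penalty
    return adjusted


def softmax_with_temperature(logits, temperature=0.4):
    """Temperatures below 1 sharpen the distribution toward the top token."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


toy_logits = [2.0, 1.0, -1.0]
penalised = apply_repetition_penalty(toy_logits, generated_ids=[0, 2])
probs = softmax_with_temperature(penalised)
```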

|

sd-concepts-library/cindlop

|

sd-concepts-library

| 2022-09-25T04:54:14Z | 0 | 0 | null |

[

"license:mit",

"region:us"

] | null | 2022-09-25T04:54:11Z |

---

license: mit

---

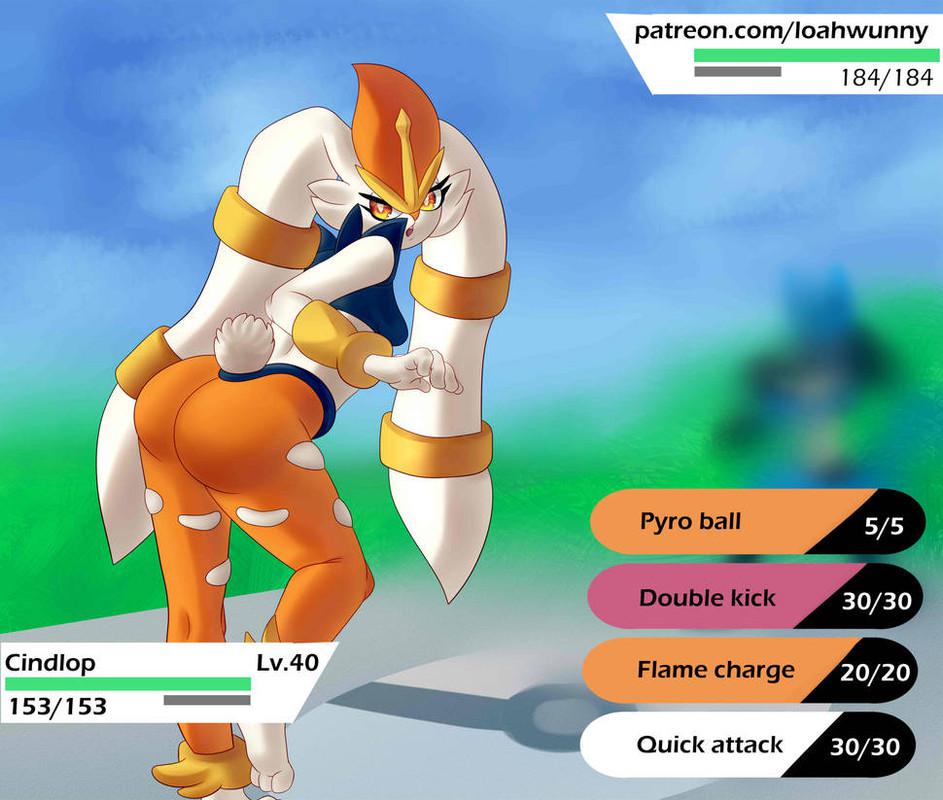

### cindlop on Stable Diffusion

This is the `<cindlop>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb).

Here is the new concept you will be able to use as an `object`:

|

neelmehta00/t5-small-finetuned-eli5-neel-final-again

|

neelmehta00

| 2022-09-25T03:34:10Z | 110 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"t5",

"text2text-generation",

"generated_from_trainer",

"dataset:eli5",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text2text-generation

| 2022-09-25T02:21:49Z |

---

license: apache-2.0
tags:
- generated_from_trainer
datasets:
- eli5
metrics:
- rouge
model-index:
- name: t5-small-finetuned-eli5-neel-final-again
  results:
  - task:
      name: Sequence-to-sequence Language Modeling
      type: text2text-generation
    dataset:
      name: eli5
      type: eli5
      config: LFQA_reddit
      split: train_eli5
      args: LFQA_reddit
    metrics:
    - name: Rouge1
      type: rouge
      value: 15.1361

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# t5-small-finetuned-eli5-neel-final-again

This model is a fine-tuned version of [t5-small](https://huggingface.co/t5-small) on the eli5 dataset.

It achieves the following results on the evaluation set:

- Loss: 3.5993

- Rouge1: 15.1361

- Rouge2: 2.1584

- Rougel: 12.7499

- Rougelsum: 13.989

- Gen Len: 18.9998

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 1

### Training results

| Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | Gen Len |

|:-------------:|:-----:|:-----:|:---------------:|:-------:|:------:|:-------:|:---------:|:-------:|

| 3.8014 | 1.0 | 17040 | 3.5993 | 15.1361 | 2.1584 | 12.7499 | 13.989 | 18.9998 |
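For intuition about the Rouge1 column, here is a simplified sketch of a unigram-overlap F1 score. It is an assumption-laden approximation: it splits on whitespace and skips the stemming and tokenization rules a real ROUGE implementation applies. The scores in the table also appear to be on a 0-100 scale, whereas this function returns a value between 0 and 1.

```python
from collections import Counter


def rouge1_f1(prediction, reference):
    """Unigram-overlap F1 between a prediction and a reference."""
    pred_tokens = prediction.lower().split()
    ref_tokens = reference.lower().split()
    # Counter intersection keeps the minimum count of each shared token.
    overlap = sum((Counter(pred_tokens) & Counter(ref_tokens)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred_tokens)
    recall = overlap / len(ref_tokens)
    return 2 * precision * recall / (precision + recall)


score = rouge1_f1("the cat sat", "the cat sat on the mat")
```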

### Framework versions

- Transformers 4.22.1

- Pytorch 1.12.1+cu113

- Datasets 2.5.1

- Tokenizers 0.12.1

|

BigSalmon/InformalToFormalLincoln81ParaphraseMedium

|

BigSalmon

| 2022-09-25T02:27:53Z | 173 | 0 |

transformers

|

[

"transformers",

"pytorch",

"gpt2",

"text-generation",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-09-25T02:14:22Z |

data: https://github.com/BigSalmon2/InformalToFormalDataset

```

from transformers import AutoTokenizer, AutoModelForCausalLM

tokenizer = AutoTokenizer.from_pretrained("BigSalmon/InformalToFormalLincoln80Paraphrase")

model = AutoModelForCausalLM.from_pretrained("BigSalmon/InformalToFormalLincoln80Paraphrase")

```

```

Demo:

https://huggingface.co/spaces/BigSalmon/FormalInformalConciseWordy

```

```

prompt = """informal english: corn fields are all across illinois, visible once you leave chicago.\nTranslated into the Style of Abraham Lincoln:"""
input_ids = tokenizer.encode(prompt, return_tensors='pt')
outputs = model.generate(input_ids=input_ids,
                         max_length=10 + len(prompt),
                         temperature=1.0,
                         top_k=50,
                         top_p=0.95,
                         do_sample=True,
                         num_return_sequences=5,
                         early_stopping=True)
for i in range(5):
    print(tokenizer.decode(outputs[i]))

```
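The `top_k=50` and `top_p=0.95` arguments above restrict sampling to a subset of the vocabulary. A minimal sketch of that filtering on a toy distribution, written in plain Python with a hypothetical function name (the library itself works on logits and tensors):

```python
def top_k_top_p_filter(probs, top_k=50, top_p=0.95):
    """Keep the top_k tokens, then the smallest prefix whose mass reaches top_p."""
    ranked = sorted(enumerate(probs), key=lambda pair: pair[1], reverse=True)[:top_k]
    mass_k = sum(p for _, p in ranked)
    kept, cumulative = [], 0.0
    for token_id, p in ranked:
        kept.append((token_id, p))
        cumulative += p / mass_k  # nucleus cutoff on the renormalised top-k set
        if cumulative >= top_p:
            break
    total = sum(p for _, p in kept)
    # Renormalise so the surviving probabilities sum to 1.
    return {token_id: p / total for token_id, p in kept}


filtered = top_k_top_p_filter([0.5, 0.3, 0.15, 0.05], top_k=3, top_p=0.7)
```

Sampling then draws only from the tokens that survive the filter.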

Most likely outputs (Disclaimer: I highly recommend using this over just generating):

```

import torch

prompt = """informal english: corn fields are all across illinois, visible once you leave chicago.\nTranslated into the Style of Abraham Lincoln:"""

# Assumption: `device` was undefined in the original snippet; pick GPU if available.
device = "cuda" if torch.cuda.is_available() else "cpu"
model.to(device)

text = tokenizer.encode(prompt)
myinput, past_key_values = torch.tensor([text]), None
myinput = myinput.to(device)
# return_dict=False makes the model return a (logits, past_key_values) tuple
logits, past_key_values = model(myinput, past_key_values=past_key_values, return_dict=False)
logits = logits[0, -1]  # logits for the next token only
probabilities = torch.nn.functional.softmax(logits, dim=-1)
best_logits, best_indices = logits.topk(250)
best_words = [tokenizer.decode([idx.item()]) for idx in best_indices]
text.append(best_indices[0].item())
best_probabilities = probabilities[best_indices].tolist()
words = []
print(best_words)

```

```

How To Make Prompt:

informal english: i am very ready to do that just that.

Translated into the Style of Abraham Lincoln: you can assure yourself of my readiness to work toward this end.

Translated into the Style of Abraham Lincoln: please be assured that i am most ready to undertake this laborious task.

***

informal english: space is huge and needs to be explored.

Translated into the Style of Abraham Lincoln: space awaits traversal, a new world whose boundaries are endless.

Translated into the Style of Abraham Lincoln: space is a ( limitless / boundless ) expanse, a vast virgin domain awaiting exploration.

***

informal english: corn fields are all across illinois, visible once you leave chicago.

Translated into the Style of Abraham Lincoln: corn fields ( permeate illinois / span the state of illinois / ( occupy / persist in ) all corners of illinois / line the horizon of illinois / envelop the landscape of illinois ), manifesting themselves visibly as one ventures beyond chicago.

informal english:

```
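If you assemble these few-shot prompts programmatically, a small helper along the following lines may be convenient. It is only a sketch: the function name is hypothetical, and the exact whitespace around the `***` separator is an assumption, since the layout above does not make it clear.

```python
STYLE_LABEL = "Translated into the Style of Abraham Lincoln:"


def build_prompt(examples, new_sentence):
    """examples: list of (informal sentence, [formal rewrites]) pairs."""
    blocks = []
    for informal, formal_versions in examples:
        lines = [f"informal english: {informal}"]
        lines += [f"{STYLE_LABEL} {formal}" for formal in formal_versions]
        blocks.append("\n".join(lines))
    # Leave the last label open so the model writes the completion.
    blocks.append(f"informal english: {new_sentence}\n{STYLE_LABEL}")
    return "\n\n***\n\n".join(blocks)


prompt = build_prompt(
    [("space is huge and needs to be explored.",
      ["space awaits traversal, a new world whose boundaries are endless."])],
    "corn fields are all across illinois, visible once you leave chicago.",
)
```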

```

original: microsoft word's [MASK] pricing invites competition.

Translated into the Style of Abraham Lincoln: microsoft word's unconscionable pricing invites competition.

***

original: the library’s quiet atmosphere encourages visitors to [blank] in their work.

Translated into the Style of Abraham Lincoln: the library’s quiet atmosphere encourages visitors to immerse themselves in their work.

```

```

Essay Intro (Warriors vs. Rockets in Game 7):

text: eagerly anticipated by fans, game 7's are the highlight of the post-season.

text: ever-building in suspense, game 7's have the crowd captivated.

***

Essay Intro (South Korean TV Is Becoming Popular):

text: maturing into a bona fide paragon of programming, south korean television ( has much to offer / entertains without fail / never disappoints ).

text: increasingly held in critical esteem, south korean television continues to impress.

text: at the forefront of quality content, south korea is quickly achieving celebrity status.

***

Essay Intro (

```

```

Search: What is the definition of Checks and Balances?

https://en.wikipedia.org/wiki/Checks_and_balances

Checks and Balances is the idea of having a system where each and every action in government should be subject to one or more checks that would not allow one branch or the other to overly dominate.

https://www.harvard.edu/glossary/Checks_and_Balances

Checks and Balances is a system that allows each branch of government to limit the powers of the other branches in order to prevent abuse of power

https://www.law.cornell.edu/library/constitution/Checks_and_Balances

Checks and Balances is a system of separation through which branches of government can control the other, thus preventing excess power.

***

Search: What is the definition of Separation of Powers?

https://en.wikipedia.org/wiki/Separation_of_powers

The separation of powers is a principle in government, whereby governmental powers are separated into different branches, each with their own set of powers, that prevent one branch from aggregating too much power.

https://www.yale.edu/tcf/Separation_of_Powers.html

Separation of Powers is the division of governmental functions between the executive, legislative and judicial branches, clearly demarcating each branch's authority, in the interest of ensuring that individual liberty or security is not undermined.

***

Search: What is the definition of Connection of Powers?

https://en.wikipedia.org/wiki/Connection_of_powers

Connection of Powers is a feature of some parliamentary forms of government where different branches of government are intermingled, typically the executive and legislative branches.

https://simple.wikipedia.org/wiki/Connection_of_powers

The term Connection of Powers describes a system of government in which there is overlap between different parts of the government.

***

Search: What is the definition of

```

```

Search: What are phrase synonyms for "second-guess"?

https://www.powerthesaurus.org/second-guess/synonyms

Shortest to Longest:

- feel dubious about

- raise an eyebrow at

- wrinkle their noses at

- cast a jaundiced eye at

- teeter on the fence about

***

Search: What are phrase synonyms for "mean to newbies"?

https://www.powerthesaurus.org/mean_to_newbies/synonyms

Shortest to Longest:

- readiness to balk at rookies

- absence of tolerance for novices

- hostile attitude toward newcomers

***

Search: What are phrase synonyms for "make use of"?

https://www.powerthesaurus.org/make_use_of/synonyms

Shortest to Longest:

- call upon

- glean value from

- reap benefits from

- derive utility from

- seize on the merits of

- draw on the strength of

- tap into the potential of

***

Search: What are phrase synonyms for "hurting itself"?

https://www.powerthesaurus.org/hurting_itself/synonyms

Shortest to Longest:

- erring

- slighting itself

- forfeiting its integrity

- doing itself a disservice

- evincing a lack of backbone

***

Search: What are phrase synonyms for "

```

```

- nebraska

- unicameral legislature

- different from federal house and senate

text: featuring a unicameral legislature, nebraska's political system stands in stark contrast to the federal model, comprised of a house and senate.

***

- penny has practically no value

- should be taken out of circulation

- just as other coins have been in us history

- lost use

- value not enough

- to make environmental consequences worthy

text: all but valueless, the penny should be retired. as with other coins in american history, it has become defunct. too minute to warrant the environmental consequences of its production, it has outlived its usefulness.

***

-

```

```

original: sports teams are profitable for owners. [MASK], their valuations experience a dramatic uptick.

infill: sports teams are profitable for owners. ( accumulating vast sums / stockpiling treasure / realizing benefits / cashing in / registering robust financials / scoring on balance sheets ), their valuations experience a dramatic uptick.

***

original:

```

```

wordy: classical music is becoming less popular more and more.

Translate into Concise Text: interest in classic music is fading.

***

wordy:

```

```

sweet: savvy voters ousted him.

longer: voters who were informed delivered his defeat.

***

sweet:

```

```

1: commercial space company spacex plans to launch a whopping 52 flights in 2022.

2: spacex, a commercial space company, intends to undertake a total of 52 flights in 2022.

3: in 2022, commercial space company spacex has its sights set on undertaking 52 flights.

4: 52 flights are in the pipeline for 2022, according to spacex, a commercial space company.

5: a commercial space company, spacex aims to conduct 52 flights in 2022.

***

1:

```

Keywords to sentences or sentence.

```

ngos are characterized by:

□ voluntary citizens' group that is organized on a local, national or international level

□ encourage political participation

□ often serve humanitarian functions

□ work for social, economic, or environmental change

***

what are the drawbacks of living near an airbnb?

□ noise

□ parking

□ traffic

□ security

□ strangers

***

```

```

original: musicals generally use spoken dialogue as well as songs to convey the story. operas are usually fully sung.

adapted: musicals generally use spoken dialogue as well as songs to convey the story. ( in a stark departure / on the other hand / in contrast / by comparison / at odds with this practice / far from being alike / in defiance of this standard / running counter to this convention ), operas are usually fully sung.

***

original: akoya and tahitian are types of pearls. akoya pearls are mostly white, and tahitian pearls are naturally dark.

adapted: akoya and tahitian are types of pearls. ( a far cry from being indistinguishable / easily distinguished / on closer inspection / setting them apart / not to be mistaken for one another / hardly an instance of mere synonymy / differentiating the two ), akoya pearls are mostly white, and tahitian pearls are naturally dark.

***

original:

```

```

original: had trouble deciding.

translated into journalism speak: wrestled with the question, agonized over the matter, furrowed their brows in contemplation.

***

original:

```

```

input: not loyal

1800s english: ( two-faced / inimical / perfidious / duplicitous / mendacious / double-dealing / shifty ).

***

input:

```

```

first: ( was complicit in / was involved in ).

antonym: ( was blameless / was not an accomplice to / had no hand in / was uninvolved in ).

***

first: ( have no qualms about / see no issue with ).

antonym: ( are deeply troubled by / harbor grave reservations about / have a visceral aversion to / take ( umbrage at / exception to ) / are wary of ).

***

first: ( do not see eye to eye / disagree often ).

antonym: ( are in sync / are united / have excellent rapport / are like-minded / are in step / are of one mind / are in lockstep / operate in perfect harmony / march in lockstep ).

***

first:

```

```

stiff with competition, law school {A} is the launching pad for countless careers, {B} is a crowded field, {C} ranks among the most sought-after professional degrees, {D} is a professional proving ground.

***

languishing in viewership, saturday night live {A} is due for a creative renaissance, {B} is no longer a ratings juggernaut, {C} has been eclipsed by its imitators, {D} can still find its mojo.

***

dubbed the "manhattan of the south," atlanta {A} is a bustling metropolis, {B} is known for its vibrant downtown, {C} is a city of rich history, {D} is the pride of georgia.

***

embattled by scandal, harvard {A} is feeling the heat, {B} cannot escape the media glare, {C} is facing its most intense scrutiny yet, {D} is in the spotlight for all the wrong reasons.

```

Infill / Infilling / Masking / Phrase Masking (Works pretty decently actually, especially when you use logprobs code from above):

```

his contention [blank] by the evidence [sep] was refuted [answer]

***

few sights are as [blank] new york city as the colorful, flashing signage of its bodegas [sep] synonymous with [answer]

***

when rick won the lottery, all of his distant relatives [blank] his winnings [sep] clamored for [answer]

***

the library’s quiet atmosphere encourages visitors to [blank] in their work [sep] immerse themselves [answer]

***

the joy of sport is that no two games are alike. for every exhilarating experience, however, there is an interminable one. the national pastime, unfortunately, has a penchant for the latter. what begins as a summer evening at the ballpark can quickly devolve into a game of tedium. the primary culprit is the [blank] of play. from batters readjusting their gloves to fielders spitting on their mitts, the action is [blank] unnecessary interruptions. the sport's future is [blank] if these tendencies are not addressed [sep] plodding pace [answer] riddled with [answer] bleak [answer]

***

microsoft word's [blank] pricing [blank] competition [sep] unconscionable [answer] invites [answer]

***

```
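The infill format above puts the text with `[blank]` markers first, then `[sep]`, then one `[answer]`-terminated phrase per blank. A rough sketch of helpers for building such a prompt and slotting a completion back into the text; the function names are hypothetical and the whitespace handling is an assumption:

```python
def build_infill_prompt(text_with_blanks):
    """Append the separator so the model continues with the answers."""
    return f"{text_with_blanks} [sep]"


def parse_infill_answers(completion):
    """Split a completion like ' a [answer] b [answer]' into ['a', 'b']."""
    parts = completion.split("[answer]")
    return [part.strip() for part in parts if part.strip()]


def fill_blanks(text_with_blanks, answers):
    """Replace each [blank] in order with the corresponding answer."""
    filled = text_with_blanks
    for answer in answers:
        filled = filled.replace("[blank]", answer, 1)
    return filled


template = "microsoft word's [blank] pricing [blank] competition"
infill_prompt = build_infill_prompt(template)
answers = parse_infill_answers(" unconscionable [answer] invites [answer]")
sentence = fill_blanks(template, answers)
```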

Backwards

```

Essay Intro (National Parks):

text: tourists are at ease in the national parks, ( swept up in the beauty of their natural splendor ).

***

Essay Intro (D.C. Statehood):

washington, d.c. is a city of outsize significance, ( ground zero for the nation's political life / center stage for the nation's political machinations ).

```

```

topic: the Golden State Warriors.

characterization 1: the reigning kings of the NBA.

characterization 2: possessed of a remarkable cohesion.

characterization 3: helmed by superstar Stephen Curry.

characterization 4: perched atop the league’s hierarchy.

characterization 5: boasting a litany of hall-of-famers.

***

topic: emojis.

characterization 1: shorthand for a digital generation.

characterization 2: more versatile than words.

characterization 3: the latest frontier in language.

characterization 4: a form of self-expression.

characterization 5: quintessentially millennial.

characterization 6: reflective of a tech-centric world.

***

topic:

```

```

regular: illinois went against the census' population-loss prediction by getting more residents.

VBG: defying the census' prediction of population loss, illinois experienced growth.

***

regular: microsoft word’s high pricing increases the likelihood of competition.

VBG: extortionately priced, microsoft word is inviting competition.

***

regular:

```

```

source: badminton should be more popular in the US.

QUERY: Based on the given topic, can you develop a story outline?

target: (1) games played with racquets are popular, (2) just look at tennis and ping pong, (3) but badminton underappreciated, (4) fun, fast-paced, competitive, (5) needs to be marketed more

text: the sporting arena is dominated by games that are played with racquets. tennis and ping pong, in particular, are immensely popular. somewhat curiously, however, badminton is absent from this pantheon. exciting, fast-paced, and competitive, it is an underappreciated pastime. all that it lacks is more effective marketing.

***

source: movies in theaters should be free.

QUERY: Based on the given topic, can you develop a story outline?

target: (1) movies provide vital life lessons, (2) many venues charge admission, (3) those without much money

text: the lessons that movies impart are far from trivial. the vast catalogue of cinematic classics is replete with inspiring sagas of friendship, bravery, and tenacity. it is regrettable, then, that admission to theaters is not free. in their current form, the doors of this most vital of institutions are closed to those who lack the means to pay.

***

source:

```

```

in the private sector, { transparency } is vital to the business’s credibility. the { disclosure of information } can be the difference between success and failure.

***

the labor market is changing, with { remote work } now the norm. this { flexible employment } allows the individual to design their own schedule.

***

the { cubicle } is the locus of countless grievances. many complain that the { enclosed workspace } restricts their freedom of movement.

***

```

```

it would be natural to assume that americans, as a people whose ancestors { immigrated to this country }, would be sympathetic to those seeking to do likewise.

question: what does “do likewise” mean in the above context?

(a) make the same journey

(b) share in the promise of the american dream

(c) start anew in the land of opportunity

(d) make landfall on the united states

***

in the private sector, { transparency } is vital to the business’s credibility. this orientation can be the difference between success and failure.

question: what does “this orientation” mean in the above context?

(a) visible business practices

(b) candor with the public

(c) open, honest communication

(d) culture of accountability

```

```

example: suppose you are a teacher. further suppose you want to tell an accurate telling of history. then suppose a parent takes offense. they do so in the name of their kid. this happens a lot.

text: educators' responsibility to remain true to the historical record often clashes with the parent's desire to shelter their child from uncomfortable realities.

***

example: suppose you are a student at college. now suppose you have to buy textbooks. that is going to be worth hundreds of dollars. given how much you already spend on tuition, that is going to be a hard cost to bear.

text: the exorbitant cost of textbooks, which often reaches hundreds of dollars, imposes a sizable financial burden on the already-strapped college student.

```

```

accustomed to having its name uttered ______, harvard university is weathering a rare spell of reputational tumult

(a) in reverential tones

(b) with great affection

(c) in adulatory fashion

(d) in glowing terms

```

```

informal english: i reached out to accounts who had a lot of followers, helping to make people know about us.

resume english: i partnered with prominent influencers to build brand awareness.

***

```

|

sd-concepts-library/yuji-himukai-style

|

sd-concepts-library

| 2022-09-25T02:06:22Z | 0 | 1 | null |

[

"license:mit",

"region:us"

] | null | 2022-09-25T02:06:16Z |

---

license: mit

---

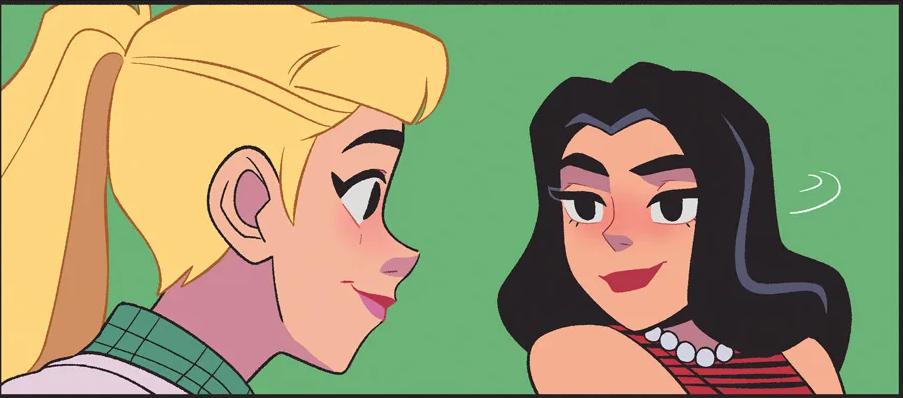

### Yuji-Himukai-Style on Stable Diffusion

This is the `<Yuji Himukai-Style>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb).
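Outside the notebook, a concept like this can usually be loaded with the `diffusers` library. The following is only a sketch: it assumes a `diffusers` release that provides `load_textual_inversion`, the base checkpoint `CompVis/stable-diffusion-v1-4` is an assumption (this card does not name one), and `build_prompt` and `generate` are hypothetical helper names.

```python
PLACEHOLDER_TOKEN = "<Yuji Himukai-Style>"  # placeholder token named in this card


def build_prompt(subject: str, token: str = PLACEHOLDER_TOKEN) -> str:
    """Hypothetical helper: phrase a prompt that applies the learned style."""
    return f"{subject} in the style of {token}"


def generate(subject: str, base_model: str = "CompVis/stable-diffusion-v1-4"):
    """Sketch only: needs `diffusers`, `torch`, and network access.

    The base checkpoint is an assumption; the card does not state it.
    """
    from diffusers import StableDiffusionPipeline  # imported lazily on purpose

    pipe = StableDiffusionPipeline.from_pretrained(base_model)
    # Downloads the learned embedding and registers its placeholder token.
    pipe.load_textual_inversion("sd-concepts-library/yuji-himukai-style")
    return pipe(build_prompt(subject)).images[0]
```

Calling `generate("a castle on a hill")` would return a PIL image if the assumptions above hold.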

Here is the new concept you will be able to use as a `style`:

|

sd-concepts-library/wheelchair

|

sd-concepts-library

| 2022-09-25T01:51:57Z | 0 | 1 | null |

[

"license:mit",

"region:us"

] | null | 2022-09-25T01:51:50Z |

---

license: mit

---

### wheelchair on Stable Diffusion

This is the `<wheelchair>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb).

Here is the new concept you will be able to use as an `object`:

|

sd-concepts-library/brittney-williams-art

|

sd-concepts-library

| 2022-09-25T00:31:41Z | 0 | 4 | null |

[

"license:mit",

"region:us"

] | null | 2022-09-25T00:31:35Z |

---

license: mit

---

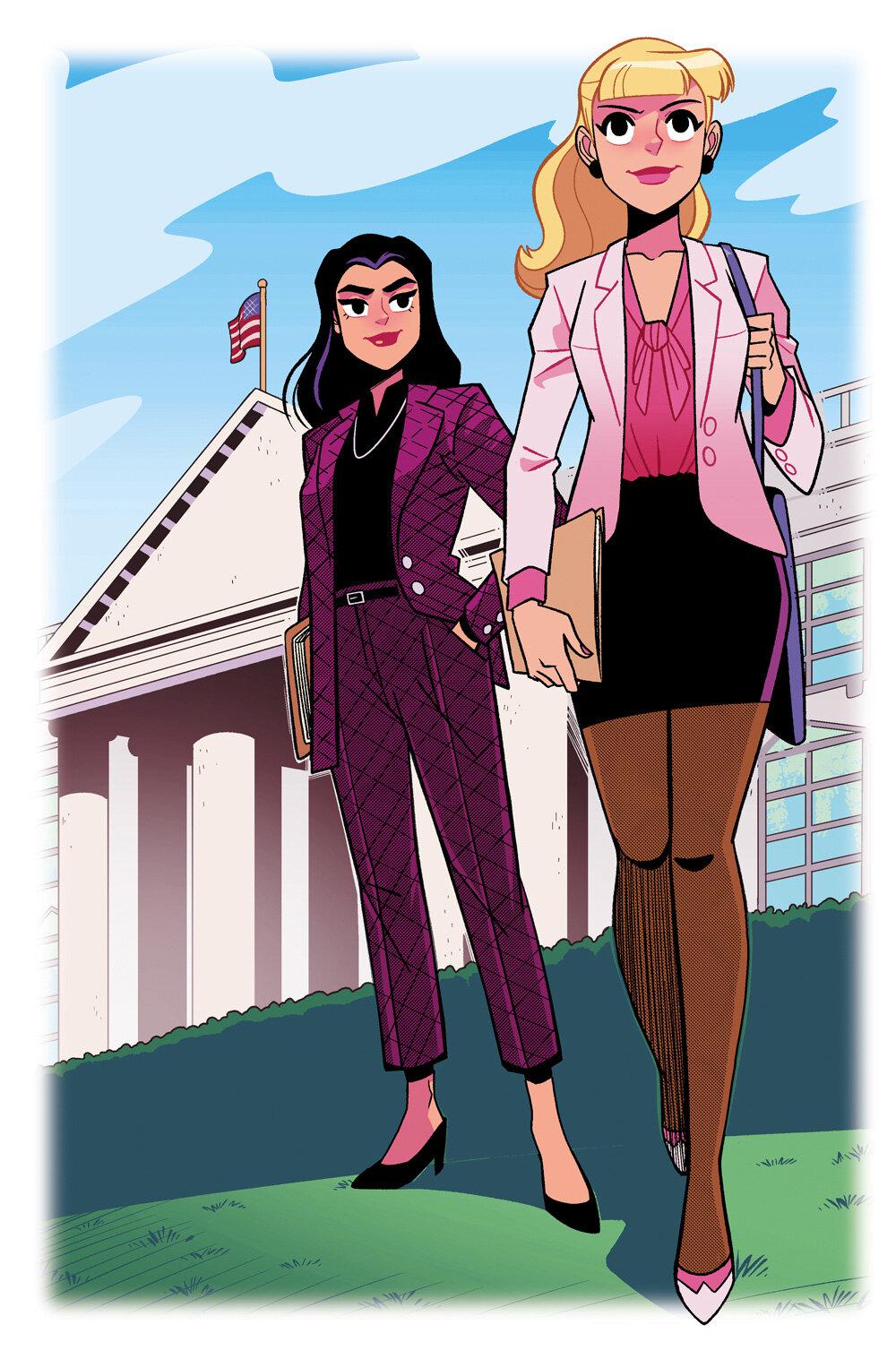

### Brittney-Williams-Art on Stable Diffusion

This is the `<Brittney_Williams>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb).

Here is the new concept you will be able to use as a `style`:

|

bguan/lunar_lander_v2_ppo_220924A

|

bguan

| 2022-09-24T23:32:24Z | 0 | 0 |

stable-baselines3

|

[

"stable-baselines3",

"LunarLander-v2",

"deep-reinforcement-learning",

"reinforcement-learning",

"model-index",

"region:us"

] |

reinforcement-learning

| 2022-09-24T22:49:51Z |

---

library_name: stable-baselines3

tags:

- LunarLander-v2

- deep-reinforcement-learning

- reinforcement-learning

- stable-baselines3

model-index:

- name: PPO

results:

- metrics:

- type: mean_reward

value: 283.01 +/- 14.03

name: mean_reward

task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: LunarLander-v2

type: LunarLander-v2

---

# **PPO** Agent playing **LunarLander-v2**

This is a trained model of a **PPO** agent playing **LunarLander-v2** using the [stable-baselines3 library](https://github.com/DLR-RM/stable-baselines3).

## Usage (with Stable-baselines3)

TODO: Add your code

```python

from stable_baselines3 import ...

from huggingface_sb3 import load_from_hub

...

```
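The usage block in this card is still the template placeholder. A minimal sketch of how such a block is commonly filled in follows. The checkpoint `filename` is not stated in this card, so it is left as a parameter, and `summarize_rewards` and `load_and_evaluate` are hypothetical helper names rather than part of either library.

```python
from statistics import mean, pstdev


def summarize_rewards(episode_rewards):
    """Format per-episode returns as 'mean +/- std', like the card's mean_reward."""
    return f"{mean(episode_rewards):.2f} +/- {pstdev(episode_rewards):.2f}"


def load_and_evaluate(repo_id, filename, env, n_eval_episodes=10):
    """Sketch only: needs stable-baselines3, huggingface_sb3 and network access.

    `filename` is the checkpoint name inside the repo; this card does not give it.
    """
    from huggingface_sb3 import load_from_hub
    from stable_baselines3 import PPO
    from stable_baselines3.common.evaluation import evaluate_policy

    checkpoint = load_from_hub(repo_id=repo_id, filename=filename)
    model = PPO.load(checkpoint)
    # With return_episode_rewards=True the per-episode returns come back as a list.
    rewards, _lengths = evaluate_policy(
        model,
        env,
        n_eval_episodes=n_eval_episodes,
        deterministic=True,
        return_episode_rewards=True,
    )
    return summarize_rewards(rewards)
```

The population standard deviation is used so that the summary matches the convention of reporting a mean and spread over a fixed set of evaluation episodes.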

|

sd-concepts-library/wire-angels

|

sd-concepts-library

| 2022-09-24T23:25:57Z | 0 | 0 | null |

[

"license:mit",

"region:us"

] | null | 2022-09-24T23:25:43Z |

---

license: mit

---

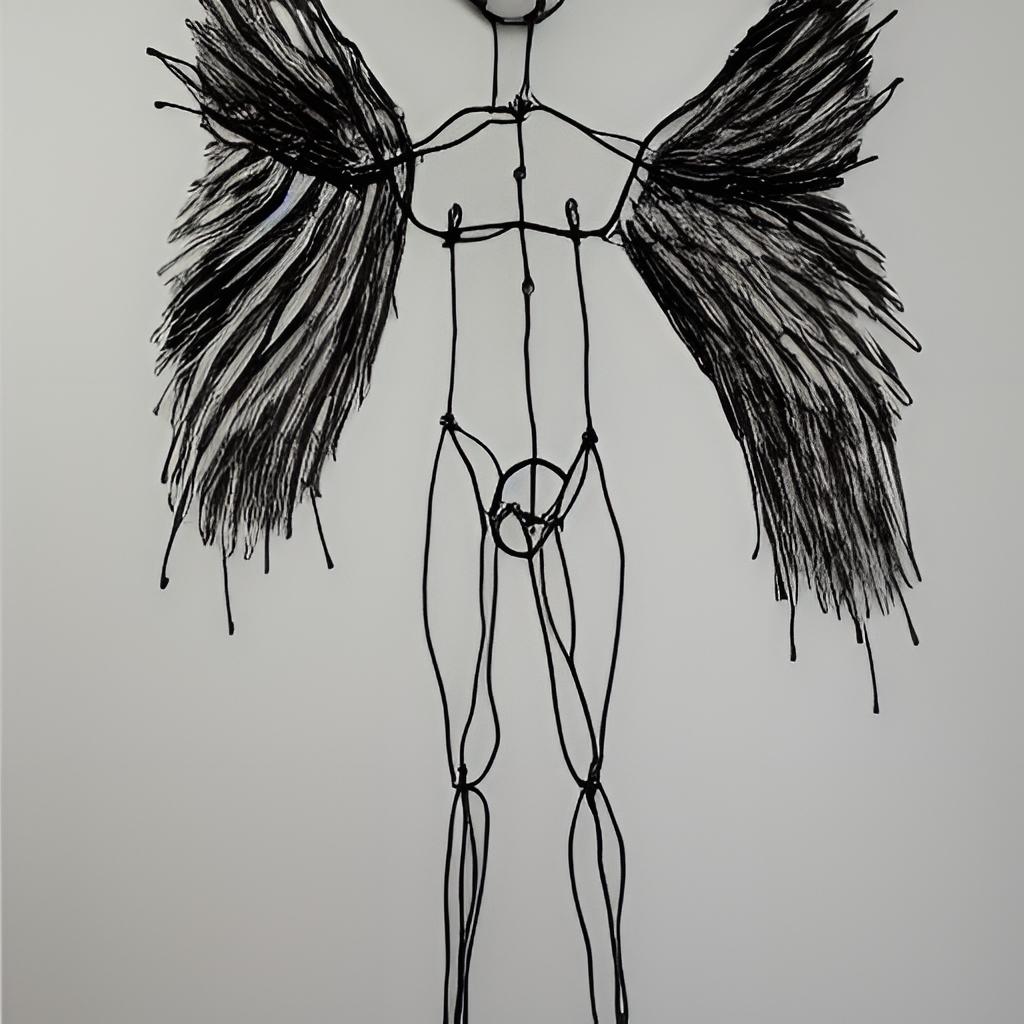

### wire-angels on Stable Diffusion

This is the `<wire-angels>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb).

Here is the new concept you will be able to use as a `style`:

|

neelmehta00/t5-small-finetuned-eli5-neel-final

|

neelmehta00

| 2022-09-24T22:53:58Z | 111 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"t5",

"text2text-generation",

"generated_from_trainer",

"dataset:eli5",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text2text-generation

| 2022-09-24T21:44:43Z |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- eli5

metrics:

- rouge

model-index:

- name: t5-small-finetuned-eli5-neel-final

results:

- task:

name: Sequence-to-sequence Language Modeling

type: text2text-generation

dataset:

name: eli5

type: eli5

config: LFQA_reddit

split: train_eli5

args: LFQA_reddit

metrics:

- name: Rouge1

type: rouge

value: 15.1409

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# t5-small-finetuned-eli5-neel-final

This model is a fine-tuned version of [t5-small](https://huggingface.co/t5-small) on the eli5 dataset.

It achieves the following results on the evaluation set:

- Loss: 3.5993

- Rouge1: 15.1409

- Rouge2: 2.1615

- Rougel: 12.7532

- Rougelsum: 13.9849

- Gen Len: 18.9998
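To make the Rouge1 figure easier to read, here is a simplified sketch of ROUGE-1 as a unigram-overlap F1 score. It lowercases and splits on whitespace only, so it is not the exact implementation behind the numbers above (which typically adds its own tokenization and optional stemming), and the reported 15.1409 appears to be on a 0 to 100 scale. `rouge1_f1` is a hypothetical name.

```python
from collections import Counter


def rouge1_f1(prediction: str, reference: str) -> float:
    """Unigram-overlap F1 between a prediction and a reference (0.0 to 1.0)."""
    pred_counts = Counter(prediction.lower().split())
    ref_counts = Counter(reference.lower().split())
    # Counter intersection keeps the minimum count of each shared token.
    overlap = sum((pred_counts & ref_counts).values())
    if overlap == 0:
        return 0.0
    precision = overlap / sum(pred_counts.values())
    recall = overlap / sum(ref_counts.values())
    return 2 * precision * recall / (precision + recall)


score = rouge1_f1("the cat sat", "the cat sat on the mat")
```

A short generation length (about 19 tokens here) caps recall against long reference answers, which is one reason scores on long-form answers tend to be low.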

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 1

### Training results

| Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | Gen Len |

|:-------------:|:-----:|:-----:|:---------------:|:-------:|:------:|:-------:|:---------:|:-------:|

| 3.8014 | 1.0 | 17040 | 3.5993 | 15.1409 | 2.1615 | 12.7532 | 13.9849 | 18.9998 |

### Framework versions

- Transformers 4.22.1

- Pytorch 1.12.1+cu113

- Datasets 2.5.1

- Tokenizers 0.12.1

|

sd-concepts-library/dlooak

|

sd-concepts-library

| 2022-09-24T22:33:17Z | 0 | 1 | null |

[

"license:mit",

"region:us"

] | null | 2022-09-24T22:33:03Z |

---

license: mit

---

### dlooak on Stable Diffusion

This is the `<dlooak>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb).

Here is the new concept you will be able to use as an `object`:

|

pinot/wav2vec2-large-xls-r-300m-j-phoneme-common-test

|

pinot

| 2022-09-24T22:27:12Z | 103 | 1 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"wav2vec2",

"automatic-speech-recognition",

"generated_from_trainer",

"dataset:common_voice_10_0",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] |

automatic-speech-recognition

| 2022-09-24T16:21:07Z |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- common_voice_10_0

model-index:

- name: wav2vec2-large-xls-r-300m-j-phoneme-common-test

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# wav2vec2-large-xls-r-300m-j-phoneme-common-test

This model is a fine-tuned version of [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) on the common_voice_10_0 dataset.

It achieves the following results on the evaluation set:

- Loss: 0.0000

- Wer: 0.0001
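For reference, word error rate is the word-level edit distance between hypothesis and reference, divided by the number of reference words. The sketch below is a plain dynamic-programming version that splits on whitespace; for a phoneme model the "words" would be whatever whitespace-separated units the labels use. `word_error_rate` is a hypothetical name, not the metric implementation used for this card.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """(substitutions + deletions + insertions) / number of reference words."""
    ref, hyp = reference.split(), hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] holds the edit distance between the processed prefix of ref and hyp[:j].
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # match or substitution
        prev = curr
    return prev[-1] / len(ref)
```

For example, `word_error_rate("a b c d", "a x c")` counts one substitution and one deletion over four reference words.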

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0003

- train_batch_size: 4

- eval_batch_size: 4

- seed: 42

- gradient_accumulation_steps: 4

- total_train_batch_size: 16

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_steps: 500

- num_epochs: 50

- mixed_precision_training: Native AMP
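The hyperparameters above can be tied together with a little arithmetic. The sketch below assumes a single device (so the effective batch is `train_batch_size` times `gradient_accumulation_steps`), takes the total of 14000 optimizer steps from the last row of the results table, and models the linear schedule as a linear ramp over the warmup steps followed by linear decay to zero. `linear_lr` is a hypothetical name.

```python
def linear_lr(step, base_lr=3e-4, warmup_steps=500, total_steps=14000):
    """Learning rate at a given optimizer step for linear warmup then linear decay."""
    if step < warmup_steps:
        return base_lr * step / warmup_steps
    remaining = max(0, total_steps - step)
    return base_lr * remaining / (total_steps - warmup_steps)


# Effective batch size on one device: 4 samples per step, accumulated over 4 steps.
effective_batch_size = 4 * 4

lr_mid_warmup = linear_lr(250)   # halfway through warmup
lr_peak = linear_lr(500)         # end of warmup, at the configured learning rate
lr_end = linear_lr(14000)        # end of training
```

Under these assumptions the rate peaks at the configured 0.0003 after 500 steps and reaches zero at step 14000.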

### Training results

| Training Loss | Epoch | Step | Validation Loss | Wer |

|:-------------:|:-----:|:-----:|:---------------:|:------:|

| 0.1488 | 7.14 | 2000 | 0.0788 | 0.0919 |

| 0.0308 | 14.28 | 4000 | 0.0155 | 0.0271 |

| 0.0121 | 21.43 | 6000 | 0.0070 | 0.0103 |

| 0.0067 | 28.57 | 8000 | 0.0059 | 0.0067 |

| 0.0025 | 35.71 | 10000 | 0.0143 | 0.0180 |

| 0.0001 | 42.85 | 12000 | 0.0000 | 0.0001 |

| 0.0 | 50.0 | 14000 | 0.0000 | 0.0001 |

### Framework versions

- Transformers 4.22.1

- Pytorch 1.10.0+cu113

- Datasets 2.5.1

- Tokenizers 0.12.1

|

kevinbram/testarbaraz

|

kevinbram

| 2022-09-24T20:52:42Z | 108 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"distilbert",

"question-answering",

"generated_from_trainer",

"dataset:squad",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] |

question-answering

| 2022-09-24T20:20:33Z |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- squad

model-index:

- name: testarbaraz

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# testarbaraz

This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the squad dataset.

It achieves the following results on the evaluation set:

- Loss: 1.2153

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 1

### Training results

| Training Loss | Epoch | Step | Validation Loss |

|:-------------:|:-----:|:----:|:---------------:|

| 1.2806 | 1.0 | 5533 | 1.2153 |

### Framework versions

- Transformers 4.20.1

- Pytorch 1.11.0

- Datasets 2.1.0

- Tokenizers 0.12.1

|

gokuls/bert-tiny-emotion-KD-BERT_and_distilBERT

|

gokuls

| 2022-09-24T19:50:00Z | 107 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"bert",

"text-classification",

"generated_from_trainer",

"dataset:emotion",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-09-24T19:36:26Z |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- emotion

metrics:

- accuracy

model-index:

- name: bert-tiny-emotion-KD-BERT_and_distilBERT

results:

- task:

name: Text Classification

type: text-classification

dataset:

name: emotion

type: emotion

config: default

split: train

args: default

metrics:

- name: Accuracy

type: accuracy

value: 0.918

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# bert-tiny-emotion-KD-BERT_and_distilBERT

This model is a fine-tuned version of [google/bert_uncased_L-2_H-128_A-2](https://huggingface.co/google/bert_uncased_L-2_H-128_A-2) on the emotion dataset.

It achieves the following results on the evaluation set:

- Loss: 0.8780

- Accuracy: 0.918

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 33

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 50

- mixed_precision_training: Native AMP

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |

|:-------------:|:-----:|:-----:|:---------------:|:--------:|

| 7.1848 | 1.0 | 1000 | 4.7404 | 0.774 |

| 3.856 | 2.0 | 2000 | 2.7317 | 0.8685 |

| 2.3973 | 3.0 | 3000 | 1.8329 | 0.8895 |

| 1.5273 | 4.0 | 4000 | 1.2938 | 0.898 |

| 1.113 | 5.0 | 5000 | 1.1298 | 0.8985 |

| 0.9099 | 6.0 | 6000 | 1.0746 | 0.907 |

| 0.831 | 7.0 | 7000 | 1.0071 | 0.907 |

| 0.6813 | 8.0 | 8000 | 0.9556 | 0.9115 |

| 0.6432 | 9.0 | 9000 | 0.9746 | 0.913 |

| 0.5745 | 10.0 | 10000 | 0.8780 | 0.918 |

| 0.5319 | 11.0 | 11000 | 0.9410 | 0.909 |

| 0.4787 | 12.0 | 12000 | 0.9103 | 0.913 |

| 0.4529 | 13.0 | 13000 | 0.8829 | 0.915 |
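The table ends at epoch 13 even though `num_epochs` is 50, and the reported result matches the epoch-10 row. That pattern would be consistent with early stopping on accuracy with a patience of three epochs, keeping the best checkpoint. This is an inference from the table, not something the card states; the sketch below simply replays the accuracy column under that assumption, and `early_stop_epoch` is a hypothetical name.

```python
def early_stop_epoch(accuracies, patience=3):
    """Return the 1-based epoch at which training would stop, or None."""
    best = float("-inf")
    stale = 0  # consecutive epochs without a new best accuracy
    for epoch, accuracy in enumerate(accuracies, start=1):
        if accuracy > best:
            best, stale = accuracy, 0
        else:
            stale += 1
            if stale >= patience:
                return epoch
    return None


# Accuracy column of the table above, epochs 1 through 13.
accuracies = [0.774, 0.8685, 0.8895, 0.898, 0.8985, 0.907, 0.907,
              0.9115, 0.913, 0.918, 0.909, 0.913, 0.915]
stop_epoch = early_stop_epoch(accuracies)
best_epoch = accuracies.index(max(accuracies)) + 1
```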

### Framework versions

- Transformers 4.22.1

- Pytorch 1.12.1+cu113

- Datasets 2.5.1

- Tokenizers 0.12.1

|

gokuls/bert-tiny-sst2-KD-BERT

|

gokuls

| 2022-09-24T19:43:27Z | 108 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"bert",

"text-classification",

"generated_from_trainer",

"dataset:glue",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-09-24T19:26:06Z |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- glue

metrics:

- accuracy

model-index:

- name: bert-tiny-sst2-KD-BERT

results:

- task:

name: Text Classification

type: text-classification

dataset:

name: glue

type: glue

config: sst2

split: train

args: sst2

metrics:

- name: Accuracy

type: accuracy

value: 0.8348623853211009

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# bert-tiny-sst2-KD-BERT

This model is a fine-tuned version of [google/bert_uncased_L-2_H-128_A-2](https://huggingface.co/google/bert_uncased_L-2_H-128_A-2) on the glue dataset.

It achieves the following results on the evaluation set:

- Loss: 0.8257

- Accuracy: 0.8349
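The unrounded accuracy in the front matter (0.8348623853211009) can be read as a fraction of correctly classified examples. Assuming evaluation on the 872-example GLUE SST-2 validation split (an assumption; the card does not say which split was used for evaluation), it corresponds to 728 correct predictions. `implied_correct` is a hypothetical helper.

```python
def implied_correct(accuracy: float, n_examples: int) -> int:
    """Number of correct predictions implied by an accuracy over n_examples."""
    return round(accuracy * n_examples)


reported_accuracy = 0.8348623853211009
n_validation = 872  # assumed size of the evaluation split
correct = implied_correct(reported_accuracy, n_validation)
recomputed = correct / n_validation
```

Rounding `recomputed` to four decimals gives the 0.8349 shown in the list above.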

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 33

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 50

- mixed_precision_training: Native AMP

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |

|:-------------:|:-----:|:-----:|:---------------:|:--------:|

| 0.7521 | 1.0 | 4210 | 0.7345 | 0.8234 |

| 0.4301 | 2.0 | 8420 | 0.7748 | 0.8303 |

| 0.3335 | 3.0 | 12630 | 0.8257 | 0.8349 |

| 0.2831 | 4.0 | 16840 | 0.9145 | 0.8188 |

| 0.2419 | 5.0 | 21050 | 0.9096 | 0.8177 |

| 0.2149 | 6.0 | 25260 | 0.8410 | 0.8234 |

### Framework versions

- Transformers 4.22.1

- Pytorch 1.12.1+cu113

- Datasets 2.5.1

- Tokenizers 0.12.1

|

gokuls/bert-tiny-emotion-KD-distilBERT

|

gokuls

| 2022-09-24T19:33:23Z | 106 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"bert",

"text-classification",

"generated_from_trainer",

"dataset:emotion",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-09-24T19:21:36Z |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- emotion

metrics:

- accuracy

model-index:

- name: bert-tiny-emotion-KD-distilBERT

results:

- task:

name: Text Classification

type: text-classification

dataset:

name: emotion

type: emotion

config: default

split: train

args: default

metrics:

- name: Accuracy

type: accuracy

value: 0.913

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# bert-tiny-emotion-KD-distilBERT

This model is a fine-tuned version of [google/bert_uncased_L-2_H-128_A-2](https://huggingface.co/google/bert_uncased_L-2_H-128_A-2) on the emotion dataset.

It achieves the following results on the evaluation set:

- Loss: 0.5444

- Accuracy: 0.913

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 33

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 50

- mixed_precision_training: Native AMP

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |

|:-------------:|:-----:|:-----:|:---------------:|:--------:|

| 4.2533 | 1.0 | 1000 | 2.8358 | 0.7675 |

| 2.3274 | 2.0 | 2000 | 1.5893 | 0.8675 |

| 1.3974 | 3.0 | 3000 | 1.0286 | 0.891 |

| 0.9035 | 4.0 | 4000 | 0.7534 | 0.8955 |

| 0.6619 | 5.0 | 5000 | 0.6350 | 0.905 |

| 0.5482 | 6.0 | 6000 | 0.6180 | 0.899 |

| 0.4937 | 7.0 | 7000 | 0.5448 | 0.91 |

| 0.4013 | 8.0 | 8000 | 0.5493 | 0.906 |

| 0.3839 | 9.0 | 9000 | 0.5481 | 0.9095 |

| 0.3281 | 10.0 | 10000 | 0.5528 | 0.9115 |

| 0.3098 | 11.0 | 11000 | 0.5864 | 0.9095 |

| 0.2762 | 12.0 | 12000 | 0.5566 | 0.9095 |

| 0.2467 | 13.0 | 13000 | 0.5444 | 0.913 |

| 0.2286 | 14.0 | 14000 | 0.5306 | 0.912 |

| 0.2215 | 15.0 | 15000 | 0.5312 | 0.9115 |

| 0.2038 | 16.0 | 16000 | 0.5242 | 0.912 |

### Framework versions

- Transformers 4.22.1

- Pytorch 1.12.1+cu113

- Datasets 2.5.1

- Tokenizers 0.12.1

|

sd-concepts-library/thorneworks

|

sd-concepts-library

| 2022-09-24T18:23:14Z | 0 | 0 | null |

[

"license:mit",

"region:us"

] | null | 2022-09-24T18:23:08Z |

---

license: mit

---

### Thorneworks on Stable Diffusion

This is the `<Thorneworks>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb).

Here is the new concept you will be able to use as a `style`:

|

rram12/pixelcopter

|

rram12

| 2022-09-24T18:13:16Z | 0 | 0 | null |

[

"Pixelcopter-PLE-v0",

"reinforce",

"reinforcement-learning",

"custom-implementation",

"deep-rl-class",

"model-index",

"region:us"

] |

reinforcement-learning

| 2022-09-24T18:13:09Z |

---

tags:

- Pixelcopter-PLE-v0

- reinforce

- reinforcement-learning

- custom-implementation

- deep-rl-class

model-index:

- name: pixelcopter

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: Pixelcopter-PLE-v0

type: Pixelcopter-PLE-v0

metrics:

- type: mean_reward

value: 19.80 +/- 13.74

name: mean_reward

verified: false

---

# **Reinforce** Agent playing **Pixelcopter-PLE-v0**

This is a trained model of a **Reinforce** agent playing **Pixelcopter-PLE-v0**.

To learn how to use this model and train your own, check Unit 5 of the Deep Reinforcement Learning Class: https://github.com/huggingface/deep-rl-class/tree/main/unit5
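As background on the algorithm named here, REINFORCE weights each action's log-probability by the discounted return that followed it. The sketch below shows only that return computation; the discount factor of 0.99 is an illustrative default, not a value taken from this card, and `discounted_returns` is a hypothetical name.

```python
def discounted_returns(rewards, gamma=0.99):
    """Return G_t = r_t + gamma * G_{t+1} for every step of one episode."""
    returns = []
    running = 0.0
    # Walk the episode backwards so each return reuses the one after it.
    for reward in reversed(rewards):
        running = reward + gamma * running
        returns.append(running)
    returns.reverse()
    return returns


example = discounted_returns([1.0, 1.0, 1.0], gamma=0.5)
```

With three rewards of 1.0 and a discount of 0.5, the first step's return is 1 + 0.5 * (1 + 0.5 * 1).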

|

edumunozsala/bertin-sts-cc-news-es

|

edumunozsala

| 2022-09-24T18:02:46Z | 4 | 0 |

sentence-transformers

|

[

"sentence-transformers",

"pytorch",

"roberta",

"feature-extraction",

"sentence-similarity",

"transformers",

"dataset:LeoCordoba/CC-NEWS-ES-titles",

"autotrain_compatible",

"text-embeddings-inference",

"endpoints_compatible",

"region:us"

] |

sentence-similarity

| 2022-08-22T13:51:47Z |

---

pipeline_tag: sentence-similarity

tags:

- sentence-transformers

- feature-extraction

- sentence-similarity

- transformers

datasets:

- LeoCordoba/CC-NEWS-ES-titles

---

# bertin-sts-cc-news-es

This is a [sentence-transformers](https://www.SBERT.net) model: it maps sentences and paragraphs to a 768-dimensional dense vector space and can be used for tasks like clustering or semantic search.

<!--- Describe your model here -->

## Usage (Sentence-Transformers)

Using this model becomes easy when you have [sentence-transformers](https://www.SBERT.net) installed:

```

pip install -U sentence-transformers

```

Then you can use the model like this:

```python

from sentence_transformers import SentenceTransformer

sentences = ["This is an example sentence", "Each sentence is converted"]

model = SentenceTransformer('edumunozsala/bertin-sts-cc-news-es')

embeddings = model.encode(sentences)

print(embeddings)

```

## Usage (HuggingFace Transformers)

Without [sentence-transformers](https://www.SBERT.net), you can use the model like this: first, pass your input through the transformer model, then apply the right pooling operation on top of the contextualized word embeddings.

```python

from transformers import AutoTokenizer, AutoModel

import torch

#Mean Pooling - Take attention mask into account for correct averaging

def mean_pooling(model_output, attention_mask):
    token_embeddings = model_output[0]  # First element of model_output contains all token embeddings
    input_mask_expanded = attention_mask.unsqueeze(-1).expand(token_embeddings.size()).float()
    return torch.sum(token_embeddings * input_mask_expanded, 1) / torch.clamp(input_mask_expanded.sum(1), min=1e-9)

# Sentences we want sentence embeddings for

sentences = ['This is an example sentence', 'Each sentence is converted']

# Load model from HuggingFace Hub

tokenizer = AutoTokenizer.from_pretrained('edumunozsala/bertin-sts-cc-news-es')

model = AutoModel.from_pretrained('edumunozsala/bertin-sts-cc-news-es')

# Tokenize sentences

encoded_input = tokenizer(sentences, padding=True, truncation=True, return_tensors='pt')

# Compute token embeddings

with torch.no_grad():
    model_output = model(**encoded_input)

# Perform pooling. In this case, mean pooling.

sentence_embeddings = mean_pooling(model_output, encoded_input['attention_mask'])

print("Sentence embeddings:")

print(sentence_embeddings)

```
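Once embeddings are available, semantic search and clustering usually come down to comparing them with cosine similarity. The sketch below uses short toy vectors in place of the 768-dimensional model output, and `cosine_similarity` is a hypothetical helper written in plain Python rather than a library call.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Toy stand-ins for sentence embeddings.
same_direction = cosine_similarity([1.0, 0.0], [2.0, 0.0])
orthogonal = cosine_similarity([1.0, 0.0], [0.0, 1.0])
diagonal = cosine_similarity([1.0, 1.0], [1.0, 0.0])
```

Values near 1 indicate sentences the model places close together; values near 0 indicate unrelated ones.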

## Evaluation Results

<!--- Describe how your model was evaluated -->

For an automated evaluation of this model, see the *Sentence Embeddings Benchmark*: [https://seb.sbert.net](https://seb.sbert.net?model_name=bertin-sts-cc-news-es)

## Training

The model was trained with the parameters:

**DataLoader**:

`torch.utils.data.dataloader.DataLoader` of length 13054 with parameters:

```

{'batch_size': 16, 'sampler': 'torch.utils.data.sampler.RandomSampler', 'batch_sampler': 'torch.utils.data.sampler.BatchSampler'}

```

**Loss**:

`sentence_transformers.losses.MultipleNegativesRankingLoss.MultipleNegativesRankingLoss` with parameters:

```

{'scale': 20.0, 'similarity_fct': 'cos_sim'}

```
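To illustrate what these loss parameters mean, the sketch below re-implements the idea of a multiple-negatives ranking loss in plain Python: each anchor is scored against every positive in the batch with cosine similarity multiplied by `scale`, and cross-entropy pushes the matching pair to rank first. It is an illustrative reconstruction on toy vectors, not the library's code, and `mnr_loss` is a hypothetical name.

```python
import math


def _cos(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def mnr_loss(anchors, positives, scale=20.0):
    """Mean cross-entropy where positives[i] is the correct match for anchors[i]."""
    losses = []
    for i, anchor in enumerate(anchors):
        scores = [scale * _cos(anchor, positive) for positive in positives]
        # Numerically stable log-sum-exp over the in-batch candidates.
        top = max(scores)
        log_z = top + math.log(sum(math.exp(s - top) for s in scores))
        losses.append(log_z - scores[i])
    return sum(losses) / len(losses)


aligned = mnr_loss([[1.0, 0.0], [0.0, 1.0]], [[1.0, 0.0], [0.0, 1.0]])
swapped = mnr_loss([[1.0, 0.0], [0.0, 1.0]], [[0.0, 1.0], [1.0, 0.0]])
```

The other positives in the batch act as negatives for each anchor, which is why a larger batch generally gives a harder ranking task.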

Parameters of the fit()-Method:

```

{
    "epochs": 3,
    "evaluation_steps": 0,
    "evaluator": "sentence_transformers.evaluation.EmbeddingSimilarityEvaluator.EmbeddingSimilarityEvaluator",
    "max_grad_norm": 1,
    "optimizer_class": "<class 'torch.optim.adamw.AdamW'>",
    "optimizer_params": {
        "lr": 2e-05
    },
    "scheduler": "WarmupLinear",
    "steps_per_epoch": null,
    "warmup_steps": 3916,
    "weight_decay": 0.01
}

```
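The numbers in this section fit together as follows. With a DataLoader of length 13054 and 3 epochs, training runs for 39162 steps, and the reported `warmup_steps` of 3916 is consistent with roughly 10% of that total. The 10% ratio is inferred from the arithmetic, not stated in the card.

```python
steps_per_epoch = 13054   # DataLoader length reported above
epochs = 3
batch_size = 16

total_steps = steps_per_epoch * epochs
warmup_steps = 3916       # value reported in the fit() parameters
warmup_fraction = warmup_steps / total_steps

# Upper bound on training pairs seen per epoch (the last batch may be smaller).
max_pairs_per_epoch = steps_per_epoch * batch_size
```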

## Full Model Architecture

```

SentenceTransformer(
  (0): Transformer({'max_seq_length': 512, 'do_lower_case': False}) with Transformer model: RobertaModel
  (1): Pooling({'word_embedding_dimension': 768, 'pooling_mode_cls_token': False, 'pooling_mode_mean_tokens': True, 'pooling_mode_max_tokens': False, 'pooling_mode_mean_sqrt_len_tokens': False})
)

```

## Citing & Authors

<!--- Describe where people can find more information -->

|

gokuls/bert-base-sst2

|

gokuls

| 2022-09-24T18:00:08Z | 105 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"bert",

"text-classification",

"generated_from_trainer",

"dataset:glue",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-09-24T17:29:37Z |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- glue

metrics:

- accuracy

model-index:

- name: bert-base-sst2

results:

- task:

name: Text Classification

type: text-classification

dataset:

name: glue

type: glue

config: sst2

split: train

args: sst2

metrics:

- name: Accuracy

type: accuracy

value: 0.9036697247706422

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# bert-base-sst2

This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on the glue dataset.

It achieves the following results on the evaluation set:

- Loss: 0.3735

- Accuracy: 0.9037

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 33

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 15

- mixed_precision_training: Native AMP

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |

|:-------------:|:-----:|:-----:|:---------------:|:--------:|

| 0.243 | 1.0 | 4210 | 0.3735 | 0.9037 |

| 0.1557 | 2.0 | 8420 | 0.3907 | 0.8922 |

| 0.1248 | 3.0 | 12630 | 0.3690 | 0.8945 |

| 0.1017 | 4.0 | 16840 | 0.5466 | 0.8830 |

### Framework versions

- Transformers 4.22.1

- Pytorch 1.12.1+cu113

- Datasets 2.5.1

- Tokenizers 0.12.1

|

XQ-CHO/Freewave

|

XQ-CHO

| 2022-09-24T17:19:23Z | 0 | 0 | null |

[

"license:creativeml-openrail-m",

"region:us"

] | null | 2022-09-24T17:19:23Z |

---

license: creativeml-openrail-m

---

|

rram12/reinforce-cartpole

|

rram12

| 2022-09-24T16:42:14Z | 0 | 0 | null |

[

"CartPole-v1",

"reinforce",

"reinforcement-learning",

"custom-implementation",

"deep-rl-class",

"model-index",

"region:us"

] |

reinforcement-learning

| 2022-09-24T16:41:18Z |

---

tags:

- CartPole-v1

- reinforce

- reinforcement-learning

- custom-implementation

- deep-rl-class

model-index:

- name: reinforce-cartpole

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: CartPole-v1

type: CartPole-v1

metrics:

- type: mean_reward

value: 500.00 +/- 0.00

name: mean_reward

verified: false

---

# **Reinforce** Agent playing **CartPole-v1**

This is a trained model of a **Reinforce** agent playing **CartPole-v1**.

To learn how to use this model and train your own, check Unit 5 of the Deep Reinforcement Learning Class: https://github.com/huggingface/deep-rl-class/tree/main/unit5
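For context on the reported score: CartPole-v1 gives a reward of 1 per step and ends episodes at 500 steps, so a mean reward of 500.00 +/- 0.00 means every evaluation episode reached the cap. The sketch below shows the REINFORCE objective in its simplest form, the negative sum of log-probabilities weighted by returns, using plain floats in place of tensors. `reinforce_loss` is a hypothetical name and this is not the course's implementation.

```python
def reinforce_loss(log_probs, returns):
    """Policy-gradient loss: -sum_t log pi(a_t | s_t) * G_t for one episode."""
    if len(log_probs) != len(returns):
        raise ValueError("log_probs and returns must have the same length")
    return -sum(log_prob * ret for log_prob, ret in zip(log_probs, returns))


# Two steps: log-probabilities of the chosen actions and the returns that followed.
loss = reinforce_loss([-0.5, -1.0], [2.0, 1.0])
```

Minimizing this quantity raises the probability of actions that were followed by high returns.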

|

robingeibel/led-large-16384-finetuned-big_patent

|

robingeibel

| 2022-09-24T16:03:38Z | 93 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tf",

"tensorboard",

"led",

"feature-extraction",

"generated_from_keras_callback",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] |

feature-extraction

| 2022-06-28T10:32:30Z |

---
license: apache-2.0
tags:
- generated_from_keras_callback
model-index:
- name: led-large-16384-finetuned-big_patent
  results: []
---

<!-- This model card has been generated automatically according to the information Keras had access to. You should probably proofread and complete it, then remove this comment. -->

# led-large-16384-finetuned-big_patent

This model is a fine-tuned version of [robingeibel/led-large-16384-finetuned-big_patent](https://huggingface.co/robingeibel/led-large-16384-finetuned-big_patent) on an unknown dataset.

No evaluation results are reported for this model.

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- optimizer: None

- training_precision: float32

### Training results

### Framework versions

- Transformers 4.22.1

- TensorFlow 2.8.2

- Datasets 2.5.1

- Tokenizers 0.12.1

|

kevinbror/distilbert-base-uncased-finetuned-squad

|

kevinbror

| 2022-09-24T15:19:04Z | 61 | 0 |

transformers

|

[

"transformers",

"tf",

"tensorboard",

"distilbert",

"question-answering",

"generated_from_keras_callback",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] |

question-answering

| 2022-09-24T14:40:17Z |

---
license: apache-2.0
tags:
- generated_from_keras_callback
model-index:
- name: kevinbror/distilbert-base-uncased-finetuned-squad
  results: []
---

<!-- This model card has been generated automatically according to the information Keras had access to. You should probably proofread and complete it, then remove this comment. -->

# kevinbror/distilbert-base-uncased-finetuned-squad

This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on an unknown dataset.

It achieves the following results on the evaluation set:

- Train Loss: 1.5121

- Train End Logits Accuracy: 0.6064

- Train Start Logits Accuracy: 0.5676

- Validation Loss: 1.1850

- Validation End Logits Accuracy: 0.6834

- Validation Start Logits Accuracy: 0.6479

- Epoch: 0

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- optimizer: {'name': 'Adam', 'learning_rate': {'class_name': 'PolynomialDecay', 'config': {'initial_learning_rate': 2e-05, 'decay_steps': 11064, 'end_learning_rate': 0.0, 'power': 1.0, 'cycle': False, 'name': None}}, 'decay': 0.0, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False}

- training_precision: float32
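
The optimizer entry above nests a `PolynomialDecay` learning-rate schedule inside Adam. As a rough illustration, the sketch below evaluates that schedule in plain Python using the standard polynomial-decay formula and the values from the config. It does not call Keras, and the function name `polynomial_decay_lr` is hypothetical.

```python
# Values copied from the 'learning_rate' config in the optimizer entry above.
INITIAL_LR = 2e-05
END_LR = 0.0
DECAY_STEPS = 11064
POWER = 1.0


def polynomial_decay_lr(step):
    """Learning rate at a given training step (hypothetical helper)."""
    # 'cycle' is False in the config, so the step is clamped at DECAY_STEPS
    # and the rate stays at END_LR afterwards.
    step = min(step, DECAY_STEPS)
    fraction_left = 1.0 - step / DECAY_STEPS
    return (INITIAL_LR - END_LR) * fraction_left ** POWER + END_LR


for step in (0, 5532, 11064):
    print(step, polynomial_decay_lr(step))
```

Because `power` is 1.0, the schedule is a straight line from 2e-05 at step 0 down to 0.0 at step 11064.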

### Training results

| Train Loss | Train End Logits Accuracy | Train Start Logits Accuracy | Validation Loss | Validation End Logits Accuracy | Validation Start Logits Accuracy | Epoch |
|:----------:|:-------------------------:|:---------------------------:|:---------------:|:------------------------------:|:--------------------------------:|:-----:|
| 1.5121     | 0.6064                    | 0.5676                      | 1.1850          | 0.6834                         | 0.6479                           | 0     |

### Framework versions

- Transformers 4.20.1

- TensorFlow 2.6.4

- Datasets 2.1.0

- Tokenizers 0.12.1

|

masakhane/afrimt5_fr_bam_news

|

masakhane

| 2022-09-24T15:08:15Z | 107 | 0 |

transformers

|

[

"transformers",

"pytorch",

"mt5",

"text2text-generation",

"fr",

"bam",

"license:afl-3.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text2text-generation

| 2022-04-09T17:34:21Z |

---

license: afl-3.0

language:

- fr

- bam

---

|

masakhane/byt5_fr_bam_news

|

masakhane

| 2022-09-24T15:08:09Z | 107 | 0 |

transformers

|

[

"transformers",

"pytorch",

"t5",

"text2text-generation",

"fr",

"bam",

"license:afl-3.0",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text2text-generation

| 2022-04-09T17:38:02Z |

---

language:

- fr

- bam

license: afl-3.0

---

|

masakhane/mt5_bam_fr_news

|

masakhane

| 2022-09-24T15:08:08Z | 107 | 0 |

transformers

|

[