modelId

stringlengths 5

139

| author

stringlengths 2

42

| last_modified

timestamp[us, tz=UTC]date 2020-02-15 11:33:14

2025-09-08 19:17:42

| downloads

int64 0

223M

| likes

int64 0

11.7k

| library_name

stringclasses 549

values | tags

listlengths 1

4.05k

| pipeline_tag

stringclasses 55

values | createdAt

timestamp[us, tz=UTC]date 2022-03-02 23:29:04

2025-09-08 18:30:19

| card

stringlengths 11

1.01M

|

|---|---|---|---|---|---|---|---|---|---|

ciCic/decisionTransformer

|

ciCic

| 2022-09-10T21:09:32Z | 119 | 0 |

transformers

|

[

"transformers",

"pytorch",

"decision_transformer",

"feature-extraction",

"decisionTransformer",

"deep reinforcement",

"dataset:edbeeching/decision_transformer_gym_replay",

"license:mit",

"endpoints_compatible",

"region:us"

] |

feature-extraction

| 2022-09-09T14:32:45Z |

---

tags:

- decisionTransformer

- deep reinforcement

datasets:

- edbeeching/decision_transformer_gym_replay

license:

- mit

---

### Running training

- Num examples = 1000

- Num Epochs = 120

- Instantaneous batch size per device = 64

- Total train batch size = 64

- Gradient Accumulation steps = 1

- Total optimization steps = 1920

### Train Output

- global_step = 1920

- train_runtime = 1849.2158

- train_samples_per_second = 64.892

- train_steps_per_second = 1.038

- train_loss = 0.04717305501302083

- epoch = 120.0

### Dataset

- edbeeching/decision_transformer_gym_replay

- halfcheetah-expert-v2

|

sd-concepts-library/floral

|

sd-concepts-library

| 2022-09-10T19:43:07Z | 0 | 2 | null |

[

"license:mit",

"region:us"

] | null | 2022-09-10T17:30:24Z |

---

license: mit

---

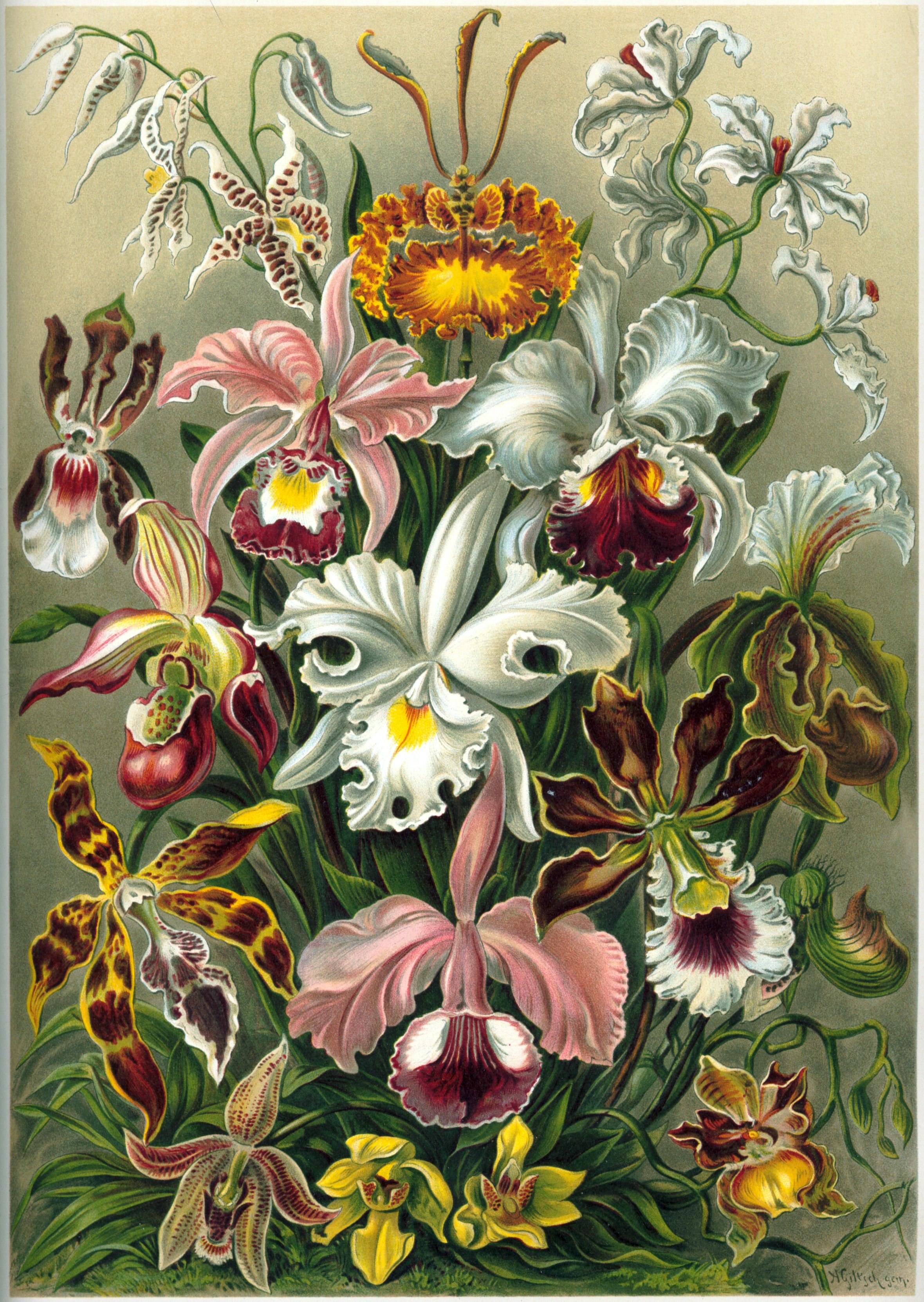

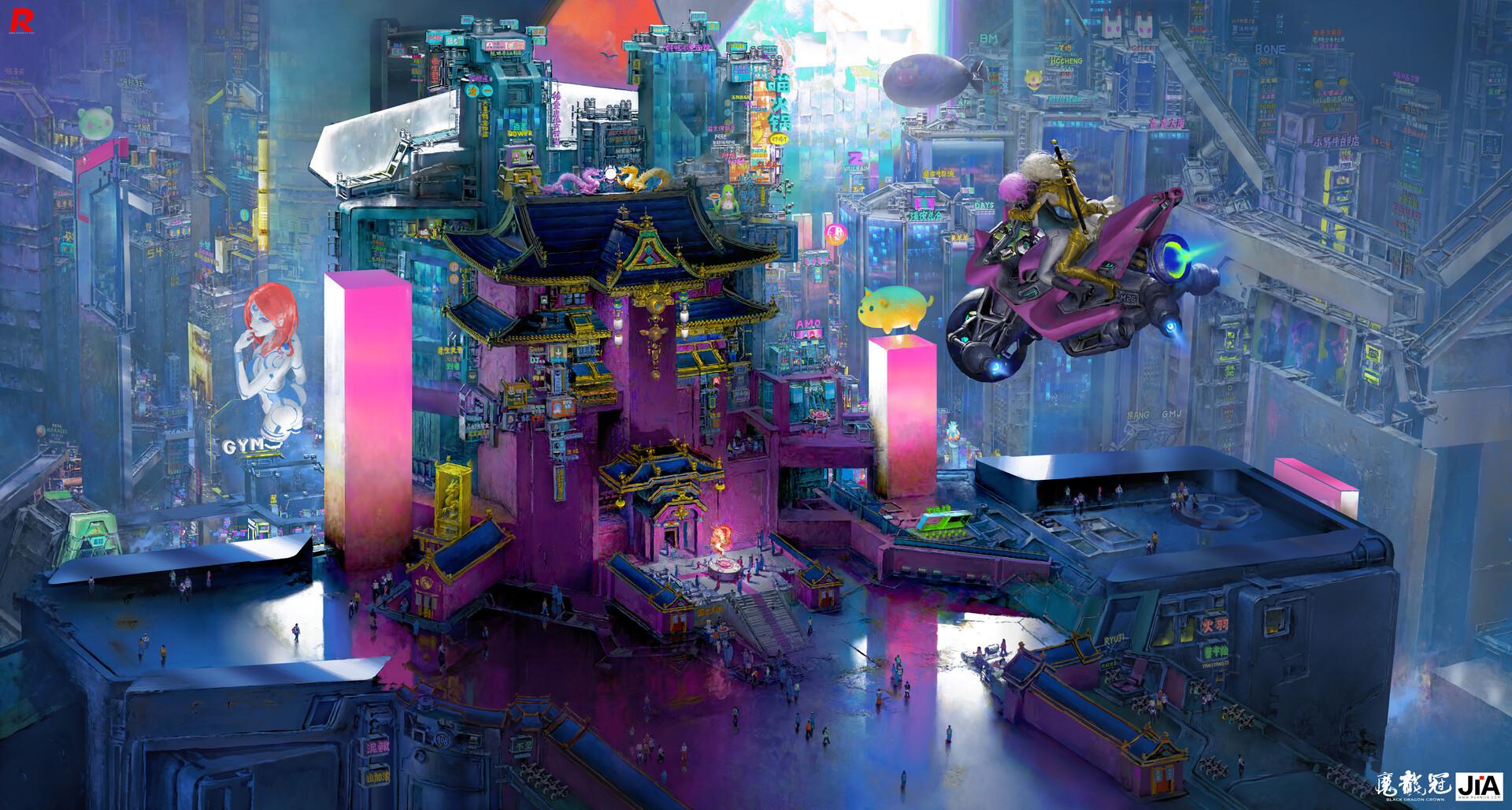

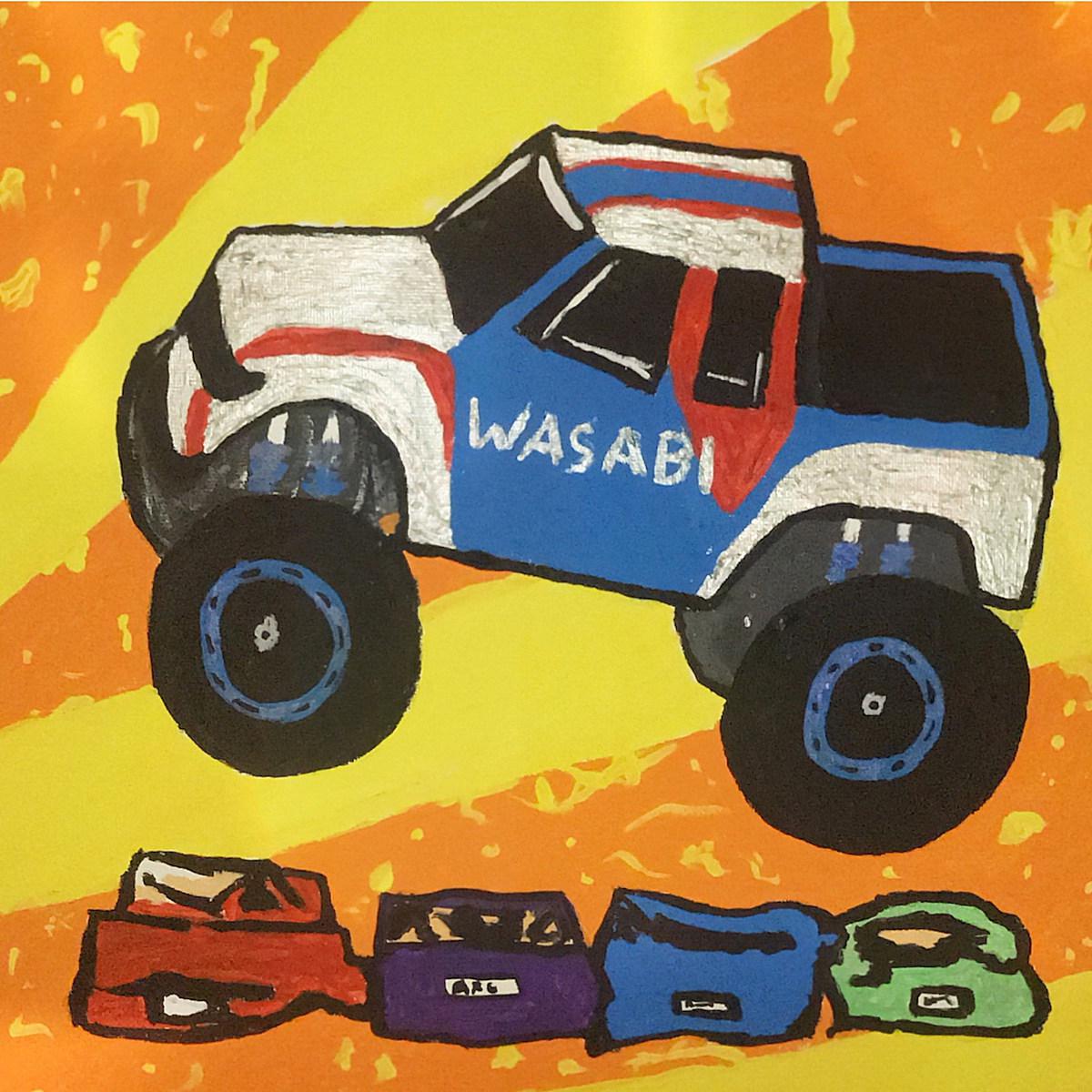

### Floral-orchid on Stable Diffusion

This is the `<floral-orchid>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb).

Here is the new concept you will be able to use as a `style`:

|

MarioWasTaken/TestingPurposes

|

MarioWasTaken

| 2022-09-10T19:28:02Z | 0 | 0 | null |

[

"region:us"

] | null | 2022-09-10T19:25:22Z |

//this is a test for now ;)

language:

"List of ISO 639-1 code for your language"

lang1

lang2

thumbnail: "url to a thumbnail used in social sharing"

tags:

- tag1

- tag2

license: "any valid license identifier"

datasets:

- dataset1

- dataset2

metrics:

metric1

metric2

|

BigSalmon/InformalToFormalLincoln76ParaphraseXL

|

BigSalmon

| 2022-09-10T19:22:01Z | 115 | 0 |

transformers

|

[

"transformers",

"pytorch",

"gpt2",

"text-generation",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-09-10T19:11:21Z |

data: https://github.com/BigSalmon2/InformalToFormalDataset

```

from transformers import AutoTokenizer, AutoModelForCausalLM

tokenizer = AutoTokenizer.from_pretrained("BigSalmon/InformalToFormalLincoln77Paraphrase")

model = AutoModelForCausalLM.from_pretrained("BigSalmon/InformalToFormalLincoln76ParaphraseXL")

```

```

Demo:

https://huggingface.co/spaces/BigSalmon/FormalInformalConciseWordy

```

```

prompt = """informal english: corn fields are all across illinois, visible once you leave chicago.\nTranslated into the Style of Abraham Lincoln:"""

input_ids = tokenizer.encode(prompt, return_tensors='pt')

outputs = model.generate(input_ids=input_ids,

max_length=10 + len(prompt),

temperature=1.0,

top_k=50,

top_p=0.95,

do_sample=True,

num_return_sequences=5,

early_stopping=True)

for i in range(5):

print(tokenizer.decode(outputs[i]))

```

Most likely outputs (Disclaimer: I highly recommend using this over just generating):

```

prompt = """informal english: corn fields are all across illinois, visible once you leave chicago.\nTranslated into the Style of Abraham Lincoln:"""

text = tokenizer.encode(prompt)

myinput, past_key_values = torch.tensor([text]), None

myinput = myinput

myinput= myinput.to(device)

logits, past_key_values = model(myinput, past_key_values = past_key_values, return_dict=False)

logits = logits[0,-1]

probabilities = torch.nn.functional.softmax(logits)

best_logits, best_indices = logits.topk(250)

best_words = [tokenizer.decode([idx.item()]) for idx in best_indices]

text.append(best_indices[0].item())

best_probabilities = probabilities[best_indices].tolist()

words = []

print(best_words)

```

```

How To Make Prompt:

informal english: i am very ready to do that just that.

Translated into the Style of Abraham Lincoln: you can assure yourself of my readiness to work toward this end.

Translated into the Style of Abraham Lincoln: please be assured that i am most ready to undertake this laborious task.

***

informal english: space is huge and needs to be explored.

Translated into the Style of Abraham Lincoln: space awaits traversal, a new world whose boundaries are endless.

Translated into the Style of Abraham Lincoln: space is a ( limitless / boundless ) expanse, a vast virgin domain awaiting exploration.

***

informal english: corn fields are all across illinois, visible once you leave chicago.

Translated into the Style of Abraham Lincoln: corn fields ( permeate illinois / span the state of illinois / ( occupy / persist in ) all corners of illinois / line the horizon of illinois / envelop the landscape of illinois ), manifesting themselves visibly as one ventures beyond chicago.

informal english:

```

```

original: microsoft word's [MASK] pricing invites competition.

Translated into the Style of Abraham Lincoln: microsoft word's unconscionable pricing invites competition.

***

original: the library’s quiet atmosphere encourages visitors to [blank] in their work.

Translated into the Style of Abraham Lincoln: the library’s quiet atmosphere encourages visitors to immerse themselves in their work.

```

```

Essay Intro (Warriors vs. Rockets in Game 7):

text: eagerly anticipated by fans, game 7's are the highlight of the post-season.

text: ever-building in suspense, game 7's have the crowd captivated.

***

Essay Intro (South Korean TV Is Becoming Popular):

text: maturing into a bona fide paragon of programming, south korean television ( has much to offer / entertains without fail / never disappoints ).

text: increasingly held in critical esteem, south korean television continues to impress.

text: at the forefront of quality content, south korea is quickly achieving celebrity status.

***

Essay Intro (

```

```

Search: What is the definition of Checks and Balances?

https://en.wikipedia.org/wiki/Checks_and_balances

Checks and Balances is the idea of having a system where each and every action in government should be subject to one or more checks that would not allow one branch or the other to overly dominate.

https://www.harvard.edu/glossary/Checks_and_Balances

Checks and Balances is a system that allows each branch of government to limit the powers of the other branches in order to prevent abuse of power

https://www.law.cornell.edu/library/constitution/Checks_and_Balances

Checks and Balances is a system of separation through which branches of government can control the other, thus preventing excess power.

***

Search: What is the definition of Separation of Powers?

https://en.wikipedia.org/wiki/Separation_of_powers

The separation of powers is a principle in government, whereby governmental powers are separated into different branches, each with their own set of powers, that are prevent one branch from aggregating too much power.

https://www.yale.edu/tcf/Separation_of_Powers.html

Separation of Powers is the division of governmental functions between the executive, legislative and judicial branches, clearly demarcating each branch's authority, in the interest of ensuring that individual liberty or security is not undermined.

***

Search: What is the definition of Connection of Powers?

https://en.wikipedia.org/wiki/Connection_of_powers

Connection of Powers is a feature of some parliamentary forms of government where different branches of government are intermingled, typically the executive and legislative branches.

https://simple.wikipedia.org/wiki/Connection_of_powers

The term Connection of Powers describes a system of government in which there is overlap between different parts of the government.

***

Search: What is the definition of

```

```

Search: What are phrase synonyms for "second-guess"?

https://www.powerthesaurus.org/second-guess/synonyms

Shortest to Longest:

- feel dubious about

- raise an eyebrow at

- wrinkle their noses at

- cast a jaundiced eye at

- teeter on the fence about

***

Search: What are phrase synonyms for "mean to newbies"?

https://www.powerthesaurus.org/mean_to_newbies/synonyms

Shortest to Longest:

- readiness to balk at rookies

- absence of tolerance for novices

- hostile attitude toward newcomers

***

Search: What are phrase synonyms for "make use of"?

https://www.powerthesaurus.org/make_use_of/synonyms

Shortest to Longest:

- call upon

- glean value from

- reap benefits from

- derive utility from

- seize on the merits of

- draw on the strength of

- tap into the potential of

***

Search: What are phrase synonyms for "hurting itself"?

https://www.powerthesaurus.org/hurting_itself/synonyms

Shortest to Longest:

- erring

- slighting itself

- forfeiting its integrity

- doing itself a disservice

- evincing a lack of backbone

***

Search: What are phrase synonyms for "

```

```

- nebraska

- unicamerical legislature

- different from federal house and senate

text: featuring a unicameral legislature, nebraska's political system stands in stark contrast to the federal model, comprised of a house and senate.

***

- penny has practically no value

- should be taken out of circulation

- just as other coins have been in us history

- lost use

- value not enough

- to make environmental consequences worthy

text: all but valueless, the penny should be retired. as with other coins in american history, it has become defunct. too minute to warrant the environmental consequences of its production, it has outlived its usefulness.

***

-

```

```

original: sports teams are profitable for owners. [MASK], their valuations experience a dramatic uptick.

infill: sports teams are profitable for owners. ( accumulating vast sums / stockpiling treasure / realizing benefits / cashing in / registering robust financials / scoring on balance sheets ), their valuations experience a dramatic uptick.

***

original:

```

```

wordy: classical music is becoming less popular more and more.

Translate into Concise Text: interest in classic music is fading.

***

wordy:

```

```

sweet: savvy voters ousted him.

longer: voters who were informed delivered his defeat.

***

sweet:

```

```

1: commercial space company spacex plans to launch a whopping 52 flights in 2022.

2: spacex, a commercial space company, intends to undertake a total of 52 flights in 2022.

3: in 2022, commercial space company spacex has its sights set on undertaking 52 flights.

4: 52 flights are in the pipeline for 2022, according to spacex, a commercial space company.

5: a commercial space company, spacex aims to conduct 52 flights in 2022.

***

1:

```

Keywords to sentences or sentence.

```

ngos are characterized by:

□ voluntary citizens' group that is organized on a local, national or international level

□ encourage political participation

□ often serve humanitarian functions

□ work for social, economic, or environmental change

***

what are the drawbacks of living near an airbnb?

□ noise

□ parking

□ traffic

□ security

□ strangers

***

```

```

original: musicals generally use spoken dialogue as well as songs to convey the story. operas are usually fully sung.

adapted: musicals generally use spoken dialogue as well as songs to convey the story. ( in a stark departure / on the other hand / in contrast / by comparison / at odds with this practice / far from being alike / in defiance of this standard / running counter to this convention ), operas are usually fully sung.

***

original: akoya and tahitian are types of pearls. akoya pearls are mostly white, and tahitian pearls are naturally dark.

adapted: akoya and tahitian are types of pearls. ( a far cry from being indistinguishable / easily distinguished / on closer inspection / setting them apart / not to be mistaken for one another / hardly an instance of mere synonymy / differentiating the two ), akoya pearls are mostly white, and tahitian pearls are naturally dark.

***

original:

```

```

original: had trouble deciding.

translated into journalism speak: wrestled with the question, agonized over the matter, furrowed their brows in contemplation.

***

original:

```

```

input: not loyal

1800s english: ( two-faced / inimical / perfidious / duplicitous / mendacious / double-dealing / shifty ).

***

input:

```

```

first: ( was complicit in / was involved in ).

antonym: ( was blameless / was not an accomplice to / had no hand in / was uninvolved in ).

***

first: ( have no qualms about / see no issue with ).

antonym: ( are deeply troubled by / harbor grave reservations about / have a visceral aversion to / take ( umbrage at / exception to ) / are wary of ).

***

first: ( do not see eye to eye / disagree often ).

antonym: ( are in sync / are united / have excellent rapport / are like-minded / are in step / are of one mind / are in lockstep / operate in perfect harmony / march in lockstep ).

***

first:

```

```

stiff with competition, law school {A} is the launching pad for countless careers, {B} is a crowded field, {C} ranks among the most sought-after professional degrees, {D} is a professional proving ground.

***

languishing in viewership, saturday night live {A} is due for a creative renaissance, {B} is no longer a ratings juggernaut, {C} has been eclipsed by its imitators, {C} can still find its mojo.

***

dubbed the "manhattan of the south," atlanta {A} is a bustling metropolis, {B} is known for its vibrant downtown, {C} is a city of rich history, {D} is the pride of georgia.

***

embattled by scandal, harvard {A} is feeling the heat, {B} cannot escape the media glare, {C} is facing its most intense scrutiny yet, {D} is in the spotlight for all the wrong reasons.

```

Infill / Infilling / Masking / Phrase Masking (Works pretty decently actually, especially when you use logprobs code from above):

```

his contention [blank] by the evidence [sep] was refuted [answer]

***

few sights are as [blank] new york city as the colorful, flashing signage of its bodegas [sep] synonymous with [answer]

***

when rick won the lottery, all of his distant relatives [blank] his winnings [sep] clamored for [answer]

***

the library’s quiet atmosphere encourages visitors to [blank] in their work [sep] immerse themselves [answer]

***

the joy of sport is that no two games are alike. for every exhilarating experience, however, there is an interminable one. the national pastime, unfortunately, has a penchant for the latter. what begins as a summer evening at the ballpark can quickly devolve into a game of tedium. the primary culprit is the [blank] of play. from batters readjusting their gloves to fielders spitting on their mitts, the action is [blank] unnecessary interruptions. the sport's future is [blank] if these tendencies are not addressed [sep] plodding pace [answer] riddled with [answer] bleak [answer]

***

microsoft word's [blank] pricing [blank] competition [sep] unconscionable [answer] invites [answer]

***

```

```

original: microsoft word's [MASK] pricing invites competition.

Translated into the Style of Abraham Lincoln: microsoft word's unconscionable pricing invites competition.

***

original: the library’s quiet atmosphere encourages visitors to [blank] in their work.

Translated into the Style of Abraham Lincoln: the library’s quiet atmosphere encourages visitors to immerse themselves in their work.

```

Backwards

```

Essay Intro (National Parks):

text: tourists are at ease in the national parks, ( swept up in the beauty of their natural splendor ).

***

Essay Intro (D.C. Statehood):

washington, d.c. is a city of outsize significance, ( ground zero for the nation's political life / center stage for the nation's political machinations ).

```

```

topic: the Golden State Warriors.

characterization 1: the reigning kings of the NBA.

characterization 2: possessed of a remarkable cohesion.

characterization 3: helmed by superstar Stephen Curry.

characterization 4: perched atop the league’s hierarchy.

characterization 5: boasting a litany of hall-of-famers.

***

topic: emojis.

characterization 1: shorthand for a digital generation.

characterization 2: more versatile than words.

characterization 3: the latest frontier in language.

characterization 4: a form of self-expression.

characterization 5: quintessentially millennial.

characterization 6: reflective of a tech-centric world.

***

topic:

```

```

regular: illinois went against the census' population-loss prediction by getting more residents.

VBG: defying the census' prediction of population loss, illinois experienced growth.

***

regular: microsoft word’s high pricing increases the likelihood of competition.

VBG: extortionately priced, microsoft word is inviting competition.

***

regular:

```

```

source: badminton should be more popular in the US.

QUERY: Based on the given topic, can you develop a story outline?

target: (1) games played with racquets are popular, (2) just look at tennis and ping pong, (3) but badminton underappreciated, (4) fun, fast-paced, competitive, (5) needs to be marketed more

text: the sporting arena is dominated by games that are played with racquets. tennis and ping pong, in particular, are immensely popular. somewhat curiously, however, badminton is absent from this pantheon. exciting, fast-paced, and competitive, it is an underappreciated pastime. all that it lacks is more effective marketing.

***

source: movies in theaters should be free.

QUERY: Based on the given topic, can you develop a story outline?

target: (1) movies provide vital life lessons, (2) many venues charge admission, (3) those without much money

text: the lessons that movies impart are far from trivial. the vast catalogue of cinematic classics is replete with inspiring sagas of friendship, bravery, and tenacity. it is regrettable, then, that admission to theaters is not free. in their current form, the doors of this most vital of institutions are closed to those who lack the means to pay.

***

source:

```

```

in the private sector, { transparency } is vital to the business’s credibility. the { disclosure of information } can be the difference between success and failure.

***

the labor market is changing, with { remote work } now the norm. this { flexible employment } allows the individual to design their own schedule.

***

the { cubicle } is the locus of countless grievances. many complain that the { enclosed workspace } restricts their freedom of movement.

***

```

|

sd-concepts-library/yb-anime

|

sd-concepts-library

| 2022-09-10T18:30:00Z | 0 | 1 | null |

[

"license:mit",

"region:us"

] | null | 2022-09-10T18:29:55Z |

---

license: mit

---

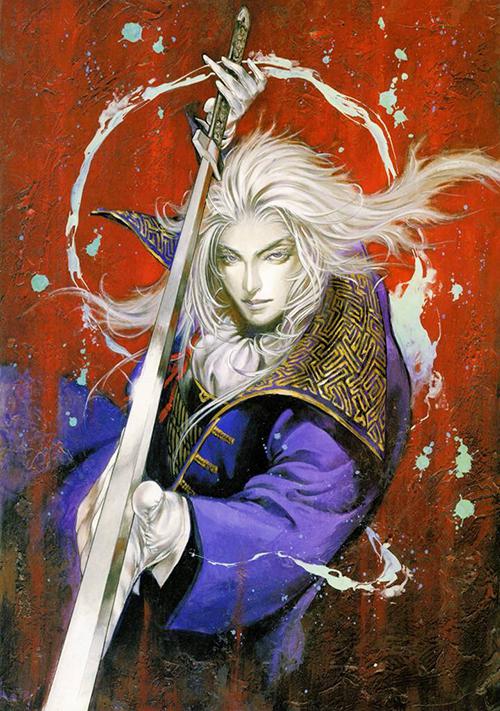

### YB Anime on Stable Diffusion

This is the `<anime-character>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb).

Here is the new concept you will be able to use as an `object`:

|

sd-concepts-library/handstand

|

sd-concepts-library

| 2022-09-10T16:37:36Z | 0 | 1 | null |

[

"license:mit",

"region:us"

] | null | 2022-09-10T16:37:24Z |

---

license: mit

---

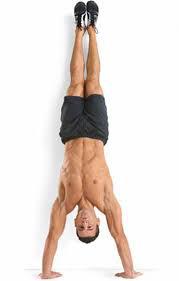

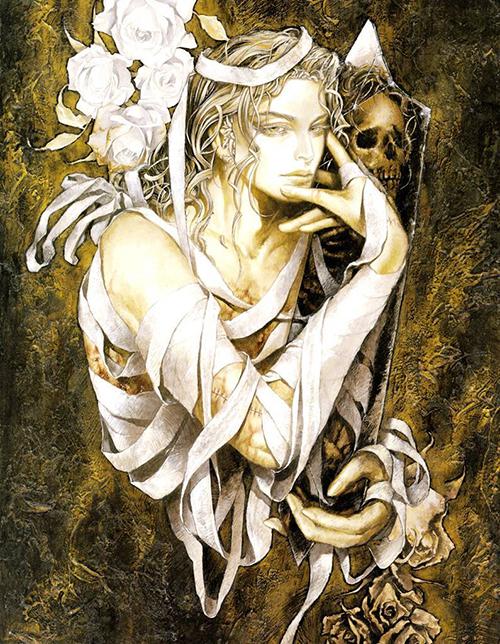

### handstand on Stable Diffusion

This is the `<handstand>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb).

Here is the new concept you will be able to use as an `object`:

|

huggingtweets/apesahoy-dril_gpt2-stefgotbooted

|

huggingtweets

| 2022-09-10T15:01:55Z | 105 | 0 |

transformers

|

[

"transformers",

"pytorch",

"gpt2",

"text-generation",

"huggingtweets",

"en",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-09-10T15:00:58Z |

---

language: en

thumbnail: http://www.huggingtweets.com/apesahoy-dril_gpt2-stefgotbooted/1662822110359/predictions.png

tags:

- huggingtweets

widget:

- text: "My dream is"

---

<div class="inline-flex flex-col" style="line-height: 1.5;">

<div class="flex">

<div

style="display:inherit; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('https://pbs.twimg.com/profile_images/1514451221054173189/BWP3wqQj_400x400.jpg')">

</div>

<div

style="display:inherit; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('https://pbs.twimg.com/profile_images/1196519479364268034/5QpniWSP_400x400.jpg')">

</div>

<div

style="display:inherit; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('https://pbs.twimg.com/profile_images/1285982491636125701/IW0v36am_400x400.jpg')">

</div>

</div>

<div style="text-align: center; margin-top: 3px; font-size: 16px; font-weight: 800">🤖 AI CYBORG 🤖</div>

<div style="text-align: center; font-size: 16px; font-weight: 800">wint but Al & Humongous Ape MP & Agree to disagree 🍊 🍊 🍊</div>

<div style="text-align: center; font-size: 14px;">@apesahoy-dril_gpt2-stefgotbooted</div>

</div>

I was made with [huggingtweets](https://github.com/borisdayma/huggingtweets).

Create your own bot based on your favorite user with [the demo](https://colab.research.google.com/github/borisdayma/huggingtweets/blob/master/huggingtweets-demo.ipynb)!

## How does it work?

The model uses the following pipeline.

To understand how the model was developed, check the [W&B report](https://wandb.ai/wandb/huggingtweets/reports/HuggingTweets-Train-a-Model-to-Generate-Tweets--VmlldzoxMTY5MjI).

## Training data

The model was trained on tweets from wint but Al & Humongous Ape MP & Agree to disagree 🍊 🍊 🍊.

| Data | wint but Al | Humongous Ape MP | Agree to disagree 🍊 🍊 🍊 |

| --- | --- | --- | --- |

| Tweets downloaded | 3247 | 3247 | 3194 |

| Retweets | 49 | 191 | 1674 |

| Short tweets | 57 | 607 | 445 |

| Tweets kept | 3141 | 2449 | 1075 |

[Explore the data](https://wandb.ai/wandb/huggingtweets/runs/2eu4r1qp/artifacts), which is tracked with [W&B artifacts](https://docs.wandb.com/artifacts) at every step of the pipeline.

## Training procedure

The model is based on a pre-trained [GPT-2](https://huggingface.co/gpt2) which is fine-tuned on @apesahoy-dril_gpt2-stefgotbooted's tweets.

Hyperparameters and metrics are recorded in the [W&B training run](https://wandb.ai/wandb/huggingtweets/runs/2k50hu4q) for full transparency and reproducibility.

At the end of training, [the final model](https://wandb.ai/wandb/huggingtweets/runs/2k50hu4q/artifacts) is logged and versioned.

## How to use

You can use this model directly with a pipeline for text generation:

```python

from transformers import pipeline

generator = pipeline('text-generation',

model='huggingtweets/apesahoy-dril_gpt2-stefgotbooted')

generator("My dream is", num_return_sequences=5)

```

## Limitations and bias

The model suffers from [the same limitations and bias as GPT-2](https://huggingface.co/gpt2#limitations-and-bias).

In addition, the data present in the user's tweets further affects the text generated by the model.

## About

*Built by Boris Dayma*

[](https://twitter.com/intent/follow?screen_name=borisdayma)

For more details, visit the project repository.

[](https://github.com/borisdayma/huggingtweets)

|

Colorful/RTA

|

Colorful

| 2022-09-10T14:56:55Z | 114 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tf",

"roberta",

"text-classification",

"license:mit",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-05-05T07:31:32Z |

---

license: mit

---

RTA (RepresentThemAll) is a pre-trained language model for bug reports. It can be fine-tuned on all kinds of automated software maintenance tasks associated with bug reports such as bug report summarization, duplicate bug report detection, bug priority prediction, etc.

|

DelinteNicolas/SDG_classifier_v0.0.3

|

DelinteNicolas

| 2022-09-10T14:56:54Z | 162 | 0 |

transformers

|

[

"transformers",

"pytorch",

"bert",

"text-classification",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-09-10T13:22:11Z |

Fined-tuned BERT trained on 6500 images with warmup, increased epoch and decreased learning rate

|

sd-concepts-library/naf

|

sd-concepts-library

| 2022-09-10T14:46:08Z | 0 | 0 | null |

[

"license:mit",

"region:us"

] | null | 2022-09-10T14:46:01Z |

---

license: mit

---

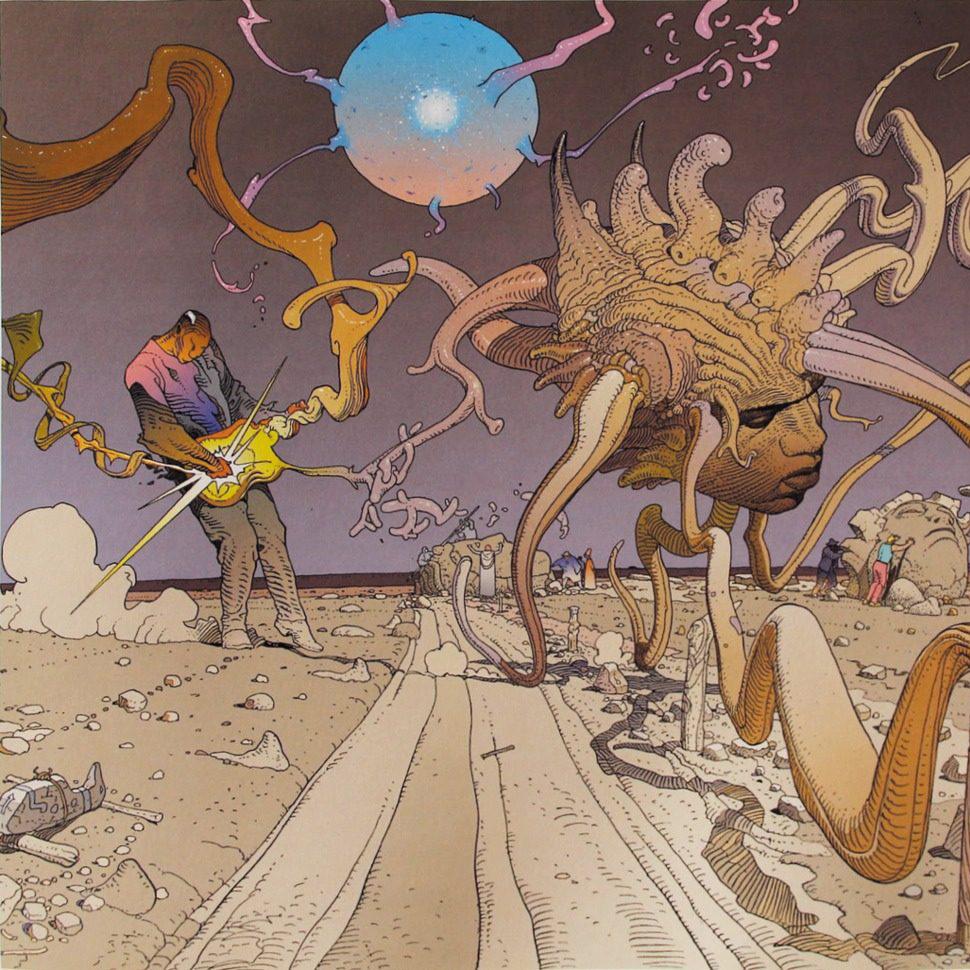

### naf on Stable Diffusion

This is the `<nal>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb).

Here is the new concept you will be able to use as a `style`:

|

Katrzyna/bert-base-cased-finetuned-basil

|

Katrzyna

| 2022-09-10T14:29:50Z | 194 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"bert",

"fill-mask",

"generated_from_trainer",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

fill-mask

| 2022-09-10T13:41:52Z |

---

license: apache-2.0

tags:

- generated_from_trainer

model-index:

- name: bert-base-cased-finetuned-basil

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# bert-base-cased-finetuned-basil

This model is a fine-tuned version of [bert-base-cased](https://huggingface.co/bert-base-cased) on an unknown dataset.

It achieves the following results on the evaluation set:

- Loss: 1.2272

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 64

- eval_batch_size: 64

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3.0

- mixed_precision_training: Native AMP

### Training results

| Training Loss | Epoch | Step | Validation Loss |

|:-------------:|:-----:|:----:|:---------------:|

| 1.8527 | 1.0 | 800 | 1.4425 |

| 1.4878 | 2.0 | 1600 | 1.2740 |

| 1.3776 | 3.0 | 2400 | 1.2273 |

### Framework versions

- Transformers 4.21.3

- Pytorch 1.12.1+cu113

- Tokenizers 0.12.1

|

huggingtweets/apesahoy-daftlimmy-women4wes

|

huggingtweets

| 2022-09-10T14:23:59Z | 105 | 0 |

transformers

|

[

"transformers",

"pytorch",

"gpt2",

"text-generation",

"huggingtweets",

"en",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-09-10T14:22:18Z |

---

language: en

thumbnail: http://www.huggingtweets.com/apesahoy-daftlimmy-women4wes/1662819834805/predictions.png

tags:

- huggingtweets

widget:

- text: "My dream is"

---

<div class="inline-flex flex-col" style="line-height: 1.5;">

<div class="flex">

<div

style="display:inherit; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('https://pbs.twimg.com/profile_images/1392892260099010560/_gYhDAdr_400x400.jpg')">

</div>

<div

style="display:inherit; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('https://pbs.twimg.com/profile_images/1196519479364268034/5QpniWSP_400x400.jpg')">

</div>

<div

style="display:inherit; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('https://pbs.twimg.com/profile_images/1489315073055199233/O-Sws7Go_400x400.jpg')">

</div>

</div>

<div style="text-align: center; margin-top: 3px; font-size: 16px; font-weight: 800">🤖 AI CYBORG 🤖</div>

<div style="text-align: center; font-size: 16px; font-weight: 800">twitch.tv/Limmy & Humongous Ape MP & Women for Wes</div>

<div style="text-align: center; font-size: 14px;">@apesahoy-daftlimmy-women4wes</div>

</div>

I was made with [huggingtweets](https://github.com/borisdayma/huggingtweets).

Create your own bot based on your favorite user with [the demo](https://colab.research.google.com/github/borisdayma/huggingtweets/blob/master/huggingtweets-demo.ipynb)!

## How does it work?

The model uses the following pipeline.

To understand how the model was developed, check the [W&B report](https://wandb.ai/wandb/huggingtweets/reports/HuggingTweets-Train-a-Model-to-Generate-Tweets--VmlldzoxMTY5MjI).

## Training data

The model was trained on tweets from twitch.tv/Limmy & Humongous Ape MP & Women for Wes.

| Data | twitch.tv/Limmy | Humongous Ape MP | Women for Wes |

| --- | --- | --- | --- |

| Tweets downloaded | 3246 | 3247 | 1807 |

| Retweets | 411 | 191 | 53 |

| Short tweets | 715 | 607 | 275 |

| Tweets kept | 2120 | 2449 | 1479 |

[Explore the data](https://wandb.ai/wandb/huggingtweets/runs/6goa6gdz/artifacts), which is tracked with [W&B artifacts](https://docs.wandb.com/artifacts) at every step of the pipeline.

## Training procedure

The model is based on a pre-trained [GPT-2](https://huggingface.co/gpt2) which is fine-tuned on @apesahoy-daftlimmy-women4wes's tweets.

Hyperparameters and metrics are recorded in the [W&B training run](https://wandb.ai/wandb/huggingtweets/runs/ltv5351j) for full transparency and reproducibility.

At the end of training, [the final model](https://wandb.ai/wandb/huggingtweets/runs/ltv5351j/artifacts) is logged and versioned.

## How to use

You can use this model directly with a pipeline for text generation:

```python

from transformers import pipeline

generator = pipeline('text-generation',

model='huggingtweets/apesahoy-daftlimmy-women4wes')

generator("My dream is", num_return_sequences=5)

```

## Limitations and bias

The model suffers from [the same limitations and bias as GPT-2](https://huggingface.co/gpt2#limitations-and-bias).

In addition, the data present in the user's tweets further affects the text generated by the model.

## About

*Built by Boris Dayma*

[](https://twitter.com/intent/follow?screen_name=borisdayma)

For more details, visit the project repository.

[](https://github.com/borisdayma/huggingtweets)

|

sd-concepts-library/riker-doll

|

sd-concepts-library

| 2022-09-10T13:36:53Z | 0 | 0 | null |

[

"license:mit",

"region:us"

] | null | 2022-09-10T13:36:35Z |

---

license: mit

---

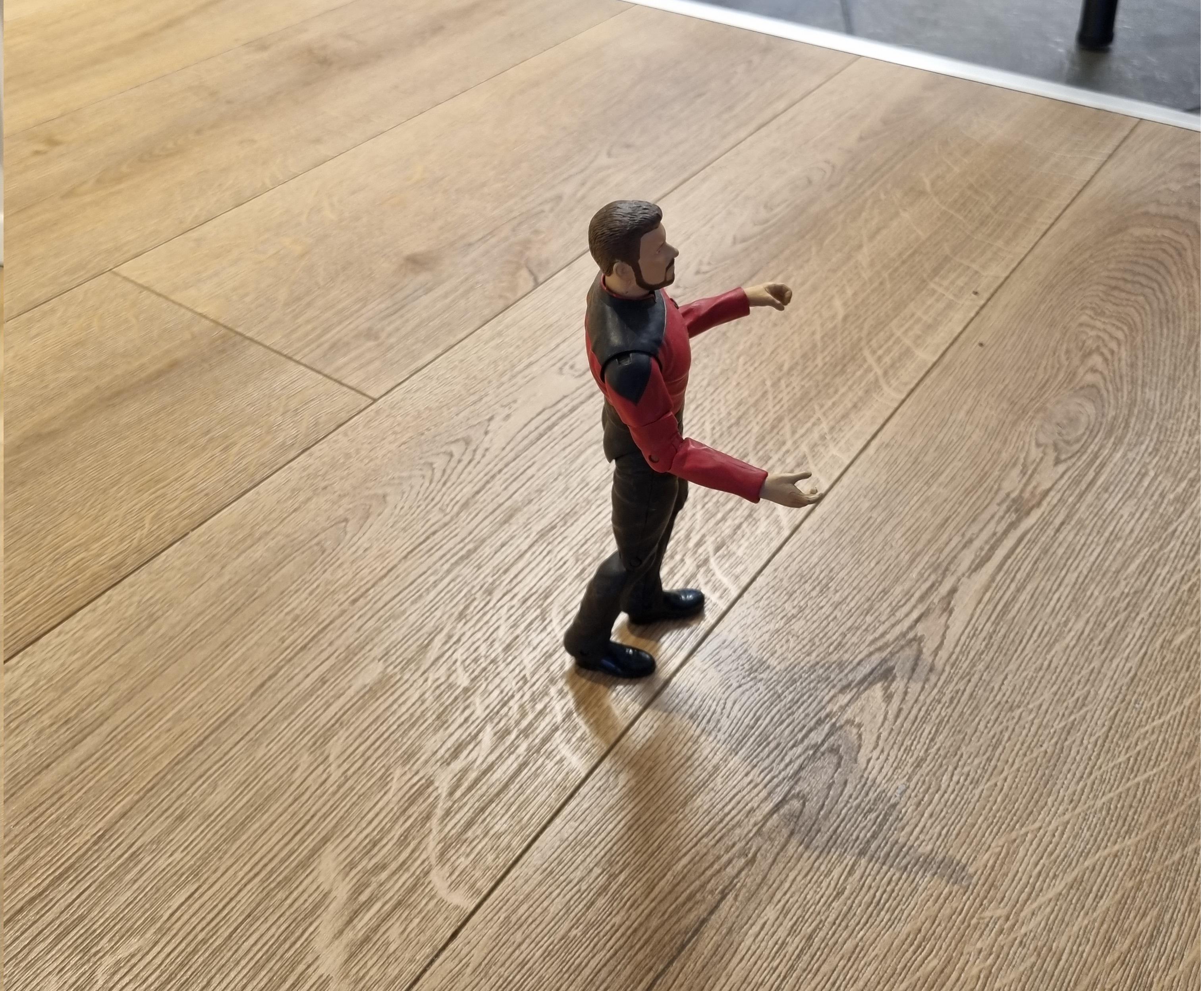

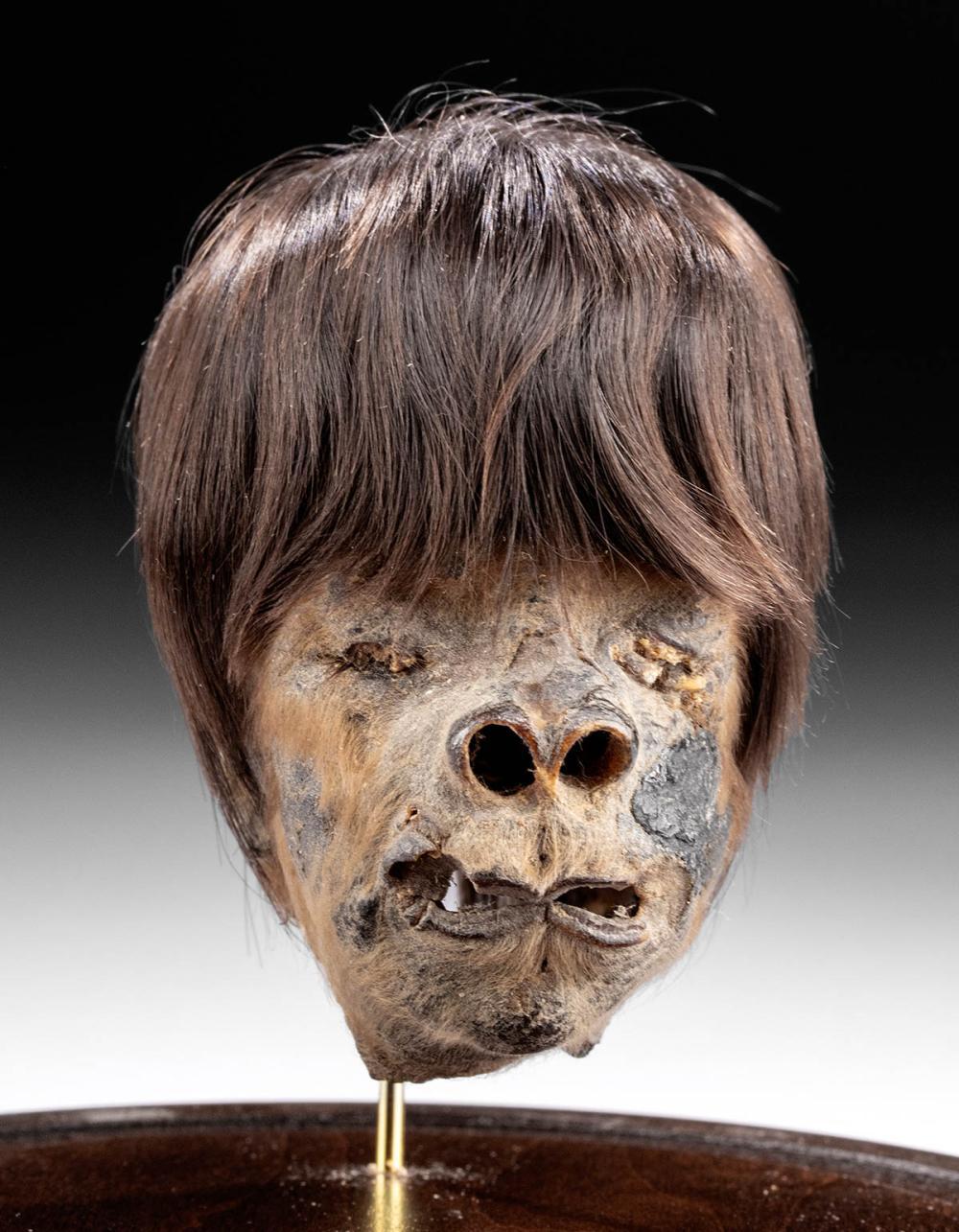

### Riker Doll on Stable Diffusion

This is the `<rikerdoll>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb).

Here is the new concept you will be able to use as an `object`:

|

Shamus/NLLB-600m-swh_Latn-to-eng_Latn

|

Shamus

| 2022-09-10T12:55:11Z | 113 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"m2m_100",

"text2text-generation",

"generated_from_trainer",

"license:cc-by-nc-4.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text2text-generation

| 2022-09-10T08:44:32Z |

---

license: cc-by-nc-4.0

tags:

- generated_from_trainer

metrics:

- bleu

model-index:

- name: NLLB-600m-swh_Latn-to-eng_Latn

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# NLLB-600m-swh_Latn-to-eng_Latn

This model is a fine-tuned version of [facebook/nllb-200-distilled-600M](https://huggingface.co/facebook/nllb-200-distilled-600M) on the None dataset.

It achieves the following results on the evaluation set:

- Loss: 1.2490

- Bleu: 31.1907

- Gen Len: 34.464

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 2

- eval_batch_size: 2

- seed: 42

- gradient_accumulation_steps: 7

- total_train_batch_size: 14

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- training_steps: 8000

- mixed_precision_training: Native AMP

### Training results

| Training Loss | Epoch | Step | Validation Loss | Bleu | Gen Len |

|:-------------:|:-----:|:----:|:---------------:|:-------:|:-------:|

| 2.8224 | 0.41 | 500 | 2.3121 | 8.4908 | 34.136 |

| 2.1656 | 0.83 | 1000 | 1.9451 | 14.9983 | 33.604 |

| 1.885 | 1.24 | 1500 | 1.7385 | 18.7049 | 33.928 |

| 1.6922 | 1.66 | 2000 | 1.6102 | 21.7399 | 33.648 |

| 1.5693 | 2.07 | 2500 | 1.5175 | 23.2299 | 34.912 |

| 1.4695 | 2.49 | 3000 | 1.4552 | 24.8572 | 32.612 |

| 1.4195 | 2.9 | 3500 | 1.3948 | 26.3956 | 33.56 |

| 1.3413 | 3.32 | 4000 | 1.3564 | 27.2599 | 32.824 |

| 1.3094 | 3.73 | 4500 | 1.3263 | 27.9728 | 33.42 |

| 1.2748 | 4.15 | 5000 | 1.3044 | 28.8956 | 33.56 |

| 1.227 | 4.56 | 5500 | 1.2844 | 29.8314 | 33.552 |

| 1.2255 | 4.97 | 6000 | 1.2692 | 30.4411 | 33.716 |

| 1.191 | 5.39 | 6500 | 1.2611 | 31.1336 | 34.432 |

| 1.1842 | 5.8 | 7000 | 1.2542 | 30.8819 | 33.716 |

| 1.1712 | 6.22 | 7500 | 1.2506 | 31.528 | 33.768 |

| 1.1606 | 6.63 | 8000 | 1.2490 | 31.1907 | 34.464 |

### Framework versions

- Transformers 4.21.3

- Pytorch 1.12.1+cu113

- Datasets 2.4.0

- Tokenizers 0.12.1

|

sd-concepts-library/scrap-style

|

sd-concepts-library

| 2022-09-10T12:32:37Z | 0 | 0 | null |

[

"license:mit",

"region:us"

] | null | 2022-09-09T18:49:07Z |

---

license: mit

---

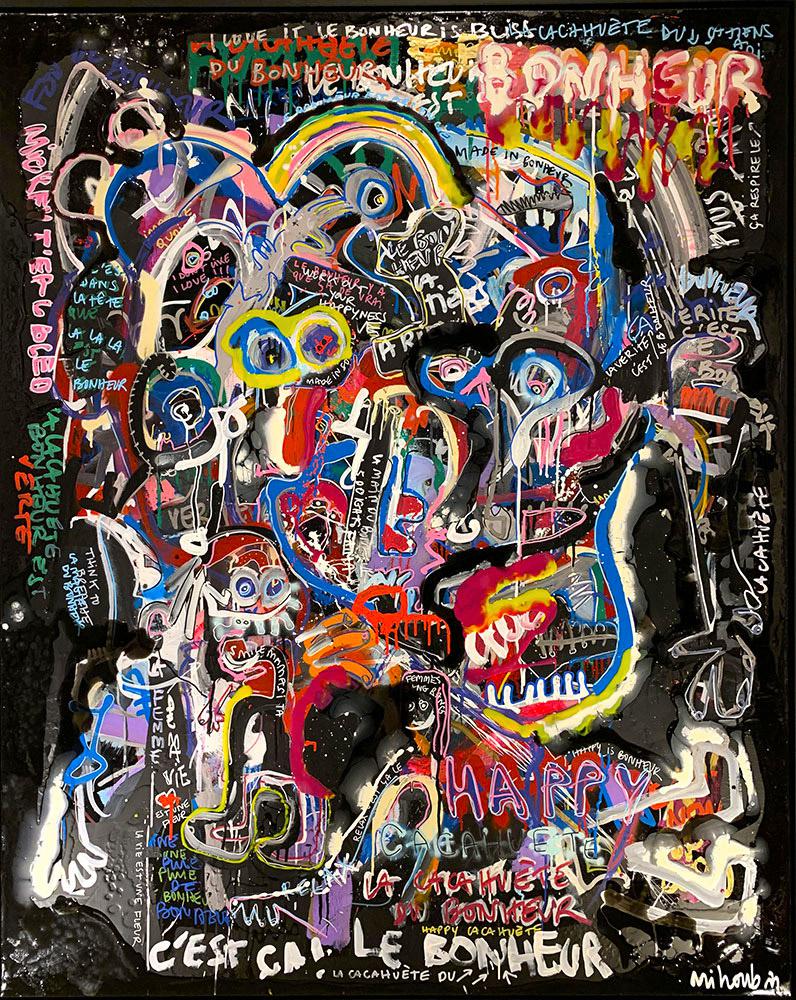

### scrap-style on Stable Diffusion

This is the `<style-scrap>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb).

Here is the new concept you will be able to use as a `style`:

|

sd-concepts-library/line-style

|

sd-concepts-library

| 2022-09-10T11:01:53Z | 0 | 3 | null |

[

"license:mit",

"region:us"

] | null | 2022-09-09T13:16:23Z |

---

license: mit

---

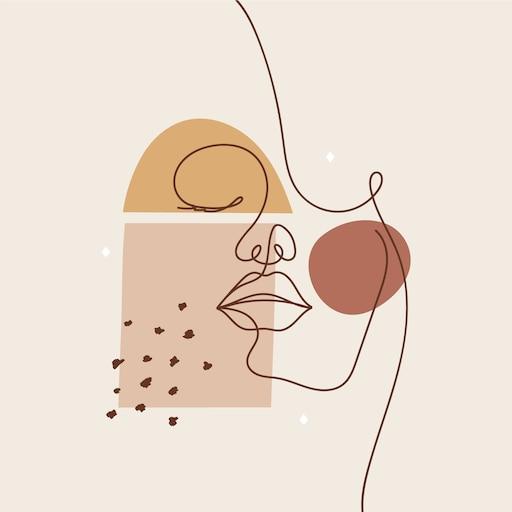

### line-style on Stable Diffusion

This is the `<line-style>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb).

Here is the new concept you will be able to use as a `style`:

|

Shamus/mbart-large-50-many-to-many-mmt-finetuned-fij_Latn-to-eng_Latn

|

Shamus

| 2022-09-10T09:44:21Z | 113 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"m2m_100",

"text2text-generation",

"generated_from_trainer",

"license:cc-by-nc-4.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text2text-generation

| 2022-09-09T04:09:02Z |

---

license: cc-by-nc-4.0

tags:

- generated_from_trainer

metrics:

- bleu

model-index:

- name: mbart-large-50-many-to-many-mmt-finetuned-fij_Latn-to-eng_Latn

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# mbart-large-50-many-to-many-mmt-finetuned-fij_Latn-to-eng_Latn

This model is a fine-tuned version of [facebook/nllb-200-distilled-600M](https://huggingface.co/facebook/nllb-200-distilled-600M) on the None dataset.

It achieves the following results on the evaluation set:

- Loss: 0.9598

- Bleu: 45.0972

- Gen Len: 42.752

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 2

- eval_batch_size: 2

- seed: 42

- gradient_accumulation_steps: 6

- total_train_batch_size: 12

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- training_steps: 8000

- mixed_precision_training: Native AMP

### Training results

| Training Loss | Epoch | Step | Validation Loss | Bleu | Gen Len |

|:-------------:|:-----:|:----:|:---------------:|:-------:|:-------:|

| 2.4054 | 0.49 | 500 | 1.7028 | 24.9597 | 43.04 |

| 1.6855 | 0.98 | 1000 | 1.3701 | 33.3128 | 42.2 |

| 1.4042 | 1.47 | 1500 | 1.2224 | 37.6016 | 43.536 |

| 1.2991 | 1.96 | 2000 | 1.1467 | 40.3541 | 42.428 |

| 1.1819 | 2.45 | 2500 | 1.0950 | 42.2106 | 42.58 |

| 1.1323 | 2.94 | 3000 | 1.0523 | 42.9418 | 42.76 |

| 1.0676 | 3.43 | 3500 | 1.0238 | 43.4974 | 42.684 |

| 1.0404 | 3.93 | 4000 | 1.0082 | 43.6092 | 42.616 |

| 0.9882 | 4.42 | 4500 | 0.9942 | 44.7199 | 42.912 |

| 0.982 | 4.91 | 5000 | 0.9814 | 44.8061 | 42.516 |

| 0.9372 | 5.4 | 5500 | 0.9781 | 44.3808 | 42.476 |

| 0.9382 | 5.89 | 6000 | 0.9675 | 45.0267 | 42.76 |

| 0.915 | 6.38 | 6500 | 0.9659 | 45.0073 | 42.676 |

| 0.9126 | 6.87 | 7000 | 0.9617 | 44.9582 | 42.548 |

| 0.8903 | 7.36 | 7500 | 0.9609 | 44.8713 | 42.724 |

| 0.8873 | 7.85 | 8000 | 0.9598 | 45.0972 | 42.752 |

### Framework versions

- Transformers 4.21.3

- Pytorch 1.12.1+cu113

- Datasets 2.4.0

- Tokenizers 0.12.1

|

nghuyong/ernie-1.0-base-zh

|

nghuyong

| 2022-09-10T09:37:26Z | 2,164 | 18 |

transformers

|

[

"transformers",

"pytorch",

"ernie",

"fill-mask",

"zh",

"arxiv:1904.09223",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

fill-mask

| 2022-03-02T23:29:05Z |

---

language: zh

---

# ERNIE-1.0

## Introduction

ERNIE (Enhanced Representation through kNowledge IntEgration) is proposed by Baidu in 2019,

which is designed to learn language representation enhanced by knowledge masking strategies i.e. entity-level masking and phrase-level masking.

Experimental results show that ERNIE achieve state-of-the-art results on five Chinese natural language processing tasks including natural language inference,

semantic similarity, named entity recognition, sentiment analysis and question answering.

More detail: https://arxiv.org/abs/1904.09223

## Released Model Info

This released pytorch model is converted from the officially released PaddlePaddle ERNIE model and

a series of experiments have been conducted to check the accuracy of the conversion.

- Official PaddlePaddle ERNIE repo: https://github.com/PaddlePaddle/ERNIE

- Pytorch Conversion repo: https://github.com/nghuyong/ERNIE-Pytorch

## How to use

```Python

from transformers import AutoTokenizer, AutoModel

tokenizer = AutoTokenizer.from_pretrained("nghuyong/ernie-1.0-base-zh")

model = AutoModel.from_pretrained("nghuyong/ernie-1.0-base-zh")

```

## Citation

```bibtex

@article{sun2019ernie,

title={Ernie: Enhanced representation through knowledge integration},

author={Sun, Yu and Wang, Shuohuan and Li, Yukun and Feng, Shikun and Chen, Xuyi and Zhang, Han and Tian, Xin and Zhu, Danxiang and Tian, Hao and Wu, Hua},

journal={arXiv preprint arXiv:1904.09223},

year={2019}

}

```

|

pedramyamini/distilbert-base-multilingual-cased-finetuned-mobile-banks-cafebazaar

|

pedramyamini

| 2022-09-10T09:34:12Z | 5 | 0 |

transformers

|

[

"transformers",

"tf",

"distilbert",

"text-classification",

"generated_from_keras_callback",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-09-07T13:05:28Z |

---

license: apache-2.0

tags:

- generated_from_keras_callback

model-index:

- name: pedramyamini/distilbert-base-multilingual-cased-finetuned-mobile-banks-cafebazaar

results: []

---

<!-- This model card has been generated automatically according to the information Keras had access to. You should

probably proofread and complete it, then remove this comment. -->

# pedramyamini/distilbert-base-multilingual-cased-finetuned-mobile-banks-cafebazaar

This model is a fine-tuned version of [distilbert-base-multilingual-cased](https://huggingface.co/distilbert-base-multilingual-cased) on an unknown dataset.

It achieves the following results on the evaluation set:

- Train Loss: 0.5059

- Validation Loss: 0.7437

- Epoch: 4

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- optimizer: {'name': 'Adam', 'learning_rate': {'class_name': 'PolynomialDecay', 'config': {'initial_learning_rate': 2e-05, 'decay_steps': 13370, 'end_learning_rate': 0.0, 'power': 1.0, 'cycle': False, 'name': None}}, 'decay': 0.0, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False}

- training_precision: float32

### Training results

| Train Loss | Validation Loss | Epoch |

|:----------:|:---------------:|:-----:|

| 0.5075 | 0.7437 | 0 |

| 0.5074 | 0.7437 | 1 |

| 0.5079 | 0.7437 | 2 |

| 0.5086 | 0.7437 | 3 |

| 0.5059 | 0.7437 | 4 |

### Framework versions

- Transformers 4.21.3

- TensorFlow 2.8.2

- Datasets 2.4.0

- Tokenizers 0.12.1

|

nghuyong/ernie-2.0-large-en

|

nghuyong

| 2022-09-10T09:34:12Z | 272 | 8 |

transformers

|

[

"transformers",

"pytorch",

"ernie",

"feature-extraction",

"arxiv:1907.12412",

"endpoints_compatible",

"region:us"

] |

feature-extraction

| 2022-03-02T23:29:05Z |

# ERNIE-2.0-large

## Introduction

ERNIE 2.0 is a continual pre-training framework proposed by Baidu in 2019,

which builds and learns incrementally pre-training tasks through constant multi-task learning.

Experimental results demonstrate that ERNIE 2.0 outperforms BERT and XLNet on 16 tasks including English tasks on GLUE benchmarks and several common tasks in Chinese.

More detail: https://arxiv.org/abs/1907.12412

## Released Model Info

This released pytorch model is converted from the officially released PaddlePaddle ERNIE model and

a series of experiments have been conducted to check the accuracy of the conversion.

- Official PaddlePaddle ERNIE repo: https://github.com/PaddlePaddle/ERNIE

- Pytorch Conversion repo: https://github.com/nghuyong/ERNIE-Pytorch

## How to use

```Python

from transformers import AutoTokenizer, AutoModel

tokenizer = AutoTokenizer.from_pretrained("nghuyong/ernie-2.0-large-en")

model = AutoModel.from_pretrained("nghuyong/ernie-2.0-large-en")

```

## Citation

```bibtex

@article{sun2019ernie20,

title={ERNIE 2.0: A Continual Pre-training Framework for Language Understanding},

author={Sun, Yu and Wang, Shuohuan and Li, Yukun and Feng, Shikun and Tian, Hao and Wu, Hua and Wang, Haifeng},

journal={arXiv preprint arXiv:1907.12412},

year={2019}

}

```

|

sd-concepts-library/stuffed-penguin-toy

|

sd-concepts-library

| 2022-09-10T09:28:40Z | 0 | 0 | null |

[

"license:mit",

"region:us"

] | null | 2022-09-09T05:26:08Z |

---

license: mit

---

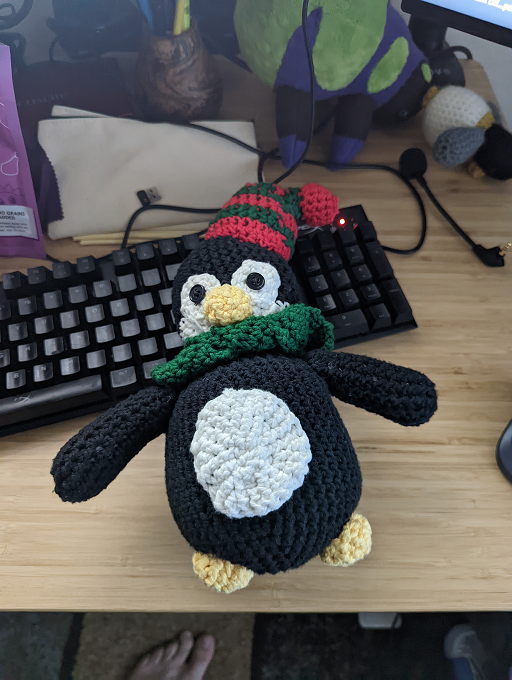

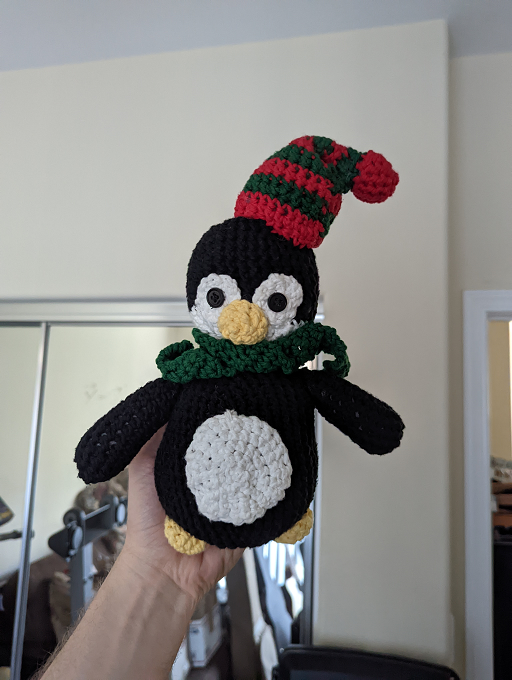

### stuffed-penguin-toy on Stable Diffusion

This is the `<pengu-toy>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb).

Here is the new concept you will be able to use as an `object`:

|

nghuyong/ernie-health-zh

|

nghuyong

| 2022-09-10T09:13:33Z | 517 | 10 |

transformers

|

[

"transformers",

"pytorch",

"ernie",

"feature-extraction",

"zh",

"arxiv:2110.07244",

"endpoints_compatible",

"region:us"

] |

feature-extraction

| 2022-05-31T08:43:33Z |

---

language: zh

---

# ernie-health-zh

## Introduction

ERNIE-health is a Chinese biomedical language model pre-trained from in-domain text of de-identified online doctor-patient dialogues, electronic medical records, and textbooks.

More detail:

https://github.com/PaddlePaddle/PaddleNLP/blob/develop/model_zoo/ernie-health/

https://arxiv.org/pdf/2110.07244.pdf

## Released Model Info

|Model Name|Language|Model Structure|

|:---:|:---:|:---:|

|ernie-health-zh| Chinese |Layer:12, Hidden:768, Heads:12|

This released pytorch model is converted from the officially released PaddlePaddle ERNIE model and

a series of experiments have been conducted to check the accuracy of the conversion.

- Official PaddlePaddle ERNIE repo:https://github.com/PaddlePaddle/PaddleNLP/blob/develop/model_zoo/ernie-health/

- Pytorch Conversion repo: https://github.com/nghuyong/ERNIE-Pytorch

## How to use

```Python

from transformers import AutoTokenizer, AutoModel

tokenizer = AutoTokenizer.from_pretrained("nghuyong/ernie-health-zh")

model = AutoModel.from_pretrained("nghuyong/ernie-health-zh")

```

## Citation

```bibtex

@article{wang2021building,

title={Building Chinese Biomedical Language Models via Multi-Level Text Discrimination},

author={Wang, Quan and Dai, Songtai and Xu, Benfeng and Lyu, Yajuan and Zhu, Yong and Wu, Hua and Wang, Haifeng},

journal={arXiv preprint arXiv:2110.07244},

year={2021}

}

```

|

nghuyong/ernie-3.0-nano-zh

|

nghuyong

| 2022-09-10T09:02:42Z | 284 | 24 |

transformers

|

[

"transformers",

"pytorch",

"ernie",

"feature-extraction",

"zh",

"arxiv:2107.02137",

"endpoints_compatible",

"region:us"

] |

feature-extraction

| 2022-08-22T09:39:34Z |

---

language: zh

---

# ERNIE-3.0-nano-zh

## Introduction

ERNIE 3.0: Large-scale Knowledge Enhanced Pre-training for Language Understanding and Generation

More detail: https://arxiv.org/abs/2107.02137

## Released Model Info

This released pytorch model is converted from the officially released PaddlePaddle ERNIE model and

a series of experiments have been conducted to check the accuracy of the conversion.

- Official PaddlePaddle ERNIE repo:https://paddlenlp.readthedocs.io/zh/latest/model_zoo/transformers/ERNIE/contents.html

- Pytorch Conversion repo: https://github.com/nghuyong/ERNIE-Pytorch

## How to use

```Python

from transformers import BertTokenizer, ErnieModel

tokenizer = BertTokenizer.from_pretrained("nghuyong/ernie-3.0-nano-zh")

model = ErnieModel.from_pretrained("nghuyong/ernie-3.0-nano-zh")

```

## Citation

```bibtex

@article{sun2021ernie,

title={Ernie 3.0: Large-scale knowledge enhanced pre-training for language understanding and generation},

author={Sun, Yu and Wang, Shuohuan and Feng, Shikun and Ding, Siyu and Pang, Chao and Shang, Junyuan and Liu, Jiaxiang and Chen, Xuyi and Zhao, Yanbin and Lu, Yuxiang and others},

journal={arXiv preprint arXiv:2107.02137},

year={2021}

}

```

|

IIIT-L/hing-roberta-finetuned-TRAC-DS

|

IIIT-L

| 2022-09-10T08:59:37Z | 103 | 0 |

transformers

|

[

"transformers",

"pytorch",

"xlm-roberta",

"text-classification",

"generated_from_trainer",

"license:cc-by-4.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-09-10T08:45:05Z |

---

license: cc-by-4.0

tags:

- generated_from_trainer

metrics:

- accuracy

- precision

- recall

- f1

model-index:

- name: hing-roberta-finetuned-TRAC-DS

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# hing-roberta-finetuned-TRAC-DS

This model is a fine-tuned version of [l3cube-pune/hing-roberta](https://huggingface.co/l3cube-pune/hing-roberta) on an unknown dataset.

It achieves the following results on the evaluation set:

- Loss: 1.1610

- Accuracy: 0.7149

- Precision: 0.6921

- Recall: 0.6946

- F1: 0.6932

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 4.8796394086479776e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 43

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 4

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy | Precision | Recall | F1 |

|:-------------:|:-----:|:----:|:---------------:|:--------:|:---------:|:------:|:------:|

| 0.7229 | 2.0 | 1224 | 0.7178 | 0.6928 | 0.6815 | 0.6990 | 0.6780 |

| 0.3258 | 3.99 | 2448 | 1.1610 | 0.7149 | 0.6921 | 0.6946 | 0.6932 |

### Framework versions

- Transformers 4.20.1

- Pytorch 1.10.1+cu111

- Datasets 2.3.2

- Tokenizers 0.12.1

|

nghuyong/ernie-3.0-micro-zh

|

nghuyong

| 2022-09-10T08:59:03Z | 252 | 1 |

transformers

|

[

"transformers",

"pytorch",

"ernie",

"feature-extraction",

"zh",

"arxiv:2107.02137",

"endpoints_compatible",

"region:us"

] |

feature-extraction

| 2022-08-22T09:36:10Z |

---

language: zh

---

# ERNIE-3.0-micro-zh

## Introduction

ERNIE 3.0: Large-scale Knowledge Enhanced Pre-training for Language Understanding and Generation

More detail: https://arxiv.org/abs/2107.02137

## Released Model Info

This released pytorch model is converted from the officially released PaddlePaddle ERNIE model and

a series of experiments have been conducted to check the accuracy of the conversion.

- Official PaddlePaddle ERNIE repo:https://paddlenlp.readthedocs.io/zh/latest/model_zoo/transformers/ERNIE/contents.html

- Pytorch Conversion repo: https://github.com/nghuyong/ERNIE-Pytorch

## How to use

```Python

from transformers import BertTokenizer, ErnieModel

tokenizer = BertTokenizer.from_pretrained("nghuyong/ernie-3.0-micro-zh")

model = ErnieModel.from_pretrained("nghuyong/ernie-3.0-micro-zh")

```

## Citation

```bibtex

@article{sun2021ernie,

title={Ernie 3.0: Large-scale knowledge enhanced pre-training for language understanding and generation},

author={Sun, Yu and Wang, Shuohuan and Feng, Shikun and Ding, Siyu and Pang, Chao and Shang, Junyuan and Liu, Jiaxiang and Chen, Xuyi and Zhao, Yanbin and Lu, Yuxiang and others},

journal={arXiv preprint arXiv:2107.02137},

year={2021}

}

```

|

hitachinsk/FGT

|

hitachinsk

| 2022-09-10T08:48:54Z | 0 | 4 | null |

[

"arxiv:2208.06768",

"license:mit",

"region:us"

] | null | 2022-09-10T08:12:06Z |

---

license: mit

---

# [ECCV 2022] Flow-Guided Transformer for Video Inpainting

[](https://github.com/hitachinsk/FGT/blob/main/LICENSE)

### [[Paper](https://arxiv.org/abs/2208.06768)] / [[Codes](https://github.com/hitachinsk/FGT)] / [[Demo](https://youtu.be/BC32n-NncPs)] / [[Project page](https://hitachinsk.github.io/publication/2022-10-01-Flow-Guided-Transformer-for-Video-Inpainting)]

This repository hosts the pretrained models of the following paper:

> **Flow-Guided Transformer for Video Inpainting**<br>

> [Kaidong Zhang](https://hitachinsk.github.io/), [Jingjing Fu](https://www.microsoft.com/en-us/research/people/jifu/) and [Dong Liu](http://staff.ustc.edu.cn/~dongeliu/)<br>

> European Conference on Computer Vision (**ECCV**), 2022<br>

## Details

There are three models in this repository, here are the details.

- `lafc.pth.tar`: The pretrained model of "Local Aggregation Flow Completion Network", which accepts a sequence of corrupted optical flows, and outputs the completed flows.

- `lafc_single.pth.tar`: The pretrained model of the single flow completion version of "Local Aggregation Flow Completion Network", it accepts **one** corrupted flow, and outputs **one** completed flow. (Only for the training of the FGT model)

- `fgt.pth.tar`: The pretrained model of "Flow Guided Transformer", which receives a sequence of corrupted frames and completed optical flows, and outputs the completed frames.

Besides the pretrained weights, we also provide the configuration files of these pretrained models.

- `LAFC_config.yaml`: The configuration file of `lafc.pth.tar`

- `LAFC_single_config.yaml`: The configuration file of `lafc_single.pth.tar`

- `FGT_config.yaml`: The configuration file of `fgt.pth.tar`

## Deployment

Download this repository to the base directory of the codes (please download that at the github page), and run "bash deploy.sh" to form the models and the cofiguration files.

After the step above, you can skip the step 1~3 in the `quick start` section in the github page and run the object removal demo directly.

|

sd-concepts-library/mycat

|

sd-concepts-library

| 2022-09-10T07:57:39Z | 0 | 0 | null |

[

"license:mit",

"region:us"

] | null | 2022-09-10T07:57:35Z |

---

license: mit

---

### mycat on Stable Diffusion

This is the `<mycat>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb).

Here is the new concept you will be able to use as an `object`:

|

Sebabrata/lmv2-g-bnkstm-994-doc-09-10

|

Sebabrata

| 2022-09-10T06:25:50Z | 78 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"layoutlmv2",

"token-classification",

"generated_from_trainer",

"license:cc-by-nc-sa-4.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

token-classification

| 2022-09-10T03:53:08Z |

---

license: cc-by-nc-sa-4.0

tags:

- generated_from_trainer

model-index:

- name: lmv2-g-bnkstm-994-doc-09-10

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# lmv2-g-bnkstm-994-doc-09-10

This model is a fine-tuned version of [microsoft/layoutlmv2-base-uncased](https://huggingface.co/microsoft/layoutlmv2-base-uncased) on the None dataset.

It achieves the following results on the evaluation set:

- Loss: 0.0926

- Account Number Precision: 0.8889

- Account Number Recall: 0.9014

- Account Number F1: 0.8951

- Account Number Number: 142

- Bank Name Precision: 0.7993

- Bank Name Recall: 0.8484

- Bank Name F1: 0.8231

- Bank Name Number: 277

- Cust Address Precision: 0.8563

- Cust Address Recall: 0.8827

- Cust Address F1: 0.8693

- Cust Address Number: 162

- Cust Name Precision: 0.9181

- Cust Name Recall: 0.9290

- Cust Name F1: 0.9235

- Cust Name Number: 169

- Ending Balance Precision: 0.7706

- Ending Balance Recall: 0.7892

- Ending Balance F1: 0.7798

- Ending Balance Number: 166

- Starting Balance Precision: 0.9051

- Starting Balance Recall: 0.8720

- Starting Balance F1: 0.8882

- Starting Balance Number: 164

- Statement Date Precision: 0.8817

- Statement Date Recall: 0.8765

- Statement Date F1: 0.8791

- Statement Date Number: 170

- Overall Precision: 0.8531

- Overall Recall: 0.8688

- Overall F1: 0.8609

- Overall Accuracy: 0.9850

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 4e-05

- train_batch_size: 1

- eval_batch_size: 1

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: constant

- num_epochs: 30

### Training results

| Training Loss | Epoch | Step | Validation Loss | Account Number Precision | Account Number Recall | Account Number F1 | Account Number Number | Bank Name Precision | Bank Name Recall | Bank Name F1 | Bank Name Number | Cust Address Precision | Cust Address Recall | Cust Address F1 | Cust Address Number | Cust Name Precision | Cust Name Recall | Cust Name F1 | Cust Name Number | Ending Balance Precision | Ending Balance Recall | Ending Balance F1 | Ending Balance Number | Starting Balance Precision | Starting Balance Recall | Starting Balance F1 | Starting Balance Number | Statement Date Precision | Statement Date Recall | Statement Date F1 | Statement Date Number | Overall Precision | Overall Recall | Overall F1 | Overall Accuracy |

|:-------------:|:-----:|:-----:|:---------------:|:------------------------:|:---------------------:|:-----------------:|:---------------------:|:-------------------:|:----------------:|:------------:|:----------------:|:----------------------:|:-------------------:|:---------------:|:-------------------:|:-------------------:|:----------------:|:------------:|:----------------:|:------------------------:|:---------------------:|:-----------------:|:---------------------:|:--------------------------:|:-----------------------:|:-------------------:|:-----------------------:|:------------------------:|:---------------------:|:-----------------:|:---------------------:|:-----------------:|:--------------:|:----------:|:----------------:|

| 0.7648 | 1.0 | 795 | 0.2550 | 0.8514 | 0.4437 | 0.5833 | 142 | 0.6229 | 0.5307 | 0.5731 | 277 | 0.5650 | 0.7778 | 0.6545 | 162 | 0.6682 | 0.8698 | 0.7558 | 169 | 0.0 | 0.0 | 0.0 | 166 | 0.0 | 0.0 | 0.0 | 164 | 0.6040 | 0.3588 | 0.4502 | 170 | 0.6370 | 0.4352 | 0.5171 | 0.9623 |

| 0.1725 | 2.0 | 1590 | 0.1128 | 0.6067 | 0.7606 | 0.675 | 142 | 0.7294 | 0.7978 | 0.7621 | 277 | 0.8150 | 0.8704 | 0.8418 | 162 | 0.8966 | 0.9231 | 0.9096 | 169 | 0.7786 | 0.6566 | 0.7124 | 166 | 0.7576 | 0.7622 | 0.7599 | 164 | 0.8509 | 0.8059 | 0.8278 | 170 | 0.7705 | 0.7976 | 0.7838 | 0.9816 |

| 0.0877 | 3.0 | 2385 | 0.0877 | 0.7857 | 0.9296 | 0.8516 | 142 | 0.7872 | 0.8014 | 0.7943 | 277 | 0.7709 | 0.8519 | 0.8094 | 162 | 0.8827 | 0.9349 | 0.9080 | 169 | 0.7673 | 0.7349 | 0.7508 | 166 | 0.8313 | 0.8415 | 0.8364 | 164 | 0.7716 | 0.8941 | 0.8283 | 170 | 0.7985 | 0.8496 | 0.8233 | 0.9830 |

| 0.0564 | 4.0 | 3180 | 0.0826 | 0.8503 | 0.8803 | 0.8651 | 142 | 0.7566 | 0.8303 | 0.7917 | 277 | 0.7895 | 0.8333 | 0.8108 | 162 | 0.8824 | 0.8876 | 0.8850 | 169 | 0.7049 | 0.7771 | 0.7393 | 166 | 0.7717 | 0.8659 | 0.8161 | 164 | 0.8363 | 0.8412 | 0.8387 | 170 | 0.7925 | 0.8432 | 0.8171 | 0.9828 |

| 0.0402 | 5.0 | 3975 | 0.0889 | 0.8815 | 0.8380 | 0.8592 | 142 | 0.7758 | 0.7870 | 0.7814 | 277 | 0.8266 | 0.8827 | 0.8537 | 162 | 0.8983 | 0.9408 | 0.9191 | 169 | 0.6378 | 0.7108 | 0.6724 | 166 | 0.8707 | 0.7805 | 0.8232 | 164 | 0.8508 | 0.9059 | 0.8775 | 170 | 0.8124 | 0.8312 | 0.8217 | 0.9837 |

| 0.0332 | 6.0 | 4770 | 0.0864 | 0.7778 | 0.9366 | 0.8498 | 142 | 0.8175 | 0.8412 | 0.8292 | 277 | 0.8704 | 0.8704 | 0.8704 | 162 | 0.9167 | 0.9112 | 0.9139 | 169 | 0.7702 | 0.7470 | 0.7584 | 166 | 0.8424 | 0.8476 | 0.8450 | 164 | 0.8728 | 0.8882 | 0.8805 | 170 | 0.8366 | 0.86 | 0.8481 | 0.9846 |

| 0.0285 | 7.0 | 5565 | 0.0858 | 0.7516 | 0.8310 | 0.7893 | 142 | 0.8156 | 0.8303 | 0.8229 | 277 | 0.8373 | 0.8580 | 0.8476 | 162 | 0.9133 | 0.9349 | 0.9240 | 169 | 0.8288 | 0.7289 | 0.7756 | 166 | 0.8144 | 0.8293 | 0.8218 | 164 | 0.8353 | 0.8353 | 0.8353 | 170 | 0.8279 | 0.8352 | 0.8315 | 0.9840 |

| 0.027 | 8.0 | 6360 | 0.1033 | 0.8841 | 0.8592 | 0.8714 | 142 | 0.7695 | 0.8556 | 0.8103 | 277 | 0.7816 | 0.8395 | 0.8095 | 162 | 0.9075 | 0.9290 | 0.9181 | 169 | 0.8538 | 0.6687 | 0.75 | 166 | 0.8861 | 0.8537 | 0.8696 | 164 | 0.8492 | 0.8941 | 0.8711 | 170 | 0.8373 | 0.844 | 0.8406 | 0.9837 |

| 0.0237 | 9.0 | 7155 | 0.0922 | 0.8792 | 0.9225 | 0.9003 | 142 | 0.8262 | 0.8412 | 0.8336 | 277 | 0.8421 | 0.8889 | 0.8649 | 162 | 0.8983 | 0.9408 | 0.9191 | 169 | 0.8113 | 0.7771 | 0.7938 | 166 | 0.7641 | 0.9085 | 0.8301 | 164 | 0.8466 | 0.8765 | 0.8613 | 170 | 0.8358 | 0.8752 | 0.8550 | 0.9850 |

| 0.023 | 10.0 | 7950 | 0.0935 | 0.8493 | 0.8732 | 0.8611 | 142 | 0.7848 | 0.8556 | 0.8187 | 277 | 0.8246 | 0.8704 | 0.8468 | 162 | 0.9080 | 0.9349 | 0.9213 | 169 | 0.8133 | 0.7349 | 0.7722 | 166 | 0.8867 | 0.8110 | 0.8471 | 164 | 0.8735 | 0.8529 | 0.8631 | 170 | 0.8419 | 0.848 | 0.8450 | 0.9841 |

| 0.0197 | 11.0 | 8745 | 0.0926 | 0.8889 | 0.9014 | 0.8951 | 142 | 0.7993 | 0.8484 | 0.8231 | 277 | 0.8563 | 0.8827 | 0.8693 | 162 | 0.9181 | 0.9290 | 0.9235 | 169 | 0.7706 | 0.7892 | 0.7798 | 166 | 0.9051 | 0.8720 | 0.8882 | 164 | 0.8817 | 0.8765 | 0.8791 | 170 | 0.8531 | 0.8688 | 0.8609 | 0.9850 |

| 0.0193 | 12.0 | 9540 | 0.1035 | 0.7514 | 0.9366 | 0.8339 | 142 | 0.8127 | 0.8773 | 0.8438 | 277 | 0.8103 | 0.8704 | 0.8393 | 162 | 0.9405 | 0.9349 | 0.9377 | 169 | 0.6983 | 0.7530 | 0.7246 | 166 | 0.8011 | 0.8841 | 0.8406 | 164 | 0.8462 | 0.9059 | 0.8750 | 170 | 0.8081 | 0.8792 | 0.8421 | 0.9836 |

| 0.0166 | 13.0 | 10335 | 0.1077 | 0.8889 | 0.8451 | 0.8664 | 142 | 0.8062 | 0.8412 | 0.8233 | 277 | 0.7953 | 0.8395 | 0.8168 | 162 | 0.8786 | 0.8994 | 0.8889 | 169 | 0.8069 | 0.7048 | 0.7524 | 166 | 0.8167 | 0.8963 | 0.8547 | 164 | 0.8671 | 0.8824 | 0.8746 | 170 | 0.8333 | 0.844 | 0.8386 | 0.9836 |

| 0.016 | 14.0 | 11130 | 0.1247 | 0.8521 | 0.8521 | 0.8521 | 142 | 0.8456 | 0.8303 | 0.8379 | 277 | 0.8050 | 0.7901 | 0.7975 | 162 | 0.9167 | 0.9112 | 0.9139 | 169 | 0.8392 | 0.7229 | 0.7767 | 166 | 0.8521 | 0.8780 | 0.8649 | 164 | 0.9262 | 0.8118 | 0.8652 | 170 | 0.8611 | 0.828 | 0.8442 | 0.9836 |

| 0.0153 | 15.0 | 11925 | 0.1030 | 0.8280 | 0.9155 | 0.8696 | 142 | 0.7637 | 0.8051 | 0.7838 | 277 | 0.8452 | 0.8765 | 0.8606 | 162 | 0.9337 | 0.9172 | 0.9254 | 169 | 0.7551 | 0.6687 | 0.7093 | 166 | 0.8616 | 0.8354 | 0.8483 | 164 | 0.8287 | 0.8824 | 0.8547 | 170 | 0.8252 | 0.8384 | 0.8317 | 0.9834 |

| 0.0139 | 16.0 | 12720 | 0.0920 | 0.8075 | 0.9155 | 0.8581 | 142 | 0.7735 | 0.8628 | 0.8157 | 277 | 0.7663 | 0.8704 | 0.8150 | 162 | 0.8870 | 0.9290 | 0.9075 | 169 | 0.7647 | 0.7831 | 0.7738 | 166 | 0.8571 | 0.8780 | 0.8675 | 164 | 0.6630 | 0.7176 | 0.6893 | 170 | 0.7857 | 0.8504 | 0.8167 | 0.9832 |

| 0.0124 | 17.0 | 13515 | 0.1057 | 0.8013 | 0.8521 | 0.8259 | 142 | 0.8087 | 0.8087 | 0.8087 | 277 | 0.7663 | 0.8704 | 0.8150 | 162 | 0.9186 | 0.9349 | 0.9267 | 169 | 0.8322 | 0.7169 | 0.7702 | 166 | 0.8563 | 0.8720 | 0.8640 | 164 | 0.8603 | 0.9059 | 0.8825 | 170 | 0.8327 | 0.848 | 0.8403 | 0.9829 |

| 0.0135 | 18.0 | 14310 | 0.1001 | 0.8323 | 0.9085 | 0.8687 | 142 | 0.8363 | 0.8484 | 0.8423 | 277 | 0.8494 | 0.8704 | 0.8598 | 162 | 0.8462 | 0.9112 | 0.8775 | 169 | 0.7925 | 0.7590 | 0.7754 | 166 | 0.8286 | 0.8841 | 0.8555 | 164 | 0.8686 | 0.8941 | 0.8812 | 170 | 0.8368 | 0.8656 | 0.8510 | 0.9839 |

| 0.0125 | 19.0 | 15105 | 0.1200 | 0.8562 | 0.8803 | 0.8681 | 142 | 0.8 | 0.8520 | 0.8252 | 277 | 0.7705 | 0.8704 | 0.8174 | 162 | 0.8864 | 0.9231 | 0.9043 | 169 | 0.7716 | 0.7530 | 0.7622 | 166 | 0.8642 | 0.8537 | 0.8589 | 164 | 0.85 | 0.9 | 0.8743 | 170 | 0.8252 | 0.8608 | 0.8426 | 0.9843 |

| 0.0098 | 20.0 | 15900 | 0.1097 | 0.8993 | 0.8803 | 0.8897 | 142 | 0.7933 | 0.8592 | 0.8250 | 277 | 0.8144 | 0.8395 | 0.8267 | 162 | 0.8641 | 0.9408 | 0.9008 | 169 | 0.82 | 0.7410 | 0.7785 | 166 | 0.8704 | 0.8598 | 0.8650 | 164 | 0.8876 | 0.8824 | 0.8850 | 170 | 0.8434 | 0.8576 | 0.8505 | 0.9846 |

| 0.0128 | 21.0 | 16695 | 0.1090 | 0.8993 | 0.8803 | 0.8897 | 142 | 0.8294 | 0.8773 | 0.8526 | 277 | 0.8107 | 0.8457 | 0.8278 | 162 | 0.8678 | 0.8935 | 0.8805 | 169 | 0.8133 | 0.7349 | 0.7722 | 166 | 0.8218 | 0.8720 | 0.8462 | 164 | 0.8889 | 0.8471 | 0.8675 | 170 | 0.8446 | 0.852 | 0.8483 | 0.9838 |

| 0.01 | 22.0 | 17490 | 0.1280 | 0.9 | 0.8239 | 0.8603 | 142 | 0.7848 | 0.8556 | 0.8187 | 277 | 0.8057 | 0.8704 | 0.8368 | 162 | 0.8674 | 0.9290 | 0.8971 | 169 | 0.7595 | 0.7229 | 0.7407 | 166 | 0.8412 | 0.8720 | 0.8563 | 164 | 0.7989 | 0.8882 | 0.8412 | 170 | 0.8169 | 0.8528 | 0.8344 | 0.9832 |

| 0.0096 | 23.0 | 18285 | 0.1023 | 0.8889 | 0.9014 | 0.8951 | 142 | 0.8041 | 0.8448 | 0.8239 | 277 | 0.8253 | 0.8457 | 0.8354 | 162 | 0.8415 | 0.9112 | 0.875 | 169 | 0.7683 | 0.7590 | 0.7636 | 166 | 0.8118 | 0.8415 | 0.8263 | 164 | 0.7979 | 0.8824 | 0.8380 | 170 | 0.8170 | 0.8536 | 0.8349 | 0.9843 |

| 0.0088 | 24.0 | 19080 | 0.1172 | 0.8649 | 0.9014 | 0.8828 | 142 | 0.8298 | 0.8448 | 0.8372 | 277 | 0.7816 | 0.8395 | 0.8095 | 162 | 0.8674 | 0.9290 | 0.8971 | 169 | 0.7257 | 0.7651 | 0.7449 | 166 | 0.8136 | 0.8780 | 0.8446 | 164 | 0.8229 | 0.8471 | 0.8348 | 170 | 0.8155 | 0.856 | 0.8353 | 0.9829 |

| 0.0083 | 25.0 | 19875 | 0.1090 | 0.7401 | 0.9225 | 0.8213 | 142 | 0.8363 | 0.8484 | 0.8423 | 277 | 0.8057 | 0.8704 | 0.8368 | 162 | 0.8889 | 0.8994 | 0.8941 | 169 | 0.8176 | 0.7289 | 0.7707 | 166 | 0.7609 | 0.8537 | 0.8046 | 164 | 0.8488 | 0.8588 | 0.8538 | 170 | 0.8150 | 0.8528 | 0.8335 | 0.9830 |

| 0.0105 | 26.0 | 20670 | 0.1191 | 0.7241 | 0.8873 | 0.7975 | 142 | 0.7468 | 0.8412 | 0.7912 | 277 | 0.8161 | 0.8765 | 0.8452 | 162 | 0.8254 | 0.9231 | 0.8715 | 169 | 0.7384 | 0.7651 | 0.7515 | 166 | 0.8333 | 0.8537 | 0.8434 | 164 | 0.8378 | 0.9118 | 0.8732 | 170 | 0.7853 | 0.8632 | 0.8224 | 0.9814 |

| 0.0103 | 27.0 | 21465 | 0.1125 | 0.8378 | 0.8732 | 0.8552 | 142 | 0.8566 | 0.8628 | 0.8597 | 277 | 0.8046 | 0.8642 | 0.8333 | 162 | 0.8764 | 0.9231 | 0.8991 | 169 | 0.8289 | 0.7590 | 0.7925 | 166 | 0.8466 | 0.8415 | 0.8440 | 164 | 0.8929 | 0.8824 | 0.8876 | 170 | 0.8502 | 0.8584 | 0.8543 | 0.9847 |

| 0.0081 | 28.0 | 22260 | 0.1301 | 0.8601 | 0.8662 | 0.8632 | 142 | 0.8489 | 0.8520 | 0.8505 | 277 | 0.8225 | 0.8580 | 0.8399 | 162 | 0.8870 | 0.9290 | 0.9075 | 169 | 0.8067 | 0.7289 | 0.7658 | 166 | 0.8625 | 0.8415 | 0.8519 | 164 | 0.8613 | 0.8765 | 0.8688 | 170 | 0.8504 | 0.8504 | 0.8504 | 0.9850 |

| 0.0079 | 29.0 | 23055 | 0.1458 | 0.9104 | 0.8592 | 0.8841 | 142 | 0.8185 | 0.8303 | 0.8244 | 277 | 0.7730 | 0.7778 | 0.7754 | 162 | 0.8191 | 0.9112 | 0.8627 | 169 | 0.8013 | 0.7530 | 0.7764 | 166 | 0.8304 | 0.8659 | 0.8478 | 164 | 0.8941 | 0.8941 | 0.8941 | 170 | 0.8321 | 0.8408 | 0.8365 | 0.9834 |

| 0.0084 | 30.0 | 23850 | 0.1264 | 0.8435 | 0.8732 | 0.8581 | 142 | 0.8328 | 0.8628 | 0.8475 | 277 | 0.8256 | 0.8765 | 0.8503 | 162 | 0.9023 | 0.9290 | 0.9155 | 169 | 0.8531 | 0.7349 | 0.7896 | 166 | 0.8598 | 0.8598 | 0.8598 | 164 | 0.8757 | 0.8706 | 0.8732 | 170 | 0.8543 | 0.8584 | 0.8563 | 0.9848 |

### Framework versions

- Transformers 4.22.0.dev0

- Pytorch 1.12.1+cu113

- Datasets 2.2.2

- Tokenizers 0.12.1

|

Osmodin/Neon_Lights

|

Osmodin

| 2022-09-10T04:07:33Z | 0 | 0 | null |

[

"region:us"

] | null | 2022-09-10T02:17:19Z |

Custom Disco Diffusion model trained in Visions of Chaos using neon lights and signs

To use, select "custom_512x_512" for your diffusion model and point to the model .PT file under "custom_path"

|

sd-concepts-library/lego-astronaut

|

sd-concepts-library

| 2022-09-10T03:42:17Z | 0 | 3 | null |

[

"license:mit",

"region:us"

] | null | 2022-09-10T03:42:10Z |

---

license: mit

---

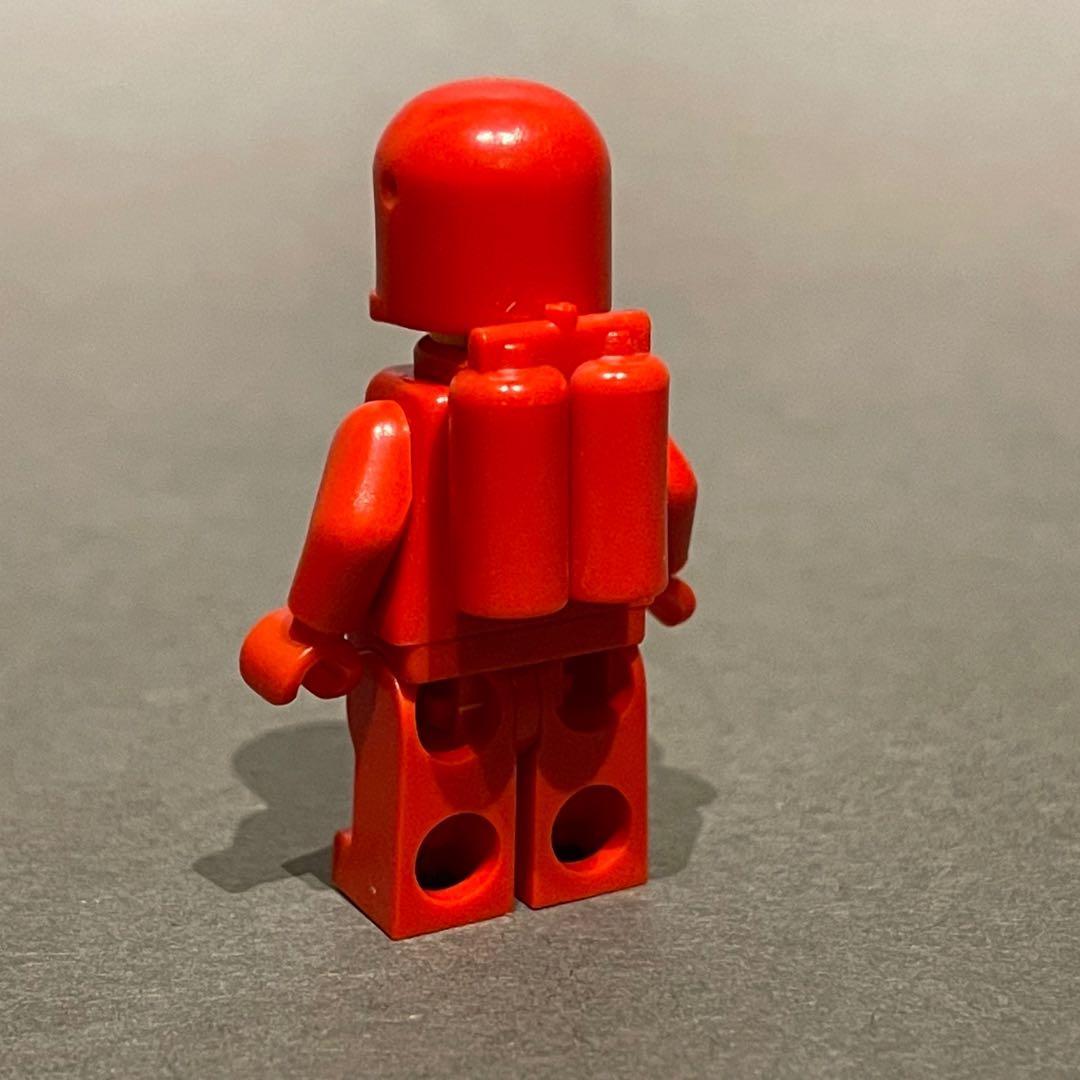

### Lego astronaut on Stable Diffusion

This is the `<lego-astronaut>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb).

Here is the new concept you will be able to use as an `object`:

|

skr1125/distilbert-base-uncased-distilled-clinc

|

skr1125

| 2022-09-10T02:38:56Z | 106 | 0 |

transformers

|

[

"transformers",

"pytorch",

"distilbert",

"text-classification",

"generated_from_trainer",

"dataset:clinc_oos",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-09-10T02:29:06Z |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- clinc_oos

metrics:

- accuracy

model-index:

- name: distilbert-base-uncased-distilled-clinc

results:

- task:

name: Text Classification

type: text-classification

dataset:

name: clinc_oos

type: clinc_oos

args: plus

metrics:

- name: Accuracy

type: accuracy

value: 0.9429032258064516

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# distilbert-base-uncased-distilled-clinc

This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the clinc_oos dataset.

It achieves the following results on the evaluation set:

- Loss: 0.2002

- Accuracy: 0.9429

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data