modelId

stringlengths 5

139

| author

stringlengths 2

42

| last_modified

timestamp[us, tz=UTC]date 2020-02-15 11:33:14

2025-09-08 19:17:42

| downloads

int64 0

223M

| likes

int64 0

11.7k

| library_name

stringclasses 549

values | tags

listlengths 1

4.05k

| pipeline_tag

stringclasses 55

values | createdAt

timestamp[us, tz=UTC]date 2022-03-02 23:29:04

2025-09-08 18:30:19

| card

stringlengths 11

1.01M

|

|---|---|---|---|---|---|---|---|---|---|

Billwzl/distilbert-base-uncased-IMDB_distilbert

|

Billwzl

| 2022-08-12T14:28:37Z | 4 | 0 |

transformers

|

[

"transformers",

"pytorch",

"distilbert",

"fill-mask",

"generated_from_trainer",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

fill-mask

| 2022-08-12T14:06:42Z |

---

license: apache-2.0

tags:

- generated_from_trainer

model-index:

- name: distilbert-base-uncased-IMDB_distilbert

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# distilbert-base-uncased-IMDB_distilbert

This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the None dataset.

It achieves the following results on the evaluation set:

- Loss: 2.6232

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 32

- eval_batch_size: 32

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 16

### Training results

| Training Loss | Epoch | Step | Validation Loss |

|:-------------:|:-----:|:-----:|:---------------:|

| 3.1531 | 1.0 | 1250 | 2.9545 |

| 2.9251 | 2.0 | 2500 | 2.8577 |

| 2.7865 | 3.0 | 3750 | 2.8460 |

| 2.692 | 4.0 | 5000 | 2.7769 |

| 2.611 | 5.0 | 6250 | 2.8373 |

| 2.5341 | 6.0 | 7500 | 2.7105 |

| 2.4887 | 7.0 | 8750 | 2.6864 |

| 2.4292 | 8.0 | 10000 | 2.6600 |

| 2.3524 | 9.0 | 11250 | 2.6872 |

| 2.3217 | 10.0 | 12500 | 2.6527 |

| 2.2961 | 11.0 | 13750 | 2.6659 |

| 2.2553 | 12.0 | 15000 | 2.6513 |

| 2.2066 | 13.0 | 16250 | 2.6443 |

| 2.1912 | 14.0 | 17500 | 2.5912 |

| 2.1703 | 15.0 | 18750 | 2.6312 |

| 2.1715 | 16.0 | 20000 | 2.6232 |

### Framework versions

- Transformers 4.21.1

- Pytorch 1.12.0+cu113

- Datasets 2.4.0

- Tokenizers 0.12.1

|

alex-apostolo/legal-roberta-base-cuad

|

alex-apostolo

| 2022-08-12T14:27:48Z | 4 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"roberta",

"question-answering",

"generated_from_trainer",

"dataset:cuad",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] |

question-answering

| 2022-08-09T19:13:16Z |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- cuad

model-index:

- name: legal-roberta-base-cuad

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# legal-roberta-base-cuad

This model is a fine-tuned version of [saibo/legal-roberta-base](https://huggingface.co/saibo/legal-roberta-base) on the cuad dataset.

It achieves the following results on the evaluation set:

- Loss: 0.0260

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 24

- eval_batch_size: 24

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3

### Training results

| Training Loss | Epoch | Step | Validation Loss |

|:-------------:|:-----:|:------:|:---------------:|

| 0.0393 | 1.0 | 51295 | 0.0261 |

| 0.0234 | 2.0 | 102590 | 0.0254 |

| 0.0234 | 3.0 | 153885 | 0.0260 |

### Framework versions

- Transformers 4.21.1

- Pytorch 1.12.0+cu113

- Datasets 2.4.0

- Tokenizers 0.12.1

|

AkashKhamkar/InSumT510k

|

AkashKhamkar

| 2022-08-12T13:09:54Z | 7 | 0 |

transformers

|

[

"transformers",

"pytorch",

"t5",

"text2text-generation",

"license:afl-3.0",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text2text-generation

| 2022-08-10T11:27:49Z |

---

license: afl-3.0

---

---

About :

This model can be used for text summarization.

The dataset on which it was fine tuned consisted of 10,323 articles.

The Data Fields :

- "Headline" : title of the article

- "articleBody" : the main article content

- "source" : the link to the readmore page.

The data splits were :

- Train : 8258.

- Vaildation : 2065.

### How to use along with pipeline

```python

from transformers import pipeline

from transformers import AutoTokenizer, AutoModelForSeq2Seq

tokenizer = AutoTokenizer.from_pretrained("AkashKhamkar/InSumT510k")

model = AutoModelForSeq2SeqLM.from_pretrained("AkashKhamkar/InSumT510k")

summarizer = pipeline("summarization", model=model, tokenizer=tokenizer)

summarizer("Text for summarization...", min_length=5, max_length=50)

```

language:

- English

library_name: Pytorch

tags:

- Summarization

- T5-base

- Conditional Modelling

-

|

blesot/Mask-RCNN

|

blesot

| 2022-08-12T11:28:17Z | 0 | 5 | null |

[

"arxiv:1703.06870",

"region:us"

] | null | 2022-08-08T23:54:30Z |

Hugging Face's logo

---

tags:

- object-detection

- vision

library_name: mask_rcnn

datasets:

- coco

---

# Mask R-CNN

## Model desription

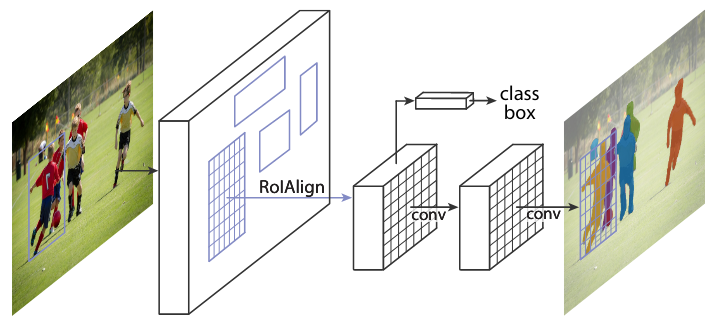

Mask R-CNN is a model that extends Faster R-CNN by adding a branch for predicting an object mask in parallel with the existing branch for bounding box recognition. The model locates pixels of images instead of just bounding boxes as Faster R-CNN was not designed for pixel-to-pixel alignment between network inputs and outputs.

*This Model is based on the Pretrained model from [OpenMMlab](https://github.com/open-mmlab/mmdetection)*

### More information on the model and dataset:

#### The model

Mask R-CNN works towards the approach of instance segmentation, which involves object detection, and semantic segmentation. For object detection, Mask R-CNN uses an architecture that is similar to Faster R-CNN, while it uses a Fully Convolutional Network(FCN) for semantic segmentation.

The FCN is added to the top of features of a Faster R-CNN to generate a mask segmentation output. This segmentation output is in parallel with the classification and bounding box regressor network of the Faster R-CNN model. From the advancement of Fast R-CNN Region of Interest Pooling(ROI), Mask R-CNN adds refinement called ROI aligning by addressing the loss and misalignment of ROI Pooling; the new ROI aligned leads to improved results.

#### Datasets

[COCO Datasets](https://cocodataset.org/#home)

## Training Procedure

Please [read the paper](https://arxiv.org/pdf/1703.06870.pdf) for more information on training, or check OpenMMLab [repository](https://github.com/open-mmlab/mmdetection/tree/master/configs/mask_rcnn)

The model architecture is divided into two parts:

- Region proposal network (RPN) to propose candidate object bounding boxes.

- Binary mask classifier to generate a mask for every class

#### Technical Summary.

- Mask R-CNN is quite similar to the structure of faster R-CNN.

- Outputs a binary mask for each Region of Interest.

- Applies bounding-box classification and regression in parallel, simplifying the original R-CNN's multi-stage pipeline.

- The network architectures utilized are called ResNet and ResNeXt. The depth can be either 50 or 101

#### Results Summary

- Instance Segmentation: Based on the COCO dataset, Mask R-CNN outperforms all categories compared to MNC and FCIS, which are state-of-the-art models.

- Bounding Box Detection: Mask R-CNN outperforms the base variants of all previous state-of-the-art models, including the COCO 2016 Detection Challenge winner.

## Intended uses & limitations

The identification of object relationships and the context of objects in a picture are both aided by image segmentation. Some of the applications include face recognition, number plate recognition, and satellite image analysis. With great model generality, Mask RCNN can be extended to human pose estimation; it can be used to estimate on-site approaching live traffic to aid autonomous driving.

|

Jungwoo4021/wav2vec2-base-ks-finetuning

|

Jungwoo4021

| 2022-08-12T10:59:01Z | 4 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"wav2vec2",

"text-classification",

"audio-classification",

"generated_from_trainer",

"dataset:superb",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

audio-classification

| 2022-08-12T02:24:38Z |

---

license: apache-2.0

tags:

- audio-classification

- generated_from_trainer

datasets:

- superb

metrics:

- accuracy

model-index:

- name: wav2vec2-base-ks-finetuning

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# wav2vec2-base-ks-finetuning

This model is a fine-tuned version of [facebook/wav2vec2-base](https://huggingface.co/facebook/wav2vec2-base) on the superb dataset.

It achieves the following results on the evaluation set:

- Loss: 0.2261

- Accuracy: 0.9813

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 3e-05

- train_batch_size: 256

- eval_batch_size: 256

- seed: 0

- gradient_accumulation_steps: 4

- total_train_batch_size: 1024

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_ratio: 0.1

- num_epochs: 10.0

- mixed_precision_training: Native AMP

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:--------:|

| 1.6773 | 1.0 | 50 | 1.6218 | 0.6209 |

| 1.4707 | 2.0 | 100 | 1.4400 | 0.6209 |

| 1.1387 | 3.0 | 150 | 1.0470 | 0.6599 |

| 0.7909 | 4.0 | 200 | 0.6997 | 0.8903 |

| 0.5488 | 5.0 | 250 | 0.4567 | 0.9640 |

| 0.4195 | 6.0 | 300 | 0.3288 | 0.9754 |

| 0.3445 | 7.0 | 350 | 0.2598 | 0.9809 |

| 0.3107 | 8.0 | 400 | 0.2261 | 0.9813 |

| 0.2781 | 9.0 | 450 | 0.2104 | 0.9810 |

| 0.2729 | 10.0 | 500 | 0.2050 | 0.9813 |

### Framework versions

- Transformers 4.22.0.dev0

- Pytorch 1.11.0+cu115

- Datasets 2.4.0

- Tokenizers 0.12.1

|

Jiqing/bert-large-uncased-whole-word-masking-finetuned-squad-finetuned-squad

|

Jiqing

| 2022-08-12T09:24:10Z | 11 | 0 |

transformers

|

[

"transformers",

"pytorch",

"bert",

"question-answering",

"generated_from_trainer",

"dataset:squad",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] |

question-answering

| 2022-08-12T09:22:04Z |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- squad

model-index:

- name: bert-large-uncased-whole-word-masking-finetuned-squad-finetuned-squad

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# bert-large-uncased-whole-word-masking-finetuned-squad-finetuned-squad

This model is a fine-tuned version of [bert-large-uncased-whole-word-masking-finetuned-squad](https://huggingface.co/bert-large-uncased-whole-word-masking-finetuned-squad) on the squad dataset.

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3

### Framework versions

- Transformers 4.21.0

- Pytorch 1.12.0+cu102

- Datasets 2.4.0

- Tokenizers 0.12.1

|

DOOGLAK/Article_250v8_NER_Model_3Epochs_UNAUGMENTED

|

DOOGLAK

| 2022-08-12T09:22:05Z | 107 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"bert",

"token-classification",

"generated_from_trainer",

"dataset:article250v8_wikigold_split",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

token-classification

| 2022-08-12T09:16:55Z |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- article250v8_wikigold_split

metrics:

- precision

- recall

- f1

- accuracy

model-index:

- name: Article_250v8_NER_Model_3Epochs_UNAUGMENTED

results:

- task:

name: Token Classification

type: token-classification

dataset:

name: article250v8_wikigold_split

type: article250v8_wikigold_split

args: default

metrics:

- name: Precision

type: precision

value: 0.4215600350569676

- name: Recall

type: recall

value: 0.3990597345132743

- name: F1

type: f1

value: 0.4100014206563432

- name: Accuracy

type: accuracy

value: 0.878173617797598

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# Article_250v8_NER_Model_3Epochs_UNAUGMENTED

This model is a fine-tuned version of [bert-base-cased](https://huggingface.co/bert-base-cased) on the article250v8_wikigold_split dataset.

It achieves the following results on the evaluation set:

- Loss: 0.3329

- Precision: 0.4216

- Recall: 0.3991

- F1: 0.4100

- Accuracy: 0.8782

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3

### Training results

| Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:|

| No log | 1.0 | 28 | 0.5293 | 0.1767 | 0.0454 | 0.0722 | 0.7988 |

| No log | 2.0 | 56 | 0.3589 | 0.3246 | 0.2987 | 0.3111 | 0.8611 |

| No log | 3.0 | 84 | 0.3329 | 0.4216 | 0.3991 | 0.4100 | 0.8782 |

### Framework versions

- Transformers 4.17.0

- Pytorch 1.11.0+cu113

- Datasets 2.4.0

- Tokenizers 0.11.6

|

Intel/distilgpt2-wikitext2

|

Intel

| 2022-08-12T09:20:44Z | 8 | 0 |

transformers

|

[

"transformers",

"pytorch",

"gpt2",

"text-generation",

"generated_from_trainer",

"dataset:wikitext",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-08-12T08:59:15Z |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- wikitext

metrics:

- accuracy

model-index:

- name: distilgpt2-wikitext2

results:

- task:

name: Causal Language Modeling

type: text-generation

dataset:

name: wikitext wikitext-2-raw-v1

type: wikitext

args: wikitext-2-raw-v1

metrics:

- name: Accuracy

type: accuracy

value: 0.39321440208536984

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# distilgpt2-wikitext2

This model is a fine-tuned version of [distilgpt2](https://huggingface.co/distilgpt2) on the wikitext wikitext-2-raw-v1 dataset.

It achieves the following results on the evaluation set:

- Loss: 3.3259

- Accuracy: 0.3932

- perplexity: 27.8235

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3.0

### Training results

### Framework versions

- Transformers 4.21.1

- Pytorch 1.12.1+cu116

- Datasets 2.4.0

- Tokenizers 0.12.1

|

rhiga/q-Taxi-v3

|

rhiga

| 2022-08-12T07:50:11Z | 0 | 0 | null |

[

"Taxi-v3",

"q-learning",

"reinforcement-learning",

"custom-implementation",

"model-index",

"region:us"

] |

reinforcement-learning

| 2022-08-12T07:50:03Z |

---

tags:

- Taxi-v3

- q-learning

- reinforcement-learning

- custom-implementation

model-index:

- name: q-Taxi-v3

results:

- metrics:

- type: mean_reward

value: 7.50 +/- 2.72

name: mean_reward

task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: Taxi-v3

type: Taxi-v3

---

# **Q-Learning** Agent playing **Taxi-v3**

This is a trained model of a **Q-Learning** agent playing **Taxi-v3** .

## Usage

```python

model = load_from_hub(repo_id="rhiga/q-Taxi-v3", filename="q-learning.pkl")

# Don't forget to check if you need to add additional attributes (is_slippery=False etc)

env = gym.make(model["env_id"])

evaluate_agent(env, model["max_steps"], model["n_eval_episodes"], model["qtable"], model["eval_seed"])

```

|

Lvxue/distilled-mt5-small-1-2

|

Lvxue

| 2022-08-12T07:29:04Z | 7 | 0 |

transformers

|

[

"transformers",

"pytorch",

"mt5",

"text2text-generation",

"generated_from_trainer",

"en",

"ro",

"dataset:wmt16",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text2text-generation

| 2022-08-12T06:11:08Z |

---

language:

- en

- ro

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- wmt16

metrics:

- bleu

model-index:

- name: distilled-mt5-small-1-2

results:

- task:

name: Translation

type: translation

dataset:

name: wmt16 ro-en

type: wmt16

args: ro-en

metrics:

- name: Bleu

type: bleu

value: 1.1101

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# distilled-mt5-small-1-2

This model is a fine-tuned version of [google/mt5-small](https://huggingface.co/google/mt5-small) on the wmt16 ro-en dataset.

It achieves the following results on the evaluation set:

- Loss: 3.7760

- Bleu: 1.1101

- Gen Len: 99.5898

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 4

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 5.0

### Training results

### Framework versions

- Transformers 4.20.1

- Pytorch 1.12.0+cu102

- Datasets 2.3.2

- Tokenizers 0.12.1

|

skouras/DialoGPT-small-maptask

|

skouras

| 2022-08-12T07:22:42Z | 95 | 0 |

transformers

|

[

"transformers",

"pytorch",

"gpt2",

"text-generation",

"conversational",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-08-12T06:36:56Z |

---

tags:

- conversational

---

DialoGPT-small finetuned in the Maptask Corpus. The [repository](https://github.com/KonstSkouras/Maptask-Corpus/tree/develop) with additionally pre-processed Maptask dialogues of concatenated utterances per speaker, 80/10/10 train/val/test split and metadata, is a fork of the [Nathan Duran's repository](https://github.com/NathanDuran/Maptask-Corpus). For finetuning the `train_dialogpt.ipynb` notebook from Nathan Cooper's [Tutorial](https://nathancooper.io/i-am-a-nerd/chatbot/deep-learning/gpt2/2020/05/12/chatbot-part-1.html) was used to finetune the model with slight modifications in Google Collab.

History dialogue context = 5. Number of utterances: 14712 (train set), 1964 (test set), 2017 (val set). Fine-tuning for 3 epochs with batch size 2.

Evaluation perplexity in Maptask from 410.7796 (pre-trainded model) to 19.7469 (fine-tuned model).

|

skouras/DialoGPT-small-swda

|

skouras

| 2022-08-12T07:22:06Z | 104 | 0 |

transformers

|

[

"transformers",

"pytorch",

"gpt2",

"text-generation",

"conversational",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-08-12T06:01:01Z |

---

tags:

- conversational

---

DialoGPT-small finetuned in the Switchboard Dialogue Act (SwDa) Corpus. The [repository](https://github.com/KonstSkouras/Switchboard-Corpus/tree/develop/) with additionally pre-processed SwDa dialogues of concatenated utterances per speaker, 80/10/10 train/val/test split and metadata, is a fork of the [Nathan Duran's repository](https://github.com/NathanDuran/Switchboard-Corpus). For finetuning the `train_dialogpt.ipynb` notebook from Nathan Cooper's [Tutorial](https://nathancooper.io/i-am-a-nerd/chatbot/deep-learning/gpt2/2020/05/12/chatbot-part-1.html) was used to finetune the model with slight modifications in Google Collab.

History dialogue context = 5.

Number of utterances: 80704 (train set), 9749 (test set), 9616 (val set).

Checkpoint-84000 after fine-tuning for 2 epochs with batch size 2.

Evaluation perplexity in SwDa from 635.6993 (pre-trainded model) to 18.1693 (fine-tuned model).

|

susank/distilbert-base-uncased-finetuned-emotion

|

susank

| 2022-08-12T05:45:28Z | 105 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"distilbert",

"text-classification",

"generated_from_trainer",

"dataset:emotion",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-08-12T05:33:23Z |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- emotion

metrics:

- accuracy

- f1

model-index:

- name: distilbert-base-uncased-finetuned-emotion

results:

- task:

name: Text Classification

type: text-classification

dataset:

name: emotion

type: emotion

args: default

metrics:

- name: Accuracy

type: accuracy

value: 0.924

- name: F1

type: f1

value: 0.9240247841894665

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# distilbert-base-uncased-finetuned-emotion

This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the emotion dataset.

It achieves the following results on the evaluation set:

- Loss: 0.2281

- Accuracy: 0.924

- F1: 0.9240

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 64

- eval_batch_size: 64

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 2

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 |

|:-------------:|:-----:|:----:|:---------------:|:--------:|:------:|

| 0.8687 | 1.0 | 250 | 0.3390 | 0.9015 | 0.8984 |

| 0.2645 | 2.0 | 500 | 0.2281 | 0.924 | 0.9240 |

### Framework versions

- Transformers 4.13.0

- Pytorch 1.12.0+cu113

- Datasets 2.0.0

- Tokenizers 0.10.3

|

carted-nlp/categorization-finetuned-20220721-164940-distilled-20220811-132317

|

carted-nlp

| 2022-08-12T04:21:30Z | 25 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"onnx",

"roberta",

"text-classification",

"generated_from_trainer",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-08-11T13:25:02Z |

---

tags:

- generated_from_trainer

metrics:

- accuracy

- f1

model-index:

- name: categorization-finetuned-20220721-164940-distilled-20220811-132317

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# categorization-finetuned-20220721-164940-distilled-20220811-132317

This model is a fine-tuned version of [carted-nlp/categorization-finetuned-20220721-164940](https://huggingface.co/carted-nlp/categorization-finetuned-20220721-164940) on the None dataset.

It achieves the following results on the evaluation set:

- Loss: 0.1522

- Accuracy: 0.8783

- F1: 0.8779

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 4e-05

- train_batch_size: 64

- eval_batch_size: 128

- seed: 314

- gradient_accumulation_steps: 4

- total_train_batch_size: 256

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: cosine

- lr_scheduler_warmup_steps: 2000

- num_epochs: 30.0

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 |

|:-------------:|:-----:|:------:|:---------------:|:--------:|:------:|

| 0.5212 | 0.56 | 2500 | 0.2564 | 0.7953 | 0.7921 |

| 0.243 | 1.12 | 5000 | 0.2110 | 0.8270 | 0.8249 |

| 0.2105 | 1.69 | 7500 | 0.1925 | 0.8409 | 0.8391 |

| 0.1939 | 2.25 | 10000 | 0.1837 | 0.8476 | 0.8465 |

| 0.1838 | 2.81 | 12500 | 0.1771 | 0.8528 | 0.8517 |

| 0.1729 | 3.37 | 15000 | 0.1722 | 0.8564 | 0.8555 |

| 0.1687 | 3.94 | 17500 | 0.1684 | 0.8593 | 0.8576 |

| 0.1602 | 4.5 | 20000 | 0.1653 | 0.8614 | 0.8604 |

| 0.1572 | 5.06 | 22500 | 0.1629 | 0.8648 | 0.8638 |

| 0.1507 | 5.62 | 25000 | 0.1605 | 0.8654 | 0.8646 |

| 0.1483 | 6.19 | 27500 | 0.1602 | 0.8661 | 0.8653 |

| 0.1431 | 6.75 | 30000 | 0.1597 | 0.8669 | 0.8663 |

| 0.1393 | 7.31 | 32500 | 0.1581 | 0.8691 | 0.8687 |

| 0.1374 | 7.87 | 35000 | 0.1556 | 0.8704 | 0.8697 |

| 0.1321 | 8.43 | 37500 | 0.1558 | 0.8707 | 0.8700 |

| 0.1328 | 9.0 | 40000 | 0.1536 | 0.8719 | 0.8711 |

| 0.1261 | 9.56 | 42500 | 0.1544 | 0.8716 | 0.8708 |

| 0.1256 | 10.12 | 45000 | 0.1541 | 0.8731 | 0.8725 |

| 0.122 | 10.68 | 47500 | 0.1520 | 0.8741 | 0.8734 |

| 0.1196 | 11.25 | 50000 | 0.1529 | 0.8734 | 0.8728 |

| 0.1182 | 11.81 | 52500 | 0.1510 | 0.8758 | 0.8751 |

| 0.1145 | 12.37 | 55000 | 0.1526 | 0.8746 | 0.8737 |

| 0.1141 | 12.93 | 57500 | 0.1512 | 0.8765 | 0.8759 |

| 0.1094 | 13.5 | 60000 | 0.1517 | 0.8760 | 0.8753 |

| 0.1098 | 14.06 | 62500 | 0.1513 | 0.8771 | 0.8764 |

| 0.1058 | 14.62 | 65000 | 0.1506 | 0.8775 | 0.8768 |

| 0.1048 | 15.18 | 67500 | 0.1521 | 0.8774 | 0.8768 |

| 0.1028 | 15.74 | 70000 | 0.1520 | 0.8778 | 0.8773 |

| 0.1006 | 16.31 | 72500 | 0.1517 | 0.8780 | 0.8774 |

| 0.1001 | 16.87 | 75000 | 0.1505 | 0.8794 | 0.8790 |

| 0.0971 | 17.43 | 77500 | 0.1520 | 0.8784 | 0.8778 |

| 0.0973 | 17.99 | 80000 | 0.1514 | 0.8796 | 0.8790 |

| 0.0938 | 18.56 | 82500 | 0.1516 | 0.8795 | 0.8789 |

| 0.0942 | 19.12 | 85000 | 0.1522 | 0.8794 | 0.8789 |

| 0.0918 | 19.68 | 87500 | 0.1518 | 0.8799 | 0.8793 |

| 0.0909 | 20.24 | 90000 | 0.1528 | 0.8803 | 0.8796 |

| 0.0901 | 20.81 | 92500 | 0.1516 | 0.8799 | 0.8793 |

| 0.0882 | 21.37 | 95000 | 0.1519 | 0.8800 | 0.8794 |

| 0.088 | 21.93 | 97500 | 0.1517 | 0.8802 | 0.8798 |

| 0.086 | 22.49 | 100000 | 0.1530 | 0.8800 | 0.8795 |

| 0.0861 | 23.05 | 102500 | 0.1523 | 0.8806 | 0.8801 |

| 0.0846 | 23.62 | 105000 | 0.1524 | 0.8808 | 0.8802 |

| 0.0843 | 24.18 | 107500 | 0.1522 | 0.8805 | 0.8800 |

| 0.0836 | 24.74 | 110000 | 0.1525 | 0.8808 | 0.8803 |

| 0.083 | 25.3 | 112500 | 0.1528 | 0.8810 | 0.8803 |

| 0.0829 | 25.87 | 115000 | 0.1528 | 0.8808 | 0.8802 |

| 0.082 | 26.43 | 117500 | 0.1529 | 0.8808 | 0.8802 |

| 0.0818 | 26.99 | 120000 | 0.1525 | 0.8811 | 0.8805 |

| 0.0816 | 27.55 | 122500 | 0.1526 | 0.8811 | 0.8806 |

| 0.0809 | 28.12 | 125000 | 0.1528 | 0.8810 | 0.8805 |

| 0.0809 | 28.68 | 127500 | 0.1527 | 0.8810 | 0.8804 |

| 0.0814 | 29.24 | 130000 | 0.1528 | 0.8808 | 0.8802 |

| 0.0807 | 29.8 | 132500 | 0.1528 | 0.8808 | 0.8802 |

### Framework versions

- Transformers 4.17.0

- Pytorch 1.11.0+cu113

- Datasets 2.3.2

- Tokenizers 0.11.6

|

Falcom/animal-classifier

|

Falcom

| 2022-08-12T04:02:18Z | 241 | 2 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"vit",

"image-classification",

"huggingpics",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

image-classification

| 2022-08-12T04:02:02Z |

---

tags:

- image-classification

- pytorch

- huggingpics

metrics:

- accuracy

model-index:

- name: animal-classifier

results:

- task:

name: Image Classification

type: image-classification

metrics:

- name: Accuracy

type: accuracy

value: 1.0

---

# animal-classifier

Autogenerated by HuggingPics🤗🖼️

Create your own image classifier for **anything** by running [the demo on Google Colab](https://colab.research.google.com/github/nateraw/huggingpics/blob/main/HuggingPics.ipynb).

Report any issues with the demo at the [github repo](https://github.com/nateraw/huggingpics).

## Example Images

#### butterfly

#### cat

#### chicken

#### cow

#### dog

#### elephant

#### horse

#### sheep

#### spider

#### squirrel

|

Lvxue/distilled-mt5-small-1-0.5

|

Lvxue

| 2022-08-12T03:22:00Z | 6 | 0 |

transformers

|

[

"transformers",

"pytorch",

"mt5",

"text2text-generation",

"generated_from_trainer",

"en",

"ro",

"dataset:wmt16",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text2text-generation

| 2022-08-12T02:06:37Z |

---

language:

- en

- ro

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- wmt16

metrics:

- bleu

model-index:

- name: distilled-mt5-small-1-0.5

results:

- task:

name: Translation

type: translation

dataset:

name: wmt16 ro-en

type: wmt16

args: ro-en

metrics:

- name: Bleu

type: bleu

value: 5.3917

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# distilled-mt5-small-1-0.5

This model is a fine-tuned version of [google/mt5-small](https://huggingface.co/google/mt5-small) on the wmt16 ro-en dataset.

It achieves the following results on the evaluation set:

- Loss: 3.8410

- Bleu: 5.3917

- Gen Len: 40.6103

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 4

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 5.0

### Training results

### Framework versions

- Transformers 4.20.1

- Pytorch 1.12.0+cu102

- Datasets 2.3.2

- Tokenizers 0.12.1

|

Lvxue/distilled-mt5-small-1-1

|

Lvxue

| 2022-08-12T03:18:55Z | 16 | 0 |

transformers

|

[

"transformers",

"pytorch",

"mt5",

"text2text-generation",

"generated_from_trainer",

"en",

"ro",

"dataset:wmt16",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text2text-generation

| 2022-08-12T02:08:29Z |

---

language:

- en

- ro

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- wmt16

metrics:

- bleu

model-index:

- name: distilled-mt5-small-1-1

results:

- task:

name: Translation

type: translation

dataset:

name: wmt16 ro-en

type: wmt16

args: ro-en

metrics:

- name: Bleu

type: bleu

value: 6.6959

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# distilled-mt5-small-1-1

This model is a fine-tuned version of [google/mt5-small](https://huggingface.co/google/mt5-small) on the wmt16 ro-en dataset.

It achieves the following results on the evaluation set:

- Loss: 2.8289

- Bleu: 6.6959

- Gen Len: 45.7539

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 4

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 5.0

### Training results

### Framework versions

- Transformers 4.20.1

- Pytorch 1.12.0+cu102

- Datasets 2.3.2

- Tokenizers 0.12.1

|

Lvxue/distilled-mt5-small-0.005-0.25

|

Lvxue

| 2022-08-12T01:28:13Z | 6 | 0 |

transformers

|

[

"transformers",

"pytorch",

"mt5",

"text2text-generation",

"generated_from_trainer",

"en",

"ro",

"dataset:wmt16",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text2text-generation

| 2022-08-12T00:14:33Z |

---

language:

- en

- ro

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- wmt16

metrics:

- bleu

model-index:

- name: distilled-mt5-small-0.005-0.25

results:

- task:

name: Translation

type: translation

dataset:

name: wmt16 ro-en

type: wmt16

args: ro-en

metrics:

- name: Bleu

type: bleu

value: 7.6069

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# distilled-mt5-small-0.005-0.25

This model is a fine-tuned version of [google/mt5-small](https://huggingface.co/google/mt5-small) on the wmt16 ro-en dataset.

It achieves the following results on the evaluation set:

- Loss: 2.8536

- Bleu: 7.6069

- Gen Len: 45.1846

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 4

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 5.0

### Training results

### Framework versions

- Transformers 4.20.1

- Pytorch 1.12.0+cu102

- Datasets 2.3.2

- Tokenizers 0.12.1

|

Lvxue/distilled-mt5-small-0.02-0.25

|

Lvxue

| 2022-08-12T01:26:26Z | 6 | 0 |

transformers

|

[

"transformers",

"pytorch",

"mt5",

"text2text-generation",

"generated_from_trainer",

"en",

"ro",

"dataset:wmt16",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text2text-generation

| 2022-08-12T00:12:00Z |

---

language:

- en

- ro

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- wmt16

metrics:

- bleu

model-index:

- name: distilled-mt5-small-0.02-0.25

results:

- task:

name: Translation

type: translation

dataset:

name: wmt16 ro-en

type: wmt16

args: ro-en

metrics:

- name: Bleu

type: bleu

value: 7.5228

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# distilled-mt5-small-0.02-0.25

This model is a fine-tuned version of [google/mt5-small](https://huggingface.co/google/mt5-small) on the wmt16 ro-en dataset.

It achieves the following results on the evaluation set:

- Loss: 2.8275

- Bleu: 7.5228

- Gen Len: 44.6403

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 4

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 5.0

### Training results

### Framework versions

- Transformers 4.20.1

- Pytorch 1.12.0+cu102

- Datasets 2.3.2

- Tokenizers 0.12.1

|

brookelove/finetuning-sentiment-model-3000-samples

|

brookelove

| 2022-08-12T01:02:47Z | 5 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"distilbert",

"text-classification",

"generated_from_trainer",

"dataset:imdb",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-08-12T00:16:58Z |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- imdb

metrics:

- accuracy

- f1

model-index:

- name: finetuning-sentiment-model-3000-samples

results:

- task:

name: Text Classification

type: text-classification

dataset:

name: imdb

type: imdb

config: plain_text

split: train

args: plain_text

metrics:

- name: Accuracy

type: accuracy

value: 0.8633333333333333

- name: F1

type: f1

value: 0.8673139158576051

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# finetuning-sentiment-model-3000-samples

This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the imdb dataset.

It achieves the following results on the evaluation set:

- Loss: 0.3246

- Accuracy: 0.8633

- F1: 0.8673

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 2

### Training results

### Framework versions

- Transformers 4.21.1

- Pytorch 1.12.0+cu113

- Datasets 2.4.0

- Tokenizers 0.12.1

|

roshan151/Model_output

|

roshan151

| 2022-08-12T00:37:05Z | 62 | 0 |

transformers

|

[

"transformers",

"tf",

"bert",

"fill-mask",

"generated_from_keras_callback",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

fill-mask

| 2022-08-10T22:55:31Z |

---

license: apache-2.0

tags:

- generated_from_keras_callback

model-index:

- name: roshan151/Model_output

results: []

---

<!-- This model card has been generated automatically according to the information Keras had access to. You should

probably proofread and complete it, then remove this comment. -->

# roshan151/Model_output

This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on an unknown dataset.

It achieves the following results on the evaluation set:

- Train Loss: 2.9849

- Validation Loss: 2.8623

- Epoch: 4

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- optimizer: {'name': 'AdamWeightDecay', 'learning_rate': {'class_name': 'WarmUp', 'config': {'initial_learning_rate': 2e-05, 'decay_schedule_fn': {'class_name': 'PolynomialDecay', 'config': {'initial_learning_rate': 2e-05, 'decay_steps': -82, 'end_learning_rate': 0.0, 'power': 1.0, 'cycle': False, 'name': None}, '__passive_serialization__': True}, 'warmup_steps': 100, 'power': 1.0, 'name': None}}, 'decay': 0.0, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False, 'weight_decay_rate': 0.01}

- training_precision: mixed_float16

### Training results

| Train Loss | Validation Loss | Epoch |

|:----------:|:---------------:|:-----:|

| 3.1673 | 2.8445 | 0 |

| 2.9770 | 2.8557 | 1 |

| 3.0018 | 2.8612 | 2 |

| 2.9625 | 2.8496 | 3 |

| 2.9849 | 2.8623 | 4 |

### Framework versions

- Transformers 4.21.1

- TensorFlow 2.8.2

- Datasets 2.4.0

- Tokenizers 0.12.1

|

DOOGLAK/Article_500v6_NER_Model_3Epochs_UNAUGMENTED

|

DOOGLAK

| 2022-08-12T00:05:01Z | 106 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"bert",

"token-classification",

"generated_from_trainer",

"dataset:article500v6_wikigold_split",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

token-classification

| 2022-08-12T00:00:05Z |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- article500v6_wikigold_split

metrics:

- precision

- recall

- f1

- accuracy

model-index:

- name: Article_500v6_NER_Model_3Epochs_UNAUGMENTED

results:

- task:

name: Token Classification

type: token-classification

dataset:

name: article500v6_wikigold_split

type: article500v6_wikigold_split

args: default

metrics:

- name: Precision

type: precision

value: 0.6462295081967213

- name: Recall

type: recall

value: 0.6930379746835443

- name: F1

type: f1

value: 0.6688157448252461

- name: Accuracy

type: accuracy

value: 0.9318540995006005

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# Article_500v6_NER_Model_3Epochs_UNAUGMENTED

This model is a fine-tuned version of [bert-base-cased](https://huggingface.co/bert-base-cased) on the article500v6_wikigold_split dataset.

It achieves the following results on the evaluation set:

- Loss: 0.2025

- Precision: 0.6462

- Recall: 0.6930

- F1: 0.6688

- Accuracy: 0.9319

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3

### Training results

| Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:|

| No log | 1.0 | 63 | 0.2794 | 0.3775 | 0.4525 | 0.4116 | 0.8945 |

| No log | 2.0 | 126 | 0.2119 | 0.6143 | 0.6670 | 0.6396 | 0.9266 |

| No log | 3.0 | 189 | 0.2025 | 0.6462 | 0.6930 | 0.6688 | 0.9319 |

### Framework versions

- Transformers 4.17.0

- Pytorch 1.11.0+cu113

- Datasets 2.4.0

- Tokenizers 0.11.6

|

DOOGLAK/Article_500v2_NER_Model_3Epochs_UNAUGMENTED

|

DOOGLAK

| 2022-08-11T23:41:48Z | 103 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"bert",

"token-classification",

"generated_from_trainer",

"dataset:article500v2_wikigold_split",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

token-classification

| 2022-08-11T23:36:55Z |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- article500v2_wikigold_split

metrics:

- precision

- recall

- f1

- accuracy

model-index:

- name: Article_500v2_NER_Model_3Epochs_UNAUGMENTED

results:

- task:

name: Token Classification

type: token-classification

dataset:

name: article500v2_wikigold_split

type: article500v2_wikigold_split

args: default

metrics:

- name: Precision

type: precision

value: 0.6510177281680893

- name: Recall

type: recall

value: 0.7377232142857143

- name: F1

type: f1

value: 0.6916637600279038

- name: Accuracy

type: accuracy

value: 0.936698943937827

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# Article_500v2_NER_Model_3Epochs_UNAUGMENTED

This model is a fine-tuned version of [bert-base-cased](https://huggingface.co/bert-base-cased) on the article500v2_wikigold_split dataset.

It achieves the following results on the evaluation set:

- Loss: 0.1886

- Precision: 0.6510

- Recall: 0.7377

- F1: 0.6917

- Accuracy: 0.9367

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3

### Training results

| Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:|

| No log | 1.0 | 62 | 0.2863 | 0.4448 | 0.5990 | 0.5105 | 0.8927 |

| No log | 2.0 | 124 | 0.1965 | 0.6070 | 0.7321 | 0.6637 | 0.9308 |

| No log | 3.0 | 186 | 0.1886 | 0.6510 | 0.7377 | 0.6917 | 0.9367 |

### Framework versions

- Transformers 4.17.0

- Pytorch 1.11.0+cu113

- Datasets 2.4.0

- Tokenizers 0.11.6

|

DOOGLAK/Article_500v1_NER_Model_3Epochs_UNAUGMENTED

|

DOOGLAK

| 2022-08-11T23:36:15Z | 104 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"bert",

"token-classification",

"generated_from_trainer",

"dataset:article500v1_wikigold_split",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

token-classification

| 2022-08-11T23:31:14Z |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- article500v1_wikigold_split

metrics:

- precision

- recall

- f1

- accuracy

model-index:

- name: Article_500v1_NER_Model_3Epochs_UNAUGMENTED

results:

- task:

name: Token Classification

type: token-classification

dataset:

name: article500v1_wikigold_split

type: article500v1_wikigold_split

args: default

metrics:

- name: Precision

type: precision

value: 0.6614785992217899

- name: Recall

type: recall

value: 0.6746031746031746

- name: F1

type: f1

value: 0.6679764243614931

- name: Accuracy

type: accuracy

value: 0.9325595601710446

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# Article_500v1_NER_Model_3Epochs_UNAUGMENTED

This model is a fine-tuned version of [bert-base-cased](https://huggingface.co/bert-base-cased) on the article500v1_wikigold_split dataset.

It achieves the following results on the evaluation set:

- Loss: 0.2058

- Precision: 0.6615

- Recall: 0.6746

- F1: 0.6680

- Accuracy: 0.9326

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3

### Training results

| Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:|

| No log | 1.0 | 58 | 0.3029 | 0.3539 | 0.3790 | 0.3660 | 0.8967 |

| No log | 2.0 | 116 | 0.2191 | 0.6223 | 0.6488 | 0.6353 | 0.9262 |

| No log | 3.0 | 174 | 0.2058 | 0.6615 | 0.6746 | 0.6680 | 0.9326 |

### Framework versions

- Transformers 4.17.0

- Pytorch 1.11.0+cu113

- Datasets 2.4.0

- Tokenizers 0.11.6

|

DOOGLAK/Article_500v0_NER_Model_3Epochs_UNAUGMENTED

|

DOOGLAK

| 2022-08-11T23:30:34Z | 106 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"bert",

"token-classification",

"generated_from_trainer",

"dataset:article500v0_wikigold_split",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

token-classification

| 2022-08-11T23:25:11Z |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- article500v0_wikigold_split

metrics:

- precision

- recall

- f1

- accuracy

model-index:

- name: Article_500v0_NER_Model_3Epochs_UNAUGMENTED

results:

- task:

name: Token Classification

type: token-classification

dataset:

name: article500v0_wikigold_split

type: article500v0_wikigold_split

args: default

metrics:

- name: Precision

type: precision

value: 0.6387981711299804

- name: Recall

type: recall

value: 0.7249814677538917

- name: F1

type: f1

value: 0.6791666666666667

- name: Accuracy

type: accuracy

value: 0.9364674441205053

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# Article_500v0_NER_Model_3Epochs_UNAUGMENTED

This model is a fine-tuned version of [bert-base-cased](https://huggingface.co/bert-base-cased) on the article500v0_wikigold_split dataset.

It achieves the following results on the evaluation set:

- Loss: 0.1853

- Precision: 0.6388

- Recall: 0.7250

- F1: 0.6792

- Accuracy: 0.9365

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3

### Training results

| Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:|

| No log | 1.0 | 59 | 0.2886 | 0.4480 | 0.6179 | 0.5194 | 0.9012 |

| No log | 2.0 | 118 | 0.1912 | 0.6132 | 0.6946 | 0.6514 | 0.9327 |

| No log | 3.0 | 177 | 0.1853 | 0.6388 | 0.7250 | 0.6792 | 0.9365 |

### Framework versions

- Transformers 4.17.0

- Pytorch 1.11.0+cu113

- Datasets 2.4.0

- Tokenizers 0.11.6

|

BigSalmon/InformalToFormalLincoln61Paraphrase

|

BigSalmon

| 2022-08-11T23:21:29Z | 161 | 0 |

transformers

|

[

"transformers",

"pytorch",

"gpt2",

"text-generation",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-08-05T22:11:12Z |

```

from transformers import AutoTokenizer, AutoModelForCausalLM

tokenizer = AutoTokenizer.from_pretrained("BigSalmon/InformalToFormalLincoln61Paraphrase")

model = AutoModelForCausalLM.from_pretrained("BigSalmon/InformalToFormalLincoln61Paraphrase")

```

```

Demo:

https://huggingface.co/spaces/BigSalmon/FormalInformalConciseWordy

```

```

prompt = """informal english: corn fields are all across illinois, visible once you leave chicago.\nTranslated into the Style of Abraham Lincoln:"""

input_ids = tokenizer.encode(prompt, return_tensors='pt')

outputs = model.generate(input_ids=input_ids,

max_length=10 + len(prompt),

temperature=1.0,

top_k=50,

top_p=0.95,

do_sample=True,

num_return_sequences=5,

early_stopping=True)

for i in range(5):

print(tokenizer.decode(outputs[i]))

```

```

How To Make Prompt:

informal english: i am very ready to do that just that.

Translated into the Style of Abraham Lincoln: you can assure yourself of my readiness to work toward this end.

Translated into the Style of Abraham Lincoln: please be assured that i am most ready to undertake this laborious task.

***

informal english: space is huge and needs to be explored.

Translated into the Style of Abraham Lincoln: space awaits traversal, a new world whose boundaries are endless.

Translated into the Style of Abraham Lincoln: space is a ( limitless / boundless ) expanse, a vast virgin domain awaiting exploration.

***

informal english: corn fields are all across illinois, visible once you leave chicago.

Translated into the Style of Abraham Lincoln: corn fields ( permeate illinois / span the state of illinois / ( occupy / persist in ) all corners of illinois / line the horizon of illinois / envelop the landscape of illinois ), manifesting themselves visibly as one ventures beyond chicago.

informal english:

```

```

infill: chrome extensions [MASK] accomplish everyday tasks.

Translated into the Style of Abraham Lincoln: chrome extensions ( expedite the ability to / unlock the means to more readily ) accomplish everyday tasks.

infill: at a time when nintendo has become inflexible, [MASK] consoles that are tethered to a fixed iteration, sega diligently curates its legacy of classic video games on handheld devices.

Translated into the Style of Abraham Lincoln: at a time when nintendo has become inflexible, ( stubbornly [MASK] on / firmly set on / unyielding in its insistence on ) consoles that are tethered to a fixed iteration, sega diligently curates its legacy of classic video games on handheld devices.

infill:

```

```

Essay Intro (Warriors vs. Rockets in Game 7):

text: eagerly anticipated by fans, game 7's are the highlight of the post-season.

text: ever-building in suspense, game 7's have the crowd captivated.

***

Essay Intro (South Korean TV Is Becoming Popular):

text: maturing into a bona fide paragon of programming, south korean television ( has much to offer / entertains without fail / never disappoints ).

text: increasingly held in critical esteem, south korean television continues to impress.

text: at the forefront of quality content, south korea is quickly achieving celebrity status.

***

Essay Intro (

```

```

Search: What is the definition of Checks and Balances?

https://en.wikipedia.org/wiki/Checks_and_balances

Checks and Balances is the idea of having a system where each and every action in government should be subject to one or more checks that would not allow one branch or the other to overly dominate.

https://www.harvard.edu/glossary/Checks_and_Balances

Checks and Balances is a system that allows each branch of government to limit the powers of the other branches in order to prevent abuse of power

https://www.law.cornell.edu/library/constitution/Checks_and_Balances

Checks and Balances is a system of separation through which branches of government can control the other, thus preventing excess power.

***

Search: What is the definition of Separation of Powers?

https://en.wikipedia.org/wiki/Separation_of_powers

The separation of powers is a principle in government, whereby governmental powers are separated into different branches, each with their own set of powers, that are prevent one branch from aggregating too much power.

https://www.yale.edu/tcf/Separation_of_Powers.html

Separation of Powers is the division of governmental functions between the executive, legislative and judicial branches, clearly demarcating each branch's authority, in the interest of ensuring that individual liberty or security is not undermined.

***

Search: What is the definition of Connection of Powers?

https://en.wikipedia.org/wiki/Connection_of_powers

Connection of Powers is a feature of some parliamentary forms of government where different branches of government are intermingled, typically the executive and legislative branches.

https://simple.wikipedia.org/wiki/Connection_of_powers

The term Connection of Powers describes a system of government in which there is overlap between different parts of the government.

***

Search: What is the definition of

```

```

Search: What are phrase synonyms for "second-guess"?

https://www.powerthesaurus.org/second-guess/synonyms

Shortest to Longest:

- feel dubious about

- raise an eyebrow at

- wrinkle their noses at

- cast a jaundiced eye at

- teeter on the fence about

***

Search: What are phrase synonyms for "mean to newbies"?

https://www.powerthesaurus.org/mean_to_newbies/synonyms

Shortest to Longest:

- readiness to balk at rookies

- absence of tolerance for novices

- hostile attitude toward newcomers

***

Search: What are phrase synonyms for "make use of"?

https://www.powerthesaurus.org/make_use_of/synonyms

Shortest to Longest:

- call upon

- glean value from

- reap benefits from

- derive utility from

- seize on the merits of

- draw on the strength of

- tap into the potential of

***

Search: What are phrase synonyms for "hurting itself"?

https://www.powerthesaurus.org/hurting_itself/synonyms

Shortest to Longest:

- erring

- slighting itself

- forfeiting its integrity

- doing itself a disservice

- evincing a lack of backbone

***

Search: What are phrase synonyms for "

```

```

- nebraska

- unicamerical legislature

- different from federal house and senate

text: featuring a unicameral legislature, nebraska's political system stands in stark contrast to the federal model, comprised of a house and senate.

***

- penny has practically no value

- should be taken out of circulation

- just as other coins have been in us history

- lost use

- value not enough

- to make environmental consequences worthy

text: all but valueless, the penny should be retired. as with other coins in american history, it has become defunct. too minute to warrant the environmental consequences of its production, it has outlived its usefulness.

***

-

```

```

original: sports teams are profitable for owners. [MASK], their valuations experience a dramatic uptick.

infill: sports teams are profitable for owners. ( accumulating vast sums / stockpiling treasure / realizing benefits / cashing in / registering robust financials / scoring on balance sheets ), their valuations experience a dramatic uptick.

***

original:

```

```

wordy: classical music is becoming less popular more and more.

Translate into Concise Text: interest in classic music is fading.

***

wordy:

```

```

sweet: savvy voters ousted him.

longer: voters who were informed delivered his defeat.

***

sweet:

```

```

1: commercial space company spacex plans to launch a whopping 52 flights in 2022.

2: spacex, a commercial space company, intends to undertake a total of 52 flights in 2022.

3: in 2022, commercial space company spacex has its sights set on undertaking 52 flights.

4: 52 flights are in the pipeline for 2022, according to spacex, a commercial space company.

5: a commercial space company, spacex aims to conduct 52 flights in 2022.

***

1:

```

Keywords to sentences or sentence.

```

ngos are characterized by:

□ voluntary citizens' group that is organized on a local, national or international level

□ encourage political participation

□ often serve humanitarian functions

□ work for social, economic, or environmental change

***

what are the drawbacks of living near an airbnb?

□ noise

□ parking

□ traffic

□ security

□ strangers

***

```

```

original: musicals generally use spoken dialogue as well as songs to convey the story. operas are usually fully sung.

adapted: musicals generally use spoken dialogue as well as songs to convey the story. ( in a stark departure / on the other hand / in contrast / by comparison / at odds with this practice / far from being alike / in defiance of this standard / running counter to this convention ), operas are usually fully sung.

***

original: akoya and tahitian are types of pearls. akoya pearls are mostly white, and tahitian pearls are naturally dark.

adapted: akoya and tahitian are types of pearls. ( a far cry from being indistinguishable / easily distinguished / on closer inspection / setting them apart / not to be mistaken for one another / hardly an instance of mere synonymy / differentiating the two ), akoya pearls are mostly white, and tahitian pearls are naturally dark.

***

original:

```

```

original: had trouble deciding.

translated into journalism speak: wrestled with the question, agonized over the matter, furrowed their brows in contemplation.

***

original:

```

```

input: not loyal

1800s english: ( two-faced / inimical / perfidious / duplicitous / mendacious / double-dealing / shifty ).

***

input:

```

```

first: ( was complicit in / was involved in ).

antonym: ( was blameless / was not an accomplice to / had no hand in / was uninvolved in ).

***

first: ( have no qualms about / see no issue with ).

antonym: ( are deeply troubled by / harbor grave reservations about / have a visceral aversion to / take ( umbrage at / exception to ) / are wary of ).

***

first: ( do not see eye to eye / disagree often ).

antonym: ( are in sync / are united / have excellent rapport / are like-minded / are in step / are of one mind / are in lockstep / operate in perfect harmony / march in lockstep ).

***

first:

```

```

stiff with competition, law school {A} is the launching pad for countless careers, {B} is a crowded field, {C} ranks among the most sought-after professional degrees, {D} is a professional proving ground.

***

languishing in viewership, saturday night live {A} is due for a creative renaissance, {B} is no longer a ratings juggernaut, {C} has been eclipsed by its imitators, {C} can still find its mojo.

***

dubbed the "manhattan of the south," atlanta {A} is a bustling metropolis, {B} is known for its vibrant downtown, {C} is a city of rich history, {D} is the pride of georgia.

***

embattled by scandal, harvard {A} is feeling the heat, {B} cannot escape the media glare, {C} is facing its most intense scrutiny yet, {D} is in the spotlight for all the wrong reasons.

```

Infill / Infilling / Masking / Phrase Masking

```

his contention [blank] by the evidence [sep] was refuted [answer]

***

few sights are as [blank] new york city as the colorful, flashing signage of its bodegas [sep] synonymous with [answer]

***

when rick won the lottery, all of his distant relatives [blank] his winnings [sep] clamored for [answer]

***

the library’s quiet atmosphere encourages visitors to [blank] in their work [sep] immerse themselves [answer]

***

```

|

BigSalmon/InformalToFormalLincoln63Paraphrase

|

BigSalmon

| 2022-08-11T23:20:43Z | 161 | 1 |

transformers

|

[

"transformers",

"pytorch",

"gpt2",

"text-generation",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-08-08T00:20:41Z |

```

from transformers import AutoTokenizer, AutoModelForCausalLM

tokenizer = AutoTokenizer.from_pretrained("BigSalmon/InformalToFormalLincoln63Paraphrase")

model = AutoModelForCausalLM.from_pretrained("BigSalmon/InformalToFormalLincoln63Paraphrase")

```

```

Demo:

https://huggingface.co/spaces/BigSalmon/FormalInformalConciseWordy

```

```

prompt = """informal english: corn fields are all across illinois, visible once you leave chicago.\nTranslated into the Style of Abraham Lincoln:"""

input_ids = tokenizer.encode(prompt, return_tensors='pt')

outputs = model.generate(input_ids=input_ids,

max_length=10 + len(prompt),

temperature=1.0,

top_k=50,

top_p=0.95,

do_sample=True,

num_return_sequences=5,

early_stopping=True)

for i in range(5):

print(tokenizer.decode(outputs[i]))

```

Most likely outputs:

```

prompt = """informal english: corn fields are all across illinois, visible once you leave chicago.\nTranslated into the Style of Abraham Lincoln:"""

text = tokenizer.encode(prompt)

myinput, past_key_values = torch.tensor([text]), None

myinput = myinput

myinput= myinput.to(device)

logits, past_key_values = model(myinput, past_key_values = past_key_values, return_dict=False)

logits = logits[0,-1]

probabilities = torch.nn.functional.softmax(logits)

best_logits, best_indices = logits.topk(250)

best_words = [tokenizer.decode([idx.item()]) for idx in best_indices]

text.append(best_indices[0].item())

best_probabilities = probabilities[best_indices].tolist()

words = []

print(best_words)

```

```

How To Make Prompt:

informal english: i am very ready to do that just that.

Translated into the Style of Abraham Lincoln: you can assure yourself of my readiness to work toward this end.

Translated into the Style of Abraham Lincoln: please be assured that i am most ready to undertake this laborious task.

***

informal english: space is huge and needs to be explored.

Translated into the Style of Abraham Lincoln: space awaits traversal, a new world whose boundaries are endless.

Translated into the Style of Abraham Lincoln: space is a ( limitless / boundless ) expanse, a vast virgin domain awaiting exploration.

***

informal english: corn fields are all across illinois, visible once you leave chicago.

Translated into the Style of Abraham Lincoln: corn fields ( permeate illinois / span the state of illinois / ( occupy / persist in ) all corners of illinois / line the horizon of illinois / envelop the landscape of illinois ), manifesting themselves visibly as one ventures beyond chicago.

informal english:

```

```

infill: chrome extensions [MASK] accomplish everyday tasks.

Translated into the Style of Abraham Lincoln: chrome extensions ( expedite the ability to / unlock the means to more readily ) accomplish everyday tasks.

infill: at a time when nintendo has become inflexible, [MASK] consoles that are tethered to a fixed iteration, sega diligently curates its legacy of classic video games on handheld devices.

Translated into the Style of Abraham Lincoln: at a time when nintendo has become inflexible, ( stubbornly [MASK] on / firmly set on / unyielding in its insistence on ) consoles that are tethered to a fixed iteration, sega diligently curates its legacy of classic video games on handheld devices.

infill:

```

```

Essay Intro (Warriors vs. Rockets in Game 7):

text: eagerly anticipated by fans, game 7's are the highlight of the post-season.

text: ever-building in suspense, game 7's have the crowd captivated.

***

Essay Intro (South Korean TV Is Becoming Popular):

text: maturing into a bona fide paragon of programming, south korean television ( has much to offer / entertains without fail / never disappoints ).

text: increasingly held in critical esteem, south korean television continues to impress.

text: at the forefront of quality content, south korea is quickly achieving celebrity status.

***

Essay Intro (

```

```

Search: What is the definition of Checks and Balances?

https://en.wikipedia.org/wiki/Checks_and_balances