| modelId (string, length 5–139) | author (string, length 2–42) | last_modified (timestamp[us, tz=UTC], 2020-02-15 11:33:14 to 2025-09-10 00:38:21) | downloads (int64, 0–223M) | likes (int64, 0–11.7k) | library_name (string, 551 classes) | tags (list, length 1–4.05k) | pipeline_tag (string, 55 classes) | createdAt (timestamp[us, tz=UTC], 2022-03-02 23:29:04 to 2025-09-10 00:38:17) | card (string, length 11–1.01M) |

|---|---|---|---|---|---|---|---|---|---|

waboucay/camembert-large-finetuned-xnli_fr_3_classes-finetuned-rua_wl_3_classes

|

waboucay

| 2022-06-20T09:34:17Z | 6 | 0 |

transformers

|

[

"transformers",

"pytorch",

"camembert",

"text-classification",

"nli",

"fr",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-06-20T09:23:44Z |

---

language:

- fr

tags:

- nli

metrics:

- f1

---

## Eval results

We obtain the following results on the `validation` and `test` sets:

| Set | F1<sub>micro</sub> | F1<sub>macro</sub> |

|------------|--------------------|--------------------|

| validation | 72.4 | 72.2 |

| test | 72.8 | 72.5 |
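
The table reports both micro- and macro-averaged F1. As a rough illustration of how the two averages differ, here is a minimal sketch in plain Python. The helper name `f1_scores` and the toy labels are made up for the example and are not taken from this model's evaluation data.

```python
from collections import Counter


def f1_scores(y_true, y_pred):
    """Return (micro_f1, macro_f1) for single-label multi-class predictions."""
    labels = sorted(set(y_true) | set(y_pred))
    tp, fp, fn = Counter(), Counter(), Counter()
    for t, p in zip(y_true, y_pred):
        if t == p:
            tp[t] += 1
        else:
            fp[p] += 1  # predicted class gets a false positive
            fn[t] += 1  # true class gets a false negative

    def f1(tp_, fp_, fn_):
        denom = 2 * tp_ + fp_ + fn_
        return 2 * tp_ / denom if denom else 0.0

    # Micro: pool the counts over all classes, then compute one F1.
    micro = f1(sum(tp.values()), sum(fp.values()), sum(fn.values()))
    # Macro: compute F1 per class, then take the unweighted mean.
    macro = sum(f1(tp[l], fp[l], fn[l]) for l in labels) / len(labels)
    return micro, macro


# Toy, made-up labels for a 3-class NLI-style task (illustration only).
y_true = ["entailment", "neutral", "contradiction", "neutral", "entailment", "neutral"]
y_pred = ["entailment", "neutral", "neutral", "neutral", "contradiction", "neutral"]
micro_f1, macro_f1 = f1_scores(y_true, y_pred)
print(f"micro={micro_f1:.4f} macro={macro_f1:.4f}")
```

For single-label classification, micro F1 equals accuracy, while macro F1 weights every class equally regardless of its frequency.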

|

qgrantq/bert-finetuned-squad

|

qgrantq

| 2022-06-20T08:03:46Z | 3 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"bert",

"question-answering",

"generated_from_trainer",

"dataset:squad",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] |

question-answering

| 2022-06-20T05:30:05Z |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- squad

model-index:

- name: bert-finetuned-squad

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# bert-finetuned-squad

This model is a fine-tuned version of [bert-base-cased](https://huggingface.co/bert-base-cased) on the squad dataset.

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3

- mixed_precision_training: Native AMP

### Training results

### Framework versions

- Transformers 4.20.0

- Pytorch 1.11.0+cu113

- Datasets 2.3.2

- Tokenizers 0.12.1

|

jacobbieker/dgmr

|

jacobbieker

| 2022-06-20T07:43:41Z | 4 | 1 |

transformers

|

[

"transformers",

"pytorch",

"nowcasting",

"forecasting",

"timeseries",

"remote-sensing",

"gan",

"license:mit",

"endpoints_compatible",

"region:us"

] | null | 2022-06-20T07:44:17Z |

---

license: mit

tags:

- nowcasting

- forecasting

- timeseries

- remote-sensing

- gan

---

# DGMR

## Model description

[More information needed]

## Intended uses & limitations

[More information needed]

## How to use

[More information needed]

## Limitations and bias

[More information needed]

## Training data

[More information needed]

## Training procedure

[More information needed]

## Evaluation results

[More information needed]

|

Hausax/albert-xxlarge-v2-finetuned-Poems

|

Hausax

| 2022-06-20T07:19:43Z | 6 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"albert",

"fill-mask",

"generated_from_trainer",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

fill-mask

| 2022-06-19T10:02:39Z |

---

license: apache-2.0

tags:

- generated_from_trainer

model-index:

- name: albert-xxlarge-v2-finetuned-Poems

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# albert-xxlarge-v2-finetuned-Poems

This model is a fine-tuned version of [albert-xxlarge-v2](https://huggingface.co/albert-xxlarge-v2) on an unknown dataset.

It achieves the following results on the evaluation set:

- Loss: 2.1923

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-07

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 5

- mixed_precision_training: Native AMP

### Training results

| Training Loss | Epoch | Step | Validation Loss |

|:-------------:|:-----:|:-----:|:---------------:|

| 2.482 | 1.0 | 19375 | 2.2959 |

| 2.258 | 2.0 | 38750 | 2.2357 |

| 2.2146 | 3.0 | 58125 | 2.2085 |

| 2.1975 | 4.0 | 77500 | 2.1929 |

| 2.1893 | 5.0 | 96875 | 2.1863 |
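
To make `lr_scheduler_type: linear` concrete, the sketch below computes the learning rate at each epoch boundary, using the `learning_rate` and step counts listed above. It assumes zero warmup steps and decay to zero at the final step; the card does not state a warmup value, so that part is an assumption. The function name `linear_lr` is hypothetical.

```python
def linear_lr(step, base_lr=2e-07, total_steps=96875, warmup_steps=0):
    """Linear warmup (if any) followed by linear decay to zero.

    warmup_steps=0 is an assumption; the card does not list a warmup value.
    """
    if step < warmup_steps:
        return base_lr * step / max(1, warmup_steps)
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


# 19375 optimizer steps per epoch, as shown in the training results table.
lr_by_epoch = {epoch: linear_lr(epoch * 19375) for epoch in range(6)}
for epoch, lr in lr_by_epoch.items():
    print(epoch, lr)
```

Under these assumptions the learning rate drops by one fifth of its initial value per epoch and reaches zero at step 96875.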

### Framework versions

- Transformers 4.20.0

- Pytorch 1.11.0+cu113

- Datasets 2.3.2

- Tokenizers 0.12.1

|

KM4STfulltext/CSSCI_ABS_roberta_wwm

|

KM4STfulltext

| 2022-06-20T07:06:48Z | 4 | 0 |

transformers

|

[

"transformers",

"pytorch",

"bert",

"fill-mask",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

fill-mask

| 2022-06-15T15:33:54Z |

---

license: apache-2.0

---

# Pre-trained Language Model for the Humanities and Social Sciences in Chinese

## Introduction

Research on Chinese social science texts needs the support of natural language processing tools.

Pre-trained language models have greatly improved the accuracy of text mining on general texts. At present, there is an urgent need for a pre-trained language model designed specifically for the automatic processing of Chinese social science texts.

We used abstracts of social science research articles as the training set. Based on the BERT deep language model framework, we built the CSSCI_ABS_BERT, CSSCI_ABS_roberta and CSSCI_ABS_roberta-wwm pre-trained language models with [transformers/run_mlm.py](https://github.com/huggingface/transformers/blob/main/examples/pytorch/language-modeling/run_mlm.py) and [transformers/mlm_wwm](https://github.com/huggingface/transformers/tree/main/examples/research_projects/mlm_wwm).

We designed four downstream text classification tasks on different corpora of Chinese social science articles to verify the performance of the models.

- CSSCI_ABS_BERT, CSSCI_ABS_roberta and CSSCI_ABS_roberta-wwm are trained on the abstracts of articles published in CSSCI journals. The training set used in the experiment contains a total of `510,956,094 words`.

- Following the idea of domain-adaptive pretraining, `CSSCI_ABS_BERT` and `CSSCI_ABS_roberta` continue training the BERT and Chinese-RoBERTa models, respectively, on a large number of abstracts of Chinese scientific articles, yielding pre-trained models for the automatic processing of Chinese social science research texts.

## News

- 2022-06-15: CSSCI_ABS_BERT, CSSCI_ABS_roberta and CSSCI_ABS_roberta-wwm were released for the first time.

## How to use

### Huggingface Transformers

The `from_pretrained` method of [Huggingface Transformers](https://github.com/huggingface/transformers) can download the CSSCI_ABS_BERT, CSSCI_ABS_roberta and CSSCI_ABS_roberta-wwm models directly.

- CSSCI_ABS_BERT

```python

from transformers import AutoTokenizer, AutoModel

tokenizer = AutoTokenizer.from_pretrained("KM4STfulltext/CSSCI_ABS_BERT")

model = AutoModel.from_pretrained("KM4STfulltext/CSSCI_ABS_BERT")

```

- CSSCI_ABS_roberta

```python

from transformers import AutoTokenizer, AutoModel

tokenizer = AutoTokenizer.from_pretrained("KM4STfulltext/CSSCI_ABS_roberta")

model = AutoModel.from_pretrained("KM4STfulltext/CSSCI_ABS_roberta")

```

- CSSCI_ABS_roberta-wwm

```python

from transformers import AutoTokenizer, AutoModel

tokenizer = AutoTokenizer.from_pretrained("KM4STfulltext/CSSCI_ABS_roberta_wwm")

model = AutoModel.from_pretrained("KM4STfulltext/CSSCI_ABS_roberta_wwm")

```

### Download Models

- The version of the model we provide is `PyTorch`.

### From Huggingface

- Download directly through Huggingface's official website.

- [KM4STfulltext/CSSCI_ABS_BERT](https://huggingface.co/KM4STfulltext/CSSCI_ABS_BERT)

- [KM4STfulltext/CSSCI_ABS_roberta](https://huggingface.co/KM4STfulltext/CSSCI_ABS_roberta)

- [KM4STfulltext/CSSCI_ABS_roberta_wwm](https://huggingface.co/KM4STfulltext/CSSCI_ABS_roberta_wwm)

## Evaluation & Results

- We use CSSCI_ABS_BERT, CSSCI_ABS_roberta and CSSCI_ABS_roberta-wwm to perform text classification on different social science research corpora. The experimental results are as follows.

#### Discipline classification experiments of articles published in CSSCI journals

https://github.com/S-T-Full-Text-Knowledge-Mining/CSSCI-BERT

#### Movement recognition experiments for data analysis and knowledge discovery abstract

| Tag | bert-base-Chinese | chinese-roberta-wwm-ext | CSSCI_ABS_BERT | CSSCI_ABS_roberta | CSSCI_ABS_roberta_wwm | support |

| ------------ | ----------------- | ----------------------- | -------------- | ----------------- | --------------------- | ------- |

| Abstract | 55.23 | 62.44 | 56.8 | 57.96 | 58.26 | 223 |

| Location | 61.61 | 54.38 | 61.83 | 61.4 | 61.94 | 2866 |

| Metric | 45.08 | 41 | 45.27 | 46.74 | 47.13 | 622 |

| Organization | 46.85 | 35.29 | 45.72 | 45.44 | 44.65 | 327 |

| Person | 88.66 | 82.79 | 88.21 | 88.29 | 88.51 | 4850 |

| Thing | 71.68 | 65.34 | 71.88 | 71.68 | 71.81 | 5993 |

| Time | 65.35 | 60.38 | 64.15 | 65.26 | 66.03 | 1272 |

| avg | 72.69 | 66.62 | 72.59 | 72.61 | 72.89 | 16153 |

#### Chinese literary entity recognition

| Tag | bert-base-Chinese | chinese-roberta-wwm-ext | CSSCI_ABS_BERT | CSSCI_ABS_roberta | CSSCI_ABS_roberta_wwm | support |

| ------------ | ----------------- | ----------------------- | -------------- | ----------------- | --------------------- | ------- |

| Abstract | 55.23 | 62.44 | 56.8 | 57.96 | 58.26 | 223 |

| Location | 61.61 | 54.38 | 61.83 | 61.4 | 61.94 | 2866 |

| Metric | 45.08 | 41 | 45.27 | 46.74 | 47.13 | 622 |

| Organization | 46.85 | 35.29 | 45.72 | 45.44 | 44.65 | 327 |

| Person | 88.66 | 82.79 | 88.21 | 88.29 | 88.51 | 4850 |

| Thing | 71.68 | 65.34 | 71.88 | 71.68 | 71.81 | 5993 |

| Time | 65.35 | 60.38 | 64.15 | 65.26 | 66.03 | 1272 |

| avg | 72.69 | 66.62 | 72.59 | 72.61 | 72.89 | 16153 |

## Cited

- If our content is helpful for your research work, please cite our research in your article.

- Until our paper is published, you can cite this URL instead: [S-T-Full-Text-Knowledge-Mining/CSSCI-BERT (github.com)](https://github.com/S-T-Full-Text-Knowledge-Mining/CSSCI-BERT).

## Disclaimer

- The experimental results presented in the report only show the performance under a specific dataset and hyperparameter combination, and do not characterize each model in general. The results may change with the random seed and the computing hardware.

- **Users can use the model arbitrarily within the scope of the license, but we are not responsible for the direct or indirect losses caused by using the content of the project.**

## Acknowledgment

- CSSCI_ABS_BERT was trained based on [BERT-Base-Chinese](https://github.com/google-research/bert).

- CSSCI_ABS_roberta and CSSCI_ABS_roberta-wwm were trained based on [RoBERTa-wwm-ext, Chinese](https://github.com/ymcui/Chinese-BERT-wwm).

|

anas-awadalla/prompt-tuned-t5-small-num-tokens-100-squad

|

anas-awadalla

| 2022-06-20T04:47:43Z | 6 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"generated_from_trainer",

"dataset:squad",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] | null | 2022-06-20T00:50:38Z |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- squad

model-index:

- name: prompt-tuned-t5-small-num-tokens-100-squad

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# prompt-tuned-t5-small-num-tokens-100-squad

This model is a fine-tuned version of [google/t5-small-lm-adapt](https://huggingface.co/google/t5-small-lm-adapt) on the squad dataset.

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.3

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- distributed_type: multi-GPU

- num_devices: 2

- total_train_batch_size: 32

- total_eval_batch_size: 32

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- training_steps: 30000
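
The total batch sizes listed above follow from the per-device sizes and the number of devices. A minimal sketch of that arithmetic, assuming no gradient accumulation (the card lists none); the function name `total_batch_size` is made up for the example:

```python
def total_batch_size(per_device_batch_size: int, num_devices: int,
                     gradient_accumulation_steps: int = 1) -> int:
    """Effective batch size across devices.

    gradient_accumulation_steps=1 is an assumption; the card does not list it.
    """
    return per_device_batch_size * num_devices * gradient_accumulation_steps


# Values from the hyperparameter list: batch size 16 per device, 2 devices.
total_train = total_batch_size(16, 2)
total_eval = total_batch_size(16, 2)
print(total_train, total_eval)
```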

### Training results

### Framework versions

- Transformers 4.20.0.dev0

- Pytorch 1.11.0+cu113

- Datasets 2.0.0

- Tokenizers 0.11.6

|

huggingtweets/bartoszmilewski

|

huggingtweets

| 2022-06-20T02:35:22Z | 3 | 0 |

transformers

|

[

"transformers",

"pytorch",

"gpt2",

"text-generation",

"huggingtweets",

"en",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-06-20T02:33:39Z |

---

language: en

thumbnail: http://www.huggingtweets.com/bartoszmilewski/1655692518288/predictions.png

tags:

- huggingtweets

widget:

- text: "My dream is"

---

<div class="inline-flex flex-col" style="line-height: 1.5;">

<div class="flex">

<div

style="display:inherit; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('https://pbs.twimg.com/profile_images/1000136690/IslandBartosz_400x400.JPG')">

</div>

<div

style="display:none; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('')">

</div>

<div

style="display:none; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('')">

</div>

</div>

<div style="text-align: center; margin-top: 3px; font-size: 16px; font-weight: 800">🤖 AI BOT 🤖</div>

<div style="text-align: center; font-size: 16px; font-weight: 800">Bartosz Milewski</div>

<div style="text-align: center; font-size: 14px;">@bartoszmilewski</div>

</div>

I was made with [huggingtweets](https://github.com/borisdayma/huggingtweets).

Create your own bot based on your favorite user with [the demo](https://colab.research.google.com/github/borisdayma/huggingtweets/blob/master/huggingtweets-demo.ipynb)!

## How does it work?

The model uses the following pipeline.

To understand how the model was developed, check the [W&B report](https://wandb.ai/wandb/huggingtweets/reports/HuggingTweets-Train-a-Model-to-Generate-Tweets--VmlldzoxMTY5MjI).

## Training data

The model was trained on tweets from Bartosz Milewski.

| Data | Bartosz Milewski |

| --- | --- |

| Tweets downloaded | 3248 |

| Retweets | 79 |

| Short tweets | 778 |

| Tweets kept | 2391 |
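
The counts in the table are consistent with a simple filter in which retweets and short tweets are dropped from the downloaded set. The card does not spell the rule out, so treat the following as a sanity check under that assumption rather than a description of the actual preprocessing code:

```python
# Counts copied from the table above.
tweets_downloaded = 3248
retweets = 79
short_tweets = 778

# Assumed rule: keep everything that is neither a retweet nor a short tweet.
tweets_kept = tweets_downloaded - retweets - short_tweets
print(tweets_kept)
```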

[Explore the data](https://wandb.ai/wandb/huggingtweets/runs/2689vaqz/artifacts), which is tracked with [W&B artifacts](https://docs.wandb.com/artifacts) at every step of the pipeline.

## Training procedure

The model is based on a pre-trained [GPT-2](https://huggingface.co/gpt2) which is fine-tuned on @bartoszmilewski's tweets.

Hyperparameters and metrics are recorded in the [W&B training run](https://wandb.ai/wandb/huggingtweets/runs/1f1jpc3z) for full transparency and reproducibility.

At the end of training, [the final model](https://wandb.ai/wandb/huggingtweets/runs/1f1jpc3z/artifacts) is logged and versioned.

## How to use

You can use this model directly with a pipeline for text generation:

```python

from transformers import pipeline

generator = pipeline('text-generation',

model='huggingtweets/bartoszmilewski')

generator("My dream is", num_return_sequences=5)

```

## Limitations and bias

The model suffers from [the same limitations and bias as GPT-2](https://huggingface.co/gpt2#limitations-and-bias).

In addition, the data present in the user's tweets further affects the text generated by the model.

## About

*Built by Boris Dayma*

[Follow @borisdayma on Twitter](https://twitter.com/intent/follow?screen_name=borisdayma)

For more details, visit the project repository.

[borisdayma/huggingtweets on GitHub](https://github.com/borisdayma/huggingtweets)

|

ali2066/DistilBERTFINAL_ctxSentence_TRAIN_all_TEST_french_second_train_set_french_False

|

ali2066

| 2022-06-20T01:54:34Z | 3 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"roberta",

"text-classification",

"generated_from_trainer",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-05-02T14:07:53Z |

---

tags:

- generated_from_trainer

metrics:

- precision

- recall

- f1

- accuracy

model-index:

- name: _ctxSentence_TRAIN_all_TEST_french_second_train_set_french_False

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# _ctxSentence_TRAIN_all_TEST_french_second_train_set_french_False

This model is a fine-tuned version of [cardiffnlp/twitter-roberta-base](https://huggingface.co/cardiffnlp/twitter-roberta-base) on an unknown dataset.

It achieves the following results on the evaluation set:

- Loss: 0.4936

- Precision: 0.8189

- Recall: 0.9811

- F1: 0.8927

- Accuracy: 0.8120

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 1e-05

- train_batch_size: 32

- eval_batch_size: 32

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 5

### Training results

| Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:|

| No log | 1.0 | 13 | 0.5150 | 0.7447 | 1.0 | 0.8537 | 0.7447 |

| No log | 2.0 | 26 | 0.5565 | 0.7447 | 1.0 | 0.8537 | 0.7447 |

| No log | 3.0 | 39 | 0.5438 | 0.7778 | 1.0 | 0.8750 | 0.7872 |

| No log | 4.0 | 52 | 0.5495 | 0.7778 | 1.0 | 0.8750 | 0.7872 |

| No log | 5.0 | 65 | 0.5936 | 0.7778 | 1.0 | 0.8750 | 0.7872 |
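
The F1 column can be cross-checked against the precision and recall columns, since F1 is their harmonic mean. A small sketch using two (precision, recall) pairs copied from the table; the helper name `f1_from_precision_recall` is hypothetical:

```python
def f1_from_precision_recall(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall (0.0 if both are zero)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# (precision, recall) pairs from epochs 1-2 and epochs 3-5 of the table.
rows = [(0.7447, 1.0), (0.7778, 1.0)]
f1_values = [round(f1_from_precision_recall(p, r), 4) for p, r in rows]
print(f1_values)
```

In epochs 1 and 2, recall is 1.0 and precision equals accuracy (0.7447), which is what one would see if the model predicted the positive class for every evaluation example. That is an inference from the numbers, not something the card states.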

### Framework versions

- Transformers 4.15.0

- Pytorch 1.10.1+cu113

- Datasets 1.18.0

- Tokenizers 0.10.3

|

huggingtweets/borisjohnson-elonmusk-majornelson

|

huggingtweets

| 2022-06-19T22:42:51Z | 5 | 0 |

transformers

|

[

"transformers",

"pytorch",

"gpt2",

"text-generation",

"huggingtweets",

"en",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-06-19T22:42:06Z |

---

language: en

thumbnail: http://www.huggingtweets.com/borisjohnson-elonmusk-majornelson/1655678567047/predictions.png

tags:

- huggingtweets

widget:

- text: "My dream is"

---

<div class="inline-flex flex-col" style="line-height: 1.5;">

<div class="flex">

<div

style="display:inherit; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('https://pbs.twimg.com/profile_images/1529956155937759233/Nyn1HZWF_400x400.jpg')">

</div>

<div

style="display:inherit; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('https://pbs.twimg.com/profile_images/1519703427240013824/FOED2v9N_400x400.jpg')">

</div>

<div

style="display:inherit; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('https://pbs.twimg.com/profile_images/1500170386520129536/Rr2G6A-N_400x400.jpg')">

</div>

</div>

<div style="text-align: center; margin-top: 3px; font-size: 16px; font-weight: 800">🤖 AI CYBORG 🤖</div>

<div style="text-align: center; font-size: 16px; font-weight: 800">Elon Musk & Larry Hryb 🇺🇦 & Boris Johnson</div>

<div style="text-align: center; font-size: 14px;">@borisjohnson-elonmusk-majornelson</div>

</div>

I was made with [huggingtweets](https://github.com/borisdayma/huggingtweets).

Create your own bot based on your favorite user with [the demo](https://colab.research.google.com/github/borisdayma/huggingtweets/blob/master/huggingtweets-demo.ipynb)!

## How does it work?

The model uses the following pipeline.

To understand how the model was developed, check the [W&B report](https://wandb.ai/wandb/huggingtweets/reports/HuggingTweets-Train-a-Model-to-Generate-Tweets--VmlldzoxMTY5MjI).

## Training data

The model was trained on tweets from Elon Musk & Larry Hryb 🇺🇦 & Boris Johnson.

| Data | Elon Musk | Larry Hryb 🇺🇦 | Boris Johnson |

| --- | --- | --- | --- |

| Tweets downloaded | 3250 | 3250 | 3248 |

| Retweets | 147 | 736 | 653 |

| Short tweets | 985 | 86 | 17 |

| Tweets kept | 2118 | 2428 | 2578 |

[Explore the data](https://wandb.ai/wandb/huggingtweets/runs/22m356ew/artifacts), which is tracked with [W&B artifacts](https://docs.wandb.com/artifacts) at every step of the pipeline.

## Training procedure

The model is based on a pre-trained [GPT-2](https://huggingface.co/gpt2) which is fine-tuned on @borisjohnson-elonmusk-majornelson's tweets.

Hyperparameters and metrics are recorded in the [W&B training run](https://wandb.ai/wandb/huggingtweets/runs/316f3w9h) for full transparency and reproducibility.

At the end of training, [the final model](https://wandb.ai/wandb/huggingtweets/runs/316f3w9h/artifacts) is logged and versioned.

## How to use

You can use this model directly with a pipeline for text generation:

```python

from transformers import pipeline

generator = pipeline('text-generation',

model='huggingtweets/borisjohnson-elonmusk-majornelson')

generator("My dream is", num_return_sequences=5)

```

## Limitations and bias

The model suffers from [the same limitations and bias as GPT-2](https://huggingface.co/gpt2#limitations-and-bias).

In addition, the data present in the user's tweets further affects the text generated by the model.

## About

*Built by Boris Dayma*

[Follow @borisdayma on Twitter](https://twitter.com/intent/follow?screen_name=borisdayma)

For more details, visit the project repository.

[borisdayma/huggingtweets on GitHub](https://github.com/borisdayma/huggingtweets)

|

sevlabr/unit-1-PPO-LunarLander-v2

|

sevlabr

| 2022-06-19T21:52:28Z | 0 | 0 |

stable-baselines3

|

[

"stable-baselines3",

"LunarLander-v2",

"deep-reinforcement-learning",

"reinforcement-learning",

"model-index",

"region:us"

] |

reinforcement-learning

| 2022-06-19T21:51:58Z |

---

library_name: stable-baselines3

tags:

- LunarLander-v2

- deep-reinforcement-learning

- reinforcement-learning

- stable-baselines3

model-index:

- name: PPO

results:

- metrics:

- type: mean_reward

value: 222.00 +/- 55.66

name: mean_reward

task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: LunarLander-v2

type: LunarLander-v2

---

# **PPO** Agent playing **LunarLander-v2**

This is a trained model of a **PPO** agent playing **LunarLander-v2**

using the [stable-baselines3 library](https://github.com/DLR-RM/stable-baselines3).

## Usage (with Stable-baselines3)

TODO: Add your code

```python

from stable_baselines3 import ...

from huggingface_sb3 import load_from_hub

...

```

|

chradden/generation_xyz

|

chradden

| 2022-06-19T21:33:52Z | 54 | 1 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"vit",

"image-classification",

"huggingpics",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

image-classification

| 2022-06-19T21:33:37Z |

---

tags:

- image-classification

- pytorch

- huggingpics

metrics:

- accuracy

model-index:

- name: generation_xyz

results:

- task:

name: Image Classification

type: image-classification

metrics:

- name: Accuracy

type: accuracy

value: 0.5504587292671204

---

# generation_xyz

Autogenerated by HuggingPics🤗🖼️

Create your own image classifier for **anything** by running [the demo on Google Colab](https://colab.research.google.com/github/nateraw/huggingpics/blob/main/HuggingPics.ipynb).

Report any issues with the demo at the [github repo](https://github.com/nateraw/huggingpics).

## Example Images

#### Baby Boomers

#### Generation Alpha

#### Generation X

#### Generation Z

#### Millennials

|

voleg44/dqn-SpaceInvadersNoFrameskip-v4

|

voleg44

| 2022-06-19T20:06:30Z | 3 | 0 |

stable-baselines3

|

[

"stable-baselines3",

"SpaceInvadersNoFrameskip-v4",

"deep-reinforcement-learning",

"reinforcement-learning",

"model-index",

"region:us"

] |

reinforcement-learning

| 2022-06-19T20:05:54Z |

---

library_name: stable-baselines3

tags:

- SpaceInvadersNoFrameskip-v4

- deep-reinforcement-learning

- reinforcement-learning

- stable-baselines3

model-index:

- name: DQN

results:

- metrics:

- type: mean_reward

value: 434.50 +/- 143.59

name: mean_reward

task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: SpaceInvadersNoFrameskip-v4

type: SpaceInvadersNoFrameskip-v4

---

# **DQN** Agent playing **SpaceInvadersNoFrameskip-v4**

This is a trained model of a **DQN** agent playing **SpaceInvadersNoFrameskip-v4**

using the [stable-baselines3 library](https://github.com/DLR-RM/stable-baselines3)

and the [RL Zoo](https://github.com/DLR-RM/rl-baselines3-zoo).

The RL Zoo is a training framework for Stable Baselines3

reinforcement learning agents,

with hyperparameter optimization and pre-trained agents included.

## Usage (with SB3 RL Zoo)

RL Zoo: https://github.com/DLR-RM/rl-baselines3-zoo<br/>

SB3: https://github.com/DLR-RM/stable-baselines3<br/>

SB3 Contrib: https://github.com/Stable-Baselines-Team/stable-baselines3-contrib

```

# Download model and save it into the logs/ folder

python -m utils.load_from_hub --algo dqn --env SpaceInvadersNoFrameskip-v4 -orga voleg44 -f logs/

python enjoy.py --algo dqn --env SpaceInvadersNoFrameskip-v4 -f logs/

```

## Training (with the RL Zoo)

```

python train.py --algo dqn --env SpaceInvadersNoFrameskip-v4 -f logs/

# Upload the model and generate video (when possible)

python -m utils.push_to_hub --algo dqn --env SpaceInvadersNoFrameskip-v4 -f logs/ -orga voleg44

```

## Hyperparameters

```python

OrderedDict([('batch_size', 32),

('buffer_size', 100000),

('env_wrapper',

['stable_baselines3.common.atari_wrappers.AtariWrapper']),

('exploration_final_eps', 0.01),

('exploration_fraction', 0.1),

('frame_stack', 4),

('gradient_steps', 1),

('learning_rate', 0.0001),

('learning_starts', 100000),

('n_timesteps', 1000000.0),

('optimize_memory_usage', True),

('policy', 'CnnPolicy'),

('target_update_interval', 1000),

('train_freq', 4),

('normalize', False)])

```
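
The exploration settings above describe a linearly decaying epsilon for epsilon-greedy action selection. The sketch below reproduces that schedule in plain Python. The starting value of 1.0 is not listed in the card; it is assumed here because it is the Stable Baselines3 default for `exploration_initial_eps`. The function name `epsilon_at` is made up for the example.

```python
def epsilon_at(step, n_timesteps=1_000_000, exploration_fraction=0.1,
               initial_eps=1.0, final_eps=0.01):
    """Linearly anneal epsilon, then hold it at final_eps.

    initial_eps=1.0 is an assumption (library default, not listed in the card).
    """
    decay_steps = exploration_fraction * n_timesteps
    progress = min(step / decay_steps, 1.0)
    return initial_eps + progress * (final_eps - initial_eps)


eps_start = epsilon_at(0)
eps_mid = epsilon_at(50_000)
eps_end = epsilon_at(100_000)
eps_late = epsilon_at(500_000)
print(eps_start, eps_mid, eps_end, eps_late)
```

With the listed values, the decay finishes after 100,000 steps, which is also the value of `learning_starts`.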

|

anas-awadalla/prophetnet-large-squad

|

anas-awadalla

| 2022-06-19T19:16:54Z | 6 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"prophetnet",

"text2text-generation",

"generated_from_trainer",

"dataset:squad",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text2text-generation

| 2022-06-19T18:12:00Z |

---

tags:

- generated_from_trainer

datasets:

- squad

model-index:

- name: prophetnet-large-squad

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# prophetnet-large-squad

This model is a fine-tuned version of [microsoft/prophetnet-large-uncased](https://huggingface.co/microsoft/prophetnet-large-uncased) on the squad dataset.

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 3e-05

- train_batch_size: 128

- eval_batch_size: 8

- seed: 42

- distributed_type: multi-GPU

- num_devices: 2

- total_train_batch_size: 256

- total_eval_batch_size: 16

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 2.0

### Training results

### Framework versions

- Transformers 4.20.0.dev0

- Pytorch 1.11.0+cu113

- Datasets 2.0.0

- Tokenizers 0.11.6

|

martin-ha/text_encoder_in_dual

|

martin-ha

| 2022-06-19T19:11:19Z | 0 | 0 |

keras

|

[

"keras",

"tf-keras",

"region:us"

] | null | 2022-06-19T19:10:58Z |

---

library_name: keras

---

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training Metrics

Model history needed

## Model Plot

<details>

<summary>View Model Plot</summary>

</details>

|

martin-ha/vision_encoder_in_dual

|

martin-ha

| 2022-06-19T19:07:22Z | 0 | 0 |

keras

|

[

"keras",

"tf-keras",

"region:us"

] | null | 2022-06-19T19:06:52Z |

---

library_name: keras

---

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training Metrics

Model history needed

## Model Plot

<details>

<summary>View Model Plot</summary>

</details>

|

diversifix/diversiformer

|

diversifix

| 2022-06-19T16:44:04Z | 6 | 3 |

transformers

|

[

"transformers",

"tf",

"t5",

"text2text-generation",

"de",

"arxiv:2010.11934",

"license:gpl",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text2text-generation

| 2022-06-19T12:44:02Z |

---

language:

- de

license: gpl

widget:

- text: "Ersetze \"Lehrer\" durch \"Lehrerin oder Lehrer\": Ein promovierter Mathelehrer ist noch nie im Unterricht eingeschlafen."

example_title: "Example 1"

- text: "Ersetze \"Student\" durch \"studierende Person\": Maria ist kein Student."

example_title: "Example 2"

inference:

parameters:

max_length: 500

---

# Diversiformer 🤗 🏳️‍🌈 🇩🇪

_Work in progress._

Language model for inclusive language in German, fine-tuned on [mT5](https://arxiv.org/abs/2010.11934).

An experimental model version is released [on Huggingface](https://huggingface.co/diversifix/diversiformer).

Source code for fine-tuning is available [on GitHub](https://github.com/diversifix/diversiformer).

## Tasks

- **DETECT**: Recognize instances of the generic masculine and of other exclusive language. To do.

- **SUGGEST**: Suggest inclusive alternatives to masculine and exclusive words. To do.

- **REPLACE**: Replace one phrase with another while preserving grammatical coherence. Work in progress.

- ▶️ `Ersetze "Schüler" durch "Schülerin oder Schüler": Die Schüler kamen zu spät.`

◀️ `Die Schülerinnen und Schüler kamen zu spät.`

- ▶️ `Ersetze "Lehrer" durch "Kollegium": Die wartenden Lehrer wunderten sich.`

◀️ `Das wartende Kollegium wunderte sich.`

## Usage

```python

>>> from transformers import pipeline

>>> generator = pipeline("text2text-generation", model="diversifix/diversiformer")

>>> generator('Ersetze "Schüler" durch "Schülerin oder Schüler": Die Schüler kamen zu spät.', max_length=500)

```
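
The REPLACE prompts shown in this card all follow the pattern `Ersetze "<old>" durch "<new>": <sentence>`. A small helper can build such prompts before passing them to the generator. The function name `build_replace_prompt` is hypothetical and not part of the project:

```python
def build_replace_prompt(old: str, new: str, sentence: str) -> str:
    """Format a REPLACE prompt in the pattern used by the examples in this card."""
    return f'Ersetze "{old}" durch "{new}": {sentence}'


prompt = build_replace_prompt(
    "Schüler",
    "Schülerin oder Schüler",
    "Die Schüler kamen zu spät.",
)
print(prompt)
```

The resulting string can be passed as the first argument to the `generator` call shown in the usage example.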

## License

Diversiformer. Transformer model for inclusive language.

Copyright (C) 2022 [Diversifix e. V.](mailto:vorstand@diversifix.org)

This program is free software: you can redistribute it and/or modify

it under the terms of the GNU General Public License as published by

the Free Software Foundation, either version 3 of the License, or

(at your option) any later version.

This program is distributed in the hope that it will be useful,

but WITHOUT ANY WARRANTY; without even the implied warranty of

MERCHANTABILITY or FITNESS FOR A PARTICULAR PURPOSE. See the

GNU General Public License for more details.

You should have received a copy of the GNU General Public License

along with this program. If not, see <https://www.gnu.org/licenses/>.

|

anjankumar/mbart-large-50-finetuned-en-to-te

|

anjankumar

| 2022-06-19T16:32:07Z | 3 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"mbart",

"text2text-generation",

"generated_from_trainer",

"dataset:kde4",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text2text-generation

| 2022-06-07T07:02:05Z |

---

tags:

- generated_from_trainer

datasets:

- kde4

metrics:

- bleu

model-index:

- name: mbart-large-50-finetuned-en-to-te

results:

- task:

name: Sequence-to-sequence Language Modeling

type: text2text-generation

dataset:

name: kde4

type: kde4

args: en-te

metrics:

- name: Bleu

type: bleu

value: 0.7152

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# mbart-large-50-finetuned-en-to-te

This model is a fine-tuned version of [facebook/mbart-large-50](https://huggingface.co/facebook/mbart-large-50) on the kde4 dataset.

It achieves the following results on the evaluation set:

- Loss: 13.8521

- Bleu: 0.7152

- Gen Len: 20.5

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 1

- mixed_precision_training: Native AMP

### Training results

| Training Loss | Epoch | Step | Validation Loss | Bleu | Gen Len |

|:-------------:|:-----:|:----:|:---------------:|:------:|:-------:|

| No log | 1.0 | 7 | 13.8521 | 0.7152 | 20.5 |

### Framework versions

- Transformers 4.20.0

- Pytorch 1.11.0+cu113

- Datasets 2.3.2

- Tokenizers 0.12.1

|

thaidv96/lead-reliability-scoring

|

thaidv96

| 2022-06-19T16:15:46Z | 6 | 1 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"bert",

"text-classification",

"generated_from_trainer",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-06-19T15:44:25Z |

---

license: apache-2.0

tags:

- generated_from_trainer

metrics:

- f1

model-index:

- name: lead-reliability-scoring

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# lead-reliability-scoring

This model is a fine-tuned version of [bert-base-multilingual-cased](https://huggingface.co/bert-base-multilingual-cased) on an unspecified dataset.

It achieves the following results on the evaluation set:

- Loss: 0.0123

- F1: 0.9937

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 10
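To make the `lr_scheduler_type: linear` setting concrete, here is a minimal sketch of a linear decay schedule with the values from this card (`learning_rate` 2e-05, 500 total steps as reported in the training results, no warm-up). The function name `linear_lr` is hypothetical, and the formula is the usual linear-decay rule rather than code taken from the training run:

```python
def linear_lr(step: int, base_lr: float = 2e-5,
              total_steps: int = 500, warmup_steps: int = 0) -> float:
    """Learning rate at a given optimizer step under linear warm-up and decay."""
    if step < warmup_steps:
        # Linear ramp from 0 to base_lr (unused here because warmup_steps is 0).
        return base_lr * step / max(1, warmup_steps)
    # Linear decay from base_lr down to 0 at total_steps.
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


for step in (0, 250, 500):
    print(step, linear_lr(step))
```

Under these assumptions the rate starts at 2e-05, is halved at step 250, and reaches zero at step 500.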

### Training results

| Training Loss | Epoch | Step | Validation Loss | F1 |

|:-------------:|:-----:|:----:|:---------------:|:------:|

| No log | 1.0 | 50 | 0.3866 | 0.5761 |

| No log | 2.0 | 100 | 0.3352 | 0.6538 |

| No log | 3.0 | 150 | 0.1786 | 0.8283 |

| No log | 4.0 | 200 | 0.1862 | 0.8345 |

| No log | 5.0 | 250 | 0.1367 | 0.8736 |

| No log | 6.0 | 300 | 0.0642 | 0.9477 |

| No log | 7.0 | 350 | 0.0343 | 0.9748 |

| No log | 8.0 | 400 | 0.0190 | 0.9874 |

| No log | 9.0 | 450 | 0.0123 | 0.9937 |

| 0.2051 | 10.0 | 500 | 0.0058 | 0.9937 |

### Framework versions

- Transformers 4.20.0

- Pytorch 1.11.0+cu113

- Datasets 2.3.2

- Tokenizers 0.12.1

|

waboucay/camembert-large-xnli

|

waboucay

| 2022-06-19T14:38:51Z | 6 | 0 |

transformers

|

[

"transformers",

"pytorch",

"camembert",

"text-classification",

"nli",

"fr",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-06-19T14:35:57Z |

---

language:

- fr

tags:

- nli

metrics:

- f1

---

## Eval results

We obtain the following results on ```validation``` and ```test``` sets:

| Set | F1<sub>micro</sub> | F1<sub>macro</sub> |

|------------|--------------------|--------------------|

| validation | 85.8 | 85.9 |

| test | 84.2 | 84.3 |
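For readers unfamiliar with the two columns, the following sketch shows how micro- and macro-averaged F1 differ on a toy three-class example. The labels and predictions are invented for illustration and are not taken from the evaluation data; `f1_scores` is a hypothetical helper:

```python
from collections import Counter


def f1_scores(y_true, y_pred):
    """Return (micro_f1, macro_f1) for single-label multi-class predictions."""
    labels = sorted(set(y_true) | set(y_pred))
    tp, fp, fn = Counter(), Counter(), Counter()
    for truth, pred in zip(y_true, y_pred):
        if truth == pred:
            tp[truth] += 1
        else:
            fp[pred] += 1   # predicted class gets a false positive
            fn[truth] += 1  # true class gets a false negative

    def f1(tp_, fp_, fn_):
        denom = 2 * tp_ + fp_ + fn_
        return 2 * tp_ / denom if denom else 0.0

    # Macro: average the per-class F1 scores, so every class counts equally.
    macro = sum(f1(tp[c], fp[c], fn[c]) for c in labels) / len(labels)
    # Micro: pool the counts over all classes before computing F1.
    micro = f1(sum(tp.values()), sum(fp.values()), sum(fn.values()))
    return micro, macro


y_true = ["entailment", "neutral", "contradiction", "neutral"]
y_pred = ["entailment", "neutral", "neutral", "neutral"]
micro, macro = f1_scores(y_true, y_pred)
print(micro, macro)
```

In the single-label setting micro F1 equals accuracy, while macro F1 drops when a class is never predicted correctly.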

|

waboucay/camembert-large-finetuned-rua_wl_3_classes

|

waboucay

| 2022-06-19T14:35:04Z | 5 | 0 |

transformers

|

[

"transformers",

"pytorch",

"camembert",

"text-classification",

"nli",

"fr",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-06-19T14:31:32Z |

---

language:

- fr

tags:

- nli

metrics:

- f1

---

## Eval results

We obtain the following results on ```validation``` and ```test``` sets:

| Set | F1<sub>micro</sub> | F1<sub>macro</sub> |

|------------|--------------------|--------------------|

| validation | 75.3 | 74.9 |

| test | 75.8 | 75.3 |

|

waboucay/camembert-large-finetuned-repnum_wl_3_classes

|

waboucay

| 2022-06-19T14:30:19Z | 5 | 0 |

transformers

|

[

"transformers",

"pytorch",

"camembert",

"text-classification",

"nli",

"fr",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-06-19T14:22:13Z |

---

language:

- fr

tags:

- nli

metrics:

- f1

---

## Eval results

We obtain the following results on ```validation``` and ```test``` sets:

| Set | F1<sub>micro</sub> | F1<sub>macro</sub> |

|------------|--------------------|--------------------|

| validation | 79.4 | 79.4 |

| test | 80.6 | 80.6 |

|

ctoraman/RoBERTweetTurkCovid

|

ctoraman

| 2022-06-19T14:25:58Z | 5 | 0 |

transformers

|

[

"transformers",

"pytorch",

"roberta",

"fill-mask",

"tr",

"license:cc-by-nc-sa-4.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

fill-mask

| 2022-06-08T11:59:09Z |

---

language:

- tr

tags:

- roberta

license: cc-by-nc-sa-4.0

---

# RoBERTweetTurkCovid (uncased)

A model pretrained on Turkish with a masked language modeling (MLM) objective. The model is uncased.

The pretraining corpus is a collection of Turkish tweets related to COVID-19.

The model architecture is similar to RoBERTa-base (12 layers, 12 attention heads, hidden size 768). The tokenization algorithm is WordPiece, and the vocabulary size is 30k.
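As a rough illustration of WordPiece tokenization, the sketch below applies the greedy longest-match-first rule commonly used at inference time. The toy vocabulary is invented, and the `##` continuation prefix is the BERT convention, assumed here rather than confirmed for this model's tokenizer:

```python
def wordpiece_tokenize(word, vocab, unk_token="[UNK]", prefix="##"):
    """Split one word into sub-word pieces by greedy longest-match-first lookup."""
    tokens = []
    start = 0
    while start < len(word):
        end = len(word)
        piece = None
        # Try the longest remaining substring first, then shrink from the right.
        while start < end:
            candidate = word[start:end]
            if start > 0:
                candidate = prefix + candidate  # mark non-initial pieces
            if candidate in vocab:
                piece = candidate
                break
            end -= 1
        if piece is None:
            # No piece matches at this position: the whole word is unknown.
            return [unk_token]
        tokens.append(piece)
        start = end
    return tokens


toy_vocab = {"maske", "##ler", "##siz", "aşı"}  # hypothetical vocabulary
print(wordpiece_tokenize("maskeler", toy_vocab))  # ['maske', '##ler']
print(wordpiece_tokenize("xyz", toy_vocab))       # ['[UNK]']
```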

The details of pretraining can be found in this paper:

```bibtex

@InProceedings{clef-checkthat:2022:task1:oguzhan,

author = {Cagri Toraman and Oguzhan Ozcelik and Furkan Şahinuç and Umitcan Sahin},

title = "{ARC-NLP at CheckThat! 2022:} Contradiction for Harmful Tweet Detection",

year = {2022},

booktitle = "Working Notes of {CLEF} 2022 - Conference and Labs of the Evaluation Forum",

editor = {Faggioli, Guglielmo and Ferro, Nicola and Hanbury, Allan and Potthast, Martin},

series = {CLEF~'2022},

address = {Bologna, Italy},

}

```

The following code loads the model and the tokenizer; the example maximum length (768) can be changed:

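```python
from transformers import AutoModel, PreTrainedTokenizerFast

# Placeholders: replace with the path to the model and to the tokenizer file.
model_path = "[model_path]"
file_path = "[file_path]"

model = AutoModel.from_pretrained(model_path)
# For sequence classification (num_classes is the number of labels in your task):
# from transformers import AutoModelForSequenceClassification
# model = AutoModelForSequenceClassification.from_pretrained(model_path, num_labels=num_classes)

tokenizer = PreTrainedTokenizerFast(tokenizer_file=file_path)
# Register the special tokens explicitly on the tokenizer.
tokenizer.mask_token = "[MASK]"
tokenizer.cls_token = "[CLS]"
tokenizer.sep_token = "[SEP]"
tokenizer.pad_token = "[PAD]"
tokenizer.unk_token = "[UNK]"
tokenizer.bos_token = "[CLS]"
tokenizer.eos_token = "[SEP]"
tokenizer.model_max_length = 768
```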

### BibTeX entry and citation info

```bibtex

@InProceedings{clef-checkthat:2022:task1:oguzhan,

author = {Cagri Toraman and Oguzhan Ozcelik and Furkan Şahinuç and Umitcan Sahin},

title = "{ARC-NLP at CheckThat! 2022:} Contradiction for Harmful Tweet Detection",

year = {2022},

booktitle = "Working Notes of {CLEF} 2022 - Conference and Labs of the Evaluation Forum",

editor = {Faggioli, Guglielmo and Ferro, Nicola and Hanbury, Allan and Potthast, Martin},

series = {CLEF~'2022},

address = {Bologna, Italy},

}

```

|

rajistics/dqn-SpaceInvadersNoFrameskip-v4

|

rajistics

| 2022-06-19T13:48:15Z | 2 | 0 |

stable-baselines3

|

[

"stable-baselines3",

"SpaceInvadersNoFrameskip-v4",

"deep-reinforcement-learning",

"reinforcement-learning",

"model-index",

"region:us"

] |

reinforcement-learning

| 2022-06-19T13:47:41Z |

---

library_name: stable-baselines3

tags:

- SpaceInvadersNoFrameskip-v4

- deep-reinforcement-learning

- reinforcement-learning

- stable-baselines3

model-index:

- name: DQN

results:

- metrics:

- type: mean_reward

value: 435.50 +/- 129.62

name: mean_reward

task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: SpaceInvadersNoFrameskip-v4

type: SpaceInvadersNoFrameskip-v4

---

# **DQN** Agent playing **SpaceInvadersNoFrameskip-v4**

This is a trained model of a **DQN** agent playing **SpaceInvadersNoFrameskip-v4**

using the [stable-baselines3 library](https://github.com/DLR-RM/stable-baselines3)

and the [RL Zoo](https://github.com/DLR-RM/rl-baselines3-zoo).

The RL Zoo is a training framework for Stable Baselines3

reinforcement learning agents,

with hyperparameter optimization and pre-trained agents included.

## Usage (with SB3 RL Zoo)

RL Zoo: https://github.com/DLR-RM/rl-baselines3-zoo<br/>

SB3: https://github.com/DLR-RM/stable-baselines3<br/>

SB3 Contrib: https://github.com/Stable-Baselines-Team/stable-baselines3-contrib

```

# Download model and save it into the logs/ folder

python -m utils.load_from_hub --algo dqn --env SpaceInvadersNoFrameskip-v4 -orga rajistics -f logs/

python enjoy.py --algo dqn --env SpaceInvadersNoFrameskip-v4 -f logs/

```

## Training (with the RL Zoo)

```

python train.py --algo dqn --env SpaceInvadersNoFrameskip-v4 -f logs/

# Upload the model and generate video (when possible)

python -m utils.push_to_hub --algo dqn --env SpaceInvadersNoFrameskip-v4 -f logs/ -orga rajistics

```

## Hyperparameters

```python

OrderedDict([('batch_size', 32),

('buffer_size', 100000),

('env_wrapper',

['stable_baselines3.common.atari_wrappers.AtariWrapper']),

('exploration_final_eps', 0.01),

('exploration_fraction', 0.1),

('frame_stack', 4),

('gradient_steps', 1),

('learning_rate', 0.0001),

('learning_starts', 100000),

('n_timesteps', 10000000.0),

('optimize_memory_usage', True),

('policy', 'CnnPolicy'),

('target_update_interval', 1000),

('train_freq', 4),

('normalize', False)])

```
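The `exploration_fraction` and `exploration_final_eps` entries above describe a linearly decaying epsilon-greedy schedule. A minimal sketch of that schedule follows; the starting value `initial_eps=1.0` is an assumption (it is not listed in the card), and `epsilon_at` is a hypothetical helper, not a Stable Baselines3 function:

```python
def epsilon_at(step: int,
               n_timesteps: int = 10_000_000,
               exploration_fraction: float = 0.1,
               initial_eps: float = 1.0,   # assumed, not stated in the card
               final_eps: float = 0.01) -> float:
    """Exploration rate after `step` environment steps under linear decay."""
    progress = step / n_timesteps
    if progress >= exploration_fraction:
        # Decay is finished; epsilon stays at its final value.
        return final_eps
    # Interpolate linearly between initial_eps and final_eps.
    return initial_eps + progress * (final_eps - initial_eps) / exploration_fraction


for step in (0, 500_000, 1_000_000, 5_000_000):
    print(step, epsilon_at(step))
```

With these values epsilon would fall from 1.0 to 0.01 over the first 1,000,000 steps (10% of `n_timesteps`) and stay there for the rest of training.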

|

Classroom-workshop/assignment2-llama

|

Classroom-workshop

| 2022-06-19T13:46:40Z | 7 | 0 |

stable-baselines3

|

[

"stable-baselines3",

"LunarLander-v2",

"deep-reinforcement-learning",

"reinforcement-learning",

"model-index",

"region:us"

] |

reinforcement-learning

| 2022-06-02T15:27:20Z |

---

library_name: stable-baselines3

tags:

- LunarLander-v2

- deep-reinforcement-learning

- reinforcement-learning

- stable-baselines3

model-index:

- name: PPO

results:

- metrics:

- type: mean_reward

value: 200.68 +/- 7.11

name: mean_reward

task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: LunarLander-v2

type: LunarLander-v2

---

# **PPO** Agent playing **LunarLander-v2**

This is a trained model of a **PPO** agent playing **LunarLander-v2** using the [stable-baselines3 library](https://github.com/DLR-RM/stable-baselines3).

## Usage (with Stable-baselines3)

TODO: Add your code

|

huggingtweets/david_lynch

|

huggingtweets

| 2022-06-19T13:12:27Z | 3 | 0 |

transformers

|

[

"transformers",

"pytorch",

"gpt2",

"text-generation",

"huggingtweets",

"en",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-05-27T11:00:06Z |

---

language: en

thumbnail: http://www.huggingtweets.com/david_lynch/1655644342827/predictions.png

tags:

- huggingtweets

widget:

- text: "My dream is"

---

<div class="inline-flex flex-col" style="line-height: 1.5;">

<div class="flex">

<div

style="display:inherit; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('https://pbs.twimg.com/profile_images/63730229/DL_400x400.jpg')">

</div>

<div

style="display:none; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('')">

</div>

<div

style="display:none; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('')">

</div>

</div>

<div style="text-align: center; margin-top: 3px; font-size: 16px; font-weight: 800">🤖 AI BOT 🤖</div>

<div style="text-align: center; font-size: 16px; font-weight: 800">David Lynch</div>

<div style="text-align: center; font-size: 14px;">@david_lynch</div>

</div>

I was made with [huggingtweets](https://github.com/borisdayma/huggingtweets).

Create your own bot based on your favorite user with [the demo](https://colab.research.google.com/github/borisdayma/huggingtweets/blob/master/huggingtweets-demo.ipynb)!

## How does it work?

The model uses the following pipeline.

To understand how the model was developed, check the [W&B report](https://wandb.ai/wandb/huggingtweets/reports/HuggingTweets-Train-a-Model-to-Generate-Tweets--VmlldzoxMTY5MjI).

## Training data

The model was trained on tweets from David Lynch.

| Data | David Lynch |

| --- | --- |

| Tweets downloaded | 912 |

| Retweets | 29 |

| Short tweets | 21 |

| Tweets kept | 862 |
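The counts in the table are consistent with retweets and short tweets being dropped from the downloaded set. A quick check of that arithmetic; the claim that these are the only two filters is an assumption based on the numbers alone:

```python
downloaded, retweets, short_tweets = 912, 29, 21

# Tweets that remain after removing retweets and short tweets.
kept = downloaded - retweets - short_tweets
print(kept)  # 862
```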

[Explore the data](https://wandb.ai/wandb/huggingtweets/runs/do5yghsd/artifacts), which is tracked with [W&B artifacts](https://docs.wandb.com/artifacts) at every step of the pipeline.

## Training procedure

The model is based on a pre-trained [GPT-2](https://huggingface.co/gpt2) which is fine-tuned on @david_lynch's tweets.

Hyperparameters and metrics are recorded in the [W&B training run](https://wandb.ai/wandb/huggingtweets/runs/ddgwjhcj) for full transparency and reproducibility.

At the end of training, [the final model](https://wandb.ai/wandb/huggingtweets/runs/ddgwjhcj/artifacts) is logged and versioned.

## How to use

You can use this model directly with a pipeline for text generation:

```python

from transformers import pipeline

generator = pipeline('text-generation',

model='huggingtweets/david_lynch')

generator("My dream is", num_return_sequences=5)

```

## Limitations and bias

The model suffers from [the same limitations and bias as GPT-2](https://huggingface.co/gpt2#limitations-and-bias).

In addition, the data present in the user's tweets further affects the text generated by the model.

## About

*Built by Boris Dayma*

[Follow @borisdayma on Twitter](https://twitter.com/intent/follow?screen_name=borisdayma)

For more details, visit the [project repository](https://github.com/borisdayma/huggingtweets).

|

gary109/ai-light-dance_singing_ft_wav2vec2-large-xlsr-53-5gram-v2

|

gary109

| 2022-06-19T12:14:27Z | 3 | 1 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"wav2vec2",

"automatic-speech-recognition",

"gary109/AI_Light_Dance",

"generated_from_trainer",

"endpoints_compatible",

"region:us"

] |

automatic-speech-recognition

| 2022-06-19T00:34:26Z |

---

tags:

- automatic-speech-recognition

- gary109/AI_Light_Dance

- generated_from_trainer

model-index:

- name: ai-light-dance_singing_ft_wav2vec2-large-xlsr-53-5gram-v2

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# ai-light-dance_singing_ft_wav2vec2-large-xlsr-53-5gram-v2

This model is a fine-tuned version of [gary109/ai-light-dance_singing_ft_wav2vec2-large-xlsr-53-5gram-v1](https://huggingface.co/gary109/ai-light-dance_singing_ft_wav2vec2-large-xlsr-53-5gram-v1) on the GARY109/AI_LIGHT_DANCE - ONSET-SINGING dataset.

It achieves the following results on the evaluation set:

- Loss: 0.4313

- Wer: 0.1645
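Wer is the word error rate: the word-level edit distance between the reference and the model output, divided by the number of reference words. A minimal sketch of that computation, assuming whitespace-separated tokens; the example strings are invented and `word_error_rate` is a hypothetical helper, not the metric implementation used for this run:

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level Levenshtein distance divided by the reference length."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the processed reference prefix
    # and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = prev[j] + 1       # reference word missing in hypothesis
            insertion = curr[j - 1] + 1  # extra word in hypothesis
            curr.append(min(substitution, deletion, insertion))
        prev = curr
    return prev[-1] / len(ref)


print(word_error_rate("the cat sat on the mat", "the cat sat on mat"))
```

One deleted word out of six reference words gives a rate of about 0.167; a Wer of 0.1645 therefore corresponds to roughly one error per six reference tokens.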

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 1e-05

- train_batch_size: 2

- eval_batch_size: 2

- seed: 42

- gradient_accumulation_steps: 16

- total_train_batch_size: 32

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: cosine

- lr_scheduler_warmup_steps: 500

- num_epochs: 10.0

- mixed_precision_training: Native AMP
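The hyperparameters above can be related to each other with a short sketch: the effective batch size follows from gradient accumulation, and the learning rate follows a cosine curve after a linear warm-up. The total of 5520 optimizer steps is taken from the training results in this card; a single device is assumed, and `cosine_lr` is a hypothetical helper using the common warm-up-plus-half-cosine formula rather than code from the training run:

```python
import math

per_device_batch = 2
gradient_accumulation_steps = 16
# Assuming one device: 2 * 16 = 32, matching total_train_batch_size.
effective_batch = per_device_batch * gradient_accumulation_steps


def cosine_lr(step: int, base_lr: float = 1e-5,
              warmup_steps: int = 500, total_steps: int = 5520) -> float:
    """Learning rate with linear warm-up followed by half-cosine decay."""
    if step < warmup_steps:
        return base_lr * step / max(1, warmup_steps)
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    return base_lr * max(0.0, 0.5 * (1.0 + math.cos(math.pi * progress)))


print(effective_batch)
for step in (0, 250, 500, 3010, 5520):
    print(step, cosine_lr(step))
```

Under these assumptions the rate peaks at 1e-05 at step 500, is about half of that midway through the decay (step 3010), and approaches zero at step 5520.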

### Training results

| Training Loss | Epoch | Step | Validation Loss | Wer |

|:-------------:|:-----:|:----:|:---------------:|:------:|

| 0.148 | 1.0 | 552 | 0.4313 | 0.1645 |

| 0.1301 | 2.0 | 1104 | 0.4365 | 0.1618 |

| 0.1237 | 3.0 | 1656 | 0.4470 | 0.1595 |

| 0.1063 | 4.0 | 2208 | 0.4593 | 0.1576 |

| 0.128 | 5.0 | 2760 | 0.4525 | 0.1601 |

| 0.1099 | 6.0 | 3312 | 0.4593 | 0.1567 |

| 0.0969 | 7.0 | 3864 | 0.4625 | 0.1550 |

| 0.0994 | 8.0 | 4416 | 0.4672 | 0.1543 |

| 0.125 | 9.0 | 4968 | 0.4636 | 0.1544 |

| 0.0887 | 10.0 | 5520 | 0.4601 | 0.1538 |

### Framework versions

- Transformers 4.21.0.dev0

- Pytorch 1.9.1+cu102

- Datasets 2.3.3.dev0

- Tokenizers 0.12.1

|

ShannonAI/ChineseBERT-large

|

ShannonAI

| 2022-06-19T12:07:31Z | 23 | 5 |

transformers

|

[

"transformers",

"pytorch",

"arxiv:2106.16038",

"endpoints_compatible",

"region:us"

] | null | 2022-03-02T23:29:05Z |

# ChineseBERT-large

This repository contains the code, model, and dataset for **ChineseBERT**, presented at ACL 2021.

paper:

**[ChineseBERT: Chinese Pretraining Enhanced by Glyph and Pinyin Information](https://arxiv.org/abs/2106.16038)**

*Zijun Sun, Xiaoya Li, Xiaofei Sun, Yuxian Meng, Xiang Ao, Qing He, Fei Wu and Jiwei Li*

code:

[ChineseBERT github link](https://github.com/ShannonAI/ChineseBert)

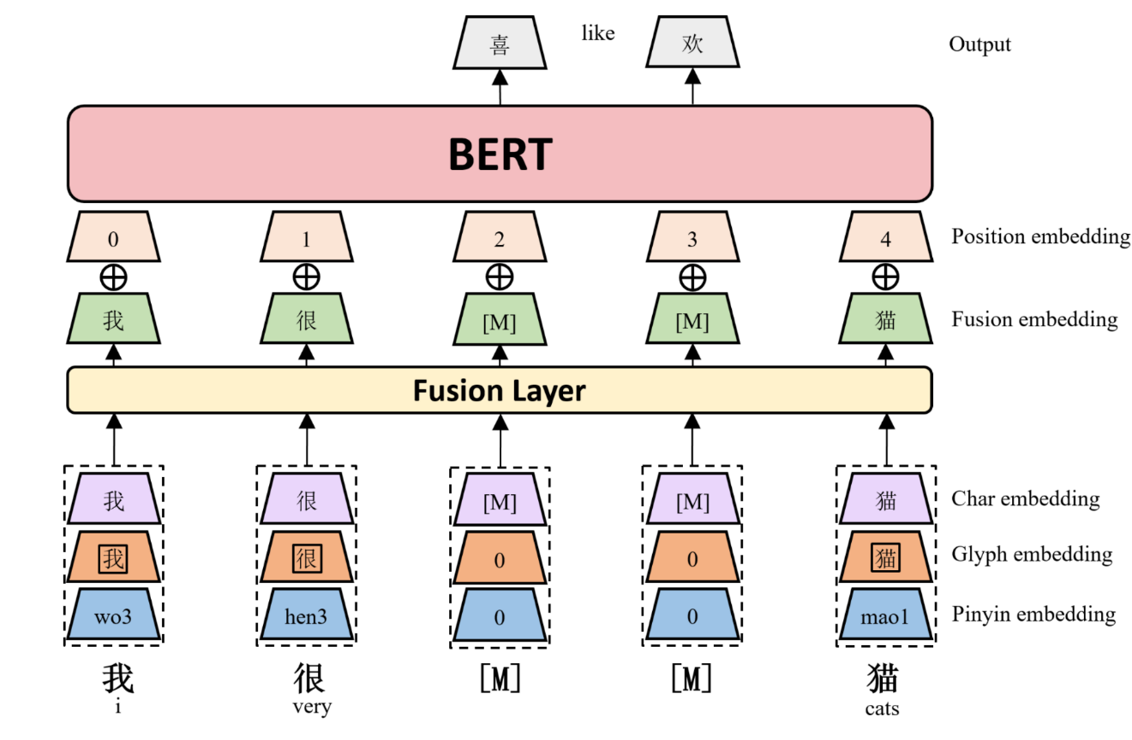

## Model description

We propose ChineseBERT, which incorporates both the glyph and pinyin information of Chinese

characters into language model pretraining.

First, for each Chinese character, we get three kinds of embeddings.

- **Char Embedding:** the same as the original BERT token embedding.

- **Glyph Embedding:** captures visual features based on different fonts of a Chinese character.

- **Pinyin Embedding:** captures phonetic features from the pinyin sequence of a Chinese character.

Then the char, glyph, and pinyin embeddings are concatenated and mapped to a D-dimensional embedding through a fully connected layer to form the fusion embedding.

Finally, the fusion embedding is added to the position embedding, and the sum is fed as input to the BERT model.
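The fusion step described above can be sketched in plain Python. This is an illustration only: it assumes each of the three embeddings is D-dimensional (the card does not state their sizes), uses random toy values, and `fuse_embeddings` is a hypothetical name rather than a function from the ChineseBERT code:

```python
import random


def fuse_embeddings(char_emb, glyph_emb, pinyin_emb, weight, bias, position_emb):
    """Concatenate three embeddings, project them to D dims, add the position embedding."""
    concat = char_emb + glyph_emb + pinyin_emb  # list concatenation, length 3 * D
    # Fully connected layer: one output per weight row (D rows of length 3 * D).
    fused = [
        sum(w * x for w, x in zip(row, concat)) + b
        for row, b in zip(weight, bias)
    ]
    # The fusion embedding is summed with the position embedding.
    return [f + p for f, p in zip(fused, position_emb)]


def random_vector(rng, size):
    return [rng.uniform(-1.0, 1.0) for _ in range(size)]


D = 4  # toy dimension
rng = random.Random(0)
char_emb = random_vector(rng, D)
glyph_emb = random_vector(rng, D)
pinyin_emb = random_vector(rng, D)
position_emb = random_vector(rng, D)
weight = [random_vector(rng, 3 * D) for _ in range(D)]
bias = [0.0] * D

model_input = fuse_embeddings(char_emb, glyph_emb, pinyin_emb, weight, bias, position_emb)
print(len(model_input))  # 4
```

In a real model the weight matrix and bias are learned parameters, and the result is computed for every character position in the sequence.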

The figure above shows an overview of the ChineseBERT architecture.

ChineseBERT leverages the glyph and pinyin information of Chinese characters to enhance the model's ability to capture context semantics from surface character forms and to disambiguate polyphonic characters in Chinese.

|

dibsondivya/ernie-phmtweets-sutd

|

dibsondivya

| 2022-06-19T11:38:29Z | 14 | 0 |

transformers

|

[

"transformers",

"pytorch",

"bert",

"text-classification",

"ernie",

"health",

"tweet",

"dataset:custom-phm-tweets",

"arxiv:1802.09130",

"arxiv:1907.12412",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-06-19T11:20:14Z |

---

tags:

- ernie

- health

- tweet

datasets:

- custom-phm-tweets

metrics:

- accuracy

model-index:

- name: ernie-phmtweets-sutd

results:

- task:

name: Text Classification

type: text-classification

dataset:

name: custom-phm-tweets

type: labelled

metrics:

- name: Accuracy

type: accuracy

value: 0.885

---

# ernie-phmtweets-sutd

This model is a fine-tuned version of [ernie-2.0-en](https://huggingface.co/nghuyong/ernie-2.0-en) for text classification, trained to identify public health events in tweets. The project is based on an [Emory University study on the detection of personal health mentions in social media](https://arxiv.org/pdf/1802.09130v2.pdf) that worked with this [custom dataset](https://github.com/emory-irlab/PHM2017).

It achieves the following results on the evaluation set:

- Accuracy: 0.885

## Usage

```Python

from transformers import AutoTokenizer, AutoModelForSequenceClassification

tokenizer = AutoTokenizer.from_pretrained("dibsondivya/ernie-phmtweets-sutd")

model = AutoModelForSequenceClassification.from_pretrained("dibsondivya/ernie-phmtweets-sutd")

```

### Model Evaluation Results

With Validation Set

- Accuracy: 0.889763779527559

With Test Set

- Accuracy: 0.884643644379133

## References for ERNIE 2.0 Model

```bibtex

@article{sun2019ernie20,

title={ERNIE 2.0: A Continual Pre-training Framework for Language Understanding},

author={Sun, Yu and Wang, Shuohuan and Li, Yukun and Feng, Shikun and Tian, Hao and Wu, Hua and Wang, Haifeng},

journal={arXiv preprint arXiv:1907.12412},

year={2019}

}

```

|

levgil2/stam-finetuned-imdb

|

levgil2

| 2022-06-19T11:26:02Z | 4 | 0 |

transformers

|

[

"transformers",

"tf",

"distilbert",

"fill-mask",

"generated_from_keras_callback",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

fill-mask

| 2022-06-19T11:21:46Z |

---

license: apache-2.0

tags:

- generated_from_keras_callback

model-index:

- name: levgil2/stam-finetuned-imdb

results: []

---

<!-- This model card has been generated automatically according to the information Keras had access to. You should

probably proofread and complete it, then remove this comment. -->

# levgil2/stam-finetuned-imdb

This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on an unknown dataset.

It achieves the following results on the evaluation set:

- Train Loss: 2.8517

- Validation Loss: 2.5705

- Epoch: 0

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- optimizer: {'name': 'AdamWeightDecay', 'learning_rate': {'class_name': 'WarmUp', 'config': {'initial_learning_rate': 2e-05, 'decay_schedule_fn': {'class_name': 'PolynomialDecay', 'config': {'initial_learning_rate': 2e-05, 'decay_steps': -687, 'end_learning_rate': 0.0, 'power': 1.0, 'cycle': False, 'name': None}, '__passive_serialization__': True}, 'warmup_steps': 1000, 'power': 1.0, 'name': None}}, 'decay': 0.0, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False, 'weight_decay_rate': 0.01}

- training_precision: mixed_float16
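The optimizer configuration above wraps its decay schedule in a `WarmUp` with `warmup_steps: 1000` and `power: 1.0`. The sketch below shows the learning rate during that warm-up phase only, assuming the usual polynomial warm-up rule `initial_learning_rate * (step / warmup_steps) ** power`; `warmup_lr` is a hypothetical helper and the decay phase is deliberately left out:

```python
def warmup_lr(step: int, initial_lr: float = 2e-5,
              warmup_steps: int = 1000, power: float = 1.0) -> float:
    """Learning rate during warm-up; only defined for step < warmup_steps."""
    if not 0 <= step < warmup_steps:
        raise ValueError("this sketch only covers the warm-up phase")
    return initial_lr * (step / warmup_steps) ** power


for step in (0, 100, 500, 999):
    print(step, warmup_lr(step))
```

The negative `decay_steps` value (-687) suggests that the run had fewer total steps than warm-up steps, in which case the learning rate would never have reached 2e-05. This is an inference from the configuration, not something the card states.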

### Training results

| Train Loss | Validation Loss | Epoch |

|:----------:|:---------------:|:-----:|

| 2.8517 | 2.5705 | 0 |

### Framework versions

- Transformers 4.20.0

- TensorFlow 2.8.2

- Datasets 2.3.2

- Tokenizers 0.12.1

|

zakria/NLP_Project

|

zakria

| 2022-06-19T09:55:56Z | 4 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"wav2vec2",

"automatic-speech-recognition",

"generated_from_trainer",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] |

automatic-speech-recognition

| 2022-06-19T07:49:04Z |

---

license: apache-2.0

tags:

- generated_from_trainer

model-index:

- name: NLP_Project

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# NLP_Project

This model is a fine-tuned version of [facebook/wav2vec2-base](https://huggingface.co/facebook/wav2vec2-base) on an unspecified dataset.

It achieves the following results on the evaluation set:

- Loss: 0.5308

- Wer: 0.3428

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0001

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_steps: 1000

- num_epochs: 30

- mixed_precision_training: Native AMP

### Training results

| Training Loss | Epoch | Step | Validation Loss | Wer |

|:-------------:|:-----:|:-----:|:---------------:|:------:|

| 3.5939 | 1.0 | 500 | 2.1356 | 1.0014 |

| 0.9126 | 2.01 | 1000 | 0.5469 | 0.5354 |

| 0.4491 | 3.01 | 1500 | 0.4636 | 0.4503 |

| 0.3008 | 4.02 | 2000 | 0.4269 | 0.4330 |

| 0.2229 | 5.02 | 2500 | 0.4164 | 0.4073 |

| 0.188 | 6.02 | 3000 | 0.4717 | 0.4107 |

| 0.1739 | 7.03 | 3500 | 0.4306 | 0.4031 |

| 0.159 | 8.03 | 4000 | 0.4394 | 0.3993 |

| 0.1342 | 9.04 | 4500 | 0.4462 | 0.3904 |

| 0.1093 | 10.04 | 5000 | 0.4387 | 0.3759 |

| 0.1005 | 11.04 | 5500 | 0.5033 | 0.3847 |

| 0.0857 | 12.05 | 6000 | 0.4805 | 0.3876 |

| 0.0779 | 13.05 | 6500 | 0.5269 | 0.3810 |

| 0.072 | 14.06 | 7000 | 0.5109 | 0.3710 |

| 0.0641 | 15.06 | 7500 | 0.4865 | 0.3638 |

| 0.0584 | 16.06 | 8000 | 0.5041 | 0.3646 |

| 0.0552 | 17.07 | 8500 | 0.4987 | 0.3537 |

| 0.0535 | 18.07 | 9000 | 0.4947 | 0.3586 |

| 0.0475 | 19.08 | 9500 | 0.5237 | 0.3647 |

| 0.042 | 20.08 | 10000 | 0.5338 | 0.3561 |

| 0.0416 | 21.08 | 10500 | 0.5068 | 0.3483 |

| 0.0358 | 22.09 | 11000 | 0.5126 | 0.3532 |

| 0.0334 | 23.09 | 11500 | 0.5213 | 0.3536 |

| 0.0331 | 24.1 | 12000 | 0.5378 | 0.3496 |

| 0.03 | 25.1 | 12500 | 0.5167 | 0.3470 |

| 0.0254 | 26.1 | 13000 | 0.5245 | 0.3418 |

| 0.0233 | 27.11 | 13500 | 0.5393 | 0.3456 |

| 0.0232 | 28.11 | 14000 | 0.5279 | 0.3425 |

| 0.022 | 29.12 | 14500 | 0.5308 | 0.3428 |

### Framework versions

- Transformers 4.17.0

- Pytorch 1.11.0+cu113

- Datasets 1.18.3

- Tokenizers 0.12.1

|

sun1638650145/q-Taxi-v3

|

sun1638650145

| 2022-06-19T09:00:38Z | 0 | 0 | null |

[

"Taxi-v3",

"q-learning",

"reinforcement-learning",

"custom-implementation",

"model-index",

"region:us"

] |

reinforcement-learning

| 2022-06-19T09:00:26Z |

---

tags:

- Taxi-v3

- q-learning

- reinforcement-learning

- custom-implementation

model-index:

- name: q-Taxi-v3

results:

- metrics:

- type: mean_reward

value: 7.54 +/- 2.73

name: mean_reward

task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: Taxi-v3

type: Taxi-v3

---

# **Q-Learning** Agent playing **Taxi-v3**

This is a trained model of a **Q-Learning** agent playing **Taxi-v3**.

## Usage

```python

model = load_from_hub(repo_id='sun1638650145/q-Taxi-v3', filename='q-learning.pkl')

# Don't forget to check whether you need to add extra arguments (e.g. is_slippery=False)

env = gym.make(model['env_id'])

evaluate_agent(env, model['max_steps'], model['n_eval_episodes'], model['qtable'], model['eval_seed'])

```
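The snippet above relies on helper functions (`load_from_hub`, `evaluate_agent`) that are not defined in this card. As background, a Q-table agent acts greedily over the table and is trained with the standard Q-learning update. A minimal sketch on a toy two-state table; the function names, learning rate, and discount factor are assumptions, not values from this model:

```python
def greedy_action(qtable, state):
    """Pick the action with the highest Q-value in the given state."""
    row = qtable[state]
    return max(range(len(row)), key=lambda action: row[action])


def q_update(qtable, state, action, reward, next_state, lr=0.5, gamma=0.9):
    """Standard Q-learning update toward the TD target (modifies qtable in place)."""
    td_target = reward + gamma * max(qtable[next_state])
    qtable[state][action] += lr * (td_target - qtable[state][action])


# Toy table: 2 states x 2 actions.
qtable = [[0.0, 0.0], [0.0, 1.0]]
q_update(qtable, state=0, action=1, reward=-1, next_state=1)
print(qtable[0])
print(greedy_action(qtable, 0), greedy_action(qtable, 1))
```

After the update, action 1 in state 0 has a slightly negative value, so the greedy policy prefers action 0 there and action 1 in state 1.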

|

ShannonAI/ChineseBERT-base

|

ShannonAI

| 2022-06-19T08:14:46Z | 109 | 20 |

transformers

|

[

"transformers",

"pytorch",

"arxiv:2106.16038",

"endpoints_compatible",

"region:us"

] | null | 2022-03-02T23:29:05Z |

# ChineseBERT-base

This repository contains the code, model, and dataset for **ChineseBERT**, presented at ACL 2021.

paper:

**[ChineseBERT: Chinese Pretraining Enhanced by Glyph and Pinyin Information](https://arxiv.org/abs/2106.16038)**

*Zijun Sun, Xiaoya Li, Xiaofei Sun, Yuxian Meng, Xiang Ao, Qing He, Fei Wu and Jiwei Li*

code:

[ChineseBERT github link](https://github.com/ShannonAI/ChineseBert)

## Model description

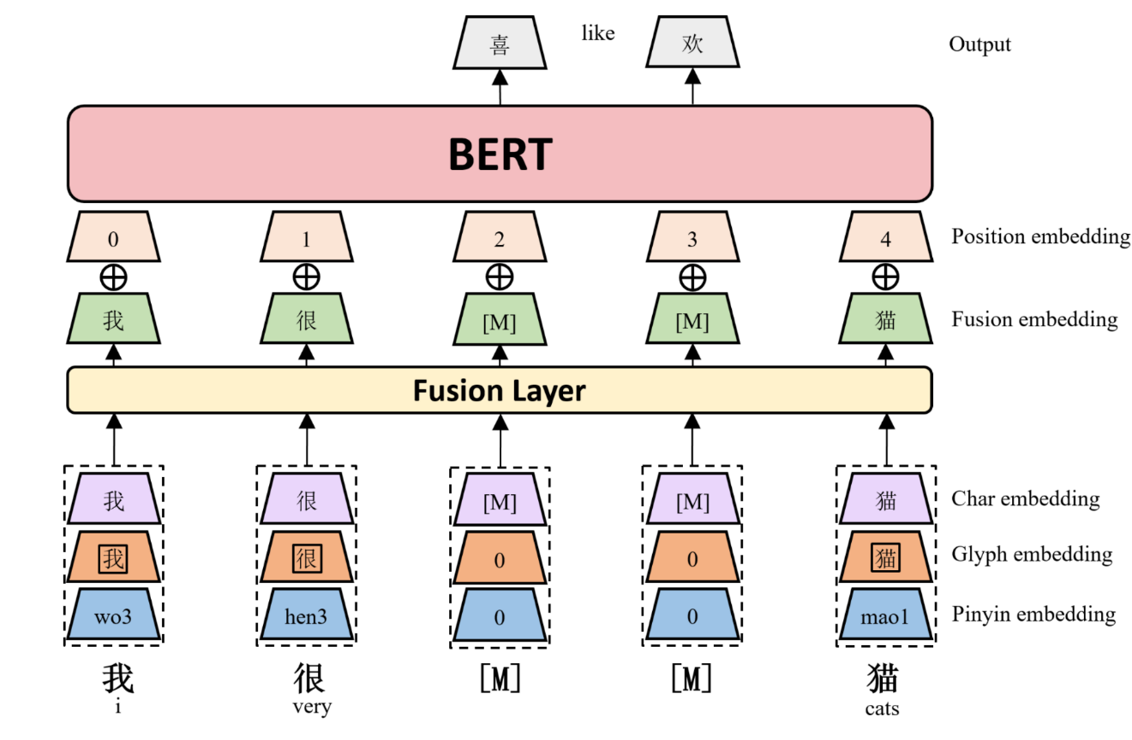

We propose ChineseBERT, which incorporates both the glyph and pinyin information of Chinese

characters into language model pretraining.

First, for each Chinese character, we get three kinds of embeddings.

- **Char Embedding:** the same as the original BERT token embedding.

- **Glyph Embedding:** captures visual features based on different fonts of a Chinese character.

- **Pinyin Embedding:** captures phonetic features from the pinyin sequence of a Chinese character.

Then the char, glyph, and pinyin embeddings are concatenated and mapped to a D-dimensional embedding through a fully connected layer to form the fusion embedding.

Finally, the fusion embedding is added to the position embedding, and the sum is fed as input to the BERT model.

The figure above shows an overview of the ChineseBERT architecture.

ChineseBERT leverages the glyph and pinyin information of Chinese characters to enhance the model's ability to capture context semantics from surface character forms and to disambiguate polyphonic characters in Chinese.

|

botika/checkpoint-124500-finetuned-squad

|

botika

| 2022-06-19T05:53:11Z | 7 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"distilbert",

"question-answering",

"generated_from_trainer",

"endpoints_compatible",

"region:us"

] |

question-answering

| 2022-06-17T07:41:58Z |

---

tags:

- generated_from_trainer

model-index:

- name: checkpoint-124500-finetuned-squad

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# checkpoint-124500-finetuned-squad

This model was trained from scratch on an unknown dataset.

It achieves the following results on the evaluation set:

- Loss: 14.9594

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 32

- eval_batch_size: 32

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 100

### Training results

| Training Loss | Epoch | Step | Validation Loss |
|:-------------:|:-----:|:------:|:---------------:|
| 3.9975 | 1.0 | 3289 | 3.8405 |
| 3.7311 | 2.0 | 6578 | 3.7114 |
| 3.5681 | 3.0 | 9867 | 3.6829 |
| 3.4101 | 4.0 | 13156 | 3.6368 |
| 3.2487 | 5.0 | 16445 | 3.6526 |
| 3.1143 | 6.0 | 19734 | 3.7567 |
| 2.9783 | 7.0 | 23023 | 3.8469 |
| 2.8295 | 8.0 | 26312 | 4.0040 |
| 2.6912 | 9.0 | 29601 | 4.1996 |
| 2.5424 | 10.0 | 32890 | 4.3387 |
| 2.4161 | 11.0 | 36179 | 4.4988 |
| 2.2713 | 12.0 | 39468 | 4.7861 |
| 2.1413 | 13.0 | 42757 | 4.9276 |
| 2.0125 | 14.0 | 46046 | 5.0598 |
| 1.8798 | 15.0 | 49335 | 5.3347 |
| 1.726 | 16.0 | 52624 | 5.5869 |
| 1.5994 | 17.0 | 55913 | 5.7161 |
| 1.4643 | 18.0 | 59202 | 6.0174 |
| 1.3237 | 19.0 | 62491 | 6.4926 |
| 1.2155 | 20.0 | 65780 | 6.4882 |
| 1.1029 | 21.0 | 69069 | 6.9922 |
| 0.9948 | 22.0 | 72358 | 7.1357 |
| 0.9038 | 23.0 | 75647 | 7.3676 |
| 0.8099 | 24.0 | 78936 | 7.4180 |
| 0.7254 | 25.0 | 82225 | 7.7753 |
| 0.6598 | 26.0 | 85514 | 7.8643 |
| 0.5723 | 27.0 | 88803 | 8.1798 |
| 0.5337 | 28.0 | 92092 | 8.3053 |
| 0.4643 | 29.0 | 95381 | 8.8597 |
| 0.4241 | 30.0 | 98670 | 8.9849 |
| 0.3763 | 31.0 | 101959 | 8.8406 |
| 0.3479 | 32.0 | 105248 | 9.1517 |
| 0.3271 | 33.0 | 108537 | 9.3659 |
| 0.2911 | 34.0 | 111826 | 9.4813 |
| 0.2836 | 35.0 | 115115 | 9.5746 |
| 0.2528 | 36.0 | 118404 | 9.7027 |
| 0.2345 | 37.0 | 121693 | 9.7515 |
| 0.2184 | 38.0 | 124982 | 9.9729 |
| 0.2067 | 39.0 | 128271 | 10.0828 |
| 0.2077 | 40.0 | 131560 | 10.0878 |
| 0.1876 | 41.0 | 134849 | 10.2974 |
| 0.1719 | 42.0 | 138138 | 10.2712 |
| 0.1637 | 43.0 | 141427 | 10.5788 |
| 0.1482 | 44.0 | 144716 | 10.7465 |
| 0.1509 | 45.0 | 148005 | 10.4603 |
| 0.1358 | 46.0 | 151294 | 10.7665 |
| 0.1316 | 47.0 | 154583 | 10.7724 |
| 0.1223 | 48.0 | 157872 | 11.1766 |
| 0.1205 | 49.0 | 161161 | 11.1870 |
| 0.1203 | 50.0 | 164450 | 11.1053 |
| 0.1081 | 51.0 | 167739 | 10.9696 |
| 0.103 | 52.0 | 171028 | 11.2010 |
| 0.0938 | 53.0 | 174317 | 11.6728 |
| 0.0924 | 54.0 | 177606 | 11.1423 |
| 0.0922 | 55.0 | 180895 | 11.7409 |
| 0.0827 | 56.0 | 184184 | 11.7850 |
| 0.0829 | 57.0 | 187473 | 11.8956 |
| 0.073 | 58.0 | 190762 | 11.8915 |
| 0.0788 | 59.0 | 194051 | 12.1617 |
| 0.0734 | 60.0 | 197340 | 12.2007 |
| 0.0729 | 61.0 | 200629 | 12.2388 |
| 0.0663 | 62.0 | 203918 | 12.2471 |
| 0.0662 | 63.0 | 207207 | 12.5830 |
| 0.064 | 64.0 | 210496 | 12.6105 |
| 0.0599 | 65.0 | 213785 | 12.3712 |
| 0.0604 | 66.0 | 217074 | 12.9249 |
| 0.0574 | 67.0 | 220363 | 12.7309 |
| 0.0538 | 68.0 | 223652 | 12.8068 |
| 0.0526 | 69.0 | 226941 | 13.4368 |
| 0.0471 | 70.0 | 230230 | 13.5148 |
| 0.0436 | 71.0 | 233519 | 13.3391 |
| 0.0448 | 72.0 | 236808 | 13.4100 |
| 0.0428 | 73.0 | 240097 | 13.5617 |
| 0.0401 | 74.0 | 243386 | 13.8674 |
| 0.035 | 75.0 | 246675 | 13.5746 |
| 0.0342 | 76.0 | 249964 | 13.5042 |
| 0.0344 | 77.0 | 253253 | 14.2085 |
| 0.0365 | 78.0 | 256542 | 13.6393 |
| 0.0306 | 79.0 | 259831 | 13.9807 |
| 0.0311 | 80.0 | 263120 | 13.9768 |
| 0.0353 | 81.0 | 266409 | 14.5245 |
| 0.0299 | 82.0 | 269698 | 13.9471 |
| 0.0263 | 83.0 | 272987 | 13.7899 |
| 0.0254 | 84.0 | 276276 | 14.3786 |
| 0.0267 | 85.0 | 279565 | 14.5611 |
| 0.022 | 86.0 | 282854 | 14.2658 |
| 0.0198 | 87.0 | 286143 | 14.9215 |
| 0.0193 | 88.0 | 289432 | 14.5650 |
| 0.0228 | 89.0 | 292721 | 14.7014 |
| 0.0184 | 90.0 | 296010 | 14.6946 |
| 0.0182 | 91.0 | 299299 | 14.6614 |
| 0.0188 | 92.0 | 302588 | 14.6915 |
| 0.0196 | 93.0 | 305877 | 14.7262 |
| 0.0138 | 94.0 | 309166 | 14.7625 |
| 0.0201 | 95.0 | 312455 | 15.0442 |
| 0.0189 | 96.0 | 315744 | 14.8832 |
| 0.0148 | 97.0 | 319033 | 14.8995 |
| 0.0129 | 98.0 | 322322 | 14.8974 |
| 0.0132 | 99.0 | 325611 | 14.9813 |
| 0.0139 | 100.0 | 328900 | 14.9594 |
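
In the table above, training loss falls steadily while validation loss reaches its minimum early and then climbs, a pattern consistent with overfitting. The following is a minimal sketch, using a handful of rows copied from the table, of how one might locate the epoch with the lowest validation loss and compare it with the final epoch. The names `rows`, `best_epoch`, and `last_epoch` are illustrative and not taken from the original training script.

```python
# (epoch, training_loss, validation_loss) copied from selected table rows.
rows = [
    (1, 3.9975, 3.8405),
    (2, 3.7311, 3.7114),
    (3, 3.5681, 3.6829),
    (4, 3.4101, 3.6368),
    (5, 3.2487, 3.6526),
    (6, 3.1143, 3.7567),
    (50, 0.1203, 11.1053),
    (100, 0.0139, 14.9594),
]

# Pick the row whose validation loss (third field) is smallest.
best_epoch, best_train, best_val = min(rows, key=lambda r: r[2])
last_epoch, last_train, last_val = rows[-1]

print(f"lowest validation loss: {best_val} at epoch {best_epoch}")
print(f"final validation loss:  {last_val} at epoch {last_epoch}")
print(f"train/validation gap at the end: {last_val - last_train:.4f}")
```

With the `Trainer` API, setting `load_best_model_at_end=True` together with `metric_for_best_model` is one way to keep the best checkpoint rather than the last one; whether that was done for this run is not stated on the card.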

### Framework versions

- Transformers 4.19.2

- PyTorch 1.11.0+cu102

- Datasets 2.2.2

- Tokenizers 0.12.1

|

eslamxm/AraT5-base-title-generation-finetune-ar-xlsum

|

eslamxm

| 2022-06-19T05:23:32Z | 28 | 1 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"t5",

"text2text-generation",

"summarization",

"Arat5-base",

"abstractive summarization",

"ar",

"xlsum",

"generated_from_trainer",

"dataset:xlsum",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

summarization

| 2022-06-18T19:19:57Z |

---

tags:

- summarization

- Arat5-base

- abstractive summarization

- ar

- xlsum

- generated_from_trainer

datasets:

- xlsum

model-index:

- name: AraT5-base-title-generation-finetune-ar-xlsum

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# AraT5-base-title-generation-finetune-ar-xlsum

This model is a fine-tuned version of [UBC-NLP/AraT5-base-title-generation](https://huggingface.co/UBC-NLP/AraT5-base-title-generation) on the xlsum dataset.

It achieves the following results on the evaluation set:

- Loss: 4.2837

- Rouge-1: 32.46

- Rouge-2: 15.15

- Rouge-l: 28.38

- Gen Len: 18.48

- Bertscore: 74.24

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0005

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- gradient_accumulation_steps: 16

- total_train_batch_size: 128