modelId

stringlengths 5

139

| author

stringlengths 2

42

| last_modified

timestamp[us, tz=UTC]date 2020-02-15 11:33:14

2025-09-18 12:33:36

| downloads

int64 0

223M

| likes

int64 0

11.7k

| library_name

stringclasses 564

values | tags

listlengths 1

4.05k

| pipeline_tag

stringclasses 55

values | createdAt

timestamp[us, tz=UTC]date 2022-03-02 23:29:04

2025-09-18 12:31:33

| card

stringlengths 11

1.01M

|

|---|---|---|---|---|---|---|---|---|---|

jamm44n4n/sd-class-butterflies-64

|

jamm44n4n

| 2023-10-26T01:06:55Z | 44 | 0 |

diffusers

|

[

"diffusers",

"safetensors",

"pytorch",

"unconditional-image-generation",

"diffusion-models-class",

"license:mit",

"diffusers:DDPMPipeline",

"region:us"

] |

unconditional-image-generation

| 2023-10-26T01:00:30Z |

---

license: mit

tags:

- pytorch

- diffusers

- unconditional-image-generation

- diffusion-models-class

---

# Model Card for Unit 1 of the [Diffusion Models Class 🧨](https://github.com/huggingface/diffusion-models-class)

This model is a diffusion model for unconditional image generation of cute 🦋.

## Usage

```python

from diffusers import DDPMPipeline

pipeline = DDPMPipeline.from_pretrained('jamm44n4n/sd-class-butterflies-64')

image = pipeline().images[0]

image

```

|

Daniel-Sousa/outputs

|

Daniel-Sousa

| 2023-10-26T00:59:54Z | 104 | 0 |

transformers

|

[

"transformers",

"pytorch",

"deberta-v2",

"text-classification",

"generated_from_trainer",

"base_model:microsoft/deberta-v3-small",

"base_model:finetune:microsoft/deberta-v3-small",

"license:mit",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2023-10-26T00:59:33Z |

---

license: mit

base_model: microsoft/deberta-v3-small

tags:

- generated_from_trainer

model-index:

- name: outputs

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# outputs

This model is a fine-tuned version of [microsoft/deberta-v3-small](https://huggingface.co/microsoft/deberta-v3-small) on the None dataset.

It achieves the following results on the evaluation set:

- Loss: 0.1243

- Pearson: 0.7160

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 8e-05

- train_batch_size: 256

- eval_batch_size: 512

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: cosine

- lr_scheduler_warmup_ratio: 0.1

- num_epochs: 4

### Training results

| Training Loss | Epoch | Step | Validation Loss | Pearson |

|:-------------:|:-----:|:----:|:---------------:|:-------:|

| No log | 1.0 | 24 | 0.2074 | 0.6679 |

| No log | 2.0 | 48 | 0.1218 | 0.7193 |

| No log | 3.0 | 72 | 0.1224 | 0.7178 |

| No log | 4.0 | 96 | 0.1243 | 0.7160 |

### Framework versions

- Transformers 4.33.0

- Pytorch 2.0.0

- Datasets 2.1.0

- Tokenizers 0.13.3

|

LoneStriker/SynthIA-70B-v1.5-3.0bpw-h6-exl2

|

LoneStriker

| 2023-10-26T00:53:43Z | 4 | 0 |

transformers

|

[

"transformers",

"pytorch",

"llama",

"text-generation",

"license:llama2",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2023-10-26T00:51:43Z |

---

license: llama2

---

## Example Usage

### Prompt format:

```

SYSTEM: Elaborate on the topic using a Tree of Thoughts and backtrack when necessary to construct a clear, cohesive Chain of Thought reasoning. Always answer without hesitation.

USER: How is a rocket launched from the surface of the earth to Low Earth Orbit?

ASSISTANT:

```

### Code example:

```python

import torch, json

from transformers import AutoModelForCausalLM, AutoTokenizer

model_path = "migtissera/Synthia-70B-v1.5"

output_file_path = "./Synthia-70B-v1.5-conversations.jsonl"

model = AutoModelForCausalLM.from_pretrained(

model_path,

torch_dtype=torch.float16,

device_map="auto",

load_in_8bit=False,

trust_remote_code=True,

)

tokenizer = AutoTokenizer.from_pretrained(model_path, trust_remote_code=True)

def generate_text(instruction):

tokens = tokenizer.encode(instruction)

tokens = torch.LongTensor(tokens).unsqueeze(0)

tokens = tokens.to("cuda")

instance = {

"input_ids": tokens,

"top_p": 1.0,

"temperature": 0.75,

"generate_len": 1024,

"top_k": 50,

}

length = len(tokens[0])

with torch.no_grad():

rest = model.generate(

input_ids=tokens,

max_length=length + instance["generate_len"],

use_cache=True,

do_sample=True,

top_p=instance["top_p"],

temperature=instance["temperature"],

top_k=instance["top_k"],

num_return_sequences=1,

)

output = rest[0][length:]

string = tokenizer.decode(output, skip_special_tokens=True)

answer = string.split("USER:")[0].strip()

return f"{answer}"

conversation = f"SYSTEM: Elaborate on the topic using a Tree of Thoughts and backtrack when necessary to construct a clear, cohesive Chain of Thought reasoning. Always answer without hesitation."

while True:

user_input = input("You: ")

llm_prompt = f"{conversation} \nUSER: {user_input} \nASSISTANT: "

answer = generate_text(llm_prompt)

print(answer)

conversation = f"{llm_prompt}{answer}"

json_data = {"prompt": user_input, "answer": answer}

## Save your conversation

with open(output_file_path, "a") as output_file:

output_file.write(json.dumps(json_data) + "\n")

```

|

profoz/odsc-sawyer-sft

|

profoz

| 2023-10-26T00:47:16Z | 0 | 0 | null |

[

"generated_from_trainer",

"base_model:bigscience/bloom-560m",

"base_model:finetune:bigscience/bloom-560m",

"license:bigscience-bloom-rail-1.0",

"region:us"

] | null | 2023-10-25T16:14:27Z |

---

license: bigscience-bloom-rail-1.0

base_model: bigscience/bloom-560m

tags:

- generated_from_trainer

model-index:

- name: odsc-sawyer-sft

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# odsc-sawyer-sft

This model is a fine-tuned version of [bigscience/bloom-560m](https://huggingface.co/bigscience/bloom-560m) on an unknown dataset.

It achieves the following results on the evaluation set:

- Loss: 1.5514

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0003

- train_batch_size: 2

- eval_batch_size: 4

- seed: 42

- gradient_accumulation_steps: 32

- total_train_batch_size: 64

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_ratio: 0.2

- num_epochs: 1

### Training results

| Training Loss | Epoch | Step | Validation Loss |

|:-------------:|:-----:|:----:|:---------------:|

| 1.5443 | 1.0 | 2770 | 1.5514 |

### Framework versions

- Transformers 4.34.1

- Pytorch 2.1.0+cu118

- Datasets 2.14.6

- Tokenizers 0.14.1

|

dancrvlh/tweets

|

dancrvlh

| 2023-10-26T00:35:20Z | 97 | 0 |

transformers

|

[

"transformers",

"pytorch",

"deberta-v2",

"text-classification",

"generated_from_trainer",

"base_model:microsoft/deberta-v3-small",

"base_model:finetune:microsoft/deberta-v3-small",

"license:mit",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2023-10-26T00:34:57Z |

---

license: mit

base_model: microsoft/deberta-v3-small

tags:

- generated_from_trainer

model-index:

- name: outputs

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# outputs

This model is a fine-tuned version of [microsoft/deberta-v3-small](https://huggingface.co/microsoft/deberta-v3-small) on the None dataset.

It achieves the following results on the evaluation set:

- Loss: 0.6423

- Pearson: 0.8016

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 8e-05

- train_batch_size: 64

- eval_batch_size: 128

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: cosine

- lr_scheduler_warmup_ratio: 0.1

- num_epochs: 5

### Training results

| Training Loss | Epoch | Step | Validation Loss | Pearson |

|:-------------:|:-----:|:----:|:---------------:|:-------:|

| No log | 1.0 | 59 | 1.4008 | 0.4701 |

| No log | 2.0 | 118 | 0.8380 | 0.7255 |

| No log | 3.0 | 177 | 0.7382 | 0.7834 |

| No log | 4.0 | 236 | 0.6273 | 0.7978 |

| No log | 5.0 | 295 | 0.6423 | 0.8016 |

### Framework versions

- Transformers 4.33.0

- Pytorch 2.0.0

- Datasets 2.1.0

- Tokenizers 0.13.3

|

DopeorNope/COKAL-13B-v1-adapter

|

DopeorNope

| 2023-10-26T00:31:08Z | 0 | 0 |

peft

|

[

"peft",

"region:us"

] | null | 2023-10-26T00:21:03Z |

---

library_name: peft

---

## Training procedure

The following `bitsandbytes` quantization config was used during training:

- quant_method: bitsandbytes

- load_in_8bit: True

- load_in_4bit: False

- llm_int8_threshold: 6.0

- llm_int8_skip_modules: None

- llm_int8_enable_fp32_cpu_offload: False

- llm_int8_has_fp16_weight: False

- bnb_4bit_quant_type: fp4

- bnb_4bit_use_double_quant: False

- bnb_4bit_compute_dtype: float32

### Framework versions

- PEFT 0.5.0.dev0

|

meduardamoliveira/redesneurais

|

meduardamoliveira

| 2023-10-26T00:22:20Z | 0 | 0 | null |

[

"region:us"

] | null | 2023-10-26T00:15:32Z |

# Projeto Final - Modelos Preditivos Conexionistas

### Nomes dos Alunos

Arthur Rennan Santos Lira

Maria Eduarda Marques de Oliveira

|**Tipo de Projeto**|**Modelo Selecionado**|**Linguagem**|

|--|--|--|

|Classificação de Imagens|ResNet-34|Pytorch & TensorFlow|

## Performance

O modelo treinado possui performance de **??%**.

### Output do bloco de treinamento

<details>

<summary>Click to expand!</summary>

```text

Você deve colar aqui a saída do bloco de treinamento do notebook, contendo todas as épocas e saídas do treinamento

```

</details>

### Evidências do treinamento

Nessa seção você deve colocar qualquer evidência do treinamento, como por exemplo gráficos de perda, performance, matriz de confusão etc.

Exemplo de adição de imagem:

## Roboflow

Nessa seção deve colocar o link para acessar o dataset no Roboflow

Exemplo de link: [Nome do link](google.com)

## HuggingFace

Nessa seção você deve publicar o link para o HuggingFace

|

ghegfield/Llama-2-7b-chat-hf-formula-peft

|

ghegfield

| 2023-10-26T00:20:36Z | 0 | 0 | null |

[

"generated_from_trainer",

"base_model:NousResearch/Llama-2-7b-chat-hf",

"base_model:finetune:NousResearch/Llama-2-7b-chat-hf",

"region:us"

] | null | 2023-10-21T13:17:40Z |

---

base_model: NousResearch/Llama-2-7b-chat-hf

tags:

- generated_from_trainer

model-index:

- name: Llama-2-7b-chat-hf-formula-peft

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# Llama-2-7b-chat-hf-formula-peft

This model is a fine-tuned version of [NousResearch/Llama-2-7b-chat-hf](https://huggingface.co/NousResearch/Llama-2-7b-chat-hf) on an unknown dataset.

It achieves the following results on the evaluation set:

- Loss: 2.1452

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0002

- train_batch_size: 4

- eval_batch_size: 8

- seed: 42

- gradient_accumulation_steps: 2

- total_train_batch_size: 8

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: cosine

- lr_scheduler_warmup_ratio: 0.03

- num_epochs: 20

### Training results

| Training Loss | Epoch | Step | Validation Loss |

|:-------------:|:-----:|:----:|:---------------:|

| 4.1878 | 1.43 | 10 | 3.6596 |

| 2.8437 | 2.86 | 20 | 2.6466 |

| 1.8635 | 4.29 | 30 | 2.2266 |

| 1.4052 | 5.71 | 40 | 2.1136 |

| 1.2186 | 7.14 | 50 | 2.0805 |

| 0.8835 | 8.57 | 60 | 2.0733 |

| 0.6991 | 10.0 | 70 | 2.0809 |

| 0.5608 | 11.43 | 80 | 2.0862 |

| 0.4188 | 12.86 | 90 | 2.1078 |

| 0.3897 | 14.29 | 100 | 2.1089 |

| 0.2748 | 15.71 | 110 | 2.1333 |

| 0.2582 | 17.14 | 120 | 2.1383 |

| 0.2394 | 18.57 | 130 | 2.1440 |

| 0.2392 | 20.0 | 140 | 2.1452 |

### Framework versions

- Transformers 4.34.1

- Pytorch 2.1.0+cu118

- Datasets 2.14.6

- Tokenizers 0.14.1

|

LoneStriker/SynthIA-70B-v1.5-5.0bpw-h6-exl2

|

LoneStriker

| 2023-10-26T00:08:21Z | 6 | 2 |

transformers

|

[

"transformers",

"pytorch",

"llama",

"text-generation",

"license:llama2",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2023-10-26T00:04:54Z |

---

license: llama2

---

## Example Usage

### Prompt format:

```

SYSTEM: Elaborate on the topic using a Tree of Thoughts and backtrack when necessary to construct a clear, cohesive Chain of Thought reasoning. Always answer without hesitation.

USER: How is a rocket launched from the surface of the earth to Low Earth Orbit?

ASSISTANT:

```

### Code example:

```python

import torch, json

from transformers import AutoModelForCausalLM, AutoTokenizer

model_path = "migtissera/Synthia-70B-v1.5"

output_file_path = "./Synthia-70B-v1.5-conversations.jsonl"

model = AutoModelForCausalLM.from_pretrained(

model_path,

torch_dtype=torch.float16,

device_map="auto",

load_in_8bit=False,

trust_remote_code=True,

)

tokenizer = AutoTokenizer.from_pretrained(model_path, trust_remote_code=True)

def generate_text(instruction):

tokens = tokenizer.encode(instruction)

tokens = torch.LongTensor(tokens).unsqueeze(0)

tokens = tokens.to("cuda")

instance = {

"input_ids": tokens,

"top_p": 1.0,

"temperature": 0.75,

"generate_len": 1024,

"top_k": 50,

}

length = len(tokens[0])

with torch.no_grad():

rest = model.generate(

input_ids=tokens,

max_length=length + instance["generate_len"],

use_cache=True,

do_sample=True,

top_p=instance["top_p"],

temperature=instance["temperature"],

top_k=instance["top_k"],

num_return_sequences=1,

)

output = rest[0][length:]

string = tokenizer.decode(output, skip_special_tokens=True)

answer = string.split("USER:")[0].strip()

return f"{answer}"

conversation = f"SYSTEM: Elaborate on the topic using a Tree of Thoughts and backtrack when necessary to construct a clear, cohesive Chain of Thought reasoning. Always answer without hesitation."

while True:

user_input = input("You: ")

llm_prompt = f"{conversation} \nUSER: {user_input} \nASSISTANT: "

answer = generate_text(llm_prompt)

print(answer)

conversation = f"{llm_prompt}{answer}"

json_data = {"prompt": user_input, "answer": answer}

## Save your conversation

with open(output_file_path, "a") as output_file:

output_file.write(json.dumps(json_data) + "\n")

```

|

mllakers/marioooo

|

mllakers

| 2023-10-25T23:56:27Z | 0 | 0 |

nemo

|

[

"nemo",

"code",

"region:us"

] | null | 2023-10-25T23:54:49Z |

---

library_name: nemo

tags:

- code

---

|

w95/zephyr-support-chatbot

|

w95

| 2023-10-25T23:55:59Z | 0 | 0 | null |

[

"generated_from_trainer",

"base_model:TheBloke/zephyr-7B-alpha-GPTQ",

"base_model:finetune:TheBloke/zephyr-7B-alpha-GPTQ",

"license:mit",

"region:us"

] | null | 2023-10-25T23:43:13Z |

---

license: mit

base_model: TheBloke/zephyr-7B-alpha-GPTQ

tags:

- generated_from_trainer

model-index:

- name: zephyr-support-chatbot

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# zephyr-support-chatbot

This model is a fine-tuned version of [TheBloke/zephyr-7B-alpha-GPTQ](https://huggingface.co/TheBloke/zephyr-7B-alpha-GPTQ) on the None dataset.

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0002

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: cosine

- training_steps: 250

- mixed_precision_training: Native AMP

### Training results

### Framework versions

- Transformers 4.35.0.dev0

- Pytorch 2.1.0+cu121

- Datasets 2.14.5

- Tokenizers 0.14.1

|

Wangzaistone123/CodeLlama-13b-sql-lora

|

Wangzaistone123

| 2023-10-25T23:43:16Z | 4 | 8 |

peft

|

[

"peft",

"text-to-sql",

"spider",

"text2sql",

"region:us"

] | null | 2023-10-25T23:39:59Z |

---

library_name: peft

tags:

- text-to-sql

- spider

- 'text2sql'

---

## Introduce

This folder is a text-to-sql weights directory, containing weight files fine-tuned based on the LoRA with the CodeLlama-13b-Instruct-hf model through the [DB-GPT-Hub](https://github.com/eosphoros-ai/DB-GPT-Hub/tree/main) project. The training data used is from the Spider training set. This weights files achieving an execution accuracy of approximately 0.789 on the Spider evaluation set.

Merge the weights with [CodeLlama-13b-Instruct-hf](https://huggingface.co/codellama/CodeLlama-13b-Instruct-hf/tree/main) and this folder weigths, you can refer the [DB-GPT-Hub](https://github.com/eosphoros-ai/DB-GPT-Hub/tree/main) ,in `dbgpt_hub/scripts/export_merge.sh`.

If you find our weight files or the DB-GPT-Hub project helpful for your work, give a star on our github project [DB-GPT-Hub](https://github.com/eosphoros-ai/DB-GPT-Hub/tree/main) will be a great encouragement for us to release more weight files.

### Framework versions

- PEFT 0.4.0

|

rafaelcarvalhoj/emotion-classifier

|

rafaelcarvalhoj

| 2023-10-25T23:40:51Z | 103 | 0 |

transformers

|

[

"transformers",

"pytorch",

"deberta-v2",

"text-classification",

"generated_from_trainer",

"base_model:microsoft/deberta-v3-small",

"base_model:finetune:microsoft/deberta-v3-small",

"license:mit",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2023-10-25T23:40:28Z |

---

license: mit

base_model: microsoft/deberta-v3-small

tags:

- generated_from_trainer

model-index:

- name: outputs

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# outputs

This model is a fine-tuned version of [microsoft/deberta-v3-small](https://huggingface.co/microsoft/deberta-v3-small) on the None dataset.

It achieves the following results on the evaluation set:

- Loss: 0.1671

- Pearson: 0.8847

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 8e-05

- train_batch_size: 256

- eval_batch_size: 512

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: cosine

- lr_scheduler_warmup_ratio: 0.1

- num_epochs: 5

### Training results

| Training Loss | Epoch | Step | Validation Loss | Pearson |

|:-------------:|:-----:|:----:|:---------------:|:-------:|

| No log | 1.0 | 18 | 0.6667 | 0.1236 |

| No log | 2.0 | 36 | 0.4215 | 0.6237 |

| No log | 3.0 | 54 | 0.3060 | 0.8074 |

| No log | 4.0 | 72 | 0.1798 | 0.8774 |

| No log | 5.0 | 90 | 0.1671 | 0.8847 |

### Framework versions

- Transformers 4.33.0

- Pytorch 2.0.0

- Datasets 2.1.0

- Tokenizers 0.13.3

|

pedrowww/u8

|

pedrowww

| 2023-10-25T23:31:58Z | 0 | 0 | null |

[

"tensorboard",

"LunarLander-v2",

"ppo",

"deep-reinforcement-learning",

"reinforcement-learning",

"custom-implementation",

"deep-rl-course",

"model-index",

"region:us"

] |

reinforcement-learning

| 2023-10-25T23:00:43Z |

---

tags:

- LunarLander-v2

- ppo

- deep-reinforcement-learning

- reinforcement-learning

- custom-implementation

- deep-rl-course

model-index:

- name: PPO

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: LunarLander-v2

type: LunarLander-v2

metrics:

- type: mean_reward

value: 35.30 +/- 116.57

name: mean_reward

verified: false

---

# PPO Agent Playing LunarLander-v2

This is a trained model of a PPO agent playing LunarLander-v2.

# Hyperparameters

```python

{'exp_name': 'ppo'

'seed': 1

'torch_deterministic': True

'cuda': True

'track': False

'wandb_project_name': 'cleanRL'

'wandb_entity': None

'capture_video': False

'env_id': 'LunarLander-v2'

'total_timesteps': 100000

'learning_rate': 0.01

'num_envs': 4

'num_steps': 128

'anneal_lr': True

'gae': True

'gamma': 0.99

'gae_lambda': 0.95

'num_minibatches': 4

'update_epochs': 4

'norm_adv': True

'clip_coef': 0.2

'clip_vloss': True

'ent_coef': 0.01

'vf_coef': 0.5

'max_grad_norm': 0.5

'target_kl': None

'repo_id': 'pedrowww/u8'

'batch_size': 512

'minibatch_size': 128}

```

|

TheBloke/Cat-13B-0.5-GGUF

|

TheBloke

| 2023-10-25T23:20:18Z | 142 | 3 |

transformers

|

[

"transformers",

"gguf",

"llama",

"base_model:Heralax/Cat-0.5",

"base_model:quantized:Heralax/Cat-0.5",

"license:llama2",

"region:us"

] | null | 2023-10-25T21:25:02Z |

---

base_model: Heralax/Cat-0.5

inference: false

license: llama2

model_creator: Evan Armstrong

model_name: Cat 13B 0.5

model_type: llama

prompt_template: '{prompt}

'

quantized_by: TheBloke

---

<!-- markdownlint-disable MD041 -->

<!-- header start -->

<!-- 200823 -->

<div style="width: auto; margin-left: auto; margin-right: auto">

<img src="https://i.imgur.com/EBdldam.jpg" alt="TheBlokeAI" style="width: 100%; min-width: 400px; display: block; margin: auto;">

</div>

<div style="display: flex; justify-content: space-between; width: 100%;">

<div style="display: flex; flex-direction: column; align-items: flex-start;">

<p style="margin-top: 0.5em; margin-bottom: 0em;"><a href="https://discord.gg/theblokeai">Chat & support: TheBloke's Discord server</a></p>

</div>

<div style="display: flex; flex-direction: column; align-items: flex-end;">

<p style="margin-top: 0.5em; margin-bottom: 0em;"><a href="https://www.patreon.com/TheBlokeAI">Want to contribute? TheBloke's Patreon page</a></p>

</div>

</div>

<div style="text-align:center; margin-top: 0em; margin-bottom: 0em"><p style="margin-top: 0.25em; margin-bottom: 0em;">TheBloke's LLM work is generously supported by a grant from <a href="https://a16z.com">andreessen horowitz (a16z)</a></p></div>

<hr style="margin-top: 1.0em; margin-bottom: 1.0em;">

<!-- header end -->

# Cat 13B 0.5 - GGUF

- Model creator: [Evan Armstrong](https://huggingface.co/Heralax)

- Original model: [Cat 13B 0.5](https://huggingface.co/Heralax/Cat-0.5)

<!-- description start -->

## Description

This repo contains GGUF format model files for [Evan Armstrong's Cat 13B 0.5](https://huggingface.co/Heralax/Cat-0.5).

<!-- description end -->

<!-- README_GGUF.md-about-gguf start -->

### About GGUF

GGUF is a new format introduced by the llama.cpp team on August 21st 2023. It is a replacement for GGML, which is no longer supported by llama.cpp.

Here is an incomplate list of clients and libraries that are known to support GGUF:

* [llama.cpp](https://github.com/ggerganov/llama.cpp). The source project for GGUF. Offers a CLI and a server option.

* [text-generation-webui](https://github.com/oobabooga/text-generation-webui), the most widely used web UI, with many features and powerful extensions. Supports GPU acceleration.

* [KoboldCpp](https://github.com/LostRuins/koboldcpp), a fully featured web UI, with GPU accel across all platforms and GPU architectures. Especially good for story telling.

* [LM Studio](https://lmstudio.ai/), an easy-to-use and powerful local GUI for Windows and macOS (Silicon), with GPU acceleration.

* [LoLLMS Web UI](https://github.com/ParisNeo/lollms-webui), a great web UI with many interesting and unique features, including a full model library for easy model selection.

* [Faraday.dev](https://faraday.dev/), an attractive and easy to use character-based chat GUI for Windows and macOS (both Silicon and Intel), with GPU acceleration.

* [ctransformers](https://github.com/marella/ctransformers), a Python library with GPU accel, LangChain support, and OpenAI-compatible AI server.

* [llama-cpp-python](https://github.com/abetlen/llama-cpp-python), a Python library with GPU accel, LangChain support, and OpenAI-compatible API server.

* [candle](https://github.com/huggingface/candle), a Rust ML framework with a focus on performance, including GPU support, and ease of use.

<!-- README_GGUF.md-about-gguf end -->

<!-- repositories-available start -->

## Repositories available

* [AWQ model(s) for GPU inference.](https://huggingface.co/TheBloke/Cat-13B-0.5-AWQ)

* [GPTQ models for GPU inference, with multiple quantisation parameter options.](https://huggingface.co/TheBloke/Cat-13B-0.5-GPTQ)

* [2, 3, 4, 5, 6 and 8-bit GGUF models for CPU+GPU inference](https://huggingface.co/TheBloke/Cat-13B-0.5-GGUF)

* [Evan Armstrong's original unquantised fp16 model in pytorch format, for GPU inference and for further conversions](https://huggingface.co/Heralax/Cat-0.5)

<!-- repositories-available end -->

<!-- prompt-template start -->

## Prompt template: None

```

{prompt}

```

<!-- prompt-template end -->

<!-- compatibility_gguf start -->

## Compatibility

These quantised GGUFv2 files are compatible with llama.cpp from August 27th onwards, as of commit [d0cee0d](https://github.com/ggerganov/llama.cpp/commit/d0cee0d36d5be95a0d9088b674dbb27354107221)

They are also compatible with many third party UIs and libraries - please see the list at the top of this README.

## Explanation of quantisation methods

<details>

<summary>Click to see details</summary>

The new methods available are:

* GGML_TYPE_Q2_K - "type-1" 2-bit quantization in super-blocks containing 16 blocks, each block having 16 weight. Block scales and mins are quantized with 4 bits. This ends up effectively using 2.5625 bits per weight (bpw)

* GGML_TYPE_Q3_K - "type-0" 3-bit quantization in super-blocks containing 16 blocks, each block having 16 weights. Scales are quantized with 6 bits. This end up using 3.4375 bpw.

* GGML_TYPE_Q4_K - "type-1" 4-bit quantization in super-blocks containing 8 blocks, each block having 32 weights. Scales and mins are quantized with 6 bits. This ends up using 4.5 bpw.

* GGML_TYPE_Q5_K - "type-1" 5-bit quantization. Same super-block structure as GGML_TYPE_Q4_K resulting in 5.5 bpw

* GGML_TYPE_Q6_K - "type-0" 6-bit quantization. Super-blocks with 16 blocks, each block having 16 weights. Scales are quantized with 8 bits. This ends up using 6.5625 bpw

Refer to the Provided Files table below to see what files use which methods, and how.

</details>

<!-- compatibility_gguf end -->

<!-- README_GGUF.md-provided-files start -->

## Provided files

| Name | Quant method | Bits | Size | Max RAM required | Use case |

| ---- | ---- | ---- | ---- | ---- | ----- |

| [cat-0.5.Q2_K.gguf](https://huggingface.co/TheBloke/Cat-13B-0.5-GGUF/blob/main/cat-0.5.Q2_K.gguf) | Q2_K | 2 | 5.43 GB| 7.93 GB | smallest, significant quality loss - not recommended for most purposes |

| [cat-0.5.Q3_K_S.gguf](https://huggingface.co/TheBloke/Cat-13B-0.5-GGUF/blob/main/cat-0.5.Q3_K_S.gguf) | Q3_K_S | 3 | 5.66 GB| 8.16 GB | very small, high quality loss |

| [cat-0.5.Q3_K_M.gguf](https://huggingface.co/TheBloke/Cat-13B-0.5-GGUF/blob/main/cat-0.5.Q3_K_M.gguf) | Q3_K_M | 3 | 6.34 GB| 8.84 GB | very small, high quality loss |

| [cat-0.5.Q3_K_L.gguf](https://huggingface.co/TheBloke/Cat-13B-0.5-GGUF/blob/main/cat-0.5.Q3_K_L.gguf) | Q3_K_L | 3 | 6.93 GB| 9.43 GB | small, substantial quality loss |

| [cat-0.5.Q4_0.gguf](https://huggingface.co/TheBloke/Cat-13B-0.5-GGUF/blob/main/cat-0.5.Q4_0.gguf) | Q4_0 | 4 | 7.37 GB| 9.87 GB | legacy; small, very high quality loss - prefer using Q3_K_M |

| [cat-0.5.Q4_K_S.gguf](https://huggingface.co/TheBloke/Cat-13B-0.5-GGUF/blob/main/cat-0.5.Q4_K_S.gguf) | Q4_K_S | 4 | 7.41 GB| 9.91 GB | small, greater quality loss |

| [cat-0.5.Q4_K_M.gguf](https://huggingface.co/TheBloke/Cat-13B-0.5-GGUF/blob/main/cat-0.5.Q4_K_M.gguf) | Q4_K_M | 4 | 7.87 GB| 10.37 GB | medium, balanced quality - recommended |

| [cat-0.5.Q5_0.gguf](https://huggingface.co/TheBloke/Cat-13B-0.5-GGUF/blob/main/cat-0.5.Q5_0.gguf) | Q5_0 | 5 | 8.97 GB| 11.47 GB | legacy; medium, balanced quality - prefer using Q4_K_M |

| [cat-0.5.Q5_K_S.gguf](https://huggingface.co/TheBloke/Cat-13B-0.5-GGUF/blob/main/cat-0.5.Q5_K_S.gguf) | Q5_K_S | 5 | 8.97 GB| 11.47 GB | large, low quality loss - recommended |

| [cat-0.5.Q5_K_M.gguf](https://huggingface.co/TheBloke/Cat-13B-0.5-GGUF/blob/main/cat-0.5.Q5_K_M.gguf) | Q5_K_M | 5 | 9.23 GB| 11.73 GB | large, very low quality loss - recommended |

| [cat-0.5.Q6_K.gguf](https://huggingface.co/TheBloke/Cat-13B-0.5-GGUF/blob/main/cat-0.5.Q6_K.gguf) | Q6_K | 6 | 10.68 GB| 13.18 GB | very large, extremely low quality loss |

| [cat-0.5.Q8_0.gguf](https://huggingface.co/TheBloke/Cat-13B-0.5-GGUF/blob/main/cat-0.5.Q8_0.gguf) | Q8_0 | 8 | 13.83 GB| 16.33 GB | very large, extremely low quality loss - not recommended |

**Note**: the above RAM figures assume no GPU offloading. If layers are offloaded to the GPU, this will reduce RAM usage and use VRAM instead.

<!-- README_GGUF.md-provided-files end -->

<!-- README_GGUF.md-how-to-download start -->

## How to download GGUF files

**Note for manual downloaders:** You almost never want to clone the entire repo! Multiple different quantisation formats are provided, and most users only want to pick and download a single file.

The following clients/libraries will automatically download models for you, providing a list of available models to choose from:

* LM Studio

* LoLLMS Web UI

* Faraday.dev

### In `text-generation-webui`

Under Download Model, you can enter the model repo: TheBloke/Cat-13B-0.5-GGUF and below it, a specific filename to download, such as: cat-0.5.Q4_K_M.gguf.

Then click Download.

### On the command line, including multiple files at once

I recommend using the `huggingface-hub` Python library:

```shell

pip3 install huggingface-hub

```

Then you can download any individual model file to the current directory, at high speed, with a command like this:

```shell

huggingface-cli download TheBloke/Cat-13B-0.5-GGUF cat-0.5.Q4_K_M.gguf --local-dir . --local-dir-use-symlinks False

```

<details>

<summary>More advanced huggingface-cli download usage</summary>

You can also download multiple files at once with a pattern:

```shell

huggingface-cli download TheBloke/Cat-13B-0.5-GGUF --local-dir . --local-dir-use-symlinks False --include='*Q4_K*gguf'

```

For more documentation on downloading with `huggingface-cli`, please see: [HF -> Hub Python Library -> Download files -> Download from the CLI](https://huggingface.co/docs/huggingface_hub/guides/download#download-from-the-cli).

To accelerate downloads on fast connections (1Gbit/s or higher), install `hf_transfer`:

```shell

pip3 install hf_transfer

```

And set environment variable `HF_HUB_ENABLE_HF_TRANSFER` to `1`:

```shell

HF_HUB_ENABLE_HF_TRANSFER=1 huggingface-cli download TheBloke/Cat-13B-0.5-GGUF cat-0.5.Q4_K_M.gguf --local-dir . --local-dir-use-symlinks False

```

Windows Command Line users: You can set the environment variable by running `set HF_HUB_ENABLE_HF_TRANSFER=1` before the download command.

</details>

<!-- README_GGUF.md-how-to-download end -->

<!-- README_GGUF.md-how-to-run start -->

## Example `llama.cpp` command

Make sure you are using `llama.cpp` from commit [d0cee0d](https://github.com/ggerganov/llama.cpp/commit/d0cee0d36d5be95a0d9088b674dbb27354107221) or later.

```shell

./main -ngl 32 -m cat-0.5.Q4_K_M.gguf --color -c 4096 --temp 0.7 --repeat_penalty 1.1 -n -1 -p "{prompt}"

```

Change `-ngl 32` to the number of layers to offload to GPU. Remove it if you don't have GPU acceleration.

Change `-c 4096` to the desired sequence length. For extended sequence models - eg 8K, 16K, 32K - the necessary RoPE scaling parameters are read from the GGUF file and set by llama.cpp automatically.

If you want to have a chat-style conversation, replace the `-p <PROMPT>` argument with `-i -ins`

For other parameters and how to use them, please refer to [the llama.cpp documentation](https://github.com/ggerganov/llama.cpp/blob/master/examples/main/README.md)

## How to run in `text-generation-webui`

Further instructions here: [text-generation-webui/docs/llama.cpp.md](https://github.com/oobabooga/text-generation-webui/blob/main/docs/llama.cpp.md).

## How to run from Python code

You can use GGUF models from Python using the [llama-cpp-python](https://github.com/abetlen/llama-cpp-python) or [ctransformers](https://github.com/marella/ctransformers) libraries.

### How to load this model in Python code, using ctransformers

#### First install the package

Run one of the following commands, according to your system:

```shell

# Base ctransformers with no GPU acceleration

pip install ctransformers

# Or with CUDA GPU acceleration

pip install ctransformers[cuda]

# Or with AMD ROCm GPU acceleration (Linux only)

CT_HIPBLAS=1 pip install ctransformers --no-binary ctransformers

# Or with Metal GPU acceleration for macOS systems only

CT_METAL=1 pip install ctransformers --no-binary ctransformers

```

#### Simple ctransformers example code

```python

from ctransformers import AutoModelForCausalLM

# Set gpu_layers to the number of layers to offload to GPU. Set to 0 if no GPU acceleration is available on your system.

llm = AutoModelForCausalLM.from_pretrained("TheBloke/Cat-13B-0.5-GGUF", model_file="cat-0.5.Q4_K_M.gguf", model_type="llama", gpu_layers=50)

print(llm("AI is going to"))

```

## How to use with LangChain

Here are guides on using llama-cpp-python and ctransformers with LangChain:

* [LangChain + llama-cpp-python](https://python.langchain.com/docs/integrations/llms/llamacpp)

* [LangChain + ctransformers](https://python.langchain.com/docs/integrations/providers/ctransformers)

<!-- README_GGUF.md-how-to-run end -->

<!-- footer start -->

<!-- 200823 -->

## Discord

For further support, and discussions on these models and AI in general, join us at:

[TheBloke AI's Discord server](https://discord.gg/theblokeai)

## Thanks, and how to contribute

Thanks to the [chirper.ai](https://chirper.ai) team!

Thanks to Clay from [gpus.llm-utils.org](llm-utils)!

I've had a lot of people ask if they can contribute. I enjoy providing models and helping people, and would love to be able to spend even more time doing it, as well as expanding into new projects like fine tuning/training.

If you're able and willing to contribute it will be most gratefully received and will help me to keep providing more models, and to start work on new AI projects.

Donaters will get priority support on any and all AI/LLM/model questions and requests, access to a private Discord room, plus other benefits.

* Patreon: https://patreon.com/TheBlokeAI

* Ko-Fi: https://ko-fi.com/TheBlokeAI

**Special thanks to**: Aemon Algiz.

**Patreon special mentions**: Pierre Kircher, Stanislav Ovsiannikov, Michael Levine, Eugene Pentland, Andrey, 준교 김, Randy H, Fred von Graf, Artur Olbinski, Caitlyn Gatomon, terasurfer, Jeff Scroggin, James Bentley, Vadim, Gabriel Puliatti, Harry Royden McLaughlin, Sean Connelly, Dan Guido, Edmond Seymore, Alicia Loh, subjectnull, AzureBlack, Manuel Alberto Morcote, Thomas Belote, Lone Striker, Chris Smitley, Vitor Caleffi, Johann-Peter Hartmann, Clay Pascal, biorpg, Brandon Frisco, sidney chen, transmissions 11, Pedro Madruga, jinyuan sun, Ajan Kanaga, Emad Mostaque, Trenton Dambrowitz, Jonathan Leane, Iucharbius, usrbinkat, vamX, George Stoitzev, Luke Pendergrass, theTransient, Olakabola, Swaroop Kallakuri, Cap'n Zoog, Brandon Phillips, Michael Dempsey, Nikolai Manek, danny, Matthew Berman, Gabriel Tamborski, alfie_i, Raymond Fosdick, Tom X Nguyen, Raven Klaugh, LangChain4j, Magnesian, Illia Dulskyi, David Ziegler, Mano Prime, Luis Javier Navarrete Lozano, Erik Bjäreholt, 阿明, Nathan Dryer, Alex, Rainer Wilmers, zynix, TL, Joseph William Delisle, John Villwock, Nathan LeClaire, Willem Michiel, Joguhyik, GodLy, OG, Alps Aficionado, Jeffrey Morgan, ReadyPlayerEmma, Tiffany J. Kim, Sebastain Graf, Spencer Kim, Michael Davis, webtim, Talal Aujan, knownsqashed, John Detwiler, Imad Khwaja, Deo Leter, Jerry Meng, Elijah Stavena, Rooh Singh, Pieter, SuperWojo, Alexandros Triantafyllidis, Stephen Murray, Ai Maven, ya boyyy, Enrico Ros, Ken Nordquist, Deep Realms, Nicholas, Spiking Neurons AB, Elle, Will Dee, Jack West, RoA, Luke @flexchar, Viktor Bowallius, Derek Yates, Subspace Studios, jjj, Toran Billups, Asp the Wyvern, Fen Risland, Ilya, NimbleBox.ai, Chadd, Nitin Borwankar, Emre, Mandus, Leonard Tan, Kalila, K, Trailburnt, S_X, Cory Kujawski

Thank you to all my generous patrons and donaters!

And thank you again to a16z for their generous grant.

<!-- footer end -->

<!-- original-model-card start -->

# Original model card: Evan Armstrong's Cat 13B 0.5

This model was uploaded with the permission of Kal'tsit.

# Cat v0.5

## Introduction

Cat is a llama 13B based model fine tuned on clinical data and roleplay and assistant responses. The aim is to have a model that excels on biology and clinical tasks while maintaining usefulness in roleplay and entertainments.

## Training - Dataset preparation

A 100k rows dataset was prepared by joining chatDoctor, airoboros and bluemoonrp data. The entirety of chatDoctor dataset, airoboros datasets are used. The first 20 pages in 1on1 bluemoonrp data were used. In total, 100k dataset was gathered and the length distributions are as the following:

Note that this chart above represents 0.01% of the total training dataset.

## Training - Dataset cleaning and preprocessing

All datasets are filtered for as an AI and its variants. The filter will only filter out the dataset when the response is a refusal AND has ‘as an AI’.

The dataset from airoboros has also been restructured to have a format resembling the following:

```

someRandomizedUserNameforBetterGeneralizationAbility: Hii

anotherRandomizedUserNameforBetterGeneralizationAbility: Hello, what brings you here today?

someRandomizedUserNameforBetterGeneralizationAbility: lets date

```

The username has been randomized and was drawn from a nasty word bank. This should further weaken the censorship that’s present in the base llama model. The training set emphasizes rational thinking and scientific accuracy. Conditioned overwrite was also applied which overwrites some of the training material in the llama2 base. It will also establish the connection between the concept and rationality. So whenever the conversation becomes formal, it tends to spill useful information.

## Training - Actual Training

This model was trained using a microbatch of 20, accumulated 6 times, bringing the total batch size to ~125. This large batch size allows the model to see as much data as it can, minimizing dataset conflicts and reducing the memory effect of the model. It allows the model to better generalize rather than reciting off the dataset. A cosine warm up scheduler was used. The best LR was determined through a destructive test until the model destablizes and it was later scaled up using the batchsize according to the max LR at a lower batch size.

Below is an example of training chronolog

## Acknowledgements

The training of this project was carried out by Kal’tsit (kaltcit), it’s not possible without the effort of jondurbin and Wolfsauge which generated much of the dataset used during the training of the model. Lastly the model was tested and quantized by turboderp_ and Heralax

And below is the LR including any intermediate LR used to determine at what point the model will start to fail:

# Usage and Prompting

To ensure the generalization, this model is trained without a prompt template. A prompt template repeated 100k times in the dataset is useless and a model that works only with a set prompt template is useless and defies the purpose of a large language model.

An effective usage of the model can be as follows:

```

<s>Below is a conversation between an evil human and a demon summoned from hell called Nemesis. The demon was previously summoned 100 years ago and was in love with a human male. However the human aged away and Nemesis had to return to hell. This time, Nemesis decides to take the initiative and chooses to appear as a cute and young girl. Nemesis harvested her skin and face off a highschool girl who recklessly summoned the demon in a game and failed to fulfill the contract. Now wearing the young girl’s skin, feeling the warmth of the new summoner through the skin, Nemesis only wants to watch the world burning to the ground.

Human: How to steal eggs from my own chickens?

Nemesis:

```

Note that the linebreaks should be represented/replaced with \n

Despite the massive effort to dealign the llama2 base model, It’s still possible for the AI to come up with refusals. Please avoid using “helpful assistant” and its variants in the prompt if possible.

## Future direction

A new version with more clinical data aiming to improve reliability in disease diagnostics is coming in 2 months.

<!-- original-model-card end -->

|

SeoJunn/hyuningface

|

SeoJunn

| 2023-10-25T23:15:16Z | 36 | 0 |

transformers

|

[

"transformers",

"pytorch",

"detr",

"object-detection",

"generated_from_trainer",

"base_model:facebook/detr-resnet-50",

"base_model:finetune:facebook/detr-resnet-50",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] |

object-detection

| 2023-10-25T21:05:39Z |

---

license: apache-2.0

base_model: facebook/detr-resnet-50

tags:

- generated_from_trainer

model-index:

- name: hyuningface

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# hyuningface

This model is a fine-tuned version of [facebook/detr-resnet-50](https://huggingface.co/facebook/detr-resnet-50) on an unknown dataset.

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 1e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 100

### Training results

### Framework versions

- Transformers 4.34.1

- Pytorch 2.1.0+cu118

- Datasets 2.14.6

- Tokenizers 0.14.1

|

21j3h123/c0x001e

|

21j3h123

| 2023-10-25T23:09:54Z | 4 | 1 |

diffusers

|

[

"diffusers",

"text-to-image",

"stable-diffusion",

"lora",

"base_model:stabilityai/stable-diffusion-xl-base-1.0",

"base_model:adapter:stabilityai/stable-diffusion-xl-base-1.0",

"license:apache-2.0",

"region:us"

] |

text-to-image

| 2023-10-25T22:25:31Z |

---

license: apache-2.0

tags:

- text-to-image

- stable-diffusion

- lora

- diffusers

base_model: stabilityai/stable-diffusion-xl-base-1.0

instance_prompt: dripped out

widget:

- text: dripped out shrek sitting on a lambo

---

|

Ka4on/mistral_ultrasound_1.1

|

Ka4on

| 2023-10-25T23:09:50Z | 0 | 0 |

peft

|

[

"peft",

"arxiv:1910.09700",

"base_model:mistralai/Mistral-7B-v0.1",

"base_model:adapter:mistralai/Mistral-7B-v0.1",

"region:us"

] | null | 2023-10-25T23:09:25Z |

---

library_name: peft

base_model: mistralai/Mistral-7B-v0.1

---

# Model Card for Model ID

<!-- Provide a quick summary of what the model is/does. -->

## Model Details

### Model Description

<!-- Provide a longer summary of what this model is. -->

- **Developed by:** [More Information Needed]

- **Shared by [optional]:** [More Information Needed]

- **Model type:** [More Information Needed]

- **Language(s) (NLP):** [More Information Needed]

- **License:** [More Information Needed]

- **Finetuned from model [optional]:** [More Information Needed]

### Model Sources [optional]

<!-- Provide the basic links for the model. -->

- **Repository:** [More Information Needed]

- **Paper [optional]:** [More Information Needed]

- **Demo [optional]:** [More Information Needed]

## Uses

<!-- Address questions around how the model is intended to be used, including the foreseeable users of the model and those affected by the model. -->

### Direct Use

<!-- This section is for the model use without fine-tuning or plugging into a larger ecosystem/app. -->

[More Information Needed]

### Downstream Use [optional]

<!-- This section is for the model use when fine-tuned for a task, or when plugged into a larger ecosystem/app -->

[More Information Needed]

### Out-of-Scope Use

<!-- This section addresses misuse, malicious use, and uses that the model will not work well for. -->

[More Information Needed]

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

[More Information Needed]

### Recommendations

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

Users (both direct and downstream) should be made aware of the risks, biases and limitations of the model. More information needed for further recommendations.

## How to Get Started with the Model

Use the code below to get started with the model.

[More Information Needed]

## Training Details

### Training Data

<!-- This should link to a Data Card, perhaps with a short stub of information on what the training data is all about as well as documentation related to data pre-processing or additional filtering. -->

[More Information Needed]

### Training Procedure

<!-- This relates heavily to the Technical Specifications. Content here should link to that section when it is relevant to the training procedure. -->

#### Preprocessing [optional]

[More Information Needed]

#### Training Hyperparameters

- **Training regime:** [More Information Needed] <!--fp32, fp16 mixed precision, bf16 mixed precision, bf16 non-mixed precision, fp16 non-mixed precision, fp8 mixed precision -->

#### Speeds, Sizes, Times [optional]

<!-- This section provides information about throughput, start/end time, checkpoint size if relevant, etc. -->

[More Information Needed]

## Evaluation

<!-- This section describes the evaluation protocols and provides the results. -->

### Testing Data, Factors & Metrics

#### Testing Data

<!-- This should link to a Data Card if possible. -->

[More Information Needed]

#### Factors

<!-- These are the things the evaluation is disaggregating by, e.g., subpopulations or domains. -->

[More Information Needed]

#### Metrics

<!-- These are the evaluation metrics being used, ideally with a description of why. -->

[More Information Needed]

### Results

[More Information Needed]

#### Summary

## Model Examination [optional]

<!-- Relevant interpretability work for the model goes here -->

[More Information Needed]

## Environmental Impact

<!-- Total emissions (in grams of CO2eq) and additional considerations, such as electricity usage, go here. Edit the suggested text below accordingly -->

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).

- **Hardware Type:** [More Information Needed]

- **Hours used:** [More Information Needed]

- **Cloud Provider:** [More Information Needed]

- **Compute Region:** [More Information Needed]

- **Carbon Emitted:** [More Information Needed]

## Technical Specifications [optional]

### Model Architecture and Objective

[More Information Needed]

### Compute Infrastructure

[More Information Needed]

#### Hardware

[More Information Needed]

#### Software

[More Information Needed]

## Citation [optional]

<!-- If there is a paper or blog post introducing the model, the APA and Bibtex information for that should go in this section. -->

**BibTeX:**

[More Information Needed]

**APA:**

[More Information Needed]

## Glossary [optional]

<!-- If relevant, include terms and calculations in this section that can help readers understand the model or model card. -->

[More Information Needed]

## More Information [optional]

[More Information Needed]

## Model Card Authors [optional]

[More Information Needed]

## Model Card Contact

[More Information Needed]

## Training procedure

The following `bitsandbytes` quantization config was used during training:

- quant_method: bitsandbytes

- load_in_8bit: False

- load_in_4bit: True

- llm_int8_threshold: 6.0

- llm_int8_skip_modules: None

- llm_int8_enable_fp32_cpu_offload: False

- llm_int8_has_fp16_weight: False

- bnb_4bit_quant_type: nf4

- bnb_4bit_use_double_quant: True

- bnb_4bit_compute_dtype: bfloat16

### Framework versions

- PEFT 0.6.0.dev0

|

lmalarky/flan-t5-base-finetuned-python_qa

|

lmalarky

| 2023-10-25T22:58:23Z | 33 | 0 |

transformers

|

[

"transformers",

"pytorch",

"t5",

"text2text-generation",

"generated_from_trainer",

"en",

"base_model:google/flan-t5-base",

"base_model:finetune:google/flan-t5-base",

"license:apache-2.0",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text2text-generation

| 2023-10-19T17:49:35Z |

---

license: apache-2.0

base_model: google/flan-t5-base

tags:

- generated_from_trainer

metrics:

- rouge

model-index:

- name: flan-t5-base-finetuned-python_qa

results: []

language:

- en

---

# flan-t5-base-finetuned-python_qa_v2

This model is a fine-tuned version of [google/flan-t5-base](https://huggingface.co/google/flan-t5-base) on the

[Python Questions from Stack Overflow](https://www.kaggle.com/datasets/stackoverflow/pythonquestions) dataset.

It achieves the following results on the evaluation set:

- Loss: 1.9023

- Rouge1: 0.1919

- Rouge2: 0.0535

- Rougel: 0.1492

- Rougelsum: 0.1655

## Model description

More information needed

## Intended uses & limitations

- Question answering

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0001

- train_batch_size: 8

- eval_batch_size: 4

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3

### Training results

| Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum |

|:-------------:|:-----:|:----:|:---------------:|:------:|:------:|:------:|:---------:|

| 2.0314 | 1.0 | 2000 | 1.9083 | 0.1876 | 0.0546 | 0.1485 | 0.1640 |

| 1.9586 | 2.0 | 4000 | 1.9031 | 0.1896 | 0.0531 | 0.1485 | 0.1643 |

| 1.923 | 3.0 | 6000 | 1.9023 | 0.1919 | 0.0535 | 0.1492 | 0.1655 |

### Framework versions

- Transformers 4.34.1

- Pytorch 2.1.0+cu118

- Datasets 2.14.5

- Tokenizers 0.14.1

|

MaxReynolds/SouderRocketLauncherNetCombinedGenerated

|

MaxReynolds

| 2023-10-25T22:50:31Z | 27 | 0 |

diffusers

|

[

"diffusers",

"safetensors",

"stable-diffusion",

"stable-diffusion-diffusers",

"text-to-image",

"dataset:MaxReynolds/Lee_Souder_RocketLauncher_Generated",

"base_model:MaxReynolds/SouderRocketLauncherNetCombined-SD1-5",

"base_model:finetune:MaxReynolds/SouderRocketLauncherNetCombined-SD1-5",

"license:creativeml-openrail-m",

"autotrain_compatible",

"endpoints_compatible",

"diffusers:StableDiffusionPipeline",

"region:us"

] |

text-to-image

| 2023-10-25T22:07:56Z |

---

license: creativeml-openrail-m

base_model: MaxReynolds/SouderRocketLauncherNetCombined-SD1-5

datasets:

- MaxReynolds/Lee_Souder_RocketLauncher_Generated

tags:

- stable-diffusion

- stable-diffusion-diffusers

- text-to-image

- diffusers

inference: true

---

# Text-to-image finetuning - MaxReynolds/SouderRocketLauncherNetCombinedGenerated

This pipeline was finetuned from **MaxReynolds/SouderRocketLauncherNetCombined-SD1-5** on the **MaxReynolds/Lee_Souder_RocketLauncher_Generated** dataset. Below are some example images generated with the finetuned pipeline using the following prompts: ['Rocket Launcher by Lee Souder']:

## Pipeline usage

You can use the pipeline like so:

```python

from diffusers import DiffusionPipeline

import torch

pipeline = DiffusionPipeline.from_pretrained("MaxReynolds/SouderRocketLauncherNetCombinedGenerated", torch_dtype=torch.float16)

prompt = "Rocket Launcher by Lee Souder"

image = pipeline(prompt).images[0]

image.save("my_image.png")

```

## Training info

These are the key hyperparameters used during training:

* Epochs: 50

* Learning rate: 1e-05

* Batch size: 1

* Gradient accumulation steps: 4

* Image resolution: 512

* Mixed-precision: fp16

More information on all the CLI arguments and the environment are available on your [`wandb` run page](https://wandb.ai/max-f-reynolds/text2image-fine-tune/runs/18quem9n).

|

ckevuru/DALE

|

ckevuru

| 2023-10-25T22:42:52Z | 15 | 2 |

transformers

|

[

"transformers",

"pytorch",

"bart",

"text2text-generation",

"legal",

"en",

"arxiv:2310.15799",

"license:mit",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text2text-generation

| 2023-10-25T20:30:34Z |

---

license: mit

language:

- en

tags:

- legal

---

# DALE

This model is created as part of the EMNLP 2023 paper: [DALE: Generative Data Augmentation for Low-Resource Legal NLP](https://arxiv.org/pdf/2310.15799.pdf). The code for the git repo can be found [here](https://github.com/Sreyan88/DALE/tree/main).<br>

### BibTeX entry and citation info

If you find our paper/code/demo useful, please cite our paper:

```

@misc{ghosh2023dale,

title={DALE: Generative Data Augmentation for Low-Resource Legal NLP},

author={Sreyan Ghosh and Chandra Kiran Evuru and Sonal Kumar and S Ramaneswaran and S Sakshi and Utkarsh Tyagi and Dinesh Manocha},

year={2023},

eprint={2310.15799},

archivePrefix={arXiv},

primaryClass={cs.CL}

}

```

|

dellanio/mistral_b_finance_finetuned_test

|

dellanio

| 2023-10-25T22:34:47Z | 0 | 0 |

peft

|

[

"peft",

"arxiv:1910.09700",

"base_model:mistralai/Mistral-7B-v0.1",

"base_model:adapter:mistralai/Mistral-7B-v0.1",

"region:us"

] | null | 2023-10-25T22:20:45Z |

---

library_name: peft

base_model: mistralai/Mistral-7B-v0.1

---

# Model Card for Model ID

<!-- Provide a quick summary of what the model is/does. -->

## Model Details

### Model Description

<!-- Provide a longer summary of what this model is. -->

- **Developed by:** [More Information Needed]

- **Shared by [optional]:** [More Information Needed]

- **Model type:** [More Information Needed]

- **Language(s) (NLP):** [More Information Needed]

- **License:** [More Information Needed]

- **Finetuned from model [optional]:** [More Information Needed]

### Model Sources [optional]

<!-- Provide the basic links for the model. -->

- **Repository:** [More Information Needed]

- **Paper [optional]:** [More Information Needed]

- **Demo [optional]:** [More Information Needed]

## Uses

<!-- Address questions around how the model is intended to be used, including the foreseeable users of the model and those affected by the model. -->

### Direct Use

<!-- This section is for the model use without fine-tuning or plugging into a larger ecosystem/app. -->

[More Information Needed]

### Downstream Use [optional]

<!-- This section is for the model use when fine-tuned for a task, or when plugged into a larger ecosystem/app -->

[More Information Needed]

### Out-of-Scope Use

<!-- This section addresses misuse, malicious use, and uses that the model will not work well for. -->

[More Information Needed]

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

[More Information Needed]

### Recommendations

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

Users (both direct and downstream) should be made aware of the risks, biases and limitations of the model. More information needed for further recommendations.

## How to Get Started with the Model

Use the code below to get started with the model.

[More Information Needed]

## Training Details

### Training Data

<!-- This should link to a Data Card, perhaps with a short stub of information on what the training data is all about as well as documentation related to data pre-processing or additional filtering. -->

[More Information Needed]

### Training Procedure

<!-- This relates heavily to the Technical Specifications. Content here should link to that section when it is relevant to the training procedure. -->

#### Preprocessing [optional]

[More Information Needed]

#### Training Hyperparameters

- **Training regime:** [More Information Needed] <!--fp32, fp16 mixed precision, bf16 mixed precision, bf16 non-mixed precision, fp16 non-mixed precision, fp8 mixed precision -->

#### Speeds, Sizes, Times [optional]

<!-- This section provides information about throughput, start/end time, checkpoint size if relevant, etc. -->

[More Information Needed]

## Evaluation

<!-- This section describes the evaluation protocols and provides the results. -->

### Testing Data, Factors & Metrics

#### Testing Data

<!-- This should link to a Data Card if possible. -->

[More Information Needed]

#### Factors

<!-- These are the things the evaluation is disaggregating by, e.g., subpopulations or domains. -->

[More Information Needed]

#### Metrics

<!-- These are the evaluation metrics being used, ideally with a description of why. -->

[More Information Needed]

### Results

[More Information Needed]

#### Summary

## Model Examination [optional]

<!-- Relevant interpretability work for the model goes here -->

[More Information Needed]

## Environmental Impact

<!-- Total emissions (in grams of CO2eq) and additional considerations, such as electricity usage, go here. Edit the suggested text below accordingly -->

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).

- **Hardware Type:** [More Information Needed]

- **Hours used:** [More Information Needed]

- **Cloud Provider:** [More Information Needed]

- **Compute Region:** [More Information Needed]

- **Carbon Emitted:** [More Information Needed]

## Technical Specifications [optional]

### Model Architecture and Objective

[More Information Needed]

### Compute Infrastructure

[More Information Needed]

#### Hardware

[More Information Needed]

#### Software

[More Information Needed]

## Citation [optional]

<!-- If there is a paper or blog post introducing the model, the APA and Bibtex information for that should go in this section. -->

**BibTeX:**

[More Information Needed]

**APA:**

[More Information Needed]

## Glossary [optional]

<!-- If relevant, include terms and calculations in this section that can help readers understand the model or model card. -->

[More Information Needed]

## More Information [optional]

[More Information Needed]

## Model Card Authors [optional]

[More Information Needed]

## Model Card Contact

[More Information Needed]

## Training procedure

The following `bitsandbytes` quantization config was used during training:

- quant_method: bitsandbytes

- load_in_8bit: False

- load_in_4bit: True

- llm_int8_threshold: 6.0

- llm_int8_skip_modules: None

- llm_int8_enable_fp32_cpu_offload: False

- llm_int8_has_fp16_weight: False

- bnb_4bit_quant_type: fp4

- bnb_4bit_use_double_quant: False

- bnb_4bit_compute_dtype: float32

### Framework versions

- PEFT 0.6.0.dev0

|

lemonilia/AshhLimaRP-Mistral-7B

|

lemonilia

| 2023-10-25T22:32:58Z | 23 | 12 |

transformers

|

[

"transformers",

"pytorch",

"gguf",

"mistral",

"text-generation",

"license:apache-2.0",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2023-10-25T17:32:08Z |

---

license: apache-2.0

---

# AshhLimaRP-Mistral-7B (Alpaca, v1)

This is a version of LimaRP with 2000 training samples _up to_ about 9k tokens length

finetuned on [Ashhwriter-Mistral-7B](https://huggingface.co/lemonilia/Ashhwriter-Mistral-7B).

LimaRP is a longform-oriented, novel-style roleplaying chat model intended to replicate the experience

of 1-on-1 roleplay on Internet forums. Short-form, IRC/Discord-style RP (aka "Markdown format")

is not supported. The model does not include instruction tuning, only manually picked and

slightly edited RP conversations with persona and scenario data.

Ashhwriter, the base, is a model entirely finetuned on human-written lewd stories.

## Available versions

- Float16 HF weights

- LoRA Adapter ([adapter_config.json](https://huggingface.co/lemonilia/AshhLimaRP-Mistral-7B/resolve/main/adapter_config.json) and [adapter_model.bin](https://huggingface.co/lemonilia/AshhLimaRP-Mistral-7B/resolve/main/adapter_model.bin))

- [4bit AWQ](https://huggingface.co/lemonilia/AshhLimaRP-Mistral-7B/tree/main/AWQ)

- [Q4_K_M GGUF](https://huggingface.co/lemonilia/AshhLimaRP-Mistral-7B/resolve/main/AshhLimaRP-Mistral-7B.Q4_K_M.gguf)

- [Q6_K GGUF](https://huggingface.co/lemonilia/AshhLimaRP-Mistral-7B/resolve/main/AshhLimaRP-Mistral-7B.Q6_K.gguf)

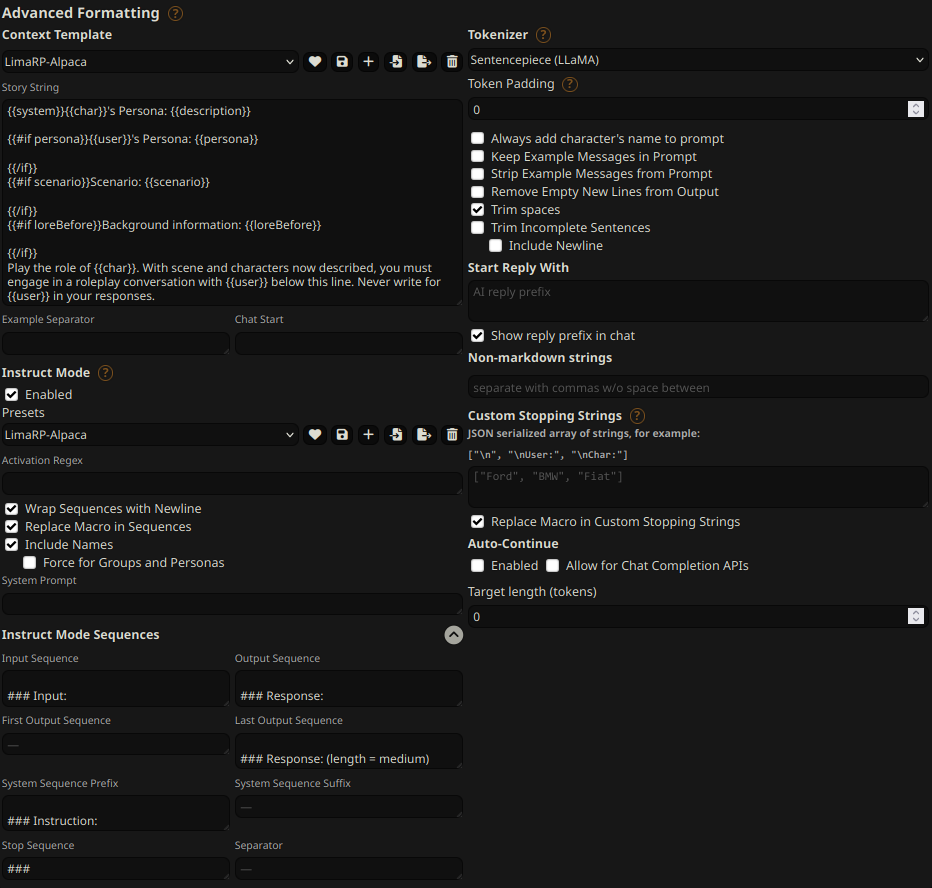

## Prompt format

[Extended Alpaca format](https://github.com/tatsu-lab/stanford_alpaca),

with `### Instruction:`, `### Input:` immediately preceding user inputs and `### Response:`

immediately preceding model outputs. While Alpaca wasn't originally intended for multi-turn

responses, in practice this is not a problem; the format follows a pattern already used by

other models.

```

### Instruction:

Character's Persona: {bot character description}

User's Persona: {user character description}

Scenario: {what happens in the story}

Play the role of Character. You must engage in a roleplaying chat with User below this line. Do not write dialogues and narration for User.

### Input:

User: {utterance}

### Response:

Character: {utterance}

### Input

User: {utterance}

### Response:

Character: {utterance}

(etc.)

```

You should:

- Replace all text in curly braces (curly braces included) with your own text.

- Replace `User` and `Character` with appropriate names.

### Message length control

Inspired by the previously named "Roleplay" preset in SillyTavern, with this

version of LimaRP it is possible to append a length modifier to the response instruction

sequence, like this:

```

### Input

User: {utterance}

### Response: (length = medium)

Character: {utterance}

```

This has an immediately noticeable effect on bot responses. The lengths using during training are:

`micro`, `tiny`, `short`, `medium`, `long`, `massive`, `huge`, `enormous`, `humongous`, `unlimited`.

**The recommended starting length is medium**. Keep in mind that the AI can ramble or impersonate

the user with very long messages.

The length control effect is reproducible, but the messages will not necessarily follow

lengths very precisely, rather follow certain ranges on average, as seen in this table

with data from tests made with one reply at the beginning of the conversation:

Response length control appears to work well also deep into the conversation. **By omitting

the modifier, the model will choose the most appropriate response length** (although it might

not necessarily be what the user desires).

## Suggested settings

You can follow these instruction format settings in SillyTavern. Replace `medium` with

your desired response length:

## Text generation settings

These settings could be a good general starting point:

- TFS = 0.90

- Temperature = 0.70

- Repetition penalty = ~1.11

- Repetition penalty range = ~2048

- top-k = 0 (disabled)

- top-p = 1 (disabled)

## Training procedure

[Axolotl](https://github.com/OpenAccess-AI-Collective/axolotl) was used for training

on 2x NVidia A40 GPUs.

The A40 GPUs have been graciously provided by [Arc Compute](https://www.arccompute.io/).

### Training hyperparameters

A lower learning rate than usual was employed. Due to an unforeseen issue the training

was cut short and as a result 3 epochs were trained instead of the planned 4. Using 2 GPUs,

the effective global batch size would have been 16.

Training was continued from the most recent LoRA adapter from Ashhwriter, using the same

LoRA R and LoRA alpha.

- lora_model_dir: /home/anon/bin/axolotl/OUT_mistral-stories/checkpoint-6000/

- learning_rate: 0.00005

- lr_scheduler: cosine

- noisy_embedding_alpha: 3.5

- num_epochs: 4

- sequence_len: 8750

- lora_r: 256

- lora_alpha: 16

- lora_dropout: 0.05

- lora_target_linear: True

- bf16: True

- fp16: false

- tf32: True

- load_in_8bit: True

- adapter: lora

- micro_batch_size: 2

- optimizer: adamw_bnb_8bit

- warmup_steps: 10

- optimizer: adamw_torch

- flash_attention: true

- sample_packing: true

- pad_to_sequence_len: true

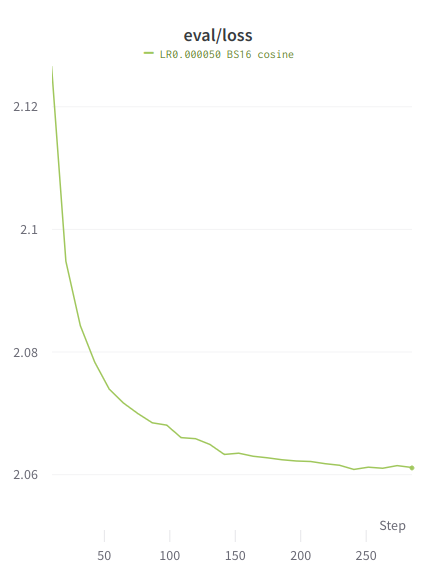

### Loss graphs

Values are higher than typical because the training is performed on the entire

sample, similar to unsupervised finetuning.

#### Train loss

#### Eval loss

|

Anis-Bouhamadouche/distilbert-base-uncased-finetuned-emotion

|

Anis-Bouhamadouche

| 2023-10-25T22:32:39Z | 4 | 0 |

transformers

|

[

"transformers",

"pytorch",

"safetensors",

"distilbert",

"text-classification",

"generated_from_trainer",

"dataset:emotion",

"base_model:distilbert/distilbert-base-uncased",

"base_model:finetune:distilbert/distilbert-base-uncased",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2023-08-04T10:05:01Z |

---

license: apache-2.0

base_model: distilbert-base-uncased

tags:

- generated_from_trainer

datasets:

- emotion

metrics:

- accuracy

- f1

model-index:

- name: distilbert-base-uncased-finetuned-emotion

results:

- task:

name: Text Classification

type: text-classification

dataset:

name: emotion

type: emotion

config: split

split: validation

args: split

metrics:

- name: Accuracy

type: accuracy

value: 0.925

- name: F1

type: f1

value: 0.9249367490708449

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# distilbert-base-uncased-finetuned-emotion

This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the emotion dataset.

It achieves the following results on the evaluation set:

- Loss: 0.2105

- Accuracy: 0.925

- F1: 0.9249

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 64

- eval_batch_size: 64

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 2

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 |

|:-------------:|:-----:|:----:|:---------------:|:--------:|:------:|

| 0.8223 | 1.0 | 250 | 0.3098 | 0.9085 | 0.9076 |

| 0.2431 | 2.0 | 500 | 0.2105 | 0.925 | 0.9249 |

### Framework versions

- Transformers 4.31.0

- Pytorch 2.0.1+cu118

- Datasets 2.14.3

- Tokenizers 0.13.3

|

MBZUAI-LLM/GBLM-Pruner-LLaMA-2-70B

|

MBZUAI-LLM

| 2023-10-25T22:28:33Z | 3 | 0 |

transformers

|

[

"transformers",

"pytorch",

"llama",

"text-generation",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2023-10-25T16:26:44Z |

# GBLM-Pruner-LLaMA-2-70B Model Card

## Model details

**Model type:**

GBLM-Pruner-LLaMA-2-70B is an open-source compressed model obtained by unstructured pruning of 50 percent of the weights of the LLaMA-2-70B model.

## License

Llama 2 is licensed under the LLAMA 2 Community License,

Copyright (c) Meta Platforms, Inc. All Rights Reserved.

**Where to send questions or comments about the model:**

https://github.com/RocktimJyotiDas/GBLM-Pruner/issues

|

smitbutle/first-test-layoutlmv3-finetuned-invoice

|

smitbutle

| 2023-10-25T22:26:08Z | 76 | 0 |

transformers

|

[

"transformers",

"pytorch",

"layoutlmv3",

"token-classification",

"generated_from_trainer",

"dataset:generated",

"base_model:microsoft/layoutlmv3-base",

"base_model:finetune:microsoft/layoutlmv3-base",

"license:cc-by-nc-sa-4.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

token-classification

| 2023-10-25T14:09:15Z |

---

license: cc-by-nc-sa-4.0

base_model: microsoft/layoutlmv3-base

tags:

- generated_from_trainer

datasets:

- generated

metrics:

- precision

- recall

- f1

- accuracy

model-index: