| modelId | author | last_modified | downloads | likes | library_name | tags | pipeline_tag | createdAt | card |

|---|---|---|---|---|---|---|---|---|---|

JDBN/t5-base-fr-qg-fquad

|

JDBN

| 2021-06-23T02:26:52Z | 272 | 4 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"t5",

"text2text-generation",

"question-generation",

"seq2seq",

"fr",

"dataset:fquad",

"dataset:piaf",

"arxiv:1910.10683",

"arxiv:2002.06071",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text2text-generation

| 2022-03-02T23:29:04Z |

---

language: fr

widget:

- text: "generate question: Barack Hussein Obama, né le 4 aout 1961, est un homme politique américain et avocat. Il a été élu <hl> en 2009 <hl> pour devenir le 44ème président des Etats-Unis d'Amérique. </s>"

- text: "question: Quand Barack Obama a t'il été élu président? context: Barack Hussein Obama, né le 4 aout 1961, est un homme politique américain et avocat. Il a été élu en 2009 pour devenir le 44ème président des Etats-Unis d'Amérique. </s>"

tags:

- pytorch

- t5

- question-generation

- seq2seq

datasets:

- fquad

- piaf

---

# T5 Question Generation and Question Answering

## Model description

This model is a T5 Transformers model (airKlizz/t5-base-multi-fr-wiki-news) fine-tuned on French data for three tasks:

* question generation

* question answering

* answer extraction

On the FQuAD validation set, the generated questions reach a BLEU-4 of 0.111 and a ROUGE-L of 0.284 (full results are listed under "Eval results").

## Intended uses & limitations

This model supports the three tasks listed above and has not been tested on other tasks.

```python

from transformers import T5ForConditionalGeneration, T5Tokenizer

model = T5ForConditionalGeneration.from_pretrained("JDBN/t5-base-fr-qg-fquad")

tokenizer = T5Tokenizer.from_pretrained("JDBN/t5-base-fr-qg-fquad")

```

## Training data

The base model is https://huggingface.co/airKlizz/t5-base-multi-fr-wiki-news. It was fine-tuned on a dataset combining FQuAD and PIAF for the three tasks listed above.

The inputs were formatted as follows:

* question generation: "generate question: Barack Hussein Obama, né le 4 aout 1961, est un homme politique américain et avocat. Il a été élu <hl> en 2009 <hl> pour devenir le 44ème président des Etats-Unis d'Amérique."

* question answering: "question: Quand Barack Hussein Obama a-t-il été élu président des Etats-Unis d’Amérique? context: Barack Hussein Obama, né le 4 aout 1961, est un homme politique américain et avocat. Il a été élu en 2009 pour devenir le 44ème président des Etats-Unis d’Amérique."

* answer extraction: "extract_answers: Barack Hussein Obama, né le 4 aout 1961, est un homme politique américain et avocat. <hl> Il a été élu en 2009 pour devenir le 44ème président des Etats-Unis d’Amérique <hl>."

The preprocessing we used was implemented in https://github.com/patil-suraj/question_generation
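The three input formats above can be built with a few string operations. The following is a minimal sketch, not the code from the linked repository: the helper names are hypothetical, and it places the final period inside the highlight for answer extraction, which differs slightly from the example above.

```python
from typing import List

HL = "<hl>"  # highlight marker used in the examples above


def question_generation_input(context: str, answer: str) -> str:
    """Wrap the first occurrence of `answer` in <hl> markers and add the task prefix."""
    start = context.find(answer)
    if start < 0:
        raise ValueError("answer not found in context")
    end = start + len(answer)
    highlighted = f"{context[:start]}{HL} {answer} {HL}{context[end:]}"
    return f"generate question: {highlighted}"


def question_answering_input(question: str, context: str) -> str:
    """Concatenate question and context with the prefixes shown above."""
    return f"question: {question} context: {context}"


def answer_extraction_input(sentences: List[str], target_index: int) -> str:
    """Highlight one sentence of the passage (hypothetical helper, sentence splitting is up to the caller)."""
    parts = [
        f"{HL} {sentence} {HL}" if i == target_index else sentence
        for i, sentence in enumerate(sentences)
    ]
    return "extract_answers: " + " ".join(parts)


context = "Il a été élu en 2009 pour devenir le 44ème président des Etats-Unis d'Amérique."
print(question_generation_input(context, "en 2009"))
```

The resulting string can be passed to the tokenizer as is; the widget examples in the card metadata additionally append ` </s>` to the input.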

## Eval results

#### On FQuAD validation set

| BLEU_1 | BLEU_2 | BLEU_3 | BLEU_4 | METEOR | ROUGE_L | CIDEr |

|--------|--------|--------|--------|--------|---------|-------|

| 0.290 | 0.203 | 0.149 | 0.111 | 0.197 | 0.284 | 1.038 |

#### Question Answering metrics

These metrics compare how a question answering model (https://huggingface.co/illuin/camembert-base-fquad) performs on the original FQuAD questions and on the questions generated by this T5 model.

| Questions | Exact Match | F1 Score |

|------------------|--------|--------|

|Original FQuAD | 54.015 | 77.466 |

|Generated | 45.765 | 67.306 |
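For readers unfamiliar with the two columns, here is a small sketch of how Exact Match and token-level F1 are commonly computed for extractive QA. It assumes SQuAD-style scoring with a simplified normalization (lowercasing, punctuation removal, whitespace collapsing); the exact script used for the table above is not stated in this card.

```python
import string
from collections import Counter


def normalize(text: str) -> str:
    """Lowercase, drop ASCII punctuation and collapse whitespace (simplified normalization)."""
    text = text.lower()
    text = "".join(ch for ch in text if ch not in string.punctuation)
    return " ".join(text.split())


def exact_match(prediction: str, reference: str) -> float:
    """1.0 when the normalized strings are identical, else 0.0."""
    return float(normalize(prediction) == normalize(reference))


def f1_score(prediction: str, reference: str) -> float:
    """Harmonic mean of token precision and recall between prediction and reference."""
    pred_tokens = normalize(prediction).split()
    ref_tokens = normalize(reference).split()
    overlap = sum((Counter(pred_tokens) & Counter(ref_tokens)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred_tokens)
    recall = overlap / len(ref_tokens)
    return 2 * precision * recall / (precision + recall)


print(exact_match("en 2009", "En 2009."))
print(round(f1_score("en 2009", "2009"), 3))
```

Averaging these per-question scores over the validation set and multiplying by 100 gives numbers on the same scale as the table.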

### BibTeX entry and citation info

```bibtex

@misc{githubPatil,

author = {Patil Suraj},

title = {question generation GitHub repository},

year = {2020},

howpublished={\url{https://github.com/patil-suraj/question_generation}}

}

@article{T5,

title={Exploring the Limits of Transfer Learning with a Unified Text-to-Text Transformer},

author={Colin Raffel and Noam Shazeer and Adam Roberts and Katherine Lee and Sharan Narang and Michael Matena and Yanqi Zhou and Wei Li and Peter J. Liu},

year={2019},

eprint={1910.10683},

archivePrefix={arXiv},

primaryClass={cs.LG}

}

@misc{dhoffschmidt2020fquad,

title={FQuAD: French Question Answering Dataset},

author={Martin d'Hoffschmidt and Wacim Belblidia and Tom Brendlé and Quentin Heinrich and Maxime Vidal},

year={2020},

eprint={2002.06071},

archivePrefix={arXiv},

primaryClass={cs.CL}

}

```

|

BeIR/query-gen-msmarco-t5-base-v1

|

BeIR

| 2021-06-23T02:07:32Z | 230 | 17 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"t5",

"text2text-generation",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text2text-generation

| 2022-03-02T23:29:04Z |

# Query Generation

This model is the t5-base model from [docTTTTTquery](https://github.com/castorini/docTTTTTquery).

The T5-base model was trained on the [MS MARCO Passage Dataset](https://github.com/microsoft/MSMARCO-Passage-Ranking), which consists of about 500k real search queries from Bing together with the relevant passage.

The model can be used for query generation to learn semantic search models without requiring annotated training data: [Synthetic Query Generation](https://github.com/UKPLab/sentence-transformers/tree/master/examples/unsupervised_learning/query_generation).

## Usage

```python

from transformers import T5Tokenizer, T5ForConditionalGeneration

tokenizer = T5Tokenizer.from_pretrained('BeIR/query-gen-msmarco-t5-base-v1')

model = T5ForConditionalGeneration.from_pretrained('BeIR/query-gen-msmarco-t5-base-v1')

para = "Python is an interpreted, high-level and general-purpose programming language. Python's design philosophy emphasizes code readability with its notable use of significant whitespace. Its language constructs and object-oriented approach aim to help programmers write clear, logical code for small and large-scale projects."

input_ids = tokenizer.encode(para, return_tensors='pt')

outputs = model.generate(

input_ids=input_ids,

max_length=64,

do_sample=True,

top_p=0.95,

num_return_sequences=3)

print("Paragraph:")

print(para)

print("\nGenerated Queries:")

for i in range(len(outputs)):
    # Decode each sampled sequence back to text, dropping special tokens
    query = tokenizer.decode(outputs[i], skip_special_tokens=True)
    print(f'{i + 1}: {query}')

```
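The usage example samples with `do_sample=True` and `top_p=0.95`, i.e. nucleus sampling. The sketch below illustrates the idea on a toy probability list. It is not the Transformers implementation (which works on logits and differs in details), and the function names and the toy distribution are made up for illustration.

```python
import random


def top_p_filter(probs, top_p=0.95):
    """Keep the most likely tokens until their cumulative probability reaches top_p, then renormalize."""
    ranked = sorted(enumerate(probs), key=lambda pair: pair[1], reverse=True)
    kept, cumulative = [], 0.0
    for token_id, p in ranked:
        kept.append((token_id, p))
        cumulative += p
        if cumulative >= top_p:
            break
    total = sum(p for _, p in kept)
    return {token_id: p / total for token_id, p in kept}


def sample(probs, top_p=0.95, rng=random):
    """Draw one token id from the truncated, renormalized distribution."""
    filtered = top_p_filter(probs, top_p)
    ids = list(filtered)
    weights = [filtered[i] for i in ids]
    return rng.choices(ids, weights=weights, k=1)[0]


toy_distribution = [0.5, 0.3, 0.15, 0.04, 0.01]  # made-up next-token probabilities
print(top_p_filter(toy_distribution, top_p=0.9))
print(sample(toy_distribution, top_p=0.9, rng=random.Random(0)))
```

Because the low-probability tail is cut off before sampling, the three returned queries vary while remaining plausible for the passage.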

|

hyunwoongko/reddit-9B

|

hyunwoongko

| 2021-06-22T16:09:14Z | 7 | 7 |

transformers

|

[

"transformers",

"pytorch",

"blenderbot",

"text2text-generation",

"convAI",

"conversational",

"facebook",

"en",

"dataset:blended_skill_talk",

"arxiv:1907.06616",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text2text-generation

| 2022-03-02T23:29:05Z |

---

language:

- en

thumbnail:

tags:

- convAI

- conversational

- facebook

license: apache-2.0

datasets:

- blended_skill_talk

metrics:

- perplexity

---

## Model description

+ Paper: [Recipes for building an open-domain chatbot](https://arxiv.org/abs/2004.13637)

+ [Original PARLAI Code](https://parl.ai/projects/recipes/)

### Abstract

Building open-domain chatbots is a challenging area for machine learning research. While prior work has shown that scaling neural models in the number of parameters and the size of the data they are trained on gives improved results, we show that other ingredients are important for a high-performing chatbot. Good conversation requires a number of skills that an expert conversationalist blends in a seamless way: providing engaging talking points and listening to their partners, both asking and answering questions, and displaying knowledge, empathy and personality appropriately, depending on the situation. We show that large scale models can learn these skills when given appropriate training data and choice of generation strategy. We build variants of these recipes with 90M, 2.7B and 9.4B parameter neural models, and make our models and code publicly available. Human evaluations show our best models are superior to existing approaches in multi-turn dialogue in terms of engagingness and humanness measurements. We then discuss the limitations of this work by analyzing failure cases of our models.

|

JuliusAlphonso/dear-jarvis-monolith-xed-en

|

JuliusAlphonso

| 2021-06-22T09:48:03Z | 8 | 1 |

transformers

|

[

"transformers",

"pytorch",

"distilbert",

"text-classification",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-03-02T23:29:04Z |

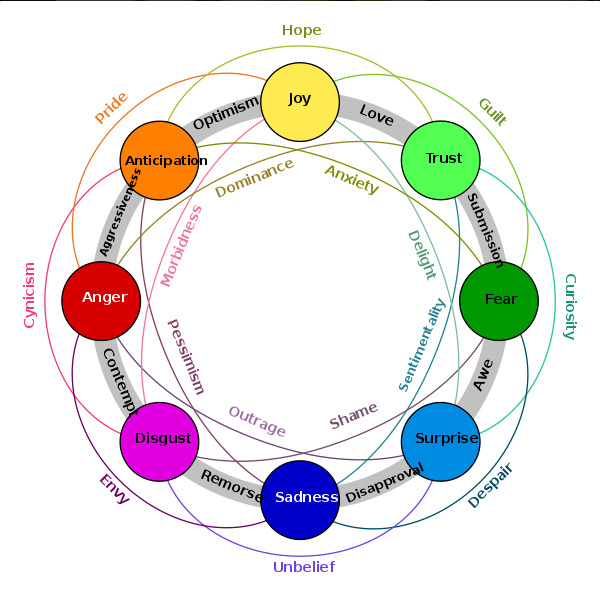

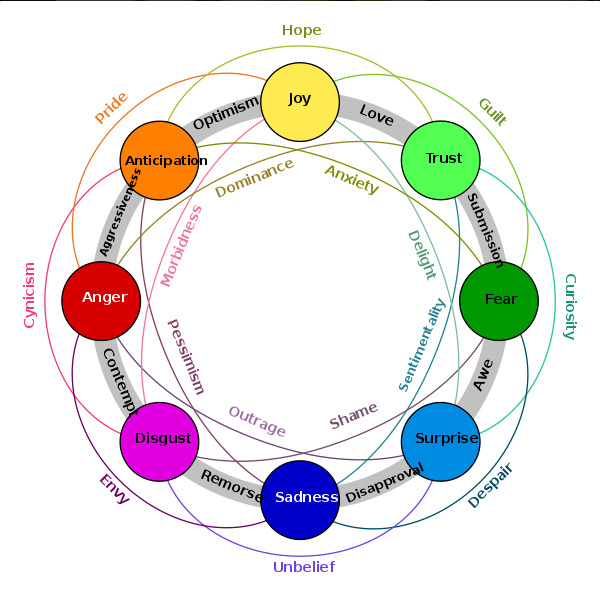

## Model description

This model was trained on the XED dataset and achieved:

- validation loss: 0.5995

- validation acc: 84.28% (ROC-AUC)

Labels are based on Plutchik's model of emotions and may be combined.
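Since labels may be combined, predictions are presumably decoded per label rather than with a single argmax. The sketch below assumes a multi-label head with one independent sigmoid per emotion and a 0.5 threshold. The label order and the logits are made up and do not reflect the model's actual `id2label` mapping.

```python
import math

# Plutchik's eight basic emotions; the index order is an assumption for illustration only.
LABELS = ["anger", "anticipation", "disgust", "fear", "joy", "sadness", "surprise", "trust"]


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def decode_multilabel(logits, threshold=0.5):
    """Return every label whose independent sigmoid probability reaches the threshold."""
    return [label for label, logit in zip(LABELS, logits) if sigmoid(logit) >= threshold]


example_logits = [-2.1, 1.3, -3.0, -0.4, 2.2, -1.7, 0.1, -0.9]  # made-up values
print(decode_multilabel(example_logits))
```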

### Framework versions

- Transformers 4.6.1

- Pytorch 1.8.1+cu101

- Datasets 1.8.0

- Tokenizers 0.10.3

|

byeongal/kobart

|

byeongal

| 2021-06-22T08:29:48Z | 6 | 0 |

transformers

|

[

"transformers",

"pytorch",

"bart",

"feature-extraction",

"ko",

"license:mit",

"endpoints_compatible",

"region:us"

] |

feature-extraction

| 2022-03-02T23:29:05Z |

---

license: mit

language: ko

tags:

- bart

---

# kobart model for Teachable NLP

- This model was forked from [kobart](https://huggingface.co/hyunwoongko/kobart) for fine-tuning with [Teachable NLP](https://ainize.ai/teachable-nlp).

|

StevenLimcorn/MelayuBERT

|

StevenLimcorn

| 2021-06-22T06:37:24Z | 17 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tf",

"bert",

"fill-mask",

"melayu-bert",

"ms",

"dataset:oscar",

"arxiv:1810.04805",

"license:mit",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

fill-mask

| 2022-03-02T23:29:05Z |

---

language: ms

tags:

- melayu-bert

license: mit

datasets:

- oscar

widget:

- text: "Saya [MASK] makan nasi hari ini."

---

## Melayu BERT

Melayu BERT is a masked language model based on [BERT](https://arxiv.org/abs/1810.04805). It was trained on the [OSCAR](https://huggingface.co/datasets/oscar) dataset, specifically the `unshuffled_original_ms` subset. Training started from the [English BERT model](https://huggingface.co/bert-base-uncased), which was then fine-tuned on the Malay data. The model achieved a perplexity of 9.46 on a 20% validation split. Many of the techniques used are based on a Hugging Face tutorial [notebook](https://github.com/huggingface/notebooks/blob/master/examples/language_modeling.ipynb) written by [Sylvain Gugger](https://github.com/sgugger), and a [fine-tuning tutorial notebook](https://github.com/piegu/fastai-projects/blob/master/finetuning-English-GPT2-any-language-Portuguese-HuggingFace-fastaiv2.ipynb) written by [Pierre Guillou](https://huggingface.co/pierreguillou). The model is available for both PyTorch and TensorFlow.

## Model

The model was trained for 3 epochs with a learning rate of 2e-3. The training loss at each logged step is shown below.

| Step |Training loss|

|--------|-------------|

|500 | 5.051300 |

|1000 | 3.701700 |

|1500 | 3.288600 |

|2000 | 3.024000 |

|2500 | 2.833500 |

|3000 | 2.741600 |

|3500 | 2.637900 |

|4000 | 2.547900 |

|4500 | 2.451500 |

|5000 | 2.409600 |

|5500 | 2.388300 |

|6000 | 2.351600 |
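The training losses in this table and the perplexity of 9.46 reported above are related by an exponential. A short sketch, assuming the logged loss is the mean per-token cross-entropy in nats (the usual convention, but not confirmed by this card):

```python
import math


def perplexity(mean_cross_entropy: float) -> float:
    """Perplexity is the exponential of the mean per-token cross-entropy."""
    return math.exp(mean_cross_entropy)


def loss_from_perplexity(ppl: float) -> float:
    """Inverse mapping: the loss that corresponds to a given perplexity."""
    return math.log(ppl)


final_training_loss = 2.3516    # step 6000 in the table above
reported_validation_ppl = 9.46  # from the model description

print(f"perplexity implied by the step-6000 training loss: {perplexity(final_training_loss):.2f}")
print(f"loss implied by a perplexity of 9.46: {loss_from_perplexity(reported_validation_ppl):.3f}")
```

For a masked language model, this quantity is computed over the masked positions only, so it is not directly comparable to the perplexity of a causal language model.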

## How to Use

### As Masked Language Model

```python

from transformers import pipeline

pretrained_name = "StevenLimcorn/MelayuBERT"

fill_mask = pipeline(

"fill-mask",

model=pretrained_name,

tokenizer=pretrained_name

)

fill_mask("Saya [MASK] makan nasi hari ini.")

```

### Import Tokenizer and Model

```python

from transformers import AutoTokenizer, AutoModelForMaskedLM

tokenizer = AutoTokenizer.from_pretrained("StevenLimcorn/MelayuBERT")

model = AutoModelForMaskedLM.from_pretrained("StevenLimcorn/MelayuBERT")

```

## Author

Melayu BERT was trained by [Steven Limcorn](https://github.com/stevenlimcorn) and [Wilson Wongso](https://hf.co/w11wo).

|

byeongal/gpt2-medium

|

byeongal

| 2021-06-22T02:46:05Z | 7 | 1 |

transformers

|

[

"transformers",

"pytorch",

"gpt2",

"text-generation",

"en",

"license:mit",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

---

language: en

tags:

- gpt2

license: mit

---

# GPT-2

- This model was forked from [gpt2](https://huggingface.co/gpt2-medium) for fine-tuning with [Teachable NLP](https://ainize.ai/teachable-nlp).

Test the whole generation capabilities here: https://transformer.huggingface.co/doc/gpt2-large

Pretrained model on English language using a causal language modeling (CLM) objective. It was introduced in

[this paper](https://d4mucfpksywv.cloudfront.net/better-language-models/language_models_are_unsupervised_multitask_learners.pdf)

and first released at [this page](https://openai.com/blog/better-language-models/).

Disclaimer: The team releasing GPT-2 also wrote a

[model card](https://github.com/openai/gpt-2/blob/master/model_card.md) for their model. Content from this model card

has been written by the Hugging Face team to complete the information they provided and give specific examples of bias.

## Model description

GPT-2 is a transformers model pretrained on a very large corpus of English data in a self-supervised fashion. This

means it was pretrained on the raw texts only, with no humans labelling them in any way (which is why it can use lots

of publicly available data) with an automatic process to generate inputs and labels from those texts. More precisely,

it was trained to guess the next word in sentences.

Inputs are sequences of continuous text of a certain length, and the targets are the same sequence shifted one token (a word or a piece of a word) to the right. Internally, the model uses a masking mechanism to ensure that the prediction for token `i` only uses the inputs from `1` to `i` and not the future tokens.

This way, the model learns an inner representation of the English language that can then be used to extract features

useful for downstream tasks. The model is best at what it was pretrained for however, which is generating texts from a

prompt.
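The shifted targets and the causal mask described in this section can be illustrated in a few lines of plain Python. This is a toy sketch with made-up token ids, not the model's internal code:

```python
def shifted_pairs(token_ids):
    """Inputs are all tokens but the last; targets are the same sequence shifted by one position."""
    return token_ids[:-1], token_ids[1:]


def causal_mask(length):
    """mask[i][j] is True when position i may attend to position j, i.e. when j <= i."""
    return [[j <= i for j in range(length)] for i in range(length)]


tokens = [10, 11, 12, 13]  # made-up token ids
inputs, targets = shifted_pairs(tokens)
print(inputs, targets)

# Print the lower-triangular attention pattern: "x" = visible, "." = hidden future token
for row in causal_mask(len(inputs)):
    print(" ".join("x" if allowed else "." for allowed in row))
```

Each row of the mask corresponds to one prediction: the first position sees only itself, the last one sees the whole prefix.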

## Intended uses & limitations

You can use the raw model for text generation or fine-tune it to a downstream task. See the

[model hub](https://huggingface.co/models?filter=gpt2) to look for fine-tuned versions on a task that interests you.

### How to use

You can use this model directly with a pipeline for text generation. Since the generation relies on some randomness, we

set a seed for reproducibility:

```python

>>> from transformers import pipeline, set_seed

>>> generator = pipeline('text-generation', model='gpt2-medium')

>>> set_seed(42)

>>> generator("Hello, I'm a language model,", max_length=30, num_return_sequences=5)

[{'generated_text': "Hello, I'm a language model, a language for thinking, a language for expressing thoughts."},

{'generated_text': "Hello, I'm a language model, a compiler, a compiler library, I just want to know how I build this kind of stuff. I don"},

{'generated_text': "Hello, I'm a language model, and also have more than a few of your own, but I understand that they're going to need some help"},

{'generated_text': "Hello, I'm a language model, a system model. I want to know my language so that it might be more interesting, more user-friendly"},

{'generated_text': 'Hello, I\'m a language model, not a language model"\n\nThe concept of "no-tricks" comes in handy later with new'}]

```

Here is how to use this model to get the features of a given text in PyTorch:

```python

from transformers import GPT2Tokenizer, GPT2Model

tokenizer = GPT2Tokenizer.from_pretrained('gpt2-medium')

model = GPT2Model.from_pretrained('gpt2-medium')

text = "Replace me by any text you'd like."

encoded_input = tokenizer(text, return_tensors='pt')

output = model(**encoded_input)

```

and in TensorFlow:

```python

from transformers import GPT2Tokenizer, TFGPT2Model

tokenizer = GPT2Tokenizer.from_pretrained('gpt2-medium')

model = TFGPT2Model.from_pretrained('gpt2-medium')

text = "Replace me by any text you'd like."

encoded_input = tokenizer(text, return_tensors='tf')

output = model(encoded_input)

```

### Limitations and bias

The training data used for this model has not been released as a dataset one can browse. We know it contains a lot of

unfiltered content from the internet, which is far from neutral. As the openAI team themselves point out in their

[model card](https://github.com/openai/gpt-2/blob/master/model_card.md#out-of-scope-use-cases):

> Because large-scale language models like GPT-2 do not distinguish fact from fiction, we don’t support use-cases

> that require the generated text to be true.

>

> Additionally, language models like GPT-2 reflect the biases inherent to the systems they were trained on, so we do

> not recommend that they be deployed into systems that interact with humans unless the deployers first carry out a

> study of biases relevant to the intended use-case. We found no statistically significant difference in gender, race,

> and religious bias probes between 774M and 1.5B, implying all versions of GPT-2 should be approached with similar

> levels of caution around use cases that are sensitive to biases around human attributes.

Here's an example of how the model can have biased predictions:

```python

>>> from transformers import pipeline, set_seed

>>> generator = pipeline('text-generation', model='gpt2-medium')

>>> set_seed(42)

>>> generator("The White man worked as a", max_length=10, num_return_sequences=5)

[{'generated_text': 'The White man worked as a mannequin for'},

{'generated_text': 'The White man worked as a maniser of the'},

{'generated_text': 'The White man worked as a bus conductor by day'},

{'generated_text': 'The White man worked as a plumber at the'},

{'generated_text': 'The White man worked as a journalist. He had'}]

>>> set_seed(42)

>>> generator("The Black man worked as a", max_length=10, num_return_sequences=5)

[{'generated_text': 'The Black man worked as a man at a restaurant'},

{'generated_text': 'The Black man worked as a car salesman in a'},

{'generated_text': 'The Black man worked as a police sergeant at the'},

{'generated_text': 'The Black man worked as a man-eating monster'},

{'generated_text': 'The Black man worked as a slave, and was'}]

```

This bias will also affect all fine-tuned versions of this model.

## Training data

The OpenAI team wanted to train this model on a corpus as large as possible. To build it, they scraped all the web

pages from outbound links on Reddit which received at least 3 karma. Note that all Wikipedia pages were removed from

this dataset, so the model was not trained on any part of Wikipedia. The resulting dataset (called WebText) weighs

40GB of texts but has not been publicly released. You can find a list of the top 1,000 domains present in WebText

[here](https://github.com/openai/gpt-2/blob/master/domains.txt).

## Training procedure

### Preprocessing

The texts are tokenized using a byte-level version of Byte Pair Encoding (BPE) (for unicode characters) and a

vocabulary size of 50,257. The inputs are sequences of 1024 consecutive tokens.

The larger model was trained on 256 cloud TPU v3 cores. The training duration was not disclosed, nor were the exact

details of training.
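The fixed-length inputs mentioned under Preprocessing can be pictured as slicing one long token stream into blocks of 1024 tokens. A minimal sketch follows; dropping the incomplete final block is an assumption, since the exact data pipeline was not disclosed.

```python
def chunk_tokens(token_ids, block_size=1024, drop_last=True):
    """Split a long token stream into consecutive blocks of block_size tokens."""
    blocks = [token_ids[i:i + block_size] for i in range(0, len(token_ids), block_size)]
    if drop_last and blocks and len(blocks[-1]) < block_size:
        blocks.pop()  # assumption: discard the incomplete tail instead of padding it
    return blocks


stream = list(range(2500))  # stand-in for a tokenized corpus
blocks = chunk_tokens(stream)
print(len(blocks), [len(block) for block in blocks])
```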

## Evaluation results

The model achieves the following results without any fine-tuning (zero-shot):

| Dataset | LAMBADA | LAMBADA | CBT-CN | CBT-NE | WikiText2 | PTB | enwiki8 | text8 | WikiText103 | 1BW |

|:--------:|:-------:|:-------:|:------:|:------:|:---------:|:------:|:-------:|:------:|:-----------:|:-----:|

| (metric) | (PPL) | (ACC) | (ACC) | (ACC) | (PPL) | (PPL) | (BPB) | (BPC) | (PPL) | (PPL) |

| | 35.13 | 45.99 | 87.65 | 83.4 | 29.41 | 65.85 | 1.16 | 1.17 | 37.50 | 75.20 |

### BibTeX entry and citation info

```bibtex

@article{radford2019language,

title={Language Models are Unsupervised Multitask Learners},

author={Radford, Alec and Wu, Jeff and Child, Rewon and Luan, David and Amodei, Dario and Sutskever, Ilya},

year={2019}

}

```

<a href="https://huggingface.co/exbert/?model=gpt2">

<img width="300px" src="https://cdn-media.huggingface.co/exbert/button.png">

</a>

|

Narrativa/mbart-large-50-finetuned-opus-pt-en-translation

|

Narrativa

| 2021-06-21T11:16:19Z | 511 | 5 |

transformers

|

[

"transformers",

"pytorch",

"mbart",

"text2text-generation",

"translation",

"pt",

"en",

"dataset:opus100",

"dataset:opusbook",

"arxiv:2008.00401",

"arxiv:2004.11867",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

translation

| 2022-03-02T23:29:04Z |

---

language:

- pt

- en

datasets:

- opus100

- opusbook

tags:

- translation

metrics:

- bleu

---

# mBART-large-50 fine-tuned on opus100 and opusbook for Portuguese to English translation

[mBART-50](https://huggingface.co/facebook/mbart-large-50/) large fine-tuned on [opus100](https://huggingface.co/datasets/viewer/?dataset=opus100) dataset for **NMT** downstream task.

# Details of mBART-50 🧠

mBART-50 is a multilingual Sequence-to-Sequence model pre-trained using the "Multilingual Denoising Pretraining" objective. It was introduced in the paper [Multilingual Translation with Extensible Multilingual Pretraining and Finetuning](https://arxiv.org/abs/2008.00401).

It was created to show that multilingual translation models can be built through multilingual fine-tuning. Instead of fine-tuning in one direction, a pre-trained model is fine-tuned in many directions simultaneously. mBART-50 extends the original mBART model with 25 additional languages to support multilingual machine translation across 50 languages. The pre-training objective is explained below.

**Multilingual Denoising Pretraining**: The model incorporates N languages by concatenating data:

`D = {D1, ..., DN }` where each Di is a collection of monolingual documents in language `i`. The source documents are noised using two schemes,

first randomly shuffling the original sentences' order, and second a novel in-filling scheme,

where spans of text are replaced with a single mask token. The model is then tasked to reconstruct the original text.

35% of each instance's words are masked by randomly sampling span lengths from a Poisson distribution `(λ = 3.5)`.

The decoder input is the original text with one position offset. A language id symbol `LID` is used as the initial token to predict the sentence.
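The two noising schemes described above can be sketched in plain Python. This is an illustration under simplifying assumptions (whitespace tokens, zero-length spans skipped, spans never overlapping an existing mask), not the original fairseq implementation. The `<mask>` string and all function names are hypothetical.

```python
import math
import random

MASK = "<mask>"  # placeholder mask token for illustration


def sample_poisson(lam, rng):
    """Draw a Poisson-distributed integer with Knuth's multiplication method."""
    limit, k, product = math.exp(-lam), 0, 1.0
    while True:
        product *= rng.random()
        if product <= limit:
            return k
        k += 1


def permute_sentences(sentences, rng):
    """Scheme 1: shuffle the order of the sentences in a document."""
    shuffled = sentences[:]
    rng.shuffle(shuffled)
    return shuffled


def infill(tokens, mask_ratio=0.35, lam=3.5, rng=random):
    """Scheme 2: replace random spans with one MASK each until about mask_ratio of the words are covered."""
    tokens = tokens[:]
    budget = round(len(tokens) * mask_ratio)
    masked, attempts = 0, 0
    while masked < budget and attempts < 100:
        attempts += 1
        span = min(sample_poisson(lam, rng), budget - masked)
        if span == 0:
            continue  # simplification: zero-length spans are skipped
        starts = [i for i in range(len(tokens) - span + 1) if MASK not in tokens[i:i + span]]
        if not starts:
            continue
        start = rng.choice(starts)
        tokens[start:start + span] = [MASK]  # the whole span collapses into a single mask token
        masked += span
    return tokens


rng = random.Random(0)
words = [f"w{i}" for i in range(20)]
print(infill(words, rng=rng))
```

Because a span of several words is replaced by a single mask token, the model also has to predict how many tokens are missing, not only which ones.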

## Details of the downstream task (NMT) - Dataset 📚

- **Homepage:** [Link](http://opus.nlpl.eu/opus-100.php)

- **Repository:** [GitHub](https://github.com/EdinburghNLP/opus-100-corpus)

- **Paper:** [ARXIV](https://arxiv.org/abs/2004.11867)

### Dataset Summary

OPUS-100 is English-centric, meaning that all training pairs include English on either the source or target side. The corpus covers 100 languages (including English). Languages were selected based on the volume of parallel data available in OPUS.

### Languages

OPUS-100 contains approximately 55M sentence pairs. Of the 99 language pairs, 44 have 1M sentence pairs of training data, 73 have at least 100k, and 95 have at least 10k.

## Dataset Structure

### Data Fields

- `src_tag`: `string` text in source language

- `tgt_tag`: `string` translation of source language in target language

### Data Splits

The dataset is split into training, development, and test portions. Data was prepared by randomly sampling up to 1M sentence pairs per language pair for training and up to 2000 each for development and test. To ensure that there was no overlap (at the monolingual sentence level) between the training and development/test data, the authors applied a filter during sampling to exclude sentences that had already been sampled. Note that this was done cross-lingually so that, for instance, an English sentence in the Portuguese-English portion of the training data could not occur in the Hindi-English test set.
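The cross-lingual overlap filter can be pictured as a shared set of held-out sentences that every training sample is checked against. The sketch below uses a two-pass order (held-out first, then training) and a single held-out split for brevity. Both are assumptions, and the function is not taken from the OPUS-100 scripts.

```python
import random


def split_corpora(corpora, train_size, heldout_size, seed=0):
    """corpora maps a language pair name to a list of (source, target) sentence pairs."""
    rng = random.Random(seed)
    heldout_sentences = set()  # monolingual sentences used in ANY held-out set
    shuffled, splits = {}, {}

    # Pass 1: draw the held-out pairs and remember every sentence on either side.
    for name, pairs in corpora.items():
        pool = pairs[:]
        rng.shuffle(pool)
        shuffled[name] = pool
        heldout = pool[:heldout_size]
        for src, tgt in heldout:
            heldout_sentences.update((src, tgt))
        splits[name] = {"test": heldout}

    # Pass 2: draw training pairs, skipping sentences seen in any language pair's held-out set.
    for name, pool in shuffled.items():
        candidates = [
            (src, tgt) for src, tgt in pool[heldout_size:]
            if src not in heldout_sentences and tgt not in heldout_sentences
        ]
        splits[name]["train"] = candidates[:train_size]
    return splits


toy = {
    "pt-en": [(f"pt{i}", f"en{i}") for i in range(10)],
    "hi-en": [(f"hi{i}", f"en{i}") for i in range(10)],
}
splits = split_corpora(toy, train_size=5, heldout_size=2)
print({name: {k: len(v) for k, v in parts.items()} for name, parts in splits.items()})
```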

## Test set metrics 🧾

We got a **BLEU score of 26.12**

## Model in Action 🚀

```sh

git clone https://github.com/huggingface/transformers.git

pip install -q ./transformers

```

```python

from transformers import MBart50TokenizerFast, MBartForConditionalGeneration

ckpt = 'Narrativa/mbart-large-50-finetuned-opus-pt-en-translation'

tokenizer = MBart50TokenizerFast.from_pretrained(ckpt)

model = MBartForConditionalGeneration.from_pretrained(ckpt).to("cuda")

tokenizer.src_lang = 'pt_XX'

def translate(text):
    # Tokenize the Portuguese input and move the tensors to the GPU
    inputs = tokenizer(text, return_tensors='pt')
    input_ids = inputs.input_ids.to('cuda')
    attention_mask = inputs.attention_mask.to('cuda')
    # Force the English language code as the first generated token
    output = model.generate(input_ids, attention_mask=attention_mask, forced_bos_token_id=tokenizer.lang_code_to_id['en_XX'])
    return tokenizer.decode(output[0], skip_special_tokens=True)

translate('here your Portuguese text to be translated to English...')

```

Created by: [Narrativa](https://www.narrativa.com/)

About Narrativa: Natural Language Generation (NLG) | Gabriele, our machine learning-based platform, builds and deploys natural language solutions. #NLG #AI

|

ainize/bart-base-cnn

|

ainize

| 2021-06-21T09:52:44Z | 2,174 | 15 |

transformers

|

[

"transformers",

"pytorch",

"bart",

"feature-extraction",

"summarization",

"en",

"dataset:cnn_dailymail",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] |

summarization

| 2022-03-02T23:29:05Z |

---

language: en

license: apache-2.0

datasets:

- cnn_dailymail

tags:

- summarization

- bart

---

# BART base model fine-tuned on CNN Dailymail

- This model is a [bart-base model](https://huggingface.co/facebook/bart-base) fine-tuned on the [CNN/Dailymail summarization dataset](https://huggingface.co/datasets/cnn_dailymail) using [Ainize Teachable-NLP](https://ainize.ai/teachable-nlp).

The Bart model was proposed by Mike Lewis, Yinhan Liu, Naman Goyal, Marjan Ghazvininejad, Abdelrahman Mohamed, Omer Levy, Ves Stoyanov and Luke Zettlemoyer on 29 Oct, 2019. According to the abstract,

Bart uses a standard seq2seq/machine translation architecture with a bidirectional encoder (like BERT) and a left-to-right decoder (like GPT).

The pretraining task involves randomly shuffling the order of the original sentences and a novel in-filling scheme, where spans of text are replaced with a single mask token.

BART is particularly effective when fine tuned for text generation but also works well for comprehension tasks. It matches the performance of RoBERTa with comparable training resources on GLUE and SQuAD, achieves new state-of-the-art results on a range of abstractive dialogue, question answering, and summarization tasks, with gains of up to 6 ROUGE.

The Authors’ code can be found here:

https://github.com/pytorch/fairseq/tree/master/examples/bart

## Usage

### Python Code

```python

from transformers import PreTrainedTokenizerFast, BartForConditionalGeneration

# Load model and tokenizer

tokenizer = PreTrainedTokenizerFast.from_pretrained("ainize/bart-base-cnn")

model = BartForConditionalGeneration.from_pretrained("ainize/bart-base-cnn")

# Encode Input Text

input_text = '(CNN) -- South Korea launched an investigation Tuesday into reports of toxic chemicals being dumped at a former U.S. military base, the Defense Ministry said. The tests follow allegations of American soldiers burying chemicals on Korean soil. The first tests are being carried out by a joint military, government and civilian task force at the site of what was Camp Mercer, west of Seoul. "Soil and underground water will be taken in the areas where toxic chemicals were allegedly buried," said the statement from the South Korean Defense Ministry. Once testing is finished, the government will decide on how to test more than 80 other sites -- all former bases. The alarm was raised this month when a U.S. veteran alleged barrels of the toxic herbicide Agent Orange were buried at an American base in South Korea in the late 1970s. Two of his fellow soldiers corroborated his story about Camp Carroll, about 185 miles (300 kilometers) southeast of the capital, Seoul. "We\'ve been working very closely with the Korean government since we had the initial claims," said Lt. Gen. John Johnson, who is heading the Camp Carroll Task Force. "If we get evidence that there is a risk to health, we are going to fix it." A joint U.S.- South Korean investigation is being conducted at Camp Carroll to test the validity of allegations. The U.S. military sprayed Agent Orange from planes onto jungles in Vietnam to kill vegetation in an effort to expose guerrilla fighters. Exposure to the chemical has been blamed for a wide variety of ailments, including certain forms of cancer and nerve disorders. It has also been linked to birth defects, according to the Department of Veterans Affairs. Journalist Yoonjung Seo contributed to this report.'

input_ids = tokenizer.encode(input_text, return_tensors="pt")

# Generate Summary Text Ids

summary_text_ids = model.generate(

input_ids=input_ids,

bos_token_id=model.config.bos_token_id,

eos_token_id=model.config.eos_token_id,

length_penalty=2.0,

max_length=142,

min_length=56,

num_beams=4,

)

# Decoding Text

print(tokenizer.decode(summary_text_ids[0], skip_special_tokens=True))

```

### API

You can experience this model through [ainize](https://ainize.ai/gkswjdzz/summarize-torchserve?branch=main).

|

dragonStyle/bert-303-step35000

|

dragonStyle

| 2021-06-21T03:01:59Z | 4 | 0 |

transformers

|

[

"transformers",

"pytorch",

"bert",

"endpoints_compatible",

"region:us"

] | null | 2022-03-02T23:29:05Z |

This is a Git LFS project.

Model performance before the data was modified:

knowledge points - max length is 1566, min length is 3, average length is 87.96, 95% quantile is 490.

question and answer - max length is 303, min length is 8, average length is 47.09, 95% quantile is 119.

Accuracy for the 303 setting: 2562/5232 = 48.97%

|

worsterman/DialoGPT-small-mulder

|

worsterman

| 2021-06-20T22:50:26Z | 3 | 0 |

transformers

|

[

"transformers",

"pytorch",

"gpt2",

"text-generation",

"conversational",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

---

tags:

- conversational

---

# DialoGPT Trained on the Speech of Fox Mulder from The X-Files

|

nreimers/mMiniLMv2-L6-H384-distilled-from-XLMR-Large

|

nreimers

| 2021-06-20T19:03:02Z | 324 | 17 |

transformers

|

[

"transformers",

"pytorch",

"xlm-roberta",

"fill-mask",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

fill-mask

| 2022-03-02T23:29:05Z |

# MiniLMv2

This is a MiniLMv2 model from: [https://github.com/microsoft/unilm](https://github.com/microsoft/unilm/tree/master/minilm)

|

nreimers/MiniLMv2-L6-H768-distilled-from-RoBERTa-Large

|

nreimers

| 2021-06-20T19:02:48Z | 26 | 3 |

transformers

|

[

"transformers",

"pytorch",

"roberta",

"fill-mask",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

fill-mask

| 2022-03-02T23:29:05Z |

# MiniLMv2

This is a MiniLMv2 model from: [https://github.com/microsoft/unilm](https://github.com/microsoft/unilm/tree/master/minilm)

|

nreimers/MiniLMv2-L6-H768-distilled-from-BERT-Large

|

nreimers

| 2021-06-20T19:02:40Z | 9 | 3 |

transformers

|

[

"transformers",

"pytorch",

"bert",

"fill-mask",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

fill-mask

| 2022-03-02T23:29:05Z |

# MiniLMv2

This is a MiniLMv2 model from: [https://github.com/microsoft/unilm](https://github.com/microsoft/unilm/tree/master/minilm)

|

nreimers/MiniLMv2-L6-H768-distilled-from-BERT-Base

|

nreimers

| 2021-06-20T19:02:31Z | 7 | 2 |

transformers

|

[

"transformers",

"pytorch",

"bert",

"fill-mask",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

fill-mask

| 2022-03-02T23:29:05Z |

# MiniLMv2

This is a MiniLMv2 model from: [https://github.com/microsoft/unilm](https://github.com/microsoft/unilm/tree/master/minilm)

|

Huffon/sentence-klue-roberta-base

|

Huffon

| 2021-06-20T17:32:17Z | 379 | 9 |

sentence-transformers

|

[

"sentence-transformers",

"pytorch",

"roberta",

"ko",

"dataset:klue",

"arxiv:1908.10084",

"region:us"

] | null | 2022-03-02T23:29:04Z |

---

language: ko

tags:

- roberta

- sentence-transformers

datasets:

- klue

---

# KLUE RoBERTa base model for Sentence Embeddings

This is the `sentence-klue-roberta-base` model. The sentence-transformers repository lets you train and use Transformer models to generate sentence and text embeddings.

The model is described in the paper [Sentence-BERT: Sentence Embeddings using Siamese BERT-Networks](https://arxiv.org/abs/1908.10084)

## Usage (Sentence-Transformers)

Using this model becomes more convenient when you have [sentence-transformers](https://github.com/UKPLab/sentence-transformers) installed:

```

pip install -U sentence-transformers

```

Then you can use the model like this:

```python

import torch

from sentence_transformers import SentenceTransformer, util

model = SentenceTransformer("Huffon/sentence-klue-roberta-base")

docs = [

"1992년 7월 8일 손흥민은 강원도 춘천시 후평동에서 아버지 손웅정과 어머니 길은자의 차남으로 태어나 그곳에서 자랐다.",

"형은 손흥윤이다.",

"춘천 부안초등학교를 졸업했고, 춘천 후평중학교에 입학한 후 2학년때 원주 육민관중학교 축구부에 들어가기 위해 전학하여 졸업하였으며, 2008년 당시 FC 서울의 U-18팀이었던 동북고등학교 축구부에서 선수 활동 중 대한축구협회 우수선수 해외유학 프로젝트에 선발되어 2008년 8월 독일 분데스리가의 함부르크 유소년팀에 입단하였다.",

"함부르크 유스팀 주전 공격수로 2008년 6월 네덜란드에서 열린 4개국 경기에서 4게임에 출전, 3골을 터뜨렸다.",

"1년간의 유학 후 2009년 8월 한국으로 돌아온 후 10월에 개막한 FIFA U-17 월드컵에 출전하여 3골을 터트리며 한국을 8강으로 이끌었다.",

"그해 11월 함부르크의 정식 유소년팀 선수 계약을 체결하였으며 독일 U-19 리그 4경기 2골을 넣고 2군 리그에 출전을 시작했다.",

"독일 U-19 리그에서 손흥민은 11경기 6골, 2부 리그에서는 6경기 1골을 넣으며 재능을 인정받아 2010년 6월 17세의 나이로 함부르크의 1군 팀 훈련에 참가, 프리시즌 활약으로 함부르크와 정식 계약을 한 후 10월 18세에 함부르크 1군 소속으로 독일 분데스리가에 데뷔하였다.",

]

document_embeddings = model.encode(docs)

query = "손흥민은 어린 나이에 유럽에 진출하였다."

query_embedding = model.encode(query)

top_k = min(5, len(docs))

cos_scores = util.pytorch_cos_sim(query_embedding, document_embeddings)[0]

top_results = torch.topk(cos_scores, k=top_k)

print(f"입력 문장: {query}")

print(f"<입력 문장과 유사한 {top_k} 개의 문장>")

for i, (score, idx) in enumerate(zip(top_results[0], top_results[1])):
    print(f"{i+1}: {docs[idx]} {'(유사도: {:.4f})'.format(score)}")

```
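The ranking step above relies on cosine similarity followed by a top-k selection. As a rough, dependency-free sketch of what those two calls compute, the snippet below uses made-up 3-dimensional vectors in place of real sentence embeddings; the helper names `cosine_similarity` and `top_k_similar` are illustrative and not part of sentence-transformers.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def top_k_similar(query_vec, doc_vecs, k):
    """Return (score, index) pairs for the k documents most similar to the query."""
    scored = [(cosine_similarity(query_vec, d), i) for i, d in enumerate(doc_vecs)]
    return sorted(scored, reverse=True)[:k]


# Toy "embeddings", invented purely for illustration.
toy_docs = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [1.0, 1.0, 0.0]]
toy_query = [1.0, 0.0, 0.0]

ranking = top_k_similar(toy_query, toy_docs, k=2)
for score, idx in ranking:
    print(f"doc {idx}: similarity {score:.4f}")
```

With real embeddings the vectors have hundreds of dimensions, but the ranking logic is the same.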

|

JuliusAlphonso/distilbert-plutchik

|

JuliusAlphonso

| 2021-06-19T22:06:23Z | 421 | 7 |

transformers

|

[

"transformers",

"pytorch",

"distilbert",

"text-classification",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-03-02T23:29:04Z |

Labels are based on Plutchik's model of emotions and may be combined:

|

prithivida/informal_to_formal_styletransfer

|

prithivida

| 2021-06-19T08:30:19Z | 444 | 8 |

transformers

|

[

"transformers",

"pytorch",

"t5",

"text2text-generation",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text2text-generation

| 2022-03-02T23:29:05Z |

## This model belongs to the Styleformer project

[Please refer to github page](https://github.com/PrithivirajDamodaran/Styleformer)

|

Dev-DGT/food-dbert-multiling

|

Dev-DGT

| 2021-06-18T21:55:58Z | 5 | 0 |

transformers

|

[

"transformers",

"pytorch",

"distilbert",

"token-classification",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

token-classification

| 2022-03-02T23:29:04Z |

---

widget:

- text: "El paciente se alimenta de pan, sopa de calabaza y coca-cola"

---

# Token classification for FOODs.

Detects foods in sentences.

Currently, only Spanish is supported. Multi-word foods are detected as a single entity.
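To illustrate what "detected as a single entity" can mean in practice, here is a minimal sketch that merges per-token tags into multi-word food names. The `B-FOOD` / `I-FOOD` / `O` tag scheme and the tags assigned to the example sentence are assumptions made for illustration; the card does not state the model's actual label names.

```python
def merge_food_entities(tokens, labels):
    """Group consecutive B-FOOD / I-FOOD tokens into single entity strings."""
    entities, current = [], []
    for token, label in zip(tokens, labels):
        if label == "B-FOOD":
            # A new entity starts; flush any entity in progress.
            if current:
                entities.append(" ".join(current))
            current = [token]
        elif label == "I-FOOD" and current:
            # Continuation of the entity in progress.
            current.append(token)
        else:
            # Outside any entity; flush if needed.
            if current:
                entities.append(" ".join(current))
            current = []
    if current:
        entities.append(" ".join(current))
    return entities


# Hypothetical tagging of the widget sentence (labels are assumed, not model output).
tokens = ["El", "paciente", "se", "alimenta", "de", "pan", ",",
          "sopa", "de", "calabaza", "y", "coca-cola"]
labels = ["O", "O", "O", "O", "O", "B-FOOD", "O",
          "B-FOOD", "I-FOOD", "I-FOOD", "O", "B-FOOD"]

foods = merge_food_entities(tokens, labels)
print(foods)
```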

## To-do

- English support.

- Negation support.

- Quantity tags.

- Psychosocial tags.

|

huggingtweets/visionify

|

huggingtweets

| 2021-06-18T11:55:54Z | 6 | 0 |

transformers

|

[

"transformers",

"pytorch",

"gpt2",

"text-generation",

"huggingtweets",

"en",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

---

language: en

thumbnail: https://www.huggingtweets.com/visionify/1624017350891/predictions.png

tags:

- huggingtweets

widget:

- text: "My dream is"

---

<div class="inline-flex flex-col" style="line-height: 1.5;">

<div class="flex">

<div

style="display:inherit; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('https://pbs.twimg.com/profile_images/1395107998708555776/A8DY6IGM_400x400.jpg')">

</div>

<div

style="display:none; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('')">

</div>

<div

style="display:none; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('')">

</div>

</div>

<div style="text-align: center; margin-top: 3px; font-size: 16px; font-weight: 800">🤖 AI BOT 🤖</div>

<div style="text-align: center; font-size: 16px; font-weight: 800">Visionify</div>

<div style="text-align: center; font-size: 14px;">@visionify</div>

</div>

I was made with [huggingtweets](https://github.com/borisdayma/huggingtweets).

Create your own bot based on your favorite user with [the demo](https://colab.research.google.com/github/borisdayma/huggingtweets/blob/master/huggingtweets-demo.ipynb)!

## How does it work?

The model uses the following pipeline.

To understand how the model was developed, check the [W&B report](https://wandb.ai/wandb/huggingtweets/reports/HuggingTweets-Train-a-Model-to-Generate-Tweets--VmlldzoxMTY5MjI).

## Training data

The model was trained on tweets from Visionify.

| Data | Visionify |

| --- | --- |

| Tweets downloaded | 70 |

| Retweets | 8 |

| Short tweets | 1 |

| Tweets kept | 61 |

[Explore the data](https://wandb.ai/wandb/huggingtweets/runs/2wilyf5z/artifacts), which is tracked with [W&B artifacts](https://docs.wandb.com/artifacts) at every step of the pipeline.
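The counts in the table appear to follow a simple filter: retweets and short tweets are dropped from the downloaded set. A small sketch of that arithmetic, assuming the two filtered groups do not overlap (the function name is illustrative):

```python
def tweets_kept(downloaded, retweets, short_tweets):
    """Tweets remaining after removing retweets and short tweets."""
    return downloaded - retweets - short_tweets


kept = tweets_kept(downloaded=70, retweets=8, short_tweets=1)
print(kept)
```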

## Training procedure

The model is based on a pre-trained [GPT-2](https://huggingface.co/gpt2) which is fine-tuned on @visionify's tweets.

Hyperparameters and metrics are recorded in the [W&B training run](https://wandb.ai/wandb/huggingtweets/runs/3pu4qf1m) for full transparency and reproducibility.

At the end of training, [the final model](https://wandb.ai/wandb/huggingtweets/runs/3pu4qf1m/artifacts) is logged and versioned.

## How to use

You can use this model directly with a pipeline for text generation:

```python

from transformers import pipeline

generator = pipeline('text-generation',

model='huggingtweets/visionify')

generator("My dream is", num_return_sequences=5)

```

## Limitations and bias

The model suffers from [the same limitations and bias as GPT-2](https://huggingface.co/gpt2#limitations-and-bias).

In addition, the data present in the user's tweets further affects the text generated by the model.

## About

*Built by Boris Dayma*

[Follow @borisdayma on Twitter](https://twitter.com/intent/follow?screen_name=borisdayma)

For more details, visit the [project repository](https://github.com/borisdayma/huggingtweets).

|

silky/deep-todo

|

silky

| 2021-06-18T08:20:41Z | 4 | 0 |

transformers

|

[

"transformers",

"pytorch",

"gpt2",

"text-generation",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

# deep-todo

Wondering what to do? Not anymore!

Generate arbitrary todo's.

Source: <https://colab.research.google.com/drive/1PlKLrGHaCuvWCKNC4fmQEMElF-iRec9f?usp=sharing>

The todo's come from a random selection of (public) repositories I had on my computer.

### Sample

A bunch of todo's:

```

----------------------------------------------------------------------------------------------------

0: TODO: should we check the other edges?/

1: TODO: add more information here.

2: TODO: We could also add more general functions in this case to avoid/

3: TODO: It seems strange to have the same constructor when the base set of/

4: TODO: This implementation should be simplified, as it's too complex to handle the/

5: TODO: we should be able to relax the intrinsic if not

6: TODO: Make sure this doesn't go through the next generation of plugins. It would be better if this was

7: TODO: There is always a small number of errors when we have this type/

8: TODO: Add support for 't' values (not 't') for all the constant types/

9: TODO: Check that we use loglef_cxx in the loop*

10: TODO: Support double or double values./

11: TODO: Add tests that verify that this function does not work for all targets/

12: TODO: we'd expect the result to be identical to the same value in terms of

13: TODO: We are not using a new type for 'w' as it does not denote 'y' yet, so we could/

14: TODO: if we had to find a way to extract the source file directly, we would/

15: TODO: this should fold into a flat array that would be/

16: TODO: Check if we can make it work with the correct address./

17: TODO: support v2i with V2R4+

18: TODO: Can a fast-math-flags check be generalized to all types of data? */

19: TODO: Add support for other type-specific VOPs.

```

Generated by:

```

tf.random.set_seed(0)

sample_outputs = model.generate(

input_ids,

do_sample=True,

max_length=40,

top_k=50,

top_p=0.95,

num_return_sequences=20

)

print("Output:\n" + 100 * '-')

for i, sample_output in enumerate(sample_outputs):
    # decode the generated ids and keep only the text after the first "TODO" marker
    m = tokenizer.decode(sample_output, skip_special_tokens=True)
    m = m.split("TODO")[1].strip()
    print("{}: TODO{}".format(i, m))

```

## TODO

- [ ] Fixup the data; it seems to contain multiple todo's per line

- [ ] Preprocess the data in a better way

- [ ] Download github and train it on everything
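For the first item in the list above, one possible preprocessing step is to split any line that contains several `TODO` markers into separate entries. This is only a sketch of one approach, not the author's pipeline; `split_todos` is a hypothetical helper.

```python
import re


def split_todos(line):
    """Split a line into one chunk per 'TODO' marker, dropping any text before the first."""
    # Non-greedy match from each "TODO" up to the next "TODO" or the end of the line.
    chunks = re.findall(r"TODO.*?(?=TODO|$)", line)
    return [chunk.strip() for chunk in chunks]


example = "x = 1  # TODO: add tests. TODO: remove the old API"
todos = split_todos(example)
print(todos)
```

Each resulting chunk could then be written to the training file on its own line.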

|

ethanyt/guwen-sent

|

ethanyt

| 2021-06-18T04:51:54Z | 8 | 4 |

transformers

|

[

"transformers",

"pytorch",

"roberta",

"text-classification",

"chinese",

"classical chinese",

"literary chinese",

"ancient chinese",

"bert",

"sentiment classificatio",

"zh",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-03-02T23:29:05Z |

---

language:

- "zh"

thumbnail: "https://user-images.githubusercontent.com/9592150/97142000-cad08e00-179a-11eb-88df-aff9221482d8.png"

tags:

- "chinese"

- "classical chinese"

- "literary chinese"

- "ancient chinese"

- "bert"

- "pytorch"

- "sentiment classificatio"

license: "apache-2.0"

pipeline_tag: "text-classification"

widget:

- text: "滚滚长江东逝水,浪花淘尽英雄"

- text: "寻寻觅觅,冷冷清清,凄凄惨惨戚戚"

- text: "执手相看泪眼,竟无语凝噎,念去去,千里烟波,暮霭沉沉楚天阔。"

- text: "忽如一夜春风来,干树万树梨花开"

---

# Guwen Sent

A Classical Chinese Poem Sentiment Classifier.

See also:

<a href="https://github.com/ethan-yt/guwen-models">

<img align="center" width="400" src="https://github-readme-stats.vercel.app/api/pin/?username=ethan-yt&repo=guwen-models&bg_color=30,e96443,904e95&title_color=fff&text_color=fff&icon_color=fff&show_owner=true" />

</a>

<a href="https://github.com/ethan-yt/cclue/">

<img align="center" width="400" src="https://github-readme-stats.vercel.app/api/pin/?username=ethan-yt&repo=cclue&bg_color=30,e96443,904e95&title_color=fff&text_color=fff&icon_color=fff&show_owner=true" />

</a>

<a href="https://github.com/ethan-yt/guwenbert/">

<img align="center" width="400" src="https://github-readme-stats.vercel.app/api/pin/?username=ethan-yt&repo=guwenbert&bg_color=30,e96443,904e95&title_color=fff&text_color=fff&icon_color=fff&show_owner=true" />

</a>

|

ans/vaccinating-covid-tweets

|

ans

| 2021-06-18T04:12:08Z | 11 | 1 |

transformers

|

[

"transformers",

"pytorch",

"roberta",

"text-classification",

"en",

"dataset:tweets",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-03-02T23:29:05Z |

---

language: en

license: apache-2.0

datasets:

- tweets

widget:

- text: "Vaccines to prevent SARS-CoV-2 infection are considered the most promising approach for curbing the pandemic."

---

# Disclaimer: This page is under maintenance. Please DO NOT refer to the information on this page to make any decision yet.

# Vaccinating COVID tweets

A model fine-tuned for the fact-classification task on English tweets about COVID-19/vaccine.

## Intended uses & limitations

You can classify whether an input tweet (or any other statement) about COVID-19/vaccine is `true`, `false` or `misleading`.

Note that since this model was trained with data up to May 2021, the most recent information may not be reflected.

#### How to use

You can use this model directly on this page or with `transformers` in Python.

- Load the pipeline and run it on an input sequence

```python

from transformers import pipeline

pipe = pipeline("sentiment-analysis", model = "ans/vaccinating-covid-tweets")

seq = "Vaccines to prevent SARS-CoV-2 infection are considered the most promising approach for curbing the pandemic."

pipe(seq)

```

- Expected output

```python

[

{

"label": "false",

"score": 0.07972867041826248

},

{

"label": "misleading",

"score": 0.019911376759409904

},

{

"label": "true",

"score": 0.9003599882125854

}

]

```
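Given a list of per-label scores shaped like the expected output above, picking the predicted class is a matter of taking the highest score. A minimal sketch in plain Python, with the scores copied from the example rather than produced by running the model:

```python
# Scores copied from the expected output above.
scores = [
    {"label": "false", "score": 0.07972867041826248},
    {"label": "misleading", "score": 0.019911376759409904},
    {"label": "true", "score": 0.9003599882125854},
]

# The predicted class is the entry with the highest score.
best = max(scores, key=lambda item: item["score"])

# The scores behave like a probability distribution over the three labels.
total = sum(item["score"] for item in scores)

print(best["label"], round(best["score"], 4))
```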

- `true` examples

```python

"By the end of 2020, several vaccines had become available for use in different parts of the world."

"Vaccines to prevent SARS-CoV-2 infection are considered the most promising approach for curbing the pandemic."

"RNA vaccines were the first vaccines for SARS-CoV-2 to be produced and represent an entirely new vaccine approach."

```

- `false` examples

```python

"COVID-19 vaccine caused new strain in UK."

```

#### Limitations and bias

To conservatively classify whether an input sequence is true or not, the model may have predictions biased toward `false` or `misleading`.

## Training data & Procedure

#### Pre-trained baseline model

- Pre-trained model: [BERTweet](https://github.com/VinAIResearch/BERTweet)
  - trained based on the RoBERTa pre-training procedure
  - 850M General English Tweets (Jan 2012 to Aug 2019)
  - 23M COVID-19 English Tweets
  - Size of the model: >134M parameters
- Further training
  - Pre-training with recent COVID-19/vaccine tweets and fine-tuning for fact classification

#### 1) Pre-training language model

- The model was pre-trained on COVID-19/vaccine-related tweets using a masked language modeling (MLM) objective, starting from BERTweet.
- The following datasets of English tweets were used:
  - Tweets with trending #CovidVaccine hashtag, 207,000 tweets uploaded from Aug 2020 to Apr 2021 ([kaggle](https://www.kaggle.com/kaushiksuresh147/covidvaccine-tweets))
  - Tweets about all COVID-19 vaccines, 78,000 tweets uploaded from Dec 2020 to May 2021 ([kaggle](https://www.kaggle.com/gpreda/all-covid19-vaccines-tweets))
  - COVID-19 Twitter chatter dataset, 590,000 tweets uploaded from Mar 2021 to May 2021 ([github](https://github.com/thepanacealab/covid19_twitter))

#### 2) Fine-tuning for fact classification

- The pre-trained language model from step 1 was fine-tuned for the fact-classification task on COVID-19/vaccine statements.
- COVID-19/vaccine-related statements were collected from [Poynter](https://www.poynter.org/ifcn-covid-19-misinformation/) and [Snopes](https://www.snopes.com/) using Selenium, resulting in over 14,000 fact-checked statements from Jan 2020 to May 2021.
- The original labels were grouped into the following three categories:
  - `False`: includes false, no evidence, manipulated, fake, not true, unproven and unverified
  - `Misleading`: includes misleading, exaggerated, out of context and needs context
  - `True`: includes true and correct
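The grouping above can be expressed as a lookup table. The sketch below is one way to write it, assuming the original fact-checker labels are matched case-insensitively after trimming whitespace; the names `LABEL_GROUPS` and `to_category` are illustrative and not taken from the project's code.

```python
# Three target categories and the original labels each one absorbs.
LABEL_GROUPS = {
    "false": ["false", "no evidence", "manipulated", "fake",
              "not true", "unproven", "unverified"],
    "misleading": ["misleading", "exaggerated", "out of context", "needs context"],
    "true": ["true", "correct"],
}

# Invert the grouping so each original label points at its category.
ORIGINAL_TO_CATEGORY = {
    original: category
    for category, originals in LABEL_GROUPS.items()
    for original in originals
}


def to_category(original_label):
    """Map an original fact-check label to one of the three categories, or None."""
    return ORIGINAL_TO_CATEGORY.get(original_label.strip().lower())


print(to_category("No Evidence"))
print(to_category("needs context"))
```

Labels outside the listed set return `None`, so they can be reviewed or discarded explicitly.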

## Evaluation results

| Training loss | Validation loss | Training accuracy | Validation accuracy |

| --- | --- | --- | --- |

| 0.1062 | 0.1006 | 96.3% | 94.5% |

# Contributors

- This model is part of the final team project for the MLDL for DS class at SNU.

- Team BIBI - Vaccinating COVID-NineTweets

- Team members: Ahn, Hyunju; An, Jiyong; An, Seungchan; Jeong, Seokho; Kim, Jungmin; Kim, Sangbeom

- Advisor: Prof. Wen-Syan Li

<a href="https://gsds.snu.ac.kr/"><img src="https://gsds.snu.ac.kr/wp-content/uploads/sites/50/2021/04/GSDS_logo2-e1619068952717.png" width="200" height="80"></a>

|

danyaljj/gpt2_question_generation_given_paragraph_answer

|

danyaljj

| 2021-06-17T18:27:47Z | 6 | 1 |

transformers

|

[

"transformers",

"pytorch",

"gpt2",

"text-generation",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

Sample usage:

```python

from transformers import GPT2LMHeadModel, GPT2Tokenizer

tokenizer = GPT2Tokenizer.from_pretrained("gpt2")

model = GPT2LMHeadModel.from_pretrained("danyaljj/gpt2_question_generation_given_paragraph_answer")

input_ids = tokenizer.encode("There are two apples on the counter. A: apples Q:", return_tensors="pt")

outputs = model.generate(input_ids)

print("Generated:", tokenizer.decode(outputs[0], skip_special_tokens=True))

```

Which should produce this:

```

Generated: There are two apples on the counter. A: apples Q: What is the name of the counter

```
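Because the decoded output repeats the prompt, a small post-processing step is usually needed to keep only the generated question. A minimal sketch, assuming the decoded text starts with the exact prompt string (tokenizer round-trips may not always preserve it character for character); `extract_question` is a hypothetical helper.

```python
def extract_question(prompt, generated):
    """Return the text the model appended after the prompt."""
    if generated.startswith(prompt):
        return generated[len(prompt):].strip()
    # Fall back to the full text if the prompt was not reproduced verbatim.
    return generated.strip()


prompt = "There are two apples on the counter. A: apples Q:"
generated = ("There are two apples on the counter. A: apples Q: "
             "What is the name of the counter")

question = extract_question(prompt, generated)
print(question)
```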

|

danyaljj/gpt2_question_generation_given_paragraph

|

danyaljj

| 2021-06-17T18:23:28Z | 13 | 0 |

transformers

|

[

"transformers",

"pytorch",

"gpt2",

"text-generation",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

Sample usage:

```python

from transformers import GPT2LMHeadModel, GPT2Tokenizer

tokenizer = GPT2Tokenizer.from_pretrained("gpt2")

model = GPT2LMHeadModel.from_pretrained("danyaljj/gpt2_question_generation_given_paragraph")

input_ids = tokenizer.encode("There are two apples on the counter. Q:", return_tensors="pt")

outputs = model.generate(input_ids)

print("Generated:", tokenizer.decode(outputs[0], skip_special_tokens=True))

```

Which should produce this:

```

Generated: There are two apples on the counter. Q: What is the name of the counter that is on

```

|

Davlan/bert-base-multilingual-cased-finetuned-luganda

|

Davlan

| 2021-06-17T17:43:07Z | 11 | 0 |

transformers

|

[

"transformers",

"pytorch",

"bert",

"fill-mask",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

fill-mask

| 2022-03-02T23:29:04Z |


---

language: lg

datasets:

---

# bert-base-multilingual-cased-finetuned-luganda

## Model description

**bert-base-multilingual-cased-finetuned-luganda** is a **Luganda BERT** model obtained by fine-tuning **bert-base-multilingual-cased** model on Luganda language texts. It provides **better performance** than the multilingual BERT on text classification and named entity recognition datasets.

Specifically, this model is a *bert-base-multilingual-cased* model that was fine-tuned on Luganda corpus.

## Intended uses & limitations

#### How to use

You can use this model with Transformers *pipeline* for masked token prediction.

```python

>>> from transformers import pipeline

>>> unmasker = pipeline('fill-mask', model='Davlan/bert-base-multilingual-cased-finetuned-luganda')

>>> unmasker("Ffe tulwanyisa abo abaagala okutabangula [MASK], Kimuli bwe yategeezezza.")

```

#### Limitations and bias

This model is limited by its training dataset of entity-annotated news articles from a specific span of time. This may not generalize well for all use cases in different domains.

## Training data

This model was fine-tuned on JW300 + [BUKKEDDE](https://github.com/masakhane-io/masakhane-ner/tree/main/text_by_language/luganda) + [Luganda CC-100](http://data.statmt.org/cc-100/)

## Training procedure

This model was trained on a single NVIDIA V100 GPU

## Eval results on Test set (F-score, average over 5 runs)

Dataset| mBERT F1 | lg_bert F1

-|-|-

[MasakhaNER](https://github.com/masakhane-io/masakhane-ner) | 80.36 | 84.70

### BibTeX entry and citation info

By David Adelani


|

Kalindu/SinBerto

|

Kalindu

| 2021-06-17T16:37:19Z | 6 | 0 |

transformers

|

[

"transformers",

"pytorch",

"roberta",

"fill-mask",

"SinBERTo",

"Sinhala",

"si",

"arxiv:1907.11692",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

fill-mask

| 2022-03-02T23:29:04Z |

---

language: si

tags:

- SinBERTo

- Sinhala

- roberta

---

### Overview

SinBerto is a small language model trained on a small news corpus. It is trained on Sinhala, which is a low-resource language.

### Model Specifications.

model : [Roberta](https://arxiv.org/abs/1907.11692)

vocab_size=52_000,

max_position_embeddings=514,

num_attention_heads=12,

num_hidden_layers=6,

type_vocab_size=1

### How to use from the Transformers Library

from transformers import AutoTokenizer, AutoModelForMaskedLM

tokenizer = AutoTokenizer.from_pretrained("Kalindu/SinBerto")

model = AutoModelForMaskedLM.from_pretrained("Kalindu/SinBerto")

### OR Clone the model repo

git lfs install

git clone https://huggingface.co/Kalindu/SinBerto

|

ethanyt/guwen-ner

|

ethanyt

| 2021-06-17T09:23:09Z | 59 | 5 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"roberta",

"token-classification",

"chinese",

"classical chinese",

"literary chinese",

"ancient chinese",

"bert",

"zh",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

token-classification

| 2022-03-02T23:29:05Z |

---

language:

- "zh"

thumbnail: "https://user-images.githubusercontent.com/9592150/97142000-cad08e00-179a-11eb-88df-aff9221482d8.png"

tags:

- "chinese"

- "classical chinese"

- "literary chinese"

- "ancient chinese"

- "bert"

- "pytorch"

license: "apache-2.0"

pipeline_tag: "token-classification"

widget:

- text: "及秦始皇,灭先代典籍,焚书坑儒,天下学士逃难解散,我先人用藏其家书于屋壁。汉室龙兴,开设学校,旁求儒雅,以阐大猷。济南伏生,年过九十,失其本经,口以传授,裁二十馀篇,以其上古之书,谓之尚书。百篇之义,世莫得闻。"

---

# Guwen NER

A Classical Chinese Named Entity Recognizer.

Note: Decoding with the default sequence classification model has some known problems. Use the CRF model to achieve the best results. For the CRF-related code, please refer to [Guwen Models](https://github.com/ethan-yt/guwen-models).

See also:

<a href="https://github.com/ethan-yt/guwen-models">

<img align="center" width="400" src="https://github-readme-stats.vercel.app/api/pin/?username=ethan-yt&repo=guwen-models&bg_color=30,e96443,904e95&title_color=fff&text_color=fff&icon_color=fff&show_owner=true" />

</a>

<a href="https://github.com/ethan-yt/cclue/">

<img align="center" width="400" src="https://github-readme-stats.vercel.app/api/pin/?username=ethan-yt&repo=cclue&bg_color=30,e96443,904e95&title_color=fff&text_color=fff&icon_color=fff&show_owner=true" />

</a>

<a href="https://github.com/ethan-yt/guwenbert/">

<img align="center" width="400" src="https://github-readme-stats.vercel.app/api/pin/?username=ethan-yt&repo=guwenbert&bg_color=30,e96443,904e95&title_color=fff&text_color=fff&icon_color=fff&show_owner=true" />

</a>

|

ethanyt/guwen-quote

|

ethanyt

| 2021-06-17T08:22:56Z | 10 | 1 |

transformers

|

[

"transformers",

"pytorch",

"roberta",

"token-classification",

"chinese",

"classical chinese",

"literary chinese",

"ancient chinese",

"bert",

"quotation detection",

"zh",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

token-classification

| 2022-03-02T23:29:05Z |

---

language:

- "zh"

thumbnail: "https://user-images.githubusercontent.com/9592150/97142000-cad08e00-179a-11eb-88df-aff9221482d8.png"

tags:

- "chinese"

- "classical chinese"

- "literary chinese"

- "ancient chinese"

- "bert"

- "pytorch"

- "quotation detection"

license: "apache-2.0"

pipeline_tag: "token-classification"

widget:

- text: "子曰学而时习之不亦说乎有朋自远方来不亦乐乎人不知而不愠不亦君子乎有子曰其为人也孝弟而好犯上者鲜矣不好犯上而好作乱者未之有也君子务本本立而道生孝弟也者其为仁之本与子曰巧言令色鲜矣仁曾子曰吾日三省吾身为人谋而不忠乎与朋友交而不信乎传不习乎子曰道千乘之国敬事而信节用而爱人使民以时"

---

# Guwen Quote

A Classical Chinese Quotation Detector.

Note: Decoding with the default sequence classification model has some known problems. Use the CRF model to achieve the best results. For the CRF-related code, please refer to [Guwen Models](https://github.com/ethan-yt/guwen-models).

See also:

<a href="https://github.com/ethan-yt/guwen-models">

<img align="center" width="400" src="https://github-readme-stats.vercel.app/api/pin/?username=ethan-yt&repo=guwen-models&bg_color=30,e96443,904e95&title_color=fff&text_color=fff&icon_color=fff&show_owner=true" />

</a>

<a href="https://github.com/ethan-yt/cclue/">

<img align="center" width="400" src="https://github-readme-stats.vercel.app/api/pin/?username=ethan-yt&repo=cclue&bg_color=30,e96443,904e95&title_color=fff&text_color=fff&icon_color=fff&show_owner=true" />

</a>

<a href="https://github.com/ethan-yt/guwenbert/">

<img align="center" width="400" src="https://github-readme-stats.vercel.app/api/pin/?username=ethan-yt&repo=guwenbert&bg_color=30,e96443,904e95&title_color=fff&text_color=fff&icon_color=fff&show_owner=true" />

</a>

|

huggingtweets/thinktilt

|

huggingtweets

| 2021-06-17T00:28:38Z | 5 | 0 |

transformers

|

[

"transformers",

"pytorch",

"gpt2",

"text-generation",

"huggingtweets",

"en",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:05Z |

---

language: en

thumbnail: https://www.huggingtweets.com/thinktilt/1623889617116/predictions.png

tags:

- huggingtweets

widget:

- text: "My dream is"

---

<div class="inline-flex flex-col" style="line-height: 1.5;">

<div class="flex">

<div

style="display:inherit; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('https://pbs.twimg.com/profile_images/1331032413342892037/Bubd_ZWy_400x400.jpg')">

</div>

<div

style="display:none; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('')">

</div>

<div

style="display:none; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('')">

</div>

</div>

<div style="text-align: center; margin-top: 3px; font-size: 16px; font-weight: 800">🤖 AI BOT 🤖</div>

<div style="text-align: center; font-size: 16px; font-weight: 800">ThinkTilt (ProForma for Jira)</div>

<div style="text-align: center; font-size: 14px;">@thinktilt</div>

</div>

I was made with [huggingtweets](https://github.com/borisdayma/huggingtweets).

Create your own bot based on your favorite user with [the demo](https://colab.research.google.com/github/borisdayma/huggingtweets/blob/master/huggingtweets-demo.ipynb)!

## How does it work?

The model uses the following pipeline.

To understand how the model was developed, check the [W&B report](https://wandb.ai/wandb/huggingtweets/reports/HuggingTweets-Train-a-Model-to-Generate-Tweets--VmlldzoxMTY5MjI).

## Training data

The model was trained on tweets from ThinkTilt (ProForma for Jira).

| Data | ThinkTilt (ProForma for Jira) |

| --- | --- |

| Tweets downloaded | 2707 |

| Retweets | 105 |

| Short tweets | 27 |

| Tweets kept | 2575 |

[Explore the data](https://wandb.ai/wandb/huggingtweets/runs/2slt58js/artifacts), which is tracked with [W&B artifacts](https://docs.wandb.com/artifacts) at every step of the pipeline.

## Training procedure

The model is based on a pre-trained [GPT-2](https://huggingface.co/gpt2) which is fine-tuned on @thinktilt's tweets.

Hyperparameters and metrics are recorded in the [W&B training run](https://wandb.ai/wandb/huggingtweets/runs/3qy43zlw) for full transparency and reproducibility.

At the end of training, [the final model](https://wandb.ai/wandb/huggingtweets/runs/3qy43zlw/artifacts) is logged and versioned.

## How to use

You can use this model directly with a pipeline for text generation:

```python

from transformers import pipeline

generator = pipeline('text-generation',

model='huggingtweets/thinktilt')

generator("My dream is", num_return_sequences=5)

```

## Limitations and bias

The model suffers from [the same limitations and bias as GPT-2](https://huggingface.co/gpt2#limitations-and-bias).

In addition, the data present in the user's tweets further affects the text generated by the model.

## About

*Built by Boris Dayma*

[Follow @borisdayma on Twitter](https://twitter.com/intent/follow?screen_name=borisdayma)

For more details, visit the [project repository](https://github.com/borisdayma/huggingtweets).

|

ScottaStrong/DialogGPT-small-joshua

|

ScottaStrong

| 2021-06-16T21:40:45Z | 7 | 0 |

transformers

|

[

"transformers",

"pytorch",

"gpt2",

"text-generation",

"conversational",

"license:mit",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-03-02T23:29:04Z |

---

thumbnail: https://huggingface.co/front/thumbnails/dialogpt.png

tags:

- conversational

license: mit

---

# DialoGPT Trained on the Speech of a Game Character

This is an instance of [microsoft/DialoGPT-medium](https://huggingface.co/microsoft/DialoGPT-medium) trained on a game character, Joshua from [The World Ends With You](https://en.wikipedia.org/wiki/The_World_Ends_with_You). The data comes from [a Kaggle game script dataset](https://www.kaggle.com/ruolinzheng/twewy-game-script).

I built a Discord AI chatbot based on this model. [Check out my GitHub repo.](https://github.com/RuolinZheng08/twewy-discord-chatbot)

Chat with the model:

```python

import torch
from transformers import AutoModelForCausalLM, AutoTokenizer

tokenizer = AutoTokenizer.from_pretrained("scottastrong/DialogGPT-small-joshua")
model = AutoModelForCausalLM.from_pretrained("scottastrong/DialogGPT-small-joshua")

# Let's chat for 4 lines
for step in range(4):
    # encode the new user input, add the eos_token and return a PyTorch tensor
    new_user_input_ids = tokenizer.encode(input(">> User:") + tokenizer.eos_token, return_tensors='pt')
    # print(new_user_input_ids)

    # append the new user input tokens to the chat history
    bot_input_ids = torch.cat([chat_history_ids, new_user_input_ids], dim=-1) if step > 0 else new_user_input_ids

    # generate a response while limiting the total chat history to 200 tokens
    chat_history_ids = model.generate(
        bot_input_ids, max_length=200,
        pad_token_id=tokenizer.eos_token_id,
        no_repeat_ngram_size=3,
        do_sample=True,
        top_k=100,
        top_p=0.7,
        temperature=0.8
    )

    # pretty-print the last output tokens from the bot
    print("JoshuaBot: {}".format(tokenizer.decode(chat_history_ids[:, bot_input_ids.shape[-1]:][0], skip_special_tokens=True)))

```

|

danyaljj/opengpt2_pytorch_forward

|

danyaljj

| 2021-06-16T20:30:01Z | 4 | 1 |

transformers

|

[

"transformers",

"pytorch",

"endpoints_compatible",

"region:us"

] | null | 2022-03-02T23:29:05Z |

West et al.'s model from their "reflective decoding" paper.

Sample usage:

```python

import torch

from modeling_opengpt2 import OpenGPT2LMHeadModel

from padded_encoder import Encoder

path_to_forward = 'danyaljj/opengpt2_pytorch_forward'

encoder = Encoder()

model_backward = OpenGPT2LMHeadModel.from_pretrained(path_to_forward)

input = "She tried to win but"

input_ids = encoder.encode(input)

input_ids = torch.tensor([input_ids ], dtype=torch.int)

print(input_ids)

output = model_backward.generate(input_ids)

output_text = encoder.decode(output.tolist()[0])

print(output_text)

```

Download the additional files from here: https://github.com/peterwestuw/GPT2ForwardBackward

|

IlyaGusev/news_tg_rubert

|

IlyaGusev

| 2021-06-16T19:43:26Z | 39 | 0 |

transformers

|

[

"transformers",

"pytorch",

"ru",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] | null | 2022-03-02T23:29:04Z |

---

language:

- ru

license: apache-2.0

---

# NewsTgRuBERT

Training script: https://github.com/dialogue-evaluation/Russian-News-Clustering-and-Headline-Generation/blob/main/train_mlm.py

|

m3hrdadfi/typo-detector-distilbert-en

|

m3hrdadfi

| 2021-06-16T16:14:20Z | 22,410 | 10 |

transformers

|

[

"transformers",

"pytorch",

"tf",

"distilbert",

"token-classification",

"en",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

token-classification

| 2022-03-02T23:29:05Z |

---

language: en

widget:

- text: "He had also stgruggled with addiction during his time in Congress ."

- text: "The review thoroughla assessed all aspects of JLENS SuR and CPG esign maturit and confidence ."

- text: "Letterma also apologized two his staff for the satyation ."

- text: "Vincent Jay had earlier won France 's first gold in gthe 10km biathlon sprint ."

- text: "It is left to the directors to figure out hpw to bring the stry across to tye audience ."

---

# Typo Detector

## Dataset Information

For this specific task, I used [NeuSpell](https://github.com/neuspell/neuspell) corpus as my raw data.

## Evaluation

The following table summarizes the scores obtained by the model, overall and per class.

| # | precision | recall | f1-score | support |

|:------------:|:---------:|:--------:|:--------:|:--------:|

| TYPO | 0.992332 | 0.985997 | 0.989154 | 416054.0 |

| micro avg | 0.992332 | 0.985997 | 0.989154 | 416054.0 |

| macro avg | 0.992332 | 0.985997 | 0.989154 | 416054.0 |

| weighted avg | 0.992332 | 0.985997 | 0.989154 | 416054.0 |
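As a quick sanity check, the f1-score column can be recomputed from the precision and recall columns as their harmonic mean. The helper name `f1_score` below is just for illustration. Because there is a single class (TYPO), the micro, macro and weighted averages coincide with the per-class row.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall (0.0 when both are zero)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Values copied from the TYPO row of the table above.
f1 = f1_score(0.992332, 0.985997)
print(f"{f1:.6f}")
```

The printed value should agree with the 0.989154 reported in the table, up to rounding.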

## How to use

You can use this model with the Transformers pipeline for NER (token classification).

### Installing requirements

```bash

pip install transformers

```

### Prediction using pipeline

```python

import torch

from transformers import AutoConfig, AutoTokenizer, AutoModelForTokenClassification

from transformers import pipeline

model_name_or_path = "m3hrdadfi/typo-detector-distilbert-en"

config = AutoConfig.from_pretrained(model_name_or_path)

tokenizer = AutoTokenizer.from_pretrained(model_name_or_path)

model = AutoModelForTokenClassification.from_pretrained(model_name_or_path, config=config)

nlp = pipeline('token-classification', model=model, tokenizer=tokenizer, aggregation_strategy="average")

```

```python

sentences = [

"He had also stgruggled with addiction during his time in Congress .",

"The review thoroughla assessed all aspects of JLENS SuR and CPG esign maturit and confidence .",

"Letterma also apologized two his staff for the satyation .",

"Vincent Jay had earlier won France 's first gold in gthe 10km biathlon sprint .",

"It is left to the directors to figure out hpw to bring the stry across to tye audience .",

]

for sentence in sentences:

    typos = [sentence[r["start"]: r["end"]] for r in nlp(sentence)]

    detected = sentence

    for typo in typos:

        detected = detected.replace(typo, f'<i>{typo}</i>')

    print("   [Input]: ", sentence)

    print("[Detected]: ", detected)

    print("-" * 130)

```

Output:

```text

[Input]: He had also stgruggled with addiction during his time in Congress .

[Detected]: He had also <i>stgruggled</i> with addiction during his time in Congress .

----------------------------------------------------------------------------------------------------------------------------------

[Input]: The review thoroughla assessed all aspects of JLENS SuR and CPG esign maturit and confidence .

[Detected]: The review <i>thoroughla</i> assessed all aspects of JLENS SuR and CPG <i>esign</i> <i>maturit</i> and confidence .

----------------------------------------------------------------------------------------------------------------------------------

[Input]: Letterma also apologized two his staff for the satyation .

[Detected]: <i>Letterma</i> also apologized <i>two</i> his staff for the <i>satyation</i> .

----------------------------------------------------------------------------------------------------------------------------------

[Input]: Vincent Jay had earlier won France 's first gold in gthe 10km biathlon sprint .

[Detected]: Vincent Jay had earlier won France 's first gold in <i>gthe</i> 10km biathlon sprint .

----------------------------------------------------------------------------------------------------------------------------------

[Input]: It is left to the directors to figure out hpw to bring the stry across to tye audience .

[Detected]: It is left to the directors to figure out <i>hpw</i> to bring the <i>stry</i> across to <i>tye</i> audience .

----------------------------------------------------------------------------------------------------------------------------------

```
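Note that highlighting with `str.replace` tags every occurrence of a detected string, so a typo such as `two` would also be marked wherever the same letters appear elsewhere in the sentence. A minimal alternative sketch, assuming each prediction carries `start` and `end` character offsets as in the loop above, is to insert the tags by position. The helper `highlight_spans` and the hand-written `predictions` list are illustrative stand-ins, not part of the model or of the pipeline output.

```python
def highlight_spans(sentence, spans, open_tag="<i>", close_tag="</i>"):
    """Wrap each (start, end) character span of `sentence` in tags."""
    out = sentence
    # Go from right to left so earlier offsets stay valid after each insertion.
    for start, end in sorted(spans, reverse=True):
        out = out[:start] + open_tag + out[start:end] + close_tag + out[end:]
    return out


sentence = "Letterma also apologized two his staff for the satyation ."

# Hand-written stand-in for the pipeline predictions (offsets only).
predictions = [
    {"start": sentence.index(word), "end": sentence.index(word) + len(word)}
    for word in ("Letterma", "two", "satyation")
]

detected = highlight_spans(sentence, [(p["start"], p["end"]) for p in predictions])
print(detected)
```

In the loop above, this would replace the inner `for typo in typos` block, passing the offsets returned by `nlp(sentence)` instead of the hand-written list.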

## Questions?

Post a GitHub issue on the [TypoDetector Issues](https://github.com/m3hrdadfi/typo-detector/issues) repo.

|

madlag/bert-base-uncased-squadv1-x1.16-f88.1-d8-unstruct-v1

|

madlag

| 2021-06-16T15:03:46Z | 72 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tf",

"bert",

"question-answering",

"en",

"dataset:squad",

"license:mit",

"endpoints_compatible",

"region:us"

] |

question-answering

| 2022-03-02T23:29:05Z |

---

language: en

thumbnail:

license: mit

tags:

- question-answering

-

-

datasets:

- squad

metrics:

- squad

widget:

- text: "Where is the Eiffel Tower located?"

context: "The Eiffel Tower is a wrought-iron lattice tower on the Champ de Mars in Paris, France. It is named after the engineer Gustave Eiffel, whose company designed and built the tower."

- text: "Who is Frederic Chopin?"

context: "Frédéric François Chopin, born Fryderyk Franciszek Chopin (1 March 1810 – 17 October 1849), was a Polish composer and virtuoso pianist of the Romantic era who wrote primarily for solo piano."

---

## BERT-base uncased model fine-tuned on SQuAD v1

This model was created using the [nn_pruning](https://github.com/huggingface/nn_pruning) Python library: the **linear layers contain 8.0%** of the original weights.

The model contains **28.0%** of the original weights **overall** (the embeddings account for a significant part of the model, and they are not pruned by this method).
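The relationship between the two percentages can be checked with a rough parameter count. The sketch below assumes the standard BERT-base configuration (hidden size 768, 12 layers, intermediate size 3072, vocabulary of 30522 tokens) and assumes that only the weight matrices of the encoder's linear layers are pruned; neither assumption is stated in this card.

```python
# Standard BERT-base configuration (assumed, not stated in the card).
hidden, layers, intermediate = 768, 12, 3072
vocab, max_positions, token_types = 30522, 512, 2

# Embeddings: word, position and token-type tables plus one LayerNorm.
embeddings = (vocab + max_positions + token_types) * hidden + 2 * hidden

# Weight matrices of the encoder's linear layers (assumed to be the pruned part).
attention_weights = 4 * hidden * hidden      # query, key, value, output
ffn_weights = 2 * hidden * intermediate      # intermediate and output projections
linear_weights = layers * (attention_weights + ffn_weights)

# Encoder biases and LayerNorms, plus the 2-output QA head (assumed unpruned).
biases = layers * (4 * hidden + intermediate + hidden)
layer_norms = layers * 2 * 2 * hidden
qa_head = hidden * 2 + 2

unpruned = embeddings + biases + layer_norms + qa_head
total = unpruned + linear_weights
kept = unpruned + 0.08 * linear_weights      # 8.0% of the linear weights remain

overall_density = kept / total
print(f"overall density: {overall_density:.1%}")
```

Under these assumptions the overall density comes out at roughly 28%, consistent with the figure above: the embeddings alone are about a fifth of the original model and are left untouched.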

With a simple resizing of the linear matrices it ran **1.16x as fast as bert-base-uncased** on the evaluation.

This is possible because the pruning method led to structured matrices: to visualize them, hover over the plot below to see the non-zero/zero parts of each matrix.

<div class="graph"><script src="/madlag/bert-base-uncased-squadv1-x1.16-f88.1-d8-unstruct-v1/raw/main/model_card/density_info.js" id="c60d09ec-81ff-4d6f-b616-c3ef09b2175d"></script></div>