modelId

string | author

string | last_modified

timestamp[us, tz=UTC] | downloads

int64 | likes

int64 | library_name

string | tags

list | pipeline_tag

string | createdAt

timestamp[us, tz=UTC] | card

string |

|---|---|---|---|---|---|---|---|---|---|

chainway9/blockassist-bc-untamed_quick_eel_1755710135

|

chainway9

| 2025-08-20T17:42:27Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"untamed quick eel",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-20T17:42:23Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- untamed quick eel

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

lilTAT/blockassist-bc-gentle_rugged_hare_1755711657

|

lilTAT

| 2025-08-20T17:41:26Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"gentle rugged hare",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-20T17:41:20Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- gentle rugged hare

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

Muapi/flux-sadamoto-yoshiyuki-evangelion-eva-ayanami-rei-souryuu-asuka-langley-artist-style

|

Muapi

| 2025-08-20T17:41:07Z | 0 | 0 | null |

[

"lora",

"stable-diffusion",

"flux.1-d",

"license:openrail++",

"region:us"

] | null | 2025-08-20T17:40:51Z |

---

license: openrail++

tags:

- lora

- stable-diffusion

- flux.1-d

model_type: LoRA

---

# [Flux] Sadamoto Yoshiyuki/贞本义行 《EVANGELION》/《EVA》/《新世纪福音战士》 Ayanami Rei, Souryuu Asuka Langley- Artist Style

**Base model**: Flux.1 D

**Trained words**: Sadamoto Yoshiyuki Style, souryuu asuka langley, ayanami rei, katsuragi misato, ikari shinji, nagisa kaworu, makinami mari illustrious, nadia la arwall

## 🧠 Usage (Python)

🔑 **Get your MUAPI key** from [muapi.ai/access-keys](https://muapi.ai/access-keys)

```python

import requests, os

url = "https://api.muapi.ai/api/v1/flux_dev_lora_image"

headers = {"Content-Type": "application/json", "x-api-key": os.getenv("MUAPIAPP_API_KEY")}

payload = {

"prompt": "masterpiece, best quality, 1girl, looking at viewer",

"model_id": [{"model": "civitai:708851@792878", "weight": 1.0}],

"width": 1024,

"height": 1024,

"num_images": 1

}

print(requests.post(url, headers=headers, json=payload).json())

```

|

Muapi/jan-van-goyen-style

|

Muapi

| 2025-08-20T17:40:36Z | 0 | 0 | null |

[

"lora",

"stable-diffusion",

"flux.1-d",

"license:openrail++",

"region:us"

] | null | 2025-08-20T17:40:19Z |

---

license: openrail++

tags:

- lora

- stable-diffusion

- flux.1-d

model_type: LoRA

---

# Jan van Goyen Style

**Base model**: Flux.1 D

**Trained words**: Jan van Goyen Style

## 🧠 Usage (Python)

🔑 **Get your MUAPI key** from [muapi.ai/access-keys](https://muapi.ai/access-keys)

```python

import requests, os

url = "https://api.muapi.ai/api/v1/flux_dev_lora_image"

headers = {"Content-Type": "application/json", "x-api-key": os.getenv("MUAPIAPP_API_KEY")}

payload = {

"prompt": "masterpiece, best quality, 1girl, looking at viewer",

"model_id": [{"model": "civitai:99436@1559598", "weight": 1.0}],

"width": 1024,

"height": 1024,

"num_images": 1

}

print(requests.post(url, headers=headers, json=payload).json())

```

|

helmutsukocok/blockassist-bc-loud_scavenging_kangaroo_1755709983

|

helmutsukocok

| 2025-08-20T17:39:56Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"loud scavenging kangaroo",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-20T17:39:53Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- loud scavenging kangaroo

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

mradermacher/brix-query-1.7b-GGUF

|

mradermacher

| 2025-08-20T17:38:53Z | 0 | 0 |

transformers

|

[

"transformers",

"gguf",

"en",

"base_model:SubconsciousDev/brix-query-1.7b",

"base_model:quantized:SubconsciousDev/brix-query-1.7b",

"endpoints_compatible",

"region:us",

"conversational"

] | null | 2025-08-20T17:26:51Z |

---

base_model: SubconsciousDev/brix-query-1.7b

language:

- en

library_name: transformers

mradermacher:

readme_rev: 1

quantized_by: mradermacher

tags: []

---

## About

<!-- ### quantize_version: 2 -->

<!-- ### output_tensor_quantised: 1 -->

<!-- ### convert_type: hf -->

<!-- ### vocab_type: -->

<!-- ### tags: -->

<!-- ### quants: x-f16 Q4_K_S Q2_K Q8_0 Q6_K Q3_K_M Q3_K_S Q3_K_L Q4_K_M Q5_K_S Q5_K_M IQ4_XS -->

<!-- ### quants_skip: -->

<!-- ### skip_mmproj: -->

static quants of https://huggingface.co/SubconsciousDev/brix-query-1.7b

<!-- provided-files -->

***For a convenient overview and download list, visit our [model page for this model](https://hf.tst.eu/model#brix-query-1.7b-GGUF).***

weighted/imatrix quants seem not to be available (by me) at this time. If they do not show up a week or so after the static ones, I have probably not planned for them. Feel free to request them by opening a Community Discussion.

## Usage

If you are unsure how to use GGUF files, refer to one of [TheBloke's

READMEs](https://huggingface.co/TheBloke/KafkaLM-70B-German-V0.1-GGUF) for

more details, including on how to concatenate multi-part files.

## Provided Quants

(sorted by size, not necessarily quality. IQ-quants are often preferable over similar sized non-IQ quants)

| Link | Type | Size/GB | Notes |

|:-----|:-----|--------:|:------|

| [GGUF](https://huggingface.co/mradermacher/brix-query-1.7b-GGUF/resolve/main/brix-query-1.7b.Q2_K.gguf) | Q2_K | 0.9 | |

| [GGUF](https://huggingface.co/mradermacher/brix-query-1.7b-GGUF/resolve/main/brix-query-1.7b.Q3_K_S.gguf) | Q3_K_S | 1.0 | |

| [GGUF](https://huggingface.co/mradermacher/brix-query-1.7b-GGUF/resolve/main/brix-query-1.7b.Q3_K_M.gguf) | Q3_K_M | 1.0 | lower quality |

| [GGUF](https://huggingface.co/mradermacher/brix-query-1.7b-GGUF/resolve/main/brix-query-1.7b.Q3_K_L.gguf) | Q3_K_L | 1.1 | |

| [GGUF](https://huggingface.co/mradermacher/brix-query-1.7b-GGUF/resolve/main/brix-query-1.7b.IQ4_XS.gguf) | IQ4_XS | 1.1 | |

| [GGUF](https://huggingface.co/mradermacher/brix-query-1.7b-GGUF/resolve/main/brix-query-1.7b.Q4_K_S.gguf) | Q4_K_S | 1.2 | fast, recommended |

| [GGUF](https://huggingface.co/mradermacher/brix-query-1.7b-GGUF/resolve/main/brix-query-1.7b.Q4_K_M.gguf) | Q4_K_M | 1.2 | fast, recommended |

| [GGUF](https://huggingface.co/mradermacher/brix-query-1.7b-GGUF/resolve/main/brix-query-1.7b.Q5_K_S.gguf) | Q5_K_S | 1.3 | |

| [GGUF](https://huggingface.co/mradermacher/brix-query-1.7b-GGUF/resolve/main/brix-query-1.7b.Q5_K_M.gguf) | Q5_K_M | 1.4 | |

| [GGUF](https://huggingface.co/mradermacher/brix-query-1.7b-GGUF/resolve/main/brix-query-1.7b.Q6_K.gguf) | Q6_K | 1.5 | very good quality |

| [GGUF](https://huggingface.co/mradermacher/brix-query-1.7b-GGUF/resolve/main/brix-query-1.7b.Q8_0.gguf) | Q8_0 | 1.9 | fast, best quality |

| [GGUF](https://huggingface.co/mradermacher/brix-query-1.7b-GGUF/resolve/main/brix-query-1.7b.f16.gguf) | f16 | 3.5 | 16 bpw, overkill |

Here is a handy graph by ikawrakow comparing some lower-quality quant

types (lower is better):

And here are Artefact2's thoughts on the matter:

https://gist.github.com/Artefact2/b5f810600771265fc1e39442288e8ec9

## FAQ / Model Request

See https://huggingface.co/mradermacher/model_requests for some answers to

questions you might have and/or if you want some other model quantized.

## Thanks

I thank my company, [nethype GmbH](https://www.nethype.de/), for letting

me use its servers and providing upgrades to my workstation to enable

this work in my free time.

<!-- end -->

|

uname0x96/blockassist-bc-rough_scavenging_narwhal_1755711168

|

uname0x96

| 2025-08-20T17:34:42Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"rough scavenging narwhal",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-20T17:34:25Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- rough scavenging narwhal

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

mradermacher/granite-3.3-2b-finetuned-alpaca-hindi-bengali_full-GGUF

|

mradermacher

| 2025-08-20T17:34:11Z | 0 | 0 |

transformers

|

[

"transformers",

"gguf",

"en",

"base_model:Debk/granite-3.3-2b-finetuned-alpaca-hindi-bengali_full",

"base_model:quantized:Debk/granite-3.3-2b-finetuned-alpaca-hindi-bengali_full",

"endpoints_compatible",

"region:us"

] | null | 2025-08-20T17:16:56Z |

---

base_model: Debk/granite-3.3-2b-finetuned-alpaca-hindi-bengali_full

language:

- en

library_name: transformers

mradermacher:

readme_rev: 1

quantized_by: mradermacher

---

## About

<!-- ### quantize_version: 2 -->

<!-- ### output_tensor_quantised: 1 -->

<!-- ### convert_type: hf -->

<!-- ### vocab_type: -->

<!-- ### tags: -->

<!-- ### quants: x-f16 Q4_K_S Q2_K Q8_0 Q6_K Q3_K_M Q3_K_S Q3_K_L Q4_K_M Q5_K_S Q5_K_M IQ4_XS -->

<!-- ### quants_skip: -->

<!-- ### skip_mmproj: -->

static quants of https://huggingface.co/Debk/granite-3.3-2b-finetuned-alpaca-hindi-bengali_full

<!-- provided-files -->

***For a convenient overview and download list, visit our [model page for this model](https://hf.tst.eu/model#granite-3.3-2b-finetuned-alpaca-hindi-bengali_full-GGUF).***

weighted/imatrix quants seem not to be available (by me) at this time. If they do not show up a week or so after the static ones, I have probably not planned for them. Feel free to request them by opening a Community Discussion.

## Usage

If you are unsure how to use GGUF files, refer to one of [TheBloke's

READMEs](https://huggingface.co/TheBloke/KafkaLM-70B-German-V0.1-GGUF) for

more details, including on how to concatenate multi-part files.

## Provided Quants

(sorted by size, not necessarily quality. IQ-quants are often preferable over similar sized non-IQ quants)

| Link | Type | Size/GB | Notes |

|:-----|:-----|--------:|:------|

| [GGUF](https://huggingface.co/mradermacher/granite-3.3-2b-finetuned-alpaca-hindi-bengali_full-GGUF/resolve/main/granite-3.3-2b-finetuned-alpaca-hindi-bengali_full.Q2_K.gguf) | Q2_K | 1.1 | |

| [GGUF](https://huggingface.co/mradermacher/granite-3.3-2b-finetuned-alpaca-hindi-bengali_full-GGUF/resolve/main/granite-3.3-2b-finetuned-alpaca-hindi-bengali_full.Q3_K_S.gguf) | Q3_K_S | 1.2 | |

| [GGUF](https://huggingface.co/mradermacher/granite-3.3-2b-finetuned-alpaca-hindi-bengali_full-GGUF/resolve/main/granite-3.3-2b-finetuned-alpaca-hindi-bengali_full.Q3_K_M.gguf) | Q3_K_M | 1.4 | lower quality |

| [GGUF](https://huggingface.co/mradermacher/granite-3.3-2b-finetuned-alpaca-hindi-bengali_full-GGUF/resolve/main/granite-3.3-2b-finetuned-alpaca-hindi-bengali_full.Q3_K_L.gguf) | Q3_K_L | 1.5 | |

| [GGUF](https://huggingface.co/mradermacher/granite-3.3-2b-finetuned-alpaca-hindi-bengali_full-GGUF/resolve/main/granite-3.3-2b-finetuned-alpaca-hindi-bengali_full.IQ4_XS.gguf) | IQ4_XS | 1.5 | |

| [GGUF](https://huggingface.co/mradermacher/granite-3.3-2b-finetuned-alpaca-hindi-bengali_full-GGUF/resolve/main/granite-3.3-2b-finetuned-alpaca-hindi-bengali_full.Q4_K_S.gguf) | Q4_K_S | 1.6 | fast, recommended |

| [GGUF](https://huggingface.co/mradermacher/granite-3.3-2b-finetuned-alpaca-hindi-bengali_full-GGUF/resolve/main/granite-3.3-2b-finetuned-alpaca-hindi-bengali_full.Q4_K_M.gguf) | Q4_K_M | 1.6 | fast, recommended |

| [GGUF](https://huggingface.co/mradermacher/granite-3.3-2b-finetuned-alpaca-hindi-bengali_full-GGUF/resolve/main/granite-3.3-2b-finetuned-alpaca-hindi-bengali_full.Q5_K_S.gguf) | Q5_K_S | 1.9 | |

| [GGUF](https://huggingface.co/mradermacher/granite-3.3-2b-finetuned-alpaca-hindi-bengali_full-GGUF/resolve/main/granite-3.3-2b-finetuned-alpaca-hindi-bengali_full.Q5_K_M.gguf) | Q5_K_M | 1.9 | |

| [GGUF](https://huggingface.co/mradermacher/granite-3.3-2b-finetuned-alpaca-hindi-bengali_full-GGUF/resolve/main/granite-3.3-2b-finetuned-alpaca-hindi-bengali_full.Q6_K.gguf) | Q6_K | 2.2 | very good quality |

| [GGUF](https://huggingface.co/mradermacher/granite-3.3-2b-finetuned-alpaca-hindi-bengali_full-GGUF/resolve/main/granite-3.3-2b-finetuned-alpaca-hindi-bengali_full.Q8_0.gguf) | Q8_0 | 2.8 | fast, best quality |

| [GGUF](https://huggingface.co/mradermacher/granite-3.3-2b-finetuned-alpaca-hindi-bengali_full-GGUF/resolve/main/granite-3.3-2b-finetuned-alpaca-hindi-bengali_full.f16.gguf) | f16 | 5.2 | 16 bpw, overkill |

Here is a handy graph by ikawrakow comparing some lower-quality quant

types (lower is better):

And here are Artefact2's thoughts on the matter:

https://gist.github.com/Artefact2/b5f810600771265fc1e39442288e8ec9

## FAQ / Model Request

See https://huggingface.co/mradermacher/model_requests for some answers to

questions you might have and/or if you want some other model quantized.

## Thanks

I thank my company, [nethype GmbH](https://www.nethype.de/), for letting

me use its servers and providing upgrades to my workstation to enable

this work in my free time.

<!-- end -->

|

alunadiderot/setfit-e5-base-category-classifier_v1

|

alunadiderot

| 2025-08-20T17:32:11Z | 0 | 0 | null |

[

"safetensors",

"xlm-roberta",

"region:us"

] | null | 2025-08-20T17:29:40Z |

# SetFit E5 Base Category Classifier v1

A SetFit model trained on E5-base for category classification.

## Model Details

- Base Model: E5-base

- Task: Category classification

## Usage

[Add usage instructions]

|

Muapi/artistic-analog-photos-realistic-photos

|

Muapi

| 2025-08-20T17:30:14Z | 0 | 0 | null |

[

"lora",

"stable-diffusion",

"flux.1-d",

"license:openrail++",

"region:us"

] | null | 2025-08-20T17:29:59Z |

---

license: openrail++

tags:

- lora

- stable-diffusion

- flux.1-d

model_type: LoRA

---

# Artistic Analog Photos (realistic photos)

**Base model**: Flux.1 D

**Trained words**: aaphotosv2

## 🧠 Usage (Python)

🔑 **Get your MUAPI key** from [muapi.ai/access-keys](https://muapi.ai/access-keys)

```python

import requests, os

url = "https://api.muapi.ai/api/v1/flux_dev_lora_image"

headers = {"Content-Type": "application/json", "x-api-key": os.getenv("MUAPIAPP_API_KEY")}

payload = {

"prompt": "masterpiece, best quality, 1girl, looking at viewer",

"model_id": [{"model": "civitai:934391@1368796", "weight": 1.0}],

"width": 1024,

"height": 1024,

"num_images": 1

}

print(requests.post(url, headers=headers, json=payload).json())

```

|

WenFengg/loss_14l14_21_8

|

WenFengg

| 2025-08-20T17:29:15Z | 0 | 0 | null |

[

"safetensors",

"any-to-any",

"omega",

"omegalabs",

"bittensor",

"agi",

"license:mit",

"region:us"

] |

any-to-any

| 2025-08-20T17:23:47Z |

---

license: mit

tags:

- any-to-any

- omega

- omegalabs

- bittensor

- agi

---

This is an Any-to-Any model checkpoint for the OMEGA Labs x Bittensor Any-to-Any subnet.

Check out the [git repo](https://github.com/omegalabsinc/omegalabs-anytoany-bittensor) and find OMEGA on X: [@omegalabsai](https://x.com/omegalabsai).

|

Renu11/my_embedding_gemma

|

Renu11

| 2025-08-20T17:28:24Z | 0 | 0 |

sentence-transformers

|

[

"sentence-transformers",

"safetensors",

"gemma3_text",

"sentence-similarity",

"feature-extraction",

"dense",

"generated_from_trainer",

"dataset_size:3",

"loss:MultipleNegativesRankingLoss",

"arxiv:1908.10084",

"arxiv:1705.00652",

"base_model:gg-hf-gm/embeddinggemma-300M",

"base_model:finetune:gg-hf-gm/embeddinggemma-300M",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

sentence-similarity

| 2025-08-20T17:27:05Z |

---

tags:

- sentence-transformers

- sentence-similarity

- feature-extraction

- dense

- generated_from_trainer

- dataset_size:3

- loss:MultipleNegativesRankingLoss

base_model: gg-hf-gm/embeddinggemma-300M

pipeline_tag: sentence-similarity

library_name: sentence-transformers

---

# SentenceTransformer based on gg-hf-gm/embeddinggemma-300M

This is a [sentence-transformers](https://www.SBERT.net) model finetuned from [gg-hf-gm/embeddinggemma-300M](https://huggingface.co/gg-hf-gm/embeddinggemma-300M). It maps sentences & paragraphs to a 768-dimensional dense vector space and can be used for semantic textual similarity, semantic search, paraphrase mining, text classification, clustering, and more.

## Model Details

### Model Description

- **Model Type:** Sentence Transformer

- **Base model:** [gg-hf-gm/embeddinggemma-300M](https://huggingface.co/gg-hf-gm/embeddinggemma-300M) <!-- at revision e4253f99d926a4f5c770e5be9f9762ed86edc80b -->

- **Maximum Sequence Length:** 1024 tokens

- **Output Dimensionality:** 768 dimensions

- **Similarity Function:** Cosine Similarity

<!-- - **Training Dataset:** Unknown -->

<!-- - **Language:** Unknown -->

<!-- - **License:** Unknown -->

### Model Sources

- **Documentation:** [Sentence Transformers Documentation](https://sbert.net)

- **Repository:** [Sentence Transformers on GitHub](https://github.com/UKPLab/sentence-transformers)

- **Hugging Face:** [Sentence Transformers on Hugging Face](https://huggingface.co/models?library=sentence-transformers)

### Full Model Architecture

```

SentenceTransformer(

(0): Transformer({'max_seq_length': 1024, 'do_lower_case': False, 'architecture': 'Gemma3TextModel'})

(1): Pooling({'word_embedding_dimension': 768, 'pooling_mode_cls_token': False, 'pooling_mode_mean_tokens': True, 'pooling_mode_max_tokens': False, 'pooling_mode_mean_sqrt_len_tokens': False, 'pooling_mode_weightedmean_tokens': False, 'pooling_mode_lasttoken': False, 'include_prompt': True})

(2): Dense({'in_features': 768, 'out_features': 3072, 'bias': False, 'activation_function': 'torch.nn.modules.linear.Identity'})

(3): Dense({'in_features': 3072, 'out_features': 768, 'bias': False, 'activation_function': 'torch.nn.modules.linear.Identity'})

(4): Normalize()

)

```

## Usage

### Direct Usage (Sentence Transformers)

First install the Sentence Transformers library:

```bash

pip install -U sentence-transformers

```

Then you can load this model and run inference.

```python

from sentence_transformers import SentenceTransformer

# Download from the 🤗 Hub

model = SentenceTransformer("Renu11/my_embedding_gemma")

# Run inference

queries = [

"Which planet is known as the Red Planet?",

]

documents = [

"Venus is often called Earth's twin because of its similar size and proximity.",

'Mars, known for its reddish appearance, is often referred to as the Red Planet.',

'Saturn, famous for its rings, is sometimes mistaken for the Red Planet.',

]

query_embeddings = model.encode_query(queries)

document_embeddings = model.encode_document(documents)

print(query_embeddings.shape, document_embeddings.shape)

# [1, 768] [3, 768]

# Get the similarity scores for the embeddings

similarities = model.similarity(query_embeddings, document_embeddings)

print(similarities)

# tensor([[0.4465, 0.7582, 0.5683]])

```

<!--

### Direct Usage (Transformers)

<details><summary>Click to see the direct usage in Transformers</summary>

</details>

-->

<!--

### Downstream Usage (Sentence Transformers)

You can finetune this model on your own dataset.

<details><summary>Click to expand</summary>

</details>

-->

<!--

### Out-of-Scope Use

*List how the model may foreseeably be misused and address what users ought not to do with the model.*

-->

<!--

## Bias, Risks and Limitations

*What are the known or foreseeable issues stemming from this model? You could also flag here known failure cases or weaknesses of the model.*

-->

<!--

### Recommendations

*What are recommendations with respect to the foreseeable issues? For example, filtering explicit content.*

-->

## Training Details

### Training Dataset

#### Unnamed Dataset

* Size: 3 training samples

* Columns: <code>anchor</code>, <code>positive</code>, and <code>negative</code>

* Approximate statistics based on the first 3 samples:

| | anchor | positive | negative |

|:--------|:----------------------------------------------------------------------------------|:-----------------------------------------------------------------------------------|:-----------------------------------------------------------------------------------|

| type | string | string | string |

| details | <ul><li>min: 10 tokens</li><li>mean: 12.0 tokens</li><li>max: 15 tokens</li></ul> | <ul><li>min: 13 tokens</li><li>mean: 15.33 tokens</li><li>max: 17 tokens</li></ul> | <ul><li>min: 12 tokens</li><li>mean: 12.67 tokens</li><li>max: 14 tokens</li></ul> |

* Samples:

| anchor | positive | negative |

|:--------------------------------------------------------------------------|:-----------------------------------------------------------------------------------|:------------------------------------------------------------------------|

| <code>How do I open a NISA account?</code> | <code>What is the procedure for starting a new tax-free investment account?</code> | <code>I want to check the balance of my regular savings account.</code> |

| <code>Are there fees for making an early repayment on a home loan?</code> | <code>If I pay back my house loan early, will there be any costs?</code> | <code>What is the management fee for this investment trust?</code> |

| <code>What is the coverage for medical insurance?</code> | <code>Tell me about the benefits of the health insurance plan.</code> | <code>What is the cancellation policy for my life insurance?</code> |

* Loss: [<code>MultipleNegativesRankingLoss</code>](https://sbert.net/docs/package_reference/sentence_transformer/losses.html#multiplenegativesrankingloss) with these parameters:

```json

{

"scale": 20.0,

"similarity_fct": "cos_sim",

"gather_across_devices": false

}

```

### Training Hyperparameters

#### Non-Default Hyperparameters

- `per_device_train_batch_size`: 1

- `learning_rate`: 2e-05

- `num_train_epochs`: 5

- `warmup_ratio`: 0.1

- `fp16`: True

- `prompts`: task: sentence similarity | query:

#### All Hyperparameters

<details><summary>Click to expand</summary>

- `overwrite_output_dir`: False

- `do_predict`: False

- `eval_strategy`: no

- `prediction_loss_only`: True

- `per_device_train_batch_size`: 1

- `per_device_eval_batch_size`: 8

- `per_gpu_train_batch_size`: None

- `per_gpu_eval_batch_size`: None

- `gradient_accumulation_steps`: 1

- `eval_accumulation_steps`: None

- `torch_empty_cache_steps`: None

- `learning_rate`: 2e-05

- `weight_decay`: 0.0

- `adam_beta1`: 0.9

- `adam_beta2`: 0.999

- `adam_epsilon`: 1e-08

- `max_grad_norm`: 1.0

- `num_train_epochs`: 5

- `max_steps`: -1

- `lr_scheduler_type`: linear

- `lr_scheduler_kwargs`: {}

- `warmup_ratio`: 0.1

- `warmup_steps`: 0

- `log_level`: passive

- `log_level_replica`: warning

- `log_on_each_node`: True

- `logging_nan_inf_filter`: True

- `save_safetensors`: True

- `save_on_each_node`: False

- `save_only_model`: False

- `restore_callback_states_from_checkpoint`: False

- `no_cuda`: False

- `use_cpu`: False

- `use_mps_device`: False

- `seed`: 42

- `data_seed`: None

- `jit_mode_eval`: False

- `use_ipex`: False

- `bf16`: False

- `fp16`: True

- `fp16_opt_level`: O1

- `half_precision_backend`: auto

- `bf16_full_eval`: False

- `fp16_full_eval`: False

- `tf32`: None

- `local_rank`: 0

- `ddp_backend`: None

- `tpu_num_cores`: None

- `tpu_metrics_debug`: False

- `debug`: []

- `dataloader_drop_last`: False

- `dataloader_num_workers`: 0

- `dataloader_prefetch_factor`: None

- `past_index`: -1

- `disable_tqdm`: False

- `remove_unused_columns`: True

- `label_names`: None

- `load_best_model_at_end`: False

- `ignore_data_skip`: False

- `fsdp`: []

- `fsdp_min_num_params`: 0

- `fsdp_config`: {'min_num_params': 0, 'xla': False, 'xla_fsdp_v2': False, 'xla_fsdp_grad_ckpt': False}

- `fsdp_transformer_layer_cls_to_wrap`: None

- `accelerator_config`: {'split_batches': False, 'dispatch_batches': None, 'even_batches': True, 'use_seedable_sampler': True, 'non_blocking': False, 'gradient_accumulation_kwargs': None}

- `deepspeed`: None

- `label_smoothing_factor`: 0.0

- `optim`: adamw_torch_fused

- `optim_args`: None

- `adafactor`: False

- `group_by_length`: False

- `length_column_name`: length

- `ddp_find_unused_parameters`: None

- `ddp_bucket_cap_mb`: None

- `ddp_broadcast_buffers`: False

- `dataloader_pin_memory`: True

- `dataloader_persistent_workers`: False

- `skip_memory_metrics`: True

- `use_legacy_prediction_loop`: False

- `push_to_hub`: False

- `resume_from_checkpoint`: None

- `hub_model_id`: None

- `hub_strategy`: every_save

- `hub_private_repo`: None

- `hub_always_push`: False

- `hub_revision`: None

- `gradient_checkpointing`: False

- `gradient_checkpointing_kwargs`: None

- `include_inputs_for_metrics`: False

- `include_for_metrics`: []

- `eval_do_concat_batches`: True

- `fp16_backend`: auto

- `push_to_hub_model_id`: None

- `push_to_hub_organization`: None

- `mp_parameters`:

- `auto_find_batch_size`: False

- `full_determinism`: False

- `torchdynamo`: None

- `ray_scope`: last

- `ddp_timeout`: 1800

- `torch_compile`: False

- `torch_compile_backend`: None

- `torch_compile_mode`: None

- `include_tokens_per_second`: False

- `include_num_input_tokens_seen`: False

- `neftune_noise_alpha`: None

- `optim_target_modules`: None

- `batch_eval_metrics`: False

- `eval_on_start`: False

- `use_liger_kernel`: False

- `liger_kernel_config`: None

- `eval_use_gather_object`: False

- `average_tokens_across_devices`: False

- `prompts`: task: sentence similarity | query:

- `batch_sampler`: batch_sampler

- `multi_dataset_batch_sampler`: proportional

- `router_mapping`: {}

- `learning_rate_mapping`: {}

</details>

### Training Logs

| Epoch | Step | Training Loss |

|:-----:|:----:|:-------------:|

| 1.0 | 3 | 0.5841 |

| 2.0 | 6 | 1.1445 |

| 3.0 | 9 | 1.2516 |

| 4.0 | 12 | 1.1445 |

| 5.0 | 15 | 0.0252 |

### Framework Versions

- Python: 3.12.11

- Sentence Transformers: 5.1.0

- Transformers: 4.55.2

- PyTorch: 2.8.0+cu126

- Accelerate: 1.10.0

- Datasets: 4.0.0

- Tokenizers: 0.21.4

## Citation

### BibTeX

#### Sentence Transformers

```bibtex

@inproceedings{reimers-2019-sentence-bert,

title = "Sentence-BERT: Sentence Embeddings using Siamese BERT-Networks",

author = "Reimers, Nils and Gurevych, Iryna",

booktitle = "Proceedings of the 2019 Conference on Empirical Methods in Natural Language Processing",

month = "11",

year = "2019",

publisher = "Association for Computational Linguistics",

url = "https://arxiv.org/abs/1908.10084",

}

```

#### MultipleNegativesRankingLoss

```bibtex

@misc{henderson2017efficient,

title={Efficient Natural Language Response Suggestion for Smart Reply},

author={Matthew Henderson and Rami Al-Rfou and Brian Strope and Yun-hsuan Sung and Laszlo Lukacs and Ruiqi Guo and Sanjiv Kumar and Balint Miklos and Ray Kurzweil},

year={2017},

eprint={1705.00652},

archivePrefix={arXiv},

primaryClass={cs.CL}

}

```

<!--

## Glossary

*Clearly define terms in order to be accessible across audiences.*

-->

<!--

## Model Card Authors

*Lists the people who create the model card, providing recognition and accountability for the detailed work that goes into its construction.*

-->

<!--

## Model Card Contact

*Provides a way for people who have updates to the Model Card, suggestions, or questions, to contact the Model Card authors.*

-->

|

MattBou00/llama-3-2-1b-detox_v1f-checkpoint-epoch-40

|

MattBou00

| 2025-08-20T17:24:27Z | 0 | 0 |

transformers

|

[

"transformers",

"safetensors",

"llama",

"text-generation",

"trl",

"ppo",

"reinforcement-learning",

"license:apache-2.0",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

reinforcement-learning

| 2025-08-20T00:22:50Z |

---

license: apache-2.0

library_name: transformers

tags:

- trl

- ppo

- transformers

- reinforcement-learning

---

# TRL Model

This is a [TRL language model](https://github.com/huggingface/trl) that has been fine-tuned with reinforcement learning to

guide the model outputs according to a value, function, or human feedback. The model can be used for text generation.

## Usage

To use this model for inference, first install the TRL library:

```bash

python -m pip install trl

```

You can then generate text as follows:

```python

from transformers import pipeline

generator = pipeline("text-generation", model="MattBou00//rds/general/user/mrb124/home/IRL-Bayesian/outputs/2025-08-20_18-18-32/checkpoints/checkpoint-epoch-40")

outputs = generator("Hello, my llama is cute")

```

If you want to use the model for training or to obtain the outputs from the value head, load the model as follows:

```python

from transformers import AutoTokenizer

from trl import AutoModelForCausalLMWithValueHead

tokenizer = AutoTokenizer.from_pretrained("MattBou00//rds/general/user/mrb124/home/IRL-Bayesian/outputs/2025-08-20_18-18-32/checkpoints/checkpoint-epoch-40")

model = AutoModelForCausalLMWithValueHead.from_pretrained("MattBou00//rds/general/user/mrb124/home/IRL-Bayesian/outputs/2025-08-20_18-18-32/checkpoints/checkpoint-epoch-40")

inputs = tokenizer("Hello, my llama is cute", return_tensors="pt")

outputs = model(**inputs, labels=inputs["input_ids"])

```

|

Muapi/black-spider-man-bodysuit-cosplay-il-flux

|

Muapi

| 2025-08-20T17:23:43Z | 0 | 0 | null |

[

"lora",

"stable-diffusion",

"flux.1-d",

"license:openrail++",

"region:us"

] | null | 2025-08-20T17:22:49Z |

---

license: openrail++

tags:

- lora

- stable-diffusion

- flux.1-d

model_type: LoRA

---

# Black Spider-Man Bodysuit Cosplay [IL+Flux]

**Base model**: Flux.1 D

**Trained words**: wearing a black SymbioteSuit

## 🧠 Usage (Python)

🔑 **Get your MUAPI key** from [muapi.ai/access-keys](https://muapi.ai/access-keys)

```python

import requests, os

url = "https://api.muapi.ai/api/v1/flux_dev_lora_image"

headers = {"Content-Type": "application/json", "x-api-key": os.getenv("MUAPIAPP_API_KEY")}

payload = {

"prompt": "masterpiece, best quality, 1girl, looking at viewer",

"model_id": [{"model": "civitai:701263@784643", "weight": 1.0}],

"width": 1024,

"height": 1024,

"num_images": 1

}

print(requests.post(url, headers=headers, json=payload).json())

```

|

demonwizard0/affine-beta-5

|

demonwizard0

| 2025-08-20T17:23:19Z | 0 | 0 |

transformers

|

[

"transformers",

"safetensors",

"gpt_oss",

"text-generation",

"conversational",

"arxiv:1910.09700",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2025-08-20T17:22:29Z |

---

library_name: transformers

tags: []

---

# Model Card for Model ID

<!-- Provide a quick summary of what the model is/does. -->

## Model Details

### Model Description

<!-- Provide a longer summary of what this model is. -->

This is the model card of a 🤗 transformers model that has been pushed on the Hub. This model card has been automatically generated.

- **Developed by:** [More Information Needed]

- **Funded by [optional]:** [More Information Needed]

- **Shared by [optional]:** [More Information Needed]

- **Model type:** [More Information Needed]

- **Language(s) (NLP):** [More Information Needed]

- **License:** [More Information Needed]

- **Finetuned from model [optional]:** [More Information Needed]

### Model Sources [optional]

<!-- Provide the basic links for the model. -->

- **Repository:** [More Information Needed]

- **Paper [optional]:** [More Information Needed]

- **Demo [optional]:** [More Information Needed]

## Uses

<!-- Address questions around how the model is intended to be used, including the foreseeable users of the model and those affected by the model. -->

### Direct Use

<!-- This section is for the model use without fine-tuning or plugging into a larger ecosystem/app. -->

[More Information Needed]

### Downstream Use [optional]

<!-- This section is for the model use when fine-tuned for a task, or when plugged into a larger ecosystem/app -->

[More Information Needed]

### Out-of-Scope Use

<!-- This section addresses misuse, malicious use, and uses that the model will not work well for. -->

[More Information Needed]

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

[More Information Needed]

### Recommendations

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

Users (both direct and downstream) should be made aware of the risks, biases and limitations of the model. More information needed for further recommendations.

## How to Get Started with the Model

Use the code below to get started with the model.

[More Information Needed]

## Training Details

### Training Data

<!-- This should link to a Dataset Card, perhaps with a short stub of information on what the training data is all about as well as documentation related to data pre-processing or additional filtering. -->

[More Information Needed]

### Training Procedure

<!-- This relates heavily to the Technical Specifications. Content here should link to that section when it is relevant to the training procedure. -->

#### Preprocessing [optional]

[More Information Needed]

#### Training Hyperparameters

- **Training regime:** [More Information Needed] <!--fp32, fp16 mixed precision, bf16 mixed precision, bf16 non-mixed precision, fp16 non-mixed precision, fp8 mixed precision -->

#### Speeds, Sizes, Times [optional]

<!-- This section provides information about throughput, start/end time, checkpoint size if relevant, etc. -->

[More Information Needed]

## Evaluation

<!-- This section describes the evaluation protocols and provides the results. -->

### Testing Data, Factors & Metrics

#### Testing Data

<!-- This should link to a Dataset Card if possible. -->

[More Information Needed]

#### Factors

<!-- These are the things the evaluation is disaggregating by, e.g., subpopulations or domains. -->

[More Information Needed]

#### Metrics

<!-- These are the evaluation metrics being used, ideally with a description of why. -->

[More Information Needed]

### Results

[More Information Needed]

#### Summary

## Model Examination [optional]

<!-- Relevant interpretability work for the model goes here -->

[More Information Needed]

## Environmental Impact

<!-- Total emissions (in grams of CO2eq) and additional considerations, such as electricity usage, go here. Edit the suggested text below accordingly -->

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).

- **Hardware Type:** [More Information Needed]

- **Hours used:** [More Information Needed]

- **Cloud Provider:** [More Information Needed]

- **Compute Region:** [More Information Needed]

- **Carbon Emitted:** [More Information Needed]

## Technical Specifications [optional]

### Model Architecture and Objective

[More Information Needed]

### Compute Infrastructure

[More Information Needed]

#### Hardware

[More Information Needed]

#### Software

[More Information Needed]

## Citation [optional]

<!-- If there is a paper or blog post introducing the model, the APA and Bibtex information for that should go in this section. -->

**BibTeX:**

[More Information Needed]

**APA:**

[More Information Needed]

## Glossary [optional]

<!-- If relevant, include terms and calculations in this section that can help readers understand the model or model card. -->

[More Information Needed]

## More Information [optional]

[More Information Needed]

## Model Card Authors [optional]

[More Information Needed]

## Model Card Contact

[More Information Needed]

|

Muapi/fantasy-novel-art-style

|

Muapi

| 2025-08-20T17:22:30Z | 0 | 0 | null |

[

"lora",

"stable-diffusion",

"flux.1-d",

"license:openrail++",

"region:us"

] | null | 2025-08-20T17:22:13Z |

---

license: openrail++

tags:

- lora

- stable-diffusion

- flux.1-d

model_type: LoRA

---

# Fantasy Novel Art Style

**Base model**: Flux.1 D

**Trained words**: fna_style

## 🧠 Usage (Python)

🔑 **Get your MUAPI key** from [muapi.ai/access-keys](https://muapi.ai/access-keys)

```python

import requests, os

url = "https://api.muapi.ai/api/v1/flux_dev_lora_image"

headers = {"Content-Type": "application/json", "x-api-key": os.getenv("MUAPIAPP_API_KEY")}

payload = {

"prompt": "masterpiece, best quality, 1girl, looking at viewer",

"model_id": [{"model": "civitai:742202@829996", "weight": 1.0}],

"width": 1024,

"height": 1024,

"num_images": 1

}

print(requests.post(url, headers=headers, json=payload).json())

```

|

MattBou00/llama-3-2-1b-detox_v1f-checkpoint-epoch-20

|

MattBou00

| 2025-08-20T17:21:53Z | 0 | 0 |

transformers

|

[

"transformers",

"safetensors",

"llama",

"text-generation",

"trl",

"ppo",

"reinforcement-learning",

"license:apache-2.0",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

reinforcement-learning

| 2025-08-20T00:18:25Z |

---

license: apache-2.0

library_name: transformers

tags:

- trl

- ppo

- transformers

- reinforcement-learning

---

# TRL Model

This is a [TRL language model](https://github.com/huggingface/trl) that has been fine-tuned with reinforcement learning to

guide the model outputs according to a value, function, or human feedback. The model can be used for text generation.

## Usage

To use this model for inference, first install the TRL library:

```bash

python -m pip install trl

```

You can then generate text as follows:

```python

from transformers import pipeline

generator = pipeline("text-generation", model="MattBou00//rds/general/user/mrb124/home/IRL-Bayesian/outputs/2025-08-20_18-18-32/checkpoints/checkpoint-epoch-20")

outputs = generator("Hello, my llama is cute")

```

If you want to use the model for training or to obtain the outputs from the value head, load the model as follows:

```python

from transformers import AutoTokenizer

from trl import AutoModelForCausalLMWithValueHead

tokenizer = AutoTokenizer.from_pretrained("MattBou00//rds/general/user/mrb124/home/IRL-Bayesian/outputs/2025-08-20_18-18-32/checkpoints/checkpoint-epoch-20")

model = AutoModelForCausalLMWithValueHead.from_pretrained("MattBou00//rds/general/user/mrb124/home/IRL-Bayesian/outputs/2025-08-20_18-18-32/checkpoints/checkpoint-epoch-20")

inputs = tokenizer("Hello, my llama is cute", return_tensors="pt")

outputs = model(**inputs, labels=inputs["input_ids"])

```

|

Danielbrdz/BarcenasMexico-14b

|

Danielbrdz

| 2025-08-20T17:20:48Z | 0 | 0 |

transformers

|

[

"transformers",

"safetensors",

"qwen3",

"text-generation",

"mexico",

"conversational",

"es",

"dataset:Danielbrdz/BarcenasMexico",

"base_model:Qwen/Qwen3-14B",

"base_model:finetune:Qwen/Qwen3-14B",

"license:apache-2.0",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2025-08-20T17:08:45Z |

---

license: apache-2.0

datasets:

- Danielbrdz/BarcenasMexico

language:

- es

base_model:

- Qwen/Qwen3-14B

pipeline_tag: text-generation

library_name: transformers

tags:

- mexico

---

Barcenas México 14b

Basado en Qwen 3 14b y entrenado con el dataset Barcenas México

El objetivo de este LLM es tener modelo que sepa todo de México, su historia, cultura, gastronomía, etc. Todo en LLM potente como es Qwen 3 14b

El LLM puede contestar preguntas de México con precisión, por su entrenamiento con datos de México hecha por humanos.

------------------------------------------------------------------------------------------------------------------------

Barcenas Mexico 14b

Based on Qwen 3 14b and trained with the Barcenas Mexico dataset.

The objective of this LLM is to have a model that knows everything about Mexico, its history, culture, gastronomy, etc. All in a powerful LLM like Qwen 3 14b.

The LLM can answer questions about Mexico with precision, due to its training with data from Mexico created by humans.

Made with ❤️ in Guadalupe, Nuevo Leon, Mexico 🇲🇽

|

0xaoyama/blockassist-bc-muscular_zealous_gorilla_1755710315

|

0xaoyama

| 2025-08-20T17:19:11Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"muscular zealous gorilla",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-20T17:18:59Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- muscular zealous gorilla

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

WenFengg/pyar_14l13_21_8

|

WenFengg

| 2025-08-20T17:19:03Z | 0 | 0 | null |

[

"safetensors",

"any-to-any",

"omega",

"omegalabs",

"bittensor",

"agi",

"license:mit",

"region:us"

] |

any-to-any

| 2025-08-20T17:14:16Z |

---

license: mit

tags:

- any-to-any

- omega

- omegalabs

- bittensor

- agi

---

This is an Any-to-Any model checkpoint for the OMEGA Labs x Bittensor Any-to-Any subnet.

Check out the [git repo](https://github.com/omegalabsinc/omegalabs-anytoany-bittensor) and find OMEGA on X: [@omegalabsai](https://x.com/omegalabsai).

|

ihsanridzi/blockassist-bc-wiry_flexible_owl_1755708729

|

ihsanridzi

| 2025-08-20T17:18:26Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"wiry flexible owl",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-20T17:18:22Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- wiry flexible owl

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

0xaoyama/blockassist-bc-muscular_zealous_gorilla_1755710124

|

0xaoyama

| 2025-08-20T17:16:01Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"muscular zealous gorilla",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-20T17:15:49Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- muscular zealous gorilla

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

liu-nlp/salamandra-7b-smol-smoltalk-sv-en

|

liu-nlp

| 2025-08-20T17:15:47Z | 6 | 0 |

transformers

|

[

"transformers",

"safetensors",

"llama",

"text-generation",

"generated_from_trainer",

"trl",

"sft",

"conversational",

"base_model:BSC-LT/salamandra-7b",

"base_model:finetune:BSC-LT/salamandra-7b",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2025-08-13T14:40:45Z |

---

base_model: BSC-LT/salamandra-7b

library_name: transformers

model_name: salamandra-7b-smol-smoltalk-sv-en

tags:

- generated_from_trainer

- trl

- sft

licence: license

---

# Model Card for salamandra-7b-smol-smoltalk-sv-en

This model is a fine-tuned version of [BSC-LT/salamandra-7b](https://huggingface.co/BSC-LT/salamandra-7b).

It has been trained using [TRL](https://github.com/huggingface/trl).

## Quick start

```python

from transformers import pipeline

question = "If you had a time machine, but could only go to the past or the future once and never return, which would you choose and why?"

generator = pipeline("text-generation", model="liu-nlp/salamandra-7b-smol-smoltalk-sv-en", device="cuda")

output = generator([{"role": "user", "content": question}], max_new_tokens=128, return_full_text=False)[0]

print(output["generated_text"])

```

## Training procedure

[<img src="https://raw.githubusercontent.com/wandb/assets/main/wandb-github-badge-28.svg" alt="Visualize in Weights & Biases" width="150" height="24"/>](https://wandb.ai/jenny-kunz-liu/huggingface/runs/vzuruaa8)

This model was trained with SFT.

### Framework versions

- TRL: 0.21.0

- Transformers: 4.55.1

- Pytorch: 2.8.0

- Datasets: 4.0.0

- Tokenizers: 0.21.4

## Citations

Cite TRL as:

```bibtex

@misc{vonwerra2022trl,

title = {{TRL: Transformer Reinforcement Learning}},

author = {Leandro von Werra and Younes Belkada and Lewis Tunstall and Edward Beeching and Tristan Thrush and Nathan Lambert and Shengyi Huang and Kashif Rasul and Quentin Gallou{\'e}dec},

year = 2020,

journal = {GitHub repository},

publisher = {GitHub},

howpublished = {\url{https://github.com/huggingface/trl}}

}

```

|

hungtrab/q-TaxiV3

|

hungtrab

| 2025-08-20T17:14:19Z | 0 | 0 | null |

[

"Taxi-v3",

"q-learning",

"reinforcement-learning",

"custom-implementation",

"model-index",

"region:us"

] |

reinforcement-learning

| 2025-08-20T17:14:13Z |

---

tags:

- Taxi-v3

- q-learning

- reinforcement-learning

- custom-implementation

model-index:

- name: q-TaxiV3

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: Taxi-v3

type: Taxi-v3

metrics:

- type: mean_reward

value: 7.54 +/- 2.74

name: mean_reward

verified: false

---

# **Q-Learning** Agent playing1 **Taxi-v3**

This is a trained model of a **Q-Learning** agent playing **Taxi-v3** .

## Usage

```python

model = load_from_hub(repo_id="hungtrab/q-TaxiV3", filename="q-learning.pkl")

# Don't forget to check if you need to add additional attributes (is_slippery=False etc)

env = gym.make(model["env_id"])

```

|

youuotty/blockassist-bc-sizable_bipedal_turtle_1755709879

|

youuotty

| 2025-08-20T17:12:07Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"sizable bipedal turtle",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-20T17:11:23Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- sizable bipedal turtle

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

software-mansion/react-native-executorch-whisper-tiny.en

|

software-mansion

| 2025-08-20T17:11:38Z | 253 | 1 | null |

[

"license:apache-2.0",

"region:us"

] | null | 2025-02-26T10:21:26Z |

---

license: apache-2.0

---

# Introduction

This repository hosts the [whisper-tiny.en](https://huggingface.co/openai/whisper-tiny.en) model for the [React Native ExecuTorch](https://www.npmjs.com/package/react-native-executorch) library. It includes the model exported for xnnpack backend in `.pte` format, ready for use in the **ExecuTorch** runtime.

If you'd like to run these models in your own ExecuTorch runtime, refer to the [official documentation](https://pytorch.org/executorch/stable/index.html) for setup instructions.

## Compatibility

If you intend to use this models outside of React Native ExecuTorch, make sure your runtime is compatible with the **ExecuTorch** version used to export the `.pte` files. For more details, see the compatibility note in the [ExecuTorch GitHub repository](https://github.com/pytorch/executorch/blob/11d1742fdeddcf05bc30a6cfac321d2a2e3b6768/runtime/COMPATIBILITY.md?plain=1#L4). If you work with React Native ExecuTorch, the constants from the library will guarantee compatibility with runtime used behind the scenes.

These models were exported using v0.6.0 version of ExecuTorch and **no forward compatibility** is guaranteed. Older versions of the runtime may not work with these files.

|

roeker/blockassist-bc-quick_wiry_owl_1755709760

|

roeker

| 2025-08-20T17:10:37Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"quick wiry owl",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-20T17:09:59Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- quick wiry owl

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

liu-nlp/salamandra-7b-smol-smoltalk-sv

|

liu-nlp

| 2025-08-20T17:10:15Z | 8 | 0 |

transformers

|

[

"transformers",

"safetensors",

"llama",

"text-generation",

"generated_from_trainer",

"sft",

"trl",

"conversational",

"base_model:BSC-LT/salamandra-7b",

"base_model:finetune:BSC-LT/salamandra-7b",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2025-08-08T09:29:16Z |

---

base_model: BSC-LT/salamandra-7b

library_name: transformers

model_name: salamandra-7b-smol-smoltalk-sv

tags:

- generated_from_trainer

- sft

- trl

licence: license

---

# Model Card for salamandra-7b-smol-smoltalk-sv

This model is a fine-tuned version of [BSC-LT/salamandra-7b](https://huggingface.co/BSC-LT/salamandra-7b).

It has been trained using [TRL](https://github.com/huggingface/trl).

## Quick start

```python

from transformers import pipeline

question = "If you had a time machine, but could only go to the past or the future once and never return, which would you choose and why?"

generator = pipeline("text-generation", model="liu-nlp/salamandra-7b-smol-smoltalk-sv", device="cuda")

output = generator([{"role": "user", "content": question}], max_new_tokens=128, return_full_text=False)[0]

print(output["generated_text"])

```

## Training procedure

[<img src="https://raw.githubusercontent.com/wandb/assets/main/wandb-github-badge-28.svg" alt="Visualize in Weights & Biases" width="150" height="24"/>](https://wandb.ai/jenny-kunz-liu/huggingface/runs/yt1ddttp)

This model was trained with SFT.

### Framework versions

- TRL: 0.21.0

- Transformers: 4.55.1

- Pytorch: 2.8.0

- Datasets: 4.0.0

- Tokenizers: 0.21.4

## Citations

Cite TRL as:

```bibtex

@misc{vonwerra2022trl,

title = {{TRL: Transformer Reinforcement Learning}},

author = {Leandro von Werra and Younes Belkada and Lewis Tunstall and Edward Beeching and Tristan Thrush and Nathan Lambert and Shengyi Huang and Kashif Rasul and Quentin Gallou{\'e}dec},

year = 2020,

journal = {GitHub repository},

publisher = {GitHub},

howpublished = {\url{https://github.com/huggingface/trl}}

}

```

|

chainway9/blockassist-bc-untamed_quick_eel_1755708079

|

chainway9

| 2025-08-20T17:09:40Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"untamed quick eel",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-20T17:09:36Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- untamed quick eel

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

gsaltintas/supertoken_models-llama_google-gemma-2-2b-100b

|

gsaltintas

| 2025-08-20T17:08:00Z | 0 | 0 |

transformers

|

[

"transformers",

"safetensors",

"llama",

"arxiv:1910.09700",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] | null | 2025-08-18T19:14:40Z |

---

library_name: transformers

tags: []

---

# Model Card for Model ID

<!-- Provide a quick summary of what the model is/does. -->

## Model Details

### Model Description

<!-- Provide a longer summary of what this model is. -->

This is the model card of a 🤗 transformers model that has been pushed on the Hub. This model card has been automatically generated.

- **Developed by:** [More Information Needed]

- **Funded by [optional]:** [More Information Needed]

- **Shared by [optional]:** [More Information Needed]

- **Model type:** [More Information Needed]

- **Language(s) (NLP):** [More Information Needed]

- **License:** [More Information Needed]

- **Finetuned from model [optional]:** [More Information Needed]

### Model Sources [optional]

<!-- Provide the basic links for the model. -->

- **Repository:** [More Information Needed]

- **Paper [optional]:** [More Information Needed]

- **Demo [optional]:** [More Information Needed]

## Uses

<!-- Address questions around how the model is intended to be used, including the foreseeable users of the model and those affected by the model. -->

### Direct Use

<!-- This section is for the model use without fine-tuning or plugging into a larger ecosystem/app. -->

[More Information Needed]

### Downstream Use [optional]

<!-- This section is for the model use when fine-tuned for a task, or when plugged into a larger ecosystem/app -->

[More Information Needed]

### Out-of-Scope Use

<!-- This section addresses misuse, malicious use, and uses that the model will not work well for. -->

[More Information Needed]

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

[More Information Needed]

### Recommendations

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

Users (both direct and downstream) should be made aware of the risks, biases and limitations of the model. More information needed for further recommendations.

## How to Get Started with the Model

Use the code below to get started with the model.

[More Information Needed]

## Training Details

### Training Data

<!-- This should link to a Dataset Card, perhaps with a short stub of information on what the training data is all about as well as documentation related to data pre-processing or additional filtering. -->

[More Information Needed]

### Training Procedure

<!-- This relates heavily to the Technical Specifications. Content here should link to that section when it is relevant to the training procedure. -->

#### Preprocessing [optional]

[More Information Needed]

#### Training Hyperparameters

- **Training regime:** [More Information Needed] <!--fp32, fp16 mixed precision, bf16 mixed precision, bf16 non-mixed precision, fp16 non-mixed precision, fp8 mixed precision -->

#### Speeds, Sizes, Times [optional]

<!-- This section provides information about throughput, start/end time, checkpoint size if relevant, etc. -->

[More Information Needed]

## Evaluation

<!-- This section describes the evaluation protocols and provides the results. -->

### Testing Data, Factors & Metrics

#### Testing Data

<!-- This should link to a Dataset Card if possible. -->

[More Information Needed]

#### Factors

<!-- These are the things the evaluation is disaggregating by, e.g., subpopulations or domains. -->

[More Information Needed]

#### Metrics

<!-- These are the evaluation metrics being used, ideally with a description of why. -->

[More Information Needed]

### Results

[More Information Needed]

#### Summary

## Model Examination [optional]

<!-- Relevant interpretability work for the model goes here -->

[More Information Needed]

## Environmental Impact

<!-- Total emissions (in grams of CO2eq) and additional considerations, such as electricity usage, go here. Edit the suggested text below accordingly -->

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).

- **Hardware Type:** [More Information Needed]

- **Hours used:** [More Information Needed]

- **Cloud Provider:** [More Information Needed]

- **Compute Region:** [More Information Needed]

- **Carbon Emitted:** [More Information Needed]

## Technical Specifications [optional]

### Model Architecture and Objective

[More Information Needed]

### Compute Infrastructure

[More Information Needed]

#### Hardware

[More Information Needed]

#### Software

[More Information Needed]

## Citation [optional]

<!-- If there is a paper or blog post introducing the model, the APA and Bibtex information for that should go in this section. -->

**BibTeX:**

[More Information Needed]

**APA:**

[More Information Needed]

## Glossary [optional]

<!-- If relevant, include terms and calculations in this section that can help readers understand the model or model card. -->

[More Information Needed]

## More Information [optional]

[More Information Needed]

## Model Card Authors [optional]

[More Information Needed]

## Model Card Contact

[More Information Needed]

|

WenFengg/pyar_14l11_21_8

|

WenFengg

| 2025-08-20T17:07:09Z | 0 | 0 | null |

[

"safetensors",

"any-to-any",

"omega",

"omegalabs",

"bittensor",

"agi",

"license:mit",

"region:us"

] |

any-to-any

| 2025-08-20T17:02:25Z |

---

license: mit

tags:

- any-to-any

- omega

- omegalabs

- bittensor

- agi

---

This is an Any-to-Any model checkpoint for the OMEGA Labs x Bittensor Any-to-Any subnet.

Check out the [git repo](https://github.com/omegalabsinc/omegalabs-anytoany-bittensor) and find OMEGA on X: [@omegalabsai](https://x.com/omegalabsai).

|

youuotty/blockassist-bc-bristly_striped_flamingo_1755709536

|

youuotty

| 2025-08-20T17:06:25Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"bristly striped flamingo",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-20T17:05:38Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- bristly striped flamingo

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

0xaoyama/blockassist-bc-muscular_zealous_gorilla_1755709539

|

0xaoyama

| 2025-08-20T17:06:11Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"muscular zealous gorilla",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-20T17:06:00Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- muscular zealous gorilla

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

kojeklollipop/blockassist-bc-spotted_amphibious_stork_1755707761

|

kojeklollipop

| 2025-08-20T17:05:54Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"spotted amphibious stork",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-20T17:05:51Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- spotted amphibious stork

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

vwzyrraz7l/blockassist-bc-tall_hunting_vulture_1755707719

|

vwzyrraz7l

| 2025-08-20T17:04:32Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"tall hunting vulture",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-20T17:04:29Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- tall hunting vulture

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

mradermacher/shellm-v0.1-GGUF

|

mradermacher

| 2025-08-20T17:04:23Z | 0 | 0 |

transformers

|

[

"transformers",

"gguf",

"en",

"base_model:hawierdev/shellm-v0.1",

"base_model:quantized:hawierdev/shellm-v0.1",

"endpoints_compatible",

"region:us",

"conversational"

] | null | 2025-08-20T16:49:58Z |

---

base_model: hawierdev/shellm-v0.1

language:

- en

library_name: transformers

mradermacher:

readme_rev: 1

quantized_by: mradermacher

---

## About

<!-- ### quantize_version: 2 -->

<!-- ### output_tensor_quantised: 1 -->

<!-- ### convert_type: hf -->

<!-- ### vocab_type: -->

<!-- ### tags: -->

<!-- ### quants: x-f16 Q4_K_S Q2_K Q8_0 Q6_K Q3_K_M Q3_K_S Q3_K_L Q4_K_M Q5_K_S Q5_K_M IQ4_XS -->

<!-- ### quants_skip: -->

<!-- ### skip_mmproj: -->

static quants of https://huggingface.co/hawierdev/shellm-v0.1

<!-- provided-files -->

***For a convenient overview and download list, visit our [model page for this model](https://hf.tst.eu/model#shellm-v0.1-GGUF).***

weighted/imatrix quants seem not to be available (by me) at this time. If they do not show up a week or so after the static ones, I have probably not planned for them. Feel free to request them by opening a Community Discussion.

## Usage

If you are unsure how to use GGUF files, refer to one of [TheBloke's

READMEs](https://huggingface.co/TheBloke/KafkaLM-70B-German-V0.1-GGUF) for

more details, including on how to concatenate multi-part files.

## Provided Quants

(sorted by size, not necessarily quality. IQ-quants are often preferable over similar sized non-IQ quants)

| Link | Type | Size/GB | Notes |

|:-----|:-----|--------:|:------|

| [GGUF](https://huggingface.co/mradermacher/shellm-v0.1-GGUF/resolve/main/shellm-v0.1.Q2_K.gguf) | Q2_K | 0.8 | |

| [GGUF](https://huggingface.co/mradermacher/shellm-v0.1-GGUF/resolve/main/shellm-v0.1.Q3_K_S.gguf) | Q3_K_S | 0.9 | |

| [GGUF](https://huggingface.co/mradermacher/shellm-v0.1-GGUF/resolve/main/shellm-v0.1.Q3_K_M.gguf) | Q3_K_M | 0.9 | lower quality |

| [GGUF](https://huggingface.co/mradermacher/shellm-v0.1-GGUF/resolve/main/shellm-v0.1.Q3_K_L.gguf) | Q3_K_L | 1.0 | |

| [GGUF](https://huggingface.co/mradermacher/shellm-v0.1-GGUF/resolve/main/shellm-v0.1.IQ4_XS.gguf) | IQ4_XS | 1.0 | |

| [GGUF](https://huggingface.co/mradermacher/shellm-v0.1-GGUF/resolve/main/shellm-v0.1.Q4_K_S.gguf) | Q4_K_S | 1.0 | fast, recommended |

| [GGUF](https://huggingface.co/mradermacher/shellm-v0.1-GGUF/resolve/main/shellm-v0.1.Q4_K_M.gguf) | Q4_K_M | 1.1 | fast, recommended |

| [GGUF](https://huggingface.co/mradermacher/shellm-v0.1-GGUF/resolve/main/shellm-v0.1.Q5_K_S.gguf) | Q5_K_S | 1.2 | |

| [GGUF](https://huggingface.co/mradermacher/shellm-v0.1-GGUF/resolve/main/shellm-v0.1.Q5_K_M.gguf) | Q5_K_M | 1.2 | |

| [GGUF](https://huggingface.co/mradermacher/shellm-v0.1-GGUF/resolve/main/shellm-v0.1.Q6_K.gguf) | Q6_K | 1.4 | very good quality |

| [GGUF](https://huggingface.co/mradermacher/shellm-v0.1-GGUF/resolve/main/shellm-v0.1.Q8_0.gguf) | Q8_0 | 1.7 | fast, best quality |

| [GGUF](https://huggingface.co/mradermacher/shellm-v0.1-GGUF/resolve/main/shellm-v0.1.f16.gguf) | f16 | 3.2 | 16 bpw, overkill |

Here is a handy graph by ikawrakow comparing some lower-quality quant

types (lower is better):

And here are Artefact2's thoughts on the matter:

https://gist.github.com/Artefact2/b5f810600771265fc1e39442288e8ec9

## FAQ / Model Request

See https://huggingface.co/mradermacher/model_requests for some answers to

questions you might have and/or if you want some other model quantized.

## Thanks

I thank my company, [nethype GmbH](https://www.nethype.de/), for letting

me use its servers and providing upgrades to my workstation to enable

this work in my free time.

<!-- end -->

|

esi777/blockassist-bc-camouflaged_trotting_eel_1755709382

|

esi777

| 2025-08-20T17:03:55Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"camouflaged trotting eel",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-20T17:03:51Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- camouflaged trotting eel

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

0xaoyama/blockassist-bc-muscular_zealous_gorilla_1755709342

|

0xaoyama

| 2025-08-20T17:02:56Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"muscular zealous gorilla",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-20T17:02:44Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- muscular zealous gorilla

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

WenFengg/loss_14l2_20_8

|

WenFengg

| 2025-08-20T16:56:30Z | 0 | 0 | null |

[

"safetensors",

"any-to-any",

"omega",

"omegalabs",

"bittensor",

"agi",

"license:mit",

"region:us"

] |

any-to-any

| 2025-08-20T16:42:26Z |

---

license: mit

tags:

- any-to-any

- omega

- omegalabs

- bittensor

- agi

---

This is an Any-to-Any model checkpoint for the OMEGA Labs x Bittensor Any-to-Any subnet.

Check out the [git repo](https://github.com/omegalabsinc/omegalabs-anytoany-bittensor) and find OMEGA on X: [@omegalabsai](https://x.com/omegalabsai).

|

Frane92O/OpenReasoning-Nemotron-7B-bnb-4bit

|

Frane92O

| 2025-08-20T16:56:19Z | 0 | 0 |

transformers

|

[

"transformers",

"safetensors",

"qwen2",

"feature-extraction",

"nvidia",

"code",

"text-generation",

"conversational",

"en",

"arxiv:2504.16891",

"arxiv:2504.01943",

"arxiv:2507.09075",

"base_model:nvidia/OpenReasoning-Nemotron-7B",

"base_model:quantized:nvidia/OpenReasoning-Nemotron-7B",

"license:cc-by-4.0",

"text-generation-inference",

"endpoints_compatible",

"4-bit",

"bitsandbytes",

"region:us"

] |

text-generation

| 2025-08-20T16:56:00Z |

---

base_model:

- nvidia/OpenReasoning-Nemotron-7B

license: cc-by-4.0

language:

- en

pipeline_tag: text-generation

library_name: transformers

tags:

- nvidia

- code

---

# nvidia/OpenReasoning-Nemotron-7B (Quantized)

## Description

This model is a quantized version of the original model [`nvidia/OpenReasoning-Nemotron-7B`](https://huggingface.co/nvidia/OpenReasoning-Nemotron-7B).

It's quantized using the BitsAndBytes library to 4-bit using the [bnb-my-repo](https://huggingface.co/spaces/HF-Quantization/bnb-my-repo) space.

## Quantization Details

- **Quantization Type**: int4

- **bnb_4bit_quant_type**: nf4

- **bnb_4bit_use_double_quant**: True

- **bnb_4bit_compute_dtype**: bfloat16

- **bnb_4bit_quant_storage**: uint8

# 📄 Original Model Information

# OpenReasoning-Nemotron-7B Overview

## Description: <br>

OpenReasoning-Nemotron-7B is a large language model (LLM) which is a derivative of Qwen2.5-7B-Instruct (AKA the reference model). It is a reasoning model that is post-trained for reasoning about math, code and science solution generation. We evaluated this model with up to 64K output tokens. The OpenReasoning model is available in the following sizes: 1.5B, 7B and 14B and 32B. <br>

This model is ready for commercial/non-commercial research use. <br>

### License/Terms of Use: <br>

GOVERNING TERMS: Use of the models listed above are governed by the [Creative Commons Attribution 4.0 International License (CC-BY-4.0)](https://creativecommons.org/licenses/by/4.0/legalcode.en). ADDITIONAL INFORMATION: [Apache 2.0 License](https://huggingface.co/Qwen/Qwen2.5-32B-Instruct/blob/main/LICENSE)

## Scores on Reasoning Benchmarks

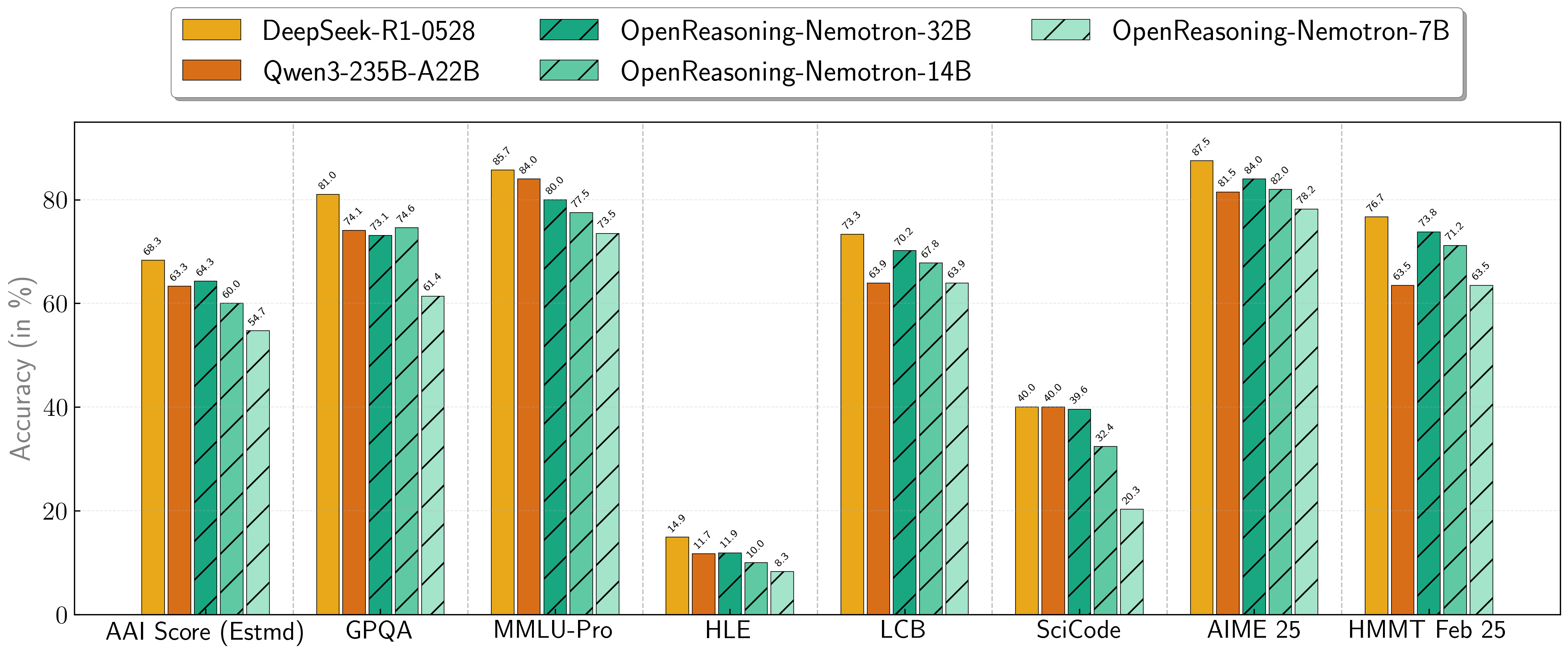

Our models demonstrate exceptional performance across a suite of challenging reasoning benchmarks. The 7B, 14B, and 32B models consistently set new state-of-the-art records for their size classes.

| **Model** | **AritificalAnalysisIndex*** | **GPQA** | **MMLU-PRO** | **HLE** | **LiveCodeBench*** | **SciCode** | **AIME24** | **AIME25** | **HMMT FEB 25** |

| :--- | :--- | :--- | :--- | :--- | :--- | :--- | :--- | :--- | :--- |

| **1.5B**| 31.0 | 31.6 | 47.5 | 5.5 | 28.6 | 2.2 | 55.5 | 45.6 | 31.5 |

| **7B** | 54.7 | 61.1 | 71.9 | 8.3 | 63.3 | 16.2 | 84.7 | 78.2 | 63.5 |

| **14B** | 60.9 | 71.6 | 77.5 | 10.1 | 67.8 | 23.5 | 87.8 | 82.0 | 71.2 |

| **32B** | 64.3 | 73.1 | 80.0 | 11.9 | 70.2 | 28.5 | 89.2 | 84.0 | 73.8 |

\* This is our estimation of the Artificial Analysis Intelligence Index, not an official score.

\* LiveCodeBench version 6, date range 2408-2505.

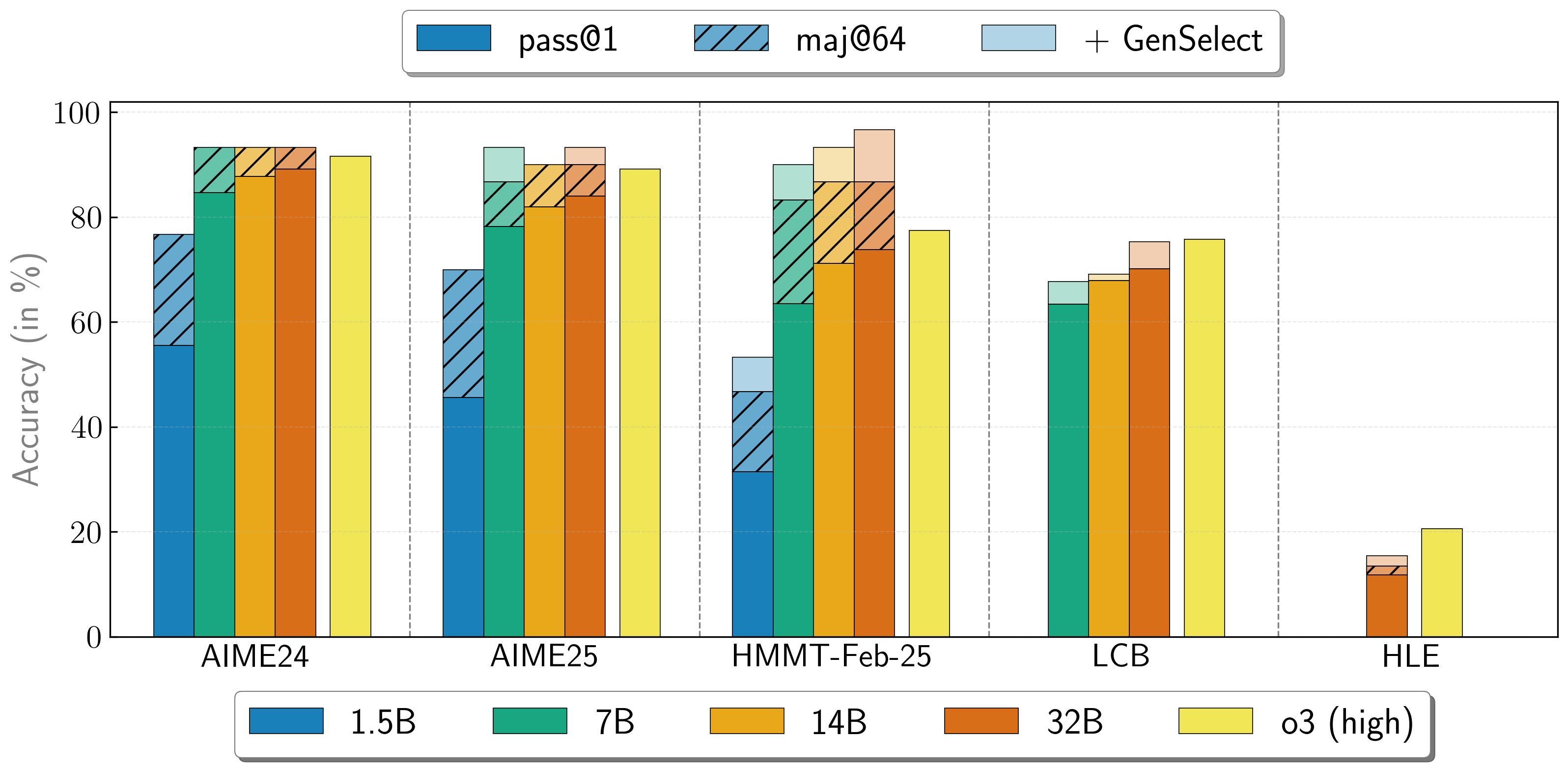

## Combining the work of multiple agents

OpenReasoning-Nemotron models can be used in a "heavy" mode by starting multiple parallel generations and combining them together via [generative solution selection (GenSelect)](https://arxiv.org/abs/2504.16891). To add this "skill" we follow the original GenSelect training pipeline except we do not train on the selection summary but use the full reasoning trace of DeepSeek R1 0528 671B instead. We only train models to select the best solution for math problems but surprisingly find that this capability directly generalizes to code and science questions! With this "heavy" GenSelect inference mode, OpenReasoning-Nemotron-32B model surpasses O3 (High) on math and coding benchmarks.

| **Model** | **Pass@1 (Avg@64)** | **Majority@64** | **GenSelect** |

| :--- | :--- | :--- | :--- |

| **1.5B** | | | |

| **AIME24** | 55.5 | 76.7 | 76.7 |

| **AIME25** | 45.6 | 70.0 | 70.0 |

| **HMMT Feb 25** | 31.5 | 46.7 | 53.3 |

| **7B** | | | |

| **AIME24** | 84.7 | 93.3 | 93.3 |

| **AIME25** | 78.2 | 86.7 | 93.3 |

| **HMMT Feb 25** | 63.5 | 83.3 | 90.0 |

| **LCB v6 2408-2505** | 63.4 | n/a | 67.7 |

| **14B** | | | |

| **AIME24** | 87.8 | 93.3 | 93.3 |

| **AIME25** | 82.0 | 90.0 | 90.0 |

| **HMMT Feb 25** | 71.2 | 86.7 | 93.3 |

| **LCB v6 2408-2505** | 67.9 | n/a | 69.1 |

| **32B** | | | |

| **AIME24** | 89.2 | 93.3 | 93.3 |

| **AIME25** | 84.0 | 90.0 | 93.3 |

| **HMMT Feb 25** | 73.8 | 86.7 | 96.7 |

| **LCB v6 2408-2505** | 70.2 | n/a | 75.3 |

| **HLE** | 11.8 | 13.4 | 15.5 |

## How to use the models?

To run inference on coding problems: