modelId | author | last_modified | downloads | likes | library_name | tags | pipeline_tag | createdAt | card |

|---|---|---|---|---|---|---|---|---|---|

peyschen/ppo-LunarLander-v2

|

peyschen

| 2023-11-23T10:11:26Z | 0 | 0 |

stable-baselines3

|

[

"stable-baselines3",

"LunarLander-v2",

"deep-reinforcement-learning",

"reinforcement-learning",

"model-index",

"region:us"

] |

reinforcement-learning

| 2023-11-23T10:11:05Z |

---

library_name: stable-baselines3

tags:

- LunarLander-v2

- deep-reinforcement-learning

- reinforcement-learning

- stable-baselines3

model-index:

- name: PPO

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: LunarLander-v2

type: LunarLander-v2

metrics:

- type: mean_reward

value: 269.13 +/- 19.11

name: mean_reward

verified: false

---

# **PPO** Agent playing **LunarLander-v2**

This is a trained model of a **PPO** agent playing **LunarLander-v2**

using the [stable-baselines3 library](https://github.com/DLR-RM/stable-baselines3).

## Usage (with Stable-baselines3)

TODO: Add your code

```python

from stable_baselines3 import ...

from huggingface_sb3 import load_from_hub

...

```
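
The placeholder above can be filled in roughly as follows. This is a minimal sketch, not the author's code: the checkpoint file name `ppo-LunarLander-v2.zip` is an assumption (check the repository's file list for the real name), and it assumes `huggingface_sb3` and `stable_baselines3` are installed.

```python
# Sketch only: FILENAME is a hypothetical file name, not confirmed by this card.
REPO_ID = "peyschen/ppo-LunarLander-v2"
FILENAME = "ppo-LunarLander-v2.zip"  # assumption; verify in the repo's file list


def load_agent(repo_id: str = REPO_ID, filename: str = FILENAME):
    """Download the checkpoint from the Hub and load it as a PPO model."""
    # Imported lazily so this module can be imported without these packages.
    from huggingface_sb3 import load_from_hub
    from stable_baselines3 import PPO

    # load_from_hub returns the local path of the downloaded checkpoint.
    checkpoint_path = load_from_hub(repo_id=repo_id, filename=filename)
    return PPO.load(checkpoint_path)
```

Calling `load_agent()` returns a model whose `predict(observation)` method can then be used in an evaluation loop.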

|

feynman-integrals-nn/topbox-3layers

|

feynman-integrals-nn

| 2023-11-23T10:11:15Z | 5 | 0 |

transformers

|

[

"transformers",

"license:cc-by-4.0",

"endpoints_compatible",

"region:us"

] | null | 2023-11-10T09:36:14Z |

---

license: cc-by-4.0

---

# topbox-3layers

* [model](https://huggingface.co/feynman-integrals-nn/topbox-3layers)

* [data](https://huggingface.co/datasets/feynman-integrals-nn/topbox/tree/8acb41221c829845af218756baa7985c97927a50)

* [source](https://gitlab.com/feynman-integrals-nn/feynman-integrals-nn/-/tree/main/topbox)

|

enaitzb/a2c-PandaReachDense-v3

|

enaitzb

| 2023-11-23T10:10:05Z | 1 | 0 |

stable-baselines3

|

[

"stable-baselines3",

"PandaReachDense-v3",

"deep-reinforcement-learning",

"reinforcement-learning",

"model-index",

"region:us"

] |

reinforcement-learning

| 2023-11-23T10:04:23Z |

---

library_name: stable-baselines3

tags:

- PandaReachDense-v3

- deep-reinforcement-learning

- reinforcement-learning

- stable-baselines3

model-index:

- name: A2C

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: PandaReachDense-v3

type: PandaReachDense-v3

metrics:

- type: mean_reward

value: -0.22 +/- 0.13

name: mean_reward

verified: false

---

# **A2C** Agent playing **PandaReachDense-v3**

This is a trained model of an **A2C** agent playing **PandaReachDense-v3**

using the [stable-baselines3 library](https://github.com/DLR-RM/stable-baselines3).

## Usage (with Stable-baselines3)

TODO: Add your code

```python

from stable_baselines3 import ...

from huggingface_sb3 import load_from_hub

...

```

|

mtc/LeoLM-leo-mistral-hessianai-7b-xnli-absinth-qlora-4bit

|

mtc

| 2023-11-23T10:09:37Z | 0 | 0 |

peft

|

[

"peft",

"safetensors",

"region:us"

] | null | 2023-11-23T10:09:09Z |

---

library_name: peft

---

## Training procedure

The following `bitsandbytes` quantization config was used during training:

- quant_method: QuantizationMethod.BITS_AND_BYTES

- load_in_8bit: False

- load_in_4bit: True

- llm_int8_threshold: 6.0

- llm_int8_skip_modules: None

- llm_int8_enable_fp32_cpu_offload: False

- llm_int8_has_fp16_weight: False

- bnb_4bit_quant_type: nf4

- bnb_4bit_use_double_quant: True

- bnb_4bit_compute_dtype: bfloat16
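
The 4-bit settings listed above could be expressed in code roughly like this. It is a sketch assuming `transformers` (with `BitsAndBytesConfig`) and `torch` are installed; the helper name `make_bnb_config` is made up for illustration, and only the 4-bit fields from the list are carried over.

```python
# Values copied from the list above; the dtype is kept as a string until needed.
BNB_KWARGS = {
    "load_in_4bit": True,
    "bnb_4bit_quant_type": "nf4",
    "bnb_4bit_use_double_quant": True,
    "bnb_4bit_compute_dtype": "bfloat16",
}


def make_bnb_config(kwargs=BNB_KWARGS):
    """Build a BitsAndBytesConfig from the settings above (hypothetical helper)."""
    # Imported lazily so the settings can be inspected without torch installed.
    import torch
    from transformers import BitsAndBytesConfig

    resolved = dict(kwargs)
    # Turn the dtype name into the actual torch dtype, e.g. torch.bfloat16.
    resolved["bnb_4bit_compute_dtype"] = getattr(torch, resolved["bnb_4bit_compute_dtype"])
    return BitsAndBytesConfig(**resolved)
```

The resulting object would typically be passed as `quantization_config=` when loading the base model before attaching the PEFT adapter.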

### Framework versions

- PEFT 0.5.0

|

owanr/SChem5Labels-google-t5-v1_1-xl-inter-frequency-model-cross-ent

|

owanr

| 2023-11-23T10:08:26Z | 0 | 0 | null |

[

"safetensors",

"generated_from_trainer",

"base_model:google/t5-v1_1-xl",

"base_model:finetune:google/t5-v1_1-xl",

"license:apache-2.0",

"region:us"

] | null | 2023-11-23T03:40:41Z |

---

license: apache-2.0

base_model: google/t5-v1_1-xl

tags:

- generated_from_trainer

model-index:

- name: SChem5Labels-google-t5-v1_1-xl-inter-frequency-model-cross-ent

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# SChem5Labels-google-t5-v1_1-xl-inter-frequency-model-cross-ent

This model is a fine-tuned version of [google/t5-v1_1-xl](https://huggingface.co/google/t5-v1_1-xl) on an unspecified dataset.

It achieves the following results on the evaluation set:

- Loss: 8.8828

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0001

- train_batch_size: 32

- eval_batch_size: 32

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 50
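
To make the `linear` scheduler setting concrete, here is a small sketch of a linearly decaying learning rate. It assumes no warmup (none is listed above) and takes the total step count as 99 steps per epoch (the figure reported in the training results table) times 50 epochs; the function name `linear_lr` is illustrative.

```python
def linear_lr(step, total_steps, base_lr=1e-4, warmup_steps=0):
    """Learning rate at `step` for a linear schedule with optional warmup."""
    if warmup_steps and step < warmup_steps:
        # Linear ramp from 0 up to base_lr during warmup.
        return base_lr * step / warmup_steps
    # Linear decay from base_lr down to 0 at total_steps.
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


TOTAL_STEPS = 99 * 50  # assumption: 99 optimizer steps per epoch, 50 epochs
print(linear_lr(0, TOTAL_STEPS), linear_lr(TOTAL_STEPS // 2, TOTAL_STEPS))
```

Under these assumptions the rate starts at the configured 0.0001 and reaches zero only at step 4950, so a run stopped after a few epochs would still be training near the initial rate.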

### Training results

| Training Loss | Epoch | Step | Validation Loss |

|:-------------:|:-----:|:----:|:---------------:|

| 11.4711 | 1.0 | 99 | 8.8828 |

| 11.0023 | 2.0 | 198 | 8.8828 |

| 10.7984 | 3.0 | 297 | 8.8828 |

| 11.1695 | 4.0 | 396 | 8.8828 |

### Framework versions

- Transformers 4.35.2

- Pytorch 2.1.1+cu121

- Datasets 2.15.0

- Tokenizers 0.15.0

|

TheBloke/Noromaid-20B-v0.1.1-GPTQ

|

TheBloke

| 2023-11-23T09:56:48Z | 27 | 8 |

transformers

|

[

"transformers",

"safetensors",

"llama",

"text-generation",

"base_model:NeverSleep/Noromaid-20b-v0.1.1",

"base_model:quantized:NeverSleep/Noromaid-20b-v0.1.1",

"license:cc-by-nc-4.0",

"autotrain_compatible",

"text-generation-inference",

"4-bit",

"gptq",

"region:us"

] |

text-generation

| 2023-11-23T08:47:36Z |

---

base_model: NeverSleep/Noromaid-20b-v0.1.1

inference: false

license: cc-by-nc-4.0

model_creator: IkariDev and Undi

model_name: Noromaid 20B v0.1.1

model_type: llama

prompt_template: 'Below is an instruction that describes a task. Write a response

that appropriately completes the request.

### Instruction:

{prompt}

### Response:

'

quantized_by: TheBloke

---

<!-- markdownlint-disable MD041 -->

<!-- header start -->

<!-- 200823 -->

<div style="width: auto; margin-left: auto; margin-right: auto">

<img src="https://i.imgur.com/EBdldam.jpg" alt="TheBlokeAI" style="width: 100%; min-width: 400px; display: block; margin: auto;">

</div>

<div style="display: flex; justify-content: space-between; width: 100%;">

<div style="display: flex; flex-direction: column; align-items: flex-start;">

<p style="margin-top: 0.5em; margin-bottom: 0em;"><a href="https://discord.gg/theblokeai">Chat & support: TheBloke's Discord server</a></p>

</div>

<div style="display: flex; flex-direction: column; align-items: flex-end;">

<p style="margin-top: 0.5em; margin-bottom: 0em;"><a href="https://www.patreon.com/TheBlokeAI">Want to contribute? TheBloke's Patreon page</a></p>

</div>

</div>

<div style="text-align:center; margin-top: 0em; margin-bottom: 0em"><p style="margin-top: 0.25em; margin-bottom: 0em;">TheBloke's LLM work is generously supported by a grant from <a href="https://a16z.com">andreessen horowitz (a16z)</a></p></div>

<hr style="margin-top: 1.0em; margin-bottom: 1.0em;">

<!-- header end -->

# Noromaid 20B v0.1.1 - GPTQ

- Model creator: [IkariDev and Undi](https://huggingface.co/NeverSleep)

- Original model: [Noromaid 20B v0.1.1](https://huggingface.co/NeverSleep/Noromaid-20b-v0.1.1)

<!-- description start -->

# Description

This repo contains GPTQ model files for [IkariDev and Undi's Noromaid 20B v0.1.1](https://huggingface.co/NeverSleep/Noromaid-20b-v0.1.1).

Multiple GPTQ parameter permutations are provided; see Provided Files below for details of the options provided, their parameters, and the software used to create them.

These files were quantised using hardware kindly provided by [Massed Compute](https://massedcompute.com/).

<!-- description end -->

<!-- repositories-available start -->

## Repositories available

* [AWQ model(s) for GPU inference.](https://huggingface.co/TheBloke/Noromaid-20B-v0.1.1-AWQ)

* [GPTQ models for GPU inference, with multiple quantisation parameter options.](https://huggingface.co/TheBloke/Noromaid-20B-v0.1.1-GPTQ)

* [2, 3, 4, 5, 6 and 8-bit GGUF models for CPU+GPU inference](https://huggingface.co/TheBloke/Noromaid-20B-v0.1.1-GGUF)

* [IkariDev and Undi's original unquantised fp16 model in pytorch format, for GPU inference and for further conversions](https://huggingface.co/NeverSleep/Noromaid-20b-v0.1.1)

<!-- repositories-available end -->

<!-- prompt-template start -->

## Prompt template: Alpaca

```

Below is an instruction that describes a task. Write a response that appropriately completes the request.

### Instruction:

{prompt}

### Response:

```

<!-- prompt-template end -->
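
As a small illustration of the template above, the prompt can be assembled with a helper like the one below. The exact blank-line layout between the sections is an assumption based on the usual Alpaca format, and `build_alpaca_prompt` is a made-up name.

```python
# Alpaca-style template; the newline layout is an assumption (standard Alpaca).
ALPACA_TEMPLATE = (
    "Below is an instruction that describes a task. "
    "Write a response that appropriately completes the request.\n\n"
    "### Instruction:\n{prompt}\n\n"
    "### Response:\n"
)


def build_alpaca_prompt(instruction: str) -> str:
    """Insert the user's instruction into the Alpaca template."""
    return ALPACA_TEMPLATE.format(prompt=instruction.strip())


print(build_alpaca_prompt("Tell me about AI"))
```

The string returned by the helper is what should be sent to the model, not the bare instruction.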

<!-- licensing start -->

## Licensing

The creator of the source model has listed its license as `cc-by-nc-4.0`, and this quantization has therefore used that same license.

As this model is based on Llama 2, it is also subject to the Meta Llama 2 license terms, and the license files for that are additionally included. It should therefore be considered as being claimed to be licensed under both licenses. I contacted Hugging Face for clarification on dual licensing but they do not yet have an official position. Should this change, or should Meta provide any feedback on this situation, I will update this section accordingly.

In the meantime, any questions regarding licensing, and in particular how these two licenses might interact, should be directed to the original model repository: [IkariDev and Undi's Noromaid 20B v0.1.1](https://huggingface.co/NeverSleep/Noromaid-20b-v0.1.1).

<!-- licensing end -->

<!-- README_GPTQ.md-compatible clients start -->

## Known compatible clients / servers

These GPTQ models are known to work in the following inference servers/webuis.

- [text-generation-webui](https://github.com/oobabooga/text-generation-webui)

- [KoboldAI United](https://github.com/henk717/koboldai)

- [LoLLMS Web UI](https://github.com/ParisNeo/lollms-webui)

- [Hugging Face Text Generation Inference (TGI)](https://github.com/huggingface/text-generation-inference)

This may not be a complete list; if you know of others, please let me know!

<!-- README_GPTQ.md-compatible clients end -->

<!-- README_GPTQ.md-provided-files start -->

## Provided files, and GPTQ parameters

Multiple quantisation parameters are provided, to allow you to choose the best one for your hardware and requirements.

Each separate quant is in a different branch. See below for instructions on fetching from different branches.

Most GPTQ files are made with AutoGPTQ. Mistral models are currently made with Transformers.

<details>

<summary>Explanation of GPTQ parameters</summary>

- Bits: The bit size of the quantised model.

- GS: GPTQ group size. Higher numbers use less VRAM, but have lower quantisation accuracy. "None" means no grouping, which uses the least VRAM.

- Act Order: True or False. Also known as `desc_act`. True results in better quantisation accuracy. Some GPTQ clients have had issues with models that use Act Order plus Group Size, but this is generally resolved now.

- Damp %: A GPTQ parameter that affects how samples are processed for quantisation. 0.01 is default, but 0.1 results in slightly better accuracy.

- GPTQ dataset: The calibration dataset used during quantisation. Using a dataset more appropriate to the model's training can improve quantisation accuracy. Note that the GPTQ calibration dataset is not the same as the dataset used to train the model - please refer to the original model repo for details of the training dataset(s).

- Sequence Length: The length of the dataset sequences used for quantisation. Ideally this is the same as the model sequence length. For some very long sequence models (16+K), a lower sequence length may have to be used. Note that a lower sequence length does not limit the sequence length of the quantised model. It only impacts the quantisation accuracy on longer inference sequences.

- ExLlama Compatibility: Whether this file can be loaded with ExLlama, which currently only supports Llama and Mistral models in 4-bit.

</details>

| Branch | Bits | GS | Act Order | Damp % | GPTQ Dataset | Seq Len | Size | ExLlama | Desc |

| ------ | ---- | -- | --------- | ------ | ------------ | ------- | ---- | ------- | ---- |

| [main](https://huggingface.co/TheBloke/Noromaid-20B-v0.1.1-GPTQ/tree/main) | 4 | None | Yes | 0.1 | [open-instruct](https://huggingface.co/datasets/VMware/open-instruct/viewer/) | 4096 | 10.52 GB | Yes | 4-bit, with Act Order. No group size, to lower VRAM requirements. |

| [gptq-4bit-128g-actorder_True](https://huggingface.co/TheBloke/Noromaid-20B-v0.1.1-GPTQ/tree/gptq-4bit-128g-actorder_True) | 4 | 128 | Yes | 0.1 | [open-instruct](https://huggingface.co/datasets/VMware/open-instruct/viewer/) | 4096 | 10.89 GB | Yes | 4-bit, with Act Order and group size 128g. Uses even less VRAM than 64g, but with slightly lower accuracy. |

| [gptq-4bit-32g-actorder_True](https://huggingface.co/TheBloke/Noromaid-20B-v0.1.1-GPTQ/tree/gptq-4bit-32g-actorder_True) | 4 | 32 | Yes | 0.1 | [open-instruct](https://huggingface.co/datasets/VMware/open-instruct/viewer/) | 4096 | 12.04 GB | Yes | 4-bit, with Act Order and group size 32g. Gives highest possible inference quality, with maximum VRAM usage. |

| [gptq-3bit-128g-actorder_True](https://huggingface.co/TheBloke/Noromaid-20B-v0.1.1-GPTQ/tree/gptq-3bit-128g-actorder_True) | 3 | 128 | Yes | 0.1 | [open-instruct](https://huggingface.co/datasets/VMware/open-instruct/viewer/) | 4096 | 8.41 GB | No | 3-bit, with group size 128g and act-order. Higher quality than 128g-False. |

| [gptq-8bit--1g-actorder_True](https://huggingface.co/TheBloke/Noromaid-20B-v0.1.1-GPTQ/tree/gptq-8bit--1g-actorder_True) | 8 | None | Yes | 0.1 | [open-instruct](https://huggingface.co/datasets/VMware/open-instruct/viewer/) | 4096 | 20.35 GB | No | 8-bit, with Act Order. No group size, to lower VRAM requirements. |

| [gptq-3bit-32g-actorder_True](https://huggingface.co/TheBloke/Noromaid-20B-v0.1.1-GPTQ/tree/gptq-3bit-32g-actorder_True) | 3 | 32 | Yes | 0.1 | [open-instruct](https://huggingface.co/datasets/VMware/open-instruct/viewer/) | 4096 | 9.51 GB | No | 3-bit, with group size 32g and act-order. Highest quality 3-bit option. |

| [gptq-8bit-128g-actorder_True](https://huggingface.co/TheBloke/Noromaid-20B-v0.1.1-GPTQ/tree/gptq-8bit-128g-actorder_True) | 8 | 128 | Yes | 0.1 | [open-instruct](https://huggingface.co/datasets/VMware/open-instruct/viewer/) | 4096 | 20.80 GB | No | 8-bit, with group size 128g for higher inference quality and with Act Order for even higher accuracy. |

<!-- README_GPTQ.md-provided-files end -->
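
The branch names in the table encode the quantisation parameters. The sketch below parses them back out; the naming pattern is inferred from the branch names listed above (including `-1g` standing for a group size of "None"), not from any official specification, and `parse_gptq_branch` is a hypothetical helper.

```python
import re
from typing import NamedTuple, Optional


class GPTQBranch(NamedTuple):
    bits: int
    group_size: Optional[int]  # None means no grouping
    act_order: bool


# Pattern inferred from names such as "gptq-4bit-128g-actorder_True".
_BRANCH_RE = re.compile(r"^gptq-(\d+)bit-(-?\d+)g-actorder_(True|False)$")


def parse_gptq_branch(name: str) -> GPTQBranch:
    """Extract bits, group size and act-order from a branch name."""
    match = _BRANCH_RE.match(name)
    if match is None:
        raise ValueError(f"not a parameterised GPTQ branch name: {name!r}")
    bits, group, act = match.groups()
    group_size = int(group)
    # A group size of -1 corresponds to "None" in the table.
    return GPTQBranch(int(bits), None if group_size < 0 else group_size, act == "True")


print(parse_gptq_branch("gptq-4bit-128g-actorder_True"))
```

The `main` branch does not follow this pattern, so its parameters have to be read from the table directly.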

<!-- README_GPTQ.md-download-from-branches start -->

## How to download, including from branches

### In text-generation-webui

To download from the `main` branch, enter `TheBloke/Noromaid-20B-v0.1.1-GPTQ` in the "Download model" box.

To download from another branch, add `:branchname` to the end of the download name, eg `TheBloke/Noromaid-20B-v0.1.1-GPTQ:gptq-4bit-128g-actorder_True`
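
For scripting around this `repo:branch` convention, a download name can be split as sketched below. The helper name is made up, and defaulting to `main` when no branch is given simply mirrors the behaviour described above.

```python
def split_download_name(name: str):
    """Split 'owner/repo:branch' into (repo_id, branch), defaulting to 'main'."""
    repo_id, sep, branch = name.partition(":")
    return repo_id, (branch if sep and branch else "main")


print(split_download_name("TheBloke/Noromaid-20B-v0.1.1-GPTQ:gptq-4bit-128g-actorder_True"))
```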

### From the command line

I recommend using the `huggingface-hub` Python library:

```shell

pip3 install huggingface-hub

```

To download the `main` branch to a folder called `Noromaid-20B-v0.1.1-GPTQ`:

```shell

mkdir Noromaid-20B-v0.1.1-GPTQ

huggingface-cli download TheBloke/Noromaid-20B-v0.1.1-GPTQ --local-dir Noromaid-20B-v0.1.1-GPTQ --local-dir-use-symlinks False

```

To download from a different branch, add the `--revision` parameter:

```shell

mkdir Noromaid-20B-v0.1.1-GPTQ

huggingface-cli download TheBloke/Noromaid-20B-v0.1.1-GPTQ --revision gptq-4bit-128g-actorder_True --local-dir Noromaid-20B-v0.1.1-GPTQ --local-dir-use-symlinks False

```
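
The same downloads can also be done from Python. This is a minimal sketch using `snapshot_download` from the `huggingface-hub` library installed above; the wrapper name `download_gptq` and its defaults are illustrative only.

```python
from typing import Optional

REPO_ID = "TheBloke/Noromaid-20B-v0.1.1-GPTQ"
LOCAL_DIR = "Noromaid-20B-v0.1.1-GPTQ"


def download_gptq(revision: Optional[str] = None,
                  repo_id: str = REPO_ID,
                  local_dir: str = LOCAL_DIR) -> str:
    """Download one branch of the repo into local_dir and return the folder path."""
    # Imported lazily so this snippet can be loaded without huggingface-hub.
    from huggingface_hub import snapshot_download

    # revision=None fetches the default branch (main).
    return snapshot_download(repo_id=repo_id, revision=revision, local_dir=local_dir)
```

For example, `download_gptq("gptq-4bit-128g-actorder_True")` would fetch the same branch as the `--revision` command above.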

<details>

<summary>More advanced huggingface-cli download usage</summary>

If you remove the `--local-dir-use-symlinks False` parameter, the files will instead be stored in the central Hugging Face cache directory (default location on Linux is: `~/.cache/huggingface`), and symlinks will be added to the specified `--local-dir`, pointing to their real location in the cache. This allows for interrupted downloads to be resumed, and allows you to quickly clone the repo to multiple places on disk without triggering a download again. The downside, and the reason why I don't list that as the default option, is that the files are then hidden away in a cache folder and it's harder to know where your disk space is being used, and to clear it up if/when you want to remove a downloaded model.

The cache location can be changed with the `HF_HOME` environment variable, and/or the `--cache-dir` parameter to `huggingface-cli`.

For more documentation on downloading with `huggingface-cli`, please see: [HF -> Hub Python Library -> Download files -> Download from the CLI](https://huggingface.co/docs/huggingface_hub/guides/download#download-from-the-cli).

To accelerate downloads on fast connections (1Gbit/s or higher), install `hf_transfer`:

```shell

pip3 install hf_transfer

```

And set environment variable `HF_HUB_ENABLE_HF_TRANSFER` to `1`:

```shell

mkdir Noromaid-20B-v0.1.1-GPTQ

HF_HUB_ENABLE_HF_TRANSFER=1 huggingface-cli download TheBloke/Noromaid-20B-v0.1.1-GPTQ --local-dir Noromaid-20B-v0.1.1-GPTQ --local-dir-use-symlinks False

```

Windows Command Line users: You can set the environment variable by running `set HF_HUB_ENABLE_HF_TRANSFER=1` before the download command.

</details>

### With `git` (**not** recommended)

To clone a specific branch with `git`, use a command like this:

```shell

git clone --single-branch --branch gptq-4bit-128g-actorder_True https://huggingface.co/TheBloke/Noromaid-20B-v0.1.1-GPTQ

```

Note that using Git with HF repos is strongly discouraged. It will be much slower than using `huggingface-hub`, and will use twice as much disk space as it has to store the model files twice (it stores every byte both in the intended target folder, and again in the `.git` folder as a blob.)

<!-- README_GPTQ.md-download-from-branches end -->

<!-- README_GPTQ.md-text-generation-webui start -->

## How to easily download and use this model in [text-generation-webui](https://github.com/oobabooga/text-generation-webui)

Please make sure you're using the latest version of [text-generation-webui](https://github.com/oobabooga/text-generation-webui).

It is strongly recommended to use the text-generation-webui one-click-installers unless you're sure you know how to make a manual install.

1. Click the **Model tab**.

2. Under **Download custom model or LoRA**, enter `TheBloke/Noromaid-20B-v0.1.1-GPTQ`.

- To download from a specific branch, enter for example `TheBloke/Noromaid-20B-v0.1.1-GPTQ:gptq-4bit-128g-actorder_True`

- see Provided Files above for the list of branches for each option.

3. Click **Download**.

4. The model will start downloading. Once it's finished it will say "Done".

5. In the top left, click the refresh icon next to **Model**.

6. In the **Model** dropdown, choose the model you just downloaded: `Noromaid-20B-v0.1.1-GPTQ`

7. The model will automatically load, and is now ready for use!

8. If you want any custom settings, set them and then click **Save settings for this model** followed by **Reload the Model** in the top right.

- Note that you do not need to and should not set manual GPTQ parameters any more. These are set automatically from the file `quantize_config.json`.

9. Once you're ready, click the **Text Generation** tab and enter a prompt to get started!

<!-- README_GPTQ.md-text-generation-webui end -->

<!-- README_GPTQ.md-use-from-tgi start -->

## Serving this model from Text Generation Inference (TGI)

It's recommended to use TGI version 1.1.0 or later. The official Docker container is: `ghcr.io/huggingface/text-generation-inference:1.1.0`

Example Docker parameters:

```shell

--model-id TheBloke/Noromaid-20B-v0.1.1-GPTQ --port 3000 --quantize gptq --max-input-length 3696 --max-total-tokens 4096 --max-batch-prefill-tokens 4096

```
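
With the parameters above, the prompt and the generated tokens share a 4096-token budget, and the prompt alone may use at most 3696 of them. The sketch below works out how many new tokens can be requested for a given prompt length; the helper name is made up, and it assumes TGI enforces prompt tokens plus new tokens not exceeding `--max-total-tokens`.

```python
def max_new_tokens_budget(prompt_tokens: int,
                          max_input_length: int = 3696,
                          max_total_tokens: int = 4096) -> int:
    """Largest max_new_tokens value that fits the limits for this prompt length."""
    if prompt_tokens > max_input_length:
        raise ValueError(
            f"prompt has {prompt_tokens} tokens, limit is {max_input_length}"
        )
    # Prompt tokens and generated tokens together must fit in max_total_tokens.
    return max_total_tokens - prompt_tokens


print(max_new_tokens_budget(3696))
```

A prompt that fills the whole input limit therefore leaves room for 400 generated tokens.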

Example Python code for interfacing with TGI (requires huggingface-hub 0.17.0 or later):

```shell

pip3 install huggingface-hub

```

```python

from huggingface_hub import InferenceClient

endpoint_url = "https://your-endpoint-url-here"

prompt = "Tell me about AI"

prompt_template=f'''Below is an instruction that describes a task. Write a response that appropriately completes the request.

### Instruction:

{prompt}

### Response:

'''

client = InferenceClient(endpoint_url)

response = client.text_generation(prompt_template,

max_new_tokens=128,

do_sample=True,

temperature=0.7,

top_p=0.95,

top_k=40,

repetition_penalty=1.1)

print(f"Model output: {response}")

```

<!-- README_GPTQ.md-use-from-tgi end -->

<!-- README_GPTQ.md-use-from-python start -->

## Python code example: inference from this GPTQ model

### Install the necessary packages

Requires: Transformers 4.33.0 or later, Optimum 1.12.0 or later, and AutoGPTQ 0.4.2 or later.

```shell

pip3 install --upgrade transformers optimum

# If using PyTorch 2.1 + CUDA 12.x:

pip3 install --upgrade auto-gptq

# or, if using PyTorch 2.1 + CUDA 11.x:

pip3 install --upgrade auto-gptq --extra-index-url https://huggingface.github.io/autogptq-index/whl/cu118/

```

If you are using PyTorch 2.0, you will need to install AutoGPTQ from source. Likewise if you have problems with the pre-built wheels, you should try building from source:

```shell

pip3 uninstall -y auto-gptq

git clone https://github.com/PanQiWei/AutoGPTQ

cd AutoGPTQ

git checkout v0.5.1

pip3 install .

```

### Example Python code

```python

from transformers import AutoModelForCausalLM, AutoTokenizer, pipeline

model_name_or_path = "TheBloke/Noromaid-20B-v0.1.1-GPTQ"

# To use a different branch, change revision

# For example: revision="gptq-4bit-128g-actorder_True"

model = AutoModelForCausalLM.from_pretrained(model_name_or_path,

device_map="auto",

trust_remote_code=False,

revision="main")

tokenizer = AutoTokenizer.from_pretrained(model_name_or_path, use_fast=True)

prompt = "Tell me about AI"

prompt_template=f'''Below is an instruction that describes a task. Write a response that appropriately completes the request.

### Instruction:

{prompt}

### Response:

'''

print("\n\n*** Generate:")

input_ids = tokenizer(prompt_template, return_tensors='pt').input_ids.cuda()

output = model.generate(inputs=input_ids, temperature=0.7, do_sample=True, top_p=0.95, top_k=40, max_new_tokens=512)

print(tokenizer.decode(output[0]))

# Inference can also be done using transformers' pipeline

print("*** Pipeline:")

pipe = pipeline(

"text-generation",

model=model,

tokenizer=tokenizer,

max_new_tokens=512,

do_sample=True,

temperature=0.7,

top_p=0.95,

top_k=40,

repetition_penalty=1.1

)

print(pipe(prompt_template)[0]['generated_text'])

```

<!-- README_GPTQ.md-use-from-python end -->

<!-- README_GPTQ.md-compatibility start -->

## Compatibility

The files provided are tested to work with Transformers. For non-Mistral models, AutoGPTQ can also be used directly.

[ExLlama](https://github.com/turboderp/exllama) is compatible with Llama and Mistral models in 4-bit. Please see the Provided Files table above for per-file compatibility.

For a list of clients/servers, please see "Known compatible clients / servers", above.

<!-- README_GPTQ.md-compatibility end -->

<!-- footer start -->

<!-- 200823 -->

## Discord

For further support, and discussions on these models and AI in general, join us at:

[TheBloke AI's Discord server](https://discord.gg/theblokeai)

## Thanks, and how to contribute

Thanks to the [chirper.ai](https://chirper.ai) team!

Thanks to Clay from [gpus.llm-utils.org](https://gpus.llm-utils.org)!

I've had a lot of people ask if they can contribute. I enjoy providing models and helping people, and would love to be able to spend even more time doing it, as well as expanding into new projects like fine tuning/training.

If you're able and willing to contribute it will be most gratefully received and will help me to keep providing more models, and to start work on new AI projects.

Donors will get priority support on any and all AI/LLM/model questions and requests, access to a private Discord room, plus other benefits.

* Patreon: https://patreon.com/TheBlokeAI

* Ko-Fi: https://ko-fi.com/TheBlokeAI

**Special thanks to**: Aemon Algiz.

**Patreon special mentions**: Brandon Frisco, LangChain4j, Spiking Neurons AB, transmissions 11, Joseph William Delisle, Nitin Borwankar, Willem Michiel, Michael Dempsey, vamX, Jeffrey Morgan, zynix, jjj, Omer Bin Jawed, Sean Connelly, jinyuan sun, Jeromy Smith, Shadi, Pawan Osman, Chadd, Elijah Stavena, Illia Dulskyi, Sebastain Graf, Stephen Murray, terasurfer, Edmond Seymore, Celu Ramasamy, Mandus, Alex, biorpg, Ajan Kanaga, Clay Pascal, Raven Klaugh, 阿明, K, ya boyyy, usrbinkat, Alicia Loh, John Villwock, ReadyPlayerEmma, Chris Smitley, Cap'n Zoog, fincy, GodLy, S_X, sidney chen, Cory Kujawski, OG, Mano Prime, AzureBlack, Pieter, Kalila, Spencer Kim, Tom X Nguyen, Stanislav Ovsiannikov, Michael Levine, Andrey, Trailburnt, Vadim, Enrico Ros, Talal Aujan, Brandon Phillips, Jack West, Eugene Pentland, Michael Davis, Will Dee, webtim, Jonathan Leane, Alps Aficionado, Rooh Singh, Tiffany J. Kim, theTransient, Luke @flexchar, Elle, Caitlyn Gatomon, Ari Malik, subjectnull, Johann-Peter Hartmann, Trenton Dambrowitz, Imad Khwaja, Asp the Wyvern, Emad Mostaque, Rainer Wilmers, Alexandros Triantafyllidis, Nicholas, Pedro Madruga, SuperWojo, Harry Royden McLaughlin, James Bentley, Olakabola, David Ziegler, Ai Maven, Jeff Scroggin, Nikolai Manek, Deo Leter, Matthew Berman, Fen Risland, Ken Nordquist, Manuel Alberto Morcote, Luke Pendergrass, TL, Fred von Graf, Randy H, Dan Guido, NimbleBox.ai, Vitor Caleffi, Gabriel Tamborski, knownsqashed, Lone Striker, Erik Bjäreholt, John Detwiler, Leonard Tan, Iucharbius

Thank you to all my generous patrons and donors!

And thank you again to a16z for their generous grant.

<!-- footer end -->

# Original model card: IkariDev and Undi's Noromaid 20B v0.1.1

---

# Disclaimer:

## This is a ***TEST*** version, don't expect everything to work!!!

You may use our custom **prompting format** (scroll down to download the files!), or simple Alpaca. **(Choose whichever fits best for you!)**

---

# This model is a collab between [IkariDev](https://huggingface.co/IkariDev) and [Undi](https://huggingface.co/Undi95)!

Tired of the same merges every time? Here it is, the Noromaid-20b-v0.1.1 model. Suitable for RP, ERP and general stuff.

[Recommended settings - No settings yet(Please suggest some over in the Community tab!)]

<!-- description start -->

## Description

<!-- [Recommended settings - contributed by localfultonextractor](https://files.catbox.moe/ue0tja.json) -->

This repo contains fp16 files of Noromaid-20b-v0.1.1.

[FP16 - by IkariDev and Undi](https://huggingface.co/NeverSleep/Noromaid-20b-v0.1.1)

<!-- [GGUF - By TheBloke](https://huggingface.co/TheBloke/Athena-v4-GGUF)-->

<!-- [GPTQ - By TheBloke](https://huggingface.co/TheBloke/Athena-v4-GPTQ)-->

<!-- [exl2[8bpw-8h] - by AzureBlack](https://huggingface.co/AzureBlack/Echidna-13b-v0.3-8bpw-8h-exl2)-->

<!-- [AWQ - By TheBloke](https://huggingface.co/TheBloke/Athena-v4-AWQ)-->

<!-- [fp16 - by IkariDev+Undi95](https://huggingface.co/IkariDev/Athena-v4)-->

[GGUF - by IkariDev and Undi](https://huggingface.co/NeverSleep/Noromaid-20b-v0.1.1-GGUF)

<!-- [OLD(GGUF - by IkariDev+Undi95)](https://huggingface.co/IkariDev/Athena-v4-GGUF)-->

## Ratings:

Note: We have permission from all users to upload their ratings; we DON'T screenshot random reviews without asking if we can put them here!

No ratings yet!

If you want your rating to be here, send us a message over on DC and we'll put up a screenshot of it here. DC name is "ikaridev" and "undi".

<!-- description end -->

<!-- prompt-template start -->

## Prompt template: Custom format, or Alpaca

### Custom format:

UPDATED!! SillyTavern config files: [Context](https://files.catbox.moe/ifmhai.json), [Instruct](https://files.catbox.moe/ttw1l9.json).

OLD SillyTavern config files: [Context](https://files.catbox.moe/x85uy1.json), [Instruct](https://files.catbox.moe/ttw1l9.json).

### Alpaca:

```

Below is an instruction that describes a task. Write a response that appropriately completes the request.

### Instruction:

{prompt}

### Response:

```

## Training data used:

- [no_robots dataset](https://huggingface.co/Undi95/Llama2-13B-no_robots-alpaca-lora) lets the model behave in a more human-like way and enhances the output.

- [Aesir Private RP dataset] New data from a never-before-used dataset: fresh data, no LimaRP spam, 100% new. Thanks to the [MinervaAI Team](https://huggingface.co/MinervaAI) and, in particular, [Gryphe](https://huggingface.co/Gryphe) for letting us use it!

## Others

Undi: If you want to support me, you can [here](https://ko-fi.com/undiai).

IkariDev: Visit my [retro/neocities style website](https://ikaridevgit.github.io/) please kek

|

baskotayunisha/my_model

|

baskotayunisha

| 2023-11-23T09:56:17Z | 5 | 0 |

transformers

|

[

"transformers",

"tensorboard",

"safetensors",

"mt5",

"text2text-generation",

"generated_from_trainer",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text2text-generation

| 2023-11-23T09:45:53Z |

---

tags:

- generated_from_trainer

model-index:

- name: my_model

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# my_model

This model was trained from scratch on an unspecified dataset.

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.005

- train_batch_size: 1

- eval_batch_size: 8

- seed: 42

- gradient_accumulation_steps: 4

- total_train_batch_size: 4

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 1

- mixed_precision_training: Native AMP
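
The `total_train_batch_size` above follows from the per-device batch size and gradient accumulation. A short sketch of that arithmetic, assuming a single training device (the card does not state the device count):

```python
def total_train_batch_size(per_device_batch_size: int,
                           gradient_accumulation_steps: int,
                           num_devices: int = 1) -> int:
    """Number of examples contributing to each optimizer step."""
    return per_device_batch_size * gradient_accumulation_steps * num_devices


# train_batch_size=1 and gradient_accumulation_steps=4, as listed above.
print(total_train_batch_size(1, 4))
```

With one device this gives 4, matching the listed value.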

### Training results

### Framework versions

- Transformers 4.35.2

- Pytorch 2.1.0+cu118

- Datasets 2.15.0

- Tokenizers 0.15.0

|

y1m1ng/test_dome

|

y1m1ng

| 2023-11-23T09:54:32Z | 15 | 0 |

diffusers

|

[

"diffusers",

"safetensors",

"stable-diffusion",

"stable-diffusion-diffusers",

"text-to-image",

"arxiv:2207.12598",

"arxiv:2112.10752",

"arxiv:2103.00020",

"arxiv:2205.11487",

"arxiv:1910.09700",

"license:creativeml-openrail-m",

"autotrain_compatible",

"endpoints_compatible",

"diffusers:StableDiffusionPipeline",

"region:us"

] |

text-to-image

| 2023-09-16T18:31:05Z |

---

license: creativeml-openrail-m

tags:

- stable-diffusion

- stable-diffusion-diffusers

- text-to-image

inference: true

extra_gated_prompt: |-

This model is open access and available to all, with a CreativeML OpenRAIL-M license further specifying rights and usage.

The CreativeML OpenRAIL License specifies:

1. You can't use the model to deliberately produce nor share illegal or harmful outputs or content

2. CompVis claims no rights on the outputs you generate, you are free to use them and are accountable for their use which must not go against the provisions set in the license

3. You may re-distribute the weights and use the model commercially and/or as a service. If you do, please be aware you have to include the same use restrictions as the ones in the license and share a copy of the CreativeML OpenRAIL-M to all your users (please read the license entirely and carefully)

Please read the full license carefully here: https://huggingface.co/spaces/CompVis/stable-diffusion-license

extra_gated_heading: Please read the LICENSE to access this model

---

# Stable Diffusion v1-5 Model Card

Stable Diffusion is a latent text-to-image diffusion model capable of generating photo-realistic images given any text input.

For more information about how Stable Diffusion functions, please have a look at [🤗's Stable Diffusion blog](https://huggingface.co/blog/stable_diffusion).

The **Stable-Diffusion-v1-5** checkpoint was initialized with the weights of the [Stable-Diffusion-v1-2](https://huggingface.co/CompVis/stable-diffusion-v1-2) checkpoint and subsequently fine-tuned for 595k steps at resolution 512x512 on "laion-aesthetics v2 5+", with 10% dropping of the text-conditioning to improve [classifier-free guidance sampling](https://arxiv.org/abs/2207.12598).

You can use this both with the [🧨Diffusers library](https://github.com/huggingface/diffusers) and the [RunwayML GitHub repository](https://github.com/runwayml/stable-diffusion).

### Diffusers

```py

from diffusers import StableDiffusionPipeline

import torch

model_id = "runwayml/stable-diffusion-v1-5"

pipe = StableDiffusionPipeline.from_pretrained(model_id, torch_dtype=torch.float16)

pipe = pipe.to("cuda")

prompt = "a photo of an astronaut riding a horse on mars"

image = pipe(prompt).images[0]

image.save("astronaut_rides_horse.png")

```

For more detailed instructions, use cases, and examples in JAX, follow the instructions [here](https://github.com/huggingface/diffusers#text-to-image-generation-with-stable-diffusion).

### Original GitHub Repository

1. Download the weights

- [v1-5-pruned-emaonly.ckpt](https://huggingface.co/runwayml/stable-diffusion-v1-5/resolve/main/v1-5-pruned-emaonly.ckpt) - 4.27GB, EMA-only weights. Uses less VRAM; suitable for inference.

- [v1-5-pruned.ckpt](https://huggingface.co/runwayml/stable-diffusion-v1-5/resolve/main/v1-5-pruned.ckpt) - 7.7GB, EMA and non-EMA weights. Uses more VRAM; suitable for fine-tuning.

2. Follow instructions [here](https://github.com/runwayml/stable-diffusion).

## Model Details

- **Developed by:** Robin Rombach, Patrick Esser

- **Model type:** Diffusion-based text-to-image generation model

- **Language(s):** English

- **License:** [The CreativeML OpenRAIL M license](https://huggingface.co/spaces/CompVis/stable-diffusion-license) is an [Open RAIL M license](https://www.licenses.ai/blog/2022/8/18/naming-convention-of-responsible-ai-licenses), adapted from the work that [BigScience](https://bigscience.huggingface.co/) and [the RAIL Initiative](https://www.licenses.ai/) are jointly carrying in the area of responsible AI licensing. See also [the article about the BLOOM Open RAIL license](https://bigscience.huggingface.co/blog/the-bigscience-rail-license) on which our license is based.

- **Model Description:** This is a model that can be used to generate and modify images based on text prompts. It is a [Latent Diffusion Model](https://arxiv.org/abs/2112.10752) that uses a fixed, pretrained text encoder ([CLIP ViT-L/14](https://arxiv.org/abs/2103.00020)) as suggested in the [Imagen paper](https://arxiv.org/abs/2205.11487).

- **Resources for more information:** [GitHub Repository](https://github.com/CompVis/stable-diffusion), [Paper](https://arxiv.org/abs/2112.10752).

- **Cite as:**

@InProceedings{Rombach_2022_CVPR,

author = {Rombach, Robin and Blattmann, Andreas and Lorenz, Dominik and Esser, Patrick and Ommer, Bj\"orn},

title = {High-Resolution Image Synthesis With Latent Diffusion Models},

booktitle = {Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)},

month = {June},

year = {2022},

pages = {10684-10695}

}

# Uses

## Direct Use

The model is intended for research purposes only. Possible research areas and tasks include:

- Safe deployment of models which have the potential to generate harmful content.

- Probing and understanding the limitations and biases of generative models.

- Generation of artworks and use in design and other artistic processes.

- Applications in educational or creative tools.

- Research on generative models.

Excluded uses are described below.

### Misuse, Malicious Use, and Out-of-Scope Use

_Note: This section is taken from the [DALL-E Mini model card](https://huggingface.co/dalle-mini/dalle-mini), but applies in the same way to Stable Diffusion v1_.

The model should not be used to intentionally create or disseminate images that create hostile or alienating environments for people. This includes generating images that people would foreseeably find disturbing, distressing, or offensive; or content that propagates historical or current stereotypes.

#### Out-of-Scope Use

The model was not trained to be factual or true representations of people or events, and therefore using the model to generate such content is out-of-scope for the abilities of this model.

#### Misuse and Malicious Use

Using the model to generate content that is cruel to individuals is a misuse of this model. This includes, but is not limited to:

- Generating demeaning, dehumanizing, or otherwise harmful representations of people or their environments, cultures, religions, etc.

- Intentionally promoting or propagating discriminatory content or harmful stereotypes.

- Impersonating individuals without their consent.

- Sexual content without consent of the people who might see it.

- Mis- and disinformation

- Representations of egregious violence and gore

- Sharing of copyrighted or licensed material in violation of its terms of use.

- Sharing content that is an alteration of copyrighted or licensed material in violation of its terms of use.

## Limitations and Bias

### Limitations

- The model does not achieve perfect photorealism

- The model cannot render legible text

- The model does not perform well on more difficult tasks which involve compositionality, such as rendering an image corresponding to “A red cube on top of a blue sphere”

- Faces and people in general may not be generated properly.

- The model was trained mainly with English captions and will not work as well in other languages.

- The autoencoding part of the model is lossy

- The model was trained on a large-scale dataset [LAION-5B](https://laion.ai/blog/laion-5b/) which contains adult material and is not fit for product use without additional safety mechanisms and considerations.

- No additional measures were used to deduplicate the dataset. As a result, we observe some degree of memorization for images that are duplicated in the training data.

The training data can be searched at [https://rom1504.github.io/clip-retrieval/](https://rom1504.github.io/clip-retrieval/) to possibly assist in the detection of memorized images.

### Bias

While the capabilities of image generation models are impressive, they can also reinforce or exacerbate social biases.

Stable Diffusion v1 was trained on subsets of [LAION-2B(en)](https://laion.ai/blog/laion-5b/), which consists of images that are primarily limited to English descriptions.

Texts and images from communities and cultures that use other languages are likely to be insufficiently accounted for.

This affects the overall output of the model, as white and western cultures are often set as the default. Further, the ability of the model to generate content with non-English prompts is significantly worse than with English-language prompts.

### Safety Module

The intended use of this model is with the [Safety Checker](https://github.com/huggingface/diffusers/blob/main/src/diffusers/pipelines/stable_diffusion/safety_checker.py) in Diffusers.

This checker works by checking model outputs against known hard-coded NSFW concepts.

The concepts are intentionally hidden to reduce the likelihood of reverse-engineering this filter.

Specifically, the checker compares the class probability of harmful concepts in the embedding space of the `CLIPTextModel` *after generation* of the images.

The concepts are passed into the model with the generated image and compared to a hand-engineered weight for each NSFW concept.

## Training

**Training Data**

The model developers used the following dataset for training the model:

- LAION-2B (en) and subsets thereof (see next section)

**Training Procedure**

Stable Diffusion v1-5 is a latent diffusion model which combines an autoencoder with a diffusion model that is trained in the latent space of the autoencoder. During training,

- Images are encoded through an encoder, which turns images into latent representations. The autoencoder uses a relative downsampling factor of 8 and maps images of shape H x W x 3 to latents of shape H/f x W/f x 4

- Text prompts are encoded through a ViT-L/14 text-encoder.

- The non-pooled output of the text encoder is fed into the UNet backbone of the latent diffusion model via cross-attention.

- The loss is a reconstruction objective between the noise that was added to the latent and the prediction made by the UNet.
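
As a quick check of the shape arithmetic in the first bullet, the following minimal sketch computes the latent shape for a given image size. The function name `latent_shape` is hypothetical and only illustrates the H/f x W/f x 4 mapping with f = 8; it does not call the autoencoder itself.

```python
def latent_shape(height: int, width: int, factor: int = 8, channels: int = 4) -> tuple:
    """Return the (H/f, W/f, C) latent shape for an H x W x 3 input image."""
    if height % factor or width % factor:
        raise ValueError("height and width must be divisible by the downsampling factor")
    return (height // factor, width // factor, channels)


# A 512x512 RGB image maps to a 64x64 latent with 4 channels.
print(latent_shape(512, 512))  # (64, 64, 4)
```

The diffusion model therefore operates on 64 x 64 x 4 = 16,384 values per image instead of 512 x 512 x 3 = 786,432.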

Currently six Stable Diffusion checkpoints are provided, which were trained as follows.

- [`stable-diffusion-v1-1`](https://huggingface.co/CompVis/stable-diffusion-v1-1): 237,000 steps at resolution `256x256` on [laion2B-en](https://huggingface.co/datasets/laion/laion2B-en). 194,000 steps at resolution `512x512` on [laion-high-resolution](https://huggingface.co/datasets/laion/laion-high-resolution) (170M examples from LAION-5B with resolution `>= 1024x1024`).

- [`stable-diffusion-v1-2`](https://huggingface.co/CompVis/stable-diffusion-v1-2): Resumed from `stable-diffusion-v1-1`. 515,000 steps at resolution `512x512` on "laion-improved-aesthetics" (a subset of laion2B-en, filtered to images with an original size `>= 512x512`, estimated aesthetics score `> 5.0`, and an estimated watermark probability `< 0.5`. The watermark estimate is from the LAION-5B metadata, the aesthetics score is estimated using an [improved aesthetics estimator](https://github.com/christophschuhmann/improved-aesthetic-predictor)).

- [`stable-diffusion-v1-3`](https://huggingface.co/CompVis/stable-diffusion-v1-3): Resumed from `stable-diffusion-v1-2` - 195,000 steps at resolution `512x512` on "laion-improved-aesthetics" and 10 % dropping of the text-conditioning to improve [classifier-free guidance sampling](https://arxiv.org/abs/2207.12598).

- [`stable-diffusion-v1-4`](https://huggingface.co/CompVis/stable-diffusion-v1-4): Resumed from `stable-diffusion-v1-2` - 225,000 steps at resolution `512x512` on "laion-aesthetics v2 5+" and 10 % dropping of the text-conditioning to improve [classifier-free guidance sampling](https://arxiv.org/abs/2207.12598).

- [`stable-diffusion-v1-5`](https://huggingface.co/runwayml/stable-diffusion-v1-5): Resumed from `stable-diffusion-v1-2` - 595,000 steps at resolution `512x512` on "laion-aesthetics v2 5+" and 10 % dropping of the text-conditioning to improve [classifier-free guidance sampling](https://arxiv.org/abs/2207.12598).

- [`stable-diffusion-inpainting`](https://huggingface.co/runwayml/stable-diffusion-inpainting): Resumed from `stable-diffusion-v1-5` - then 440,000 steps of inpainting training at resolution `512x512` on "laion-aesthetics v2 5+" and 10 % dropping of the text-conditioning. For inpainting, the UNet has 5 additional input channels (4 for the encoded masked-image and 1 for the mask itself) whose weights were zero-initialized after restoring the non-inpainting checkpoint. During training, we generate synthetic masks and, in 25% of cases, mask everything.

- **Hardware:** 32 x 8 x A100 GPUs

- **Optimizer:** AdamW

- **Gradient Accumulations**: 2

- **Batch:** 32 x 8 x 2 x 4 = 2048

- **Learning rate:** warmup to 0.0001 for 10,000 steps and then kept constant
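
The batch figure above is a plain product of four factors. A minimal sketch, assuming they stand for 32 nodes, 8 GPUs per node, 2 gradient-accumulation steps, and a per-GPU batch of 4 (the hardware and accumulation values are listed above; reading the last factor as the per-GPU batch size is an assumption):

```python
def effective_batch_size(nodes: int, gpus_per_node: int, grad_accum_steps: int, per_gpu_batch: int) -> int:
    """Number of samples contributing to one optimizer step."""
    return nodes * gpus_per_node * grad_accum_steps * per_gpu_batch


# 32 x 8 x 2 x 4, as listed in the "Batch" entry.
print(effective_batch_size(32, 8, 2, 4))  # 2048
```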

## Evaluation Results

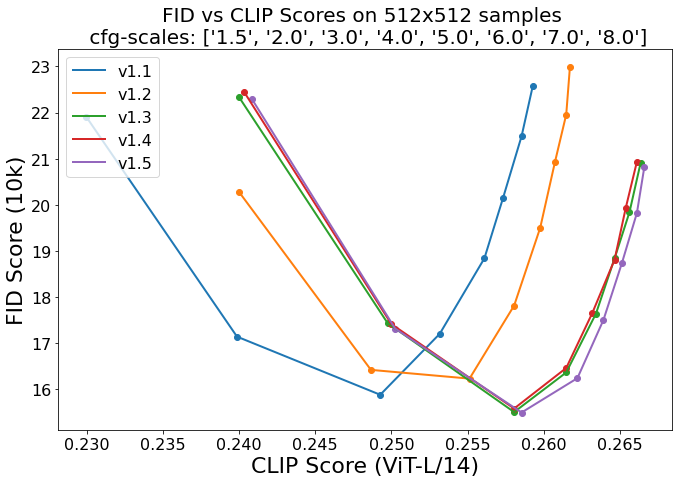

Evaluations with different classifier-free guidance scales (1.5, 2.0, 3.0, 4.0,

5.0, 6.0, 7.0, 8.0) and 50 PNDM/PLMS sampling

steps show the relative improvements of the checkpoints:

Evaluated using 50 PLMS steps and 10000 random prompts from the COCO2017 validation set, evaluated at 512x512 resolution. Not optimized for FID scores.
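
The guidance scale in these evaluations weights the text-conditioned noise prediction against the unconditional one. The sketch below shows the usual classifier-free guidance combination on plain Python lists. The function name and toy values are illustrative assumptions; a real pipeline applies the same formula to tensors inside the sampling loop.

```python
def apply_guidance(noise_uncond, noise_text, guidance_scale):
    """Combine predictions as: uncond + scale * (text - uncond), element-wise."""
    return [u + guidance_scale * (t - u) for u, t in zip(noise_uncond, noise_text)]


uncond = [0.0, 1.0]  # toy unconditional prediction
text = [1.0, 3.0]    # toy text-conditioned prediction

print(apply_guidance(uncond, text, 1.0))  # [1.0, 3.0]  (scale 1 returns the conditioned prediction)
print(apply_guidance(uncond, text, 2.0))  # [2.0, 5.0]  (larger scales push further from the unconditional one)
```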

## Environmental Impact

**Stable Diffusion v1** **Estimated Emissions**

Based on that information, we estimate the following CO2 emissions using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700). The hardware, runtime, cloud provider, and compute region were utilized to estimate the carbon impact.

- **Hardware Type:** A100 PCIe 40GB

- **Hours used:** 150000

- **Cloud Provider:** AWS

- **Compute Region:** US-east

- **Carbon Emitted (Power consumption x Time x Carbon produced based on location of power grid):** 11250 kg CO2 eq.
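
The formula in the last entry can be evaluated directly. In the sketch below, only the 150000 hours come from the card; the 250 W power draw and the 300 g CO2 eq. per kWh grid intensity are illustrative assumptions, chosen because they happen to reproduce the reported total. The calculator may have used different inputs.

```python
def carbon_kg(hours: float, watts: float, grams_co2_per_kwh: float) -> float:
    """Power consumption x time x carbon intensity, returned in kg CO2 eq."""
    energy_kwh = hours * watts / 1000.0           # W*h -> kWh
    return energy_kwh * grams_co2_per_kwh / 1000.0  # g -> kg


# Assumed: 250 W per GPU, 300 g CO2 eq. per kWh (not stated in the card).
print(carbon_kg(150_000, 250, 300))  # 11250.0
```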

## Citation

```bibtex

@InProceedings{Rombach_2022_CVPR,

author = {Rombach, Robin and Blattmann, Andreas and Lorenz, Dominik and Esser, Patrick and Ommer, Bj\"orn},

title = {High-Resolution Image Synthesis With Latent Diffusion Models},

booktitle = {Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)},

month = {June},

year = {2022},

pages = {10684-10695}

}

```

*This model card was written by: Robin Rombach and Patrick Esser and is based on the [DALL-E Mini model card](https://huggingface.co/dalle-mini/dalle-mini).*

|

LittlePrincess/my-pet-dog-xzg

|

LittlePrincess

| 2023-11-23T09:46:23Z | 0 | 0 |

diffusers

|

[

"diffusers",

"safetensors",

"NxtWave-GenAI-Webinar",

"text-to-image",

"stable-diffusion",

"license:creativeml-openrail-m",

"autotrain_compatible",

"endpoints_compatible",

"diffusers:StableDiffusionPipeline",

"region:us"

] |

text-to-image

| 2023-11-23T09:41:28Z |

---

license: creativeml-openrail-m

tags:

- NxtWave-GenAI-Webinar

- text-to-image

- stable-diffusion

---

### My-Pet-Dog-xzg Dreambooth model trained by LittlePrincess following the "Build your own Gen AI model" session by NxtWave.

Project Submission Code: NPR-220

Sample pictures of this concept:

|

Yntec/ChildrenStoriesAnime

|

Yntec

| 2023-11-23T09:45:36Z | 147 | 7 |

diffusers

|

[

"diffusers",

"safetensors",

"stable-diffusion",

"stable-diffusion-diffusers",

"text-to-image",

"Zovya",

"Children Books",

"license:creativeml-openrail-m",

"autotrain_compatible",

"endpoints_compatible",

"diffusers:StableDiffusionPipeline",

"region:us"

] |

text-to-image

| 2023-07-20T13:56:44Z |

---

license: creativeml-openrail-m

library_name: diffusers

pipeline_tag: text-to-image

tags:

- stable-diffusion

- stable-diffusion-diffusers

- diffusers

- text-to-image

- Zovya

- Children Books

---

# Children's Stories Anime

This version of this model by Zovya has the Waifu 1.4 VAE baked in for better saturation.

Original page:

https://civitai.com/models/64544?modelVersionId=69167

|

florentgbelidji/leoLM-13b-sft-lora-oa

|

florentgbelidji

| 2023-11-23T09:44:51Z | 20 | 1 |

transformers

|

[

"transformers",

"safetensors",

"llama",

"text-generation",

"generated_from_trainer",

"conversational",

"custom_code",

"base_model:LeoLM/leo-hessianai-13b",

"base_model:quantized:LeoLM/leo-hessianai-13b",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"4-bit",

"bitsandbytes",

"region:us"

] |

text-generation

| 2023-11-22T19:29:30Z |

---

base_model: LeoLM/leo-hessianai-13b

tags:

- generated_from_trainer

model-index:

- name: leoLM-13b-sft-lora-oa

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# leoLM-13b-sft-lora-oa

This model is a fine-tuned version of [LeoLM/leo-hessianai-13b](https://huggingface.co/LeoLM/leo-hessianai-13b) on an unknown dataset.

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 1

- eval_batch_size: 1

- seed: 42

- distributed_type: multi-GPU

- num_devices: 4

- gradient_accumulation_steps: 4

- total_train_batch_size: 16

- total_eval_batch_size: 4

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: cosine

- num_epochs: 1
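
The total batch sizes listed above follow from the per-device values. A minimal sketch of that arithmetic, assuming the totals are the product of per-device batch size, device count, and gradient-accumulation steps (the function name is hypothetical):

```python
def total_batch_size(per_device: int, num_devices: int = 1, grad_accum_steps: int = 1) -> int:
    """Samples per optimizer step (training) or per evaluation step."""
    return per_device * num_devices * grad_accum_steps


# Training: 1 per device x 4 devices x 4 accumulation steps.
print(total_batch_size(1, num_devices=4, grad_accum_steps=4))  # 16
# Evaluation: 1 per device x 4 devices, no accumulation.
print(total_batch_size(1, num_devices=4))  # 4
```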

### Training results

### Framework versions

- Transformers 4.35.0

- Pytorch 2.1.1

- Datasets 2.15.0

- Tokenizers 0.14.1

|

alejoa/bert-sst2

|

alejoa

| 2023-11-23T09:43:55Z | 3 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"bert",

"text-classification",

"generated_from_trainer",

"dataset:glue",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2023-11-23T00:44:05Z |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- glue

metrics:

- accuracy

model-index:

- name: bert-sst2

results:

- task:

name: Text Classification

type: text-classification

dataset:

name: glue

type: glue

config: sst2

split: validation

args: sst2

metrics:

- name: Accuracy

type: accuracy

value: 0.9139908256880734

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# bert-sst2

This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on the glue dataset.

It achieves the following results on the evaluation set:

- Loss: 0.3616

- Accuracy: 0.9140

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 2

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |

|:-------------:|:-----:|:-----:|:---------------:|:--------:|

| 0.2447 | 1.0 | 8419 | 0.3422 | 0.9117 |

| 0.1435 | 2.0 | 16838 | 0.3616 | 0.9140 |
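
The step counts in the table are consistent with the batch size listed above. A minimal sketch, assuming training used the full GLUE SST-2 train split (67,349 examples) on a single device without gradient accumulation; none of this is stated in the card:

```python
import math


def steps_per_epoch(num_examples: int, batch_size: int) -> int:
    """Optimizer steps needed to see every example once (last batch may be partial)."""
    return math.ceil(num_examples / batch_size)


train_examples = 67_349  # assumption: full SST-2 train split
per_epoch = steps_per_epoch(train_examples, batch_size=8)

print(per_epoch)      # 8419
print(2 * per_epoch)  # 16838
```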

### Framework versions

- Transformers 4.30.2

- Pytorch 2.0.1

- Datasets 2.14.5

- Tokenizers 0.13.3

|

mogaio/TinyLlama-con-emp-lora-ada

|

mogaio

| 2023-11-23T09:42:37Z | 0 | 0 | null |

[

"tensorboard",

"safetensors",

"generated_from_trainer",

"base_model:TinyLlama/TinyLlama-1.1B-Chat-v0.3",

"base_model:finetune:TinyLlama/TinyLlama-1.1B-Chat-v0.3",

"license:apache-2.0",

"region:us"

] | null | 2023-11-23T09:39:35Z |

---

license: apache-2.0

base_model: PY007/TinyLlama-1.1B-Chat-v0.3

tags:

- generated_from_trainer

model-index:

- name: TinyLlama-con-emp-lora-ada

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# TinyLlama-con-emp-lora-ada

This model is a fine-tuned version of [PY007/TinyLlama-1.1B-Chat-v0.3](https://huggingface.co/PY007/TinyLlama-1.1B-Chat-v0.3) on an unspecified dataset.

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0002

- train_batch_size: 1

- eval_batch_size: 8

- seed: 42

- gradient_accumulation_steps: 4

- total_train_batch_size: 4

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: cosine

- training_steps: 200

- mixed_precision_training: Native AMP

### Training results

### Framework versions

- Transformers 4.35.2

- Pytorch 2.1.0+cu118

- Datasets 2.15.0

- Tokenizers 0.15.0

|

Systran/faster-whisper-large-v3

|

Systran

| 2023-11-23T09:41:12Z | 708,585 | 325 |

ctranslate2

|

[

"ctranslate2",

"audio",

"automatic-speech-recognition",

"en",

"zh",

"de",

"es",

"ru",

"ko",

"fr",

"ja",

"pt",

"tr",

"pl",

"ca",

"nl",

"ar",

"sv",

"it",

"id",

"hi",

"fi",

"vi",

"he",

"uk",

"el",

"ms",

"cs",

"ro",

"da",

"hu",

"ta",

"no",

"th",

"ur",

"hr",

"bg",

"lt",

"la",

"mi",

"ml",

"cy",

"sk",

"te",

"fa",

"lv",

"bn",

"sr",

"az",

"sl",

"kn",

"et",

"mk",

"br",

"eu",

"is",

"hy",

"ne",

"mn",

"bs",

"kk",

"sq",

"sw",

"gl",

"mr",

"pa",

"si",

"km",

"sn",

"yo",

"so",

"af",

"oc",

"ka",

"be",

"tg",

"sd",

"gu",

"am",

"yi",

"lo",

"uz",

"fo",

"ht",

"ps",

"tk",

"nn",

"mt",

"sa",

"lb",

"my",

"bo",

"tl",

"mg",

"as",

"tt",

"haw",

"ln",

"ha",

"ba",

"jw",

"su",

"yue",

"license:mit",

"region:us"

] |

automatic-speech-recognition

| 2023-11-23T09:34:20Z |

---

language:

- en

- zh

- de

- es

- ru

- ko

- fr

- ja

- pt

- tr

- pl

- ca

- nl

- ar

- sv

- it

- id

- hi

- fi

- vi

- he

- uk

- el

- ms

- cs

- ro

- da

- hu

- ta

- 'no'

- th

- ur

- hr

- bg

- lt

- la

- mi

- ml

- cy

- sk

- te

- fa

- lv

- bn

- sr

- az

- sl

- kn

- et

- mk

- br

- eu

- is

- hy

- ne

- mn

- bs

- kk

- sq

- sw

- gl

- mr

- pa

- si

- km

- sn

- yo

- so

- af

- oc

- ka

- be

- tg

- sd

- gu

- am

- yi

- lo

- uz

- fo

- ht

- ps

- tk

- nn

- mt

- sa

- lb

- my

- bo

- tl

- mg

- as

- tt

- haw

- ln

- ha

- ba

- jw

- su

- yue

tags:

- audio

- automatic-speech-recognition

license: mit

library_name: ctranslate2

---

# Whisper large-v3 model for CTranslate2

This repository contains the conversion of [openai/whisper-large-v3](https://huggingface.co/openai/whisper-large-v3) to the [CTranslate2](https://github.com/OpenNMT/CTranslate2) model format.

This model can be used in CTranslate2 or projects based on CTranslate2 such as [faster-whisper](https://github.com/systran/faster-whisper).

## Example

```python

from faster_whisper import WhisperModel

model = WhisperModel("large-v3")

segments, info = model.transcribe("audio.mp3")

for segment in segments:

    print("[%.2fs -> %.2fs] %s" % (segment.start, segment.end, segment.text))

```

## Conversion details

The original model was converted with the following command:

```

ct2-transformers-converter --model openai/whisper-large-v3 --output_dir faster-whisper-large-v3 \

--copy_files tokenizer.json preprocessor_config.json --quantization float16

```

Note that the model weights are saved in FP16. This type can be changed when the model is loaded using the [`compute_type` option in CTranslate2](https://opennmt.net/CTranslate2/quantization.html).
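
As a sketch of how the compute type might be chosen at load time with faster-whisper: the helper `pick_compute_type` and its device-to-type mapping are assumptions for illustration, not a recommendation from this repository. `WhisperModel` is imported lazily so the helper can be tried without the package installed.

```python
def pick_compute_type(device: str) -> str:
    """Hypothetical helper: keep FP16 on GPU, fall back to int8 on CPU."""
    return "float16" if device == "cuda" else "int8"


def load_model(device: str = "cuda"):
    """Load large-v3 with a compute type matched to the device."""
    from faster_whisper import WhisperModel  # lazy import; requires faster-whisper

    return WhisperModel("large-v3", device=device, compute_type=pick_compute_type(device))


print(pick_compute_type("cuda"))  # float16
print(pick_compute_type("cpu"))   # int8
```

Calling `load_model("cpu")` would then convert the stored FP16 weights to int8 when the model is loaded.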

## More information

**For more information about the original model, see its [model card](https://huggingface.co/openai/whisper-large-v3).**

|

TheBloke/Noromaid-20B-v0.1.1-AWQ

|

TheBloke

| 2023-11-23T09:31:48Z | 17 | 4 |

transformers

|

[

"transformers",

"safetensors",

"llama",

"text-generation",

"base_model:NeverSleep/Noromaid-20b-v0.1.1",

"base_model:quantized:NeverSleep/Noromaid-20b-v0.1.1",

"license:cc-by-nc-4.0",

"autotrain_compatible",

"text-generation-inference",

"4-bit",

"awq",

"region:us"

] |

text-generation

| 2023-11-23T08:47:36Z |

---

base_model: NeverSleep/Noromaid-20b-v0.1.1

inference: false

license: cc-by-nc-4.0

model_creator: IkariDev and Undi

model_name: Noromaid 20B v0.1.1

model_type: llama

prompt_template: 'Below is an instruction that describes a task. Write a response

that appropriately completes the request.

### Instruction:

{prompt}

### Response:

'

quantized_by: TheBloke

---

<!-- markdownlint-disable MD041 -->

<!-- header start -->

<!-- 200823 -->

<div style="width: auto; margin-left: auto; margin-right: auto">

<img src="https://i.imgur.com/EBdldam.jpg" alt="TheBlokeAI" style="width: 100%; min-width: 400px; display: block; margin: auto;">

</div>

<div style="display: flex; justify-content: space-between; width: 100%;">

<div style="display: flex; flex-direction: column; align-items: flex-start;">

<p style="margin-top: 0.5em; margin-bottom: 0em;"><a href="https://discord.gg/theblokeai">Chat & support: TheBloke's Discord server</a></p>

</div>

<div style="display: flex; flex-direction: column; align-items: flex-end;">

<p style="margin-top: 0.5em; margin-bottom: 0em;"><a href="https://www.patreon.com/TheBlokeAI">Want to contribute? TheBloke's Patreon page</a></p>

</div>

</div>

<div style="text-align:center; margin-top: 0em; margin-bottom: 0em"><p style="margin-top: 0.25em; margin-bottom: 0em;">TheBloke's LLM work is generously supported by a grant from <a href="https://a16z.com">andreessen horowitz (a16z)</a></p></div>

<hr style="margin-top: 1.0em; margin-bottom: 1.0em;">

<!-- header end -->

# Noromaid 20B v0.1.1 - AWQ

- Model creator: [IkariDev and Undi](https://huggingface.co/NeverSleep)

- Original model: [Noromaid 20B v0.1.1](https://huggingface.co/NeverSleep/Noromaid-20b-v0.1.1)

<!-- description start -->

## Description

This repo contains AWQ model files for [IkariDev and Undi's Noromaid 20B v0.1.1](https://huggingface.co/NeverSleep/Noromaid-20b-v0.1.1).

These files were quantised using hardware kindly provided by [Massed Compute](https://massedcompute.com/).

### About AWQ

AWQ is an efficient, accurate and blazing-fast low-bit weight quantization method, currently supporting 4-bit quantization. Compared to GPTQ, it offers faster Transformers-based inference with equivalent or better quality compared to the most commonly used GPTQ settings.

It is supported by:

- [Text Generation Webui](https://github.com/oobabooga/text-generation-webui) - using Loader: AutoAWQ

- [vLLM](https://github.com/vllm-project/vllm) - Llama and Mistral models only

- [Hugging Face Text Generation Inference (TGI)](https://github.com/huggingface/text-generation-inference)

- [Transformers](https://huggingface.co/docs/transformers) version 4.35.0 and later, from any code or client that supports Transformers

- [AutoAWQ](https://github.com/casper-hansen/AutoAWQ) - for use from Python code

<!-- description end -->

<!-- repositories-available start -->

## Repositories available

* [AWQ model(s) for GPU inference.](https://huggingface.co/TheBloke/Noromaid-20B-v0.1.1-AWQ)

* [GPTQ models for GPU inference, with multiple quantisation parameter options.](https://huggingface.co/TheBloke/Noromaid-20B-v0.1.1-GPTQ)

* [2, 3, 4, 5, 6 and 8-bit GGUF models for CPU+GPU inference](https://huggingface.co/TheBloke/Noromaid-20B-v0.1.1-GGUF)

* [IkariDev and Undi's original unquantised fp16 model in pytorch format, for GPU inference and for further conversions](https://huggingface.co/NeverSleep/Noromaid-20b-v0.1.1)

<!-- repositories-available end -->

<!-- prompt-template start -->

## Prompt template: Alpaca

```

Below is an instruction that describes a task. Write a response that appropriately completes the request.

### Instruction:

{prompt}

### Response:

```

<!-- prompt-template end -->
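
A minimal sketch of filling the template above from Python. The constant and function names are hypothetical, and the exact placement of blank lines between the sections is an assumption.

```python
ALPACA_TEMPLATE = (
    "Below is an instruction that describes a task. "
    "Write a response that appropriately completes the request.\n\n"
    "### Instruction:\n{prompt}\n\n"
    "### Response:\n"
)


def build_prompt(instruction: str) -> str:
    """Insert the user's instruction into the Alpaca-style template."""
    return ALPACA_TEMPLATE.format(prompt=instruction)


print(build_prompt("Tell me about AI"))
```

Keeping the template as a plain string and calling `.format()` per request avoids evaluating `{prompt}` before an instruction exists.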

<!-- licensing start -->

## Licensing

The creator of the source model has listed its license as `cc-by-nc-4.0`, and this quantization has therefore used that same license.

As this model is based on Llama 2, it is also subject to the Meta Llama 2 license terms, and the license files for that are additionally included. It should therefore be considered as being claimed to be licensed under both licenses. I contacted Hugging Face for clarification on dual licensing but they do not yet have an official position. Should this change, or should Meta provide any feedback on this situation, I will update this section accordingly.

In the meantime, any questions regarding licensing, and in particular how these two licenses might interact, should be directed to the original model repository: [IkariDev and Undi's Noromaid 20B v0.1.1](https://huggingface.co/NeverSleep/Noromaid-20b-v0.1.1).

<!-- licensing end -->

<!-- README_AWQ.md-provided-files start -->

## Provided files, and AWQ parameters

I currently release 128g GEMM models only. The addition of group_size 32 models, and GEMV kernel models, is being actively considered.

Models are released as sharded safetensors files.

| Branch | Bits | GS | AWQ Dataset | Seq Len | Size |

| ------ | ---- | -- | ----------- | ------- | ---- |

| [main](https://huggingface.co/TheBloke/Noromaid-20B-v0.1.1-AWQ/tree/main) | 4 | 128 | [open-instruct](https://huggingface.co/datasets/VMware/open-instruct/viewer/) | 4096 | 10.87 GB |

<!-- README_AWQ.md-provided-files end -->

<!-- README_AWQ.md-text-generation-webui start -->

## How to easily download and use this model in [text-generation-webui](https://github.com/oobabooga/text-generation-webui)

Please make sure you're using the latest version of [text-generation-webui](https://github.com/oobabooga/text-generation-webui).

It is strongly recommended to use the text-generation-webui one-click-installers unless you're sure you know how to make a manual install.

1. Click the **Model tab**.

2. Under **Download custom model or LoRA**, enter `TheBloke/Noromaid-20B-v0.1.1-AWQ`.

3. Click **Download**.

4. The model will start downloading. Once it's finished it will say "Done".

5. In the top left, click the refresh icon next to **Model**.

6. In the **Model** dropdown, choose the model you just downloaded: `Noromaid-20B-v0.1.1-AWQ`

7. Select **Loader: AutoAWQ**.

8. Click Load, and the model will load and is now ready for use.

9. If you want any custom settings, set them and then click **Save settings for this model** followed by **Reload the Model** in the top right.

10. Once you're ready, click the **Text Generation** tab and enter a prompt to get started!

<!-- README_AWQ.md-text-generation-webui end -->

<!-- README_AWQ.md-use-from-vllm start -->

## Multi-user inference server: vLLM

Documentation on installing and using vLLM [can be found here](https://vllm.readthedocs.io/en/latest/).

- Please ensure you are using vLLM version 0.2 or later.

- When using vLLM as a server, pass the `--quantization awq` parameter.

For example:

```shell

python3 -m vllm.entrypoints.api_server --model TheBloke/Noromaid-20B-v0.1.1-AWQ --quantization awq --dtype auto

```

- When using vLLM from Python code, again set `quantization=awq`.

For example:

```python

from vllm import LLM, SamplingParams

prompts = [

"Tell me about AI",

"Write a story about llamas",

"What is 291 - 150?",

"How much wood would a woodchuck chuck if a woodchuck could chuck wood?",

]

# Plain (non-f) string: {prompt} is filled in per prompt by .format() below.
prompt_template = '''Below is an instruction that describes a task. Write a response that appropriately completes the request.

### Instruction:

{prompt}

### Response:

'''

prompts = [prompt_template.format(prompt=prompt) for prompt in prompts]

sampling_params = SamplingParams(temperature=0.8, top_p=0.95)

llm = LLM(model="TheBloke/Noromaid-20B-v0.1.1-AWQ", quantization="awq", dtype="auto")

outputs = llm.generate(prompts, sampling_params)

# Print the outputs.

for output in outputs:

    prompt = output.prompt

    generated_text = output.outputs[0].text

    print(f"Prompt: {prompt!r}, Generated text: {generated_text!r}")

```

<!-- README_AWQ.md-use-from-vllm end -->

<!-- README_AWQ.md-use-from-tgi start -->

## Multi-user inference server: Hugging Face Text Generation Inference (TGI)

Use TGI version 1.1.0 or later. The official Docker container is: `ghcr.io/huggingface/text-generation-inference:1.1.0`

Example Docker parameters:

```shell

--model-id TheBloke/Noromaid-20B-v0.1.1-AWQ --port 3000 --quantize awq --max-input-length 3696 --max-total-tokens 4096 --max-batch-prefill-tokens 4096

```
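
The two length limits in the Docker parameters above bound how many tokens a request can generate. A minimal sketch of that budget, assuming TGI requires input tokens plus `max_new_tokens` to stay within `--max-total-tokens` (the function name is hypothetical):

```python
MAX_INPUT_LENGTH = 3696   # --max-input-length
MAX_TOTAL_TOKENS = 4096   # --max-total-tokens


def max_new_tokens_budget(input_tokens: int) -> int:
    """Largest max_new_tokens that still fits for a prompt of the given length."""
    if input_tokens > MAX_INPUT_LENGTH:
        raise ValueError("prompt exceeds --max-input-length")
    return MAX_TOTAL_TOKENS - input_tokens


print(max_new_tokens_budget(3696))  # 400
print(max_new_tokens_budget(1000))  # 3096
```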

Example Python code for interfacing with TGI (requires [huggingface-hub](https://github.com/huggingface/huggingface_hub) 0.17.0 or later):

```shell

pip3 install huggingface-hub

```

```python

from huggingface_hub import InferenceClient

endpoint_url = "https://your-endpoint-url-here"

prompt = "Tell me about AI"

prompt_template=f'''Below is an instruction that describes a task. Write a response that appropriately completes the request.

### Instruction:

{prompt}

### Response:

'''

client = InferenceClient(endpoint_url)

# Send the full templated prompt, not the bare instruction
response = client.text_generation(
    prompt_template,
    max_new_tokens=128,
    do_sample=True,
    temperature=0.7,
    top_p=0.95,
    top_k=40,
    repetition_penalty=1.1,
)

print("Model output: ", response)

```

<!-- README_AWQ.md-use-from-tgi end -->

<!-- README_AWQ.md-use-from-python start -->

## Inference from Python code using Transformers

### Install the necessary packages

- Requires: [Transformers](https://huggingface.co/docs/transformers) 4.35.0 or later.

- Requires: [AutoAWQ](https://github.com/casper-hansen/AutoAWQ) 0.1.6 or later.

```shell

pip3 install --upgrade "autoawq>=0.1.6" "transformers>=4.35.0"

```

Note that if you are using PyTorch 2.0.1, the above AutoAWQ command will automatically upgrade you to PyTorch 2.1.0.

If you are using CUDA 11.8 and wish to continue using PyTorch 2.0.1, instead run this command:

```shell

pip3 install https://github.com/casper-hansen/AutoAWQ/releases/download/v0.1.6/autoawq-0.1.6+cu118-cp310-cp310-linux_x86_64.whl

```

If you have problems installing [AutoAWQ](https://github.com/casper-hansen/AutoAWQ) using the pre-built wheels, install it from source instead:

```shell

pip3 uninstall -y autoawq

git clone https://github.com/casper-hansen/AutoAWQ

cd AutoAWQ

pip3 install .

```
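
After installing, it can be useful to confirm that the installed versions meet the minimums listed above. The following is a rough sketch using only the standard library; `parse_version`, `meets_minimum` and `check_requirements` are hypothetical helper names, and the version parsing is deliberately simplistic (it ignores pre-release and local suffixes such as `+cu118`).

```python
import re
from importlib.metadata import PackageNotFoundError, version

# Minimum versions stated in this README.
REQUIRED = {"transformers": "4.35.0", "autoawq": "0.1.6"}


def parse_version(text: str) -> tuple:
    """Turn '0.1.6+cu118' into (0, 1, 6), ignoring any suffixes."""
    core = text.split("+")[0]
    parts = []
    for piece in core.split(".")[:3]:
        match = re.match(r"\d+", piece)
        parts.append(int(match.group()) if match else 0)
    # Pad short versions such as '0.2' to three components.
    while len(parts) < 3:
        parts.append(0)
    return tuple(parts)


def meets_minimum(installed: str, required: str) -> bool:
    """True if the installed version is at least the required one."""
    return parse_version(installed) >= parse_version(required)


def check_requirements() -> dict:
    """Map each package to True/False, or None if it is not installed."""
    results = {}
    for name, minimum in REQUIRED.items():
        try:
            results[name] = meets_minimum(version(name), minimum)
        except PackageNotFoundError:
            results[name] = None
    return results


if __name__ == "__main__":
    print(check_requirements())
```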

### Transformers example code (requires Transformers 4.35.0 or later)

```python

from transformers import AutoModelForCausalLM, AutoTokenizer, TextStreamer

model_name_or_path = "TheBloke/Noromaid-20B-v0.1.1-AWQ"

tokenizer = AutoTokenizer.from_pretrained(model_name_or_path)

model = AutoModelForCausalLM.from_pretrained(

model_name_or_path,

low_cpu_mem_usage=True,

device_map="cuda:0"

)

# Using the text streamer to stream output one token at a time

streamer = TextStreamer(tokenizer, skip_prompt=True, skip_special_tokens=True)

prompt = "Tell me about AI"

prompt_template=f'''Below is an instruction that describes a task. Write a response that appropriately completes the request.

### Instruction:

{prompt}

### Response:

'''

# Convert prompt to tokens

tokens = tokenizer(

prompt_template,

return_tensors='pt'

).input_ids.cuda()

generation_params = {

"do_sample": True,

"temperature": 0.7,

"top_p": 0.95,

"top_k": 40,

"max_new_tokens": 512,

"repetition_penalty": 1.1

}

# Generate streamed output, visible one token at a time

generation_output = model.generate(

tokens,

streamer=streamer,

**generation_params

)

# Generation without a streamer, which will include the prompt in the output

generation_output = model.generate(

tokens,

**generation_params

)

# Get the tokens from the output, decode them, print them

token_output = generation_output[0]

text_output = tokenizer.decode(token_output)

print("model.generate output: ", text_output)

# Inference is also possible via Transformers' pipeline

from transformers import pipeline

pipe = pipeline(

"text-generation",

model=model,

tokenizer=tokenizer,

**generation_params

)

pipe_output = pipe(prompt_template)[0]['generated_text']

print("pipeline output: ", pipe_output)

```
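
As noted in the example, generation without a streamer returns the prompt followed by the new tokens. One common way to keep only the reply is to slice off the prompt length before decoding. The sketch below illustrates the idea on plain Python lists with made-up token IDs; `strip_prompt_tokens` is a hypothetical helper, not a Transformers function.

```python
def strip_prompt_tokens(output_ids, prompt_length):
    """Return only the newly generated token IDs."""
    # For decoder-only models, generate() output starts with the prompt
    # tokens, so slicing from prompt_length onward leaves just the reply.
    return output_ids[prompt_length:]


if __name__ == "__main__":
    prompt_ids = [1, 15043, 3186]            # made-up token IDs
    output_ids = prompt_ids + [306, 626, 2]  # prompt followed by new tokens
    print(strip_prompt_tokens(output_ids, len(prompt_ids)))
```

With the variables from the example above, the equivalent slice would be `generation_output[0][tokens.shape[1]:]`, and passing `skip_special_tokens=True` to `tokenizer.decode` drops markers such as the end-of-sequence token. For the pipeline route, the text-generation pipeline accepts `return_full_text=False` for a similar effect.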

<!-- README_AWQ.md-use-from-python end -->

<!-- README_AWQ.md-compatibility start -->

## Compatibility

The files provided are tested to work with:

- [text-generation-webui](https://github.com/oobabooga/text-generation-webui) using `Loader: AutoAWQ`.

- [vLLM](https://github.com/vllm-project/vllm) version 0.2.0 and later.

- [Hugging Face Text Generation Inference (TGI)](https://github.com/huggingface/text-generation-inference) version 1.1.0 and later.

- [Transformers](https://huggingface.co/docs/transformers) version 4.35.0 and later.

- [AutoAWQ](https://github.com/casper-hansen/AutoAWQ) version 0.1.1 and later.

<!-- README_AWQ.md-compatibility end -->

<!-- footer start -->

<!-- 200823 -->

## Discord

For further support, and discussions on these models and AI in general, join us at:

[TheBloke AI's Discord server](https://discord.gg/theblokeai)

## Thanks, and how to contribute

Thanks to the [chirper.ai](https://chirper.ai) team!

Thanks to Clay from [gpus.llm-utils.org](https://gpus.llm-utils.org)!

I've had a lot of people ask if they can contribute. I enjoy providing models and helping people, and would love to be able to spend even more time doing it, as well as expanding into new projects like fine tuning/training.

If you're able and willing to contribute it will be most gratefully received and will help me to keep providing more models, and to start work on new AI projects.

Donors will get priority support on any and all AI/LLM/model questions and requests, access to a private Discord room, plus other benefits.

* Patreon: https://patreon.com/TheBlokeAI

* Ko-Fi: https://ko-fi.com/TheBlokeAI

**Special thanks to**: Aemon Algiz.

**Patreon special mentions**: Brandon Frisco, LangChain4j, Spiking Neurons AB, transmissions 11, Joseph William Delisle, Nitin Borwankar, Willem Michiel, Michael Dempsey, vamX, Jeffrey Morgan, zynix, jjj, Omer Bin Jawed, Sean Connelly, jinyuan sun, Jeromy Smith, Shadi, Pawan Osman, Chadd, Elijah Stavena, Illia Dulskyi, Sebastain Graf, Stephen Murray, terasurfer, Edmond Seymore, Celu Ramasamy, Mandus, Alex, biorpg, Ajan Kanaga, Clay Pascal, Raven Klaugh, 阿明, K, ya boyyy, usrbinkat, Alicia Loh, John Villwock, ReadyPlayerEmma, Chris Smitley, Cap'n Zoog, fincy, GodLy, S_X, sidney chen, Cory Kujawski, OG, Mano Prime, AzureBlack, Pieter, Kalila, Spencer Kim, Tom X Nguyen, Stanislav Ovsiannikov, Michael Levine, Andrey, Trailburnt, Vadim, Enrico Ros, Talal Aujan, Brandon Phillips, Jack West, Eugene Pentland, Michael Davis, Will Dee, webtim, Jonathan Leane, Alps Aficionado, Rooh Singh, Tiffany J. Kim, theTransient, Luke @flexchar, Elle, Caitlyn Gatomon, Ari Malik, subjectnull, Johann-Peter Hartmann, Trenton Dambrowitz, Imad Khwaja, Asp the Wyvern, Emad Mostaque, Rainer Wilmers, Alexandros Triantafyllidis, Nicholas, Pedro Madruga, SuperWojo, Harry Royden McLaughlin, James Bentley, Olakabola, David Ziegler, Ai Maven, Jeff Scroggin, Nikolai Manek, Deo Leter, Matthew Berman, Fen Risland, Ken Nordquist, Manuel Alberto Morcote, Luke Pendergrass, TL, Fred von Graf, Randy H, Dan Guido, NimbleBox.ai, Vitor Caleffi, Gabriel Tamborski, knownsqashed, Lone Striker, Erik Bjäreholt, John Detwiler, Leonard Tan, Iucharbius

Thank you to all my generous patrons and donors!

And thank you again to a16z for their generous grant.

<!-- footer end -->

# Original model card: IkariDev and Undi's Noromaid 20B v0.1.1

---

# Disclaimer:

## This is a ***TEST*** version, don't expect everything to work!!!

You may use our custom **prompting format** (scroll down to download it!), or simple Alpaca. **(Choose whichever fits best for you!)**

---

# This model is a collab between [IkariDev](https://huggingface.co/IkariDev) and [Undi](https://huggingface.co/Undi95)!

Tired of the same merges every time? Here it is, the Noromaid-20b-v0.1.1 model. Suitable for RP, ERP and general stuff.

[Recommended settings - No settings yet (please suggest some over in the Community tab!)]

<!-- description start -->