| modelId (string, 5 to 139 chars) | author (string, 2 to 42 chars) | last_modified (timestamp, UTC, 2020-02-15 11:33:14 to 2025-08-30 06:27:36) | downloads (int64, 0 to 223M) | likes (int64, 0 to 11.7k) | library_name (527 classes) | tags (list, 1 to 4.05k items) | pipeline_tag (55 classes) | createdAt (timestamp, UTC, 2022-03-02 23:29:04 to 2025-08-30 06:27:12) | card (string, 11 to 1.01M chars) |
|---|---|---|---|---|---|---|---|---|---|

kr-manish/text-to-image-sdxl-lora-dreemBooth-rashmika_v2

|

kr-manish

| 2023-12-19T11:13:23Z | 1 | 0 |

diffusers

|

[

"diffusers",

"text-to-image",

"autotrain",

"base_model:stabilityai/stable-diffusion-xl-base-1.0",

"base_model:finetune:stabilityai/stable-diffusion-xl-base-1.0",

"region:us"

] |

text-to-image

| 2023-12-19T08:31:31Z |

---

base_model: stabilityai/stable-diffusion-xl-base-1.0

instance_prompt: A photo of rashmika mehta wearing casual clothes, taking a selfie, and smiling.

tags:

- text-to-image

- diffusers

- autotrain

inference: true

---

# DreamBooth trained by AutoTrain

The text encoder was not trained.

|

vilm/vinallama-7b

|

vilm

| 2023-12-19T11:10:40Z | 108 | 23 |

transformers

|

[

"transformers",

"pytorch",

"llama",

"text-generation",

"vi",

"arxiv:2312.11011",

"license:llama2",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2023-11-28T07:45:04Z |

---

license: llama2

language:

- vi

---

# VinaLLaMA - State-of-the-art Vietnamese LLMs

Read our [Paper](https://huggingface.co/papers/2312.11011)

|

ngocminhta/Llama-2-Chat-Movie-Review

|

ngocminhta

| 2023-12-19T11:03:55Z | 4 | 0 |

transformers

|

[

"transformers",

"pytorch",

"llama",

"text-generation",

"movie",

"entertainment",

"text-classification",

"en",

"arxiv:1910.09700",

"license:apache-2.0",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2023-12-19T10:37:22Z |

---

license: apache-2.0

language:

- en

pipeline_tag: text-classification

tags:

- movie

- entertainment

---

# Model Card for Model ID

## Model Details

### Model Description

<!-- Provide a longer summary of what this model is. -->

- **Developed by:** [More Information Needed]

- **Funded by [optional]:** [More Information Needed]

- **Shared by [optional]:** [More Information Needed]

- **Model type:** [More Information Needed]

- **Language(s) (NLP):** [More Information Needed]

- **License:** [More Information Needed]

- **Finetuned from model [optional]:** [More Information Needed]

### Model Sources [optional]

<!-- Provide the basic links for the model. -->

- **Repository:** [More Information Needed]

## Uses

<!-- Address questions around how the model is intended to be used, including the foreseeable users of the model and those affected by the model. -->

### Direct Use

<!-- This section is for the model use without fine-tuning or plugging into a larger ecosystem/app. -->

[More Information Needed]

### Out-of-Scope Use

<!-- This section addresses misuse, malicious use, and uses that the model will not work well for. -->

[More Information Needed]

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

[More Information Needed]

### Recommendations

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

Users (both direct and downstream) should be made aware of the risks, biases and limitations of the model. More information needed for further recommendations.

## How to Get Started with the Model

Use the code below to get started with the model.

[More Information Needed]

## Training Details

### Training Data

<!-- This should link to a Dataset Card, perhaps with a short stub of information on what the training data is all about as well as documentation related to data pre-processing or additional filtering. -->

[More Information Needed]

### Training Procedure

<!-- This relates heavily to the Technical Specifications. Content here should link to that section when it is relevant to the training procedure. -->

#### Preprocessing [optional]

[More Information Needed]

#### Training Hyperparameters

- **Training regime:** [More Information Needed] <!--fp32, fp16 mixed precision, bf16 mixed precision, bf16 non-mixed precision, fp16 non-mixed precision, fp8 mixed precision -->

[More Information Needed]

## Evaluation

<!-- This section describes the evaluation protocols and provides the results. -->

### Testing Data, Factors & Metrics

#### Testing Data

<!-- This should link to a Dataset Card if possible. -->

[More Information Needed]

#### Factors

<!-- These are the things the evaluation is disaggregating by, e.g., subpopulations or domains. -->

[More Information Needed]

#### Metrics

<!-- These are the evaluation metrics being used, ideally with a description of why. -->

[More Information Needed]

### Results

[More Information Needed]

#### Summary

## Environmental Impact

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).

- **Hardware Type:** [More Information Needed]

- **Hours used:** [More Information Needed]

- **Cloud Provider:** [More Information Needed]

- **Compute Region:** [More Information Needed]

- **Carbon Emitted:** [More Information Needed]
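The calculator's estimate essentially multiplies the energy used by the carbon intensity of the local power grid. A minimal sketch of that arithmetic, assuming a constant average power draw; every number in the example call is a hypothetical placeholder, not a measurement for this model:

```python
def estimate_co2_kg(power_kw: float, hours: float, grid_kg_per_kwh: float) -> float:
    """Rough CO2-equivalent estimate: energy used (kWh) times grid carbon intensity."""
    energy_kwh = power_kw * hours  # assumes a constant average power draw
    return energy_kwh * grid_kg_per_kwh


# Hypothetical example: one 300 W GPU running for 10 hours on a 0.4 kg CO2eq/kWh grid.
print(estimate_co2_kg(power_kw=0.3, hours=10.0, grid_kg_per_kwh=0.4))
```

Real estimates should use the measured or rated power of the actual hardware and the carbon intensity of the actual compute region.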

## Technical Specifications [optional]

### Model Architecture and Objective

[More Information Needed]

### Compute Infrastructure

[More Information Needed]

#### Hardware

[More Information Needed]

#### Software

[More Information Needed]

## Model Card Contact

[More Information Needed]

|

LogicismTV/Chronomaid-Storytelling-13b-exl2

|

LogicismTV

| 2023-12-19T11:02:15Z | 11 | 1 |

transformers

|

[

"transformers",

"safetensors",

"llama",

"text-generation",

"base_model:NyxKrage/Chronomaid-Storytelling-13b",

"base_model:finetune:NyxKrage/Chronomaid-Storytelling-13b",

"license:llama2",

"autotrain_compatible",

"text-generation-inference",

"region:us"

] |

text-generation

| 2023-12-18T06:04:30Z |

---

base_model: NyxKrage/Chronomaid-Storytelling-13b

inference: false

license: llama2

model_creator: Carsten Kragelund

model_name: Chronomaid Storytelling 13B

model_type: llama

prompt_template: 'Below is an instruction that describes a task. Write a response

that appropriately completes the request.

### Instruction:

{prompt}

### Response:

'

quantized_by: LogicismTV

---

<div style="width: auto; margin-left: auto; margin-right: auto">

<img src="https://i.imgur.com/T1kcNir.jpg" style="width: 100%; min-width: 400px; display: block; margin: auto;">

</div>

<div style="display: flex; justify-content: space-between; width: 100%;">

<div style="display: flex; flex-direction: column; align-items: flex-start;">

<p style="margin-top: 0.5em; margin-bottom: 0em;"><a href="https://logicism.tv/">Visit my Website</a></p>

</div>

<div style="display: flex; flex-direction: column; align-items: flex-end;">

<p style="margin-top: 0.5em; margin-bottom: 0em;"><a href="https://discord.gg/nStuNeZsWz">Join my Discord</a></p>

</div>

</div>

<hr style="margin-top: 1.0em; margin-bottom: 1.0em;">

# Chronomaid Storytelling 13B - ExLlama V2

Original model: [Chronomaid Storytelling 13B](https://huggingface.co/NyxKrage/Chronomaid-Storytelling-13b)

# Description

This is an EXL2 quantization of NyxKrage's Chronomaid Storytelling 13B model.

## Prompt template: Alpaca

```

Below is an instruction that describes a task. Write a response that appropriately completes the request.

### Instruction:

{prompt}

### Response:

```
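A minimal sketch of filling this template in Python. The exact whitespace (a blank line between sections and a newline after `### Response:`) is an assumption based on the standard Alpaca layout, because the extracted card does not preserve line spacing reliably:

```python
ALPACA_TEMPLATE = (
    "Below is an instruction that describes a task. "
    "Write a response that appropriately completes the request.\n\n"
    "### Instruction:\n{prompt}\n\n"
    "### Response:\n"
)


def build_alpaca_prompt(instruction: str) -> str:
    """Insert a single user instruction into the Alpaca template."""
    # str.format only parses braces in the template, not in the inserted value.
    return ALPACA_TEMPLATE.format(prompt=instruction)


print(build_alpaca_prompt("Write a short story about a clockwork maid."))
```

The model's reply is whatever it generates after the trailing `### Response:` line.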

# Quantizations

| Bits Per Weight | Size |
| --------------- | ---- |
| [main (2.4bpw)](https://huggingface.co/LogicismTV/Chronomaid-Storytelling-13b-exl2/tree/main) | 3.98 GB |
| [3bpw](https://huggingface.co/LogicismTV/Chronomaid-Storytelling-13b-exl2/tree/3bpw) | 4.86 GB |
| [3.5bpw](https://huggingface.co/LogicismTV/Chronomaid-Storytelling-13b-exl2/tree/3.5bpw) | 5.60 GB |
| [4bpw](https://huggingface.co/LogicismTV/Chronomaid-Storytelling-13b-exl2/tree/4bpw) | 6.34 GB |
| [4.5bpw](https://huggingface.co/LogicismTV/Chronomaid-Storytelling-13b-exl2/tree/4.5bpw) | 7.08 GB |
| [5bpw](https://huggingface.co/LogicismTV/Chronomaid-Storytelling-13b-exl2/tree/5bpw) | 7.82 GB |
| [6bpw](https://huggingface.co/LogicismTV/Chronomaid-Storytelling-13b-exl2/tree/6bpw) | 9.29 GB |
| [8bpw](https://huggingface.co/LogicismTV/Chronomaid-Storytelling-13b-exl2/tree/8bpw) | 12.2 GB |
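As a rough sanity check on these sizes, weight storage scales with parameters × bits per weight / 8 bytes. A back-of-the-envelope sketch, assuming about 13 billion parameters (the nominal size of a Llama 2 13B model) and reporting GiB, since the table does not say whether its units are GB or GiB:

```python
def approx_size_gb(n_params: float, bits_per_weight: float) -> float:
    """Back-of-the-envelope weight size: parameters x bits / 8 bytes, expressed in GiB."""
    return n_params * bits_per_weight / 8 / 1024**3


# Assumption: roughly 13e9 parameters for a Llama-2-13B-class model.
for bpw in (2.4, 3, 3.5, 4, 4.5, 5, 6, 8):
    print(f"{bpw} bpw -> ~{approx_size_gb(13e9, bpw):.2f} GiB")
```

The listed file sizes are somewhat larger than this estimate at every bitrate, so treat it only as a lower-bound check rather than a prediction of the exact download size.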

# Original model card: Carsten Kragelund's Chronomaid Storytelling 13B

# Chronomaid-Storytelling-13b

<img src="https://cdn-uploads.huggingface.co/production/uploads/65221315578e7da0d74f73d8/v2fVXhCcOdvOdjTrd9dY0.webp" alt="image of a vibrant and whimsical scene with an anime-style character as the focal point. The character is a young girl with blue eyes and short brown hair, wearing a black and white maid outfit with ruffled apron and a red ribbon at her collar. She is lying amidst a fantastical backdrop filled with an assortment of floating, colorful clocks, gears, and hourglasses. The space around her is filled with sparkling stars, glowing nebulae, and swirling galaxies." height="75%" width="75%" />

A merge including [Noromaid-13b-v0.1.1](https://huggingface.co/NeverSleep/Noromaid-13b-v0.1.1) and [Chronos-13b-v2](https://huggingface.co/elinas/chronos-13b-v2), with the [Storytelling-v1-Lora](https://huggingface.co/Undi95/Storytelling-v1-13B-lora) applied afterwards.

Intended primarily for RP; in my testing it also handles ERP, narrator-character setups, and group chats without much trouble.

## Prompt Format

Tested with Alpaca. The Noromaid presets will probably also work (check the Noromaid model card for SillyTavern presets).

```

Below is an instruction that describes a task. Write a response that appropriately completes the request.

### Instruction:

{prompt}

### Response:

```

## Sampler Settings

Tested at

* `temp` 1.3, `min p` 0.05 and 0.15

* `temp` 1.7, `min p` 0.08 and 0.15
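`min p` refers to min-p sampling: tokens whose probability falls below `min_p` times the probability of the most likely token are discarded before sampling. A minimal pure-Python sketch, assuming temperature is applied before the min-p filter (frontends and backends differ on this order, so the sketch does not necessarily match any particular tool):

```python
import math
import random


def min_p_sample(logits, temperature=1.3, min_p=0.05, rng=random):
    """Sample a token index using temperature scaling followed by min-p filtering."""
    # Temperature-scaled softmax (subtract the max logit for numerical stability).
    scaled = [x / temperature for x in logits]
    peak = max(scaled)
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    probs = [e / total for e in exps]

    # Min-p: keep only tokens with probability >= min_p * (top probability).
    threshold = min_p * max(probs)
    kept = [(i, p) for i, p in enumerate(probs) if p >= threshold]

    # Draw one index in proportion to the surviving (renormalized) probabilities.
    mass = sum(p for _, p in kept)
    r = rng.random() * mass
    for i, p in kept:
        r -= p
        if r <= 0:
            return i
    return kept[-1][0]  # guard against floating-point leftovers


print(min_p_sample([2.0, 1.0, -3.0], temperature=1.3, min_p=0.15))
```

Because the threshold is relative to the top token, min-p prunes aggressively when the model is confident and keeps more candidates when the distribution is flat, which is why it tolerates the high temperatures listed above.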

## Quantized Models

The model has been kindly quantized to GGUF, AWQ, and GPTQ by TheBloke.

Find them in the [Chronomaid-Storytelling-13b Collection](https://huggingface.co/collections/NyxKrage/chronomaid-storytelling-13b-656115dd7065690d7f17c7c8)

## Thanks ❤️

To [Undi](https://huggingface.co/Undi95) & [Ikari](https://huggingface.co/IkariDev) for Noromaid and [Elinas](https://huggingface.co/elinas) for Chronos

Support [Undi](https://ko-fi.com/undiai) and [Elinas](https://ko-fi.com/elinas) on Ko-fi.

|

npvinHnivqn/Mistral-7B-Instruct

|

npvinHnivqn

| 2023-12-19T10:52:30Z | 0 | 0 | null |

[

"tensorboard",

"safetensors",

"license:mit",

"region:us"

] | null | 2023-12-18T19:31:58Z |

---

license: mit

---

### Quick start

```bash

pip install accelerate peft bitsandbytes
pip install git+https://github.com/huggingface/transformers trl py7zr auto-gptq optimum

```

```python
from peft import AutoPeftModelForCausalLM
from transformers import AutoTokenizer, GenerationConfig
import torch

# Load the tokenizer and the PEFT model; the base weights are resolved from the adapter config.
tokenizer = AutoTokenizer.from_pretrained("npvinHnivqn/Mistral-7B-Instruct")
model = AutoPeftModelForCausalLM.from_pretrained(
    'npvinHnivqn/Mistral-7B-Instruct',
    low_cpu_mem_usage=True,
    return_dict=True,
    torch_dtype=torch.float16,
    device_map="cuda",
)

# top_k=1 keeps only the most likely token, so sampling is effectively greedy.
generation_config = GenerationConfig(
    do_sample=True,
    top_k=1,
    temperature=0.1,
    max_new_tokens=25,
    pad_token_id=tokenizer.eos_token_id,
)

inputs = tokenizer(
    """<|SYSTEM|> You are a very good chatbot, you can answer every question from users. <|USER|> Summarize this following dialogue: Vasanth: I'm at the railway station in Chennai Karthik: No problems so far? Vasanth: no, everything's going smoothly Karthik: good. lets meet there soon! [INPUT] <|BOT|>""",
    return_tensors="pt",
).to("cuda")

outputs = model.generate(**inputs, generation_config=generation_config)
# The decoded text contains the prompt followed by the generated summary.
print(tokenizer.decode(outputs[0], skip_special_tokens=True))
```
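The quick-start prompt wraps the system message and user turn in `<|SYSTEM|>`, `<|USER|>` and `<|BOT|>` markers. A small hypothetical helper that reproduces that layout, assuming the markers are plain text separated by single spaces exactly as in the example; the trailing `[INPUT]` token is copied verbatim because its role is not documented on the card:

```python
def build_chat_prompt(system: str, user: str) -> str:
    """Hypothetical helper mirroring the <|SYSTEM|>/<|USER|>/<|BOT|> layout of the quick start."""
    # "[INPUT]" is copied verbatim from the card's example; its purpose is undocumented.
    return f"<|SYSTEM|> {system} <|USER|> {user} [INPUT] <|BOT|>"


prompt = build_chat_prompt(
    "You are a very good chatbot, you can answer every question from users.",
    "Summarize this following dialogue: Vasanth: I'm at the railway station in Chennai",
)
print(prompt)
```

The resulting string can be passed to `tokenizer(..., return_tensors="pt")` in place of the hard-coded prompt.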

|

Kshitij2406/GPTTest

|

Kshitij2406

| 2023-12-19T10:51:27Z | 0 | 0 |

peft

|

[

"peft",

"safetensors",

"arxiv:1910.09700",

"base_model:vilsonrodrigues/falcon-7b-instruct-sharded",

"base_model:adapter:vilsonrodrigues/falcon-7b-instruct-sharded",

"region:us"

] | null | 2023-12-15T10:39:01Z |

---

library_name: peft

base_model: vilsonrodrigues/falcon-7b-instruct-sharded

---

# Model Card for Model ID

<!-- Provide a quick summary of what the model is/does. -->

## Model Details

### Model Description

<!-- Provide a longer summary of what this model is. -->

- **Developed by:** [More Information Needed]

- **Funded by [optional]:** [More Information Needed]

- **Shared by [optional]:** [More Information Needed]

- **Model type:** [More Information Needed]

- **Language(s) (NLP):** [More Information Needed]

- **License:** [More Information Needed]

- **Finetuned from model [optional]:** [More Information Needed]

### Model Sources [optional]

<!-- Provide the basic links for the model. -->

- **Repository:** [More Information Needed]

- **Paper [optional]:** [More Information Needed]

- **Demo [optional]:** [More Information Needed]

## Uses

<!-- Address questions around how the model is intended to be used, including the foreseeable users of the model and those affected by the model. -->

### Direct Use

<!-- This section is for the model use without fine-tuning or plugging into a larger ecosystem/app. -->

[More Information Needed]

### Downstream Use [optional]

<!-- This section is for the model use when fine-tuned for a task, or when plugged into a larger ecosystem/app -->

[More Information Needed]

### Out-of-Scope Use

<!-- This section addresses misuse, malicious use, and uses that the model will not work well for. -->

[More Information Needed]

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

[More Information Needed]

### Recommendations

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

Users (both direct and downstream) should be made aware of the risks, biases and limitations of the model. More information needed for further recommendations.

## How to Get Started with the Model

Use the code below to get started with the model.

[More Information Needed]
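As a hedged sketch only: the card metadata declares `library_name: peft` and `base_model: vilsonrodrigues/falcon-7b-instruct-sharded`, so the adapter would typically be attached to that base model with `PeftModel.from_pretrained`. The function name `load_adapter_model` is hypothetical, the adapter's intended task and prompt format are undocumented, and a `transformers` version with built-in Falcon support is assumed:

```python
def load_adapter_model(
    base_id: str = "vilsonrodrigues/falcon-7b-instruct-sharded",
    adapter_id: str = "Kshitij2406/GPTTest",
):
    """Hypothetical loader: load the base model, then attach the PEFT adapter weights."""
    # Imports are local so this sketch can be defined without the libraries installed.
    from peft import PeftModel
    from transformers import AutoModelForCausalLM, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(base_id)
    base_model = AutoModelForCausalLM.from_pretrained(base_id)
    model = PeftModel.from_pretrained(base_model, adapter_id)  # wraps base_model with the adapter
    return tokenizer, model
```

Calling `load_adapter_model()` downloads both repositories; generation then works through `model.generate(...)` as with any causal language model.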

## Training Details

### Training Data

<!-- This should link to a Dataset Card, perhaps with a short stub of information on what the training data is all about as well as documentation related to data pre-processing or additional filtering. -->

[More Information Needed]

### Training Procedure

<!-- This relates heavily to the Technical Specifications. Content here should link to that section when it is relevant to the training procedure. -->

#### Preprocessing [optional]

[More Information Needed]

#### Training Hyperparameters

- **Training regime:** [More Information Needed] <!--fp32, fp16 mixed precision, bf16 mixed precision, bf16 non-mixed precision, fp16 non-mixed precision, fp8 mixed precision -->

#### Speeds, Sizes, Times [optional]

<!-- This section provides information about throughput, start/end time, checkpoint size if relevant, etc. -->

[More Information Needed]

## Evaluation

<!-- This section describes the evaluation protocols and provides the results. -->

### Testing Data, Factors & Metrics

#### Testing Data

<!-- This should link to a Dataset Card if possible. -->

[More Information Needed]

#### Factors

<!-- These are the things the evaluation is disaggregating by, e.g., subpopulations or domains. -->

[More Information Needed]

#### Metrics

<!-- These are the evaluation metrics being used, ideally with a description of why. -->

[More Information Needed]

### Results

[More Information Needed]

#### Summary

## Model Examination [optional]

<!-- Relevant interpretability work for the model goes here -->

[More Information Needed]

## Environmental Impact

<!-- Total emissions (in grams of CO2eq) and additional considerations, such as electricity usage, go here. Edit the suggested text below accordingly -->

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).

- **Hardware Type:** [More Information Needed]

- **Hours used:** [More Information Needed]

- **Cloud Provider:** [More Information Needed]

- **Compute Region:** [More Information Needed]

- **Carbon Emitted:** [More Information Needed]

## Technical Specifications [optional]

### Model Architecture and Objective

[More Information Needed]

### Compute Infrastructure

[More Information Needed]

#### Hardware

[More Information Needed]

#### Software

[More Information Needed]

## Citation [optional]

<!-- If there is a paper or blog post introducing the model, the APA and Bibtex information for that should go in this section. -->

**BibTeX:**

[More Information Needed]

**APA:**

[More Information Needed]

## Glossary [optional]

<!-- If relevant, include terms and calculations in this section that can help readers understand the model or model card. -->

[More Information Needed]

## More Information [optional]

[More Information Needed]

## Model Card Authors [optional]

[More Information Needed]

## Model Card Contact

[More Information Needed]

### Framework versions

- PEFT 0.7.2.dev0

|

hkivancoral/smids_10x_deit_small_adamax_00001_fold3

|

hkivancoral

| 2023-12-19T10:51:15Z | 3 | 0 |

transformers

|

[

"transformers",

"pytorch",

"vit",

"image-classification",

"generated_from_trainer",

"dataset:imagefolder",

"base_model:facebook/deit-small-patch16-224",

"base_model:finetune:facebook/deit-small-patch16-224",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

image-classification

| 2023-12-19T09:43:00Z |

---

license: apache-2.0

base_model: facebook/deit-small-patch16-224

tags:

- generated_from_trainer

datasets:

- imagefolder

metrics:

- accuracy

model-index:

- name: smids_10x_deit_small_adamax_00001_fold3

results:

- task:

name: Image Classification

type: image-classification

dataset:

name: imagefolder

type: imagefolder

config: default

split: test

args: default

metrics:

- name: Accuracy

type: accuracy

value: 0.9183333333333333

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# smids_10x_deit_small_adamax_00001_fold3

This model is a fine-tuned version of [facebook/deit-small-patch16-224](https://huggingface.co/facebook/deit-small-patch16-224) on the imagefolder dataset.

It achieves the following results on the evaluation set:

- Loss: 0.8187

- Accuracy: 0.9183

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 1e-05

- train_batch_size: 32

- eval_batch_size: 32

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_ratio: 0.1

- num_epochs: 50
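With `lr_scheduler_type: linear` and `lr_scheduler_warmup_ratio: 0.1`, the learning rate ramps up linearly over the first 10% of steps and then decays linearly to zero. The training-results table below implies 750 steps per epoch, so 50 epochs give 37,500 steps and 3,750 warmup steps. A minimal sketch of that schedule, written to mirror the formula of `transformers.get_linear_schedule_with_warmup`; rounding the warmup steps up with `ceil` is an assumption about how the Trainer converts the ratio:

```python
import math


def linear_warmup_lr(step, base_lr=1e-5, total_steps=37_500, warmup_ratio=0.1):
    """Learning rate at a given optimizer step: linear warmup, then linear decay to zero."""
    warmup_steps = math.ceil(total_steps * warmup_ratio)  # 3,750 for these settings
    if step < warmup_steps:
        return base_lr * step / max(1, warmup_steps)
    return base_lr * max(0.0, (total_steps - step) / max(1, total_steps - warmup_steps))


for step in (0, 1_875, 3_750, 20_625, 37_500):
    print(step, linear_warmup_lr(step))
```

The peak of 1e-05 is reached at the end of epoch 5 (step 3,750), and the rate is back at zero by the final step.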

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |
|:-------------:|:-----:|:-----:|:---------------:|:--------:|
| 0.2585 | 1.0 | 750 | 0.2681 | 0.8983 |
| 0.2105 | 2.0 | 1500 | 0.2490 | 0.9167 |
| 0.0786 | 3.0 | 2250 | 0.2625 | 0.9167 |
| 0.0736 | 4.0 | 3000 | 0.2826 | 0.9133 |
| 0.0688 | 5.0 | 3750 | 0.3568 | 0.91 |
| 0.0468 | 6.0 | 4500 | 0.4349 | 0.9083 |
| 0.0289 | 7.0 | 5250 | 0.4645 | 0.9183 |
| 0.0394 | 8.0 | 6000 | 0.5300 | 0.9183 |
| 0.0012 | 9.0 | 6750 | 0.5842 | 0.92 |
| 0.0139 | 10.0 | 7500 | 0.6285 | 0.915 |
| 0.0002 | 11.0 | 8250 | 0.6464 | 0.9217 |
| 0.0001 | 12.0 | 9000 | 0.6757 | 0.9133 |
| 0.0 | 13.0 | 9750 | 0.7480 | 0.9167 |
| 0.0001 | 14.0 | 10500 | 0.7033 | 0.92 |
| 0.0 | 15.0 | 11250 | 0.7525 | 0.9133 |
| 0.0 | 16.0 | 12000 | 0.7472 | 0.915 |
| 0.0 | 17.0 | 12750 | 0.7380 | 0.92 |
| 0.0 | 18.0 | 13500 | 0.7432 | 0.9183 |
| 0.0 | 19.0 | 14250 | 0.7438 | 0.9217 |
| 0.0 | 20.0 | 15000 | 0.7615 | 0.92 |
| 0.0 | 21.0 | 15750 | 0.7581 | 0.9233 |
| 0.0 | 22.0 | 16500 | 0.7753 | 0.92 |
| 0.0 | 23.0 | 17250 | 0.7758 | 0.92 |
| 0.0 | 24.0 | 18000 | 0.7745 | 0.9217 |
| 0.0 | 25.0 | 18750 | 0.7780 | 0.9233 |
| 0.0 | 26.0 | 19500 | 0.7763 | 0.9217 |
| 0.0 | 27.0 | 20250 | 0.7839 | 0.9183 |
| 0.0 | 28.0 | 21000 | 0.7914 | 0.9183 |
| 0.0 | 29.0 | 21750 | 0.7935 | 0.92 |
| 0.0 | 30.0 | 22500 | 0.8320 | 0.9117 |
| 0.0 | 31.0 | 23250 | 0.8021 | 0.9183 |
| 0.0 | 32.0 | 24000 | 0.8041 | 0.9217 |
| 0.0 | 33.0 | 24750 | 0.8030 | 0.9167 |
| 0.0 | 34.0 | 25500 | 0.8170 | 0.9133 |
| 0.0 | 35.0 | 26250 | 0.8237 | 0.915 |
| 0.0 | 36.0 | 27000 | 0.8072 | 0.9167 |
| 0.0 | 37.0 | 27750 | 0.8249 | 0.915 |
| 0.0 | 38.0 | 28500 | 0.8116 | 0.9167 |
| 0.0 | 39.0 | 29250 | 0.8160 | 0.9217 |
| 0.0 | 40.0 | 30000 | 0.8158 | 0.92 |
| 0.0 | 41.0 | 30750 | 0.8164 | 0.92 |
| 0.0 | 42.0 | 31500 | 0.8163 | 0.92 |
| 0.0 | 43.0 | 32250 | 0.8169 | 0.92 |
| 0.0 | 44.0 | 33000 | 0.8174 | 0.92 |
| 0.0 | 45.0 | 33750 | 0.8182 | 0.92 |
| 0.0 | 46.0 | 34500 | 0.8186 | 0.9183 |
| 0.0 | 47.0 | 35250 | 0.8185 | 0.92 |
| 0.0 | 48.0 | 36000 | 0.8187 | 0.92 |
| 0.0 | 49.0 | 36750 | 0.8181 | 0.9183 |
| 0.0 | 50.0 | 37500 | 0.8187 | 0.9183 |

### Framework versions

- Transformers 4.32.1

- Pytorch 2.1.0+cu121

- Datasets 2.12.0

- Tokenizers 0.13.2

|

Dhanang/aspect_model

|

Dhanang

| 2023-12-19T10:51:07Z | 7 | 0 |

transformers

|

[

"transformers",

"tensorboard",

"safetensors",

"bert",

"text-classification",

"generated_from_trainer",

"base_model:indobenchmark/indobert-base-p2",

"base_model:finetune:indobenchmark/indobert-base-p2",

"license:mit",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2023-12-19T09:02:25Z |

---

license: mit

base_model: indobenchmark/indobert-base-p2

tags:

- generated_from_trainer

metrics:

- accuracy

model-index:

- name: aspect_model

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# aspect_model

This model is a fine-tuned version of [indobenchmark/indobert-base-p2](https://huggingface.co/indobenchmark/indobert-base-p2) on an unknown dataset.

It achieves the following results on the evaluation set:

- Loss: 1.3490

- Accuracy: 0.8084

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 20

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |
|:-------------:|:-----:|:----:|:---------------:|:--------:|
| No log | 1.0 | 72 | 0.6516 | 0.7735 |
| No log | 2.0 | 144 | 0.6119 | 0.7909 |
| No log | 3.0 | 216 | 0.6152 | 0.8049 |
| No log | 4.0 | 288 | 0.7480 | 0.8118 |
| No log | 5.0 | 360 | 1.0121 | 0.7770 |
| No log | 6.0 | 432 | 1.0780 | 0.7909 |
| 0.27 | 7.0 | 504 | 1.1602 | 0.7840 |
| 0.27 | 8.0 | 576 | 1.2136 | 0.8014 |
| 0.27 | 9.0 | 648 | 1.2490 | 0.8014 |
| 0.27 | 10.0 | 720 | 1.3102 | 0.7840 |
| 0.27 | 11.0 | 792 | 1.3184 | 0.8049 |
| 0.27 | 12.0 | 864 | 1.3255 | 0.8014 |
| 0.27 | 13.0 | 936 | 1.3192 | 0.8049 |
| 0.0022 | 14.0 | 1008 | 1.3229 | 0.7944 |
| 0.0022 | 15.0 | 1080 | 1.3415 | 0.8014 |
| 0.0022 | 16.0 | 1152 | 1.3515 | 0.7909 |
| 0.0022 | 17.0 | 1224 | 1.3544 | 0.7944 |
| 0.0022 | 18.0 | 1296 | 1.3529 | 0.7944 |
| 0.0022 | 19.0 | 1368 | 1.3484 | 0.8084 |
| 0.0022 | 20.0 | 1440 | 1.3490 | 0.8084 |
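The "No log" entries are consistent with the Trainer's default `logging_steps=500`: training loss is recorded only every 500 optimizer steps, and each evaluation row repeats the most recently logged value. The card does not state the logging interval, so 500 is an assumption. A quick check of which epoch's row first reflects each logged value, given the 72 steps per epoch shown in the table:

```python
import math


def first_epoch_showing_log(log_step: int, steps_per_epoch: int = 72) -> int:
    """1-based epoch whose end-of-epoch evaluation row first reflects a loss logged at log_step."""
    return math.ceil(log_step / steps_per_epoch)


# Assumption: training loss logged every 500 steps (the TrainingArguments default).
for log_step in (500, 1000):
    print(log_step, first_epoch_showing_log(log_step))
```

This gives epochs 7 and 14, which matches the table: 0.27 first appears at epoch 7 and 0.0022 at epoch 14.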

### Framework versions

- Transformers 4.35.2

- Pytorch 2.1.0+cu121

- Datasets 2.15.0

- Tokenizers 0.15.0

|

kghanlon/distilbert-base-uncased-finetuned-SOTUs-v1-RILE-v1

|

kghanlon

| 2023-12-19T10:50:52Z | 7 | 0 |

transformers

|

[

"transformers",

"tensorboard",

"safetensors",

"distilbert",

"text-classification",

"generated_from_trainer",

"base_model:kghanlon/distilbert-base-uncased-finetuned-SOTUs-v1",

"base_model:finetune:kghanlon/distilbert-base-uncased-finetuned-SOTUs-v1",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2023-12-19T10:05:36Z |

---

base_model: kghanlon/distilbert-base-uncased-finetuned-SOTUs-v1

tags:

- generated_from_trainer

metrics:

- accuracy

- recall

- f1

model-index:

- name: distilbert-base-uncased-finetuned-SOTUs-v1-RILE-v1

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# distilbert-base-uncased-finetuned-SOTUs-v1-RILE-v1

This model is a fine-tuned version of [kghanlon/distilbert-base-uncased-finetuned-SOTUs-v1](https://huggingface.co/kghanlon/distilbert-base-uncased-finetuned-SOTUs-v1) on an unknown dataset.

It achieves the following results on the evaluation set:

- Loss: 0.8575

- Accuracy: 0.7345

- Recall: 0.7345

- F1: 0.7343
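Accuracy and recall are identical here (0.7345) and at every epoch in the results table. That is expected if recall is averaged with class-support weights (for example scikit-learn's `average="weighted"`), although the card does not say how it was computed: weighting each class's recall by its share of examples sums to correct predictions over all examples. A small pure-Python check on made-up labels:

```python
from collections import Counter


def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true label."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def weighted_recall(y_true, y_pred):
    """Per-class recall, averaged with weights proportional to each class's support."""
    support = Counter(y_true)
    total = len(y_true)
    score = 0.0
    for cls, n_cls in support.items():
        hits = sum(t == p == cls for t, p in zip(y_true, y_pred))  # true positives for cls
        score += (n_cls / total) * (hits / n_cls)
    return score


# Hypothetical labels for a 3-class problem.
y_true = ["a", "a", "b", "c", "c", "c", "b", "a"]
y_pred = ["a", "b", "b", "c", "a", "c", "b", "a"]
print(accuracy(y_true, y_pred), weighted_recall(y_true, y_pred))
```

The `n_cls` factors cancel, so the two functions always agree; F1 differs slightly because it also depends on precision.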

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy | Recall | F1 |
|:-------------:|:-----:|:-----:|:---------------:|:--------:|:------:|:------:|
| 0.703 | 1.0 | 15490 | 0.6829 | 0.7138 | 0.7138 | 0.7109 |
| 0.5689 | 2.0 | 30980 | 0.6758 | 0.7348 | 0.7348 | 0.7344 |
| 0.4264 | 3.0 | 46470 | 0.8575 | 0.7345 | 0.7345 | 0.7343 |

### Framework versions

- Transformers 4.35.2

- Pytorch 2.1.0+cu121

- Datasets 2.15.0

- Tokenizers 0.15.0

|

satani/phtben-5

|

satani

| 2023-12-19T10:49:23Z | 8 | 0 |

diffusers

|

[

"diffusers",

"safetensors",

"text-to-image",

"stable-diffusion",

"license:creativeml-openrail-m",

"autotrain_compatible",

"endpoints_compatible",

"diffusers:StableDiffusionPipeline",

"region:us"

] |

text-to-image

| 2023-12-19T10:45:26Z |

---

license: creativeml-openrail-m

tags:

- text-to-image

- stable-diffusion

---

### phtben_5 Dreambooth model trained by satani with [TheLastBen's fast-DreamBooth](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb) notebook

Test the concept via A1111 Colab [fast-Colab-A1111](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast_stable_diffusion_AUTOMATIC1111.ipynb)

Sample pictures of this concept:

|

kalinds/Llama_1.5

|

kalinds

| 2023-12-19T10:42:58Z | 0 | 0 |

peft

|

[

"peft",

"safetensors",

"llama",

"arxiv:1910.09700",

"base_model:meta-llama/Llama-2-7b-hf",

"base_model:adapter:meta-llama/Llama-2-7b-hf",

"region:us"

] | null | 2023-12-19T10:20:34Z |

---

library_name: peft

base_model: meta-llama/Llama-2-7b-hf

---

# Model Card for Model ID

<!-- Provide a quick summary of what the model is/does. -->

## Model Details

### Model Description

<!-- Provide a longer summary of what this model is. -->

- **Developed by:** [More Information Needed]

- **Funded by [optional]:** [More Information Needed]

- **Shared by [optional]:** [More Information Needed]

- **Model type:** [More Information Needed]

- **Language(s) (NLP):** [More Information Needed]

- **License:** [More Information Needed]

- **Finetuned from model [optional]:** [More Information Needed]

### Model Sources [optional]

<!-- Provide the basic links for the model. -->

- **Repository:** [More Information Needed]

- **Paper [optional]:** [More Information Needed]

- **Demo [optional]:** [More Information Needed]

## Uses

<!-- Address questions around how the model is intended to be used, including the foreseeable users of the model and those affected by the model. -->

### Direct Use

<!-- This section is for the model use without fine-tuning or plugging into a larger ecosystem/app. -->

[More Information Needed]

### Downstream Use [optional]

<!-- This section is for the model use when fine-tuned for a task, or when plugged into a larger ecosystem/app -->

[More Information Needed]

### Out-of-Scope Use

<!-- This section addresses misuse, malicious use, and uses that the model will not work well for. -->

[More Information Needed]

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

[More Information Needed]

### Recommendations

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

Users (both direct and downstream) should be made aware of the risks, biases and limitations of the model. More information needed for further recommendations.

## How to Get Started with the Model

Use the code below to get started with the model.

[More Information Needed]
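As a hedged sketch only, using a different route from passing the base model explicitly: `AutoPeftModelForCausalLM` (the card lists PEFT 0.7.1, which provides it) reads the base-model id from the adapter's config. The metadata gives that base as `meta-llama/Llama-2-7b-hf`, a gated repository that requires accepting Meta's license on the Hub. The function name is hypothetical and the adapter's purpose is undocumented:

```python
def load_with_auto_peft(adapter_id: str = "kalinds/Llama_1.5"):
    """Hypothetical loader: let PEFT resolve the base model from the adapter config."""
    # Local imports keep this sketch definable without peft/transformers installed.
    from peft import AutoPeftModelForCausalLM, PeftConfig
    from transformers import AutoTokenizer

    config = PeftConfig.from_pretrained(adapter_id)
    # base_model_name_or_path should point at meta-llama/Llama-2-7b-hf per the card metadata.
    tokenizer = AutoTokenizer.from_pretrained(config.base_model_name_or_path)
    model = AutoPeftModelForCausalLM.from_pretrained(adapter_id)
    return tokenizer, model
```

Using the tokenizer of the base model is a conservative choice, since the card does not say whether the adapter repository ships its own tokenizer files.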

## Training Details

### Training Data

<!-- This should link to a Dataset Card, perhaps with a short stub of information on what the training data is all about as well as documentation related to data pre-processing or additional filtering. -->

[More Information Needed]

### Training Procedure

<!-- This relates heavily to the Technical Specifications. Content here should link to that section when it is relevant to the training procedure. -->

#### Preprocessing [optional]

[More Information Needed]

#### Training Hyperparameters

- **Training regime:** [More Information Needed] <!--fp32, fp16 mixed precision, bf16 mixed precision, bf16 non-mixed precision, fp16 non-mixed precision, fp8 mixed precision -->

#### Speeds, Sizes, Times [optional]

<!-- This section provides information about throughput, start/end time, checkpoint size if relevant, etc. -->

[More Information Needed]

## Evaluation

<!-- This section describes the evaluation protocols and provides the results. -->

### Testing Data, Factors & Metrics

#### Testing Data

<!-- This should link to a Dataset Card if possible. -->

[More Information Needed]

#### Factors

<!-- These are the things the evaluation is disaggregating by, e.g., subpopulations or domains. -->

[More Information Needed]

#### Metrics

<!-- These are the evaluation metrics being used, ideally with a description of why. -->

[More Information Needed]

### Results

[More Information Needed]

#### Summary

## Model Examination [optional]

<!-- Relevant interpretability work for the model goes here -->

[More Information Needed]

## Environmental Impact

<!-- Total emissions (in grams of CO2eq) and additional considerations, such as electricity usage, go here. Edit the suggested text below accordingly -->

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).

- **Hardware Type:** [More Information Needed]

- **Hours used:** [More Information Needed]

- **Cloud Provider:** [More Information Needed]

- **Compute Region:** [More Information Needed]

- **Carbon Emitted:** [More Information Needed]
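Roughly, the calculator's estimate is the energy used multiplied by the carbon intensity of the grid. The sketch below shows that arithmetic under a few assumptions: average power draw is given in kW, and there is an optional PUE (data-centre overhead) factor. The function name and all numbers are hypothetical, and the calculator itself may take further factors into account.

```python
def estimate_emissions_g(power_kw: float, hours: float,
                         intensity_kg_per_kwh: float, pue: float = 1.0) -> float:
    """Estimate training emissions in grams of CO2eq.

    energy (kWh) = average power draw (kW) * hours * PUE
    emissions    = energy * grid carbon intensity (kg CO2eq/kWh), converted to grams
    """
    energy_kwh = power_kw * hours * pue
    return energy_kwh * intensity_kg_per_kwh * 1000.0

# Hypothetical numbers, not this model's: a 0.3 kW GPU for 10 hours on a 0.4 kg CO2eq/kWh grid.
print(f"{estimate_emissions_g(0.3, 10, 0.4):.0f} g CO2eq")
```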

## Technical Specifications [optional]

### Model Architecture and Objective

[More Information Needed]

### Compute Infrastructure

[More Information Needed]

#### Hardware

[More Information Needed]

#### Software

[More Information Needed]

## Citation [optional]

<!-- If there is a paper or blog post introducing the model, the APA and Bibtex information for that should go in this section. -->

**BibTeX:**

[More Information Needed]

**APA:**

[More Information Needed]

## Glossary [optional]

<!-- If relevant, include terms and calculations in this section that can help readers understand the model or model card. -->

[More Information Needed]

## More Information [optional]

[More Information Needed]

## Model Card Authors [optional]

[More Information Needed]

## Model Card Contact

[More Information Needed]

### Framework versions

- PEFT 0.7.1

|

ntc-ai/SDXL-LoRA-slider.mischievious-grin

|

ntc-ai

| 2023-12-19T10:35:52Z | 101 | 2 |

diffusers

|

[

"diffusers",

"text-to-image",

"stable-diffusion-xl",

"lora",

"template:sd-lora",

"template:sdxl-lora",

"sdxl-sliders",

"ntcai.xyz-sliders",

"concept",

"en",

"base_model:stabilityai/stable-diffusion-xl-base-1.0",

"base_model:adapter:stabilityai/stable-diffusion-xl-base-1.0",

"license:mit",

"region:us"

] |

text-to-image

| 2023-12-19T10:35:49Z |

---

language:

- en

thumbnail: "images/evaluate/mischievious grin.../mischievious grin_17_3.0.png"

widget:

- text: mischievious grin

output:

url: images/mischievious grin_17_3.0.png

- text: mischievious grin

output:

url: images/mischievious grin_19_3.0.png

- text: mischievious grin

output:

url: images/mischievious grin_20_3.0.png

- text: mischievious grin

output:

url: images/mischievious grin_21_3.0.png

- text: mischievious grin

output:

url: images/mischievious grin_22_3.0.png

tags:

- text-to-image

- stable-diffusion-xl

- lora

- template:sd-lora

- template:sdxl-lora

- sdxl-sliders

- ntcai.xyz-sliders

- concept

- diffusers

license: "mit"

inference: false

instance_prompt: "mischievious grin"

base_model: "stabilityai/stable-diffusion-xl-base-1.0"

---

# ntcai.xyz slider - mischievious grin (SDXL LoRA)

| Strength: -3 | Strength: 0 | Strength: 3 |
| --- | --- | --- |
| <img src="images/mischievious grin_17_-3.0.png" width=256 height=256 /> | <img src="images/mischievious grin_17_0.0.png" width=256 height=256 /> | <img src="images/mischievious grin_17_3.0.png" width=256 height=256 /> |
| <img src="images/mischievious grin_19_-3.0.png" width=256 height=256 /> | <img src="images/mischievious grin_19_0.0.png" width=256 height=256 /> | <img src="images/mischievious grin_19_3.0.png" width=256 height=256 /> |
| <img src="images/mischievious grin_20_-3.0.png" width=256 height=256 /> | <img src="images/mischievious grin_20_0.0.png" width=256 height=256 /> | <img src="images/mischievious grin_20_3.0.png" width=256 height=256 /> |

## Download

Weights for this model are available in Safetensors format.

## Trigger words

You can apply this LoRA with trigger words for additional effect:

```

mischievious grin

```

## Use in diffusers

```python

from diffusers import StableDiffusionXLPipeline

from diffusers import EulerAncestralDiscreteScheduler

import torch

pipe = StableDiffusionXLPipeline.from_single_file("https://huggingface.co/martyn/sdxl-turbo-mario-merge-top-rated/blob/main/topRatedTurboxlLCM_v10.safetensors")

pipe.to("cuda")

pipe.scheduler = EulerAncestralDiscreteScheduler.from_config(pipe.scheduler.config)

# Load the LoRA

pipe.load_lora_weights('ntc-ai/SDXL-LoRA-slider.mischievious-grin', weight_name='mischievious grin.safetensors', adapter_name="mischievious grin")

# Activate the LoRA

pipe.set_adapters(["mischievious grin"], adapter_weights=[2.0])

prompt = "medieval rich kingpin sitting in a tavern, mischievious grin"

negative_prompt = "nsfw"

width = 512

height = 512

num_inference_steps = 10

guidance_scale = 2

image = pipe(prompt, negative_prompt=negative_prompt, width=width, height=height, guidance_scale=guidance_scale, num_inference_steps=num_inference_steps).images[0]

image.save('result.png')

```
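Slider LoRAs like this one are applied with a strength multiplier. Both the Strength column in the table and `adapter_weights=[2.0]` in the snippet presumably scale the LoRA's weight delta, so negative values push the output away from the concept. The pure-Python toy below sketches the standard LoRA update `W + strength * (up @ down)` on a 2x2 matrix. It leaves out the alpha/rank factor and only illustrates the idea. It is not diffusers' internal implementation.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def apply_lora(weight, lora_down, lora_up, strength):
    """Return W + strength * (up @ down): the LoRA delta scaled by a strength multiplier."""
    delta = matmul(lora_up, lora_down)
    return [[w + strength * d for w, d in zip(w_row, d_row)]
            for w_row, d_row in zip(weight, delta)]

# Toy 2x2 base weight with a rank-1 LoRA (hypothetical numbers)
W = [[1.0, 0.0], [0.0, 1.0]]
down = [[1.0, 2.0]]    # rank x in_features
up = [[0.5], [0.25]]   # out_features x rank

for strength in (-3.0, 0.0, 3.0):
    print(strength, apply_lora(W, down, up, strength))
```

Strength 0 gives back the base weight unchanged. Flipping the sign applies the same learned direction in reverse.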

## Support the Patreon

If you like this model, please consider [joining our Patreon](https://www.patreon.com/NTCAI).

By joining our Patreon, you'll gain access to an ever-growing library of over 470 unique and diverse LoRAs, covering a wide range of styles and genres. You'll also receive early access to new models and updates, exclusive behind-the-scenes content, and the powerful LoRA slider creator, allowing you to craft your own custom LoRAs and experiment with endless possibilities.

Your support on Patreon will allow us to continue developing and refining new models.

## Other resources

- [CivitAI](https://civitai.com/user/ntc) - Follow ntc on Civit for even more LoRAs

- [ntcai.xyz](https://ntcai.xyz) - See ntcai.xyz to find more articles and LoRAs

|

tresbien1/ppo-Huggy

|

tresbien1

| 2023-12-19T10:29:10Z | 5 | 0 |

ml-agents

|

[

"ml-agents",

"tensorboard",

"onnx",

"Huggy",

"deep-reinforcement-learning",

"reinforcement-learning",

"ML-Agents-Huggy",

"region:us"

] |

reinforcement-learning

| 2023-12-19T10:29:00Z |

---

library_name: ml-agents

tags:

- Huggy

- deep-reinforcement-learning

- reinforcement-learning

- ML-Agents-Huggy

---

# **ppo** Agent playing **Huggy**

This is a trained model of a **ppo** agent playing **Huggy** using the [Unity ML-Agents Library](https://github.com/Unity-Technologies/ml-agents).

## Usage (with ML-Agents)

Documentation: https://unity-technologies.github.io/ml-agents/ML-Agents-Toolkit-Documentation/

We wrote a complete tutorial on how to train your first agent with ML-Agents and publish it to the Hub:

- A *short tutorial* where you teach Huggy the Dog 🐶 to fetch the stick and then play with him directly in your browser: https://huggingface.co/learn/deep-rl-course/unitbonus1/introduction
- A *longer tutorial* to understand how ML-Agents works: https://huggingface.co/learn/deep-rl-course/unit5/introduction

### Resume the training

```bash

mlagents-learn <your_configuration_file_path.yaml> --run-id=<run_id> --resume

```

### Watch your Agent play

You can watch your agent **playing directly in your browser**:

1. If the environment is one of the official ML-Agents environments, go to https://huggingface.co/unity
2. Find your model_id: tresbien1/ppo-Huggy
3. Select your `*.nn` / `*.onnx` file
4. Click on **Watch the agent play** 👀

|

bclavie/fio-base-japanese-v0.1

|

bclavie

| 2023-12-19T10:28:16Z | 36 | 6 |

sentence-transformers

|

[

"sentence-transformers",

"safetensors",

"bert",

"feature-extraction",

"sentence-similarity",

"transformers",

"ja",

"dataset:shunk031/JGLUE",

"dataset:shunk031/jsnli",

"dataset:hpprc/jsick",

"dataset:miracl/miracl",

"dataset:castorini/mr-tydi",

"dataset:unicamp-dl/mmarco",

"autotrain_compatible",

"region:us"

] |

sentence-similarity

| 2023-12-18T11:01:07Z |

---

language:

- ja

pipeline_tag: sentence-similarity

tags:

- sentence-transformers

- feature-extraction

- sentence-similarity

- transformers

inference: false

datasets:

- shunk031/JGLUE

- shunk031/jsnli

- hpprc/jsick

- miracl/miracl

- castorini/mr-tydi

- unicamp-dl/mmarco

library_name: sentence-transformers

---

# fio-base-japanese-v0.1

A Japanese version is coming soon (I'm still learning Japanese, so please forgive any mistakes!)

fio-base-japanese-v0.1 is a proof of concept, and the first release of the Fio family of Japanese embeddings. It is based on [cl-tohoku/bert-base-japanese-v3](https://huggingface.co/cl-tohoku/bert-base-japanese-v3) and trained on limited volumes of data on a single GPU.

For more information, please refer to [my notes on Fio](https://ben.clavie.eu/fio).

#### Datasets

Similarity/Entailment:

- JSTS (train)

- JSNLI (train)

- JNLI (train)

- JSICK (train)

Retrieval:

- MMARCO (Multilingual Marco) (train, 124k sentence pairs, <1% of the full data)

- Mr.TyDI (train)

- MIRACL (train, 50% sample)

- ~~JSQuAD (train, 50% sample, no LLM enhancement)~~ JSQuAD is not used in the released version, to serve as an unseen test set.

#### Results

> ⚠️ WARNING: fio-base-japanese-v0.1 has seen textual entailment tasks during its training, which is _not_ the case for the other Japanese-only models in this table. This gives Fio an unfair advantage over the previous best results, `cl-nagoya/sup-simcse-ja-[base|large]`. In mid-training evaluations this didn't seem to greatly affect performance; however, JSICK (an NLI set) was included in the training data, so it is currently impossible to fully remove this contamination. I intend to fix this in a future release, but please keep it in mind when reading the results (see the JSQuAD results in the associated blog post for a fully unseen comparison, although one focused on retrieval).

This table is adapted and truncated (keeping only the most popular models) from [oshizo's benchmarking GitHub repo](https://github.com/oshizo/JapaneseEmbeddingEval). Please check it out for more information and give it a star, as it was very useful!

Italics mark the best model in its size class (base/large, i.e. 768/1024 dimensions) when a smaller model outperforms a bigger one; bold marks the best overall.

| Model | JSTS valid-v1.1 | JSICK test | MIRACL dev | Average |
|-------------------------------------------------|-----------------|------------|------------|---------|
| bclavie/fio-base-japanese-v0.1 | **_0.863_** | **_0.894_** | 0.718 | _0.825_ |
| cl-nagoya/sup-simcse-ja-base | 0.809 | 0.827 | 0.527 | 0.721 |
| cl-nagoya/sup-simcse-ja-large | _0.831_ | _0.831_ | 0.507 | 0.723 |
| colorfulscoop/sbert-base-ja | 0.742 | 0.657 | 0.254 | 0.551 |
| intfloat/multilingual-e5-base | 0.796 | 0.806 | __0.845__ | 0.816 |
| intfloat/multilingual-e5-large | 0.819 | 0.794 | **0.883** | **_0.832_** |
| pkshatech/GLuCoSE-base-ja | 0.818 | 0.757 | 0.692 | 0.755 |
| text-embedding-ada-002 | 0.790 | 0.789 | 0.7232 | 0.768 |
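The Average column appears to be the unweighted mean of the three benchmark scores. Here is a quick check on a few rows, with scores copied from the table. A couple of other rows, such as GLuCoSE and ada-002, differ from the reported average by 0.001, presumably because of rounding in the underlying scores.

```python
# Scores copied from the table: (JSTS valid-v1.1, JSICK test, MIRACL dev)
scores = {
    "bclavie/fio-base-japanese-v0.1": (0.863, 0.894, 0.718),
    "intfloat/multilingual-e5-base": (0.796, 0.806, 0.845),
    "intfloat/multilingual-e5-large": (0.819, 0.794, 0.883),
}

# Unweighted mean over the three benchmarks, rounded like the table
averages = {name: round(sum(s) / len(s), 3) for name, s in scores.items()}
for name, avg in averages.items():
    print(f"{name}: {avg}")
```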

## Usage

This model requires both `fugashi` and `unidic-lite`:

```

pip install -U fugashi unidic-lite

```

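If you use the model for a retrieval task, you must prefix your query with `"関連記事を取得するために使用できるこの文の表現を生成します: "` (roughly: "Generate a representation of this sentence that can be used to retrieve related articles: ").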
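Only queries take the instruction prefix, so it can help to add it in a single place before encoding. This is a minimal sketch. `prepare_queries` is a hypothetical helper, and leaving documents unprefixed is an assumption based on the card mentioning queries only.

```python
RETRIEVAL_PREFIX = "関連記事を取得するために使用できるこの文の表現を生成します: "

def prepare_queries(queries):
    """Prepend the retrieval instruction to each query; documents are encoded as-is."""
    return [RETRIEVAL_PREFIX + q for q in queries]

queries = prepare_queries(["東京の天気は?"])
print(queries[0])
```

You would then pass the result of `prepare_queries(...)` to `model.encode` and encode the documents without a prefix.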

### Usage (Sentence-Transformers)

This model is best used through [sentence-transformers](https://www.SBERT.net). If you don't have it, it's easy to install:

```

pip install -U sentence-transformers

```

Then you can use the model like this:

```python

from sentence_transformers import SentenceTransformer

sentences = ["こんにちは、世界!", "文埋め込み最高!文埋め込み最高と叫びなさい", "極度乾燥しなさい"]

model = SentenceTransformer('bclavie/fio-base-japanese-v0.1')

embeddings = model.encode(sentences)

print(embeddings)

```
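Sentence-similarity scores are usually computed as the cosine similarity between embeddings. Below is a dependency-free sketch of that computation. In practice, `sentence_transformers.util.cos_sim(embeddings, embeddings)` computes the full similarity matrix directly from the output of `model.encode`.

```python
import math

def cosine_similarity(u, v):
    """Cosine similarity between two equal-length vectors: dot(u, v) / (|u| * |v|)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

# With the embeddings above: cosine_similarity(embeddings[0], embeddings[1])
print(cosine_similarity([1.0, 0.0], [1.0, 1.0]))
```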

### Usage (HuggingFace Transformers)

Without [sentence-transformers](https://www.SBERT.net), you can use the model as follows: pass your input through the transformer model, then apply the appropriate pooling operation on top of the contextualized token embeddings. This model uses CLS pooling, i.e. the embedding of the first token.

```python
from transformers import AutoTokenizer, AutoModel
import torch

def cls_pooling(model_output, attention_mask):
    # CLS pooling: take the last hidden state of the first ([CLS]) token of each sequence.
    # attention_mask is not needed for CLS pooling; it is kept for a uniform pooling signature.
    return model_output[0][:, 0]

# Sentences we want sentence embeddings for
sentences = ['This is an example sentence', 'Each sentence is converted']

# Load model from HuggingFace Hub
tokenizer = AutoTokenizer.from_pretrained('bclavie/fio-base-japanese-v0.1')
model = AutoModel.from_pretrained('bclavie/fio-base-japanese-v0.1')

# Tokenize sentences
encoded_input = tokenizer(sentences, padding=True, truncation=True, return_tensors='pt')

# Compute token embeddings
with torch.no_grad():
    model_output = model(**encoded_input)

# Perform pooling. In this case, CLS pooling.
sentence_embeddings = cls_pooling(model_output, encoded_input['attention_mask'])

print("Sentence embeddings:")
print(sentence_embeddings)
```

## Citing & Authors

```bibtex
@misc{bclavie-fio-embeddings,
  author = {Benjamin Clavié},
  title = {Fio Japanese Embeddings},
  year = {2023},
  howpublished = {\url{https://ben.clavie.eu/fio}}
}
```

|

Federm1512/ppo-Huggy

|

Federm1512

| 2023-12-19T10:25:01Z | 1 | 0 |

ml-agents

|

[

"ml-agents",

"tensorboard",

"onnx",

"Huggy",

"deep-reinforcement-learning",

"reinforcement-learning",

"ML-Agents-Huggy",

"region:us"

] |

reinforcement-learning

| 2023-12-19T09:54:11Z |

---

library_name: ml-agents

tags:

- Huggy

- deep-reinforcement-learning

- reinforcement-learning

- ML-Agents-Huggy

---

# **ppo** Agent playing **Huggy**

This is a trained model of a **ppo** agent playing **Huggy** using the [Unity ML-Agents Library](https://github.com/Unity-Technologies/ml-agents).

## Usage (with ML-Agents)

Documentation: https://unity-technologies.github.io/ml-agents/ML-Agents-Toolkit-Documentation/

We wrote a complete tutorial on how to train your first agent with ML-Agents and publish it to the Hub:

- A *short tutorial* where you teach Huggy the Dog 🐶 to fetch the stick and then play with him directly in your browser: https://huggingface.co/learn/deep-rl-course/unitbonus1/introduction
- A *longer tutorial* to understand how ML-Agents works: https://huggingface.co/learn/deep-rl-course/unit5/introduction

### Resume the training

```bash

mlagents-learn <your_configuration_file_path.yaml> --run-id=<run_id> --resume

```

### Watch your Agent play

You can watch your agent **playing directly in your browser**:

1. If the environment is one of the official ML-Agents environments, go to https://huggingface.co/unity
2. Find your model_id: Federm1512/ppo-Huggy
3. Select your `*.nn` / `*.onnx` file
4. Click on **Watch the agent play** 👀

|

XingeTong/9-testresults

|

XingeTong

| 2023-12-19T10:19:31Z | 4 | 0 |

transformers

|

[

"transformers",

"pytorch",

"roberta",

"text-classification",

"generated_from_trainer",

"base_model:cardiffnlp/twitter-roberta-base-sentiment-latest",

"base_model:finetune:cardiffnlp/twitter-roberta-base-sentiment-latest",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2023-12-19T10:17:13Z |

---

base_model: cardiffnlp/twitter-roberta-base-sentiment-latest

tags:

- generated_from_trainer

model-index:

- name: 9-testresults

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# 9-testresults

This model is a fine-tuned version of [cardiffnlp/twitter-roberta-base-sentiment-latest](https://huggingface.co/cardiffnlp/twitter-roberta-base-sentiment-latest) on an unknown dataset.

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 9.359061927977144e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 2
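The card sets `lr_scheduler_type: linear` and lists no warmup, so the learning rate presumably decays linearly from 9.359e-05 to zero over the course of training. The sketch below writes out that schedule following the same formula as `transformers.get_linear_schedule_with_warmup`. The total of 1000 steps is a hypothetical placeholder, since the card does not report the step count.

```python
def linear_lr(step: int, total_steps: int, base_lr: float, warmup_steps: int = 0) -> float:
    """Learning rate at `step`: linear warmup to base_lr, then linear decay to 0."""
    if step < warmup_steps:
        return base_lr * (step / max(1, warmup_steps))
    remaining = max(0, total_steps - step)
    return base_lr * (remaining / max(1, total_steps - warmup_steps))

BASE_LR = 9.359061927977144e-05  # learning_rate from the card
TOTAL_STEPS = 1000  # hypothetical: the card does not report the number of steps

for step in (0, 250, 500, 1000):
    print(step, linear_lr(step, TOTAL_STEPS, BASE_LR))
```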

### Training results

### Framework versions

- Transformers 4.34.0

- Pytorch 2.1.0+cu118

- Datasets 2.14.5

- Tokenizers 0.14.1

|

VictorNGomes/pttmario5

|

VictorNGomes

| 2023-12-19T10:15:08Z | 6 | 1 |

transformers

|

[

"transformers",

"tensorboard",

"safetensors",

"t5",

"text2text-generation",

"generated_from_trainer",

"dataset:xlsum",

"base_model:VictorNGomes/pttmario5",

"base_model:finetune:VictorNGomes/pttmario5",

"license:mit",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text2text-generation

| 2023-12-17T01:40:38Z |

---

license: mit

base_model: VictorNGomes/pttmario5

tags:

- generated_from_trainer

datasets:

- xlsum

model-index:

- name: pttmario5

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# pttmario5

This model is a fine-tuned version of [VictorNGomes/pttmario5](https://huggingface.co/VictorNGomes/pttmario5) on the xlsum dataset.

It achieves the following results on the evaluation set:

- Loss: 2.2144

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 24

- eval_batch_size: 24

- seed: 42

- gradient_accumulation_steps: 16

- total_train_batch_size: 384

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_steps: 500

- num_epochs: 8

- mixed_precision_training: Native AMP
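`total_train_batch_size` equals the per-device batch size times the gradient accumulation steps times the number of devices. Below is a quick check of the arithmetic, assuming a single device. The steps-per-epoch figure comes from the rounded epoch value logged at step 500 in the training results, so treat it as approximate.

```python
per_device_train_batch_size = 24
gradient_accumulation_steps = 16
num_devices = 1  # assumption: 24 * 16 already equals the reported 384

total_train_batch_size = per_device_train_batch_size * gradient_accumulation_steps * num_devices
print(total_train_batch_size)  # 384

# Step 500 was logged at epoch 3.34 (rounded), i.e. roughly 150 optimizer steps per epoch
steps_per_epoch = 500 / 3.34
approx_examples_per_epoch = steps_per_epoch * total_train_batch_size
print(round(steps_per_epoch), round(approx_examples_per_epoch))
```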

### Training results

| Training Loss | Epoch | Step | Validation Loss |
|:-------------:|:-----:|:----:|:---------------:|
| 2.5131 | 3.34 | 500 | 2.2600 |
| 2.4594 | 6.69 | 1000 | 2.2144 |

### Framework versions

- Transformers 4.36.2

- Pytorch 2.1.2+cu121

- Datasets 2.15.0

- Tokenizers 0.15.0

|

baichuan-inc/Baichuan2-7B-Intermediate-Checkpoints

|

baichuan-inc

| 2023-12-19T10:03:18Z | 16 | 18 | null |

[

"en",

"zh",

"license:other",

"region:us"

] | null | 2023-09-05T09:35:23Z |

---

language:

- en

- zh

license: other

tasks:

- text-generation

---

<!-- markdownlint-disable first-line-h1 -->

<!-- markdownlint-disable html -->

<div align="center">

<h1>

Baichuan 2

</h1>

</div>

<div align="center">

<a href="https://github.com/baichuan-inc/Baichuan2" target="_blank">🦉GitHub</a> | <a href="https://github.com/baichuan-inc/Baichuan-7B/blob/main/media/wechat.jpeg?raw=true" target="_blank">💬WeChat</a>

</div>

<div align="center">

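The Baichuan API supports search enhancement and a 192K long-context window, and adds a new Baichuan search-enhanced knowledge base, free for a limited time!<br>

🚀 The <a href="https://www.baichuan-ai.com/" target="_blank">Baichuan LLM online chat platform</a> is now officially open to the public 🎉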

</div>

# 目录/Table of Contents

- [📖 模型介绍/Introduction](#Introduction)

- [⚙️ 快速开始/Quick Start](#Start)

- [📊 Benchmark评估/Benchmark Evaluation](#Benchmark)

- [📜 声明与协议/Terms and Conditions](#Terms)

# <span id="Introduction">模型介绍/Introduction</span>

Baichuan 2 是[百川智能]推出的新一代开源大语言模型,采用 **2.6 万亿** Tokens 的高质量语料训练,在权威的中文和英文 benchmark

上均取得同尺寸最好的效果。本次发布包含有 7B、13B 的 Base 和 Chat 版本,并提供了 Chat 版本的 4bits

量化,所有版本不仅对学术研究完全开放,开发者也仅需[邮件申请]并获得官方商用许可后,即可以免费商用。具体发布版本和下载见下表:

Baichuan 2 is the new generation of large-scale open-source language models launched by [Baichuan Intelligence inc.](https://www.baichuan-ai.com/).

It is trained on a high-quality corpus with 2.6 trillion tokens and has achieved the best performance in authoritative Chinese and English benchmarks of the same size.

This release includes 7B and 13B versions of both the Base and Chat models, along with 4-bit quantized versions of the Chat models.

All versions are fully open to academic research, and developers can also use them for free in commercial applications after obtaining an official commercial license through [email request](mailto:opensource@baichuan-inc.com).

The specific release versions and download links are listed in the table below:

| | Base Model | Chat Model | 4-bit Quantized Chat Model |
|:---:|:--------------------:|:--------------------:|:--------------------------:|
| 7B | [Baichuan2-7B-Base](https://huggingface.co/baichuan-inc/Baichuan2-7B-Base) | [Baichuan2-7B-Chat](https://huggingface.co/baichuan-inc/Baichuan2-7B-Chat) | [Baichuan2-7B-Chat-4bits](https://huggingface.co/baichuan-inc/Baichuan2-7B-Chat-4bits) |
| 13B | [Baichuan2-13B-Base](https://huggingface.co/baichuan-inc/Baichuan2-13B-Base) | [Baichuan2-13B-Chat](https://huggingface.co/baichuan-inc/Baichuan2-13B-Chat) | [Baichuan2-13B-Chat-4bits](https://huggingface.co/baichuan-inc/Baichuan2-13B-Chat-4bits) |

# <span id="Start">快速开始/Quick Start</span>

在Baichuan2系列模型中,我们为了加快推理速度使用了Pytorch2.0加入的新功能F.scaled_dot_product_attention,因此模型需要在Pytorch2.0环境下运行。

To speed up inference, the Baichuan 2 series uses `F.scaled_dot_product_attention`, a feature introduced in PyTorch 2.0. The models must therefore be run with PyTorch 2.0 or later.

**我们将训练中的Checkpoints上传到了本项目中,可以通过指定revision来加载不同step的Checkpoint。**

**We have uploaded the checkpoints during training to this project. You can load checkpoints from different steps by specifying the revision.**

```python

import torch

from transformers import AutoModelForCausalLM, AutoTokenizer

tokenizer = AutoTokenizer.from_pretrained("baichuan-inc/Baichuan2-7B-Intermediate-Checkpoints", revision="train_02200B", use_fast=False, trust_remote_code=True)

model = AutoModelForCausalLM.from_pretrained("baichuan-inc/Baichuan2-7B-Intermediate-Checkpoints", revision="train_02200B", device_map="auto", torch_dtype=torch.bfloat16, trust_remote_code=True)

inputs = tokenizer('登鹳雀楼->王之涣\n夜雨寄北->', return_tensors='pt')

inputs = inputs.to('cuda:0')

pred = model.generate(**inputs, max_new_tokens=64, repetition_penalty=1.1)

print(tokenizer.decode(pred.cpu()[0], skip_special_tokens=True))

```
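The revision name `train_02200B` appears to encode the number of training tokens in billions: 2,200B, or about 2.2 trillion. The sketch below parses such a name, assuming the other checkpoints follow the same `train_<digits>B` pattern. `tokens_from_revision` is a hypothetical helper and is not part of `transformers`.

```python
import re

def tokens_from_revision(revision: str) -> float:
    """Convert a revision name such as 'train_02200B' into trillions of training tokens.

    Assumes the 'train_<billions>B' naming pattern seen in the example above.
    """
    match = re.fullmatch(r"train_(\d+)B", revision)
    if match is None:
        raise ValueError(f"unexpected revision name: {revision!r}")
    billions = int(match.group(1))
    return billions / 1000  # billions -> trillions

print(tokens_from_revision("train_02200B"))  # 2.2
```

To see which revisions actually exist, call `huggingface_hub.list_repo_refs("baichuan-inc/Baichuan2-7B-Intermediate-Checkpoints")`, which lists the repository's branches.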

# <span id="Benchmark">Benchmark 结果/Benchmark Evaluation</span>

我们在[通用]、[法律]、[医疗]、[数学]、[代码]和[多语言翻译]六个领域的中英文权威数据集上对模型进行了广泛测试,更多详细测评结果可查看[GitHub]。

We have extensively tested the model on authoritative Chinese-English datasets across six domains: [General](https://github.com/baichuan-inc/Baichuan2/blob/main/README_EN.md#general-domain), [Legal](https://github.com/baichuan-inc/Baichuan2/blob/main/README_EN.md#law-and-medicine), [Medical](https://github.com/baichuan-inc/Baichuan2/blob/main/README_EN.md#law-and-medicine), [Mathematics](https://github.com/baichuan-inc/Baichuan2/blob/main/README_EN.md#mathematics-and-code), [Code](https://github.com/baichuan-inc/Baichuan2/blob/main/README_EN.md#mathematics-and-code), and [Multilingual Translation](https://github.com/baichuan-inc/Baichuan2/blob/main/README_EN.md#multilingual-translation). For more detailed evaluation results, please refer to [GitHub](https://github.com/baichuan-inc/Baichuan2/blob/main/README_EN.md).

### 7B Model Results

| | **C-Eval** | **MMLU** | **CMMLU** | **Gaokao** | **AGIEval** | **BBH** |
|:-----------------------:|:----------:|:--------:|:---------:|:----------:|:-----------:|:-------:|
| | 5-shot | 5-shot | 5-shot | 5-shot | 5-shot | 3-shot |
| **GPT-4** | 68.40 | 83.93 | 70.33 | 66.15 | 63.27 | 75.12 |
| **GPT-3.5 Turbo** | 51.10 | 68.54 | 54.06 | 47.07 | 46.13 | 61.59 |
| **LLaMA-7B** | 27.10 | 35.10 | 26.75 | 27.81 | 28.17 | 32.38 |
| **LLaMA2-7B** | 28.90 | 45.73 | 31.38 | 25.97 | 26.53 | 39.16 |
| **MPT-7B** | 27.15 | 27.93 | 26.00 | 26.54 | 24.83 | 35.20 |
| **Falcon-7B** | 24.23 | 26.03 | 25.66 | 24.24 | 24.10 | 28.77 |
| **ChatGLM2-6B** | 50.20 | 45.90 | 49.00 | 49.44 | 45.28 | 31.65 |
| **[Baichuan-7B]** | 42.80 | 42.30 | 44.02 | 36.34 | 34.44 | 32.48 |
| **[Baichuan2-7B-Base]** | 54.00 | 54.16 | 57.07 | 47.47 | 42.73 | 41.56 |

### 13B Model Results

| | **C-Eval** | **MMLU** | **CMMLU** | **Gaokao** | **AGIEval** | **BBH** |
|:---------------------------:|:----------:|:--------:|:---------:|:----------:|:-----------:|:-------:|
| | 5-shot | 5-shot | 5-shot | 5-shot | 5-shot | 3-shot |
| **GPT-4** | 68.40 | 83.93 | 70.33 | 66.15 | 63.27 | 75.12 |
| **GPT-3.5 Turbo** | 51.10 | 68.54 | 54.06 | 47.07 | 46.13 | 61.59 |
| **LLaMA-13B** | 28.50 | 46.30 | 31.15 | 28.23 | 28.22 | 37.89 |
| **LLaMA2-13B** | 35.80 | 55.09 | 37.99 | 30.83 | 32.29 | 46.98 |
| **Vicuna-13B** | 32.80 | 52.00 | 36.28 | 30.11 | 31.55 | 43.04 |
| **Chinese-Alpaca-Plus-13B** | 38.80 | 43.90 | 33.43 | 34.78 | 35.46 | 28.94 |
| **XVERSE-13B** | 53.70 | 55.21 | 58.44 | 44.69 | 42.54 | 38.06 |
| **[Baichuan-13B-Base]** | 52.40 | 51.60 | 55.30 | 49.69 | 43.20 | 43.01 |
| **[Baichuan2-13B-Base]** | 58.10 | 59.17 | 61.97 | 54.33 | 48.17 | 48.78 |

## 训练过程模型/Training Dynamics

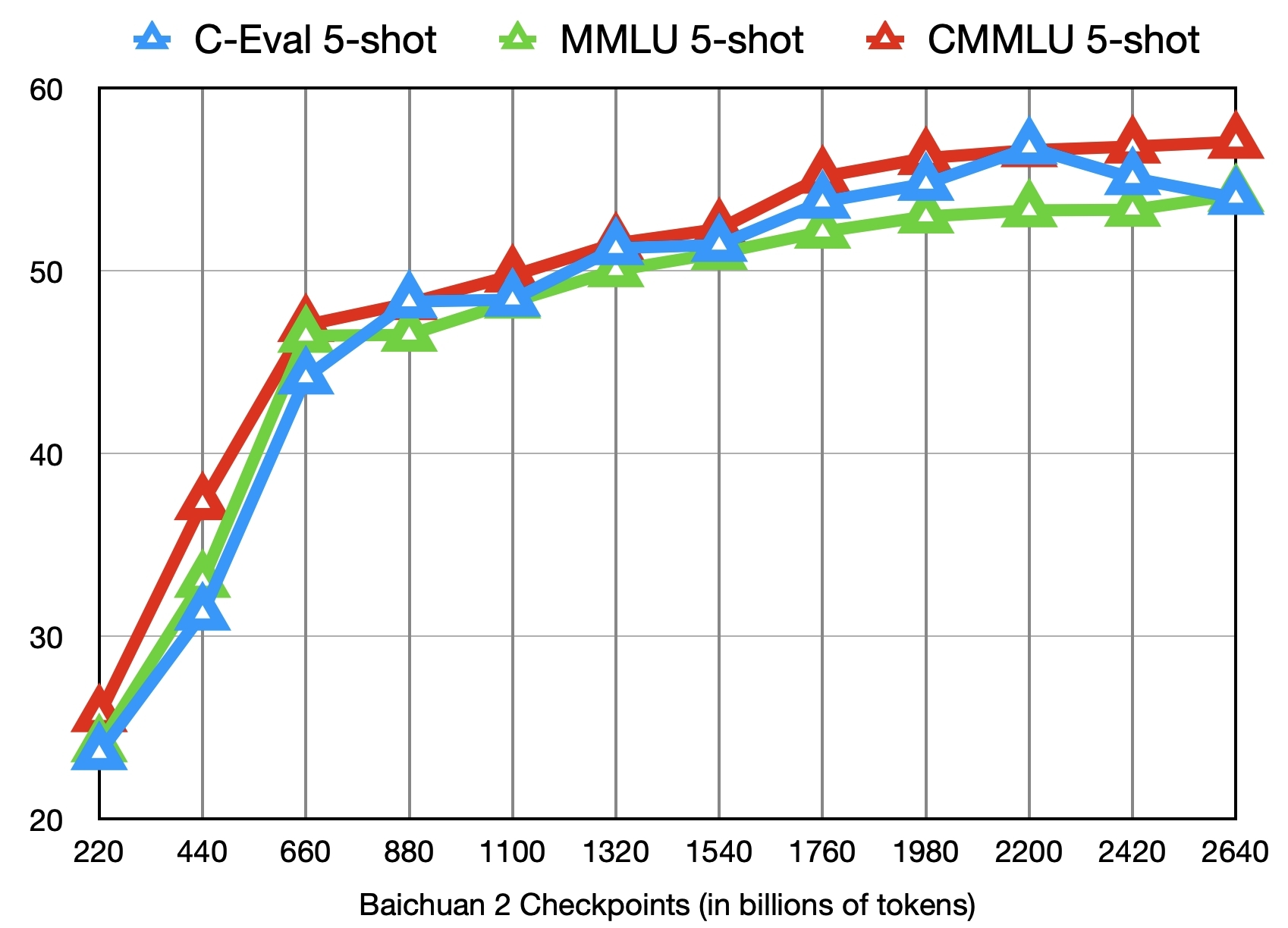

除了训练了 2.6 万亿 Tokens 的 [Baichuan2-7B-Base](https://huggingface.co/baichuan-inc/Baichuan2-7B-Base) 模型,我们还提供了在此之前的另外 11 个中间过程的模型(分别对应训练了约 0.2 ~ 2.4 万亿 Tokens)供社区研究使用

([训练过程checkpoint下载](https://huggingface.co/baichuan-inc/Baichuan2-7B-Intermediate-Checkpoints))。下图给出了这些 checkpoints 在 C-Eval、MMLU、CMMLU 三个 benchmark 上的效果变化:

In addition to the [Baichuan2-7B-Base](https://huggingface.co/baichuan-inc/Baichuan2-7B-Base) model trained on 2.6 trillion tokens, we also offer 11 additional intermediate-stage models for community research, corresponding to training on approximately 0.2 to 2.4 trillion tokens each ([Intermediate Checkpoints Download](https://huggingface.co/baichuan-inc/Baichuan2-7B-Intermediate-Checkpoints)). The graph below shows the performance changes of these checkpoints on three benchmarks: C-Eval, MMLU, and CMMLU.

# <span id="Terms">声明与协议/Terms and Conditions</span>

## 声明

我们在此声明,我们的开发团队并未基于 Baichuan 2 模型开发任何应用,无论是在 iOS、Android、网页或任何其他平台。我们强烈呼吁所有使用者,不要利用

Baichuan 2 模型进行任何危害国家社会安全或违法的活动。另外,我们也要求使用者不要将 Baichuan 2

模型用于未经适当安全审查和备案的互联网服务。我们希望所有的使用者都能遵守这个原则,确保科技的发展能在规范和合法的环境下进行。

我们已经尽我们所能,来确保模型训练过程中使用的数据的合规性。然而,尽管我们已经做出了巨大的努力,但由于模型和数据的复杂性,仍有可能存在一些无法预见的问题。因此,如果由于使用

Baichuan 2 开源模型而导致的任何问题,包括但不限于数据安全问题、公共舆论风险,或模型被误导、滥用、传播或不当利用所带来的任何风险和问题,我们将不承担任何责任。

We hereby declare that our team has not developed any applications based on Baichuan 2 models, whether on iOS, Android, the web, or any other platform. We strongly urge all users not to use Baichuan 2 models for any activities that harm national or social security or violate the law. We also ask users not to use Baichuan 2 models for Internet services that have not undergone appropriate security review and filing. We hope all users will abide by this principle and help ensure that technology develops in a regulated and legal environment.

We have done our best to ensure the compliance of the data used in the model training process. However, despite our considerable efforts, there may still be some unforeseeable issues due to the complexity of the model and data. Therefore, if any problems arise due to the use of Baichuan 2 open-source models, including but not limited to data security issues, public opinion risks, or any risks and problems brought about by the model being misled, abused, spread or improperly exploited, we will not assume any responsibility.

## 协议

社区使用 Baichuan 2 模型需要遵循 [Apache 2.0](https://github.com/baichuan-inc/Baichuan2/blob/main/LICENSE) 和[《Baichuan 2 模型社区许可协议》](https://huggingface.co/baichuan-inc/Baichuan2-7B-Base/resolve/main/Baichuan%202%E6%A8%A1%E5%9E%8B%E7%A4%BE%E5%8C%BA%E8%AE%B8%E5%8F%AF%E5%8D%8F%E8%AE%AE.pdf)。Baichuan 2 模型支持商业用途,如果您计划将 Baichuan 2 模型或其衍生品用于商业目的,请您确认您的主体符合以下情况:

1. 您或您的关联方的服务或产品的日均用户活跃量(DAU)低于100万。

2. 您或您的关联方不是软件服务提供商、云服务提供商。

3. 您或您的关联方不存在将授予您的商用许可,未经百川许可二次授权给其他第三方的可能。

在符合以上条件的前提下,您需要通过以下联系邮箱 opensource@baichuan-inc.com ,提交《Baichuan 2 模型社区许可协议》要求的申请材料。审核通过后,百川将特此授予您一个非排他性、全球性、不可转让、不可再许可、可撤销的商用版权许可。

The community usage of Baichuan 2 model requires adherence to [Apache 2.0](https://github.com/baichuan-inc/Baichuan2/blob/main/LICENSE) and [Community License for Baichuan2 Model](https://huggingface.co/baichuan-inc/Baichuan2-7B-Base/resolve/main/Baichuan%202%E6%A8%A1%E5%9E%8B%E7%A4%BE%E5%8C%BA%E8%AE%B8%E5%8F%AF%E5%8D%8F%E8%AE%AE.pdf). The Baichuan 2 model supports commercial use. If you plan to use the Baichuan 2 model or its derivatives for commercial purposes, please ensure that your entity meets the following conditions:

1. The daily active users (DAU) of your or your affiliates' services or products number fewer than 1 million.

2. Neither you nor your affiliates are software service providers or cloud service providers.

3. There is no possibility that you or your affiliates will sublicense the commercial license granted to you to any third party without Baichuan's permission.

Upon meeting the above conditions, you need to submit the application materials required by the Baichuan 2 Model Community License Agreement via the following contact email: opensource@baichuan-inc.com. Once approved, Baichuan will hereby grant you a non-exclusive, global, non-transferable, non-sublicensable, revocable commercial copyright license.

[GitHub]:https://github.com/baichuan-inc/Baichuan2

[Baichuan2]:https://github.com/baichuan-inc/Baichuan2

[Baichuan-7B]:https://huggingface.co/baichuan-inc/Baichuan-7B

[Baichuan2-7B-Base]:https://huggingface.co/baichuan-inc/Baichuan2-7B-Base

[Baichuan2-7B-Chat]:https://huggingface.co/baichuan-inc/Baichuan2-7B-Chat

[Baichuan2-7B-Chat-4bits]:https://huggingface.co/baichuan-inc/Baichuan2-7B-Chat-4bits

[Baichuan-13B-Base]:https://huggingface.co/baichuan-inc/Baichuan-13B-Base

[Baichuan2-13B-Base]:https://huggingface.co/baichuan-inc/Baichuan2-13B-Base

[Baichuan2-13B-Chat]:https://huggingface.co/baichuan-inc/Baichuan2-13B-Chat

[Baichuan2-13B-Chat-4bits]:https://huggingface.co/baichuan-inc/Baichuan2-13B-Chat-4bits

[通用]:https://github.com/baichuan-inc/Baichuan2#%E9%80%9A%E7%94%A8%E9%A2%86%E5%9F%9F

[法律]:https://github.com/baichuan-inc/Baichuan2#%E6%B3%95%E5%BE%8B%E5%8C%BB%E7%96%97

[医疗]:https://github.com/baichuan-inc/Baichuan2#%E6%B3%95%E5%BE%8B%E5%8C%BB%E7%96%97

[数学]:https://github.com/baichuan-inc/Baichuan2#%E6%95%B0%E5%AD%A6%E4%BB%A3%E7%A0%81

[代码]:https://github.com/baichuan-inc/Baichuan2#%E6%95%B0%E5%AD%A6%E4%BB%A3%E7%A0%81

[多语言翻译]:https://github.com/baichuan-inc/Baichuan2#%E5%A4%9A%E8%AF%AD%E8%A8%80%E7%BF%BB%E8%AF%91

[《Baichuan 2 模型社区许可协议》]:https://huggingface.co/baichuan-inc/Baichuan2-7B-Base/blob/main/Baichuan%202%E6%A8%A1%E5%9E%8B%E7%A4%BE%E5%8C%BA%E8%AE%B8%E5%8F%AF%E5%8D%8F%E8%AE%AE.pdf

[邮件申请]: mailto:opensource@baichuan-inc.com

[Email]: mailto:opensource@baichuan-inc.com

[opensource@baichuan-inc.com]: mailto:opensource@baichuan-inc.com

[训练过程checkpoint下载]: https://huggingface.co/baichuan-inc/Baichuan2-7B-Intermediate-Checkpoints

[百川智能]: https://www.baichuan-ai.com

|

sdpkjc/Ant-v4-sac_continuous_action-seed2

|

sdpkjc

| 2023-12-19T09:57:43Z | 0 | 0 |

cleanrl

|

[

"cleanrl",

"tensorboard",

"Ant-v4",

"deep-reinforcement-learning",

"reinforcement-learning",

"custom-implementation",

"model-index",

"region:us"

] |

reinforcement-learning

| 2023-12-19T09:57:34Z |

---

tags:

- Ant-v4

- deep-reinforcement-learning

- reinforcement-learning

- custom-implementation

library_name: cleanrl

model-index:

- name: SAC

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: Ant-v4

type: Ant-v4

metrics:

- type: mean_reward

value: 5816.91 +/- 66.05

name: mean_reward

verified: false

---

# (CleanRL) **SAC** Agent Playing **Ant-v4**

This is a trained model of a SAC agent playing Ant-v4.

The model was trained using [CleanRL](https://github.com/vwxyzjn/cleanrl), and the most up-to-date training code can be found [here](https://github.com/vwxyzjn/cleanrl/blob/master/cleanrl/sac_continuous_action.py).

## Get Started

To use this model, install the `cleanrl` package and run `cleanrl_utils.enjoy` with the following commands:

```

pip install "cleanrl[sac_continuous_action]"

python -m cleanrl_utils.enjoy --exp-name sac_continuous_action --env-id Ant-v4

```

Please refer to the [documentation](https://docs.cleanrl.dev/get-started/zoo/) for more detail.

## Command to reproduce the training

```bash

curl -OL https://huggingface.co/sdpkjc/Ant-v4-sac_continuous_action-seed2/raw/main/sac_continuous_action.py

curl -OL https://huggingface.co/sdpkjc/Ant-v4-sac_continuous_action-seed2/raw/main/pyproject.toml

curl -OL https://huggingface.co/sdpkjc/Ant-v4-sac_continuous_action-seed2/raw/main/poetry.lock

poetry install --all-extras

python sac_continuous_action.py --save-model --upload-model --hf-entity sdpkjc --env-id Ant-v4 --seed 2 --track

```

# Hyperparameters

```python

{'alpha': 0.2,

'autotune': True,

'batch_size': 256,

'buffer_size': 1000000,

'capture_video': False,

'cuda': True,

'env_id': 'Ant-v4',

'exp_name': 'sac_continuous_action',

'gamma': 0.99,

'hf_entity': 'sdpkjc',

'learning_starts': 5000.0,

'noise_clip': 0.5,

'policy_frequency': 2,

'policy_lr': 0.0003,

'q_lr': 0.001,

'save_model': True,

'seed': 2,

'target_network_frequency': 1,

'tau': 0.005,

'torch_deterministic': True,

'total_timesteps': 1000000,

'track': True,

'upload_model': True,

'wandb_entity': None,

'wandb_project_name': 'cleanRL'}

```
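With `tau` = 0.005 and `target_network_frequency` = 1, SAC does not copy the target Q-networks periodically. Instead, it nudges them a small step toward the online networks on every update. Below is a minimal, framework-free sketch of that soft (Polyak) update. It assumes parameters are plain lists of floats rather than PyTorch tensors, and the helper names are hypothetical, not CleanRL's own functions.

```python
# Sketch of SAC's soft ("Polyak") target-network update.
# Assumption: parameters are plain float lists, not torch tensors.
TAU = 0.005                   # 'tau' from the hyperparameters above
TARGET_NETWORK_FREQUENCY = 1  # 'target_network_frequency': update every step


def soft_update(target: list[float], online: list[float], tau: float = TAU) -> list[float]:
    """Return tau * online + (1 - tau) * target, element-wise."""
    if len(target) != len(online):
        raise ValueError("parameter lists must have the same length")
    return [tau * o + (1.0 - tau) * t for t, o in zip(target, online)]


def train_loop_sketch(num_steps: int) -> list[float]:
    """Hypothetical loop: the online weights stay fixed so the drift is visible."""
    online = [1.0, -2.0]
    target = [0.0, 0.0]
    for global_step in range(num_steps):
        # (critic and actor gradient updates would happen here)
        if global_step % TARGET_NETWORK_FREQUENCY == 0:
            target = soft_update(target, online)
    return target
```

With the online weights held fixed, the remaining gap shrinks by a factor of (1 - tau) per update. At tau = 0.005, the target closes about 99% of the gap after roughly 920 updates, which keeps the bootstrapped Q-targets slow-moving and stable.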

|

Breyten/mistral-instruct-dutch-syntax-10000

|

Breyten

| 2023-12-19T09:56:16Z | 1 | 0 |

peft

|

[

"peft",

"safetensors",

"generated_from_trainer",

"base_model:mistralai/Mistral-7B-Instruct-v0.1",

"base_model:adapter:mistralai/Mistral-7B-Instruct-v0.1",

"license:apache-2.0",

"region:us"

] | null | 2023-12-16T22:54:25Z |

---

license: apache-2.0

library_name: peft

tags:

- generated_from_trainer

base_model: mistralai/Mistral-7B-Instruct-v0.1

model-index:

- name: mistral-instruct-dutch-syntax-10000

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# Mistral-7B-Instruct-v0.1-syntax2023-12-16-21-24

This model is a fine-tuned version of [mistralai/Mistral-7B-Instruct-v0.1](https://huggingface.co/mistralai/Mistral-7B-Instruct-v0.1) on a version of the Lassy_small dataset curated for Dutch syntax.

10000 samples were used, with a batch size of 2, for 2 epochs.

It achieves the following results on the evaluation set:

- Loss: 0.2522

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2.5e-05

- train_batch_size: 2

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_steps: 0.03

- training_steps: 10000
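Transformers' `linear` scheduler ramps the learning rate linearly from 0 to its peak over the warmup steps. It then decays the rate linearly to 0 at the final training step. The card reports `lr_scheduler_warmup_steps: 0.03`, which looks like a fraction rather than a step count. The sketch below therefore assumes a warmup ratio of 3% (300 of the 10000 steps). That reading is an assumption the card does not confirm.

```python
# Sketch of a linear warmup + linear decay learning-rate schedule.
PEAK_LR = 2.5e-05        # 'learning_rate' from the card
TRAINING_STEPS = 10_000  # 'training_steps' from the card
WARMUP_STEPS = 300       # ASSUMPTION: 0.03 read as a warmup ratio (0.03 * 10000)


def linear_lr(step: int,
              peak_lr: float = PEAK_LR,
              warmup_steps: int = WARMUP_STEPS,
              total_steps: int = TRAINING_STEPS) -> float:
    """Learning rate at a given optimizer step."""
    if step < warmup_steps:
        # Warmup: rise linearly from 0 to peak_lr.
        return peak_lr * step / max(1, warmup_steps)
    # Decay: fall linearly from peak_lr to 0 at total_steps.
    remaining = (total_steps - step) / max(1, total_steps - warmup_steps)
    return peak_lr * max(0.0, remaining)
```

For example, `linear_lr(5150)` gives half the peak rate (1.25e-05), midway through the decay phase.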

### Training results

| Training Loss | Epoch | Step | Validation Loss |
|:-------------:|:-----:|:-----:|:---------------:|
| 0.7075 | 0.11 | 500 | 0.6710 |
| 0.3569 | 0.21 | 1000 | 0.4348 |
| 0.3458 | 0.32 | 1500 | 0.3517 |
| 0.3325 | 0.42 | 2000 | 0.3151 |
| 0.3014 | 0.53 | 2500 | 0.2928 |
| 0.2304 | 0.63 | 3000 | 0.2817 |
| 0.2984 | 0.74 | 3500 | 0.2736 |
| 0.2283 | 0.84 | 4000 | 0.2680 |
| 0.2399 | 0.95 | 4500 | 0.2640 |
| 0.24 | 1.05 | 5000 | 0.2609 |
| 0.2039 | 1.16 | 5500 | 0.2588 |
| 0.2447 | 1.26 | 6000 | 0.2558 |
| 0.2377 | 1.37 | 6500 | 0.2544 |
| 0.2399 | 1.47 | 7000 | 0.2544 |
| 0.2424 | 1.58 | 7500 | 0.2532 |
| 0.2626 | 1.68 | 8000 | 0.2527 |
| 0.2346 | 1.79 | 8500 | 0.2524 |
| 0.2194 | 1.89 | 9000 | 0.2522 |
| 0.2123 | 2.0 | 9500 | 0.2522 |
| 0.2618 | 2.11 | 10000 | 0.2522 |

### Framework versions

- PEFT 0.7.1

- Transformers 4.36.1

- Pytorch 2.1.2+cu121

- Datasets 2.15.0

- Tokenizers 0.15.0

|

maonx/gtemodel1

|

maonx

| 2023-12-19T09:52:32Z | 5 | 0 |

sentence-transformers

|

[

"sentence-transformers",

"safetensors",

"bert",

"feature-extraction",

"sentence-similarity",

"autotrain_compatible",

"text-embeddings-inference",

"endpoints_compatible",

"region:us"

] |

sentence-similarity

| 2023-11-30T07:34:24Z |

---

pipeline_tag: sentence-similarity

tags:

- sentence-transformers

- feature-extraction

- sentence-similarity

---

# gtemodel2

This is a [sentence-transformers](https://www.SBERT.net) model. It maps sentences and paragraphs to a 1024-dimensional dense vector space and can be used for tasks like clustering or semantic search.

<!--- Describe your model here -->

## Usage (Sentence-Transformers)

Using this model becomes easy when you have [sentence-transformers](https://www.SBERT.net) installed:

```

pip install -U sentence-transformers

```

Then you can use the model like this:

```python

from sentence_transformers import SentenceTransformer

sentences = ["This is an example sentence", "Each sentence is converted"]

model = SentenceTransformer('maonx/gtemodel1')  # Hub ID of this repository

embeddings = model.encode(sentences)

print(embeddings)

```

## Evaluation Results

<!--- Describe how your model was evaluated -->

For an automated evaluation of this model, see the *Sentence Embeddings Benchmark*: [https://seb.sbert.net](https://seb.sbert.net?model_name=gtemodel2)

## Training

The model was trained with the parameters:

**DataLoader**:

`torch.utils.data.dataloader.DataLoader` of length 159 with parameters:

```

{'batch_size': 36, 'sampler': 'torch.utils.data.sampler.RandomSampler', 'batch_sampler': 'torch.utils.data.sampler.BatchSampler'}

```

**Loss**:

`sentence_transformers.losses.ContrastiveLoss.ContrastiveLoss` with parameters:

```

{'distance_metric': 'SiameseDistanceMetric.COSINE_DISTANCE', 'margin': 0.5, 'size_average': True}

```
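As we understand the library's implementation, `ContrastiveLoss` computes a per-pair loss of 0.5 · (y · d² + (1 − y) · max(0, margin − d)²). Here d is the cosine distance (1 − cosine similarity) and y is 1 for similar pairs and 0 for dissimilar ones. With `size_average=True` the per-pair losses are averaged. Below is a plain-Python sketch of that formula with `margin` = 0.5. The function names are illustrative, not the library's API.

```python
import math

MARGIN = 0.5  # 'margin' from the loss parameters above


def cosine_distance(u: list[float], v: list[float]) -> float:
    """1 - cosine similarity (SiameseDistanceMetric.COSINE_DISTANCE)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return 1.0 - dot / norm


def contrastive_loss(pairs, labels, margin: float = MARGIN, size_average: bool = True) -> float:
    """pairs: list of (u, v) embeddings; labels: 1 = similar, 0 = dissimilar."""
    losses = []
    for (u, v), label in zip(pairs, labels):
        d = cosine_distance(u, v)
        hinge = max(0.0, margin - d)  # dissimilar pairs only cost if closer than margin
        losses.append(0.5 * (label * d ** 2 + (1 - label) * hinge ** 2))
    return sum(losses) / len(losses) if size_average else sum(losses)
```

Similar pairs are pulled together quadratically. Dissimilar pairs are pushed apart only while their cosine distance is below 0.5.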

Parameters of the `fit()` method:

```

{

"epochs": 5,

"evaluation_steps": 0,

"evaluator": "NoneType",

"max_grad_norm": 1,

"optimizer_class": "<class 'torch.optim.adamw.AdamW'>",

"optimizer_params": {

"lr": 5e-06

},

"scheduler": "WarmupLinear",

"steps_per_epoch": null,

"warmup_steps": 79,

"weight_decay": 0.01

}

```

## Full Model Architecture

```

SentenceTransformer(

(0): Transformer({'max_seq_length': 512, 'do_lower_case': False}) with Transformer model: BertModel

(1): Pooling({'word_embedding_dimension': 1024, 'pooling_mode_cls_token': False, 'pooling_mode_mean_tokens': True, 'pooling_mode_max_tokens': False, 'pooling_mode_mean_sqrt_len_tokens': False})

(2): Normalize()

)

```
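The architecture above averages the BERT token vectors (`pooling_mode_mean_tokens: True`) and then L2-normalizes the result (`Normalize()`). Below is a plain-Python sketch of those two steps, assuming a list of token vectors and an attention mask in which padding tokens are 0. The helper names are hypothetical.

```python
import math


def mean_pool(token_embeddings: list[list[float]], attention_mask: list[int]) -> list[float]:
    """Average the token vectors whose mask is 1; padding is ignored."""
    dim = len(token_embeddings[0])
    summed = [0.0] * dim
    count = 0
    for vec, m in zip(token_embeddings, attention_mask):
        if m:
            summed = [s + x for s, x in zip(summed, vec)]
            count += 1
    return [s / max(count, 1) for s in summed]


def l2_normalize(vec: list[float], eps: float = 1e-12) -> list[float]:
    """Scale to unit length; eps guards against division by zero."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / max(norm, eps) for x in vec]
```

Because every output embedding has unit length, the cosine similarity between two embeddings equals their dot product.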

## Citing & Authors

<!--- Describe where people can find more information -->

|

sdpkjc/Walker2d-v4-sac_continuous_action-seed2

|

sdpkjc

| 2023-12-19T09:51:57Z | 0 | 0 |

cleanrl

|

[

"cleanrl",

"tensorboard",

"Walker2d-v4",

"deep-reinforcement-learning",

"reinforcement-learning",

"custom-implementation",

"model-index",

"region:us"

] |

reinforcement-learning

| 2023-12-19T09:51:48Z |

---

tags:

- Walker2d-v4

- deep-reinforcement-learning

- reinforcement-learning

- custom-implementation

library_name: cleanrl

model-index:

- name: SAC

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: Walker2d-v4

type: Walker2d-v4

metrics:

- type: mean_reward

value: 3860.43 +/- 46.19

name: mean_reward

verified: false

---

# (CleanRL) **SAC** Agent Playing **Walker2d-v4**

This is a trained model of a SAC agent playing Walker2d-v4.

The model was trained using [CleanRL](https://github.com/vwxyzjn/cleanrl), and the most up-to-date training code can be found [here](https://github.com/vwxyzjn/cleanrl/blob/master/cleanrl/sac_continuous_action.py).