modelId

stringlengths 5

139

| author

stringlengths 2

42

| last_modified

timestamp[us, tz=UTC]date 2020-02-15 11:33:14

2025-08-29 18:27:06

| downloads

int64 0

223M

| likes

int64 0

11.7k

| library_name

stringclasses 526

values | tags

listlengths 1

4.05k

| pipeline_tag

stringclasses 55

values | createdAt

timestamp[us, tz=UTC]date 2022-03-02 23:29:04

2025-08-29 18:26:56

| card

stringlengths 11

1.01M

|

|---|---|---|---|---|---|---|---|---|---|

vocabtrimmer/mt5-small-trimmed-es-120000-esquad-qg

|

vocabtrimmer

| 2023-03-27T09:14:30Z | 106 | 0 |

transformers

|

[

"transformers",

"pytorch",

"mt5",

"text2text-generation",

"question generation",

"es",

"dataset:lmqg/qg_esquad",

"arxiv:2210.03992",

"license:cc-by-4.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text2text-generation

| 2023-03-20T17:58:31Z |

---

license: cc-by-4.0

metrics:

- bleu4

- meteor

- rouge-l

- bertscore

- moverscore

language: es

datasets:

- lmqg/qg_esquad

pipeline_tag: text2text-generation

tags:

- question generation

widget:

- text: "del <hl> Ministerio de Desarrollo Urbano <hl> , Gobierno de la India."

example_title: "Question Generation Example 1"

- text: "a <hl> noviembre <hl> , que es también la estación lluviosa."

example_title: "Question Generation Example 2"

- text: "como <hl> el gobierno de Abbott <hl> que asumió el cargo el 18 de septiembre de 2013."

example_title: "Question Generation Example 3"

model-index:

- name: vocabtrimmer/mt5-small-trimmed-es-120000-esquad-qg

results:

- task:

name: Text2text Generation

type: text2text-generation

dataset:

name: lmqg/qg_esquad

type: default

args: default

metrics:

- name: BLEU4 (Question Generation)

type: bleu4_question_generation

value: 9.45

- name: ROUGE-L (Question Generation)

type: rouge_l_question_generation

value: 24.37

- name: METEOR (Question Generation)

type: meteor_question_generation

value: 22.59

- name: BERTScore (Question Generation)

type: bertscore_question_generation

value: 84.15

- name: MoverScore (Question Generation)

type: moverscore_question_generation

value: 58.96

---

# Model Card of `vocabtrimmer/mt5-small-trimmed-es-120000-esquad-qg`

This model is a fine-tuned version of [ckpts/mt5-small-trimmed-es-120000](https://huggingface.co/ckpts/mt5-small-trimmed-es-120000) for the question generation task on the [lmqg/qg_esquad](https://huggingface.co/datasets/lmqg/qg_esquad) dataset (dataset_name: default), trained via [`lmqg`](https://github.com/asahi417/lm-question-generation).

### Overview

- **Language model:** [ckpts/mt5-small-trimmed-es-120000](https://huggingface.co/ckpts/mt5-small-trimmed-es-120000)

- **Language:** es

- **Training data:** [lmqg/qg_esquad](https://huggingface.co/datasets/lmqg/qg_esquad) (default)

- **Online Demo:** [https://autoqg.net/](https://autoqg.net/)

- **Repository:** [https://github.com/asahi417/lm-question-generation](https://github.com/asahi417/lm-question-generation)

- **Paper:** [https://arxiv.org/abs/2210.03992](https://arxiv.org/abs/2210.03992)

### Usage

- With [`lmqg`](https://github.com/asahi417/lm-question-generation#lmqg-language-model-for-question-generation-)

```python

from lmqg import TransformersQG

# initialize model

model = TransformersQG(language="es", model="vocabtrimmer/mt5-small-trimmed-es-120000-esquad-qg")

# model prediction

questions = model.generate_q(list_context="a noviembre , que es también la estación lluviosa.", list_answer="noviembre")

```

- With `transformers`

```python

from transformers import pipeline

pipe = pipeline("text2text-generation", "vocabtrimmer/mt5-small-trimmed-es-120000-esquad-qg")

output = pipe("del <hl> Ministerio de Desarrollo Urbano <hl> , Gobierno de la India.")

```
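Both snippets rely on the same input convention: the answer span is wrapped in `<hl>` tokens inside its context, as in the widget examples. The following is a minimal sketch of that preprocessing step, assuming the first occurrence of the answer is the one to highlight. The helper name `highlight_answer` is hypothetical and is not part of `lmqg` or `transformers`.

```python
def highlight_answer(context: str, answer: str, token: str = "<hl>") -> str:
    """Wrap the first occurrence of `answer` in `context` with highlight tokens."""
    start = context.find(answer)
    if start == -1:
        raise ValueError("answer not found in context")
    end = start + len(answer)
    return f"{context[:start]}{token} {answer} {token}{context[end:]}"


# Hypothetical usage: rebuild the second widget example from its raw parts.
highlighted = highlight_answer(
    "a noviembre , que es también la estación lluviosa.", "noviembre"
)
print(highlighted)
```

The resulting string can be passed to the `transformers` pipeline in the same way as the hand-written example.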

## Evaluation

- ***Metric (Question Generation)***: [raw metric file](https://huggingface.co/vocabtrimmer/mt5-small-trimmed-es-120000-esquad-qg/raw/main/eval/metric.first.sentence.paragraph_answer.question.lmqg_qg_esquad.default.json)

| | Score | Type | Dataset |

|:-----------|--------:|:--------|:-----------------------------------------------------------------|

| BERTScore | 84.15 | default | [lmqg/qg_esquad](https://huggingface.co/datasets/lmqg/qg_esquad) |

| Bleu_1 | 25.99 | default | [lmqg/qg_esquad](https://huggingface.co/datasets/lmqg/qg_esquad) |

| Bleu_2 | 17.64 | default | [lmqg/qg_esquad](https://huggingface.co/datasets/lmqg/qg_esquad) |

| Bleu_3 | 12.73 | default | [lmqg/qg_esquad](https://huggingface.co/datasets/lmqg/qg_esquad) |

| Bleu_4 | 9.45 | default | [lmqg/qg_esquad](https://huggingface.co/datasets/lmqg/qg_esquad) |

| METEOR | 22.59 | default | [lmqg/qg_esquad](https://huggingface.co/datasets/lmqg/qg_esquad) |

| MoverScore | 58.96 | default | [lmqg/qg_esquad](https://huggingface.co/datasets/lmqg/qg_esquad) |

| ROUGE_L | 24.37 | default | [lmqg/qg_esquad](https://huggingface.co/datasets/lmqg/qg_esquad) |

## Training hyperparameters

The following hyperparameters were used during fine-tuning:

- dataset_path: lmqg/qg_esquad

- dataset_name: default

- input_types: paragraph_answer

- output_types: question

- prefix_types: None

- model: ckpts/mt5-small-trimmed-es-120000

- max_length: 512

- max_length_output: 32

- epoch: 13

- batch: 16

- lr: 0.001

- fp16: False

- random_seed: 1

- gradient_accumulation_steps: 4

- label_smoothing: 0.15

The full configuration can be found at [fine-tuning config file](https://huggingface.co/vocabtrimmer/mt5-small-trimmed-es-120000-esquad-qg/raw/main/trainer_config.json).
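To make two of the listed values concrete, here is a small sketch. It assumes training on a single device, so the number of samples per optimizer update is simply `batch` times `gradient_accumulation_steps`, and it assumes the common label-smoothing formulation in which the smoothing mass is spread uniformly over all classes. Neither assumption is confirmed by this card; the function name is hypothetical.

```python
# Values taken from the hyperparameter list above.
batch = 16
gradient_accumulation_steps = 4
label_smoothing = 0.15

# Samples contributing to one optimizer update (single-device assumption).
effective_batch = batch * gradient_accumulation_steps


def smoothed_targets(true_index: int, num_classes: int, smoothing: float) -> list:
    """Return a label-smoothed target distribution (uniform smoothing assumed)."""
    off_value = smoothing / num_classes
    on_value = 1.0 - smoothing + off_value
    return [on_value if i == true_index else off_value for i in range(num_classes)]


print(effective_batch)
print(smoothed_targets(0, 4, label_smoothing))
```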

## Citation

```

@inproceedings{ushio-etal-2022-generative,

title = "{G}enerative {L}anguage {M}odels for {P}aragraph-{L}evel {Q}uestion {G}eneration",

author = "Ushio, Asahi and

Alva-Manchego, Fernando and

Camacho-Collados, Jose",

booktitle = "Proceedings of the 2022 Conference on Empirical Methods in Natural Language Processing",

month = dec,

year = "2022",

address = "Abu Dhabi, U.A.E.",

publisher = "Association for Computational Linguistics",

}

```

|

phatho/NeverEnding-Dream

|

phatho

| 2023-03-27T08:49:36Z | 8 | 2 |

diffusers

|

[

"diffusers",

"stable-diffusion",

"text-to-image",

"art",

"artistic",

"en",

"license:other",

"autotrain_compatible",

"endpoints_compatible",

"diffusers:StableDiffusionPipeline",

"region:us"

] |

text-to-image

| 2023-03-27T08:48:49Z |

---

language:

- en

license: other

tags:

- stable-diffusion

- text-to-image

- art

- artistic

- diffusers

inference: true

duplicated_from: Lykon/NeverEnding-Dream

---

# NeverEnding Dream (NED)

## Official Repository

Read more about this model here: https://civitai.com/models/10028/neverending-dream-ned

Please also support the model by giving it 5 stars and a heart, which will notify you of new updates.

Also consider supporting me on Patreon or BuyMeACoffee

- https://www.patreon.com/Lykon275

- https://www.buymeacoffee.com/lykon

You can run this model on:

- https://sinkin.ai/m/qGdxrYG

Some sample output:

|

jamesimmanuel/a2c-PandaReachDense-v2

|

jamesimmanuel

| 2023-03-27T08:39:05Z | 0 | 0 |

stable-baselines3

|

[

"stable-baselines3",

"PandaReachDense-v2",

"deep-reinforcement-learning",

"reinforcement-learning",

"model-index",

"region:us"

] |

reinforcement-learning

| 2023-03-27T08:36:38Z |

---

library_name: stable-baselines3

tags:

- PandaReachDense-v2

- deep-reinforcement-learning

- reinforcement-learning

- stable-baselines3

model-index:

- name: A2C

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: PandaReachDense-v2

type: PandaReachDense-v2

metrics:

- type: mean_reward

value: -1.26 +/- 0.24

name: mean_reward

verified: false

---

# **A2C** Agent playing **PandaReachDense-v2**

This is a trained model of an **A2C** agent playing **PandaReachDense-v2**

using the [stable-baselines3 library](https://github.com/DLR-RM/stable-baselines3).

## Usage (with Stable-baselines3)

TODO: Add your code

```python

from stable_baselines3 import ...

from huggingface_sb3 import load_from_hub

...

```

|

lio/ppo-Huggy

|

lio

| 2023-03-27T08:32:46Z | 6 | 0 |

ml-agents

|

[

"ml-agents",

"tensorboard",

"onnx",

"Huggy",

"deep-reinforcement-learning",

"reinforcement-learning",

"ML-Agents-Huggy",

"region:us"

] |

reinforcement-learning

| 2023-03-27T08:32:39Z |

---

library_name: ml-agents

tags:

- Huggy

- deep-reinforcement-learning

- reinforcement-learning

- ML-Agents-Huggy

---

# **ppo** Agent playing **Huggy**

This is a trained model of a **ppo** agent playing **Huggy** using the [Unity ML-Agents Library](https://github.com/Unity-Technologies/ml-agents).

## Usage (with ML-Agents)

The Documentation: https://github.com/huggingface/ml-agents#get-started

We wrote a complete tutorial to learn to train your first agent using ML-Agents and publish it to the Hub:

### Resume the training

```

mlagents-learn <your_configuration_file_path.yaml> --run-id=<run_id> --resume

```

### Watch your Agent play

You can watch your agent **playing directly in your browser**:

1. Go to https://huggingface.co/spaces/unity/ML-Agents-Huggy

2. Find your model_id: lio/ppo-Huggy

3. Select your *.nn / *.onnx file

4. Click on Watch the agent play 👀

|

aallal-prestataire/scene-classifier-demo-2

|

aallal-prestataire

| 2023-03-27T08:10:38Z | 226 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"vit",

"image-classification",

"huggingpics",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

image-classification

| 2023-03-27T07:49:13Z |

---

tags:

- image-classification

- pytorch

- huggingpics

metrics:

- accuracy

model-index:

- name: scene-classifier-demo-2

results:

- task:

name: Image Classification

type: image-classification

metrics:

- name: Accuracy

type: accuracy

value: 0.986760139465332

---

# scene-classifier-demo-2

Autogenerated by HuggingPics🤗🖼️

Create your own image classifier for **anything** by running [the demo on Google Colab](https://colab.research.google.com/github/nateraw/huggingpics/blob/main/HuggingPics.ipynb).

Report any issues with the demo at the [github repo](https://github.com/nateraw/huggingpics).

## Example Images

#### carte

#### credits

#### generique

#### meteo

#### plateau

#### reportage

#### sommaire

|

VuDucQuang/Dreambooth-Avatar

|

VuDucQuang

| 2023-03-27T08:05:19Z | 49 | 1 |

diffusers

|

[

"diffusers",

"dreambooth",

"stable-diffusion",

"stable-diffusion-diffusers",

"text-to-image",

"en",

"autotrain_compatible",

"endpoints_compatible",

"diffusers:StableDiffusionPipeline",

"region:us"

] |

text-to-image

| 2023-03-27T08:02:49Z |

---

language:

- en

thumbnail: "https://staticassetbucket.s3.us-west-1.amazonaws.com/avatar_grid.png"

tags:

- dreambooth

- stable-diffusion

- stable-diffusion-diffusers

- text-to-image

---

# Dreambooth style: Avatar

__Dreambooth finetuning of Stable Diffusion (v1.5.1) on Avatar art style by [Lambda Labs](https://lambdalabs.com/).__

## About

This text-to-image stable diffusion model was trained with dreambooth.

Put in a text prompt and generate your own Avatar style image!

## Usage

To run model locally:

```bash

pip install accelerate torchvision "transformers>=4.21.0" ftfy tensorboard modelcards

```

```python

import torch
from diffusers import StableDiffusionPipeline
from torch import autocast

# Load the Dreambooth checkpoint in half precision and move it to the GPU.
pipe = StableDiffusionPipeline.from_pretrained("lambdalabs/dreambooth-avatar", torch_dtype=torch.float16)
pipe = pipe.to("cuda")

prompt = "Yoda, avatarart style"
scale = 7.5
n_samples = 4

# Generate n_samples images for the same prompt under mixed precision.
with autocast("cuda"):
    images = pipe(n_samples * [prompt], guidance_scale=scale).images

# Save each image as a zero-padded, numbered PNG.
for idx, im in enumerate(images):
    im.save(f"{idx:06}.png")

```

## Model description

The base model is Stable Diffusion v1.5, fine-tuned using Dreambooth with 60 input images sized 512x512 displaying Avatar character images.

The model learns to associate Avatar images with the style tokenized as 'avatarart style'.

Prior preservation was used during training, with the class 'Person', to avoid training bleeding into the representations for that class.

Training ran on 2xA6000 GPUs on [Lambda GPU Cloud](https://lambdalabs.com/service/gpu-cloud) for 700 steps, batch size 4 (a couple of hours, at a cost of about $4).

Author: Eole Cervenka

|

VuDucQuang/Stable_Diffusion_v1.5

|

VuDucQuang

| 2023-03-27T07:59:42Z | 0 | 0 | null |

[

"stable-diffusion",

"stable-diffusion-diffusers",

"text-to-image",

"arxiv:2207.12598",

"arxiv:2112.10752",

"arxiv:2103.00020",

"arxiv:2205.11487",

"arxiv:1910.09700",

"license:creativeml-openrail-m",

"region:us"

] |

text-to-image

| 2023-03-27T07:54:14Z |

---

license: creativeml-openrail-m

tags:

- stable-diffusion

- stable-diffusion-diffusers

- text-to-image

inference: true

extra_gated_prompt: |-

This model is open access and available to all, with a CreativeML OpenRAIL-M license further specifying rights and usage.

The CreativeML OpenRAIL License specifies:

1. You can't use the model to deliberately produce nor share illegal or harmful outputs or content

2. CompVis claims no rights on the outputs you generate, you are free to use them and are accountable for their use which must not go against the provisions set in the license

3. You may re-distribute the weights and use the model commercially and/or as a service. If you do, please be aware you have to include the same use restrictions as the ones in the license and share a copy of the CreativeML OpenRAIL-M to all your users (please read the license entirely and carefully)

Please read the full license carefully here: https://huggingface.co/spaces/CompVis/stable-diffusion-license

extra_gated_heading: Please read the LICENSE to access this model

---

# Stable Diffusion v1-5 Model Card

Stable Diffusion is a latent text-to-image diffusion model capable of generating photo-realistic images given any text input.

For more information about how Stable Diffusion functions, please have a look at [🤗's Stable Diffusion blog](https://huggingface.co/blog/stable_diffusion).

The **Stable-Diffusion-v1-5** checkpoint was initialized with the weights of the [Stable-Diffusion-v1-2](https://huggingface.co/CompVis/stable-diffusion-v1-2)

checkpoint and subsequently fine-tuned for 595k steps at resolution 512x512 on "laion-aesthetics v2 5+" with 10% dropping of the text-conditioning to improve [classifier-free guidance sampling](https://arxiv.org/abs/2207.12598).

You can use this both with the [🧨Diffusers library](https://github.com/huggingface/diffusers) and the [RunwayML GitHub repository](https://github.com/runwayml/stable-diffusion).
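The 10% text-conditioning dropout mentioned above is what lets a single network act as both a conditional and an unconditional model, which classifier-free guidance then combines at sampling time. Below is a minimal sketch of both halves in plain Python. It assumes the unconditional case is represented by an empty caption and treats noise predictions as flat lists of floats; the function names are hypothetical and this is not the training code used for this checkpoint.

```python
import random


def drop_text_conditioning(captions, drop_prob=0.10, rng=None):
    """Replace each caption with an empty string with probability `drop_prob`."""
    rng = rng or random.Random()
    return ["" if rng.random() < drop_prob else caption for caption in captions]


def guided_noise(eps_uncond, eps_cond, guidance_scale):
    """Classifier-free guidance: move from the unconditional prediction
    towards the conditional one, scaled by `guidance_scale`."""
    return [u + guidance_scale * (c - u) for u, c in zip(eps_uncond, eps_cond)]


# Hypothetical toy values, only to show the call shapes.
captions = drop_text_conditioning(["a cat", "a dog", "a horse"], rng=random.Random(0))
combined = guided_noise([0.0, 1.0], [1.0, 1.0], guidance_scale=7.5)
print(captions, combined)
```

A guidance scale of 1.0 returns the conditional prediction unchanged; larger values push the sample further towards the prompt.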

### Diffusers

```py

from diffusers import StableDiffusionPipeline

import torch

model_id = "runwayml/stable-diffusion-v1-5"

pipe = StableDiffusionPipeline.from_pretrained(model_id, torch_dtype=torch.float16)

pipe = pipe.to("cuda")

prompt = "a photo of an astronaut riding a horse on mars"

image = pipe(prompt).images[0]

image.save("astronaut_rides_horse.png")

```

For more detailed instructions, use cases, and examples in JAX, follow the instructions [here](https://github.com/huggingface/diffusers#text-to-image-generation-with-stable-diffusion).

### Original GitHub Repository

1. Download the weights

- [v1-5-pruned-emaonly.ckpt](https://huggingface.co/runwayml/stable-diffusion-v1-5/resolve/main/v1-5-pruned-emaonly.ckpt) - 4.27GB, ema-only weight. uses less VRAM - suitable for inference

- [v1-5-pruned.ckpt](https://huggingface.co/runwayml/stable-diffusion-v1-5/resolve/main/v1-5-pruned.ckpt) - 7.7GB, ema+non-ema weights. uses more VRAM - suitable for fine-tuning

2. Follow instructions [here](https://github.com/runwayml/stable-diffusion).

## Model Details

- **Developed by:** Robin Rombach, Patrick Esser

- **Model type:** Diffusion-based text-to-image generation model

- **Language(s):** English

- **License:** [The CreativeML OpenRAIL M license](https://huggingface.co/spaces/CompVis/stable-diffusion-license) is an [Open RAIL M license](https://www.licenses.ai/blog/2022/8/18/naming-convention-of-responsible-ai-licenses), adapted from the work that [BigScience](https://bigscience.huggingface.co/) and [the RAIL Initiative](https://www.licenses.ai/) are jointly carrying out in the area of responsible AI licensing. See also [the article about the BLOOM Open RAIL license](https://bigscience.huggingface.co/blog/the-bigscience-rail-license) on which our license is based.

- **Model Description:** This is a model that can be used to generate and modify images based on text prompts. It is a [Latent Diffusion Model](https://arxiv.org/abs/2112.10752) that uses a fixed, pretrained text encoder ([CLIP ViT-L/14](https://arxiv.org/abs/2103.00020)) as suggested in the [Imagen paper](https://arxiv.org/abs/2205.11487).

- **Resources for more information:** [GitHub Repository](https://github.com/CompVis/stable-diffusion), [Paper](https://arxiv.org/abs/2112.10752).

- **Cite as:**

@InProceedings{Rombach_2022_CVPR,

author = {Rombach, Robin and Blattmann, Andreas and Lorenz, Dominik and Esser, Patrick and Ommer, Bj\"orn},

title = {High-Resolution Image Synthesis With Latent Diffusion Models},

booktitle = {Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)},

month = {June},

year = {2022},

pages = {10684-10695}

}

# Uses

## Direct Use

The model is intended for research purposes only. Possible research areas and

tasks include

- Safe deployment of models which have the potential to generate harmful content.

- Probing and understanding the limitations and biases of generative models.

- Generation of artworks and use in design and other artistic processes.

- Applications in educational or creative tools.

- Research on generative models.

Excluded uses are described below.

### Misuse, Malicious Use, and Out-of-Scope Use

_Note: This section is taken from the [DALLE-MINI model card](https://huggingface.co/dalle-mini/dalle-mini), but applies in the same way to Stable Diffusion v1_.

The model should not be used to intentionally create or disseminate images that create hostile or alienating environments for people. This includes generating images that people would foreseeably find disturbing, distressing, or offensive; or content that propagates historical or current stereotypes.

#### Out-of-Scope Use

The model was not trained to be factual or true representations of people or events, and therefore using the model to generate such content is out-of-scope for the abilities of this model.

#### Misuse and Malicious Use

Using the model to generate content that is cruel to individuals is a misuse of this model. This includes, but is not limited to:

- Generating demeaning, dehumanizing, or otherwise harmful representations of people or their environments, cultures, religions, etc.

- Intentionally promoting or propagating discriminatory content or harmful stereotypes.

- Impersonating individuals without their consent.

- Sexual content without consent of the people who might see it.

- Mis- and disinformation

- Representations of egregious violence and gore

- Sharing of copyrighted or licensed material in violation of its terms of use.

- Sharing content that is an alteration of copyrighted or licensed material in violation of its terms of use.

## Limitations and Bias

### Limitations

- The model does not achieve perfect photorealism

- The model cannot render legible text

- The model does not perform well on more difficult tasks which involve compositionality, such as rendering an image corresponding to “A red cube on top of a blue sphere”

- Faces and people in general may not be generated properly.

- The model was trained mainly with English captions and will not work as well in other languages.

- The autoencoding part of the model is lossy

- The model was trained on a large-scale dataset

[LAION-5B](https://laion.ai/blog/laion-5b/) which contains adult material

and is not fit for product use without additional safety mechanisms and

considerations.

- No additional measures were used to deduplicate the dataset. As a result, we observe some degree of memorization for images that are duplicated in the training data.

The training data can be searched at [https://rom1504.github.io/clip-retrieval/](https://rom1504.github.io/clip-retrieval/) to possibly assist in the detection of memorized images.

### Bias

While the capabilities of image generation models are impressive, they can also reinforce or exacerbate social biases.

Stable Diffusion v1 was trained on subsets of [LAION-2B(en)](https://laion.ai/blog/laion-5b/),

which consists of images that are primarily limited to English descriptions.

Texts and images from communities and cultures that use other languages are likely to be insufficiently accounted for.

This affects the overall output of the model, as white and western cultures are often set as the default. Further, the

ability of the model to generate content with non-English prompts is significantly worse than with English-language prompts.

### Safety Module

The intended use of this model is with the [Safety Checker](https://github.com/huggingface/diffusers/blob/main/src/diffusers/pipelines/stable_diffusion/safety_checker.py) in Diffusers.

This checker works by checking model outputs against known hard-coded NSFW concepts.

The concepts are intentionally hidden to reduce the likelihood of reverse-engineering this filter.

Specifically, the checker compares the class probability of harmful concepts in the embedding space of the `CLIPTextModel` *after generation* of the images.

The concepts are passed into the model with the generated image and compared to a hand-engineered weight for each NSFW concept.
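The thresholding idea described above can be illustrated with a toy sketch: an embedding of the generated image is compared against one embedding per concept, and the image is flagged when any similarity exceeds that concept's hand-set threshold. The vectors, thresholds, and function names below are hypothetical, cosine similarity is an assumed choice of comparison, and the real checker in Diffusers is more involved.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two non-zero vectors of equal length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm


def is_flagged(image_embedding, concept_embeddings, thresholds):
    """Flag the image if it is closer to any concept than that concept's threshold."""
    return any(
        cosine_similarity(image_embedding, concept) > threshold
        for concept, threshold in zip(concept_embeddings, thresholds)
    )


# Hypothetical 2-dimensional embeddings, for illustration only.
concepts = [[1.0, 0.0]]
thresholds = [0.9]
print(is_flagged([0.99, 0.05], concepts, thresholds))
print(is_flagged([0.0, 1.0], concepts, thresholds))
```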

## Training

**Training Data**

The model developers used the following dataset for training the model:

- LAION-2B (en) and subsets thereof (see next section)

**Training Procedure**

Stable Diffusion v1-5 is a latent diffusion model which combines an autoencoder with a diffusion model that is trained in the latent space of the autoencoder. During training,

- Images are encoded through an encoder, which turns images into latent representations. The autoencoder uses a relative downsampling factor of 8 and maps images of shape H x W x 3 to latents of shape H/f x W/f x 4

- Text prompts are encoded through a ViT-L/14 text-encoder.

- The non-pooled output of the text encoder is fed into the UNet backbone of the latent diffusion model via cross-attention.

- The loss is a reconstruction objective between the noise that was added to the latent and the prediction made by the UNet.
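As a quick check of the shapes in the first step, the sketch below applies the stated downsampling factor of 8 and 4 latent channels to a 512x512 RGB image. The helper name `latent_shape` is hypothetical.

```python
def latent_shape(height: int, width: int, f: int = 8, latent_channels: int = 4):
    """Map an H x W x 3 image shape to the H/f x W/f x 4 latent shape."""
    if height % f or width % f:
        raise ValueError("height and width must be divisible by the downsampling factor")
    return (height // f, width // f, latent_channels)


shape = latent_shape(512, 512)
# Ratio of values stored per image before and after encoding.
compression = (512 * 512 * 3) / (shape[0] * shape[1] * shape[2])
print(shape, compression)
```

For a 512x512 input this gives a 64x64x4 latent, which holds 48 times fewer values than the original image.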

Currently six Stable Diffusion checkpoints are provided, which were trained as follows.

- [`stable-diffusion-v1-1`](https://huggingface.co/CompVis/stable-diffusion-v1-1): 237,000 steps at resolution `256x256` on [laion2B-en](https://huggingface.co/datasets/laion/laion2B-en).

194,000 steps at resolution `512x512` on [laion-high-resolution](https://huggingface.co/datasets/laion/laion-high-resolution) (170M examples from LAION-5B with resolution `>= 1024x1024`).

- [`stable-diffusion-v1-2`](https://huggingface.co/CompVis/stable-diffusion-v1-2): Resumed from `stable-diffusion-v1-1`.

515,000 steps at resolution `512x512` on "laion-improved-aesthetics" (a subset of laion2B-en,

filtered to images with an original size `>= 512x512`, estimated aesthetics score `> 5.0`, and an estimated watermark probability `< 0.5`. The watermark estimate is from the LAION-5B metadata, the aesthetics score is estimated using an [improved aesthetics estimator](https://github.com/christophschuhmann/improved-aesthetic-predictor)).

- [`stable-diffusion-v1-3`](https://huggingface.co/CompVis/stable-diffusion-v1-3): Resumed from `stable-diffusion-v1-2` - 195,000 steps at resolution `512x512` on "laion-improved-aesthetics" and 10 % dropping of the text-conditioning to improve [classifier-free guidance sampling](https://arxiv.org/abs/2207.12598).

- [`stable-diffusion-v1-4`](https://huggingface.co/CompVis/stable-diffusion-v1-4) Resumed from `stable-diffusion-v1-2` - 225,000 steps at resolution `512x512` on "laion-aesthetics v2 5+" and 10 % dropping of the text-conditioning to improve [classifier-free guidance sampling](https://arxiv.org/abs/2207.12598).

- [`stable-diffusion-v1-5`](https://huggingface.co/runwayml/stable-diffusion-v1-5) Resumed from `stable-diffusion-v1-2` - 595,000 steps at resolution `512x512` on "laion-aesthetics v2 5+" and 10 % dropping of the text-conditioning to improve [classifier-free guidance sampling](https://arxiv.org/abs/2207.12598).

- [`stable-diffusion-inpainting`](https://huggingface.co/runwayml/stable-diffusion-inpainting) Resumed from `stable-diffusion-v1-5` - then 440,000 steps of inpainting training at resolution 512x512 on “laion-aesthetics v2 5+” and 10% dropping of the text-conditioning. For inpainting, the UNet has 5 additional input channels (4 for the encoded masked-image and 1 for the mask itself) whose weights were zero-initialized after restoring the non-inpainting checkpoint. During training, we generate synthetic masks and, in 25% of cases, mask everything.

- **Hardware:** 32 x 8 x A100 GPUs

- **Optimizer:** AdamW

- **Gradient Accumulations**: 2

- **Batch:** 32 x 8 x 2 x 4 = 2048

- **Learning rate:** warmup to 0.0001 for 10,000 steps and then kept constant
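The batch arithmetic and the learning-rate schedule above can be written out as a short sketch. Reading the four batch factors as nodes, GPUs per node, gradient accumulation steps, and per-GPU batch size is an interpretation of the list, and the linear shape of the warmup is an assumption, since the card only gives the peak value and the warmup length.

```python
# Factors from the list above; the meaning given to each one is an interpretation.
nodes, gpus_per_node, grad_accumulations, per_gpu_batch = 32, 8, 2, 4
total_batch = nodes * gpus_per_node * grad_accumulations * per_gpu_batch


def learning_rate(step: int, peak: float = 1e-4, warmup_steps: int = 10_000) -> float:
    """Linear warmup to `peak` over `warmup_steps` (assumed shape), then constant."""
    if step < warmup_steps:
        return peak * step / warmup_steps
    return peak


print(total_batch)
print(learning_rate(5_000), learning_rate(10_000), learning_rate(500_000))
```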

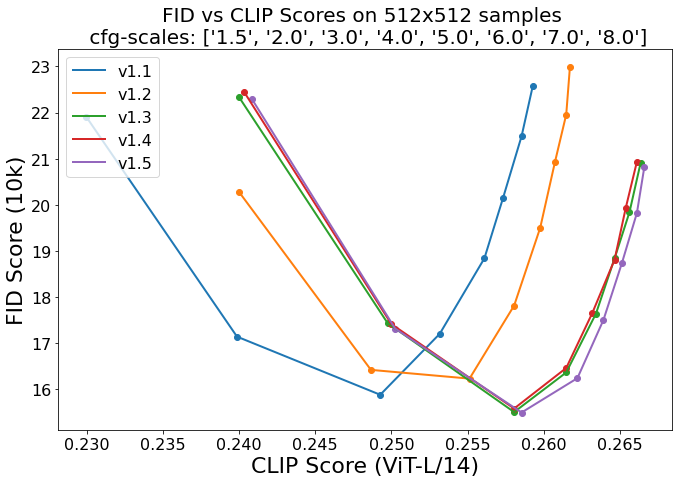

## Evaluation Results

Evaluations with different classifier-free guidance scales (1.5, 2.0, 3.0, 4.0,

5.0, 6.0, 7.0, 8.0) and 50 PNDM/PLMS sampling

steps show the relative improvements of the checkpoints:

Evaluated using 50 PLMS steps and 10000 random prompts from the COCO2017 validation set, evaluated at 512x512 resolution. Not optimized for FID scores.

## Environmental Impact

**Stable Diffusion v1** **Estimated Emissions**

Based on the information below, we estimate the following CO2 emissions using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700). The hardware, runtime, cloud provider, and compute region were used to estimate the carbon impact.

- **Hardware Type:** A100 PCIe 40GB

- **Hours used:** 150000

- **Cloud Provider:** AWS

- **Compute Region:** US-east

- **Carbon Emitted (Power consumption x Time x Carbon produced based on location of power grid):** 11250 kg CO2 eq.
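The formula in the last item (power consumption x time x carbon intensity) can be checked numerically. Only the 150000 hours and the 11250 kg result come from this card. The 0.25 kW power draw per GPU and the 0.3 kg CO2 eq. per kWh grid intensity are assumed values, chosen here because they reproduce the reported figure; the calculator's actual inputs are not stated.

```python
hours_used = 150_000          # from the list above
power_draw_kw = 0.25          # assumption: power draw of one GPU, in kW
carbon_intensity = 0.3        # assumption: kg CO2 eq. per kWh for the compute region

energy_kwh = hours_used * power_draw_kw
emissions_kg = energy_kwh * carbon_intensity

print(round(energy_kwh), round(emissions_kg))
```

Under these assumptions the estimate corresponds to 37500 kWh of energy, or 0.075 kg CO2 eq. per GPU hour.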

## Citation

```bibtex

@InProceedings{Rombach_2022_CVPR,

author = {Rombach, Robin and Blattmann, Andreas and Lorenz, Dominik and Esser, Patrick and Ommer, Bj\"orn},

title = {High-Resolution Image Synthesis With Latent Diffusion Models},

booktitle = {Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)},

month = {June},

year = {2022},

pages = {10684-10695}

}

```

*This model card was written by: Robin Rombach and Patrick Esser and is based on the [DALL-E Mini model card](https://huggingface.co/dalle-mini/dalle-mini).*

|

Maciel/T5Corrector-base-v1

|

Maciel

| 2023-03-27T07:58:09Z | 124 | 5 |

transformers

|

[

"transformers",

"pytorch",

"t5",

"text2text-generation",

"text error correction",

"zh",

"license:apache-2.0",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text2text-generation

| 2023-02-08T02:52:24Z |

---

language:

- zh

license: apache-2.0

tags:

- t5

- text error correction

widget:

- text: "今天天气不太好,我的心情也不是很偷快"

example_title: "Example 1"

- text: "听到这个消息,心情真的蓝瘦"

example_title: "Example 2"

- text: "脑子有点胡涂了,这道题冥冥学过还没有做出来"

example_title: "Example 3"

inference:

parameters:

max_length: 256

num_beams: 10

no_repeat_ngram_size: 5

do_sample: True

early_stopping: True

---

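## Overview

T5Corrector: a Chinese error correction model for phonetic and glyph (character shape) errors

This model is trained for text error correction on top of mengzi-t5-base. Starting from more than 5 million sentences, a parallel correction corpus of more than 30 million sentence pairs was built by replacing words with homophones, near-homophones, and visually similar characters. The model was trained for 45,000 steps in total.

<a href='https://github.com/Macielyoung/T5Corrector'>GitHub project page</a>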

Load the model:

```python

# Load the tokenizer and the model

from transformers import T5Tokenizer, T5ForConditionalGeneration

pretrained = "Maciel/T5Corrector-base-v1"

tokenizer = T5Tokenizer.from_pretrained(pretrained)

model = T5ForConditionalGeneration.from_pretrained(pretrained)

```

Run inference with the model:

```python

import torch

# Use the GPU when available, otherwise fall back to the CPU.
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
model.to(device)

def correct(text, max_length):
    # Tokenize the input and move the tensors to the model's device.
    model_inputs = tokenizer(text,
                             max_length=max_length,
                             truncation=True,
                             return_tensors="pt").to(device)
    # Generate with beam search combined with sampling.
    output = model.generate(**model_inputs,
                            num_beams=5,
                            no_repeat_ngram_size=4,
                            do_sample=True,
                            early_stopping=True,
                            max_length=max_length,
                            return_dict_in_generate=True,
                            output_scores=True)
    # Decode the first returned sequence into text.
    pred_output = tokenizer.batch_decode(output.sequences, skip_special_tokens=True)[0]
    return pred_output

text = "听到这个消息,心情真的蓝瘦"
correction = correct(text, max_length=32)
print(correction)

```

### Examples

```

Example 1:

input: 听到这个消息,心情真的蓝瘦

output: 听到这个消息,心情真的难受

Example 2:

input: 脑子有点胡涂了,这道题冥冥学过还没有做出来

output: 脑子有点糊涂了,这道题明明学过还没有做出来

Example 3:

input: 今天天气不太好,我的心情也不是很偷快

output: 今天天气不太好,我的心情也不是很愉快

```
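The training corpus for this model is described as being built by replacing characters in clean sentences with homophones, near-homophones, and visually similar characters. The following is a rough sketch of how one such (corrupted, clean) pair could be produced. The confusion table is a tiny hypothetical one derived from the examples above, and the function name and replacement probability are assumptions; the project's actual pipeline may differ.

```python
import random

# Hypothetical confusion table: correct character -> plausible wrong characters.
CONFUSIONS = {"愉": ["偷"], "糊": ["胡"], "明": ["冥"]}


def corrupt(sentence, confusions, replace_prob, rng):
    """Replace confusable characters with a wrong variant with probability `replace_prob`."""
    corrupted = []
    for char in sentence:
        candidates = confusions.get(char)
        if candidates and rng.random() < replace_prob:
            corrupted.append(rng.choice(candidates))
        else:
            corrupted.append(char)
    return "".join(corrupted)


rng = random.Random(0)
target = "今天天气不太好,我的心情也不是很愉快"
# replace_prob=1.0 makes the example deterministic; a real corpus would use a lower rate.
source = corrupt(target, CONFUSIONS, replace_prob=1.0, rng=rng)
pair = (source, target)
print(pair)
```

The corrupted sentence serves as the model input and the clean sentence as the expected output.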

|

VuDucQuang/ControlNet

|

VuDucQuang

| 2023-03-27T07:55:34Z | 1 | 0 |

transformers

|

[

"transformers",

"art",

"controlnet",

"stable-diffusion",

"arxiv:2302.05543",

"base_model:runwayml/stable-diffusion-v1-5",

"base_model:adapter:runwayml/stable-diffusion-v1-5",

"license:openrail",

"endpoints_compatible",

"region:us"

] | null | 2023-03-27T07:52:00Z |

---

license: openrail

base_model: runwayml/stable-diffusion-v1-5

tags:

- art

- controlnet

- stable-diffusion

---

# ControlNet - *Canny Version*

ControlNet is a neural network structure to control diffusion models by adding extra conditions.

This checkpoint corresponds to the ControlNet conditioned on **Canny edges**.

It can be used in combination with [Stable Diffusion](https://huggingface.co/docs/diffusers/api/pipelines/stable_diffusion/text2img).

## Model Details

- **Developed by:** Lvmin Zhang, Maneesh Agrawala

- **Model type:** Diffusion-based text-to-image generation model

- **Language(s):** English

- **License:** [The CreativeML OpenRAIL M license](https://huggingface.co/spaces/CompVis/stable-diffusion-license) is an [Open RAIL M license](https://www.licenses.ai/blog/2022/8/18/naming-convention-of-responsible-ai-licenses), adapted from the work that [BigScience](https://bigscience.huggingface.co/) and [the RAIL Initiative](https://www.licenses.ai/) are jointly carrying out in the area of responsible AI licensing. See also [the article about the BLOOM Open RAIL license](https://bigscience.huggingface.co/blog/the-bigscience-rail-license) on which our license is based.

- **Resources for more information:** [GitHub Repository](https://github.com/lllyasviel/ControlNet), [Paper](https://arxiv.org/abs/2302.05543).

- **Cite as:**

@misc{zhang2023adding,

title={Adding Conditional Control to Text-to-Image Diffusion Models},

author={Lvmin Zhang and Maneesh Agrawala},

year={2023},

eprint={2302.05543},

archivePrefix={arXiv},

primaryClass={cs.CV}

}

## Introduction

ControlNet was proposed in [*Adding Conditional Control to Text-to-Image Diffusion Models*](https://arxiv.org/abs/2302.05543) by

Lvmin Zhang, Maneesh Agrawala.

The abstract reads as follows:

*We present a neural network structure, ControlNet, to control pretrained large diffusion models to support additional input conditions.

The ControlNet learns task-specific conditions in an end-to-end way, and the learning is robust even when the training dataset is small (< 50k).

Moreover, training a ControlNet is as fast as fine-tuning a diffusion model, and the model can be trained on a personal devices.

Alternatively, if powerful computation clusters are available, the model can scale to large amounts (millions to billions) of data.

We report that large diffusion models like Stable Diffusion can be augmented with ControlNets to enable conditional inputs like edge maps, segmentation maps, keypoints, etc.

This may enrich the methods to control large diffusion models and further facilitate related applications.*

## Released Checkpoints

The authors released 8 different checkpoints, each trained with [Stable Diffusion v1-5](https://huggingface.co/runwayml/stable-diffusion-v1-5) on a different type of conditioning:

| Model Name | Control Image Overview | Control Image Example | Generated Image Example |
|---|---|---|---|
|[lllyasviel/sd-controlnet-canny](https://huggingface.co/lllyasviel/sd-controlnet-canny)<br/> *Trained with Canny edge detection* | A monochrome image with white edges on a black background.|<a href="https://huggingface.co/takuma104/controlnet_dev/blob/main/gen_compare/control_images/converted/control_bird_canny.png"><img width="64" style="margin:0;padding:0;" src="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/control_images/converted/control_bird_canny.png"/></a>|<a href="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/output_images/diffusers/output_bird_canny_1.png"><img width="64" src="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/output_images/diffusers/output_bird_canny_1.png"/></a>|
|[lllyasviel/sd-controlnet-depth](https://huggingface.co/lllyasviel/sd-controlnet-depth)<br/> *Trained with MiDaS depth estimation* |A grayscale image with black representing deep areas and white representing shallow areas.|<a href="https://huggingface.co/takuma104/controlnet_dev/blob/main/gen_compare/control_images/converted/control_vermeer_depth.png"><img width="64" src="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/control_images/converted/control_vermeer_depth.png"/></a>|<a href="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/output_images/diffusers/output_vermeer_depth_2.png"><img width="64" src="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/output_images/diffusers/output_vermeer_depth_2.png"/></a>|
|[lllyasviel/sd-controlnet-hed](https://huggingface.co/lllyasviel/sd-controlnet-hed)<br/> *Trained with HED edge detection (soft edge)* |A monochrome image with white soft edges on a black background.|<a href="https://huggingface.co/takuma104/controlnet_dev/blob/main/gen_compare/control_images/converted/control_bird_hed.png"><img width="64" src="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/control_images/converted/control_bird_hed.png"/></a>|<a href="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/output_images/diffusers/output_bird_hed_1.png"><img width="64" src="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/output_images/diffusers/output_bird_hed_1.png"/></a> |
|[lllyasviel/sd-controlnet-mlsd](https://huggingface.co/lllyasviel/sd-controlnet-mlsd)<br/> *Trained with M-LSD line detection* |A monochrome image composed only of white straight lines on a black background.|<a href="https://huggingface.co/takuma104/controlnet_dev/blob/main/gen_compare/control_images/converted/control_room_mlsd.png"><img width="64" src="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/control_images/converted/control_room_mlsd.png"/></a>|<a href="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/output_images/diffusers/output_room_mlsd_0.png"><img width="64" src="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/output_images/diffusers/output_room_mlsd_0.png"/></a>|
|[lllyasviel/sd-controlnet-normal](https://huggingface.co/lllyasviel/sd-controlnet-normal)<br/> *Trained with normal map* |A [normal mapped](https://en.wikipedia.org/wiki/Normal_mapping) image.|<a href="https://huggingface.co/takuma104/controlnet_dev/blob/main/gen_compare/control_images/converted/control_human_normal.png"><img width="64" src="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/control_images/converted/control_human_normal.png"/></a>|<a href="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/output_images/diffusers/output_human_normal_1.png"><img width="64" src="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/output_images/diffusers/output_human_normal_1.png"/></a>|
|[lllyasviel/sd-controlnet-openpose](https://huggingface.co/lllyasviel/sd-controlnet-openpose)<br/> *Trained with OpenPose bone image* |An [OpenPose bone](https://github.com/CMU-Perceptual-Computing-Lab/openpose) image.|<a href="https://huggingface.co/takuma104/controlnet_dev/blob/main/gen_compare/control_images/converted/control_human_openpose.png"><img width="64" src="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/control_images/converted/control_human_openpose.png"/></a>|<a href="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/output_images/diffusers/output_human_openpose_0.png"><img width="64" src="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/output_images/diffusers/output_human_openpose_0.png"/></a>|
|[lllyasviel/sd-controlnet-scribble](https://huggingface.co/lllyasviel/sd-controlnet-scribble)<br/> *Trained with human scribbles* |A hand-drawn monochrome image with white outlines on a black background.|<a href="https://huggingface.co/takuma104/controlnet_dev/blob/main/gen_compare/control_images/converted/control_vermeer_scribble.png"><img width="64" src="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/control_images/converted/control_vermeer_scribble.png"/></a>|<a href="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/output_images/diffusers/output_vermeer_scribble_0.png"><img width="64" src="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/output_images/diffusers/output_vermeer_scribble_0.png"/></a> |
|[lllyasviel/sd-controlnet-seg](https://huggingface.co/lllyasviel/sd-controlnet-seg)<br/>*Trained with semantic segmentation* |An image following the [ADE20K](https://groups.csail.mit.edu/vision/datasets/ADE20K/) segmentation protocol.|<a href="https://huggingface.co/takuma104/controlnet_dev/blob/main/gen_compare/control_images/converted/control_room_seg.png"><img width="64" src="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/control_images/converted/control_room_seg.png"/></a>|<a href="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/output_images/diffusers/output_room_seg_1.png"><img width="64" src="https://huggingface.co/takuma104/controlnet_dev/resolve/main/gen_compare/output_images/diffusers/output_room_seg_1.png"/></a> |

## Example

It is recommended to use the checkpoint with [Stable Diffusion v1-5](https://huggingface.co/runwayml/stable-diffusion-v1-5), since that is the model it was trained on.

Experimentally, the checkpoint can also be used with other diffusion models, such as DreamBooth fine-tuned versions of Stable Diffusion.

**Note**: If you want to process an image to create the auxiliary conditioning, external dependencies are required as shown below:

1. Install OpenCV:

```sh

$ pip install opencv-contrib-python

```

2. Install `diffusers` and related packages:

```sh

$ pip install diffusers transformers git+https://github.com/huggingface/accelerate.git

```

3. Run the code:

```python
import os

import cv2
import numpy as np
import torch
from PIL import Image
from diffusers import StableDiffusionControlNetPipeline, ControlNetModel, UniPCMultistepScheduler
from diffusers.utils import load_image

# Load the source image and convert it to a NumPy array for OpenCV.
image = load_image("https://huggingface.co/lllyasviel/sd-controlnet-hed/resolve/main/images/bird.png")
image = np.array(image)

# Run Canny edge detection; the two thresholds control edge sensitivity.
low_threshold = 100
high_threshold = 200
image = cv2.Canny(image, low_threshold, high_threshold)

# Canny returns a single channel; replicate it into a 3-channel control image.
image = image[:, :, None]
image = np.concatenate([image, image, image], axis=2)
image = Image.fromarray(image)

controlnet = ControlNetModel.from_pretrained(
    "fusing/stable-diffusion-v1-5-controlnet-canny", torch_dtype=torch.float16
)
pipe = StableDiffusionControlNetPipeline.from_pretrained(
    "runwayml/stable-diffusion-v1-5", controlnet=controlnet, safety_checker=None, torch_dtype=torch.float16
)
pipe.scheduler = UniPCMultistepScheduler.from_config(pipe.scheduler.config)

# Remove if you do not have xformers installed
# see https://huggingface.co/docs/diffusers/v0.13.0/en/optimization/xformers#installing-xformers
# for installation instructions
pipe.enable_xformers_memory_efficient_attention()
pipe.enable_model_cpu_offload()

# Generate an image conditioned on the prompt and the edge map.
image = pipe("bird", image, num_inference_steps=20).images[0]

# Make sure the output directory exists before saving.
os.makedirs("images", exist_ok=True)
image.save('images/bird_canny_out.png')
```

### Training

The Canny edge model was trained on 3M pairs of edge images and captions. Training took 600 GPU-hours on Nvidia A100 80G GPUs, with Stable Diffusion 1.5 as the base model.

### Blog post

For more information, please also have a look at the [official ControlNet Blog Post](https://huggingface.co/blog/controlnet).

|

xinyixiuxiu/albert-xxlarge-v2-SST2-finetuned-epoch-2

|

xinyixiuxiu

| 2023-03-27T07:53:52Z | 61 | 0 |

transformers

|

[

"transformers",

"tf",

"albert",

"text-classification",

"generated_from_keras_callback",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2023-03-27T07:20:57Z |

---

license: apache-2.0

tags:

- generated_from_keras_callback

model-index:

- name: xinyixiuxiu/albert-xxlarge-v2-SST2-finetuned-epoch-2

results: []

---

<!-- This model card has been generated automatically according to the information Keras had access to. You should

probably proofread and complete it, then remove this comment. -->

# xinyixiuxiu/albert-xxlarge-v2-SST2-finetuned-epoch-2

This model is a fine-tuned version of [albert-xxlarge-v2](https://huggingface.co/albert-xxlarge-v2) on an unknown dataset.

It achieves the following results on the evaluation set:

- Train Loss: 0.2721

- Train Accuracy: 0.8858

- Validation Loss: 0.1265

- Validation Accuracy: 0.9564

- Epoch: 0

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- optimizer: {'name': 'Adam', 'learning_rate': 3e-06, 'decay': 0.0, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-07, 'amsgrad': False}

- training_precision: float32
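To make the listed optimizer settings concrete, here is a minimal sketch of one textbook Adam update on a single scalar parameter, using the hyperparameters above as defaults. It is an illustration only, not Keras's exact implementation (which places `epsilon` slightly differently), and the function name `adam_step` and the example gradient are assumptions.

```python
import math


def adam_step(param, grad, m, v, t,
              learning_rate=3e-06, beta_1=0.9, beta_2=0.999, epsilon=1e-07):
    """One textbook Adam update for a scalar parameter (hypothetical helper)."""
    # Exponential moving averages of the gradient and the squared gradient.
    m = beta_1 * m + (1 - beta_1) * grad
    v = beta_2 * v + (1 - beta_2) * grad * grad
    # Bias correction compensates for the zero initialisation of m and v.
    m_hat = m / (1 - beta_1 ** t)
    v_hat = v / (1 - beta_2 ** t)
    # The step is scaled by learning_rate and normalised by sqrt(v_hat).
    param = param - learning_rate * m_hat / (math.sqrt(v_hat) + epsilon)
    return param, m, v


# First step (t=1) from param=0 with an illustrative gradient of 1.0.
new_param, m1, v1 = adam_step(param=0.0, grad=1.0, m=0.0, v=0.0, t=1)
```

On the first step the bias-corrected ratio is close to 1, so the parameter moves by roughly the learning rate, which shows how small a `3e-06` step is.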

### Training results

| Train Loss | Train Accuracy | Validation Loss | Validation Accuracy | Epoch |

|:----------:|:--------------:|:---------------:|:-------------------:|:-----:|

| 0.2721 | 0.8858 | 0.1265 | 0.9564 | 0 |

### Framework versions

- Transformers 4.21.1

- TensorFlow 2.7.0

- Datasets 2.10.1

- Tokenizers 0.12.1

|

jamesimmanuel/a2c-AntBulletEnv-v0

|

jamesimmanuel

| 2023-03-27T07:40:37Z | 0 | 0 |

stable-baselines3

|

[

"stable-baselines3",

"AntBulletEnv-v0",

"deep-reinforcement-learning",

"reinforcement-learning",

"model-index",

"region:us"

] |

reinforcement-learning

| 2023-03-27T07:39:29Z |

---

library_name: stable-baselines3

tags:

- AntBulletEnv-v0

- deep-reinforcement-learning

- reinforcement-learning

- stable-baselines3

model-index:

- name: A2C

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: AntBulletEnv-v0

type: AntBulletEnv-v0

metrics:

- type: mean_reward

value: 1812.33 +/- 61.20

name: mean_reward

verified: false

---

# **A2C** Agent playing **AntBulletEnv-v0**

This is a trained model of an **A2C** agent playing **AntBulletEnv-v0** using the [stable-baselines3 library](https://github.com/DLR-RM/stable-baselines3).

## Usage (with Stable-baselines3)

TODO: Add your code

```python

from stable_baselines3 import ...

from huggingface_sb3 import load_from_hub

...

```
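Since the usage snippet above is left as a TODO, the following is a hedged sketch of how such a checkpoint is typically loaded with `huggingface_sb3` and `stable_baselines3`. The checkpoint file name is not given in this card; the `checkpoint_filename` helper and its `<algo>-<env>.zip` naming pattern are assumptions, so check the repository's file list before relying on them.

```python
def checkpoint_filename(algo: str, env_id: str) -> str:
    """Guess a checkpoint name as '<algo>-<env_id>.zip' (assumed convention)."""
    return f"{algo.lower()}-{env_id}.zip"


def load_agent(repo_id: str, filename: str):
    """Download a checkpoint from the Hub and load it as an A2C model."""
    # Imported lazily so the helper above works without the RL stack installed.
    from huggingface_sb3 import load_from_hub
    from stable_baselines3 import A2C

    # load_from_hub downloads the file and returns its local path.
    checkpoint_path = load_from_hub(repo_id=repo_id, filename=filename)
    return A2C.load(checkpoint_path)


# Hypothetical file name; verify it against the files in the repository.
guessed_name = checkpoint_filename("A2C", "AntBulletEnv-v0")
```

With both packages installed, a call such as `load_agent("jamesimmanuel/a2c-AntBulletEnv-v0", guessed_name)` should return the model, provided the guessed file name matches the one actually uploaded.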

|

swl-models/DanMix-v2.3

|

swl-models

| 2023-03-27T07:36:06Z | 0 | 2 | null |

[

"license:creativeml-openrail-m",

"region:us"

] | null | 2023-03-27T04:11:44Z |

---

license: creativeml-openrail-m

---

|

circulus/alpaca-lora-7b

|

circulus

| 2023-03-27T07:21:53Z | 0 | 0 | null |

[

"license:gpl-3.0",

"region:us"

] | null | 2023-03-27T06:50:53Z |

---

license: gpl-3.0

---

This repo contains a low-rank adapter for the LLaMA-7b model, fit on the Stanford Alpaca dataset.

It was also modified to enhance performance by training for 8 epochs.

|

YoungMasterFromSect/Trauter_LoRAs

|

YoungMasterFromSect

| 2023-03-27T07:11:06Z | 0 | 519 | null |

[

"anime",

"region:us"

] | null | 2023-01-14T12:43:26Z |

---

tags:

- anime

---

NOTICE: My LoRAs require a high number of tags to look good. I will fix this later on and update all of my LoRAs if everything works out.

# General Information

- [Overview](#overview)

- [Installation](#installation)

- [Usage](#usage)

- [SocialMedia](#socialmedia)

- [Plans for the future](#plans-for-the-future)

# Overview

Welcome to the place where I host my LoRAs. In short, a LoRA is just a checkpoint trained on a specific artstyle or subject that you load into your WebUI and use together with other models.

Although you can use it with any model, the effects of LoRA will vary between them.

Most of the previews use models that come from [WarriorMama777](https://huggingface.co/WarriorMama777/OrangeMixs).

For more information about them, you can visit the original LoRA repository: https://github.com/cloneofsimo/lora

Every image posted here or on the other sites has metadata in it that you can load in the PNG Info tab of your WebUI to get the prompt of the image.
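The generation settings stored in that metadata look like the "Steps: ..., Sampler: ..." lines shown in the sample prompts on this page. As a rough sketch, such a line can be split into key/value pairs as below. The function name `parse_settings` is an assumption, and the parser is deliberately naive: it assumes no value contains a comma followed by a space.

```python
SAMPLE = (
    "Steps: 20, Sampler: DPM++ SDE Karras, CFG scale: 8, Seed: 2010519914, "
    "Size: 512x768, Model hash: a87fd7da, Denoising strength: 0.57, "
    "Clip skip: 2, ENSD: 31337, Hires upscale: 1.8, "
    "Hires upscaler: Latent (nearest-exact)"
)


def parse_settings(line: str) -> dict:
    """Split a 'Key: value, Key: value' settings line into a dict of strings."""
    settings = {}
    for field in line.split(", "):
        # partition keeps everything after the first ': ' as the value.
        key, separator, value = field.partition(": ")
        if separator:
            settings[key.strip()] = value.strip()
    return settings


parsed = parse_settings(SAMPLE)
```

All values stay as strings, so convert fields such as `parsed["Steps"]` or `parsed["CFG scale"]` to numbers yourself if you need them.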

Everything I do here is free of charge!

I don't guarantee that my LoRAs will give you good results. If you think they are bad, don't use them.

# Installation

To use them in your WebUI, please install the extension linked below, following its installation guide:

https://github.com/kohya-ss/sd-webui-additional-networks#installation

# Usage

All of my LoRAs are to be used with their original danbooru tag. For example:

```

asuna \(blue archive\)

```
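In the WebUI prompt syntax, plain parentheses are treated as emphasis markers, which is why the tag above is written with backslashes. A small sketch of a helper that converts a raw danbooru tag into that form is shown here. The name `to_prompt_tag` is an assumption, as is the choice to turn underscores into spaces to match the example.

```python
import re


def to_prompt_tag(danbooru_tag: str) -> str:
    """Convert a danbooru tag like 'asuna_(blue_archive)' to prompt form."""
    # Danbooru tags use underscores; the example prompt uses spaces.
    tag = danbooru_tag.strip().replace("_", " ")
    # Escape parentheses that are not already preceded by a backslash.
    return re.sub(r"(?<!\\)([()])", r"\\\1", tag)


example = to_prompt_tag("asuna_(blue_archive)")
```

Tags that are already escaped pass through unchanged, so the helper can be applied to a mixed list safely.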

My LoRAs have suffixes that tell you how much they were trained, using words like "soft" and "hard", where soft stands for a lower amount of training and hard for a higher amount of training.

A more heavily trained LoRA is harder to modify but provides higher consistency in details and original outfits, while a less trained one is more flexible but may get details wrong.

All the LoRAs that aren't marked with PRUNED require tagging everything about the character to get its likeness.

You have to tag every part of the character, such as eyes, hair, breasts, accessories, special features, etc.

In theory, this should allow LoRAs to be more flexible, but it requires always prompting those things, because the character tag doesn't have those features baked into it.

From 1/16 I will test releasing pruned versions that will not require prompting those things.

The usage of them is also explained in this guide:

https://github.com/kohya-ss/sd-webui-additional-networks#how-to-use

# SocialMedia

Here are some places where you can find the other stuff I post, or buy me a coffee if you feel like it:

[Twitter](https://twitter.com/Trauter8)

[Pixiv](https://www.pixiv.net/en/users/88153216)

[Buymeacoffee](https://www.buymeacoffee.com/Trauter)

# Plans for the future

- Remake all of my LoRAs into pruned versions, which will be more user-friendly and easier to use, with 768x768 resolution for training and a better learning rate.

- After finishing all the LoRAs that I want to make, go over the old ones and try to make them better.

- Accept suggestions for almost every character.

- Maybe get motivation to actually tag outfits.

# LoRAs

- [Genshin Impact](#genshin-impact)

- [Eula](#eula)

- [Barbara](#barbara)

- [Diluc](#diluc)

- [Mona](#mona)

- [Rosaria](#rosaria)

- [Yae Miko](#yae-miko)

- [Raiden Shogun](#raiden-shogun)

- [Kujou Sara](#kujou-sara)

- [Shenhe](#shenhe)

- [Yelan](#yelan)

- [Jean](#jean)

- [Lisa](#lisa)

- [Zhongli](#zhongli)

- [Yoimiya](#yoimiya)

- [Blue Archive](#blue-archive)

- [Rikuhachima Aru](#rikuhachima-aru)

- [Ichinose Asuna](#ichinose-asuna)

- [Fate Grand Order](#fate-grand-order)

- [Minamoto-no-Raikou](#minamoto-no-raikou)

- [Misc. Characters](#misc.-characters)

- [Aponia](#aponia)

- [Reisalin Stout](#reisalin-stout)

- [Artstyles](#artstyles)

- [Pozer](#pozer)

# Genshin Impact

- # Eula

[<img src="https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/1.png" width="512" height="768">](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/1.png)

<details>

<summary>Sample Prompt</summary>

<pre>

masterpiece, best quality, eula \(genshin impact\), 1girl, solo, thighhighs, weapon, gloves, breasts, sword, hairband, necktie, holding, leotard, bangs, greatsword, cape, thighs, boots, blue hair, looking at viewer, arms up, vision (genshin impact), medium breasts, holding sword, long sleeves, holding weapon, purple eyes, medium hair, copyright name, hair ornament, thigh boots, black leotard, black hairband, blue necktie, black thighhighs, yellow eyes, closed mouth

Negative prompt: (worst quality, low quality, extra digits, loli, loli face:1.3)

Steps: 20, Sampler: DPM++ SDE Karras, CFG scale: 8, Seed: 2010519914, Size: 512x768, Model hash: a87fd7da, Denoising strength: 0.57, Clip skip: 2, ENSD: 31337, Hires upscale: 1.8, Hires upscaler: Latent (nearest-exact)

</pre>

</details>

- [Examples](https://www.flickr.com/photos/197461145@N04/albums/72177720305293076)

- [Download](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/tree/main/LoRA/Genshin-Impact/Eula)

- # Barbara

[<img src="https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/bar.png" width="512" height="768">](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/bar.png)

<details>

<summary>Sample Prompt</summary>

<pre>

masterpiece, best quality, eula \(genshin impact\), 1girl, solo, thighhighs, weapon, gloves, breasts, sword, hairband, necktie, holding, leotard, bangs, greatsword, cape, thighs, boots, blue hair, looking at viewer, arms up, vision (genshin impact), medium breasts, holding sword, long sleeves, holding weapon, purple eyes, medium hair, copyright name, hair ornament, thigh boots, black leotard, black hairband, blue necktie, black thighhighs, yellow eyes, closed mouth

Negative prompt: (worst quality, low quality, extra digits, loli, loli face:1.3)

Steps: 20, Sampler: DPM++ SDE Karras, CFG scale: 8, Seed: 2010519914, Size: 512x768, Model hash: a87fd7da, Denoising strength: 0.57, Clip skip: 2, ENSD: 31337, Hires upscale: 1.8, Hires upscaler: Latent (nearest-exact)

</pre>

</details>

- [Examples](https://www.flickr.com/photos/197461145@N04/albums/72177720305435137)

- [Download](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/tree/main/LoRA/Genshin-Impact/Barbara)

- # Diluc

[<img src="https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/dil.png" width="512" height="768">](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/dil.png)

<details>

<summary>Sample Prompt</summary>

<pre>

masterpiece, best quality, eula \(genshin impact\), 1girl, solo, thighhighs, weapon, gloves, breasts, sword, hairband, necktie, holding, leotard, bangs, greatsword, cape, thighs, boots, blue hair, looking at viewer, arms up, vision (genshin impact), medium breasts, holding sword, long sleeves, holding weapon, purple eyes, medium hair, copyright name, hair ornament, thigh boots, black leotard, black hairband, blue necktie, black thighhighs, yellow eyes, closed mouth

Negative prompt: (worst quality, low quality, extra digits, loli, loli face:1.3)

Steps: 20, Sampler: DPM++ SDE Karras, CFG scale: 8, Seed: 2010519914, Size: 512x768, Model hash: a87fd7da, Denoising strength: 0.57, Clip skip: 2, ENSD: 31337, Hires upscale: 1.8, Hires upscaler: Latent (nearest-exact)

</pre>

</details>

- [Examples](https://www.flickr.com/photos/197461145@N04/albums/72177720305427945)

- [Download](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/tree/main/LoRA/Genshin-Impact/Diluc)

- # Mona

[<img src="https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/mon.png" width="512" height="768">](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/mon.png)

<details>

<summary>Sample Prompt</summary>

<pre>

masterpiece, best quality, eula \(genshin impact\), 1girl, solo, thighhighs, weapon, gloves, breasts, sword, hairband, necktie, holding, leotard, bangs, greatsword, cape, thighs, boots, blue hair, looking at viewer, arms up, vision (genshin impact), medium breasts, holding sword, long sleeves, holding weapon, purple eyes, medium hair, copyright name, hair ornament, thigh boots, black leotard, black hairband, blue necktie, black thighhighs, yellow eyes, closed mouth

Negative prompt: (worst quality, low quality, extra digits, loli, loli face:1.3)

Steps: 20, Sampler: DPM++ SDE Karras, CFG scale: 8, Seed: 2010519914, Size: 512x768, Model hash: a87fd7da, Denoising strength: 0.57, Clip skip: 2, ENSD: 31337, Hires upscale: 1.8, Hires upscaler: Latent (nearest-exact)

</pre>

</details>

- [Examples](https://www.flickr.com/photos/197461145@N04/albums/72177720305428050)

- [Download](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/tree/main/LoRA/Genshin-Impact/Mona)

- # Rosaria

[<img src="https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/ros.png" width="512" height="768">](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/ros.png)

<details>

<summary>Sample Prompt</summary>

<pre>

masterpiece, best quality, eula \(genshin impact\), 1girl, solo, thighhighs, weapon, gloves, breasts, sword, hairband, necktie, holding, leotard, bangs, greatsword, cape, thighs, boots, blue hair, looking at viewer, arms up, vision (genshin impact), medium breasts, holding sword, long sleeves, holding weapon, purple eyes, medium hair, copyright name, hair ornament, thigh boots, black leotard, black hairband, blue necktie, black thighhighs, yellow eyes, closed mouth

Negative prompt: (worst quality, low quality, extra digits, loli, loli face:1.3)

Steps: 20, Sampler: DPM++ SDE Karras, CFG scale: 8, Seed: 2010519914, Size: 512x768, Model hash: a87fd7da, Denoising strength: 0.57, Clip skip: 2, ENSD: 31337, Hires upscale: 1.8, Hires upscaler: Latent (nearest-exact)

</pre>

</details>

- [Examples](https://www.flickr.com/photos/197461145@N04/albums/72177720305428015)

- [Download](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/tree/main/LoRA/Genshin-Impact/Rosaria)

- # Yae Miko

[<img src="https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/yae.png" width="512" height="768">](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/yae.png)

<details>

<summary>Sample Prompt</summary>

<pre>

masterpiece, best quality, eula \(genshin impact\), 1girl, solo, thighhighs, weapon, gloves, breasts, sword, hairband, necktie, holding, leotard, bangs, greatsword, cape, thighs, boots, blue hair, looking at viewer, arms up, vision (genshin impact), medium breasts, holding sword, long sleeves, holding weapon, purple eyes, medium hair, copyright name, hair ornament, thigh boots, black leotard, black hairband, blue necktie, black thighhighs, yellow eyes, closed mouth

Negative prompt: (worst quality, low quality, extra digits, loli, loli face:1.3)

Steps: 20, Sampler: DPM++ SDE Karras, CFG scale: 8, Seed: 2010519914, Size: 512x768, Model hash: a87fd7da, Denoising strength: 0.57, Clip skip: 2, ENSD: 31337, Hires upscale: 1.8, Hires upscaler: Latent (nearest-exact)

</pre>

</details>

- [Examples](https://www.flickr.com/photos/197461145@N04/albums/72177720305448948)

- [Download](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/tree/main/LoRA/Genshin-Impact/yae%20miko)

- # Raiden Shogun

- [<img src="https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/ra.png" width="512" height="768">](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/ra.png)

<details>

<summary>Sample Prompt</summary>

<pre>

masterpiece, best quality, raiden shogun, 1girl, breasts, solo, cleavage, kimono, bangs, sash, mole, obi, tassel, blush, large breasts, purple eyes, japanese clothes, long hair, looking at viewer, hand on own chest, hair ornament, purple hair, bridal gauntlets, closed mouth, purple kimono, blue hair, mole under eye, shoulder armor, long sleeves, wide sleeves, mitsudomoe (shape), tomoe (symbol), cowboy shot

Negative prompt: lowres, bad anatomy, bad hands, text, error, missing fingers, extra digit, fewer digits, cropped, worst quality, low quality, normal quality, jpeg artifacts,signature, watermark, username, blurry, artist name, from behind

Steps: 30, Sampler: DPM++ 2M Karras, CFG scale: 4.5, Seed: 2544310848, Size: 704x384, Model hash: 2bba3136, Denoising strength: 0.5, Clip skip: 2, ENSD: 31337, Hires upscale: 2.05, Hires upscaler: 4x_foolhardy_Remacri

</pre>

</details>

- [Examples](https://www.flickr.com/photos/197461145@N04/albums/72177720305313633)

- [Download](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/tree/main/LoRA/Genshin-Impact/Raiden%20Shogun)

- # Kujou Sara

- [<img src="https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/ku.png" width="512" height="768">](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/ku.png)

<details>

<summary>Sample Prompt</summary>

<pre>

masterpiece, best quality, kujou sara, 1girl, solo, mask, gloves, bangs, bodysuit, gradient, sidelocks, signature, yellow eyes, bird mask, mask on head, looking at viewer, short hair, black hair, detached sleeves, simple background, japanese clothes, black gloves, black bodysuit, wide sleeves, white background, upper body, gradient background, closed mouth, hair ornament, artist name, elbow gloves

Negative prompt: (worst quality, low quality:1.4)

Steps: 20, Sampler: DPM++ SDE Karras, CFG scale: 8, Seed: 3966121353, Size: 512x768, Model hash: 931f9552, Denoising strength: 0.5, Clip skip: 2, ENSD: 31337, Hires upscale: 1.8, Hires steps: 20, Hires upscaler: Latent (nearest-exact)

</pre>

</details>

- [Examples](https://www.flickr.com/photos/197461145@N04/albums/72177720305311498)

- [Download](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/tree/main/LoRA/Genshin-Impact/Kujou%20Sara)

- # Shenhe

- [<img src="https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/sh.png" width="512" height="768">](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/sh.png)

<details>

<summary>Sample Prompt</summary>

<pre>

masterpiece, best quality, shenhe \(genshin impact\), 1girl, solo, breasts, bodysuit, tassel, gloves, bangs, braid, outdoors, bird, jewelry, earrings, sky, breast curtain, long hair, hair over one eye, covered navel, blue eyes, looking at viewer, hair ornament, large breasts, shoulder cutout, clothing cutout, very long hair, hip vent, braided ponytail, partially fingerless gloves, black bodysuit, tassel earrings, black gloves, gold trim, cowboy shot, white hair

Negative prompt: (worst quality, low quality, extra digits, loli, loli face:1.3)

Steps: 22, Sampler: DPM++ SDE Karras, CFG scale: 6.5, Seed: 573332187, Size: 512x768, Model hash: a87fd7da, Denoising strength: 0.57, Clip skip: 2, ENSD: 31337, Hires upscale: 2, Hires upscaler: Latent (nearest-exact)

</pre>

</details>

- [Examples](https://www.flickr.com/photos/197461145@N04/albums/72177720305307599)

- [Download](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/tree/main/LoRA/Genshin-Impact/Shenhe)

- # Yelan

- [<img src="https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/10.png" width="512" height="768">](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/10.png)

<details>

<summary>Sample Prompt</summary>

<pre>

masterpiece, best quality, yelan \(genshin impact\), 1girl, breasts, solo, bangs, armpits, smile, sky, cleavage, jewelry, gloves, jacket, dice, mole, cloud, grin, dress, blush, earrings, thighs, tassel, sleeveless, day, outdoors, large breasts, looking at viewer, green eyes, arms up, short hair, blue hair, vision (genshin impact), fur trim, white jacket, blue sky, mole on breast, arms behind head, bob cut, multicolored hair, black hair, fur-trimmed jacket, elbow gloves, bare shoulders, blue dress, parted lips, diagonal bangs, clothing cutout, pelvic curtain, asymmetrical gloves

Negative prompt: lowres, bad anatomy, bad hands, text, error, missing fingers, extra digit, fewer digits, cropped, worst quality, low quality, normal quality, jpeg artifacts,signature, watermark, username, blurry, artist name

Steps: 23, Sampler: DPM++ SDE Karras, CFG scale: 6.5, Seed: 575500509, Size: 512x768, Model hash: a87fd7da, Denoising strength: 0.58, Clip skip: 2, ENSD: 31337, Hires upscale: 2.4, Hires upscaler: Latent (nearest-exact)

</pre>

</details>

- [Examples](https://www.flickr.com/photos/197461145@N04/albums/72177720305296897)

- [Download](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/tree/main/LoRA/Genshin-Impact/Yelan)

- # Jean

- [<img src="https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/333.png" width="512" height="768">](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/333.png)

<details>

<summary>Sample Prompt</summary>

<pre>

masterpiece, best quality, jean \(genshin impact\), 1girl, breasts, solo, cleavage, strapless, smile, ponytail, bangs, jewelry, earrings, bow, capelet, signature, sidelocks, cape, corset, shiny, blonde hair, long hair, upper body, detached sleeves, purple eyes, hair between eyes, hair bow, parted lips, looking to the side, large breasts, detached collar, medium breasts, blue capelet, white background, black bow, blue eyes, bare shoulders, simple background

Negative prompt: lowres, bad anatomy, bad hands, text, error, missing fingers, extra digit, fewer digits, cropped, worst quality, low quality, normal quality, jpeg artifacts,signature, watermark, username, blurry, artist name, (worst quality, low quality, extra digits, loli, loli face:1.3)

Steps: 22, Sampler: DPM++ SDE Karras, CFG scale: 7.5, Seed: 32930253, Size: 512x768, Model hash: ffa7b160, Denoising strength: 0.59, Clip skip: 2, ENSD: 31337, Hires upscale: 1.85, Hires upscaler: Latent (nearest-exact)

</pre>

</details>

- [Examples](https://www.flickr.com/photos/197461145@N04/albums/72177720305307594)

- [Download](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/tree/main/LoRA/Genshin-Impact/Jean)

- # Lisa

[<img src="https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/lis.png" width="512" height="768">](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/lis.png)

<details>

<summary>Sample Prompt</summary>

<pre>

masterpiece, best quality, lisa \(genshin impact\), 1girl, solo, hat, breasts, gloves, cleavage, flower, smile, bangs, dress, rose, jewelry, witch, capelet, green eyes, witch hat, brown hair, purple headwear, looking at viewer, white background, large breasts, long hair, simple background, black gloves, purple flower, hair between eyes, upper body, purple rose, parted lips, purple capelet, hat flower, multicolored dress, hair ornament, multicolored clothes, vision (genshin impact)

Negative prompt: lowres, bad anatomy, bad hands, text, error, missing fingers, extra digit, fewer digits, cropped, worst quality, low quality, normal quality, jpeg artifacts,signature, watermark, username, blurry, artist name, worst quality, low quality, extra digits, loli, loli face

Steps: 23, Sampler: DPM++ SDE Karras, CFG scale: 6.5, Seed: 350134479, Size: 512x768, Model hash: ffa7b160, Denoising strength: 0.57, Clip skip: 2, ENSD: 31337, Hires upscale: 1.85, Hires upscaler: Latent (nearest-exact)

</pre>

</details>

- [Examples](https://www.flickr.com/photos/197461145@N04/albums/72177720305290865)

- [Download](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/tree/main/LoRA/Genshin-Impact/Lisa)

- # Zhongli

[<img src="https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/zho.png" width="512" height="768">](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/zho.png)

<details>

<summary>Sample Prompt</summary>

<pre>

masterpiece, best quality, zhongli \(genshin impact\), solo, 1boy, bangs, jewelry, tassel, earrings, ponytail, low ponytail, gloves, necktie, jacket, shirt, formal, petals, suit, makeup, eyeliner, eyeshadow, male focus, long hair, brown hair, multicolored hair, long sleeves, tassel earrings, single earring, collared shirt, hair between eyes, black gloves, closed mouth, yellow eyes, gradient hair, orange hair, simple background

Negative prompt: lowres, bad anatomy, bad hands, text, error, missing fingers, extra digit, fewer digits, cropped, worst quality, low quality, normal quality, jpeg artifacts,signature, watermark, username, blurry, artist name, worst quality, low quality, extra digits, loli, loli face

Steps: 22, Sampler: DPM++ SDE Karras, CFG scale: 7, Seed: 88418604, Size: 512x768, Model hash: a87fd7da, Denoising strength: 0.58, Clip skip: 2, ENSD: 31337, Hires upscale: 2, Hires upscaler: Latent (nearest-exact)

</pre>

</details>

- [Examples](https://www.flickr.com/photos/197461145@N04/albums/72177720305311423)

- [Download](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/tree/main/LoRA/Genshin-Impact/Zhongli)

- # Yoimiya

[<img src="https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/Yoi.png" width="512" height="768">](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/Yoi.png)

<details>

<summary>Sample Prompt</summary>

<pre>

masterpiece, best quality, eula \(genshin impact\), 1girl, solo, thighhighs, weapon, gloves, breasts, sword, hairband, necktie, holding, leotard, bangs, greatsword, cape, thighs, boots, blue hair, looking at viewer, arms up, vision (genshin impact), medium breasts, holding sword, long sleeves, holding weapon, purple eyes, medium hair, copyright name, hair ornament, thigh boots, black leotard, black hairband, blue necktie, black thighhighs, yellow eyes, closed mouth

Negative prompt: (worst quality, low quality, extra digits, loli, loli face:1.3)

Steps: 20, Sampler: DPM++ SDE Karras, CFG scale: 8, Seed: 2010519914, Size: 512x768, Model hash: a87fd7da, Denoising strength: 0.57, Clip skip: 2, ENSD: 31337, Hires upscale: 1.8, Hires upscaler: Latent (nearest-exact)

</pre>

</details>

- [Examples](https://www.flickr.com/photos/197461145@N04/albums/72177720305448498)

- [Download](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/tree/main/LoRA/Genshin-Impact/Yoimiya)
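Each sample prompt above ends with a settings line of comma-separated `Key: value` pairs. As a minimal sketch, assuming that layout holds and that no value contains `, ` itself, the line can be split into a dictionary for reuse in scripts. The helper name `parse_settings` is hypothetical and not part of any tool; the example string is copied from the Yoimiya entry.

```python
def parse_settings(line: str) -> dict[str, str]:
    """Split a 'Key: value, Key: value' settings line into a dict of strings."""
    settings = {}
    for part in line.split(", "):
        # Split on the first ': ' only, so values keep any later colons.
        key, _, value = part.partition(": ")
        settings[key.strip()] = value.strip()
    return settings


# Settings line copied verbatim from the Yoimiya sample prompt.
line = (
    "Steps: 20, Sampler: DPM++ SDE Karras, CFG scale: 8, Seed: 2010519914, "
    "Size: 512x768, Model hash: a87fd7da, Denoising strength: 0.57, "
    "Clip skip: 2, ENSD: 31337, Hires upscale: 1.8, "
    "Hires upscaler: Latent (nearest-exact)"
)

settings = parse_settings(line)

# Values stay strings; convert the ones you need.
width, height = (int(n) for n in settings["Size"].split("x"))
steps = int(settings["Steps"])
cfg_scale = float(settings["CFG scale"])
```

Values are kept as strings on purpose, since fields such as `Model hash` and `Sampler` are not numeric; convert only the fields you actually use.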

# Blue Archive

- # Rikuhachima Aru

[<img src="https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/22.png" width="512" height="768">](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/22.png)

<details>

<summary>Sample Prompt</summary>

<pre>

aru \(blue archive\), masterpiece, best quality, 1girl, solo, horns, skirt, gloves, shirt, halo, window, breasts, blush, sweatdrop, ribbon, coat, bangs, :d, smile, indoors, standing, plant, thighs, sweat, jacket, day, sunlight, long hair, white shirt, white gloves, black skirt, looking at viewer, open mouth, long sleeves, red ribbon, fur trim, neck ribbon, red hair, fur-trimmed coat, collared shirt, orange eyes, medium breasts, brown coat, hands up, side slit, coat on shoulders, v-shaped eyebrows, yellow eyes, potted plant, fur collar, shirt tucked in, demon horns, high-waist skirt, dress shirt

Negative prompt: lowres, bad anatomy, bad hands, text, error, missing fingers, extra digit, fewer digits, cropped, worst quality, low quality, normal quality, jpeg artifacts,signature, watermark, username, blurry, artist name, (worst quality, low quality, extra digits, loli, loli face:1.3)

Steps: 22, Sampler: DPM++ SDE Karras, CFG scale: 6.5, Seed: 1190296645, Size: 512x768, Model hash: ffa7b160, Denoising strength: 0.58, Clip skip: 2, ENSD: 31337, Hires upscale: 1.85, Hires upscaler: Latent (nearest-exact)

</pre>

</details>

- [Examples](https://www.flickr.com/photos/197461145@N04/albums/72177720305293051)

- [Download](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/tree/main/LoRA/Blue-Archive/Rikuhachima%20Aru)

- # Ichinose Asuna

[<img src="https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/asu.png" width="512" height="768">](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/asu.png)

<details>

<summary>Sample Prompt</summary>

<pre>

photorealistic, (hyperrealistic:1.2), (extremely detailed CG unity 8k wallpaper), (ultra-detailed), (mature female:1.2), masterpiece, best quality, asuna \(blue archive\), 1girl, breasts, solo, gloves, pantyhose, ass, leotard, smile, tail, halo, grin, blush, bangs, sideboob, highleg, standing, mole, strapless, ribbon, thighs, animal ears, playboy bunny, rabbit ears, long hair, white gloves, very long hair, large breasts, high heels, blue leotard, hair over one eye, fake animal ears, blue eyes, looking at viewer, white footwear, rabbit tail, official alternate costume, full body, elbow gloves, simple background, white background, absurdly long hair, bare shoulders, detached collar, thighband pantyhose, leaning forward, highleg leotard, strapless leotard, hair ribbon, brown pantyhose, black pantyhose, mole on breast, light brown hair, brown hair, looking back, fake tail

Negative prompt: lowres, bad anatomy, bad hands, text, error, missing fingers, extra digit, fewer digits, cropped, worst quality, low quality, normal quality, jpeg artifacts,signature, watermark, username, blurry, artist name, (worst quality, low quality, extra digits, loli, loli face:1.3)

Steps: 22, Sampler: DPM++ SDE Karras, CFG scale: 6.5, Seed: 2052579935, Size: 512x768, Model hash: ffa7b160, Clip skip: 2, ENSD: 31337

</pre>

</details>

- [Examples](https://www.flickr.com/photos/197461145@N04/albums/72177720305292996)

- [Download](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/tree/main/LoRA/Blue-Archive/Ichinose%20Asuna)

# Fate/Grand Order

- # Minamoto-no-Raikou

[<img src="https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/3.png" width="512" height="768">](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/3.png)

<details>

<summary>Sample Prompt</summary>

<pre>

mature female, masterpiece, best quality, minamoto no raikou \(fate\), 1girl, breasts, solo, bodysuit, gloves, bangs, smile, rope, heart, blush, thighs, armor, kote, long hair, purple hair, fingerless gloves, purple eyes, large breasts, very long hair, looking at viewer, parted bangs, ribbed sleeves, black gloves, arm guards, covered navel, low-tied long hair, purple bodysuit, japanese armor

Negative prompt: lowres, bad anatomy, bad hands, text, error, missing fingers, extra digit, fewer digits, cropped, worst quality, low quality, normal quality, jpeg artifacts,signature, watermark, username, blurry, artist name, (worst quality, low quality, extra digits, loli, loli face:1.3)

Steps: 22, Sampler: DPM++ SDE Karras, CFG scale: 7.5, Seed: 3383453781, Size: 512x768, Model hash: ffa7b160, Denoising strength: 0.59, Clip skip: 2, ENSD: 31337, Hires upscale: 2, Hires upscaler: Latent (nearest-exact)

</pre>

</details>

- [Examples](https://www.flickr.com/photos/197461145@N04/albums/72177720305290900)

- [Download](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/tree/main/LoRA/Fate-Grand-Order/Minamoto-no-Raikou)

# Misc. Characters

- # Aponia

[<img src="https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/apo.png" width="512" height="768">](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/apo.png)

<details>

<summary>Sample Prompt</summary>

<pre>

masterpiece, best quality, eula \(genshin impact\), 1girl, solo, thighhighs, weapon, gloves, breasts, sword, hairband, necktie, holding, leotard, bangs, greatsword, cape, thighs, boots, blue hair, looking at viewer, arms up, vision (genshin impact), medium breasts, holding sword, long sleeves, holding weapon, purple eyes, medium hair, copyright name, hair ornament, thigh boots, black leotard, black hairband, blue necktie, black thighhighs, yellow eyes, closed mouth

Negative prompt: (worst quality, low quality, extra digits, loli, loli face:1.3)

Steps: 20, Sampler: DPM++ SDE Karras, CFG scale: 8, Seed: 2010519914, Size: 512x768, Model hash: a87fd7da, Denoising strength: 0.57, Clip skip: 2, ENSD: 31337, Hires upscale: 1.8, Hires upscaler: Latent (nearest-exact)

</pre>

</details>

- [Examples](https://www.flickr.com/photos/197461145@N04/albums/72177720305445819)

- [Download](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/tree/main/LoRA/Misc.%20Characters/Aponia)

- # Reisalin Stout

[<img src="https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/ryza.png" width="512" height="768">](https://huggingface.co/YoungMasterFromSect/Trauter_LoRAs/resolve/main/LoRA/Previews/ryza.png)

<details>

<summary>Sample Prompt</summary>

<pre>