modelId | author | last_modified | downloads | likes | library_name | tags | pipeline_tag | createdAt | card |

|---|---|---|---|---|---|---|---|---|---|

TokenfreeEMNLPSubmission/bert-base-finetuned-masakhaner-amh

|

TokenfreeEMNLPSubmission

| 2023-04-04T05:08:24Z | 106 | 0 |

transformers

|

[

"transformers",

"pytorch",

"bert",

"token-classification",

"en",

"arxiv:1810.04805",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

token-classification

| 2023-04-04T05:08:12Z |

---

language:

- en

license: apache-2.0

---

# BERT multilingual base model (cased)

Pretrained model on the English dataset using a masked language modeling (MLM) objective.

It was introduced in [this paper](https://arxiv.org/abs/1810.04805) and first released in

[this repository](https://github.com/google-research/bert). This model is case sensitive: it makes a difference

between english and English.

## Model description

BERT is a transformers model pretrained on a large corpus of English data in a self-supervised fashion. This means

it was pretrained on the raw texts only, with no humans labelling them in any way (which is why it can use lots of

publicly available data) with an automatic process to generate inputs and labels from those texts. More precisely, it

was pretrained with two objectives:

- Masked language modeling (MLM): taking a sentence, the model randomly masks 15% of the words in the input, then runs
the entire masked sentence through the model and has to predict the masked words. This is different from traditional
recurrent neural networks (RNNs) that usually see the words one after the other, or from autoregressive models like
GPT which internally mask the future tokens. It allows the model to learn a bidirectional representation of the
sentence.

- Next sentence prediction (NSP): the model concatenates two masked sentences as inputs during pretraining. Sometimes
they correspond to sentences that were next to each other in the original text, sometimes not. The model then has to
predict whether the two sentences followed each other or not.
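The masking step of the MLM objective can be sketched in plain Python. This is an illustration only: it assumes whitespace tokenization and replaces every selected token with `[MASK]`, while the card itself only states that 15% of the words are masked. The function `mask_tokens` and its parameters are hypothetical names, not part of any library.

```python
import random

def mask_tokens(tokens, mask_rate=0.15, mask_token="[MASK]", seed=0):
    """Return (masked_tokens, labels); labels hold the original token at masked positions, else None."""
    if not tokens:
        return [], []
    rng = random.Random(seed)
    # Mask roughly `mask_rate` of the tokens, and at least one.
    n_to_mask = max(1, round(len(tokens) * mask_rate))
    positions = set(rng.sample(range(len(tokens)), n_to_mask))
    masked = [mask_token if i in positions else tok for i, tok in enumerate(tokens)]
    labels = [tok if i in positions else None for i, tok in enumerate(tokens)]
    return masked, labels

tokens = "the quick brown fox jumps over the lazy dog near the quiet river bank today".split()
masked, labels = mask_tokens(tokens)
print(masked)
print(labels)
```

The model would receive `masked` as input and be trained to recover the non-`None` entries of `labels`.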

The pretrained model has been finetuned for one specific language for one specific task.

### How to use

Here is how to use this model to get the features of a given text in PyTorch:

```python

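from transformers import BertTokenizer, BertModel
tokenizer = BertTokenizer.from_pretrained("mushfiqur11/<repo_name>")  # "<repo_name>" is the card's own placeholder
model = BertModel.from_pretrained("mushfiqur11/<repo_name>")
features = model(**tokenizer("Replace me by any text you'd like.", return_tensors="pt")).last_hidden_state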

```

|

kambehmw/Reinforce-v2

|

kambehmw

| 2023-04-04T05:01:08Z | 0 | 0 | null |

[

"Pixelcopter-PLE-v0",

"reinforce",

"reinforcement-learning",

"custom-implementation",

"deep-rl-class",

"model-index",

"region:us"

] |

reinforcement-learning

| 2023-04-04T05:01:04Z |

---

tags:

- Pixelcopter-PLE-v0

- reinforce

- reinforcement-learning

- custom-implementation

- deep-rl-class

model-index:
- name: Reinforce-v2
  results:
  - task:
      type: reinforcement-learning
      name: reinforcement-learning
    dataset:
      name: Pixelcopter-PLE-v0
      type: Pixelcopter-PLE-v0
    metrics:
    - type: mean_reward
      value: 34.20 +/- 24.48
      name: mean_reward
      verified: false

---

# **Reinforce** Agent playing **Pixelcopter-PLE-v0**

This is a trained model of a **Reinforce** agent playing **Pixelcopter-PLE-v0**.

To learn how to use this model and train your own, see Unit 4 of the Deep Reinforcement Learning Course: https://huggingface.co/deep-rl-course/unit4/introduction

|

Orreo/ColorRough_LoRA

|

Orreo

| 2023-04-04T04:46:18Z | 0 | 1 | null |

[

"license:artistic-2.0",

"region:us"

] | null | 2023-04-04T04:28:47Z |

---

license: artistic-2.0

---

Commercial use is strictly prohibited.

Trigger word: rough skecth

Recommended prompt: skecth style

Recommended weight: 0.3 to 0.7

Depending on the base model, the output may come out broken.

|

davis901/roberta-frame-CP

|

davis901

| 2023-04-04T04:40:41Z | 105 | 0 |

transformers

|

[

"transformers",

"pytorch",

"roberta",

"text-classification",

"autotrain",

"unk",

"dataset:davis901/autotrain-data-imdb-textclassification",

"co2_eq_emissions",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2023-04-04T03:16:27Z |

---

tags:

- autotrain

- text-classification

language:

- unk

widget:

- text: "I love AutoTrain 🤗"

datasets:

- davis901/autotrain-data-imdb-textclassification

co2_eq_emissions:
  emissions: 3.313265712444502

---

# Model Trained Using AutoTrain

- Problem type: Binary Classification

- Model ID: 46471115134

- CO2 Emissions (in grams): 3.3133

## Validation Metrics

- Loss: 0.006

- Accuracy: 0.999

- Precision: 0.999

- Recall: 1.000

- AUC: 1.000

- F1: 0.999

## Usage

You can use cURL to access this model:

```

$ curl -X POST -H "Authorization: Bearer YOUR_API_KEY" -H "Content-Type: application/json" -d '{"inputs": "I love AutoTrain"}' https://api-inference.huggingface.co/models/davis901/autotrain-imdb-textclassification-46471115134

```

Or Python API:

```

from transformers import AutoModelForSequenceClassification, AutoTokenizer

model = AutoModelForSequenceClassification.from_pretrained("davis901/autotrain-imdb-textclassification-46471115134", use_auth_token=True)

tokenizer = AutoTokenizer.from_pretrained("davis901/autotrain-imdb-textclassification-46471115134", use_auth_token=True)

inputs = tokenizer("I love AutoTrain", return_tensors="pt")

outputs = model(**inputs)

```
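The `outputs` object returned above carries raw logits (exposed as `outputs.logits` in Transformers) rather than probabilities. A minimal sketch of the softmax step that turns logits into class probabilities follows; the logit values are hypothetical and no model is downloaded.

```python
import math

def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    shift = max(logits)
    exps = [math.exp(x - shift) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical logits for the two classes of a binary classifier.
example_logits = [-2.0, 3.0]
probs = softmax(example_logits)
predicted_class = probs.index(max(probs))
print(probs, predicted_class)
```

Which label each class index maps to depends on the model configuration, which the card does not list.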

|

Humayoun/Donut5WithRandomPlacing

|

Humayoun

| 2023-04-04T03:38:14Z | 12 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"vision-encoder-decoder",

"image-text-to-text",

"generated_from_trainer",

"dataset:imagefolder",

"license:mit",

"endpoints_compatible",

"region:us"

] |

image-text-to-text

| 2023-04-04T02:40:28Z |

---

license: mit

tags:

- generated_from_trainer

datasets:

- imagefolder

model-index:
- name: Donut5WithRandomPlacing
  results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# Donut5WithRandomPlacing

This model is a fine-tuned version of [humayoun/Donut4](https://huggingface.co/humayoun/Donut4) on the imagefolder dataset.

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 2

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 15

- mixed_precision_training: Native AMP

### Training results

### Framework versions

- Transformers 4.28.0.dev0

- Pytorch 2.0.0+cu118

- Datasets 2.11.0

- Tokenizers 0.13.2

|

davis901/autotrain-imdb-textclassification-46471115127

|

davis901

| 2023-04-04T03:22:52Z | 103 | 0 |

transformers

|

[

"transformers",

"pytorch",

"roberta",

"text-classification",

"autotrain",

"unk",

"dataset:davis901/autotrain-data-imdb-textclassification",

"co2_eq_emissions",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2023-04-04T03:15:58Z |

---

tags:

- autotrain

- text-classification

language:

- unk

widget:

- text: "I love AutoTrain 🤗"

datasets:

- davis901/autotrain-data-imdb-textclassification

co2_eq_emissions:
  emissions: 2.683579313085358

---

# Model Trained Using AutoTrain

- Problem type: Binary Classification

- Model ID: 46471115127

- CO2 Emissions (in grams): 2.6836

## Validation Metrics

- Loss: 0.000

- Accuracy: 1.000

- Precision: 1.000

- Recall: 1.000

- AUC: 1.000

- F1: 1.000

## Usage

You can use cURL to access this model:

```

$ curl -X POST -H "Authorization: Bearer YOUR_API_KEY" -H "Content-Type: application/json" -d '{"inputs": "I love AutoTrain"}' https://api-inference.huggingface.co/models/davis901/autotrain-imdb-textclassification-46471115127

```

Or Python API:

```

from transformers import AutoModelForSequenceClassification, AutoTokenizer

model = AutoModelForSequenceClassification.from_pretrained("davis901/autotrain-imdb-textclassification-46471115127", use_auth_token=True)

tokenizer = AutoTokenizer.from_pretrained("davis901/autotrain-imdb-textclassification-46471115127", use_auth_token=True)

inputs = tokenizer("I love AutoTrain", return_tensors="pt")

outputs = model(**inputs)

```

|

huggingtweets/dash_eats-lica_rezende

|

huggingtweets

| 2023-04-04T02:59:37Z | 135 | 0 |

transformers

|

[

"transformers",

"pytorch",

"gpt2",

"text-generation",

"huggingtweets",

"en",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2023-04-04T02:59:25Z |

---

language: en

thumbnail: https://github.com/borisdayma/huggingtweets/blob/master/img/logo.png?raw=true

tags:

- huggingtweets

widget:

- text: "My dream is"

---

<div class="inline-flex flex-col" style="line-height: 1.5;">

<div class="flex">

<div

style="display:inherit; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('https://pbs.twimg.com/profile_images/1539146021808533504/g-XjE19Z_400x400.jpg')">

</div>

<div

style="display:inherit; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('https://pbs.twimg.com/profile_images/556455602331742208/KWkVe0TV_400x400.jpeg')">

</div>

<div

style="display:none; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('')">

</div>

</div>

<div style="text-align: center; margin-top: 3px; font-size: 16px; font-weight: 800">🤖 AI CYBORG 🤖</div>

<div style="text-align: center; font-size: 16px; font-weight: 800">Olivia’s World & dasha</div>

<div style="text-align: center; font-size: 14px;">@dash_eats-lica_rezende</div>

</div>

I was made with [huggingtweets](https://github.com/borisdayma/huggingtweets).

Create your own bot based on your favorite user with [the demo](https://colab.research.google.com/github/borisdayma/huggingtweets/blob/master/huggingtweets-demo.ipynb)!

## How does it work?

The model uses the following pipeline.

To understand how the model was developed, check the [W&B report](https://wandb.ai/wandb/huggingtweets/reports/HuggingTweets-Train-a-Model-to-Generate-Tweets--VmlldzoxMTY5MjI).

## Training data

The model was trained on tweets from Olivia’s World & dasha.

| Data | Olivia’s World | dasha |

| --- | --- | --- |

| Tweets downloaded | 1115 | 3199 |

| Retweets | 118 | 510 |

| Short tweets | 120 | 574 |

| Tweets kept | 877 | 2115 |
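The "Tweets kept" row is consistent with dropping retweets and short tweets from the downloaded set. The check below uses only the numbers from the table; that the two filtered groups do not overlap is inferred from the totals, not stated by the card.

```python
# Counts copied from the table above.
stats = {
    "Olivia's World": {"downloaded": 1115, "retweets": 118, "short": 120, "kept": 877},
    "dasha": {"downloaded": 3199, "retweets": 510, "short": 574, "kept": 2115},
}

def tweets_kept(downloaded, retweets, short):
    """Tweets remaining after removing retweets and short tweets (assumed disjoint)."""
    return downloaded - retweets - short

for user, s in stats.items():
    computed = tweets_kept(s["downloaded"], s["retweets"], s["short"])
    print(user, computed, computed == s["kept"])
```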

[Explore the data](https://wandb.ai/wandb/huggingtweets/runs/5n8zwv6v/artifacts), which is tracked with [W&B artifacts](https://docs.wandb.com/artifacts) at every step of the pipeline.

## Training procedure

The model is based on a pre-trained [GPT-2](https://huggingface.co/gpt2) which is fine-tuned on @dash_eats-lica_rezende's tweets.

Hyperparameters and metrics are recorded in the [W&B training run](https://wandb.ai/wandb/huggingtweets/runs/rpkswkc0) for full transparency and reproducibility.

At the end of training, [the final model](https://wandb.ai/wandb/huggingtweets/runs/rpkswkc0/artifacts) is logged and versioned.

## How to use

You can use this model directly with a pipeline for text generation:

```python

from transformers import pipeline

generator = pipeline('text-generation',

model='huggingtweets/dash_eats-lica_rezende')

generator("My dream is", num_return_sequences=5)

```

## Limitations and bias

The model suffers from [the same limitations and bias as GPT-2](https://huggingface.co/gpt2#limitations-and-bias).

In addition, the data present in the user's tweets further affects the text generated by the model.

## About

*Built by Boris Dayma*

[Follow @borisdayma on Twitter](https://twitter.com/intent/follow?screen_name=borisdayma)

For more details, visit the project repository.

[huggingtweets on GitHub](https://github.com/borisdayma/huggingtweets)

|

RoachTheHorse/sd-class-butterflies-32

|

RoachTheHorse

| 2023-04-04T02:24:53Z | 30 | 0 |

diffusers

|

[

"diffusers",

"pytorch",

"unconditional-image-generation",

"diffusion-models-class",

"license:mit",

"diffusers:DDPMPipeline",

"region:us"

] |

unconditional-image-generation

| 2023-04-04T02:23:23Z |

---

license: mit

tags:

- pytorch

- diffusers

- unconditional-image-generation

- diffusion-models-class

---

# Model Card for Unit 1 of the [Diffusion Models Class 🧨](https://github.com/huggingface/diffusion-models-class)

This model is a diffusion model for unconditional image generation of cute 🦋.

## Usage

```python

from diffusers import DDPMPipeline

pipeline = DDPMPipeline.from_pretrained('RoachTheHorse/sd-class-butterflies-32')

image = pipeline().images[0]

image

```

|

dongdongcui/DriveGPT

|

dongdongcui

| 2023-04-04T02:04:06Z | 0 | 0 | null |

[

"pytorch",

"question-answering",

"en",

"region:us"

] |

question-answering

| 2023-03-24T23:28:37Z |

---

language:

- en

pipeline_tag: question-answering

---

|

Larxel/q-Taxi-v3

|

Larxel

| 2023-04-04T01:39:26Z | 0 | 0 | null |

[

"Taxi-v3",

"q-learning",

"reinforcement-learning",

"custom-implementation",

"model-index",

"region:us"

] |

reinforcement-learning

| 2023-04-04T01:39:23Z |

---

tags:

- Taxi-v3

- q-learning

- reinforcement-learning

- custom-implementation

model-index:
- name: q-Taxi-v3
  results:
  - task:
      type: reinforcement-learning
      name: reinforcement-learning
    dataset:
      name: Taxi-v3
      type: Taxi-v3
    metrics:
    - type: mean_reward
      value: 7.56 +/- 2.71
      name: mean_reward
      verified: false

---

# **Q-Learning** Agent playing **Taxi-v3**

This is a trained model of a **Q-Learning** agent playing **Taxi-v3**.

## Usage

```python

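import gym  # the classic Gym API is assumed here; the card omits its imports

# `load_from_hub` is not a library function; it is assumed to be the helper defined in the Deep RL course notebook.
model = load_from_hub(repo_id="Larxel/q-Taxi-v3", filename="q-learning.pkl")

# Don't forget to check if you need to add additional attributes (is_slippery=False etc)
env = gym.make(model["env_id"])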

```
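Once a tabular Q-learning agent is loaded, acting with it amounts to picking the highest-valued action for the current state. The sketch below assumes the Q-table is a list of rows indexed by state. The table values are a toy example, and the key under which the pickled model stores its table is not stated in the card, so none is assumed here.

```python
def greedy_action(q_table, state):
    """Index of the action with the largest Q-value for `state` (first one on ties)."""
    row = q_table[state]
    return max(range(len(row)), key=row.__getitem__)

# Toy Q-table: 3 states x 2 actions (illustrative values, not taken from the model).
toy_q_table = [
    [0.1, 0.9],
    [0.5, 0.2],
    [0.0, 0.0],
]
actions = [greedy_action(toy_q_table, s) for s in range(len(toy_q_table))]
print(actions)
```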

|

Geekay/flower-classifier

|

Geekay

| 2023-04-04T01:19:21Z | 193 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"vit",

"image-classification",

"huggingpics",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

image-classification

| 2023-04-04T01:19:11Z |

---

tags:

- image-classification

- pytorch

- huggingpics

metrics:

- accuracy

model-index:
- name: flower-classifier
  results:
  - task:
      name: Image Classification
      type: image-classification
    metrics:
    - name: Accuracy
      type: accuracy
      value: 0.9701492786407471

---

# flower-classifier

Autogenerated by HuggingPics🤗🖼️

Create your own image classifier for **anything** by running [the demo on Google Colab](https://colab.research.google.com/github/nateraw/huggingpics/blob/main/HuggingPics.ipynb).

Report any issues with the demo at the [github repo](https://github.com/nateraw/huggingpics).

## Example Images

#### lily

#### orchids

#### roses

|

sgoodfriend/ppo-unet-MicrortsDefeatRandomEnemySparseReward-v3

|

sgoodfriend

| 2023-04-04T00:49:31Z | 0 | 0 |

rl-algo-impls

|

[

"rl-algo-impls",

"MicrortsDefeatRandomEnemySparseReward-v3",

"ppo",

"deep-reinforcement-learning",

"reinforcement-learning",

"model-index",

"region:us"

] |

reinforcement-learning

| 2023-04-04T00:49:25Z |

---

library_name: rl-algo-impls

tags:

- MicrortsDefeatRandomEnemySparseReward-v3

- ppo

- deep-reinforcement-learning

- reinforcement-learning

model-index:
- name: ppo
  results:
  - metrics:
    - type: mean_reward
      value: 131.04 +/- 15.12
      name: mean_reward
    task:
      type: reinforcement-learning
      name: reinforcement-learning
    dataset:
      name: MicrortsDefeatRandomEnemySparseReward-v3
      type: MicrortsDefeatRandomEnemySparseReward-v3

---

# **PPO** Agent playing **MicrortsDefeatRandomEnemySparseReward-v3**

This is a trained model of a **PPO** agent playing **MicrortsDefeatRandomEnemySparseReward-v3** using the [/sgoodfriend/rl-algo-impls](https://github.com/sgoodfriend/rl-algo-impls) repo.

All models trained at this commit can be found at https://api.wandb.ai/links/sgoodfriend/ww60gryx.

## Training Results

This model was selected from 3 training runs of **PPO** agents using different initial seeds. These agents were trained by checking out [388c8ed](https://github.com/sgoodfriend/rl-algo-impls/tree/388c8ed9f7db1d5f5c380d981aeb8a85f34eeacb). The best and last models were kept from each run. This submission loads the best model from each run, re-evaluates them, and selects the one with the highest score (mean - std) in these latest evaluations.

| algo | env | seed | reward_mean | reward_std | eval_episodes | best | wandb_url |

|:-------|:-----------------------------------------|-------:|--------------:|-------------:|----------------:|:-------|:-----------------------------------------------------------------------------|

| ppo | MicrortsDefeatRandomEnemySparseReward-v3 | 1 | 134.783 | 30.3561 | 24 | | [wandb](https://wandb.ai/sgoodfriend/rl-algo-impls-benchmarks/runs/40wjv5yz) |

| ppo | MicrortsDefeatRandomEnemySparseReward-v3 | 2 | 131.042 | 15.1237 | 24 | * | [wandb](https://wandb.ai/sgoodfriend/rl-algo-impls-benchmarks/runs/ysujlrg4) |

| ppo | MicrortsDefeatRandomEnemySparseReward-v3 | 3 | 137.025 | 21.6223 | 24 | | [wandb](https://wandb.ai/sgoodfriend/rl-algo-impls-benchmarks/runs/t81hur93) |
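The (mean - std) selection rule can be checked against the table with a few lines of Python. This is a sketch using only the table's numbers, not the repository's own selection code.

```python
# (seed, reward_mean, reward_std) copied from the table above.
runs = [
    (1, 134.783, 30.3561),
    (2, 131.042, 15.1237),
    (3, 137.025, 21.6223),
]

def score(run):
    """Selection score: mean reward minus one standard deviation."""
    _, mean, std = run
    return mean - std

best_seed = max(runs, key=score)[0]
for run in runs:
    print(run[0], round(score(run), 4))
print("best seed:", best_seed)
```

Seed 2 has the highest score even though seed 3 has the highest mean, which matches the `*` in the `best` column.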

### Prerequisites: Weights & Biases (WandB)

Training and benchmarking assume you have a Weights & Biases project to upload runs to.
By default, training goes to an rl-algo-impls project while benchmarks go to
rl-algo-impls-benchmarks. During training and benchmarking runs, videos of the best
models and the model weights are uploaded to WandB.

Before doing anything below, you'll need to create a wandb account and run `wandb login`.

## Usage

/sgoodfriend/rl-algo-impls: https://github.com/sgoodfriend/rl-algo-impls

Note: While the model state dictionary and hyperparameters are saved, the latest
implementation could differ enough that it no longer reproduces similar
results. You might need to check out the commit the agent was trained on:
[388c8ed](https://github.com/sgoodfriend/rl-algo-impls/tree/388c8ed9f7db1d5f5c380d981aeb8a85f34eeacb).

```

# Downloads the model, sets hyperparameters, and runs agent for 3 episodes

python enjoy.py --wandb-run-path=sgoodfriend/rl-algo-impls-benchmarks/ysujlrg4

```

Setup hasn't been completely worked out yet, so you might be best served by using Google

Colab starting from the

[colab_enjoy.ipynb](https://github.com/sgoodfriend/rl-algo-impls/blob/main/colab_enjoy.ipynb)

notebook.

## Training

For the best chance of reproducing these results, check out the
commit the agent was trained on: [388c8ed](https://github.com/sgoodfriend/rl-algo-impls/tree/388c8ed9f7db1d5f5c380d981aeb8a85f34eeacb). While
training is deterministic, different hardware will give different results.

```

python train.py --algo ppo --env MicrortsDefeatRandomEnemySparseReward-v3 --seed 2

```

Setup hasn't been completely worked out yet, so you might be best served by using Google

Colab starting from the

[colab_train.ipynb](https://github.com/sgoodfriend/rl-algo-impls/blob/main/colab_train.ipynb)

notebook.

## Benchmarking (with Lambda Labs instance)

This and other models from https://api.wandb.ai/links/sgoodfriend/ww60gryx were generated by running a script on a Lambda

Labs instance. In a Lambda Labs instance terminal:

```

git clone git@github.com:sgoodfriend/rl-algo-impls.git

cd rl-algo-impls

bash ./lambda_labs/setup.sh

wandb login

bash ./lambda_labs/benchmark.sh [-a {"ppo a2c dqn vpg"}] [-e ENVS] [-j {6}] [-p {rl-algo-impls-benchmarks}] [-s {"1 2 3"}]

```

### Alternative: Google Colab Pro+

As an alternative,
[colab_benchmark.ipynb](https://github.com/sgoodfriend/rl-algo-impls/tree/main/benchmarks#:~:text=colab_benchmark.ipynb)
can be used. However, this requires a Google Colab Pro+ subscription and running across
4 separate instances because otherwise running all jobs will exceed the 24-hour limit.

## Hyperparameters

This isn't exactly the format of hyperparams in hyperparams/ppo.yml, but instead the Wandb Run Config. However, it's very

close and has some additional data:

```

additional_keys_to_log:
- microrts_stats
algo: ppo
algo_hyperparams:
  batch_size: 3072
  clip_range: 0.1
  clip_range_decay: none
  clip_range_vf: 0.1
  ent_coef: 0.01
  learning_rate: 0.00025
  learning_rate_decay: spike
  max_grad_norm: 0.5
  n_epochs: 4
  n_steps: 512
  ppo2_vf_coef_halving: true
  vf_coef: 0.5
device: auto
env: unet-MicrortsDefeatRandomEnemySparseReward-v3
env_hyperparams:
  bots:
    randomBiasedAI: 24
  env_type: microrts
  make_kwargs:
    map_path: maps/16x16/basesWorkers16x16.xml
    max_steps: 2000
    num_selfplay_envs: 0
    render_theme: 2
    reward_weight:
    - 10
    - 1
    - 1
    - 0.2
    - 1
    - 4
  n_envs: 24
env_id: MicrortsDefeatRandomEnemySparseReward-v3
eval_params:
  deterministic: false
n_timesteps: 2000000
policy_hyperparams:
  activation_fn: relu
  actor_head_style: unet
  cnn_flatten_dim: 256
  cnn_style: microrts
  v_hidden_sizes:
  - 256
  - 128
seed: 2
use_deterministic_algorithms: true
wandb_entity: null
wandb_group: null
wandb_project_name: rl-algo-impls-benchmarks
wandb_tags:
- benchmark_388c8ed
- host_155-248-197-5
- branch_unet
- v0.0.8

```

|

kachinni/emotion-recognition

|

kachinni

| 2023-04-04T00:32:40Z | 0 | 1 | null |

[

"region:us"

] | null | 2023-04-04T00:29:18Z |

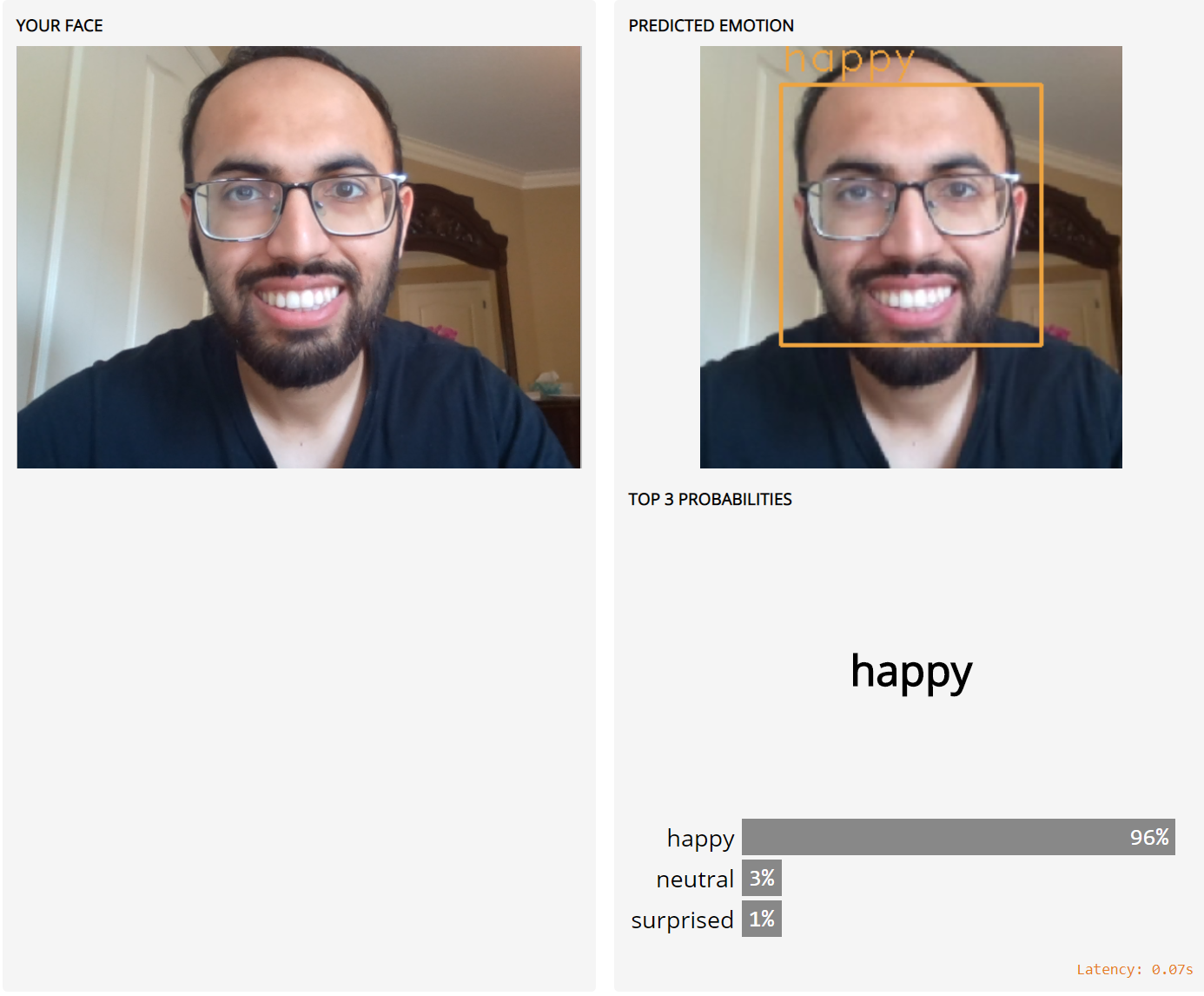

# Emotion Recognition on Gradio

This repo contains code to launch a [Gradio](https://github.com/gradio-app/gradio) interface for Emotion Recognition on [Gradio Hub](https://hub.gradio.app)

Please see the **original repo**: [omar178/Emotion-recognition](https://github.com/omar178/Emotion-recognition)

|

EchoShao8899/t5_event_relation_extractor

|

EchoShao8899

| 2023-04-04T00:08:28Z | 115 | 1 |

transformers

|

[

"transformers",

"pytorch",

"safetensors",

"t5",

"text2text-generation",

"license:cc",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text2text-generation

| 2023-02-06T09:06:58Z |

---

license: cc

---

This is the event-relation extraction model in ACCENT (An Automatic Event Commonsense Evaluation Metric for Open-Domain Dialogue Systems).

|

globophobe/q-FrozenLake-v1-4x4-noSlippery

|

globophobe

| 2023-04-03T23:38:57Z | 0 | 0 | null |

[

"FrozenLake-v1-4x4-no_slippery",

"q-learning",

"reinforcement-learning",

"custom-implementation",

"model-index",

"region:us"

] |

reinforcement-learning

| 2023-04-03T23:38:54Z |

---

tags:

- FrozenLake-v1-4x4-no_slippery

- q-learning

- reinforcement-learning

- custom-implementation

model-index:
- name: q-FrozenLake-v1-4x4-noSlippery
  results:
  - task:
      type: reinforcement-learning
      name: reinforcement-learning
    dataset:
      name: FrozenLake-v1-4x4-no_slippery
      type: FrozenLake-v1-4x4-no_slippery
    metrics:
    - type: mean_reward
      value: 1.00 +/- 0.00
      name: mean_reward
      verified: false

---

# **Q-Learning** Agent playing **FrozenLake-v1**

This is a trained model of a **Q-Learning** agent playing **FrozenLake-v1**.

## Usage

```python

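import gym  # the classic Gym API is assumed here; the card omits its imports

# `load_from_hub` is not a library function; it is assumed to be the helper defined in the Deep RL course notebook.
model = load_from_hub(repo_id="globophobe/q-FrozenLake-v1-4x4-noSlippery", filename="q-learning.pkl")

# Don't forget to check if you need to add additional attributes (is_slippery=False etc)
env = gym.make(model["env_id"])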

```

|

Ray2791/distilbert-base-uncased-finetuned-imdb

|

Ray2791

| 2023-04-03T23:29:52Z | 125 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"distilbert",

"fill-mask",

"generated_from_trainer",

"dataset:imdb",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

fill-mask

| 2023-04-03T23:16:46Z |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- imdb

model-index:
- name: distilbert-base-uncased-finetuned-imdb
  results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# distilbert-base-uncased-finetuned-imdb

This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the imdb dataset.

It achieves the following results on the evaluation set:

- Loss: 2.4721

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 64

- eval_batch_size: 64

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3.0

- mixed_precision_training: Native AMP
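With `lr_scheduler_type: linear`, the learning rate decays linearly from its initial value to zero over the course of training. The sketch below assumes no warmup (none is listed above) and 471 total steps, the final step in this card's training results. `linear_lr` is an illustrative function that mirrors the shape of such a schedule; it is not the Trainer's implementation.

```python
def linear_lr(step, base_lr=2e-05, total_steps=471, warmup_steps=0):
    """Learning rate at `step` for linear warmup followed by linear decay to zero."""
    if step < warmup_steps:
        return base_lr * step / max(1, warmup_steps)
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)

# Learning rate at the start and at the end of each of the three epochs.
for step in (0, 157, 314, 471):
    print(step, linear_lr(step))
```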

### Training results

| Training Loss | Epoch | Step | Validation Loss |

|:-------------:|:-----:|:----:|:---------------:|

| 2.7086 | 1.0 | 157 | 2.4897 |

| 2.5796 | 2.0 | 314 | 2.4230 |

| 2.5269 | 3.0 | 471 | 2.4354 |

### Framework versions

- Transformers 4.27.4

- Pytorch 2.0.0+cu118

- Datasets 2.11.0

- Tokenizers 0.13.2

|

Brizape/tmvar_0.0001_ES12

|

Brizape

| 2023-04-03T23:07:14Z | 105 | 0 |

transformers

|

[

"transformers",

"pytorch",

"bert",

"token-classification",

"generated_from_trainer",

"license:mit",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

token-classification

| 2023-04-03T22:51:16Z |

---

license: mit

tags:

- generated_from_trainer

metrics:

- precision

- recall

- f1

- accuracy

model-index:
- name: tmvar_0.0001_ES12
  results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# tmvar_0.0001_ES12

This model is a fine-tuned version of [microsoft/BiomedNLP-PubMedBERT-base-uncased-abstract-fulltext](https://huggingface.co/microsoft/BiomedNLP-PubMedBERT-base-uncased-abstract-fulltext) on an unspecified dataset (the auto-generated card records the dataset as `None`).

It achieves the following results on the evaluation set:

- Loss: 0.0194

- Precision: 0.8877

- Recall: 0.8973

- F1: 0.8925

- Accuracy: 0.9968
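The reported F1 is the harmonic mean of the reported precision and recall. A short check, using only the values listed above:

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall; 0.0 when both are zero."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)

reported_f1 = round(f1_score(0.8877, 0.8973), 4)
print(reported_f1)
```

The zero guard covers a checkpoint where the model predicts no entities at all, in which case precision, recall and F1 are all reported as 0.0.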

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0001

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- training_steps: 1000

### Training results

| Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:|

| 0.2263 | 1.47 | 25 | 0.0788 | 0.0 | 0.0 | 0.0 | 0.9843 |

| 0.0492 | 2.94 | 50 | 0.0355 | 0.2576 | 0.3676 | 0.3029 | 0.9863 |

| 0.0258 | 4.41 | 75 | 0.0224 | 0.6 | 0.6811 | 0.6380 | 0.9933 |

| 0.013 | 5.88 | 100 | 0.0141 | 0.8267 | 0.9027 | 0.8630 | 0.9969 |

| 0.0031 | 7.35 | 125 | 0.0162 | 0.8218 | 0.8973 | 0.8579 | 0.9971 |

| 0.0028 | 8.82 | 150 | 0.0187 | 0.8449 | 0.8541 | 0.8495 | 0.9961 |

| 0.0024 | 10.29 | 175 | 0.0154 | 0.8267 | 0.9027 | 0.8630 | 0.9965 |

| 0.0014 | 11.76 | 200 | 0.0159 | 0.8221 | 0.9243 | 0.8702 | 0.9966 |

| 0.0013 | 13.24 | 225 | 0.0179 | 0.8579 | 0.8811 | 0.8693 | 0.9971 |

| 0.0009 | 14.71 | 250 | 0.0165 | 0.8807 | 0.8378 | 0.8587 | 0.9964 |

| 0.0005 | 16.18 | 275 | 0.0184 | 0.8549 | 0.8919 | 0.8730 | 0.9966 |

| 0.0003 | 17.65 | 300 | 0.0188 | 0.8777 | 0.8919 | 0.8847 | 0.9967 |

| 0.0002 | 19.12 | 325 | 0.0195 | 0.8474 | 0.8703 | 0.8587 | 0.9964 |

| 0.0002 | 20.59 | 350 | 0.0192 | 0.8836 | 0.9027 | 0.8930 | 0.9969 |

| 0.0003 | 22.06 | 375 | 0.0191 | 0.8889 | 0.9081 | 0.8984 | 0.9969 |

| 0.0002 | 23.53 | 400 | 0.0194 | 0.8877 | 0.8973 | 0.8925 | 0.9968 |

### Framework versions

- Transformers 4.27.4

- Pytorch 2.0.0+cu118

- Datasets 2.11.0

- Tokenizers 0.13.2

|

sgolkar/gpt2-medium-finetuned-brookstraining

|

sgolkar

| 2023-04-03T22:25:30Z | 205 | 0 |

transformers

|

[

"transformers",

"pytorch",

"gpt2",

"text-generation",

"generated_from_trainer",

"license:mit",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2023-04-03T21:38:17Z |

---

license: mit

tags:

- generated_from_trainer

model-index:
- name: gpt2-medium-finetuned-brookstraining
  results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# gpt2-medium-finetuned-brookstraining

This model is a fine-tuned version of [gpt2-medium](https://huggingface.co/gpt2-medium) on an unspecified dataset (the auto-generated card records the dataset as `None`).

It achieves the following results on the evaluation set:

- Loss: 4.8470

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 20

### Training results

| Training Loss | Epoch | Step | Validation Loss |

|:-------------:|:-----:|:----:|:---------------:|

| No log | 1.0 | 100 | 3.4632 |

| No log | 2.0 | 200 | 3.4360 |

| No log | 3.0 | 300 | 3.4539 |

| No log | 4.0 | 400 | 3.4867 |

| 3.2934 | 5.0 | 500 | 3.5341 |

| 3.2934 | 6.0 | 600 | 3.6145 |

| 3.2934 | 7.0 | 700 | 3.6938 |

| 3.2934 | 8.0 | 800 | 3.8198 |

| 3.2934 | 9.0 | 900 | 3.9274 |

| 2.2258 | 10.0 | 1000 | 4.0388 |

| 2.2258 | 11.0 | 1100 | 4.1807 |

| 2.2258 | 12.0 | 1200 | 4.2635 |

| 2.2258 | 13.0 | 1300 | 4.3549 |

| 2.2258 | 14.0 | 1400 | 4.5134 |

| 1.5305 | 15.0 | 1500 | 4.5719 |

| 1.5305 | 16.0 | 1600 | 4.6932 |

| 1.5305 | 17.0 | 1700 | 4.7392 |

| 1.5305 | 18.0 | 1800 | 4.7729 |

| 1.5305 | 19.0 | 1900 | 4.8324 |

| 1.1988 | 20.0 | 2000 | 4.8470 |
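In the table above the validation loss bottoms out early and then rises while the training loss keeps falling. A small sketch of locating the epoch with the lowest validation loss, using the table's values, is shown below. The card does not say which checkpoint was kept; the reported evaluation loss of 4.8470 matches the final epoch.

```python
# Validation loss per epoch, copied from the table above.
val_loss = [
    3.4632, 3.4360, 3.4539, 3.4867, 3.5341, 3.6145, 3.6938, 3.8198, 3.9274, 4.0388,
    4.1807, 4.2635, 4.3549, 4.5134, 4.5719, 4.6932, 4.7392, 4.7729, 4.8324, 4.8470,
]

# Epochs are 1-indexed in the table, list positions are 0-indexed.
best_epoch = min(range(len(val_loss)), key=val_loss.__getitem__) + 1
print(best_epoch, val_loss[best_epoch - 1])
```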

### Framework versions

- Transformers 4.27.4

- Pytorch 1.13.1

- Datasets 2.11.0

- Tokenizers 0.11.0

|

huggingtweets/nathaniacolver

|

huggingtweets

| 2023-04-03T22:05:58Z | 141 | 0 |

transformers

|

[

"transformers",

"pytorch",

"gpt2",

"text-generation",

"huggingtweets",

"en",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2023-03-07T00:58:43Z |

---

language: en

thumbnail: https://github.com/borisdayma/huggingtweets/blob/master/img/logo.png?raw=true

tags:

- huggingtweets

widget:

- text: "My dream is"

---

<div class="inline-flex flex-col" style="line-height: 1.5;">

<div class="flex">

<div

style="display:inherit; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('https://pbs.twimg.com/profile_images/1606922334535057408/ODScb83P_400x400.jpg')">

</div>

<div

style="display:none; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('')">

</div>

<div

style="display:none; margin-left: 4px; margin-right: 4px; width: 92px; height:92px; border-radius: 50%; background-size: cover; background-image: url('')">

</div>

</div>

<div style="text-align: center; margin-top: 3px; font-size: 16px; font-weight: 800">🤖 AI BOT 🤖</div>

<div style="text-align: center; font-size: 16px; font-weight: 800">nia :)</div>

<div style="text-align: center; font-size: 14px;">@nathaniacolver</div>

</div>

I was made with [huggingtweets](https://github.com/borisdayma/huggingtweets).

Create your own bot based on your favorite user with [the demo](https://colab.research.google.com/github/borisdayma/huggingtweets/blob/master/huggingtweets-demo.ipynb)!

## How does it work?

The model uses the following pipeline.

To understand how the model was developed, check the [W&B report](https://wandb.ai/wandb/huggingtweets/reports/HuggingTweets-Train-a-Model-to-Generate-Tweets--VmlldzoxMTY5MjI).

## Training data

The model was trained on tweets from nia :).

| Data | nia :) |

| --- | --- |

| Tweets downloaded | 3177 |

| Retweets | 538 |

| Short tweets | 100 |

| Tweets kept | 2539 |

[Explore the data](https://wandb.ai/wandb/huggingtweets/runs/vh4m181u/artifacts), which is tracked with [W&B artifacts](https://docs.wandb.com/artifacts) at every step of the pipeline.

## Training procedure

The model is based on a pre-trained [GPT-2](https://huggingface.co/gpt2) which is fine-tuned on @nathaniacolver's tweets.

Hyperparameters and metrics are recorded in the [W&B training run](https://wandb.ai/wandb/huggingtweets/runs/jab5ifpt) for full transparency and reproducibility.

At the end of training, [the final model](https://wandb.ai/wandb/huggingtweets/runs/jab5ifpt/artifacts) is logged and versioned.

## How to use

You can use this model directly with a pipeline for text generation:

```python

from transformers import pipeline

generator = pipeline('text-generation',

model='huggingtweets/nathaniacolver')

generator("My dream is", num_return_sequences=5)

```

## Limitations and bias

The model suffers from [the same limitations and bias as GPT-2](https://huggingface.co/gpt2#limitations-and-bias).

In addition, the data present in the user's tweets further affects the text generated by the model.

## About

*Built by Boris Dayma*

[Follow @borisdayma on Twitter](https://twitter.com/intent/follow?screen_name=borisdayma)

For more details, visit the [project repository](https://github.com/borisdayma/huggingtweets).

|

olivierdehaene/optimized-santacoder

|

olivierdehaene

| 2023-04-03T22:04:43Z | 12 | 8 |

transformers

|

[

"transformers",

"safetensors",

"gpt2",

"text-generation",

"custom_code",

"code",

"dataset:bigcode/the-stack",

"arxiv:1911.02150",

"arxiv:2207.14255",

"arxiv:2301.03988",

"license:openrail",

"model-index",

"autotrain_compatible",

"text-generation-inference",

"region:us"

] |

text-generation

| 2023-01-19T17:22:06Z |

---

license: openrail

datasets:

- bigcode/the-stack

language:

- code

programming_language:

- Java

- JavaScript

- Python

pipeline_tag: text-generation

inference: false

widget:

- text: 'def print_hello_world():'

example_title: Hello world

group: Python

model-index:

- name: SantaCoder

results:

- task:

type: text-generation

dataset:

type: nuprl/MultiPL-E

name: MultiPL HumanEval (Python)

metrics:

- name: pass@1

type: pass@1

value: 0.18

verified: false

- name: pass@10

type: pass@10

value: 0.29

verified: false

- name: pass@100

type: pass@100

value: 0.49

verified: false

- task:

type: text-generation

dataset:

type: nuprl/MultiPL-E

name: MultiPL MBPP (Python)

metrics:

- name: pass@1

type: pass@1

value: 0.35

verified: false

- name: pass@10

type: pass@10

value: 0.58

verified: false

- name: pass@100

type: pass@100

value: 0.77

verified: false

- task:

type: text-generation

dataset:

type: nuprl/MultiPL-E

name: MultiPL HumanEval (JavaScript)

metrics:

- name: pass@1

type: pass@1

value: 0.16

verified: false

- name: pass@10

type: pass@10

value: 0.27

verified: false

- name: pass@100

type: pass@100

value: 0.47

verified: false

- task:

type: text-generation

dataset:

type: nuprl/MultiPL-E

name: MultiPL MBPP (JavaScript)

metrics:

- name: pass@1

type: pass@1

value: 0.28

verified: false

- name: pass@10

type: pass@10

value: 0.51

verified: false

- name: pass@100

type: pass@100

value: 0.70

verified: false

- task:

type: text-generation

dataset:

type: nuprl/MultiPL-E

name: MultiPL HumanEval (Java)

metrics:

- name: pass@1

type: pass@1

value: 0.15

verified: false

- name: pass@10

type: pass@10

value: 0.26

verified: false

- name: pass@100

type: pass@100

value: 0.41

verified: false

- task:

type: text-generation

dataset:

type: nuprl/MultiPL-E

name: MultiPL MBPP (Java)

metrics:

- name: pass@1

type: pass@1

value: 0.28

verified: false

- name: pass@10

type: pass@10

value: 0.44

verified: false

- name: pass@100

type: pass@100

value: 0.59

verified: false

- task:

type: text-generation

dataset:

type: loubnabnl/humaneval_infilling

name: HumanEval FIM (Python)

metrics:

- name: single_line

type: exact_match

value: 0.44

verified: false

- task:

type: text-generation

dataset:

type: nuprl/MultiPL-E

name: MultiPL HumanEval FIM (Java)

metrics:

- name: single_line

type: exact_match

value: 0.62

verified: false

- task:

type: text-generation

dataset:

type: nuprl/MultiPL-E

name: MultiPL HumanEval FIM (JavaScript)

metrics:

- name: single_line

type: exact_match

value: 0.60

verified: false

- task:

type: text-generation

dataset:

type: code_x_glue_ct_code_to_text

name: CodeXGLUE code-to-text (Python)

metrics:

- name: BLEU

type: bleu

value: 18.13

verified: false

---

# Optimized SantaCoder

An up to 60% faster version of bigcode/santacoder.

# Table of Contents

1. [Model Summary](#model-summary)

2. [Use](#use)

3. [Limitations](#limitations)

4. [Training](#training)

5. [License](#license)

6. [Citation](#citation)

# Model Summary

The SantaCoder models are a series of 1.1B parameter models trained on the Python, Java, and JavaScript subset of [The Stack (v1.1)](https://huggingface.co/datasets/bigcode/the-stack) (which excluded opt-out requests).

The main model uses [Multi Query Attention](https://arxiv.org/abs/1911.02150) and was trained with the [Fill-in-the-Middle objective](https://arxiv.org/abs/2207.14255), using near-deduplication and comment-to-code ratio as filtering criteria.

In addition, there are several models that were trained on datasets with different filter parameters and with architecture and objective variations.

- **Repository:** [bigcode/Megatron-LM](https://github.com/bigcode-project/Megatron-LM)

- **Project Website:** [bigcode-project.org](https://www.bigcode-project.org)

- **Paper:** [🎅SantaCoder: Don't reach for the stars!🌟](https://arxiv.org/abs/2301.03988)

- **Point of Contact:** [contact@bigcode-project.org](mailto:contact@bigcode-project.org)

- **Languages:** Python, Java, and JavaScript

|Model|Architecture|Objective|Filtering|

|:-|:-|:-|:-|

|`mha`|MHA|AR + FIM| Base |

|`no-fim`| MQA | AR| Base |

|`fim`| MQA | AR + FIM | Base |

|`stars`| MQA | AR + FIM | GitHub stars |

|`fertility`| MQA | AR + FIM | Tokenizer fertility |

|`comments`| MQA | AR + FIM | Comment-to-code ratio |

|`dedup-alt`| MQA | AR + FIM | Stronger near-deduplication |

|`final`| MQA | AR + FIM | Stronger near-deduplication and comment-to-code ratio |

The `final` model is the best-performing model and was trained twice as long (236B tokens) as the others. This checkpoint is the default model and is available on the `main` branch. All other checkpoints are on separate branches with corresponding names.

# Use

## Intended use

The model was trained on GitHub code. As such it is _not_ an instruction model and commands like "Write a function that computes the square root." do not work well.

You should phrase commands like they occur in source code such as comments (e.g. `# the following function computes the sqrt`) or write a function signature and docstring and let the model complete the function body.
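The two prompt styles described above can be sketched as plain string builders. This is a minimal illustration only: `comment_prompt` and `signature_prompt` are hypothetical helper names, not part of `transformers` or the SantaCoder repository, and the four-space indent is an assumption about the target code style.

```python
def comment_prompt(instruction: str) -> str:
    """Phrase an instruction as a Python comment, as it might appear in source code."""
    return f"# {instruction.strip()}\n"


def signature_prompt(signature: str, docstring: str, indent: str = "    ") -> str:
    """Build a function signature plus docstring and leave the body for the model."""
    return f'{signature.rstrip()}\n{indent}"""{docstring.strip()}"""\n{indent}'


# Hypothetical example prompts; either string can be passed to the tokenizer.
as_comment = comment_prompt("the following function computes the sqrt")
as_signature = signature_prompt(
    "def sqrt(x: float) -> float:",
    "Return the square root of x.",
)
```

Either string can then be tokenized and passed to `model.generate` in place of the literal prompt used in the generation example.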

**Feel free to share your generations in the Community tab!**

## How to use

### Generation

```python

# pip install -q transformers

from transformers import AutoModelForCausalLM, AutoTokenizer

checkpoint = "olivierdehaene/optimized-santacoder"

device = "cuda" # for GPU usage or "cpu" for CPU usage

tokenizer = AutoTokenizer.from_pretrained(checkpoint)

model = AutoModelForCausalLM.from_pretrained(checkpoint, trust_remote_code=True).to(device)

inputs = tokenizer.encode("def print_hello_world():", return_tensors="pt").to(device)

outputs = model.generate(inputs)

print(tokenizer.decode(outputs[0]))

```

### Fill-in-the-middle

Fill-in-the-middle uses special tokens to identify the prefix/middle/suffix part of the input and output:

```python

input_text = "<fim-prefix>def print_hello_world():\n <fim-suffix>\n print('Hello world!')<fim-middle>"

inputs = tokenizer.encode(input_text, return_tensors="pt").to(device)

outputs = model.generate(inputs)

print(tokenizer.decode(outputs[0]))

```
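As a small sketch of how the prompt above is assembled, the helpers below build the fill-in-the-middle string from a prefix and a suffix and pull the generated middle back out of the decoded text. The function names are hypothetical; only the three special tokens come from the example above. Any end-of-text token the model may emit is not stripped here.

```python
FIM_PREFIX = "<fim-prefix>"
FIM_SUFFIX = "<fim-suffix>"
FIM_MIDDLE = "<fim-middle>"


def build_fim_prompt(prefix: str, suffix: str) -> str:
    """Arrange prefix and suffix in the prefix/suffix/middle order shown above."""
    return f"{FIM_PREFIX}{prefix}{FIM_SUFFIX}{suffix}{FIM_MIDDLE}"


def extract_middle(decoded: str) -> str:
    """Return whatever follows the <fim-middle> marker (empty if it is absent)."""
    _, _, middle = decoded.partition(FIM_MIDDLE)
    return middle


fim_prompt = build_fim_prompt(
    "def print_hello_world():\n    ",
    "\n    print('Hello world!')",
)
```

The resulting `fim_prompt` can be passed to `tokenizer.encode` exactly like `input_text` in the block above.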

### Load other checkpoints

We upload the checkpoint of each experiment to a separate branch as well as the intermediate checkpoints as commits on the branches. You can load them with the `revision` flag:

```python

model = AutoModelForCausalLM.from_pretrained(

"olivierdehaene/optimized-santacoder",

revision="no-fim", # name of branch or commit hash

trust_remote_code=True

)

```

### Attribution & Other Requirements

The pretraining dataset of the model was filtered for permissive licenses only. Nevertheless, the model can generate source code verbatim from the dataset. The code's license might require attribution and/or other specific requirements that must be respected. We provide a [search index](https://huggingface.co/spaces/bigcode/santacoder-search) that lets you search through the pretraining data to identify where generated code came from and apply the proper attribution to your code.

# Limitations

The model has been trained on source code in Python, Java, and JavaScript. The predominant natural language in the source is English, although other languages are also present. As such, the model is capable of generating code snippets given some context, but the generated code is not guaranteed to work as intended. It can be inefficient and may contain bugs or exploits.

# Training

## Model

- **Architecture:** GPT-2 model with multi-query attention and Fill-in-the-Middle objective

- **Pretraining steps:** 600K

- **Pretraining tokens:** 236 billion

- **Precision:** float16

## Hardware

- **GPUs:** 96 Tesla V100

- **Training time:** 6.2 days

- **Total FLOPS:** 2.1 x 10e21

## Software

- **Orchestration:** [Megatron-LM](https://github.com/bigcode-project/Megatron-LM)

- **Neural networks:** [PyTorch](https://github.com/pytorch/pytorch)

- **FP16 if applicable:** [apex](https://github.com/NVIDIA/apex)

# License

The model is licensed under the CodeML Open RAIL-M v0.1 license. You can find the full license [here](https://huggingface.co/spaces/bigcode/license).

# Citation

```

@article{allal2023santacoder,

title={SantaCoder: don't reach for the stars!},

author={Allal, Loubna Ben and Li, Raymond and Kocetkov, Denis and Mou, Chenghao and Akiki, Christopher and Ferrandis, Carlos Munoz and Muennighoff, Niklas and Mishra, Mayank and Gu, Alex and Dey, Manan and others},

journal={arXiv preprint arXiv:2301.03988},

year={2023}

}

```

|

Brizape/tmvar_0.0001_ES2

|

Brizape

| 2023-04-03T21:55:47Z | 105 | 0 |

transformers

|

[

"transformers",

"pytorch",

"bert",

"token-classification",

"generated_from_trainer",

"license:mit",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

token-classification

| 2023-04-03T21:48:44Z |

---

license: mit

tags:

- generated_from_trainer

metrics:

- precision

- recall

- f1

- accuracy

model-index:

- name: tmvar_0.0001_ES2

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# tmvar_0.0001_ES2

This model is a fine-tuned version of [microsoft/BiomedNLP-PubMedBERT-base-uncased-abstract-fulltext](https://huggingface.co/microsoft/BiomedNLP-PubMedBERT-base-uncased-abstract-fulltext) on an unspecified dataset.

It achieves the following results on the evaluation set:

- Loss: 0.0187

- Precision: 0.8449

- Recall: 0.8541

- F1: 0.8495

- Accuracy: 0.9961
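As a quick consistency check on the figures above, F1 is the harmonic mean of precision and recall. The sketch below recomputes it from the reported (already rounded) values, so it should land close to the reported 0.8495 rather than match it digit for digit; the function name is illustrative.

```python
def f1_from_precision_recall(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall; defined as 0.0 when both are zero."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Values taken from the evaluation results listed above.
f1 = f1_from_precision_recall(0.8449, 0.8541)
print(f"F1 = {f1:.4f}")
```

The zero-division guard also explains how a row with precision 0.0 and recall 0.0 is reported with F1 0.0.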

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0001

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- training_steps: 1000
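To make the `linear` scheduler setting concrete, here is a minimal sketch of a linear decay from the base learning rate to zero over `training_steps`. It assumes zero warmup steps, since none are listed above; `linear_lr` is an illustrative name, not a library function.

```python
def linear_lr(step: int, base_lr: float = 1e-4,
              total_steps: int = 1000, warmup_steps: int = 0) -> float:
    """Linear warmup (if any) followed by linear decay to zero at total_steps."""
    if step < warmup_steps:
        return base_lr * step / max(1, warmup_steps)
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


# Learning rate at a few points of a 1000-step run with base rate 1e-4.
for step in (0, 150, 500, 1000):
    print(step, linear_lr(step))
```

Under these assumptions, a run that stops early is still relatively high on the schedule at its last step, because the decay is defined over the full `training_steps` budget.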

### Training results

| Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:|

| 0.2263 | 1.47 | 25 | 0.0788 | 0.0 | 0.0 | 0.0 | 0.9843 |

| 0.0492 | 2.94 | 50 | 0.0355 | 0.2576 | 0.3676 | 0.3029 | 0.9863 |

| 0.0258 | 4.41 | 75 | 0.0224 | 0.6 | 0.6811 | 0.6380 | 0.9933 |

| 0.013 | 5.88 | 100 | 0.0141 | 0.8267 | 0.9027 | 0.8630 | 0.9969 |

| 0.0031 | 7.35 | 125 | 0.0162 | 0.8218 | 0.8973 | 0.8579 | 0.9971 |

| 0.0028 | 8.82 | 150 | 0.0187 | 0.8449 | 0.8541 | 0.8495 | 0.9961 |

### Framework versions

- Transformers 4.27.4

- Pytorch 2.0.0+cu118

- Datasets 2.11.0

- Tokenizers 0.13.2

|

Brizape/tmvar_5e-05_ES2

|

Brizape

| 2023-04-03T21:48:37Z | 105 | 0 |

transformers

|

[

"transformers",

"pytorch",

"bert",

"token-classification",

"generated_from_trainer",

"license:mit",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

token-classification

| 2023-04-03T21:34:48Z |

---

license: mit

tags:

- generated_from_trainer

metrics:

- precision

- recall

- f1

- accuracy

model-index:

- name: tmvar_5e-05_ES2

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# tmvar_5e-05_ES2

This model is a fine-tuned version of [microsoft/BiomedNLP-PubMedBERT-base-uncased-abstract-fulltext](https://huggingface.co/microsoft/BiomedNLP-PubMedBERT-base-uncased-abstract-fulltext) on an unspecified dataset.

It achieves the following results on the evaluation set:

- Loss: 0.0189

- Precision: 0.8469

- Recall: 0.8973

- F1: 0.8714

- Accuracy: 0.9971

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- training_steps: 1000

### Training results

| Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:|

| 0.3852 | 1.47 | 25 | 0.1019 | 0.0 | 0.0 | 0.0 | 0.9843 |

| 0.0775 | 2.94 | 50 | 0.0398 | 0.2812 | 0.3892 | 0.3265 | 0.9863 |

| 0.0327 | 4.41 | 75 | 0.0243 | 0.4740 | 0.4919 | 0.4828 | 0.9910 |

| 0.02 | 5.88 | 100 | 0.0191 | 0.7656 | 0.7946 | 0.7798 | 0.9954 |

| 0.0084 | 7.35 | 125 | 0.0229 | 0.7766 | 0.7892 | 0.7828 | 0.9952 |

| 0.0045 | 8.82 | 150 | 0.0172 | 0.8351 | 0.8486 | 0.8418 | 0.9964 |

| 0.0023 | 10.29 | 175 | 0.0190 | 0.9148 | 0.8703 | 0.8920 | 0.9968 |

| 0.0015 | 11.76 | 200 | 0.0189 | 0.8469 | 0.8973 | 0.8714 | 0.9971 |

### Framework versions

- Transformers 4.27.4

- Pytorch 2.0.0+cu118

- Datasets 2.11.0

- Tokenizers 0.13.2

|

Brizape/tmvar_2e-05_ES2

|

Brizape

| 2023-04-03T21:31:36Z | 105 | 0 |

transformers

|

[

"transformers",

"pytorch",

"bert",

"token-classification",

"generated_from_trainer",

"license:mit",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

token-classification

| 2023-04-03T21:20:50Z |

---

license: mit

tags:

- generated_from_trainer

metrics:

- precision

- recall

- f1

- accuracy

model-index:

- name: tmvar_2e-05_ES2

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# tmvar_2e-05_ES2

This model is a fine-tuned version of [microsoft/BiomedNLP-PubMedBERT-base-uncased-abstract-fulltext](https://huggingface.co/microsoft/BiomedNLP-PubMedBERT-base-uncased-abstract-fulltext) on an unspecified dataset.

It achieves the following results on the evaluation set:

- Loss: 0.0184

- Precision: 0.8368

- Recall: 0.8595

- F1: 0.848

- Accuracy: 0.9962

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- training_steps: 1000

### Training results

| Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:|

| 0.5018 | 1.47 | 25 | 0.1002 | 0.0 | 0.0 | 0.0 | 0.9843 |

| 0.0852 | 2.94 | 50 | 0.0509 | 0.9286 | 0.0703 | 0.1307 | 0.9852 |

| 0.0373 | 4.41 | 75 | 0.0283 | 0.5485 | 0.6108 | 0.5780 | 0.9918 |

| 0.0256 | 5.88 | 100 | 0.0204 | 0.6429 | 0.7297 | 0.6835 | 0.9938 |

| 0.0123 | 7.35 | 125 | 0.0188 | 0.8063 | 0.8324 | 0.8191 | 0.9956 |

| 0.008 | 8.82 | 150 | 0.0171 | 0.7979 | 0.8324 | 0.8148 | 0.9958 |

| 0.0047 | 10.29 | 175 | 0.0158 | 0.8010 | 0.8919 | 0.8440 | 0.9962 |

| 0.0037 | 11.76 | 200 | 0.0171 | 0.8511 | 0.8649 | 0.8579 | 0.9964 |

| 0.0025 | 13.24 | 225 | 0.0184 | 0.8368 | 0.8595 | 0.848 | 0.9962 |

### Framework versions

- Transformers 4.27.4

- Pytorch 2.0.0+cu118

- Datasets 2.11.0

- Tokenizers 0.13.2

|

cxyz/mndknypntr

|

cxyz

| 2023-04-03T21:28:34Z | 36 | 0 |

diffusers

|

[

"diffusers",

"safetensors",

"text-to-image",

"stable-diffusion",

"license:creativeml-openrail-m",

"autotrain_compatible",

"endpoints_compatible",

"diffusers:StableDiffusionPipeline",

"region:us"

] |

text-to-image

| 2023-04-03T21:22:18Z |

---

license: creativeml-openrail-m

tags:

- text-to-image

- stable-diffusion

---

### mndknypntr Dreambooth model trained by cxyz with [TheLastBen's fast-DreamBooth](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb) notebook

Test the concept via A1111 Colab [fast-Colab-A1111](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast_stable_diffusion_AUTOMATIC1111.ipynb)

Sample pictures of this concept:

|

ShrJatin/100K_sample_model

|

ShrJatin

| 2023-04-03T21:25:49Z | 3 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"t5",

"text2text-generation",

"generated_from_trainer",

"dataset:wmt16",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text2text-generation

| 2023-04-02T22:00:20Z |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- wmt16

metrics:

- bleu

model-index:

- name: 100K_sample_model

results:

- task:

name: Sequence-to-sequence Language Modeling

type: text2text-generation

dataset:

name: wmt16

type: wmt16

config: de-en

split: validation

args: de-en

metrics:

- name: Bleu

type: bleu

value: 13.0723

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# 100K_sample_model

This model is a fine-tuned version of [t5-small](https://huggingface.co/t5-small) on the wmt16 dataset.

It achieves the following results on the evaluation set:

- Loss: 1.2624

- Bleu: 13.0723

- Gen Len: 17.5159

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3

- mixed_precision_training: Native AMP

### Training results

| Training Loss | Epoch | Step | Validation Loss | Bleu | Gen Len |

|:-------------:|:-----:|:-----:|:---------------:|:-------:|:-------:|

| 1.2605 | 1.0 | 12500 | 1.2595 | 13.0452 | 17.503 |

| 1.2728 | 2.0 | 25000 | 1.2596 | 13.049 | 17.5154 |

| 1.2437 | 3.0 | 37500 | 1.2624 | 13.0723 | 17.5159 |

### Framework versions

- Transformers 4.27.4

- Pytorch 2.0.0+cu117

- Datasets 2.11.0

- Tokenizers 0.13.2

|

sgolkar/gpt2-finetuned-brookstraining

|

sgolkar

| 2023-04-03T21:21:02Z | 12 | 0 |

transformers

|

[

"transformers",

"pytorch",

"gpt2",

"text-generation",

"generated_from_trainer",

"license:mit",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2023-04-03T18:44:26Z |

---

license: mit

tags:

- generated_from_trainer

model-index:

- name: gpt2-finetuned-brookstraining

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# gpt2-finetuned-brookstraining

This model is a fine-tuned version of [gpt2](https://huggingface.co/gpt2) on an unspecified dataset.

It achieves the following results on the evaluation set:

- Loss: 4.3233

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 20

### Training results

| Training Loss | Epoch | Step | Validation Loss |

|:-------------:|:-----:|:----:|:---------------:|

| No log | 1.0 | 201 | 3.7473 |

| No log | 2.0 | 402 | 3.7192 |

| 3.9557 | 3.0 | 603 | 3.7303 |

| 3.9557 | 4.0 | 804 | 3.7354 |

| 3.4723 | 5.0 | 1005 | 3.7725 |

| 3.4723 | 6.0 | 1206 | 3.7934 |

| 3.4723 | 7.0 | 1407 | 3.8325 |

| 3.1092 | 8.0 | 1608 | 3.8907 |

| 3.1092 | 9.0 | 1809 | 3.9566 |

| 2.8224 | 10.0 | 2010 | 3.9908 |

| 2.8224 | 11.0 | 2211 | 4.0487 |

| 2.8224 | 12.0 | 2412 | 4.0744 |

| 2.5733 | 13.0 | 2613 | 4.1212 |

| 2.5733 | 14.0 | 2814 | 4.1872 |

| 2.3879 | 15.0 | 3015 | 4.2208 |

| 2.3879 | 16.0 | 3216 | 4.2358 |

| 2.3879 | 17.0 | 3417 | 4.2799 |

| 2.2721 | 18.0 | 3618 | 4.3077 |

| 2.2721 | 19.0 | 3819 | 4.3217 |

| 2.2043 | 20.0 | 4020 | 4.3233 |
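The table above shows training loss falling while validation loss rises after the second epoch, which is the usual signature of overfitting. A minimal sketch for locating the best checkpoint from these logged values is given below; the numbers are copied from the table, and the variable names are illustrative.

```python
# (epoch, validation_loss) pairs copied from the training results table.
history = [
    (1, 3.7473), (2, 3.7192), (3, 3.7303), (4, 3.7354), (5, 3.7725),
    (6, 3.7934), (7, 3.8325), (8, 3.8907), (9, 3.9566), (10, 3.9908),
    (11, 4.0487), (12, 4.0744), (13, 4.1212), (14, 4.1872), (15, 4.2208),
    (16, 4.2358), (17, 4.2799), (18, 4.3077), (19, 4.3217), (20, 4.3233),
]

# The checkpoint with the lowest validation loss is the one worth keeping.
best_epoch, best_loss = min(history, key=lambda row: row[1])
final_epoch, final_loss = history[-1]
print(f"best epoch: {best_epoch} (loss {best_loss}), final epoch loss: {final_loss}")
```

In practice, the `transformers` Trainer can do this selection during training via `load_best_model_at_end` together with `EarlyStoppingCallback`; whether that was used for this run is not stated in the card.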

### Framework versions

- Transformers 4.27.4

- Pytorch 1.13.1

- Datasets 2.11.0

- Tokenizers 0.11.0

|

cartesinus/iva_mt-leyzer-intent_baseline-xlm_r-pl

|

cartesinus

| 2023-04-03T21:16:22Z | 5 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"xlm-roberta",

"text-classification",

"generated_from_trainer",

"license:mit",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2023-03-27T22:11:53Z |

---

license: mit

tags:

- generated_from_trainer

metrics:

- accuracy

- f1

model-index:

- name: fedcsis_translated-intent_baseline-xlm_r-pl

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# fedcsis_translated-intent_baseline-xlm_r-pl

This model is a fine-tuned version of [xlm-roberta-base](https://huggingface.co/xlm-roberta-base) on the

[leyzer-fedcsis-translated](https://huggingface.co/datasets/cartesinus/leyzer-fedcsis-translated) dataset.

Results on untranslated test set:

- Accuracy: 0.8769

It achieves the following results on the evaluation set:

- Loss: 0.5478

- Accuracy: 0.8769

- F1: 0.8769

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 10

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 |

|:-------------:|:-----:|:----:|:---------------:|:--------:|:------:|

| 3.505 | 1.0 | 814 | 1.8819 | 0.5979 | 0.5979 |

| 1.5056 | 2.0 | 1628 | 1.1033 | 0.7611 | 0.7611 |

| 1.0892 | 3.0 | 2442 | 0.7402 | 0.8470 | 0.8470 |

| 0.648 | 4.0 | 3256 | 0.5263 | 0.8902 | 0.8902 |

| 0.423 | 5.0 | 4070 | 0.4253 | 0.9152 | 0.9152 |

| 0.3429 | 6.0 | 4884 | 0.3654 | 0.9194 | 0.9194 |

| 0.2464 | 7.0 | 5698 | 0.3213 | 0.9273 | 0.9273 |

| 0.1873 | 8.0 | 6512 | 0.3065 | 0.9328 | 0.9328 |

| 0.1666 | 9.0 | 7326 | 0.3046 | 0.9345 | 0.9345 |

| 0.1459 | 10.0 | 8140 | 0.2911 | 0.9370 | 0.9370 |

### Framework versions

- Transformers 4.27.3

- Pytorch 1.13.1+cu116

- Datasets 2.10.1

- Tokenizers 0.13.2

|

hopkins/strict-small-2

|

hopkins

| 2023-04-03T20:51:27Z | 132 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"gpt2",

"text-generation",

"generated_from_trainer",

"dataset:generator",

"license:mit",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2023-04-02T20:10:49Z |

---

license: mit

tags:

- generated_from_trainer

datasets:

- generator

model-index:

- name: strict-small-2

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# strict-small-2

This model is a fine-tuned version of [gpt2](https://huggingface.co/gpt2) on the generator dataset.

It achieves the following results on the evaluation set:

- Loss: 5.8423

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0005

- train_batch_size: 128

- eval_batch_size: 128

- seed: 42

- gradient_accumulation_steps: 8

- total_train_batch_size: 1024

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: cosine

- lr_scheduler_warmup_steps: 1000

- num_epochs: 50

- mixed_precision_training: Native AMP
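The `total_train_batch_size` above follows from the per-device batch size and the gradient accumulation steps. A minimal sketch of that arithmetic, assuming a single device (the card does not state the device count):

```python
def effective_batch_size(per_device: int, grad_accum_steps: int,
                         num_devices: int = 1) -> int:
    """Number of sequences contributing to each optimizer step."""
    return per_device * grad_accum_steps * num_devices


# 128 sequences per forward pass, accumulated over 8 steps on one device.
total = effective_batch_size(per_device=128, grad_accum_steps=8)
print(total)
```

With more than one device, the same listed total would imply a smaller per-device batch or fewer accumulation steps, so the single-device assumption is consistent with the values shown.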

### Training results

| Training Loss | Epoch | Step | Validation Loss |

|:-------------:|:-----:|:-----:|:---------------:|

| 4.1594 | 7.33 | 2000 | 3.8824 |

| 2.8132 | 14.65 | 4000 | 4.2196 |

| 2.121 | 21.98 | 6000 | 4.7343 |

| 1.6016 | 29.3 | 8000 | 5.2934 |

| 1.2441 | 36.63 | 10000 | 5.6547 |

| 1.0171 | 43.96 | 12000 | 5.8423 |

### Framework versions

- Transformers 4.25.1

- Pytorch 1.13.1+cu117

- Datasets 2.8.0

- Tokenizers 0.13.2

|

amannlp/dqn-SpaceInvadersNoFrameskip-v4

|

amannlp

| 2023-04-03T20:42:54Z | 8 | 0 |

stable-baselines3

|

[

"stable-baselines3",

"SpaceInvadersNoFrameskip-v4",

"deep-reinforcement-learning",

"reinforcement-learning",

"model-index",

"region:us"

] |

reinforcement-learning

| 2023-04-03T20:42:21Z |

---

library_name: stable-baselines3

tags:

- SpaceInvadersNoFrameskip-v4

- deep-reinforcement-learning

- reinforcement-learning

- stable-baselines3

model-index:

- name: DQN

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: SpaceInvadersNoFrameskip-v4

type: SpaceInvadersNoFrameskip-v4

metrics:

- type: mean_reward

value: 462.00 +/- 166.89

name: mean_reward

verified: false

---

# **DQN** Agent playing **SpaceInvadersNoFrameskip-v4**

This is a trained model of a **DQN** agent playing **SpaceInvadersNoFrameskip-v4**

using the [stable-baselines3 library](https://github.com/DLR-RM/stable-baselines3)

and the [RL Zoo](https://github.com/DLR-RM/rl-baselines3-zoo).

The RL Zoo is a training framework for Stable Baselines3

reinforcement learning agents,

with hyperparameter optimization and pre-trained agents included.

## Usage (with SB3 RL Zoo)

RL Zoo: https://github.com/DLR-RM/rl-baselines3-zoo<br/>

SB3: https://github.com/DLR-RM/stable-baselines3<br/>

SB3 Contrib: https://github.com/Stable-Baselines-Team/stable-baselines3-contrib

Install the RL Zoo (with SB3 and SB3-Contrib):

```bash

pip install rl_zoo3

```

```

# Download model and save it into the logs/ folder

python -m rl_zoo3.load_from_hub --algo dqn --env SpaceInvadersNoFrameskip-v4 -orga amannlp -f logs/

python -m rl_zoo3.enjoy --algo dqn --env SpaceInvadersNoFrameskip-v4 -f logs/

```

If you installed the RL Zoo3 via pip (`pip install rl_zoo3`), from anywhere you can do:

```

python -m rl_zoo3.load_from_hub --algo dqn --env SpaceInvadersNoFrameskip-v4 -orga amannlp -f logs/

python -m rl_zoo3.enjoy --algo dqn --env SpaceInvadersNoFrameskip-v4 -f logs/

```

## Training (with the RL Zoo)

```

python -m rl_zoo3.train --algo dqn --env SpaceInvadersNoFrameskip-v4 -f logs/

# Upload the model and generate video (when possible)

python -m rl_zoo3.push_to_hub --algo dqn --env SpaceInvadersNoFrameskip-v4 -f logs/ -orga amannlp

```

## Hyperparameters

```python

OrderedDict([('batch_size', 32),

('buffer_size', 100000),

('env_wrapper',

['stable_baselines3.common.atari_wrappers.AtariWrapper']),

('exploration_final_eps', 0.01),

('exploration_fraction', 0.1),

('frame_stack', 4),

('gradient_steps', 1),

('learning_rate', 0.0001),

('learning_starts', 100000),

('n_timesteps', 2000000.0),

('optimize_memory_usage', False),

('policy', 'CnnPolicy'),

('target_update_interval', 1000),

('train_freq', 4),

('normalize', False)])

```
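To illustrate what `exploration_fraction` and `exploration_final_eps` mean in practice, the sketch below computes the epsilon-greedy exploration rate over training. It assumes an initial epsilon of 1.0 (the Stable-Baselines3 DQN default, not listed above) and a linear decay over the first 10% of `n_timesteps`; `epsilon_at` is an illustrative name.

```python
def epsilon_at(step: int, total_timesteps: int = 2_000_000,
               exploration_fraction: float = 0.1,
               initial_eps: float = 1.0, final_eps: float = 0.01) -> float:
    """Linearly decay epsilon, then hold it at final_eps for the rest of training."""
    progress = step / total_timesteps
    if progress >= exploration_fraction:
        return final_eps
    return initial_eps + progress * (final_eps - initial_eps) / exploration_fraction


# Epsilon at the start, at learning_starts, at the end of decay, and at the end.
for step in (0, 100_000, 200_000, 2_000_000):
    print(step, epsilon_at(step))
```

Under these assumptions, gradient updates begin (`learning_starts` = 100000) while the agent is still exploring roughly half of the time, and exploration settles at 1% after 200,000 steps.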

|

Brizape/tmvar_0.0001

|

Brizape

| 2023-04-03T20:41:31Z | 105 | 0 |

transformers

|

[

"transformers",

"pytorch",

"bert",

"token-classification",

"generated_from_trainer",

"license:mit",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

token-classification

| 2023-04-03T20:30:20Z |

---

license: mit

tags:

- generated_from_trainer

metrics:

- precision

- recall

- f1

- accuracy

model-index:

- name: tmvar_0.0001

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# tmvar_0.0001

This model is a fine-tuned version of [microsoft/BiomedNLP-PubMedBERT-base-uncased-abstract-fulltext](https://huggingface.co/microsoft/BiomedNLP-PubMedBERT-base-uncased-abstract-fulltext) on an unspecified dataset.

It achieves the following results on the evaluation set:

- Loss: 0.0162

- Precision: 0.8877

- Recall: 0.8973

- F1: 0.8925

- Accuracy: 0.9971

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0001

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- training_steps: 500
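To make the `linear` scheduler setting concrete, here is a minimal sketch of how the learning rate would fall from 0.0001 to 0 over the 500 training steps. It assumes no warmup steps, since none are listed above; `linear_lr` is a hypothetical helper that mirrors the usual linear-decay rule, not the Trainer's own implementation.

```python
def linear_lr(
    step: int,
    base_lr: float = 1e-4,   # from 'learning_rate'
    total_steps: int = 500,  # from 'training_steps'
    warmup_steps: int = 0,   # ASSUMPTION: no warmup is listed in the card
) -> float:
    """Linear warmup (if any) followed by linear decay to zero."""
    if step < warmup_steps:
        return base_lr * step / max(1, warmup_steps)
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


if __name__ == "__main__":
    for step in (0, 250, 350, 500):
        print(step, linear_lr(step))
```

Under these assumptions the rate is halved by step 250 and reaches zero at step 500.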

### Training results

| Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1     | Accuracy |
|:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:|
| 0.2263        | 1.47  | 25   | 0.0776          | 0.0       | 0.0    | 0.0    | 0.9843   |
| 0.05          | 2.94  | 50   | 0.0400          | 0.2868    | 0.4216 | 0.3414 | 0.9872   |
| 0.0271        | 4.41  | 75   | 0.0219          | 0.5381    | 0.6486 | 0.5882 | 0.9925   |
| 0.0108        | 5.88  | 100  | 0.0132          | 0.8324    | 0.8324 | 0.8324 | 0.9965   |
| 0.0029        | 7.35  | 125  | 0.0107          | 0.8934    | 0.9514 | 0.9215 | 0.9979   |
| 0.0025        | 8.82  | 150  | 0.0123          | 0.8691    | 0.8973 | 0.8830 | 0.9972   |
| 0.0011        | 10.29 | 175  | 0.0127          | 0.8579    | 0.9135 | 0.8848 | 0.9969   |
| 0.0006        | 11.76 | 200  | 0.0102          | 0.8969    | 0.9405 | 0.9182 | 0.9981   |
| 0.0005        | 13.24 | 225  | 0.0118          | 0.8942    | 0.9135 | 0.9037 | 0.9978   |
| 0.0005        | 14.71 | 250  | 0.0106          | 0.8768    | 0.9622 | 0.9175 | 0.9981   |
| 0.0015        | 16.18 | 275  | 0.0119          | 0.855     | 0.9243 | 0.8883 | 0.9976   |
| 0.0006        | 17.65 | 300  | 0.0134          | 0.8814    | 0.9243 | 0.9024 | 0.9977   |
| 0.0004        | 19.12 | 325  | 0.0177          | 0.8617    | 0.8757 | 0.8686 | 0.9969   |
| 0.0003        | 20.59 | 350  | 0.0162          | 0.8877    | 0.8973 | 0.8925 | 0.9971   |

### Framework versions

- Transformers 4.27.4

- Pytorch 2.0.0+cu118

- Datasets 2.11.0

- Tokenizers 0.13.2

|

OccamRazor/pygmalion-6b-gptq-4bit

|

OccamRazor

| 2023-04-03T20:34:06Z | 10 | 10 |

transformers

|

[

"transformers",

"gptj",

"text-generation",

"license:creativeml-openrail-m",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2023-03-23T06:01:05Z |

---

license: creativeml-openrail-m

---

|

marci0929/InvertedDoublePendulumBulletEnv-v0-InvertedDoublePendulumBulletEnv-v0-100k

|

marci0929

| 2023-04-03T20:22:37Z | 0 | 0 |

stable-baselines3

|

[

"stable-baselines3",

"InvertedDoublePendulumBulletEnv-v0",

"deep-reinforcement-learning",

"reinforcement-learning",

"model-index",

"region:us"

] |

reinforcement-learning

| 2023-04-03T19:52:18Z |

---

library_name: stable-baselines3

tags:

- InvertedDoublePendulumBulletEnv-v0

- deep-reinforcement-learning

- reinforcement-learning

- stable-baselines3

model-index:

- name: A2C

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: InvertedDoublePendulumBulletEnv-v0

type: InvertedDoublePendulumBulletEnv-v0

metrics:

- type: mean_reward

value: 1129.72 +/- 346.09

name: mean_reward

verified: false

---

# **A2C** Agent playing **InvertedDoublePendulumBulletEnv-v0**

This is a trained model of an **A2C** agent playing **InvertedDoublePendulumBulletEnv-v0**

using the [stable-baselines3 library](https://github.com/DLR-RM/stable-baselines3).

## Usage (with Stable-baselines3)

TODO: Add your code

```python

from stable_baselines3 import A2C  # the algorithm named in this card

from huggingface_sb3 import load_from_hub

...

```

|

Brizape/tmvar_2e-05

|

Brizape

| 2023-04-03T20:15:13Z | 105 | 0 |

transformers

|

[

"transformers",

"pytorch",

"bert",

"token-classification",

"generated_from_trainer",

"license:mit",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

token-classification

| 2023-04-03T19:59:13Z |

---

license: mit

tags:

- generated_from_trainer

metrics:

- precision

- recall

- f1

- accuracy

model-index:

- name: tmvar_2e-05

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# tmvar_2e-05