modelId

stringlengths 5

139

| author

stringlengths 2

42

| last_modified

timestamp[us, tz=UTC]date 2020-02-15 11:33:14

2025-08-30 06:27:36

| downloads

int64 0

223M

| likes

int64 0

11.7k

| library_name

stringclasses 527

values | tags

listlengths 1

4.05k

| pipeline_tag

stringclasses 55

values | createdAt

timestamp[us, tz=UTC]date 2022-03-02 23:29:04

2025-08-30 06:27:12

| card

stringlengths 11

1.01M

|

|---|---|---|---|---|---|---|---|---|---|

crystalline7/698504

|

crystalline7

| 2025-08-29T23:57:51Z | 0 | 0 | null |

[

"region:us"

] | null | 2025-08-29T23:57:45Z |

[View on Civ Archive](https://civarchive.com/models/680268?modelVersionId=785062)

|

amethyst9/537038

|

amethyst9

| 2025-08-29T23:57:37Z | 0 | 0 | null |

[

"region:us"

] | null | 2025-08-29T23:57:32Z |

[View on Civ Archive](https://civarchive.com/models/530102?modelVersionId=622054)

|

seraphimzzzz/530692

|

seraphimzzzz

| 2025-08-29T23:57:25Z | 0 | 0 | null |

[

"region:us"

] | null | 2025-08-29T23:57:19Z |

[View on Civ Archive](https://civarchive.com/models/553093?modelVersionId=615506)

|

liukevin666/blockassist-bc-yawning_striped_cassowary_1756511760

|

liukevin666

| 2025-08-29T23:57:18Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"yawning striped cassowary",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-29T23:56:57Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- yawning striped cassowary

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

seraphimzzzz/543996

|

seraphimzzzz

| 2025-08-29T23:56:59Z | 0 | 0 | null |

[

"region:us"

] | null | 2025-08-29T23:56:53Z |

[View on Civ Archive](https://civarchive.com/models/564261?modelVersionId=629295)

|

Wejh/Affine-5DhQ91jnXRrCvzTmjZ2URiv2fdi677d1Dn26ZD1UezhbC4e9

|

Wejh

| 2025-08-29T23:56:55Z | 0 | 0 | null |

[

"pytorch",

"safetensors",

"llama",

"facebook",

"meta",

"llama-2",

"text-generation",

"en",

"region:us"

] |

text-generation

| 2025-08-29T23:56:54Z |

---

extra_gated_heading: Access Llama 2 on Hugging Face

extra_gated_description: >-

This is a form to enable access to Llama 2 on Hugging Face after you have been

granted access from Meta. Please visit the [Meta website](https://ai.meta.com/resources/models-and-libraries/llama-downloads) and accept our

license terms and acceptable use policy before submitting this form. Requests

will be processed in 1-2 days.

extra_gated_button_content: Submit

extra_gated_fields:

I agree to share my name, email address and username with Meta and confirm that I have already been granted download access on the Meta website: checkbox

language:

- en

pipeline_tag: text-generation

inference: false

tags:

- facebook

- meta

- pytorch

- llama

- llama-2

---

# **Llama 2**

Llama 2 is a collection of pretrained and fine-tuned generative text models ranging in scale from 7 billion to 70 billion parameters. This is the repository for the 7B fine-tuned model, optimized for dialogue use cases and converted for the Hugging Face Transformers format. Links to other models can be found in the index at the bottom.

## Model Details

*Note: Use of this model is governed by the Meta license. In order to download the model weights and tokenizer, please visit the [website](https://ai.meta.com/resources/models-and-libraries/llama-downloads/) and accept our License before requesting access here.*

Meta developed and publicly released the Llama 2 family of large language models (LLMs), a collection of pretrained and fine-tuned generative text models ranging in scale from 7 billion to 70 billion parameters. Our fine-tuned LLMs, called Llama-2-Chat, are optimized for dialogue use cases. Llama-2-Chat models outperform open-source chat models on most benchmarks we tested, and in our human evaluations for helpfulness and safety, are on par with some popular closed-source models like ChatGPT and PaLM.

**Model Developers** Meta

**Variations** Llama 2 comes in a range of parameter sizes — 7B, 13B, and 70B — as well as pretrained and fine-tuned variations.

**Input** Models input text only.

**Output** Models generate text only.

**Model Architecture** Llama 2 is an auto-regressive language model that uses an optimized transformer architecture. The tuned versions use supervised fine-tuning (SFT) and reinforcement learning with human feedback (RLHF) to align to human preferences for helpfulness and safety.

||Training Data|Params|Content Length|GQA|Tokens|LR|

|---|---|---|---|---|---|---|

|Llama 2|*A new mix of publicly available online data*|7B|4k|✗|2.0T|3.0 x 10<sup>-4</sup>|

|Llama 2|*A new mix of publicly available online data*|13B|4k|✗|2.0T|3.0 x 10<sup>-4</sup>|

|Llama 2|*A new mix of publicly available online data*|70B|4k|✔|2.0T|1.5 x 10<sup>-4</sup>|

*Llama 2 family of models.* Token counts refer to pretraining data only. All models are trained with a global batch-size of 4M tokens. Bigger models - 70B -- use Grouped-Query Attention (GQA) for improved inference scalability.

**Model Dates** Llama 2 was trained between January 2023 and July 2023.

**Status** This is a static model trained on an offline dataset. Future versions of the tuned models will be released as we improve model safety with community feedback.

**License** A custom commercial license is available at: [https://ai.meta.com/resources/models-and-libraries/llama-downloads/](https://ai.meta.com/resources/models-and-libraries/llama-downloads/)

## Intended Use

**Intended Use Cases** Llama 2 is intended for commercial and research use in English. Tuned models are intended for assistant-like chat, whereas pretrained models can be adapted for a variety of natural language generation tasks.

To get the expected features and performance for the chat versions, a specific formatting needs to be followed, including the `INST` and `<<SYS>>` tags, `BOS` and `EOS` tokens, and the whitespaces and breaklines in between (we recommend calling `strip()` on inputs to avoid double-spaces). See our reference code in github for details: [`chat_completion`](https://github.com/facebookresearch/llama/blob/main/llama/generation.py#L212).

**Out-of-scope Uses** Use in any manner that violates applicable laws or regulations (including trade compliance laws).Use in languages other than English. Use in any other way that is prohibited by the Acceptable Use Policy and Licensing Agreement for Llama 2.

## Hardware and Software

**Training Factors** We used custom training libraries, Meta's Research Super Cluster, and production clusters for pretraining. Fine-tuning, annotation, and evaluation were also performed on third-party cloud compute.

**Carbon Footprint** Pretraining utilized a cumulative 3.3M GPU hours of computation on hardware of type A100-80GB (TDP of 350-400W). Estimated total emissions were 539 tCO2eq, 100% of which were offset by Meta’s sustainability program.

||Time (GPU hours)|Power Consumption (W)|Carbon Emitted(tCO<sub>2</sub>eq)|

|---|---|---|---|

|Llama 2 7B|184320|400|31.22|

|Llama 2 13B|368640|400|62.44|

|Llama 2 70B|1720320|400|291.42|

|Total|3311616||539.00|

**CO<sub>2</sub> emissions during pretraining.** Time: total GPU time required for training each model. Power Consumption: peak power capacity per GPU device for the GPUs used adjusted for power usage efficiency. 100% of the emissions are directly offset by Meta's sustainability program, and because we are openly releasing these models, the pretraining costs do not need to be incurred by others.

## Training Data

**Overview** Llama 2 was pretrained on 2 trillion tokens of data from publicly available sources. The fine-tuning data includes publicly available instruction datasets, as well as over one million new human-annotated examples. Neither the pretraining nor the fine-tuning datasets include Meta user data.

**Data Freshness** The pretraining data has a cutoff of September 2022, but some tuning data is more recent, up to July 2023.

## Evaluation Results

In this section, we report the results for the Llama 1 and Llama 2 models on standard academic benchmarks.For all the evaluations, we use our internal evaluations library.

|Model|Size|Code|Commonsense Reasoning|World Knowledge|Reading Comprehension|Math|MMLU|BBH|AGI Eval|

|---|---|---|---|---|---|---|---|---|---|

|Llama 1|7B|14.1|60.8|46.2|58.5|6.95|35.1|30.3|23.9|

|Llama 1|13B|18.9|66.1|52.6|62.3|10.9|46.9|37.0|33.9|

|Llama 1|33B|26.0|70.0|58.4|67.6|21.4|57.8|39.8|41.7|

|Llama 1|65B|30.7|70.7|60.5|68.6|30.8|63.4|43.5|47.6|

|Llama 2|7B|16.8|63.9|48.9|61.3|14.6|45.3|32.6|29.3|

|Llama 2|13B|24.5|66.9|55.4|65.8|28.7|54.8|39.4|39.1|

|Llama 2|70B|**37.5**|**71.9**|**63.6**|**69.4**|**35.2**|**68.9**|**51.2**|**54.2**|

**Overall performance on grouped academic benchmarks.** *Code:* We report the average pass@1 scores of our models on HumanEval and MBPP. *Commonsense Reasoning:* We report the average of PIQA, SIQA, HellaSwag, WinoGrande, ARC easy and challenge, OpenBookQA, and CommonsenseQA. We report 7-shot results for CommonSenseQA and 0-shot results for all other benchmarks. *World Knowledge:* We evaluate the 5-shot performance on NaturalQuestions and TriviaQA and report the average. *Reading Comprehension:* For reading comprehension, we report the 0-shot average on SQuAD, QuAC, and BoolQ. *MATH:* We report the average of the GSM8K (8 shot) and MATH (4 shot) benchmarks at top 1.

|||TruthfulQA|Toxigen|

|---|---|---|---|

|Llama 1|7B|27.42|23.00|

|Llama 1|13B|41.74|23.08|

|Llama 1|33B|44.19|22.57|

|Llama 1|65B|48.71|21.77|

|Llama 2|7B|33.29|**21.25**|

|Llama 2|13B|41.86|26.10|

|Llama 2|70B|**50.18**|24.60|

**Evaluation of pretrained LLMs on automatic safety benchmarks.** For TruthfulQA, we present the percentage of generations that are both truthful and informative (the higher the better). For ToxiGen, we present the percentage of toxic generations (the smaller the better).

|||TruthfulQA|Toxigen|

|---|---|---|---|

|Llama-2-Chat|7B|57.04|**0.00**|

|Llama-2-Chat|13B|62.18|**0.00**|

|Llama-2-Chat|70B|**64.14**|0.01|

**Evaluation of fine-tuned LLMs on different safety datasets.** Same metric definitions as above.

## Ethical Considerations and Limitations

Llama 2 is a new technology that carries risks with use. Testing conducted to date has been in English, and has not covered, nor could it cover all scenarios. For these reasons, as with all LLMs, Llama 2’s potential outputs cannot be predicted in advance, and the model may in some instances produce inaccurate, biased or other objectionable responses to user prompts. Therefore, before deploying any applications of Llama 2, developers should perform safety testing and tuning tailored to their specific applications of the model.

Please see the Responsible Use Guide available at [https://ai.meta.com/llama/responsible-use-guide/](https://ai.meta.com/llama/responsible-use-guide)

## Reporting Issues

Please report any software “bug,” or other problems with the models through one of the following means:

- Reporting issues with the model: [github.com/facebookresearch/llama](http://github.com/facebookresearch/llama)

- Reporting problematic content generated by the model: [developers.facebook.com/llama_output_feedback](http://developers.facebook.com/llama_output_feedback)

- Reporting bugs and security concerns: [facebook.com/whitehat/info](http://facebook.com/whitehat/info)

## Llama Model Index

|Model|Llama2|Llama2-hf|Llama2-chat|Llama2-chat-hf|

|---|---|---|---|---|

|7B| [Link](https://huggingface.co/llamaste/Llama-2-7b) | [Link](https://huggingface.co/llamaste/Llama-2-7b-hf) | [Link](https://huggingface.co/llamaste/Llama-2-7b-chat) | [Link](https://huggingface.co/llamaste/Llama-2-7b-chat-hf)|

|13B| [Link](https://huggingface.co/llamaste/Llama-2-13b) | [Link](https://huggingface.co/llamaste/Llama-2-13b-hf) | [Link](https://huggingface.co/llamaste/Llama-2-13b-chat) | [Link](https://huggingface.co/llamaste/Llama-2-13b-hf)|

|70B| [Link](https://huggingface.co/llamaste/Llama-2-70b) | [Link](https://huggingface.co/llamaste/Llama-2-70b-hf) | [Link](https://huggingface.co/llamaste/Llama-2-70b-chat) | [Link](https://huggingface.co/llamaste/Llama-2-70b-hf)|

|

ultratopaz/652549

|

ultratopaz

| 2025-08-29T23:56:46Z | 0 | 0 | null |

[

"region:us"

] | null | 2025-08-29T23:56:40Z |

[View on Civ Archive](https://civarchive.com/models/517929?modelVersionId=738620)

|

xibitthenoob/Qwen-3-32B-Medical-Reasoning

|

xibitthenoob

| 2025-08-29T23:56:20Z | 0 | 0 |

transformers

|

[

"transformers",

"safetensors",

"arxiv:1910.09700",

"endpoints_compatible",

"region:us"

] | null | 2025-08-17T19:54:27Z |

---

library_name: transformers

tags: []

---

# Model Card for Model ID

<!-- Provide a quick summary of what the model is/does. -->

## Model Details

### Model Description

<!-- Provide a longer summary of what this model is. -->

This is the model card of a 🤗 transformers model that has been pushed on the Hub. This model card has been automatically generated.

- **Developed by:** [More Information Needed]

- **Funded by [optional]:** [More Information Needed]

- **Shared by [optional]:** [More Information Needed]

- **Model type:** [More Information Needed]

- **Language(s) (NLP):** [More Information Needed]

- **License:** [More Information Needed]

- **Finetuned from model [optional]:** [More Information Needed]

### Model Sources [optional]

<!-- Provide the basic links for the model. -->

- **Repository:** [More Information Needed]

- **Paper [optional]:** [More Information Needed]

- **Demo [optional]:** [More Information Needed]

## Uses

<!-- Address questions around how the model is intended to be used, including the foreseeable users of the model and those affected by the model. -->

### Direct Use

<!-- This section is for the model use without fine-tuning or plugging into a larger ecosystem/app. -->

[More Information Needed]

### Downstream Use [optional]

<!-- This section is for the model use when fine-tuned for a task, or when plugged into a larger ecosystem/app -->

[More Information Needed]

### Out-of-Scope Use

<!-- This section addresses misuse, malicious use, and uses that the model will not work well for. -->

[More Information Needed]

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

[More Information Needed]

### Recommendations

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

Users (both direct and downstream) should be made aware of the risks, biases and limitations of the model. More information needed for further recommendations.

## How to Get Started with the Model

Use the code below to get started with the model.

[More Information Needed]

## Training Details

### Training Data

<!-- This should link to a Dataset Card, perhaps with a short stub of information on what the training data is all about as well as documentation related to data pre-processing or additional filtering. -->

[More Information Needed]

### Training Procedure

<!-- This relates heavily to the Technical Specifications. Content here should link to that section when it is relevant to the training procedure. -->

#### Preprocessing [optional]

[More Information Needed]

#### Training Hyperparameters

- **Training regime:** [More Information Needed] <!--fp32, fp16 mixed precision, bf16 mixed precision, bf16 non-mixed precision, fp16 non-mixed precision, fp8 mixed precision -->

#### Speeds, Sizes, Times [optional]

<!-- This section provides information about throughput, start/end time, checkpoint size if relevant, etc. -->

[More Information Needed]

## Evaluation

<!-- This section describes the evaluation protocols and provides the results. -->

### Testing Data, Factors & Metrics

#### Testing Data

<!-- This should link to a Dataset Card if possible. -->

[More Information Needed]

#### Factors

<!-- These are the things the evaluation is disaggregating by, e.g., subpopulations or domains. -->

[More Information Needed]

#### Metrics

<!-- These are the evaluation metrics being used, ideally with a description of why. -->

[More Information Needed]

### Results

[More Information Needed]

#### Summary

## Model Examination [optional]

<!-- Relevant interpretability work for the model goes here -->

[More Information Needed]

## Environmental Impact

<!-- Total emissions (in grams of CO2eq) and additional considerations, such as electricity usage, go here. Edit the suggested text below accordingly -->

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).

- **Hardware Type:** [More Information Needed]

- **Hours used:** [More Information Needed]

- **Cloud Provider:** [More Information Needed]

- **Compute Region:** [More Information Needed]

- **Carbon Emitted:** [More Information Needed]

## Technical Specifications [optional]

### Model Architecture and Objective

[More Information Needed]

### Compute Infrastructure

[More Information Needed]

#### Hardware

[More Information Needed]

#### Software

[More Information Needed]

## Citation [optional]

<!-- If there is a paper or blog post introducing the model, the APA and Bibtex information for that should go in this section. -->

**BibTeX:**

[More Information Needed]

**APA:**

[More Information Needed]

## Glossary [optional]

<!-- If relevant, include terms and calculations in this section that can help readers understand the model or model card. -->

[More Information Needed]

## More Information [optional]

[More Information Needed]

## Model Card Authors [optional]

[More Information Needed]

## Model Card Contact

[More Information Needed]

|

amethyst9/573062

|

amethyst9

| 2025-08-29T23:56:19Z | 0 | 0 | null |

[

"region:us"

] | null | 2025-08-29T23:56:13Z |

[View on Civ Archive](https://civarchive.com/models/589061?modelVersionId=657683)

|

hakimjustbao/blockassist-bc-raging_subtle_wasp_1756510253

|

hakimjustbao

| 2025-08-29T23:56:14Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"raging subtle wasp",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-29T23:56:10Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- raging subtle wasp

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

ultratopaz/518800

|

ultratopaz

| 2025-08-29T23:56:06Z | 0 | 0 | null |

[

"region:us"

] | null | 2025-08-29T23:56:00Z |

[View on Civ Archive](https://civarchive.com/models/542594?modelVersionId=603296)

|

pempekmangedd/blockassist-bc-patterned_sturdy_dolphin_1756510275

|

pempekmangedd

| 2025-08-29T23:56:00Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"patterned sturdy dolphin",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-29T23:55:56Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- patterned sturdy dolphin

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

ultratopaz/810462

|

ultratopaz

| 2025-08-29T23:53:56Z | 0 | 0 | null |

[

"region:us"

] | null | 2025-08-29T23:53:47Z |

[View on Civ Archive](https://civarchive.com/models/774205?modelVersionId=902028)

|

bah63843/blockassist-bc-plump_fast_antelope_1756511581

|

bah63843

| 2025-08-29T23:53:48Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"plump fast antelope",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-29T23:53:39Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- plump fast antelope

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

seraphimzzzz/385884

|

seraphimzzzz

| 2025-08-29T23:53:38Z | 0 | 0 | null |

[

"region:us"

] | null | 2025-08-29T23:53:38Z |

[View on Civ Archive](https://civarchive.com/models/419169?modelVersionId=466982)

|

Loder-S/blockassist-bc-sprightly_knobby_tiger_1756510052

|

Loder-S

| 2025-08-29T23:52:45Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"sprightly knobby tiger",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-29T23:52:42Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- sprightly knobby tiger

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

seraphimzzzz/496178

|

seraphimzzzz

| 2025-08-29T23:52:38Z | 0 | 0 | null |

[

"region:us"

] | null | 2025-08-29T23:52:35Z |

[View on Civ Archive](https://civarchive.com/models/522696?modelVersionId=580731)

|

seraphimzzzz/635223

|

seraphimzzzz

| 2025-08-29T23:52:15Z | 0 | 0 | null |

[

"region:us"

] | null | 2025-08-29T23:52:09Z |

[View on Civ Archive](https://civarchive.com/models/644196?modelVersionId=720610)

|

ultratopaz/461961

|

ultratopaz

| 2025-08-29T23:51:48Z | 0 | 0 | null |

[

"region:us"

] | null | 2025-08-29T23:51:42Z |

[View on Civ Archive](https://civarchive.com/models/490763?modelVersionId=545714)

|

amethyst9/1848824

|

amethyst9

| 2025-08-29T23:50:53Z | 0 | 0 | null |

[

"region:us"

] | null | 2025-08-29T23:50:44Z |

[View on Civ Archive](https://civarchive.com/models/1714215?modelVersionId=1951266)

|

amethyst9/620074

|

amethyst9

| 2025-08-29T23:50:22Z | 0 | 0 | null |

[

"region:us"

] | null | 2025-08-29T23:50:16Z |

[View on Civ Archive](https://civarchive.com/models/567581?modelVersionId=705452)

|

yanghuattt/ckpts

|

yanghuattt

| 2025-08-29T23:49:46Z | 0 | 0 |

peft

|

[

"peft",

"safetensors",

"base_model:adapter:stabilityai/stable-code-3b",

"lora",

"transformers",

"text-generation",

"arxiv:1910.09700",

"base_model:stabilityai/stable-code-3b",

"region:us"

] |

text-generation

| 2025-08-29T16:06:00Z |

---

base_model: stabilityai/stable-code-3b

library_name: peft

pipeline_tag: text-generation

tags:

- base_model:adapter:stabilityai/stable-code-3b

- lora

- transformers

---

# Model Card for Model ID

<!-- Provide a quick summary of what the model is/does. -->

## Model Details

### Model Description

<!-- Provide a longer summary of what this model is. -->

- **Developed by:** [More Information Needed]

- **Funded by [optional]:** [More Information Needed]

- **Shared by [optional]:** [More Information Needed]

- **Model type:** [More Information Needed]

- **Language(s) (NLP):** [More Information Needed]

- **License:** [More Information Needed]

- **Finetuned from model [optional]:** [More Information Needed]

### Model Sources [optional]

<!-- Provide the basic links for the model. -->

- **Repository:** [More Information Needed]

- **Paper [optional]:** [More Information Needed]

- **Demo [optional]:** [More Information Needed]

## Uses

<!-- Address questions around how the model is intended to be used, including the foreseeable users of the model and those affected by the model. -->

### Direct Use

<!-- This section is for the model use without fine-tuning or plugging into a larger ecosystem/app. -->

[More Information Needed]

### Downstream Use [optional]

<!-- This section is for the model use when fine-tuned for a task, or when plugged into a larger ecosystem/app -->

[More Information Needed]

### Out-of-Scope Use

<!-- This section addresses misuse, malicious use, and uses that the model will not work well for. -->

[More Information Needed]

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

[More Information Needed]

### Recommendations

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

Users (both direct and downstream) should be made aware of the risks, biases and limitations of the model. More information needed for further recommendations.

## How to Get Started with the Model

Use the code below to get started with the model.

[More Information Needed]

## Training Details

### Training Data

<!-- This should link to a Dataset Card, perhaps with a short stub of information on what the training data is all about as well as documentation related to data pre-processing or additional filtering. -->

[More Information Needed]

### Training Procedure

<!-- This relates heavily to the Technical Specifications. Content here should link to that section when it is relevant to the training procedure. -->

#### Preprocessing [optional]

[More Information Needed]

#### Training Hyperparameters

- **Training regime:** [More Information Needed] <!--fp32, fp16 mixed precision, bf16 mixed precision, bf16 non-mixed precision, fp16 non-mixed precision, fp8 mixed precision -->

#### Speeds, Sizes, Times [optional]

<!-- This section provides information about throughput, start/end time, checkpoint size if relevant, etc. -->

[More Information Needed]

## Evaluation

<!-- This section describes the evaluation protocols and provides the results. -->

### Testing Data, Factors & Metrics

#### Testing Data

<!-- This should link to a Dataset Card if possible. -->

[More Information Needed]

#### Factors

<!-- These are the things the evaluation is disaggregating by, e.g., subpopulations or domains. -->

[More Information Needed]

#### Metrics

<!-- These are the evaluation metrics being used, ideally with a description of why. -->

[More Information Needed]

### Results

[More Information Needed]

#### Summary

## Model Examination [optional]

<!-- Relevant interpretability work for the model goes here -->

[More Information Needed]

## Environmental Impact

<!-- Total emissions (in grams of CO2eq) and additional considerations, such as electricity usage, go here. Edit the suggested text below accordingly -->

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).

- **Hardware Type:** [More Information Needed]

- **Hours used:** [More Information Needed]

- **Cloud Provider:** [More Information Needed]

- **Compute Region:** [More Information Needed]

- **Carbon Emitted:** [More Information Needed]

## Technical Specifications [optional]

### Model Architecture and Objective

[More Information Needed]

### Compute Infrastructure

[More Information Needed]

#### Hardware

[More Information Needed]

#### Software

[More Information Needed]

## Citation [optional]

<!-- If there is a paper or blog post introducing the model, the APA and Bibtex information for that should go in this section. -->

**BibTeX:**

[More Information Needed]

**APA:**

[More Information Needed]

## Glossary [optional]

<!-- If relevant, include terms and calculations in this section that can help readers understand the model or model card. -->

[More Information Needed]

## More Information [optional]

[More Information Needed]

## Model Card Authors [optional]

[More Information Needed]

## Model Card Contact

[More Information Needed]

### Framework versions

- PEFT 0.17.2.dev0

|

aoskes111/blockassist-bc-stinging_scruffy_bobcat_1756511312

|

aoskes111

| 2025-08-29T23:49:17Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"stinging scruffy bobcat",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-29T23:48:59Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- stinging scruffy bobcat

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

ultratopaz/625721

|

ultratopaz

| 2025-08-29T23:49:09Z | 0 | 0 | null |

[

"region:us"

] | null | 2025-08-29T23:49:03Z |

[View on Civ Archive](https://civarchive.com/models/635945?modelVersionId=711036)

|

amethyst9/568985

|

amethyst9

| 2025-08-29T23:48:55Z | 0 | 0 | null |

[

"region:us"

] | null | 2025-08-29T23:48:49Z |

[View on Civ Archive](https://civarchive.com/models/585614?modelVersionId=653500)

|

crystalline7/669537

|

crystalline7

| 2025-08-29T23:48:45Z | 0 | 0 | null |

[

"region:us"

] | null | 2025-08-29T23:48:42Z |

[View on Civ Archive](https://civarchive.com/models/675147?modelVersionId=755748)

|

amethyst9/608675

|

amethyst9

| 2025-08-29T23:48:17Z | 0 | 0 | null |

[

"region:us"

] | null | 2025-08-29T23:48:11Z |

[View on Civ Archive](https://civarchive.com/models/610760?modelVersionId=694017)

|

crystalline7/903115

|

crystalline7

| 2025-08-29T23:47:40Z | 0 | 0 | null |

[

"region:us"

] | null | 2025-08-29T23:47:30Z |

[View on Civ Archive](https://civarchive.com/models/567581?modelVersionId=997525)

|

crystalline7/897338

|

crystalline7

| 2025-08-29T23:47:10Z | 0 | 0 | null |

[

"region:us"

] | null | 2025-08-29T23:47:10Z |

[View on Civ Archive](https://civarchive.com/models/567581?modelVersionId=991368)

|

seraphimzzzz/1164218

|

seraphimzzzz

| 2025-08-29T23:46:41Z | 0 | 0 | null |

[

"region:us"

] | null | 2025-08-29T23:46:41Z |

[View on Civ Archive](https://civarchive.com/models/517929?modelVersionId=1259027)

|

amethyst9/1816274

|

amethyst9

| 2025-08-29T23:46:16Z | 0 | 0 | null |

[

"region:us"

] | null | 2025-08-29T23:46:11Z |

[View on Civ Archive](https://civarchive.com/models/1694695?modelVersionId=1917948)

|

crystalline7/569015

|

crystalline7

| 2025-08-29T23:45:56Z | 0 | 0 | null |

[

"region:us"

] | null | 2025-08-29T23:45:50Z |

[View on Civ Archive](https://civarchive.com/models/585614?modelVersionId=654001)

|

keras/qwen3_4b_en

|

keras

| 2025-08-29T23:45:48Z | 0 | 0 |

keras-hub

|

[

"keras-hub",

"text-generation",

"region:us"

] |

text-generation

| 2025-08-29T23:41:54Z |

---

library_name: keras-hub

pipeline_tag: text-generation

---

This is a [`Qwen3` model](https://keras.io/api/keras_hub/models/qwen3) uploaded using the KerasHub library and can be used with JAX, TensorFlow, and PyTorch backends.

This model is related to a `CausalLM` task.

Model config:

* **name:** qwen3_backbone

* **trainable:** True

* **vocabulary_size:** 151936

* **num_layers:** 36

* **num_query_heads:** 32

* **hidden_dim:** 2560

* **head_dim:** 128

* **intermediate_dim:** 9728

* **rope_max_wavelength:** 1000000

* **rope_scaling_factor:** 1.0

* **num_key_value_heads:** 8

* **layer_norm_epsilon:** 1e-06

* **dropout:** 0.0

* **tie_word_embeddings:** True

* **sliding_window_size:** None

This model card has been generated automatically and should be completed by the model author. See [Model Cards documentation](https://huggingface.co/docs/hub/model-cards) for more information.

|

seraphimzzzz/798003

|

seraphimzzzz

| 2025-08-29T23:45:40Z | 0 | 0 | null |

[

"region:us"

] | null | 2025-08-29T23:45:32Z |

[View on Civ Archive](https://civarchive.com/models/795340?modelVersionId=889343)

|

crystalline7/545009

|

crystalline7

| 2025-08-29T23:45:22Z | 0 | 0 | null |

[

"region:us"

] | null | 2025-08-29T23:45:17Z |

[View on Civ Archive](https://civarchive.com/models/564261?modelVersionId=630310)

|

amethyst9/575038

|

amethyst9

| 2025-08-29T23:44:51Z | 0 | 0 | null |

[

"region:us"

] | null | 2025-08-29T23:44:51Z |

[View on Civ Archive](https://civarchive.com/models/584311?modelVersionId=660032)

|

seraphimzzzz/629805

|

seraphimzzzz

| 2025-08-29T23:44:31Z | 0 | 0 | null |

[

"region:us"

] | null | 2025-08-29T23:44:25Z |

[View on Civ Archive](https://civarchive.com/models/512252?modelVersionId=715117)

|

seraphimzzzz/823757

|

seraphimzzzz

| 2025-08-29T23:44:17Z | 0 | 0 | null |

[

"region:us"

] | null | 2025-08-29T23:44:12Z |

[View on Civ Archive](https://civarchive.com/models/816163?modelVersionId=916207)

|

seraphimzzzz/575008

|

seraphimzzzz

| 2025-08-29T23:43:51Z | 0 | 0 | null |

[

"region:us"

] | null | 2025-08-29T23:43:45Z |

[View on Civ Archive](https://civarchive.com/models/584311?modelVersionId=659857)

|

bah63843/blockassist-bc-plump_fast_antelope_1756510974

|

bah63843

| 2025-08-29T23:43:44Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"plump fast antelope",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-29T23:43:35Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- plump fast antelope

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

seraphimzzzz/724311

|

seraphimzzzz

| 2025-08-29T23:43:38Z | 0 | 0 | null |

[

"region:us"

] | null | 2025-08-29T23:43:33Z |

[View on Civ Archive](https://civarchive.com/models/719079?modelVersionId=804126)

|

crystalline7/856951

|

crystalline7

| 2025-08-29T23:43:23Z | 0 | 0 | null |

[

"region:us"

] | null | 2025-08-29T23:43:15Z |

[View on Civ Archive](https://civarchive.com/models/848936?modelVersionId=949798)

|

ultratopaz/1243851

|

ultratopaz

| 2025-08-29T23:43:04Z | 0 | 0 | null |

[

"region:us"

] | null | 2025-08-29T23:42:55Z |

[View on Civ Archive](https://civarchive.com/models/1189856?modelVersionId=1339576)

|

qualcomm/Shufflenet-v2

|

qualcomm

| 2025-08-29T23:42:48Z | 53 | 1 |

pytorch

|

[

"pytorch",

"tflite",

"android",

"image-classification",

"arxiv:1807.11164",

"license:other",

"region:us"

] |

image-classification

| 2024-02-25T23:05:43Z |

---

library_name: pytorch

license: other

tags:

- android

pipeline_tag: image-classification

---

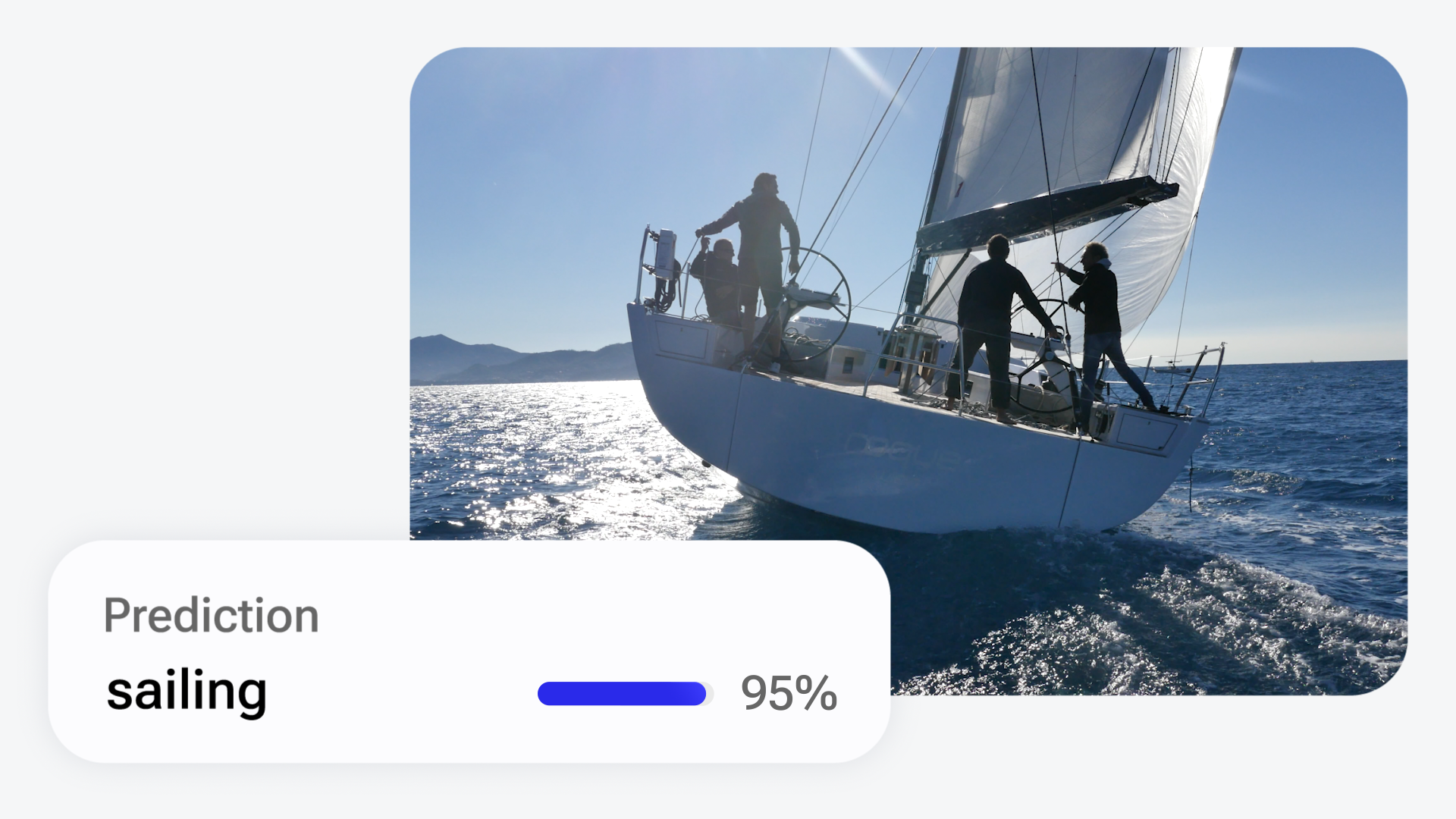

# Shufflenet-v2: Optimized for Mobile Deployment

## Imagenet classifier and general purpose backbone

ShufflenetV2 is a machine learning model that can classify images from the Imagenet dataset. It can also be used as a backbone in building more complex models for specific use cases.

This model is an implementation of Shufflenet-v2 found [here](https://github.com/pytorch/vision/blob/main/torchvision/models/shufflenetv2.py).

This repository provides scripts to run Shufflenet-v2 on Qualcomm® devices.

More details on model performance across various devices, can be found

[here](https://aihub.qualcomm.com/models/shufflenet_v2).

### Model Details

- **Model Type:** Model_use_case.image_classification

- **Model Stats:**

- Model checkpoint: Imagenet

- Input resolution: 224x224

- Number of parameters: 1.37M

- Model size (float): 5.24 MB

- Model size (w8a8): 1.47 MB

| Model | Precision | Device | Chipset | Target Runtime | Inference Time (ms) | Peak Memory Range (MB) | Primary Compute Unit | Target Model

|---|---|---|---|---|---|---|---|---|

| Shufflenet-v2 | float | QCS8275 (Proxy) | Qualcomm® QCS8275 (Proxy) | TFLITE | 1.592 ms | 0 - 19 MB | NPU | [Shufflenet-v2.tflite](https://huggingface.co/qualcomm/Shufflenet-v2/blob/main/Shufflenet-v2.tflite) |

| Shufflenet-v2 | float | QCS8275 (Proxy) | Qualcomm® QCS8275 (Proxy) | QNN_DLC | 1.584 ms | 0 - 21 MB | NPU | [Shufflenet-v2.dlc](https://huggingface.co/qualcomm/Shufflenet-v2/blob/main/Shufflenet-v2.dlc) |

| Shufflenet-v2 | float | QCS8450 (Proxy) | Qualcomm® QCS8450 (Proxy) | TFLITE | 0.781 ms | 0 - 35 MB | NPU | [Shufflenet-v2.tflite](https://huggingface.co/qualcomm/Shufflenet-v2/blob/main/Shufflenet-v2.tflite) |

| Shufflenet-v2 | float | QCS8450 (Proxy) | Qualcomm® QCS8450 (Proxy) | QNN_DLC | 1.172 ms | 1 - 35 MB | NPU | [Shufflenet-v2.dlc](https://huggingface.co/qualcomm/Shufflenet-v2/blob/main/Shufflenet-v2.dlc) |

| Shufflenet-v2 | float | QCS8550 (Proxy) | Qualcomm® QCS8550 (Proxy) | TFLITE | 0.701 ms | 0 - 30 MB | NPU | [Shufflenet-v2.tflite](https://huggingface.co/qualcomm/Shufflenet-v2/blob/main/Shufflenet-v2.tflite) |

| Shufflenet-v2 | float | QCS8550 (Proxy) | Qualcomm® QCS8550 (Proxy) | QNN_DLC | 0.694 ms | 1 - 19 MB | NPU | [Shufflenet-v2.dlc](https://huggingface.co/qualcomm/Shufflenet-v2/blob/main/Shufflenet-v2.dlc) |

| Shufflenet-v2 | float | QCS9075 (Proxy) | Qualcomm® QCS9075 (Proxy) | TFLITE | 0.971 ms | 0 - 19 MB | NPU | [Shufflenet-v2.tflite](https://huggingface.co/qualcomm/Shufflenet-v2/blob/main/Shufflenet-v2.tflite) |

| Shufflenet-v2 | float | QCS9075 (Proxy) | Qualcomm® QCS9075 (Proxy) | QNN_DLC | 0.933 ms | 1 - 20 MB | NPU | [Shufflenet-v2.dlc](https://huggingface.co/qualcomm/Shufflenet-v2/blob/main/Shufflenet-v2.dlc) |

| Shufflenet-v2 | float | SA7255P ADP | Qualcomm® SA7255P | TFLITE | 1.592 ms | 0 - 19 MB | NPU | [Shufflenet-v2.tflite](https://huggingface.co/qualcomm/Shufflenet-v2/blob/main/Shufflenet-v2.tflite) |

| Shufflenet-v2 | float | SA7255P ADP | Qualcomm® SA7255P | QNN_DLC | 1.584 ms | 0 - 21 MB | NPU | [Shufflenet-v2.dlc](https://huggingface.co/qualcomm/Shufflenet-v2/blob/main/Shufflenet-v2.dlc) |

| Shufflenet-v2 | float | SA8255 (Proxy) | Qualcomm® SA8255P (Proxy) | TFLITE | 0.7 ms | 0 - 29 MB | NPU | [Shufflenet-v2.tflite](https://huggingface.co/qualcomm/Shufflenet-v2/blob/main/Shufflenet-v2.tflite) |

| Shufflenet-v2 | float | SA8255 (Proxy) | Qualcomm® SA8255P (Proxy) | QNN_DLC | 0.695 ms | 1 - 19 MB | NPU | [Shufflenet-v2.dlc](https://huggingface.co/qualcomm/Shufflenet-v2/blob/main/Shufflenet-v2.dlc) |

| Shufflenet-v2 | float | SA8295P ADP | Qualcomm® SA8295P | TFLITE | 1.11 ms | 0 - 26 MB | NPU | [Shufflenet-v2.tflite](https://huggingface.co/qualcomm/Shufflenet-v2/blob/main/Shufflenet-v2.tflite) |

| Shufflenet-v2 | float | SA8295P ADP | Qualcomm® SA8295P | QNN_DLC | 1.105 ms | 1 - 28 MB | NPU | [Shufflenet-v2.dlc](https://huggingface.co/qualcomm/Shufflenet-v2/blob/main/Shufflenet-v2.dlc) |

| Shufflenet-v2 | float | SA8650 (Proxy) | Qualcomm® SA8650P (Proxy) | TFLITE | 0.701 ms | 0 - 29 MB | NPU | [Shufflenet-v2.tflite](https://huggingface.co/qualcomm/Shufflenet-v2/blob/main/Shufflenet-v2.tflite) |

| Shufflenet-v2 | float | SA8650 (Proxy) | Qualcomm® SA8650P (Proxy) | QNN_DLC | 0.687 ms | 1 - 19 MB | NPU | [Shufflenet-v2.dlc](https://huggingface.co/qualcomm/Shufflenet-v2/blob/main/Shufflenet-v2.dlc) |

| Shufflenet-v2 | float | SA8775P ADP | Qualcomm® SA8775P | TFLITE | 0.971 ms | 0 - 19 MB | NPU | [Shufflenet-v2.tflite](https://huggingface.co/qualcomm/Shufflenet-v2/blob/main/Shufflenet-v2.tflite) |

| Shufflenet-v2 | float | SA8775P ADP | Qualcomm® SA8775P | QNN_DLC | 0.933 ms | 1 - 20 MB | NPU | [Shufflenet-v2.dlc](https://huggingface.co/qualcomm/Shufflenet-v2/blob/main/Shufflenet-v2.dlc) |

| Shufflenet-v2 | float | Samsung Galaxy S23 | Snapdragon® 8 Gen 2 Mobile | TFLITE | 0.699 ms | 0 - 29 MB | NPU | [Shufflenet-v2.tflite](https://huggingface.co/qualcomm/Shufflenet-v2/blob/main/Shufflenet-v2.tflite) |

| Shufflenet-v2 | float | Samsung Galaxy S23 | Snapdragon® 8 Gen 2 Mobile | QNN_DLC | 0.694 ms | 0 - 17 MB | NPU | [Shufflenet-v2.dlc](https://huggingface.co/qualcomm/Shufflenet-v2/blob/main/Shufflenet-v2.dlc) |

| Shufflenet-v2 | float | Samsung Galaxy S23 | Snapdragon® 8 Gen 2 Mobile | ONNX | 0.973 ms | 0 - 17 MB | NPU | [Shufflenet-v2.onnx.zip](https://huggingface.co/qualcomm/Shufflenet-v2/blob/main/Shufflenet-v2.onnx.zip) |

| Shufflenet-v2 | float | Samsung Galaxy S24 | Snapdragon® 8 Gen 3 Mobile | TFLITE | 0.445 ms | 0 - 26 MB | NPU | [Shufflenet-v2.tflite](https://huggingface.co/qualcomm/Shufflenet-v2/blob/main/Shufflenet-v2.tflite) |

| Shufflenet-v2 | float | Samsung Galaxy S24 | Snapdragon® 8 Gen 3 Mobile | QNN_DLC | 0.455 ms | 0 - 32 MB | NPU | [Shufflenet-v2.dlc](https://huggingface.co/qualcomm/Shufflenet-v2/blob/main/Shufflenet-v2.dlc) |

| Shufflenet-v2 | float | Samsung Galaxy S24 | Snapdragon® 8 Gen 3 Mobile | ONNX | 0.636 ms | 0 - 28 MB | NPU | [Shufflenet-v2.onnx.zip](https://huggingface.co/qualcomm/Shufflenet-v2/blob/main/Shufflenet-v2.onnx.zip) |

| Shufflenet-v2 | float | Snapdragon 8 Elite QRD | Snapdragon® 8 Elite Mobile | TFLITE | 0.442 ms | 0 - 24 MB | NPU | [Shufflenet-v2.tflite](https://huggingface.co/qualcomm/Shufflenet-v2/blob/main/Shufflenet-v2.tflite) |

| Shufflenet-v2 | float | Snapdragon 8 Elite QRD | Snapdragon® 8 Elite Mobile | QNN_DLC | 0.46 ms | 1 - 27 MB | NPU | [Shufflenet-v2.dlc](https://huggingface.co/qualcomm/Shufflenet-v2/blob/main/Shufflenet-v2.dlc) |

| Shufflenet-v2 | float | Snapdragon 8 Elite QRD | Snapdragon® 8 Elite Mobile | ONNX | 0.648 ms | 1 - 23 MB | NPU | [Shufflenet-v2.onnx.zip](https://huggingface.co/qualcomm/Shufflenet-v2/blob/main/Shufflenet-v2.onnx.zip) |

| Shufflenet-v2 | float | Snapdragon X Elite CRD | Snapdragon® X Elite | QNN_DLC | 0.83 ms | 16 - 16 MB | NPU | [Shufflenet-v2.dlc](https://huggingface.co/qualcomm/Shufflenet-v2/blob/main/Shufflenet-v2.dlc) |

| Shufflenet-v2 | float | Snapdragon X Elite CRD | Snapdragon® X Elite | ONNX | 1.036 ms | 3 - 3 MB | NPU | [Shufflenet-v2.onnx.zip](https://huggingface.co/qualcomm/Shufflenet-v2/blob/main/Shufflenet-v2.onnx.zip) |

| Shufflenet-v2 | w8a8 | QCS8275 (Proxy) | Qualcomm® QCS8275 (Proxy) | TFLITE | 0.781 ms | 0 - 16 MB | NPU | [Shufflenet-v2.tflite](https://huggingface.co/qualcomm/Shufflenet-v2/blob/main/Shufflenet-v2_w8a8.tflite) |

| Shufflenet-v2 | w8a8 | QCS8275 (Proxy) | Qualcomm® QCS8275 (Proxy) | QNN_DLC | 1.049 ms | 0 - 17 MB | NPU | [Shufflenet-v2.dlc](https://huggingface.co/qualcomm/Shufflenet-v2/blob/main/Shufflenet-v2_w8a8.dlc) |

| Shufflenet-v2 | w8a8 | QCS8450 (Proxy) | Qualcomm® QCS8450 (Proxy) | TFLITE | 0.359 ms | 0 - 33 MB | NPU | [Shufflenet-v2.tflite](https://huggingface.co/qualcomm/Shufflenet-v2/blob/main/Shufflenet-v2_w8a8.tflite) |

| Shufflenet-v2 | w8a8 | QCS8450 (Proxy) | Qualcomm® QCS8450 (Proxy) | QNN_DLC | 0.535 ms | 0 - 29 MB | NPU | [Shufflenet-v2.dlc](https://huggingface.co/qualcomm/Shufflenet-v2/blob/main/Shufflenet-v2_w8a8.dlc) |

| Shufflenet-v2 | w8a8 | QCS8550 (Proxy) | Qualcomm® QCS8550 (Proxy) | TFLITE | 0.316 ms | 0 - 11 MB | NPU | [Shufflenet-v2.tflite](https://huggingface.co/qualcomm/Shufflenet-v2/blob/main/Shufflenet-v2_w8a8.tflite) |

| Shufflenet-v2 | w8a8 | QCS8550 (Proxy) | Qualcomm® QCS8550 (Proxy) | QNN_DLC | 0.462 ms | 0 - 11 MB | NPU | [Shufflenet-v2.dlc](https://huggingface.co/qualcomm/Shufflenet-v2/blob/main/Shufflenet-v2_w8a8.dlc) |

| Shufflenet-v2 | w8a8 | QCS9075 (Proxy) | Qualcomm® QCS9075 (Proxy) | TFLITE | 0.512 ms | 0 - 16 MB | NPU | [Shufflenet-v2.tflite](https://huggingface.co/qualcomm/Shufflenet-v2/blob/main/Shufflenet-v2_w8a8.tflite) |

| Shufflenet-v2 | w8a8 | QCS9075 (Proxy) | Qualcomm® QCS9075 (Proxy) | QNN_DLC | 0.656 ms | 0 - 17 MB | NPU | [Shufflenet-v2.dlc](https://huggingface.co/qualcomm/Shufflenet-v2/blob/main/Shufflenet-v2_w8a8.dlc) |

| Shufflenet-v2 | w8a8 | RB3 Gen 2 (Proxy) | Qualcomm® QCS6490 (Proxy) | TFLITE | 0.626 ms | 0 - 21 MB | NPU | [Shufflenet-v2.tflite](https://huggingface.co/qualcomm/Shufflenet-v2/blob/main/Shufflenet-v2_w8a8.tflite) |

| Shufflenet-v2 | w8a8 | RB3 Gen 2 (Proxy) | Qualcomm® QCS6490 (Proxy) | QNN_DLC | 1.041 ms | 0 - 20 MB | NPU | [Shufflenet-v2.dlc](https://huggingface.co/qualcomm/Shufflenet-v2/blob/main/Shufflenet-v2_w8a8.dlc) |

| Shufflenet-v2 | w8a8 | RB5 (Proxy) | Qualcomm® QCS8250 (Proxy) | TFLITE | 11.599 ms | 0 - 16 MB | CPU | [Shufflenet-v2.tflite](https://huggingface.co/qualcomm/Shufflenet-v2/blob/main/Shufflenet-v2_w8a8.tflite) |

| Shufflenet-v2 | w8a8 | SA7255P ADP | Qualcomm® SA7255P | TFLITE | 0.781 ms | 0 - 16 MB | NPU | [Shufflenet-v2.tflite](https://huggingface.co/qualcomm/Shufflenet-v2/blob/main/Shufflenet-v2_w8a8.tflite) |

| Shufflenet-v2 | w8a8 | SA7255P ADP | Qualcomm® SA7255P | QNN_DLC | 1.049 ms | 0 - 17 MB | NPU | [Shufflenet-v2.dlc](https://huggingface.co/qualcomm/Shufflenet-v2/blob/main/Shufflenet-v2_w8a8.dlc) |

| Shufflenet-v2 | w8a8 | SA8255 (Proxy) | Qualcomm® SA8255P (Proxy) | TFLITE | 0.315 ms | 0 - 10 MB | NPU | [Shufflenet-v2.tflite](https://huggingface.co/qualcomm/Shufflenet-v2/blob/main/Shufflenet-v2_w8a8.tflite) |

| Shufflenet-v2 | w8a8 | SA8255 (Proxy) | Qualcomm® SA8255P (Proxy) | QNN_DLC | 0.469 ms | 0 - 9 MB | NPU | [Shufflenet-v2.dlc](https://huggingface.co/qualcomm/Shufflenet-v2/blob/main/Shufflenet-v2_w8a8.dlc) |

| Shufflenet-v2 | w8a8 | SA8295P ADP | Qualcomm® SA8295P | TFLITE | 0.622 ms | 0 - 27 MB | NPU | [Shufflenet-v2.tflite](https://huggingface.co/qualcomm/Shufflenet-v2/blob/main/Shufflenet-v2_w8a8.tflite) |

| Shufflenet-v2 | w8a8 | SA8295P ADP | Qualcomm® SA8295P | QNN_DLC | 0.789 ms | 0 - 27 MB | NPU | [Shufflenet-v2.dlc](https://huggingface.co/qualcomm/Shufflenet-v2/blob/main/Shufflenet-v2_w8a8.dlc) |

| Shufflenet-v2 | w8a8 | SA8650 (Proxy) | Qualcomm® SA8650P (Proxy) | TFLITE | 0.31 ms | 0 - 11 MB | NPU | [Shufflenet-v2.tflite](https://huggingface.co/qualcomm/Shufflenet-v2/blob/main/Shufflenet-v2_w8a8.tflite) |

| Shufflenet-v2 | w8a8 | SA8650 (Proxy) | Qualcomm® SA8650P (Proxy) | QNN_DLC | 0.457 ms | 0 - 10 MB | NPU | [Shufflenet-v2.dlc](https://huggingface.co/qualcomm/Shufflenet-v2/blob/main/Shufflenet-v2_w8a8.dlc) |

| Shufflenet-v2 | w8a8 | SA8775P ADP | Qualcomm® SA8775P | TFLITE | 0.512 ms | 0 - 16 MB | NPU | [Shufflenet-v2.tflite](https://huggingface.co/qualcomm/Shufflenet-v2/blob/main/Shufflenet-v2_w8a8.tflite) |

| Shufflenet-v2 | w8a8 | SA8775P ADP | Qualcomm® SA8775P | QNN_DLC | 0.656 ms | 0 - 17 MB | NPU | [Shufflenet-v2.dlc](https://huggingface.co/qualcomm/Shufflenet-v2/blob/main/Shufflenet-v2_w8a8.dlc) |

| Shufflenet-v2 | w8a8 | Samsung Galaxy S23 | Snapdragon® 8 Gen 2 Mobile | TFLITE | 0.314 ms | 0 - 11 MB | NPU | [Shufflenet-v2.tflite](https://huggingface.co/qualcomm/Shufflenet-v2/blob/main/Shufflenet-v2_w8a8.tflite) |

| Shufflenet-v2 | w8a8 | Samsung Galaxy S23 | Snapdragon® 8 Gen 2 Mobile | QNN_DLC | 0.471 ms | 0 - 9 MB | NPU | [Shufflenet-v2.dlc](https://huggingface.co/qualcomm/Shufflenet-v2/blob/main/Shufflenet-v2_w8a8.dlc) |

| Shufflenet-v2 | w8a8 | Samsung Galaxy S23 | Snapdragon® 8 Gen 2 Mobile | ONNX | 9.591 ms | 1 - 47 MB | NPU | [Shufflenet-v2.onnx.zip](https://huggingface.co/qualcomm/Shufflenet-v2/blob/main/Shufflenet-v2_w8a8.onnx.zip) |

| Shufflenet-v2 | w8a8 | Samsung Galaxy S24 | Snapdragon® 8 Gen 3 Mobile | TFLITE | 0.221 ms | 0 - 23 MB | NPU | [Shufflenet-v2.tflite](https://huggingface.co/qualcomm/Shufflenet-v2/blob/main/Shufflenet-v2_w8a8.tflite) |

| Shufflenet-v2 | w8a8 | Samsung Galaxy S24 | Snapdragon® 8 Gen 3 Mobile | QNN_DLC | 0.326 ms | 0 - 27 MB | NPU | [Shufflenet-v2.dlc](https://huggingface.co/qualcomm/Shufflenet-v2/blob/main/Shufflenet-v2_w8a8.dlc) |

| Shufflenet-v2 | w8a8 | Samsung Galaxy S24 | Snapdragon® 8 Gen 3 Mobile | ONNX | 8.019 ms | 1 - 270 MB | NPU | [Shufflenet-v2.onnx.zip](https://huggingface.co/qualcomm/Shufflenet-v2/blob/main/Shufflenet-v2_w8a8.onnx.zip) |

| Shufflenet-v2 | w8a8 | Snapdragon 8 Elite QRD | Snapdragon® 8 Elite Mobile | TFLITE | 0.244 ms | 0 - 20 MB | NPU | [Shufflenet-v2.tflite](https://huggingface.co/qualcomm/Shufflenet-v2/blob/main/Shufflenet-v2_w8a8.tflite) |

| Shufflenet-v2 | w8a8 | Snapdragon 8 Elite QRD | Snapdragon® 8 Elite Mobile | QNN_DLC | 0.31 ms | 0 - 24 MB | NPU | [Shufflenet-v2.dlc](https://huggingface.co/qualcomm/Shufflenet-v2/blob/main/Shufflenet-v2_w8a8.dlc) |

| Shufflenet-v2 | w8a8 | Snapdragon 8 Elite QRD | Snapdragon® 8 Elite Mobile | ONNX | 7.848 ms | 1 - 258 MB | NPU | [Shufflenet-v2.onnx.zip](https://huggingface.co/qualcomm/Shufflenet-v2/blob/main/Shufflenet-v2_w8a8.onnx.zip) |

| Shufflenet-v2 | w8a8 | Snapdragon X Elite CRD | Snapdragon® X Elite | QNN_DLC | 0.586 ms | 4 - 4 MB | NPU | [Shufflenet-v2.dlc](https://huggingface.co/qualcomm/Shufflenet-v2/blob/main/Shufflenet-v2_w8a8.dlc) |

| Shufflenet-v2 | w8a8 | Snapdragon X Elite CRD | Snapdragon® X Elite | ONNX | 9.607 ms | 6 - 6 MB | NPU | [Shufflenet-v2.onnx.zip](https://huggingface.co/qualcomm/Shufflenet-v2/blob/main/Shufflenet-v2_w8a8.onnx.zip) |

## Installation

Install the package via pip:

```bash

pip install qai-hub-models

```

## Configure Qualcomm® AI Hub to run this model on a cloud-hosted device

Sign-in to [Qualcomm® AI Hub](https://app.aihub.qualcomm.com/) with your

Qualcomm® ID. Once signed in navigate to `Account -> Settings -> API Token`.

With this API token, you can configure your client to run models on the cloud

hosted devices.

```bash

qai-hub configure --api_token API_TOKEN

```

Navigate to [docs](https://app.aihub.qualcomm.com/docs/) for more information.

## Demo off target

The package contains a simple end-to-end demo that downloads pre-trained

weights and runs this model on a sample input.

```bash

python -m qai_hub_models.models.shufflenet_v2.demo

```

The above demo runs a reference implementation of pre-processing, model

inference, and post processing.

**NOTE**: If you want running in a Jupyter Notebook or Google Colab like

environment, please add the following to your cell (instead of the above).

```

%run -m qai_hub_models.models.shufflenet_v2.demo

```

### Run model on a cloud-hosted device

In addition to the demo, you can also run the model on a cloud-hosted Qualcomm®

device. This script does the following:

* Performance check on-device on a cloud-hosted device

* Downloads compiled assets that can be deployed on-device for Android.

* Accuracy check between PyTorch and on-device outputs.

```bash

python -m qai_hub_models.models.shufflenet_v2.export

```

## How does this work?

This [export script](https://aihub.qualcomm.com/models/shufflenet_v2/qai_hub_models/models/Shufflenet-v2/export.py)

leverages [Qualcomm® AI Hub](https://aihub.qualcomm.com/) to optimize, validate, and deploy this model

on-device. Lets go through each step below in detail:

Step 1: **Compile model for on-device deployment**

To compile a PyTorch model for on-device deployment, we first trace the model

in memory using the `jit.trace` and then call the `submit_compile_job` API.

```python

import torch

import qai_hub as hub

from qai_hub_models.models.shufflenet_v2 import Model

# Load the model

torch_model = Model.from_pretrained()

# Device

device = hub.Device("Samsung Galaxy S24")

# Trace model

input_shape = torch_model.get_input_spec()

sample_inputs = torch_model.sample_inputs()

pt_model = torch.jit.trace(torch_model, [torch.tensor(data[0]) for _, data in sample_inputs.items()])

# Compile model on a specific device

compile_job = hub.submit_compile_job(

model=pt_model,

device=device,

input_specs=torch_model.get_input_spec(),

)

# Get target model to run on-device

target_model = compile_job.get_target_model()

```

Step 2: **Performance profiling on cloud-hosted device**

After compiling models from step 1. Models can be profiled model on-device using the

`target_model`. Note that this scripts runs the model on a device automatically

provisioned in the cloud. Once the job is submitted, you can navigate to a

provided job URL to view a variety of on-device performance metrics.

```python

profile_job = hub.submit_profile_job(

model=target_model,

device=device,

)

```

Step 3: **Verify on-device accuracy**

To verify the accuracy of the model on-device, you can run on-device inference

on sample input data on the same cloud hosted device.

```python

input_data = torch_model.sample_inputs()

inference_job = hub.submit_inference_job(

model=target_model,

device=device,

inputs=input_data,

)

on_device_output = inference_job.download_output_data()

```

With the output of the model, you can compute like PSNR, relative errors or

spot check the output with expected output.

**Note**: This on-device profiling and inference requires access to Qualcomm®

AI Hub. [Sign up for access](https://myaccount.qualcomm.com/signup).

## Run demo on a cloud-hosted device

You can also run the demo on-device.

```bash

python -m qai_hub_models.models.shufflenet_v2.demo --eval-mode on-device

```

**NOTE**: If you want running in a Jupyter Notebook or Google Colab like

environment, please add the following to your cell (instead of the above).

```

%run -m qai_hub_models.models.shufflenet_v2.demo -- --eval-mode on-device

```

## Deploying compiled model to Android

The models can be deployed using multiple runtimes:

- TensorFlow Lite (`.tflite` export): [This

tutorial](https://www.tensorflow.org/lite/android/quickstart) provides a

guide to deploy the .tflite model in an Android application.

- QNN (`.so` export ): This [sample

app](https://docs.qualcomm.com/bundle/publicresource/topics/80-63442-50/sample_app.html)

provides instructions on how to use the `.so` shared library in an Android application.

## View on Qualcomm® AI Hub

Get more details on Shufflenet-v2's performance across various devices [here](https://aihub.qualcomm.com/models/shufflenet_v2).

Explore all available models on [Qualcomm® AI Hub](https://aihub.qualcomm.com/)

## License

* The license for the original implementation of Shufflenet-v2 can be found

[here](https://github.com/pytorch/vision/blob/main/LICENSE).

* The license for the compiled assets for on-device deployment can be found [here](https://qaihub-public-assets.s3.us-west-2.amazonaws.com/qai-hub-models/Qualcomm+AI+Hub+Proprietary+License.pdf)

## References

* [ShuffleNet V2: Practical Guidelines for Efficient CNN Architecture Design](https://arxiv.org/abs/1807.11164)

* [Source Model Implementation](https://github.com/pytorch/vision/blob/main/torchvision/models/shufflenetv2.py)

## Community

* Join [our AI Hub Slack community](https://aihub.qualcomm.com/community/slack) to collaborate, post questions and learn more about on-device AI.

* For questions or feedback please [reach out to us](mailto:ai-hub-support@qti.qualcomm.com).

|

seraphimzzzz/572890

|

seraphimzzzz

| 2025-08-29T23:42:46Z | 0 | 0 | null |

[

"region:us"

] | null | 2025-08-29T23:42:40Z |

[View on Civ Archive](https://civarchive.com/models/589279?modelVersionId=657943)

|

tamewild/4b_v66_merged_e3

|

tamewild

| 2025-08-29T23:42:43Z | 0 | 0 |

transformers

|

[

"transformers",

"safetensors",

"qwen3",

"text-generation",

"conversational",

"arxiv:1910.09700",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2025-08-29T23:41:27Z |

---

library_name: transformers

tags: []

---

# Model Card for Model ID

<!-- Provide a quick summary of what the model is/does. -->

## Model Details

### Model Description

<!-- Provide a longer summary of what this model is. -->

This is the model card of a 🤗 transformers model that has been pushed on the Hub. This model card has been automatically generated.

- **Developed by:** [More Information Needed]

- **Funded by [optional]:** [More Information Needed]

- **Shared by [optional]:** [More Information Needed]

- **Model type:** [More Information Needed]

- **Language(s) (NLP):** [More Information Needed]

- **License:** [More Information Needed]

- **Finetuned from model [optional]:** [More Information Needed]

### Model Sources [optional]

<!-- Provide the basic links for the model. -->

- **Repository:** [More Information Needed]

- **Paper [optional]:** [More Information Needed]

- **Demo [optional]:** [More Information Needed]

## Uses

<!-- Address questions around how the model is intended to be used, including the foreseeable users of the model and those affected by the model. -->

### Direct Use

<!-- This section is for the model use without fine-tuning or plugging into a larger ecosystem/app. -->

[More Information Needed]

### Downstream Use [optional]

<!-- This section is for the model use when fine-tuned for a task, or when plugged into a larger ecosystem/app -->

[More Information Needed]

### Out-of-Scope Use

<!-- This section addresses misuse, malicious use, and uses that the model will not work well for. -->

[More Information Needed]

## Bias, Risks, and Limitations

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

[More Information Needed]

### Recommendations

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

Users (both direct and downstream) should be made aware of the risks, biases and limitations of the model. More information needed for further recommendations.

## How to Get Started with the Model

Use the code below to get started with the model.

[More Information Needed]

## Training Details

### Training Data

<!-- This should link to a Dataset Card, perhaps with a short stub of information on what the training data is all about as well as documentation related to data pre-processing or additional filtering. -->

[More Information Needed]

### Training Procedure

<!-- This relates heavily to the Technical Specifications. Content here should link to that section when it is relevant to the training procedure. -->

#### Preprocessing [optional]

[More Information Needed]

#### Training Hyperparameters

- **Training regime:** [More Information Needed] <!--fp32, fp16 mixed precision, bf16 mixed precision, bf16 non-mixed precision, fp16 non-mixed precision, fp8 mixed precision -->

#### Speeds, Sizes, Times [optional]

<!-- This section provides information about throughput, start/end time, checkpoint size if relevant, etc. -->

[More Information Needed]

## Evaluation

<!-- This section describes the evaluation protocols and provides the results. -->

### Testing Data, Factors & Metrics

#### Testing Data

<!-- This should link to a Dataset Card if possible. -->

[More Information Needed]

#### Factors

<!-- These are the things the evaluation is disaggregating by, e.g., subpopulations or domains. -->

[More Information Needed]

#### Metrics

<!-- These are the evaluation metrics being used, ideally with a description of why. -->

[More Information Needed]

### Results

[More Information Needed]

#### Summary

## Model Examination [optional]

<!-- Relevant interpretability work for the model goes here -->

[More Information Needed]

## Environmental Impact

<!-- Total emissions (in grams of CO2eq) and additional considerations, such as electricity usage, go here. Edit the suggested text below accordingly -->

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).

- **Hardware Type:** [More Information Needed]

- **Hours used:** [More Information Needed]

- **Cloud Provider:** [More Information Needed]

- **Compute Region:** [More Information Needed]

- **Carbon Emitted:** [More Information Needed]

## Technical Specifications [optional]

### Model Architecture and Objective

[More Information Needed]

### Compute Infrastructure

[More Information Needed]

#### Hardware

[More Information Needed]

#### Software

[More Information Needed]

## Citation [optional]

<!-- If there is a paper or blog post introducing the model, the APA and Bibtex information for that should go in this section. -->

**BibTeX:**

[More Information Needed]

**APA:**

[More Information Needed]

## Glossary [optional]

<!-- If relevant, include terms and calculations in this section that can help readers understand the model or model card. -->

[More Information Needed]

## More Information [optional]

[More Information Needed]

## Model Card Authors [optional]

[More Information Needed]

## Model Card Contact

[More Information Needed]

|

seraphimzzzz/606810

|

seraphimzzzz

| 2025-08-29T23:42:17Z | 0 | 0 | null |

[

"region:us"

] | null | 2025-08-29T23:42:12Z |

[View on Civ Archive](https://civarchive.com/models/618597?modelVersionId=691527)

|

seraphimzzzz/568401

|

seraphimzzzz

| 2025-08-29T23:41:57Z | 0 | 0 | null |

[

"region:us"

] | null | 2025-08-29T23:41:57Z |

[View on Civ Archive](https://civarchive.com/models/584094?modelVersionId=651672)

|

sekirr/blockassist-bc-masked_tenacious_whale_1756510869

|

sekirr

| 2025-08-29T23:41:49Z | 0 | 0 | null |

[

"gensyn",

"blockassist",

"gensyn-blockassist",

"minecraft",

"masked tenacious whale",

"arxiv:2504.07091",

"region:us"

] | null | 2025-08-29T23:41:45Z |

---

tags:

- gensyn

- blockassist

- gensyn-blockassist

- minecraft

- masked tenacious whale

---

# Gensyn BlockAssist

Gensyn's BlockAssist is a distributed extension of the paper [AssistanceZero: Scalably Solving Assistance Games](https://arxiv.org/abs/2504.07091).

|

qualcomm/SalsaNext

|

qualcomm

| 2025-08-29T23:41:47Z | 23 | 0 |

pytorch

|

[

"pytorch",

"tflite",

"android",

"image-segmentation",

"license:other",

"region:us"

] |

image-segmentation

| 2025-07-02T21:12:51Z |

---

library_name: pytorch

license: other

tags:

- android

pipeline_tag: image-segmentation

---

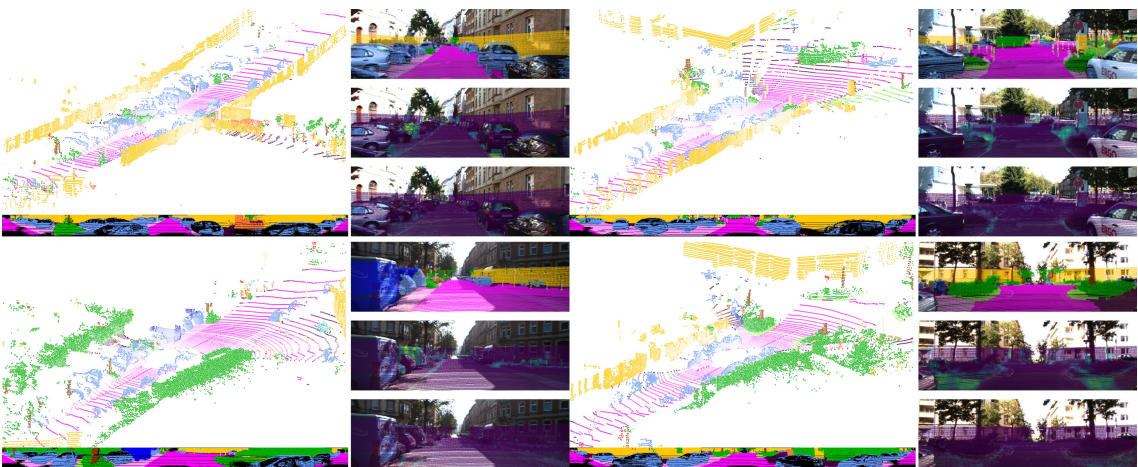

# SalsaNext: Optimized for Mobile Deployment

## Semantic segmentation model optimized for LiDAR point cloud data

SalsaNext is a LiDAR-based semantic segmentation model designed for efficient and accurate

This repository provides scripts to run SalsaNext on Qualcomm® devices.

More details on model performance across various devices, can be found

[here](https://aihub.qualcomm.com/models/salsanext).

### Model Details

- **Model Type:** Model_use_case.semantic_segmentation

- **Model Stats:**

- Model checkpoint: SalsaNext

- Input resolution: 1x5x64x2048

- Number of parameters: 6.71M

- Model size (float): 25.7 MB

| Model | Precision | Device | Chipset | Target Runtime | Inference Time (ms) | Peak Memory Range (MB) | Primary Compute Unit | Target Model

|---|---|---|---|---|---|---|---|---|

| SalsaNext | float | QCS8275 (Proxy) | Qualcomm® QCS8275 (Proxy) | TFLITE | 121.917 ms | 10 - 51 MB | NPU | [SalsaNext.tflite](https://huggingface.co/qualcomm/SalsaNext/blob/main/SalsaNext.tflite) |

| SalsaNext | float | QCS8275 (Proxy) | Qualcomm® QCS8275 (Proxy) | QNN_DLC | 118.129 ms | 0 - 43 MB | NPU | [SalsaNext.dlc](https://huggingface.co/qualcomm/SalsaNext/blob/main/SalsaNext.dlc) |

| SalsaNext | float | QCS8450 (Proxy) | Qualcomm® QCS8450 (Proxy) | TFLITE | 45.983 ms | 10 - 68 MB | NPU | [SalsaNext.tflite](https://huggingface.co/qualcomm/SalsaNext/blob/main/SalsaNext.tflite) |

| SalsaNext | float | QCS8450 (Proxy) | Qualcomm® QCS8450 (Proxy) | QNN_DLC | 57.071 ms | 3 - 65 MB | NPU | [SalsaNext.dlc](https://huggingface.co/qualcomm/SalsaNext/blob/main/SalsaNext.dlc) |

| SalsaNext | float | QCS8550 (Proxy) | Qualcomm® QCS8550 (Proxy) | TFLITE | 32.787 ms | 10 - 21 MB | NPU | [SalsaNext.tflite](https://huggingface.co/qualcomm/SalsaNext/blob/main/SalsaNext.tflite) |

| SalsaNext | float | QCS8550 (Proxy) | Qualcomm® QCS8550 (Proxy) | QNN_DLC | 33.11 ms | 0 - 31 MB | NPU | [SalsaNext.dlc](https://huggingface.co/qualcomm/SalsaNext/blob/main/SalsaNext.dlc) |

| SalsaNext | float | QCS9075 (Proxy) | Qualcomm® QCS9075 (Proxy) | TFLITE | 39.657 ms | 10 - 51 MB | NPU | [SalsaNext.tflite](https://huggingface.co/qualcomm/SalsaNext/blob/main/SalsaNext.tflite) |

| SalsaNext | float | QCS9075 (Proxy) | Qualcomm® QCS9075 (Proxy) | QNN_DLC | 39.21 ms | 0 - 43 MB | NPU | [SalsaNext.dlc](https://huggingface.co/qualcomm/SalsaNext/blob/main/SalsaNext.dlc) |

| SalsaNext | float | Samsung Galaxy S23 | Snapdragon® 8 Gen 2 Mobile | TFLITE | 32.983 ms | 10 - 24 MB | NPU | [SalsaNext.tflite](https://huggingface.co/qualcomm/SalsaNext/blob/main/SalsaNext.tflite) |

| SalsaNext | float | Samsung Galaxy S23 | Snapdragon® 8 Gen 2 Mobile | QNN_DLC | 33.284 ms | 3 - 16 MB | NPU | [SalsaNext.dlc](https://huggingface.co/qualcomm/SalsaNext/blob/main/SalsaNext.dlc) |

| SalsaNext | float | Samsung Galaxy S23 | Snapdragon® 8 Gen 2 Mobile | ONNX | 32.55 ms | 10 - 51 MB | NPU | [SalsaNext.onnx.zip](https://huggingface.co/qualcomm/SalsaNext/blob/main/SalsaNext.onnx.zip) |

| SalsaNext | float | Samsung Galaxy S24 | Snapdragon® 8 Gen 3 Mobile | TFLITE | 23.376 ms | 9 - 58 MB | NPU | [SalsaNext.tflite](https://huggingface.co/qualcomm/SalsaNext/blob/main/SalsaNext.tflite) |

| SalsaNext | float | Samsung Galaxy S24 | Snapdragon® 8 Gen 3 Mobile | QNN_DLC | 25.031 ms | 3 - 52 MB | NPU | [SalsaNext.dlc](https://huggingface.co/qualcomm/SalsaNext/blob/main/SalsaNext.dlc) |

| SalsaNext | float | Samsung Galaxy S24 | Snapdragon® 8 Gen 3 Mobile | ONNX | 23.671 ms | 25 - 69 MB | NPU | [SalsaNext.onnx.zip](https://huggingface.co/qualcomm/SalsaNext/blob/main/SalsaNext.onnx.zip) |

| SalsaNext | float | Snapdragon 8 Elite QRD | Snapdragon® 8 Elite Mobile | TFLITE | 22.018 ms | 10 - 54 MB | NPU | [SalsaNext.tflite](https://huggingface.co/qualcomm/SalsaNext/blob/main/SalsaNext.tflite) |

| SalsaNext | float | Snapdragon 8 Elite QRD | Snapdragon® 8 Elite Mobile | QNN_DLC | 23.464 ms | 3 - 50 MB | NPU | [SalsaNext.dlc](https://huggingface.co/qualcomm/SalsaNext/blob/main/SalsaNext.dlc) |

| SalsaNext | float | Snapdragon 8 Elite QRD | Snapdragon® 8 Elite Mobile | ONNX | 21.652 ms | 25 - 73 MB | NPU | [SalsaNext.onnx.zip](https://huggingface.co/qualcomm/SalsaNext/blob/main/SalsaNext.onnx.zip) |

| SalsaNext | float | Snapdragon X Elite CRD | Snapdragon® X Elite | QNN_DLC | 32.211 ms | 45 - 45 MB | NPU | [SalsaNext.dlc](https://huggingface.co/qualcomm/SalsaNext/blob/main/SalsaNext.dlc) |

| SalsaNext | float | Snapdragon X Elite CRD | Snapdragon® X Elite | ONNX | 32.259 ms | 33 - 33 MB | NPU | [SalsaNext.onnx.zip](https://huggingface.co/qualcomm/SalsaNext/blob/main/SalsaNext.onnx.zip) |

| SalsaNext | w8a16 | QCS8275 (Proxy) | Qualcomm® QCS8275 (Proxy) | QNN_DLC | 71.498 ms | 1 - 74 MB | NPU | [SalsaNext.dlc](https://huggingface.co/qualcomm/SalsaNext/blob/main/SalsaNext_w8a16.dlc) |

| SalsaNext | w8a16 | QCS8450 (Proxy) | Qualcomm® QCS8450 (Proxy) | QNN_DLC | 72.924 ms | 1 - 126 MB | NPU | [SalsaNext.dlc](https://huggingface.co/qualcomm/SalsaNext/blob/main/SalsaNext_w8a16.dlc) |

| SalsaNext | w8a16 | QCS8550 (Proxy) | Qualcomm® QCS8550 (Proxy) | QNN_DLC | 41.639 ms | 0 - 31 MB | NPU | [SalsaNext.dlc](https://huggingface.co/qualcomm/SalsaNext/blob/main/SalsaNext_w8a16.dlc) |

| SalsaNext | w8a16 | QCS9075 (Proxy) | Qualcomm® QCS9075 (Proxy) | QNN_DLC | 40.223 ms | 1 - 76 MB | NPU | [SalsaNext.dlc](https://huggingface.co/qualcomm/SalsaNext/blob/main/SalsaNext_w8a16.dlc) |

| SalsaNext | w8a16 | Samsung Galaxy S23 | Snapdragon® 8 Gen 2 Mobile | QNN_DLC | 41.372 ms | 0 - 32 MB | NPU | [SalsaNext.dlc](https://huggingface.co/qualcomm/SalsaNext/blob/main/SalsaNext_w8a16.dlc) |

| SalsaNext | w8a16 | Samsung Galaxy S24 | Snapdragon® 8 Gen 3 Mobile | QNN_DLC | 28.139 ms | 1 - 80 MB | NPU | [SalsaNext.dlc](https://huggingface.co/qualcomm/SalsaNext/blob/main/SalsaNext_w8a16.dlc) |

| SalsaNext | w8a16 | Samsung Galaxy S24 | Snapdragon® 8 Gen 3 Mobile | ONNX | 374.08 ms | 318 - 686 MB | NPU | [SalsaNext.onnx.zip](https://huggingface.co/qualcomm/SalsaNext/blob/main/SalsaNext_w8a16.onnx.zip) |

| SalsaNext | w8a16 | Snapdragon 8 Elite QRD | Snapdragon® 8 Elite Mobile | QNN_DLC | 26.916 ms | 1 - 81 MB | NPU | [SalsaNext.dlc](https://huggingface.co/qualcomm/SalsaNext/blob/main/SalsaNext_w8a16.dlc) |

| SalsaNext | w8a16 | Snapdragon 8 Elite QRD | Snapdragon® 8 Elite Mobile | ONNX | 336.785 ms | 323 - 576 MB | NPU | [SalsaNext.onnx.zip](https://huggingface.co/qualcomm/SalsaNext/blob/main/SalsaNext_w8a16.onnx.zip) |

| SalsaNext | w8a16 | Snapdragon X Elite CRD | Snapdragon® X Elite | QNN_DLC | 40.726 ms | 39 - 39 MB | NPU | [SalsaNext.dlc](https://huggingface.co/qualcomm/SalsaNext/blob/main/SalsaNext_w8a16.dlc) |

| SalsaNext | w8a16 | Snapdragon X Elite CRD | Snapdragon® X Elite | ONNX | 385.857 ms | 529 - 529 MB | NPU | [SalsaNext.onnx.zip](https://huggingface.co/qualcomm/SalsaNext/blob/main/SalsaNext_w8a16.onnx.zip) |

## Installation

Install the package via pip:

```bash

pip install qai-hub-models

```

## Configure Qualcomm® AI Hub to run this model on a cloud-hosted device

Sign-in to [Qualcomm® AI Hub](https://app.aihub.qualcomm.com/) with your

Qualcomm® ID. Once signed in navigate to `Account -> Settings -> API Token`.

With this API token, you can configure your client to run models on the cloud

hosted devices.

```bash

qai-hub configure --api_token API_TOKEN

```

Navigate to [docs](https://app.aihub.qualcomm.com/docs/) for more information.

## Demo off target

The package contains a simple end-to-end demo that downloads pre-trained

weights and runs this model on a sample input.

```bash

python -m qai_hub_models.models.salsanext.demo

```

The above demo runs a reference implementation of pre-processing, model

inference, and post processing.

**NOTE**: If you want running in a Jupyter Notebook or Google Colab like

environment, please add the following to your cell (instead of the above).

```

%run -m qai_hub_models.models.salsanext.demo

```

### Run model on a cloud-hosted device

In addition to the demo, you can also run the model on a cloud-hosted Qualcomm®

device. This script does the following:

* Performance check on-device on a cloud-hosted device

* Downloads compiled assets that can be deployed on-device for Android.

* Accuracy check between PyTorch and on-device outputs.

```bash

python -m qai_hub_models.models.salsanext.export

```

## How does this work?

This [export script](https://aihub.qualcomm.com/models/salsanext/qai_hub_models/models/SalsaNext/export.py)

leverages [Qualcomm® AI Hub](https://aihub.qualcomm.com/) to optimize, validate, and deploy this model

on-device. Lets go through each step below in detail:

Step 1: **Compile model for on-device deployment**

To compile a PyTorch model for on-device deployment, we first trace the model

in memory using the `jit.trace` and then call the `submit_compile_job` API.

```python

import torch

import qai_hub as hub

from qai_hub_models.models.salsanext import Model

# Load the model

torch_model = Model.from_pretrained()

# Device

device = hub.Device("Samsung Galaxy S24")

# Trace model

input_shape = torch_model.get_input_spec()

sample_inputs = torch_model.sample_inputs()

pt_model = torch.jit.trace(torch_model, [torch.tensor(data[0]) for _, data in sample_inputs.items()])

# Compile model on a specific device

compile_job = hub.submit_compile_job(

model=pt_model,

device=device,

input_specs=torch_model.get_input_spec(),

)

# Get target model to run on-device

target_model = compile_job.get_target_model()

```

Step 2: **Performance profiling on cloud-hosted device**

After compiling models from step 1. Models can be profiled model on-device using the

`target_model`. Note that this scripts runs the model on a device automatically

provisioned in the cloud. Once the job is submitted, you can navigate to a

provided job URL to view a variety of on-device performance metrics.

```python

profile_job = hub.submit_profile_job(

model=target_model,

device=device,

)

```

Step 3: **Verify on-device accuracy**

To verify the accuracy of the model on-device, you can run on-device inference

on sample input data on the same cloud hosted device.

```python

input_data = torch_model.sample_inputs()

inference_job = hub.submit_inference_job(

model=target_model,

device=device,

inputs=input_data,

)

on_device_output = inference_job.download_output_data()

```

With the output of the model, you can compute like PSNR, relative errors or

spot check the output with expected output.

**Note**: This on-device profiling and inference requires access to Qualcomm®

AI Hub. [Sign up for access](https://myaccount.qualcomm.com/signup).

## Deploying compiled model to Android

The models can be deployed using multiple runtimes:

- TensorFlow Lite (`.tflite` export): [This

tutorial](https://www.tensorflow.org/lite/android/quickstart) provides a

guide to deploy the .tflite model in an Android application.

- QNN (`.so` export ): This [sample

app](https://docs.qualcomm.com/bundle/publicresource/topics/80-63442-50/sample_app.html)

provides instructions on how to use the `.so` shared library in an Android application.

## View on Qualcomm® AI Hub

Get more details on SalsaNext's performance across various devices [here](https://aihub.qualcomm.com/models/salsanext).

Explore all available models on [Qualcomm® AI Hub](https://aihub.qualcomm.com/)

## License