modelId (string, lengths 5 to 139) | author (string, lengths 2 to 42) | last_modified (timestamp[us, tz=UTC], 2020-02-15 11:33:14 to 2025-09-08 06:28:05) | downloads (int64, 0 to 223M) | likes (int64, 0 to 11.7k) | library_name (string, 546 classes) | tags (list, lengths 1 to 4.05k) | pipeline_tag (string, 55 classes) | createdAt (timestamp[us, tz=UTC], 2022-03-02 23:29:04 to 2025-09-08 06:27:40) | card (string, lengths 11 to 1.01M) |

|---|---|---|---|---|---|---|---|---|---|

hhffxx/distilbert-base-uncased-distilled-clinc

|

hhffxx

| 2022-09-08T01:32:06Z | 107 | 0 |

transformers

|

[

"transformers",

"pytorch",

"distilbert",

"text-classification",

"generated_from_trainer",

"dataset:clinc_oos",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-09-08T00:58:19Z |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- clinc_oos

metrics:

- accuracy

model-index:
- name: distilbert-base-uncased-distilled-clinc
  results:
  - task:
      name: Text Classification
      type: text-classification
    dataset:
      name: clinc_oos
      type: clinc_oos
      config: plus
      split: train
      args: plus
    metrics:
    - name: Accuracy
      type: accuracy
      value: 0.9503225806451613

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# distilbert-base-uncased-distilled-clinc

This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the clinc_oos dataset.

It achieves the following results on the evaluation set:

- Loss: 0.2656

- Accuracy: 0.9503

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 12

- eval_batch_size: 12

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 9

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |

|:-------------:|:-----:|:-----:|:---------------:|:--------:|

| 3.1212 | 1.0 | 1271 | 1.2698 | 0.8558 |

| 0.6441 | 2.0 | 2542 | 0.3528 | 0.9326 |

| 0.149 | 3.0 | 3813 | 0.2512 | 0.9494 |

| 0.0647 | 4.0 | 5084 | 0.2510 | 0.95 |

| 0.0406 | 5.0 | 6355 | 0.2575 | 0.9510 |

| 0.0318 | 6.0 | 7626 | 0.2592 | 0.9494 |

| 0.026 | 7.0 | 8897 | 0.2629 | 0.9503 |

| 0.023 | 8.0 | 10168 | 0.2682 | 0.95 |

| 0.0207 | 9.0 | 11439 | 0.2656 | 0.9503 |
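The step counts in the table follow from the batch size and the number of epochs. The sketch below reproduces that arithmetic together with a linear learning-rate decay. It assumes a single device, no gradient accumulation, no warmup, and a training split of 15,250 examples; none of these is stated in the card, and `steps_per_epoch` and `linear_lr` are illustrative names rather than library functions.

```python
import math

# Values taken from the hyperparameter list in this card.
TRAIN_BATCH_SIZE = 12
NUM_EPOCHS = 9
LEARNING_RATE = 2e-05

# Assumption (not stated in the card): size of the training split.
ASSUMED_TRAIN_EXAMPLES = 15_250


def steps_per_epoch(num_examples: int, batch_size: int) -> int:
    """Optimizer steps per epoch on one device without gradient accumulation."""
    return math.ceil(num_examples / batch_size)


def linear_lr(step: int, total_steps: int, base_lr: float) -> float:
    """Linear decay from base_lr to 0 over total_steps, assuming no warmup."""
    remaining = max(0.0, 1.0 - step / total_steps)
    return base_lr * remaining


per_epoch = steps_per_epoch(ASSUMED_TRAIN_EXAMPLES, TRAIN_BATCH_SIZE)
total_steps = per_epoch * NUM_EPOCHS
print(per_epoch, total_steps)  # compare with the Step column of the table
print(linear_lr(total_steps // 2, total_steps, LEARNING_RATE))
```

Under these assumptions the per-epoch and total step counts match the first and last rows of the Step column (1271 and 11439).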

### Framework versions

- Transformers 4.21.3

- Pytorch 1.12.1

- Datasets 2.4.0

- Tokenizers 0.12.1

|

sd-concepts-library/arcane-style-jv

|

sd-concepts-library

| 2022-09-08T01:08:33Z | 0 | 45 | null |

[

"license:mit",

"region:us"

] | null | 2022-09-08T01:08:28Z |

---

license: mit

---

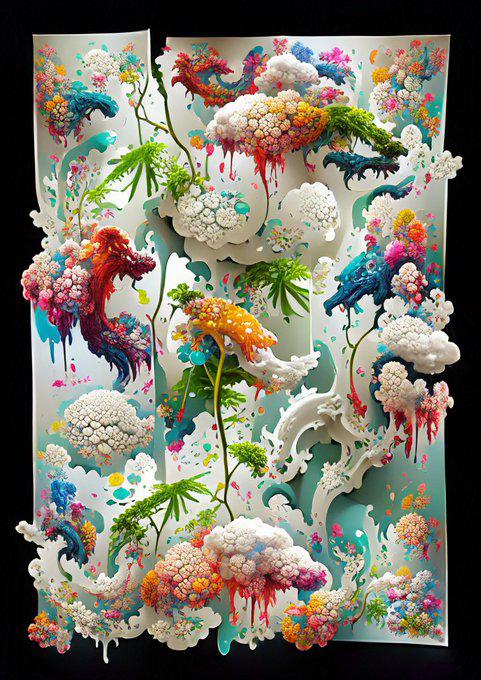

### arcane style jv on Stable Diffusion

This is the `<arcane-style-jv>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb).

Here is the new concept you will be able to use as a `style`:

|

sd-concepts-library/vkuoo1

|

sd-concepts-library

| 2022-09-08T00:04:38Z | 0 | 24 | null |

[

"license:mit",

"region:us"

] | null | 2022-09-08T00:04:32Z |

---

license: mit

---

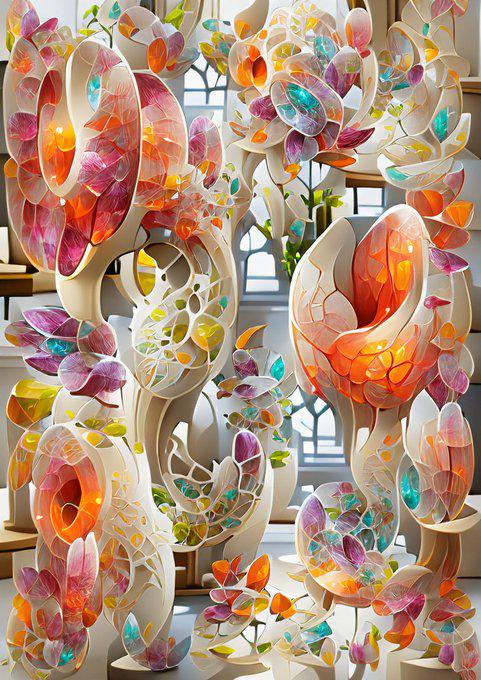

### Vkuoo1 on Stable Diffusion

This is the `<style-vkuoo1>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb).

Here is the new concept you will be able to use as a `style`:

|

sd-concepts-library/2814-roth

|

sd-concepts-library

| 2022-09-07T23:39:06Z | 0 | 0 | null |

[

"license:mit",

"region:us"

] | null | 2022-09-07T23:39:00Z |

---

license: mit

---

### 2814 Roth on Stable Diffusion

This is the `<2814Roth>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb).

Here is the new concept you will be able to use as a `style`:

|

slarionne/q-FrozenLake-v1-4x4-noSlippery_2

|

slarionne

| 2022-09-07T23:06:29Z | 0 | 0 | null |

[

"FrozenLake-v1-4x4-no_slippery",

"q-learning",

"reinforcement-learning",

"custom-implementation",

"model-index",

"region:us"

] |

reinforcement-learning

| 2022-09-07T23:06:21Z |

---

tags:

- FrozenLake-v1-4x4-no_slippery

- q-learning

- reinforcement-learning

- custom-implementation

model-index:
- name: q-FrozenLake-v1-4x4-noSlippery_2
  results:
  - task:
      type: reinforcement-learning
      name: reinforcement-learning
    dataset:
      name: FrozenLake-v1-4x4-no_slippery
      type: FrozenLake-v1-4x4-no_slippery
    metrics:
    - type: mean_reward
      value: 1.00 +/- 0.00
      name: mean_reward
      verified: false

---

# **Q-Learning** Agent playing **FrozenLake-v1**

This is a trained model of a **Q-Learning** agent playing **FrozenLake-v1**.

## Usage

```python

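import gym

# `load_from_hub` and `evaluate_agent` are helper functions that this card does not define; they must already exist in your session.
model = load_from_hub(repo_id="slarionne/q-FrozenLake-v1-4x4-noSlippery_2", filename="q-learning.pkl")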

# Don't forget to check if you need to add additional attributes (is_slippery=False etc)

env = gym.make(model["env_id"])

evaluate_agent(env, model["max_steps"], model["n_eval_episodes"], model["qtable"], model["eval_seed"])

```
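The card does not show what `evaluate_agent` does. A minimal sketch of a greedy Q-table evaluation is given below, assuming the helper runs a fixed number of episodes, always picks the highest-valued action, and reports the mean and standard deviation of the episode rewards. `ToyChainEnv` is a hypothetical stand-in for a Gym environment (it mimics the older four-value `step` return), used only so the example runs without extra dependencies.

```python
import statistics


class ToyChainEnv:
    """Hypothetical stand-in for a Gym environment: walk right from state 0 to the goal."""

    def __init__(self, n_states=4):
        self.n_states = n_states
        self.state = 0

    def reset(self, seed=None):
        self.state = 0
        return self.state

    def step(self, action):
        # Action 1 moves right, action 0 moves left (clamped at state 0).
        if action == 1:
            self.state = min(self.state + 1, self.n_states - 1)
        else:
            self.state = max(self.state - 1, 0)
        done = self.state == self.n_states - 1
        reward = 1.0 if done else 0.0
        return self.state, reward, done, {}


def evaluate_agent(env, max_steps, n_eval_episodes, qtable, seed):
    """Run greedy episodes and return (mean_reward, std_reward)."""
    episode_rewards = []
    for episode in range(n_eval_episodes):
        state = env.reset(seed=seed[episode]) if seed else env.reset()
        total = 0.0
        for _ in range(max_steps):
            # Greedy policy: index of the largest Q-value for the current state.
            action = max(range(len(qtable[state])), key=lambda a: qtable[state][a])
            state, reward, done, _info = env.step(action)
            total += reward
            if done:
                break
        episode_rewards.append(total)
    return statistics.mean(episode_rewards), statistics.pstdev(episode_rewards)


# A hand-written Q-table that always prefers "right" (action 1).
qtable = [[0.0, 1.0] for _ in range(4)]
mean_reward, std_reward = evaluate_agent(
    ToyChainEnv(), max_steps=10, n_eval_episodes=5, qtable=qtable, seed=[]
)
print(f"mean_reward={mean_reward:.2f} +/- {std_reward:.2f}")
```

In a deterministic environment with a Q-table that always reaches the goal, every episode earns the same reward, so the standard deviation is zero, as in the `1.00 +/- 0.00` figure reported in this card's metadata.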

|

sd-concepts-library/covid-19-rapid-test

|

sd-concepts-library

| 2022-09-07T22:13:27Z | 0 | 0 | null |

[

"license:mit",

"region:us"

] | null | 2022-09-07T22:13:20Z |

---

license: mit

---

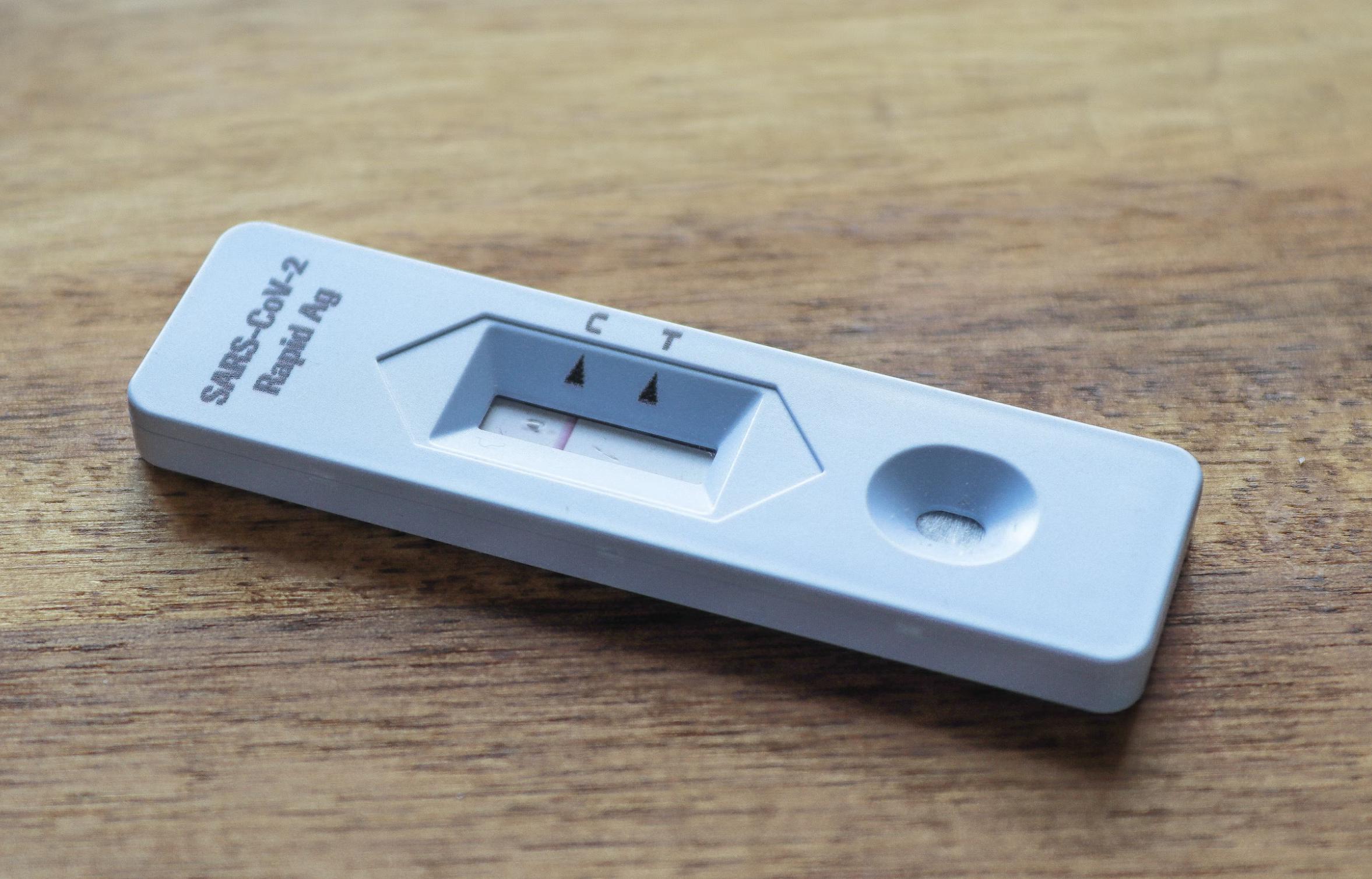

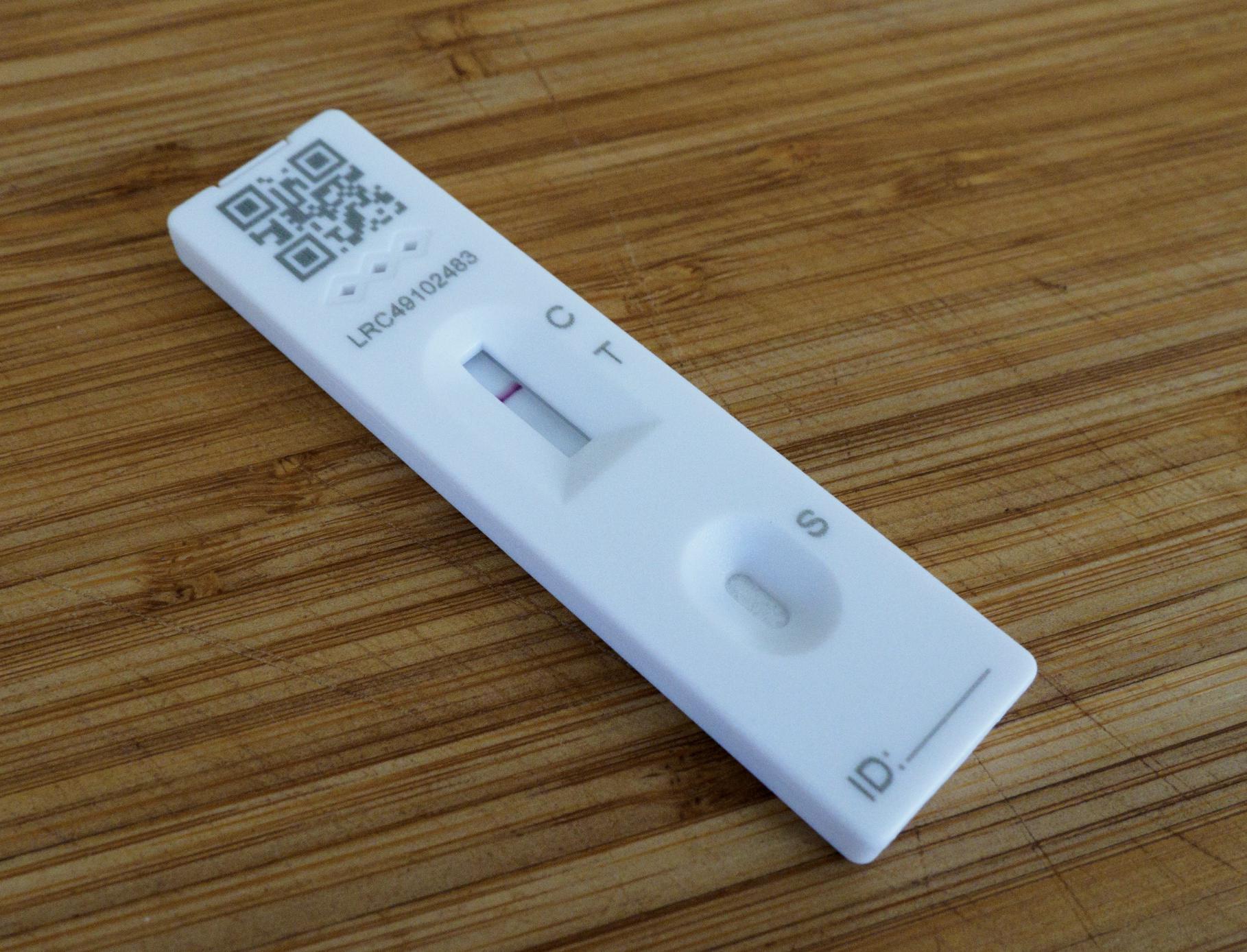

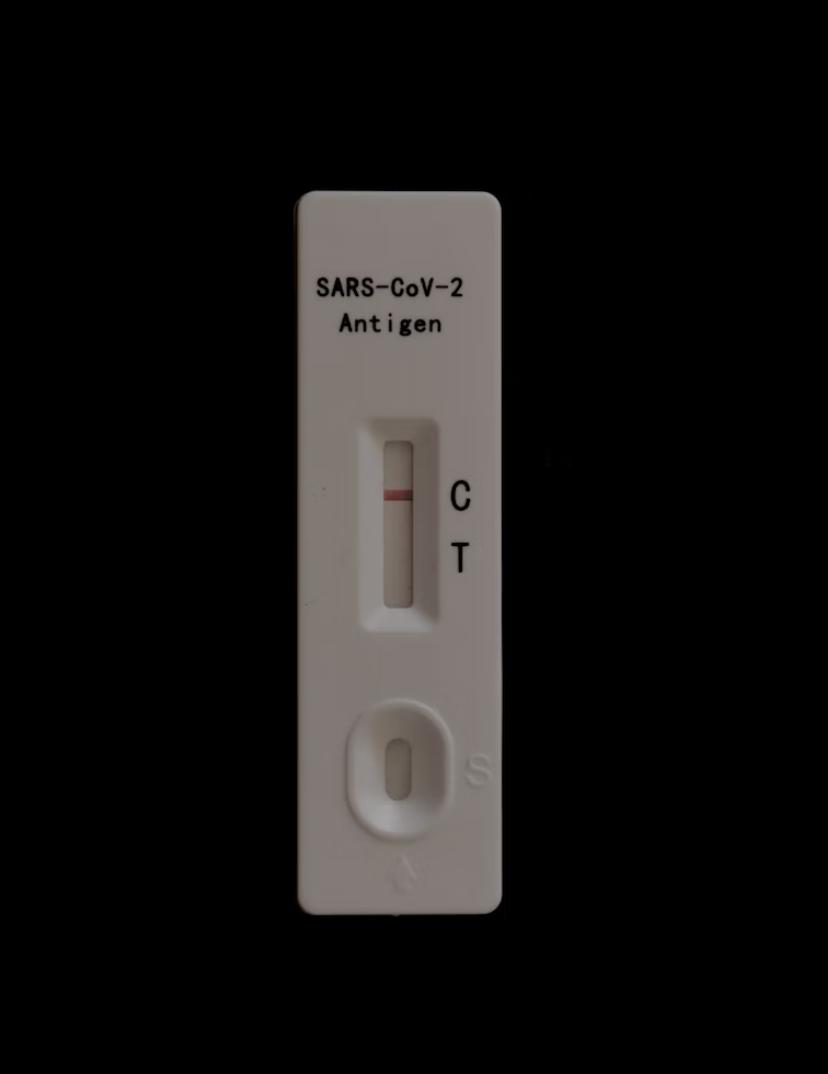

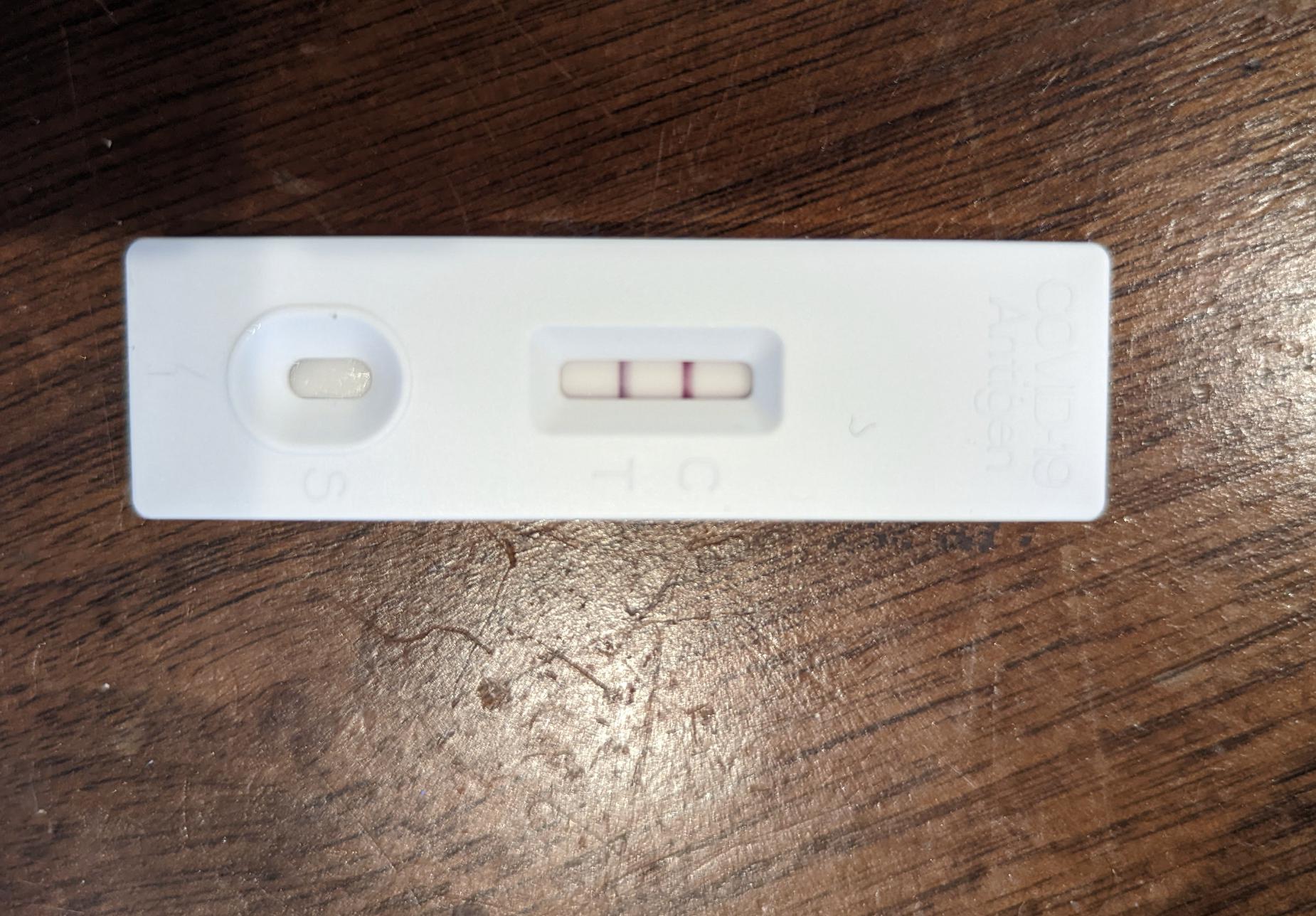

### covid-19-rapid-test on Stable Diffusion

This is the `<covid-test>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb).

Here is the new concept you will be able to use as an `object`:

|

slarionne/q-Taxi-v3

|

slarionne

| 2022-09-07T22:10:05Z | 0 | 0 | null |

[

"Taxi-v3",

"q-learning",

"reinforcement-learning",

"custom-implementation",

"model-index",

"region:us"

] |

reinforcement-learning

| 2022-09-07T22:10:00Z |

---

tags:

- Taxi-v3

- q-learning

- reinforcement-learning

- custom-implementation

model-index:
- name: q-Taxi-v3
  results:
  - task:
      type: reinforcement-learning
      name: reinforcement-learning
    dataset:
      name: Taxi-v3
      type: Taxi-v3
    metrics:
    - type: mean_reward
      value: 7.54 +/- 2.73
      name: mean_reward
      verified: false

---

# **Q-Learning** Agent playing **Taxi-v3**

This is a trained model of a **Q-Learning** agent playing **Taxi-v3**.

## Usage

```python

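import gym

# `load_from_hub` and `evaluate_agent` are helper functions that this card does not define; they must already exist in your session.
model = load_from_hub(repo_id="slarionne/q-Taxi-v3", filename="q-learning.pkl")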

# Don't forget to check if you need to add additional attributes (is_slippery=False etc)

env = gym.make(model["env_id"])

evaluate_agent(env, model["max_steps"], model["n_eval_episodes"], model["qtable"], model["eval_seed"])

```
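The usage snippet reads five keys from the loaded object, which suggests that `q-learning.pkl` is a pickled dictionary. The sketch below illustrates that layout with a local round trip. The values of `max_steps`, `n_eval_episodes`, and `eval_seed` are hypothetical, and `load_pickled_model` is an illustrative name, not the card's `load_from_hub`.

```python
import os
import pickle
import tempfile

# Hypothetical contents; the keys mirror those the usage snippet reads.
model = {
    "env_id": "Taxi-v3",
    "max_steps": 99,
    "n_eval_episodes": 100,
    "eval_seed": [],
    # Taxi-v3 has 500 discrete states and 6 actions.
    "qtable": [[0.0] * 6 for _ in range(500)],
}


def load_pickled_model(path):
    """Load a pickled model dictionary from a local file."""
    with open(path, "rb") as f:
        return pickle.load(f)


with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "q-learning.pkl")
    with open(path, "wb") as f:
        pickle.dump(model, f)
    # With a real repository, `path` would instead come from a Hub download,
    # for example huggingface_hub.hf_hub_download(repo_id=..., filename="q-learning.pkl").
    loaded = load_pickled_model(path)

print(sorted(loaded))
```

Unpickling can execute arbitrary code, so it is safest to load pickle files only from repositories you trust.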

|

sd-concepts-library/cubex

|

sd-concepts-library

| 2022-09-07T21:43:50Z | 0 | 4 | null |

[

"license:mit",

"region:us"

] | null | 2022-09-07T21:43:45Z |

---

license: mit

---

### cubex on Stable Diffusion

This is the `<cube>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb).

Here is the new concept you will be able to use as an `object`:

|

sd-concepts-library/schloss-mosigkau

|

sd-concepts-library

| 2022-09-07T21:42:56Z | 0 | 0 | null |

[

"license:mit",

"region:us"

] | null | 2022-09-07T21:42:50Z |

---

license: mit

---

### schloss mosigkau on Stable Diffusion

This is the `<ralph>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb).

Here is the new concept you will be able to use as an `object`:

|

rewsiffer/distilroberta-base-finetuned-wikitext2

|

rewsiffer

| 2022-09-07T21:25:31Z | 70 | 0 |

transformers

|

[

"transformers",

"tf",

"roberta",

"fill-mask",

"generated_from_keras_callback",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

fill-mask

| 2022-09-07T21:17:32Z |

---

license: apache-2.0

tags:

- generated_from_keras_callback

model-index:

- name: rewsiffer/distilroberta-base-finetuned-wikitext2

results: []

---

<!-- This model card has been generated automatically according to the information Keras had access to. You should

probably proofread and complete it, then remove this comment. -->

# rewsiffer/distilroberta-base-finetuned-wikitext2

This model is a fine-tuned version of [distilroberta-base](https://huggingface.co/distilroberta-base) on an unknown dataset.

It achieves the following results on the evaluation set:

- Train Loss: 1.8968

- Validation Loss: 1.6676

- Epoch: 0

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- optimizer: {'name': 'AdamWeightDecay', 'learning_rate': 2e-05, 'decay': 0.0, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-07, 'amsgrad': False, 'weight_decay_rate': 0.01}

- training_precision: float32

### Training results

| Train Loss | Validation Loss | Epoch |

|:----------:|:---------------:|:-----:|

| 1.8968 | 1.6676 | 0 |

### Framework versions

- Transformers 4.21.3

- TensorFlow 2.8.2

- Datasets 2.4.0

- Tokenizers 0.12.1

|

talhaa/distilbert-base-uncased-masking-lang

|

talhaa

| 2022-09-07T20:58:20Z | 161 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"distilbert",

"fill-mask",

"generated_from_trainer",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

fill-mask

| 2022-09-07T20:54:06Z |

---

license: apache-2.0

tags:

- generated_from_trainer

model-index:

- name: distilbert-base-uncased-masking-lang

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# distilbert-base-uncased-masking-lang

This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on an unknown dataset.

It achieves the following results on the evaluation set:

- Loss: 1.9978
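For a masked language model, the evaluation loss is often easier to read as a perplexity, which is the exponential of the mean cross-entropy. A minimal sketch, assuming the loss above is a mean cross-entropy measured in nats (the card does not say so explicitly):

```python
import math

# Evaluation loss reported above, assumed to be a mean cross-entropy in nats.
eval_loss = 1.9978

perplexity = math.exp(eval_loss)
print(f"Perplexity: {perplexity:.2f}")
```

A lower perplexity means the model assigns higher probability to the masked tokens.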

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 64

- eval_batch_size: 64

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3.0

### Training results

| Training Loss | Epoch | Step | Validation Loss |

|:-------------:|:-----:|:----:|:---------------:|

| No log | 1.0 | 1 | 2.2594 |

| No log | 2.0 | 2 | 0.7379 |

| No log | 3.0 | 3 | 2.0914 |

### Framework versions

- Transformers 4.21.3

- Pytorch 1.12.1+cu113

- Datasets 2.4.0

- Tokenizers 0.12.1

|

3ebdola/Dialectal-Arabic-XLM-R-Base

|

3ebdola

| 2022-09-07T20:12:53Z | 1,413 | 0 |

transformers

|

[

"transformers",

"pytorch",

"xlm-roberta",

"fill-mask",

"Dialectal Arabic",

"Arabic",

"sequence labeling",

"Named entity recognition",

"Part-of-speech tagging",

"Zero-shot transfer learning",

"bert",

"multilingual",

"af",

"am",

"ar",

"as",

"az",

"be",

"bg",

"bn",

"br",

"bs",

"ca",

"cs",

"cy",

"da",

"de",

"el",

"en",

"eo",

"es",

"et",

"eu",

"fa",

"fi",

"fr",

"fy",

"ga",

"gd",

"gl",

"gu",

"ha",

"he",

"hi",

"hr",

"hu",

"hy",

"id",

"is",

"it",

"ja",

"jv",

"ka",

"kk",

"km",

"kn",

"ko",

"ku",

"ky",

"la",

"lo",

"lt",

"lv",

"mg",

"mk",

"ml",

"mn",

"mr",

"ms",

"my",

"ne",

"nl",

"no",

"om",

"or",

"pa",

"pl",

"ps",

"pt",

"ro",

"ru",

"sa",

"sd",

"si",

"sk",

"sl",

"so",

"sq",

"sr",

"su",

"sv",

"sw",

"ta",

"te",

"th",

"tl",

"tr",

"ug",

"uk",

"ur",

"uz",

"vi",

"xh",

"yi",

"zh",

"license:mit",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

fill-mask

| 2022-09-05T19:43:55Z |

---

language:

- multilingual

- af

- am

- ar

- as

- az

- be

- bg

- bn

- br

- bs

- ca

- cs

- cy

- da

- de

- el

- en

- eo

- es

- et

- eu

- fa

- fi

- fr

- fy

- ga

- gd

- gl

- gu

- ha

- he

- hi

- hr

- hu

- hy

- id

- is

- it

- ja

- jv

- ka

- kk

- km

- kn

- ko

- ku

- ky

- la

- lo

- lt

- lv

- mg

- mk

- ml

- mn

- mr

- ms

- my

- ne

- nl

- no

- om

- or

- pa

- pl

- ps

- pt

- ro

- ru

- sa

- sd

- si

- sk

- sl

- so

- sq

- sr

- su

- sv

- sw

- ta

- te

- th

- tl

- tr

- ug

- uk

- ur

- uz

- vi

- xh

- yi

- zh

tags:

- Dialectal Arabic

- Arabic

- sequence labeling

- Named entity recognition

- Part-of-speech tagging

- Zero-shot transfer learning

- bert

license: "mit"

---

# Dialectal Arabic XLM-R Base

This repository hosts the language model used in "AdaSL: An Unsupervised Domain Adaptation framework for Arabic multi-dialectal Sequence Labeling", which the authors present as the state-of-the-art method for sequence labeling on multi-dialect Arabic.

### About the Dialectal-Arabic-XLM-R-Base model

This model is XLM-RoBERTa base, further pre-trained with masked language modeling on a dialectal Arabic corpus.

### About the Dialectal-Arabic-XLM-R-Base model training corpora

We built a corpus of 5 million tweets from Twitter. The crawled tweets cover the dialects of the four Arabic world regions (EGY, GLF, LEV, and MAG), as well as MSA. The collected corpus consists of one million (1M) tweets per Arabic variant. We did not perform any text pre-processing on the tweets, except for removing short tweets (those containing fewer than four words).
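The length filter described above can be sketched in a few lines. This is an illustration only: it assumes words are counted by splitting on whitespace, which the card does not specify, and `keep_tweet` is a hypothetical helper name.

```python
def keep_tweet(text: str, min_words: int = 4) -> bool:
    """Return True when the tweet has at least `min_words` whitespace-separated tokens."""
    return len(text.split()) >= min_words


tweets = [
    "صباح الخير",
    "هذا مثال لنص باللغة العربية",
    "one two three",
    "one two three four",
]

# Keep only tweets with four or more words; the first and third are dropped.
filtered = [t for t in tweets if keep_tweet(t)]
print(len(filtered))
```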

### Usage

The model weights can be loaded using the `transformers` library by Hugging Face.

```python

from transformers import AutoTokenizer, AutoModel

tokenizer = AutoTokenizer.from_pretrained("3ebdola/Dialectal-Arabic-XLM-R-Base")

model = AutoModel.from_pretrained("3ebdola/Dialectal-Arabic-XLM-R-Base")

text = "هذا مثال لنص باللغة العربية, يمكنك استعمال اللهجات العربية أيضا"

encoded_input = tokenizer(text, return_tensors='pt')

output = model(**encoded_input)

```

### Citation

```

@article{ELMEKKI2022102964,

title = {AdaSL: An Unsupervised Domain Adaptation framework for Arabic multi-dialectal Sequence Labeling},

journal = {Information Processing & Management},

volume = {59},

number = {4},

pages = {102964},

year = {2022},

issn = {0306-4573},

doi = {https://doi.org/10.1016/j.ipm.2022.102964},

url = {https://www.sciencedirect.com/science/article/pii/S0306457322000814},

author = {Abdellah {El Mekki} and Abdelkader {El Mahdaouy} and Ismail Berrada and Ahmed Khoumsi},

keywords = {Dialectal Arabic, Arabic natural language processing, Domain adaptation, Multi-dialectal sequence labeling, Named entity recognition, Part-of-speech tagging, Zero-shot transfer learning}

}

```

|

jenniferjane/Bert_Classifier

|

jenniferjane

| 2022-09-07T20:03:28Z | 108 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"bert",

"text-classification",

"generated_from_trainer",

"dataset:yelp_review_full",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-09-07T14:39:32Z |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- yelp_review_full

metrics:

- accuracy

model-index:
- name: Bert_Classifier
  results:
  - task:
      name: Text Classification
      type: text-classification
    dataset:
      name: yelp_review_full
      type: yelp_review_full
      config: yelp_review_full
      split: train
      args: yelp_review_full
    metrics:
    - name: Accuracy
      type: accuracy
      value: 0.634

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# Bert_Classifier

This model is a fine-tuned version of [bert-base-cased](https://huggingface.co/bert-base-cased) on the yelp_review_full dataset.

It achieves the following results on the evaluation set:

- Loss: 1.0546

- Accuracy: 0.634

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:--------:|

| 0.9528 | 1.0 | 2500 | 0.9097 | 0.5985 |

| 0.7607 | 2.0 | 5000 | 0.8969 | 0.627 |

| 0.5039 | 3.0 | 7500 | 1.0546 | 0.634 |

### Framework versions

- Transformers 4.21.3

- Pytorch 1.12.1+cu113

- Datasets 2.4.0

- Tokenizers 0.12.1

|

sd-concepts-library/mafalda-character

|

sd-concepts-library

| 2022-09-07T20:02:26Z | 0 | 0 | null |

[

"license:mit",

"region:us"

] | null | 2022-09-07T20:02:12Z |

---

license: mit

---

### mafalda character on Stable Diffusion

This is the `<mafalda-quino>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb).

Here is the new concept you will be able to use as an `object`:

|

sd-concepts-library/ti-junglepunk-v0

|

sd-concepts-library

| 2022-09-07T19:56:19Z | 0 | 0 | null |

[

"license:mit",

"region:us"

] | null | 2022-09-07T19:56:13Z |

---

license: mit

---

### TI_junglepunk_v0 on Stable Diffusion

This is the `<jungle-punk>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb).

Here is the new concept you will be able to use as a `style`:

|

talhaa/distilbert-base-uncased-finetuned-imdb

|

talhaa

| 2022-09-07T19:52:38Z | 162 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"distilbert",

"fill-mask",

"generated_from_trainer",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

fill-mask

| 2022-09-07T18:50:27Z |

---

license: apache-2.0

tags:

- generated_from_trainer

model-index:

- name: distilbert-base-uncased-finetuned-imdb

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# distilbert-base-uncased-finetuned-imdb

This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on an unknown dataset.

It achieves the following results on the evaluation set:

- Loss: 2.2119

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 64

- eval_batch_size: 64

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3.0

### Training results

| Training Loss | Epoch | Step | Validation Loss |

|:-------------:|:-----:|:----:|:---------------:|

| No log | 1.0 | 1 | 3.3374 |

| No log | 2.0 | 2 | 3.8206 |

| No log | 3.0 | 3 | 2.8370 |

### Framework versions

- Transformers 4.21.3

- Pytorch 1.12.1+cu113

- Datasets 2.4.0

- Tokenizers 0.12.1

|

VanessaSchenkel/padrao-unicamp-vanessa-finetuned-handscrafted

|

VanessaSchenkel

| 2022-09-07T19:16:28Z | 69 | 0 |

transformers

|

[

"transformers",

"tf",

"tensorboard",

"t5",

"text2text-generation",

"generated_from_keras_callback",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text2text-generation

| 2022-09-07T19:13:14Z |

---

tags:

- generated_from_keras_callback

model-index:

- name: VanessaSchenkel/padrao-unicamp-vanessa-finetuned-handscrafted

results: []

---

<!-- This model card has been generated automatically according to the information Keras had access to. You should

probably proofread and complete it, then remove this comment. -->

# VanessaSchenkel/padrao-unicamp-vanessa-finetuned-handscrafted

This model is a fine-tuned version of [VanessaSchenkel/padrao-unicamp-finetuned-news_commentary](https://huggingface.co/VanessaSchenkel/padrao-unicamp-finetuned-news_commentary) on an unknown dataset.

It achieves the following results on the evaluation set:

- Train Loss: 0.8875

- Validation Loss: 0.5701

- Train Bleu: 70.9943

- Train Gen Len: 8.8125

- Epoch: 0

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- optimizer: {'name': 'AdamWeightDecay', 'learning_rate': 2e-05, 'decay': 0.0, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-07, 'amsgrad': False, 'weight_decay_rate': 0.01}

- training_precision: float32

### Training results

| Train Loss | Validation Loss | Train Bleu | Train Gen Len | Epoch |

|:----------:|:---------------:|:----------:|:-------------:|:-----:|

| 0.8875 | 0.5701 | 70.9943 | 8.8125 | 0 |

### Framework versions

- Transformers 4.21.3

- TensorFlow 2.8.2

- Datasets 2.4.0

- Tokenizers 0.12.1

|

Imene/vit-base-patch16-224-in21k-Wr

|

Imene

| 2022-09-07T18:16:20Z | 82 | 0 |

transformers

|

[

"transformers",

"tf",

"tensorboard",

"vit",

"image-classification",

"generated_from_keras_callback",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

image-classification

| 2022-09-07T16:34:58Z |

---

license: apache-2.0

tags:

- generated_from_keras_callback

model-index:

- name: Imene/vit-base-patch16-224-in21k-Wr

results: []

---

<!-- This model card has been generated automatically according to the information Keras had access to. You should

probably proofread and complete it, then remove this comment. -->

# Imene/vit-base-patch16-224-in21k-Wr

This model is a fine-tuned version of [google/vit-base-patch16-224-in21k](https://huggingface.co/google/vit-base-patch16-224-in21k) on an unknown dataset.

It achieves the following results on the evaluation set:

- Train Loss: 0.3104

- Train Accuracy: 0.9956

- Train Top-3-accuracy: 0.9981

- Validation Loss: 1.6041

- Validation Accuracy: 0.5770

- Validation Top-3-accuracy: 0.8035

- Epoch: 7

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- optimizer: {'inner_optimizer': {'class_name': 'AdamWeightDecay', 'config': {'name': 'AdamWeightDecay', 'learning_rate': {'class_name': 'PolynomialDecay', 'config': {'initial_learning_rate': 0.0001, 'decay_steps': 1500, 'end_learning_rate': 0.0, 'power': 1.0, 'cycle': False, 'name': None}}, 'decay': 0.0, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False, 'weight_decay_rate': 0.01}}, 'dynamic': True, 'initial_scale': 32768.0, 'dynamic_growth_steps': 2000}

- training_precision: mixed_float16
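The optimizer configuration above uses a `PolynomialDecay` schedule with `power` 1.0, which amounts to a linear decay from 1e-4 to 0 over 1500 steps. The sketch below re-implements that formula in plain Python for illustration; it is not the Keras class itself, and `polynomial_decay` is an illustrative name.

```python
def polynomial_decay(step, initial_lr=1e-4, decay_steps=1500, end_lr=0.0, power=1.0):
    """Learning rate at `step` for a non-cycling polynomial decay schedule."""
    # With cycle=False the schedule holds end_lr once decay_steps is reached.
    step = min(step, decay_steps)
    fraction = 1.0 - step / decay_steps
    return (initial_lr - end_lr) * fraction ** power + end_lr


for step in (0, 750, 1500, 2000):
    print(step, polynomial_decay(step))
```

The learning rate is halved at step 750 and stays at zero for every step from 1500 onward.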

### Training results

| Train Loss | Train Accuracy | Train Top-3-accuracy | Validation Loss | Validation Accuracy | Validation Top-3-accuracy | Epoch |

|:----------:|:--------------:|:--------------------:|:---------------:|:-------------------:|:-------------------------:|:-----:|

| 3.8300 | 0.0583 | 0.1381 | 3.6801 | 0.0951 | 0.2203 | 0 |

| 3.2915 | 0.2418 | 0.4557 | 3.0277 | 0.3004 | 0.5507 | 1 |

| 2.6535 | 0.4438 | 0.7106 | 2.5932 | 0.3780 | 0.6546 | 2 |

| 2.0541 | 0.6308 | 0.8575 | 2.2998 | 0.4556 | 0.6871 | 3 |

| 1.4622 | 0.7924 | 0.9496 | 2.0054 | 0.5056 | 0.7234 | 4 |

| 0.9098 | 0.9201 | 0.9887 | 1.8079 | 0.5695 | 0.7785 | 5 |

| 0.5220 | 0.9821 | 0.9969 | 1.6444 | 0.5845 | 0.7922 | 6 |

| 0.3104 | 0.9956 | 0.9981 | 1.6041 | 0.5770 | 0.8035 | 7 |

### Framework versions

- Transformers 4.21.3

- TensorFlow 2.8.2

- Datasets 2.4.0

- Tokenizers 0.12.1

|

PrimeQA/listqa_nq-task-xlm-roberta-large

|

PrimeQA

| 2022-09-07T17:43:27Z | 39 | 0 |

transformers

|

[

"transformers",

"pytorch",

"xlm-roberta",

"MRC",

"Natural Questions List",

"xlm-roberta-large",

"multilingual",

"arxiv:1911.02116",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] | null | 2022-09-07T14:45:31Z |

---

license: apache-2.0

tags:

- MRC

- Natural Questions List

- xlm-roberta-large

language:

- multilingual

---

# Model description

An XLM-RoBERTa reading comprehension model for list question answering. It starts from a fine-tuned [xlm-roberta-large](https://huggingface.co/xlm-roberta-large/) model and is further fine-tuned on the list questions in the [Natural Questions](https://huggingface.co/datasets/natural_questions) dataset.

## Intended uses & limitations

You can use the raw model for the reading comprehension task. Biases associated with the pre-existing language model, xlm-roberta-large, that we used may be present in our fine-tuned model, listqa_nq-task-xlm-roberta-large.

## Usage

You can use this model directly with the [PrimeQA](https://github.com/primeqa/primeqa) pipeline for reading comprehension; see [listqa.ipynb](https://github.com/primeqa/primeqa/blob/main/notebooks/mrc/listqa.ipynb).

### BibTeX entry and citation info

```bibtex

@article{kwiatkowski-etal-2019-natural,

title = "Natural Questions: A Benchmark for Question Answering Research",

author = "Kwiatkowski, Tom and

Palomaki, Jennimaria and

Redfield, Olivia and

Collins, Michael and

Parikh, Ankur and

Alberti, Chris and

Epstein, Danielle and

Polosukhin, Illia and

Devlin, Jacob and

Lee, Kenton and

Toutanova, Kristina and

Jones, Llion and

Kelcey, Matthew and

Chang, Ming-Wei and

Dai, Andrew M. and

Uszkoreit, Jakob and

Le, Quoc and

Petrov, Slav",

journal = "Transactions of the Association for Computational Linguistics",

volume = "7",

year = "2019",

address = "Cambridge, MA",

publisher = "MIT Press",

url = "https://aclanthology.org/Q19-1026",

doi = "10.1162/tacl_a_00276",

pages = "452--466",

}

```

```bibtex

@article{DBLP:journals/corr/abs-1911-02116,

author = {Alexis Conneau and

Kartikay Khandelwal and

Naman Goyal and

Vishrav Chaudhary and

Guillaume Wenzek and

Francisco Guzm{\'{a}}n and

Edouard Grave and

Myle Ott and

Luke Zettlemoyer and

Veselin Stoyanov},

title = {Unsupervised Cross-lingual Representation Learning at Scale},

journal = {CoRR},

volume = {abs/1911.02116},

year = {2019},

url = {http://arxiv.org/abs/1911.02116},

eprinttype = {arXiv},

eprint = {1911.02116},

timestamp = {Mon, 11 Nov 2019 18:38:09 +0100},

biburl = {https://dblp.org/rec/journals/corr/abs-1911-02116.bib},

bibsource = {dblp computer science bibliography, https://dblp.org}

}

```

|

clementchadebec/reproduced_ciwae

|

clementchadebec

| 2022-09-07T15:34:02Z | 0 | 0 |

pythae

|

[

"pythae",

"reproducibility",

"en",

"license:apache-2.0",

"region:us"

] | null | 2022-09-07T15:22:26Z |

---

language: en

tags:

- pythae

- reproducibility

license: apache-2.0

---

This model was trained with pythae. It can be downloaded or reloaded using the `load_from_hf_hub` method:

```python

>>> from pythae.models import AutoModel

>>> model = AutoModel.load_from_hf_hub(hf_hub_path="clementchadebec/reproduced_ciwae")

```

## Reproducibility

This trained model reproduces the results of the official implementation of [1].

| Model | Dataset | Metric | Obtained value | Reference value |

|:---:|:---:|:---:|:---:|:---:|

| CIWAE (beta=0.05) | Dyn. Binarized MNIST | NLL (5000 IS) | 84.74 (0.01) | 84.57 (0.09) |

[1] Rainforth, Tom, et al. "Tighter variational bounds are not necessarily better." International Conference on Machine Learning. PMLR, 2018.
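The metric "NLL (5000 IS)" is read here as a negative log-likelihood estimated with 5000 importance samples; the card does not spell this out, so treat that reading as an assumption. Under it, the estimate is the negative log of the average importance weight, computed stably with a log-mean-exp. The sketch below uses four hypothetical log-weights instead of 5000, and the function names are illustrative.

```python
import math


def log_mean_exp(log_weights):
    """Numerically stable log of the mean of exp(log_weights)."""
    m = max(log_weights)
    total = sum(math.exp(lw - m) for lw in log_weights)
    return m + math.log(total / len(log_weights))


def nll_estimate(log_weights):
    """Negative log-likelihood estimate from K importance log-weights."""
    return -log_mean_exp(log_weights)


# Hypothetical log-weights log p(x, z_k) - log q(z_k | x) for K = 4 samples.
log_w = [-85.0, -84.0, -86.5, -84.7]
print(nll_estimate(log_w))
```

Subtracting the maximum before exponentiating avoids underflow when the log-weights are large and negative, which is typical for image data.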

|

PrimeQA/mt5-base-tydi-question-generator

|

PrimeQA

| 2022-09-07T15:01:15Z | 121 | 3 |

transformers

|

[

"transformers",

"pytorch",

"mt5",

"text2text-generation",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text2text-generation

| 2022-06-29T09:46:08Z |

---

license: apache-2.0

---

# Model description

This is an [mt5-base](https://huggingface.co/google/mt5-base) model, fine-tuned to generate questions using the [TyDi QA](https://huggingface.co/datasets/tydiqa) dataset. It was trained to take a context and an answer as input and to generate a question.

# Overview

*Language model*: mT5-base \

*Language*: Arabic, Bengali, English, Finnish, Indonesian, Korean, Russian, Swahili, Telugu \

*Task*: Question Generation \

*Data*: TyDi QA

# Intended use and limitations

One can use this model to generate questions. Biases associated with pre-training of mT5 and TyDiQA dataset may be present.

## Usage

One can use this model directly in the [PrimeQA](https://github.com/primeqa/primeqa) framework as in this example [notebook](https://github.com/primeqa/primeqa/blob/main/notebooks/qg/tableqg_inference.ipynb).

Or load it directly with `transformers`:

```python

from transformers import AutoTokenizer, AutoModelForSeq2SeqLM

tokenizer = AutoTokenizer.from_pretrained("PrimeQA/mt5-base-tydi-question-generator")

model = AutoModelForSeq2SeqLM.from_pretrained("PrimeQA/mt5-base-tydi-question-generator")

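def get_question(answer, context, max_length=64):
    # Input format used by this card: "<answer> <<sep>> <context>".
    input_text = answer + " <<sep>> " + context
    features = tokenizer([input_text], return_tensors='pt')
    output = model.generate(input_ids=features['input_ids'],
                            attention_mask=features['attention_mask'],
                            max_length=max_length)
    # Drop padding and end-of-sequence tokens from the decoded question.
    return tokenizer.decode(output[0], skip_special_tokens=True)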

context = "শচীন টেন্ডুলকারকে ক্রিকেট ইতিহাসের অন্যতম সেরা ব্যাটসম্যান হিসেবে গণ্য করা হয়।"

answer = "শচীন টেন্ডুলকার"

get_question(answer, context)

# output: ক্রিকেট ইতিহাসের অন্যতম সেরা ব্যাটসম্যান কে?

```

## Citation

```bibtex

@inproceedings{xue2021mt5,

title={mT5: A Massively Multilingual Pre-trained Text-to-Text Transformer},

author={Xue, Linting and Constant, Noah and Roberts, Adam and

Kale, Mihir and Al-Rfou, Rami and Siddhant, Aditya and

Barua, Aditya and Raffel, Colin},

booktitle={Proceedings of the 2021 Conference of the North American

Chapter of the Association for Computational Linguistics:

Human Language Technologies},

pages={483--498},

year={2021}

}

```

|

Tahahah/ddpm-butterflies-128

|

Tahahah

| 2022-09-07T13:31:44Z | 0 | 0 |

diffusers

|

[

"diffusers",

"tensorboard",

"en",

"license:apache-2.0",

"diffusers:DDPMPipeline",

"region:us"

] | null | 2022-09-07T02:25:45Z |

---

language: en

license: apache-2.0

library_name: diffusers

tags: []

datasets: /content/drive/Shareddrives/artGAN S2 2022/sugimori-artwork

metrics: []

---

<!-- This model card has been generated automatically according to the information the training script had access to. You

should probably proofread and complete it, then remove this comment. -->

# ddpm-butterflies-128

## Model description

This diffusion model is trained with the [🤗 Diffusers](https://github.com/huggingface/diffusers) library

on the `/content/drive/Shareddrives/artGAN S2 2022/sugimori-artwork` dataset.

## Intended uses & limitations

#### How to use

```python

# TODO: add an example code snippet for running this diffusion pipeline

```
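
The card leaves the usage snippet as a TODO. Since the repository is tagged `diffusers:DDPMPipeline`, one plausible way to sample from it is sketched below. This is an assumption about how the checkpoint is meant to be loaded, not the author's own example; the helper name `sample_images` is hypothetical, and older `diffusers` releases may expose the output differently.

```python
def sample_images(repo_id="Tahahah/ddpm-butterflies-128", batch_size=1, seed=0):
    """Generate images with the DDPM pipeline stored in `repo_id`.

    Imports are deferred so that defining the function does not require
    `diffusers` or `torch` to be installed.
    """
    import torch
    from diffusers import DDPMPipeline

    pipeline = DDPMPipeline.from_pretrained(repo_id)
    generator = torch.Generator().manual_seed(seed)  # reproducible noise
    # The pipeline output exposes the generated PIL images as `.images`.
    return pipeline(batch_size=batch_size, generator=generator).images

# Usage (downloads the weights on first call):
# images = sample_images()
# images[0].save("sample.png")
```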

#### Limitations and bias

[TODO: provide examples of latent issues and potential remediations]

## Training data

[TODO: describe the data used to train the model]

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0001

- train_batch_size: 16

- eval_batch_size: 16

- gradient_accumulation_steps: 1

- optimizer: AdamW with betas=(None, None), weight_decay=None and epsilon=None

- lr_scheduler: None

- lr_warmup_steps: 500

- ema_inv_gamma: None


- mixed_precision: fp16

### Training results

📈 [TensorBoard logs](https://huggingface.co/Tahahah/ddpm-butterflies-128/tensorboard?#scalars)

|

RayK/distilbert-base-uncased-finetuned-cola

|

RayK

| 2022-09-07T13:13:31Z | 7 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"distilbert",

"text-classification",

"generated_from_trainer",

"dataset:glue",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-08-04T00:05:29Z |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- glue

metrics:

- matthews_correlation

model-index:

- name: distilbert-base-uncased-finetuned-cola

results:

- task:

name: Text Classification

type: text-classification

dataset:

name: glue

type: glue

config: cola

split: train

args: cola

metrics:

- name: Matthews Correlation

type: matthews_correlation

value: 0.5410039366652665

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# distilbert-base-uncased-finetuned-cola

This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the glue dataset.

It achieves the following results on the evaluation set:

- Loss: 0.6949

- Matthews Correlation: 0.5410

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 5

### Training results

| Training Loss | Epoch | Step | Validation Loss | Matthews Correlation |

|:-------------:|:-----:|:----:|:---------------:|:--------------------:|

| 0.5241 | 1.0 | 535 | 0.5322 | 0.3973 |

| 0.356 | 2.0 | 1070 | 0.5199 | 0.4836 |

| 0.2402 | 3.0 | 1605 | 0.6086 | 0.5238 |

| 0.166 | 4.0 | 2140 | 0.6949 | 0.5410 |

| 0.134 | 5.0 | 2675 | 0.8254 | 0.5253 |
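
The reported evaluation figures (loss 0.6949, Matthews correlation 0.5410) match the epoch 4 row rather than the final epoch. For readers unfamiliar with the metric, here is a minimal sketch of the Matthews correlation coefficient for a binary task, computed from confusion-matrix counts. The counts in the example are made up for illustration and do not come from this model.

```python
import math

def matthews_correlation(tp, tn, fp, fn):
    """Matthews correlation coefficient from binary confusion-matrix counts."""
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    if denom == 0:
        return 0.0  # common convention when a row or column of the matrix is empty
    return (tp * tn - fp * fn) / denom

# Hypothetical counts, for illustration only.
print(matthews_correlation(tp=90, tn=70, fp=30, fn=10))
```

The value ranges from -1 (total disagreement) through 0 (no better than chance) to 1 (perfect prediction), which makes it more informative than accuracy on imbalanced data such as CoLA.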

### Framework versions

- Transformers 4.21.0

- Pytorch 1.9.1

- Datasets 1.12.1

- Tokenizers 0.12.1

|

anniepyim/xlm-roberta-base-finetuned-panx-de

|

anniepyim

| 2022-09-07T13:03:30Z | 103 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"xlm-roberta",

"token-classification",

"generated_from_trainer",

"dataset:xtreme",

"license:mit",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

token-classification

| 2022-09-07T12:39:42Z |

---

license: mit

tags:

- generated_from_trainer

datasets:

- xtreme

metrics:

- f1

model-index:

- name: xlm-roberta-base-finetuned-panx-de

results:

- task:

name: Token Classification

type: token-classification

dataset:

name: xtreme

type: xtreme

args: PAN-X.de

metrics:

- name: F1

type: f1

value: 0.8648740833380706

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# xlm-roberta-base-finetuned-panx-de

This model is a fine-tuned version of [xlm-roberta-base](https://huggingface.co/xlm-roberta-base) on the xtreme dataset.

It achieves the following results on the evaluation set:

- Loss: 0.1365

- F1: 0.8649

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 24

- eval_batch_size: 24

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3

### Training results

| Training Loss | Epoch | Step | Validation Loss | F1 |

|:-------------:|:-----:|:----:|:---------------:|:------:|

| 0.2553 | 1.0 | 525 | 0.1575 | 0.8279 |

| 0.1284 | 2.0 | 1050 | 0.1386 | 0.8463 |

| 0.0813 | 3.0 | 1575 | 0.1365 | 0.8649 |
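
The card does not say how F1 is computed. For named-entity tasks such as PAN-X it is commonly scored at the entity level, where a predicted span counts only if both its boundaries and its type match. The sketch below illustrates that idea under the assumption of IOB2 tags; the function names are hypothetical and stray `I-` tags without a preceding `B-` are simply ignored.

```python
def extract_entities(tags):
    """Return a set of (type, start, end) spans from a list of IOB2 tags."""
    entities, start, etype = set(), None, None
    for i, tag in enumerate(tags + ["O"]):  # sentinel flushes the last span
        inside = tag.startswith("I-") and etype == tag[2:]
        if start is not None and not inside:
            entities.add((etype, start, i))
            start, etype = None, None
        if tag.startswith("B-"):
            start, etype = i, tag[2:]
    return entities

def entity_f1(gold_tags, pred_tags):
    """Entity-level F1: a span is correct only if type and boundaries match."""
    gold, pred = extract_entities(gold_tags), extract_entities(pred_tags)
    correct = len(gold & pred)
    if correct == 0:
        return 0.0
    precision, recall = correct / len(pred), correct / len(gold)
    return 2 * precision * recall / (precision + recall)

gold = ["B-PER", "I-PER", "O", "B-LOC"]
pred = ["B-PER", "I-PER", "O", "O"]
print(entity_f1(gold, pred))  # one of two gold entities found
```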

### Framework versions

- Transformers 4.11.3

- Pytorch 1.12.1+cu113

- Datasets 1.16.1

- Tokenizers 0.10.3

|

liat-nakayama/japanese-roberta-base-20201221

|

liat-nakayama

| 2022-09-07T13:03:15Z | 191 | 0 |

transformers

|

[

"transformers",

"pytorch",

"roberta",

"fill-mask",

"license:cc-by-sa-3.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

fill-mask

| 2022-09-07T12:45:36Z |

---

license: cc-by-sa-3.0

---

This is a Japanese RoBERTa model pretrained on Wikipedia as of 2020/12/21.

Text is tokenized with janome (a Python wrapper for MeCab) and BPE.

|

hhffxx/distilbert-base-uncased-finetuned-clinc

|

hhffxx

| 2022-09-07T08:21:36Z | 109 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"distilbert",

"text-classification",

"generated_from_trainer",

"dataset:clinc_oos",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-09-07T02:40:13Z |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- clinc_oos

metrics:

- accuracy

model-index:

- name: distilbert-base-uncased-finetuned-clinc

results:

- task:

name: Text Classification

type: text-classification

dataset:

name: clinc_oos

type: clinc_oos

config: plus

split: train

args: plus

metrics:

- name: Accuracy

type: accuracy

value: 0.9503225806451613

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# distilbert-base-uncased-finetuned-clinc

This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the clinc_oos dataset.

It achieves the following results on the evaluation set:

- Loss: 0.2339

- Accuracy: 0.9503

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 12

- eval_batch_size: 12

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 5

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:--------:|

| 3.2073 | 1.0 | 1271 | 1.3840 | 0.8542 |

| 0.7452 | 2.0 | 2542 | 0.4053 | 0.9316 |

| 0.1916 | 3.0 | 3813 | 0.2580 | 0.9452 |

| 0.0768 | 4.0 | 5084 | 0.2371 | 0.9477 |

| 0.0455 | 5.0 | 6355 | 0.2339 | 0.9503 |

### Framework versions

- Transformers 4.21.3

- Pytorch 1.12.1

- Datasets 2.4.0

- Tokenizers 0.12.1

|

Vasanth/bert-base-uncased-finetuned-emotion

|

Vasanth

| 2022-09-07T06:19:01Z | 106 | 2 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"bert",

"text-classification",

"generated_from_trainer",

"dataset:emotion",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-09-07T05:27:34Z |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- emotion

metrics:

- accuracy

- f1

model-index:

- name: bert-base-uncased-finetuned-emotion

results:

- task:

name: Text Classification

type: text-classification

dataset:

name: emotion

type: emotion

config: default

split: train

args: default

metrics:

- name: Accuracy

type: accuracy

value: 0.9454375

- name: F1

type: f1

value: 0.9458448428504193

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# bert-base-uncased-finetuned-emotion

This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on the emotion dataset.

It achieves the following results on the evaluation set:

- Loss: 0.1476

- Accuracy: 0.9454

- F1: 0.9458

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 64

- eval_batch_size: 64

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 2

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 |

|:-------------:|:-----:|:----:|:---------------:|:--------:|:------:|

| 0.8907 | 1.0 | 250 | 0.2625 | 0.9184 | 0.9157 |

| 0.2315 | 2.0 | 500 | 0.1476 | 0.9454 | 0.9458 |
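
The Step column can be cross-checked against the batch size. With `train_batch_size: 64` and 250 steps per epoch, the table is consistent with about 16,000 training examples. That figure is an inference from the table, not something the card states, and the sketch assumes a single device; `grad_accum` is a hypothetical parameter for runs that accumulate gradients.

```python
import math

def steps_per_epoch(num_examples, batch_size, grad_accum=1):
    """Optimizer steps needed for one pass over the training data."""
    batches = math.ceil(num_examples / batch_size)  # last batch may be partial
    return math.ceil(batches / grad_accum)

print(steps_per_epoch(16_000, 64))  # 250
```

Multiplying by `num_epochs: 2` gives the 500 total steps shown in the last row.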

### Framework versions

- Transformers 4.21.3

- Pytorch 1.12.1+cu113

- Datasets 2.4.0

- Tokenizers 0.12.1

|

mesolitica/roberta-base-bahasa-cased

|

mesolitica

| 2022-09-07T06:12:59Z | 105 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"roberta",

"fill-mask",

"ms",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

fill-mask

| 2022-09-07T05:54:15Z |

---

language: ms

---

# roberta-base-bahasa-cased

Pretrained RoBERTa base language model for Malay.

## Pretraining Corpus

The `roberta-base-bahasa-cased` model was pretrained on ~400 million words. Below is the list of data we trained on:

1. IIUM confession, https://github.com/huseinzol05/malay-dataset/tree/master/dumping/clean

2. local Instagram, https://github.com/huseinzol05/malay-dataset/tree/master/dumping/clean

3. local news, https://github.com/huseinzol05/malay-dataset/tree/master/dumping/clean

4. local parliament hansards, https://github.com/huseinzol05/malay-dataset/tree/master/dumping/clean

5. local research papers related to `kebudayaan`, `keagaaman` and `etnik`, https://github.com/huseinzol05/malay-dataset/tree/master/dumping/clean

6. local twitter, https://github.com/huseinzol05/malay-dataset/tree/master/dumping/clean

7. Malay Wattpad, https://github.com/huseinzol05/malay-dataset/tree/master/dumping/clean

8. Malay Wikipedia, https://github.com/huseinzol05/malay-dataset/tree/master/dumping/clean

## Pretraining details

- All steps can be reproduced from https://github.com/huseinzol05/Malaya/tree/master/pretrained-model/roberta.

## Example using AutoModelForMaskedLM

```python

from transformers import AutoTokenizer, AutoModelForMaskedLM, pipeline

model = AutoModelForMaskedLM.from_pretrained('mesolitica/roberta-base-bahasa-cased')

tokenizer = AutoTokenizer.from_pretrained(
    'mesolitica/roberta-base-bahasa-cased',
    do_lower_case=False,
)

fill_mask = pipeline('fill-mask', model=model, tokenizer=tokenizer)

fill_mask('Permohonan Najib, anak untuk dengar isu perlembagaan <mask> .')

```

Output is,

```python

[{'score': 0.3368818759918213,

'token': 746,

'token_str': ' negara',

'sequence': 'Permohonan Najib, anak untuk dengar isu perlembagaan negara.'},

{'score': 0.09646568447351456,

'token': 598,

'token_str': ' Malaysia',

'sequence': 'Permohonan Najib, anak untuk dengar isu perlembagaan Malaysia.'},

{'score': 0.029483484104275703,

'token': 3265,

'token_str': ' UMNO',

'sequence': 'Permohonan Najib, anak untuk dengar isu perlembagaan UMNO.'},

{'score': 0.026470622047781944,

'token': 2562,

'token_str': ' parti',

'sequence': 'Permohonan Najib, anak untuk dengar isu perlembagaan parti.'},

{'score': 0.023237623274326324,

'token': 391,

'token_str': ' ini',

'sequence': 'Permohonan Najib, anak untuk dengar isu perlembagaan ini.'}]

```
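
Each entry in the output pairs a candidate token with the score the model assigns to it at the masked position. A small sketch of picking the best candidate from such a list is shown below; the two entries are copied from the output above, and the helper name is hypothetical.

```python
predictions = [
    {"score": 0.3368818759918213, "token": 746, "token_str": " negara"},
    {"score": 0.09646568447351456, "token": 598, "token_str": " Malaysia"},
]

def best_candidate(preds):
    """Return the highest-scoring token (whitespace stripped) and its score."""
    top = max(preds, key=lambda p: p["score"])
    return top["token_str"].strip(), top["score"]

print(best_candidate(predictions))
```

Note that the leading space in `token_str` is part of the BPE token, so it is stripped before display.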

|

neuralspace/autotrain-citizen_nlu_hindi-1370952776

|

neuralspace

| 2022-09-07T05:48:02Z | 102 | 0 |

transformers

|

[

"transformers",

"pytorch",

"roberta",

"text-classification",

"autotrain",

"hi",

"dataset:neuralspace/autotrain-data-citizen_nlu_hindi",

"co2_eq_emissions",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-09-07T05:39:47Z |

---

tags:

- autotrain

- text-classification

language:

- hi

widget:

- text: "I love AutoTrain 🤗"

datasets:

- neuralspace/autotrain-data-citizen_nlu_hindi

co2_eq_emissions:

emissions: 0.06283545088764929

---

# Model Trained Using AutoTrain

- Problem type: Multi-class Classification

- Model ID: 1370952776

- CO2 Emissions (in grams): 0.0628

## Validation Metrics

- Loss: 0.101

- Accuracy: 0.974

- Macro F1: 0.974

- Micro F1: 0.974

- Weighted F1: 0.974

- Macro Precision: 0.975

- Micro Precision: 0.974

- Weighted Precision: 0.975

- Macro Recall: 0.973

- Micro Recall: 0.974

- Weighted Recall: 0.974

## Usage

You can use cURL to access this model:

```

$ curl -X POST -H "Authorization: Bearer YOUR_API_KEY" -H "Content-Type: application/json" -d '{"inputs": "I love AutoTrain"}' https://api-inference.huggingface.co/models/neuralspace/autotrain-citizen_nlu_hindi-1370952776

```

Or Python API:

```python

from transformers import AutoModelForSequenceClassification, AutoTokenizer

model = AutoModelForSequenceClassification.from_pretrained("neuralspace/autotrain-citizen_nlu_hindi-1370952776", use_auth_token=True)

tokenizer = AutoTokenizer.from_pretrained("neuralspace/autotrain-citizen_nlu_hindi-1370952776", use_auth_token=True)

inputs = tokenizer("I love AutoTrain", return_tensors="pt")

outputs = model(**inputs)

```
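
The snippet above stops at `outputs`. To turn the result into a prediction, one would typically apply a softmax to `outputs.logits` and look the winning index up in `model.config.id2label`. The sketch below shows that step on plain Python lists; the logits and label names are made-up placeholders, not values from this model.

```python
import math

def softmax(logits):
    """Convert raw scores to probabilities, subtracting the max for stability."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def predict_label(logits, id2label):
    """Return the most probable label and its probability."""
    probs = softmax(logits)
    best = max(range(len(probs)), key=probs.__getitem__)
    return id2label[best], probs[best]

# Hypothetical label map and logits, for illustration only.
id2label = {0: "intent_a", 1: "intent_b", 2: "intent_c"}
label, prob = predict_label([0.2, 2.5, -1.0], id2label)
print(label, round(prob, 3))
```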

|

neuralspace/autotrain-citizen_nlu_bn-1370652766

|

neuralspace

| 2022-09-07T05:42:31Z | 103 | 0 |

transformers

|

[

"transformers",

"pytorch",

"roberta",

"text-classification",

"autotrain",

"bn",

"dataset:neuralspace/autotrain-data-citizen_nlu_bn",

"co2_eq_emissions",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-09-07T05:33:04Z |

---

tags:

- autotrain

- text-classification

language:

- bn

widget:

- text: "I love AutoTrain 🤗"

datasets:

- neuralspace/autotrain-data-citizen_nlu_bn

co2_eq_emissions:

emissions: 0.08431503532658222

---

# Model Trained Using AutoTrain

- Problem type: Multi-class Classification

- Model ID: 1370652766

- CO2 Emissions (in grams): 0.0843

## Validation Metrics

- Loss: 0.117

- Accuracy: 0.971

- Macro F1: 0.971

- Micro F1: 0.971

- Weighted F1: 0.971

- Macro Precision: 0.973

- Micro Precision: 0.971

- Weighted Precision: 0.972

- Macro Recall: 0.970

- Micro Recall: 0.971

- Weighted Recall: 0.971

## Usage

You can use cURL to access this model:

```

$ curl -X POST -H "Authorization: Bearer YOUR_API_KEY" -H "Content-Type: application/json" -d '{"inputs": "I love AutoTrain"}' https://api-inference.huggingface.co/models/neuralspace/autotrain-citizen_nlu_bn-1370652766

```

Or Python API:

```python

from transformers import AutoModelForSequenceClassification, AutoTokenizer

model = AutoModelForSequenceClassification.from_pretrained("neuralspace/autotrain-citizen_nlu_bn-1370652766", use_auth_token=True)

tokenizer = AutoTokenizer.from_pretrained("neuralspace/autotrain-citizen_nlu_bn-1370652766", use_auth_token=True)

inputs = tokenizer("I love AutoTrain", return_tensors="pt")

outputs = model(**inputs)

```

|

nateraw/test-update-metadata-issue

|

nateraw

| 2022-09-07T02:40:04Z | 0 | 0 | null |

[

"en",

"license:mit",

"region:us"

] | null | 2022-09-07T02:28:32Z |

---

language: en

license: mit

---

|

VietAI/vit5-large-vietnews-summarization

|

VietAI

| 2022-09-07T02:28:54Z | 654 | 12 |

transformers

|

[

"transformers",

"pytorch",

"tf",

"t5",

"text2text-generation",

"summarization",

"vi",

"dataset:cc100",

"license:mit",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

summarization

| 2022-05-12T10:09:43Z |

---

language: vi

datasets:

- cc100

tags:

- summarization

license: mit

widget:

- text: "vietnews: VietAI là tổ chức phi lợi nhuận với sứ mệnh ươm mầm tài năng về trí tuệ nhân tạo và xây dựng một cộng đồng các chuyên gia trong lĩnh vực trí tuệ nhân tạo đẳng cấp quốc tế tại Việt Nam."

---

# ViT5-large Finetuned on `vietnews` Abstractive Summarization

State-of-the-art pretrained Transformer-based encoder-decoder model for Vietnamese.


## How to use

For more details, see [our GitHub repo](https://github.com/vietai/ViT5) and [eval script](https://github.com/vietai/ViT5/blob/main/eval/Eval_vietnews_sum.ipynb).

```python

from transformers import AutoTokenizer, AutoModelForSeq2SeqLM

tokenizer = AutoTokenizer.from_pretrained("VietAI/vit5-large-vietnews-summarization")

model = AutoModelForSeq2SeqLM.from_pretrained("VietAI/vit5-large-vietnews-summarization")

model.cuda()

sentence = "VietAI là tổ chức phi lợi nhuận với sứ mệnh ươm mầm tài năng về trí tuệ nhân tạo và xây dựng một cộng đồng các chuyên gia trong lĩnh vực trí tuệ nhân tạo đẳng cấp quốc tế tại Việt Nam."

text = "vietnews: " + sentence + " </s>"

encoding = tokenizer(text, return_tensors="pt")

input_ids, attention_masks = encoding["input_ids"].to("cuda"), encoding["attention_mask"].to("cuda")

outputs = model.generate(
    input_ids=input_ids, attention_mask=attention_masks,
    max_length=256,
    early_stopping=True
)

for output in outputs:
    line = tokenizer.decode(output, skip_special_tokens=True, clean_up_tokenization_spaces=True)
    print(line)

```

## Citation

```

@inproceedings{phan-etal-2022-vit5,

title = "{V}i{T}5: Pretrained Text-to-Text Transformer for {V}ietnamese Language Generation",

author = "Phan, Long and Tran, Hieu and Nguyen, Hieu and Trinh, Trieu H.",

booktitle = "Proceedings of the 2022 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies: Student Research Workshop",

year = "2022",

publisher = "Association for Computational Linguistics",

url = "https://aclanthology.org/2022.naacl-srw.18",

pages = "136--142",

}

```

|

slarionne/q-FrozenLake-v1-4x4-noSlippery

|

slarionne

| 2022-09-07T02:11:10Z | 0 | 0 | null |

[

"FrozenLake-v1-4x4-no_slippery",

"q-learning",

"reinforcement-learning",

"custom-implementation",

"model-index",

"region:us"

] |

reinforcement-learning

| 2022-09-07T02:11:04Z |

---

tags:

- FrozenLake-v1-4x4-no_slippery

- q-learning

- reinforcement-learning

- custom-implementation

model-index:

- name: q-FrozenLake-v1-4x4-noSlippery

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: FrozenLake-v1-4x4-no_slippery

type: FrozenLake-v1-4x4-no_slippery

metrics:

- type: mean_reward

value: 1.00 +/- 0.00

name: mean_reward

verified: false

---

# **Q-Learning** Agent playing **FrozenLake-v1**

This is a trained model of a **Q-Learning** agent playing **FrozenLake-v1**.

## Usage

```python

model = load_from_hub(repo_id="slarionne/q-FrozenLake-v1-4x4-noSlippery", filename="q-learning.pkl")

# Don't forget to check if you need to add additional attributes (is_slippery=False etc)

env = gym.make(model["env_id"])

evaluate_agent(env, model["max_steps"], model["n_eval_episodes"], model["qtable"], model["eval_seed"])

```
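
The usage snippet relies on `load_from_hub`, `gym` and `evaluate_agent`, none of which the card defines. To show what a greedy evaluation of a Q-table involves, here is a self-contained sketch. `ToyFrozenLake` is a hypothetical, hole-free stand-in for the real environment, `evaluate_greedy` is not the card's `evaluate_agent`, and the Q-table is hand-written rather than loaded from the Hub.

```python
class ToyFrozenLake:
    """Deterministic 4x4 grid: start at state 0, goal at state 15, no holes."""

    def __init__(self, size=4):
        self.size = size
        self.state = 0

    def reset(self):
        self.state = 0
        return self.state

    def step(self, action):
        # Actions follow the FrozenLake convention: 0=left, 1=down, 2=right, 3=up.
        row, col = divmod(self.state, self.size)
        if action == 0:
            col = max(col - 1, 0)
        elif action == 1:
            row = min(row + 1, self.size - 1)
        elif action == 2:
            col = min(col + 1, self.size - 1)
        elif action == 3:
            row = max(row - 1, 0)
        self.state = row * self.size + col
        done = self.state == self.size * self.size - 1
        return self.state, float(done), done


def evaluate_greedy(env, qtable, max_steps, n_episodes):
    """Run the greedy policy (argmax over Q-values) and return mean/std reward."""
    rewards = []
    for _ in range(n_episodes):
        state, total = env.reset(), 0.0
        for _ in range(max_steps):
            action = max(range(len(qtable[state])), key=qtable[state].__getitem__)
            state, reward, done = env.step(action)
            total += reward
            if done:
                break
        rewards.append(total)
    mean = sum(rewards) / len(rewards)
    std = (sum((r - mean) ** 2 for r in rewards) / len(rewards)) ** 0.5
    return mean, std


# Hand-written Q-table: prefer "right" until the last column, then "down".
qtable = [[0, 1, 0, 0] if s % 4 == 3 else [0, 0, 1, 0] for s in range(16)]
print(evaluate_greedy(ToyFrozenLake(), qtable, max_steps=99, n_episodes=10))  # (1.0, 0.0)
```

On a deterministic (non-slippery) map a correct greedy policy reaches the goal every time, which is why the card's metric is `1.00 +/- 0.00`.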

|

Imene/vit-base-patch16-384-wi5

|

Imene

| 2022-09-07T01:30:24Z | 79 | 0 |

transformers

|

[

"transformers",

"tf",

"tensorboard",

"vit",

"image-classification",

"generated_from_keras_callback",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

image-classification

| 2022-09-06T19:10:41Z |

---

license: apache-2.0

tags:

- generated_from_keras_callback

model-index:

- name: Imene/vit-base-patch16-384-wi5

results: []

---

<!-- This model card has been generated automatically according to the information Keras had access to. You should

probably proofread and complete it, then remove this comment. -->

# Imene/vit-base-patch16-384-wi5

This model is a fine-tuned version of [google/vit-base-patch16-384](https://huggingface.co/google/vit-base-patch16-384) on an unknown dataset.

It achieves the following results on the evaluation set:

- Train Loss: 0.4102

- Train Accuracy: 0.9755

- Train Top-3-accuracy: 0.9960

- Validation Loss: 1.9021

- Validation Accuracy: 0.4912

- Validation Top-3-accuracy: 0.7302

- Epoch: 8

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- optimizer: {'inner_optimizer': {'class_name': 'AdamWeightDecay', 'config': {'name': 'AdamWeightDecay', 'learning_rate': {'class_name': 'PolynomialDecay', 'config': {'initial_learning_rate': 3e-05, 'decay_steps': 3180, 'end_learning_rate': 0.0, 'power': 1.0, 'cycle': False, 'name': None}}, 'decay': 0.0, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False, 'weight_decay_rate': 0.01}}, 'dynamic': True, 'initial_scale': 32768.0, 'dynamic_growth_steps': 2000}

- training_precision: mixed_float16
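
The optimizer config above wraps the learning rate in a `PolynomialDecay` schedule with `power: 1.0`, which amounts to a linear ramp from 3e-05 down to 0 over 3180 steps. A minimal sketch of that formula for the non-cycling case is given below, with defaults copied from the config; it is a plain-Python restatement, not the Keras implementation itself.

```python
def polynomial_decay(step, initial_lr=3e-05, decay_steps=3180, end_lr=0.0, power=1.0):
    """Learning rate at `step` for a non-cycling polynomial decay schedule."""
    step = min(step, decay_steps)  # hold at end_lr once decay_steps is reached
    return (initial_lr - end_lr) * (1 - step / decay_steps) ** power + end_lr

for s in (0, 1590, 3180):
    print(s, polynomial_decay(s))
```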

### Training results

| Train Loss | Train Accuracy | Train Top-3-accuracy | Validation Loss | Validation Accuracy | Validation Top-3-accuracy | Epoch |

|:----------:|:--------------:|:--------------------:|:---------------:|:-------------------:|:-------------------------:|:-----:|

| 4.2945 | 0.0568 | 0.1328 | 3.6233 | 0.1387 | 0.2916 | 0 |

| 3.1234 | 0.2437 | 0.4585 | 2.8657 | 0.3041 | 0.5330 | 1 |

| 2.4383 | 0.4182 | 0.6638 | 2.5499 | 0.3534 | 0.6048 | 2 |

| 1.9258 | 0.5698 | 0.7913 | 2.3046 | 0.4202 | 0.6583 | 3 |

| 1.4919 | 0.6963 | 0.8758 | 2.1349 | 0.4553 | 0.6784 | 4 |

| 1.1127 | 0.7992 | 0.9395 | 2.0878 | 0.4595 | 0.6809 | 5 |

| 0.8092 | 0.8889 | 0.9720 | 1.9460 | 0.4962 | 0.7210 | 6 |

| 0.5794 | 0.9419 | 0.9883 | 1.9478 | 0.4979 | 0.7201 | 7 |

| 0.4102 | 0.9755 | 0.9960 | 1.9021 | 0.4912 | 0.7302 | 8 |
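
By epoch 8 training accuracy reaches 0.9755 while validation accuracy stays near 0.49, which suggests the model is overfitting. The top-3 columns count a prediction as correct when the true class is among the three highest-scoring classes. A small sketch of that metric follows; the scores and labels are invented for illustration.

```python
def top_k_accuracy(scores, labels, k=3):
    """Fraction of rows whose true label is among the k highest scores."""
    hits = 0
    for row, label in zip(scores, labels):
        top_k = sorted(range(len(row)), key=lambda i: row[i], reverse=True)[:k]
        hits += label in top_k
    return hits / len(labels)

# Hypothetical class scores for three images over four classes.
scores = [
    [0.1, 0.5, 0.3, 0.1],
    [0.4, 0.3, 0.2, 0.1],
    [0.1, 0.2, 0.3, 0.4],
]
labels = [2, 0, 0]
print(top_k_accuracy(scores, labels, k=1), top_k_accuracy(scores, labels, k=3))
```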

### Framework versions

- Transformers 4.21.3

- TensorFlow 2.8.2

- Datasets 2.4.0

- Tokenizers 0.12.1

|

theojolliffe/t5-model1-feedback

|

theojolliffe

| 2022-09-06T22:02:05Z | 110 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"t5",

"text2text-generation",

"generated_from_trainer",

"license:apache-2.0",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text2text-generation

| 2022-09-06T21:47:53Z |

---

license: apache-2.0

tags:

- generated_from_trainer

model-index:

- name: t5-model1-feedback

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# t5-model1-feedback

This model is a fine-tuned version of [theojolliffe/T5-model-1-feedback-e1](https://huggingface.co/theojolliffe/T5-model-1-feedback-e1) on an unknown dataset.

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 2

- eval_batch_size: 2

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 1

- mixed_precision_training: Native AMP

### Training results

| Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | Gen Len |

|:-------------:|:-----:|:----:|:---------------:|:-------:|:-------:|:-------:|:---------:|:-------:|

| No log | 1.0 | 345 | 0.8173 | 52.0119 | 27.6158 | 44.7895 | 44.8584 | 16.5455 |
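
The Rouge columns appear to be F-scores scaled to 0-100. As a rough illustration of what Rouge1 measures, the sketch below computes a unigram-overlap F-score between a reference and a candidate. It is a simplification: it splits on whitespace and skips the stemming and tokenization that standard ROUGE implementations apply, so it will not reproduce the table's numbers exactly.

```python
from collections import Counter

def rouge1_f(reference, candidate):
    """Unigram-overlap F-score between two whitespace-tokenized strings."""
    ref = Counter(reference.lower().split())
    cand = Counter(candidate.lower().split())
    overlap = sum((ref & cand).values())  # clipped unigram matches
    if overlap == 0:
        return 0.0
    precision = overlap / sum(cand.values())
    recall = overlap / sum(ref.values())
    return 2 * precision * recall / (precision + recall)

print(100 * rouge1_f("the cat sat on the mat", "the cat lay on the mat"))
```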

### Framework versions

- Transformers 4.21.3

- Pytorch 1.12.1+cu113

- Datasets 2.4.0

- Tokenizers 0.12.1

|

diegopetrola/vit-for-kaggle-mayo-clinic

|

diegopetrola

| 2022-09-06T20:12:36Z | 226 | 0 |

transformers

|

[

"transformers",

"pytorch",

"vit",

"image-classification",

"generated_from_trainer",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

image-classification

| 2022-08-14T01:01:52Z |

---

license: apache-2.0

tags:

- generated_from_trainer

metrics:

- accuracy

model-index:

- name: vit-for-kaggle-mayo-clinic

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# vit-for-kaggle-mayo-clinic

This model is a fine-tuned version of [google/vit-base-patch16-224-in21k](https://huggingface.co/google/vit-base-patch16-224-in21k) on an unknown dataset.

It achieves the following results on the evaluation set:

- Loss: 0.5538

- Accuracy: 0.7616

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0003

- train_batch_size: 64

- eval_batch_size: 64

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: cosine

- lr_scheduler_warmup_ratio: 0.1

- num_epochs: 8
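
With `lr_scheduler_type: cosine` and `lr_scheduler_warmup_ratio: 0.1`, the learning rate first rises linearly and then follows half a cosine wave down to zero. The sketch below restates that shape in plain Python. The total of 80 steps is taken from the results table (8 epochs of 10 steps), and rounding the warmup length up to 8 steps is an assumption about how the ratio is converted.

```python
import math

def cosine_lr(step, base_lr=3e-4, total_steps=80, warmup_ratio=0.1):
    """Learning rate at `step` for linear warmup followed by cosine decay."""
    warmup_steps = math.ceil(total_steps * warmup_ratio)
    if step < warmup_steps:
        return base_lr * step / max(1, warmup_steps)  # linear warmup from 0
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    return base_lr * max(0.0, 0.5 * (1.0 + math.cos(math.pi * progress)))

for s in (0, 4, 8, 44, 80):
    print(s, cosine_lr(s))
```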

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:--------:|

| No log | 1.0 | 10 | 0.5944 | 0.7483 |

| No log | 2.0 | 20 | 0.5640 | 0.7483 |

| No log | 3.0 | 30 | 0.5582 | 0.7483 |

| No log | 4.0 | 40 | 0.5585 | 0.7483 |

| No log | 5.0 | 50 | 0.5598 | 0.7483 |

| No log | 6.0 | 60 | 0.5484 | 0.7483 |

| No log | 7.0 | 70 | 0.5524 | 0.7417 |

| No log | 8.0 | 80 | 0.5538 | 0.7616 |

### Framework versions

- Transformers 4.20.1

- Pytorch 1.11.0

- Datasets 2.1.0

- Tokenizers 0.12.1

|

Azizjah/autotrain-arabic_cuisine-1367052683

|

Azizjah

| 2022-09-06T15:18:01Z | 105 | 0 |

transformers

|

[

"transformers",

"pytorch",

"bert",

"text-classification",

"autotrain",

"ar",

"dataset:Azizjah/autotrain-data-arabic_cuisine",

"co2_eq_emissions",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-09-06T15:14:52Z |

---

tags:

- autotrain

- text-classification

language:

- ar

widget:

- text: "I love AutoTrain 🤗"

datasets:

- Azizjah/autotrain-data-arabic_cuisine

co2_eq_emissions:

emissions: 0.02430968865158923

---

# Model Trained Using AutoTrain

- Problem type: Multi-class Classification

- Model ID: 1367052683

- CO2 Emissions (in grams): 0.0243

## Validation Metrics

- Loss: 2.302

- Accuracy: 0.439

- Macro F1: 0.133

- Micro F1: 0.439

- Weighted F1: 0.391

- Macro Precision: 0.167

- Micro Precision: 0.439

- Weighted Precision: 0.378

- Macro Recall: 0.140

- Micro Recall: 0.439

- Weighted Recall: 0.439
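
Here micro F1 equals accuracy (0.439) while macro F1 is only 0.133. That gap is what one would expect if a few frequent classes are predicted reasonably well and many rare ones are mostly missed, although the card does not report per-class results. The sketch below computes both averages on a made-up, imbalanced example to show the effect.

```python
from collections import Counter

def f1_scores(y_true, y_pred):
    """Return (macro F1, micro F1) for single-label multi-class predictions."""
    labels = sorted(set(y_true) | set(y_pred))
    tp, fp, fn = Counter(), Counter(), Counter()
    for t, p in zip(y_true, y_pred):
        if t == p:
            tp[t] += 1
        else:
            fp[p] += 1  # predicted class gains a false positive
            fn[t] += 1  # true class gains a false negative

    def f1(tp_, fp_, fn_):
        denom = 2 * tp_ + fp_ + fn_
        return 2 * tp_ / denom if denom else 0.0

    macro = sum(f1(tp[l], fp[l], fn[l]) for l in labels) / len(labels)
    micro = f1(sum(tp.values()), sum(fp.values()), sum(fn.values()))
    return macro, micro

# Hypothetical data: the classifier always predicts the majority class "a".
y_true = ["a"] * 8 + ["b", "c"]
y_pred = ["a"] * 10
print(f1_scores(y_true, y_pred))
```

In this toy case micro F1 matches the accuracy of 0.8, while macro F1 drops below 0.3 because classes `b` and `c` each score zero.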

## Usage

You can use cURL to access this model:

```

$ curl -X POST -H "Authorization: Bearer YOUR_API_KEY" -H "Content-Type: application/json" -d '{"inputs": "I love AutoTrain"}' https://api-inference.huggingface.co/models/Azizjah/autotrain-arabic_cuisine-1367052683

```

Or Python API:

```python

from transformers import AutoModelForSequenceClassification, AutoTokenizer

model = AutoModelForSequenceClassification.from_pretrained("Azizjah/autotrain-arabic_cuisine-1367052683", use_auth_token=True)

tokenizer = AutoTokenizer.from_pretrained("Azizjah/autotrain-arabic_cuisine-1367052683", use_auth_token=True)

inputs = tokenizer("I love AutoTrain", return_tensors="pt")

outputs = model(**inputs)

```

|

gaeunseo/bert-base-finetuned-imdb

|

gaeunseo

| 2022-09-06T13:22:45Z | 178 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"bert",

"fill-mask",

"generated_from_trainer",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

fill-mask

| 2022-09-05T07:27:09Z |

---

tags:

- generated_from_trainer

model-index:

- name: bert-base-finetuned-imdb

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# bert-base-finetuned-imdb

This model is a fine-tuned version of [klue/bert-base](https://huggingface.co/klue/bert-base) on an unknown dataset.

It achieves the following results on the evaluation set:

- Loss: 1.7539

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 64

- eval_batch_size: 64

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3.0

- mixed_precision_training: Native AMP
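The `linear` scheduler decays the learning rate from its initial value to zero over the whole run. The sketch below reproduces that arithmetic for this run, assuming no warmup steps (the card lists none) and 48 total optimizer steps, as reported in the training results; `linear_lr` is an illustrative helper, not a library function.

```python
def linear_lr(step, base_lr=2e-05, total_steps=48, warmup_steps=0):
    """Learning rate after `step` optimizer updates under linear warmup and decay."""
    if step < warmup_steps:
        # Linear ramp from 0 up to base_lr during warmup (unused when warmup_steps=0).
        return base_lr * step / max(1, warmup_steps)
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


# Learning rate at the start and at the end of each of the 3 epochs (16 steps each).
for step in (0, 16, 32, 48):
    print(step, linear_lr(step))
```

With these assumptions the rate falls by one third of its initial value per epoch and reaches zero at step 48.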

### Training results

| Training Loss | Epoch | Step | Validation Loss |
|:-------------:|:-----:|:----:|:---------------:|
| 2.9763 | 1.0 | 16 | 1.9655 |
| 2.0231 | 2.0 | 32 | 1.9590 |
| 1.9451 | 3.0 | 48 | 1.8852 |

### Framework versions

- Transformers 4.21.3

- Pytorch 1.12.1+cu113

- Datasets 2.4.0

- Tokenizers 0.12.1

|

Anurag0961/cards-demo-model3

|

Anurag0961

| 2022-09-06T12:33:17Z | 104 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"distilbert",

"text-classification",

"generated_from_trainer",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-09-06T11:20:24Z |

---

license: apache-2.0

tags:

- generated_from_trainer

metrics:

- f1

model-index:

- name: cards-demo-model3

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# cards-demo-model3

This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on an unknown dataset.

It achieves the following results on the evaluation set:

- Loss: 0.9271

- F1: 0.7505

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3.0

- mixed_precision_training: Native AMP

### Training results

| Training Loss | Epoch | Step | Validation Loss | F1 |
|:-------------:|:-----:|:----:|:---------------:|:------:|
| 0.301 | 1.0 | 41 | 0.9127 | 0.7477 |
| 0.318 | 2.0 | 82 | 0.9173 | 0.7574 |
| 0.2757 | 3.0 | 123 | 0.9271 | 0.7505 |
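The card does not name the training dataset, but the step counts bound its size. A rough sketch of that arithmetic follows, assuming a single device, no gradient accumulation, and a final partial batch that is kept rather than dropped; none of these are stated in the card, and `dataset_size_bounds` is a hypothetical helper.

```python
def dataset_size_bounds(steps_per_epoch, batch_size):
    """Smallest and largest example counts that yield `steps_per_epoch` batches."""
    low = (steps_per_epoch - 1) * batch_size + 1  # last batch holds a single example
    high = steps_per_epoch * batch_size           # last batch is full
    return low, high


# 41 steps per epoch at train_batch_size 16, as reported above.
low, high = dataset_size_bounds(steps_per_epoch=41, batch_size=16)
print(f"between {low} and {high} training examples")
```

Under those assumptions the training split holds between 641 and 656 examples, which is small enough that the near-constant validation loss across epochs should be read with caution.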

### Framework versions

- Transformers 4.21.3

- Pytorch 1.12.1+cu113

- Tokenizers 0.12.1

|

burakyldrm/wav2vec2-burak-v2.1

|

burakyldrm

| 2022-09-06T12:33:17Z | 103 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"wav2vec2",

"automatic-speech-recognition",

"generated_from_trainer",

"dataset:common_voice",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] |

automatic-speech-recognition

| 2022-09-06T10:50:11Z |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- common_voice

model-index:

- name: wav2vec2-burak-v2.1

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# wav2vec2-burak-v2.1

This model is a fine-tuned version of [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) on the common_voice dataset.

It achieves the following results on the evaluation set:

- Loss: 0.5006

- Wer: 0.4605
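Wer is the word error rate: the number of word substitutions, deletions, and insertions needed to turn the model transcript into the reference, divided by the number of reference words. A value of 0.4605 therefore means about 46 errors per 100 reference words. The sketch below shows the standard edit-distance definition; it is an illustration with an invented helper name and example sentences, not the evaluation code used for this card.

```python
def word_error_rate(reference, hypothesis):
    """Word-level Levenshtein distance divided by the reference length."""
    ref, hyp = reference.split(), hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] is the edit distance between the ref words seen so far and hyp[:j].
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]  # deleting all i reference words matches an empty hypothesis
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = prev[j] + 1
            insertion = curr[j - 1] + 1
            curr.append(min(substitution, deletion, insertion))
        prev = curr
    return prev[-1] / len(ref)


# Made-up example: one reference word ("the") is missing from the hypothesis.
wer = word_error_rate("the cat sat on the mat", "the cat sat on mat")
print(f"WER = {wer:.3f}")
```

Because insertions count as errors, the rate can exceed 1.0 when the hypothesis is much longer than the reference.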

## Model description