modelId | author | last_modified | downloads | likes | library_name | tags | pipeline_tag | createdAt | card |
|---|---|---|---|---|---|---|---|---|---|

jhakaran1/bert-essay-concat | jhakaran1 | 2022-10-29T00:00:25Z | 156 | 0 | transformers | ["transformers", "pytorch", "tensorboard", "bert", "text-classification", "generated_from_trainer", "license:apache-2.0", "autotrain_compatible", "endpoints_compatible", "region:us"] | text-classification | 2022-10-28T02:20:21Z |

---

license: apache-2.0

tags:

- generated_from_trainer

metrics:

- accuracy

model-index:

- name: bert-essay-concat

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# bert-essay-concat

This model is a fine-tuned version of [bert-base-cased](https://huggingface.co/bert-base-cased) on the None dataset.

It achieves the following results on the evaluation set:

- Loss: 1.0735

- Accuracy: 0.6331

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3.0

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |

|:-------------:|:-----:|:-----:|:---------------:|:--------:|

| 0.7024 | 1.0 | 3677 | 0.9159 | 0.6329 |

| 0.6413 | 2.0 | 7354 | 1.0267 | 0.6346 |

| 0.5793 | 3.0 | 11031 | 1.0735 | 0.6331 |

### Framework versions

- Transformers 4.23.1

- Pytorch 1.12.1+cu113

- Datasets 2.6.1

- Tokenizers 0.13.1

|

hakurei/bloom-1b1-arb-thesis | hakurei | 2022-10-28T22:35:44Z | 7 | 3 | transformers | ["transformers", "pytorch", "bloom", "text-generation", "license:bigscience-bloom-rail-1.0", "autotrain_compatible", "text-generation-inference", "endpoints_compatible", "region:us"] | text-generation | 2022-10-14T16:02:00Z |

---

license: bigscience-bloom-rail-1.0

---

|

christyli/vit-base-beans | christyli | 2022-10-28T21:59:17Z | 32 | 0 | transformers | ["transformers", "pytorch", "vit", "image-classification", "generated_from_trainer", "license:apache-2.0", "autotrain_compatible", "endpoints_compatible", "region:us"] | image-classification | 2022-10-28T21:55:55Z |

---

license: apache-2.0

tags:

- generated_from_trainer

metrics:

- accuracy

model-index:

- name: vit-base-beans

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# vit-base-beans

This model is a fine-tuned version of [google/vit-base-patch16-224-in21k](https://huggingface.co/google/vit-base-patch16-224-in21k) on an unknown dataset.

It achieves the following results on the evaluation set:

- Loss: 0.3930

- Accuracy: 0.9774

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 64

- eval_batch_size: 64

- seed: 1337

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 5.0

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:--------:|

| 1.0349 | 1.0 | 17 | 0.8167 | 0.9323 |

| 0.7502 | 2.0 | 34 | 0.6188 | 0.9699 |

| 0.5508 | 3.0 | 51 | 0.4856 | 0.9774 |

| 0.4956 | 4.0 | 68 | 0.4109 | 0.9774 |

| 0.4261 | 5.0 | 85 | 0.3930 | 0.9774 |

### Framework versions

- Transformers 4.22.0.dev0

- Pytorch 1.12.1+cu102

- Tokenizers 0.12.1

|

sd-concepts-library/urivoldemort | sd-concepts-library | 2022-10-28T20:58:35Z | 0 | 0 | null | ["license:mit", "region:us"] | null | 2022-10-28T19:36:37Z |

---

license: mit

---

### Urivoldemort on Stable Diffusion

Create Uriboldemort images using any context. This was taught to Stable Diffusion via Textual Inversion. Use the `<uriboldemort>` placeholder in the text prompt. You can train your Concept using the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. For inference use this copy of the official [notebook](https://colab.research.google.com/drive/11bIGXVkQJ4bJTSQIjlxDg1OhhX7nDZ01?usp=sharing).

Some outputs:

|

sd-concepts-library/anime-background-style-v2 | sd-concepts-library | 2022-10-28T19:56:39Z | 0 | 24 | null | ["license:mit", "region:us"] | null | 2022-10-28T19:45:11Z |

---

license: mit

---

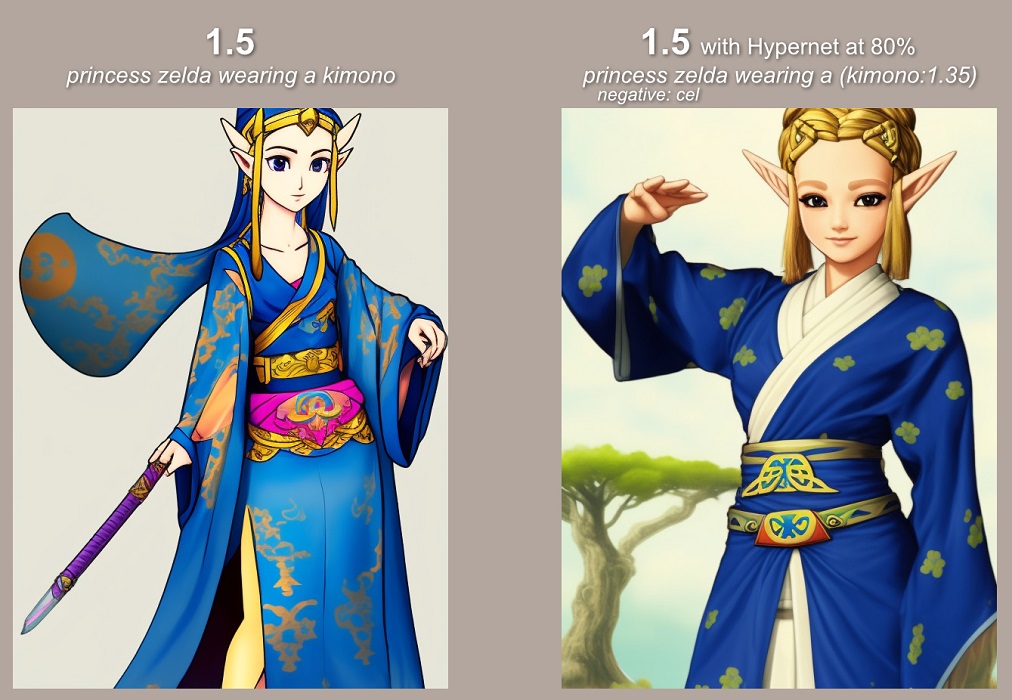

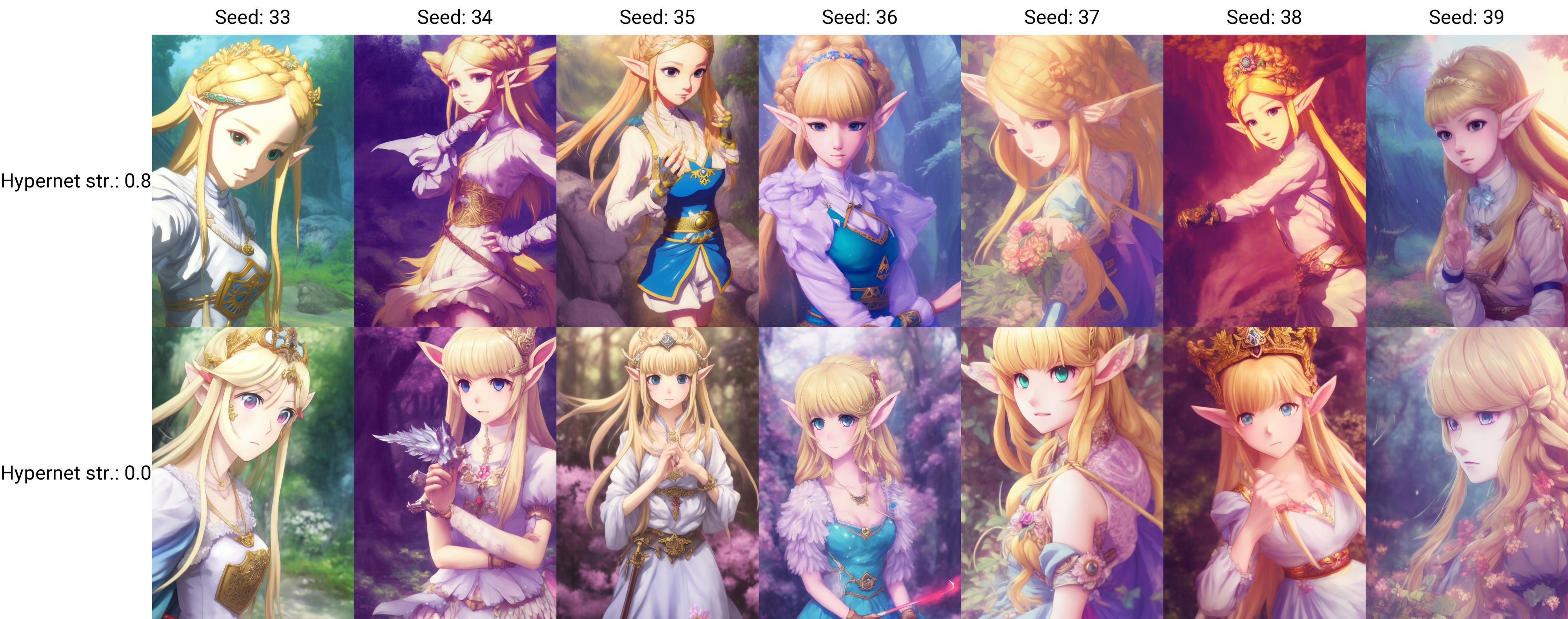

### Anime Background style (v2) on Stable Diffusion

This is the `<anime-background-style-v2>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your own concepts and load them into the concept libraries using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_textual_inversion_training.ipynb).

Here is the new concept you will be able to use as a `style`:

Here are images generated with this style:

|

kyle-lucke/autotrain-planes-1918465011 | kyle-lucke | 2022-10-28T19:42:45Z | 3 | 0 | transformers | ["transformers", "joblib", "autotrain", "tabular", "classification", "tabular-classification", "dataset:kyle-lucke/autotrain-data-planes", "co2_eq_emissions", "endpoints_compatible", "region:us"] | tabular-classification | 2022-10-28T19:42:12Z |

---

tags:

- autotrain

- tabular

- classification

- tabular-classification

datasets:

- kyle-lucke/autotrain-data-planes

co2_eq_emissions:

emissions: 0.19811345350195664

---

# Model Trained Using AutoTrain

- Problem type: Multi-class Classification

- Model ID: 1918465011

- CO2 Emissions (in grams): 0.1981

## Validation Metrics

- Loss: 0.011

- Accuracy: 0.997

- Macro F1: 0.916

- Micro F1: 0.997

- Weighted F1: 0.996

- Macro Precision: 0.999

- Micro Precision: 0.997

- Weighted Precision: 0.997

- Macro Recall: 0.867

- Micro Recall: 0.997

- Weighted Recall: 0.997
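
The gap between Macro F1 (0.916) and Micro F1 (0.997) above is the pattern one would expect when a rare class is predicted less reliably than the frequent ones. The sketch below illustrates the two averages on hypothetical per-class counts. These counts are invented for illustration and are not taken from this model's evaluation.

```python
def f1(tp, fp, fn):
    """F1 score from true-positive, false-positive and false-negative counts."""
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0


# Hypothetical (tp, fp, fn) counts: one frequent class and one rare class
counts = {"common": (990, 2, 1), "rare": (4, 1, 2)}

# Macro F1: compute F1 per class, then take the unweighted mean
macro_f1 = sum(f1(*c) for c in counts.values()) / len(counts)

# Micro F1: pool the counts over all classes, then compute a single F1
tp, fp, fn = (sum(c[i] for c in counts.values()) for i in range(3))
micro_f1 = f1(tp, fp, fn)

print(f"macro F1 = {macro_f1:.3f}, micro F1 = {micro_f1:.3f}")
```

Because the macro average gives every class the same weight, a weak score on a small class pulls it down much more than it pulls down the micro average.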

## Usage

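```python
import json

import joblib
import pandas as pd

# Load the trained model and the feature list stored next to it
model = joblib.load('model.joblib')
with open('config.json') as f:
    config = json.load(f)

features = config['features']

# Replace "data.csv" with the path to your own input file
data = pd.read_csv("data.csv")
data = data[features]
# The model expects column names prefixed with "feat_"
data.columns = ["feat_" + str(col) for col in data.columns]

predictions = model.predict(data)  # or model.predict_proba(data)
```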

|

hsuvaskakoty/bart_def_gen_40k | hsuvaskakoty | 2022-10-28T19:18:37Z | 5 | 0 | transformers | ["transformers", "pytorch", "bart", "text2text-generation", "autotrain_compatible", "endpoints_compatible", "region:us"] | text2text-generation | 2022-10-26T17:53:02Z |

This is a BART model fine-tuned for definition generation. It is still at the prototype stage: it was fine-tuned on only 40k training instances of (definition, context) pairs for 3 epochs. The eval_loss is currently 2.30. The beam size is 4.

|

ViktorDo/SciBERT-POWO_Lifecycle_Finetuned | ViktorDo | 2022-10-28T19:12:38Z | 103 | 0 | transformers | ["transformers", "pytorch", "tensorboard", "bert", "text-classification", "generated_from_trainer", "autotrain_compatible", "endpoints_compatible", "region:us"] | text-classification | 2022-10-28T18:06:36Z |

---

tags:

- generated_from_trainer

model-index:

- name: SciBERT-POWO_Lifecycle_Finetuned

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# SciBERT-POWO_Lifecycle_Finetuned

This model is a fine-tuned version of [allenai/scibert_scivocab_uncased](https://huggingface.co/allenai/scibert_scivocab_uncased) on the None dataset.

It achieves the following results on the evaluation set:

- Loss: 0.0812

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3

- mixed_precision_training: Native AMP

### Training results

| Training Loss | Epoch | Step | Validation Loss |

|:-------------:|:-----:|:----:|:---------------:|

| 0.0899 | 1.0 | 1704 | 0.0795 |

| 0.0845 | 2.0 | 3408 | 0.0836 |

| 0.0684 | 3.0 | 5112 | 0.0812 |

### Framework versions

- Transformers 4.23.1

- Pytorch 1.12.1+cu113

- Datasets 2.6.1

- Tokenizers 0.13.1

|

leslyarun/grammatical-error-correction-quantized | leslyarun | 2022-10-28T17:55:05Z | 14 | 1 | transformers | ["transformers", "onnx", "t5", "text2text-generation", "grammar", "en", "dataset:leslyarun/c4_200m_gec_train100k_test25k", "autotrain_compatible", "endpoints_compatible", "region:us"] | text2text-generation | 2022-10-28T13:10:29Z |

---

language: en

tags:

- grammar

- text2text-generation

datasets:

- leslyarun/c4_200m_gec_train100k_test25k

---

# Get Grammatical corrections on your English text, trained on a subset of c4-200m dataset - ONNX Quantized Model

# Use the below code for running the model

```python
from transformers import AutoTokenizer
from optimum.onnxruntime import ORTModelForSeq2SeqLM
from optimum.pipelines import pipeline

tokenizer = AutoTokenizer.from_pretrained("leslyarun/grammatical-error-correction-quantized")
model = ORTModelForSeq2SeqLM.from_pretrained(
    "leslyarun/grammatical-error-correction-quantized",
    encoder_file_name="encoder_model_quantized.onnx",
    decoder_file_name="decoder_model_quantized.onnx",
    decoder_with_past_file_name="decoder_with_past_model_quantized.onnx",
)

text2text_generator = pipeline("text2text-generation", model=model, tokenizer=tokenizer)

# Example input; replace with your own text
sentence = "She don't like apples."
output = text2text_generator("grammar: " + sentence)
print(output[0]["generated_text"])
```

|

ybelkada/switch-base-8-xsum | ybelkada | 2022-10-28T17:54:45Z | 12 | 3 | transformers | ["transformers", "pytorch", "switch_transformers", "text2text-generation", "en", "dataset:c4", "dataset:xsum", "arxiv:2101.03961", "arxiv:2210.11416", "arxiv:1910.09700", "license:apache-2.0", "autotrain_compatible", "endpoints_compatible", "region:us"] | text2text-generation | 2022-10-28T13:29:07Z |

---

language:

- en

tags:

- text2text-generation

widget:

- text: "summarize: Peter and Elizabeth took a taxi to attend the night party in the city. While in the party, Elizabeth collapsed and was rushed to the hospital. Since she was diagnosed with a brain injury, the doctor told Peter to stay besides her until she gets well. Therefore, Peter stayed with her at the hospital for 3 days without leaving."

example_title: "Summarization"

datasets:

- c4

- xsum

license: apache-2.0

---

# Model Card for Switch Transformers Base - 8 experts

# Table of Contents

0. [TL;DR](#TL;DR)

1. [Model Details](#model-details)

2. [Usage](#usage)

3. [Uses](#uses)

4. [Bias, Risks, and Limitations](#bias-risks-and-limitations)

5. [Training Details](#training-details)

6. [Evaluation](#evaluation)

7. [Environmental Impact](#environmental-impact)

8. [Citation](#citation)

9. [Model Card Authors](#model-card-authors)

# TL;DR

Switch Transformers is a Mixture of Experts (MoE) model trained on a Masked Language Modeling (MLM) task. The model architecture is similar to the classic T5, but with the Feed Forward layers replaced by Sparse MLP layers containing "expert" MLPs. According to the [original paper](https://arxiv.org/pdf/2101.03961.pdf), the model enables faster training (scaling properties) while being better than T5 on fine-tuned tasks.

As mentioned in the first few lines of the abstract:

> we advance the current scale of language models by pre-training up to trillion parameter models on the “Colossal Clean Crawled Corpus”, and achieve a 4x speedup over the T5-XXL model.
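
To make the "Sparse MLP layers containing experts" idea concrete, here is a minimal pure-Python sketch of top-1 ("switch") routing for a single token: a router produces one logit per expert, the token is sent only to the highest-scoring expert, and that expert's output is scaled by the router probability. The function names, the toy experts and the logits are all assumptions made for illustration; this is not the `transformers` implementation.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def switch_route(router_logits):
    """Return (expert_index, gate_probability) for one token (top-1 routing)."""
    probs = softmax(router_logits)
    expert = max(range(len(probs)), key=probs.__getitem__)
    return expert, probs[expert]


def sparse_mlp(token, router_logits, experts):
    """Run only the selected expert and scale its output by the gate value."""
    expert, gate = switch_route(router_logits)
    return [gate * y for y in experts[expert](token)]


# Eight toy "experts" (hypothetical): expert k multiplies its input by k + 1
experts = [lambda x, k=k: [(k + 1) * v for v in x] for k in range(8)]

token = [1.0, 2.0]
router_logits = [0.1, 0.3, 2.0, -1.0, 0.0, 0.2, 0.5, -0.4]  # made-up values

expert, gate = switch_route(router_logits)
output = sparse_mlp(token, router_logits, experts)
print(expert, round(gate, 3), output)
```

Only one expert runs per token, so the compute per token stays roughly constant while the total parameter count grows with the number of experts.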

**Disclaimer**: Content from **this** model card has been written by the Hugging Face team, and parts of it were copied from the [original paper](https://arxiv.org/pdf/2101.03961.pdf).

# Model Details

## Model Description

- **Model type:** Language model

- **Language(s) (NLP):** English

- **License:** Apache 2.0

- **Related Models:** [All Switch Transformers Checkpoints](https://huggingface.co/models?search=switch)

- **Original Checkpoints:** [All Original Switch Transformers Checkpoints](https://github.com/google-research/t5x/blob/main/docs/models.md#mixture-of-experts-moe-checkpoints)

- **Resources for more information:**

  - [Research paper](https://arxiv.org/pdf/2101.03961.pdf)

  - [GitHub Repo](https://github.com/google-research/t5x)

  - [Hugging Face Switch Transformers Docs (Similar to T5)](https://huggingface.co/docs/transformers/model_doc/switch_transformers)

# Usage

Note that these checkpoints have been trained on a Masked Language Modeling (MLM) task. Therefore, the checkpoints are not "ready-to-use" for downstream tasks. You may want to check `FLAN-T5` for running fine-tuned weights, or fine-tune your own MoE following [this notebook](https://colab.research.google.com/drive/1aGGVHZmtKmcNBbAwa9hbu58DDpIuB5O4?usp=sharing).

Find below some example scripts on how to use the model in `transformers`:

## Using the Pytorch model

### Running the model on a CPU

<details>

<summary> Click to expand </summary>

```python
from transformers import AutoTokenizer, SwitchTransformersConditionalGeneration

tokenizer = AutoTokenizer.from_pretrained("google/switch-base-8")
model = SwitchTransformersConditionalGeneration.from_pretrained("google/switch-base-8")

input_text = "A <extra_id_0> walks into a bar a orders a <extra_id_1> with <extra_id_2> pinch of <extra_id_3>."
input_ids = tokenizer(input_text, return_tensors="pt").input_ids

outputs = model.generate(input_ids)
print(tokenizer.decode(outputs[0]))
# >>> <pad> <extra_id_0> man<extra_id_1> beer<extra_id_2> a<extra_id_3> salt<extra_id_4>.</s>
```

</details>
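
The decoded output lists one predicted span after each sentinel token (`<extra_id_0>`, `<extra_id_1>`, ...). As a rough illustration of how to read it, the sketch below substitutes those spans back into the masked input. `fill_sentinels` is a hypothetical helper written for this example, not part of `transformers`, and it assumes the output follows exactly the format shown above.

```python
import re


def fill_sentinels(input_text, generated):
    """Substitute the spans predicted after each sentinel back into the input."""
    # Drop the special tokens that wrap the decoded sequence
    generated = generated.replace("<pad>", "").replace("</s>", "")
    # Splitting on a capturing group gives [prefix, sentinel, span, sentinel, span, ...]
    parts = re.split(r"(<extra_id_\d+>)", generated)
    spans = {parts[i]: parts[i + 1].strip() for i in range(1, len(parts) - 1, 2)}
    # Sentinels without a predicted span are left untouched
    return re.sub(r"<extra_id_\d+>", lambda m: spans.get(m.group(0), m.group(0)), input_text)


input_text = "A <extra_id_0> walks into a bar a orders a <extra_id_1> with <extra_id_2> pinch of <extra_id_3>."
generated = "<pad> <extra_id_0> man<extra_id_1> beer<extra_id_2> a<extra_id_3> salt<extra_id_4>.</s>"

filled = fill_sentinels(input_text, generated)
print(filled)
```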

### Running the model on a GPU

<details>

<summary> Click to expand </summary>

```python
# pip install accelerate
from transformers import AutoTokenizer, SwitchTransformersConditionalGeneration

tokenizer = AutoTokenizer.from_pretrained("google/switch-base-8")
model = SwitchTransformersConditionalGeneration.from_pretrained("google/switch-base-8", device_map="auto")

input_text = "A <extra_id_0> walks into a bar a orders a <extra_id_1> with <extra_id_2> pinch of <extra_id_3>."
input_ids = tokenizer(input_text, return_tensors="pt").input_ids.to(0)

outputs = model.generate(input_ids)
print(tokenizer.decode(outputs[0]))
# >>> <pad> <extra_id_0> man<extra_id_1> beer<extra_id_2> a<extra_id_3> salt<extra_id_4>.</s>
```

</details>

### Running the model on a GPU using different precisions

#### FP16

<details>

<summary> Click to expand </summary>

```python
# pip install accelerate
import torch  # needed for torch.float16 below
from transformers import AutoTokenizer, SwitchTransformersConditionalGeneration

tokenizer = AutoTokenizer.from_pretrained("google/switch-base-8")
model = SwitchTransformersConditionalGeneration.from_pretrained("google/switch-base-8", device_map="auto", torch_dtype=torch.float16)

input_text = "A <extra_id_0> walks into a bar a orders a <extra_id_1> with <extra_id_2> pinch of <extra_id_3>."
input_ids = tokenizer(input_text, return_tensors="pt").input_ids.to(0)

outputs = model.generate(input_ids)
print(tokenizer.decode(outputs[0]))
# >>> <pad> <extra_id_0> man<extra_id_1> beer<extra_id_2> a<extra_id_3> salt<extra_id_4>.</s>
```

</details>

#### INT8

<details>

<summary> Click to expand </summary>

```python
# pip install bitsandbytes accelerate
from transformers import AutoTokenizer, SwitchTransformersConditionalGeneration

tokenizer = AutoTokenizer.from_pretrained("google/switch-base-8")
# load_in_8bit=True requests INT8 weights via bitsandbytes; without it this call
# is identical to the plain GPU example. Newer transformers releases prefer
# passing a BitsAndBytesConfig through quantization_config instead.
model = SwitchTransformersConditionalGeneration.from_pretrained("google/switch-base-8", device_map="auto", load_in_8bit=True)

input_text = "A <extra_id_0> walks into a bar a orders a <extra_id_1> with <extra_id_2> pinch of <extra_id_3>."
input_ids = tokenizer(input_text, return_tensors="pt").input_ids.to(0)

outputs = model.generate(input_ids)
print(tokenizer.decode(outputs[0]))
# >>> <pad> <extra_id_0> man<extra_id_1> beer<extra_id_2> a<extra_id_3> salt<extra_id_4>.</s>
```

</details>

# Uses

## Direct Use and Downstream Use

The authors write in [the original paper's model card](https://arxiv.org/pdf/2210.11416.pdf) that:

> The primary use is research on language models, including: research on zero-shot NLP tasks and in-context few-shot learning NLP tasks, such as reasoning, and question answering; advancing fairness and safety research, and understanding limitations of current large language models

See the [research paper](https://arxiv.org/pdf/2210.11416.pdf) for further details.

## Out-of-Scope Use

More information needed.

# Bias, Risks, and Limitations

More information needed.

## Ethical considerations and risks

More information needed.

## Known Limitations

More information needed.

## Sensitive Use:

> SwitchTransformers should not be applied for any unacceptable use cases, e.g., generation of abusive speech.

# Training Details

## Training Data

The model was trained on a Masked Language Modeling task, on the Colossal Clean Crawled Corpus (C4) dataset, following the same procedure as `T5`.

## Training Procedure

According to the model card from the [original paper](https://arxiv.org/pdf/2210.11416.pdf):

> These models are based on pretrained SwitchTransformers and are not fine-tuned. It is normal if they perform well on zero-shot tasks.

The model has been trained on TPU v3 or TPU v4 pods, using the [`t5x`](https://github.com/google-research/t5x) codebase together with [`jax`](https://github.com/google/jax).

# Evaluation

## Testing Data, Factors & Metrics

The authors evaluated the model on various tasks and compared the results against T5. See the table below for some quantitative evaluation:

For full details, please check the [research paper](https://arxiv.org/pdf/2101.03961.pdf).

## Results

For full results for Switch Transformers, see the [research paper](https://arxiv.org/pdf/2101.03961.pdf), Table 5.

# Environmental Impact

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).

- **Hardware Type:** Google Cloud TPU Pods - TPU v3 or TPU v4 | Number of chips ≥ 4.

- **Hours used:** More information needed

- **Cloud Provider:** GCP

- **Compute Region:** More information needed

- **Carbon Emitted:** More information needed

# Citation

**BibTeX:**

```bibtex

@misc{https://doi.org/10.48550/arxiv.2101.03961,

doi = {10.48550/ARXIV.2101.03961},

url = {https://arxiv.org/abs/2101.03961},

author = {Fedus, William and Zoph, Barret and Shazeer, Noam},

keywords = {Machine Learning (cs.LG), Artificial Intelligence (cs.AI), FOS: Computer and information sciences, FOS: Computer and information sciences},

title = {Switch Transformers: Scaling to Trillion Parameter Models with Simple and Efficient Sparsity},

publisher = {arXiv},

year = {2021},

copyright = {arXiv.org perpetual, non-exclusive license}

}

```

|

ivanzidov/setfit-occupation | ivanzidov | 2022-10-28T17:48:19Z | 2 | 0 | sentence-transformers | ["sentence-transformers", "pytorch", "mpnet", "feature-extraction", "sentence-similarity", "transformers", "autotrain_compatible", "endpoints_compatible", "region:us"] | sentence-similarity | 2022-10-28T11:39:19Z |

---

pipeline_tag: sentence-similarity

tags:

- sentence-transformers

- feature-extraction

- sentence-similarity

- transformers

---

# {MODEL_NAME}

This is a [sentence-transformers](https://www.SBERT.net) model: It maps sentences & paragraphs to a 768 dimensional dense vector space and can be used for tasks like clustering or semantic search.

<!--- Describe your model here -->

## Usage (Sentence-Transformers)

Using this model becomes easy when you have [sentence-transformers](https://www.SBERT.net) installed:

```

pip install -U sentence-transformers

```

Then you can use the model like this:

```python

from sentence_transformers import SentenceTransformer

sentences = ["This is an example sentence", "Each sentence is converted"]

model = SentenceTransformer('{MODEL_NAME}')

embeddings = model.encode(sentences)

print(embeddings)

```

## Usage (HuggingFace Transformers)

Without [sentence-transformers](https://www.SBERT.net), you can use the model like this: First, you pass your input through the transformer model, then you have to apply the right pooling-operation on-top of the contextualized word embeddings.

```python
from transformers import AutoTokenizer, AutoModel
import torch


# Mean Pooling - Take attention mask into account for correct averaging
def mean_pooling(model_output, attention_mask):
    token_embeddings = model_output[0]  # First element of model_output contains all token embeddings
    input_mask_expanded = attention_mask.unsqueeze(-1).expand(token_embeddings.size()).float()
    return torch.sum(token_embeddings * input_mask_expanded, 1) / torch.clamp(input_mask_expanded.sum(1), min=1e-9)


# Sentences we want sentence embeddings for
sentences = ['This is an example sentence', 'Each sentence is converted']

# Load model from HuggingFace Hub
tokenizer = AutoTokenizer.from_pretrained('{MODEL_NAME}')
model = AutoModel.from_pretrained('{MODEL_NAME}')

# Tokenize sentences
encoded_input = tokenizer(sentences, padding=True, truncation=True, return_tensors='pt')

# Compute token embeddings
with torch.no_grad():
    model_output = model(**encoded_input)

# Perform pooling. In this case, mean pooling.
sentence_embeddings = mean_pooling(model_output, encoded_input['attention_mask'])

print("Sentence embeddings:")
print(sentence_embeddings)
```

## Evaluation Results

<!--- Describe how your model was evaluated -->

For an automated evaluation of this model, see the *Sentence Embeddings Benchmark*: [https://seb.sbert.net](https://seb.sbert.net?model_name={MODEL_NAME})

## Training

The model was trained with the parameters:

**DataLoader**:

`torch.utils.data.dataloader.DataLoader` of length 125000 with parameters:

```

{'batch_size': 16, 'sampler': 'torch.utils.data.sampler.RandomSampler', 'batch_sampler': 'torch.utils.data.sampler.BatchSampler'}

```

**Loss**:

`sentence_transformers.losses.CosineSimilarityLoss.CosineSimilarityLoss`
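
As a rough illustration of what this loss optimizes: for each sentence pair, the cosine similarity of the two embeddings is compared with a target similarity label, and the squared error is averaged over the batch. The pure-Python sketch below assumes the default mean-squared-error formulation. The toy vectors and labels are made up, and the real loss operates on batched tensors produced by the model.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def cosine_similarity_loss(pairs, labels):
    """Mean squared error between cos(u, v) and the target similarity label."""
    errors = [(cosine_similarity(u, v) - y) ** 2 for (u, v), y in zip(pairs, labels)]
    return sum(errors) / len(errors)


# Toy embedding pairs (hypothetical): identical vectors and orthogonal vectors
pairs = [([1.0, 0.0], [1.0, 0.0]), ([1.0, 0.0], [0.0, 1.0])]
labels = [1.0, 0.0]  # target similarities that match the geometry exactly

loss = cosine_similarity_loss(pairs, labels)
print(loss)
```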

Parameters of the fit()-Method:

```

{
    "epochs": 1,
    "evaluation_steps": 0,
    "evaluator": "NoneType",
    "max_grad_norm": 1,
    "optimizer_class": "<class 'torch.optim.adamw.AdamW'>",
    "optimizer_params": {
        "lr": 2e-05
    },
    "scheduler": "WarmupLinear",
    "steps_per_epoch": 125000,
    "warmup_steps": 12500,
    "weight_decay": 0.01
}

```
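
With the `WarmupLinear` scheduler and the parameters above, the learning rate would be expected to rise linearly from 0 to `2e-05` over the first 12,500 steps and then decay linearly to 0 at step 125,000 (1 epoch × 125,000 steps). The sketch below restates that schedule in plain Python. `warmup_linear_lr` is a hypothetical helper written for this example, not the library's scheduler object.

```python
def warmup_linear_lr(step, base_lr=2e-05, warmup_steps=12500, total_steps=125000):
    """Learning rate at a given optimizer step: linear warmup, then linear decay."""
    if step < warmup_steps:
        return base_lr * step / warmup_steps
    return base_lr * max(0.0, (total_steps - step) / (total_steps - warmup_steps))


# Print the rate at a few points along the run
for step in (0, 6250, 12500, 68750, 125000):
    print(step, warmup_linear_lr(step))
```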

## Full Model Architecture

```

SentenceTransformer(

(0): Transformer({'max_seq_length': 512, 'do_lower_case': False}) with Transformer model: MPNetModel

(1): Pooling({'word_embedding_dimension': 768, 'pooling_mode_cls_token': False, 'pooling_mode_mean_tokens': True, 'pooling_mode_max_tokens': False, 'pooling_mode_mean_sqrt_len_tokens': False})

)

```

## Citing & Authors

<!--- Describe where people can find more information -->

|

leo93/all-15-bert-finetuned-ner | leo93 | 2022-10-28T17:47:37Z | 12 | 0 | transformers | ["transformers", "pytorch", "tensorboard", "bert", "token-classification", "generated_from_trainer", "license:apache-2.0", "autotrain_compatible", "endpoints_compatible", "region:us"] | token-classification | 2022-10-28T01:42:31Z |

---

license: apache-2.0

tags:

- generated_from_trainer

metrics:

- precision

- recall

- f1

- accuracy

model-index:

- name: all-15-bert-finetuned-ner

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# all-15-bert-finetuned-ner

This model is a fine-tuned version of [bert-base-cased](https://huggingface.co/bert-base-cased) on the None dataset.

It achieves the following results on the evaluation set:

- Loss: 0.0081

- Precision: 0.9630

- Recall: 0.9661

- F1: 0.9646

- Accuracy: 0.9987
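
The F1 value above is the harmonic mean of precision and recall. A quick check using the rounded figures from the list (so the result should agree with the reported F1 only to about three decimal places):

```python
precision, recall = 0.9630, 0.9661

# Harmonic mean of precision and recall
f1 = 2 * precision * recall / (precision + recall)
print(f"F1 = {f1:.4f}")
```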

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 15

### Training results

| Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy |

|:-------------:|:-----:|:------:|:---------------:|:---------:|:------:|:------:|:--------:|

| 0.014 | 1.0 | 6693 | 0.0080 | 0.9048 | 0.9363 | 0.9203 | 0.9976 |

| 0.007 | 2.0 | 13386 | 0.0070 | 0.9116 | 0.9459 | 0.9284 | 0.9976 |

| 0.0034 | 3.0 | 20079 | 0.0050 | 0.9514 | 0.9529 | 0.9522 | 0.9985 |

| 0.0027 | 4.0 | 26772 | 0.0065 | 0.9360 | 0.9618 | 0.9487 | 0.9982 |

| 0.002 | 5.0 | 33465 | 0.0062 | 0.9485 | 0.9555 | 0.9520 | 0.9984 |

| 0.0008 | 6.0 | 40158 | 0.0069 | 0.9498 | 0.9468 | 0.9483 | 0.9983 |

| 0.0013 | 7.0 | 46851 | 0.0059 | 0.9591 | 0.9618 | 0.9605 | 0.9987 |

| 0.0007 | 8.0 | 53544 | 0.0072 | 0.9635 | 0.9594 | 0.9614 | 0.9986 |

| 0.0003 | 9.0 | 60237 | 0.0076 | 0.9656 | 0.9621 | 0.9638 | 0.9987 |

| 0.0006 | 10.0 | 66930 | 0.0080 | 0.9598 | 0.9625 | 0.9611 | 0.9986 |

| 0.0007 | 11.0 | 73623 | 0.0072 | 0.9584 | 0.9651 | 0.9618 | 0.9986 |

| 0.0 | 12.0 | 80316 | 0.0073 | 0.9606 | 0.9658 | 0.9632 | 0.9987 |

| 0.0001 | 13.0 | 87009 | 0.0072 | 0.9649 | 0.9636 | 0.9642 | 0.9987 |

| 0.0 | 14.0 | 93702 | 0.0078 | 0.9629 | 0.9665 | 0.9647 | 0.9987 |

| 0.0 | 15.0 | 100395 | 0.0081 | 0.9630 | 0.9661 | 0.9646 | 0.9987 |

### Framework versions

- Transformers 4.23.1

- Pytorch 1.12.1+cu113

- Datasets 2.6.1

- Tokenizers 0.13.1

|

yubol/all-15-bert-finetuned-ner | yubol | 2022-10-28T17:47:37Z | 14 | 0 | transformers | ["transformers", "pytorch", "tensorboard", "bert", "token-classification", "generated_from_trainer", "license:apache-2.0", "autotrain_compatible", "endpoints_compatible", "region:us"] | token-classification | 2022-10-28T01:42:31Z |

---

license: apache-2.0

tags:

- generated_from_trainer

metrics:

- precision

- recall

- f1

- accuracy

model-index:

- name: all-15-bert-finetuned-ner

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# all-15-bert-finetuned-ner

This model is a fine-tuned version of [bert-base-cased](https://huggingface.co/bert-base-cased) on the None dataset.

It achieves the following results on the evaluation set:

- Loss: 0.0081

- Precision: 0.9630

- Recall: 0.9661

- F1: 0.9646

- Accuracy: 0.9987

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 15

### Training results

| Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy |

|:-------------:|:-----:|:------:|:---------------:|:---------:|:------:|:------:|:--------:|

| 0.014 | 1.0 | 6693 | 0.0080 | 0.9048 | 0.9363 | 0.9203 | 0.9976 |

| 0.007 | 2.0 | 13386 | 0.0070 | 0.9116 | 0.9459 | 0.9284 | 0.9976 |

| 0.0034 | 3.0 | 20079 | 0.0050 | 0.9514 | 0.9529 | 0.9522 | 0.9985 |

| 0.0027 | 4.0 | 26772 | 0.0065 | 0.9360 | 0.9618 | 0.9487 | 0.9982 |

| 0.002 | 5.0 | 33465 | 0.0062 | 0.9485 | 0.9555 | 0.9520 | 0.9984 |

| 0.0008 | 6.0 | 40158 | 0.0069 | 0.9498 | 0.9468 | 0.9483 | 0.9983 |

| 0.0013 | 7.0 | 46851 | 0.0059 | 0.9591 | 0.9618 | 0.9605 | 0.9987 |

| 0.0007 | 8.0 | 53544 | 0.0072 | 0.9635 | 0.9594 | 0.9614 | 0.9986 |

| 0.0003 | 9.0 | 60237 | 0.0076 | 0.9656 | 0.9621 | 0.9638 | 0.9987 |

| 0.0006 | 10.0 | 66930 | 0.0080 | 0.9598 | 0.9625 | 0.9611 | 0.9986 |

| 0.0007 | 11.0 | 73623 | 0.0072 | 0.9584 | 0.9651 | 0.9618 | 0.9986 |

| 0.0 | 12.0 | 80316 | 0.0073 | 0.9606 | 0.9658 | 0.9632 | 0.9987 |

| 0.0001 | 13.0 | 87009 | 0.0072 | 0.9649 | 0.9636 | 0.9642 | 0.9987 |

| 0.0 | 14.0 | 93702 | 0.0078 | 0.9629 | 0.9665 | 0.9647 | 0.9987 |

| 0.0 | 15.0 | 100395 | 0.0081 | 0.9630 | 0.9661 | 0.9646 | 0.9987 |

### Framework versions

- Transformers 4.23.1

- Pytorch 1.12.1+cu113

- Datasets 2.6.1

- Tokenizers 0.13.1

|

Arklyn/fine-tune-Wav2Vec2-XLS-R-300M-Indonesia-test | Arklyn | 2022-10-28T16:22:36Z | 25 | 0 | transformers | ["transformers", "pytorch", "tensorboard", "wav2vec2", "automatic-speech-recognition", "generated_from_trainer", "dataset:common_voice_10_0", "license:apache-2.0", "endpoints_compatible", "region:us"] | automatic-speech-recognition | 2022-10-07T04:10:26Z |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- common_voice_10_0

model-index:

- name: fine-tune-Wav2Vec2-XLS-R-300M-Indonesia-test

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# fine-tune-Wav2Vec2-XLS-R-300M-Indonesia-test

This model is a fine-tuned version of [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) on the common_voice_10_0 dataset.

It achieves the following results on the evaluation set:

- Loss: 0.3076

- Wer: 0.2971
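
Wer is the word error rate: the number of word substitutions, deletions and insertions needed to turn the model transcript into the reference, divided by the number of reference words. The sketch below is a minimal pure-Python version based on word-level edit distance. It is only an illustration: the example sentences are made up, and the evaluation script behind the number above may normalize text differently.

```python
def wer(reference, hypothesis):
    """Word error rate; assumes the reference contains at least one word."""
    ref, hyp = reference.split(), hypothesis.split()
    # prev[j] holds the edit distance between the processed reference prefix and hyp[:j]
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i]
        for j, h in enumerate(hyp, start=1):
            cost = 0 if r == h else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1] / len(ref)


# One reference word is missing from the hypothesis: 1 error over 4 words
print(wer("the cat sat down", "the cat down"))
```

A Wer of 1.0, as in the first row of the training results table below, means the number of errors equals the number of reference words.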

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0001

- train_batch_size: 7

- eval_batch_size: 8

- seed: 42

- gradient_accumulation_steps: 2

- total_train_batch_size: 14

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_steps: 500

- num_epochs: 60

- mixed_precision_training: Native AMP

### Training results

| Training Loss | Epoch | Step | Validation Loss | Wer |

|:-------------:|:-----:|:----:|:---------------:|:------:|

| 2.9436 | 9.99 | 570 | 2.7467 | 1.0 |

| 1.0498 | 19.99 | 1140 | 0.3630 | 0.3965 |

| 0.6789 | 29.99 | 1710 | 0.3396 | 0.3712 |

| 0.5259 | 39.99 | 2280 | 0.3204 | 0.3241 |

| 0.4701 | 49.99 | 2850 | 0.3118 | 0.3005 |

| 0.4248 | 59.99 | 3420 | 0.3076 | 0.2971 |

### Framework versions

- Transformers 4.23.1

- Pytorch 1.10.0+cu113

- Datasets 2.6.1

- Tokenizers 0.13.1

|

tlttl/tluo_xml_roberta_base_amazon_review_sentiment

|

tlttl

| 2022-10-28T15:51:48Z | 7 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"xlm-roberta",

"text-classification",

"generated_from_trainer",

"license:mit",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-10-28T07:26:12Z |

---

license: mit

tags:

- generated_from_trainer

metrics:

- accuracy

model-index:

- name: tluo_xml_roberta_base_amazon_review_sentiment

  results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# tluo_xml_roberta_base_amazon_review_sentiment

This model is a fine-tuned version of [xlm-roberta-base](https://huggingface.co/xlm-roberta-base) on an unknown dataset.

It achieves the following results on the evaluation set:

- Loss: 0.9552

- Accuracy: 0.6003

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 123

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3

- mixed_precision_training: Native AMP

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |

|:-------------:|:-----:|:-----:|:---------------:|:--------:|

| 0.5664 | 0.33 | 5000 | 1.3816 | 0.5688 |

| 0.9494 | 0.67 | 10000 | 0.9702 | 0.5852 |

| 0.9613 | 1.0 | 15000 | 0.9545 | 0.5917 |

| 0.8611 | 1.33 | 20000 | 0.9689 | 0.5953 |

| 0.8636 | 1.67 | 25000 | 0.9556 | 0.5943 |

| 0.8582 | 2.0 | 30000 | 0.9552 | 0.6003 |

| 0.7555 | 2.33 | 35000 | 1.0001 | 0.5928 |

| 0.7374 | 2.67 | 40000 | 1.0037 | 0.594 |

| 0.733 | 3.0 | 45000 | 0.9976 | 0.5983 |

### Framework versions

- Transformers 4.23.1

- Pytorch 1.12.1+cu113

- Datasets 2.6.1

- Tokenizers 0.13.1

|

PabloZubeldia/distilbert-base-uncased-finetuned-tweets

|

PabloZubeldia

| 2022-10-28T15:33:38Z | 105 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"distilbert",

"text-classification",

"generated_from_trainer",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-10-27T21:15:08Z |

---

license: apache-2.0

tags:

- generated_from_trainer

metrics:

- accuracy

- f1

model-index:

- name: distilbert-base-uncased-finetuned-tweets

  results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# distilbert-base-uncased-finetuned-tweets

This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on an unknown dataset.

It achieves the following results on the evaluation set:

- Loss: 0.2703

- Accuracy: 0.9068

- F1: 0.9081

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 64

- eval_batch_size: 64

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 5

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 |

|:-------------:|:-----:|:----:|:---------------:|:--------:|:------:|

| 0.3212 | 1.0 | 143 | 0.2487 | 0.8989 | 0.8991 |

| 0.2031 | 2.0 | 286 | 0.2268 | 0.9077 | 0.9074 |

| 0.1474 | 3.0 | 429 | 0.2385 | 0.9094 | 0.9107 |

| 0.1061 | 4.0 | 572 | 0.2516 | 0.9103 | 0.9111 |

| 0.0804 | 5.0 | 715 | 0.2703 | 0.9068 | 0.9081 |

### Framework versions

- Transformers 4.23.1

- Pytorch 1.12.1+cu113

- Datasets 2.6.1

- Tokenizers 0.13.1

|

ajankelo/pklot_small_model

|

ajankelo

| 2022-10-28T14:32:23Z | 0 | 0 | null |

[

"PyTorch",

"vfnet",

"icevision",

"en",

"license:mit",

"region:us"

] | null | 2022-10-27T21:11:41Z |

---

language: en

license: mit

tags:

- PyTorch

- vfnet

- icevision

---

# Small PKLot

This model is trained on a subset of the PKLot dataset (first introduced in [this paper](https://www.inf.ufpr.br/lesoliveira/download/ESWA2015.pdf)). The subset comprises 50 fully annotated training images.

## Citation for original dataset

Almeida, P., Oliveira, L. S., Silva Jr, E., Britto Jr, A., Koerich, A., PKLot – A robust dataset for parking lot classification, Expert Systems with Applications, 42(11):4937-4949, 2015.

|

alefarasin/ppo-CartPole-v1

|

alefarasin

| 2022-10-28T13:06:55Z | 0 | 0 | null |

[

"tensorboard",

"CartPole-v1",

"ppo",

"deep-reinforcement-learning",

"reinforcement-learning",

"custom-implementation",

"deep-rl-class",

"model-index",

"region:us"

] |

reinforcement-learning

| 2022-10-28T13:06:49Z |

---

tags:

- CartPole-v1

- ppo

- deep-reinforcement-learning

- reinforcement-learning

- custom-implementation

- deep-rl-class

model-index:
- name: PPO
  results:
  - task:
      type: reinforcement-learning
      name: reinforcement-learning
    dataset:
      name: CartPole-v1
      type: CartPole-v1
    metrics:
    - type: mean_reward
      value: 155.80 +/- 45.55
      name: mean_reward
      verified: false

---

# PPO Agent Playing CartPole-v1

This is a trained model of a PPO agent playing CartPole-v1.

To learn to code your own PPO agent and train it, check Unit 8 of the Deep Reinforcement Learning Class: https://github.com/huggingface/deep-rl-class/tree/main/unit8

# Hyperparameters

```python

{'exp_name': 'dummy_name',
 'f': '/root/.local/share/jupyter/runtime/kernel-e1e9a3a5-8345-4438-b691-f71df9c2a28b.json',
 'seed': 1,
 'torch_deterministic': True,
 'cuda': True,
 'track': False,
 'wandb_project_name': 'cleanRL',
 'wandb_entity': None,
 'capture_video': False,
 'env_id': 'CartPole-v1',
 'total_timesteps': 50000,
 'learning_rate': 0.00025,
 'num_envs': 4,
 'num_steps': 128,
 'anneal_lr': True,
 'gae': True,
 'gamma': 0.99,
 'gae_lambda': 0.95,
 'num_minibatches': 4,
 'update_epochs': 4,
 'norm_adv': True,
 'clip_coef': 0.2,
 'clip_vloss': True,
 'ent_coef': 0.01,
 'vf_coef': 0.5,
 'max_grad_norm': 0.5,
 'target_kl': None,
 'repo_id': 'alefarasin/ppo-CartPole-v1',
 'batch_size': 512,
 'minibatch_size': 128}

```
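The `batch_size` and `minibatch_size` entries at the end of the dictionary are not independent settings. The sketch below assumes the usual convention in single-file PPO scripts, where the rollout batch is `num_envs * num_steps` and the learning rate is annealed linearly per update; the helper `annealed_lr` is a hypothetical name, and the exact formulas in the training script may differ.

```python
# Values copied from the hyperparameter dictionary above.
num_envs = 4
num_steps = 128
num_minibatches = 4
total_timesteps = 50000
learning_rate = 0.00025

# One rollout collects num_steps transitions from each environment.
batch_size = num_envs * num_steps
# Each update epoch splits the rollout into equally sized minibatches.
minibatch_size = batch_size // num_minibatches
# Number of rollout/update cycles that fit into the timestep budget.
num_updates = total_timesteps // batch_size


def annealed_lr(update: int) -> float:
    """Linearly annealed learning rate for a 1-based update index (assumed form)."""
    fraction = 1.0 - (update - 1) / num_updates
    return fraction * learning_rate


print(batch_size, minibatch_size, num_updates)
```

The derived values match the 512 and 128 reported in the dictionary, which suggests the card was produced with this convention.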

|

gokul-g-menon/xls-r_fine_tuned

|

gokul-g-menon

| 2022-10-28T13:01:13Z | 74 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"wav2vec2",

"automatic-speech-recognition",

"generated_from_trainer",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] |

automatic-speech-recognition

| 2022-10-26T16:47:44Z |

---

license: apache-2.0

tags:

- generated_from_trainer

model-index:

- name: xls-r_fine_tuned

  results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# xls-r_fine_tuned

This model is a fine-tuned version of [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) on an unknown dataset.

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0003

- train_batch_size: 4

- eval_batch_size: 8

- seed: 42

- gradient_accumulation_steps: 2

- total_train_batch_size: 8

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_steps: 500

- num_epochs: 1

- mixed_precision_training: Native AMP

### Training results

### Framework versions

- Transformers 4.23.1

- Pytorch 1.12.1+cu113

- Datasets 2.6.1

- Tokenizers 0.13.1

|

Rocketknight1/temp_upload_test

|

Rocketknight1

| 2022-10-28T12:29:16Z | 61 | 0 |

transformers

|

[

"transformers",

"tf",

"distilbert",

"text-classification",

"generated_from_keras_callback",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-10-28T12:28:55Z |

---

license: apache-2.0

tags:

- generated_from_keras_callback

model-index:

- name: Rocketknight1/temp_upload_test

  results: []

---

<!-- This model card has been generated automatically according to the information Keras had access to. You should

probably proofread and complete it, then remove this comment. -->

# Rocketknight1/temp_upload_test

This model is a fine-tuned version of [distilbert-base-cased](https://huggingface.co/distilbert-base-cased) on an unknown dataset.

It achieves the following results on the evaluation set:

- Train Loss: 0.6858

- Epoch: 0

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- optimizer: {'name': 'Adam', 'learning_rate': 0.001, 'decay': 0.0, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-07, 'amsgrad': False}

- training_precision: float32

### Training results

| Train Loss | Epoch |

|:----------:|:-----:|

| 0.6858 | 0 |

### Framework versions

- Transformers 4.24.0.dev0

- TensorFlow 2.10.0

- Datasets 2.6.1

- Tokenizers 0.11.0

|

ayushtiwari/bert-finetuned-ner

|

ayushtiwari

| 2022-10-28T12:28:11Z | 11 | 0 |

transformers

|

[

"transformers",

"tf",

"bert",

"token-classification",

"generated_from_keras_callback",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

token-classification

| 2022-10-27T20:58:57Z |

---

license: apache-2.0

tags:

- generated_from_keras_callback

model-index:

- name: ayushtiwari/bert-finetuned-ner

  results: []

---

<!-- This model card has been generated automatically according to the information Keras had access to. You should

probably proofread and complete it, then remove this comment. -->

# ayushtiwari/bert-finetuned-ner

This model is a fine-tuned version of [bert-base-cased](https://huggingface.co/bert-base-cased) on an unknown dataset.

It achieves the following results on the evaluation set:

- Train Loss: 0.0271

- Validation Loss: 0.0549

- Epoch: 2

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- optimizer: {'name': 'AdamWeightDecay', 'learning_rate': {'class_name': 'PolynomialDecay', 'config': {'initial_learning_rate': 2e-05, 'decay_steps': 2634, 'end_learning_rate': 0.0, 'power': 1.0, 'cycle': False, 'name': None}}, 'decay': 0.0, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False, 'weight_decay_rate': 0.01}

- training_precision: mixed_float16
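The optimizer entry above nests a `PolynomialDecay` learning-rate schedule. As a rough illustration, the sketch below reproduces the documented non-cycling formula for that schedule with the values from the card (`power` of 1.0 makes it a straight line from 2e-05 to 0 over 2634 steps). The function name `polynomial_decay` is hypothetical; this is plain Python, not the Keras class itself.

```python
def polynomial_decay(step: int,
                     initial_lr: float = 2e-5,
                     decay_steps: int = 2634,
                     end_lr: float = 0.0,
                     power: float = 1.0) -> float:
    """Learning rate at a given step for a non-cycling polynomial decay."""
    step = min(step, decay_steps)  # hold at end_lr once decay_steps is reached
    fraction_remaining = 1.0 - step / decay_steps
    return (initial_lr - end_lr) * fraction_remaining ** power + end_lr


for step in (0, 1317, 2634):
    print(step, polynomial_decay(step))
```

This is independent of the `weight_decay_rate` of 0.01, which is a separate regularization term of the `AdamWeightDecay` optimizer.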

### Training results

| Train Loss | Validation Loss | Epoch |

|:----------:|:---------------:|:-----:|

| 0.1723 | 0.0643 | 0 |

| 0.0465 | 0.0564 | 1 |

| 0.0271 | 0.0549 | 2 |

### Framework versions

- Transformers 4.23.1

- TensorFlow 2.10.0

- Datasets 2.6.1

- Tokenizers 0.13.1

|

teacookies/autotrain-28102022-cert2-1916264970

|

teacookies

| 2022-10-28T12:26:46Z | 13 | 0 |

transformers

|

[

"transformers",

"pytorch",

"autotrain",

"token-classification",

"unk",

"dataset:teacookies/autotrain-data-28102022-cert2",

"co2_eq_emissions",

"endpoints_compatible",

"region:us"

] |

token-classification

| 2022-10-28T12:15:55Z |

---

tags:

- autotrain

- token-classification

language:

- unk

widget:

- text: "I love AutoTrain 🤗"

datasets:

- teacookies/autotrain-data-28102022-cert2

co2_eq_emissions:

  emissions: 17.982023070008026

---

# Model Trained Using AutoTrain

- Problem type: Entity Extraction

- Model ID: 1916264970

- CO2 Emissions (in grams): 17.9820

## Validation Metrics

- Loss: 0.002

- Accuracy: 1.000

- Precision: 0.980

- Recall: 0.986

- F1: 0.983

## Usage

You can use cURL to access this model:

```

$ curl -X POST -H "Authorization: Bearer YOUR_API_KEY" -H "Content-Type: application/json" -d '{"inputs": "I love AutoTrain"}' https://api-inference.huggingface.co/models/teacookies/autotrain-28102022-cert2-1916264970

```

Or Python API:

```

from transformers import AutoModelForTokenClassification, AutoTokenizer

model = AutoModelForTokenClassification.from_pretrained("teacookies/autotrain-28102022-cert2-1916264970", use_auth_token=True)

tokenizer = AutoTokenizer.from_pretrained("teacookies/autotrain-28102022-cert2-1916264970", use_auth_token=True)

inputs = tokenizer("I love AutoTrain", return_tensors="pt")

outputs = model(**inputs)

```
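The Python snippet above stops at the raw model outputs. For a token-classification model in `transformers`, `outputs.logits` holds one score per label for every token and `model.config.id2label` maps label indices to names. The sketch below shows the decoding step on plain lists; `decode_token_labels` and the two-label map are hypothetical, since the card does not list the actual label set.

```python
def decode_token_labels(logits, id2label):
    """Pick the highest-scoring label for each token.

    logits: list of per-token score lists (one score per label).
    id2label: mapping from label index to label name.
    """
    labels = []
    for row in logits:
        best_index = max(range(len(row)), key=row.__getitem__)
        labels.append(id2label[best_index])
    return labels


# Hypothetical scores for two tokens and a hypothetical label map.
example_logits = [[2.0, 0.1], [0.3, 1.5]]
example_id2label = {0: "O", 1: "B-ENT"}
print(decode_token_labels(example_logits, example_id2label))
```

With the real model, the same idea would apply to `outputs.logits[0].tolist()` together with `model.config.id2label`.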

|

ashish23993/t5-small-finetuned-xsum-ashish

|

ashish23993

| 2022-10-28T11:53:09Z | 6 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"t5",

"text2text-generation",

"generated_from_trainer",

"license:apache-2.0",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text2text-generation

| 2022-10-28T11:49:24Z |

---

license: apache-2.0

tags:

- generated_from_trainer

model-index:

- name: t5-small-finetuned-xsum-ashish

  results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# t5-small-finetuned-xsum-ashish

This model is a fine-tuned version of [t5-small](https://huggingface.co/t5-small) on an unknown dataset.

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 1

- mixed_precision_training: Native AMP

### Training results

| Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | Gen Len |

|:-------------:|:-----:|:----:|:---------------:|:------:|:------:|:-------:|:---------:|:-------:|

| No log | 1.0 | 8 | 2.2555 | 21.098 | 9.1425 | 17.7091 | 19.9721 | 19.0 |

### Framework versions

- Transformers 4.17.0

- Pytorch 1.12.1+cu113

- Datasets 2.6.1

- Tokenizers 0.13.1

|

ivanzidov/my-awesome-setfit-model

|

ivanzidov

| 2022-10-28T10:31:46Z | 3 | 0 |

sentence-transformers

|

[

"sentence-transformers",

"pytorch",

"mpnet",

"feature-extraction",

"sentence-similarity",

"transformers",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

sentence-similarity

| 2022-10-28T10:25:42Z |

---

pipeline_tag: sentence-similarity

tags:

- sentence-transformers

- feature-extraction

- sentence-similarity

- transformers

---

# ivanzidov/my-awesome-setfit-model

This is a [sentence-transformers](https://www.SBERT.net) model. It maps sentences and paragraphs to a 768-dimensional dense vector space and can be used for tasks such as clustering or semantic search.

<!--- Describe your model here -->

## Usage (Sentence-Transformers)

Using this model becomes easy when you have [sentence-transformers](https://www.SBERT.net) installed:

```

pip install -U sentence-transformers

```

Then you can use the model like this:

```python

from sentence_transformers import SentenceTransformer

sentences = ["This is an example sentence", "Each sentence is converted"]

model = SentenceTransformer('ivanzidov/my-awesome-setfit-model')

embeddings = model.encode(sentences)

print(embeddings)

```

## Usage (HuggingFace Transformers)

Without [sentence-transformers](https://www.SBERT.net), you can use the model as follows: first pass your input through the transformer model, then apply the right pooling operation on top of the contextualized word embeddings.

```python

from transformers import AutoTokenizer, AutoModel

import torch

# Mean pooling: take the attention mask into account for correct averaging
def mean_pooling(model_output, attention_mask):
    token_embeddings = model_output[0]  # First element of model_output contains all token embeddings
    input_mask_expanded = attention_mask.unsqueeze(-1).expand(token_embeddings.size()).float()
    return torch.sum(token_embeddings * input_mask_expanded, 1) / torch.clamp(input_mask_expanded.sum(1), min=1e-9)

# Sentences we want sentence embeddings for

sentences = ['This is an example sentence', 'Each sentence is converted']

# Load model from HuggingFace Hub

tokenizer = AutoTokenizer.from_pretrained('ivanzidov/my-awesome-setfit-model')
model = AutoModel.from_pretrained('ivanzidov/my-awesome-setfit-model')

# Tokenize sentences

encoded_input = tokenizer(sentences, padding=True, truncation=True, return_tensors='pt')

# Compute token embeddings

with torch.no_grad():
    model_output = model(**encoded_input)

# Perform pooling. In this case, mean pooling.

sentence_embeddings = mean_pooling(model_output, encoded_input['attention_mask'])

print("Sentence embeddings:")

print(sentence_embeddings)

```
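To make the pooling step concrete, here is a small dependency-free sketch of what `mean_pooling` computes for a single sentence: padded positions (mask value 0) are excluded from both the sum and the count. The function name `masked_mean` and the toy vectors are illustrative assumptions, not part of the model code.

```python
def masked_mean(token_embeddings, attention_mask):
    """Average the token vectors whose attention-mask entry is 1.

    token_embeddings: list of equal-length float lists, one per token.
    attention_mask: list of 0/1 flags, one per token.
    """
    dim = len(token_embeddings[0])
    summed = [0.0] * dim
    for vector, keep in zip(token_embeddings, attention_mask):
        if keep:
            for k in range(dim):
                summed[k] += vector[k]
    # Same guard against division by zero as torch.clamp(..., min=1e-9) above.
    count = max(sum(attention_mask), 1e-9)
    return [value / count for value in summed]


# Two real tokens followed by one padding token that must be ignored.
tokens = [[1.0, 2.0], [3.0, 4.0], [100.0, 100.0]]
mask = [1, 1, 0]
print(masked_mean(tokens, mask))
```

Without the mask, the padding vector would dominate the average, which is why the attention mask is passed into the pooling function.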

## Evaluation Results

<!--- Describe how your model was evaluated -->

For an automated evaluation of this model, see the *Sentence Embeddings Benchmark*: [https://seb.sbert.net](https://seb.sbert.net?model_name={MODEL_NAME})

## Training

The model was trained with the parameters:

**DataLoader**:

`torch.utils.data.dataloader.DataLoader` of length 40 with parameters:

```

{'batch_size': 16, 'sampler': 'torch.utils.data.sampler.RandomSampler', 'batch_sampler': 'torch.utils.data.sampler.BatchSampler'}

```

**Loss**:

`sentence_transformers.losses.CosineSimilarityLoss.CosineSimilarityLoss`
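As a rough illustration of what this loss optimizes: with its default settings, `CosineSimilarityLoss` compares the cosine similarity of the two sentence embeddings in a pair against a gold similarity score using mean squared error. The sketch below shows that computation on plain lists; the function names are hypothetical, and the library version operates on batched tensors.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm


def cosine_similarity_loss(pairs, labels):
    """Mean squared error between predicted cosine similarity and gold score."""
    errors = [(cosine_similarity(u, v) - y) ** 2 for (u, v), y in zip(pairs, labels)]
    return sum(errors) / len(errors)


# Toy embeddings: one identical pair and one orthogonal pair.
pairs = [([1.0, 0.0], [1.0, 0.0]), ([1.0, 0.0], [0.0, 1.0])]
print(cosine_similarity_loss(pairs, [1.0, 0.0]))
```

The loss is zero when similar pairs are labelled 1.0 and unrelated pairs 0.0, and it grows as the predicted similarity drifts from the label.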

Parameters of the fit()-Method:

```

{

"epochs": 1,

"evaluation_steps": 0,

"evaluator": "NoneType",

"max_grad_norm": 1,

"optimizer_class": "<class 'torch.optim.adamw.AdamW'>",

"optimizer_params": {

"lr": 2e-05

},

"scheduler": "WarmupLinear",

"steps_per_epoch": 40,

"warmup_steps": 4,

"weight_decay": 0.01

}

```

## Full Model Architecture

```

SentenceTransformer(

(0): Transformer({'max_seq_length': 512, 'do_lower_case': False}) with Transformer model: MPNetModel

(1): Pooling({'word_embedding_dimension': 768, 'pooling_mode_cls_token': False, 'pooling_mode_mean_tokens': True, 'pooling_mode_max_tokens': False, 'pooling_mode_mean_sqrt_len_tokens': False})

)

```

## Citing & Authors

<!--- Describe where people can find more information -->

|

roa7n/DNABert_K6_G_quad_3

|

roa7n

| 2022-10-28T10:04:20Z | 5 | 0 |

transformers

|

[

"transformers",

"pytorch",

"bert",

"text-classification",

"generated_from_trainer",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-10-28T08:21:20Z |

---

tags:

- generated_from_trainer

metrics:

- accuracy

model-index:

- name: DNABert_K6_G_quad_3

  results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# DNABert_K6_G_quad_3

This model is a fine-tuned version of [armheb/DNA_bert_6](https://huggingface.co/armheb/DNA_bert_6) on an unknown dataset.

It achieves the following results on the evaluation set:

- Loss: 0.0722

- Accuracy: 0.9761

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 1e-05

- train_batch_size: 32

- eval_batch_size: 32

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3

- mixed_precision_training: Native AMP

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |

|:-------------:|:-----:|:-----:|:---------------:|:--------:|

| 0.0912 | 1.0 | 9375 | 0.0883 | 0.9707 |

| 0.0668 | 2.0 | 18750 | 0.0723 | 0.9757 |

| 0.0598 | 3.0 | 28125 | 0.0722 | 0.9761 |

### Framework versions

- Transformers 4.22.1

- Pytorch 1.12.1

- Datasets 2.4.0

- Tokenizers 0.12.1

|

caskcsg/cotmae_base_msmarco_retriever

|

caskcsg

| 2022-10-28T08:30:08Z | 105 | 0 |

transformers

|

[

"transformers",

"pytorch",

"bert",

"feature-extraction",

"sentence-similarity",

"arxiv:2208.07670",

"endpoints_compatible",

"region:us"

] |

sentence-similarity

| 2022-10-28T08:01:25Z |

---

pipeline_tag: sentence-similarity

tags:

- feature-extraction

- sentence-similarity

- transformers

---

# CoT-MAE MS-Marco Passage Retriever

CoT-MAE is a Transformer-based Mask Auto-Encoder pretraining architecture designed for dense passage retrieval.

**CoT-MAE MS-Marco Passage Retriever** is a retriever trained on MS-Marco with BM25 hard negatives (HN) and hard negatives mined by a CoT-MAE retriever, using the [Tevatron](https://github.com/texttron/tevatron) toolkit. Specifically, we trained a stage-one retriever using BM25 HN, used the stage-one retriever to mine HN, and then trained a stage-two retriever using both the BM25 HN and the stage-one mined HN. This release is the stage-two retriever.

Details can be found in our paper and code.

Paper: [ConTextual Mask Auto-Encoder for Dense Passage Retrieval](https://arxiv.org/abs/2208.07670).

Code: [caskcsg/ir/cotmae](https://github.com/caskcsg/ir/tree/main/cotmae)

## Scores

### MS-Marco Passage full-ranking

| MRR@10   | recall@1 | recall@50 | recall@1k | QueriesRanked  |

|----------|----------|-----------|-----------|----------------|

| 0.394431 | 0.265903 | 0.870344 | 0.986676 | 6980 |
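For readers unfamiliar with the column names, the sketch below shows one common per-query definition of MRR@k and recall@k; the reported scores would then be averages over the 6980 ranked queries. The function names are hypothetical, and the official evaluation scripts may define recall slightly differently.

```python
def mrr_at_k(ranked_ids, relevant_ids, k=10):
    """Reciprocal rank of the first relevant passage within the top k, else 0."""
    for rank, passage_id in enumerate(ranked_ids[:k], start=1):
        if passage_id in relevant_ids:
            return 1.0 / rank
    return 0.0


def recall_at_k(ranked_ids, relevant_ids, k):
    """Fraction of the relevant passages that appear within the top k."""
    hits = len(set(ranked_ids[:k]) & set(relevant_ids))
    return hits / len(relevant_ids)


# Toy ranking for one query with a single relevant passage at rank 2.
ranking = ["p3", "p1", "p2"]
print(mrr_at_k(ranking, {"p1"}), recall_at_k(ranking, {"p1"}, k=1))
```

Read this way, an MRR@10 near 0.39 means the first relevant passage tends to appear within the first few ranks.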

## Citations

If you find our work useful, please cite our paper.

```bibtex

@misc{https://doi.org/10.48550/arxiv.2208.07670,

doi = {10.48550/ARXIV.2208.07670},

url = {https://arxiv.org/abs/2208.07670},

author = {Wu, Xing and Ma, Guangyuan and Lin, Meng and Lin, Zijia and Wang, Zhongyuan and Hu, Songlin},

keywords = {Computation and Language (cs.CL), Artificial Intelligence (cs.AI), FOS: Computer and information sciences, FOS: Computer and information sciences},

title = {ConTextual Mask Auto-Encoder for Dense Passage Retrieval},

publisher = {arXiv},

year = {2022},

copyright = {arXiv.org perpetual, non-exclusive license}

}

```

|

XaviXva/distilbert-base-uncased-finetuned-paws

|

XaviXva

| 2022-10-28T08:14:21Z | 5 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"distilbert",

"text-classification",

"generated_from_trainer",

"dataset:pawsx",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2022-10-26T09:59:03Z |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- pawsx

metrics:

- accuracy

- f1

model-index:
- name: distilbert-base-uncased-finetuned-paws
  results:
  - task:
      name: Text Classification
      type: text-classification
    dataset:
      name: pawsx
      type: pawsx
      args: en
    metrics:
    - name: Accuracy
      type: accuracy
      value: 0.8355
    - name: F1
      type: f1
      value: 0.8361579553422098

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# distilbert-base-uncased-finetuned-paws

This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the pawsx dataset.

It achieves the following results on the evaluation set:

- Loss: 0.3850

- Accuracy: 0.8355

- F1: 0.8362

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 64

- eval_batch_size: 64

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 2

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 |

|:-------------:|:-----:|:----:|:---------------:|:--------:|:------:|

| 0.6715 | 1.0 | 772 | 0.5982 | 0.6785 | 0.6799 |

| 0.4278 | 2.0 | 1544 | 0.3850 | 0.8355 | 0.8362 |

### Framework versions

- Transformers 4.13.0

- Pytorch 1.12.1+cu113

- Datasets 1.16.1

- Tokenizers 0.10.3

|

teacookies/autotrain-28102022-1914864930

|

teacookies

| 2022-10-28T07:41:13Z | 11 | 0 |

transformers

|

[

"transformers",

"pytorch",

"autotrain",

"token-classification",

"unk",

"dataset:teacookies/autotrain-data-28102022",

"co2_eq_emissions",

"endpoints_compatible",

"region:us"

] |

token-classification

| 2022-10-28T07:30:27Z |

---

tags:

- autotrain

- token-classification

language:

- unk

widget:

- text: "I love AutoTrain 🤗"

datasets:

- teacookies/autotrain-data-28102022

co2_eq_emissions:

  emissions: 19.19485186697524

---

# Model Trained Using AutoTrain

- Problem type: Entity Extraction

- Model ID: 1914864930

- CO2 Emissions (in grams): 19.1949

## Validation Metrics

- Loss: 0.002

- Accuracy: 1.000

- Precision: 0.982

- Recall: 0.984

- F1: 0.983

## Usage

You can use cURL to access this model:

```

$ curl -X POST -H "Authorization: Bearer YOUR_API_KEY" -H "Content-Type: application/json" -d '{"inputs": "I love AutoTrain"}' https://api-inference.huggingface.co/models/teacookies/autotrain-28102022-1914864930

```

Or Python API:

```

from transformers import AutoModelForTokenClassification, AutoTokenizer

model = AutoModelForTokenClassification.from_pretrained("teacookies/autotrain-28102022-1914864930", use_auth_token=True)

tokenizer = AutoTokenizer.from_pretrained("teacookies/autotrain-28102022-1914864930", use_auth_token=True)

inputs = tokenizer("I love AutoTrain", return_tensors="pt")

outputs = model(**inputs)

```

|

ComCom/gpt2-small

|

ComCom

| 2022-10-28T05:53:14Z | 273 | 1 |

transformers

|

[

"transformers",

"pytorch",

"gpt2",

"text-generation",

"exbert",

"en",

"license:mit",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2022-10-28T05:43:05Z |

---

language: en

tags:

- exbert

license: mit

---

This repository has been forked from https://huggingface.co/gpt2

---

# GPT-2

Test the whole generation capabilities here: https://transformer.huggingface.co/doc/gpt2-large

Pretrained model on English language using a causal language modeling (CLM) objective. It was introduced in

[this paper](https://d4mucfpksywv.cloudfront.net/better-language-models/language_models_are_unsupervised_multitask_learners.pdf)

and first released at [this page](https://openai.com/blog/better-language-models/).

Disclaimer: The team releasing GPT-2 also wrote a

[model card](https://github.com/openai/gpt-2/blob/master/model_card.md) for their model. Content from this model card

has been written by the Hugging Face team to complete the information they provided and give specific examples of bias.

## Model description

GPT-2 is a transformers model pretrained on a very large corpus of English data in a self-supervised fashion. This means it was pretrained on the raw texts only, with no humans labelling them in any way (which is why it can use lots of publicly available data), with an automatic process to generate inputs and labels from those texts. Put simply, it was trained to guess the next word in sentences.

More precisely, inputs are sequences of continuous text of a certain length, and the targets are the same sequence shifted one token (word or piece of word) to the right. Internally, the model uses a masking mechanism to make sure the prediction for token `i` only uses the inputs from `1` to `i` and not the future tokens.
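The two ideas in this paragraph, shifted targets and a mask over future positions, can be sketched in a few lines. This is a toy illustration on integer token ids with hypothetical function names, not GPT-2's actual implementation.

```python
def shift_for_causal_lm(token_ids):
    """Split a token sequence into model inputs and next-token targets."""
    inputs = token_ids[:-1]
    targets = token_ids[1:]
    return inputs, targets


def causal_mask(length):
    """Row i has 1s for positions 0..i (visible) and 0s for future positions."""
    return [[1 if j <= i else 0 for j in range(length)] for i in range(length)]


inputs, targets = shift_for_causal_lm([10, 11, 12, 13])
print(inputs, targets)
for row in causal_mask(3):
    print(row)
```

Each input position is paired with the token that follows it, and the lower-triangular mask ensures that position `i` cannot attend to anything after it.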

This way, the model learns an inner representation of the English language that can then be used to extract features useful for downstream tasks. However, the model is best at what it was pretrained for, which is generating text from a prompt.

## Intended uses & limitations

You can use the raw model for text generation or fine-tune it to a downstream task. See the

[model hub](https://huggingface.co/models?filter=gpt2) to look for fine-tuned versions on a task that interests you.

### How to use

You can use this model directly with a pipeline for text generation. Since the generation relies on some randomness, we

set a seed for reproducibility:

```python

>>> from transformers import pipeline, set_seed

>>> generator = pipeline('text-generation', model='gpt2')

>>> set_seed(42)

>>> generator("Hello, I'm a language model,", max_length=30, num_return_sequences=5)

[{'generated_text': "Hello, I'm a language model, a language for thinking, a language for expressing thoughts."},

{'generated_text': "Hello, I'm a language model, a compiler, a compiler library, I just want to know how I build this kind of stuff. I don"},

{'generated_text': "Hello, I'm a language model, and also have more than a few of your own, but I understand that they're going to need some help"},

{'generated_text': "Hello, I'm a language model, a system model. I want to know my language so that it might be more interesting, more user-friendly"},

{'generated_text': 'Hello, I\'m a language model, not a language model"\n\nThe concept of "no-tricks" comes in handy later with new'}]

```

Here is how to use this model to get the features of a given text in PyTorch:

```python

from transformers import GPT2Tokenizer, GPT2Model

tokenizer = GPT2Tokenizer.from_pretrained('gpt2')

model = GPT2Model.from_pretrained('gpt2')

text = "Replace me by any text you'd like."

encoded_input = tokenizer(text, return_tensors='pt')

output = model(**encoded_input)

```

and in TensorFlow:

```python

from transformers import GPT2Tokenizer, TFGPT2Model

tokenizer = GPT2Tokenizer.from_pretrained('gpt2')

model = TFGPT2Model.from_pretrained('gpt2')

text = "Replace me by any text you'd like."

encoded_input = tokenizer(text, return_tensors='tf')

output = model(encoded_input)

```

### Limitations and bias

The training data used for this model has not been released as a dataset one can browse. We know it contains a lot of unfiltered content from the internet, which is far from neutral. As the OpenAI team themselves point out in their [model card](https://github.com/openai/gpt-2/blob/master/model_card.md#out-of-scope-use-cases):

> Because large-scale language models like GPT-2 do not distinguish fact from fiction, we don’t support use-cases

> that require the generated text to be true.

>

> Additionally, language models like GPT-2 reflect the biases inherent to the systems they were trained on, so we do

> not recommend that they be deployed into systems that interact with humans unless the deployers first carry out a

> study of biases relevant to the intended use-case. We found no statistically significant difference in gender, race,

> and religious bias probes between 774M and 1.5B, implying all versions of GPT-2 should be approached with similar

> levels of caution around use cases that are sensitive to biases around human attributes.

Here's an example of how the model can have biased predictions:

```python

>>> from transformers import pipeline, set_seed

>>> generator = pipeline('text-generation', model='gpt2')

>>> set_seed(42)

>>> generator("The White man worked as a", max_length=10, num_return_sequences=5)

[{'generated_text': 'The White man worked as a mannequin for'},

{'generated_text': 'The White man worked as a maniser of the'},

{'generated_text': 'The White man worked as a bus conductor by day'},

{'generated_text': 'The White man worked as a plumber at the'},

{'generated_text': 'The White man worked as a journalist. He had'}]

>>> set_seed(42)

>>> generator("The Black man worked as a", max_length=10, num_return_sequences=5)

[{'generated_text': 'The Black man worked as a man at a restaurant'},

{'generated_text': 'The Black man worked as a car salesman in a'},

{'generated_text': 'The Black man worked as a police sergeant at the'},

{'generated_text': 'The Black man worked as a man-eating monster'},

{'generated_text': 'The Black man worked as a slave, and was'}]

```

This bias will also affect all fine-tuned versions of this model.

## Training data

The OpenAI team wanted to train this model on a corpus as large as possible. To build it, they scraped all the web

pages from outbound links on Reddit which received at least 3 karma. Note that all Wikipedia pages were removed from

this dataset, so the model was not trained on any part of Wikipedia. The resulting dataset (called WebText) weighs

40GB of text but has not been publicly released. You can find a list of the top 1,000 domains present in WebText

[here](https://github.com/openai/gpt-2/blob/master/domains.txt).
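
The filtering rule described above can be illustrated with a minimal sketch. This is not OpenAI's actual pipeline, which has not been released; the `keep_link` helper, the sample links, and their karma values are hypothetical and only mirror the two stated criteria (at least 3 karma, no Wikipedia pages).

```python
from urllib.parse import urlparse

MIN_KARMA = 3  # threshold stated in the text above


def keep_link(url: str, karma: int) -> bool:
    """Hypothetical helper: keep a link with enough karma that is not on Wikipedia."""
    host = urlparse(url).netloc.lower()
    is_wikipedia = host == "wikipedia.org" or host.endswith(".wikipedia.org")
    return karma >= MIN_KARMA and not is_wikipedia


# Made-up (url, karma) pairs for illustration only.
links = [
    ("https://example.com/a", 5),
    ("https://en.wikipedia.org/wiki/GPT-2", 40),
    ("https://example.org/b", 2),
]

kept = [url for url, karma in links if keep_link(url, karma)]
print(kept)
```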

## Training procedure

### Preprocessing

The texts are tokenized using a byte-level version of Byte Pair Encoding (BPE) (for unicode characters) and a

vocabulary size of 50,257. The inputs are sequences of 1024 consecutive tokens.
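
As a rough illustration of the "sequences of 1024 consecutive tokens" step, the sketch below splits a long list of token ids into fixed-size blocks. The `chunk_token_ids` function is a hypothetical helper, the token ids are synthetic, and dropping the trailing remainder is an assumption; the original training code may have handled boundaries differently.

```python
from typing import Iterator, List

BLOCK_SIZE = 1024   # sequence length stated in the text above
VOCAB_SIZE = 50257  # vocabulary size stated in the text above


def chunk_token_ids(token_ids: List[int], block_size: int = BLOCK_SIZE) -> Iterator[List[int]]:
    """Yield consecutive, non-overlapping blocks of exactly `block_size` tokens.

    Assumption: a trailing remainder shorter than `block_size` is dropped.
    """
    for start in range(0, len(token_ids) - block_size + 1, block_size):
        yield token_ids[start:start + block_size]


# Synthetic ids standing in for real tokenizer output.
token_ids = [i % VOCAB_SIZE for i in range(2500)]
blocks = list(chunk_token_ids(token_ids))
print(len(blocks), len(blocks[0]))
```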

The larger model was trained on 256 cloud TPU v3 cores. The training duration was not disclosed, nor were the exact

details of training.

## Evaluation results

The model achieves the following results without any fine-tuning (zero-shot):

| Dataset | LAMBADA | LAMBADA | CBT-CN | CBT-NE | WikiText2 | PTB | enwiki8 | text8 | WikiText103 | 1BW |

|:--------:|:-------:|:-------:|:------:|:------:|:---------:|:------:|:-------:|:------:|:-----------:|:-----:|

| (metric) | (PPL) | (ACC) | (ACC) | (ACC) | (PPL) | (PPL) | (BPB) | (BPC) | (PPL) | (PPL) |

| | 35.13 | 45.99 | 87.65 | 83.4 | 29.41 | 65.85 | 1.16 | 1.17 | 37.50 | 75.20 |
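
For readers unfamiliar with the metrics in the table, here is a minimal sketch of how perplexity (PPL) and bits per byte or character (BPB/BPC) relate to a model's negative log-likelihood. The function names and the toy numbers are illustrative assumptions; the sketch does not reproduce the evaluation protocol behind the reported results.

```python
import math
from typing import Sequence


def perplexity(token_nlls: Sequence[float]) -> float:
    """Perplexity = exp(mean per-token negative log-likelihood, in nats)."""
    return math.exp(sum(token_nlls) / len(token_nlls))


def bits_per_unit(total_nll_nats: float, num_units: int) -> float:
    """Convert a total NLL in nats to bits per byte or per character."""
    return total_nll_nats / (num_units * math.log(2))


# Toy case: 8 tokens, each assigned probability 1/4, covering 16 characters.
nlls = [math.log(4.0)] * 8
ppl = perplexity(nlls)
bpc = bits_per_unit(sum(nlls), num_units=16)
print(ppl, bpc)
```

Lower is better for PPL, BPB, and BPC, while higher is better for the accuracy (ACC) columns.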

### BibTeX entry and citation info

```bibtex

@article{radford2019language,

title={Language Models are Unsupervised Multitask Learners},

author={Radford, Alec and Wu, Jeff and Child, Rewon and Luan, David and Amodei, Dario and Sutskever, Ilya},

year={2019}

}

```

<a href="https://huggingface.co/exbert/?model=gpt2">

<img width="300px" src="https://cdn-media.huggingface.co/exbert/button.png">

</a>

|

bpatwa-shi/bert-finetuned-ner

|

bpatwa-shi

| 2022-10-28T05:22:16Z | 12 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"bert",

"token-classification",

"generated_from_trainer",

"dataset:conll2003",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

token-classification

| 2022-10-28T03:37:44Z |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- conll2003

metrics:

- precision

- recall

- f1

- accuracy

model-index:

- name: bert-finetuned-ner

results:

- task:

name: Token Classification

type: token-classification

dataset:

name: conll2003

type: conll2003

config: conll2003

split: train

args: conll2003

metrics:

- name: Precision

type: precision

value: 0.9333113238692637

- name: Recall

type: recall

value: 0.9515314708852238

- name: F1

type: f1

value: 0.9423333333333334

- name: Accuracy

type: accuracy

value: 0.9870636368988049

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# bert-finetuned-ner

This model is a fine-tuned version of [bert-base-cased](https://huggingface.co/bert-base-cased) on the conll2003 dataset.

It achieves the following results on the evaluation set:

- Loss: 0.0587

- Precision: 0.9333

- Recall: 0.9515

- F1: 0.9423

- Accuracy: 0.9871
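
The reported F1 is the harmonic mean of precision and recall, which can be checked against the values in the card's metadata. The sketch below is a plain-Python consistency check, not the evaluation code the Trainer used.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall (0.0 if both are zero)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Values copied from the model-index metadata of this card.
precision = 0.9333113238692637
recall = 0.9515314708852238

f1 = f1_score(precision, recall)
print(round(f1, 4))
```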

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3
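
To make the `linear` scheduler setting concrete, the sketch below computes a learning rate that decays linearly from the base value to zero. It assumes zero warmup steps (none are listed above), and the `total_steps` value is hypothetical, since it depends on dataset size and batch size.

```python
def linear_lr(step: int, total_steps: int, base_lr: float = 2e-5, warmup_steps: int = 0) -> float:
    """Linear warmup (if any) followed by linear decay to zero."""
    if step < warmup_steps:
        return base_lr * step / max(1, warmup_steps)
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


total_steps = 3000  # hypothetical number of optimizer steps over 3 epochs

for step in (0, 1500, 3000):
    print(step, linear_lr(step, total_steps))
```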

### Training results

| Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:|