modelId (string, length 5 to 139) | author (string, length 2 to 42) | last_modified (timestamp[us, tz=UTC], 2020-02-15 11:33:14 to 2025-09-11 18:29:29) | downloads (int64, 0 to 223M) | likes (int64, 0 to 11.7k) | library_name (string, 555 classes) | tags (list, length 1 to 4.05k) | pipeline_tag (string, 55 classes) | createdAt (timestamp[us, tz=UTC], 2022-03-02 23:29:04 to 2025-09-11 18:25:24) | card (string, length 11 to 1.01M) |

|---|---|---|---|---|---|---|---|---|---|

testing/autonlp-ingredient_sentiment_analysis-19126711

|

testing

| 2021-11-04T15:54:28Z | 16 | 0 |

transformers

|

[

"transformers",

"pytorch",

"bert",

"token-classification",

"autonlp",

"en",

"dataset:testing/autonlp-data-ingredient_sentiment_analysis",

"co2_eq_emissions",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

token-classification

| 2022-03-02T23:29:05Z |

---

tags: autonlp

language: en

widget:

- text: "I love AutoNLP 🤗"

datasets:

- testing/autonlp-data-ingredient_sentiment_analysis

co2_eq_emissions: 1.8458289701133035

---

# Model Trained Using AutoNLP

- Problem type: Entity Extraction

- Model ID: 19126711

- CO2 Emissions (in grams): 1.8458289701133035

## Validation Metrics

- Loss: 0.054593171924352646

- Accuracy: 0.9790668170284748

- Precision: 0.8029411764705883

- Recall: 0.6026490066225165

- F1: 0.6885245901639344
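The reported F1 is consistent with the harmonic mean of the precision and recall listed above. A minimal check, using only the figures from this card:

```python
precision = 0.8029411764705883
recall = 0.6026490066225165

# F1 is the harmonic mean of precision and recall.
f1 = 2 * precision * recall / (precision + recall)
print(round(f1, 4))
```

The gap between accuracy (about 0.98) and F1 (about 0.69) is typical of token classification, where most tokens carry the "no entity" label.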

## Usage

You can use cURL to access this model:

```

$ curl -X POST -H "Authorization: Bearer YOUR_API_KEY" -H "Content-Type: application/json" -d '{"inputs": "I love AutoNLP"}' https://api-inference.huggingface.co/models/testing/autonlp-ingredient_sentiment_analysis-19126711

```

Or Python API:

```

from transformers import AutoModelForTokenClassification, AutoTokenizer

model = AutoModelForTokenClassification.from_pretrained("testing/autonlp-ingredient_sentiment_analysis-19126711", use_auth_token=True)

tokenizer = AutoTokenizer.from_pretrained("testing/autonlp-ingredient_sentiment_analysis-19126711", use_auth_token=True)

inputs = tokenizer("I love AutoNLP", return_tensors="pt")

outputs = model(**inputs)

```
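The cURL request shown earlier can also be prepared with the Python standard library. This is a minimal sketch: `build_request` is a hypothetical helper name, and the request is only constructed here, not sent.

```python
import json
import urllib.request

API_URL = "https://api-inference.huggingface.co/models/testing/autonlp-ingredient_sentiment_analysis-19126711"

def build_request(text, api_key):
    """Build (but do not send) a POST request mirroring the cURL call."""
    payload = json.dumps({"inputs": text}).encode("utf-8")
    return urllib.request.Request(
        API_URL,
        data=payload,
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )

request = build_request("I love AutoNLP", "YOUR_API_KEY")
# To send it: urllib.request.urlopen(request).read()
```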

|

patrickvonplaten/wav2vec2-base-100h-2nd-try

|

patrickvonplaten

| 2021-11-04T15:41:08Z | 12 | 0 |

transformers

|

[

"transformers",

"pytorch",

"wav2vec2",

"automatic-speech-recognition",

"audio",

"en",

"dataset:librispeech_asr",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] |

automatic-speech-recognition

| 2022-03-02T23:29:05Z |

---

language: en

datasets:

- librispeech_asr

tags:

- audio

- automatic-speech-recognition

license: apache-2.0

widget:
- example_title: IEMOCAP sample 1
  src: https://cdn-media.huggingface.co/speech_samples/IEMOCAP_Ses01F_impro03_F013.wav
- example_title: IEMOCAP sample 2
  src: https://cdn-media.huggingface.co/speech_samples/IEMOCAP_Ses01F_impro04_F000.wav
- example_title: LibriSpeech sample 1
  src: https://cdn-media.huggingface.co/speech_samples/LibriSpeech_61-70968-0000.flac
- example_title: LibriSpeech sample 2
  src: https://cdn-media.huggingface.co/speech_samples/LibriSpeech_61-70968-0001.flac
- example_title: VoxCeleb sample 1
  src: https://cdn-media.huggingface.co/speech_samples/VoxCeleb1_00003.wav
- example_title: VoxCeleb sample 2
  src: https://cdn-media.huggingface.co/speech_samples/VoxCeleb_00004.wav

---

Second fine-tuning try of `wav2vec2-base`. Results are similar to the ones reported in https://huggingface.co/facebook/wav2vec2-base-100h.

The model was trained on *librispeech-clean-train.100* with the following hyperparameters:

- 2 GPUs Titan RTX

- Total update steps 11000

- Batch size per GPU: 32, corresponding to a *total batch size* of approximately 750 seconds

- Adam with a linearly decaying learning rate and 3000 warmup steps

- Dynamic padding per batch

- fp16

- attention_mask was **not** used during training
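The learning-rate schedule described in the list above can be sketched as follows. The 3000 warmup steps and 11000 total steps come from the card. The peak learning rate, the linear shape of the warmup, and the decay to exactly zero are assumptions made for illustration.

```python
WARMUP_STEPS = 3000
TOTAL_STEPS = 11000
PEAK_LR = 1e-4  # hypothetical: the card does not state the peak learning rate

def learning_rate(step):
    """Linear warmup to PEAK_LR, then linear decay to zero at TOTAL_STEPS."""
    if step < WARMUP_STEPS:
        return PEAK_LR * step / WARMUP_STEPS
    remaining = max(0, TOTAL_STEPS - step)
    return PEAK_LR * remaining / (TOTAL_STEPS - WARMUP_STEPS)

for step in (0, 1500, 3000, 7000, 11000):
    print(step, learning_rate(step))
```

Under these assumptions the rate peaks at step 3000 and is back to half the peak by step 7000.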

Check: https://wandb.ai/patrickvonplaten/huggingface/runs/1yrpescx?workspace=user-patrickvonplaten

*Result (WER)* on Librispeech:

| "clean" (% rel difference to results in paper) | "other" (% rel difference to results in paper) |

|---|---|

| 6.2 (-1.6%) | 15.2 (-11.2%)|

|

osanseviero/hubert_asr_using_hub

|

osanseviero

| 2021-11-04T15:39:06Z | 0 | 0 |

superb

|

[

"superb",

"automatic-speech-recognition",

"benchmark:superb",

"region:us"

] |

automatic-speech-recognition

| 2022-03-02T23:29:05Z |

---

tags:

- superb

- automatic-speech-recognition

- benchmark:superb

library_name: superb

widget:
- example_title: Librispeech sample 1
  src: https://cdn-media.huggingface.co/speech_samples/sample1.flac

---

# Test for superb using hubert downstream ASR and upstream hubert model from the HF Hub

This repo uses: https://huggingface.co/osanseviero/hubert_base

|

mpariente/ConvTasNet_WHAM_sepclean

|

mpariente

| 2021-11-04T15:29:29Z | 446 | 0 |

asteroid

|

[

"asteroid",

"pytorch",

"audio",

"ConvTasNet",

"audio-to-audio",

"dataset:wham",

"dataset:sep_clean",

"license:cc-by-sa-4.0",

"region:us"

] |

audio-to-audio

| 2022-03-02T23:29:05Z |

---

tags:

- asteroid

- audio

- ConvTasNet

- audio-to-audio

datasets:

- wham

- sep_clean

license: cc-by-sa-4.0

widget:
- example_title: Librispeech sample 1
  src: https://cdn-media.huggingface.co/speech_samples/sample1.flac

---

## Asteroid model `mpariente/ConvTasNet_WHAM_sepclean`

Imported from [Zenodo](https://zenodo.org/record/3862942)

### Description:

This model was trained by Manuel Pariente

using the wham/ConvTasNet recipe in [Asteroid](https://github.com/asteroid-team/asteroid).

It was trained on the `sep_clean` task of the WHAM! dataset.

### Training config:

```yaml

data:
  n_src: 2
  mode: min
  nondefault_nsrc: None
  sample_rate: 8000
  segment: 3
  task: sep_clean
  train_dir: data/wav8k/min/tr/
  valid_dir: data/wav8k/min/cv/
filterbank:
  kernel_size: 16
  n_filters: 512
  stride: 8
main_args:
  exp_dir: exp/wham
  gpus: -1
  help: None
masknet:
  bn_chan: 128
  hid_chan: 512
  mask_act: relu
  n_blocks: 8
  n_repeats: 3
  n_src: 2
  skip_chan: 128
optim:
  lr: 0.001
  optimizer: adam
  weight_decay: 0.0
positional arguments:
training:
  batch_size: 24
  early_stop: True
  epochs: 200
  half_lr: True
  num_workers: 4
```

```
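To put the filterbank settings in the config above into time units, the short calculation below converts `kernel_size` and `stride` to milliseconds at the configured sample rate and counts the frames in one training segment. The frame-count formula assumes an unpadded strided convolution, which the card does not confirm.

```python
sample_rate = 8000   # Hz, from data.sample_rate
segment = 3          # seconds, from data.segment
kernel_size = 16     # samples, from filterbank.kernel_size
stride = 8           # samples, from filterbank.stride

window_ms = 1000 * kernel_size / sample_rate
hop_ms = 1000 * stride / sample_rate
segment_samples = sample_rate * segment
# Frame count for an unpadded strided convolution (assumption: no padding).
n_frames = (segment_samples - kernel_size) // stride + 1

print(window_ms, hop_ms, segment_samples, n_frames)
```

With these values each encoder frame covers 2 ms of audio and frames are 1 ms apart.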

### Results:

```yaml

si_sdr: 16.21326632846293

si_sdr_imp: 16.21441705664987

sdr: 16.615180021738933

sdr_imp: 16.464137807433435

sir: 26.860503975131923

sir_imp: 26.709461760826414

sar: 17.18312813480803

sar_imp: -131.99332048277296

stoi: 0.9619940905157323

stoi_imp: 0.2239480672473015

```
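For readers unfamiliar with the `si_sdr` entry above, here is a minimal pure-Python sketch of the usual scale-invariant SDR definition. It assumes zero-mean signals of equal length and is not taken from the Asteroid code base. The `_imp` entries presumably report the improvement over the unprocessed mixture.

```python
import math

def si_sdr(estimate, reference):
    """Scale-invariant signal-to-distortion ratio in dB for two equal-length signals."""
    dot = sum(e * r for e, r in zip(estimate, reference))
    ref_energy = sum(r * r for r in reference)
    # Project the estimate onto the reference to obtain the scaled target.
    scale = dot / ref_energy
    target = [scale * r for r in reference]
    noise = [e - t for e, t in zip(estimate, target)]
    target_energy = sum(t * t for t in target)
    noise_energy = sum(n * n for n in noise)
    # A perfect estimate has zero noise energy and would need special handling.
    return 10 * math.log10(target_energy / noise_energy)

reference = [1.0, 0.0, -1.0, 0.0]
estimate = [1.0, 0.1, -1.0, -0.1]
score = si_sdr(estimate, reference)
rescaled = si_sdr([3 * x for x in estimate], reference)
```

Here `score` and `rescaled` agree up to rounding, which is the scale invariance the metric is named for.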

### License notice:

This work "ConvTasNet_WHAM!_sepclean" is a derivative of [CSR-I (WSJ0) Complete](https://catalog.ldc.upenn.edu/LDC93S6A)

by [LDC](https://www.ldc.upenn.edu/), used under [LDC User Agreement for Non-Members](https://catalog.ldc.upenn.edu/license/ldc-non-members-agreement.pdf) (Research only).

"ConvTasNet_WHAM!_sepclean" is licensed under [Attribution-ShareAlike 3.0 Unported](https://creativecommons.org/licenses/by-sa/3.0/)

by Manuel Pariente.

|

microsoft/unispeech-sat-base-100h-libri-ft

|

microsoft

| 2021-11-04T15:26:40Z | 198,321 | 4 |

transformers

|

[

"transformers",

"pytorch",

"unispeech-sat",

"automatic-speech-recognition",

"audio",

"en",

"dataset:librispeech_asr",

"arxiv:2110.05752",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] |

automatic-speech-recognition

| 2022-03-02T23:29:05Z |

---

language: en

datasets:

- librispeech_asr

tags:

- audio

- automatic-speech-recognition

license: apache-2.0

widget:
- example_title: Librispeech sample 1
  src: https://cdn-media.huggingface.co/speech_samples/sample1.flac
- example_title: Librispeech sample 2
  src: https://cdn-media.huggingface.co/speech_samples/sample2.flac

---

# UniSpeech-SAT-Base-Finetuned-100h-Libri

[Microsoft's UniSpeech](https://www.microsoft.com/en-us/research/publication/unispeech-unified-speech-representation-learning-with-labeled-and-unlabeled-data/)

A [unispeech-sat-base model]( ) that was fine-tuned on 100 hours of LibriSpeech on 16 kHz sampled speech audio. When using the model, make sure that your speech input is also sampled at 16 kHz.
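A quick way to verify the sampling rate before inference is to read it from the file header. The sketch below uses only the standard-library `wave` module, so it covers PCM WAV files only (not FLAC). `check_sample_rate` is a hypothetical helper name.

```python
import wave

EXPECTED_RATE = 16_000  # Hz, the rate the model card asks for

def check_sample_rate(path, expected=EXPECTED_RATE):
    """Return True if the WAV file at `path` is sampled at the expected rate."""
    with wave.open(path, "rb") as wav_file:
        return wav_file.getframerate() == expected
```

Files that fail the check need to be resampled before being passed to the processor.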

The model was fine-tuned on:

- 100 hours of [LibriSpeech](https://huggingface.co/datasets/librispeech_asr)

[Paper: UNISPEECH-SAT: UNIVERSAL SPEECH REPRESENTATION LEARNING WITH SPEAKER AWARE PRE-TRAINING](https://arxiv.org/abs/2110.05752)

Authors: Sanyuan Chen, Yu Wu, Chengyi Wang, Zhengyang Chen, Zhuo Chen, Shujie Liu, Jian Wu, Yao Qian, Furu Wei, Jinyu Li, Xiangzhan Yu

**Abstract**

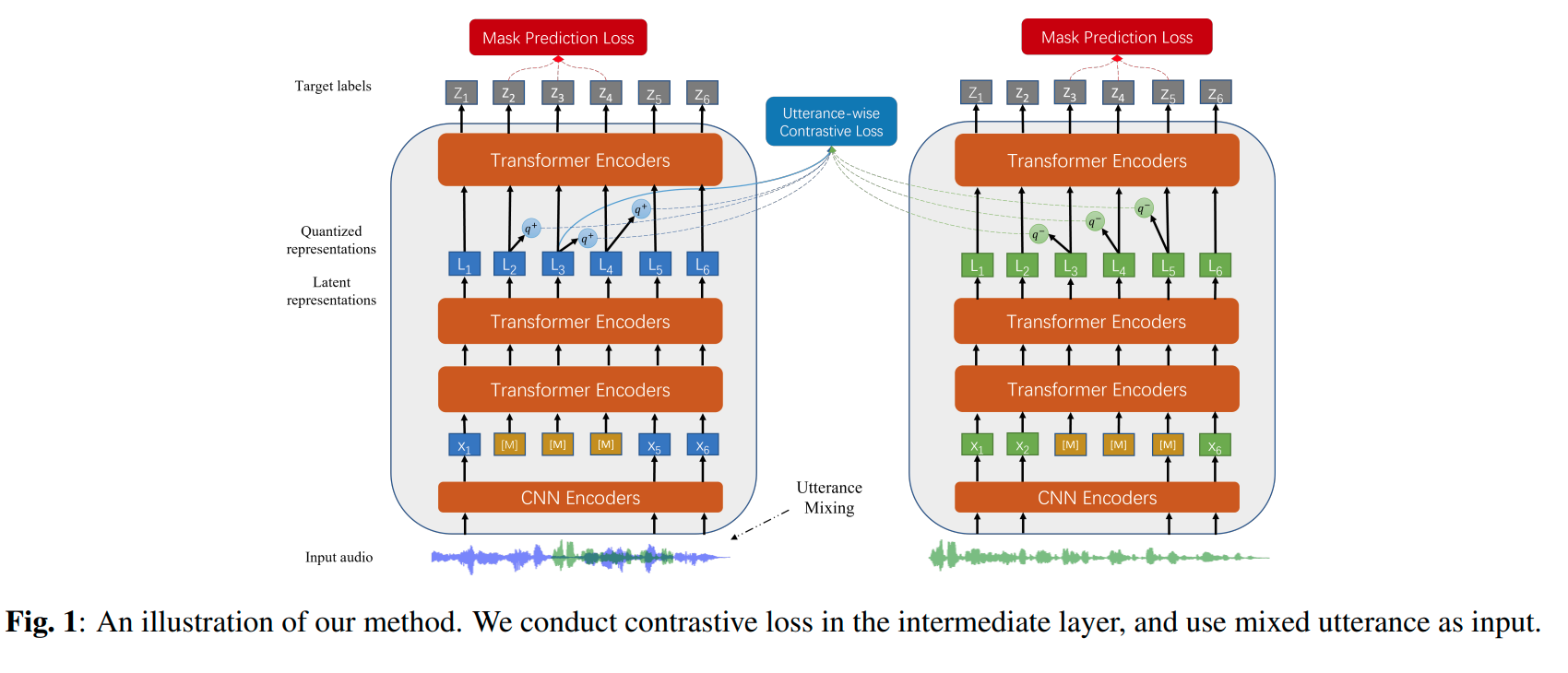

*Self-supervised learning (SSL) is a long-standing goal for speech processing, since it utilizes large-scale unlabeled data and avoids extensive human labeling. Recent years witness great successes in applying self-supervised learning in speech recognition, while limited exploration was attempted in applying SSL for modeling speaker characteristics. In this paper, we aim to improve the existing SSL framework for speaker representation learning. Two methods are introduced for enhancing the unsupervised speaker information extraction. First, we apply the multi-task learning to the current SSL framework, where we integrate the utterance-wise contrastive loss with the SSL objective function. Second, for better speaker discrimination, we propose an utterance mixing strategy for data augmentation, where additional overlapped utterances are created unsupervisely and incorporate during training. We integrate the proposed methods into the HuBERT framework. Experiment results on SUPERB benchmark show that the proposed system achieves state-of-the-art performance in universal representation learning, especially for speaker identification oriented tasks. An ablation study is performed verifying the efficacy of each proposed method. Finally, we scale up training dataset to 94 thousand hours public audio data and achieve further performance improvement in all SUPERB tasks.*

The original model can be found under https://github.com/microsoft/UniSpeech/tree/main/UniSpeech-SAT.

# Usage

To transcribe audio files the model can be used as a standalone acoustic model as follows:

```python

from transformers import Wav2Vec2Processor, UniSpeechSatForCTC

from datasets import load_dataset

import torch

# load model and processor

processor = Wav2Vec2Processor.from_pretrained("microsoft/unispeech-sat-base-100h-libri-ft")

model = UniSpeechSatForCTC.from_pretrained("microsoft/unispeech-sat-base-100h-libri-ft")

# load dummy dataset

ds = load_dataset("patrickvonplaten/librispeech_asr_dummy", "clean", split="validation")

# tokenize

input_values = processor(ds[0]["audio"]["array"], return_tensors="pt", padding="longest").input_values # Batch size 1

# retrieve logits

logits = model(input_values).logits

# take argmax and decode

predicted_ids = torch.argmax(logits, dim=-1)

transcription = processor.batch_decode(predicted_ids)

```
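The "take argmax and decode" step above relies on CTC decoding: consecutive repeated frame predictions are merged and the blank token is dropped. The sketch below illustrates that rule on plain integer ids. It is not the `transformers` implementation, and `blank_id=0` is an assumption, since the actual blank (padding) id depends on the model's vocabulary.

```python
def ctc_greedy_collapse(frame_ids, blank_id=0):
    """Merge repeated frame predictions, then drop the blank token."""
    collapsed = []
    previous = None
    for token_id in frame_ids:
        # Emit a token only when it differs from the previous frame and is not blank.
        if token_id != previous and token_id != blank_id:
            collapsed.append(token_id)
        previous = token_id
    return collapsed

print(ctc_greedy_collapse([0, 5, 5, 0, 5, 7, 7, 0]))  # prints [5, 5, 7]
```

The blank between the two runs of `5` is what lets the decoder output the same token twice in a row.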

# Contribution

The model was contributed by [cywang](https://huggingface.co/cywang) and [patrickvonplaten](https://huggingface.co/patrickvonplaten).

# License

The official license can be found [here](https://github.com/microsoft/UniSpeech/blob/main/LICENSE)

|

m3hrdadfi/wav2vec2-large-xlsr-persian

|

m3hrdadfi

| 2021-11-04T15:22:12Z | 251 | 16 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"wav2vec2",

"automatic-speech-recognition",

"audio",

"speech",

"xlsr-fine-tuning-week",

"fa",

"dataset:common_voice",

"license:apache-2.0",

"model-index",

"endpoints_compatible",

"region:us"

] |

automatic-speech-recognition

| 2022-03-02T23:29:05Z |

---

language: fa

datasets:

- common_voice

tags:

- audio

- automatic-speech-recognition

- speech

- xlsr-fine-tuning-week

license: apache-2.0

widget:
- example_title: Common Voice sample 687
  src: https://huggingface.co/m3hrdadfi/wav2vec2-large-xlsr-persian/resolve/main/sample687.flac
- example_title: Common Voice sample 1671
  src: https://huggingface.co/m3hrdadfi/wav2vec2-large-xlsr-persian/resolve/main/sample1671.flac
model-index:
- name: XLSR Wav2Vec2 Persian (Farsi) by Mehrdad Farahani
  results:
  - task:
      name: Speech Recognition
      type: automatic-speech-recognition
    dataset:
      name: Common Voice fa
      type: common_voice
      args: fa
    metrics:
    - name: Test WER
      type: wer
      value: 32.20

---

# Wav2Vec2-Large-XLSR-53-Persian

Fine-tuned [facebook/wav2vec2-large-xlsr-53](https://huggingface.co/facebook/wav2vec2-large-xlsr-53) in Persian (Farsi) using [Common Voice](https://huggingface.co/datasets/common_voice). When using this model, make sure that your speech input is sampled at 16kHz.

## Usage

The model can be used directly (without a language model) as follows:

**Requirements**

```bash

# required packages (the leading "!" is notebook syntax; omit it in a regular shell)

!pip install git+https://github.com/huggingface/datasets.git

!pip install git+https://github.com/huggingface/transformers.git

!pip install torchaudio

!pip install librosa

!pip install jiwer

!pip install hazm

```

**Prediction**

```python

import librosa

import torch

import torchaudio

from transformers import Wav2Vec2ForCTC, Wav2Vec2Processor

from datasets import load_dataset

import numpy as np

import hazm

import re

import string

import IPython.display as ipd

_normalizer = hazm.Normalizer()

chars_to_ignore = [

",", "?", ".", "!", "-", ";", ":", '""', "%", "'", '"', "�",

"#", "!", "؟", "?", "«", "»", "ء", "،", "(", ")", "؛", "'ٔ", "٬",'ٔ', ",", "?",

".", "!", "-", ";", ":",'"',"“", "%", "‘", "”", "�", "–", "…", "_", "”", '“', '„'

]

# In case of farsi

chars_to_ignore = chars_to_ignore + list(string.ascii_lowercase + string.digits)

chars_to_mapping = {

'ك': 'ک', 'دِ': 'د', 'بِ': 'ب', 'زِ': 'ز', 'ذِ': 'ذ', 'شِ': 'ش', 'سِ': 'س', 'ى': 'ی',

'ي': 'ی', 'أ': 'ا', 'ؤ': 'و', "ے": "ی", "ۀ": "ه", "ﭘ": "پ", "ﮐ": "ک", "ﯽ": "ی",

"ﺎ": "ا", "ﺑ": "ب", "ﺘ": "ت", "ﺧ": "خ", "ﺩ": "د", "ﺱ": "س", "ﻀ": "ض", "ﻌ": "ع",

"ﻟ": "ل", "ﻡ": "م", "ﻢ": "م", "ﻪ": "ه", "ﻮ": "و", "ئ": "ی", 'ﺍ': "ا", 'ة': "ه",

'ﯾ': "ی", 'ﯿ': "ی", 'ﺒ': "ب", 'ﺖ': "ت", 'ﺪ': "د", 'ﺮ': "ر", 'ﺴ': "س", 'ﺷ': "ش",

'ﺸ': "ش", 'ﻋ': "ع", 'ﻤ': "م", 'ﻥ': "ن", 'ﻧ': "ن", 'ﻭ': "و", 'ﺭ': "ر", "ﮔ": "گ",

"\u200c": " ", "\u200d": " ", "\u200e": " ", "\u200f": " ", "\ufeff": " ",

}

def multiple_replace(text, chars_to_mapping):
    # Replace every key of chars_to_mapping found in the text with its mapped value.
    pattern = "|".join(map(re.escape, chars_to_mapping.keys()))
    return re.sub(pattern, lambda m: chars_to_mapping[m.group()], str(text))

def remove_special_characters(text, chars_to_ignore_regex):
    text = re.sub(chars_to_ignore_regex, '', text).lower() + " "
    return text

def normalizer(batch, chars_to_ignore, chars_to_mapping):
    chars_to_ignore_regex = f"""[{"".join(chars_to_ignore)}]"""
    text = batch["sentence"].lower().strip()
    text = _normalizer.normalize(text)
    text = multiple_replace(text, chars_to_mapping)
    text = remove_special_characters(text, chars_to_ignore_regex)
    batch["sentence"] = text
    return batch

def speech_file_to_array_fn(batch):
    # Load the audio file and resample it to the 16 kHz rate the model expects.
    speech_array, sampling_rate = torchaudio.load(batch["path"])
    speech_array = speech_array.squeeze().numpy()
    # Keyword arguments: recent librosa releases no longer accept the rates positionally.
    speech_array = librosa.resample(np.asarray(speech_array), orig_sr=sampling_rate, target_sr=16_000)
    batch["speech"] = speech_array
    return batch

def predict(batch):
    features = processor(batch["speech"], sampling_rate=16_000, return_tensors="pt", padding=True)
    input_values = features.input_values.to(device)
    attention_mask = features.attention_mask.to(device)
    with torch.no_grad():
        logits = model(input_values, attention_mask=attention_mask).logits
    # Greedy decoding: most likely token per frame, then CTC decoding by the processor.
    pred_ids = torch.argmax(logits, dim=-1)
    batch["predicted"] = processor.batch_decode(pred_ids)[0]
    return batch

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

processor = Wav2Vec2Processor.from_pretrained("m3hrdadfi/wav2vec2-large-xlsr-persian")

model = Wav2Vec2ForCTC.from_pretrained("m3hrdadfi/wav2vec2-large-xlsr-persian").to(device)

dataset = load_dataset("common_voice", "fa", split="test[:1%]")

dataset = dataset.map(

normalizer,

fn_kwargs={"chars_to_ignore": chars_to_ignore, "chars_to_mapping": chars_to_mapping},

remove_columns=list(set(dataset.column_names) - set(['sentence', 'path']))

)

dataset = dataset.map(speech_file_to_array_fn)

result = dataset.map(predict)

max_items = np.random.randint(0, len(result), 20).tolist()

for i in max_items:
    reference, predicted = result["sentence"][i], result["predicted"][i]
    print("reference:", reference)
    print("predicted:", predicted)
    print('---')

```
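One detail of the `normalizer` above is worth knowing when adapting it: characters are joined into a regex character class without escaping, so a `-` placed between two other characters is read as a range. The sketch below shows the effect and an escaped alternative. `build_ignore_regex` is a hypothetical helper, not part of the card's code, and the short character list is only an illustration.

```python
import re

def build_ignore_regex(chars):
    """Compile a character class that matches each listed character literally."""
    unique = sorted(set("".join(chars)))
    return re.compile("[" + "".join(re.escape(c) for c in unique) + "]")

# Unescaped join: "!-;" inside the class is interpreted as a range that includes digits.
naive = re.compile("[" + "".join([",", "!", "-", ";"]) + "]")
safe = build_ignore_regex([",", "!", "-", ";"])

print(bool(naive.search("5")), bool(safe.search("5")))  # prints True False
print(safe.sub("", "a-b, c!"))  # prints ab c
```

For the Persian pipeline this matters little, because ASCII letters and digits are added to the ignore list anyway, but it can change results when the list is reused for another language.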

**Output:**

```text

reference: اطلاعات مسری است

predicted: اطلاعات مسری است

---

reference: نه منظورم اینه که وقتی که ساکته چه کاریه خودمونه بندازیم زحمت

predicted: نه منظورم اینه که وقتی که ساکت چی کاریه خودمونو بندازیم زحمت

---

reference: من آب پرتقال می خورم لطفا

predicted: من آپ ارتغال می خورم لطفا

---

reference: وقت آن رسیده آنها را که قدم پیش میگذارند بزرگ بداریم

predicted: وقت آ رسیده آنها را که قدم پیش میگذارند بزرگ بداریم

---

reference: سیم باتری دارید

predicted: سیم باتری دارید

---

reference: این بهتره تا اینکه به بهونه درس و مشق هر روز بره خونه شون

predicted: این بهتره تا اینکه به بهمونه درسومش خرروز بره خونه اشون

---

reference: ژاکت تنگ است

predicted: ژاکت تنگ است

---

reference: آت و اشغال های خیابان

predicted: آت و اشغال های خیابان

---

reference: من به این روند اعتراض دارم

predicted: من به این لوند تراج دارم

---

reference: کرایه این مکان چند است

predicted: کرایه این مکان چند است

---

reference: ولی این فرصت این سهم جوانی اعطا نشده است

predicted: ولی این فرصت این سحم جوانی اتان نشده است

---

reference: متوجه فاجعهای محیطی میشوم

predicted: متوجه فاجایهای محیطی میشوم

---

reference: ترافیک شدیدیم بود و دیدن نور ماشینا و چراغا و لامپهای مراکز تجاری حس خوبی بهم میدادن

predicted: ترافیک شدید ی هم بودا دیدن نور ماشینا و چراغ لامپهای مراکز تجاری حس خولی بهم میدادن

---

reference: این مورد عمل ها مربوط به تخصص شما می شود

predicted: این مورد عملها مربوط به تخصص شما میشود

---

reference: انرژی خیلی کمی دارم

predicted: انرژی خیلی کمی دارم

---

reference: زیادی خوبی کردنم تهش داستانه

predicted: زیادی خوبی کردنم ترش داستانه

---

reference: بردهای که پادشاه شود

predicted: برده ای که پاده شاه شود

---

reference: یونسکو

predicted: یونسکو

---

reference: شما اخراج هستید

predicted: شما اخراج هستید

---

reference: من سفر کردن را دوست دارم

predicted: من سفر کردم را دوست دارم

```

## Evaluation

The model can be evaluated as follows on the Persian (Farsi) test data of Common Voice.

```python

import librosa

import torch

import torchaudio

from transformers import Wav2Vec2ForCTC, Wav2Vec2Processor

from datasets import load_dataset, load_metric

import numpy as np

import hazm

import re

import string

_normalizer = hazm.Normalizer()

chars_to_ignore = [

",", "?", ".", "!", "-", ";", ":", '""', "%", "'", '"', "�",

"#", "!", "؟", "?", "«", "»", "ء", "،", "(", ")", "؛", "'ٔ", "٬",'ٔ', ",", "?",

".", "!", "-", ";", ":",'"',"“", "%", "‘", "”", "�", "–", "…", "_", "”", '“', '„'

]

# In case of farsi

chars_to_ignore = chars_to_ignore + list(string.ascii_lowercase + string.digits)

chars_to_mapping = {

'ك': 'ک', 'دِ': 'د', 'بِ': 'ب', 'زِ': 'ز', 'ذِ': 'ذ', 'شِ': 'ش', 'سِ': 'س', 'ى': 'ی',

'ي': 'ی', 'أ': 'ا', 'ؤ': 'و', "ے": "ی", "ۀ": "ه", "ﭘ": "پ", "ﮐ": "ک", "ﯽ": "ی",

"ﺎ": "ا", "ﺑ": "ب", "ﺘ": "ت", "ﺧ": "خ", "ﺩ": "د", "ﺱ": "س", "ﻀ": "ض", "ﻌ": "ع",

"ﻟ": "ل", "ﻡ": "م", "ﻢ": "م", "ﻪ": "ه", "ﻮ": "و", "ئ": "ی", 'ﺍ': "ا", 'ة': "ه",

'ﯾ': "ی", 'ﯿ': "ی", 'ﺒ': "ب", 'ﺖ': "ت", 'ﺪ': "د", 'ﺮ': "ر", 'ﺴ': "س", 'ﺷ': "ش",

'ﺸ': "ش", 'ﻋ': "ع", 'ﻤ': "م", 'ﻥ': "ن", 'ﻧ': "ن", 'ﻭ': "و", 'ﺭ': "ر", "ﮔ": "گ",

"\u200c": " ", "\u200d": " ", "\u200e": " ", "\u200f": " ", "\ufeff": " ",

}

def multiple_replace(text, chars_to_mapping):
    # Replace every key of chars_to_mapping found in the text with its mapped value.
    pattern = "|".join(map(re.escape, chars_to_mapping.keys()))
    return re.sub(pattern, lambda m: chars_to_mapping[m.group()], str(text))

def remove_special_characters(text, chars_to_ignore_regex):
    text = re.sub(chars_to_ignore_regex, '', text).lower() + " "
    return text

def normalizer(batch, chars_to_ignore, chars_to_mapping):
    chars_to_ignore_regex = f"""[{"".join(chars_to_ignore)}]"""
    text = batch["sentence"].lower().strip()
    text = _normalizer.normalize(text)
    text = multiple_replace(text, chars_to_mapping)
    text = remove_special_characters(text, chars_to_ignore_regex)
    batch["sentence"] = text
    return batch

def speech_file_to_array_fn(batch):
    # Load the audio file and resample it to the 16 kHz rate the model expects.
    speech_array, sampling_rate = torchaudio.load(batch["path"])
    speech_array = speech_array.squeeze().numpy()
    # Keyword arguments: recent librosa releases no longer accept the rates positionally.
    speech_array = librosa.resample(np.asarray(speech_array), orig_sr=sampling_rate, target_sr=16_000)
    batch["speech"] = speech_array
    return batch

def predict(batch):
    features = processor(batch["speech"], sampling_rate=16_000, return_tensors="pt", padding=True)
    input_values = features.input_values.to(device)
    attention_mask = features.attention_mask.to(device)
    with torch.no_grad():
        logits = model(input_values, attention_mask=attention_mask).logits
    pred_ids = torch.argmax(logits, dim=-1)
    batch["predicted"] = processor.batch_decode(pred_ids)[0]
    return batch

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

processor = Wav2Vec2Processor.from_pretrained("m3hrdadfi/wav2vec2-large-xlsr-persian")

model = Wav2Vec2ForCTC.from_pretrained("m3hrdadfi/wav2vec2-large-xlsr-persian").to(device)

dataset = load_dataset("common_voice", "fa", split="test")

dataset = dataset.map(

normalizer,

fn_kwargs={"chars_to_ignore": chars_to_ignore, "chars_to_mapping": chars_to_mapping},

remove_columns=list(set(dataset.column_names) - set(['sentence', 'path']))

)

dataset = dataset.map(speech_file_to_array_fn)

result = dataset.map(predict)

wer = load_metric("wer")

print("WER: {:.2f}".format(100 * wer.compute(predictions=result["predicted"], references=result["sentence"])))

```

**Test Result:**

- WER: 32.20%
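For reference, word error rate is the word-level edit distance between prediction and reference, divided by the number of reference words. The sketch below is a pure-Python illustration for a single utterance, not the implementation behind `load_metric("wer")`, which aggregates over the whole test set. The sentence pair is taken from the sample output earlier in this card.

```python
def word_error_rate(reference, prediction):
    """Word-level Levenshtein distance divided by the number of reference words."""
    ref_words = reference.split()
    hyp_words = prediction.split()
    # previous_row[j]: edit distance between the reference prefix seen so far and hyp_words[:j].
    previous_row = list(range(len(hyp_words) + 1))
    for i, ref_word in enumerate(ref_words, start=1):
        current_row = [i]
        for j, hyp_word in enumerate(hyp_words, start=1):
            substitution = previous_row[j - 1] + (ref_word != hyp_word)
            deletion = previous_row[j] + 1
            insertion = current_row[j - 1] + 1
            current_row.append(min(substitution, deletion, insertion))
        previous_row = current_row
    # Assumes a non-empty reference.
    return previous_row[-1] / len(ref_words)

reference = "من سفر کردن را دوست دارم"
predicted = "من سفر کردم را دوست دارم"
utterance_wer = word_error_rate(reference, predicted)
```

This pair differs in one word out of six, so `utterance_wer` is 1/6.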

## Training

The Common Voice `train` and `validation` splits were used for training.

The script used for training can be found [here](https://colab.research.google.com/github/m3hrdadfi/notebooks/blob/main/Fine_Tune_XLSR_Wav2Vec2_on_Persian_ASR_with_%F0%9F%A4%97_Transformers_ipynb.ipynb)

|

m3hrdadfi/wav2vec2-large-xlsr-lithuanian

|

m3hrdadfi

| 2021-11-04T15:22:08Z | 5 | 2 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"wav2vec2",

"automatic-speech-recognition",

"audio",

"speech",

"xlsr-fine-tuning-week",

"lt",

"dataset:common_voice",

"license:apache-2.0",

"model-index",

"endpoints_compatible",

"region:us"

] |

automatic-speech-recognition

| 2022-03-02T23:29:05Z |

---

language: lt

datasets:

- common_voice

tags:

- audio

- automatic-speech-recognition

- speech

- xlsr-fine-tuning-week

license: apache-2.0

widget:
- example_title: Common Voice sample 11
  src: https://huggingface.co/m3hrdadfi/wav2vec2-large-xlsr-lithuanian/resolve/main/sample11.flac
- example_title: Common Voice sample 74
  src: https://huggingface.co/m3hrdadfi/wav2vec2-large-xlsr-lithuanian/resolve/main/sample74.flac
model-index:
- name: XLSR Wav2Vec2 Lithuanian by Mehrdad Farahani
  results:
  - task:
      name: Speech Recognition
      type: automatic-speech-recognition
    dataset:
      name: Common Voice lt
      type: common_voice
      args: lt
    metrics:
    - name: Test WER
      type: wer
      value: 34.66

---

# Wav2Vec2-Large-XLSR-53-Lithuanian

Fine-tuned [facebook/wav2vec2-large-xlsr-53](https://huggingface.co/facebook/wav2vec2-large-xlsr-53) in Lithuanian using [Common Voice](https://huggingface.co/datasets/common_voice). When using this model, make sure that your speech input is sampled at 16kHz.

## Usage

The model can be used directly (without a language model) as follows:

**Requirements**

```bash

# required packages (the leading "!" is notebook syntax; omit it in a regular shell)

!pip install git+https://github.com/huggingface/datasets.git

!pip install git+https://github.com/huggingface/transformers.git

!pip install torchaudio

!pip install librosa

!pip install jiwer

```

**Normalizer**

```bash

!wget -O normalizer.py https://huggingface.co/m3hrdadfi/wav2vec2-large-xlsr-lithuanian/raw/main/normalizer.py

```

**Prediction**

```python

import librosa

import torch

import torchaudio

from transformers import Wav2Vec2ForCTC, Wav2Vec2Processor

from datasets import load_dataset

import numpy as np

import re

import string

import IPython.display as ipd

from normalizer import normalizer

def speech_file_to_array_fn(batch):
    # Load the audio file and resample it to the 16 kHz rate the model expects.
    speech_array, sampling_rate = torchaudio.load(batch["path"])
    speech_array = speech_array.squeeze().numpy()
    # Keyword arguments: recent librosa releases no longer accept the rates positionally.
    speech_array = librosa.resample(np.asarray(speech_array), orig_sr=sampling_rate, target_sr=16_000)
    batch["speech"] = speech_array
    return batch

def predict(batch):
    features = processor(batch["speech"], sampling_rate=16_000, return_tensors="pt", padding=True)
    input_values = features.input_values.to(device)
    attention_mask = features.attention_mask.to(device)
    with torch.no_grad():
        logits = model(input_values, attention_mask=attention_mask).logits
    pred_ids = torch.argmax(logits, dim=-1)
    batch["predicted"] = processor.batch_decode(pred_ids)[0]
    return batch

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

processor = Wav2Vec2Processor.from_pretrained("m3hrdadfi/wav2vec2-large-xlsr-lithuanian")

model = Wav2Vec2ForCTC.from_pretrained("m3hrdadfi/wav2vec2-large-xlsr-lithuanian").to(device)

dataset = load_dataset("common_voice", "lt", split="test[:1%]")

dataset = dataset.map(

normalizer,

fn_kwargs={"remove_extra_space": True},

remove_columns=list(set(dataset.column_names) - set(['sentence', 'path']))

)

dataset = dataset.map(speech_file_to_array_fn)

result = dataset.map(predict)

max_items = np.random.randint(0, len(result), 20).tolist()

for i in max_items:
    reference, predicted = result["sentence"][i], result["predicted"][i]
    print("reference:", reference)
    print("predicted:", predicted)
    print('---')

```

**Output:**

```text

reference: jos tikslas buvo rasti kelią į ramųjį vandenyną šiaurės amerikoje

predicted: jos tikstas buvo rasikelia į ramų į vandenyna šiaurės amerikoje

---

reference: pietrytinėje dalyje likusių katalikų kapinių teritorija po antrojo pasaulinio karo dar padidėjo

predicted: pietrytinė daljelikusių gatalikų kapinių teritoriją pontro pasaulnio karo dar padidėjo

---

reference: koplyčioje pakabintas aušros vartų marijos paveikslas

predicted: koplyčioje pakagintas aušos fortų marijos paveikslas

---

reference: yra politinių debatų vedėjas

predicted: yra politinių debatų vedėjas

---

reference: žmogui taip pat gali būti mirtinai pavojingi

predicted: žmogui taip pat gali būti mirtinai pavojingi

---

reference: tuo pačiu metu kijeve nuverstas netekęs vokietijos paramos skoropadskis

predicted: tuo pačiu metu kiei venų verstas netekės vokietijos paramos kropadskis

---

reference: visos dvylika komandų tarpusavyje sužaidžia po dvi rungtynes

predicted: visos dvylika komandų tarpuso vysų žaidžia po dvi rungtynės

---

reference: kaukazo regioną sudaro kaukazo kalnai ir gretimos žemumos

predicted: kau kazo regioną sudaro kaukazo kalnai ir gretimos žemumus

---

reference: tarptautinių ir rusiškų šaškių kandidatas į sporto meistrus

predicted: tarptautinio ir rusiškos šaškių kandidatus į sporto meistrus

---

reference: prasideda putorano plynaukštės pietiniame pakraštyje

predicted: prasideda futorano prynaukštės pietiniame pakraštyje

---

reference: miestas skirstomas į senamiestį ir naujamiestį

predicted: miestas skirstomas į senamėsti ir naujamiestė

---

reference: tais pačiais metais pelnė bronzą pasaulio taurės kolumbijos etape komandinio sprinto rungtyje

predicted: tais pačiais metais pelnį mronsa pasaulio taurės kolumbijos etape komandinio sprento rungtyje

---

reference: prasideda putorano plynaukštės pietiniame pakraštyje

predicted: prasideda futorano prynaukštės pietiniame pakraštyje

---

reference: moterų tarptautinės meistrės vardas yra viena pakopa žemesnis už moterų tarptautinės korespondencinių šachmatų didmeistrės

predicted: moterų tarptautinės meistrės vardas yra gana pakopo žymesnis už moterų tarptautinės kūrespondencinių šachmatų didmesčias

---

reference: teritoriją dengia tropinės džiunglės

predicted: teritorija dengia tropinės žiunglės

---

reference: pastaroji dažnai pereina į nimcovičiaus gynybą arba bogoliubovo gynybą

predicted: pastaruoji dažnai pereina nimcovičiaus gynyba arba bogalių buvo gymyba

---

reference: už tai buvo suimtas ir tris mėnesius sėdėjo butyrkų kalėjime

predicted: užtai buvo sujumtas ir tris mėne susiedėjo butirkų kalėjime

---

reference: tai didžiausias pagal gyventojų skaičių regionas

predicted: tai didžiausias pagal gyventojų skaičių redionus

---

reference: vilkyškių miške taip pat auga raganų eglė

predicted: vilkiškimiškė taip pat auga ragano eglė

---

reference: kitas gavo skaraitiškės dvarą su palivarkais

predicted: kitas gavos karaitiškės dvarą spolivarkais

---

```
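The listing above shows the `prasideda putorano ...` pair twice, which is what one would expect when `np.random.randint` draws indices with replacement. If distinct examples are preferred, the indices can be drawn without replacement. The sketch below uses the standard-library `random` module, and `pick_distinct_indices` is a hypothetical helper name.

```python
import random

def pick_distinct_indices(n_items, k, seed=None):
    """Return up to k distinct indices from range(n_items)."""
    rng = random.Random(seed)
    # Cap k so that small evaluation subsets do not raise an error.
    return rng.sample(range(n_items), min(k, n_items))

indices = pick_distinct_indices(n_items=50, k=20, seed=0)
```

In the prediction script this would take the place of the `np.random.randint(0, len(result), 20)` call, with `n_items=len(result)`.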

## Evaluation

The model can be evaluated as follows on the test data of Common Voice.

```python

import librosa

import torch

import torchaudio

from transformers import Wav2Vec2ForCTC, Wav2Vec2Processor

from datasets import load_dataset, load_metric

import numpy as np

import re

import string

from normalizer import normalizer

def speech_file_to_array_fn(batch):
    # Load the audio file and resample it to the 16 kHz rate the model expects.
    speech_array, sampling_rate = torchaudio.load(batch["path"])
    speech_array = speech_array.squeeze().numpy()
    # Keyword arguments: recent librosa releases no longer accept the rates positionally.
    speech_array = librosa.resample(np.asarray(speech_array), orig_sr=sampling_rate, target_sr=16_000)
    batch["speech"] = speech_array
    return batch

def predict(batch):
    features = processor(batch["speech"], sampling_rate=16_000, return_tensors="pt", padding=True)
    input_values = features.input_values.to(device)
    attention_mask = features.attention_mask.to(device)
    with torch.no_grad():
        logits = model(input_values, attention_mask=attention_mask).logits
    pred_ids = torch.argmax(logits, dim=-1)
    batch["predicted"] = processor.batch_decode(pred_ids)[0]
    return batch

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

processor = Wav2Vec2Processor.from_pretrained("m3hrdadfi/wav2vec2-large-xlsr-lithuanian")

model = Wav2Vec2ForCTC.from_pretrained("m3hrdadfi/wav2vec2-large-xlsr-lithuanian").to(device)

dataset = load_dataset("common_voice", "lt", split="test")

dataset = dataset.map(

normalizer,

fn_kwargs={"remove_extra_space": True},

remove_columns=list(set(dataset.column_names) - set(['sentence', 'path']))

)

dataset = dataset.map(speech_file_to_array_fn)

result = dataset.map(predict)

wer = load_metric("wer")

print("WER: {:.2f}".format(100 * wer.compute(predictions=result["predicted"], references=result["sentence"])))

```


**Test Result**:

- WER: 34.66%

## Training & Report

The Common Voice `train` and `validation` splits were used for training.

You can see the training report [here](https://wandb.ai/m3hrdadfi/wav2vec2_large_xlsr_lt/reports/Fine-Tuning-for-Wav2Vec2-Large-XLSR-53-Lithuanian--Vmlldzo1OTM1MTU?accessToken=kdkpara4hcmjvrlpbfsnu4s8cdk3a0xeyrb84ycpr4k701n13hzr9q7s60b00swx)

The script used for training can be found [here](https://colab.research.google.com/github/m3hrdadfi/notebooks/blob/main/Fine_Tune_XLSR_Wav2Vec2_on_Lithuanian_ASR_with_%F0%9F%A4%97_Transformers_ipynb.ipynb)

## Questions?

Post a GitHub issue on the [Wav2Vec](https://github.com/m3hrdadfi/wav2vec) repo.

|

m3hrdadfi/wav2vec2-large-xlsr-icelandic

|

m3hrdadfi

| 2021-11-04T15:22:07Z | 15 | 1 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"wav2vec2",

"automatic-speech-recognition",

"audio",

"speech",

"xlsr-fine-tuning-week",

"is",

"dataset:malromur",

"license:apache-2.0",

"model-index",

"endpoints_compatible",

"region:us"

] |

automatic-speech-recognition

| 2022-03-02T23:29:05Z |

---

language: is

datasets:

- malromur

tags:

- audio

- automatic-speech-recognition

- speech

- xlsr-fine-tuning-week

license: apache-2.0

widget:

- example_title: Malromur sample 1608

src: https://huggingface.co/m3hrdadfi/wav2vec2-large-xlsr-icelandic/resolve/main/sample1608.flac

- example_title: Malromur sample 3860

src: https://huggingface.co/m3hrdadfi/wav2vec2-large-xlsr-icelandic/resolve/main/sample3860.flac

model-index:

- name: XLSR Wav2Vec2 Icelandic by Mehrdad Farahani

results:

- task:

name: Speech Recognition

type: automatic-speech-recognition

dataset:

name: Malromur is

type: malromur

args: is

metrics:

- name: Test WER

type: wer

value: 09.21

---

# Wav2Vec2-Large-XLSR-53-Icelandic

Fine-tuned [facebook/wav2vec2-large-xlsr-53](https://huggingface.co/facebook/wav2vec2-large-xlsr-53) in Icelandic using [Malromur](https://clarin.is/en/resources/malromur/). When using this model, make sure that your speech input is sampled at 16kHz.
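Before running inference it can help to confirm the sampling rate of an input file. The following is a minimal sketch using only the Python standard library; it handles WAV files only, and the helper names (`wav_sample_rate`, `needs_resampling`, `make_silent_wav`) are hypothetical and not part of this model's code.

```python
import io
import wave

TARGET_RATE = 16_000  # sampling rate the model card asks for


def wav_sample_rate(source):
    """Return the sampling rate (Hz) stored in a WAV file's header."""
    with wave.open(source, "rb") as wav_file:
        return wav_file.getframerate()


def needs_resampling(source, target_rate=TARGET_RATE):
    """True when the file's rate differs from the rate the model expects."""
    return wav_sample_rate(source) != target_rate


def make_silent_wav(rate, seconds=1):
    """Build an in-memory mono 16-bit WAV of silence (demonstration only)."""
    buffer = io.BytesIO()
    with wave.open(buffer, "wb") as wav_file:
        wav_file.setnchannels(1)
        wav_file.setsampwidth(2)
        wav_file.setframerate(rate)
        wav_file.writeframes(b"\x00\x00" * rate * seconds)
    buffer.seek(0)
    return buffer


print(needs_resampling(make_silent_wav(16_000)))  # False
print(needs_resampling(make_silent_wav(44_100)))  # True
```

Files that need resampling can then be passed through the `speech_file_to_array_fn` helper shown in the Usage section, which resamples to 16 kHz.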

## Usage

The model can be used directly (without a language model) as follows:

**Requirements**

```bash

# requirement packages

!pip install git+https://github.com/huggingface/datasets.git

!pip install git+https://github.com/huggingface/transformers.git

!pip install torchaudio

!pip install librosa

!pip install jiwer

!pip install num2words

```

**Normalizer**

```bash

# num2word packages

# Original source: https://github.com/savoirfairelinux/num2words

!mkdir -p ./num2words

!wget -O num2words/__init__.py https://huggingface.co/m3hrdadfi/wav2vec2-large-xlsr-icelandic/raw/main/num2words/__init__.py

!wget -O num2words/base.py https://huggingface.co/m3hrdadfi/wav2vec2-large-xlsr-icelandic/raw/main/num2words/base.py

!wget -O num2words/compat.py https://huggingface.co/m3hrdadfi/wav2vec2-large-xlsr-icelandic/raw/main/num2words/compat.py

!wget -O num2words/currency.py https://huggingface.co/m3hrdadfi/wav2vec2-large-xlsr-icelandic/raw/main/num2words/currency.py

!wget -O num2words/lang_EU.py https://huggingface.co/m3hrdadfi/wav2vec2-large-xlsr-icelandic/raw/main/num2words/lang_EU.py

!wget -O num2words/lang_IS.py https://huggingface.co/m3hrdadfi/wav2vec2-large-xlsr-icelandic/raw/main/num2words/lang_IS.py

!wget -O num2words/utils.py https://huggingface.co/m3hrdadfi/wav2vec2-large-xlsr-icelandic/raw/main/num2words/utils.py

# Malromur_test selected based on gender and age

!wget -O malromur_test.csv https://huggingface.co/m3hrdadfi/wav2vec2-large-xlsr-icelandic/raw/main/malromur_test.csv

# Normalizer

!wget -O normalizer.py https://huggingface.co/m3hrdadfi/wav2vec2-large-xlsr-icelandic/raw/main/normalizer.py

```

**Prediction**

```python

import librosa

import torch

import torchaudio

from transformers import Wav2Vec2ForCTC, Wav2Vec2Processor

from datasets import load_dataset

import numpy as np

import re

import string

import IPython.display as ipd

from normalizer import Normalizer

normalizer = Normalizer(lang="is")

def speech_file_to_array_fn(batch):
    # Load the audio file and resample it to the 16 kHz rate the model expects.
    speech_array, sampling_rate = torchaudio.load(batch["path"])
    speech_array = speech_array.squeeze().numpy()
    # Keyword arguments keep this call valid on librosa releases where they are keyword-only.
    speech_array = librosa.resample(np.asarray(speech_array), orig_sr=sampling_rate, target_sr=16_000)
    batch["speech"] = speech_array
    return batch

def predict(batch):
    # Greedy decoding: pick the most likely token per frame, then decode the ids to text.
    features = processor(batch["speech"], sampling_rate=16_000, return_tensors="pt", padding=True)
    input_values = features.input_values.to(device)
    attention_mask = features.attention_mask.to(device)
    with torch.no_grad():
        logits = model(input_values, attention_mask=attention_mask).logits
    pred_ids = torch.argmax(logits, dim=-1)
    batch["predicted"] = processor.batch_decode(pred_ids)
    return batch

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

processor = Wav2Vec2Processor.from_pretrained("m3hrdadfi/wav2vec2-large-xlsr-icelandic")

model = Wav2Vec2ForCTC.from_pretrained("m3hrdadfi/wav2vec2-large-xlsr-icelandic").to(device)

dataset = load_dataset("csv", data_files={"test": "./malromur_test.csv"})["test"]

dataset = dataset.map(
    normalizer,
    fn_kwargs={"do_lastspace_removing": True, "text_key_name": "cleaned_sentence"},
    remove_columns=list(set(dataset.column_names) - set(['cleaned_sentence', 'path']))
)

dataset = dataset.map(speech_file_to_array_fn)

result = dataset.map(predict, batched=True, batch_size=8)

max_items = np.random.randint(0, len(result), 20).tolist()

for i in max_items:
    reference, predicted = result["cleaned_sentence"][i], result["predicted"][i]
    print("reference:", reference)
    print("predicted:", predicted)
    print('---')

```

**Output:**

```text

reference: eða eitthvað annað dýr

predicted: eða eitthvað annað dýr

---

reference: oddgerður

predicted: oddgerður

---

reference: eiðný

predicted: eiðný

---

reference: löndum

predicted: löndum

---

reference: tileinkaði bróður sínum markið

predicted: tileinkaði bróður sínum markið

---

reference: þetta er svo mikill hégómi

predicted: þetta er svo mikill hégómi

---

reference: timarit is

predicted: timarit is

---

reference: stefna strax upp aftur

predicted: stefna strax upp aftur

---

reference: brekkuflöt

predicted: brekkuflöt

---

reference: áætlunarferð frestað vegna veðurs

predicted: áætluna ferð frestað vegna veðurs

---

reference: sagði af sér vegna kláms

predicted: sagði af sér vegni kláms

---

reference: grímúlfur

predicted: grímúlgur

---

reference: lýsti sig saklausan

predicted: lýsti sig saklausan

---

reference: belgingur is

predicted: belgingur is

---

reference: sambía

predicted: sambía

---

reference: geirastöðum

predicted: geirastöðum

---

reference: varð tvisvar fyrir eigin bíl

predicted: var tvisvar fyrir eigin bíl

---

reference: reykjavöllum

predicted: reykjavöllum

---

reference: miklir menn eru þeir þremenningar

predicted: miklir menn eru þeir þremenningar

---

reference: handverkoghonnun is

predicted: handverkoghonnun is

---

```
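The `predict` function above decodes without a language model: it takes the arg-max token for every frame and lets the processor turn the ids into text. A rough sketch of that greedy CTC step in plain Python follows. The toy vocabulary and the blank id are assumptions for illustration (the real vocabulary ships with the processor), and word-delimiter handling is left out.

```python
from itertools import groupby

# Hypothetical toy vocabulary; id 0 is assumed to be the CTC blank token.
BLANK_ID = 0
ID_TO_CHAR = {1: "l", 2: "ö", 3: "n", 4: "d", 5: "u", 6: "m"}


def argmax(row):
    """Index of the largest value in a list of scores."""
    return max(range(len(row)), key=row.__getitem__)


def greedy_ctc_decode(logits, id_to_char=ID_TO_CHAR, blank_id=BLANK_ID):
    """Arg-max per frame, collapse consecutive repeats, then drop blanks."""
    frame_ids = [argmax(frame) for frame in logits]
    collapsed = [token for token, _ in groupby(frame_ids)]
    return "".join(id_to_char[t] for t in collapsed if t != blank_id)


def one_hot(index, size=7):
    """Fake per-frame logits that put all the mass on one token."""
    return [1.0 if i == index else 0.0 for i in range(size)]


frames = [1, 1, 0, 2, 3, 3, 0, 4, 5, 5, 6]
logits = [one_hot(i) for i in frames]
print(greedy_ctc_decode(logits))  # löndum
```

The blank token is what allows a doubled letter to survive: two identical ids separated by a blank are kept as two characters, while two adjacent identical ids collapse into one.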

## Evaluation

The model can be evaluated as follows on the test data of Malromur.

```python

import librosa

import torch

import torchaudio

from transformers import Wav2Vec2ForCTC, Wav2Vec2Processor

from datasets import load_dataset, load_metric

import numpy as np

import re

import string

from normalizer import Normalizer

normalizer = Normalizer(lang="is")

def speech_file_to_array_fn(batch):
    # Load the audio file and resample it to the 16 kHz rate the model expects.
    speech_array, sampling_rate = torchaudio.load(batch["path"])
    speech_array = speech_array.squeeze().numpy()
    # Keyword arguments keep this call valid on librosa releases where they are keyword-only.
    speech_array = librosa.resample(np.asarray(speech_array), orig_sr=sampling_rate, target_sr=16_000)
    batch["speech"] = speech_array
    return batch

def predict(batch):
    # Greedy decoding: pick the most likely token per frame, then decode the ids to text.
    features = processor(batch["speech"], sampling_rate=16_000, return_tensors="pt", padding=True)
    input_values = features.input_values.to(device)
    attention_mask = features.attention_mask.to(device)
    with torch.no_grad():
        logits = model(input_values, attention_mask=attention_mask).logits
    pred_ids = torch.argmax(logits, dim=-1)
    batch["predicted"] = processor.batch_decode(pred_ids)
    return batch

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

processor = Wav2Vec2Processor.from_pretrained("m3hrdadfi/wav2vec2-large-xlsr-icelandic")

model = Wav2Vec2ForCTC.from_pretrained("m3hrdadfi/wav2vec2-large-xlsr-icelandic").to(device)

dataset = load_dataset("csv", data_files={"test": "./malromur_test.csv"})["test"]

dataset = dataset.map(
    normalizer,
    fn_kwargs={"do_lastspace_removing": True, "text_key_name": "cleaned_sentence"},
    remove_columns=list(set(dataset.column_names) - set(['cleaned_sentence', 'path']))
)

dataset = dataset.map(speech_file_to_array_fn)

result = dataset.map(predict, batched=True, batch_size=8)

wer = load_metric("wer")

print("WER: {:.2f}".format(100 * wer.compute(predictions=result["predicted"], references=result["cleaned_sentence"])))

```

**Test Result**:

- WER: 09.21%
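For readers unfamiliar with the metric, word error rate is the number of word-level edits (substitutions, insertions, deletions) divided by the number of reference words, summed over the whole test set. The sketch below is a plain-Python stand-in for illustration, not the implementation behind `load_metric("wer")`; the three sentence pairs are taken from the sample output in the Usage section.

```python
def edit_distance(ref_words, hyp_words):
    """Word-level Levenshtein distance between two token lists."""
    previous = list(range(len(hyp_words) + 1))
    for i, ref_word in enumerate(ref_words, start=1):
        current = [i]
        for j, hyp_word in enumerate(hyp_words, start=1):
            cost = 0 if ref_word == hyp_word else 1
            current.append(min(
                previous[j] + 1,         # deletion
                current[j - 1] + 1,      # insertion
                previous[j - 1] + cost,  # match or substitution
            ))
        previous = current
    return previous[-1]


def corpus_wer(references, predictions):
    """Total word edits divided by total reference words."""
    errors = sum(
        edit_distance(ref.split(), hyp.split())
        for ref, hyp in zip(references, predictions)
    )
    total_words = sum(len(ref.split()) for ref in references)
    return errors / total_words


references = [
    "sagði af sér vegna kláms",
    "áætlunarferð frestað vegna veðurs",
    "löndum",
]
predictions = [
    "sagði af sér vegni kláms",
    "áætluna ferð frestað vegna veðurs",
    "löndum",
]
print("WER: {:.2f}".format(100 * corpus_wer(references, predictions)))  # WER: 30.00
```

Here the first pair contributes one substitution, the second one substitution plus one insertion, and the third none, giving 3 errors over 10 reference words.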

## Training & Report

The Malromur `train` and `validation` splits were used for training.

You can see the training states [here](https://wandb.ai/m3hrdadfi/wav2vec2_large_xlsr_is/reports/Fine-Tuning-for-Wav2Vec2-Large-XLSR-Icelandic--Vmlldzo2Mjk3ODc?accessToken=j7neoz71mce1fkzt0bch4j0l50witnmme07xe90nvs769kjjtbwneu2wfz3oip16)

The script used for training can be found [here](https://colab.research.google.com/github/m3hrdadfi/notebooks/blob/main/Fine_Tune_XLSR_Wav2Vec2_on_Icelandic_ASR_with_%F0%9F%A4%97_Transformers_ipynb.ipynb)

## Questions?

Open a GitHub issue on the [Wav2Vec](https://github.com/m3hrdadfi/wav2vec) repo.

|

m3hrdadfi/wav2vec2-large-xlsr-georgian

|

m3hrdadfi

| 2021-11-04T15:22:05Z | 67 | 5 |

transformers

|

[

"transformers",

"pytorch",

"jax",

"wav2vec2",

"automatic-speech-recognition",

"audio",

"speech",

"xlsr-fine-tuning-week",

"ka",

"dataset:common_voice",

"license:apache-2.0",

"model-index",

"endpoints_compatible",

"region:us"

] |

automatic-speech-recognition

| 2022-03-02T23:29:05Z |

---

language: ka

datasets:

- common_voice

tags:

- audio

- automatic-speech-recognition

- speech

- xlsr-fine-tuning-week

license: apache-2.0

widget:

- example_title: Common Voice sample 566

src: https://huggingface.co/m3hrdadfi/wav2vec2-large-xlsr-georgian/resolve/main/sample566.flac

- example_title: Common Voice sample 95

src: https://huggingface.co/m3hrdadfi/wav2vec2-large-xlsr-georgian/resolve/main/sample95.flac

model-index:

- name: XLSR Wav2Vec2 Georgian by Mehrdad Farahani

results:

- task:

name: Speech Recognition

type: automatic-speech-recognition

dataset:

name: Common Voice ka

type: common_voice

args: ka

metrics:

- name: Test WER

type: wer

value: 43.86

---

# Wav2Vec2-Large-XLSR-53-Georgian

Fine-tuned [facebook/wav2vec2-large-xlsr-53](https://huggingface.co/facebook/wav2vec2-large-xlsr-53) in Georgian using [Common Voice](https://huggingface.co/datasets/common_voice). When using this model, make sure that your speech input is sampled at 16kHz.

## Usage

The model can be used directly (without a language model) as follows:

**Requirements**

```bash

# requirement packages

!pip install git+https://github.com/huggingface/datasets.git

!pip install git+https://github.com/huggingface/transformers.git

!pip install torchaudio

!pip install librosa

!pip install jiwer

```

**Normalizer**

```bash

!wget -O normalizer.py https://huggingface.co/m3hrdadfi/wav2vec2-large-xlsr-lithuanian/raw/main/normalizer.py

```

**Prediction**

```python

import librosa

import torch

import torchaudio

from transformers import Wav2Vec2ForCTC, Wav2Vec2Processor

from datasets import load_dataset

import numpy as np

import re

import string

import IPython.display as ipd

from normalizer import normalizer

def speech_file_to_array_fn(batch):
    # Load the audio file and resample it to the 16 kHz rate the model expects.
    speech_array, sampling_rate = torchaudio.load(batch["path"])
    speech_array = speech_array.squeeze().numpy()
    # Keyword arguments keep this call valid on librosa releases where they are keyword-only.
    speech_array = librosa.resample(np.asarray(speech_array), orig_sr=sampling_rate, target_sr=16_000)
    batch["speech"] = speech_array
    return batch

def predict(batch):
    # Called once per example (no batching), so only the first decoded string is kept.
    features = processor(batch["speech"], sampling_rate=16_000, return_tensors="pt", padding=True)
    input_values = features.input_values.to(device)
    attention_mask = features.attention_mask.to(device)
    with torch.no_grad():
        logits = model(input_values, attention_mask=attention_mask).logits
    pred_ids = torch.argmax(logits, dim=-1)
    batch["predicted"] = processor.batch_decode(pred_ids)[0]
    return batch

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

processor = Wav2Vec2Processor.from_pretrained("m3hrdadfi/wav2vec2-large-xlsr-georgian")

model = Wav2Vec2ForCTC.from_pretrained("m3hrdadfi/wav2vec2-large-xlsr-georgian").to(device)

dataset = load_dataset("common_voice", "ka", split="test[:1%]")

dataset = dataset.map(
    normalizer,
    fn_kwargs={"remove_extra_space": True},
    remove_columns=list(set(dataset.column_names) - set(['sentence', 'path']))
)

dataset = dataset.map(speech_file_to_array_fn)

result = dataset.map(predict)

max_items = np.random.randint(0, len(result), 20).tolist()

for i in max_items:
    reference, predicted = result["sentence"][i], result["predicted"][i]
    print("reference:", reference)
    print("predicted:", predicted)
    print('---')

```

**Output:**

```text

reference: პრეზიდენტობისას ბუში საქართველოს და უკრაინის დემოკრატიულ მოძრაობების და ნატოში გაწევრიანების აქტიური მხარდამჭერი იყო

predicted: პრეზიდენტო ვისას ბუში საქართველოს და უკრაინის დემოკრატიულ მოძრაობების და ნატიში დაწევრიანების აქტიური მხარდამჭერი იყო

---

reference: შესაძლებელია მისი დამონება და მსახურ დემონად გადაქცევა

predicted: შესაძლებელია მისი დამონებათ და მსახურდემანად გადაქცევა

---

reference: ეს გამოსახულებები აღბეჭდილი იყო მოსკოვის დიდი მთავრებისა და მეფეების ბეჭდებზე

predicted: ეს გამოსახულებები აღბეჭდილი იყო მოსკოვის დიდი მთავრებისა და მეფეების ბეჭდებზე

---

reference: ჯოლიმ ოქროს გლობუსისა და კინომსახიობთა გილდიის ნომინაციები მიიღო

predicted: ჯოლი მოქროს გლობუსისა და კინამსახიობთა გილდიის ნომინაციები მიიღო

---

reference: შემდგომში საქალაქო ბიბლიოთეკა სარაიონო ბიბლიოთეკად გადაკეთდა გაიზარდა წიგნადი ფონდი

predicted: შემდღომში საქალაქო ბიბლიოთეკა სარაიონო ბიბლიოთეკად გადაკეთა გაიზარდა წიგნადი ფოვდი

---

reference: აბრამსი დაუკავშირდა მირანდას და ორი თვის განმავლობაში ისინი მუშაობდნენ აღნიშნული სცენის თანმხლებ მელოდიაზე

predicted: აბრამში და უკავშირდა მირანდეს და ორითვის განმავლობაში ისინი მუშაობდნენა აღნიშნულის ჩენის მთამხლევით მელოდიაში

---

reference: ამჟამად თემთა პალატის ოპოზიციის ლიდერია ლეიბორისტული პარტიის ლიდერი ჯერემი კორბინი

predicted: ამჟამად თემთა პალატის ოპოზიციის ლიდერია ლეიბურისტული პარტიის ლიდერი ჯერემი კორვინი

---

reference: ორი

predicted: ორი

---

reference: მას შემდეგ იგი კოლექტივის მუდმივი წევრია

predicted: მას შემდეგ იგი კოლექტივის ფუდ მივი წევრია

---

reference: აზერბაიჯანულ ფილოსოფიას შეიძლება მივაკუთვნოთ რუსეთის საზოგადო მოღვაწე ჰეიდარ ჯემალი

predicted: აზერგვოიჯანალ ფილოსოფიას შეიძლება მივაკუთვნოთ რუსეთის საზოგადო მოღვაწე ჰეიდარ ჯემალი

---

reference: ბრონქსში ჯერომის ავენიუ ჰყოფს გამჭოლ ქუჩებს აღმოსავლეთ და დასავლეთ ნაწილებად

predicted: რონგში დერომიწ ავენილ პოფს გამ დოლფურქებს აღმოსავლეთ და დასავლეთ ნაწილებად

---

reference: ჰაერი არის ჟანგბადის ის ძირითადი წყარო რომელსაც საჭიროებს ყველა ცოცხალი ორგანიზმი

predicted: არი არის ჯამუბადესის ძირითადი წყარო რომელსაც საჭიროოებს ყველა ცოცხალი ორგანიზმი

---

reference: ჯგუფი უმეტესწილად ასრულებს პოპმუსიკის ჟანრის სიმღერებს

predicted: ჯგუფიუმეტესწევად ასრულებს პოპნუსიკის ჟანრის სიმრერებს

---

reference: ბაბილინა მუდმივად ცდილობდა შესაძლებლობების ფარგლებში მიეღო ცოდნა და ახალი ინფორმაცია

predicted: ბაბილინა მუდმივა ცდილობდა შესაძლებლობების ფარგლებში მიიღო ცოტნა და ახალი ინფორმაცია

---

reference: მრევლის რწმენით რომელი ჯგუფიც გაიმარჯვებდა მთელი წლის მანძილზე სიუხვე და ბარაქა არ მოაკლდებოდა

predicted: მრევრის რწმენით რომელიჯგუფის გაიმარჯვებდა მთელიჭლის მანძილზა სიუყვეტაბარაქა არ მოაკლდებოდა

---

reference: ნინო ჩხეიძეს განსაკუთრებული ღვაწლი მიუძღვის ქუთაისისა და რუსთაველის თეატრების შემოქმედებით ცხოვრებაში

predicted: მინო ჩხეიძეს განსაკუთრებული ღოვაწლი მიოცხვის ქუთაისისა და რუსთაველის თეატრების შემოქმედებით ცხოვრებაში

---

reference: იგი სამი დიალექტისგან შედგება

predicted: იგი სამი დიალეთის გან შედგება

---

reference: ფორმით სირაქლემებს წააგვანან

predicted: ომიცი რაქლემებს ააგვანამ

---

reference: დანი დაიბადა კოლუმბუსში ოჰაიოში

predicted: დონი დაიბაოდა კოლუმბუსში ოხვაიოში

---

reference: მშენებლობისათვის გამოიყო ადგილი ყოფილი აეროპორტის რაიონში

predicted: შენებლობისათვის გამოიყო ადგილი ყოფილი აეროპორტის რაიონში

---

```

## Evaluation

The model can be evaluated as follows on the Georgian test data of Common Voice.

```python

import librosa

import torch

import torchaudio

from transformers import Wav2Vec2ForCTC, Wav2Vec2Processor

from datasets import load_dataset, load_metric

import numpy as np

import re

import string

from normalizer import normalizer

def speech_file_to_array_fn(batch):
    # Load the audio file and resample it to the 16 kHz rate the model expects.
    speech_array, sampling_rate = torchaudio.load(batch["path"])
    speech_array = speech_array.squeeze().numpy()
    # Keyword arguments keep this call valid on librosa releases where they are keyword-only.
    speech_array = librosa.resample(np.asarray(speech_array), orig_sr=sampling_rate, target_sr=16_000)
    batch["speech"] = speech_array
    return batch

def predict(batch):
    # Called once per example (no batching), so only the first decoded string is kept.
    features = processor(batch["speech"], sampling_rate=16_000, return_tensors="pt", padding=True)
    input_values = features.input_values.to(device)
    attention_mask = features.attention_mask.to(device)
    with torch.no_grad():
        logits = model(input_values, attention_mask=attention_mask).logits
    pred_ids = torch.argmax(logits, dim=-1)
    batch["predicted"] = processor.batch_decode(pred_ids)[0]
    return batch

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

processor = Wav2Vec2Processor.from_pretrained("m3hrdadfi/wav2vec2-large-xlsr-georgian")

model = Wav2Vec2ForCTC.from_pretrained("m3hrdadfi/wav2vec2-large-xlsr-georgian").to(device)

dataset = load_dataset("common_voice", "ka", split="test")

dataset = dataset.map(
    normalizer,
    fn_kwargs={"remove_extra_space": True},
    remove_columns=list(set(dataset.column_names) - set(['sentence', 'path']))
)

dataset = dataset.map(speech_file_to_array_fn)

result = dataset.map(predict)

wer = load_metric("wer")

print("WER: {:.2f}".format(100 * wer.compute(predictions=result["predicted"], references=result["sentence"])))

```

**Test Result**:

- WER: 43.86%

## Training & Report

The Common Voice `train` and `validation` splits were used for training.

You can see the training states [here](https://wandb.ai/m3hrdadfi/wav2vec2_large_xlsr_ka/reports/Fine-Tuning-for-Wav2Vec2-Large-XLSR-53-Georgian--Vmlldzo1OTQyMzk?accessToken=ytf7jseje66a3byuheh68o6a7215thjviscv5k2ewl5hgq9yqr50yxbko0bnf1d3)

The script used for training can be found [here](https://colab.research.google.com/github/m3hrdadfi/notebooks/blob/main/Fine_Tune_XLSR_Wav2Vec2_on_Georgian_ASR_with_%F0%9F%A4%97_Transformers_ipynb.ipynb)

## Questions?

Open a GitHub issue on the [Wav2Vec](https://github.com/m3hrdadfi/wav2vec) repo.

|

patrickvonplaten/hello_2b_3

|

patrickvonplaten

| 2021-11-04T15:11:04Z | 3 | 0 |

transformers

|

[

"transformers",

"pytorch",

"wav2vec2",

"automatic-speech-recognition",

"common_voice",

"generated_from_trainer",

"tr",

"dataset:common_voice",

"endpoints_compatible",

"region:us"

] |

automatic-speech-recognition

| 2022-03-02T23:29:05Z |

---

language:

- tr

tags:

- automatic-speech-recognition

- common_voice

- generated_from_trainer

datasets:

- common_voice

model-index:

- name: hello_2b_3

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# hello_2b_3

This model is a fine-tuned version of [facebook/wav2vec2-xls-r-2b](https://huggingface.co/facebook/wav2vec2-xls-r-2b) on the COMMON_VOICE - TR dataset.

It achieves the following results on the evaluation set:

- Loss: 1.5615

- Wer: 0.9808

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-06

- train_batch_size: 2

- eval_batch_size: 8

- seed: 42

- distributed_type: multi-GPU

- num_devices: 2

- gradient_accumulation_steps: 8

- total_train_batch_size: 32

- total_eval_batch_size: 16

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_steps: 500

- num_epochs: 30.0

- mixed_precision_training: Native AMP
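The two `total_*_batch_size` values in the list above follow from the per-device batch size, the number of devices, and (for training) the gradient accumulation steps. A small sketch of that arithmetic, with a hypothetical helper name:

```python
def effective_batch_size(per_device_batch_size, num_devices, gradient_accumulation_steps=1):
    """Number of examples that contribute to one optimizer step (or one eval pass step)."""
    return per_device_batch_size * num_devices * gradient_accumulation_steps


total_train = effective_batch_size(2, 2, gradient_accumulation_steps=8)
total_eval = effective_batch_size(8, 2)  # no accumulation during evaluation
print(total_train, total_eval)  # 32 16
```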

### Training results

| Training Loss | Epoch | Step | Validation Loss | Wer |

|:-------------:|:-----:|:----:|:---------------:|:------:|

| 3.6389 | 0.92 | 100 | 3.6218 | 1.0 |

| 1.6676 | 1.85 | 200 | 3.2655 | 1.0 |

| 0.3067 | 2.77 | 300 | 3.2273 | 1.0 |

| 0.1924 | 3.7 | 400 | 3.0238 | 0.9999 |

| 0.1777 | 4.63 | 500 | 2.1606 | 0.9991 |

| 0.1481 | 5.55 | 600 | 1.8742 | 0.9982 |

| 0.1128 | 6.48 | 700 | 2.0114 | 0.9994 |

| 0.1806 | 7.4 | 800 | 1.9032 | 0.9984 |

| 0.0399 | 8.33 | 900 | 2.0556 | 0.9996 |

| 0.0729 | 9.26 | 1000 | 2.0515 | 0.9987 |

| 0.0847 | 10.18 | 1100 | 2.2121 | 0.9995 |

| 0.0777 | 11.11 | 1200 | 1.7002 | 0.9923 |

| 0.0476 | 12.04 | 1300 | 1.5262 | 0.9792 |

| 0.0518 | 12.96 | 1400 | 1.5990 | 0.9832 |

| 0.071 | 13.88 | 1500 | 1.6326 | 0.9875 |

| 0.0333 | 14.81 | 1600 | 1.5955 | 0.9870 |

| 0.0369 | 15.74 | 1700 | 1.5577 | 0.9832 |

| 0.0689 | 16.66 | 1800 | 1.5415 | 0.9839 |

| 0.0227 | 17.59 | 1900 | 1.5450 | 0.9878 |

| 0.0472 | 18.51 | 2000 | 1.5642 | 0.9846 |

| 0.0214 | 19.44 | 2100 | 1.6103 | 0.9846 |

| 0.0289 | 20.37 | 2200 | 1.6467 | 0.9898 |

| 0.0182 | 21.29 | 2300 | 1.5268 | 0.9780 |

| 0.0439 | 22.22 | 2400 | 1.6001 | 0.9818 |

| 0.06 | 23.15 | 2500 | 1.5481 | 0.9813 |

| 0.0351 | 24.07 | 2600 | 1.5672 | 0.9820 |

| 0.0198 | 24.99 | 2700 | 1.6303 | 0.9856 |

| 0.0328 | 25.92 | 2800 | 1.5958 | 0.9831 |

| 0.0245 | 26.85 | 2900 | 1.5745 | 0.9809 |

| 0.0885 | 27.77 | 3000 | 1.5455 | 0.9809 |

| 0.0224 | 28.7 | 3100 | 1.5378 | 0.9824 |

| 0.0223 | 29.63 | 3200 | 1.5642 | 0.9810 |

### Framework versions

- Transformers 4.13.0.dev0

- Pytorch 1.10.0

- Datasets 1.15.2.dev0

- Tokenizers 0.10.3

|

AkshaySg/langid

|

AkshaySg

| 2021-11-04T12:38:18Z | 1 | 5 |

speechbrain

|

[

"speechbrain",

"audio-classification",

"embeddings",

"Language",

"Identification",

"pytorch",

"ECAPA-TDNN",

"TDNN",

"VoxLingua107",

"multilingual",

"dataset:VoxLingua107",

"license:apache-2.0",

"region:us"

] |

audio-classification

| 2022-03-02T23:29:04Z |

---

language: multilingual

thumbnail:

tags:

- audio-classification

- speechbrain

- embeddings

- Language

- Identification

- pytorch

- ECAPA-TDNN

- TDNN

- VoxLingua107

license: "apache-2.0"

datasets:

- VoxLingua107

metrics:

- Accuracy

widget:

- example_title: English Sample

src: https://cdn-media.huggingface.co/speech_samples/LibriSpeech_61-70968-0000.flac

---

# VoxLingua107 ECAPA-TDNN Spoken Language Identification Model

## Model description

This is a spoken language recognition model trained on the VoxLingua107 dataset using SpeechBrain.

The model uses the ECAPA-TDNN architecture that has previously been used for speaker recognition.

The model can classify a speech utterance according to the language spoken.

It covers 107 different languages (

Abkhazian,

Afrikaans,

Amharic,

Arabic,

Assamese,

Azerbaijani,

Bashkir,

Belarusian,

Bulgarian,

Bengali,

Tibetan,

Breton,

Bosnian,

Catalan,

Cebuano,

Czech,

Welsh,

Danish,

German,

Greek,

English,

Esperanto,

Spanish,

Estonian,

Basque,

Persian,

Finnish,

Faroese,

French,

Galician,

Guarani,

Gujarati,

Manx,

Hausa,

Hawaiian,

Hindi,

Croatian,

Haitian,

Hungarian,

Armenian,

Interlingua,

Indonesian,

Icelandic,

Italian,

Hebrew,

Japanese,

Javanese,

Georgian,

Kazakh,

Central Khmer,

Kannada,

Korean,

Latin,

Luxembourgish,

Lingala,

Lao,

Lithuanian,

Latvian,

Malagasy,

Maori,

Macedonian,

Malayalam,

Mongolian,

Marathi,

Malay,

Maltese,

Burmese,

Nepali,

Dutch,

Norwegian Nynorsk,

Norwegian,

Occitan,

Panjabi,

Polish,

Pushto,

Portuguese,

Romanian,

Russian,

Sanskrit,

Scots,

Sindhi,

Sinhala,

Slovak,

Slovenian,

Shona,

Somali,

Albanian,

Serbian,

Sundanese,

Swedish,

Swahili,

Tamil,

Telugu,

Tajik,

Thai,

Turkmen,

Tagalog,

Turkish,

Tatar,

Ukrainian,

Urdu,

Uzbek,

Vietnamese,

Waray,

Yiddish,

Yoruba,

Mandarin Chinese).

## Intended uses & limitations

The model has two uses:

- use 'as is' for spoken language recognition

- use as an utterance-level feature (embedding) extractor, for creating a dedicated language ID model on your own data

The model is trained on automatically collected YouTube data. For more information about the dataset, see [here](http://bark.phon.ioc.ee/voxlingua107/).

#### How to use

```python

import torchaudio

from speechbrain.pretrained import EncoderClassifier

language_id = EncoderClassifier.from_hparams(source="TalTechNLP/voxlingua107-epaca-tdnn", savedir="tmp")

# Download a Thai language sample from Omniglot and convert it to a suitable form

signal = language_id.load_audio("https://omniglot.com/soundfiles/udhr/udhr_th.mp3")

prediction = language_id.classify_batch(signal)

print(prediction)

(tensor([[0.3210, 0.3751, 0.3680, 0.3939, 0.4026, 0.3644, 0.3689, 0.3597, 0.3508,

0.3666, 0.3895, 0.3978, 0.3848, 0.3957, 0.3949, 0.3586, 0.4360, 0.3997,

0.4106, 0.3886, 0.4177, 0.3870, 0.3764, 0.3763, 0.3672, 0.4000, 0.4256,

0.4091, 0.3563, 0.3695, 0.3320, 0.3838, 0.3850, 0.3867, 0.3878, 0.3944,

0.3924, 0.4063, 0.3803, 0.3830, 0.2996, 0.4187, 0.3976, 0.3651, 0.3950,

0.3744, 0.4295, 0.3807, 0.3613, 0.4710, 0.3530, 0.4156, 0.3651, 0.3777,

0.3813, 0.6063, 0.3708, 0.3886, 0.3766, 0.4023, 0.3785, 0.3612, 0.4193,

0.3720, 0.4406, 0.3243, 0.3866, 0.3866, 0.4104, 0.4294, 0.4175, 0.3364,

0.3595, 0.3443, 0.3565, 0.3776, 0.3985, 0.3778, 0.2382, 0.4115, 0.4017,

0.4070, 0.3266, 0.3648, 0.3888, 0.3907, 0.3755, 0.3631, 0.4460, 0.3464,

0.3898, 0.3661, 0.3883, 0.3772, 0.9289, 0.3687, 0.4298, 0.4211, 0.3838,

0.3521, 0.3515, 0.3465, 0.4772, 0.4043, 0.3844, 0.3973, 0.4343]]), tensor([0.9289]), tensor([94]), ['th'])

# The scores in the prediction[0] tensor can be interpreted as cosine scores between

# the languages and the given utterance (i.e., the larger the better)

# The identified language ISO code is given in prediction[3]

print(prediction[3])

['th']

# Alternatively, use the utterance embedding extractor:

emb = language_id.encode_batch(signal)

print(emb.shape)

torch.Size([1, 1, 256])

```
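The comment in the example says the scores can be read as cosine scores between the utterance and each language, with the highest score winning. The sketch below illustrates that reading in plain Python. The three-dimensional "language prototype" vectors and the embedding are made up for illustration, and this is not the SpeechBrain implementation.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def classify(embedding, prototypes):
    """Score the embedding against every language and return the best match."""
    scores = {label: cosine(embedding, proto) for label, proto in prototypes.items()}
    best_label = max(scores, key=scores.get)
    return scores, scores[best_label], best_label


# Hypothetical prototypes; the real model uses 256-dimensional embeddings and 107 languages.
PROTOTYPES = {
    "th": [0.9, 0.1, 0.0],
    "en": [0.0, 1.0, 0.2],
    "is": [0.1, 0.0, 1.0],
}

scores, best_score, best_label = classify([0.8, 0.2, 0.1], PROTOTYPES)
print(best_label)  # th
```

The returned triple mirrors the shape of the `prediction` tuple printed above: all scores, the best score, and the winning label.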

#### Limitations and bias

Since the model is trained on VoxLingua107, it has many limitations and biases, some of which are:

- Its accuracy on smaller languages is probably quite limited

- Probably it works worse on female speech than male speech (because YouTube data includes much more male speech)

- Based on subjective experiments, it doesn't work well on speech with a foreign accent

- Probably it doesn't work well on children's speech and on persons with speech disorders

## Training data

The model is trained on [VoxLingua107](http://bark.phon.ioc.ee/voxlingua107/).

VoxLingua107 is a speech dataset for training spoken language identification models.

The dataset consists of short speech segments automatically extracted from YouTube videos and labeled according to the language of the video title and description, with some post-processing steps to filter out false positives.

VoxLingua107 contains data for 107 languages. The total amount of speech in the training set is 6628 hours.

The average amount of data per language is 62 hours. However, the real amount per language varies a lot. There is also a separate development set containing 1609 speech segments from 33 languages, validated by at least two volunteers to really contain the given language.
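As a quick check, the quoted average follows directly from the totals given above; the per-language figure for the development set is derived here and is not stated in the card.

```python
TOTAL_TRAIN_HOURS = 6628
NUM_LANGUAGES = 107
DEV_SEGMENTS = 1609
DEV_LANGUAGES = 33

average_hours = TOTAL_TRAIN_HOURS / NUM_LANGUAGES
average_dev_segments = DEV_SEGMENTS / DEV_LANGUAGES

print(round(average_hours))         # 62
print(round(average_dev_segments))  # 49
```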

## Training procedure

We used [SpeechBrain](https://github.com/speechbrain/speechbrain) to train the model.

Training recipe will be published soon.

## Evaluation results

Error rate: 7% on the development dataset

### BibTeX entry and citation info

```bibtex

@inproceedings{valk2021slt,

title={{VoxLingua107}: a Dataset for Spoken Language Recognition},

author={J{\"o}rgen Valk and Tanel Alum{\"a}e},

booktitle={Proc. IEEE SLT Workshop},

year={2021},

}

```

|

nikhil6041/wav2vec2-large-xlsr-hindi-demo-colab

|

nikhil6041

| 2021-11-04T09:21:14Z | 4 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"wav2vec2",

"automatic-speech-recognition",

"generated_from_trainer",

"dataset:common_voice",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] |

automatic-speech-recognition

| 2022-03-02T23:29:05Z |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- common_voice

model-index:

- name: wav2vec2-large-xlsr-hindi-demo-colab

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# wav2vec2-large-xlsr-hindi-demo-colab

This model is a fine-tuned version of [facebook/wav2vec2-large-xlsr-53](https://huggingface.co/facebook/wav2vec2-large-xlsr-53) on the common_voice dataset.

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0003

- train_batch_size: 16

- eval_batch_size: 8

- seed: 42

- gradient_accumulation_steps: 2

- total_train_batch_size: 32

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_steps: 500

- num_epochs: 10

- mixed_precision_training: Native AMP

### Training results

### Framework versions

- Transformers 4.11.3

- Pytorch 1.10.0+cu102

- Datasets 1.13.3

- Tokenizers 0.10.3

|

nateraw/lightweight-gan-flowers-64

|

nateraw

| 2021-11-04T09:11:04Z | 0 | 4 | null |

[

"region:us"

] | null | 2022-03-02T23:29:05Z |

# Flowers GAN

<a href="https://colab.research.google.com/github/nateraw/huggingface-hub-examples/blob/main/pytorch_lightweight_gan.ipynb" target="_parent"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/></a>

Give the [GitHub repo](https://github.com/nateraw/huggingface-hub-examples) a ⭐️

### Generated Images

<video width="320" height="240" controls>

<source src="https://huggingface.co/nateraw/lightweight-gan-flowers-64/resolve/main/generated.mp4" type="video/mp4">

</video>

### EMA

<video width="320" height="240" controls>

<source src="https://huggingface.co/nateraw/lightweight-gan-flowers-64/resolve/main/ema.mp4" type="video/mp4">

</video>

|

histinct7002/distilbert-base-uncased-finetuned-ner

|

histinct7002

| 2021-11-04T07:14:05Z | 4 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"distilbert",

"token-classification",

"generated_from_trainer",

"dataset:conll2003",

"license:apache-2.0",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

token-classification

| 2022-03-02T23:29:05Z |

---

license: apache-2.0

tags:

- generated_from_trainer

datasets:

- conll2003

metrics:

- precision

- recall

- f1

- accuracy

model-index:

- name: distilbert-base-uncased-finetuned-ner

results:

- task:

name: Token Classification

type: token-classification

dataset:

name: conll2003

type: conll2003

args: conll2003

metrics:

- name: Precision

type: precision

value: 0.9334444444444444

- name: Recall

type: recall

value: 0.9398142969012194

- name: F1

type: f1

value: 0.9366185406098445

- name: Accuracy

type: accuracy

value: 0.9845425516704529

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# distilbert-base-uncased-finetuned-ner

This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the conll2003 dataset.

It achieves the following results on the evaluation set:

- Loss: 0.0727

- Precision: 0.9334

- Recall: 0.9398

- F1: 0.9366

- Accuracy: 0.9845
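The reported F1 is the harmonic mean of precision and recall, which can be verified from the full-precision values in the metadata above. A minimal sketch:

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall (0.0 when both are zero)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


precision = 0.9334444444444444
recall = 0.9398142969012194
print(round(f1_score(precision, recall), 4))  # 0.9366
```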

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 16

- eval_batch_size: 16

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3

### Training results

| Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:---------:|:------:|:------:|:--------:|

| 0.0271 | 1.0 | 878 | 0.0656 | 0.9339 | 0.9339 | 0.9339 | 0.9840 |

| 0.0136 | 2.0 | 1756 | 0.0703 | 0.9268 | 0.9380 | 0.9324 | 0.9838 |

| 0.008 | 3.0 | 2634 | 0.0727 | 0.9334 | 0.9398 | 0.9366 | 0.9845 |
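With `lr_scheduler_type: linear` and no warmup listed, the learning rate is expected to fall in a straight line from 2e-05 to zero over the run. The sketch below reproduces that shape in plain Python. It assumes the scheduler's total step count equals the last step in the table (2634), which the card does not state explicitly, and the function name is hypothetical.

```python
def linear_schedule_lr(step, base_lr, total_steps, warmup_steps=0):
    """Linear warmup (if any) followed by linear decay to zero."""
    if step < warmup_steps:
        return base_lr * step / max(1, warmup_steps)
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


BASE_LR = 2e-05
TOTAL_STEPS = 2634  # assumption: last step reported in the training table

# One value per epoch boundary in the table above.
for step in (0, 878, 1756, 2634):
    print(step, linear_schedule_lr(step, BASE_LR, TOTAL_STEPS))
```

Under that assumption the rate is about two thirds of its initial value after the first epoch, one third after the second, and zero at the end.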

### Framework versions

- Transformers 4.12.3

- Pytorch 1.9.0+cu111

- Datasets 1.15.1

- Tokenizers 0.10.3

|

Roy029/japanese-roberta-base-finetuned-wikitext2

|

Roy029

| 2021-11-04T05:25:22Z | 5 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"roberta",

"fill-mask",

"generated_from_trainer",

"license:mit",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

fill-mask

| 2022-03-02T23:29:04Z |

---

license: mit

tags:

- generated_from_trainer

model-index:

- name: japanese-roberta-base-finetuned-wikitext2

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# japanese-roberta-base-finetuned-wikitext2

This model is a fine-tuned version of [rinna/japanese-roberta-base](https://huggingface.co/rinna/japanese-roberta-base) on an unspecified dataset (the Trainer recorded no dataset name).

It achieves the following results on the evaluation set:

- Loss: 3.2302
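
For language-modeling objectives, the evaluation loss is often also reported as perplexity, the exponential of the mean cross-entropy loss. A minimal sketch of that conversion, assuming the loss above is a mean cross-entropy in nats (the card does not state this explicitly):

```python
import math

eval_loss = 3.2302  # evaluation loss reported in this card
perplexity = math.exp(eval_loss)
print(f"perplexity ≈ {perplexity:.2f}")
```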

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3.0

### Training results

| Training Loss | Epoch | Step | Validation Loss |
|:-------------:|:-----:|:----:|:---------------:|
| No log        | 1.0   | 18   | 3.4128          |
| No log        | 2.0   | 36   | 3.1374          |
| No log        | 3.0   | 54   | 3.2285          |

### Framework versions

- Transformers 4.12.3

- PyTorch 1.9.0+cu111

- Datasets 1.15.1

- Tokenizers 0.10.3

|

Ahmad/parsT5

|

Ahmad

| 2021-11-04T05:16:46Z | 23 | 1 |

transformers

|

[

"transformers",

"jax",

"t5",

"text2text-generation",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text2text-generation

| 2022-03-02T23:29:04Z |

A checkpoint for training a Persian T5 model. You can clone this repository and resume pre-training from it. The model uses Flax and is intended for training.

For more information and the training code, see:

https://github.com/puraminy/parsT5

|

patrickvonplaten/hello_2b_2

|

patrickvonplaten

| 2021-11-04T05:07:39Z | 3 | 0 |

transformers

|

[

"transformers",

"pytorch",

"wav2vec2",

"automatic-speech-recognition",

"common_voice",

"generated_from_trainer",

"tr",

"dataset:common_voice",

"endpoints_compatible",

"region:us"

] |

automatic-speech-recognition

| 2022-03-02T23:29:05Z |

---

language:

- tr

tags:

- automatic-speech-recognition

- common_voice

- generated_from_trainer

datasets:

- common_voice

model-index:

- name: hello_2b_2

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# hello_2b_2

This model is a fine-tuned version of [facebook/wav2vec2-xls-r-2b](https://huggingface.co/facebook/wav2vec2-xls-r-2b) on the Turkish (TR) subset of the Common Voice dataset.

It achieves the following results on the evaluation set:

- Loss: 0.5324

- Wer: 0.5109
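
Wer is the word error rate: the word-level edit distance (substitutions, deletions, and insertions) between the reference transcript and the predicted one, divided by the number of reference words. The sketch below is a plain-Python illustration using a hypothetical `word_error_rate` helper and made-up example strings; it is not the metric implementation that produced the numbers in this card.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level Levenshtein distance divided by the reference length."""
    ref, hyp = reference.split(), hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] holds the edit distance between ref[:i-1] and hyp[:j].
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, h in enumerate(hyp, start=1):
            cost = 0 if r == h else 1
            curr[j] = min(prev[j] + 1,         # deletion
                          curr[j - 1] + 1,     # insertion
                          prev[j - 1] + cost)  # substitution or match
        prev = curr
    return prev[-1] / len(ref)


# One of four reference words is missing from the hypothesis.
print(word_error_rate("the cat sat down", "the cat down"))  # 0.25
```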

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-06

- train_batch_size: 2

- eval_batch_size: 8

- seed: 42

- distributed_type: multi-GPU

- num_devices: 2

- gradient_accumulation_steps: 8

- total_train_batch_size: 32

- total_eval_batch_size: 16

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_steps: 500

- num_epochs: 30.0

- mixed_precision_training: Native AMP
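
The total batch sizes follow from the per-device values: the effective training batch is the per-device batch size times the number of devices times the gradient accumulation steps, while evaluation uses no accumulation. A short check of that arithmetic with the values listed above (the variable names simply mirror the hyperparameter names):

```python
train_batch_size = 2             # per device
eval_batch_size = 8              # per device
num_devices = 2
gradient_accumulation_steps = 8

# Samples contributing to each optimizer update across all devices.
total_train_batch_size = train_batch_size * num_devices * gradient_accumulation_steps
# Evaluation has no gradient accumulation, so only the device count multiplies.
total_eval_batch_size = eval_batch_size * num_devices

print(total_train_batch_size, total_eval_batch_size)  # 32 16
```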

### Training results

| Training Loss | Epoch | Step | Validation Loss | Wer    |
|:-------------:|:-----:|:----:|:---------------:|:------:|
| 3.3543        | 0.92  | 100  | 3.4342          | 1.0    |
| 3.0521        | 1.85  | 200  | 3.1243          | 1.0    |
| 1.4905        | 2.77  | 300  | 1.1760          | 0.9876 |
| 0.5852        | 3.7   | 400  | 0.7678          | 0.7405 |
| 0.4442        | 4.63  | 500  | 0.7637          | 0.7179 |
| 0.3816        | 5.55  | 600  | 0.7114          | 0.6726 |
| 0.2923        | 6.48  | 700  | 0.7109          | 0.6837 |
| 0.2771        | 7.4   | 800  | 0.6800          | 0.6530 |
| 0.1643        | 8.33  | 900  | 0.6031          | 0.6089 |
| 0.2931        | 9.26  | 1000 | 0.6467          | 0.6308 |
| 0.1495        | 10.18 | 1100 | 0.6042          | 0.6085 |
| 0.2093        | 11.11 | 1200 | 0.5850          | 0.5889 |
| 0.1329        | 12.04 | 1300 | 0.5557          | 0.5567 |
| 0.1005        | 12.96 | 1400 | 0.5964          | 0.5814 |
| 0.2162        | 13.88 | 1500 | 0.5692          | 0.5626 |
| 0.0923        | 14.81 | 1600 | 0.5508          | 0.5462 |
| 0.075         | 15.74 | 1700 | 0.5477          | 0.5307 |
| 0.2029        | 16.66 | 1800 | 0.5501          | 0.5300 |
| 0.0985        | 17.59 | 1900 | 0.5350          | 0.5303 |
| 0.1674        | 18.51 | 2000 | 0.5429          | 0.5241 |
| 0.1305        | 19.44 | 2100 | 0.5645          | 0.5443 |
| 0.0774        | 20.37 | 2200 | 0.5313          | 0.5216 |
| 0.1372        | 21.29 | 2300 | 0.5644          | 0.5392 |
| 0.1095        | 22.22 | 2400 | 0.5577          | 0.5306 |
| 0.0958        | 23.15 | 2500 | 0.5461          | 0.5273 |
| 0.0544        | 24.07 | 2600 | 0.5290          | 0.5055 |
| 0.0579        | 24.99 | 2700 | 0.5295          | 0.5150 |
| 0.1213        | 25.92 | 2800 | 0.5311          | 0.5221 |
| 0.0691        | 26.85 | 2900 | 0.5228          | 0.5095 |
| 0.1729        | 27.77 | 3000 | 0.5340          | 0.5095 |
| 0.0697        | 28.7  | 3100 | 0.5334          | 0.5139 |
| 0.0734        | 29.63 | 3200 | 0.5323          | 0.5140 |

### Framework versions