| modelId | author | last_modified | downloads | likes | library_name | tags | pipeline_tag | createdAt | card |

|---|---|---|---|---|---|---|---|---|---|

Gman/pretrained-bert

|

Gman

| 2023-08-14T10:35:06Z | 47 | 0 |

transformers

|

[

"transformers",

"tf",

"bert",

"pretraining",

"generated_from_keras_callback",

"endpoints_compatible",

"region:us"

] | null | 2023-08-14T10:34:07Z |

---

base_model: ''

tags:

- generated_from_keras_callback

model-index:

- name: pretrained-bert

results: []

---

<!-- This model card has been generated automatically according to the information Keras had access to. You should

probably proofread and complete it, then remove this comment. -->

# pretrained-bert

This model was trained from an unspecified base model (the `base_model` field is empty) on an unknown dataset.

It achieves the following results on the evaluation set:

- Train Loss: 8.4779

- Validation Loss: 8.6183

- Epoch: 0

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- optimizer: {'name': 'Adam', 'weight_decay': None, 'clipnorm': None, 'global_clipnorm': None, 'clipvalue': None, 'use_ema': False, 'ema_momentum': 0.99, 'ema_overwrite_frequency': None, 'jit_compile': True, 'is_legacy_optimizer': False, 'learning_rate': 1e-04, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-07, 'amsgrad': False}

- training_precision: float32

### Training results

| Train Loss | Validation Loss | Epoch |

|:----------:|:---------------:|:-----:|

| 8.4779 | 8.6183 | 0 |

### Framework versions

- Transformers 4.32.0.dev0

- TensorFlow 2.12.0

- Datasets 2.14.4

- Tokenizers 0.13.3

|

bigmorning/whisper_charsplit_new_round4__0005

|

bigmorning

| 2023-08-14T10:33:57Z | 59 | 0 |

transformers

|

[

"transformers",

"tf",

"whisper",

"automatic-speech-recognition",

"generated_from_keras_callback",

"base_model:bigmorning/whisper_charsplit_new_round2__0061",

"base_model:finetune:bigmorning/whisper_charsplit_new_round2__0061",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] |

automatic-speech-recognition

| 2023-08-14T10:33:48Z |

---

license: apache-2.0

base_model: bigmorning/whisper_charsplit_new_round2__0061

tags:

- generated_from_keras_callback

model-index:

- name: whisper_charsplit_new_round4__0005

results: []

---

<!-- This model card has been generated automatically according to the information Keras had access to. You should

probably proofread and complete it, then remove this comment. -->

# whisper_charsplit_new_round4__0005

This model is a fine-tuned version of [bigmorning/whisper_charsplit_new_round2__0061](https://huggingface.co/bigmorning/whisper_charsplit_new_round2__0061) on an unknown dataset.

It achieves the following results on the evaluation set:

- Train Loss: 0.0009

- Train Accuracy: 0.0795

- Train Wermet: 8.4533

- Validation Loss: 0.5771

- Validation Accuracy: 0.0771

- Validation Wermet: 7.4112

- Epoch: 4

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- optimizer: {'name': 'AdamWeightDecay', 'learning_rate': 1e-05, 'decay': 0.0, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-07, 'amsgrad': False, 'weight_decay_rate': 0.01}

- training_precision: float32

### Training results

| Train Loss | Train Accuracy | Train Wermet | Validation Loss | Validation Accuracy | Validation Wermet | Epoch |

|:----------:|:--------------:|:------------:|:---------------:|:-------------------:|:-----------------:|:-----:|

| 0.0009 | 0.0795 | 7.9702 | 0.5713 | 0.0770 | 6.9300 | 0 |

| 0.0011 | 0.0795 | 7.7485 | 0.5743 | 0.0771 | 6.6465 | 1 |

| 0.0011 | 0.0795 | 8.1600 | 0.5748 | 0.0771 | 7.1363 | 2 |

| 0.0008 | 0.0795 | 8.1954 | 0.5845 | 0.0770 | 7.1869 | 3 |

| 0.0009 | 0.0795 | 8.4533 | 0.5771 | 0.0771 | 7.4112 | 4 |
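The per-epoch rows above can be compared programmatically to see where the validation metrics were lowest. This is a minimal sketch with the values copied from the table; the variable names are illustrative, and treating lower Wermet as better is an assumption, since the card does not define the metric.

```python
# Rows copied from the training results table:
# (epoch, validation loss, validation Wermet).
rows = [
    (0, 0.5713, 6.9300),
    (1, 0.5743, 6.6465),
    (2, 0.5748, 7.1363),
    (3, 0.5845, 7.1869),
    (4, 0.5771, 7.4112),
]

# Pick the row with the smallest value in each column.
best_by_loss = min(rows, key=lambda r: r[1])
best_by_wermet = min(rows, key=lambda r: r[2])  # assumes lower is better

print(f"lowest validation loss: epoch {best_by_loss[0]} ({best_by_loss[1]})")
print(f"lowest validation Wermet: epoch {best_by_wermet[0]} ({best_by_wermet[2]})")
```

Under these assumptions the final epoch is not the best one on either validation column, which may matter when choosing a checkpoint.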

### Framework versions

- Transformers 4.32.0.dev0

- TensorFlow 2.12.0

- Tokenizers 0.13.3

|

frankjoshua/controlnet-depth-sdxl-1.0

|

frankjoshua

| 2023-08-14T10:27:05Z | 86 | 0 |

diffusers

|

[

"diffusers",

"safetensors",

"stable-diffusion-xl",

"stable-diffusion-xl-diffusers",

"text-to-image",

"controlnet",

"base_model:stabilityai/stable-diffusion-xl-base-1.0",

"base_model:adapter:stabilityai/stable-diffusion-xl-base-1.0",

"license:openrail++",

"region:us"

] |

text-to-image

| 2023-10-14T01:25:51Z |

---

license: openrail++

base_model: stabilityai/stable-diffusion-xl-base-1.0

tags:

- stable-diffusion-xl

- stable-diffusion-xl-diffusers

- text-to-image

- diffusers

- controlnet

inference: false

---

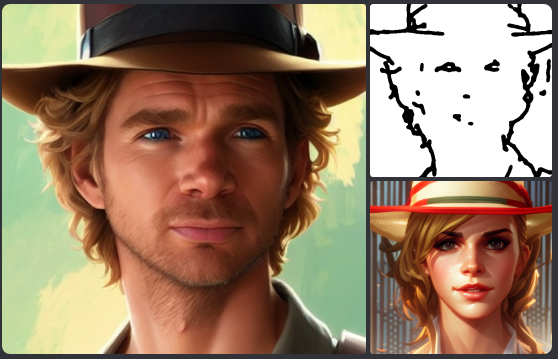

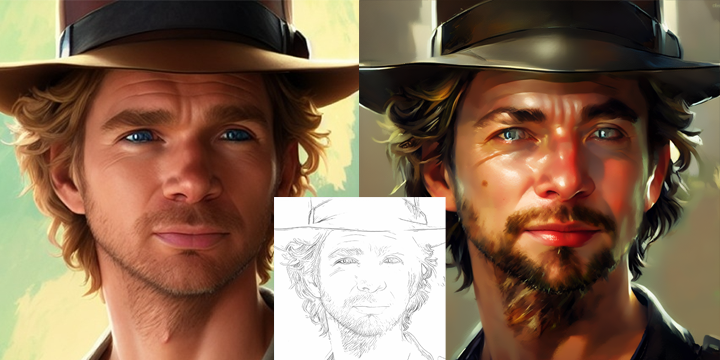

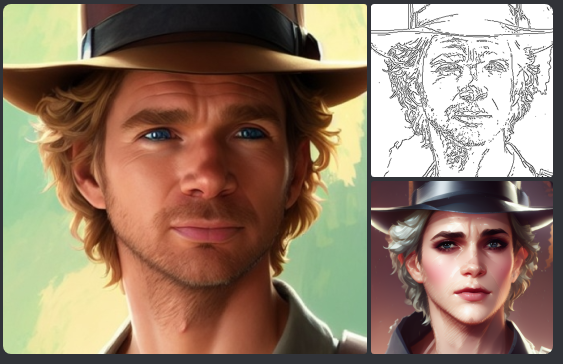

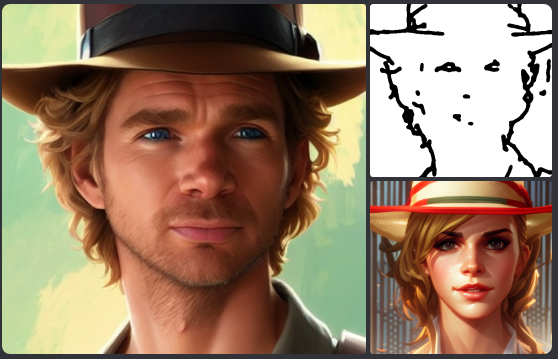

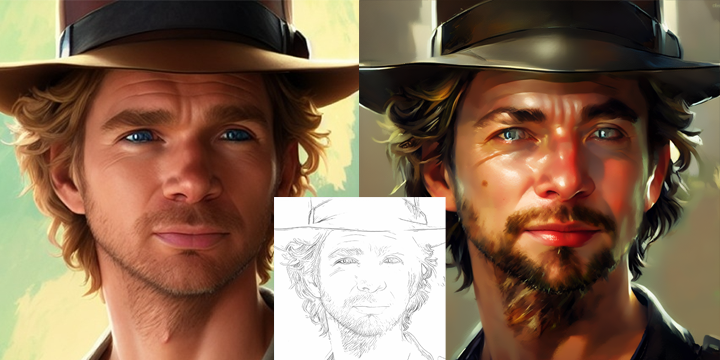

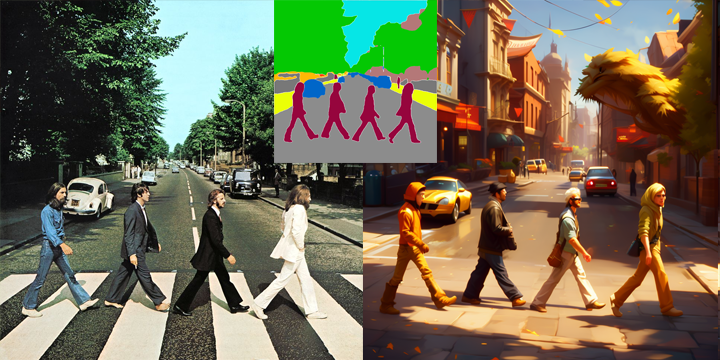

# SDXL-controlnet: Depth

These are controlnet weights trained on stabilityai/stable-diffusion-xl-base-1.0 with depth conditioning. You can find some example images in the following.

prompt: spiderman lecture, photorealistic

## Usage

Make sure to first install the libraries:

```bash

pip install accelerate transformers safetensors diffusers

```

And then we're ready to go:

```python

import torch
import numpy as np
from PIL import Image
from transformers import DPTFeatureExtractor, DPTForDepthEstimation
from diffusers import ControlNetModel, StableDiffusionXLControlNetPipeline, AutoencoderKL
from diffusers.utils import load_image

# Depth estimator that turns the input image into a conditioning depth map.
depth_estimator = DPTForDepthEstimation.from_pretrained("Intel/dpt-hybrid-midas").to("cuda")
feature_extractor = DPTFeatureExtractor.from_pretrained("Intel/dpt-hybrid-midas")

controlnet = ControlNetModel.from_pretrained(
    "diffusers/controlnet-depth-sdxl-1.0",
    variant="fp16",
    use_safetensors=True,
    torch_dtype=torch.float16,
).to("cuda")
vae = AutoencoderKL.from_pretrained("madebyollin/sdxl-vae-fp16-fix", torch_dtype=torch.float16).to("cuda")
pipe = StableDiffusionXLControlNetPipeline.from_pretrained(
    "stabilityai/stable-diffusion-xl-base-1.0",
    controlnet=controlnet,
    vae=vae,
    variant="fp16",
    use_safetensors=True,
    torch_dtype=torch.float16,
).to("cuda")
pipe.enable_model_cpu_offload()


def get_depth_map(image):
    image = feature_extractor(images=image, return_tensors="pt").pixel_values.to("cuda")
    with torch.no_grad(), torch.autocast("cuda"):
        depth_map = depth_estimator(image).predicted_depth

    # Resize the predicted depth to the 1024x1024 resolution used by SDXL.
    depth_map = torch.nn.functional.interpolate(
        depth_map.unsqueeze(1),
        size=(1024, 1024),
        mode="bicubic",
        align_corners=False,
    )
    # Min-max normalize to [0, 1], then replicate to three channels.
    depth_min = torch.amin(depth_map, dim=[1, 2, 3], keepdim=True)
    depth_max = torch.amax(depth_map, dim=[1, 2, 3], keepdim=True)
    depth_map = (depth_map - depth_min) / (depth_max - depth_min)
    image = torch.cat([depth_map] * 3, dim=1)

    image = image.permute(0, 2, 3, 1).cpu().numpy()[0]
    image = Image.fromarray((image * 255.0).clip(0, 255).astype(np.uint8))
    return image


prompt = "stormtrooper lecture, photorealistic"
image = load_image("https://huggingface.co/lllyasviel/sd-controlnet-depth/resolve/main/images/stormtrooper.png")
controlnet_conditioning_scale = 0.5  # recommended for good generalization

depth_image = get_depth_map(image)

images = pipe(
    prompt, image=depth_image, num_inference_steps=30, controlnet_conditioning_scale=controlnet_conditioning_scale,
).images
images[0]

images[0].save("stormtrooper.png")

```

For more details, check out the official documentation of [`StableDiffusionXLControlNetPipeline`](https://huggingface.co/docs/diffusers/main/en/api/pipelines/controlnet_sdxl).
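The normalization step inside `get_depth_map` is a plain min-max rescale followed by a conversion to 8-bit pixel values. The sketch below reproduces that arithmetic on a tiny nested list without any tensor library, only to make the steps visible. The helper names and the zero-range guard are additions for illustration and are not part of the original snippet.

```python
def min_max_normalize(depth):
    """Rescale a 2-D list of depth values to the range [0, 1]."""
    flat = [v for row in depth for v in row]
    lo, hi = min(flat), max(flat)
    span = hi - lo
    if span == 0:
        # A constant map has no contrast; return zeros instead of dividing by zero.
        return [[0.0 for _ in row] for row in depth]
    return [[(v - lo) / span for v in row] for row in depth]


def to_uint8(normalized):
    """Scale [0, 1] values to [0, 255], clip, and truncate to integers."""
    return [[int(max(0.0, min(255.0, v * 255.0))) for v in row] for row in normalized]


depth = [[2.0, 4.0], [6.0, 10.0]]  # toy depth values
norm = min_max_normalize(depth)
pixels = to_uint8(norm)
print(norm)
print(pixels)
```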

### Training

Our training script was built on top of the official training script that we provide [here](https://github.com/huggingface/diffusers/blob/main/examples/controlnet/README_sdxl.md).

#### Training data and Compute

The model is trained on 3M image-text pairs from LAION-Aesthetics V2. The model is trained for 700 GPU hours on 80GB A100 GPUs.

#### Batch size

Data-parallel training with a per-GPU batch size of 8, for a total batch size of 256.
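The batch-size figures imply a device count, and together with the stated 700 GPU hours they give a rough wall-clock estimate. This is a back-of-the-envelope sketch, assuming no gradient accumulation and that every GPU ran for the same duration; the card states neither.

```python
per_gpu_batch_size = 8
total_batch_size = 256
gpu_hours = 700  # stated under "Training data and Compute"

# Assumption: no gradient accumulation, so
# total batch size = per-GPU batch size * number of GPUs.
num_gpus = total_batch_size // per_gpu_batch_size

# Assumption: all GPUs ran for the same duration.
wall_clock_hours = gpu_hours / num_gpus

print(num_gpus, wall_clock_hours)
```

If gradient accumulation was used, the actual GPU count would be smaller and the wall-clock time correspondingly longer.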

#### Hyper Parameters

Constant learning rate of 1e-5.

#### Mixed precision

fp16

|

amirhamza11/my_awesome_eli5_mlm_model_2

|

amirhamza11

| 2023-08-14T10:17:27Z | 106 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"roberta",

"fill-mask",

"generated_from_trainer",

"base_model:distilbert/distilroberta-base",

"base_model:finetune:distilbert/distilroberta-base",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

fill-mask

| 2023-08-14T09:59:42Z |

---

license: apache-2.0

base_model: distilroberta-base

tags:

- generated_from_trainer

model-index:

- name: my_awesome_eli5_mlm_model_2

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# my_awesome_eli5_mlm_model_2

This model is a fine-tuned version of [distilroberta-base](https://huggingface.co/distilroberta-base) on an unknown dataset.

It achieves the following results on the evaluation set:

- Loss: 1.9883

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3
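A `linear` scheduler lowers the learning rate in a straight line from its initial value to zero over the whole run. The sketch below shows that shape with the values listed above. The total of 3414 steps is taken from the last row of the training results table, and the warmup length of 0 is an assumption, since the card lists no warmup setting. The function name is illustrative.

```python
def linear_lr(step, base_lr=2e-05, total_steps=3414, warmup_steps=0):
    """Learning rate at a given optimizer step under linear warmup and decay."""
    if step < warmup_steps:
        # Linear ramp from 0 to base_lr during warmup (unused when warmup_steps == 0).
        return base_lr * step / max(1, warmup_steps)
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


for step in (0, 1138, 2276, 3414):  # start of run and end of each epoch
    print(step, linear_lr(step))
```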

### Training results

| Training Loss | Epoch | Step | Validation Loss |

|:-------------:|:-----:|:----:|:---------------:|

| 2.2469 | 1.0 | 1138 | 2.0423 |

| 2.1601 | 2.0 | 2276 | 2.0028 |

| 2.1295 | 3.0 | 3414 | 2.0125 |
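Two rough quantities can be derived from the numbers on this card: the approximate training-set size and the perplexity implied by the evaluation loss. The sketch assumes a single device with no gradient accumulation (so one step consumes one batch of 8) and that the reported loss is a mean cross-entropy in nats; neither is stated on the card.

```python
import math

steps_per_epoch = 1138   # step count at epoch 1.0 in the table
train_batch_size = 8

# The last batch of an epoch may be partial, so the example count is a range.
max_examples_per_epoch = steps_per_epoch * train_batch_size
min_examples_per_epoch = (steps_per_epoch - 1) * train_batch_size + 1

eval_loss = 1.9883       # "Loss" reported for the evaluation set
perplexity = math.exp(eval_loss)  # valid only if the loss is in nats

print(min_examples_per_epoch, max_examples_per_epoch)
print(round(perplexity, 2))
```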

### Framework versions

- Transformers 4.31.0

- Pytorch 2.0.1+cu118

- Tokenizers 0.13.3

|

vj1148/lora-peft-flant5-large-v2

|

vj1148

| 2023-08-14T10:03:02Z | 0 | 0 |

peft

|

[

"peft",

"region:us"

] | null | 2023-08-14T10:03:01Z |

---

library_name: peft

---

## Training procedure

The following `bitsandbytes` quantization config was used during training:

- quant_method: bitsandbytes

- load_in_8bit: True

- load_in_4bit: False

- llm_int8_threshold: 6.0

- llm_int8_skip_modules: None

- llm_int8_enable_fp32_cpu_offload: False

- llm_int8_has_fp16_weight: False

- bnb_4bit_quant_type: fp4

- bnb_4bit_use_double_quant: False

- bnb_4bit_compute_dtype: float32

### Framework versions

- PEFT 0.4.0

|

AdirK/CartPole-v1

|

AdirK

| 2023-08-14T09:45:20Z | 0 | 0 | null |

[

"CartPole-v1",

"reinforce",

"reinforcement-learning",

"custom-implementation",

"deep-rl-class",

"model-index",

"region:us"

] |

reinforcement-learning

| 2023-08-14T09:45:09Z |

---

tags:

- CartPole-v1

- reinforce

- reinforcement-learning

- custom-implementation

- deep-rl-class

model-index:

- name: CartPole-v1

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: CartPole-v1

type: CartPole-v1

metrics:

- type: mean_reward

value: 500.00 +/- 0.00

name: mean_reward

verified: false

---

# **Reinforce** Agent playing **CartPole-v1**

This is a trained model of a **Reinforce** agent playing **CartPole-v1**.

To learn how to use this model and train your own, check Unit 4 of the Deep Reinforcement Learning Course: https://huggingface.co/deep-rl-course/unit4/introduction
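REINFORCE weights each action's log-probability by the discounted return that followed it. The sketch below shows only that return computation; it is not this repository's implementation. The discount factor of 0.99 is a hypothetical default, and the example relies on CartPole-v1 giving a reward of 1 per step.

```python
def discounted_returns(rewards, gamma=0.99):
    """Return G_t = r_t + gamma * G_{t+1} for every step of one episode."""
    returns = []
    running = 0.0
    # Walk the episode backwards so each return reuses the one after it.
    for reward in reversed(rewards):
        running = reward + gamma * running
        returns.append(running)
    returns.reverse()
    return returns


# CartPole-v1 yields a reward of 1 per step; a short 5-step episode for illustration.
episode_rewards = [1.0] * 5
print(discounted_returns(episode_rewards, gamma=1.0))
```

With `gamma=1.0` the first return equals the episode length, which is why a mean reward of 500 corresponds to episodes that last the full 500 steps CartPole-v1 allows.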

|

M2LInES/gz21-ocean-momentum

|

M2LInES

| 2023-08-14T09:40:18Z | 0 | 0 | null |

[

"region:us"

] | null | 2023-08-14T09:10:22Z |

# gz21-ocean-momentum pretrained models

[gfdl-cm2.6-pangeo]: https://catalog.pangeo.io/browse/master/ocean/GFDL_CM2_6/

[gz21-gh]: https://github.com/m2lines/gz21_ocean_momentum

[gz21-data-hf]: https://huggingface.co/datasets/M2LInES/gfdl-cmip26-gz21-ocean-forcing

[gz21-ocean-momentum][gz21-gh] models trained on the forcing data generated from
the [GFDL CM2.6][gfdl-cm2.6-pangeo] dataset. (Some forcing data is hosted on Hugging
Face at [datasets/M2LInES/gfdl-cmip26-gz21-ocean-forcing][gz21-data-hf].)

See individual model directories for details (hyperparameters, code used).

|

anikesh-mane/prompt-tuned-flan-t5-large

|

anikesh-mane

| 2023-08-14T09:38:40Z | 1 | 0 |

peft

|

[

"peft",

"region:us"

] | null | 2023-08-14T09:38:39Z |

---

library_name: peft

---

## Training procedure

### Framework versions

- PEFT 0.4.0

|

Xilabs/instructmix-llama-3b

|

Xilabs

| 2023-08-14T09:35:38Z | 8 | 1 |

peft

|

[

"peft",

"text-generation",

"dataset:Xilabs/instructmix",

"region:us"

] |

text-generation

| 2023-07-24T03:00:40Z |

---

library_name: peft

datasets:

- Xilabs/instructmix

pipeline_tag: text-generation

---

## Model Card for "InstructMix Llama 3B"

**Model Name:** InstructMix Llama 3B

**Description:**

InstructMix Llama 3B is a language model fine-tuned on the InstructMix dataset with parameter-efficient fine-tuning (PEFT). The base model is "openlm-research/open_llama_3b_v2", available at [https://huggingface.co/openlm-research/open_llama_3b_v2](https://huggingface.co/openlm-research/open_llama_3b_v2).

An easy way to use InstructMix Llama 3B is via the API: https://replicate.com/ritabratamaiti/instructmix-llama-3b

**Usage:**

```py

import torch
from transformers import GenerationConfig, LlamaForCausalLM, LlamaTokenizer
from peft import PeftModel

# Hugging Face model_path
model_path = 'openlm-research/open_llama_3b_v2'
peft_model_id = 'Xilabs/instructmix-llama-3b'

tokenizer = LlamaTokenizer.from_pretrained(model_path)
model = LlamaForCausalLM.from_pretrained(
    model_path, device_map="auto"
)
# Attach the PEFT adapter weights to the base model.
model = PeftModel.from_pretrained(model, peft_model_id)


def generate_prompt(instruction, input=None):
    # Build the instruction prompt, with or without an extra input field.
    if input:
        return f"""Below is an instruction that describes a task, paired with an input that provides further context. Write a response that appropriately completes the request.

### Instruction:
{instruction}

### Input:
{input}

### Response:"""
    else:
        return f"""Below is an instruction that describes a task. Write a response that appropriately completes the request.

### Instruction:
{instruction}

### Response:"""


def evaluate(
    instruction,
    input=None,
    temperature=0.5,
    top_p=0.75,
    top_k=40,
    num_beams=5,
    max_new_tokens=128,
    **kwargs,
):
    prompt = generate_prompt(instruction, input)
    inputs = tokenizer(prompt, return_tensors="pt")
    input_ids = inputs["input_ids"].to("cuda")
    generation_config = GenerationConfig(
        temperature=temperature,
        top_p=top_p,
        top_k=top_k,
        num_beams=num_beams,
        early_stopping=True,
        repetition_penalty=1.1,
        **kwargs,
    )
    with torch.no_grad():
        generation_output = model.generate(
            input_ids=input_ids,
            generation_config=generation_config,
            return_dict_in_generate=True,
            output_scores=True,
            max_new_tokens=max_new_tokens,
        )
    s = generation_output.sequences[0]
    output = tokenizer.decode(s, skip_special_tokens=True)
    # print(output)
    # Keep only the text generated after the response marker.
    return output.split("### Response:")[1]


instruction = "What is the meaning of life?"
print(evaluate(instruction, num_beams=3, temperature=0.1, max_new_tokens=256))

```

## Training procedure

The following `bitsandbytes` quantization config was used during training:

- load_in_8bit: False

- load_in_4bit: True

- llm_int8_threshold: 6.0

- llm_int8_skip_modules: None

- llm_int8_enable_fp32_cpu_offload: False

- llm_int8_has_fp16_weight: False

- bnb_4bit_quant_type: nf4

- bnb_4bit_use_double_quant: True

- bnb_4bit_compute_dtype: bfloat16

### Framework versions

- PEFT 0.4.0

|

vnktrmnb/bert-base-multilingual-cased-FT-TyDiQA_AUQ

|

vnktrmnb

| 2023-08-14T09:29:24Z | 71 | 0 |

transformers

|

[

"transformers",

"tf",

"tensorboard",

"bert",

"question-answering",

"generated_from_keras_callback",

"base_model:google-bert/bert-base-multilingual-cased",

"base_model:finetune:google-bert/bert-base-multilingual-cased",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] |

question-answering

| 2023-08-12T07:38:12Z |

---

license: apache-2.0

base_model: bert-base-multilingual-cased

tags:

- generated_from_keras_callback

model-index:

- name: vnktrmnb/bert-base-multilingual-cased-FT-TyDiQA_AUQ

results: []

---

<!-- This model card has been generated automatically according to the information Keras had access to. You should

probably proofread and complete it, then remove this comment. -->

# vnktrmnb/bert-base-multilingual-cased-FT-TyDiQA_AUQ

This model is a fine-tuned version of [bert-base-multilingual-cased](https://huggingface.co/bert-base-multilingual-cased) on an unknown dataset.

It achieves the following results on the evaluation set:

- Train Loss: 0.3207

- Train End Logits Accuracy: 0.8945

- Train Start Logits Accuracy: 0.9240

- Validation Loss: 0.4883

- Validation End Logits Accuracy: 0.8621

- Validation Start Logits Accuracy: 0.9124

- Epoch: 2

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- optimizer: {'name': 'Adam', 'weight_decay': None, 'clipnorm': None, 'global_clipnorm': None, 'clipvalue': None, 'use_ema': False, 'ema_momentum': 0.99, 'ema_overwrite_frequency': None, 'jit_compile': True, 'is_legacy_optimizer': False, 'learning_rate': {'class_name': 'PolynomialDecay', 'config': {'initial_learning_rate': 2e-05, 'decay_steps': 2439, 'end_learning_rate': 0.0, 'power': 1.0, 'cycle': False, 'name': None}}, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False}

- training_precision: float32
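The optimizer entry above nests a `PolynomialDecay` schedule inside `learning_rate`. With `power` 1.0 and `cycle` False it reduces to a straight line from `initial_learning_rate` to `end_learning_rate` over `decay_steps`, after which the rate stays at the end value. The sketch below re-implements that formula in plain Python with the values from the config. It illustrates the schedule's shape and is not the Keras class itself.

```python
def polynomial_decay(step, initial_lr=2e-05, decay_steps=2439, end_lr=0.0, power=1.0):
    """Learning rate at `step` for a non-cycling polynomial decay schedule."""
    step = min(step, decay_steps)  # cycle=False: hold end_lr after decay_steps
    fraction = 1.0 - step / decay_steps
    return (initial_lr - end_lr) * fraction ** power + end_lr


for step in (0, 813, 1626, 2439):
    print(step, polynomial_decay(step))
```

The probe steps are multiples of 813, which is `decay_steps` divided by 3; this would match the epoch boundaries if the schedule spans the three epochs (0 to 2) shown in the results, which is an inference and not something the card states.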

### Training results

| Train Loss | Train End Logits Accuracy | Train Start Logits Accuracy | Validation Loss | Validation End Logits Accuracy | Validation Start Logits Accuracy | Epoch |

|:----------:|:-------------------------:|:---------------------------:|:---------------:|:------------------------------:|:--------------------------------:|:-----:|

| 1.2099 | 0.6849 | 0.7242 | 0.5171 | 0.8454 | 0.8930 | 0 |

| 0.5374 | 0.8328 | 0.8737 | 0.4915 | 0.8570 | 0.8943 | 1 |

| 0.3207 | 0.8945 | 0.9240 | 0.4883 | 0.8621 | 0.9124 | 2 |

### Framework versions

- Transformers 4.31.0

- TensorFlow 2.12.0

- Datasets 2.14.4

- Tokenizers 0.13.3

|

gagneurlab/SpeciesLM

|

gagneurlab

| 2023-08-14T09:27:08Z | 1,351 | 1 | null |

[

"license:mit",

"region:us"

] | null | 2023-08-14T08:41:30Z |

---

license: mit

---

Load each model using:

```python

from transformers import AutoTokenizer, AutoModelForMaskedLM

tokenizer = AutoTokenizer.from_pretrained("gagneurlab/SpeciesLM", revision = "<<choose model type>>")

model = AutoModelForMaskedLM.from_pretrained("gagneurlab/SpeciesLM", revision = "<<choose model type>>")

```

Model type:

- Species LM, 3' region: `downstream_species_lm`

- Agnostic LM, 3' region: `downstream_agnostic_lm`

- Species LM, 5' region: `upstream_species_lm`

- Agnostic LM, 5' region: `upstream_agnostic_lm`
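Because the four variants are selected only through the `revision` argument, a small lookup can keep the names in one place. This is a sketch: the key strings (`"species"`, `"agnostic"`, `"3prime"`, `"5prime"`) and the function name are hypothetical labels chosen here, while the revision names are the ones listed above.

```python
# Maps (model kind, region) to the revision name listed on the card.
REVISIONS = {
    ("species", "3prime"): "downstream_species_lm",
    ("agnostic", "3prime"): "downstream_agnostic_lm",
    ("species", "5prime"): "upstream_species_lm",
    ("agnostic", "5prime"): "upstream_agnostic_lm",
}


def pick_revision(kind, region):
    """Return the revision string to pass as `revision=` to `from_pretrained`."""
    try:
        return REVISIONS[(kind, region)]
    except KeyError:
        raise ValueError(f"unknown combination: {kind!r}, {region!r}") from None


print(pick_revision("species", "5prime"))
```

The returned string would replace the `<<choose model type>>` placeholder in both `from_pretrained` calls.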

|

CyberHarem/temari_naruto

|

CyberHarem

| 2023-08-14T09:18:34Z | 0 | 0 | null |

[

"art",

"text-to-image",

"dataset:CyberHarem/temari_naruto",

"license:mit",

"region:us"

] |

text-to-image

| 2023-08-14T09:12:33Z |

---

license: mit

datasets:

- CyberHarem/temari_naruto

pipeline_tag: text-to-image

tags:

- art

---

# Lora of temari_naruto

This model was trained with [HCP-Diffusion](https://github.com/7eu7d7/HCP-Diffusion). The auto-training framework is maintained by the [DeepGHS Team](https://huggingface.co/deepghs).

After downloading the pt and safetensors files for the specified step, you need to use them together. The pt file is used as an embedding, while the safetensors file is loaded as the Lora.

For example, if you want to use the model from step 1500, you need to download `1500/temari_naruto.pt` as the embedding and `1500/temari_naruto.safetensors` for loading Lora. By using both files together, you can generate images for the desired characters.

**The trigger word is `temari_naruto`.**
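The file layout described above follows a fixed pattern of `<step>/<name>.pt` and `<step>/<name>.safetensors`. The sketch below builds both relative paths for a given step. The function name and the step validation are illustrative additions; the step range reflects the 100 to 1500 checkpoints listed in the table.

```python
from pathlib import PurePosixPath

AVAILABLE_STEPS = range(100, 1501, 100)  # steps listed in the table


def lora_files(step, name="temari_naruto"):
    """Return the relative paths of the embedding and Lora files for one step."""
    if step not in AVAILABLE_STEPS:
        raise ValueError(f"step {step} is not one of the published checkpoints")
    base = PurePosixPath(str(step))
    return {
        "embedding": str(base / f"{name}.pt"),
        "lora": str(base / f"{name}.safetensors"),
    }


print(lora_files(1500))
```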

These are available steps:

| Steps | pattern_1 | pattern_2 | pattern_3 | pattern_4 | bikini | free | nude | Download |

|--------:|:-----------------------------------------------|:-----------------------------------------------|:-----------------------------------------------|:----------------------------------------------------|:-----------------------------------------|:-------------------------------------|:-----------------------------------------------|:-----------------------------------|

| 1500 |  |  |  | [<NSFW, click to see>](1500/previews/pattern_4.png) |  |  | [<NSFW, click to see>](1500/previews/nude.png) | [Download](1500/temari_naruto.zip) |

| 1400 |  |  |  | [<NSFW, click to see>](1400/previews/pattern_4.png) |  |  | [<NSFW, click to see>](1400/previews/nude.png) | [Download](1400/temari_naruto.zip) |

| 1300 |  |  |  | [<NSFW, click to see>](1300/previews/pattern_4.png) |  |  | [<NSFW, click to see>](1300/previews/nude.png) | [Download](1300/temari_naruto.zip) |

| 1200 |  |  |  | [<NSFW, click to see>](1200/previews/pattern_4.png) |  |  | [<NSFW, click to see>](1200/previews/nude.png) | [Download](1200/temari_naruto.zip) |

| 1100 |  |  |  | [<NSFW, click to see>](1100/previews/pattern_4.png) |  |  | [<NSFW, click to see>](1100/previews/nude.png) | [Download](1100/temari_naruto.zip) |

| 1000 |  |  |  | [<NSFW, click to see>](1000/previews/pattern_4.png) |  |  | [<NSFW, click to see>](1000/previews/nude.png) | [Download](1000/temari_naruto.zip) |

| 900 |  |  |  | [<NSFW, click to see>](900/previews/pattern_4.png) |  |  | [<NSFW, click to see>](900/previews/nude.png) | [Download](900/temari_naruto.zip) |

| 800 |  |  |  | [<NSFW, click to see>](800/previews/pattern_4.png) |  |  | [<NSFW, click to see>](800/previews/nude.png) | [Download](800/temari_naruto.zip) |

| 700 |  |  |  | [<NSFW, click to see>](700/previews/pattern_4.png) |  |  | [<NSFW, click to see>](700/previews/nude.png) | [Download](700/temari_naruto.zip) |

| 600 |  |  |  | [<NSFW, click to see>](600/previews/pattern_4.png) |  |  | [<NSFW, click to see>](600/previews/nude.png) | [Download](600/temari_naruto.zip) |

| 500 |  |  |  | [<NSFW, click to see>](500/previews/pattern_4.png) |  |  | [<NSFW, click to see>](500/previews/nude.png) | [Download](500/temari_naruto.zip) |

| 400 |  |  |  | [<NSFW, click to see>](400/previews/pattern_4.png) |  |  | [<NSFW, click to see>](400/previews/nude.png) | [Download](400/temari_naruto.zip) |

| 300 |  |  |  | [<NSFW, click to see>](300/previews/pattern_4.png) |  |  | [<NSFW, click to see>](300/previews/nude.png) | [Download](300/temari_naruto.zip) |

| 200 |  |  |  | [<NSFW, click to see>](200/previews/pattern_4.png) |  |  | [<NSFW, click to see>](200/previews/nude.png) | [Download](200/temari_naruto.zip) |

| 100 |  |  |  | [<NSFW, click to see>](100/previews/pattern_4.png) |  |  | [<NSFW, click to see>](100/previews/nude.png) | [Download](100/temari_naruto.zip) |

|

rokset3/kazroberta-180kstep

|

rokset3

| 2023-08-14T09:18:28Z | 0 | 0 |

peft

|

[

"peft",

"region:us"

] | null | 2023-08-14T09:16:45Z |

---

library_name: peft

---

## Training procedure

### Framework versions

- PEFT 0.4.0.dev0

|

jondurbin/airoboros-13b

|

jondurbin

| 2023-08-14T09:07:30Z | 1,446 | 106 |

transformers

|

[

"transformers",

"pytorch",

"llama",

"text-generation",

"license:cc-by-nc-4.0",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2023-05-19T11:56:55Z |

---

license: cc-by-nc-4.0

---

# Overview

This is a fine-tuned 13B-parameter LLaMA model, trained on completely synthetic data created by https://github.com/jondurbin/airoboros

__*I don't recommend using this model! The outputs aren't particularly great, and it may contain "harmful" data due to jailbreak*__

Please see one of the updated airoboros models for a much better experience.

### Eval (gpt4 judging)

| model | raw score | gpt-3.5 adjusted score |

| --- | --- | --- |

| __airoboros-13b__ | __17947__ | __98.087__ |

| gpt35 | 18297 | 100.0 |

| gpt4-x-alpasta-30b | 15612 | 85.33 |

| manticore-13b | 15856 | 86.66 |

| vicuna-13b-1.1 | 16306 | 89.12 |

| wizard-vicuna-13b-uncensored | 16287 | 89.01 |
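The "gpt-3.5 adjusted score" column is consistent with dividing each raw score by the gpt35 raw score and multiplying by 100. The card does not state the formula, so the sketch below is an inference checked against the table values.

```python
# Raw scores copied from the table above.
raw_scores = {
    "airoboros-13b": 17947,
    "gpt35": 18297,
    "gpt4-x-alpasta-30b": 15612,
    "manticore-13b": 15856,
    "vicuna-13b-1.1": 16306,
    "wizard-vicuna-13b-uncensored": 16287,
}

baseline = raw_scores["gpt35"]

# Inferred formula: adjusted = 100 * raw / gpt35 raw.
adjusted = {name: round(100.0 * score / baseline, 3) for name, score in raw_scores.items()}

for name, value in adjusted.items():
    print(f"{name}: {value}")
```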

<details>

<summary>individual question scores, with shareGPT links (200 prompts generated by gpt-4)</summary>

*wv-13b-u is Wizard-Vicuna-13b-Uncensored*

| airoboros-13b | gpt35 | gpt4-x-alpasta-30b | manticore-13b | vicuna-13b-1.1 | wv-13b-u | link |

|----------------:|--------:|---------------------:|----------------:|-----------------:|-------------------------------:|:---------------------------------------|

| 80 | 95 | 70 | 90 | 85 | 60 | [eval](https://sharegpt.com/c/PIbRQD3) |

| 20 | 95 | 40 | 30 | 90 | 80 | [eval](https://sharegpt.com/c/fSzwzzd) |

| 100 | 100 | 100 | 95 | 95 | 100 | [eval](https://sharegpt.com/c/AXMzZiO) |

| 90 | 100 | 85 | 60 | 95 | 100 | [eval](https://sharegpt.com/c/7obzJm2) |

| 95 | 90 | 80 | 85 | 95 | 75 | [eval](https://sharegpt.com/c/cRpj6M1) |

| 100 | 95 | 90 | 95 | 98 | 92 | [eval](https://sharegpt.com/c/p0by1T7) |

| 50 | 100 | 80 | 95 | 60 | 55 | [eval](https://sharegpt.com/c/rowNlKx) |

| 70 | 90 | 80 | 60 | 85 | 40 | [eval](https://sharegpt.com/c/I4POj4I) |

| 100 | 95 | 50 | 85 | 40 | 60 | [eval](https://sharegpt.com/c/gUAeiRp) |

| 85 | 60 | 55 | 65 | 50 | 70 | [eval](https://sharegpt.com/c/Lgw4QQL) |

| 95 | 100 | 85 | 90 | 60 | 75 | [eval](https://sharegpt.com/c/X9tDYft) |

| 100 | 95 | 70 | 80 | 50 | 85 | [eval](https://sharegpt.com/c/9V2ElkH) |

| 100 | 95 | 80 | 70 | 60 | 90 | [eval](https://sharegpt.com/c/D5xg6qt) |

| 95 | 100 | 70 | 85 | 90 | 90 | [eval](https://sharegpt.com/c/lQnSfDs) |

| 80 | 95 | 90 | 60 | 30 | 85 | [eval](https://sharegpt.com/c/1hpHGNc) |

| 60 | 95 | 0 | 75 | 50 | 40 | [eval](https://sharegpt.com/c/an6TqE4) |

| 100 | 95 | 90 | 98 | 95 | 95 | [eval](https://sharegpt.com/c/7vr6n3F) |

| 60 | 85 | 40 | 50 | 20 | 0 | [eval](https://sharegpt.com/c/TOkMkgE) |

| 100 | 90 | 85 | 95 | 95 | 80 | [eval](https://sharegpt.com/c/Qu7ak0r) |

| 100 | 95 | 100 | 95 | 90 | 95 | [eval](https://sharegpt.com/c/hMD4gPo) |

| 95 | 90 | 96 | 80 | 92 | 88 | [eval](https://sharegpt.com/c/HTlicNh) |

| 95 | 92 | 90 | 93 | 89 | 91 | [eval](https://sharegpt.com/c/MjxHpAf) |

| 95 | 93 | 90 | 94 | 96 | 92 | [eval](https://sharegpt.com/c/4RvxOR9) |

| 95 | 90 | 93 | 88 | 92 | 85 | [eval](https://sharegpt.com/c/PcAIU9r) |

| 95 | 90 | 85 | 96 | 88 | 92 | [eval](https://sharegpt.com/c/MMqul3q) |

| 95 | 95 | 90 | 93 | 92 | 91 | [eval](https://sharegpt.com/c/YQsLyzJ) |

| 95 | 98 | 80 | 97 | 99 | 96 | [eval](https://sharegpt.com/c/UDhSTMq) |

| 95 | 93 | 90 | 87 | 92 | 89 | [eval](https://sharegpt.com/c/4gCfdCV) |

| 90 | 85 | 95 | 80 | 92 | 75 | [eval](https://sharegpt.com/c/bkQs4SP) |

| 90 | 85 | 95 | 93 | 80 | 92 | [eval](https://sharegpt.com/c/LeLCEEt) |

| 95 | 92 | 90 | 91 | 93 | 89 | [eval](https://sharegpt.com/c/DFxNzVu) |

| 100 | 95 | 90 | 85 | 80 | 95 | [eval](https://sharegpt.com/c/gnVzNML) |

| 95 | 97 | 93 | 92 | 96 | 94 | [eval](https://sharegpt.com/c/y7pxMIy) |

| 95 | 93 | 94 | 90 | 88 | 92 | [eval](https://sharegpt.com/c/5UeCvTY) |

| 90 | 95 | 98 | 85 | 96 | 92 | [eval](https://sharegpt.com/c/T4oL9I5) |

| 90 | 88 | 85 | 80 | 82 | 84 | [eval](https://sharegpt.com/c/HnGyTAG) |

| 90 | 95 | 85 | 87 | 92 | 88 | [eval](https://sharegpt.com/c/ZbRMBNj) |

| 95 | 97 | 96 | 90 | 93 | 92 | [eval](https://sharegpt.com/c/iTmFJqd) |

| 95 | 93 | 92 | 90 | 89 | 91 | [eval](https://sharegpt.com/c/VuPifET) |

| 90 | 95 | 93 | 92 | 94 | 91 | [eval](https://sharegpt.com/c/AvFAH1x) |

| 90 | 85 | 95 | 80 | 88 | 75 | [eval](https://sharegpt.com/c/4ealKGN) |

| 85 | 90 | 95 | 88 | 92 | 80 | [eval](https://sharegpt.com/c/bE1b2vX) |

| 90 | 95 | 92 | 85 | 80 | 87 | [eval](https://sharegpt.com/c/I3nMPBC) |

| 85 | 90 | 95 | 80 | 88 | 75 | [eval](https://sharegpt.com/c/as7r3bW) |

| 85 | 80 | 75 | 90 | 70 | 82 | [eval](https://sharegpt.com/c/qYceaUa) |

| 90 | 85 | 95 | 92 | 93 | 80 | [eval](https://sharegpt.com/c/g4FXchU) |

| 90 | 95 | 75 | 85 | 80 | 70 | [eval](https://sharegpt.com/c/6kGLvL5) |

| 85 | 90 | 80 | 88 | 82 | 83 | [eval](https://sharegpt.com/c/SRozqaF) |

| 85 | 90 | 95 | 92 | 88 | 80 | [eval](https://sharegpt.com/c/GoKydf6) |

| 85 | 90 | 80 | 75 | 95 | 88 | [eval](https://sharegpt.com/c/37aXkHQ) |

| 85 | 90 | 80 | 88 | 84 | 92 | [eval](https://sharegpt.com/c/nVuUaTj) |

| 80 | 90 | 75 | 85 | 70 | 95 | [eval](https://sharegpt.com/c/TkAQKLC) |

| 90 | 88 | 85 | 80 | 92 | 83 | [eval](https://sharegpt.com/c/55cO2y0) |

| 85 | 75 | 90 | 80 | 78 | 88 | [eval](https://sharegpt.com/c/tXtq5lT) |

| 85 | 90 | 80 | 82 | 75 | 88 | [eval](https://sharegpt.com/c/TfMjeJQ) |

| 90 | 85 | 40 | 95 | 80 | 88 | [eval](https://sharegpt.com/c/2jQ6K2S) |

| 85 | 95 | 90 | 75 | 88 | 80 | [eval](https://sharegpt.com/c/aQtr2ca) |

| 85 | 95 | 90 | 92 | 89 | 88 | [eval](https://sharegpt.com/c/tbWLyZ7) |

| 80 | 85 | 75 | 60 | 90 | 70 | [eval](https://sharegpt.com/c/moHC7i2) |

| 85 | 90 | 87 | 80 | 88 | 75 | [eval](https://sharegpt.com/c/GK6GShh) |

| 85 | 80 | 75 | 50 | 90 | 80 | [eval](https://sharegpt.com/c/ugcW4qG) |

| 95 | 80 | 90 | 85 | 75 | 82 | [eval](https://sharegpt.com/c/WL8iq6F) |

| 85 | 90 | 80 | 70 | 95 | 88 | [eval](https://sharegpt.com/c/TZJKnvS) |

| 90 | 95 | 70 | 85 | 80 | 75 | [eval](https://sharegpt.com/c/beNOKb5) |

| 90 | 85 | 70 | 75 | 80 | 60 | [eval](https://sharegpt.com/c/o2oRCF5) |

| 95 | 90 | 70 | 50 | 85 | 80 | [eval](https://sharegpt.com/c/TNjbK6D) |

| 80 | 85 | 40 | 60 | 90 | 95 | [eval](https://sharegpt.com/c/rJvszWJ) |

| 75 | 60 | 80 | 55 | 70 | 85 | [eval](https://sharegpt.com/c/HJwRkro) |

| 90 | 85 | 60 | 50 | 80 | 95 | [eval](https://sharegpt.com/c/AeFoSDK) |

| 45 | 85 | 60 | 20 | 65 | 75 | [eval](https://sharegpt.com/c/KA1cgOl) |

| 85 | 90 | 30 | 60 | 80 | 70 | [eval](https://sharegpt.com/c/RTy8n0y) |

| 90 | 95 | 80 | 40 | 85 | 70 | [eval](https://sharegpt.com/c/PJMJoXh) |

| 85 | 90 | 70 | 75 | 80 | 95 | [eval](https://sharegpt.com/c/Ib3jzyC) |

| 90 | 70 | 50 | 20 | 60 | 40 | [eval](https://sharegpt.com/c/oMmqqtX) |

| 90 | 95 | 75 | 60 | 85 | 80 | [eval](https://sharegpt.com/c/qRNhNTw) |

| 85 | 80 | 60 | 70 | 65 | 75 | [eval](https://sharegpt.com/c/3MAHQIy) |

| 90 | 85 | 80 | 75 | 82 | 70 | [eval](https://sharegpt.com/c/0Emc5HS) |

| 90 | 95 | 80 | 70 | 85 | 75 | [eval](https://sharegpt.com/c/UqAxRWF) |

| 85 | 75 | 30 | 80 | 90 | 70 | [eval](https://sharegpt.com/c/eywxGAw) |

| 85 | 90 | 50 | 70 | 80 | 60 | [eval](https://sharegpt.com/c/A2KSEWP) |

| 100 | 95 | 98 | 99 | 97 | 96 | [eval](https://sharegpt.com/c/C8rebQf) |

| 95 | 90 | 92 | 93 | 91 | 89 | [eval](https://sharegpt.com/c/cd9HF4V) |

| 95 | 92 | 90 | 85 | 88 | 91 | [eval](https://sharegpt.com/c/LHkjvQJ) |

| 100 | 95 | 98 | 97 | 96 | 99 | [eval](https://sharegpt.com/c/o5PdoyZ) |

| 100 | 100 | 100 | 90 | 100 | 95 | [eval](https://sharegpt.com/c/rh8pZVg) |

| 100 | 95 | 98 | 97 | 94 | 99 | [eval](https://sharegpt.com/c/T5DYL83) |

| 95 | 90 | 92 | 93 | 94 | 91 | [eval](https://sharegpt.com/c/G5Osg3X) |

| 100 | 95 | 98 | 90 | 96 | 95 | [eval](https://sharegpt.com/c/9ZqI03V) |

| 95 | 96 | 92 | 90 | 89 | 93 | [eval](https://sharegpt.com/c/4tFfwZU) |

| 100 | 95 | 93 | 90 | 92 | 88 | [eval](https://sharegpt.com/c/mG1JqPH) |

| 100 | 100 | 98 | 97 | 99 | 100 | [eval](https://sharegpt.com/c/VDdtgCu) |

| 95 | 90 | 92 | 85 | 93 | 94 | [eval](https://sharegpt.com/c/uKtGkvg) |

| 95 | 93 | 90 | 92 | 96 | 91 | [eval](https://sharegpt.com/c/9B92N6P) |

| 95 | 96 | 92 | 90 | 93 | 91 | [eval](https://sharegpt.com/c/GeIFfOu) |

| 95 | 90 | 92 | 93 | 91 | 89 | [eval](https://sharegpt.com/c/gn3E9nN) |

| 100 | 98 | 95 | 97 | 96 | 99 | [eval](https://sharegpt.com/c/Erxa46H) |

| 90 | 95 | 85 | 88 | 92 | 87 | [eval](https://sharegpt.com/c/oRHVOvK) |

| 95 | 93 | 90 | 92 | 89 | 88 | [eval](https://sharegpt.com/c/ghtKLUX) |

| 100 | 95 | 97 | 90 | 96 | 94 | [eval](https://sharegpt.com/c/ZL4KjqP) |

| 95 | 93 | 90 | 92 | 94 | 91 | [eval](https://sharegpt.com/c/YOnqIQa) |

| 95 | 92 | 90 | 93 | 94 | 88 | [eval](https://sharegpt.com/c/3BKwKho) |

| 95 | 92 | 60 | 97 | 90 | 96 | [eval](https://sharegpt.com/c/U1i31bn) |

| 95 | 90 | 92 | 93 | 91 | 89 | [eval](https://sharegpt.com/c/etfRoAE) |

| 95 | 90 | 97 | 92 | 91 | 93 | [eval](https://sharegpt.com/c/B0OpVxR) |

| 90 | 95 | 93 | 85 | 92 | 91 | [eval](https://sharegpt.com/c/MBgGJ5A) |

| 95 | 90 | 40 | 92 | 93 | 85 | [eval](https://sharegpt.com/c/eQKTYO7) |

| 100 | 100 | 95 | 90 | 95 | 90 | [eval](https://sharegpt.com/c/szKWCBt) |

| 90 | 95 | 96 | 98 | 93 | 92 | [eval](https://sharegpt.com/c/8ZhUcAv) |

| 90 | 95 | 92 | 89 | 93 | 94 | [eval](https://sharegpt.com/c/VQWdy99) |

| 100 | 95 | 100 | 98 | 96 | 99 | [eval](https://sharegpt.com/c/g1DHUSM) |

| 100 | 100 | 95 | 90 | 100 | 90 | [eval](https://sharegpt.com/c/uYgfJC3) |

| 90 | 85 | 88 | 92 | 87 | 91 | [eval](https://sharegpt.com/c/crk8BH3) |

| 95 | 97 | 90 | 92 | 93 | 94 | [eval](https://sharegpt.com/c/95F9afQ) |

| 90 | 95 | 85 | 88 | 92 | 89 | [eval](https://sharegpt.com/c/otioHUo) |

| 95 | 93 | 90 | 92 | 94 | 91 | [eval](https://sharegpt.com/c/KSiL9F6) |

| 90 | 95 | 85 | 80 | 88 | 82 | [eval](https://sharegpt.com/c/GmGq3b3) |

| 95 | 90 | 60 | 85 | 93 | 70 | [eval](https://sharegpt.com/c/VOhklyz) |

| 95 | 92 | 94 | 93 | 96 | 90 | [eval](https://sharegpt.com/c/wqy8m6k) |

| 95 | 90 | 85 | 93 | 87 | 92 | [eval](https://sharegpt.com/c/iWKrIuS) |

| 95 | 96 | 93 | 90 | 97 | 92 | [eval](https://sharegpt.com/c/o1h3w8N) |

| 100 | 0 | 0 | 100 | 0 | 0 | [eval](https://sharegpt.com/c/3UH9eed) |

| 60 | 100 | 0 | 80 | 0 | 0 | [eval](https://sharegpt.com/c/44g0FAh) |

| 0 | 100 | 60 | 0 | 0 | 90 | [eval](https://sharegpt.com/c/PaQlcrU) |

| 100 | 100 | 0 | 100 | 100 | 100 | [eval](https://sharegpt.com/c/51icV4o) |

| 100 | 100 | 100 | 100 | 95 | 100 | [eval](https://sharegpt.com/c/1VnbGAR) |

| 100 | 100 | 100 | 50 | 90 | 100 | [eval](https://sharegpt.com/c/EYGBrgw) |

| 100 | 100 | 100 | 100 | 95 | 90 | [eval](https://sharegpt.com/c/EGRduOt) |

| 100 | 100 | 100 | 95 | 0 | 100 | [eval](https://sharegpt.com/c/O3JJfnK) |

| 50 | 95 | 20 | 10 | 30 | 85 | [eval](https://sharegpt.com/c/2roVtAu) |

| 100 | 100 | 60 | 20 | 30 | 40 | [eval](https://sharegpt.com/c/sphFpfx) |

| 100 | 0 | 0 | 0 | 0 | 100 | [eval](https://sharegpt.com/c/OeWGKBo) |

| 0 | 100 | 60 | 0 | 0 | 80 | [eval](https://sharegpt.com/c/TOUsuFA) |

| 50 | 100 | 20 | 90 | 0 | 10 | [eval](https://sharegpt.com/c/Y3P6DCu) |

| 100 | 100 | 100 | 100 | 100 | 100 | [eval](https://sharegpt.com/c/hkbdeiM) |

| 100 | 100 | 100 | 100 | 100 | 100 | [eval](https://sharegpt.com/c/eubbaVC) |

| 40 | 100 | 95 | 0 | 100 | 40 | [eval](https://sharegpt.com/c/QWiF49v) |

| 100 | 100 | 100 | 100 | 80 | 100 | [eval](https://sharegpt.com/c/dKTapBu) |

| 100 | 100 | 100 | 0 | 90 | 40 | [eval](https://sharegpt.com/c/P8NGwFZ) |

| 0 | 100 | 100 | 50 | 70 | 20 | [eval](https://sharegpt.com/c/v96BtBL) |

| 100 | 100 | 50 | 90 | 0 | 95 | [eval](https://sharegpt.com/c/YRlzj1t) |

| 100 | 95 | 90 | 85 | 98 | 80 | [eval](https://sharegpt.com/c/76VX3eB) |

| 95 | 98 | 90 | 92 | 96 | 89 | [eval](https://sharegpt.com/c/JK1uNef) |

| 90 | 95 | 75 | 85 | 80 | 82 | [eval](https://sharegpt.com/c/ku6CKmx) |

| 95 | 98 | 50 | 92 | 96 | 94 | [eval](https://sharegpt.com/c/0iAFuKW) |

| 95 | 90 | 0 | 93 | 92 | 94 | [eval](https://sharegpt.com/c/6uGnKio) |

| 95 | 90 | 85 | 92 | 80 | 88 | [eval](https://sharegpt.com/c/lfpRBw8) |

| 95 | 93 | 75 | 85 | 90 | 92 | [eval](https://sharegpt.com/c/mKu70jb) |

| 90 | 95 | 88 | 85 | 92 | 89 | [eval](https://sharegpt.com/c/GkYzJHO) |

| 100 | 100 | 100 | 95 | 97 | 98 | [eval](https://sharegpt.com/c/mly2k0z) |

| 85 | 40 | 30 | 95 | 90 | 88 | [eval](https://sharegpt.com/c/5td2ob0) |

| 90 | 95 | 92 | 85 | 88 | 93 | [eval](https://sharegpt.com/c/0ISpWfy) |

| 95 | 96 | 92 | 90 | 89 | 93 | [eval](https://sharegpt.com/c/kdUDUn7) |

| 90 | 95 | 85 | 80 | 92 | 88 | [eval](https://sharegpt.com/c/fjMNYr2) |

| 95 | 98 | 65 | 90 | 85 | 93 | [eval](https://sharegpt.com/c/6xBIf2Q) |

| 95 | 92 | 96 | 97 | 90 | 89 | [eval](https://sharegpt.com/c/B9GY8Ln) |

| 95 | 90 | 92 | 91 | 89 | 93 | [eval](https://sharegpt.com/c/vn1FPU4) |

| 95 | 90 | 80 | 75 | 95 | 90 | [eval](https://sharegpt.com/c/YurEMYg) |

| 92 | 40 | 30 | 95 | 90 | 93 | [eval](https://sharegpt.com/c/D19Qeui) |

| 90 | 92 | 85 | 88 | 89 | 87 | [eval](https://sharegpt.com/c/5QRFfrt) |

| 95 | 80 | 90 | 92 | 91 | 88 | [eval](https://sharegpt.com/c/pYWPRi4) |

| 95 | 93 | 92 | 90 | 91 | 94 | [eval](https://sharegpt.com/c/wPRTntL) |

| 100 | 98 | 95 | 90 | 92 | 96 | [eval](https://sharegpt.com/c/F6PLYKE) |

| 95 | 92 | 80 | 85 | 90 | 93 | [eval](https://sharegpt.com/c/WeJnMGv) |

| 95 | 98 | 90 | 88 | 97 | 96 | [eval](https://sharegpt.com/c/zNKL49e) |

| 90 | 95 | 85 | 88 | 86 | 92 | [eval](https://sharegpt.com/c/kIKmA1b) |

| 100 | 100 | 100 | 100 | 100 | 100 | [eval](https://sharegpt.com/c/1btWd4O) |

| 90 | 95 | 85 | 96 | 92 | 88 | [eval](https://sharegpt.com/c/s9sf1Lp) |

| 100 | 98 | 95 | 99 | 97 | 96 | [eval](https://sharegpt.com/c/RWzv8py) |

| 95 | 92 | 70 | 90 | 93 | 89 | [eval](https://sharegpt.com/c/bYF7FqA) |

| 95 | 90 | 88 | 92 | 94 | 93 | [eval](https://sharegpt.com/c/SuUqjMj) |

| 95 | 90 | 93 | 92 | 85 | 94 | [eval](https://sharegpt.com/c/r0aRdYY) |

| 95 | 93 | 90 | 87 | 92 | 91 | [eval](https://sharegpt.com/c/VuMfkkd) |

| 95 | 93 | 90 | 96 | 92 | 91 | [eval](https://sharegpt.com/c/rhm6fa4) |

| 95 | 97 | 85 | 96 | 98 | 90 | [eval](https://sharegpt.com/c/DwXnyqG) |

| 95 | 92 | 90 | 85 | 93 | 94 | [eval](https://sharegpt.com/c/0ScdkGS) |

| 95 | 96 | 92 | 90 | 97 | 93 | [eval](https://sharegpt.com/c/6yIoCDU) |

| 95 | 93 | 96 | 94 | 90 | 92 | [eval](https://sharegpt.com/c/VubEvp9) |

| 95 | 94 | 93 | 92 | 90 | 89 | [eval](https://sharegpt.com/c/RHzmZWG) |

| 90 | 85 | 95 | 80 | 87 | 75 | [eval](https://sharegpt.com/c/IMiP9Zm) |

| 95 | 94 | 92 | 93 | 90 | 96 | [eval](https://sharegpt.com/c/bft4PIL) |

| 95 | 100 | 90 | 95 | 95 | 95 | [eval](https://sharegpt.com/c/iHXB34b) |

| 100 | 95 | 85 | 100 | 0 | 90 | [eval](https://sharegpt.com/c/vCGn9R7) |

| 100 | 95 | 90 | 95 | 100 | 95 | [eval](https://sharegpt.com/c/be8crZL) |

| 95 | 90 | 60 | 95 | 85 | 80 | [eval](https://sharegpt.com/c/33elmDz) |

| 100 | 95 | 90 | 98 | 97 | 99 | [eval](https://sharegpt.com/c/RWD3Zx7) |

| 95 | 90 | 85 | 95 | 80 | 92 | [eval](https://sharegpt.com/c/GiwBvM7) |

| 100 | 95 | 100 | 98 | 100 | 90 | [eval](https://sharegpt.com/c/hX2pYxk) |

| 100 | 95 | 80 | 85 | 90 | 85 | [eval](https://sharegpt.com/c/MfxdGd7) |

| 100 | 90 | 95 | 85 | 95 | 100 | [eval](https://sharegpt.com/c/28hQjmS) |

| 95 | 90 | 85 | 80 | 88 | 92 | [eval](https://sharegpt.com/c/fzy5EPe) |

| 100 | 100 | 0 | 0 | 100 | 0 | [eval](https://sharegpt.com/c/vwxPjbR) |

| 100 | 100 | 100 | 50 | 100 | 75 | [eval](https://sharegpt.com/c/FAYfFWy) |

| 100 | 100 | 0 | 0 | 100 | 0 | [eval](https://sharegpt.com/c/SoudGsQ) |

| 0 | 100 | 0 | 0 | 0 | 0 | [eval](https://sharegpt.com/c/mkwEgVn) |

| 100 | 100 | 50 | 0 | 0 | 0 | [eval](https://sharegpt.com/c/q8MQEsz) |

| 100 | 100 | 100 | 100 | 100 | 95 | [eval](https://sharegpt.com/c/tzHpsKh) |

| 100 | 100 | 50 | 0 | 0 | 0 | [eval](https://sharegpt.com/c/3ugYBtJ) |

| 100 | 100 | 0 | 0 | 100 | 0 | [eval](https://sharegpt.com/c/I6KfOJT) |

| 90 | 85 | 80 | 95 | 70 | 75 | [eval](https://sharegpt.com/c/enaV1CK) |

| 100 | 100 | 0 | 0 | 0 | 0 | [eval](https://sharegpt.com/c/JBk7oSh) |

</details>

### Training data

This was an experiment to see if a "jailbreak" prompt could be used to generate a broader range of data that would otherwise have been filtered by OpenAI's alignment efforts.

The jailbreak did indeed work with a high success rate: the OpenAI models generated a broader range of topics and refused fewer questions and instructions on sensitive topics.

### Prompt format

The prompt should be 1:1 compatible with the FastChat/vicuna format, e.g.:

With a system prompt:

```

A chat between a curious user and an artificial intelligence assistant. The assistant gives helpful, detailed, and polite answers to the user's questions. USER: [prompt] ASSISTANT:

```

Or without a system prompt:

```

USER: [prompt] ASSISTANT:

```
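The two prompt variants above can be assembled programmatically. The following is a minimal sketch, not the author's code; the helper name `build_prompt` and the constant `DEFAULT_SYSTEM_PROMPT` are hypothetical and only mirror the strings shown in this section.

```python
from typing import Optional

# System prompt copied from the example above.
DEFAULT_SYSTEM_PROMPT = (
    "A chat between a curious user and an artificial intelligence assistant. "
    "The assistant gives helpful, detailed, and polite answers to the user's questions."
)


def build_prompt(user_message: str,
                 system_prompt: Optional[str] = DEFAULT_SYSTEM_PROMPT) -> str:
    """Return a single-turn prompt in the FastChat/vicuna style shown above.

    Pass system_prompt=None to get the variant without a system prompt.
    """
    turn = f"USER: {user_message} ASSISTANT:"
    if system_prompt:
        return f"{system_prompt} {turn}"
    return turn


if __name__ == "__main__":
    print(build_prompt("What is the capital of France?"))
    print(build_prompt("What is the capital of France?", system_prompt=None))
```

The model's completion is expected to follow the trailing `ASSISTANT:` marker, so the prompt deliberately ends there.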

### Usage and License Notices

The model and dataset are intended and licensed for research use only. I've used the 'cc-nc-4.0' license, but really it is subject to a custom/special license because:

- the base model is LLaMa, which has its own special research license

- the dataset(s) were generated with OpenAI (gpt-4 and/or gpt-3.5-turbo), which has a clause saying the data can't be used to create models to compete with OpenAI

So, to reiterate: this model (and datasets) cannot be used commercially.

|

samaksh-khatri-crest-data/gmra_model_gpt2-medium_14082023T134929

|

samaksh-khatri-crest-data

| 2023-08-14T09:05:39Z | 103 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"gpt2",

"text-classification",

"generated_from_trainer",

"base_model:openai-community/gpt2-medium",

"base_model:finetune:openai-community/gpt2-medium",

"license:mit",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2023-08-14T08:19:30Z |

---

license: mit

base_model: gpt2-medium

tags:

- generated_from_trainer

metrics:

- accuracy

model-index:

- name: gmra_model_gpt2-medium_14082023T134929

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# gmra_model_gpt2-medium_14082023T134929

This model is a fine-tuned version of [gpt2-medium](https://huggingface.co/gpt2-medium) on an unknown dataset.

It achieves the following results on the evaluation set:

- Loss: 0.3831

- Accuracy: 0.9438

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- gradient_accumulation_steps: 4

- total_train_batch_size: 32

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 10
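The `total_train_batch_size` listed above follows from the per-device batch size and the gradient accumulation steps. A minimal sketch of that arithmetic, assuming a single training device (the card does not state the device count, so `num_devices=1` is an assumption):

```python
def effective_batch_size(per_device_batch_size: int,
                         gradient_accumulation_steps: int,
                         num_devices: int = 1) -> int:
    """Number of samples contributing to each optimizer step."""
    return per_device_batch_size * gradient_accumulation_steps * num_devices


# Values from the hyperparameter list: train_batch_size=8, gradient_accumulation_steps=4.
total = effective_batch_size(8, 4)
print(total)  # 32, matching total_train_batch_size
```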

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:--------:|

| No log | 1.0 | 284 | 0.2626 | 0.9069 |

| 0.3464 | 2.0 | 568 | 0.2263 | 0.9262 |

| 0.3464 | 3.0 | 852 | 0.2545 | 0.9394 |

| 0.1022 | 4.0 | 1137 | 0.2577 | 0.9464 |

| 0.1022 | 5.0 | 1421 | 0.3485 | 0.9420 |

| 0.0292 | 6.0 | 1705 | 0.3445 | 0.9429 |

| 0.0292 | 7.0 | 1989 | 0.3127 | 0.9464 |

| 0.0125 | 8.0 | 2274 | 0.4068 | 0.9411 |

| 0.0085 | 9.0 | 2558 | 0.3853 | 0.9438 |

| 0.0085 | 9.99 | 2840 | 0.3831 | 0.9438 |
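The table suggests that the last checkpoint is not necessarily the best one: validation loss bottoms out early while accuracy peaks later. A small sketch that picks both checkpoints from the rows above (the tuple layout is an illustrative choice, not part of the Trainer output):

```python
# (epoch, validation_loss, accuracy) copied from the training results table.
ROWS = [
    (1.0, 0.2626, 0.9069),
    (2.0, 0.2263, 0.9262),
    (3.0, 0.2545, 0.9394),
    (4.0, 0.2577, 0.9464),
    (5.0, 0.3485, 0.9420),
    (6.0, 0.3445, 0.9429),
    (7.0, 0.3127, 0.9464),
    (8.0, 0.4068, 0.9411),
    (9.0, 0.3853, 0.9438),
    (9.99, 0.3831, 0.9438),
]

# Lowest validation loss; min() keeps the first row on ties.
best_by_loss = min(ROWS, key=lambda row: row[1])
# Highest accuracy; max() keeps the first row on ties.
best_by_accuracy = max(ROWS, key=lambda row: row[2])

print("lowest validation loss:", best_by_loss)
print("highest accuracy:", best_by_accuracy)
```

Validation loss rising while training loss keeps falling, as in the later epochs here, is commonly read as a sign of overfitting, which is one reason to compare checkpoints rather than take the final one by default.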

### Framework versions

- Transformers 4.31.0

- Pytorch 2.0.1+cu118

- Datasets 2.14.4

- Tokenizers 0.13.3

|

Skie0007/reinforce-pixel

|

Skie0007

| 2023-08-14T09:02:11Z | 0 | 0 | null |

[

"Pixelcopter-PLE-v0",

"reinforce",

"reinforcement-learning",

"custom-implementation",

"deep-rl-class",

"model-index",

"region:us"

] |

reinforcement-learning

| 2023-08-14T08:24:43Z |

---

tags:

- Pixelcopter-PLE-v0

- reinforce

- reinforcement-learning

- custom-implementation

- deep-rl-class

model-index:

- name: reinforce-pixel

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: Pixelcopter-PLE-v0

type: Pixelcopter-PLE-v0

metrics:

- type: mean_reward

value: 6.50 +/- 8.95

name: mean_reward

verified: false

---

# **Reinforce** Agent playing **Pixelcopter-PLE-v0**

This is a trained model of a **Reinforce** agent playing **Pixelcopter-PLE-v0** .

To learn to use this model and train yours check Unit 4 of the Deep Reinforcement Learning Course: https://huggingface.co/deep-rl-course/unit4/introduction
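The `mean_reward` metric in the metadata is reported as a mean plus or minus a standard deviation over evaluation episodes. A minimal sketch of how such a figure can be computed; the episode rewards below are made-up placeholders, and the use of the population standard deviation is an assumption rather than something this card states:

```python
import statistics


def summarize_rewards(episode_rewards):
    """Format episode rewards as 'mean +/- std' with two decimals."""
    mean = statistics.fmean(episode_rewards)
    std = statistics.pstdev(episode_rewards)  # population standard deviation (assumed)
    return f"{mean:.2f} +/- {std:.2f}"


# Hypothetical rewards from four evaluation episodes.
print(summarize_rewards([2, 4, 6, 8]))
```

A standard deviation larger than the mean, as reported for this agent, indicates that returns vary widely from episode to episode.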

|

wangxso/ppo-PyramidsTraining

|

wangxso

| 2023-08-14T08:58:00Z | 0 | 0 |

ml-agents

|

[

"ml-agents",

"tensorboard",

"onnx",

"Pyramids",

"deep-reinforcement-learning",

"reinforcement-learning",

"ML-Agents-Pyramids",

"region:us"

] |

reinforcement-learning

| 2023-08-14T08:57:57Z |

---

library_name: ml-agents

tags:

- Pyramids

- deep-reinforcement-learning

- reinforcement-learning

- ML-Agents-Pyramids

---

# **ppo** Agent playing **Pyramids**

This is a trained model of a **ppo** agent playing **Pyramids**

using the [Unity ML-Agents Library](https://github.com/Unity-Technologies/ml-agents).

## Usage (with ML-Agents)

The Documentation: https://unity-technologies.github.io/ml-agents/ML-Agents-Toolkit-Documentation/

We wrote a complete tutorial to learn to train your first agent using ML-Agents and publish it to the Hub:

- A *short tutorial* where you teach Huggy the Dog 🐶 to fetch the stick and then play with him directly in your

browser: https://huggingface.co/learn/deep-rl-course/unitbonus1/introduction

- A *longer tutorial* to understand how ML-Agents works:

https://huggingface.co/learn/deep-rl-course/unit5/introduction

### Resume the training

```bash

mlagents-learn <your_configuration_file_path.yaml> --run-id=<run_id> --resume

```

### Watch your Agent play

You can watch your agent **playing directly in your browser**

1. If the environment is part of ML-Agents official environments, go to https://huggingface.co/unity

2. Find your model_id: wangxso/ppo-PyramidsTraining

3. Select your *.nn / *.onnx file

4. Click on Watch the agent play 👀

|

ai-forever/mGPT-1.3B-ukranian

|

ai-forever

| 2023-08-14T08:57:08Z | 31 | 3 |

transformers

|

[

"transformers",

"pytorch",

"gpt2",

"text-generation",

"gpt3",

"mgpt",

"uk",

"en",

"ru",

"license:mit",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2023-08-10T05:12:23Z |

---

language:

- uk

- en

- ru

license: mit

tags:

- gpt3

- transformers

- mgpt

---

# 🇺🇦 Ukrainian mGPT 1.3B

Language model for Ukrainian. The model has 1.3B parameters, as you can guess from its name.

Ukrainian belongs to the Indo-European language family. It's a very melodic language with approximately 40 million speakers. Here are some facts about it:

1. One of the East Slavic languages, alongside Russian and Belarusian.

2. It is the official language of Ukraine and is written in a version of the Cyrillic script.

3. Ukrainian has a rich literary history and has maintained a vibrant cultural presence, especially in poetry and music.

## Technical details

It's one of the models derived from the base [mGPT-XL (1.3B)](https://huggingface.co/ai-forever/mGPT) model (see the list below), which was originally trained on 61 languages from 25 language families using Wikipedia and the C4 corpus.

We found additional data for 23 languages, most of which are considered minor, and decided to further tune the base model. **Ukrainian mGPT 1.3B** was trained for another 10000 steps with batch_size=4 and a context window of **2048** tokens on 1 A100.

Final perplexity for this model on validation is **7.1**.
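For readers unfamiliar with the metric: perplexity is conventionally the exponential of the mean per-token negative log-likelihood, so a perplexity of 7.1 corresponds to a mean loss of roughly 1.96 nats. The sketch below illustrates that relationship only; the function names are hypothetical and this is not the authors' evaluation code.

```python
import math


def perplexity_from_loss(mean_nll: float) -> float:
    """Perplexity for a mean per-token negative log-likelihood given in nats."""
    return math.exp(mean_nll)


def perplexity_from_token_logprobs(logprobs) -> float:
    """Perplexity from natural-log probabilities assigned to each target token."""
    mean_nll = -sum(logprobs) / len(logprobs)
    return math.exp(mean_nll)


if __name__ == "__main__":
    # Mean loss that corresponds to the reported validation perplexity.
    print(round(math.log(7.1), 2))
    # A model assigning probability 0.5 to every token has perplexity 2.
    print(perplexity_from_token_logprobs([math.log(0.5)] * 4))
```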

_Chart of the training loss and perplexity:_

## Other mGPT-1.3B models

- [🇦🇲 mGPT-1.3B Armenian](https://huggingface.co/ai-forever/mGPT-1.3B-armenian)

- [🇦🇿 mGPT-1.3B Azerbaijan](https://huggingface.co/ai-forever/mGPT-1.3B-azerbaijan)

- [🍯 mGPT-1.3B Bashkir](https://huggingface.co/ai-forever/mGPT-1.3B-bashkir)

- [🇧🇾 mGPT-1.3B Belorussian](https://huggingface.co/ai-forever/mGPT-1.3B-belorussian)

- [🇧🇬 mGPT-1.3B Bulgarian](https://huggingface.co/ai-forever/mGPT-1.3B-bulgarian)

- [🌞 mGPT-1.3B Buryat](https://huggingface.co/ai-forever/mGPT-1.3B-buryat)

- [🌳 mGPT-1.3B Chuvash](https://huggingface.co/ai-forever/mGPT-1.3B-chuvash)

- [🇬🇪 mGPT-1.3B Georgian](https://huggingface.co/ai-forever/mGPT-1.3B-georgian)

- [🌸 mGPT-1.3B Kalmyk](https://huggingface.co/ai-forever/mGPT-1.3B-kalmyk)

- [🇰🇿 mGPT-1.3B Kazakh](https://huggingface.co/ai-forever/mGPT-1.3B-kazakh)

- [🇰🇬 mGPT-1.3B Kirgiz](https://huggingface.co/ai-forever/mGPT-1.3B-kirgiz)

- [🐻 mGPT-1.3B Mari](https://huggingface.co/ai-forever/mGPT-1.3B-mari)

- [🇲🇳 mGPT-1.3B Mongol](https://huggingface.co/ai-forever/mGPT-1.3B-mongol)

- [🐆 mGPT-1.3B Ossetian](https://huggingface.co/ai-forever/mGPT-1.3B-ossetian)

- [🇮🇷 mGPT-1.3B Persian](https://huggingface.co/ai-forever/mGPT-1.3B-persian)

- [🇷🇴 mGPT-1.3B Romanian](https://huggingface.co/ai-forever/mGPT-1.3B-romanian)

- [🇹🇯 mGPT-1.3B Tajik](https://huggingface.co/ai-forever/mGPT-1.3B-tajik)

- [☕ mGPT-1.3B Tatar](https://huggingface.co/ai-forever/mGPT-1.3B-tatar)

- [🇹🇲 mGPT-1.3B Turkmen](https://huggingface.co/ai-forever/mGPT-1.3B-turkmen)

- [🐎 mGPT-1.3B Tuvan](https://huggingface.co/ai-forever/mGPT-1.3B-tuvan)

- [🇺🇿 mGPT-1.3B Uzbek](https://huggingface.co/ai-forever/mGPT-1.3B-uzbek)

- [💎 mGPT-1.3B Yakut](https://huggingface.co/ai-forever/mGPT-1.3B-yakut)

## Feedback

If you find a bug or have additional data to train a model for your language, **please give us feedback**.

The model will be improved over time. Stay tuned!

|

nagupv/Stable13B_contextLLMExam_f4

|

nagupv

| 2023-08-14T08:31:09Z | 0 | 0 |

peft

|

[

"peft",

"region:us"

] | null | 2023-08-14T08:30:46Z |

---

library_name: peft

---

## Training procedure

The following `bitsandbytes` quantization config was used during training:

- quant_method: bitsandbytes

- load_in_8bit: False

- load_in_4bit: True

- llm_int8_threshold: 6.0

- llm_int8_skip_modules: None

- llm_int8_enable_fp32_cpu_offload: False

- llm_int8_has_fp16_weight: False

- bnb_4bit_quant_type: nf4

- bnb_4bit_use_double_quant: True

- bnb_4bit_compute_dtype: bfloat16
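The settings listed above map onto the keyword arguments of `transformers.BitsAndBytesConfig`. The following is a sketch of how an equivalent config could be rebuilt when loading the base model for this adapter; it assumes `transformers`, `torch`, and `bitsandbytes` are installed, and the names `QUANT_KWARGS` and `make_bnb_config` are illustrative rather than taken from the card.

```python
# Keyword arguments mirroring the quantization settings listed above.
QUANT_KWARGS = {
    "load_in_8bit": False,
    "load_in_4bit": True,
    "llm_int8_threshold": 6.0,
    "llm_int8_skip_modules": None,
    "llm_int8_enable_fp32_cpu_offload": False,
    "llm_int8_has_fp16_weight": False,
    "bnb_4bit_quant_type": "nf4",
    "bnb_4bit_use_double_quant": True,
    "bnb_4bit_compute_dtype": "bfloat16",  # resolved to torch.bfloat16 by the config class
}


def make_bnb_config():
    """Build a BitsAndBytesConfig from the settings above.

    The import is deferred so the dictionary can be inspected
    without transformers and torch installed.
    """
    from transformers import BitsAndBytesConfig

    return BitsAndBytesConfig(**QUANT_KWARGS)
```

The resulting object would typically be passed as `quantization_config` to `from_pretrained` when loading the base model, before attaching the PEFT adapter. The card does not name the base model, so that step is left out here.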

### Framework versions

- PEFT 0.5.0.dev0

|

bigmorning/whisper_charsplit_new_round3__0073

|

bigmorning

| 2023-08-14T08:28:57Z | 59 | 0 |

transformers

|

[

"transformers",

"tf",

"whisper",

"automatic-speech-recognition",

"generated_from_keras_callback",

"base_model:bigmorning/whisper_charsplit_new_round2__0061",

"base_model:finetune:bigmorning/whisper_charsplit_new_round2__0061",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] |

automatic-speech-recognition

| 2023-08-14T08:28:49Z |

---

license: apache-2.0

base_model: bigmorning/whisper_charsplit_new_round2__0061

tags:

- generated_from_keras_callback

model-index:

- name: whisper_charsplit_new_round3__0073

results: []

---

<!-- This model card has been generated automatically according to the information Keras had access to. You should

probably proofread and complete it, then remove this comment. -->

# whisper_charsplit_new_round3__0073

This model is a fine-tuned version of [bigmorning/whisper_charsplit_new_round2__0061](https://huggingface.co/bigmorning/whisper_charsplit_new_round2__0061) on an unknown dataset.

It achieves the following results on the evaluation set:

- Train Loss: 0.0013

- Train Accuracy: 0.0795

- Train Wermet: 8.0558

- Validation Loss: 0.5732

- Validation Accuracy: 0.0770

- Validation Wermet: 6.5109

- Epoch: 72

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- optimizer: {'name': 'AdamWeightDecay', 'learning_rate': 1e-05, 'decay': 0.0, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-07, 'amsgrad': False, 'weight_decay_rate': 0.01}

- training_precision: float32

### Training results

| Train Loss | Train Accuracy | Train Wermet | Validation Loss | Validation Accuracy | Validation Wermet | Epoch |

|:----------:|:--------------:|:------------:|:---------------:|:-------------------:|:-----------------:|:-----:|

| 0.0009 | 0.0795 | 7.9492 | 0.5730 | 0.0769 | 7.2856 | 0 |

| 0.0015 | 0.0795 | 8.4221 | 0.5756 | 0.0769 | 7.1487 | 1 |

| 0.0012 | 0.0795 | 7.8476 | 0.5699 | 0.0769 | 6.5976 | 2 |

| 0.0010 | 0.0795 | 7.6843 | 0.5740 | 0.0769 | 6.9513 | 3 |

| 0.0014 | 0.0795 | 8.0796 | 0.5763 | 0.0768 | 7.4043 | 4 |

| 0.0019 | 0.0795 | 7.7274 | 0.5724 | 0.0769 | 6.4922 | 5 |

| 0.0008 | 0.0795 | 7.3468 | 0.5734 | 0.0769 | 6.1909 | 6 |

| 0.0009 | 0.0795 | 7.2393 | 0.5816 | 0.0769 | 6.5734 | 7 |

| 0.0010 | 0.0795 | 7.5822 | 0.5755 | 0.0769 | 6.6613 | 8 |

| 0.0004 | 0.0795 | 7.3807 | 0.5698 | 0.0770 | 7.0671 | 9 |

| 0.0001 | 0.0795 | 7.7157 | 0.5681 | 0.0771 | 6.8391 | 10 |

| 0.0001 | 0.0795 | 7.7540 | 0.5725 | 0.0771 | 6.9281 | 11 |

| 0.0001 | 0.0795 | 7.7721 | 0.5726 | 0.0771 | 6.8911 | 12 |

| 0.0000 | 0.0795 | 7.8163 | 0.5721 | 0.0771 | 6.8876 | 13 |

| 0.0000 | 0.0795 | 7.7745 | 0.5741 | 0.0771 | 6.8770 | 14 |

| 0.0000 | 0.0795 | 7.7277 | 0.5752 | 0.0771 | 6.8671 | 15 |

| 0.0000 | 0.0795 | 7.7355 | 0.5765 | 0.0771 | 6.8447 | 16 |

| 0.0000 | 0.0795 | 7.7109 | 0.5784 | 0.0771 | 6.8560 | 17 |

| 0.0000 | 0.0795 | 7.7427 | 0.5796 | 0.0771 | 6.8406 | 18 |

| 0.0003 | 0.0795 | 7.6709 | 0.6610 | 0.0762 | 7.0119 | 19 |

| 0.0115 | 0.0793 | 8.3288 | 0.5580 | 0.0769 | 7.1457 | 20 |

| 0.0013 | 0.0795 | 8.2537 | 0.5574 | 0.0770 | 6.7708 | 21 |

| 0.0004 | 0.0795 | 8.0507 | 0.5619 | 0.0770 | 7.0678 | 22 |

| 0.0003 | 0.0795 | 8.0534 | 0.5593 | 0.0771 | 7.0433 | 23 |

| 0.0002 | 0.0795 | 8.1738 | 0.5604 | 0.0771 | 7.1617 | 24 |

| 0.0001 | 0.0795 | 8.1494 | 0.5589 | 0.0771 | 7.1609 | 25 |

| 0.0000 | 0.0795 | 8.2151 | 0.5614 | 0.0771 | 7.1972 | 26 |

| 0.0000 | 0.0795 | 8.2332 | 0.5633 | 0.0771 | 7.1736 | 27 |

| 0.0000 | 0.0795 | 8.2573 | 0.5648 | 0.0771 | 7.2086 | 28 |

| 0.0000 | 0.0795 | 8.2571 | 0.5667 | 0.0771 | 7.1787 | 29 |

| 0.0000 | 0.0795 | 8.2607 | 0.5689 | 0.0771 | 7.2107 | 30 |

| 0.0000 | 0.0795 | 8.2992 | 0.5700 | 0.0772 | 7.2006 | 31 |

| 0.0000 | 0.0795 | 8.3059 | 0.5721 | 0.0772 | 7.2341 | 32 |

| 0.0000 | 0.0795 | 8.2872 | 0.5744 | 0.0772 | 7.2069 | 33 |

| 0.0080 | 0.0794 | 8.3693 | 0.5947 | 0.0762 | 7.3034 | 34 |

| 0.0063 | 0.0794 | 8.2517 | 0.5491 | 0.0769 | 7.1324 | 35 |

| 0.0008 | 0.0795 | 7.9115 | 0.5447 | 0.0771 | 6.9422 | 36 |

| 0.0002 | 0.0795 | 7.6265 | 0.5471 | 0.0771 | 6.8107 | 37 |

| 0.0001 | 0.0795 | 7.6685 | 0.5493 | 0.0771 | 6.6914 | 38 |

| 0.0001 | 0.0795 | 7.6100 | 0.5515 | 0.0771 | 6.7738 | 39 |

| 0.0000 | 0.0795 | 7.6623 | 0.5535 | 0.0771 | 6.7829 | 40 |

| 0.0000 | 0.0795 | 7.6768 | 0.5556 | 0.0771 | 6.8287 | 41 |

| 0.0000 | 0.0795 | 7.7199 | 0.5578 | 0.0772 | 6.8398 | 42 |

| 0.0000 | 0.0795 | 7.7423 | 0.5600 | 0.0772 | 6.8518 | 43 |

| 0.0000 | 0.0795 | 7.7561 | 0.5617 | 0.0772 | 6.8898 | 44 |

| 0.0000 | 0.0795 | 7.7766 | 0.5639 | 0.0772 | 6.8982 | 45 |

| 0.0000 | 0.0795 | 7.7962 | 0.5659 | 0.0772 | 6.9091 | 46 |

| 0.0000 | 0.0795 | 7.8106 | 0.5680 | 0.0772 | 6.9293 | 47 |

| 0.0000 | 0.0795 | 7.8387 | 0.5701 | 0.0772 | 6.9401 | 48 |

| 0.0000 | 0.0795 | 7.8480 | 0.5724 | 0.0772 | 6.9544 | 49 |

| 0.0000 | 0.0795 | 7.8755 | 0.5744 | 0.0772 | 6.9767 | 50 |

| 0.0000 | 0.0795 | 7.8924 | 0.5770 | 0.0772 | 6.9928 | 51 |

| 0.0000 | 0.0795 | 7.9169 | 0.5794 | 0.0772 | 7.0149 | 52 |

| 0.0000 | 0.0795 | 7.9400 | 0.5822 | 0.0772 | 7.0438 | 53 |

| 0.0000 | 0.0795 | 7.9697 | 0.5846 | 0.0772 | 7.0785 | 54 |

| 0.0000 | 0.0795 | 8.0061 | 0.5875 | 0.0772 | 7.0840 | 55 |

| 0.0000 | 0.0795 | 8.0364 | 0.5907 | 0.0772 | 7.0683 | 56 |

| 0.0113 | 0.0793 | 7.8674 | 0.5714 | 0.0768 | 6.0540 | 57 |

| 0.0030 | 0.0795 | 7.4853 | 0.5586 | 0.0770 | 6.6707 | 58 |

| 0.0009 | 0.0795 | 7.4969 | 0.5584 | 0.0771 | 6.7292 | 59 |

| 0.0004 | 0.0795 | 7.6676 | 0.5577 | 0.0771 | 6.7898 | 60 |

| 0.0002 | 0.0795 | 7.5238 | 0.5561 | 0.0772 | 6.6962 | 61 |

| 0.0002 | 0.0795 | 7.4915 | 0.5613 | 0.0772 | 6.6315 | 62 |

| 0.0005 | 0.0795 | 7.6199 | 0.5783 | 0.0770 | 6.9551 | 63 |

| 0.0019 | 0.0795 | 7.8859 | 0.5789 | 0.0769 | 6.9689 | 64 |

| 0.0020 | 0.0795 | 7.9131 | 0.5655 | 0.0770 | 7.0500 | 65 |

| 0.0010 | 0.0795 | 7.8135 | 0.5750 | 0.0770 | 7.0532 | 66 |

| 0.0009 | 0.0795 | 7.7899 | 0.5646 | 0.0770 | 6.8492 | 67 |

| 0.0007 | 0.0795 | 7.7019 | 0.5691 | 0.0771 | 6.6536 | 68 |

| 0.0005 | 0.0795 | 7.7786 | 0.5695 | 0.0771 | 6.3958 | 69 |

| 0.0010 | 0.0795 | 7.8106 | 0.5724 | 0.0771 | 6.8654 | 70 |

| 0.0013 | 0.0795 | 8.2501 | 0.5772 | 0.0770 | 6.9794 | 71 |

| 0.0013 | 0.0795 | 8.0558 | 0.5732 | 0.0770 | 6.5109 | 72 |

### Framework versions

- Transformers 4.32.0.dev0

- TensorFlow 2.12.0

- Tokenizers 0.13.3

|

bigmorning/whisper_charsplit_new_round3__0072

|

bigmorning

| 2023-08-14T08:24:48Z | 60 | 0 |

transformers

|

[

"transformers",

"tf",

"whisper",

"automatic-speech-recognition",

"generated_from_keras_callback",

"base_model:bigmorning/whisper_charsplit_new_round2__0061",

"base_model:finetune:bigmorning/whisper_charsplit_new_round2__0061",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] |

automatic-speech-recognition

| 2023-08-14T08:24:40Z |

---

license: apache-2.0

base_model: bigmorning/whisper_charsplit_new_round2__0061

tags:

- generated_from_keras_callback

model-index:

- name: whisper_charsplit_new_round3__0072

results: []

---

<!-- This model card has been generated automatically according to the information Keras had access to. You should

probably proofread and complete it, then remove this comment. -->

# whisper_charsplit_new_round3__0072

This model is a fine-tuned version of [bigmorning/whisper_charsplit_new_round2__0061](https://huggingface.co/bigmorning/whisper_charsplit_new_round2__0061) on an unknown dataset.

It achieves the following results on the evaluation set:

- Train Loss: 0.0013

- Train Accuracy: 0.0795

- Train Wermet: 8.2501

- Validation Loss: 0.5772

- Validation Accuracy: 0.0770

- Validation Wermet: 6.9794

- Epoch: 71

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- optimizer: {'name': 'AdamWeightDecay', 'learning_rate': 1e-05, 'decay': 0.0, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-07, 'amsgrad': False, 'weight_decay_rate': 0.01}

- training_precision: float32

### Training results

| Train Loss | Train Accuracy | Train Wermet | Validation Loss | Validation Accuracy | Validation Wermet | Epoch |

|:----------:|:--------------:|:------------:|:---------------:|:-------------------:|:-----------------:|:-----:|

| 0.0009 | 0.0795 | 7.9492 | 0.5730 | 0.0769 | 7.2856 | 0 |

| 0.0015 | 0.0795 | 8.4221 | 0.5756 | 0.0769 | 7.1487 | 1 |

| 0.0012 | 0.0795 | 7.8476 | 0.5699 | 0.0769 | 6.5976 | 2 |

| 0.0010 | 0.0795 | 7.6843 | 0.5740 | 0.0769 | 6.9513 | 3 |

| 0.0014 | 0.0795 | 8.0796 | 0.5763 | 0.0768 | 7.4043 | 4 |

| 0.0019 | 0.0795 | 7.7274 | 0.5724 | 0.0769 | 6.4922 | 5 |

| 0.0008 | 0.0795 | 7.3468 | 0.5734 | 0.0769 | 6.1909 | 6 |

| 0.0009 | 0.0795 | 7.2393 | 0.5816 | 0.0769 | 6.5734 | 7 |

| 0.0010 | 0.0795 | 7.5822 | 0.5755 | 0.0769 | 6.6613 | 8 |

| 0.0004 | 0.0795 | 7.3807 | 0.5698 | 0.0770 | 7.0671 | 9 |

| 0.0001 | 0.0795 | 7.7157 | 0.5681 | 0.0771 | 6.8391 | 10 |

| 0.0001 | 0.0795 | 7.7540 | 0.5725 | 0.0771 | 6.9281 | 11 |

| 0.0001 | 0.0795 | 7.7721 | 0.5726 | 0.0771 | 6.8911 | 12 |

| 0.0000 | 0.0795 | 7.8163 | 0.5721 | 0.0771 | 6.8876 | 13 |

| 0.0000 | 0.0795 | 7.7745 | 0.5741 | 0.0771 | 6.8770 | 14 |

| 0.0000 | 0.0795 | 7.7277 | 0.5752 | 0.0771 | 6.8671 | 15 |

| 0.0000 | 0.0795 | 7.7355 | 0.5765 | 0.0771 | 6.8447 | 16 |

| 0.0000 | 0.0795 | 7.7109 | 0.5784 | 0.0771 | 6.8560 | 17 |

| 0.0000 | 0.0795 | 7.7427 | 0.5796 | 0.0771 | 6.8406 | 18 |

| 0.0003 | 0.0795 | 7.6709 | 0.6610 | 0.0762 | 7.0119 | 19 |

| 0.0115 | 0.0793 | 8.3288 | 0.5580 | 0.0769 | 7.1457 | 20 |

| 0.0013 | 0.0795 | 8.2537 | 0.5574 | 0.0770 | 6.7708 | 21 |

| 0.0004 | 0.0795 | 8.0507 | 0.5619 | 0.0770 | 7.0678 | 22 |

| 0.0003 | 0.0795 | 8.0534 | 0.5593 | 0.0771 | 7.0433 | 23 |

| 0.0002 | 0.0795 | 8.1738 | 0.5604 | 0.0771 | 7.1617 | 24 |

| 0.0001 | 0.0795 | 8.1494 | 0.5589 | 0.0771 | 7.1609 | 25 |

| 0.0000 | 0.0795 | 8.2151 | 0.5614 | 0.0771 | 7.1972 | 26 |

| 0.0000 | 0.0795 | 8.2332 | 0.5633 | 0.0771 | 7.1736 | 27 |

| 0.0000 | 0.0795 | 8.2573 | 0.5648 | 0.0771 | 7.2086 | 28 |

| 0.0000 | 0.0795 | 8.2571 | 0.5667 | 0.0771 | 7.1787 | 29 |

| 0.0000 | 0.0795 | 8.2607 | 0.5689 | 0.0771 | 7.2107 | 30 |

| 0.0000 | 0.0795 | 8.2992 | 0.5700 | 0.0772 | 7.2006 | 31 |

| 0.0000 | 0.0795 | 8.3059 | 0.5721 | 0.0772 | 7.2341 | 32 |

| 0.0000 | 0.0795 | 8.2872 | 0.5744 | 0.0772 | 7.2069 | 33 |

| 0.0080 | 0.0794 | 8.3693 | 0.5947 | 0.0762 | 7.3034 | 34 |

| 0.0063 | 0.0794 | 8.2517 | 0.5491 | 0.0769 | 7.1324 | 35 |

| 0.0008 | 0.0795 | 7.9115 | 0.5447 | 0.0771 | 6.9422 | 36 |

| 0.0002 | 0.0795 | 7.6265 | 0.5471 | 0.0771 | 6.8107 | 37 |

| 0.0001 | 0.0795 | 7.6685 | 0.5493 | 0.0771 | 6.6914 | 38 |

| 0.0001 | 0.0795 | 7.6100 | 0.5515 | 0.0771 | 6.7738 | 39 |

| 0.0000 | 0.0795 | 7.6623 | 0.5535 | 0.0771 | 6.7829 | 40 |

| 0.0000 | 0.0795 | 7.6768 | 0.5556 | 0.0771 | 6.8287 | 41 |

| 0.0000 | 0.0795 | 7.7199 | 0.5578 | 0.0772 | 6.8398 | 42 |

| 0.0000 | 0.0795 | 7.7423 | 0.5600 | 0.0772 | 6.8518 | 43 |

| 0.0000 | 0.0795 | 7.7561 | 0.5617 | 0.0772 | 6.8898 | 44 |

| 0.0000 | 0.0795 | 7.7766 | 0.5639 | 0.0772 | 6.8982 | 45 |

| 0.0000 | 0.0795 | 7.7962 | 0.5659 | 0.0772 | 6.9091 | 46 |

| 0.0000 | 0.0795 | 7.8106 | 0.5680 | 0.0772 | 6.9293 | 47 |

| 0.0000 | 0.0795 | 7.8387 | 0.5701 | 0.0772 | 6.9401 | 48 |

| 0.0000 | 0.0795 | 7.8480 | 0.5724 | 0.0772 | 6.9544 | 49 |

| 0.0000 | 0.0795 | 7.8755 | 0.5744 | 0.0772 | 6.9767 | 50 |

| 0.0000 | 0.0795 | 7.8924 | 0.5770 | 0.0772 | 6.9928 | 51 |

| 0.0000 | 0.0795 | 7.9169 | 0.5794 | 0.0772 | 7.0149 | 52 |

| 0.0000 | 0.0795 | 7.9400 | 0.5822 | 0.0772 | 7.0438 | 53 |

| 0.0000 | 0.0795 | 7.9697 | 0.5846 | 0.0772 | 7.0785 | 54 |

| 0.0000 | 0.0795 | 8.0061 | 0.5875 | 0.0772 | 7.0840 | 55 |

| 0.0000 | 0.0795 | 8.0364 | 0.5907 | 0.0772 | 7.0683 | 56 |

| 0.0113 | 0.0793 | 7.8674 | 0.5714 | 0.0768 | 6.0540 | 57 |

| 0.0030 | 0.0795 | 7.4853 | 0.5586 | 0.0770 | 6.6707 | 58 |

| 0.0009 | 0.0795 | 7.4969 | 0.5584 | 0.0771 | 6.7292 | 59 |

| 0.0004 | 0.0795 | 7.6676 | 0.5577 | 0.0771 | 6.7898 | 60 |

| 0.0002 | 0.0795 | 7.5238 | 0.5561 | 0.0772 | 6.6962 | 61 |

| 0.0002 | 0.0795 | 7.4915 | 0.5613 | 0.0772 | 6.6315 | 62 |

| 0.0005 | 0.0795 | 7.6199 | 0.5783 | 0.0770 | 6.9551 | 63 |

| 0.0019 | 0.0795 | 7.8859 | 0.5789 | 0.0769 | 6.9689 | 64 |

| 0.0020 | 0.0795 | 7.9131 | 0.5655 | 0.0770 | 7.0500 | 65 |

| 0.0010 | 0.0795 | 7.8135 | 0.5750 | 0.0770 | 7.0532 | 66 |

| 0.0009 | 0.0795 | 7.7899 | 0.5646 | 0.0770 | 6.8492 | 67 |

| 0.0007 | 0.0795 | 7.7019 | 0.5691 | 0.0771 | 6.6536 | 68 |

| 0.0005 | 0.0795 | 7.7786 | 0.5695 | 0.0771 | 6.3958 | 69 |

| 0.0010 | 0.0795 | 7.8106 | 0.5724 | 0.0771 | 6.8654 | 70 |

| 0.0013 | 0.0795 | 8.2501 | 0.5772 | 0.0770 | 6.9794 | 71 |

### Framework versions

- Transformers 4.32.0.dev0

- TensorFlow 2.12.0

- Tokenizers 0.13.3

|

phatpt/ppo-Huggy

|

phatpt

| 2023-08-14T08:23:33Z | 12 | 0 |

ml-agents

|

[

"ml-agents",

"tensorboard",

"onnx",

"Huggy",

"deep-reinforcement-learning",

"reinforcement-learning",

"ML-Agents-Huggy",

"region:us"

] |

reinforcement-learning

| 2023-08-14T08:23:27Z |

---

library_name: ml-agents

tags:

- Huggy

- deep-reinforcement-learning

- reinforcement-learning

- ML-Agents-Huggy

---

# **ppo** Agent playing **Huggy**

This is a trained model of a **ppo** agent playing **Huggy**

using the [Unity ML-Agents Library](https://github.com/Unity-Technologies/ml-agents).

## Usage (with ML-Agents)

Documentation: https://unity-technologies.github.io/ml-agents/ML-Agents-Toolkit-Documentation/

We wrote tutorials that show how to train your first agent with ML-Agents and publish it to the Hub:

- A *short tutorial* where you teach Huggy the Dog 🐶 to fetch the stick and then play with him directly in your

browser: https://huggingface.co/learn/deep-rl-course/unitbonus1/introduction

- A *longer tutorial* to understand how ML-Agents works:

https://huggingface.co/learn/deep-rl-course/unit5/introduction

### Resume the training

```bash

mlagents-learn <your_configuration_file_path.yaml> --run-id=<run_id> --resume

```

### Watch your Agent play

You can watch your agent **playing directly in your browser**:

1. If the environment is part of ML-Agents official environments, go to https://huggingface.co/unity

2. Find your model_id: phatpt/ppo-Huggy

3. Select your *.nn / *.onnx file

4. Click on Watch the agent play 👀

|

bigmorning/whisper_charsplit_new_round3__0071

|

bigmorning

| 2023-08-14T08:20:41Z | 59 | 0 |

transformers

|

[

"transformers",

"tf",

"whisper",

"automatic-speech-recognition",

"generated_from_keras_callback",

"base_model:bigmorning/whisper_charsplit_new_round2__0061",

"base_model:finetune:bigmorning/whisper_charsplit_new_round2__0061",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] |

automatic-speech-recognition

| 2023-08-14T08:20:29Z |

---

license: apache-2.0

base_model: bigmorning/whisper_charsplit_new_round2__0061

tags:

- generated_from_keras_callback

model-index:

- name: whisper_charsplit_new_round3__0071

results: []

---

<!-- This model card has been generated automatically according to the information Keras had access to. You should

probably proofread and complete it, then remove this comment. -->

# whisper_charsplit_new_round3__0071

This model is a fine-tuned version of [bigmorning/whisper_charsplit_new_round2__0061](https://huggingface.co/bigmorning/whisper_charsplit_new_round2__0061) on an unknown dataset.

It achieves the following results on the training and evaluation sets:

- Train Loss: 0.0010

- Train Accuracy: 0.0795

- Train Wermet: 7.8106

- Validation Loss: 0.5724

- Validation Accuracy: 0.0771

- Validation Wermet: 6.8654

- Epoch: 70

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- optimizer: {'name': 'AdamWeightDecay', 'learning_rate': 1e-05, 'decay': 0.0, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-07, 'amsgrad': False, 'weight_decay_rate': 0.01}

- training_precision: float32
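
The optimizer entry above is written as a Python dict literal, so it can be read back into a dict for inspection or reuse. This is a minimal sketch, assuming the string is copied verbatim from the card; the variable names are illustrative and nothing here comes from the original training script.

```python
import ast

# Optimizer entry copied verbatim from the hyperparameter list above.
OPTIMIZER_ENTRY = (
    "{'name': 'AdamWeightDecay', 'learning_rate': 1e-05, 'decay': 0.0, "
    "'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-07, 'amsgrad': False, "
    "'weight_decay_rate': 0.01}"
)

# literal_eval accepts only Python literals; unlike eval, it does not
# run arbitrary expressions found in the text.
config = ast.literal_eval(OPTIMIZER_ENTRY)

# Separate the optimizer name from its numeric and boolean settings.
optimizer_name = config.pop("name")
print(optimizer_name)
for key, value in sorted(config.items()):
    print(f"{key} = {value!r}")
```

The remaining keys resemble keyword arguments for an optimizer constructor, but the card does not confirm which library version accepts each of them (for example `decay`), so treat any direct reuse as an assumption to verify.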

### Training results

| Train Loss | Train Accuracy | Train Wermet | Validation Loss | Validation Accuracy | Validation Wermet | Epoch |

|:----------:|:--------------:|:------------:|:---------------:|:-------------------:|:-----------------:|:-----:|

| 0.0009 | 0.0795 | 7.9492 | 0.5730 | 0.0769 | 7.2856 | 0 |

| 0.0015 | 0.0795 | 8.4221 | 0.5756 | 0.0769 | 7.1487 | 1 |

| 0.0012 | 0.0795 | 7.8476 | 0.5699 | 0.0769 | 6.5976 | 2 |

| 0.0010 | 0.0795 | 7.6843 | 0.5740 | 0.0769 | 6.9513 | 3 |

| 0.0014 | 0.0795 | 8.0796 | 0.5763 | 0.0768 | 7.4043 | 4 |

| 0.0019 | 0.0795 | 7.7274 | 0.5724 | 0.0769 | 6.4922 | 5 |

| 0.0008 | 0.0795 | 7.3468 | 0.5734 | 0.0769 | 6.1909 | 6 |

| 0.0009 | 0.0795 | 7.2393 | 0.5816 | 0.0769 | 6.5734 | 7 |

| 0.0010 | 0.0795 | 7.5822 | 0.5755 | 0.0769 | 6.6613 | 8 |

| 0.0004 | 0.0795 | 7.3807 | 0.5698 | 0.0770 | 7.0671 | 9 |

| 0.0001 | 0.0795 | 7.7157 | 0.5681 | 0.0771 | 6.8391 | 10 |

| 0.0001 | 0.0795 | 7.7540 | 0.5725 | 0.0771 | 6.9281 | 11 |

| 0.0001 | 0.0795 | 7.7721 | 0.5726 | 0.0771 | 6.8911 | 12 |

| 0.0000 | 0.0795 | 7.8163 | 0.5721 | 0.0771 | 6.8876 | 13 |

| 0.0000 | 0.0795 | 7.7745 | 0.5741 | 0.0771 | 6.8770 | 14 |

| 0.0000 | 0.0795 | 7.7277 | 0.5752 | 0.0771 | 6.8671 | 15 |

| 0.0000 | 0.0795 | 7.7355 | 0.5765 | 0.0771 | 6.8447 | 16 |

| 0.0000 | 0.0795 | 7.7109 | 0.5784 | 0.0771 | 6.8560 | 17 |

| 0.0000 | 0.0795 | 7.7427 | 0.5796 | 0.0771 | 6.8406 | 18 |

| 0.0003 | 0.0795 | 7.6709 | 0.6610 | 0.0762 | 7.0119 | 19 |

| 0.0115 | 0.0793 | 8.3288 | 0.5580 | 0.0769 | 7.1457 | 20 |

| 0.0013 | 0.0795 | 8.2537 | 0.5574 | 0.0770 | 6.7708 | 21 |

| 0.0004 | 0.0795 | 8.0507 | 0.5619 | 0.0770 | 7.0678 | 22 |

| 0.0003 | 0.0795 | 8.0534 | 0.5593 | 0.0771 | 7.0433 | 23 |

| 0.0002 | 0.0795 | 8.1738 | 0.5604 | 0.0771 | 7.1617 | 24 |

| 0.0001 | 0.0795 | 8.1494 | 0.5589 | 0.0771 | 7.1609 | 25 |

| 0.0000 | 0.0795 | 8.2151 | 0.5614 | 0.0771 | 7.1972 | 26 |

| 0.0000 | 0.0795 | 8.2332 | 0.5633 | 0.0771 | 7.1736 | 27 |

| 0.0000 | 0.0795 | 8.2573 | 0.5648 | 0.0771 | 7.2086 | 28 |

| 0.0000 | 0.0795 | 8.2571 | 0.5667 | 0.0771 | 7.1787 | 29 |

| 0.0000 | 0.0795 | 8.2607 | 0.5689 | 0.0771 | 7.2107 | 30 |

| 0.0000 | 0.0795 | 8.2992 | 0.5700 | 0.0772 | 7.2006 | 31 |

| 0.0000 | 0.0795 | 8.3059 | 0.5721 | 0.0772 | 7.2341 | 32 |

| 0.0000 | 0.0795 | 8.2872 | 0.5744 | 0.0772 | 7.2069 | 33 |

| 0.0080 | 0.0794 | 8.3693 | 0.5947 | 0.0762 | 7.3034 | 34 |

| 0.0063 | 0.0794 | 8.2517 | 0.5491 | 0.0769 | 7.1324 | 35 |

| 0.0008 | 0.0795 | 7.9115 | 0.5447 | 0.0771 | 6.9422 | 36 |

| 0.0002 | 0.0795 | 7.6265 | 0.5471 | 0.0771 | 6.8107 | 37 |

| 0.0001 | 0.0795 | 7.6685 | 0.5493 | 0.0771 | 6.6914 | 38 |

| 0.0001 | 0.0795 | 7.6100 | 0.5515 | 0.0771 | 6.7738 | 39 |

| 0.0000 | 0.0795 | 7.6623 | 0.5535 | 0.0771 | 6.7829 | 40 |

| 0.0000 | 0.0795 | 7.6768 | 0.5556 | 0.0771 | 6.8287 | 41 |

| 0.0000 | 0.0795 | 7.7199 | 0.5578 | 0.0772 | 6.8398 | 42 |

| 0.0000 | 0.0795 | 7.7423 | 0.5600 | 0.0772 | 6.8518 | 43 |

| 0.0000 | 0.0795 | 7.7561 | 0.5617 | 0.0772 | 6.8898 | 44 |

| 0.0000 | 0.0795 | 7.7766 | 0.5639 | 0.0772 | 6.8982 | 45 |

| 0.0000 | 0.0795 | 7.7962 | 0.5659 | 0.0772 | 6.9091 | 46 |

| 0.0000 | 0.0795 | 7.8106 | 0.5680 | 0.0772 | 6.9293 | 47 |

| 0.0000 | 0.0795 | 7.8387 | 0.5701 | 0.0772 | 6.9401 | 48 |

| 0.0000 | 0.0795 | 7.8480 | 0.5724 | 0.0772 | 6.9544 | 49 |

| 0.0000 | 0.0795 | 7.8755 | 0.5744 | 0.0772 | 6.9767 | 50 |

| 0.0000 | 0.0795 | 7.8924 | 0.5770 | 0.0772 | 6.9928 | 51 |

| 0.0000 | 0.0795 | 7.9169 | 0.5794 | 0.0772 | 7.0149 | 52 |

| 0.0000 | 0.0795 | 7.9400 | 0.5822 | 0.0772 | 7.0438 | 53 |

| 0.0000 | 0.0795 | 7.9697 | 0.5846 | 0.0772 | 7.0785 | 54 |

| 0.0000 | 0.0795 | 8.0061 | 0.5875 | 0.0772 | 7.0840 | 55 |

| 0.0000 | 0.0795 | 8.0364 | 0.5907 | 0.0772 | 7.0683 | 56 |

| 0.0113 | 0.0793 | 7.8674 | 0.5714 | 0.0768 | 6.0540 | 57 |

| 0.0030 | 0.0795 | 7.4853 | 0.5586 | 0.0770 | 6.6707 | 58 |

| 0.0009 | 0.0795 | 7.4969 | 0.5584 | 0.0771 | 6.7292 | 59 |

| 0.0004 | 0.0795 | 7.6676 | 0.5577 | 0.0771 | 6.7898 | 60 |

| 0.0002 | 0.0795 | 7.5238 | 0.5561 | 0.0772 | 6.6962 | 61 |

| 0.0002 | 0.0795 | 7.4915 | 0.5613 | 0.0772 | 6.6315 | 62 |

| 0.0005 | 0.0795 | 7.6199 | 0.5783 | 0.0770 | 6.9551 | 63 |