modelId (string, length 5 to 139) | author (string, length 2 to 42) | last_modified (timestamp[us, tz=UTC], 2020-02-15 11:33:14 to 2025-08-30 06:27:36) | downloads (int64, 0 to 223M) | likes (int64, 0 to 11.7k) | library_name (string, 527 classes) | tags (list, length 1 to 4.05k) | pipeline_tag (string, 55 classes) | createdAt (timestamp[us, tz=UTC], 2022-03-02 23:29:04 to 2025-08-30 06:27:12) | card (string, length 11 to 1.01M) |

|---|---|---|---|---|---|---|---|---|---|

Aonodensetsu/codyblue-731

|

Aonodensetsu

| 2023-08-31T10:55:11Z | 0 | 0 | null |

[

"license:gpl-3.0",

"region:us"

] | null | 2023-08-15T12:04:23Z |

---

license: gpl-3.0

---

This is a mirror of CivitAI.

The style of artist **codyblue-731** trained for [Foxya v3](https://civitai.com/models/17138).

The preview image uses the prompt "\<lyco\> furry, femboy" - the recommended settings are epoch 11-15, strength 0.6-0.8.

|

Aonodensetsu/cromachina

|

Aonodensetsu

| 2023-08-31T10:54:31Z | 0 | 0 | null |

[

"license:gpl-3.0",

"region:us"

] | null | 2023-08-15T12:09:10Z |

---

license: gpl-3.0

---

This is a mirror of CivitAI.

The style of artist **cromachina** trained for [Foxya v3](https://civitai.com/models/17138).

The preview image uses the prompt "\<lyco\> 1girl" - the recommended settings are epoch 11-15, strength 0.5-0.8.

|

Aonodensetsu/delicious

|

Aonodensetsu

| 2023-08-31T10:54:12Z | 0 | 0 | null |

[

"license:gpl-3.0",

"region:us"

] | null | 2023-08-15T12:42:21Z |

---

license: gpl-3.0

---

This is a mirror of CivitAI.

The style of artist **delicious** trained for [Foxya v3](https://civitai.com/models/17138).

The preview image uses the prompt "\<lyco\> furry" - the recommended settings are epoch 12-15, strength 0.6-0.8.

|

abhishek/llama-2-7b-hf-guanaco-sr-1

|

abhishek

| 2023-08-31T10:54:05Z | 0 | 0 | null |

[

"generated_from_trainer",

"base_model:meta-llama/Llama-2-7b-hf",

"base_model:finetune:meta-llama/Llama-2-7b-hf",

"region:us"

] | null | 2023-08-31T08:09:34Z |

---

base_model: meta-llama/Llama-2-7b-hf

tags:

- generated_from_trainer

model-index:

- name: llama-2-7b-hf-guanaco-sr-1

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# llama-2-7b-hf-guanaco-sr-1

This model is a fine-tuned version of [meta-llama/Llama-2-7b-hf](https://huggingface.co/meta-llama/Llama-2-7b-hf) on an unknown dataset.

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0002

- train_batch_size: 2

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_ratio: 0.1

- num_epochs: 1

### Training results

### Framework versions

- Transformers 4.32.1

- Pytorch 2.0.1

- Datasets 2.14.4

- Tokenizers 0.13.3

|

Aonodensetsu/darkmirage

|

Aonodensetsu

| 2023-08-31T10:53:59Z | 0 | 0 | null |

[

"license:gpl-3.0",

"region:us"

] | null | 2023-08-15T12:24:00Z |

---

license: gpl-3.0

---

This is a mirror of CivitAI.

The style of artist **darkmirage** trained for [Foxya v3](https://civitai.com/models/17138).

The preview image uses the prompt "\<lyco\> furry" - the recommended settings are epoch 13-14, strength 0.5-0.7.

|

Aonodensetsu/frenky_hw

|

Aonodensetsu

| 2023-08-31T10:53:21Z | 0 | 0 | null |

[

"license:gpl-3.0",

"region:us"

] | null | 2023-08-15T12:53:19Z |

---

license: gpl-3.0

---

This is a mirror of CivitAI.

The style of artist **frenky_hw** trained for [Foxya v3](https://civitai.com/models/17138).

The preview image uses the prompt "\<lyco\> furry, male, girly" - the recommended settings are epoch 11-13, strength 0.6-0.8.

|

Aonodensetsu/gothbunnyboy

|

Aonodensetsu

| 2023-08-31T10:53:05Z | 0 | 0 | null |

[

"license:gpl-3.0",

"region:us"

] | null | 2023-08-15T12:57:11Z |

---

license: gpl-3.0

---

This is a mirror of CivitAI.

The style of artist **gothbunnyboy** trained for [Foxya v3](https://civitai.com/models/17138).

The preview image uses the prompt "\<lyco\> furry" - the recommended settings are epoch 11-15, strength 0.6-0.8.

|

vishnuhaasan/q-FrozenLake-v1-4x4-noSlippery

|

vishnuhaasan

| 2023-08-31T10:52:54Z | 0 | 0 | null |

[

"FrozenLake-v1-4x4-no_slippery",

"q-learning",

"reinforcement-learning",

"custom-implementation",

"model-index",

"region:us"

] |

reinforcement-learning

| 2023-08-31T10:52:48Z |

---

tags:

- FrozenLake-v1-4x4-no_slippery

- q-learning

- reinforcement-learning

- custom-implementation

model-index:

- name: q-FrozenLake-v1-4x4-noSlippery

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: FrozenLake-v1-4x4-no_slippery

type: FrozenLake-v1-4x4-no_slippery

metrics:

- type: mean_reward

value: 1.00 +/- 0.00

name: mean_reward

verified: false

---

# **Q-Learning** Agent playing **FrozenLake-v1**

This is a trained model of a **Q-Learning** agent playing **FrozenLake-v1**.

## Usage

model = load_from_hub(repo_id="vishnuhaasan/q-FrozenLake-v1-4x4-noSlippery", filename="q-learning.pkl")

# Don't forget to check if you need to add additional attributes (is_slippery=False etc)

env = gym.make(model["env_id"])
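The usage lines above load a pickled model and rebuild the environment from its `env_id`; the card does not show how the Q-table is stored or evaluated. As a self-contained illustration of what such an agent does, the sketch below implements tabular Q-learning and a greedy rollout on a hand-coded 4x4 non-slippery grid. The grid layout, hyperparameters, and every function name are assumptions made for this example, not the author's training code, and no Gym dependency is used.

```python
import random

# Assumed 4x4 layout: S start, F frozen, H hole, G goal.
GRID = ["SFFF", "FHFH", "FFFH", "HFFG"]
N = 4
# Assumed action encoding: 0=left, 1=down, 2=right, 3=up.
MOVES = {0: (0, -1), 1: (1, 0), 2: (0, 1), 3: (-1, 0)}


def step(state, action):
    """Deterministic (non-slippery) transition: (next_state, reward, done)."""
    row, col = divmod(state, N)
    d_row, d_col = MOVES[action]
    row = min(max(row + d_row, 0), N - 1)  # moves off the grid leave the agent in place
    col = min(max(col + d_col, 0), N - 1)
    tile = GRID[row][col]
    return row * N + col, float(tile == "G"), tile in "HG"


def train(episodes=2000, alpha=0.7, gamma=0.95, seed=0):
    """Tabular Q-learning with a linearly decayed epsilon-greedy policy."""
    rng = random.Random(seed)
    q = [[0.0] * 4 for _ in range(N * N)]
    for episode in range(episodes):
        epsilon = max(0.05, 1.0 - episode / (episodes / 2))
        state = 0
        for _ in range(100):  # cap on episode length
            if rng.random() < epsilon:
                action = rng.randrange(4)
            else:
                action = max(range(4), key=lambda a: q[state][a])
            nxt, reward, done = step(state, action)
            # Bootstrap from the next state unless the episode has ended.
            target = reward + (0.0 if done else gamma * max(q[nxt]))
            q[state][action] += alpha * (target - q[state][action])
            state = nxt
            if done:
                break
    return q


def greedy_episode(q, max_steps=100):
    """Roll out the greedy policy once and return the total reward."""
    state, total = 0, 0.0
    for _ in range(max_steps):
        action = max(range(4), key=lambda a: q[state][a])
        state, reward, done = step(state, action)
        total += reward
        if done:
            break
    return total


if __name__ == "__main__":
    print("greedy return after training:", greedy_episode(train()))
```

Because the transitions are deterministic, a greedy policy either reaches the goal every time or never does, which is consistent with a reported spread of 0.00 around the mean reward.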

|

Aonodensetsu/pumpkinspicelatte

|

Aonodensetsu

| 2023-08-31T10:52:39Z | 0 | 0 | null |

[

"license:gpl-3.0",

"region:us"

] | null | 2023-08-15T13:04:44Z |

---

license: gpl-3.0

---

This is a mirror of CivitAI.

The style of artist **pumpkinspicelatte** trained for [Foxya v3](https://civitai.com/models/17138).

The preview image uses the prompt "\<lyco\> 1girl" - the recommended settings are epoch 10-15, strength 0.6-0.9.

|

ardt-multipart/ardt-multipart-ppo_train_walker2d_level-3108_0934-33

|

ardt-multipart

| 2023-08-31T10:39:20Z | 31 | 0 |

transformers

|

[

"transformers",

"pytorch",

"decision_transformer",

"generated_from_trainer",

"endpoints_compatible",

"region:us"

] | null | 2023-08-31T08:36:19Z |

---

tags:

- generated_from_trainer

model-index:

- name: ardt-multipart-ppo_train_walker2d_level-3108_0934-33

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# ardt-multipart-ppo_train_walker2d_level-3108_0934-33

The auto-generated card records neither a base model nor a dataset for this model.

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0001

- train_batch_size: 64

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_steps: 1000

- training_steps: 10000
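As a rough illustration of what `lr_scheduler_type: linear` with 1000 warmup steps and 10000 training steps implies, the sketch below computes the learning rate at a given step: linear warmup from zero, then linear decay back to zero. The function name `linear_schedule_lr` is made up for this example. The arithmetic mirrors the rule behind `get_linear_schedule_with_warmup` in Transformers, but it is a standalone approximation, not the Trainer's own code.

```python
def linear_schedule_lr(step, base_lr=1e-4, warmup_steps=1000, total_steps=10000):
    """Learning rate at `step` for linear warmup followed by linear decay."""
    if step < warmup_steps:
        # Warmup phase: ramp linearly from 0 to base_lr.
        return base_lr * step / max(1, warmup_steps)
    # Decay phase: ramp linearly from base_lr down to 0 at total_steps.
    remaining = (total_steps - step) / max(1, total_steps - warmup_steps)
    return base_lr * max(0.0, remaining)


if __name__ == "__main__":
    for s in (0, 500, 1000, 5500, 10000):
        print(s, linear_schedule_lr(s))
```

Under these settings the peak rate of 0.0001 is reached only at step 1000, so the first tenth of training runs below the nominal learning rate.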

### Training results

### Framework versions

- Transformers 4.29.2

- Pytorch 2.1.0.dev20230727+cu118

- Datasets 2.12.0

- Tokenizers 0.13.3

|

SHENMU007/neunit_BASE_V9.5.9

|

SHENMU007

| 2023-08-31T10:36:34Z | 75 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"speecht5",

"text-to-audio",

"1.1.0",

"generated_from_trainer",

"zh",

"dataset:facebook/voxpopuli",

"base_model:microsoft/speecht5_tts",

"base_model:finetune:microsoft/speecht5_tts",

"license:mit",

"endpoints_compatible",

"region:us"

] |

text-to-audio

| 2023-08-31T09:35:39Z |

---

language:

- zh

license: mit

base_model: microsoft/speecht5_tts

tags:

- 1.1.0

- generated_from_trainer

datasets:

- facebook/voxpopuli

model-index:

- name: SpeechT5 TTS Dutch neunit

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# SpeechT5 TTS Dutch neunit

This model is a fine-tuned version of [microsoft/speecht5_tts](https://huggingface.co/microsoft/speecht5_tts) on the VoxPopuli dataset.

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 1e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- gradient_accumulation_steps: 4

- total_train_batch_size: 32

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_steps: 500

- training_steps: 4000

### Training results

### Framework versions

- Transformers 4.31.0.dev0

- Pytorch 2.0.1+cu117

- Datasets 2.12.0

- Tokenizers 0.13.3

|

dt-and-vanilla-ardt/dt-ppo_train_hopper_level-3108_1003-66

|

dt-and-vanilla-ardt

| 2023-08-31T10:15:36Z | 31 | 0 |

transformers

|

[

"transformers",

"pytorch",

"decision_transformer",

"generated_from_trainer",

"endpoints_compatible",

"region:us"

] | null | 2023-08-31T09:04:45Z |

---

tags:

- generated_from_trainer

model-index:

- name: dt-ppo_train_hopper_level-3108_1003-66

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# dt-ppo_train_hopper_level-3108_1003-66

The auto-generated card records neither a base model nor a dataset for this model.

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0001

- train_batch_size: 64

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_steps: 1000

- training_steps: 10000

### Training results

### Framework versions

- Transformers 4.29.2

- Pytorch 2.1.0.dev20230727+cu118

- Datasets 2.12.0

- Tokenizers 0.13.3

|

sumet/Test_Trocr_digit_handwriting

|

sumet

| 2023-08-31T09:52:03Z | 201 | 2 |

transformers

|

[

"transformers",

"pytorch",

"vision-encoder-decoder",

"image-text-to-text",

"trocr",

"image-to-text",

"endpoints_compatible",

"region:us"

] |

image-to-text

| 2023-08-30T02:28:52Z |

---

tags:

- trocr

- image-to-text

---

|

phillipos99/ppo-LunarLander-v2

|

phillipos99

| 2023-08-31T09:51:38Z | 2 | 0 |

stable-baselines3

|

[

"stable-baselines3",

"LunarLander-v2",

"deep-reinforcement-learning",

"reinforcement-learning",

"model-index",

"region:us"

] |

reinforcement-learning

| 2023-08-31T09:51:20Z |

---

library_name: stable-baselines3

tags:

- LunarLander-v2

- deep-reinforcement-learning

- reinforcement-learning

- stable-baselines3

model-index:

- name: PPO

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: LunarLander-v2

type: LunarLander-v2

metrics:

- type: mean_reward

value: 279.40 +/- 17.75

name: mean_reward

verified: false

---

# **PPO** Agent playing **LunarLander-v2**

This is a trained model of a **PPO** agent playing **LunarLander-v2**

using the [stable-baselines3 library](https://github.com/DLR-RM/stable-baselines3).

## Usage (with Stable-baselines3)

TODO: Add your code

```python

from stable_baselines3 import ...

from huggingface_sb3 import load_from_hub

...

```

|

vnktrmnb/MBERT_FT-TyDiQA_S67

|

vnktrmnb

| 2023-08-31T09:45:40Z | 62 | 0 |

transformers

|

[

"transformers",

"tf",

"tensorboard",

"bert",

"question-answering",

"generated_from_keras_callback",

"base_model:google-bert/bert-base-multilingual-cased",

"base_model:finetune:google-bert/bert-base-multilingual-cased",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] |

question-answering

| 2023-08-30T06:03:12Z |

---

license: apache-2.0

base_model: bert-base-multilingual-cased

tags:

- generated_from_keras_callback

model-index:

- name: vnktrmnb/MBERT_FT-TyDiQA_S67

results: []

---

<!-- This model card has been generated automatically according to the information Keras had access to. You should

probably proofread and complete it, then remove this comment. -->

# vnktrmnb/MBERT_FT-TyDiQA_S67

This model is a fine-tuned version of [bert-base-multilingual-cased](https://huggingface.co/bert-base-multilingual-cased) on an unknown dataset.

It achieves the following results on the evaluation set:

- Train Loss: 0.3185

- Train End Logits Accuracy: 0.9077

- Train Start Logits Accuracy: 0.9272

- Validation Loss: 0.5503

- Validation End Logits Accuracy: 0.875

- Validation Start Logits Accuracy: 0.9111

- Epoch: 2

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- optimizer: {'name': 'Adam', 'weight_decay': None, 'clipnorm': None, 'global_clipnorm': None, 'clipvalue': None, 'use_ema': False, 'ema_momentum': 0.99, 'ema_overwrite_frequency': None, 'jit_compile': True, 'is_legacy_optimizer': False, 'learning_rate': {'class_name': 'PolynomialDecay', 'config': {'initial_learning_rate': 2e-05, 'decay_steps': 2412, 'end_learning_rate': 0.0, 'power': 1.0, 'cycle': False, 'name': None}}, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False}

- training_precision: float32
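The `PolynomialDecay` entry in the optimizer config above, with `power: 1.0` and `end_learning_rate: 0.0`, amounts to a straight-line decay from 2e-05 to zero over 2412 steps. A minimal sketch of that formula in plain Python follows. The function name is made up here, and the code follows the documented Keras formula for `cycle=False` rather than reproducing the Keras implementation.

```python
def polynomial_decay(step, initial_lr=2e-5, decay_steps=2412, end_lr=0.0, power=1.0):
    """Learning rate at `step` under polynomial decay without cycling."""
    step = min(step, decay_steps)  # the rate stays at end_lr once decay_steps is reached
    return (initial_lr - end_lr) * (1 - step / decay_steps) ** power + end_lr


if __name__ == "__main__":
    for s in (0, 1206, 2412):
        print(s, polynomial_decay(s))
```

With `power=1.0` the schedule is linear, so the rate is halved at the midpoint (step 1206).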

### Training results

| Train Loss | Train End Logits Accuracy | Train Start Logits Accuracy | Validation Loss | Validation End Logits Accuracy | Validation Start Logits Accuracy | Epoch |

|:----------:|:-------------------------:|:---------------------------:|:---------------:|:------------------------------:|:--------------------------------:|:-----:|

| 0.6586 | 0.8284 | 0.8598 | 0.5000 | 0.8737 | 0.9124 | 0 |

| 0.4565 | 0.8766 | 0.8978 | 0.5009 | 0.8776 | 0.9175 | 1 |

| 0.3185 | 0.9077 | 0.9272 | 0.5503 | 0.875 | 0.9111 | 2 |

### Framework versions

- Transformers 4.32.1

- TensorFlow 2.12.0

- Datasets 2.14.4

- Tokenizers 0.13.3

|

ukr-models/xlm-roberta-base-uk

|

ukr-models

| 2023-08-31T09:41:51Z | 526 | 12 |

transformers

|

[

"transformers",

"pytorch",

"safetensors",

"xlm-roberta",

"fill-mask",

"ukrainian",

"uk",

"license:mit",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

fill-mask

| 2022-03-11T10:53:02Z |

---

language:

- uk

tags:

- ukrainian

widget:

- text: "Тарас Шевченко – великий український <mask>."

license: mit

---

This is a smaller version of the [XLM-RoBERTa](https://huggingface.co/xlm-roberta-base) model with only Ukrainian and some English embeddings left.

* The original model has 470M parameters, with 384M of them being input and output embeddings.

* After shrinking the `sentencepiece` vocabulary from 250K to 31K tokens (the top 25K Ukrainian tokens plus the top English tokens), the model has 134M parameters, and its size drops from 1GB to 400MB.
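The parameter counts quoted in this card can be checked with a little arithmetic. The sketch below assumes a hidden size of 768 (the width of XLM-RoBERTa base), counts the input and output embedding matrices separately as the card does, and treats the vocabulary sizes as the rounded 250K and 31K figures, so the result is approximate.

```python
HIDDEN_SIZE = 768  # hidden width of XLM-RoBERTa base


def embedding_params(vocab_size, hidden_size=HIDDEN_SIZE, matrices=2):
    """Parameters in the input and output embedding matrices combined."""
    return vocab_size * hidden_size * matrices


original_total = 470_000_000
original_embeddings = embedding_params(250_000)        # 384,000,000
non_embedding = original_total - original_embeddings   # parameters outside the embeddings
shrunk_total = non_embedding + embedding_params(31_000)

print(f"embeddings before shrinking: {original_embeddings / 1e6:.0f}M")
print(f"total after shrinking: {shrunk_total / 1e6:.1f}M")
```

The estimate lands at roughly 133.6M, which agrees with the 134M reported above once the rounding of the vocabulary sizes is taken into account.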

|

ukr-models/uk-ner

|

ukr-models

| 2023-08-31T09:41:21Z | 188 | 3 |

transformers

|

[

"transformers",

"pytorch",

"safetensors",

"xlm-roberta",

"token-classification",

"ukrainian",

"uk",

"license:mit",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

token-classification

| 2022-04-07T05:31:07Z |

---

language:

- uk

tags:

- ukrainian

widget:

- text: "Могила Тараса Шевченка — місце поховання видатного українського поета Тараса Шевченка в місті Канів (Черкаська область) на Чернечій горі, над яким із 1939 року височіє бронзовий пам'ятник роботи скульптора Матвія Манізера."

license: mit

---

## Model Description

Fine-tuning of [XLM-RoBERTa-Uk](https://huggingface.co/ukr-models/xlm-roberta-base-uk) model on [synthetic NER dataset](https://huggingface.co/datasets/ukr-models/Ukr-Synth) with B-PER, I-PER, B-LOC, I-LOC, B-ORG, I-ORG tags
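The B-/I- prefixes follow the usual BIO convention: `B-` opens an entity, `I-` continues it, and anything else closes it. A minimal sketch of turning word-level tags into entity spans is shown below. The helper name `bio_to_spans` and the example tags are hypothetical, written by hand for illustration, and are not output from this model.

```python
def bio_to_spans(words, tags):
    """Group word-level BIO tags into (entity_type, text) spans."""
    spans, current_type, current_words = [], None, []
    for word, tag in zip(words, tags):
        prefix, _, label = tag.partition("-")
        if prefix == "B" or (prefix == "I" and label != current_type):
            # A new entity starts; flush the one that was open, if any.
            if current_words:
                spans.append((current_type, " ".join(current_words)))
            current_type, current_words = label, [word]
        elif prefix == "I":
            current_words.append(word)
        else:
            # "O" (or any unrecognised tag) closes the open entity.
            if current_words:
                spans.append((current_type, " ".join(current_words)))
            current_type, current_words = None, []
    if current_words:
        spans.append((current_type, " ".join(current_words)))
    return spans


if __name__ == "__main__":
    words = ["Тарас", "Шевченко", "народився", "в", "Україні"]
    tags = ["B-PER", "I-PER", "O", "O", "B-LOC"]  # hand-written example tags
    print(bio_to_spans(words, tags))
```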

## How to Use

Using the Hugging Face pipeline (returns tokens with labels):

```py

from transformers import pipeline, AutoTokenizer, AutoModelForTokenClassification

tokenizer = AutoTokenizer.from_pretrained('ukr-models/uk-ner')

model = AutoModelForTokenClassification.from_pretrained('ukr-models/uk-ner')

ner = pipeline('ner', model=model, tokenizer=tokenizer)

ner("Могила Тараса Шевченка — місце поховання видатного українського поета Тараса Шевченка в місті Канів (Черкаська область) на Чернечій горі, над яким із 1939 року височіє бронзовий пам'ятник роботи скульптора Матвія Манізера.")

```

To get predictions split by words rather than by tokens, download the script `get_predictions.py` from the repository (it uses the [tokenize_uk package](https://pypi.org/project/tokenize_uk/) for splitting) and use it as follows:

```py

from transformers import AutoTokenizer, AutoModelForTokenClassification

from get_predictions import get_word_predictions

tokenizer = AutoTokenizer.from_pretrained('ukr-models/uk-ner')

model = AutoModelForTokenClassification.from_pretrained('ukr-models/uk-ner')

get_word_predictions(model, tokenizer, ["Могила Тараса Шевченка — місце поховання видатного українського поета Тараса Шевченка в місті Канів (Черкаська область) на Чернечій горі, над яким із 1939 року височіє бронзовий пам'ятник роботи скульптора Матвія Манізера."])

```

|

ukr-models/uk-morph

|

ukr-models

| 2023-08-31T09:41:07Z | 124 | 1 |

transformers

|

[

"transformers",

"pytorch",

"safetensors",

"xlm-roberta",

"token-classification",

"ukrainian",

"uk",

"license:mit",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

token-classification

| 2022-04-08T07:14:02Z |

---

language:

- uk

tags:

- ukrainian

widget:

- text: "Могила Тараса Шевченка — місце поховання видатного українського поета Тараса Шевченка в місті Канів (Черкаська область) на Чернечій горі, над яким із 1939 року височіє бронзовий пам'ятник роботи скульптора Матвія Манізера."

license: mit

---

## Model Description

Fine-tuning of [XLM-RoBERTa-Uk](https://huggingface.co/ukr-models/xlm-roberta-base-uk) model on [synthetic morphological dataset](https://huggingface.co/datasets/ukr-models/Ukr-Synth), returns both UPOS and morphological features (joined by double underscore symbol)
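Since each label joins the UPOS tag and the morphological features with a double underscore, a small helper can split them apart again. The sketch below assumes the features use the Universal Dependencies `Key=Value|Key=Value` notation. That format, the helper name `split_morph_label`, and the example label are assumptions for illustration; check them against the model's actual label set.

```python
def split_morph_label(label):
    """Split 'UPOS__Feat=Val|Feat=Val' into (upos, {feature: value})."""
    upos, _, feats = label.partition("__")
    # Keep only well-formed Key=Value pairs; an empty or '_' feature part yields {}.
    features = dict(pair.split("=", 1) for pair in feats.split("|") if "=" in pair)
    return upos, features


if __name__ == "__main__":
    # Hypothetical label, not taken from the model's config.
    print(split_morph_label("NOUN__Case=Nom|Gender=Fem|Number=Sing"))
```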

## How to Use

Using the Hugging Face pipeline (returns tokens with labels):

```py

from transformers import TokenClassificationPipeline, AutoTokenizer, AutoModelForTokenClassification

tokenizer = AutoTokenizer.from_pretrained('ukr-models/uk-morph')

model = AutoModelForTokenClassification.from_pretrained('ukr-models/uk-morph')

ppln = TokenClassificationPipeline(model=model, tokenizer=tokenizer)

ppln("Могила Тараса Шевченка — місце поховання видатного українського поета Тараса Шевченка в місті Канів (Черкаська область) на Чернечій горі, над яким із 1939 року височіє бронзовий пам'ятник роботи скульптора Матвія Манізера.")

```

To get predictions split by words rather than by tokens, download the script `get_predictions.py` from the repository (it uses the [tokenize_uk package](https://pypi.org/project/tokenize_uk/) for splitting) and use it as follows:

```py

from transformers import AutoTokenizer, AutoModelForTokenClassification

from get_predictions import get_word_predictions

tokenizer = AutoTokenizer.from_pretrained('ukr-models/uk-morph')

model = AutoModelForTokenClassification.from_pretrained('ukr-models/uk-morph')

get_word_predictions(model, tokenizer, ["Могила Тараса Шевченка — місце поховання видатного українського поета Тараса Шевченка в місті Канів (Черкаська область) на Чернечій горі, над яким із 1939 року височіє бронзовий пам'ятник роботи скульптора Матвія Манізера."])

```

|

ukr-models/uk-punctcase

|

ukr-models

| 2023-08-31T09:40:36Z | 118 | 3 |

transformers

|

[

"transformers",

"pytorch",

"safetensors",

"xlm-roberta",

"token-classification",

"ukrainian",

"uk",

"license:mit",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

token-classification

| 2022-07-13T11:50:18Z |

---

language:

- uk

tags:

- ukrainian

widget:

- text: "упродовж 2012-2014 років національний природний парк «зачарований край» разом із всесвітнім фондом природи wwf успішно реалізували проект із відновлення болота «чорне багно» розташованого на схилах гори бужора у закарпатті водноболотне угіддя «чорне багно» є найбільшою болотною екосистемою регіону воно займає площу близько 15 га унікальністю цього високогірного болота розташованого на висоті 840 м над рівнем моря є велика потужність торфових покладів (глибиною до 59 м) і своєрідна рослинність у 50-х і на початку 60-х років минулого століття на природних потічках що протікали через болото побудували осушувальні канали це порушило природну рівновагу відтак змінилася екосистема болота"

license: mit

---

## Model Description

Fine-tuning of [XLM-RoBERTa-Uk](https://huggingface.co/ukr-models/xlm-roberta-base-uk) model on Ukrainian texts to recover punctuation and case.

## How to Use

Download the script `get_predictions.py` from the repository.

```py

from transformers import AutoTokenizer, AutoModelForTokenClassification

from get_predictions import recover_text

tokenizer = AutoTokenizer.from_pretrained('ukr-models/uk-punctcase')

model = AutoModelForTokenClassification.from_pretrained('ukr-models/uk-punctcase')

text_processed = "..."  # input text without punctuation or capitalisation, as in the widget example

recover_text(text_processed, model, tokenizer)

```

|

ukr-models/uk-summarizer

|

ukr-models

| 2023-08-31T09:40:08Z | 132 | 4 |

transformers

|

[

"transformers",

"pytorch",

"safetensors",

"t5",

"text2text-generation",

"ukrainian",

"uk",

"license:mit",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text2text-generation

| 2022-08-29T13:21:16Z |

---

language:

- uk

tags:

- ukrainian

license: mit

---

## Model Description

Fine-tuning of [uk-mt5-base](https://huggingface.co/kravchenko/uk-mt5-base) model on summarization dataset.

## How to Use

```py

from transformers import AutoTokenizer, T5ForConditionalGeneration, pipeline

tokenizer = AutoTokenizer.from_pretrained('ukr-models/uk-summarizer')

model = T5ForConditionalGeneration.from_pretrained('ukr-models/uk-summarizer')

ppln = pipeline("summarization", model=model, tokenizer=tokenizer, device=0, max_length=128, num_beams=4, no_repeat_ngram_size=2, clean_up_tokenization_spaces=True)

text = "..."

ppln(text)

```

|

UholoDala/sentence_sentiments_analysis_roberta

|

UholoDala

| 2023-08-31T09:39:09Z | 104 | 0 |

transformers

|

[

"transformers",

"pytorch",

"roberta",

"text-classification",

"generated_from_trainer",

"base_model:FacebookAI/roberta-base",

"base_model:finetune:FacebookAI/roberta-base",

"license:mit",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2023-08-31T06:42:32Z |

---

license: mit

base_model: roberta-base

tags:

- generated_from_trainer

model-index:

- name: sentence_sentiments_analysis_roberta

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# sentence_sentiments_analysis_roberta

This model is a fine-tuned version of [roberta-base](https://huggingface.co/roberta-base) on an unknown dataset.

It achieves the following results on the evaluation set:

- Loss: 0.2736

- F1-score: 0.9119

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 5

### Training results

| Training Loss | Epoch | Step | Validation Loss | F1-score |

|:-------------:|:-----:|:-----:|:---------------:|:--------:|

| 0.3477 | 1.0 | 2500 | 0.3307 | 0.9112 |

| 0.2345 | 2.0 | 5000 | 0.2736 | 0.9119 |

| 0.175 | 3.0 | 7500 | 0.3625 | 0.9161 |

| 0.1064 | 4.0 | 10000 | 0.3272 | 0.9358 |

| 0.07 | 5.0 | 12500 | 0.3291 | 0.9380 |
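The headline numbers reported earlier in this card (loss 0.2736, F1-score 0.9119) coincide with the epoch-2 row, which has the lowest validation loss, while the highest F1-score is reached at epoch 5. The short sketch below makes that comparison explicit. It only re-reads the table values and assumes nothing about how the Trainer chose the reported checkpoint.

```python
# (training_loss, epoch, step, validation_loss, f1) rows copied from the table above
results = [
    (0.3477, 1.0, 2500, 0.3307, 0.9112),
    (0.2345, 2.0, 5000, 0.2736, 0.9119),
    (0.1750, 3.0, 7500, 0.3625, 0.9161),
    (0.1064, 4.0, 10000, 0.3272, 0.9358),
    (0.0700, 5.0, 12500, 0.3291, 0.9380),
]

best_by_loss = min(results, key=lambda row: row[3])  # lowest validation loss
best_by_f1 = max(results, key=lambda row: row[4])    # highest F1-score

print("lowest validation loss:", best_by_loss)
print("highest F1-score:", best_by_f1)
```

Training loss keeps falling after epoch 2 while validation loss rises, so the later F1 gains come alongside signs of overfitting on the loss metric.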

### Framework versions

- Transformers 4.32.1

- Pytorch 2.0.1+cu118

- Datasets 2.14.4

- Tokenizers 0.13.3

|

AndrewL088/Pixelcopter-2

|

AndrewL088

| 2023-08-31T09:38:36Z | 0 | 0 | null |

[

"Pixelcopter-PLE-v0",

"reinforce",

"reinforcement-learning",

"custom-implementation",

"deep-rl-class",

"model-index",

"region:us"

] |

reinforcement-learning

| 2023-08-31T09:38:31Z |

---

tags:

- Pixelcopter-PLE-v0

- reinforce

- reinforcement-learning

- custom-implementation

- deep-rl-class

model-index:

- name: Pixelcopter-2

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: Pixelcopter-PLE-v0

type: Pixelcopter-PLE-v0

metrics:

- type: mean_reward

value: 36.10 +/- 22.06

name: mean_reward

verified: false

---

# **Reinforce** Agent playing **Pixelcopter-PLE-v0**

This is a trained model of a **Reinforce** agent playing **Pixelcopter-PLE-v0**.

To learn how to use this model and train your own, see Unit 4 of the Deep Reinforcement Learning Course: https://huggingface.co/deep-rl-course/unit4/introduction

|

Datactive/BERT_pap_queries_classification_2

|

Datactive

| 2023-08-31T09:37:52Z | 61 | 0 |

transformers

|

[

"transformers",

"tf",

"bert",

"text-classification",

"generated_from_keras_callback",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

text-classification

| 2023-08-29T20:36:30Z |

---

license: apache-2.0

tags:

- generated_from_keras_callback

model-index:

- name: Datactive/BERT_pap_queries_classification_2

results: []

---

<!-- This model card has been generated automatically according to the information Keras had access to. You should

probably proofread and complete it, then remove this comment. -->

# Datactive/BERT_pap_queries_classification_2

This model is a fine-tuned version of [ai-forever/ruBert-base](https://huggingface.co/ai-forever/ruBert-base) on an unknown dataset.

It achieves the following results on the evaluation set:

- Train Loss: 0.1558

- Validation Loss: 0.1369

- Train F1: 0.9475

- Epoch: 0

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- optimizer: {'name': 'Adam', 'weight_decay': None, 'clipnorm': None, 'global_clipnorm': None, 'clipvalue': None, 'use_ema': False, 'ema_momentum': 0.99, 'ema_overwrite_frequency': None, 'jit_compile': False, 'is_legacy_optimizer': False, 'learning_rate': {'class_name': 'PolynomialDecay', 'config': {'initial_learning_rate': 2e-05, 'decay_steps': 1463, 'end_learning_rate': 0.0, 'power': 1.0, 'cycle': False, 'name': None}}, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False}

- training_precision: float32

### Training results

| Train Loss | Validation Loss | Train F1 | Epoch |

|:----------:|:---------------:|:--------:|:-----:|

| 0.1558 | 0.1369 | 0.9475 | 0 |

### Framework versions

- Transformers 4.29.0.dev0

- TensorFlow 2.12.0

- Datasets 2.11.0

- Tokenizers 0.13.3

|

ardt-multipart/ardt-multipart-ppo_train_hopper_level-3108_0919-66

|

ardt-multipart

| 2023-08-31T09:36:54Z | 31 | 0 |

transformers

|

[

"transformers",

"pytorch",

"decision_transformer",

"generated_from_trainer",

"endpoints_compatible",

"region:us"

] | null | 2023-08-31T08:21:10Z |

---

tags:

- generated_from_trainer

model-index:

- name: ardt-multipart-ppo_train_hopper_level-3108_0919-66

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# ardt-multipart-ppo_train_hopper_level-3108_0919-66

The auto-generated card records neither a base model nor a dataset for this model.

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0001

- train_batch_size: 64

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_steps: 1000

- training_steps: 10000

### Training results

### Framework versions

- Transformers 4.29.2

- Pytorch 2.1.0.dev20230727+cu118

- Datasets 2.12.0

- Tokenizers 0.13.3

|

lomahony/eleuther-pythia12b-hh-sft

|

lomahony

| 2023-08-31T09:34:04Z | 16 | 0 |

transformers

|

[

"transformers",

"pytorch",

"gpt_neox",

"text-generation",

"causal-lm",

"pythia",

"en",

"dataset:Anthropic/hh-rlhf",

"arxiv:2101.00027",

"license:apache-2.0",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2023-08-25T10:52:10Z |

---

language:

- en

tags:

- pytorch

- causal-lm

- pythia

license: apache-2.0

datasets:

- Anthropic/hh-rlhf

---

[Pythia-12b](https://huggingface.co/EleutherAI/pythia-12b) after supervised fine-tuning on the [Anthropic-hh-rlhf dataset](https://huggingface.co/datasets/Anthropic/hh-rlhf) for 1 epoch.

[wandb log](https://wandb.ai/pythia_dpo/Pythia_LOM/runs/hdct406x)

The benchmark evaluations included in the repo were run with [lm-evaluation-harness](https://github.com/EleutherAI/lm-evaluation-harness/tree/big-refactor).

See [Pythia-12b](https://huggingface.co/EleutherAI/pythia-12b) for model details [(paper)](https://arxiv.org/abs/2101.00027).

|

AK-12/my_awesome_model

|

AK-12

| 2023-08-31T09:33:02Z | 9 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"hubert",

"audio-classification",

"generated_from_trainer",

"dataset:marsyas/gtzan",

"base_model:ntu-spml/distilhubert",

"base_model:finetune:ntu-spml/distilhubert",

"license:apache-2.0",

"model-index",

"endpoints_compatible",

"region:us"

] |

audio-classification

| 2023-07-27T10:46:57Z |

---

license: apache-2.0

base_model: ntu-spml/distilhubert

tags:

- generated_from_trainer

datasets:

- marsyas/gtzan

metrics:

- accuracy

model-index:

- name: distilhubert-finetuned-gtzan

results:

- task:

name: Audio Classification

type: audio-classification

dataset:

name: GTZAN

type: marsyas/gtzan

config: all

split: train

args: all

metrics:

- name: Accuracy

type: accuracy

value: 0.9475

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# distilhubert-finetuned-gtzan

This model is a fine-tuned version of [ntu-spml/distilhubert](https://huggingface.co/ntu-spml/distilhubert) on the GTZAN dataset.

It achieves the following results on the evaluation set:

- Loss: 0.3616

- Accuracy: 0.9475

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 9e-05

- train_batch_size: 6

- eval_batch_size: 6

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_ratio: 0.1

- num_epochs: 5

### Training results

| Training Loss | Epoch | Step | Validation Loss | Accuracy |

|:-------------:|:-----:|:----:|:---------------:|:--------:|

| 0.2804 | 1.0 | 100 | 0.3327 | 0.9475 |

| 0.4089 | 2.0 | 200 | 0.3448 | 0.955 |

| 0.0564 | 3.0 | 300 | 0.3446 | 0.95 |

| 0.0 | 4.0 | 400 | 0.3417 | 0.9475 |

| 0.0 | 5.0 | 500 | 0.3616 | 0.9475 |

### Framework versions

- Transformers 4.32.1

- Pytorch 2.0.1+cu118

- Datasets 2.14.4

- Tokenizers 0.13.3

|

aviroes/MAScIR_elderly_whisper-medium-LoRA

|

aviroes

| 2023-08-31T09:31:02Z | 0 | 0 | null |

[

"generated_from_trainer",

"base_model:openai/whisper-medium",

"base_model:finetune:openai/whisper-medium",

"license:apache-2.0",

"region:us"

] | null | 2023-08-31T07:02:39Z |

---

license: apache-2.0

base_model: openai/whisper-medium

tags:

- generated_from_trainer

model-index:

- name: MAScIR_elderly_whisper-medium-LoRA

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# MAScIR_elderly_whisper-medium-LoRA

This model is a fine-tuned version of [openai/whisper-medium](https://huggingface.co/openai/whisper-medium) on an unspecified dataset.

It achieves the following results on the evaluation set:

- Loss: 0.0224

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.001

- train_batch_size: 8

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_steps: 200

- num_epochs: 3

### Training results

| Training Loss | Epoch | Step | Validation Loss |

|:-------------:|:-----:|:----:|:---------------:|

| 0.3209 | 0.19 | 100 | 0.3262 |

| 0.2482 | 0.37 | 200 | 0.3101 |

| 0.2726 | 0.56 | 300 | 0.3030 |

| 0.2288 | 0.74 | 400 | 0.2848 |

| 0.2014 | 0.93 | 500 | 0.2586 |

| 0.1277 | 1.11 | 600 | 0.2098 |

| 0.1054 | 1.3 | 700 | 0.1857 |

| 0.1056 | 1.48 | 800 | 0.1449 |

| 0.0842 | 1.67 | 900 | 0.1069 |

| 0.0692 | 1.85 | 1000 | 0.0874 |

| 0.0314 | 2.04 | 1100 | 0.0628 |

| 0.0265 | 2.22 | 1200 | 0.0515 |

| 0.0154 | 2.41 | 1300 | 0.0443 |

| 0.0127 | 2.59 | 1400 | 0.0382 |

| 0.0237 | 2.78 | 1500 | 0.0290 |

| 0.0119 | 2.96 | 1600 | 0.0224 |

### Framework versions

- Transformers 4.33.0.dev0

- Pytorch 2.0.1+cu118

- Datasets 2.14.4

- Tokenizers 0.13.3

|

Dala/mlc-chat-vicuna-13b-v1.5

|

Dala

| 2023-08-31T09:23:54Z | 0 | 1 | null |

[

"license:llama2",

"region:us"

] | null | 2023-08-25T17:42:24Z |

---

inference: false

license: llama2

model_type: llama

model_creator: lmsys

model_link: https://huggingface.co/lmsys/vicuna-13b-v1.5

model_name: Vicuna 13B v1.5

quantized_by: Dala

---

# Vicuna 13B v1.5 - MLC

- Model creator: [lmsys](https://huggingface.co/lmsys)

- Original model: [Vicuna 13B v1.5](https://huggingface.co/lmsys/vicuna-13b-v1.5)

## Description

This repo contains the [MLC](https://mlc.ai/mlc-llm/) compiled parameters for [lmsys's Vicuna 13B v1.5](https://huggingface.co/lmsys/vicuna-13b-v1.5).

It contains several quantizations, each in its own branch:

- main (q4f16_1) <-- You are currently on this branch

- q4f16_2

- q8f16_1

- autogptq_llama_q4f16_1
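
Because each quantization lives in its own branch, one way to fetch a specific one is to pass the branch name as the `revision` when downloading. The sketch below assumes the `huggingface_hub` package is installed; the mapping and the helper name `download_quantization` are illustrative and not part of MLC.

```python
# Quantization name -> git branch, as listed in this card.
BRANCH_FOR_QUANTIZATION = {
    "q4f16_1": "main",
    "q4f16_2": "q4f16_2",
    "q8f16_1": "q8f16_1",
    "autogptq_llama_q4f16_1": "autogptq_llama_q4f16_1",
}


def download_quantization(quantization, repo_id="Dala/mlc-chat-vicuna-13b-v1.5"):
    """Download one quantization branch and return the local directory path."""
    # Imported lazily so the mapping above is usable without the package installed.
    from huggingface_hub import snapshot_download

    revision = BRANCH_FOR_QUANTIZATION[quantization]
    return snapshot_download(repo_id=repo_id, revision=revision)


if __name__ == "__main__":
    # Downloads several gigabytes; run only when you actually need the weights.
    print(download_quantization("q4f16_2"))
```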

To run the model, please check out the [MLC instructions](https://mlc.ai/mlc-llm/docs/get_started/try_out.html).

In case the model libraries are not yet available in the [binary libs repo](https://github.com/mlc-ai/binary-mlc-llm-libs), please obtain them from [this PR](https://github.com/mlc-ai/binary-mlc-llm-libs/pull/15/files).

|

dt-and-vanilla-ardt/ardt-vanilla-ppo_train_halfcheetah_level-3108_0816-99

|

dt-and-vanilla-ardt

| 2023-08-31T09:23:43Z | 31 | 0 |

transformers

|

[

"transformers",

"pytorch",

"decision_transformer",

"generated_from_trainer",

"endpoints_compatible",

"region:us"

] | null | 2023-08-31T07:18:16Z |

---

tags:

- generated_from_trainer

model-index:

- name: ardt-vanilla-ppo_train_halfcheetah_level-3108_0816-99

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# ardt-vanilla-ppo_train_halfcheetah_level-3108_0816-99

This model is a fine-tuned version of an unspecified base model on an unspecified dataset.

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0001

- train_batch_size: 64

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- lr_scheduler_warmup_steps: 1000

- training_steps: 10000

### Training results

### Framework versions

- Transformers 4.29.2

- Pytorch 2.1.0.dev20230727+cu118

- Datasets 2.12.0

- Tokenizers 0.13.3

|

pvcodes/comment_toxicity_classifier

|

pvcodes

| 2023-08-31T09:22:36Z | 0 | 0 | null |

[

"license:mit",

"region:us"

] | null | 2023-08-28T14:03:39Z |

---

license: mit

---

<h1 align=center>Comment Toxicity Classification</h1>

This model predicts whether a comment or sentence is hateful along several dimensions: toxicity, severe toxicity, obscene, threat, insult and racism.

### Test the model here : <a href="https://huggingface.co/spaces/pvcodes/comment_toxicity_classifier">pvcodes/comment_toxicity_classifier</a>

<br>

## Working of the Model

- #### Loading of Data

The data is loaded from a <a href='assets/jigsaw_toxic_challenge/train.csv/train.csv'>csv</a> file, which consists of the comments and their labels: toxicity, severe toxicity, obscene, threat, insult and racism.

- #### Preprocessing the comments

The comments are then tokenized with the `TextVectorization` layer of `keras` and embedded.

- #### Creating the <em>Deep NLP Model</em>

For this model we used the `Keras Sequential API` with a number of `LSTM` layers (because they are particularly good at working with sequences).

- ####

- The dataset used to train the model is from <a href=https://www.kaggle.com/c/jigsaw-toxic-comment-classification-challenge>Toxic Comment Classification Challenge</a> from <a href=https://www.kaggle.com>Kaggle</a>.

##### Note: The compiled model is available <a href='assets/toxicity.h5'>here</a>.
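
To illustrate what the tokenization step does, here is a minimal pure-Python sketch of integer text vectorization. It is not the Keras implementation; it only mimics the usual conventions (lowercasing, stripping punctuation, id 0 for padding, id 1 for out-of-vocabulary tokens). The function names and the default sizes are assumptions chosen for the example.

```python
import re
from collections import Counter


def tokenize(comment):
    """Lowercase, strip punctuation and split on whitespace."""
    return re.sub(r"[^\w\s]", "", comment.lower()).split()


def build_vocabulary(comments, max_tokens=1000):
    """Map the most frequent tokens to integer ids (0 = padding, 1 = unknown)."""
    counts = Counter(tok for comment in comments for tok in tokenize(comment))
    most_common = [tok for tok, _ in counts.most_common(max_tokens - 2)]
    return {tok: idx + 2 for idx, tok in enumerate(most_common)}


def vectorize(comment, vocabulary, sequence_length=8):
    """Turn a comment into a fixed-length list of token ids."""
    ids = [vocabulary.get(tok, 1) for tok in tokenize(comment)]
    ids = ids[:sequence_length]                       # truncate long comments
    return ids + [0] * (sequence_length - len(ids))   # pad short comments


if __name__ == "__main__":
    vocab = build_vocabulary(["you you you are are rude"])
    print(vectorize("You are RUDE, friend!", vocab, sequence_length=6))
```

The resulting fixed-length id sequences are what an embedding layer followed by `LSTM` layers would consume.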

<samp>

<p align="center">

════ ⋆★⋆ ════<br>

From <a href="https://github.com/pvcodes/pvcodes">pvcodes</a>

</p>

</samp>

|

moro01525/mlm

|

moro01525

| 2023-08-31T09:16:14Z | 6 | 0 |

transformers

|

[

"transformers",

"pytorch",

"roberta",

"fill-mask",

"generated_from_trainer",

"base_model:moro01525/mlm",

"base_model:finetune:moro01525/mlm",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

fill-mask

| 2023-08-29T09:25:43Z |

---

base_model: moro01525/mlm

tags:

- generated_from_trainer

model-index:

- name: mlm

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# mlm

This model is a fine-tuned version of [moro01525/mlm](https://huggingface.co/moro01525/mlm) on an unknown dataset.

It achieves the following results on the evaluation set:

- Loss: 4.9361

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 0.0001

- train_batch_size: 16

- eval_batch_size: 8

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 1

### Training results

| Training Loss | Epoch | Step | Validation Loss |

|:-------------:|:-----:|:----:|:---------------:|

| 4.248 | 1.0 | 582 | 4.9240 |

### Framework versions

- Transformers 4.32.1

- Pytorch 2.0.1+cu118

- Datasets 2.14.4

- Tokenizers 0.13.3

|

Norod78/SDXL-StickerSheet-Lora

|

Norod78

| 2023-08-31T09:12:04Z | 248 | 33 |

diffusers

|

[

"diffusers",

"text-to-image",

"stable-diffusion",

"lora",

"en",

"base_model:stabilityai/stable-diffusion-xl-base-1.0",

"base_model:adapter:stabilityai/stable-diffusion-xl-base-1.0",

"license:mit",

"region:us"

] |

text-to-image

| 2023-08-31T09:04:29Z |

---

license: mit

base_model: stabilityai/stable-diffusion-xl-base-1.0

instance_prompt: StickerSheet

tags:

- text-to-image

- stable-diffusion

- lora

- diffusers

widget:

- text: Cute sparkle pink barbie StickerSheet

- text: Cthulhu StickerSheet based on H.P Lovecraft stories

- text: Cute sparkle rainbow kitten StickerSheet, Eric Wallis

- text: Cute socially awkward potato StickerSheet

inference: true

language:

- en

---

# Trigger words

Use "StickerSheet" in your prompts

# Examples

Cute sparkle pink barbie StickerSheet, Very detailed, clean, high quality, sharp image, Eric Wallis

Cthulhu StickerSheet, based on H.P Lovecraft stories, Very detailed, clean, high quality, sharp image
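
A minimal sketch of how the trigger word might be used with `diffusers` follows. It assumes the LoRA weights can be loaded straight from this repo id with `load_lora_weights` (a `weight_name` argument may be needed depending on the file layout) and that a CUDA GPU is available; `build_prompt` and `generate` are hypothetical helper names.

```python
TRIGGER_WORD = "StickerSheet"


def build_prompt(subject, extras="Very detailed, clean, high quality, sharp image"):
    """Compose a prompt that always contains the trigger word."""
    prompt = subject if TRIGGER_WORD in subject else f"{subject} {TRIGGER_WORD}"
    return f"{prompt}, {extras}" if extras else prompt


def generate(subject, device="cuda"):
    """Generate one image with the base SDXL model plus this LoRA (sketch)."""
    # Imported lazily: these packages are large and only needed for generation.
    import torch
    from diffusers import DiffusionPipeline

    pipe = DiffusionPipeline.from_pretrained(
        "stabilityai/stable-diffusion-xl-base-1.0", torch_dtype=torch.float16
    )
    # Assumption: the repo exposes its weights in a layout diffusers can auto-detect.
    pipe.load_lora_weights("Norod78/SDXL-StickerSheet-Lora")
    pipe.to(device)
    return pipe(build_prompt(subject)).images[0]


if __name__ == "__main__":
    print(build_prompt("Cute socially awkward potato"))
```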

|

iloya/Taxi-v3

|

iloya

| 2023-08-31T09:08:28Z | 0 | 0 | null |

[

"Taxi-v3",

"q-learning",

"reinforcement-learning",

"custom-implementation",

"model-index",

"region:us"

] |

reinforcement-learning

| 2023-08-31T09:08:25Z |

---

tags:

- Taxi-v3

- q-learning

- reinforcement-learning

- custom-implementation

model-index:

- name: Taxi-v3

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: Taxi-v3

type: Taxi-v3

metrics:

- type: mean_reward

value: 7.52 +/- 2.71

name: mean_reward

verified: false

---

# **Q-Learning** Agent playing **Taxi-v3**

This is a trained model of a **Q-Learning** agent playing **Taxi-v3**.

## Usage

```python

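import gym  # or `import gymnasium as gym`, depending on your installation

# `load_from_hub` is not a library function and must be defined first;
# it is expected to download the pickle file from the Hub and unpickle it.
model = load_from_hub(repo_id="iloya/Taxi-v3", filename="q-learning.pkl")

# Don't forget to check if you need to add additional attributes (is_slippery=False etc)
env = gym.make(model["env_id"])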

```
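
The usage snippet relies on a `load_from_hub` helper that is not part of any library. A minimal sketch of such a helper is shown below, assuming the `huggingface_hub` package is installed and that the file is a pickled dictionary containing at least the `env_id` key used above. Unpickling can execute arbitrary code, so only load files from sources you trust.

```python
import pickle


def load_pickled_model(path):
    """Read a pickled model dictionary from a local file."""
    with open(path, "rb") as f:
        return pickle.load(f)


def load_from_hub(repo_id, filename):
    """Download `filename` from a Hub repo and unpickle it (hypothetical helper)."""
    # Imported lazily so the local loader works without the package installed.
    from huggingface_hub import hf_hub_download

    local_path = hf_hub_download(repo_id=repo_id, filename=filename)
    return load_pickled_model(local_path)


if __name__ == "__main__":
    model = load_from_hub(repo_id="iloya/Taxi-v3", filename="q-learning.pkl")
    print(model["env_id"])
```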

|

Hozier/sd-class-butterflies-32

|

Hozier

| 2023-08-31T08:57:55Z | 44 | 0 |

diffusers

|

[

"diffusers",

"safetensors",

"pytorch",

"unconditional-image-generation",

"diffusion-models-class",

"license:mit",

"diffusers:DDPMPipeline",

"region:us"

] |

unconditional-image-generation

| 2023-08-31T08:53:04Z |

---

license: mit

tags:

- pytorch

- diffusers

- unconditional-image-generation

- diffusion-models-class

---

# Model Card for Unit 1 of the [Diffusion Models Class 🧨](https://github.com/huggingface/diffusion-models-class)

This model is a diffusion model for unconditional image generation of cute 🦋.

## Usage

```python

from diffusers import DDPMPipeline

pipeline = DDPMPipeline.from_pretrained('Hozier/sd-class-butterflies-32')

image = pipeline().images[0]

image

```

|

kimi0230/TestModel

|

kimi0230

| 2023-08-31T08:56:33Z | 1 | 0 | null |

[

"tf",

"generated_from_keras_callback",

"dataset:fka/awesome-chatgpt-prompts",

"license:mit",

"region:us"

] | null | 2023-08-31T07:44:10Z |

---

license: mit

tags:

- generated_from_keras_callback

model-index:

- name: chatgpt-gpt4-prompts-bart-large-cnn-samsum

results: []

datasets:

- fka/awesome-chatgpt-prompts

---

<!-- This model card has been generated automatically according to the information Keras had access to. You should

probably proofread and complete it, then remove this comment. -->

# chatgpt-gpt4-prompts-bart-large-cnn-samsum

This model generates ChatGPT/BingChat & GPT-3 prompts and is a fine-tuned version of [philschmid/bart-large-cnn-samsum](https://huggingface.co/philschmid/bart-large-cnn-samsum) on [this](https://huggingface.co/datasets/fka/awesome-chatgpt-prompts) dataset.

It achieves the following results on the evaluation set:

- Train Loss: 1.2214

- Validation Loss: 2.7584

- Epoch: 4

### Streamlit

This model supports a [Streamlit](https://streamlit.io/) Web UI to run the chatgpt-gpt4-prompts-bart-large-cnn-samsum model:

[Kaludi/ChatGPT-BingChat-GPT3-Prompt-Generator_App](https://huggingface.co/spaces/Kaludi/ChatGPT-BingChat-GPT3-Prompt-Generator_App)

### Training hyperparameters

The following hyperparameters were used during training:

- optimizer: {'name': 'AdamWeightDecay', 'learning_rate': 2e-05, 'decay': 0.0, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-07, 'amsgrad': False, 'weight_decay_rate': 0.01}

- training_precision: float32

### Training results

| Train Loss | Validation Loss | Epoch |

|:----------:|:---------------:|:-----:|

| 3.1982 | 2.6801 | 0 |

| 2.3601 | 2.5493 | 1 |

| 1.9225 | 2.5377 | 2 |

| 1.5465 | 2.6794 | 3 |

| 1.2214 | 2.7584 | 4 |

### Framework versions

- Transformers 4.27.3

- TensorFlow 2.11.0

- Datasets 2.10.1

- Tokenizers 0.13.2

|

iloya/q-FrozenLake-v1-4x4-noSlippery

|

iloya

| 2023-08-31T08:55:45Z | 0 | 0 | null |

[

"FrozenLake-v1-4x4-no_slippery",

"q-learning",

"reinforcement-learning",

"custom-implementation",

"model-index",

"region:us"

] |

reinforcement-learning

| 2023-08-31T08:55:42Z |

---

tags:

- FrozenLake-v1-4x4-no_slippery

- q-learning

- reinforcement-learning

- custom-implementation

model-index:

- name: q-FrozenLake-v1-4x4-noSlippery

results:

- task:

type: reinforcement-learning

name: reinforcement-learning

dataset:

name: FrozenLake-v1-4x4-no_slippery

type: FrozenLake-v1-4x4-no_slippery

metrics:

- type: mean_reward

value: 1.00 +/- 0.00

name: mean_reward

verified: false

---

# **Q-Learning** Agent playing **FrozenLake-v1**

This is a trained model of a **Q-Learning** agent playing **FrozenLake-v1**.

## Usage

```python

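import gym  # or `import gymnasium as gym`, depending on your installation

# `load_from_hub` is not a library function and must be defined first;
# it is expected to download the pickle file from the Hub and unpickle it.
model = load_from_hub(repo_id="iloya/q-FrozenLake-v1-4x4-noSlippery", filename="q-learning.pkl")

# Don't forget to check if you need to add additional attributes (is_slippery=False etc)
env = gym.make(model["env_id"])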

```
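
The `mean_reward` metric in the card header (reported as mean +/- standard deviation) can be reproduced in spirit by running the greedy policy for a number of episodes. The sketch below assumes a Gymnasium-style environment whose `reset()` returns `(state, info)` and whose `step()` returns five values, and a Q-table indexable as `qtable[state][action]`; the function names, episode count and step limit are illustrative assumptions.

```python
from statistics import mean, pstdev


def greedy_action(qtable, state):
    """Index of the highest-valued action for a state (first one wins ties)."""
    row = qtable[state]
    return max(range(len(row)), key=lambda a: row[a])


def evaluate_agent(env, qtable, n_eval_episodes=100, max_steps=99):
    """Return (mean, std) of the total episode reward under the greedy policy."""
    episode_rewards = []
    for _ in range(n_eval_episodes):
        state, _info = env.reset()
        total = 0.0
        for _ in range(max_steps):
            action = greedy_action(qtable, state)
            state, reward, terminated, truncated, _info = env.step(action)
            total += reward
            if terminated or truncated:
                break
        episode_rewards.append(total)
    # Population standard deviation over the evaluation episodes.
    return mean(episode_rewards), pstdev(episode_rewards)
```

In a deterministic environment such as the non-slippery FrozenLake, a policy that always reaches the goal yields the same reward every episode, so the standard deviation is zero.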

|

Alexa06/54yg

|

Alexa06

| 2023-08-31T08:53:31Z | 0 | 0 | null |

[

"region:us"

] | null | 2023-08-31T08:52:14Z |

photo_5154810918662679307_x.jpg

|

vnktrmnb/MBERT_FT-TyDiQA_S59

|

vnktrmnb

| 2023-08-31T08:34:15Z | 65 | 0 |

transformers

|

[

"transformers",

"tf",

"tensorboard",

"bert",

"question-answering",

"generated_from_keras_callback",

"base_model:google-bert/bert-base-multilingual-cased",

"base_model:finetune:google-bert/bert-base-multilingual-cased",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] |

question-answering

| 2023-08-30T05:18:51Z |

---

license: apache-2.0

base_model: bert-base-multilingual-cased

tags:

- generated_from_keras_callback

model-index:

- name: vnktrmnb/MBERT_FT-TyDiQA_S59

results: []

---

<!-- This model card has been generated automatically according to the information Keras had access to. You should

probably proofread and complete it, then remove this comment. -->

# vnktrmnb/MBERT_FT-TyDiQA_S59

This model is a fine-tuned version of [bert-base-multilingual-cased](https://huggingface.co/bert-base-multilingual-cased) on an unknown dataset.

It achieves the following results on the evaluation set:

- Train Loss: 0.6175

- Train End Logits Accuracy: 0.8417

- Train Start Logits Accuracy: 0.8693

- Validation Loss: 0.4662

- Validation End Logits Accuracy: 0.8789

- Validation Start Logits Accuracy: 0.9162

- Epoch: 2

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- optimizer: {'name': 'Adam', 'weight_decay': None, 'clipnorm': None, 'global_clipnorm': None, 'clipvalue': None, 'use_ema': False, 'ema_momentum': 0.99, 'ema_overwrite_frequency': None, 'jit_compile': True, 'is_legacy_optimizer': False, 'learning_rate': {'class_name': 'PolynomialDecay', 'config': {'initial_learning_rate': 2e-05, 'decay_steps': 2412, 'end_learning_rate': 0.0, 'power': 1.0, 'cycle': False, 'name': None}}, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False}

- training_precision: float32
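
The `PolynomialDecay` configuration above, with `power` 1.0 and `end_learning_rate` 0.0, amounts to a straight-line decay from 2e-05 to 0 over 2412 steps. A minimal pure-Python sketch of that formula follows; the function name `polynomial_decay_lr` is hypothetical and the code is an illustration rather than the Keras source.

```python
def polynomial_decay_lr(step, initial_lr=2e-5, decay_steps=2412, end_lr=0.0, power=1.0):
    """Learning rate after `step` optimizer steps, with cycle=False."""
    # Without cycling, the step is clamped so the rate stays at end_lr afterwards.
    step = min(step, decay_steps)
    return (initial_lr - end_lr) * (1 - step / decay_steps) ** power + end_lr


if __name__ == "__main__":
    for step in (0, 1206, 2412):
        print(step, polynomial_decay_lr(step))
```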

### Training results

| Train Loss | Train End Logits Accuracy | Train Start Logits Accuracy | Validation Loss | Validation End Logits Accuracy | Validation Start Logits Accuracy | Epoch |

|:----------:|:-------------------------:|:---------------------------:|:---------------:|:------------------------------:|:--------------------------------:|:-----:|

| 1.4412 | 0.6715 | 0.7002 | 0.4875 | 0.8570 | 0.8943 | 0 |

| 0.8493 | 0.7898 | 0.8229 | 0.4547 | 0.8686 | 0.9137 | 1 |

| 0.6175 | 0.8417 | 0.8693 | 0.4662 | 0.8789 | 0.9162 | 2 |

### Framework versions

- Transformers 4.32.1

- TensorFlow 2.12.0

- Datasets 2.14.4

- Tokenizers 0.13.3

|

parksuna/xlm-roberta-base-finetuned-panx-de

|

parksuna

| 2023-08-31T08:29:59Z | 122 | 0 |

transformers

|

[

"transformers",

"pytorch",

"xlm-roberta",

"token-classification",

"generated_from_trainer",

"dataset:xtreme",

"base_model:FacebookAI/xlm-roberta-base",

"base_model:finetune:FacebookAI/xlm-roberta-base",

"license:mit",

"model-index",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

token-classification

| 2023-08-31T08:25:49Z |

---

license: mit

base_model: xlm-roberta-base

tags:

- generated_from_trainer

datasets:

- xtreme

metrics:

- f1

model-index:

- name: xlm-roberta-base-finetuned-panx-de

results:

- task:

name: Token Classification

type: token-classification

dataset:

name: xtreme

type: xtreme

config: PAN-X.de

split: validation

args: PAN-X.de

metrics:

- name: F1

type: f1

value: 0.8657241810026685

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# xlm-roberta-base-finetuned-panx-de

This model is a fine-tuned version of [xlm-roberta-base](https://huggingface.co/xlm-roberta-base) on the xtreme dataset.

It achieves the following results on the evaluation set:

- Loss: 0.1338

- F1: 0.8657

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 5e-05

- train_batch_size: 24

- eval_batch_size: 24

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 3

### Training results

| Training Loss | Epoch | Step | Validation Loss | F1 |

|:-------------:|:-----:|:----:|:---------------:|:------:|

| 0.257 | 1.0 | 525 | 0.1557 | 0.8218 |

| 0.126 | 2.0 | 1050 | 0.1460 | 0.8521 |

| 0.0827 | 3.0 | 1575 | 0.1338 | 0.8657 |

### Framework versions

- Transformers 4.32.1

- Pytorch 2.0.1+cu118

- Datasets 2.14.4

- Tokenizers 0.13.3

|

vnktrmnb/MBERT_FT-TyDiQA_S531

|

vnktrmnb

| 2023-08-31T08:22:15Z | 65 | 0 |

transformers

|

[

"transformers",

"tf",

"tensorboard",

"bert",

"question-answering",

"generated_from_keras_callback",

"base_model:google-bert/bert-base-multilingual-cased",

"base_model:finetune:google-bert/bert-base-multilingual-cased",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] |

question-answering

| 2023-08-31T07:27:40Z |

---

license: apache-2.0

base_model: bert-base-multilingual-cased

tags:

- generated_from_keras_callback

model-index:

- name: vnktrmnb/MBERT_FT-TyDiQA_S531

results: []

---

<!-- This model card has been generated automatically according to the information Keras had access to. You should

probably proofread and complete it, then remove this comment. -->

# vnktrmnb/MBERT_FT-TyDiQA_S531

This model is a fine-tuned version of [bert-base-multilingual-cased](https://huggingface.co/bert-base-multilingual-cased) on an unknown dataset.

It achieves the following results on the evaluation set:

- Train Loss: 0.6202

- Train End Logits Accuracy: 0.8376

- Train Start Logits Accuracy: 0.8661

- Validation Loss: 0.4939

- Validation End Logits Accuracy: 0.8647

- Validation Start Logits Accuracy: 0.9046

- Epoch: 2

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:

- optimizer: {'name': 'Adam', 'weight_decay': None, 'clipnorm': None, 'global_clipnorm': None, 'clipvalue': None, 'use_ema': False, 'ema_momentum': 0.99, 'ema_overwrite_frequency': None, 'jit_compile': True, 'is_legacy_optimizer': False, 'learning_rate': {'class_name': 'PolynomialDecay', 'config': {'initial_learning_rate': 2e-05, 'decay_steps': 2412, 'end_learning_rate': 0.0, 'power': 1.0, 'cycle': False, 'name': None}}, 'beta_1': 0.9, 'beta_2': 0.999, 'epsilon': 1e-08, 'amsgrad': False}

- training_precision: float32

### Training results

| Train Loss | Train End Logits Accuracy | Train Start Logits Accuracy | Validation Loss | Validation End Logits Accuracy | Validation Start Logits Accuracy | Epoch |

|:----------:|:-------------------------:|:---------------------------:|:---------------:|:------------------------------:|:--------------------------------:|:-----:|

| 1.4876 | 0.6535 | 0.6831 | 0.5669 | 0.8222 | 0.8698 | 0 |

| 0.8473 | 0.7841 | 0.8173 | 0.4769 | 0.8647 | 0.9059 | 1 |

| 0.6202 | 0.8376 | 0.8661 | 0.4939 | 0.8647 | 0.9046 | 2 |

### Framework versions

- Transformers 4.32.1

- TensorFlow 2.12.0

- Datasets 2.14.4

- Tokenizers 0.13.3

|

emotibot-inc/Zhuhai-13B

|

emotibot-inc

| 2023-08-31T08:13:01Z | 0 | 0 | null |

[

"region:us"

] | null | 2023-08-30T09:33:05Z |

# README

# Zhuhai-13B

[Hugging Face](https://huggingface.co/emotibot-inc/Zhuhai-13B) | [GitHub](https://github.com/emotibot-inc/Zhuhai-13B) | [Model Scope](https://modelscope.cn/models/emotibotinc/Zhuhai-13B/summary) | [Emotibrain](https://brain.emotibot.com/?source=zhuhai13b_huggingface)

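# **Model introduction**

"Zhuhai-13B" is a large language model developed by Emotibot (竹间智能) as the successor to "Zhuhai-7B". Its main features are:

- Larger size, more data: compared with "Zhuhai-7B", we scaled the parameter count up to 13 billion and trained on 1.2 trillion tokens of high-quality corpus data. Zhuhai-13B has a context window length of 4096.

- Efficient performance: a 13-billion-parameter model based on the Transformer architecture and trained on roughly 1.2 trillion tokens, supporting both Chinese and English.

- Safety: we applied strict safety controls and optimization to "Zhuhai-13B" to ensure that it does not produce inappropriate or misleading output in real applications. Through careful design and tuning of algorithm parameters, "Zhuhai-13B" can effectively avoid talking nonsense.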

# Model **benchmark**

## **Chinese evaluation** - **CMMLU**

### Result

| Model 5-shot | STEM | Humanities | Social Science | Other | China-specific | Average |

| --- | --- | --- | --- | --- | --- | --- |

| Multilingual-oriented | | | | | | |

| [GPT4](https://openai.com/gpt4) | 65.23 | 72.11 | 72.06 | 74.79 | 66.12 | 70.95 |

| [ChatGPT](https://openai.com/chatgpt) | 47.81 | 55.68 | 56.50 | 62.66 | 50.69 | 55.51 |

| [Falcon-40B](https://huggingface.co/tiiuae/falcon-40b) | 33.33 | 43.46 | 44.28 | 44.75 | 39.46 | 41.45 |

| [LLaMA-65B](https://github.com/facebookresearch/llama) | 34.47 | 40.24 | 41.55 | 42.88 | 37.00 | 39.80 |

| [BLOOMZ-7B](https://github.com/bigscience-workshop/xmtf) | 30.56 | 39.10 | 38.59 | 40.32 | 37.15 | 37.04 |

| [Bactrian-LLaMA-13B](https://github.com/mbzuai-nlp/bactrian-x) | 27.52 | 32.47 | 32.27 | 35.77 | 31.56 | 31.88 |

| Chinese-oriented | | | | | | |

| [Zhuzhi-6B](https://github.com/emotibot-inc/Zhuzhi-6B) | 40.30 | 48.08 | 46.72 | 47.41 | 45.51 | 45.60 |

| [Zhuhai-13B](https://github.com/emotibot-inc/Zhuhai-13B) | 42.39 | 61.57 | 60.48 | 58.57 | 55.68 | 55.74 |

| [Baichuan-13B](https://github.com/baichuan-inc/Baichuan-13B) | 42.38 | 61.61 | 60.44 | 59.26 | 56.62 | 55.82 |

| [ChatGLM2-6B](https://huggingface.co/THUDM/chatglm2-6b) | 42.55 | 50.98 | 50.99 | 50.80 | 48.37 | 48.80 |

| [Baichuan-7B](https://github.com/baichuan-inc/baichuan-7B) | 35.25 | 48.07 | 47.88 | 46.61 | 44.14 | 44.43 |

| [ChatGLM-6B](https://github.com/THUDM/GLM-130B) | 32.35 | 39.22 | 39.65 | 38.62 | 37.70 | 37.48 |

| [BatGPT-15B](https://github.com/haonan-li/CMMLU/blob/master) | 34.96 | 35.45 | 36.31 | 42.14 | 37.89 | 37.16 |

| [Chinese-LLaMA-13B](https://github.com/ymcui/Chinese-LLaMA-Alpaca) | 27.12 | 33.18 | 34.87 | 35.10 | 32.97 | 32.63 |

| [MOSS-SFT-16B](https://github.com/OpenLMLab/MOSS) | 27.23 | 30.41 | 28.84 | 32.56 | 28.68 | 29.57 |

| [Chinese-GLM-10B](https://github.com/THUDM/GLM) | 25.49 | 27.05 | 27.42 | 29.21 | 28.05 | 27.26 |

| Random | 25.00 | 25.00 | 25.00 | 25.00 | 25.00 | 25.00 |

| Model 0-shot | STEM | Humanities | Social Science | Other | China-specific | Average |

| --- | --- | --- | --- | --- | --- | --- |

| Multilingual-oriented | | | | | | |

| [GPT4](https://openai.com/gpt4) | 63.16 | 69.19 | 70.26 | 73.16 | 63.47 | 68.9 |

| [ChatGPT](https://openai.com/chatgpt) | 44.8 | 53.61 | 54.22 | 59.95 | 49.74 | 53.22 |

| [BLOOMZ-7B](https://github.com/bigscience-workshop/xmtf) | 33.03 | 45.74 | 45.74 | 46.25 | 41.58 | 42.8 |

| [Falcon-40B](https://huggingface.co/tiiuae/falcon-40b) | 31.11 | 41.3 | 40.87 | 40.61 | 36.05 | 38.5 |

| [LLaMA-65B](https://github.com/facebookresearch/llama) | 31.09 | 34.45 | 36.05 | 37.94 | 32.89 | 34.88 |

| [Bactrian-LLaMA-13B](https://github.com/mbzuai-nlp/bactrian-x) | 26.46 | 29.36 | 31.81 | 31.55 | 29.17 | 30.06 |

| Chinese-oriented | | | | | | |

| [Zhuzhi-6B](https://github.com/emotibot-inc/Zhuzhi-6B) | 42.51 | 48.91 | 48.85 | 50.25 | 47.57 | 47.62 |

| [Zhuhai-13B](https://github.com/emotibot-inc/Zhuhai-13B) | 42.37 | 60.97 | 59.71 | 56.35 | 54.81 | 54.84 |

| [Baichuan-13B](https://github.com/baichuan-inc/Baichuan-13B) | 42.04 | 60.49 | 59.55 | 56.6 | 55.72 | 54.63 |

| [ChatGLM2-6B](https://huggingface.co/THUDM/chatglm2-6b) | 41.28 | 52.85 | 53.37 | 52.24 | 50.58 | 49.95 |

| [Baichuan-7B](https://github.com/baichuan-inc/baichuan-7B) | 32.79 | 44.43 | 46.78 | 44.79 | 43.11 | 42.33 |

| [ChatGLM-6B](https://github.com/THUDM/GLM-130B) | 32.22 | 42.91 | 44.81 | 42.6 | 41.93 | 40.79 |

| [BatGPT-15B](https://github.com/haonan-li/CMMLU/blob/master) | 33.72 | 36.53 | 38.07 | 46.94 | 38.32 | 38.51 |

| [Chinese-LLaMA-13B](https://github.com/ymcui/Chinese-LLaMA-Alpaca) | 26.76 | 26.57 | 27.42 | 28.33 | 26.73 | 27.34 |

| [MOSS-SFT-16B](https://github.com/OpenLMLab/MOSS) | 25.68 | 26.35 | 27.21 | 27.92 | 26.7 | 26.88 |

| [Chinese-GLM-10B](https://github.com/THUDM/GLM) | 25.57 | 25.01 | 26.33 | 25.94 | 25.81 | 25.8 |

| Random | 25 | 25 | 25 | 25 | 25 | 25 |

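# **Inference and chat**

You can register and log in to [Emotibrain](https://brain.emotibot.com/?source=zhuhai13b_huggingface), the large-model product released by Emotibot, and select **CoPilot** (**KKBot**) to test the model online. It is available immediately after registration.

# **Model training**

You can register and log in to [Emotibrain](https://brain.emotibot.com/?source=zhuhai13b_huggingface), the large-model product released by Emotibot, and select Fine-tune to perform **zero-code fine-tuning**. It is available immediately after registration.

For the detailed training workflow, see this document: [Emotibrain Quick Start](https://brain.emotibot.com/supports/model-factory/dash-into.html) (about 5 minutes).

# **More information**

To learn more about the large-model training platform, visit the [Emotibrain official website](https://brain.emotibot.com/?source=zhuhai13b_huggingface).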

|

Geotrend/bert-base-en-fr-ar-cased

|

Geotrend

| 2023-08-31T08:03:30Z | 114 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tf",

"jax",

"safetensors",

"bert",

"fill-mask",

"multilingual",

"dataset:wikipedia",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

fill-mask

| 2022-03-02T23:29:04Z |

---

language: multilingual

datasets: wikipedia

license: apache-2.0

---

# bert-base-en-fr-ar-cased

We are sharing smaller versions of [bert-base-multilingual-cased](https://huggingface.co/bert-base-multilingual-cased) that handle a custom number of languages.

Unlike [distilbert-base-multilingual-cased](https://huggingface.co/distilbert-base-multilingual-cased), our versions give exactly the same representations produced by the original model which preserves the original accuracy.

For more information please visit our paper: [Load What You Need: Smaller Versions of Multilingual BERT](https://www.aclweb.org/anthology/2020.sustainlp-1.16.pdf).

## How to use

```python

from transformers import AutoTokenizer, AutoModel

tokenizer = AutoTokenizer.from_pretrained("Geotrend/bert-base-en-fr-ar-cased")

model = AutoModel.from_pretrained("Geotrend/bert-base-en-fr-ar-cased")

```

To generate other smaller versions of multilingual transformers, please visit [our GitHub repo](https://github.com/Geotrend-research/smaller-transformers).

### How to cite

```bibtex

@inproceedings{smallermbert,

title={Load What You Need: Smaller Versions of Multilingual BERT},

author={Abdaoui, Amine and Pradel, Camille and Sigel, Grégoire},

booktitle={SustaiNLP / EMNLP},

year={2020}

}

```

## Contact

Please contact amine@geotrend.fr for any question, feedback or request.

|

Geotrend/bert-base-en-pt-cased

|

Geotrend

| 2023-08-31T08:03:02Z | 116 | 1 |

transformers

|

[

"transformers",

"pytorch",

"tf",

"jax",

"safetensors",

"bert",

"fill-mask",

"multilingual",

"en",

"pt",

"dataset:wikipedia",

"license:apache-2.0",

"autotrain_compatible",

"endpoints_compatible",

"region:us"

] |

fill-mask

| 2022-03-02T23:29:04Z |

---

language:

- multilingual

- en

- pt

datasets: wikipedia

license: apache-2.0

---

# bert-base-en-pt-cased

We are sharing smaller versions of [bert-base-multilingual-cased](https://huggingface.co/bert-base-multilingual-cased) that handle a custom number of languages.

Unlike [distilbert-base-multilingual-cased](https://huggingface.co/distilbert-base-multilingual-cased), our versions give exactly the same representations produced by the original model which preserves the original accuracy.

For more information please visit our paper: [Load What You Need: Smaller Versions of Multilingual BERT](https://www.aclweb.org/anthology/2020.sustainlp-1.16.pdf).

## How to use

```python

from transformers import AutoTokenizer, AutoModel

tokenizer = AutoTokenizer.from_pretrained("Geotrend/bert-base-en-pt-cased")

model = AutoModel.from_pretrained("Geotrend/bert-base-en-pt-cased")

```

To generate other smaller versions of multilingual transformers, please visit [our GitHub repo](https://github.com/Geotrend-research/smaller-transformers).

### How to cite

```bibtex

@inproceedings{smallermbert,

title={Load What You Need: Smaller Versions of Multilingual BERT},

author={Abdaoui, Amine and Pradel, Camille and Sigel, Grégoire},

booktitle={SustaiNLP / EMNLP},

year={2020}

}

```

## Contact

Please contact amine@geotrend.fr for any question, feedback or request.

|

ThanhMai/green-clip-inpaint

|

ThanhMai

| 2023-08-31T08:01:58Z | 21 | 0 |

diffusers

|

[

"diffusers",

"safetensors",

"text-to-image",

"license:creativeml-openrail-m",

"autotrain_compatible",

"endpoints_compatible",

"diffusers:StableDiffusionPipeline",

"region:us"

] |

text-to-image

| 2023-08-31T08:01:05Z |

---

license: creativeml-openrail-m

tags:

- text-to-image

---

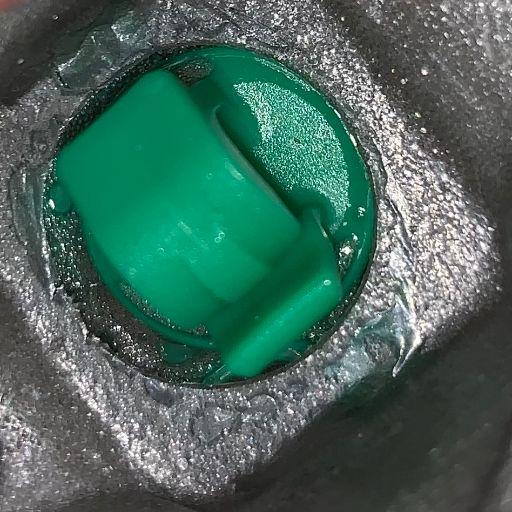

### green clip inpaint on Stable Diffusion via Dreambooth

#### model by ThanhMai

This is a Stable Diffusion model fine-tuned on the green clip inpaint concept, taught to Stable Diffusion with Dreambooth.

It can be used by modifying the `instance_prompt`: **<green-clip> clip**

You can also train your own concepts and upload them to the library by using [this notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_training.ipynb).

And you can run your new concept via `diffusers`: [Colab Notebook for Inference](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/sd_dreambooth_inference.ipynb), [Spaces with the Public Concepts loaded](https://huggingface.co/spaces/sd-dreambooth-library/stable-diffusion-dreambooth-concepts)

Here are the images used for training this concept:

|

SCUT-DLVCLab/lilt-roberta-en-base

|

SCUT-DLVCLab

| 2023-08-31T07:59:36Z | 19,575 | 18 |

transformers

|

[

"transformers",

"pytorch",

"safetensors",

"lilt",

"feature-extraction",

"vision",

"arxiv:2202.13669",

"license:mit",

"endpoints_compatible",

"region:us"

] |

feature-extraction

| 2022-09-29T14:06:32Z |

---

license: mit

tags:

- vision

---

# LiLT-RoBERTa (base-sized model)

Language-Independent Layout Transformer - RoBERTa model, created by stitching together a pre-trained RoBERTa (English) and a pre-trained Language-Independent Layout Transformer (LiLT). It was introduced in the paper [LiLT: A Simple yet Effective Language-Independent Layout Transformer for Structured Document Understanding](https://arxiv.org/abs/2202.13669) by Wang et al. and first released in [this repository](https://github.com/jpwang/lilt).

Disclaimer: The team releasing LiLT did not write a model card for this model so this model card has been written by the Hugging Face team.

## Model description

The Language-Independent Layout Transformer (LiLT) makes it possible to combine any pre-trained RoBERTa encoder from the hub (hence, in any language) with a lightweight Layout Transformer, yielding a LayoutLM-like model for any language.

<img src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/transformers/model_doc/lilt_architecture.jpg" alt="drawing" width="600"/>

## Intended uses & limitations

The model is meant to be fine-tuned on tasks like document image classification, document parsing and document QA. See the [model hub](https://huggingface.co/models?search=lilt) to look for fine-tuned versions on a task that interests you.

### How to use

For code examples, we refer to the [documentation](https://huggingface.co/transformers/main/model_doc/lilt.html).
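For orientation, a minimal feature-extraction sketch might look like the following. It assumes the checkpoint's tokenizer accepts pre-split words together with one bounding box per word, and that boxes are scaled to the 0-1000 range used by LayoutLM-style models. The helper names `normalize_box` and `embed_document` are hypothetical and not part of the library.

```python
def normalize_box(box, width, height):
    """Scale a pixel box (x0, y0, x1, y1) to the 0-1000 coordinate range.

    The 0-1000 range is the LayoutLM-style convention assumed here.
    """
    x0, y0, x1, y1 = box
    return [
        1000 * x0 // width,
        1000 * y0 // height,
        1000 * x1 // width,
        1000 * y1 // height,
    ]


def embed_document(words, boxes, model_id="SCUT-DLVCLab/lilt-roberta-en-base"):
    """Return the last hidden states for a list of words and their boxes."""
    # Imported lazily so normalize_box works without transformers installed.
    from transformers import AutoModel, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(model_id)
    model = AutoModel.from_pretrained(model_id)
    # `boxes` must hold one already-normalized box per entry in `words`.
    encoding = tokenizer(words, boxes=boxes, return_tensors="pt")
    outputs = model(**encoding)
    return outputs.last_hidden_state
```

The words and pixel boxes would typically come from an OCR engine; each box is passed through `normalize_box` with the page width and height before calling `embed_document`.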

### BibTeX entry and citation info

```bibtex

@misc{https://doi.org/10.48550/arxiv.2202.13669,

doi = {10.48550/ARXIV.2202.13669},

url = {https://arxiv.org/abs/2202.13669},

author = {Wang, Jiapeng and Jin, Lianwen and Ding, Kai},

keywords = {Computation and Language (cs.CL), FOS: Computer and information sciences},

title = {LiLT: A Simple yet Effective Language-Independent Layout Transformer for Structured Document Understanding},

publisher = {arXiv},

year = {2022},

copyright = {arXiv.org perpetual, non-exclusive license}

}

```

|

yuekai/model_repo_whisper_large_v2

|

yuekai

| 2023-08-31T07:57:15Z | 0 | 0 | null |

[

"onnx",

"region:us"

] | null | 2023-08-17T09:53:49Z |

### Client

https://huggingface.co/spaces/yuekai/triton-asr-client

https://github.com/yuekaizhang/Triton-ASR-Client

### Server

```sh

docker pull soar97/triton-whisper:23.06

docker run -it --name "whisper-server" --gpus all --net host -v $your_mount_dir --shm-size=2g soar97/triton-whisper:23.06

apt-get install git-lfs

git-lfs install

git clone https://huggingface.co/yuekai/model_repo_whisper_large_v2.git

export CUDA_VISIBLE_DEVICES="1"

model_repo_path=./model_repo_whisper

tritonserver --model-repository $model_repo_path \

--pinned-memory-pool-byte-size=2048000000 \

--cuda-memory-pool-byte-size=0:4096000000 \

--http-port 10086 \

--metrics-port 10087

```
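Once the server is up, one way to confirm it is accepting requests is to poll Triton's standard HTTP readiness endpoint on the port passed to `--http-port`. This is a sketch under assumptions: the server is reachable on `localhost` (the container uses `--net host`), and the helper names `ready_url` and `server_ready` are made up for illustration.

```python
import urllib.error
import urllib.request


def ready_url(host: str = "localhost", port: int = 10086) -> str:
    """Build the readiness URL; 10086 matches --http-port in the command above."""
    return f"http://{host}:{port}/v2/health/ready"


def server_ready(host: str = "localhost", port: int = 10086, timeout: float = 5.0) -> bool:
    """Return True if the server answers the readiness probe with HTTP 200."""
    try:
        with urllib.request.urlopen(ready_url(host, port), timeout=timeout) as resp:
            return resp.status == 200
    except (urllib.error.URLError, OSError):
        # Connection refused, timeout, or a non-2xx status: treat as not ready.
        return False
```

A client script could call `server_ready()` in a retry loop before sending audio.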

### Benchmark Results

Decoding on a single V100 GPU, with audio clips padded to 30 s, using files from the AISHELL-1 test set.

| Model | Backend | Concurrency | RTF |

|-------|-----------|-----------------------|---------|

| Large-v2 | ONNX FP16 | 4 | 0.14 |

|Module| Time Distribution|

|--|--|

|feature_extractor|0.8%|

|encoder|9.6%|

|decoder|67.4%|

|greedy search|22.2%|
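To read the RTF column, the sketch below assumes the usual definition of real-time factor, processing time divided by audio duration; the card itself does not spell out how RTF was measured, and the function names are illustrative only.

```python
def real_time_factor(processing_seconds: float, audio_seconds: float) -> float:
    """RTF = time spent decoding / duration of the audio decoded."""
    if audio_seconds <= 0:
        raise ValueError("audio_seconds must be positive")
    return processing_seconds / audio_seconds


def processing_time(audio_seconds: float, rtf: float) -> float:
    """Decoding time implied by a given RTF for a clip of the given length."""
    return audio_seconds * rtf
```

Under that definition, an RTF of 0.14 implies about 4.2 s of compute for one 30 s padded clip, and values below 1 mean decoding runs faster than real time.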

|

victornica/mini_molformer_gsf_6epochs

|

victornica

| 2023-08-31T07:56:29Z | 153 | 0 |

transformers

|

[

"transformers",

"pytorch",

"tensorboard",

"gpt2",

"text-generation",

"generated_from_trainer",

"license:mit",

"autotrain_compatible",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] |

text-generation

| 2023-08-30T22:56:12Z |

---

license: mit

tags:

- generated_from_trainer

model-index:

- name: mini_molformer_gsf_6epochs

results: []

---

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# mini_molformer_gsf_6epochs

This model is a fine-tuned version of [gpt2](https://huggingface.co/gpt2) on an unknown dataset.

It achieves the following results on the evaluation set:

- Loss: 0.6470

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure